Abstract

Nowadays, various technologies exist with differing rendering performance for interactive web maps. These maps are consumed on devices with varying capabilities; therefore, choosing the best-performing library for a given dataset is important. Unlike existing research, this study presents a comparative analysis of libraries’ native performance for rendering large amounts of GeoJSON vector data, partially extracted from OpenStreetMap (OSM). Four libraries were analyzed. Results showed that regardless of feature type, Leaflet and OpenLayers excelled for up to 10,000 features. Up to 5000 points, these two were the fastest, above which the libraries’ performance converged. For 50,000 points or more, Mapbox GL JS rendered them the quickest, followed by OpenLayers, MapLibre GL JS and Leaflet. For up to 50,000 lines and 10,000 polygons, Leaflet and OpenLayers were the fastest in all scenarios. For 100,000 lines, OpenLayers was almost twice as fast as the others, while Mapbox GL JS rendered 50,000 polygons the quickest. The performance of Leaflet and OpenLayers scales with increasing feature quantities, yet for Mapbox GL JS and MapLibre GL JS, any performance impact only appears at 1000 features and beyond. Slow initialization of map elements makes Mapbox GL JS and MapLibre GL JS less suitable for rapid rendering of small feature quantities. Other behavioural differences affecting user experience are also explored.

1. Introduction

On the web, we expect speed, responsiveness and interactivity, and this also applies to webpages incorporating web maps. These days, the World Wide Web is a prominent medium to distribute and visualize geospatial data and maps due to the availability of web technologies, services and data. Interactive web mapping has been increasingly popular in recent decades [1]. Web mapping libraries have also matured a lot recently, thanks to constant development and increasing popularity in web applications used by end-users. When it comes to web mapping technologies, there are various libraries and application programming interfaces (APIs) to choose from, both proprietary and free to use. Every web mapping client-side library has its own strengths both in terms of performance and their feature sets, the latter of which allows them to display a given spatial data type in a customized, streamlined and interactive way. While using these libraries is easy and straightforward, creating cartographically comprehensive, clean and visually appealing maps requires both cartographic expertise and programming skills. Today, web maps are consumed on devices capable of differing computational power and performance; therefore, choosing the best-performing library for a particular dataset requires greater emphasis. Four popular JavaScript (JS) libraries have been evaluated in this paper in terms of rendering performance for large amounts of raw vector geometry data (Leaflet, Mapbox GL JS, MapLibre GL JS and OpenLayers). Leaflet, first released in 2011 by Vladimir Agafonkin [2], is considered one of the most popular and easy to use open-source solutions [3]. Based on the work of [4], Leaflet and OpenLayers are the most feature-rich, open-source web mapping libraries, which can be considered for building massive web GIS clients. 
Mapbox GL JS and MapLibre GL JS originate from the same, initially open and free-to-use project repository from 2014, but in 2020 Mapbox GL JS was branched out as a separate library with a proprietary licence incorporating usage fees using tokens. Mapbox GL JS’s source code remained partially open, and while both their APIs share a lot of similarities, the two libraries have been developed separately since.

We aim to provide web map developers with quantitative performance information and recommendations to aid them in choosing from the available web mapping libraries to use in their web application, from a complete rendering process perspective for specific amounts of vector data. While standards like vector tiles already exist to optimize data loading and visualization rendering speed, and both OpenLayers and Mapbox GL JS support them natively, Leaflet does not. Therefore, the analysis was performed with GeoJSON vector data loaded in the web map directly to find the native capabilities and limits of the libraries. The aim of this research is to assess and compare the loading and rendering performance of readily available, open-source web mapping libraries for vector data. The main research questions were:

- Which libraries offer the best performance for loading and rendering large amounts of vector data, i.e., points, lines and polygons, respectively, on an interactive web map?

- Are there any thresholds, in terms of the number of features, above which the libraries show a significant slowdown in performance?

- Are there any other aspects and behavioural differences related to specific libraries, which affect user experience, that one has to keep in mind when rendering large amounts of vector data?

In the context of web GIS and web cartography, a 2011 study compared open-source geospatial technologies for web mapping [5], where 14 projects were analyzed, including client-side and server-side components and tools. The questionnaire conducted by the study focused on collecting users’ and developers’ opinions on a set of characteristics of these tools. It is worth noting that out of the four client-side technologies included in the study, only OpenLayers was examined specifically as a front-end web mapping library. Leaflet was not available at the time. A 2014 study [6] established common metrics for web GIS applications to be used in academic libraries: data content, GIS functionality and usability. The study selected six web GIS products based on their prominence among academic libraries. Usability analyses of public web mapping sites and services provided by various companies were conducted by [7,8]. However, their experiments do not touch on the performance and capabilities of the underlying API used for visualizing geospatial data, but rather focus on the web mapping site and service as a whole, from a user experience and usability standpoint. In 2019, ref. [9] showcased a self-developed application comprising open-source tools, especially tailored for creating interfaces for and delivering historic georeferenced map layers on the web, using a combination of GeoServer on the server-side and OpenLayers on the client-side for visualization. Ref. [4] concluded that the geographically enabled JavaScript (JS) libraries can be categorized into two groups, based on their design concepts: web mapping libraries, which generally better support GIS features, and virtual globes, which offer native 3D and 2.5D visualization. Some of our previous publications in the domain of web cartography seek and offer solutions to specific problems and deficiencies in geovisualization in web environments, focusing primarily on Leaflet.
Article [10] explores challenges (data classification, symbology and legend creation) that all come up when producing thematic maps with Leaflet and offers a simple workaround, ref. [11] discusses various workarounds addressing the problem of automatic labelling in Leaflet, and ref. [12] implements hatch fills in Leaflet, a common solution in traditional thematic cartography. This time, we solely investigate the rendering speed of various web mapping libraries, potentially exposing performance differences between them. While previous research was performed on qualitative aspects and usability of web mapping libraries (e.g., ref. [3] conducted three studies on web mapping technologies, comparing features, user needs and experiences), research on evaluating their performance is more diverse. The previous literature on web mapping library performance analyses mostly approached the subject from a specific point of view, tailored to a specific use case. Ref. [13] tested the performance of vector vs. raster map tiles by conducting a comparative study on load metrics. In their testing, they used the Mapbox GL JS library for all tests, as it is one of the libraries that fully support both vector and raster tiles (besides OpenLayers 3 and ArcGIS API for JS). Ref. [14] conducted a comparative study on the libraries based on clustering and heatmap visualization performance, therefore focusing on geovisualization techniques involving large amounts of spatial point data. In their research, specific, third-party plugins were selected for Leaflet, OpenLayers and Mapbox GL JS to assess and compare their rendering performance. While the methodology for measuring performance was based on rendering time, the method did not eliminate the effect of dataset file sizes (dataset loading time was included in measurements). 
In their testing, some libraries’ performance degraded when rendering more than 100,000 points, while Mapbox GL JS was concluded to be the library with the highest overall performance. The paper’s aim being point clustering and heatmaps, it did not use all vector data types for testing, nor did it focus on the general raw vector rendering performance of the libraries. Ref. [15] compared the performance of a self-developed graphics processing unit (GPU)-based approach for heatmap visualization to other solutions provided by plugins. Reference [16] evaluated the execution time and network usage of Leaflet, OpenLayers and Mapbox GL JS for raster and vector data simultaneously, using a Life Quality Index (LQI) web map on 14 different mobile devices. They also concluded that Mapbox GL JS achieved the best overall performance on mid- and high-end devices, while OpenLayers was the best for raster maps on all devices. In their work, while the LQI dataset was created in both raster and vector formats, the focus was on testing the same dataset on various device and library combinations and comparing those results, without changing the number of features and data between tests.

In contrast, this paper uses a different approach compared to [16], emphasizing raw vector data performance based on datasets containing a gradually increasing number of features of the same type, with the correlation between feature count and rendering time being the main indicator of performance. It is also worth pointing out that none of the mentioned papers involved MapLibre GL JS in their testing. Comparing MapLibre GL JS and Mapbox GL JS could potentially provide interesting insight into their evolution and how they differ these days in terms of performance, since they basically started out from the same codebase in 2014, and in December 2020, they branched into two separate libraries, with Mapbox GL JS switching to a proprietary licence [17].

2. Materials and Methods

The goal of the research is to assess and compare the loading and rendering performance of selected open-source web mapping libraries. Four JavaScript libraries were analyzed and compared: Leaflet v1.9.4, Mapbox GL JS v3.7.0, MapLibre GL JS v4.7.1 and OpenLayers v10.2.1.

The research concentrated on the native visualization of vector data only. The comparative testing and analysis were performed using consistent datasets of varying feature types and numbers of features, for all tested libraries. Loading and rendering time were compared for each dataset, for each library. The datasets were created in the widely used interoperable geospatial format GeoJSON. All four libraries support GeoJSON as vector data input natively, and it can be regarded as the de facto data standard for web map usage. The latest available stable versions of the libraries were used for testing. The performed tests focus on raw performance and do not take cartographical aspects into account for the resulting web map. Visualizing this number of features (also called “big data”) most likely results in a cartographically incomprehensive map that is not suitable for effective communication between the map maker and its reader, with many problems, including overlapping symbols and features, making them impossible to distinguish. Testing and comparing the performance of libraries across different devices and platforms is not part of the scope of this research; neither is testing the performance evolution of a single library throughout its lifetime (compared to previous versions).

Precisely assessing performance, therefore measuring loading and rendering times for web mapping technologies, is convoluted. Since the libraries and their APIs all use JavaScript, measuring execution time with the native JS methods comes to mind (e.g., using a Date object or performance timers). Most of these methods provide the precision of a millisecond, which might make them suitable for this purpose. However, the tested web mapping libraries all do numerous internal processes asynchronously, so using saved timestamps before and after the relevant JS code in the script is not suitable, because there might be cases when the measurement ends before the actual map and features are rendered; therefore, the measurement would not correspond to what the user would see on their screen. The other problem with measuring time with these libraries is that determining render completion is hard as there is not a definite point that signals it consistently. Ideally, listening to events related to render completion could work, but not all libraries have these in a consistent manner, and the events get fired before anything appears on the map object itself.
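The timestamp pitfall described above can be illustrated with a minimal sketch. Here, `fakeAsyncRender` is a hypothetical stand-in for a library call that returns immediately and finishes drawing later; it is not part of any of the tested libraries, and the 50 ms delay is an arbitrary placeholder.

```javascript
// Hypothetical stand-in for a mapping library call that returns immediately
// and completes its drawing work later (simulated here with a timer).
function fakeAsyncRender(features, onDone) {
  setTimeout(() => onDone(features.length), 50);
}

// Naive measurement: timestamps taken directly before and after the call.
function measureNaively(features) {
  const start = performance.now();
  let finishedAt = null;
  fakeAsyncRender(features, () => { finishedAt = performance.now(); });
  const naiveMs = performance.now() - start; // taken before drawing finished
  return {
    naiveMs,
    // Returns null until the simulated rendering has actually completed.
    getActualMs: () => (finishedAt === null ? null : finishedAt - start),
  };
}

const result = measureNaively(new Array(1000).fill(0));
console.log(`naive: ${result.naiveMs.toFixed(2)} ms`);
setTimeout(() => {
  console.log(`actual: ${result.getActualMs().toFixed(2)} ms`);
}, 200);
```

The naive value only covers the synchronous part of the call, so it underestimates what a user would perceive; this is the kind of mismatch that motivates inspecting a recorded timeline instead.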

With the mentioned issues and with the goal of the research in mind, it was critical to keep measurements limited to the temporal range between just after the dataset is loaded in memory and when the features are actually rendered in the browser. To do this, similarly to [14], the Chrome DevTools Performance panel was examined after each run. This panel provides precise timeline information of the whole page loading and rendering process, including all scripting phases, with all running functions distinguished. The panel does provide overall times of specific webpage loading processes, but these are prone to inaccuracies, as it is almost impossible to keep the beginning and end of a recorded profile consistent. This timeline was carefully inspected to delineate library processes of interest and also keep all measurements as objective as possible. In order to minimize any external effects and focus on data loading and rendering upon comparison, some constraints were taken into consideration to regulate and keep the analysis and comparison as consistent as possible:

- Library-related processes are isolated from data source loading: The testing scenarios were realized by using a combination of the Fetch API and the “then()” method of Promise; therefore, the GeoJSON file is wholly loaded from the local web server, before the map gets initialized. This way, the impact of the dataset’s file size and the time it takes to retrieve the file are eliminated from the timing analysis.

- No background map layers: Web maps in everyday use have some kind of a background map to give spatial context to the visualization. These background maps are usually in the form of XYZ-tiles, which are retrieved directly from a remote source. During testing, all libraries have had their background map layers disabled or not loaded at all to eliminate network delays and rendering of tiles from the timing tests. Leaflet and OpenLayers had no tile layers loaded; for Mapbox GL JS and MapLibre GL JS, a local “empty” JavaScript Object Notation (JSON)-formatted map style definition was used, which does not retrieve any data from the network.

- Consistent map object canvas resolution: To make sure map objects show the same spatial extent and have to render the same features among all libraries, the webpage canvas has been limited to 1000 × 953 pixels for all tests.

- Matching view extent and zoom level: When using a dataset that has a spatial extent covering the areas near the 180th meridian (antimeridian), some libraries rendered the features once more over this meridian (Mapbox GL JS, MapLibre GL JS), while some did not (Leaflet, OpenLayers). To eliminate this difference, a matching view extent has been set for all libraries, and the datasets only contain features that are not near that area spatially.

- Symbology: The styling of features was performed differently in each library by default; therefore, the symbology was matched throughout the four libraries. For points, a black scalable vector graphics (SVG) circle was used as symbol; both lines and polygons had blue strokes, with polygons also having a semi-transparent blue fill colour (alpha: 0.2). Leaflet’s default method of using markers for points has been replaced with rendering SVG symbols (further discussed in Section 3.1.1).

- Visualization coordinate reference system (CRS): While the sample datasets were created in CRS EPSG:4326 (since most libraries expect latitude and longitude coordinates as data input), all libraries were configured to use EPSG:3857 (Web Mercator) for visualization, which is widely popular on web map services and is taken as default. Mapbox GL JS uses a 3D Globe representation by default, and the other libraries do not support 3D modes; therefore, for Mapbox GL JS, manual override was necessary to keep a consistent 2D map rendering across all libraries for testing.

- Browser plugins: Browser plugins might do some work asynchronously, while the map is being loaded and rendered. The testing was conducted in the strictest mode, with no third-party plugins or extensions running to further eliminate any external effects.

- Network activity for Mapbox GL JS: Since the usage of Mapbox GL JS is tied to an access token that is checked and authenticated right on every map object instantiation, the delay of this request and response from Mapbox servers cannot be fully eliminated. However, since implementing this library in a real web environment would always involve the authentication process, this delay is accounted in the library performance itself. The tests were performed on a 10-gigabit internet connection to keep this authentication as fast as possible and to keep the variance introduced by the network communication as low as possible.

With all these considerations in mind, the tests were conducted in a local environment (further discussed in Section 2.1). The start of all timings was regarded as the point where the GeoJSON file was fully loaded with the aforementioned method, after which the library initializes the map. Since the four libraries have different structures and parallelization of code, the measurement range needed to be refined for each.

- Leaflet and OpenLayers: measured until the Largest Contentful Paint (LCP) metric (matches First Paint and First Contentful Paint as well) [18], since this is the moment when all the features appear at once on the map for the user. These two libraries finish their relevant functions and processes well before this mark, but the paint and commit processes are called to start rendering after the library functions finish.

- Mapbox GL JS and MapLibre GL JS: measured until the last library-relevant task finishes, with a major commit process at its end. For these libraries, since they do GPU rendering asynchronously, the LCP metric did not correspond to the moment when the features actually appeared on the map.
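The isolation of data loading from map initialization listed among the constraints can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' test harness: `loadThenInit`, `initMap`, the file path and the `EMPTY_STYLE` object are hypothetical names, and the commented Mapbox GL JS part only shows one plausible way to combine an empty local style with a 2D Mercator override.

```javascript
// Minimal style with no sources or layers, so nothing is requested from the
// network (assumed equivalent of the "empty" style definition in the text).
const EMPTY_STYLE = { version: 8, sources: {}, layers: [] };

// Loads and parses the GeoJSON completely, then hands the data to `initMap`.
// `fetchFn` defaults to the global Fetch API; `initMap` is a hypothetical
// callback that creates the map and adds the features.
function loadThenInit(url, initMap, fetchFn = fetch) {
  return fetchFn(url)
    .then((response) => response.json())
    .then((geojson) => initMap(geojson));
}

// Hypothetical browser usage with Mapbox GL JS (not executed here):
// loadThenInit('data/points_1000.geojson', (geojson) => {
//   const map = new mapboxgl.Map({
//     container: 'map',
//     style: EMPTY_STYLE,
//     projection: 'mercator', // 2D override instead of the default globe
//   });
//   map.on('load', () => {
//     map.addSource('features', { type: 'geojson', data: geojson });
//     map.addLayer({ id: 'features', type: 'circle', source: 'features' });
//   });
// });
```

Because the map is only created inside the final `then()` callback, the time spent retrieving and parsing the file falls before the start of the measured range.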

Besides conducting the tests individually for points, lines and polygons, a combined test involving multiple layers on the same map was also created to simulate a more complex scenario that might reflect common user scenarios better. The combined test used the same datasets as the previous tests, but this time, the test map had three datasets of matching feature counts loaded, for each feature geometry type.

The workflow involved time measurements performed to the precision of a millisecond; the results were averaged over 10 runs for each data sample. Standard deviation values for all 10 runs for each data sample were averaged for later comparison of libraries based on performance consistency. A total of 1240 runs were measured, all of which contributed to the performance analysis.
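The averaging described above can be sketched as follows. The timings are made-up placeholder values, not measurements from this study, and the use of the sample (n − 1) form of the standard deviation is an assumption, since the text does not state which form was applied.

```javascript
// Arithmetic mean of an array of numbers.
function mean(values) {
  return values.reduce((sum, v) => sum + v, 0) / values.length;
}

// Sample standard deviation (n - 1 denominator); an assumption, see lead-in.
function sampleSd(values) {
  const m = mean(values);
  const squared = values.reduce((sum, v) => sum + (v - m) ** 2, 0);
  return Math.sqrt(squared / (values.length - 1));
}

// Placeholder timings in ms (NOT real results): 10 runs per data sample.
const runsBySample = {
  'lines-50': [31, 30, 33, 29, 32, 31, 30, 34, 31, 29],
  'lines-100': [36, 35, 39, 34, 37, 36, 35, 38, 36, 34],
};

// Mean and SD for each data sample.
const perSample = Object.entries(runsBySample).map(([sample, runs]) => ({
  sample,
  meanMs: mean(runs),
  sdMs: sampleSd(runs),
}));

// Consistency figure: the per-sample SDs averaged over all samples.
const averagedSd = mean(perSample.map((s) => s.sdMs));
console.log(perSample, averagedSd);
```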

2.1. Testing Environment

The testing was conducted in a local environment to minimize latency due to client–server communication. The environment consisted of a desktop PC with the following specifications: AMD Ryzen 5 3600 (4.2 GHz), 16 GB DDR4-3200 RAM, NVIDIA GeForce RTX 3070 12 GB (driver 560.94), and Windows 10 Pro 64-bit, with a desktop resolution of 1920 × 1080. The environment further consisted of a local webserver created with the “Live Server” extension for VS Code, which uses the npm package “live-server” to host a simple local webserver for development purposes. Google Chrome 131.0.6778.86 (64-bit) was used as the browser for running the tests. The JavaScript libraries were loaded by native HTML script loading (not as modules, packages or server-side NodeJS).

2.2. Sample Datasets

For the sample datasets used in performance testing, the aim was to create a consistent range of dataset files, which only differ in the number of features, while minimizing other differences (like vertex count and feature inconsistency) between them. Also, the data should keep its “realness” as much as possible. Points, since there is no room for geometrical variety as they are a single node described by a coordinate pair, were randomly generated into a given spatial extent using geographic information system (GIS) software. Generating lines (linestrings) and polygons could also be done programmatically, but that way, the end result will most likely not resemble any kind of real data. So, for creating consistent datasets for lines and polygons, two major issues arise:

- Feature complexity (vertices);

- Making sure features in a smaller dataset are definitely part of a larger one.

Lines and polygons were extracted from OpenStreetMap (OSM) open data, available under the Open Database License (ODbL) [19]. To make sure feature complexity variance is less of a factor between libraries, each dataset had an even split of simple and complex features, based on vertex count. This was performed by classifying them based on vertex count into two classes per type: for lines 2–4 and 5+, for polygons 3–6 and 7+ vertices. These classes were determined by inspecting OSM datasets for countries and finding the average number of vertices for lines and polygons, separately. Finding this average made sure that the subsequent datasets can still be generated with the even splitting of feature complexity in mind.
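The two-class split by vertex count can be expressed as a short sketch. The function names are hypothetical, and not counting the closing vertex of a polygon ring is an assumption about how the vertex counts were obtained; only `LineString` and `Polygon` geometries are handled.

```javascript
// Counts the vertices of a GeoJSON LineString or Polygon geometry.
// For polygons, the closing vertex that repeats the first one is not counted
// (an assumption, see lead-in).
function countVertices(geometry) {
  if (geometry.type === 'LineString') return geometry.coordinates.length;
  if (geometry.type === 'Polygon') {
    return geometry.coordinates.reduce((sum, ring) => sum + ring.length - 1, 0);
  }
  throw new Error(`Unsupported geometry type: ${geometry.type}`);
}

// Thresholds from the text: lines 2–4 vs. 5+, polygons 3–6 vs. 7+ vertices.
const SIMPLE_MAX = { LineString: 4, Polygon: 6 };

// Returns 'simple' or 'complex' for a GeoJSON feature.
function complexityClass(feature) {
  const n = countVertices(feature.geometry);
  return n <= SIMPLE_MAX[feature.geometry.type] ? 'simple' : 'complex';
}

const road = {
  type: 'Feature',
  properties: {},
  geometry: { type: 'LineString', coordinates: [[0, 0], [1, 1], [2, 1]] },
};
console.log(complexityClass(road)); // "simple"
```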

The datasets were created by gradually subsetting a large dataset (so that each smaller dataset is a subset of the next larger one, with a transitive relation between all sets in order). Keeping the exact same features as part of the larger datasets eliminates differences between datasets with differing numbers of features. If this had not been enforced, randomly selecting, for example, 500 and 1000 features from a large dataset could result in much simpler features in one and much more complex features in the other. This might introduce inconsistency between libraries as well, if, hypothetically, one handles features with more nodes better than the others.
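The nesting property can be illustrated with a small sketch. Note that this is a swapped-in technique for illustration and not the GIS workflow used in the study: each complexity class is shuffled once with a seeded generator and every dataset takes a prefix of that order, which guarantees that smaller datasets are contained in larger ones while keeping an even split. All names are hypothetical.

```javascript
// Small seeded PRNG (mulberry32-style) so the sketch is reproducible.
function mulberry32(seed) {
  let a = seed >>> 0;
  return function () {
    a = (a + 0x6d2b79f5) >>> 0;
    let t = a;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// Fisher-Yates shuffle returning a new array.
function shuffled(items, rand) {
  const out = items.slice();
  for (let i = out.length - 1; i > 0; i--) {
    const j = Math.floor(rand() * (i + 1));
    [out[i], out[j]] = [out[j], out[i]];
  }
  return out;
}

// Builds nested datasets: each (even) size takes the first size/2 items of
// the shuffled simple class and of the shuffled complex class.
function nestedSubsets(simple, complex, sizes, seed = 1) {
  const rand = mulberry32(seed);
  const s = shuffled(simple, rand);
  const c = shuffled(complex, rand);
  return sizes.map((size) => s.slice(0, size / 2).concat(c.slice(0, size / 2)));
}

const simple = Array.from({ length: 600 }, (_, i) => `s${i}`);
const complex = Array.from({ length: 600 }, (_, i) => `c${i}`);
const [d50, d100, d500] = nestedSubsets(simple, complex, [50, 100, 500]);
console.log(d50.length, d100.length, d500.length); // 50 100 500
console.log(d50.every((id) => d100.includes(id))); // true
```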

To meet these two criteria, the datasets were created by gradually reducing a large dataset into smaller ones, step by step. The initial large dataset contained roughly twice the number of features that the largest planned dataset of feature type had. After classifying lines and polygons into two classes based on vertex count, the “random selection within subsets” algorithm of QGIS was used to reduce a large dataset into a smaller one, while also making sure the resulting selection had an even split of simple and complex features. Upon saving the sample GeoJSON files containing features in the CRS of EPSG:4326, all attributes were stripped from the features, and a 6-decimal coordinate precision was applied to the geometry to keep file sizes small. Nine dataset variants based on feature count were defined, with 50, 100, 500, 1000, 5000, 10,000, 50,000, 100,000 (points and lines only) and 500,000 (points only) features. This way, 24 separate datasets were created in total.
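The dataset count can be checked with a few lines of arithmetic. The last step is an inference rather than something the text states explicitly: it assumes the combined test used only the feature counts available for all three geometry types, which reproduces the 1240 runs reported in Section 2.

```javascript
// Feature-count variants and the largest variant per geometry type,
// as listed in the text.
const SIZES = [50, 100, 500, 1000, 5000, 10000, 50000, 100000, 500000];
const MAX_SIZE = { points: 500000, lines: 100000, polygons: 50000 };

// Variants available for one geometry type.
const sizesFor = (type) => SIZES.filter((n) => n <= MAX_SIZE[type]);

// 9 (points) + 8 (lines) + 7 (polygons) separate datasets.
const datasetCount = Object.keys(MAX_SIZE)
  .reduce((sum, type) => sum + sizesFor(type).length, 0);

// Assumption: the combined test uses only sizes that exist for all types.
const smallestMax = Math.min(...Object.values(MAX_SIZE));
const combinedSizes = SIZES.filter((n) => n <= smallestMax);

const LIBRARIES = 4;
const RUNS_PER_SAMPLE = 10;
const totalRuns = (datasetCount + combinedSizes.length) * LIBRARIES * RUNS_PER_SAMPLE;

console.log(datasetCount, combinedSizes.length, totalRuns); // 24 7 1240
```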

3. Results and Discussion

This section summarizes and discusses the results of the performance analysis presented previously. First, in Section 3.1, general results, regardless of feature type, are presented; then, type-specific conclusions are demonstrated. Later, Section 3.2 and Section 3.3 discuss performance consistency and notable behavioural observations, which emerged during the testing workflow, while Section 3.4 highlights size differences between the libraries.

3.1. Performance

Generally, regardless of feature type, Leaflet and OpenLayers both excel at small numbers of features, up to 10,000. Between them, Leaflet has an advantage over OpenLayers and is approximately 1.15× faster for feature counts up to the 1000 mark. Above this number, OpenLayers usually takes the lead. Also regardless of feature type, Mapbox GL JS is generally faster than MapLibre GL JS in all tested scenarios: by 70–200 ms for up to 5000 features, at which point the difference is down to approximately 60 ms between them. In absolute time, this of course increases with the number of features.

A common phenomenon can be seen across the performance of all libraries, namely a threshold-like limit at 50,000 or more features, where overall loading times exceeded a second; above this threshold, the measured times increased steeply and the libraries showed a significant slowdown in performance. Loading times exceeding a second are noticeable to users waiting for the webpage to load and might cause some inconvenience.

A notable anomaly is that while the performance of Leaflet and OpenLayers scales well with the increasing number of features, the same cannot be said of Mapbox GL JS and MapLibre GL JS. Regardless of feature count (even with no features or layers loaded), they initially load their map object elements in 160 and 350 ms, respectively. These values, compared to the other two, are approximately 130 and 315 ms slower, respectively, depending on the dataset scenario. Results showed that for Mapbox GL JS and MapLibre GL JS, any effect of a larger number of features on their performance is offset and starts to appear only for datasets with 1000 features and beyond. Below this amount, each of them renders any number of features at a consistent speed; the differences between runs on datasets (containing 50 to 1000 features) are well within the margin of error (SD = 3–5 ms). Although Mapbox GL JS requires token authentication, which takes some time, this alone does not explain the slow initial loading times, as receiving a response to the requests usually only takes a few milliseconds, and even if it took longer, it would not delay rendering (as later discussed in Section 3.3). For MapLibre GL JS, even though no token authentication takes place, it takes almost twice as long to load its map object element compared to Mapbox GL JS. Due to their shared development phase at the beginning (up to Mapbox GL v1), these libraries might still share parts of the same codebase (and both use GPU-based WebGL), which might cause much slower initialization compared to the other libraries. However, the cause of the initial loading time difference between the two has not been determined.

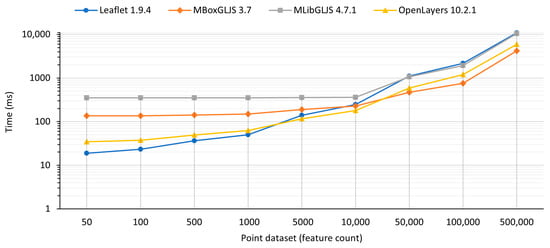

3.1.1. Points

As illustrated in Figure 1, the results of performance testing, specifically with point features, indicate that, while for a small number of points, up to 5000, Leaflet and OpenLayers still excel (140 and 115.5 ms, respectively), for 10,000 points, the libraries’ performances somewhat converge (within a range of 240 ms), and for 50,000 or more, Mapbox GL JS clearly handles and renders them the quickest, with OpenLayers not far behind (466.9 and 588.4 ms, SD = 15.96 and 8.45 ms, respectively). MapLibre GL JS is generally slower throughout, and from 50,000 points onwards, Leaflet joins it among the slowest libraries for large numbers of points (they take roughly twice as long as the other two). Since with 50,000 points and above the rendering times exceeded a second, one might consider using clustering plugins or creating a heatmap visualization, if appropriate to their dataset, as discussed by [14].

Figure 1. Results of testing with point features.

As touched on in Section 2, Leaflet’s default method of displaying points from a GeoJSON source is placing “markers” for each, which are represented by a 25 × 41 px resolution .png image (with an extra .png file at a 41 × 41 px resolution used as a shadow) that is supplied with Leaflet. Loading lots of these raster images is exceptionally slow, causing map loading to take tens of seconds. For reference, loading 500 points took 450 ms, 5000 points took ~9.5 s and 10,000 points with markers took half a minute (with an inconsistent and glitchy rendering, where sometimes one third of the markers were not rendered at all). Besides the slowness, testing performance using this method was not practical for multiple reasons, even if it is the default way of rendering points. First, showing raster images for points by default is only performed by Leaflet, so using SVG symbols for points was much more suitable for the comparison. Second, these markers take up lots of space on the map canvas, making them less suitable for mass data. Third, using raster images as markers would introduce another variable into comparative testing (marker image format, resolution). Moreover, while the default markers do look good, for users just starting out with Leaflet and trying to load lots of points, the slowness of using this default method might make them quickly turn away from Leaflet and use some other library for a web map visualization. In order to use SVG symbols for points in Leaflet, a “pointToLayer” function was passed in the GeoJSON layer options object, altering the default behaviour to use L.circleMarker instead of L.marker.
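The override described above can be sketched as follows. This is a minimal illustration rather than the exact code used in the tests: `circlePointOptions` is a hypothetical helper, and the radius is an assumed value, since the symbol size is not stated in the text. The Leaflet namespace is passed in as a parameter so the helper has no global dependency.

```javascript
// Builds L.geoJSON options that draw points as vector circle markers
// instead of the default image-based L.marker. `L` is the Leaflet namespace.
function circlePointOptions(L) {
  return {
    pointToLayer: (feature, latlng) =>
      L.circleMarker(latlng, {
        radius: 4, // assumed symbol size; not stated in the text
        color: '#000000',
        fillColor: '#000000',
        fillOpacity: 1,
      }),
  };
}

// Browser usage with Leaflet loaded (not executed here):
// L.geoJSON(geojson, circlePointOptions(L)).addTo(map);
```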

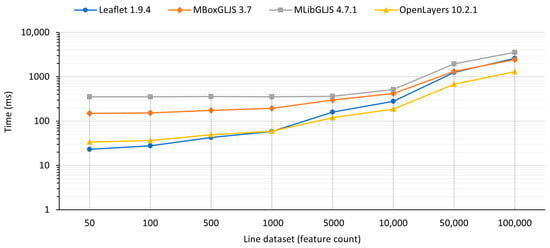

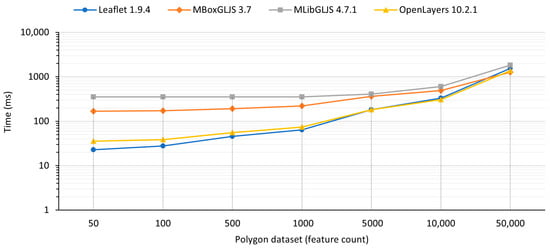

3.1.2. Lines and Polygons

The rendering performance of lines and polygons showed differences compared to rendering of SVG points, as shown in Figure 2 and Figure 3. Leaflet and OpenLayers were the fastest in all tested scenarios up to 50,000 lines and 10,000 polygons. For 100,000 lines, OpenLayers proved to be almost twice as fast as the other libraries, taking only 1295.5 ms (SD = 9.35 ms). For 50,000 polygons, Mapbox GL JS excelled, with an average time of 1265 ms (SD = 28.13 ms), aligning with the findings of [14]. In this same scenario, for the first time in the test series, OpenLayers was slightly slower, with 1366.4 ms (SD = 11.42 ms), while Leaflet took 1539.9 ms (SD = 25.68 ms). MapLibre GL JS was significantly slower than all other libraries for both lines and polygons across all scenarios. One would expect Mapbox GL JS and MapLibre GL JS to take advantage of GPU acceleration in WebGL, but their potential edge did not clearly materialize. While Mapbox GL JS was the fastest with the largest number of polygons, MapLibre GL JS did not beat the HTML canvas-based libraries at any point during testing. There is a possibility that rendering even more features would gradually reveal the advantage provided by WebGL, but the absolute rendering times of the sample datasets already surpassed a second, which is not optimal for keeping user-perceived loading speed high.

Figure 2. Results of testing with line features.

Figure 3. Results of testing with polygon features.

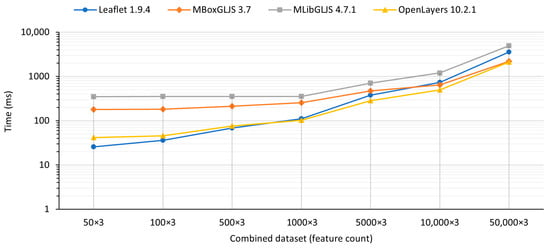

3.1.3. Combined Map with Multiple Layers

As shown in Figure 4, the combined test yielded similar results to those of the feature type-dependent tests. While the feature number was tripled for each run, Leaflet and OpenLayers still rendered the map fastest, with up to 5000×3 features. Between them, Leaflet excelled with low numbers of features, while OpenLayers proved to be the fastest from 1000×3 features and up. At 50,000×3 features, Mapbox GL JS got close to the speed of OpenLayers, with only 79.7 ms between them in that test.

Figure 4. Results of testing with combined datasets.

Mapbox offers a web article on improving rendering performance in its library, specifically when working with GeoJSON data. It includes general tips such as cleaning up data (lowering coordinate precision, pruning unused properties), using clustering for dense data, limiting zoom levels and disabling the hiding of overlapping symbols. The article also highlights that Mapbox GL JS handles GeoJSON sources by turning the data into Mapbox vector tiles on-the-fly on the client side [20].
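The data clean-up tips mentioned above (lowering coordinate precision and pruning unused properties) can be illustrated with a minimal sketch in plain JavaScript. This is not code from the Mapbox article or from this study; the function names, the choice of five decimal places, the list of retained keys and the sample feature are all assumptions made for illustration, and geometries without a `coordinates` member (such as a GeometryCollection) are not handled.

```javascript
// Round a number to a fixed count of decimal places.
function roundTo(value, decimals) {
  const factor = 10 ** decimals;
  return Math.round(value * factor) / factor;
}

// Recursively round every number in a (possibly nested) coordinate array.
function roundCoordinates(coords, decimals) {
  return Array.isArray(coords)
    ? coords.map((c) => roundCoordinates(c, decimals))
    : roundTo(coords, decimals);
}

// Return a copy of a FeatureCollection with rounded coordinates and only
// the listed property keys retained. `decimals` and `keepKeys` are
// illustrative parameters, not values taken from the study.
function slimGeoJSON(collection, decimals, keepKeys) {
  return {
    type: "FeatureCollection",
    features: collection.features.map((feature) => ({
      type: "Feature",
      geometry: {
        type: feature.geometry.type,
        coordinates: roundCoordinates(feature.geometry.coordinates, decimals),
      },
      properties: Object.fromEntries(
        Object.entries(feature.properties || {}).filter(([key]) =>
          keepKeys.includes(key)
        )
      ),
    })),
  };
}

// Hypothetical sample feature, invented for this example.
const sample = {
  type: "FeatureCollection",
  features: [
    {
      type: "Feature",
      geometry: { type: "Point", coordinates: [19.0402351234, 47.4979120987] },
      properties: { name: "Budapest", source: "survey", note: "unused" },
    },
  ],
};

const slim = slimGeoJSON(sample, 5, ["name"]);
console.log(JSON.stringify(sample).length, "->", JSON.stringify(slim).length);
```

The function returns a new object instead of modifying its input, so the original dataset stays available for comparison.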

As [14] also pointed out, although the libraries are under active development, major versions are only released at long intervals; therefore, the results of this performance analysis should remain relevant for some time. However, since their development is not tied to a strict development strategy, the libraries and their performance may change significantly over time.

3.2. Performance Consistency

To gauge performance consistency across the libraries, the standard deviation of execution time was calculated from the 10 runs for each sample dataset and then averaged per feature type.
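The consistency measure described above can be sketched as follows. The sketch assumes the sample standard deviation (n − 1 denominator), which the text does not specify, and the timings are invented for illustration (and shortened to five runs per dataset); they are not measurements from this study.

```javascript
// Arithmetic mean of an array of numbers.
function mean(values) {
  return values.reduce((sum, v) => sum + v, 0) / values.length;
}

// Sample standard deviation (n - 1 denominator).
// Assumption: the paper does not state which SD variant was used.
function sampleSD(values) {
  const m = mean(values);
  const sumOfSquares = values.reduce((sum, v) => sum + (v - m) ** 2, 0);
  return Math.sqrt(sumOfSquares / (values.length - 1));
}

// Compute the SD of each dataset's runs, then average those SDs.
// `runsByDataset` maps a dataset label to its run times in ms.
function averageSD(runsByDataset) {
  return mean(Object.values(runsByDataset).map(sampleSD));
}

// Made-up run times in ms for one library and one feature type.
const lineRuns = {
  "50 lines": [100, 102, 98, 101, 99],
  "500 lines": [200, 206, 194, 203, 197],
};

console.log(averageSD(lineRuns).toFixed(2)); // prints "3.16"
```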

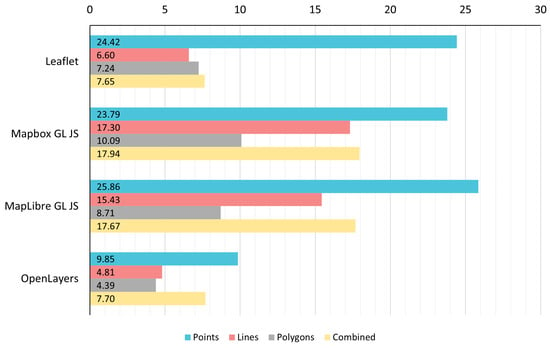

Figure 5 shows the consistency results for each library and feature type. In terms of overall performance consistency, OpenLayers provided the most consistent results, regardless of feature type (points SD = 9.85 ms, lines and polygons SD < 5 ms). In general, point rendering was the most inconsistent across the libraries. OpenLayers and Leaflet rendered lines most consistently, with SDs of 4.8 ms and 6.6 ms, respectively, while Mapbox GL JS and MapLibre GL JS were roughly three times less consistent, with SDs between 15 and 17 ms. Rendering polygons showed the least variation across all libraries, with OpenLayers being the most consistent. In the combined test, Leaflet and OpenLayers were the most consistent, with SDs of around 7.7 ms, while MapLibre GL JS and Mapbox GL JS showed SDs of 17.67 ms and 17.94 ms, respectively.

Figure 5. Rendering speed consistency of libraries for different feature types (SD, in ms).

3.3. Behavioural Observations

This section describes other notable behavioural differences between the libraries that can affect user experience but are, in general, only loosely related or unrelated to the quantitative performance analysis itself.

When large numbers of features are in the viewport, the libraries differ significantly in how fluently they handle zooming and panning interaction and animation. While changing the zoom level, Leaflet waits for render completion at every level before proceeding to the next one, so zooming quickly through multiple levels is erratic rather than fluent. While waiting for the subsequent level to render, Leaflet shows an enlarged or scaled-down static view of the previous level’s render (including basemaps and symbols). In contrast, OpenLayers, Mapbox GL JS and MapLibre GL JS provide a smooth transition between zoom levels, constantly rendering the transition frame by frame. This feels natural and fluid in all three libraries and considerably improves the perceived user experience.

For panning, all libraries use some kind of occlusion to hide features outside the current viewport to improve performance. In this regard, the method used by Leaflet and OpenLayers is vastly different from that of the other two. Both Leaflet and OpenLayers keep a thin, buffer-like padding around the viewport, beyond which they hide all features. When panning, both wait until the “dragend” event has fired, i.e., the user has released the left mouse button, and only then re-render the view with the updated set of features. The combination of the thin buffer and this behaviour makes panning noticeably worse for the user, who might momentarily see missing features in the new viewport extent before they are suddenly rendered. When drawing SVG points, OpenLayers also slightly blurs the circle symbols during the entire panning event. Mapbox GL JS and MapLibre GL JS, on the other hand, perform panning flawlessly: the user never sees missing or unrendered features at any point, for any amount of panning along either axis.
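The effect of a thin padding combined with re-rendering only after the drag ends can be illustrated with a small, library-independent sketch. It is not the implementation used by Leaflet or OpenLayers; the extent format `[minX, minY, maxX, maxY]`, the hypothetical `paddingRatio` parameter and the sample points are assumptions made for this example.

```javascript
// Expand an extent [minX, minY, maxX, maxY] by a fraction of its
// width and height on every side (hypothetical `paddingRatio`).
function padExtent([minX, minY, maxX, maxY], paddingRatio) {
  const dx = (maxX - minX) * paddingRatio;
  const dy = (maxY - minY) * paddingRatio;
  return [minX - dx, minY - dy, maxX + dx, maxY + dy];
}

// True if point [x, y] lies inside the extent (borders included).
function isInside([x, y], [minX, minY, maxX, maxY]) {
  return x >= minX && x <= maxX && y >= minY && y <= maxY;
}

// Points that would be drawn for a viewport with the given padding.
function visiblePoints(points, viewport, paddingRatio) {
  const padded = padExtent(viewport, paddingRatio);
  return points.filter((point) => isInside(point, padded));
}

// Invented sample data: three points and a 10 x 10 viewport.
const points = [[5, 5], [10.5, 5], [13, 5]];
const viewport = [0, 0, 10, 10];

// With 10% padding, the drawn area is [-1, -1, 11, 11].
const drawn = visiblePoints(points, viewport, 0.1);

// The user drags the map 3 units east; nothing is re-rendered until
// the drag ends, so only the previously drawn points are on screen.
const pannedViewport = [3, 0, 13, 10];
const missing = points.filter(
  (point) => isInside(point, pannedViewport) && !drawn.includes(point)
);

console.log("drawn before panning:", drawn.length);
console.log("missing during panning:", missing.length);
```

In this sketch, the point at x = 13 lies inside the panned viewport but outside the padded area that was drawn earlier, so it would stay invisible until the next render, which mirrors the momentary gaps described above.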

For Mapbox GL JS, although the library starts authenticating the token by sending a request to Mapbox servers as soon as the map object is initialized, it starts rendering while still waiting for the response. This means that the rendering itself is not directly delayed by the authentication step. This behaviour was observed with a valid token; when some token characters were changed to make it deliberately invalid, the features occasionally rendered for a split second, and the map object was then cleared as soon as the invalid authentication response was received. This could be observed clearly by artificially throttling the network speed in Chrome DevTools (“Slow 3G” preset), which delayed the authentication response considerably and made the step of clearing the map content obvious. In terms of performance, this asynchronous authentication is beneficial; however, in the rare case when an invalid token is used and confirmed invalid in the response, completing the rendering is a waste of resources, because the map is cleared right afterwards. If the token is not set at all in code (the “mapboxgl.accessToken” property is either null or non-existent), the library shows an error message in the console and performs no further processing or rendering.

In the performance profiler, Leaflet and OpenLayers show a distinct, stacked GPU usage phase that happens only after the library has finished processing features, following a distinct paint and commit process. Mapbox GL JS and MapLibre GL JS, on the other hand, constantly use the GPU for small chunks of work in parallel with processing, and there is no distinct phase in which the GPU is utilized.

While the effect of WebGL was not evaluated explicitly, the tests were conducted on two desktop computers with different capabilities and performance. The major difference between them was the GPU: one had integrated graphics (Intel HD Graphics 530) and the other a dedicated GPU (NVIDIA GeForce RTX 3070 12 GB). The integrated graphics processing unit (iGPU) supported OpenGL 4.4; the dedicated GPU supported OpenGL 4.6. Comparing the two systems, while the PC with more computational power generally had lower processing times, the difference was consistent over all variants of the datasets with varying numbers of features. One exception was the test with 50,000 polygons, where the two GL-accelerated libraries (Mapbox GL JS and MapLibre GL JS) performed better than the non-GL-accelerated ones, but only on the weaker PC. This seems strange: the PC with the dedicated GPU, besides supporting OpenGL 4.6, has much more graphics processing power, yet the advantage of the accelerated libraries only showed on the weaker PC, the opposite of what one would expect. The difference on the weaker PC was approximately 816 ms in favour of the accelerated libraries, while on the PC with the dedicated GPU, it was 430 ms in favour of the non-accelerated libraries. Other than this anomaly for polygons, there was no scenario for points and lines in which the rendering times increased or dropped significantly due to hardware differences, even with several hundred thousand features. Ultimately, whether WebGL contributed to speeding up Mapbox GL JS and MapLibre GL JS, and if so, by how much, is not conclusive and needs more refined testing.

The parallel use of the GPU allows Mapbox GL JS and MapLibre GL JS to show features gradually, in a partitioned manner, while rendering is still ongoing. This was particularly visible at and above 5000 points, 1000 lines and 500 polygons (a slower system would make this behaviour more pronounced). With many points, the points appeared in the viewport quadrant by quadrant. Because some features appear before rendering finishes completely, the user sees content on the map earlier; therefore, the remaining delay until all features are rendered is less noticeable.

3.4. Library Size Differences

Although file size relates to the general speed of webpage loading rather than to the rendering performance of a specific library, the libraries do differ in this respect. The required JS and Cascading Style Sheets (CSS) files both have to be loaded in the client’s browser; therefore, their size might matter on lower-bandwidth connections. When writing source code, developers usually use comments, spacing and variable names that make working with the file easier. However, such readable structure is not necessary for execution in end users’ web browsers. Code minification is one of the major methods used to reduce file sizes and, therefore, bandwidth usage and loading times. The work of [21] concluded that, in general, the total size of the top 500 websites could potentially be reduced by 40% through script minification alone.
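The idea behind minification can be illustrated with a deliberately simple sketch. The function below is a toy, not a real minifier and not the tooling used by any of the tested libraries: it only removes block comments, whole-line `//` comments, indentation and blank lines, whereas production minifiers parse the code and also shorten identifiers. It would also break source code that contains `/*` inside a string literal. All names and the sample source are invented for this example.

```javascript
// Toy stripper: removes block comments, whole-line // comments,
// indentation and blank lines. Line breaks between statements are
// kept so that the stripped code still runs.
function stripSource(source) {
  return source
    .replace(/\/\*[\s\S]*?\*\//g, "")
    .split("\n")
    .map((line) => line.trim())
    .filter((line) => line !== "" && !line.startsWith("//"))
    .join("\n");
}

// Size of a string in bytes when encoded as UTF-8.
function byteSize(text) {
  return new TextEncoder().encode(text).length;
}

// Invented sample source with comments and indentation.
const original = [
  "// Add two numbers and return the sum.",
  "function add(firstNumber, secondNumber) {",
  "    /* No validation here. */",
  "    return firstNumber + secondNumber;",
  "}",
  "",
].join("\n");

const stripped = stripSource(original);
const saved = 1 - byteSize(stripped) / byteSize(original);

console.log(
  `${byteSize(original)} B -> ${byteSize(stripped)} B ` +
    `(${(saved * 100).toFixed(1)}% smaller)`
);
```

Even this naive approach shrinks the sample noticeably while leaving its behaviour unchanged, which is the property real minifiers guarantee at a much larger scale.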

Since these web mapping libraries run on the client side, code minification is also a relevant factor for them. Some library files are available as non-minified sources and also in TypeScript format in their respective repositories. The sizes of both the minified and non-minified versions of the required JS and CSS files can be seen in Table 1. Among the minified versions, Leaflet is by far the most lightweight, at only 10.5% of the size of the largest library, Mapbox GL JS, and 18% of the size of MapLibre GL JS and OpenLayers. It is worth noting that Leaflet also supplies some image files with the library, which weigh a total of 6 KB. Overall, while these file sizes are not too large for the bandwidth commonly available these days, they show another difference between the libraries.

Table 1. Sizes of JavaScript and CSS files for the four tested libraries (in kilobytes).

4. Conclusions

Although using the available web mapping libraries is easy and straightforward, their rendering performance is an important aspect of creating maps with them. Web maps are consumed on devices with differing computational power and performance; therefore, choosing the best-performing library for a particular dataset requires greater emphasis.

In this work, we conducted a comparative performance analysis of four web mapping libraries, namely Leaflet, Mapbox GL JS, MapLibre GL JS and OpenLayers, to assess and compare their capabilities for rendering large amounts of GeoJSON vector data. We aimed to provide web map developers with quantitative performance information for rendering different amounts of features. The latest versions of the libraries available at the end of 2024 were tested. A total of 24 separate datasets were generated with data extracted from OpenStreetMap, including datasets containing 50 to 500,000 features for points, lines and polygons, respectively. By defining constraints across all libraries and tests, a regulated workflow in a local environment was established, in which the libraries’ performance was measurable and comparable during testing. Perceived render completion was measured for each dataset and each library, while eliminating external effects like the impact of dataset size and bandwidth, or loading other layers besides the vector data sample. All testing scenarios were conducted using Google Chrome 131 on a desktop PC, with the necessary files served by the “live-server” npm package. The JavaScript libraries were loaded using native HTML script loading.

Regardless of feature types, Leaflet and OpenLayers were evaluated as the most suitable libraries for rendering small numbers of features, up to 10,000. Mapbox GL JS was faster than MapLibre GL JS in all tested scenarios, with a significant difference of 70–200 ms for 50 to 5000 features. When examining point features only, at up to 5000 points, Leaflet and OpenLayers still excelled, whereas at 10,000 points, the four libraries’ performance somewhat converged. Beyond that, Mapbox GL JS was evaluated as the fastest, with OpenLayers not far behind, with a difference of 122, 442 and 1736 ms for 50,000, 100,000 and 500,000 points, respectively. MapLibre GL JS and Leaflet were the slowest libraries for 50,000 points or more, with rendering taking approximately twice the time of the other two. For up to 50,000 lines and 10,000 polygons specifically, Leaflet and OpenLayers were evaluated as the fastest libraries in all tested scenarios. For 100,000 lines, OpenLayers was the most efficient, taking only 1296 ms and rendering them almost twice as fast as the other libraries, while Leaflet, Mapbox GL JS and MapLibre GL JS took 2594, 2446 and 3579 ms, respectively. For rendering 50,000 polygons, Mapbox GL JS proved to be the fastest, ahead of OpenLayers and Leaflet. MapLibre GL JS was significantly slower than all other libraries for both lines and polygons across all scenarios. Based on the conducted testing, a threshold-like performance limit can be observed at 50,000 or more features across all libraries. At this amount, overall rendering completion times exceeded a second, and beyond this limit, the measured times exponentially increased, showing a significant slowdown in the performance of the libraries. Loading times exceeding a second are more apparent for users waiting for the webpage to load, and this might cause some inconvenience and worsen the overall user experience.
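The speed comparison for 100,000 lines reported above can be checked with a few lines of arithmetic. The times are the rounded values from the text; the function name is an assumption made for this example.

```javascript
// Rendering times for 100,000 lines in ms, as reported in the text.
const lines100k = {
  "OpenLayers": 1296,
  "Leaflet": 2594,
  "Mapbox GL JS": 2446,
  "MapLibre GL JS": 3579,
};

// For each library, how many times longer it took than the fastest one.
function slowdownVersusFastest(timesMs) {
  const fastest = Math.min(...Object.values(timesMs));
  return Object.fromEntries(
    Object.entries(timesMs).map(([name, ms]) => [name, ms / fastest])
  );
}

const ratios = slowdownVersusFastest(lines100k);
for (const [name, ratio] of Object.entries(ratios)) {
  console.log(`${name}: ${ratio.toFixed(2)}x`);
}
// OpenLayers: 1.00x
// Leaflet: 2.00x
// Mapbox GL JS: 1.89x
// MapLibre GL JS: 2.76x
```

Relative to OpenLayers, the other three libraries took between roughly 1.9 and 2.8 times as long in this scenario.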

The results also showed that while the performance of Leaflet and OpenLayers scaled with the number of features, for Mapbox GL JS and MapLibre GL JS, any effect of a larger number of features on their performance was offset and only became apparent for datasets with 1000 features or more. Below this amount, they rendered any number of features at a consistent speed with respect to themselves, due to the slow initialization of their map element. This makes Mapbox GL JS and MapLibre GL JS much less suitable for the rapid rendering of small numbers of features. For overall performance consistency, OpenLayers provided the most consistent results across all feature types, with an SD of 9.85 ms for points and <5 ms for lines and polygons.

Other behavioural differences and aspects, when rendering a vast number of features, were also observed between the libraries, which can affect user experience. For example, when quickly zooming multiple levels, OpenLayers, Mapbox GL JS and MapLibre GL JS provided a smooth transition between each of the zoom levels, constantly rendering the transition frame by frame, while Leaflet waited for render completion at every level, resulting in erratic motion and interaction. Panning interaction, feature occlusion and asynchronous GPU usage also varied between the libraries.

The results facilitate decision making when choosing a web mapping library to build data-heavy, web-based interactive platforms for spatial data visualization. Even though the libraries are in constant development, major versions are seldom released; therefore, the results of this comparative analysis should stay relevant for some time.

Author Contributions

Conceptualization, Dániel Balla and Mátyás Gede; methodology, Dániel Balla; investigation, Dániel Balla; writing—original draft preparation, Dániel Balla; supervision, Mátyás Gede; writing—review and editing, Mátyás Gede. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Data Availability Statement

The original data presented in the study are openly available in GitHub at https://github.com/balladaniel/webmaplibs_performance (accessed on 28 August 2025).

Conflicts of Interest

The authors declare no conflicts of interest.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Meaning |
| --- | --- |
| API | Application Programming Interface |
| CRS | Coordinate Reference System |
| CSS | Cascading Style Sheets |
| GIS | Geographic Information System |
| GPU | Graphics Processing Unit |
| HTML | Hypertext Markup Language |
| iGPU | Integrated Graphics Processing Unit |
| JS | JavaScript |
| JSON | JavaScript Object Notation |
| LCP | Largest Contentful Paint metric |
| LQI | Life Quality Index |
| OSM | OpenStreetMap |
| SVG | Scalable Vector Graphics |
| WebGL | Web Graphics Library |

References

1. Köbben, B.J.; Kraak, M.J. Mapping, Web. In International Encyclopedia of Human Geography, 2nd ed.; Kobayashi, A., Ed.; Elsevier: Amsterdam, The Netherlands, 2020; pp. 333–337.

2. Leaflet—A JavaScript Library for Interactive Maps. Available online: https://leafletjs.com/ (accessed on 1 January 2025).

3. Roth, R.E.; Donohue, R.G.; Sack, C.M.; Wallace, T.R.; Buckingham, T.M.A. A Process for Keeping Pace with Evolving Web Mapping Technologies. Cartogr. Perspect. 2014, 78, 25–52.

4. Farkas, G. Applicability of open-source web mapping libraries for building massive Web GIS clients. J. Geogr. Syst. 2017, 19, 273–295.

5. Ballatore, A.; Tahir, A.; McArdle, G.; Bertolotto, M. A comparison of open source geospatial technologies for web mapping. Int. J. Web Eng. Technol. 2011, 6, 354–374.

6. Kong, N.; Zhang, T.; Stonebraker, I. Common metrics for web-based mapping applications in academic libraries. Online Inf. Rev. 2014, 38, 918–935.

7. Nivala, A.M.; Brewster, S.A.; Sarjakoski, L.T. Usability Evaluation of Web Mapping Sites. Cartogr. J. 2008, 45, 129–138.

8. Wang, C. Usability Evaluation of Public Web Mapping Sites. Int. Arch. Photogramm. Remote Sens. Spatial Inf. Sci. 2014, XL-4, 285–289.

9. Fleet, C. An open-source web-mapping toolkit for libraries. e-Perimetron 2019, 14, 59–76.

10. Balla, D.; Gede, M. Beautiful thematic maps in Leaflet with automatic data classification. Int. Arch. Photogramm. Remote Sens. Spatial Inf. Sci. 2024, 48, 3–10.

11. Gede, M. Automatic Labels in Leaflet. Adv. Cartogr. GIScience Int. Cartogr. Assoc. 2023, 4, 8.

12. Gede, M. Hatch Fill on Webmaps—to Do or Not to Do, and How to Do. Abstr. Int. Cartogr. Assoc. 2022, 5, 48.

13. Netek, R.; Masopust, J.; Pavlicek, F.; Pechanec, V. Performance Testing on Vector vs. Raster Map Tiles—Comparative Study on Load Metrics. ISPRS Int. J. Geo-Inf. 2020, 9, 101.

14. Netek, R.; Brus, J.; Tomecka, O. Performance Testing on Marker Clustering and Heatmap Visualization Techniques: A Comparative Study on JavaScript Mapping Libraries. ISPRS Int. J. Geo-Inf. 2019, 8, 348.

15. Ježek, J.; Jedlička, K.; Mildorf, T.; Kellar, J.; Beran, D. Design and Evaluation of WebGL-Based Heat Map Visualization for Big Point Data. In The Rise of Big Spatial Data, Proceedings of the Symposium GIS Ostrava 2016, Ostrava, Czech Republic, 16–18 March 2016; Ivan, I., Singleton, A., Horák, J., Inspektor, T., Eds.; Springer: Cham, Switzerland, 2016; pp. 13–26.

16. Zunino, A.; Velázquez, G.; Celemín, J.P.; Mateos, C.; Hirsch, M.; Rodriguez, J.M. Evaluating the Performance of Three Popular Web Mapping Libraries: A Case Study Using Argentina’s Life Quality Index. ISPRS Int. J. Geo-Inf. 2020, 9, 563.

17. Mapbox GL New License and 6 Free Alternatives. Available online: https://www.geoapify.com/mapbox-gl-new-license-and-6-free-alternatives (accessed on 11 October 2024).

18. Largest Contentful Paint (LCP). Available online: https://web.dev/articles/lcp (accessed on 27 November 2024).

19. OpenStreetMap® Is Open Data, Licensed Under the Open Data Commons Open Database License (ODbL) by the OpenStreetMap Foundation (OSMF). Available online: https://www.openstreetmap.org/copyright (accessed on 19 November 2024).

20. Working with Large GeoJSON Sources in Mapbox GL JS. Available online: https://docs.mapbox.com/help/troubleshooting/working-with-large-geojson-data/ (accessed on 11 November 2024).

21. Sakamoto, Y.; Matsumoto, S.; Tokunaga, S.; Saiki, S.; Nakamura, M. Empirical study on effects of script minification and HTTP compression for traffic reduction. In Proceedings of the 2015 Third International Conference on Digital Information, Networking, and Wireless Communications (DINWC), Moscow, Russia, 3–5 February 2015; IEEE: Moscow, Russia, 2015; pp. 127–132.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Published by MDPI on behalf of the International Society for Photogrammetry and Remote Sensing. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).