1. Introduction

Speech Emotion Recognition (SER) plays a crucial role in various applications, including human–computer interaction, virtual assistants, education, healthcare, and emotion-aware interfaces, because it enables systems to understand a speaker’s internal emotional state and support more natural interactions [1,2,3]. Recently, multimodal emotion recognition (MER), which jointly utilizes both speech and text information, has gained significant attention due to its ability to more accurately capture the complex characteristics of emotional expression [4]. Acoustic elements such as intonation, stress, and speaking rate, together with the semantic content of text, provide complementary information, and numerous models have been proposed to integrate these heterogeneous sources to infer emotional states. In particular, with the introduction of Transformer-based architectures and self-supervised learning (SSL) models such as Wav2Vec 2.0 [5], HuBERT [6], and WavLM [7], MER performance has steadily improved [8]. However, due to the complexity of emotional signals and variations between utterances, several challenges remain.

Recent multimodal emotion recognition models such as MuLT [9], MISA [10], and MFDR [11] have achieved performance gains by adopting cross-modal attention or modality-specific representation learning to effectively capture interactions between modalities [12,13]. Nevertheless, these state-of-the-art models primarily rely on single-scale representations at the utterance level, which limits their capacity to reflect the diverse spatiotemporal characteristics of emotional cues. For example, in text, certain words may convey strong emotional signals within a short span, whereas in speech, the overall prosodic pattern across the entire utterance may determine the emotional flow. Despite this, prior studies have struggled to simultaneously leverage fine-grained temporal cues and utterance-level prosody, and simple utterance-level fusion approaches remain insufficient for capturing such subtle signals [14]. Consequently, existing models face inherent limitations in fully representing the multi-scale emotional structure of expressive speech.

Emotional signals possess a layered structure that cannot be fully captured by a single-level representation. In text, local semantic cues (such as emotionally charged words, emphasized expressions, or subtle shifts in polarity) play a central role, whereas in speech, global prosody, such as overall intonation flow or speaking style, substantially contributes to emotional interpretation. Meanwhile, speech-based studies have reported that specific short segments within an utterance exert a stronger influence on emotion classification, highlighting the importance of local acoustic cues [15]. Multi-scale acoustic modeling, which integrates information across segments of varying lengths, has been shown to improve SER performance [16,17]. In addition, segment-level pooling and SSL-based local representations have proven effective for capturing the fine-grained structure of emotional signals [18]. More recently, multi-scale acoustic representations have been experimentally demonstrated to enhance the stability and generalization of emotion recognition models [19]. Therefore, relying on a single temporal scale risks missing important emotional cues depending on the utterance type or speaker characteristics, and incorporating multi-scale feature extraction that combines local–global granularity is essential for developing more robust and generalizable MER systems.

However, simply merging multi-scale representations or integrating them through cross-attention is insufficient for actively selecting between local and global information, whose relative importance varies depending on modality and speaking context. Emotional signals change dynamically with factors such as utterance type, speaker style, and emotional intensity, making it necessary for the model to automatically adjust its focus according to input characteristics. Mixture-of-Experts (MoE)–based routing methods are well aligned with these requirements because they provide an input-dependent expert selection mechanism. The effectiveness of such routing has been demonstrated in models like Switch Transformer and GShard [20,21], and recent efforts such as ST-MoE have further strengthened routing stability [22], drawing significant attention to MoE-based selection mechanisms across different domains. Furthermore, MoEfication research has shown that MoE structures promote representation specialization depending on input characteristics, enhancing adaptability [23]. In speech-based emotion recognition, MoE structures have also been reported to effectively integrate SSL features with spectral cues [24]. Although FuseMoE adopted an MoE structure to achieve fleximodal fusion in multimodal settings, it did not consider scale-aware expert selection between local and global experts [25]. Similarly, the recent MER model CAG-MoE combines cross-attention with MoE but still does not provide scale-aware expert selection [26]. To address these limitations, this study introduces a Dual Routing MoE framework that adaptively selects local or global representations according to contextual conditions, enabling more fine-grained utilization of multi-scale information in MER.

The main contributions of this study are as follows.

We construct a multi-scale representation framework that simultaneously extracts local cues and global prosody from speech and text, effectively reflecting the spatiotemporal diversity of emotional signals.

To address the varying importance of local/global representations depending on utterance context and modality characteristics, we propose a Dual Routing MoE structure that selects experts at each scale based on input properties. This enables the model to dynamically adjust its focus across scales and perform more precise information selection.

To integrate the multi-scale information selected by the proposed multi-scale extraction and Dual Routing MoE in a complementary manner across modalities, we apply Bidirectional Cross-Attention, allowing the local/global cues of each modality to naturally interact with the representation of the other. This ensures that fine-grained cues and global patterns are effectively conveyed during multimodal interaction, enabling a balanced representation of both detailed and holistic emotional structures.

2. Related Work

2.1. Speech & Multimodal Emotion Recognition

Early research in SER primarily relied on traditional acoustic features such as MFCCs combined with classical classifiers including SVM, LDA, and hierarchical decision trees [27,28,29,30]. With the emergence of deep learning, SER performance improved substantially through CNN-, RNN-, and attention-based architectures, which enhanced the quality of feature representations. More recently, SSL models such as Wav2Vec 2.0, HuBERT, and WavLM have further advanced SER by providing robust contextualized representations [31].

However, speech alone is often insufficient for fully reflecting the semantic aspects of emotion. As a result, MER, which jointly leverages the semantic cues of text and the prosodic cues of speech, has become an important research direction. By combining the complementary properties of the two modalities, MER offers higher stability and generalization than unimodal approaches, and has been widely studied using large-scale conversational datasets such as IEMOCAP [32] and MELD [33].

2.2. Multimodal Fusion Approaches

The core challenge in multimodal emotion recognition is the effective integration of information across different modalities. Representation-level fusion has improved MER performance by separating modality-specific representations or learning modality alignment and cross-modal dependencies. For example, MuLT learns inter-modal relationships without explicit temporal alignment using a cross-modal Transformer [9], while MISA separates modality-invariant and modality-specific representations to obtain refined emotional features [10].

However, these models primarily rely on single-scale representations and therefore struggle to simultaneously capture the momentary emotional signals (local cues) in text and the overall prosodic patterns (global cues) in speech. Attention-based approaches, such as cross-attention and bidirectional attention, can model modal interactions more precisely [34], but they do not selectively emphasize local cues or explicitly differentiate between temporal scales. In other words, existing fusion models effectively capture inter-modal dependencies but remain structurally limited in leveraging both fine-grained and global cues through a multi-scale framework.

2.3. Mixture-of-Experts for Representation Selection

MoE provides a selective routing mechanism that dynamically chooses experts based on input characteristics, making it effective for enhancing representation specialization and scalability. Sparsely-Gated MoE [35], Switch Transformer [20], Expert Choice Routing [36], and ST-MoE [22] have demonstrated the efficiency of such routing mechanisms across diverse input spaces. MoEfication [23] further emphasized the advantages of selective learning by analyzing how MoE structures autonomously specialize experts according to input distributions.

In the emotion recognition domain, studies have applied MoE architectures to integrate SSL-based speech features with spectral cues [24]. In MER, models such as CAG-MoE, which combines cross-attention with MoE [26], FuseMoE for fleximodal fusion [25], and the multimodal contrastive MoE structure LIMoE [37], have been proposed. However, these approaches largely remain at the level of modality-aware or input-dependent expert selection and do not provide scale-aware routing that distinguishes between local and global temporal cues in emotional signals. Thus, while existing MoE structures are effective for modality mixture, they are not sufficiently extended to address the MER-specific challenge of multi-scale cue selection.

3. Method

In this study, we propose a multimodal emotion recognition model that extracts local–global representations from speech (WavLM) and text (RoBERTa [38]), selects the most suitable scale-specific representation for each modality through a Dual Routing MoE, and subsequently integrates the complementary information of the two modalities using bidirectional Cross-Attention.

Figure 1 provides an overview of the overall architecture.

The global representation extracted from each modality reflects the utterance-level semantic and prosodic structure, while the local representation captures fine-grained emotional cues at specific temporal segments or token-level units. These two representations are then fed into the Dual Routing MoE, where either the local or global expert is selectively activated depending on modality and utterance characteristics. The final modality-specific representations generated through routing are transformed into mutually conditioned representations via bidirectional Cross-Attention, and their combined output is fed into the classifier.

3.1. Audio Encoder

The speech signal is resampled to 16 kHz and then fed into WavLM-Base to obtain frame-level hidden representations.

The global embedding, which reflects the overall information of the entire utterance, is computed by applying mean pooling over all frames:
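Writing the WavLM frame-level hidden states as $h^{a}_{1}, \dots, h^{a}_{T}$ and the global audio embedding as $g^{a}$ (notation assumed here for illustration, not taken from the original), mean pooling over all frames can be expressed as:

```latex
g^{a} = \frac{1}{T} \sum_{i=1}^{T} h^{a}_{i}, \qquad h^{a}_{i} \in \mathbb{R}^{d}
```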

Meanwhile, the local embedding is computed using a VAD-based Multi-Head Self-Attention (MHSA) pooling structure. WebRTC VAD is first applied to remove non-speech intervals that are emotionally irrelevant, and a valid speech frame set is selected.

A single MHSA layer is then applied to the selected frames to obtain contextualized representations that reflect interactions across segments:

The MHSA output contains segment-level emotional cues, and the local embedding is computed by applying mean pooling:
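The three steps just described (VAD-based frame selection, a single MHSA layer, and mean pooling) can be sketched as follows. The symbols are assumptions introduced for illustration: $\mathcal{S}$ is the index set of frames kept by WebRTC VAD, $H^{a}_{\mathcal{S}}$ stacks the corresponding WavLM hidden states, $z_i$ are the MHSA outputs, and $l^{a}$ is the local audio embedding.

```latex
\begin{aligned}
\mathcal{S} &= \{\, i \in \{1,\dots,T\} : \text{frame } i \text{ is marked as speech by VAD} \,\} \\
H^{a}_{\mathcal{S}} &= \big[\, h^{a}_{i} \,\big]_{i \in \mathcal{S}} \\
Z &= \mathrm{MHSA}\big(H^{a}_{\mathcal{S}}\big) = \big[\, z_{i} \,\big]_{i \in \mathcal{S}} \\
l^{a} &= \frac{1}{|\mathcal{S}|} \sum_{i \in \mathcal{S}} z_{i}
\end{aligned}
```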

This SAP-like structure is designed with reference to multi-scale contextual representation learning methods proposed in recent multimodal emotion recognition studies [18]. The process of selecting speech segments and applying MHSA to capture inter-segment interactions inherits the structural advantages of existing work. By stably preserving both the prosodic cues and segment-level fine-grained information captured by WavLM, this design naturally normalizes the scale difference between local and global representations at the encoder level.

3.2. Text Encoder

Text input is processed through RoBERTa-Base to obtain token embeddings. The global embedding is constructed by concatenating the CLS embeddings extracted from the last four encoder layers:

The local embedding is computed using an SAP-like approach that mirrors the structure used for audio while being adapted to the characteristics of text. PAD, CLS, and SEP tokens are removed, and a set of content tokens is selected.

A single Multi-Head Self-Attention (MHSA) layer is then applied to these content tokens:

The MHSA output is a contextualized token representation that captures interactions among content words, and the text local embedding is computed through mean pooling:
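The text-side quantities in this subsection can be sketched in the same way. The notation is assumed for illustration: $c^{(\ell)}$ is the CLS embedding at encoder layer $\ell$ of an $L$-layer RoBERTa, $\mathcal{C}$ is the index set of content tokens (after removing PAD, CLS, and SEP), $e_j$ are their token embeddings, and $g^{t}$, $l^{t}$ are the global and local text embeddings.

```latex
\begin{aligned}
g^{t} &= \big[\, c^{(L-3)} ;\; c^{(L-2)} ;\; c^{(L-1)} ;\; c^{(L)} \,\big] \\
U &= \mathrm{MHSA}\big(\big[\, e_{j} \,\big]_{j \in \mathcal{C}}\big) = \big[\, u_{j} \,\big]_{j \in \mathcal{C}} \\
l^{t} &= \frac{1}{|\mathcal{C}|} \sum_{j \in \mathcal{C}} u_{j}
\end{aligned}
```

Concatenating four 768-dimensional CLS vectors yields a 3072-dimensional vector, whereas Section 4.2 reports 768-dimensional inputs to the routing module, so a projection step is presumably applied; that step is an inference and is not described in the text.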

The local embedding of text reflects the same multi-scale design philosophy, applying a SAP-like contextual pooling structure tailored to textual representations. This naturally aligns the local–global scales of audio and text at the encoder stage. By maintaining a local–global structure similar to audio SAP, this design more effectively captures token-level semantic information and contextual interactions within the text modality.

3.3. Dual Routing MoE

As shown in Figure 2, the local and global embeddings of each modality are concatenated and used as the input to the Gate MLP, which determines the selection ratio between the local expert and the global expert through routing scores.

The output of the Gate MLP is defined as follows:

Here, the activation function used in the Gate MLP is GELU.

The routing probabilities are computed through a softmax operation:

The transformations of the local and global experts are defined as independent linear mappings:

The final routed representation is the weighted sum of the two experts:
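One way to write the four steps above for a modality $m \in \{a, t\}$ is given below. All symbols are assumptions introduced for illustration: $l^{m}$ and $g^{m}$ are the local and global embeddings, $W_1, W_2, b_1, b_2$ are the parameters of the two-layer Gate MLP, $\alpha^{m}$ are the routing probabilities, and $r^{m}$ is the routed representation.

```latex
\begin{aligned}
s^{m} &= W_{2}\,\mathrm{GELU}\!\big(W_{1}\,[\,l^{m} ; g^{m}\,] + b_{1}\big) + b_{2}, \qquad s^{m} \in \mathbb{R}^{2} \\
\big(\alpha^{m}_{\mathrm{loc}},\, \alpha^{m}_{\mathrm{glob}}\big) &= \mathrm{softmax}\big(s^{m}\big) \\
e^{m}_{\mathrm{loc}} &= W^{m}_{\mathrm{loc}}\, l^{m} + b^{m}_{\mathrm{loc}}, \qquad
e^{m}_{\mathrm{glob}} = W^{m}_{\mathrm{glob}}\, g^{m} + b^{m}_{\mathrm{glob}} \\
r^{m} &= \alpha^{m}_{\mathrm{loc}}\, e^{m}_{\mathrm{loc}} + \alpha^{m}_{\mathrm{glob}}\, e^{m}_{\mathrm{glob}}
\end{aligned}
```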

This structure enables the model to automatically capture the scale-dependent characteristics of emotional signals and adjust the relative importance of local and global cues in a learnable manner.
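The routing computation can be illustrated with a small dependency-free sketch. This is not the authors' implementation: the helper names (`dual_route`, `init_linear`), the toy dimensions, and the random parameters are all hypothetical, and the experts follow the linear form described in this section (Section 4.2 mentions MLP experts with GELU and dropout, which would replace the two `linear` expert calls).

```python
import math
import random


def gelu(x):
    """Exact GELU using the Gaussian error function."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def linear(weights, bias, x):
    """Affine map y = W x + b for a weight matrix given as a list of rows."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, bias)]


def softmax(scores):
    """Numerically stable softmax over a short list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def init_linear(rng, out_dim, in_dim):
    """Small random weights and zero bias (placeholder for trained parameters)."""
    weights = [[rng.uniform(-0.1, 0.1) for _ in range(in_dim)] for _ in range(out_dim)]
    return weights, [0.0] * out_dim


def dual_route(local_emb, global_emb, params):
    """Return (routed representation, [p_local, p_global]) for one modality."""
    gate_in = local_emb + global_emb                        # concatenation [l; g]
    hidden = [gelu(h) for h in linear(*params["gate1"], gate_in)]
    probs = softmax(linear(*params["gate2"], hidden))       # two routing scores
    expert_local = linear(*params["local"], local_emb)      # local expert
    expert_global = linear(*params["global"], global_emb)   # global expert
    routed = [probs[0] * a + probs[1] * b
              for a, b in zip(expert_local, expert_global)]
    return routed, probs


rng = random.Random(0)
dim, hidden_dim = 8, 4  # toy sizes; the paper reports 768-d inputs and a 128-d gate
params = {
    "gate1": init_linear(rng, hidden_dim, 2 * dim),
    "gate2": init_linear(rng, 2, hidden_dim),
    "local": init_linear(rng, dim, dim),
    "global": init_linear(rng, dim, dim),
}
local_emb = [rng.gauss(0.0, 1.0) for _ in range(dim)]
global_emb = [rng.gauss(0.0, 1.0) for _ in range(dim)]
routed, probs = dual_route(local_emb, global_emb, params)
```

Because the two experts are mixed with softmax weights rather than hard-selected, the gate stays differentiable and both experts receive gradient on every sample.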

3.4. Bidirectional Cross-Attention Fusion

After obtaining the routed representations of audio and text through Dual Routing, bidirectional Cross-Attention is performed to exchange complementary information between the two modalities, as illustrated in Figure 3.

The Query, Key, and Value for Audio-to-Text attention are defined as:

The attention output is computed as:

Text-to-Audio attention is computed in the same manner but in the opposite direction, and the two representations are concatenated to form the final fused representation:
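A standard scaled dot-product formulation consistent with this description is sketched below. The notation is assumed: $r^{a}$ and $r^{t}$ are the routed audio and text representations, $W_Q, W_K, W_V$ are learned projections, and $d_k$ is the key dimension. It is also an assumption that, in Audio-to-Text attention, audio supplies the query while text supplies the keys and values.

```latex
\begin{aligned}
Q_{a} &= r^{a} W_{Q}, \qquad K_{t} = r^{t} W_{K}, \qquad V_{t} = r^{t} W_{V} \\
A_{a \rightarrow t} &= \mathrm{softmax}\!\left( \frac{Q_{a} K_{t}^{\top}}{\sqrt{d_{k}}} \right) V_{t} \\
f &= \big[\, A_{a \rightarrow t} \,;\, A_{t \rightarrow a} \,\big]
\end{aligned}
```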

This bidirectional architecture provides higher expressive power than single-direction attention or simple concatenation, as it balances complementary information from both modalities.

3.5. Loss Function

To ensure stable training of the Dual Routing MoE with multi-scale representations, this study adopts a total loss composed of three components.

The overall learning objective is defined as:
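Based on the three components named in this section and the weighting described in Section 4.2, the objective plausibly takes the following form, where $\lambda_{\mathrm{br}}$ and $\lambda_{\mathrm{bal}}$ are assumed symbols for the branch-loss and balance-loss weights:

```latex
\mathcal{L}_{\mathrm{total}} = \mathcal{L}_{\mathrm{focal}} + \lambda_{\mathrm{br}}\, \mathcal{L}_{\mathrm{branch}} + \lambda_{\mathrm{bal}}\, \mathcal{L}_{\mathrm{bal}}
```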

For the main classification loss, a weighted focal loss is employed to mitigate class imbalance and increase sensitivity to hard samples. It is defined as:

This loss assigns higher weights to hard samples than to easy ones, allowing the model to learn emotional class boundaries more effectively.
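The effect on easy versus hard samples can be seen in a minimal sketch of the standard class-weighted focal loss, $-w_c (1 - p_c)^{\gamma} \log p_c$. The function name, the uniform class weights, and the focusing parameter $\gamma = 2$ are illustrative assumptions, not values taken from the paper.

```python
import math


def weighted_focal_loss(probs, target, class_weights, gamma=2.0):
    """Focal loss for one sample: -w_c * (1 - p_c)^gamma * log(p_c)."""
    p_true = probs[target]
    return -class_weights[target] * (1.0 - p_true) ** gamma * math.log(p_true)


weights = [1.0, 1.0, 1.0, 1.0]  # hypothetical per-class weights
# A confidently correct ("easy") prediction versus an uncertain ("hard") one.
easy = weighted_focal_loss([0.90, 0.05, 0.03, 0.02], 0, weights)
hard = weighted_focal_loss([0.30, 0.40, 0.20, 0.10], 0, weights)
# With gamma = 0 the expression reduces to weighted cross-entropy.
plain_ce = weighted_focal_loss([0.30, 0.40, 0.20, 0.10], 0, weights, gamma=0.0)
```

The factor $(1 - p_c)^{\gamma}$ shrinks the contribution of well-classified samples, so the gradient is dominated by samples the model still gets wrong or is unsure about.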

Next, to prevent a collapse in which routing becomes concentrated on either the local or global expert, a balance loss is introduced. The balance loss encourages higher entropy in routing probabilities, thereby alleviating expert-selection imbalance:

This loss ensures that both experts remain involved during training, thereby maintaining diversity and stability in scale selection (local/global routing).
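One common way to realize an entropy-encouraging balance term is to minimize the negative entropy of the batch-averaged routing distribution. The sketch below follows that choice as an assumption; the paper's exact formulation (for example, per-sample versus batch-level entropy) is not recoverable from the text, and the function name is hypothetical.

```python
import math


def balance_loss(routing_probs, eps=1e-12):
    """Negative entropy of the batch-averaged routing distribution.

    routing_probs: list of [p_local, p_global] pairs, one per utterance.
    Minimizing this value pushes average expert usage toward uniform.
    """
    n = len(routing_probs)
    mean_probs = [sum(p[k] for p in routing_probs) / n for k in range(2)]
    entropy = -sum(q * math.log(q + eps) for q in mean_probs)
    return -entropy


# Routing spread across both experts versus routing collapsed onto one expert.
balanced = balance_loss([[0.5, 0.5], [0.6, 0.4], [0.4, 0.6]])
collapsed = balance_loss([[0.99, 0.01], [0.98, 0.02], [0.99, 0.01]])
```

Under this definition the loss reaches its minimum of $-\log 2$ when the two experts are used equally on average, and approaches 0 as routing collapses onto a single expert.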

Because the Dual Routing MoE processes different levels of emotional cues through the local and global experts, auxiliary supervision is applied to ensure that each expert independently acquires emotion classification capability. Each expert produces emotion probabilities through an independent classification head, and the branch loss is defined as the sum of two cross-entropy losses:
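With $y$ the ground-truth label and $\hat{y}_{\mathrm{loc}}$, $\hat{y}_{\mathrm{glob}}$ the class probabilities from the local and global expert heads (symbols assumed for illustration), the sum of two cross-entropy terms can be written as:

```latex
\mathcal{L}_{\mathrm{branch}} = \mathrm{CE}\big(y, \hat{y}_{\mathrm{loc}}\big) + \mathrm{CE}\big(y, \hat{y}_{\mathrm{glob}}\big)
```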

This auxiliary loss encourages each expert to learn representations that are independently capable of emotion classification, providing an additional safeguard against routing collapse.

Furthermore, by ensuring that both the local and global experts learn meaningful emotional information within their respective representation spaces, this design enhances the stability and interpretability of the final routing decisions.

4. Experiment

4.1. Dataset

This study utilized the IEMOCAP (Interactive Emotional Dyadic Motion Capture) dataset [32] for experiments on voice- and text-based multimodal emotion recognition. IEMOCAP is a publicly available multimodal dataset that provides English dialogue data containing emotions across speech, text, and video modalities, and it is widely used in various SER studies. The dataset consists of 5 sessions involving five pairs (10 individuals) of adult male and female speakers. Each session includes both scripted and spontaneous speech. All utterances are annotated with emotion labels, and motion-capture and video information are also provided; however, this study utilized only the speech and text modalities. Furthermore, because each session features a different pair of speakers, model evaluation was conducted using leave-one-session-out (LOSO) cross-validation. This approach is a widely adopted evaluation strategy in SER research, as it enables verification of speaker-independent generalization performance. To maintain consistency with prior SER studies, this experiment focused on four primary emotion classes. Specifically, it included 1103 angry, 1084 sad, 1636 happy (combining “happy” and “excited”), and 1708 neutral samples, totaling 5531 utterances across these classes. This class selection targeted categories from the original IEMOCAP dataset (which contains more than ten emotion labels) that had relatively sufficient data volume and ensured high comparability across studies.
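The LOSO protocol can be sketched as a simple fold generator. The function name and the toy session labels are hypothetical; only the five-session structure and the class counts come from the text.

```python
def leave_one_session_out(session_ids):
    """Yield (held_out_session, train_indices, test_indices) for each session."""
    for held_out in sorted(set(session_ids)):
        train_idx = [i for i, s in enumerate(session_ids) if s != held_out]
        test_idx = [i for i, s in enumerate(session_ids) if s == held_out]
        yield held_out, train_idx, test_idx


# Toy session labels standing in for the real per-utterance session IDs.
session_ids = [1, 1, 2, 3, 3, 4, 5, 5, 5, 2]
folds = list(leave_one_session_out(session_ids))

# Class counts reported for the four-class setup.
class_counts = {"angry": 1103, "sad": 1084, "happy": 1636, "neutral": 1708}
total_utterances = sum(class_counts.values())
```

Because each session has its own speaker pair, holding out one session at a time means the test speakers are never seen during training in that fold.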

4.2. Implementation Details

Preprocessing and Encoder Configuration: Audio waveforms were sampled at 16 kHz and processed using the WavLM-Base encoder. WebRTC-based VAD was applied to remove non-speech regions, and utterances exceeding 3 s were segmented into 2–3 s chunks. Global audio embeddings were obtained via mean pooling, while local embeddings were computed by applying a single MHSA layer to VAD-selected speech frames. For the text branch, RoBERTa-Base was employed with a maximum sequence length of 128. Both WavLM and RoBERTa were partially fine-tuned by unfreezing only the last four layers.

Model Structure: The Dual Routing MoE architecture received 768-dimensional local and global embeddings from each modality. Routing weights were produced through a two-layer gating MLP with a hidden size of 128. Experts were implemented as MLPs with GELU activation and a dropout rate of 0.1. Fusion between audio and text was performed using two bidirectional cross-attention layers, each with four attention heads. The overall loss combined the focal loss, a weighted auxiliary branch loss, and a weighted routing-balance loss.

Training Settings: Models were optimized using AdamW with separate learning rates for the SSL encoder parameters and the MoE/fusion layers. A cosine-annealing scheduler with two warm-up epochs was applied. Training was conducted for up to 40 epochs with a batch size of 8, weight decay of 0.01, gradient clipping, and early stopping with a patience of 3. Class weights were additionally applied to address label imbalance.
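A schedule of this kind can be sketched as below. The linear warm-up shape, the per-epoch (rather than per-step) update, the decay to zero, and the base learning rate are all assumptions made for illustration; only the two warm-up epochs and the 40-epoch budget come from the text.

```python
import math


def lr_at_epoch(epoch, base_lr, warmup_epochs=2, total_epochs=40):
    """Learning rate for a 0-indexed epoch: linear warm-up, then cosine decay."""
    if epoch < warmup_epochs:
        return base_lr * (epoch + 1) / warmup_epochs
    progress = (epoch - warmup_epochs) / max(1, total_epochs - warmup_epochs)
    return 0.5 * base_lr * (1.0 + math.cos(math.pi * progress))


base_lr = 1e-4  # placeholder value, not a setting reported in the paper
schedule = [lr_at_epoch(e, base_lr) for e in range(40)]
```

In practice the same function would be evaluated once per parameter group, since the encoder and the MoE/fusion layers use different base learning rates.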

Hardware Environment: All experiments were performed in a Python 3.10 environment using PyTorch 2.5.1. Training was executed on an NVIDIA GeForce RTX 4070 Ti GPU.

5. Result

The experimental results across all five IEMOCAP sessions are summarized in Table 1. The proposed Dual Routing-based multimodal model achieved an average UA of 75.27% and WA of 74.09%, demonstrating overall stable performance. Here, UA (unweighted accuracy) represents the mean recall across classes, while WA (weighted accuracy) denotes the accuracy weighted by class distribution, that is, the overall accuracy across all test utterances. Despite differences in speaker composition or speaking style across sessions, the model maintained a UA of at least 72% in all folds, and achieved comparatively high performance in fold 2 (UA 79.6%) and fold 4 (WA 75.3%). These results suggest that the multi-scale representation and Dual Routing architecture effectively adapt to speaker- and session-dependent variations in utterance characteristics. Furthermore, the small performance deviations across sessions indicate that the model does not overly rely on any specific session or speaker, exhibiting consistent performance across diverse speaking environments.
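The two metrics can be computed from a confusion matrix as in the following sketch. The function name and the toy matrix are illustrative and do not reproduce any result from the paper.

```python
def ua_wa(confusion):
    """Compute (UA, WA) from a confusion matrix with rows = true classes."""
    recalls = [row[i] / sum(row) for i, row in enumerate(confusion)]
    ua = sum(recalls) / len(recalls)                     # mean per-class recall
    correct = sum(row[i] for i, row in enumerate(confusion))
    wa = correct / sum(sum(row) for row in confusion)    # overall accuracy
    return ua, wa


# Toy two-class example with imbalanced classes (not data from the paper).
toy = [[90, 10],
       [5, 5]]
ua, wa = ua_wa(toy)
```

In this toy case the majority class inflates WA relative to UA, which is why both metrics are usually reported together on imbalanced emotion datasets.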

The performance comparison with existing multimodal emotion recognition models reported on IEMOCAP is summarized in Table 2. SERVER exhibited a basic performance level of approximately 63% UA, while IA-MMTF and BERT–RoBERTa BiLSTM reported stable results in the range of 72–74%. Meanwhile, the recent state-of-the-art model MFDR achieved UA 77% and WA 75.7%, representing one of the highest accuracies reported to date. The proposed Dual Routing model recorded UA 75.27% and WA 74.09%, which is lower than MFDR but comparable to or slightly better than models such as BERT–RoBERTa BiLSTM. Notably, whereas most existing models rely on single-scale representations, the proposed model introduces a Dual Routing mechanism that adaptively adjusts the importance of local–global representations based on input characteristics, offering a distinctive perspective for selectively leveraging multi-scale emotional cues. As demonstrated in the ablation results and routing analysis, this structural property plays a crucial role in adapting to emotion- and session-specific variations in speaking patterns. Therefore, although the performance gap with the latest SOTA model is acknowledged, this study provides academic value by presenting a novel structural direction, scale-aware expert selection, that has not been sufficiently addressed in previous MER research.

5.1. Ablation Study

To examine the contribution of the key components of the proposed model—namely the multi-scale representation and the Dual Routing mechanism—an ablation study was conducted. Four model variants were compared: a model using only local representations, a model using only global representations, a concat model that simply merges local and global features, and the full Dual Routing-based model. UA and WA were measured for each model.

As shown in Table 3, the Default model achieved the highest performance in both UA and WA. Single-scale models that use only local or only global representations performed worse than the Default model in both cases, demonstrating that emotional information cannot be sufficiently captured using a single-level representation alone. Moreover, the concat model, which simply combines local and global features, also showed lower performance than the Default model across all metrics, indicating that simple feature aggregation cannot properly reflect the varying importance of emotional cues depending on utterance context. These results suggest that the Dual Routing mechanism, which selects between local and global experts based on input characteristics, is a key factor in effectively utilizing multi-scale information and directly contributes to the performance improvement of the model.

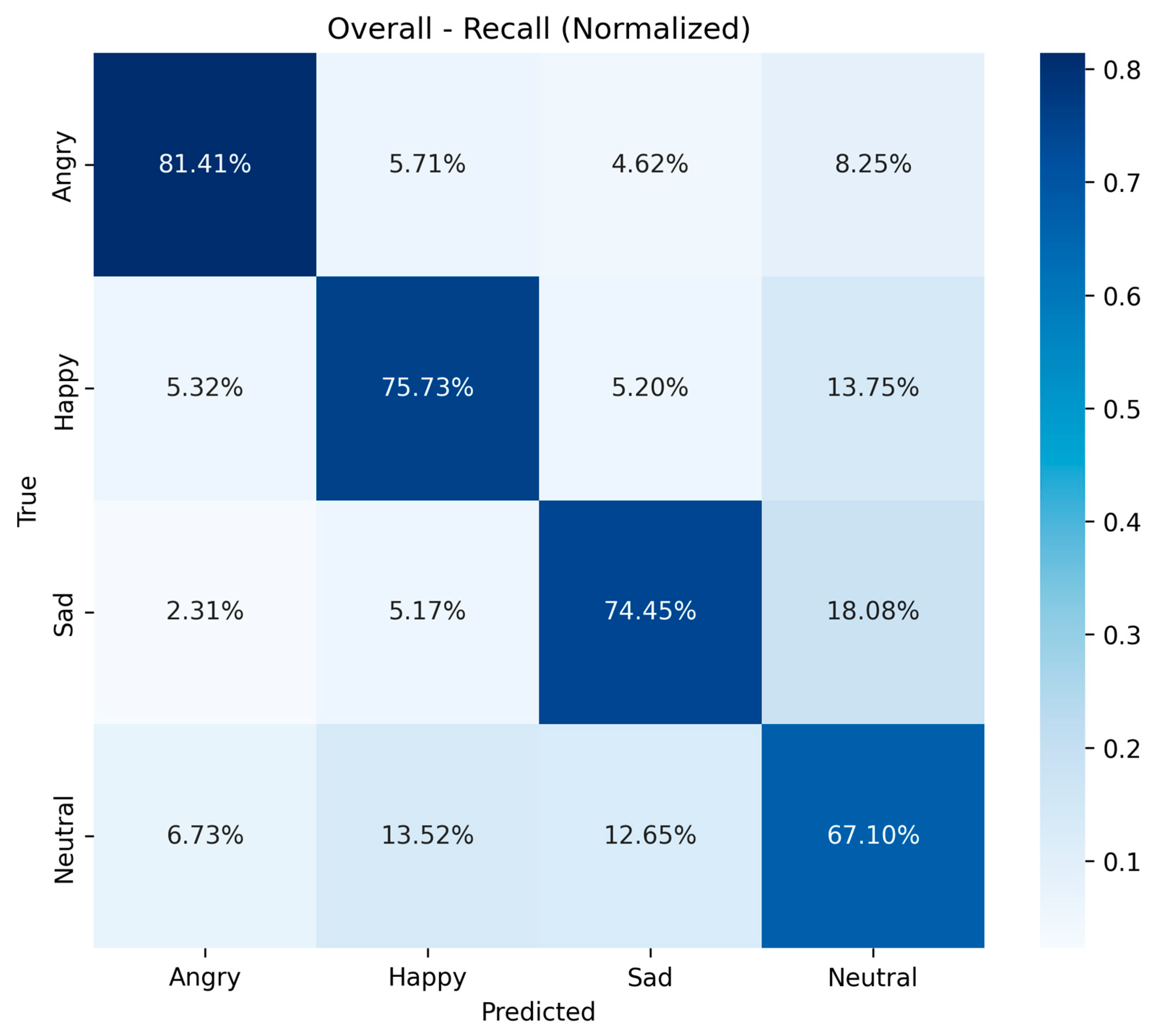

5.2. Confusion Matrix Analysis

To more precisely analyze the classification performance for each emotion class across the entire test set, we examined the recall-based confusion matrix, as shown in Figure 4. The Angry class achieved the highest recall at 81.41%, indicating that it was classified most reliably. This can be attributed to the strong prosodic intensity and distinctive local acoustic cues that typically appear in angry utterances, making them relatively easier to distinguish from other emotions. In contrast, Happy showed a misclassification rate of 13.75% toward Neutral. This tendency can arise when happy utterances exhibit low arousal or lack strong prosodic expression, resulting in neutral-like characteristics under certain speaking conditions.

The Sad class recorded a recall of 74.45%, but it also showed the highest confusion toward Neutral, with an 18.08% misclassification rate—the largest among the four emotions. This is because sad utterances generally exhibit low energy and slower tempo, which creates prosodic similarities with neutral speech. The Neutral class had the lowest recall at 67.10% and exhibited misclassifications toward both Happy and Sad. Neutral utterances tend to contain weaker emotional cues and lack distinctive features, resulting in ambiguous decision boundaries. Consequently, they can be easily confused with emotions in different directions.

5.3. Routing Behavior Analysis

The local/global expert selection ratio analyzed by session and emotion serves as an important indicator of how the model utilizes multi-scale information depending on input characteristics, as shown in Figure 5. In the audio modality, clear variations were observed across sessions. For example, in Session 2, the selection ratio of local cues was high, whereas in Session 4, the selection of global cues increased overwhelmingly. This reflects substantial differences among sessions in terms of speaking habits, emotional expression patterns, and conversational context, demonstrating that the Dual Routing mechanism responds sensitively to such variations and selects the appropriate scale of features accordingly. In Session 3, local and global cues were selected at nearly equal proportions, which can be interpreted as indicating that the utterances in this session tend to require both local cues and global prosody.

In contrast, the text modality exhibited consistently high utilization of global cues across all sessions, with particularly high global selection ratios observed in Sessions 4 and 5. Since text-based emotional signals rely heavily on sentence-level semantic structure and contextual flow, these results show that text routing operates in a stable and session-robust manner.

Emotion-wise analysis of local/global utilization also revealed notable differences, as shown in Figure 6. For audio, Angry showed strong dominance of local cues, while Happy and Neutral exhibited higher proportions of global cue selection. Sad demonstrated a balanced pattern, with local and global cues selected at nearly equal ratios. This indicates that the prosodic structure differs across emotions: emotions like anger, characterized by abrupt changes in energy, depend more on local cues, whereas emotions with more static and stable prosody, such as neutral or happy, rely more heavily on global cues. The text modality showed consistently high selection of global cues across all emotions, reflecting the central role of semantic context in emotion judgment.

Overall, the routing behavior analysis empirically validates the necessity of multi-scale representations and the practical contribution of the Dual Routing mechanism.
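A selection-ratio analysis of this kind could be computed as in the sketch below. It assumes that an utterance counts as "local-selected" when its local routing probability exceeds 0.5; the paper does not state how the ratio is defined, and the function name and sample values are made up for illustration.

```python
from collections import defaultdict


def local_selection_ratio(records):
    """Fraction of utterances per group whose local routing weight exceeds 0.5.

    records: iterable of (group_label, p_local) pairs, where the group can be
    a session ID or an emotion label.
    """
    counts = defaultdict(lambda: [0, 0])  # group -> [n_local_dominant, n_total]
    for group, p_local in records:
        counts[group][0] += int(p_local > 0.5)
        counts[group][1] += 1
    return {group: hits / total for group, (hits, total) in counts.items()}


# Made-up routing weights for illustration only.
records = [("angry", 0.8), ("angry", 0.7), ("angry", 0.4),
           ("neutral", 0.2), ("neutral", 0.6), ("sad", 0.5)]
ratios = local_selection_ratio(records)
```

An alternative under the same setup would be to average the soft routing weights per group instead of thresholding them, which preserves how strongly each expert was preferred.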

6. Discussion

This study proposed a Dual Routing-based MER model that integrates local–global representations, motivated by the observation that emotional signals in speech and text cannot be sufficiently captured by single-scale representations alone. The experimental results clearly demonstrate that the proposed architecture effectively reflects fine-grained components of emotional signals and addresses the limitations inherent in previous single-scale approaches.

In the ablation experiments, models using only local or only global representations showed overall performance degradation, and simple feature concatenation was also limited in its effectiveness. In contrast, the proposed Dual Routing structure selectively activates local or global experts according to input characteristics, enabling more appropriate utilization of multi-scale information. These findings indicate that emotional signals require cues at different temporal scales depending on context and utterance conditions, and that fixed single-scale representations are insufficient to capture such diversity.

The routing behavior analysis provides important qualitative insight into this structural mechanism. In the audio modality, the proportion of local/global cue utilization varied significantly across sessions, reflecting differences in speaking habits, emotional expression patterns, and conversational context. However, such session-wise differences cannot be attributed solely to variation in speaker style. Since IEMOCAP does not maintain a uniform emotional distribution across sessions, it is also possible that imbalances in emotional labels contributed to the observed session-level variation. Although this study does not perform a quantitative analysis of session-wise emotional distributions, acknowledging this possibility allows for a more careful interpretation of session variability.

In contrast, the text modality showed strong and consistent reliance on global cues across all sessions, reflecting the natural linguistic tendency that sentence-level semantic structure plays a central role in emotion judgment. This analysis suggests that multi-scale representation offers benefits beyond performance improvement, contributing to a deeper structural understanding of emotional signals.

Emotion-wise analysis also revealed clear distinctions. The Angry emotion exhibited the highest utilization of local cues due to its strong local acoustic bursts, whereas Happy and Neutral showed higher reliance on global cues because of their smoother and more stable prosody. Sad displayed a balanced pattern, with local and global cues selected at nearly similar rates. These differences indicate that prosodic patterns vary across emotions and that fixed representation approaches struggle to clearly separate emotional boundaries.

The confusion matrix analysis further highlighted these structural properties. Angry achieved the highest classification performance due to its strong arousal and distinctive prosodic patterns, while Neutral exhibited the lowest recall and was frequently confused with other emotions because of its weak emotional cues and ambiguous boundaries. In particular, misclassifications from Happy to Neutral and from Sad to Neutral were high, reflecting the shared prosodic characteristics of low-arousal emotions. These results support the conclusion that the scale characteristics of emotional signals affect both classification performance and misclassification patterns.

Although the proposed model demonstrates slightly lower absolute performance than the latest SOTA model MFDR, its contribution lies not in outperforming existing models but in addressing an underexplored structural aspect of MER research: multi-scale cue selection. While conventional MoE-based models primarily focus on expert routing based on modality differences, this study introduces a selective expert activation mechanism that considers the temporal scale inherent in emotional signals. This design allows the model to more precisely reflect the hierarchical structure of emotional expression, ultimately enhancing interpretability, representational power, and adaptability to contextual variation.

In summary, this study provides academic value by presenting a new direction in representation design that accounts for the intrinsic structure of emotional signals rather than merely pursuing performance improvement. The experimental results and routing analysis clearly demonstrate that multi-scale information plays a critical role in MER and establish a foundation for future research combining the proposed approach with various self-supervised models, lightweight routing mechanisms, or hierarchical fusion strategies.

7. Conclusions

This study proposed a Dual Routing-based multimodal emotion recognition model that selectively utilizes local–global representations according to input characteristics, motivated by the fact that emotional signals cannot be sufficiently captured by single-scale representations in either temporal or semantic dimensions. The proposed model simultaneously incorporates multi-scale cues from speech and text modalities and automatically adjusts their relative importance through Dual Routing, thereby more faithfully capturing the hierarchical structure of emotional expression that existing MER models often fail to reflect.

The experimental results showed that although the absolute performance of the proposed model was somewhat lower than that of the latest SOTA model, the ablation and routing behavior analyses confirmed that multi-scale cue selection plays a meaningful role in the actual emotion recognition process. This supports the conclusion that the main contribution of this study lies not in performance competition but in presenting a new modeling paradigm that reflects the structural properties of emotional signals. In particular, the concept of scale-aware expert activation complements limitations of previous modality-centered MoE approaches and plays an important role in explaining the variability and context dependency of emotional signals.

Future work may extend the proposed multi-scale selection mechanism by integrating it with more lightweight routing structures or various self-supervised encoders, and further potential lies in combining it with hierarchical fusion strategies. Additionally, visualizing cue-selection patterns or validating the approach across different languages, corpora, or domains would further enhance the model’s generalization and practical value.

Overall, this study introduces a novel structural approach to addressing the scale-dependent cue selection problem—an essential aspect of multimodal emotion recognition—and provides foundational insights that highlight the importance of multi-scale information in MER.