1. Introduction

Augmented reality (AR) has become a valuable tool for improving the interaction between people and their environment, offering innovative solutions in areas such as navigation, accessibility, and personalized experiences. In particular, AR-based wayfinding on university campuses has emerged as a technology capable of turning these spaces into intelligent, adaptive environments.

Historically, campus wayfinding has relied on static maps, physical signage, and digital markers. While useful, these tools have limitations such as reliance on fixed infrastructure and poor adaptability to frequent changes. Markerless AR overcomes these limitations by using technologies such as simultaneous localization and mapping (SLAM) and computer vision to identify environmental features and generate dynamic, personalized routes in real time. In this work, the term markerless refers to augmented reality systems that operate without artificial visual tags, QR codes, fiducial markers, or any additional physical infrastructure. Instead, localization relies exclusively on naturally occurring elements already present in the environment (in our case, official campus signage, detected through computer vision). This distinguishes markerless navigation from marker-based approaches, in which recognition and tracking depend on predefined visual patterns placed specifically for AR interaction.

This technology has many applications in the university environment. During events such as new-student orientation or conferences, markerless AR can efficiently guide users across campus, taking them directly to classrooms, libraries, or other key points of interest. In addition, its ability to provide personalized assistance can significantly improve accessibility for people with disabilities, allowing them to navigate independently using visual, auditory, or haptic cues.

Among the benefits of markerless navigation in higher education environments is its ability to adapt to the constant evolution of campuses, such as remodeling or changes in classroom layout. By eliminating the need for additional markers or dedicated signage, this technology preserves architectural aesthetics and reduces the use of physical materials, supporting the sustainability goals of educational institutions.

Furthermore, although markerless AR navigation reduces dependence on artificial markers or additional infrastructure, its long-term effectiveness depends on the visibility and maintenance of existing physical signage. Over time, building renovations or replacement of campus signs may slightly affect recognition accuracy. However, these maintenance activities are standard facility management processes that generally incur much lower operational costs than systems requiring frequent calibration of beacons, sensors, or printed markers. Additionally, the proposed approach can easily be updated by retraining lightweight CNN models with new photographs of modified signs. This ensures the approach can adapt to the natural evolution of the campus environment without requiring new infrastructure. This balance of flexibility, scalability, and minimal maintenance supports educational institutions’ sustainability goals.

In this way, the integration of augmented reality and markerless navigation on university campuses not only improves the navigation experience but also positions these institutions as intelligent environments that respond to the needs of a modern academic community. By combining flexibility, accessibility, and sustainability, this technology redefines the interaction between people and educational spaces and marks a significant step toward the future of connected education.

Recent research on augmented reality (AR) wayfinding has shown that although this technology offers clearer visual guidance and reduces cognitive load compared to traditional maps, most existing systems depend on external infrastructure or controlled environments. Previous studies have often required Bluetooth beacons, QR codes, spatial anchors, or high-performance hardware to maintain localization accuracy. These constraints limit the deployment of AR wayfinding systems in large-scale public spaces, such as university campuses. Furthermore, studies report persistent challenges regarding lighting sensitivity, device ergonomics, and long-distance localization, especially when integrating indoor and outdoor navigation. Despite promising advances in visual–inertial SLAM (VSLAM), BIM-assisted routing, and hybrid multimodal localization, few systems can operate fully infrastructure-free while remaining computationally lightweight on standard mobile devices.

These gaps underscore the necessity of solutions that can operate using only the environmental features already present in real-world settings. This approach avoids the installation and maintenance costs associated with artificial markers or beacons. University campuses are compelling case studies for this approach because they are dynamic environments where buildings, routes, and signage evolve over time. This makes sustainable, easily updatable navigation systems particularly valuable. Campuses also increasingly host diverse user groups, including new students, visitors, and individuals with accessibility needs, for whom intuitive wayfinding tools can significantly improve mobility and inclusion.

This study directly addresses the limitations of previous research by introducing a markerless augmented reality (AR) navigation framework that relies exclusively on existing campus signage as natural visual landmarks. Unlike most AR-based navigation studies, this method requires no additional infrastructure and runs entirely on the device through a lightweight, two-stage machine learning pipeline. The system combines a color-based binary classifier with compact convolutional neural networks optimized for real-time execution in TensorFlow Lite. This design achieves high recognition accuracy (>99.5%) with a minimal memory footprint (under 1 MB per model) and enables continuous, infrastructure-free localization, even across multi-building environments, where consistent performance has been difficult to maintain in prior research.

This study demonstrates a practical, scalable, and sustainable alternative to existing AR navigation solutions by grounding the navigation process in the real physical layout of the campus and validating its usability with real users under natural lighting conditions. Results show that over 85% of participants successfully reached their destination, and most users found the system easy to operate and considered it useful for other public spaces, such as airports and shopping malls. These findings reinforce the importance of developing lightweight AR systems that align with institutional sustainability goals and improve accessibility and user experience.

The rest of the paper is structured as follows: the state of the art is presented in Section 2; the methodology is explained in Section 3; Section 4 and Section 5 present the results and their analysis; and, finally, conclusions and perspectives are given in Section 6.

2. State of the Art

Research in augmented reality (AR) for navigation has advanced rapidly in recent years, integrating deep learning, multimodal localization, and inclusive design strategies for diverse user groups.

Qiu et al. (2025) [1] conducted a systematic review of 65 studies on AR wayfinding, classifying them into four categories: marker-based, markerless, beacon-assisted, and vision-only systems. Their findings reveal that users generally prefer AR guidance over traditional maps, mainly because of the immediacy and clarity of visual cues. Nevertheless, challenges such as device ergonomics, lighting sensitivity, and cognitive load remain. The review highlights emerging trends and persistent issues and offers a roadmap for developing more reliable and user-friendly AR navigation systems.

In terms of map generation and routing, Putra et al. (2025) [2] proposed a real-time adaptive method that dynamically builds environmental models as users explore, eliminating the need for preloaded maps. Similarly, Lu et al. (2024) [3] presented a hybrid framework that combines geofencing with spatial anchors, reducing visual–inertial odometry drift and providing stable localization in large spaces such as airports or campuses. Batubulan et al. (2025) [4] further contributed a tool for automatic map data collection, integrating VSLAM, street-level imagery, and inertial data. Their system minimizes setup effort while maintaining robustness under varied conditions, enabling scalable deployment in complex environments.

A growing body of work focuses on machine learning and multimodal localization. Łukasik et al. (2024) [5] reviewed ML-based strategies that fuse vision, inertial, and radio data. They underline the robustness and adaptability of such approaches while noting their computational and hardware demands. To complement this, Zhang et al. (2025) [6] combined Building Information Modeling (BIM) with AR to improve indoor routing in complex facilities, showing reduced cognitive effort and greater efficiency for users.

At the same time, accessibility and inclusivity have become central themes. García-Catalá et al. (2024) [7] developed a system for people with cognitive disabilities, tailoring routes to user needs and providing support in emergencies. Messi et al. (2025) [8] designed an audio-based AR solution for blind users, validated on a university campus. The system delivered real-time auditory cues, achieving navigation performance comparable to that of sighted individuals and demonstrating the potential of non-visual AR solutions.

The role of hardware and interfaces has also been scrutinized. Lee et al. (2025) [9] compared head-mounted AR displays with handheld smartphones, concluding that wearable devices offered better immersion, smoother orientation, and improved outcomes, while handheld AR often underperformed even against traditional non-AR methods. These findings emphasize the importance of hardware design for effective AR navigation.

University campuses and educational spaces have emerged as prominent testbeds. Qi et al. (2024) [10] introduced an ARCore-based system ensuring seamless transitions between indoor and outdoor spaces, with 3D directional cues rendered on standard smartphones. Messi (2024) [11] proposed a markerless system leveraging image-to-map comparisons, demonstrating high accuracy and usability as a web service deployed on a campus. Gotoh (2023) [12] designed a multi-floor navigation solution combining vision-based and Bluetooth methods, offering a low-cost, low-maintenance approach compatible with ARKit/ARCore. Meanwhile, Akmal Zaki (2023) [13] compared QR-based navigation with LiDAR+SLAM systems, showing superior usability and accuracy for the latter.

Overall, the state of the art reveals a dynamic field where technical innovations (VSLAM, BIM, ML) converge with inclusive design and hardware improvements. The trend points toward increasingly adaptive, accessible, and scalable AR navigation systems, with direct applications in campuses, hospitals, and smart urban environments.

Despite these advances, there are still several challenges to scaling AR navigation systems from small, experimental setups to large real-world environments, such as multi-building campuses. Most existing research focuses on controlled trials or limited indoor paths where localization and routing depend heavily on predefined maps or specialized hardware. Maintaining accurate user localization over extended areas, connecting indoor and outdoor spaces seamlessly, and ensuring the system’s adaptability to architectural changes remain open research problems. Furthermore, few studies address the computational efficiency required to run deep learning-based localization models on standard mobile devices without external sensors or beacons. This study contributes to closing this gap by proposing a lightweight, markerless AR navigation framework that uses computer vision and machine learning to provide scalable, infrastructure-free guidance across a university campus.

To address these shortcomings, this work pursues two main research objectives.

The first objective is to develop an infrastructure-free, markerless augmented reality (AR) navigation system that can operate entirely on-device using existing environmental signage as natural visual landmarks.

The second objective is to design and evaluate a lightweight, two-stage machine learning pipeline comprising a binary color classifier and compact convolutional neural networks (CNNs) that ensure high classification accuracy, low latency, and energy efficiency suitable for real-time campus navigation.

These objectives define the scope of the proposed system and distinguish it from existing AR-based navigation solutions.

3. Methodology

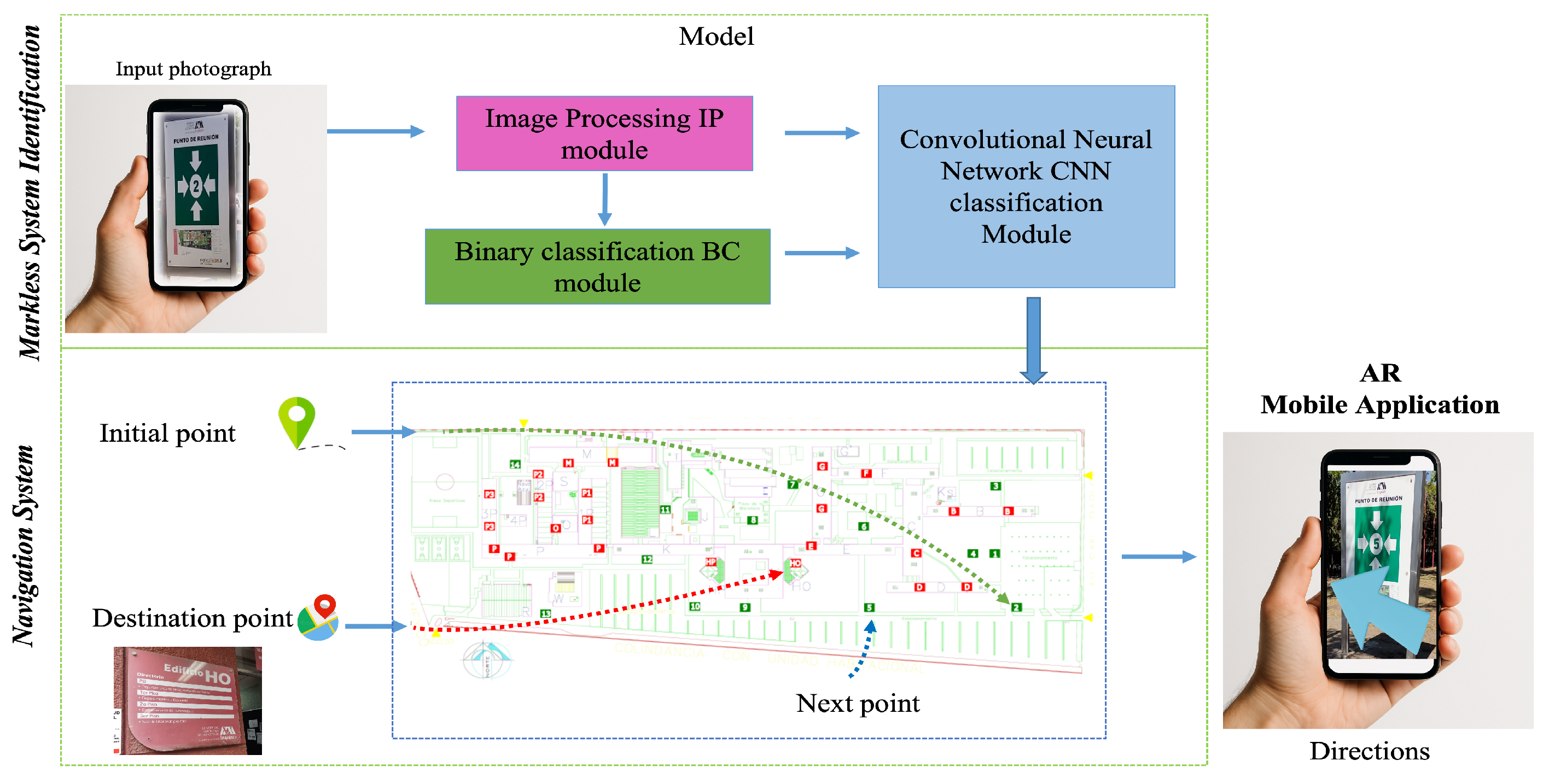

To guide users to their destination, the system must know both their current location (localization) and the place they want to reach. It therefore needs two inputs. The first is the destination, which the user selects from a list. The second is used to determine the user’s location; since this is a vision system, it is obtained by taking an image (photo). This image enters the localization module, which consists of an image processing (IP) module, a binary classification (BC) module, and a convolutional neural network (CNN) module. With the starting point obtained from this module and the selected destination, the navigation module provides the route through AR directions and shows the next node (sign) that the user must find and capture to reach their destination.

Figure 1 shows the architecture of the proposed system.

At the start of the navigation process, users must locate and photograph a campus sign—either a “Meeting Point” (MP) or a “Directory” (Dir)—using the application’s camera interface. The system does not process arbitrary images. Instead, it identifies one of these official signs to determine the user’s position. Each sign corresponds to a predefined node on the campus map, and the captured image serves as the localization input to initiate route computation. This design ensures accurate georeferencing without the need for external sensors or GPS, which are often unreliable indoors.

Regarding path planning, the digital campus map was transformed into a weighted graph consisting of 36 nodes and 58 bidirectional connections. Each node represents a signage location (MP or Dir), and the edges encode the physical distance between nodes as measured directly from the campus .dwg blueprint. The cost function used by the Floyd–Warshall algorithm is the Euclidean distance, so the computed route between any two points is the shortest one over walkable connections. Constraints such as obstacles or building walls were incorporated by removing non-walkable edges from the graph.
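The graph construction described above can be sketched as follows. This is a minimal illustration, not the authors' code: the node coordinates, the candidate edges, and the function name `build_weighted_graph` are hypothetical, and only the idea (Euclidean edge weights, with non-walkable edges removed) comes from the text.

```python
import math

# Hypothetical node coordinates (metres) read off a campus blueprint.
# The real campus graph has 36 nodes and 58 edges; these values are made up.
COORDS = {
    "MP2": (0.0, 0.0),
    "MP5": (30.0, 40.0),
    "HO": (60.0, 80.0),
    "MP7": (-20.0, 10.0),
}


def build_weighted_graph(coords, candidate_edges, non_walkable):
    """Return an undirected adjacency dict weighted by Euclidean distance.

    Edges listed in `non_walkable` (in either direction) are dropped, which is
    how obstacles such as building walls are modelled in this sketch.
    """
    graph = {node: {} for node in coords}
    blocked = {frozenset(edge) for edge in non_walkable}
    for u, v in candidate_edges:
        if frozenset((u, v)) in blocked:
            continue
        weight = math.dist(coords[u], coords[v])  # straight-line cost
        graph[u][v] = weight
        graph[v][u] = weight  # bidirectional connection
    return graph


if __name__ == "__main__":
    edges = [("MP2", "MP5"), ("MP5", "HO"), ("MP2", "MP7"), ("MP7", "HO")]
    g = build_weighted_graph(COORDS, edges, non_walkable=[("HO", "MP7")])
    for node, neighbours in g.items():
        print(node, neighbours)
```

Keeping the blocked set as unordered pairs means an obstacle only has to be listed once, regardless of the direction in which the edge was entered.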

Figure 2 shows examples of the two types of signage used. During navigation, each recognized sign acts as a checkpoint. When the user reaches a sign, the system confirms their position and retrieves the next node from the precomputed route matrix. Then, it overlays an augmented directional arrow to guide the next step. For instance, if a user starts at “MP2” and selects the “HO” building as their destination, the system initially displays an arrow pointing toward “MP5” (the subsequent node on the route). Once “MP5” is detected, the application updates the guidance toward “HO.” This incremental process enables users to visually follow the sequence of signs in the physical environment, ensuring intuitive and continuous navigation across the campus.

It is important to clarify that the proposed system was designed to provide continuous visual guidance through augmented reality. In this context, holding the smartphone during navigation is an essential component of the user experience, not a limitation. The mobile device acts as a visual navigator, projecting a virtual arrow in real time that indicates the direction and next checkpoint until the final destination is reached. This persistent AR visualization replaces traditional map-based interfaces and enables an intuitive, real-time understanding of the spatial environment. Therefore, keeping the smartphone in hand is consistent with the system’s design philosophy of immersive, visual, continuously updated guidance rather than an interruptive task.

To train the BC and the CNNs, a dataset is required. It initially consists of photographs of the Meeting Point (MP) and Directory (Dir) signs on campus, of which there are 14 MP and 22 Dir. However, some of these signs are duplicated, so identical signs are grouped into the same class, leaving 14 distinct classes for each sign type. Examples of the resulting classes are shown in Figure 2.

For each of these classes, 50 photographs were taken, except for repeated signs (all MP signs, since each has two sides, and 8 Dir signs), for which 25 photographs were taken of each instance. Color photographs in .png format with a size of 1080 × 1080 pixels were used. For the MP signs, care was taken to frame the shot on the green box containing the arrows and the ID. For the Dir signs, the captured region should start with the letter “E” (for “Edificio”, building) and end with the sign’s ID, all in the upper part of the image. Examples of these images are shown in Figure 2. The photographs were taken with a dedicated application that captures images in the same way as the final application, to avoid inconsistencies between the training images and those captured during use.

To train the BC, one image per class, chosen as the most representative, was used (14 MP and 14 Dir). Each image was downscaled, its R, G, and B channels were extracted, and the average value of each channel was computed.

Thus, for each initial image, a three-dimensional vector of the average values of each channel is obtained. These 28 vectors were first used in the k-means algorithm to see if the classes could be distinguished from each other.
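The feature extraction step can be illustrated with a short NumPy sketch. It assumes the photo is an H × W × 3 RGB array and uses block averaging by an integer factor as the downscaling method; the factor of 10 is a hypothetical choice, since the target size is not legible in this text. Block averaging preserves the overall channel means, so the downscale mainly reduces computation.

```python
import numpy as np


def downscale(img: np.ndarray, factor: int) -> np.ndarray:
    """Block-average an H x W x 3 image by an integer factor (remainder cropped)."""
    h, w, c = img.shape
    h2, w2 = h // factor, w // factor
    cropped = img[: h2 * factor, : w2 * factor].astype(np.float64)
    return cropped.reshape(h2, factor, w2, factor, c).mean(axis=(1, 3))


def rgb_mean_vector(img: np.ndarray, factor: int = 10) -> np.ndarray:
    """Return the 3-D vector (mean R, mean G, mean B) used as the BC input."""
    small = downscale(img, factor)
    return small.reshape(-1, 3).mean(axis=0)


if __name__ == "__main__":
    # Synthetic 1080 x 1080 "photo": left half reddish, right half greenish.
    img = np.zeros((1080, 1080, 3), dtype=np.uint8)
    img[:, :540] = (200, 30, 40)
    img[:, 540:] = (0, 100, 0)
    print(rgb_mean_vector(img))
```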

The Pearson correlation coefficient was then computed, showing that the R channel was highly significant (0.96); therefore, two functions were proposed to discriminate between the classes given a vector, as follows:

Dir if ;

Dir if $\bar{R} > 74.41$; otherwise MP. This value was chosen as follows. The average R value of the MP vectors is 52.12, with a standard deviation of 4.87, and the average of the Dir vectors is 166.18, with a standard deviation of 20.18. Adding three standard deviations to the MP average (a band that would contain 99.7% of a normal distribution) gives 66.75. Although all the MP vectors fall below this value, the sample is small, so a second criterion was also considered: the maximum R value for MP is 62.55 and the minimum for Dir is 101.56, whose midpoint is 82.06. Averaging 82.06 and 66.75 gives the threshold of 74.41.
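The threshold arithmetic above can be reproduced in a few lines. This sketch uses the rounded statistics quoted in the text; with these inputs the result is about 74.39 rather than 74.41, a difference in the second decimal place that most likely comes from rounding of the reported statistics. The function names are illustrative only.

```python
# Rounded statistics of the mean R value reported in the text.
MP_MEAN, MP_STD = 52.12, 4.87
MP_MAX = 62.55   # largest mean-R value among MP vectors
DIR_MIN = 101.56  # smallest mean-R value among Dir vectors


def r_threshold(mp_mean, mp_std, mp_max, dir_min):
    """Average of the 3-sigma bound of MP and the midpoint of the class gap."""
    three_sigma = mp_mean + 3 * mp_std   # upper bound of the MP distribution
    midpoint = (mp_max + dir_min) / 2    # midpoint between the two classes
    return (three_sigma + midpoint) / 2


def classify_by_r(r_mean, threshold):
    """Second threshold rule: Dir if the mean R value exceeds the threshold."""
    return "Dir" if r_mean > threshold else "MP"


if __name__ == "__main__":
    t = r_threshold(MP_MEAN, MP_STD, MP_MAX, DIR_MIN)
    print(f"threshold = {t:.2f}")
```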

Tests were also carried out to classify other images in the dataset (after obtaining their vectors of averages) using KNN, with the criterion that k must be odd and no greater than the square root of the number of samples. Different channel combinations were tried, and the best results were obtained using only the R channel.
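A KNN rule on the mean-R value, with k chosen by the criterion above, might look like the sketch below. The training values are hypothetical, not the paper's data; with the 28 training vectors described in the text, the criterion yields k = 5.

```python
import math
from collections import Counter


def choose_k(n_samples: int) -> int:
    """Largest odd k such that k <= sqrt(n_samples)."""
    k = math.isqrt(n_samples)
    if k % 2 == 0:
        k -= 1
    return max(k, 1)


def knn_predict(train_values, train_labels, value, k):
    """Majority label among the k training samples closest in mean-R value."""
    order = sorted(range(len(train_values)), key=lambda i: abs(train_values[i] - value))
    votes = Counter(train_labels[i] for i in order[:k])
    return votes.most_common(1)[0][0]


if __name__ == "__main__":
    # Hypothetical mean-R values for a few training signs.
    r_values = [48.0, 52.5, 55.1, 50.3, 62.5, 101.6, 150.2, 170.4, 180.9, 190.0]
    labels = ["MP"] * 5 + ["Dir"] * 5
    k = choose_k(28)  # 28 training vectors, as in the text
    print(k, knn_predict(r_values, labels, 58.0, k), knn_predict(r_values, labels, 140.0, k))
```

Because k is odd and there are only two classes, a tie in the vote cannot occur.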

The initial vectors were also used to obtain the equation of the separating plane with a single neuron (perceptron), which converged in 326 iterations. The resulting plane equation also shows the high relevance of the R channel.
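A plain perceptron that learns a separating plane for RGB-mean vectors can be sketched as follows. The sample vectors are hypothetical and scaled to [0, 1]; the epoch count printed by this sketch is unrelated to the 326 iterations reported in the text, which depend on the real data, learning rate, and how iterations are counted.

```python
def train_perceptron(samples, labels, lr=1.0, max_epochs=1000):
    """Classic perceptron rule; labels are +1 (Dir) or -1 (MP).

    Returns (weights, bias, epochs) after the first epoch with no mistakes.
    """
    w = [0.0] * len(samples[0])
    b = 0.0
    for epoch in range(1, max_epochs + 1):
        mistakes = 0
        for x, y in zip(samples, labels):
            activation = sum(wi * xi for wi, xi in zip(w, x)) + b
            if y * activation <= 0:  # misclassified or on the plane
                w = [wi + lr * y * xi for wi, xi in zip(w, x)]
                b += lr * y
                mistakes += 1
        if mistakes == 0:
            return w, b, epoch
    raise RuntimeError("did not converge; data may not be linearly separable")


def predict(w, b, x):
    """Side of the plane w.x + b = 0 on which x falls."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b > 0 else -1


if __name__ == "__main__":
    mp = [(50, 120, 80), (55, 130, 90), (60, 125, 85), (48, 118, 82)]
    dr = [(160, 60, 50), (170, 70, 60), (150, 55, 45), (175, 65, 55)]
    X = [tuple(c / 255 for c in v) for v in mp + dr]  # scale to [0, 1]
    y = [-1] * len(mp) + [1] * len(dr)
    w, b, epochs = train_perceptron(X, y)
    print("plane:", w, b, "epochs:", epochs)
```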

Another algorithm used was the Bayesian classifier, which, for two classes and in terms of the Mahalanobis distance, is defined by Equation (1). The implementation uses the mean vector of each class, the logarithm of the quotient of the covariance determinants, and the inverse covariance matrix of each class to determine the class.
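Because Equation (1) and its parameter values are not legible in this text, the sketch below assumes the standard two-class Gaussian Bayes rule with equal priors: each class score is the squared Mahalanobis distance plus $\ln|\Sigma_c|$, which is equivalent to comparing the difference of Mahalanobis distances with the logarithm of the determinant quotient. The class centers and spreads used to generate data are illustrative only.

```python
import numpy as np


def fit_class(X):
    """Mean vector, inverse covariance and log-determinant for one class."""
    mu = X.mean(axis=0)
    cov = np.cov(X, rowvar=False)
    sign, logdet = np.linalg.slogdet(cov)
    if sign <= 0:
        raise ValueError("covariance matrix is not positive definite")
    return mu, np.linalg.inv(cov), logdet


def discriminant(x, mu, inv_cov, logdet):
    """Squared Mahalanobis distance plus ln|Sigma| (smaller is more likely)."""
    d = x - mu
    return float(d @ inv_cov @ d) + logdet


def bayes_classify(x, params_mp, params_dir):
    """Equal-prior Gaussian Bayes rule: pick the class with the smaller score."""
    x = np.asarray(x, dtype=np.float64)
    return "MP" if discriminant(x, *params_mp) < discriminant(x, *params_dir) else "Dir"


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Synthetic RGB-mean vectors; centres and spreads are illustrative only.
    X_mp = rng.normal([52, 130, 90], [5, 10, 10], size=(14, 3))
    X_dir = rng.normal([166, 60, 50], [20, 15, 15], size=(14, 3))
    p_mp, p_dir = fit_class(X_mp), fit_class(X_dir)
    print(bayes_classify([55, 125, 88], p_mp, p_dir))
```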

Given an input vector, each of these expressions yields a class membership decision (MP or Dir). The decision with the most votes is taken as the final output of the BC, which determines the second IP step and selects the CNN to be used.
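The voting step can be sketched as below. The voter names in the example are hypothetical labels for the rules described above; returning `None` on a tie is an assumption of this sketch, and with an odd number of binary voters a tie cannot occur.

```python
from collections import Counter
from typing import Optional, Sequence


def majority_vote(decisions: Sequence[str]) -> Optional[str]:
    """Return the most frequent label, or None if the top two labels tie."""
    top = Counter(decisions).most_common(2)
    if not top:
        return None
    if len(top) == 2 and top[0][1] == top[1][1]:
        return None
    return top[0][0]


if __name__ == "__main__":
    votes = {
        "threshold_1": "Dir",
        "threshold_2": "Dir",
        "knn": "Dir",
        "perceptron": "MP",
        "bayes": "Dir",
    }
    print(majority_vote(list(votes.values())))
```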

This strategy intentionally employs a two-stage classification pipeline (a binary classifier followed by CNN-based identification) to achieve robustness and computational efficiency under real-world conditions. While a simple threshold rule can distinguish between red (Dir) and green (MP) signs under ideal laboratory conditions, this approach becomes unreliable in outdoor environments due to variations in illumination, color fading, reflections, and camera exposure. The binary classifier is trained using real photographs captured under various lighting and weather conditions. This allows it to compensate for these variations and provide consistent performance without manual calibration.

Furthermore, this stage enables modular, resource-efficient operation on mobile devices. Once the sign type is detected, only the corresponding CNN model loads into memory, optimizing inference time and minimizing GPU and RAM consumption.

Regarding the second stage, the 14-class classification problem resembles digit recognition, but it is more complex due to real-world variability. The images are not clean, centered, or standardized. They contain environmental noise, perspective distortions, partial occlusions, and nonuniform lighting. Unlike MNIST digits, the signs exhibit heterogeneous geometric and textual patterns, such as letters, arrows, and borders, and they must be recognized directly from camera input rather than from cropped, idealized samples. The proposed CNN architecture is optimized to generalize across these challenging visual conditions and achieves over 99.5% accuracy in realistic campus scenarios.

The second IP depends on the type of sign detected. For MP, since the ID is in the center, the image is divided into 9 frames (3 × 3), where the corner squares measure 30% of the side of the original image and the center square measures 40%. For Dir, the region of interest is in the upper right corner, so a region covering 40% of the image side is extracted there. In both cases, the extracted region therefore measures 40% of the original side (432 × 432 pixels for a 1080 × 1080 photograph). It is then resized to a final size that is less than 10% of the extracted region (and less than 4% of the original image). This final size was determined experimentally to be as small as possible without compromising the relevant information (the ID) in the image.
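The two cropping rules can be sketched with NumPy slicing. The nearest-neighbour resize is a simple stand-in for whatever library resize was actually used, and the 40 × 40 target is a hypothetical value, since the final size is not legible in this text.

```python
import numpy as np


def crop_roi(img: np.ndarray, sign_type: str) -> np.ndarray:
    """Extract the region of interest for an MP or Dir sign."""
    h, w = img.shape[:2]
    if sign_type == "MP":
        # Centre cell of a 3 x 3 grid whose rows/columns span 30% / 40% / 30%.
        y0, y1 = round(0.3 * h), round(0.7 * h)
        x0, x1 = round(0.3 * w), round(0.7 * w)
    elif sign_type == "Dir":
        # Upper-right region covering 40% of the height and width.
        y0, y1 = 0, round(0.4 * h)
        x0, x1 = w - round(0.4 * w), w
    else:
        raise ValueError(f"unknown sign type: {sign_type}")
    return img[y0:y1, x0:x1]


def resize_nearest(img: np.ndarray, size: int) -> np.ndarray:
    """Nearest-neighbour resize to size x size (stand-in for a library resize)."""
    h, w = img.shape[:2]
    rows = (np.arange(size) * h) // size
    cols = (np.arange(size) * w) // size
    return img[rows[:, None], cols]


if __name__ == "__main__":
    photo = np.zeros((1080, 1080, 3), dtype=np.uint8)
    for kind in ("MP", "Dir"):
        roi = crop_roi(photo, kind)
        print(kind, roi.shape, resize_nearest(roi, 40).shape)
```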

Otsu’s method is a global, non-parametric thresholding technique that determines the optimal threshold by maximizing the statistical separability between two classes of gray levels: background and foreground. The method assumes a bimodal histogram and selects the threshold that yields the greatest distinction between these classes.

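For a threshold $t$, the normalized histogram $\{p_i\}_{i=0}^{L-1}$ is separated into two classes:

$$C_0 = \{0, 1, \dots, t\}, \qquad C_1 = \{t+1, \dots, L-1\},$$

where $L$ is the number of gray levels.

We associate with these classes the probabilities $\omega_0(t)$ and $\omega_1(t)$, the mean gray levels $\mu_0(t)$ and $\mu_1(t)$, and the global mean $\mu_T$:

$$\omega_0(t) = \sum_{i=0}^{t} p_i, \qquad \omega_1(t) = 1 - \omega_0(t), \qquad \mu_0(t) = \frac{1}{\omega_0(t)} \sum_{i=0}^{t} i\,p_i, \qquad \mu_1(t) = \frac{1}{\omega_1(t)} \sum_{i=t+1}^{L-1} i\,p_i, \qquad \mu_T = \sum_{i=0}^{L-1} i\,p_i.$$

The method maximizes the between-class variance, defined as follows:

$$\sigma_B^2(t) = \omega_0(t)\,[\mu_0(t) - \mu_T]^2 + \omega_1(t)\,[\mu_1(t) - \mu_T]^2 = \omega_0(t)\,\omega_1(t)\,[\mu_1(t) - \mu_0(t)]^2.$$

Thus, the optimal threshold is obtained by the following:

$$t^{*} = \arg\max_{0 \le t \le L-1} \sigma_B^2(t).$$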

Since the method relies exclusively on histogram statistics, it is fully automatic and particularly effective for bimodal gray-level distributions, although its performance decreases under unimodal or noisy conditions.
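Otsu's method as defined above can be implemented directly from the histogram. This sketch uses the equivalent form $\sigma_B^2(t) = (\mu_T\,\omega_0(t) - \mu(t))^2 / (\omega_0(t)\,\omega_1(t))$ with $\mu(t) = \sum_{i \le t} i\,p_i$, which avoids computing the two class means separately. The grayscale conversion with ITU-R BT.601 luma weights is an assumption; the text does not state how color images were converted before thresholding.

```python
import numpy as np


def to_gray(rgb: np.ndarray) -> np.ndarray:
    """RGB -> 8-bit gray using BT.601 luma weights (an assumption of this sketch)."""
    weights = np.array([0.299, 0.587, 0.114])
    return np.clip(np.rint(rgb[..., :3] @ weights), 0, 255).astype(np.uint8)


def otsu_threshold(gray: np.ndarray, levels: int = 256) -> int:
    """Return t* maximising the between-class variance sigma_B^2(t)."""
    hist = np.bincount(gray.ravel(), minlength=levels).astype(np.float64)
    p = hist / hist.sum()                  # normalized histogram p_i
    i = np.arange(levels)
    omega0 = np.cumsum(p)                  # omega_0(t)
    omega1 = 1.0 - omega0                  # omega_1(t)
    mu_cum = np.cumsum(i * p)              # mu(t) = sum_{i<=t} i p_i
    mu_t = mu_cum[-1]                      # global mean mu_T
    with np.errstate(divide="ignore", invalid="ignore"):
        sigma_b2 = (mu_t * omega0 - mu_cum) ** 2 / (omega0 * omega1)
    # Empty classes give 0/0 or x/0; treat them as zero separability.
    sigma_b2 = np.nan_to_num(sigma_b2, nan=0.0, posinf=0.0, neginf=0.0)
    return int(np.argmax(sigma_b2))


def binarize(gray: np.ndarray, t: int) -> np.ndarray:
    """Pixels above t become 1 (class C_1), the rest 0 (class C_0)."""
    return (gray > t).astype(np.uint8)


if __name__ == "__main__":
    gray = np.full((10, 10), 50, dtype=np.uint8)
    gray[:, 5:] = 200  # bimodal test image
    t = otsu_threshold(gray)
    print(t, binarize(gray, t).sum())
```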

Finally, the resulting images (still in color) were converted to binary, using a threshold of 152 for Dir and 112 for MP in most cases. The thresholds were determined with Otsu’s method, which gave different values for the following classes: M: 182; HO: 162; P1: 120; P3: 164 (values that preserve the information without loss).

Figure 3 shows the process described in this IP.

This processing was carried out for each of the images in the initial dataset, and data augmentation was then applied to generate 20 binary images from each of them, using the parameters shown in Table 1. In the end, there were 1000 images per class, of which 800 were randomly selected for training and the remaining 200 for testing the CNN.
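With 50 photographs per class and 20 augmented images per photograph, each class holds 50 × 20 = 1000 images. A reproducible random 800/200 split per class can be sketched as follows; the file-naming scheme and the seed are hypothetical.

```python
import random


def split_train_test(items, n_train=800, seed=0):
    """Randomly split one class's images into training and test subsets."""
    if n_train > len(items):
        raise ValueError("n_train exceeds the number of items")
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # seeded for reproducibility
    return shuffled[:n_train], shuffled[n_train:]


if __name__ == "__main__":
    # Hypothetical names: photo index and augmentation index.
    images = [f"photo{p:02d}_aug{a:02d}.png" for p in range(50) for a in range(20)]
    train, test = split_train_test(images)
    print(len(images), len(train), len(test))
```

Splitting at the level of source photographs instead (all 20 augmentations of a photo going to the same subset) is an alternative that keeps augmented copies of one photo from appearing in both subsets.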

Although there are two CNNs (one for Dir and one for MP), the network structure is the same in both cases and is shown in Figure 4. Each CNN has three feature extraction modules, each consisting of the following layers: a Conv2D layer with 32 filters, a LeakyReLU activation with an alpha of 0.2, a MaxPool2D layer, and a batch normalization layer.

These are followed by a flatten layer, a dense layer with 256 neurons and a ReLU activation function followed by dropout with a rate of 0.2, and another dense layer with 64 neurons and a ReLU activation function followed by dropout with a rate of 0.3. Finally, a softmax output layer distinguishes among the 14 classes of the corresponding sign type (Dir or MP).
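To relate this architecture to the model size reported earlier, the sketch below traces output shapes and counts parameters without any deep learning framework. Kernel size, pooling size, padding, and input resolution are not legible in this text, so it assumes 3 × 3 kernels, 2 × 2 pooling, "valid" padding, and a hypothetical 40 × 40 single-channel binary input. Batch normalization is counted with four values per channel (scale, offset, moving mean, moving variance), as Keras does; activation and dropout layers have no parameters.

```python
def trace_cnn(input_size=40, in_channels=1, kernel=3, pool=2, filters=32,
              n_modules=3, dense=(256, 64), n_classes=14):
    """Return (rows, total_params) for the described CNN under stated assumptions."""
    rows, total = [], 0
    size, channels = input_size, in_channels
    for m in range(1, n_modules + 1):
        size = size - kernel + 1  # 'valid' convolution, stride 1
        if size <= 0:
            raise ValueError("input too small for this many modules")
        conv_params = (kernel * kernel * channels + 1) * filters
        rows.append((f"conv{m}", (size, size, filters), conv_params))
        size //= pool  # non-overlapping max pooling
        rows.append((f"pool{m}", (size, size, filters), 0))
        rows.append((f"batchnorm{m}", (size, size, filters), 4 * filters))
        total += conv_params + 4 * filters
        channels = filters
    features = size * size * channels
    rows.append(("flatten", (features,), 0))
    for i, units in enumerate(list(dense) + [n_classes], start=1):
        params = (features + 1) * units  # weights + biases
        rows.append((f"dense{i}", (units,), params))
        total += params
        features = units
    return rows, total


if __name__ == "__main__":
    rows, total = trace_cnn()
    for name, shape, params in rows:
        print(f"{name:12s} {str(shape):16s} {params}")
    print(f"total parameters: {total} (~{total * 4 / 1e6:.2f} MB as float32)")
```

Under these assumptions the float32 weights come to roughly 0.44 MB; the real figure depends on the actual input size and on the TensorFlow Lite conversion settings.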

The networks were trained in a Python 3 execution environment with a T4 GPU hardware accelerator, using a batch size of 32 for 100 epochs. The trained models are saved in .h5 format and must be converted to .tflite format, which is the format that can be loaded on the Android device.

3.1. Route and Planning

Figure 5 shows an example of how the application works. In this example, the user has selected Building HO as the destination and MP2 as the starting point. Using this information, the system consults the table generated by the algorithm. A fragment of this table is shown in Table 2, where the start of the route is marked in red (MP2, numbered 17 in the table). At the intersection of the starting-point column and the HO destination row appears the next node to pass through to reach the destination (numbered 20, corresponding to MP5, marked in yellow). The AR directions are then displayed on the device, as shown in Figure 1 (augmented reality mobile application). Following the same logic, the next intersection (green) corresponds to the chosen destination. If the user takes a different route, whether through carelessness, personal preference, or special conditions on campus, the entire process is repeated when a new sign is registered; in other words, the route is determined anew from any newly registered point.

To obtain the route, a map of the campus in .dwg format, provided by the university, is needed first. Each signpost was located on this map (for repeated signs, a central point was used) to obtain its coordinates in this reference system.

The connections between the nodes were then defined according to the distance and accessibility between them, resulting in two types of connections: direct (when there are no obstacles between nodes) and indirect (when there is an obstacle, usually a building). The resulting map with signage and connections is shown in Figure 6.

All possible destinations on campus were also associated with at least one of the proposed nodes (signposts). All this information was entered into .csv files and processed numerically to obtain the matrix of connections and distances between nodes, which was used as input to the Floyd–Warshall algorithm to generate the matrices of minimum distances and of connections between nodes.
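The Floyd–Warshall step, including the next-hop matrix that plays the role of the route table (Table 2), can be sketched as follows. The four-node graph reuses sign names from the example in the text, but all distances are hypothetical; they are chosen so that the shortest route from MP2 to HO passes through MP5, as in that example.

```python
import math


def floyd_warshall(n, edges):
    """All-pairs shortest paths with a next-hop matrix.

    edges: iterable of (u, v, weight) undirected connections between node indices.
    Returns (dist, nxt), where nxt[i][j] is the next node after i on the way to j.
    """
    dist = [[math.inf] * n for _ in range(n)]
    nxt = [[None] * n for _ in range(n)]
    for i in range(n):
        dist[i][i] = 0.0
        nxt[i][i] = i
    for u, v, w in edges:
        if w < dist[u][v]:  # keep the shortest parallel edge
            dist[u][v] = dist[v][u] = w
            nxt[u][v], nxt[v][u] = v, u
    for k in range(n):
        for i in range(n):
            if dist[i][k] == math.inf:
                continue
            for j in range(n):
                through_k = dist[i][k] + dist[k][j]
                if through_k < dist[i][j]:
                    dist[i][j] = through_k
                    nxt[i][j] = nxt[i][k]
    return dist, nxt


def route(nxt, start, goal):
    """Sequence of node indices from start to goal (empty if unreachable)."""
    if nxt[start][goal] is None:
        return []
    path = [start]
    while start != goal:
        start = nxt[start][goal]
        path.append(start)
    return path


if __name__ == "__main__":
    names = ["MP2", "MP5", "HO", "MP7"]
    edges = [(0, 1, 40.0), (1, 2, 30.0), (0, 2, 100.0), (0, 3, 25.0), (3, 2, 80.0)]
    dist, nxt = floyd_warshall(len(names), edges)
    print([names[i] for i in route(nxt, 0, 2)], dist[0][2])
```

At run time only `nxt[current][destination]` needs to be read, which is why a precomputed matrix suits re-routing from whichever sign the user registers next.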

Each sign on campus corresponds to a unique node on the digital map used by the navigation engine. While some physical signs share a visual template, they differ in their alphanumeric identifiers (e.g., “MP2”, “MP5”, “HO”), which the CNN explicitly recognizes. The classifier output is directly linked to a node ID in the adjacency matrix used by the Floyd–Warshall algorithm. Once a sign is detected and classified, the application retrieves its coordinates and updates the user’s current location. The route to the destination is then recalculated or confirmed, and the AR module renders a directional arrow pointing toward the next node in the sequence.

This procedure repeats dynamically. Every time the user captures a new sign, the classification result triggers a position update and refreshes the direction overlay in real time. Thus, the process provides a closed-loop interaction between the physical environment, vision-based localization, and the AR navigation layer.

Regarding scalability, the graph in Figure 6 is a simplified representation of the actual campus network, which currently comprises 36 nodes and 58 bidirectional edges. However, the architecture is fully extensible. Additional nodes and paths can be integrated by retraining the CNN with new sign images and updating the connectivity matrix. Since each node corresponds to an existing sign, and no artificial markers or sensors are required, this approach is scalable and sustainable for large-scale deployments, such as multi-building campuses or other public facilities.

Thus, given a starting point and a destination, the nodes to be traversed and the coordinates needed to indicate the direction to follow are determined. Equation (2) gives the angle between consecutive nodes; adjusted to magnetic north (obtained from the device’s accelerometer and magnetic field sensors), it gives the angle by which an arrow (a .glb object) is rotated in real time (every 200 ms). This arrow is shown to the user as an AR overlay in the device’s camera view, accompanied by Activity-Based Navigation (ABN) and the image of the next node to be found.
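Because Equation (2) is not legible in this text, the sketch below assumes a common formulation: a compass-style bearing between node coordinates computed with `atan2`, then expressed relative to the device heading. It assumes the map's +x axis points east and +y points north; `map_to_north_deg` is a hypothetical offset for blueprints whose axes are rotated relative to magnetic north. On Android, the device azimuth is commonly obtained with `SensorManager.getRotationMatrix()` followed by `SensorManager.getOrientation()`, although the text does not say which calls the application uses.

```python
import math


def bearing_deg(p_from, p_to):
    """Bearing from one node to the next (0 = map north, clockwise, degrees)."""
    dx = p_to[0] - p_from[0]  # assumed east component
    dy = p_to[1] - p_from[1]  # assumed north component
    return math.degrees(math.atan2(dx, dy)) % 360.0


def arrow_rotation_deg(p_from, p_to, device_azimuth_deg, map_to_north_deg=0.0):
    """Rotation for the AR arrow relative to where the phone is pointing.

    device_azimuth_deg: heading from accelerometer/magnetometer fusion (0 = north).
    map_to_north_deg: hypothetical offset between the blueprint y-axis and north.
    """
    target = (bearing_deg(p_from, p_to) + map_to_north_deg) % 360.0
    return (target - device_azimuth_deg) % 360.0


if __name__ == "__main__":
    # Next node due east of the current one; phone facing north, then east.
    print(arrow_rotation_deg((0, 0), (10, 0), 0.0))
    print(arrow_rotation_deg((0, 0), (10, 0), 90.0))
```

In the application, a function of this kind would be re-evaluated on each 200 ms update with the latest azimuth, so the arrow stays pointed at the next node as the user turns.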

Implementation Workflow and Data Flow Pipeline

To improve reproducibility, the complete data processing workflow is summarized below. The system follows a modular input–process–output (IPO) architecture composed of four main stages.

Input: The user captures an image of a “Meeting Point” (MP) or “Directory” (Dir) sign and selects a destination from the menu. The image and the selected destination form the system inputs.

Preprocessing and binary classification: The captured image is downscaled, and its RGB channels are extracted. The average of each channel is then computed to form a 3D vector, from which an ensemble of binary classifiers (including the perceptron, Bayesian, and KNN classifiers) determines by majority vote whether the sign belongs to the MP (green) or Dir (red) group.

ROI extraction and CNN classification: Depending on the BC output, the corresponding CNN model is loaded. The region of interest (ROI) is cropped (center for MP, upper right for Dir) and resized to the CNN input size. After binarization (MP: threshold = 112; Dir: threshold = 152), the CNN infers the class label (e.g., MP5, HO, or P3), which is linked to a node in the campus graph.

Route generation and AR overlay: Using the node index, the Floyd–Warshall matrix is queried to obtain the next waypoint toward the destination. The navigation direction is computed from the coordinates of the current and next nodes (Equation (2)) and corrected for magnetic north via sensor fusion. An arrow object (.glb) is rotated and rendered through ARCore every 200 ms.

The intermediate outputs include the BC input vector (RGB averages), the binarized ROI image, and the CNN class prediction; each is logged for debugging purposes. The main loop is summarized in pseudocode in Algorithm 1.

Algorithm 1. Main loop of the UAMNav navigation system.

1: while navigation_active do
2–10: …
11: render_arrow()
12: end while
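The workflow above can be expressed as a small, testable loop. This is a sketch rather than the application's code (which is written in Java): the classifier, CNN, label-to-node map, and next-hop table are injected as plain callables and dictionaries, all names are hypothetical, and each "image" in the demo is simply the sign's text so that the stubs stay trivial. Rendering is left as a comment where `render_arrow()` would run.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class NavState:
    destination: int
    current: Optional[int] = None
    next_node: Optional[int] = None
    log: List[Tuple[int, int]] = field(default_factory=list)


def navigation_step(state, image, classify_sign_type, cnn_by_type, node_of_label, next_hop):
    """One pass: BC -> type-specific CNN -> graph node -> next waypoint."""
    sign_type = classify_sign_type(image)    # stage 1: "MP" or "Dir"
    label = cnn_by_type[sign_type](image)    # stage 2: e.g. "MP5" or "HO"
    state.current = node_of_label[label]     # localization
    state.next_node = next_hop[state.current][state.destination]
    state.log.append((state.current, state.next_node))
    return state


def run_navigation(captures, destination, **components):
    """Process captured signs until the destination node is recognised."""
    state = NavState(destination)
    for image in captures:
        navigation_step(state, image, **components)
        # render_arrow() would rotate the AR arrow toward state.next_node here.
        if state.current == destination:
            break
    return state


if __name__ == "__main__":
    components = dict(
        classify_sign_type=lambda img: "MP" if img.startswith("MP") else "Dir",
        cnn_by_type={"MP": lambda img: img, "Dir": lambda img: img},
        node_of_label={"MP2": 0, "MP5": 1, "HO": 2, "MP7": 3},
        next_hop={0: {2: 1}, 1: {2: 2}, 2: {2: 2}, 3: {2: 2}},  # hypothetical table
    )
    # The user detours via MP7; guidance is recomputed from that sign.
    final = run_navigation(["MP2", "MP7", "HO"], destination=2, **components)
    print(final.log)
```

Injecting the components keeps the loop independent of the camera and model runtime, which makes the control flow easy to check in isolation.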

3.2. Cell Phone Application

Like most existing applications, the presented application includes a welcome screen (Figure 7a) and an instruction screen (Figure 7c) explaining its correct use. The design of the application takes into account the input and output requirements. As inputs, a destination and an image captured by the camera are required, so corresponding screens are provided. Since the destination is an element of a finite, well-defined set, all possibilities are presented in a scrollable list from which one can be selected; the same screen also contains a search field where text can be entered to find a match. This screen is shown in Figure 7b.

The other input requires the camera to capture the signage, so a screen is dedicated solely to this purpose, as shown in Figure 7d. Since one of the outputs is AR guidance, the camera is also needed for the output screen, which additionally presents the remaining outputs (ABN and the image of the next node). Examples of this screen are shown later in Figure 8.

The application was developed in Java with Android Studio Giraffe 2022.3.1 Patch 2 and runs on devices with Android 12 (API level 31) or higher; AR rendering relies on ARCore. It was tested on a Xiaomi Poco X3 Pro phone (Xiaomi, Beijing, China) running the MIUI 13.0.3 customization layer over Android 12.

4. Results

The BC was evaluated in two phases. In the first, the output of each of the classifiers that make up the BC was evaluated individually, together with the final result, as shown in Figure 8. The second phase confirmed the first while verifying other aspects of the application; since the BC is a required step of the pipeline, it appears in all subsequent tests. The majority-vote result (the identification of the sign type) was correct in every test carried out. Because there were no misclassifications, the BC was considered to have an accuracy of 100%; that is, the system always correctly identified whether a sign was MP or Dir.

Figure 9 shows the error and accuracy curves obtained while training the CNNs: accuracies of 99.60% for MP (Figure 9a) and 99.67% for Dir (Figure 9b) were reached within 100 epochs, although both models converged much earlier.

Using the test images, the confusion matrices for MP and Dir were obtained (Figure 10a and Figure 10b, respectively); most of the test images were correctly classified. The class numbers used for these signs in the confusion matrices are assigned in Table 3 and Table 4.
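For reference, the following Python sketch shows how a confusion matrix and the accuracy derived from it are computed from true and predicted class numbers. The row/column convention (rows are true classes) and the example labels are assumptions for illustration, not the paper's test data.

```python
def confusion_matrix(y_true, y_pred, n_classes):
    """Rows are true classes, columns are predicted classes."""
    cm = [[0] * n_classes for _ in range(n_classes)]
    for t, p in zip(y_true, y_pred):
        cm[t][p] += 1
    return cm


def accuracy(cm):
    """Fraction of samples on the diagonal."""
    correct = sum(cm[i][i] for i in range(len(cm)))
    return correct / sum(map(sum, cm))


def per_class_recall(cm):
    """Correct predictions of each class divided by its number of samples."""
    return [row[i] / sum(row) if sum(row) else 0.0 for i, row in enumerate(cm)]
```

Per-class recall is worth reporting alongside overall accuracy, because a few hard classes (for example, worn outdoor signs) can hide behind a high aggregate figure.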

However, despite these good results, some signs were not identified correctly. This occurred with Dir signs, which are located outdoors and therefore show more wear than the others, as seen in Figure 11a. Classification is also hindered when a reflection falls on the sign's identifier (ID), as seen in Figure 11b, but this case is easily remedied by retaking the shot, as seen in Figure 11c.

Finally, the models obtained in .h5 format were converted to .tflite format for deployment in the application, and testing continued directly on the UAM signs. Classification difficulties arose only with discolored signs and with signs where the camera angle produced light reflections on the identifier; the latter can be corrected by adjusting the shot.

Figure 12 shows more examples of classifications from the application. Unlike the previous examples (Figure 11), which show the image obtained after the second IP step (binarization), these show the next sign that the user must register along the route.

The results were satisfactory: the selected destination was reached from every location tested, except when the route passed a faded or reflective Dir sign, which, as already mentioned, hindered classification.

Figure 12 shows that navigation works adequately in the examples presented, since the screen displays the selected destination, the registered sign, and the next node to be passed. Examples of the application in action can be seen at the following link: https://youtu.be/4CGseCxCJcI (accessed on 13 March 2025).

The superiority of the proposed system lies in its infrastructure-free, modular, and scalable design. Unlike other AR navigation solutions, which depend on Bluetooth beacons, QR codes, or LIDAR-based SLAM systems, this implementation uses only existing physical signage as natural visual markers. This drastically reduces setup and maintenance costs while preserving architectural aesthetics.

The computational efficiency achieved through the two-stage classification process—which combines lightweight color-based detection and compact CNN models in TensorFlow Lite format, with each model being under 1 MB—enables smooth, real-time inference on standard smartphones, eliminating the need for external hardware acceleration. The system updates localization and AR rendering every 200 milliseconds, providing consistent navigation feedback, even across large open areas.

In addition to the 200 ms update interval described previously, a full end-to-end latency analysis was conducted to quantify the actual response time of the system. End-to-end latency was defined as the period encompassing image acquisition, preprocessing, binary classification, CNN inference, node retrieval, and the subsequent ARCore scene update. Across 50 controlled trials performed under natural lighting conditions, the system achieved a mean end-to-end latency of 312 ms (SD = 41 ms). This value reflects the complete operational cycle experienced by the user and remained consistent across standard mid-range Android devices.
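A measurement of this kind can be sketched in Python as below: each trial times one full cycle with a monotonic high-resolution clock, and the trials are summarized by their mean and standard deviation. The `pipeline` callable is hypothetical, and the use of the sample standard deviation (n − 1 denominator) is an assumption, since the text does not state which estimator was used.

```python
import statistics
import time


def measure_latency_ms(pipeline, frame):
    """Wall-clock time of one end-to-end cycle, in milliseconds."""
    start = time.perf_counter()
    pipeline(frame)
    return (time.perf_counter() - start) * 1000.0


def summarize(latencies_ms):
    """Mean and sample standard deviation (n - 1 denominator)."""
    return statistics.mean(latencies_ms), statistics.stdev(latencies_ms)
```

`time.perf_counter` is preferred over wall-clock time because it is monotonic and unaffected by system clock adjustments.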

To evaluate real-world navigation performance, participants completed six predefined tasks distributed across the campus. The associated path lengths ranged from 86 m to 241 m, representing typical short- and medium-distance routes encountered in daily campus mobility. Participants were instructed to follow the AR guidance and register at least one official sign per route segment. The timed success rate reached 85.2%, with a median completion time of 4.8 min (IQR = 1.1). Statistical analysis comparing successful and unsuccessful attempts showed no significant differences in lighting conditions (Mann–Whitney U = 63.5, p = 0.41), suggesting adequate robustness of the system under common daylight usage scenarios.
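The statistics reported above can be reproduced with standard tools such as SciPy; for transparency, the Python sketch below computes the Mann–Whitney U statistic from average ranks and a two-sided p-value using the normal approximation, together with the median and IQR. It omits the tie correction in the variance and uses Python's default ("exclusive") quartile method, so its values may differ slightly from those of other software; reporting min(U1, U2) is also a convention that varies between packages.

```python
import math
import statistics


def rank_with_ties(values):
    """1-based ranks, with tied values receiving their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def mann_whitney_u(x, y):
    """Return (U, two-sided p) via the normal approximation, no tie correction."""
    n1, n2 = len(x), len(y)
    ranks = rank_with_ties(list(x) + list(y))
    u1 = sum(ranks[:n1]) - n1 * (n1 + 1) / 2
    u = min(u1, n1 * n2 - u1)
    mu = n1 * n2 / 2
    sigma = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (u - mu) / sigma
    return u, math.erfc(abs(z) / math.sqrt(2))  # = 2 * (1 - Phi(|z|))


def median_iqr(values):
    """Median and interquartile range (Q3 - Q1)."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    return statistics.median(values), q3 - q1
```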

In terms of scalability, the current prototype successfully covers a full-scale university campus with 36 nodes and 58 bidirectional paths, proving its ability to manage multi-building environments. Expanding the system to larger contexts, such as airports or shopping malls, requires only retraining the CNNs with new signage samples and updating the graph connectivity matrix. No structural or algorithmic modifications are necessary.

Thus, the proposed approach demonstrates practical superiority due to its independence from additional infrastructure and high portability, as well as technical scalability, making it suitable for deployment in extensive and evolving environments.

4.1. Comparison with the Most Competitive Works

As summarized in Table 5, the proposed model was designed to be lightweight and fully on-device, in contrast with the pre-trained backbones, such as ResNet, EfficientNet, and Vision Transformers, employed in related studies. While these models achieve high accuracy on benchmark datasets such as GTSRB and BelgiumTS, they depend on large-scale training data and high-performance hardware, which limits their deployment on mobile devices for real-time AR navigation.

The proposed compact CNN and machine learning classifiers (Perceptron, K-Nearest Neighbors, and Bayesian) were optimized for inference directly on Android smartphones using TensorFlow Lite. They achieved 99.5% accuracy in the CNN stage and 100% accuracy in the binary classifier, with a total model size below 1 MB and a latency under 200 ms per frame. This enables continuous on-device inference without cloud connectivity or GPU acceleration.

We acknowledge that expanding the dataset and using more sophisticated pre-trained models, such as Vision Transformers, could improve recognition under challenging conditions, such as strong reflections or faded signage. However, such architectures would increase computational cost and energy consumption, contradicting the system's objective of being scalable, efficient, and infrastructure-free.

Future work will include experiments with compact pre-trained backbones, such as MobileNetV3, EfficientNet-Lite, and distilled vision transformers, to evaluate the trade-off between accuracy and on-device performance in large-scale environments, such as airports and shopping malls.

4.2. A Qualitative Usability Study

After the individual tests, a user study was conducted with 27 members of the UAM-A community, who answered a six-question questionnaire (see Table 6). The first three questions concern the use of the application and the conditions under which it was used, while the remaining three assess the general perception of this type of application.

The first question concerns the time of day at which the application was used. This is relevant because it determines the available lighting, which influences the accuracy of sign recognition: lighting causes reflections and, above all, affects the binary images, since these depend on a cut-off threshold. More than 88% of the users replied that they had performed the test in the morning or in the afternoon, as shown in Figure 13a.

The second question is the most important, because it determines whether the final goal of the application, getting the user to their destination, was achieved. More than 85% of the users succeeded, as shown in Figure 13b.

The third question concerns ease of use, which in many cases determines whether a product is adopted and is key to the user experience. More than 50% of users found the application easy or very easy to use, and only 7.4% found it difficult (nobody found it very difficult), as shown in Figure 13c.

The remaining questions show that all users consider this type of application useful, and 96.3% think that its usefulness could extend to other environments such as shopping malls or airports. In addition, 77.8% believe that this type of navigation makes wayfinding easier than other means such as GPS. These results are shown in Figure 13d–f.
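Because the questionnaire had 27 respondents, each reported percentage corresponds to a specific head count. The Python sketch below is a simple consistency check that recovers those counts, assuming the percentages were rounded to one decimal place; the question labels are shorthand introduced here. The 85.2% success rate corresponds to 23 of 27 users, which agrees with the four unsuccessful users discussed in Section 5.

```python
N_RESPONDENTS = 27  # number of questionnaire participants reported in the text


def implied_counts(percent, n=N_RESPONDENTS, decimals=1):
    """Integer counts k for which 100 * k / n rounds to the reported percentage."""
    return [k for k in range(n + 1) if round(100 * k / n, decimals) == percent]


reported = {"reached destination": 85.2, "useful elsewhere": 96.3,
            "easier than GPS": 77.8, "found it difficult": 7.4}
counts = {item: implied_counts(pct) for item, pct in reported.items()}
```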

5. Analysis of Results

The proposed system represents a distinctive contribution compared to previous AR navigation approaches in three fundamental aspects.

First, it eliminates the need for any external infrastructure such as Bluetooth beacons, QR codes, or LIDAR systems, relying exclusively on existing physical signage as natural visual markers. This makes the solution infrastructure-free, cost-effective, and immediately deployable in any environment that already has directional signage.

Second, the integration of a two-stage vision pipeline—combining a lightweight color-based binary classifier with a compact CNN trained on local data—achieves real-time performance directly on mobile devices without cloud computing. This architecture maintains high recognition accuracy (99.5% CNN, 100% BC) while keeping the total model size below 1 MB and ensuring low-latency inference (≈200 ms).

Third, the navigation framework provides a closed-loop AR interaction that continuously updates the route as each sign is recognized. The use of the Floyd–Warshall algorithm and node-based mapping enables dynamic route recalculation and scalability to multi-building environments.

These features together differentiate the proposed system from other AR navigation solutions that depend on pre-trained backbones or controlled environments. The work demonstrates a novel balance between computational efficiency, scalability, and real-world applicability, positioning it as an innovative contribution to infrastructure-free, mobile AR navigation.

Several works [19,20,21] report positive user acceptance of applications that provide AR-based navigation, as well as a preference for them over traditional 2D methods such as GPS; our results confirm these findings. AR guidance follows from the nature of the system itself, since it is easily integrated into vision-based systems (with or without markers); the only work found that achieves it using a different method is [22].

However, these implementations differ. Some works [22,23] require additional equipment, which in practice entails costs that can be considerable depending on the development, location, and size of the site. In contrast, the work presented here uses only existing infrastructure: signs. These elements are found in most scenarios of this type and stand out from their surroundings because of their size and color. They are also durable, since they are usually made of long-lasting materials, and they are placed in important and accessible locations. They therefore fully satisfy the criteria for natural markers proposed in [24], but without the problems reported in that work, since each sign used is unique.

In [19], sensitivity to lighting conditions is listed among the disadvantages of vision systems. This was confirmed by the difficulty our system had in classifying signs with reflections, although it did not represent a major problem, given the small number of signs affected and the relative ease of correcting it by aiming the camera from an angle at which the reflection does not cover the identifier.

Other possible lighting effects were also considered: the first question of the questionnaire recorded the time of use of the application, in order to estimate the lighting conditions and their impact on the final result (whether or not the destination was reached).

This analysis showed that three out of the four users who did not reach their destination carried out the tests in the morning, which could be due to the fact that they encountered signs with reflections, as these difficulties are mainly encountered at this time of day, when the greater intensity of the sun and the angle of incidence make reading more difficult. However, another explanation could be the difficulty in using the application, since only one of these users found it easy to use.

Only three users carried out the tests at dusk (two in the last light of the day and one at night), and all of them reached their destination. Although the sample is small, these data suggest that the lighting problem is of limited importance, for two reasons. First, most activities on university campuses take place in the morning and afternoon. Second, other potential deployment environments, such as shopping malls or airports (where 96.3% of users considered the application useful), have longer hours of activity but tend to be enclosed spaces with more uniform lighting, which would make it easier to reduce lighting errors associated with variability.

Thus, the lighting difficulties are limited to reflections, as initially described, and the signs that present this problem are Dir signs, whose acrylic cover causes the reflection. The reflection does not occur on all signs or at all times, nor does it always affect the identifier, so this difficulty is infrequent. In any case, this type of protective cover is unusual on signs in other scenarios, so most other deployments would not face this problem.

Another disadvantage of vision systems noted in [19] is their computational cost: they generally rely on environmental features such as corners or textures for recognition, which makes storing, scanning, and comparing them very costly in terms of memory and processing. This is not the case in the proposed system, owing to its methodology. First, the image is classified by sign type using color on a small, downscaled image to reduce the number of operations; downscaling does not change the result with respect to the original image because the color proportions are preserved. Then, the relevant information of the sign, its identifier, is extracted and reduced to an extremely simple representation: small binary images. This simplicity carries over to the CNN models (layers, neurons, parameters, computational resources for training, number of epochs, training time, etc.), whose final size in .tflite format is barely 950 KB, negligible relative to the capacity of current devices.
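The claim that downscaling preserves the color proportions holds exactly when the image is reduced by averaging non-overlapping blocks (area averaging) with an integer factor: every original pixel contributes with equal weight, so the channel mean is unchanged. The Python sketch below demonstrates this on one channel; the paper does not state which resampling method the app uses, and nearest-neighbor or bilinear resizing preserve the mean only approximately.

```python
def downscale_block_mean(img, f):
    """Average non-overlapping f x f blocks of a 2D list (one color channel).

    Assumes both dimensions are divisible by f.
    """
    h, w = len(img), len(img[0])
    return [[sum(img[i + di][j + dj] for di in range(f) for dj in range(f)) / (f * f)
             for j in range(0, w, f)]
            for i in range(0, h, f)]


def channel_mean(img):
    """Mean value of a 2D list, i.e., one component of the BC feature vector."""
    return sum(map(sum, img)) / (len(img) * len(img[0]))
```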

In addition to the lighting-related limitations described above, wayfinding performance can also be influenced by the relative distance or partial occlusion of signage. When users initiate navigation from a point located between nodes, or when nearby signs are temporarily out of view, the system must wait for the next valid sign capture before updating the user’s position. While this design ensures high localization accuracy at each confirmation step, it introduces short intervals during which the user’s position is not explicitly refreshed.

A practical strategy to mitigate this limitation is the incorporation of a lightweight dead-reckoning bridge between confirmed sign detections. By combining inertial sensor readings and heading information, the system could maintain an approximate user trajectory until the next sign is registered. Such an approach would not replace the vision-based localization but would serve as an intermediate estimator, particularly useful in long, open corridors or outdoor pathways where signs may be spaced farther apart.
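Such a bridge could take the form of basic pedestrian dead reckoning (PDR), sketched in Python below: each detected step advances the position estimate along the current heading, and a confirmed sign detection snaps the estimate back to the node's known position. Step detection, the 0.7 m default step length, and the local east/north coordinate frame are all hypothetical; the current system does not implement this.

```python
import math


def dead_reckon(start_xy, step_headings_deg, step_length_m=0.7):
    """Pedestrian dead reckoning: one position update per detected step.

    Headings are in degrees clockwise from north; positions are (east, north)
    in metres. The 0.7 m step length is a hypothetical default.
    """
    x, y = start_xy
    track = []
    for heading in step_headings_deg:
        rad = math.radians(heading)
        x += step_length_m * math.sin(rad)  # east component
        y += step_length_m * math.cos(rad)  # north component
        track.append((x, y))
    return track


def fuse(estimate_xy, confirmed_node_xy):
    """A confirmed sign detection replaces the drift-prone estimate."""
    return confirmed_node_xy if confirmed_node_xy is not None else estimate_xy
```

Because PDR error grows with distance walked, resetting at every recognized sign keeps the accumulated drift bounded by the spacing between signs.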

For mixed indoor–outdoor transitions, a coarse outdoor anchor—such as GPS-based initialization—may further stabilize the system by providing a global reference before fine-grained, sign-based localization resumes. Given that campus environments often combine building interiors with open plazas or walkways, this hybrid approach represents a promising extension that would enhance continuity of navigation without compromising the infrastructure-free nature of the solution.
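One simple form of such an anchor, sketched in Python below, assigns the user to the graph node closest to the GPS fix using the haversine great-circle distance, after which sign-based localization takes over. The node names and coordinates are hypothetical, and with typical consumer GPS accuracy of several metres this should be treated only as a coarse initial guess.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two (lat, lon) points in degrees."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def nearest_node(gps_fix, node_coords):
    """Name of the graph node closest to the GPS fix (hypothetical node table)."""
    lat, lon = gps_fix
    return min(node_coords, key=lambda n: haversine_m(lat, lon, *node_coords[n]))
```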

These considerations outline a clear path for future development and demonstrate how the current framework may be extended to address distant signage, occlusions, and intermediate starting locations, thereby increasing robustness in complex real-world scenarios.

Our findings reinforce the practical relevance of lightweight, markerless AR navigation in real environments, but they also invite a broader interpretation when viewed alongside the existing literature. Unlike most vision-based navigation systems, which depend on spatial anchors, Bluetooth beacons, or controlled indoor setups, our approach demonstrates that reliable wayfinding can be achieved using only environmental features that already exist on a campus. This aligns with recent efforts toward sustainable, infrastructure-free localization, while highlighting that high recognition accuracy and low-latency AR feedback can be obtained without resorting to heavy pre-trained backbones or specialized hardware.

Furthermore, the usability outcomes complement previous reports showing user preference for AR-guided navigation over conventional 2D maps. In our study, participants navigated freely across large outdoor areas under naturally varying lighting, yielding success rates comparable to those of more complex multimodal systems. This supports the notion that constrained computational pipelines, when carefully designed, can still deliver dependable real-world performance.

At the same time, the results point to important considerations for long-term scalability. Occlusions, partial deterioration of signs, and morning reflections impose real constraints that do not appear in synthetic benchmarks but are characteristic of authentic outdoor use. These observations echo the challenges identified in hybrid indoor–outdoor AR research and position our contribution as a practical, deployable alternative that intentionally embraces the imperfections of real environments. Consequently, the present work not only validates the feasibility of infrastructure-free AR navigation at campus scale but also outlines how this paradigm may evolve toward more adaptive, multimodal systems capable of handling sparse signage, mixed lighting, and extended travel corridors. This contextualized perspective situates our contribution within the broader trajectory of AR wayfinding research.

Although the test took place under partially controlled conditions on a university campus, it was designed to resemble real-world navigation scenarios. Participants used their own smartphones, moved through outdoor and semi-indoor areas under natural lighting, and interacted with the system independently, without researcher intervention while navigating. Consequently, the study reflects the environmental variables that users would encounter in everyday situations, such as lighting changes, reflections, and sign deterioration.

Thus, the usability outcomes (85.2% successful navigation and high perceived ease of use) are not laboratory artifacts but rather reflections of how the system performs in authentic contexts. The results validate the robustness of the proposed markerless AR approach in real campus conditions where factors such as sunlight, occlusions, and user motion dynamically influence performance. Future work will extend these evaluations to other public environments, such as shopping centers and airports, to generalize the findings beyond the academic setting.

Although the system demonstrated robust performance across the entire campus, it is important to acknowledge that the experimental evaluation could not be extended to complex public environments such as airports or shopping malls. Conducting tests in these facilities requires institutional permissions, security clearances, and strict compliance with privacy regulations that restrict image capture and software deployment. These operational constraints prevented the collection of signage samples or the execution of navigation experiments beyond the campus. Nonetheless, the proposed framework is technically extensible to such environments: adapting the system would primarily involve acquiring representative samples of the target signage and updating the corresponding graph topology. For this reason, large public venues are identified as a relevant direction for future research rather than part of the present experimental scope.

6. Conclusions

This paper presents a markerless visual navigation system for Android devices that allows mobility within the UAM-A campus.

The system consists of three main components. The first is a classifier that discriminates between two types of signs based on their color (RGB), using machine learning models (Perceptron, Bayes, CCP, KNN) for this task.

The second is a CNN that recognizes the identifier of each sign, which is associated with a specific location on campus. Each of these locations is a node of the campus graph; using the FW algorithm, the best route between nodes is determined, and the user is guided to their destination through AR and ABN, which constitute the third component of the system.

For both the machine learning and deep learning models, a dataset was created consisting of square color photographs of the signs of interest on the UAM-A campus, on which IP was performed according to the model being trained. Training was carried out on the free version of Google Colab, which reflects the low computational requirements of the solution.

The BC achieved an accuracy of 100% in all the tests performed, even with degraded signs, while the CNNs reached an accuracy above 99.5%; the conditions that prevent correct classification were also identified.

The tests carried out with users were satisfactory: 85.2% of the users reached their destination, and more than half of all users considered the application easy to use. All users considered this type of application useful, 96.3% believe that it would be useful in other environments, and more than 77% believe that the navigation process used makes the task easier than traditional methods such as GPS.