Based on this visual property, we propose a fast CU partitioning algorithm based on spatial saliency. In spatial saliency detection, edge regions where the spatial depth changes sharply are treated as high-saliency regions, and non-edge regions where the spatial depth varies smoothly are treated as low-saliency regions. From the extracted spatial saliency, we design a geometric visual perception factor (GVP) and use it to classify partially occupied CUs into visually sensitive CUs (VS Poc_CU), moderately visually sensitive CUs (MVS Poc_CU), and non-visually sensitive CUs (NVS Poc_CU). The optimal depth range for each sensitivity class is then obtained through statistical analysis. Finally, depths outside the optimal range are skipped in advance, while depths within the range are evaluated with the original RD cost calculation to determine the final optimal partition depth. This skips the redundant partitioning of partially occupied CUs and balances coding efficiency against image quality while preserving the visual quality perceived by the human eye, which substantially improves the overall coding speed.

3.2.1. Spatial Saliency Extraction Improved by the Occupancy Map

The main purpose of saliency extraction is to identify the regions of an image or video that attract the most visual attention. Traditional saliency extraction methods focus mainly on the key points that a viewer notices at first glance. In practice, however, key points are inextricably linked to their context, so a salient region should contain not only the main key points but also the relevant parts of the surrounding background.

Context-aware saliency extraction methods follow three core principles. First, locally, low-level features such as contrast and color are used to identify pixels that differ markedly from their surroundings; a pixel block is considered salient when its values differ significantly from those of other blocks. Second, globally, frequently occurring features are suppressed and unusual features are highlighted. Third, according to the laws of visual organization, salient regions should be clustered rather than isolated and dispersed. These principles imply that saliency extraction should operate on the pixel blocks around a pixel rather than on individual pixels, and should also account for their spatial distribution: a pixel block is more salient when it differs significantly from its surrounding blocks and the blocks similar to it lie nearby, and its saliency is weakened when those similar blocks are far away.

Based on the above analysis, we design an effective spatial saliency extraction method that, taking contextual information into account, suppresses spatially flat regions and highlights regions with high spatial contrast from both global and local perspectives. Following the three core principles, for a local image block $p_i$ centered at pixel $i$ with radius $r$, the degree of saliency is determined by its visual-feature difference from other image blocks $p_j$. The Euclidean distance between the normalized luminance components, $d_{\text{lum}}(p_i, p_j)$, represents the luminance difference between blocks $p_i$ and $p_j$. This value directly reflects saliency strength: the larger $d_{\text{lum}}(p_i, p_j)$ is, the more salient the center pixel $i$. Following the law of visual perception (similar blocks that are spatially close enhance saliency, whereas similar blocks that are far apart weaken it), the normalized Euclidean distance $d_{\text{pos}}(p_i, p_j)$ is introduced to measure the spatial distance between blocks $p_i$ and $p_j$. The dissimilarity between $p_i$ and $p_j$ is therefore expressed as

$$d(p_i, p_j) = \frac{d_{\text{lum}}(p_i, p_j)}{1 + c \cdot d_{\text{pos}}(p_i, p_j)}$$

where $c$ is a control coefficient set to 3. The dissimilarity between a pair of blocks is thus proportional to their luminance difference and decreases as their spatial distance increases.
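To make the dissimilarity measure concrete, the following is a minimal sketch assuming $d(p_i, p_j) = d_{\text{lum}}(p_i, p_j) / (1 + c \cdot d_{\text{pos}}(p_i, p_j))$ with $c = 3$, the form implied by the description above. The function names are hypothetical; blocks are passed as flat lists of luminance values already normalized to [0, 1], and normalizing the center distance by the larger image side is our assumption, not something the text specifies.

```python
import math
from typing import Sequence, Tuple

C_DEFAULT = 3.0  # control coefficient c (value given in the text)


def luminance_distance(p: Sequence[float], q: Sequence[float]) -> float:
    """Euclidean distance between two equally sized blocks of normalized luminance."""
    if len(p) != len(q):
        raise ValueError("blocks must contain the same number of pixels")
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))


def position_distance(ci: Tuple[float, float], cj: Tuple[float, float],
                      width: int, height: int) -> float:
    """Distance between block centers, normalized by the larger image side (assumption)."""
    return math.hypot(ci[0] - cj[0], ci[1] - cj[1]) / max(width, height)


def dissimilarity(d_lum: float, d_pos: float, c: float = C_DEFAULT) -> float:
    """d = d_lum / (1 + c * d_pos): grows with the luminance difference and
    shrinks as the two blocks move apart."""
    return d_lum / (1.0 + c * d_pos)
```

With $c = 3$, a block pair whose luminance distance is 0.6 has dissimilarity 0.6 when co-located but only 0.15 when their normalized distance is 1, which is how distant look-alike blocks lose influence.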

In saliency extraction, the difference of an image block $p_i$ can be assessed effectively by examining only its $N$ most similar blocks. The rationale is that if even the $N$ most similar blocks differ significantly from $p_i$, then $p_i$ differs significantly from the overall image content. Accordingly, for each block $p_i$, the $N$ blocks $\{q_k\}_{k=1}^{N}$ with the smallest dissimilarity $d(p_i, q_k)$ are selected first; when the dissimilarities between these blocks and $p_i$ are still large, the center pixel $i$ of $p_i$ is judged to be highly salient. This approach preserves the accuracy of the saliency assessment while substantially reducing computational complexity. The saliency value of pixel $i$ at scale $r$ is therefore

$$S_i^{r} = 1 - \exp\left\{-\frac{1}{N}\sum_{k=1}^{N} d\left(p_i^{r}, q_k^{r}\right)\right\}$$

where $\exp(\cdot)$ is the natural exponential function. Since $\exp(-x)$ maps $x \ge 0$ to $(0, 1]$, $S_i^{r}$ lies in $[0, 1)$: the larger the average dissimilarity, the closer $S_i^{r}$ is to 1 and the more salient pixel $i$; the smaller the average dissimilarity, the closer $S_i^{r}$ is to 0 and the less salient pixel $i$. Experimental statistics show that saliency extraction performs better with $N = 256$, and $r = 4$ is chosen as the basic processing unit.
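A minimal sketch of the per-pixel saliency step, assuming $S_i^r = 1 - \exp\{-\frac{1}{N}\sum_{k=1}^{N} d(p_i^r, q_k^r)\}$ over the $N$ smallest dissimilarities, as described above ($N = 256$ by default). The function name is hypothetical, and averaging over fewer blocks when fewer than $N$ are available is our assumption.

```python
import heapq
import math
from typing import Iterable


def pixel_saliency(dissimilarities: Iterable[float], n: int = 256) -> float:
    """S_i^r = 1 - exp(-(1/N) * sum of the N smallest d(p_i, q_k)).

    `dissimilarities` holds d(p_i, p_j) for every other block p_j at scale r.
    If fewer than n blocks are available, the mean is taken over those present
    (an assumption; the text does not cover this case).
    """
    if n <= 0:
        raise ValueError("n must be positive")
    nearest = heapq.nsmallest(n, dissimilarities)  # the N most similar blocks
    if not nearest:
        return 0.0
    mean_d = sum(nearest) / len(nearest)
    return 1.0 - math.exp(-mean_d)  # maps mean_d >= 0 into [0, 1)
```

Computed naively, every pixel compares its block against all other blocks, which is the per-pixel cost that motivates the down-sampling strategy in the next paragraph.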

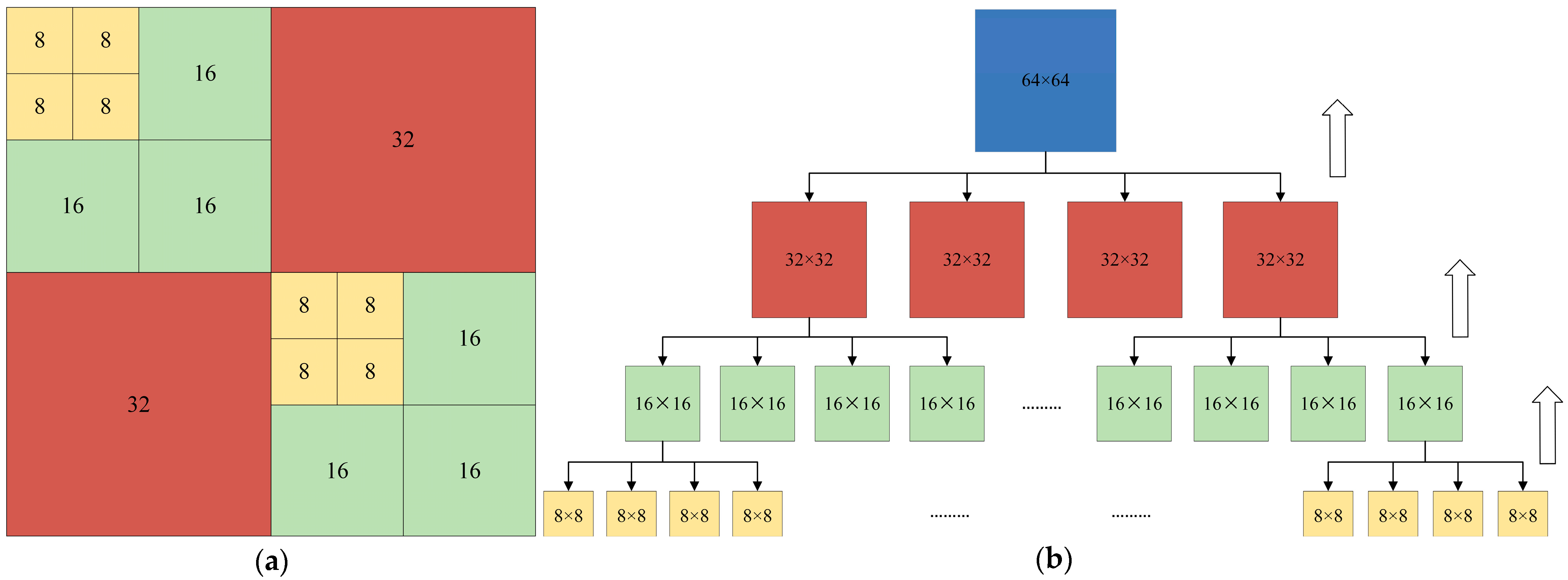

However, the above saliency extraction method requires complex computation for every pixel and therefore has high time complexity. We thus adopt a hierarchical strategy: the original image is first down-sampled by a factor of 4 to reduce the computational scale, saliency detection is performed in the low-resolution space, and the result is up-sampled back to the original resolution. This preserves the accuracy of the results while substantially improving efficiency. In addition, a geometry image is composed of multiple projected patches with large depth differences between them, so extracting saliency directly from the whole geometry image can degrade accuracy. We therefore introduce an occupancy-map-guided, patch-wise mechanism: the occupancy map is used to perform saliency extraction independently for each patch, so that the result for each patch depends only on its own occupied pixels and is unaffected by other patches and by unoccupied pixels. This makes the saliency extraction results more consistent with human visual perception and balances accuracy against computational efficiency.
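The hierarchical, occupancy-guided strategy can be sketched with three helpers. This is a sketch under stated assumptions: down-sampling by 4 is done by averaging only the occupied pixels in each 4×4 block, up-sampling is nearest-neighbour, and unoccupied pixels receive saliency 0. Because the occupancy map itself only marks occupied versus unoccupied pixels, we assume a per-pixel patch-index map (hypothetical input, 0 = unoccupied) from which one mask per patch is derived. The saliency computation run on each low-resolution patch is omitted.

```python
from typing import Dict, List, Tuple

Grid = List[List[float]]
Mask = List[List[int]]


def downsample_occupied(img: Grid, occ: Mask, f: int = 4) -> Tuple[Grid, Mask]:
    """Average only the occupied pixels inside each f x f block."""
    h, w = len(img), len(img[0])
    hs, ws = (h + f - 1) // f, (w + f - 1) // f
    small = [[0.0] * ws for _ in range(hs)]
    small_occ = [[0] * ws for _ in range(hs)]
    for by in range(hs):
        for bx in range(ws):
            vals = [img[y][x]
                    for y in range(by * f, min((by + 1) * f, h))
                    for x in range(bx * f, min((bx + 1) * f, w))
                    if occ[y][x]]
            if vals:  # blocks without occupied pixels stay 0 and unoccupied
                small[by][bx] = sum(vals) / len(vals)
                small_occ[by][bx] = 1
    return small, small_occ


def upsample_to_occupied(sal_small: Grid, occ: Mask, f: int = 4) -> Grid:
    """Nearest-neighbour up-sampling; unoccupied pixels get saliency 0."""
    h, w = len(occ), len(occ[0])
    return [[sal_small[y // f][x // f] if occ[y][x] else 0.0 for x in range(w)]
            for y in range(h)]


def patch_masks(patch_index: List[List[int]]) -> Dict[int, Mask]:
    """Split a per-pixel patch-index map (0 = unoccupied) into one mask per patch."""
    h, w = len(patch_index), len(patch_index[0])
    masks: Dict[int, Mask] = {}
    for y in range(h):
        for x in range(w):
            pid = patch_index[y][x]
            if pid:
                if pid not in masks:
                    masks[pid] = [[0] * w for _ in range(h)]
                masks[pid][y][x] = 1
    return masks
```

Running saliency on each patch mask separately keeps pixels of one patch from being compared against a neighbouring patch at a very different depth.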

3.2.2. Design of Geometric Visual Perception Factor, GVP

Since different CUs differ in visual importance, we design a geometric visual perception factor (GVP) to measure the visual importance of each partially occupied CU. Using the occupancy map, the mean saliency of the occupied pixels in each partially occupied CU is taken as the saliency value $V_x$ of that CU:

$$V_x = \frac{1}{N}\sum_{i \in \mathrm{CU}_x} S(i)$$

where $V_x$ is the saliency value of the $x$-th partially occupied CU and $S(i)$ is the saliency value of pixel $i$. Because no saliency extraction is performed for unoccupied pixels, their saliency value is 0, and $N$ is the number of occupied pixels in the current partially occupied CU.
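A minimal sketch of the CU-level saliency $V_x$ (the mean saliency over the occupied pixels of a partially occupied CU). We read "partially occupied" as "containing both occupied and unoccupied pixels"; that reading and the function names are assumptions. The CU is given by its top-left corner and size and is assumed to lie inside the frame.

```python
from typing import List, Optional

Grid = List[List[float]]
Mask = List[List[int]]


def is_partially_occupied(occ: Mask, x0: int, y0: int, size: int) -> bool:
    """True if the CU contains both occupied and unoccupied pixels."""
    vals = [occ[y][x] for y in range(y0, y0 + size) for x in range(x0, x0 + size)]
    return any(vals) and not all(vals)


def cu_saliency(sal: Grid, occ: Mask, x0: int, y0: int, size: int) -> Optional[float]:
    """V_x: mean saliency over the occupied pixels of the CU (None if none is occupied)."""
    total, n_occ = 0.0, 0
    for y in range(y0, y0 + size):
        for x in range(x0, x0 + size):
            if occ[y][x]:  # unoccupied pixels carry saliency 0 and are not counted
                total += sal[y][x]
                n_occ += 1
    return total / n_occ if n_occ else None
```

Dividing by the occupied-pixel count rather than the CU area keeps a CU with few occupied pixels from being diluted toward zero.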

Next, the mean saliency of all partially occupied CUs in the current frame is taken as the average visual importance $\bar{V}$ of the frame:

$$\bar{V} = \frac{1}{M}\sum_{x=1}^{M} V_x$$

where $M$ is the number of partially occupied CUs in the current frame.
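The frame-level average is a plain mean over the $M$ CU saliency values; the hypothetical helper below just makes the empty-frame case explicit.

```python
from typing import Sequence


def frame_average_saliency(cu_values: Sequence[float]) -> float:
    """Mean of V_x over the M partially occupied CUs of the current frame."""
    if not cu_values:
        raise ValueError("frame has no partially occupied CUs")
    return sum(cu_values) / len(cu_values)
```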

Finally, the geometric visual perception factor $\mathrm{GVP}_x$ of each partially occupied CU in the current frame is defined as

$$\mathrm{GVP}_x = \frac{k \cdot V_x + \bar{V}}{V_x + k \cdot \bar{V}}$$

where $k$ is the intensity factor; a larger $k$ gives $\mathrm{GVP}_x$ a wider range of values. The role of $k$ is to regulate how sensitively $\mathrm{GVP}_x$ responds to changes in $V_x/\bar{V}$, and the optimal $k$ should lie in the interval where this response is strongest, usually $1 < k < 3$. When $V_x = \bar{V}$, $\mathrm{GVP}_x$ equals 1 regardless of $k$. We analyzed the distribution of $V_x/\bar{V}$ and tested $k = 1.5, 2.0, 2.5, 3.0$, and other values. With $k = 2$, the best balance is achieved between avoiding the assignment of too many CUs to the ambiguous moderately sensitive category and ensuring effective differentiation of visual sensitivity; that is, $k = 2$ yields the best nonlinear mapping of $\mathrm{GVP}_x$ over the typical range of $V_x/\bar{V}$. Therefore, $k$ is set to 2 in this paper.

When $\mathrm{GVP}_x$ is less than 1, the $x$-th partially occupied CU belongs to a non-salient region, to which the human eye is not sensitive; when $\mathrm{GVP}_x$ is greater than 1, the CU belongs to a salient region, to which the human eye is sensitive. This saliency-based analysis effectively determines the visual importance of different regions in the point cloud video and supports the optimized design of the subsequent coding process.
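The sketch below assumes $\mathrm{GVP}_x = (k \cdot V_x + \bar{V}) / (V_x + k \cdot \bar{V})$ with $k = 2$. This rational form is consistent with the properties described above (GVP equals 1 when $V_x = \bar{V}$ for any $k$, is below 1 for less salient CUs and above 1 for more salient ones, and spans a wider range as $k$ grows), but treat it as an assumption rather than a confirmed definition. The function name is hypothetical.

```python
def gvp(v_x: float, v_bar: float, k: float = 2.0) -> float:
    """Assumed form: GVP_x = (k*V_x + V_bar) / (V_x + k*V_bar).

    Equals 1 when V_x == V_bar for any k, is < 1 for less salient CUs and
    > 1 for more salient ones, and stays within [1/k, k) for V_x >= 0.
    """
    if v_bar <= 0:
        raise ValueError("frame-average saliency must be positive")
    if k <= 1:
        raise ValueError("k must exceed 1, otherwise GVP is constant or inverted")
    return (k * v_x + v_bar) / (v_x + k * v_bar)
```

Under this form, the bounds $[1/k, k)$ make the role of $k$ explicit: with $k = 2$, GVP stays between 0.5 and 2, whereas a larger $k$ stretches the mapping.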

3.2.3. Early CU Partition Skip Algorithm Based on Spatial Saliency

- (1) Correlation analysis between GVP values and the partitioning of partially occupied CUs:

Regions with larger GVP values are visually sensitive and usually contain more information. During CU partitioning in video coding, such regions tend to be split into smaller CUs (i.e., larger partition depths) to improve prediction efficiency, whereas non-visually sensitive regions are usually coded as large CUs (i.e., smaller partition depths). GVP is therefore positively correlated with CU depth: the higher the GVP, the greater the partition depth of the coding unit.

Since the sensitivity of the human visual system (HVS) is positively correlated with CU partition depth, we use the GVP value to classify all partially occupied CUs and obtain the optimal depth range of each class statistically. The encoder can then skip partition depths that fall outside the optimal depth range, thereby saving coding time.
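How a depth range cuts the search can be sketched as a simplified quadtree RD search, assuming an HEVC-style quadtree in which depth 0 is a 64×64 CU and depth 3 an 8×8 CU. `rd_cost` is a hypothetical stand-in for the encoder's full RD evaluation of one CU (mode and PU decisions are hidden inside it). The unsplit CU is evaluated only when its depth lies in $[d_{min}, d_{max}]$, and splitting stops at $d_{max}$, so deeper depths are never evaluated.

```python
import math
from typing import Callable

# Hypothetical stand-in for the encoder's RD evaluation of one CU: (x, y, size) -> cost
RdCost = Callable[[int, int, int], float]


def best_partition_cost(x: int, y: int, size: int, depth: int,
                        d_min: int, d_max: int, rd_cost: RdCost,
                        max_depth: int = 3) -> float:
    """Quadtree RD search restricted to depths in [d_min, d_max].

    The unsplit CU is evaluated only if d_min <= depth <= d_max; splitting stops
    at d_max, so deeper depths are skipped without any RD computation.
    """
    if d_min > d_max:
        raise ValueError("empty depth range")
    best = math.inf
    if d_min <= depth <= d_max:
        best = rd_cost(x, y, size)
    if depth < min(d_max, max_depth):
        half = size // 2
        split_cost = sum(
            best_partition_cost(x + dx, y + dy, half, depth + 1,
                                d_min, d_max, rd_cost, max_depth)
            for dy in (0, half) for dx in (0, half))
        best = min(best, split_cost)
    return best
```

With the full range [0, 3], a 64×64 CU triggers 1 + 4 + 16 + 64 = 85 RD evaluations; restricting it to [0, 1] leaves only 5, which is where the time saving for low-sensitivity CUs comes from.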

- (2) Classification of partially occupied CUs based on GVP values:

Based on the GVP value, the partially occupied CUs in a video frame are classified into three categories: visually sensitive coding blocks (VS Poc_CU), moderately visually sensitive coding blocks (MVS Poc_CU), and non-visually sensitive coding blocks (NVS Poc_CU). The classifier is shown in the following equation:

V-PCC has a unique dual-layer structure: each dynamic point cloud frame generates a near-layer frame and a far-layer frame in the geometry video. Statistics of CU partition-mode probabilities show a significant difference between near-layer and far-layer frames under the same quantization parameter (QP). We therefore classify the partially occupied CUs of near-layer and far-layer frames separately, and obtain the optimal depth ranges by collecting the partition-depth statistics of each CU category for near-layer and far-layer frames separately.
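The three-way classification can be sketched as below. The threshold values of the classifier are not given in this text, so `t_low` and `t_high` are hypothetical parameters with no defaults, and treating the boundaries as inclusive on the upper class is our assumption. Because near-layer and far-layer CUs are classified separately, one would presumably keep a separate threshold pair per layer.

```python
from enum import Enum


class Sensitivity(Enum):
    NVS = "NVS Poc_CU"
    MVS = "MVS Poc_CU"
    VS = "VS Poc_CU"


def classify_poc_cu(gvp_value: float, t_low: float, t_high: float) -> Sensitivity:
    """Three-way split of a partially occupied CU by its GVP.

    t_low and t_high are hypothetical thresholds (not taken from the text);
    a separate pair per layer (near/far) is assumed.
    """
    if not t_low < t_high:
        raise ValueError("require t_low < t_high")
    if gvp_value >= t_high:
        return Sensitivity.VS
    if gvp_value >= t_low:
        return Sensitivity.MVS
    return Sensitivity.NVS
```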

- (3) Optimal depth range for partially occupied CUs:

The partially occupied CUs of geometric videos are classified using the above formula, and the partition-depth distribution of each category is analyzed statistically to obtain the optimal depth ranges of partially occupied CUs in geometric videos.

Table 1 gives the probability that partially occupied CUs of the three visual sensitivity levels are partitioned at each depth in near-layer frames. From Table 1, NVS Poc_CUs select depths 0 and 1 with an average probability of 98.21%, and every other depth has a probability below 2%, so NVS Poc_CUs tend to select depths 0 and 1. MVS Poc_CUs select depths 0, 1, and 2 with an average probability of 96.68% and depth 3 with an average probability of only 3.32%, so MVS Poc_CUs tend to select depths 0, 1, and 2. Although VS Poc_CUs select depth 0 with an average probability of only 2.15%, their depth range is set to 0, 1, 2, and 3, because near-layer frames of the geometric video strongly influence point cloud reconstruction quality and VS Poc_CUs strongly influence subjective perceptual quality.

Table 2 gives the depth distribution of the three categories of partially occupied CUs in far-layer frames of the geometric video. From Table 2, NVS Poc_CUs select depths 2 and 3 with average probabilities of only 1.66% and 1.71%, respectively, and thus tend to select depths 0 and 1. MVS Poc_CUs select depths 0 and 1 with an average probability of 96.30%, while every other depth has an average probability below 3%, so MVS Poc_CUs also tend to select depths 0 and 1. VS Poc_CUs select depths 0, 1, and 2 with an average probability of 97.70% and depth 3 with an average probability of only 2.30%, so they tend to select depths 0, 1, and 2.
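One way to formalize reading a depth range off a row of per-depth probabilities is to take the smallest upper depth whose cumulative probability reaches a coverage threshold. This is a sketch under an assumption: the coverage threshold is hypothetical, and the text does not state such a rule; it also overrides the statistics for near-layer VS Poc_CUs, keeping depths 0 to 3 for quality reasons.

```python
from typing import Sequence


def depth_upper_bound(probs: Sequence[float], coverage: float) -> int:
    """Smallest d_max such that P(depth <= d_max) >= coverage.

    probs[d] is the probability of choosing depth d; coverage is a
    hypothetical threshold, not a value taken from the text.
    """
    if not probs:
        raise ValueError("need at least one depth")
    total = 0.0
    for depth, p in enumerate(probs):
        total += p
        if total >= coverage:
            return depth
    return len(probs) - 1
```

The ranges in the text all start at depth 0, so only the upper bound needs to be derived this way.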

Since NVS Poc_CUs and MVS Poc_CUs in far-layer frames share the same optimal partition depths, and far-layer data only supplement near-layer data (so their partitioning is relatively simple and has less impact on reconstruction quality), the partially occupied CUs in far-layer frames of the geometric video are divided into only two categories: NVS Poc_CU and VS Poc_CU. Based on the above analysis, the optimal depth ranges of partially occupied CUs with different degrees of visual sensitivity in near-layer and far-layer frames of the geometric video are shown in Table 3 and Table 4, respectively.
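The resulting early-skip rule reduces to a lookup. The ranges below are transcribed from the analysis of Tables 1 and 2 in the text (Tables 3 and 4 themselves are not reproduced here), with far-layer MVS CUs merged into NVS; the string keys and function names are hypothetical.

```python
from typing import Dict, Tuple

# (layer, category) -> (d_min, d_max), following the analysis of Tables 1 and 2
DEPTH_RANGES: Dict[Tuple[str, str], Tuple[int, int]] = {
    ("near", "NVS"): (0, 1),
    ("near", "MVS"): (0, 2),
    ("near", "VS"): (0, 3),
    ("far", "NVS"): (0, 1),
    ("far", "VS"): (0, 2),
}


def depth_range(layer: str, category: str) -> Tuple[int, int]:
    """Look up the optimal depth range; far-layer MVS CUs are merged into NVS."""
    if layer == "far" and category == "MVS":
        category = "NVS"
    return DEPTH_RANGES[(layer, category)]


def should_evaluate_depth(depth: int, layer: str, category: str) -> bool:
    """True if the RD cost should be computed at this depth; False means skip early."""
    d_min, d_max = depth_range(layer, category)
    return d_min <= depth <= d_max
```

Only depths inside the returned range go through the original RD cost calculation; all others are skipped before any RD evaluation.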