1. Introduction

Deep learning (DL) comprises a set of machine learning (ML) methods based on artificial neural networks (ANN) that can learn complex representations directly from input data through representation learning [1,2]. DL techniques are employed in complex tasks where manual feature engineering is time-consuming or infeasible. Moreover, DL architectures can generalize, and pre-trained DL models can be reused across domains. For example, DL predictors have been adopted in numerous applications, including image processing [3], recommender systems [4], cyber-security [5], natural language processing [6], and multiple decision support systems (DSS) [7]. DSS can strongly influence and persuade individuals and organizations. This raises ethical concerns about trust, privacy, and accuracy [8], as well as legal questions about liability, transparency, and risk exposure [9]. Therefore, the principles and mechanisms underlying a DSS, as well as its outcomes, must be intelligible before its conclusions can be accepted.

Interpreting and explaining DL predictors (from both human [10] and virtual-agent [11,12] perspectives) has been widely investigated in the discipline named eXplainable Artificial Intelligence (XAI) [13]. XAI methods can be categorized into intrinsically interpretable (interpretable by design, e.g., decision trees [14], linear regression [15], and rule sets [15,16]) and post-hoc explainable (predictors trained on inputs–outputs, e.g., LIME [17], SHAPLEY [18], ECLAIRE [19], and CIU [20]).

Despite the significant advances in XAI, the quality of the explanations still calls for improvements. On the one hand, model-agnostic approaches do not provide highly accurate descriptions of the predictor’s internal behavior. Instead, they focus solely on surrogate models (intrinsically interpretable models that mimic the original models) and ignore the internal structure of the actual model. On the other hand, model-specific approaches are restricted to a few DL architectures, limiting their scope of explanation. DL predictors are connectionist models that store their knowledge through a distributed structure represented by weighted connections between neurons and non-linear activation functions. Decompositional rule-extraction methods are suitable for extracting the knowledge stored in DL predictors. However, their scope is limited to specific architectures, usually neural networks with one hidden layer, and they are computationally expensive (requiring several phases of pruning and training).

This paper presents a tool for Deep Explanations and Rule Extraction (DEXiRE). The tool approximates rules for DL models with any number of hidden layers. The proposed approach employs binary neural networks to induce Boolean functions in the hidden layers, generating intermediate rule sets for every hidden layer. In turn, a rule set is induced between the first hidden layer and the input layer. Finally, the complete rule set is obtained using inverse substitution on the intermediate rule sets and the first-layer rules. To reduce the size and complexity of the final rule set, the algorithm uses statistical tests and satisfiability algorithms to filter redundant, inconsistent, and infrequent rules.

The rest of the paper is organized as follows: Section 2 presents the state of the art on DL, XAI, and rule extraction from ANNs. Section 3 describes the methodology. Section 4 presents the experimental setup and the tests’ execution. Section 5 presents the experimental results. Section 6 discusses and evaluates the results and what we can learn from them. Finally, Section 7 concludes the paper.

2. State of the Art

The XAI contributions can be classified into two main branches: Explainable-by-design and Post-hoc Explanations [21,22].

As shown in Figure 1, on the one hand, decision trees, linear models, and rule-based approaches are considered explainable-by-design, meaning that the output is derived from the set of rules or thresholds within them. On the other hand, deep learning models, such as ANNs, require post-hoc explanations, meaning that a third-party approach is needed to explain the output. Post-hoc explanations can be divided into local explanations (e.g., feature importance) and global explanations (e.g., rule extraction). The distinction between global and local explanations refers to the perspective from which a model is interpreted. Global explanations focus on the model as a whole, while local explanations focus on a particular data point or observation [21].

This paper focuses on rule-extraction approaches, which elicit the hidden knowledge embedded in DL predictors and help explain the ANN outcomes. Extracted rules can be used for hazard mitigation, traceability, system stability and fault tolerance, operational verification and validation, and more [23,24]. Andrews et al. [25] propose a multidimensional taxonomy of rule-extraction methods for shallow ANN architectures. They distinguish (i) the decompositional approach, in which rule-extraction algorithms work at the neuron level; (ii) the pedagogical approach, in which the rule-extraction algorithm regards the neural network as a black box; and (iii) the eclectic approach, which combines the decompositional and pedagogical approaches.

Decompositional algorithms work at the neuron level by extracting rules from individual neurons and aggregating them for the whole network. In decompositional algorithms, every neuron and connection is transformed into a set of rules. A pioneer of decompositional algorithms is Fu [26,27], who proposed the KT algorithm and outlined the general procedure of setting a threshold for each neuron. Fu mapped the output of each neuron (in hidden or output layers) into a Boolean function according to a given threshold. Towell and Shavlik [28] extended the subset approach of Fu’s algorithm so that it can extract M-of-N rules from MLP models. However, finding all potential sets of links per neuron unit adds significant computational complexity. The authors in [29] extracted rules from a neural network with a single hidden layer and one linear output unit for regression problems. The activation function in the hidden layer is approximated by piece-wise linear functions. To reduce the complexity, the network is pruned before rule extraction. Despite these limitations, the inherent simplicity of decompositional algorithms makes them extremely useful for explaining the mechanics of rule extraction and for providing interpretability of ANN models at the level of individual hidden and output units.
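To illustrate why subset-based decompositional extraction becomes expensive, the following minimal sketch enumerates, for a single threshold neuron with binary (0/1) inputs, the minimal subsets of positively weighted inputs that guarantee activation even when all negatively weighted inputs are active. It is a simplified illustration in the spirit of subset search, not a reproduction of the KT algorithm; the function name, weights, and bias are hypothetical.

```python
from itertools import combinations


def minimal_firing_subsets(weights, bias):
    """Return minimal sets of positive-weight inputs that guarantee activation.

    A subset guarantees activation when its summed weights, plus the
    worst-case contribution of all negative weights and the bias, exceed 0.
    """
    positive = [i for i, w in enumerate(weights) if w > 0]
    worst_case = sum(w for w in weights if w < 0) + bias
    found = []
    # The search space grows exponentially with the number of inputs.
    for size in range(1, len(positive) + 1):
        for subset in combinations(positive, size):
            # Skip supersets of subsets that already guarantee activation.
            if any(set(prev) <= set(subset) for prev in found):
                continue
            if sum(weights[i] for i in subset) + worst_case > 0:
                found.append(subset)
    return found


# Hypothetical neuron with four binary inputs.
weights = [2.0, 1.5, 1.0, -1.0]
bias = -2.0
rules = minimal_firing_subsets(weights, bias)
# Each subset reads as a rule: IF all inputs in the subset are 1 THEN the neuron is active.
```

Each returned subset corresponds to one conjunctive rule for the neuron; the nested enumeration makes the cost of exploring all link combinations per neuron visible.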

Pedagogical rule-extraction algorithms consider the ANN as a black box, extracting rules based on the ANN’s inputs and outputs [30]; neurons and their connections are not subjected to analysis. The rules are extracted directly from the ANN’s output, which changes as the ANN’s input changes. Therefore, contrary to decompositional algorithms, the extracted rules remain intelligible even if the ANN’s internal structure is complex. Thrun [31] extracted symbolic rules from ANNs based on Validity Interval Analysis by propagating the activations through the network. This technique simulates the ANN by coupling the system’s input and output patterns. The HYPINV algorithm extracts rules from trained neural networks used in classification problems regardless of the structure of the network [32]. Model-agnostic methods, such as HYPINV, explain the predictions of arbitrary machine learning models independently of the implementation. They provide a way to explain predictors by considering models as black boxes. HYPINV approximates the network decision boundary by finding hyperplanes tangent to the decision hypersurface. The network decision boundary is then represented as rules. Sethi et al. [30] proposed a pedagogical algorithm named KDRuleEx for extracting rules in IF-THEN form. The method can deal with discrete and continuous features and handles non-binary inputs. The authors in [33] compared rule sets extracted from ensembles of interpretable neural networks (DIMLP) and concluded that rule sets extracted from neural networks have greater accuracy than those extracted from decision tree ensembles, but at a higher computational cost. The authors in [19] presented a new method to extract rules from deep neural networks with any number of hidden layers. This efficient algorithm induces intermediate rule sets between the hidden layers and the predicted labels and applies a process called clause-wise substitution to produce the final rule set.

The eclectic approach combines the decompositional and pedagogical approaches. Most recent contributions in XAI are based on eclectic approaches, such as that of Ebecken [34], which presents the RX algorithm to extract rules from trained MLPs in classification problems. RX (i) applies a clustering genetic algorithm to find clusters of hidden unit activation values, and (ii) generates classification rules describing these clusters w.r.t. the input. Local Interpretable Model-agnostic Explanations (LIME) [35] is a technique that explains how the input characteristics of an ML model affect its predictions. For instance, for image classification tasks, LIME seeks to find the region of an image (set of super-pixels) having the strongest association with a prediction label. LIME creates explanations by generating a new dataset of random perturbations (with their respective predictions) around the explained instance. Then it fits a weighted local surrogate model. This local model is usually simpler and inherently interpretable, such as a linear regression model. The first theoretical analysis of LIME was published in [36], confirming the crucial role of LIME but also demonstrating its limitation (i.e., a poor choice of parameters causes LIME to miss essential features).

In [37], the authors concluded that pruning algorithms based on Mutual Information and Significance could be more efficient than methods based on sensitivity and magnitude, as they consider the mutual dependency between the inputs of the network and the outputs of the hidden neurons. This comparative study covers six pruning algorithms of large variability and explains them in detail. However, only one of its four applications is a three-class problem, namely the Iris dataset (popular and generally used in creating and testing algorithms), which suggests that the results should be read critically.

Local Interpretable Visual Explanations (LIVE) [38] uses a surrogate model to predict the black box model’s local properties, generating the representative model’s coefficients. LIVE differs from LIME regarding local discoverability and how it handles interpretable inputs. LIVE does not create an interpretable input space by transforming input features but instead uses the original feature space. Since the shuffled data points correspond very closely to the original points, the similarity between them is measured using the identity kernel, while the original features are used as an interpretable input.

SHapley Additive exPlanations (SHAP) [39] is a game theory-inspired method that tries to improve interpretability by calculating the importance of each feature in individual predictions. First, the authors define a class of feature attribution methods, which integrates six methods, including LIME [35], DeepLIFT [40], and Layer-Wise Relevance Propagation [41]. Then, they propose the SHAP values as unified measures of feature importance. These values hold three desirable properties: local accuracy, missingness, and consistency. In the end, the authors present various methods for estimating SHAP values, demonstrating the superiority of these values in distinguishing various output classes and their better alignment with human intuitions compared to other methods [21].
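The game-theoretic quantity behind SHAP can be sketched for a toy setting by averaging each feature’s marginal contribution over all feature orderings. This is the exact Shapley value computation, which is only tractable for a handful of features; it is not the estimation procedure used by SHAP itself. The value function `toy_value` is a hypothetical stand-in for a model’s output when only a subset of features is known.

```python
from itertools import permutations


def shapley_values(value_fn, n_features):
    """Exact Shapley values: average marginal contribution over all orderings."""
    totals = [0.0] * n_features
    orderings = list(permutations(range(n_features)))
    for order in orderings:
        present = set()
        for feature in order:
            before = value_fn(frozenset(present))
            present.add(feature)
            after = value_fn(frozenset(present))
            totals[feature] += after - before
    return [total / len(orderings) for total in totals]


def toy_value(coalition):
    """Hypothetical model output when only the features in `coalition` are known."""
    score = 0.0
    if 0 in coalition:
        score += 2.0
    if 1 in coalition:
        score += 1.0
    if 0 in coalition and 1 in coalition:
        score += 1.0  # interaction between features 0 and 1
    return score  # feature 2 never influences the output


phi = shapley_values(toy_value, 3)
```

In this toy case the attributions sum to the difference between the full and the empty coalition (local accuracy), and the feature that never affects the output receives zero importance.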

The BreakDown method [40] is similar to SHAP [39] in trying to attribute the conditioned responses of a black-box model proportionally to the input features. However, unlike SHAP, the BreakDown method is greedy in terms of conditional responses, as it considers only one series of nested conditional responses. This method is theoretically not as good as SHAP, but it is faster to compute and more natural to interpret.

Comparing LIVE, LIME, SHAP, and BreakDown, no single method meets all requirements. Most methods are focused on a particular model or data type, or their scope is either local or global, but not both. Even though SHAP is nearly the most complete method, it is not without flaws. KernelSHAP, the kernel version of SHAP, like most permutation-based methods, does not consider feature dependencies and hence emphasizes unlikely data points. TreeSHAP, the tree version of SHAP, could solve this problem. However, it relies on conditionally expected predictions that may give non-intuitive feature importance values, as features that have no impact on the prediction could be given non-zero importance values [21].

The work in [42] shows that model trees can be used to build guidelines for learning problems, but this needs binary actions and does not apply to continuous action problems. Satisfiability (SAT) solvers are considered alternative methods, used mainly to construct decision trees and different constraint programming methods. SAT solvers such as [43,44] and constraint programming methods like [45,46] work mainly on classification problems with binary input features and do not apply to robotics applications. Several SAT solvers and constraint programming solvers do not scale well to large datasets, mainly because these methods add per-sample constraints to the dataset [47].

3. DEXiRE: Rationale, Methodology, Algorithm, and Performance Evaluation

This section presents a novel approach to extract rules from DL predictors (with any number of hidden layers) using binarization and Boolean rule induction. In particular, this study presents a rule-extraction approach to explain the inner behavior of DL classifiers—elaborating on the actual (and not a surrogated) model.

To pursue this approach, we have formulated (and validated) the following underlying hypotheses.

Hypothesis 1. The binarization of hidden layers in DL predictors allows the identification of neuron activation patterns characteristic of each class.

Hypothesis 2. It is possible to use binary hidden layers to induce Boolean functions that approximate the behavior of each hidden layer.

Hypothesis 3. Intermediate rule sets approximating the behavior of each hidden layer can be combined to produce a final (global) rule set that describes the overall behavior of DL predictors.

Hypothesis 4. The verification of Hypotheses 1–3 implies the existence of rule sets able to explain binary and multiclass classifications of DL predictors.

Figure 2 schematizes and highlights the relationship between the hypotheses, the evaluation metrics, and the algorithm’s goal.

3.1. Underlying Rationale and Design

DEXiRE is a decompositional rule-extraction algorithm designed to test Hypotheses 1–4 defined above. Overall, it combines activation analysis and binary neural networks to extract logic rules from deep neural networks (also referred to as rule induction). Figure 3 schematizes the working pipeline, and Algorithm 1 details the internal steps in pseudocode.

Decompositional rule extraction methods extract rules at a neuron/layer level and combine them in the final rule set. Most of these methods are limited to networks with a single hidden layer and require pruning phases that involve retraining the model before rule extraction [19,48]. As a result, rule sets produced by these methods are longer and more difficult to interpret than those produced by pedagogical methods. DEXiRE uses satisfiability (SAT) algorithms [49,50,51,52], coverage [53], and information gain to reduce the size of intermediate and final rule sets without applying pruning phases.

Binary neural networks (BNN) are characterized by using binary weights and activations, reducing the memory space required to represent quantities. BNNs are suitable for resource-limited devices and improve inference speed. In contrast, BNNs may lose accuracy and precision, in particular when both activations and weights are binary [54,55,56]. Based on Hypothesis 1, DEXiRE employs binary neural networks to discretize only the neuron activations, identifying active neurons and easing the induction of Boolean functions [57].
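As a minimal sketch of discretizing only the activations while keeping real-valued weights, the snippet below clips pre-activations with hard-tanh and maps them to −1/+1. The helper names and the convention of mapping a pre-activation of exactly zero to +1 are assumptions made for illustration; they are not taken from the DEXiRE implementation.

```python
def hard_tanh(x):
    """Clip x to the interval [-1, 1]."""
    return max(-1.0, min(1.0, x))


def binarize(x):
    """Sign-style binarization (assumed convention: zero maps to +1)."""
    return 1 if x >= 0 else -1


def binary_layer(inputs, weights, biases):
    """Dense layer with real-valued weights and binarized activations."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        pre_activation = sum(w * v for w, v in zip(neuron_weights, inputs)) + bias
        outputs.append(binarize(hard_tanh(pre_activation)))
    return outputs


# Hypothetical layer with two neurons and two inputs.
weights = [[0.5, -1.0], [1.0, 1.0]]
biases = [0.0, -0.5]
acts = binary_layer([1.0, 2.0], weights, biases)
```

Each output can then be read as a logic value (active or inactive neuron), which is what makes the subsequent induction of Boolean functions straightforward.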

DL predictors are able to approximate real-value and Boolean functions. Choi et al. [58] and Mhaskar et al. [59] have shown that it is possible to compile feedforward neural networks into logic circuits, interpreting hidden layers as logic gates. Based on the previous works and Hypotheses 1 and 2, DEXiRE identifies the activation pattern per class in every hidden layer and then induces Boolean functions between the relevant neurons identified in the activation pattern.

Rule induction algorithms take a set of observations and, based on their statistical properties and correlations between features, identify rules that split the input space into decision regions. Complex decision regions, such as those learned by DL predictors, produce complex rule sets that may be prone to overfitting. To avoid excessively complex rule sets, DEXiRE induces rule sets and prunes the rules with low coverage over the training samples. Once the intermediate rule sets are found, DEXiRE merges them into a final rule set, generated based on Hypotheses 3 and 4.
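The merging of intermediate rule sets into a feature-level rule set can be sketched as a substitution over rules in disjunctive normal form (DNF). In this simplified illustration a DNF is a list of conjunctions, each conjunction a list of terms; only positive (non-negated) terms are handled, and all rule contents and names are hypothetical rather than taken from the DEXiRE implementation.

```python
from itertools import product


def substitute(intermediate_dnf, neuron_rules):
    """Replace each hidden-neuron term by its feature-level DNF and expand to DNF."""
    final = []
    for conjunction in intermediate_dnf:
        # Every neuron contributes a list of alternative feature conjunctions.
        alternatives = [neuron_rules[neuron] for neuron in conjunction]
        for choice in product(*alternatives):
            merged = sorted({term for conj in choice for term in conj})
            if merged not in final:
                final.append(merged)
    return final


# Hypothetical intermediate rule: class is predicted IF h0 AND h1.
intermediate = [["h0", "h1"]]
# Hypothetical first-layer rules expressing each neuron in terms of input features.
neuron_rules = {
    "h0": [["x0 > 0.5"]],
    "h1": [["x1 <= 2.0"], ["x2 > 1.0"]],
}
final_rules = substitute(intermediate, neuron_rules)
```

The expansion shows why pruning matters: each disjunction in a neuron rule multiplies the number of conjunctions in the final rule set.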

Figure 2 conceptualizes the hypotheses (H1–H4) and their validation process through evaluation metrics (EM) w.r.t. DEXiRE’s goal (G).

Figure 2. Overall conceptual schematization from Hypotheses (H) to the goal (G) via validation with Evaluation Metrics (EM).


3.2. DEXiRE Algorithm, Methodology, and Performance Evaluation

The following definitions are introduced to formally describe DEXiRE.

Definition 1. An annotated training dataset is defined as the tuple $D = (X, Y)$, where $X \in \mathbb{R}^{m \times n}$ is a matrix with m training samples and n features and $Y$ is the label matrix that assigns a label l to each of the m samples.

Definition 2. A pretrained Deep Learning classification predictor is a function $f: X \rightarrow \hat{Y}$, which maps the input features in $X$ to the predicted labels $\hat{Y}$.

Definition 3. An inference rule is a logic function that draws a conclusion or conclusions by evaluating a premise. An inference rule can be expressed in an IF-THEN statement as follows:

$$\text{IF } \langle \text{premise} \rangle \text{ THEN } \langle \text{conclusion} \rangle$$

Premises can be composed of different terms (logic expressions) linked by logic operators (∧, ∨, ¬, ⊕). In this paper, rules are presented in IF-THEN form and premises are constructed from terms in disjunctive normal form (DNF).

Definition 4. Accuracy of a predictor is a measure of the quality of predictions, which compares the prediction of a model with the ground-truth labels, counting the fraction of correct predictions over the total number of predictions (Equation (3)).

$$\text{Accuracy} = \frac{\text{number of correct predictions}}{\text{total number of predictions}} \quad (3)$$

Accuracy is measured using different metrics like accuracy-score, F1-score, precision, recall, and other performance metrics, evaluating the predictions against the ground-truth labels on supervised datasets [60,61].

Definition 5. Fidelity is a metric that compares the predictions from the original black-box model and the predictions from an interpretable model, measuring how reliable the explanations are in reflecting the underlying model’s behavior (Equation (4)).

$$\text{Fidelity} = \frac{\text{number of interpretable-model predictions equal to the black-box predictions}}{\text{total number of predictions}} \quad (4)$$

Fidelity is measured in terms of accuracy, F1-score, and other similarity measures, using the predictions of the black-box model as the ground truth [60,61,62].

Definition 6. Coverage can be defined as the number of data instances that activate a rule over the total number of data instances that belong to the same class $c$, where $X_c$ is the subset of instances of $X$ that belong to class $c$ [53]. Let $r$ be a rule whose conclusion is $c$; coverage for this rule can be defined as follows:

$$\text{Coverage}(r) = \frac{|\{x \in X_c : x \text{ activates } r\}|}{|X_c|} \quad (5)$$

Definition 7. Rule length is a measure of the number of terms (atomic Boolean expressions) in a rule set.

Definition 8. An activation pattern is the set of most frequent discretized activation values for each hidden layer that characterizes each class.

DEXiRE Formal goal: Given a training set, as described in Definition 1, and a pretrained DL classifier, as described in Definition 2, DEXiRE aims to induce a set of rules in IF-THEN form, as described in Definition 3, which defines a logic model or theory that approximates the behavior of the DL predictor such that the logic model semantically entails (⊧) the conclusions (the predicted labels).
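The evaluation metrics of Definitions 4, 5, and 7 can be sketched in a few lines. The sketch assumes the plain accuracy-score variant of accuracy and fidelity (the definitions also allow F1-score and other measures) and represents a rule set as a list of rules, each a list of terms; all names and data are hypothetical.

```python
def accuracy(predictions, labels):
    """Fraction of predictions that match the reference labels."""
    correct = sum(1 for p, y in zip(predictions, labels) if p == y)
    return correct / len(labels)


def fidelity(rule_predictions, model_predictions):
    """Accuracy of the rule set when the black-box predictions are the reference."""
    return accuracy(rule_predictions, model_predictions)


def rule_length(rule_set):
    """Total number of terms over all rules in the rule set."""
    return sum(len(rule) for rule in rule_set)


# Hypothetical labels and predictions for four samples.
y_true = [0, 1, 1, 0]
y_model = [0, 1, 0, 0]   # black-box predictions
y_rules = [0, 1, 0, 1]   # rule-set predictions

acc = accuracy(y_rules, y_true)
fid = fidelity(y_rules, y_model)
length = rule_length([["x0 > 0.5", "x1 <= 2.0"], ["x2 > 1.0"]])
```

The example shows that a rule set can be faithful to the model (high fidelity) while inheriting the model’s mistakes (lower accuracy), which is why both metrics are reported.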

Figure 3 and Algorithm 1 describe the DEXiRE pipeline step by step. The steps (S) are numbered and described below. The tool requires a trained model and the training set, with m samples and n features.

Figure 3. DEXiRE pipeline describes the series of steps executed by the DEXiRE algorithm (Algorithm 1).


Algorithm 1 DEXiRE algorithm pseudocode.

Require: Pretrained DL predictor with hidden layers.
Require: Training feature matrix.
Require: Training labels matrix.
Ensure: Last layer of the predictor has as many neurons as classes.
1: ▹ Generates predictions from model [S1]
2: Clone the original model (architecture and weights)
3: for hidden layer i = 0,…,k do
4:
5:     ▹ Hidden layers are binarized, producing a binary model [S2]
6: end for
7: Fine-tune the binary model
8: for binary hidden layer i = 0,…,k do
9:     ▹ Binary patterns are extracted [S3]
10: end for
11: for each class do
12:
13:     for hidden layer i = 0,…,k do
14:         ▹ Activation pattern identification [S4]
15:     end for
16: end for
17: for each class do
18:
19:
20:
21:     for hidden layer i = 0,…,k do
22:         ▹ Intermediate rule set generation [S5]
23:         ▹ Intermediate rule set pruning [S6]
24:     end for
25:     ▹ Feature rule set generation [S7]
26:     ▹ Final rule set generation [S8]
27: end for
28:
29: ▹ Final rule-set evaluation [S9]
30:

The DEXiRE pipeline (Algorithm 1) consists of several steps described as follows:

- S1: Model predictions: Using the pre-trained DL predictor ($f$) and the training set ($X$), the predicted labels ($\hat{Y}$) are obtained, as described in line 1 of Algorithm 1 and in Equation (6).

$$\hat{Y} = f(X) \quad (6)$$

- S2: Binary model generation: To test Hypothesis 1, it is necessary to identify the most frequently active neurons per class. DEXiRE binarizes the activation functions of the hidden layers using the hard-tanh function, described in Equation (7) [63,64]. The hard-tanh activation function is commonly used in binary neural networks (BNN) to approximate the sign function, particularly during backpropagation [65].

$$\text{hardtanh}(x) = \max(-1, \min(1, x)) \quad (7)$$

The binarized predictor is created by cloning the original model (copying its architecture and weights), line 2 of Algorithm 1; then every hidden layer of the clone is replaced by a binary layer that maintains the same weights, lines 3–6 of Algorithm 1. Once the binary predictor has been created, it is fine-tuned (line 7 of Algorithm 1) to ensure high accuracy and fidelity.

- S3: Binary activations extraction: Binary activations are discrete approximations of logic values obtained from the hard-tanh activation function. The binary neural network is used to obtain the binary activations of hidden layer i for each data sample in the training set. The binary activation extraction process is executed for each binary hidden layer (see Algorithm 1, lines 8–10).

- S4: Activation pattern identification (per class): The binary activations for each binary hidden layer are obtained in the previous step. They are grouped by class, and the most frequent activation values are identified and stored in a dictionary. Then a probability ranking is constructed per class using the likelihood of activation values per class, which describes the activation pattern per class as the collection of frequently activated neurons per layer (Algorithm 1, lines 11–15). Step S4 aims to test Hypothesis 1 by identifying the activation pattern per class. Two example activation patterns are shown in Figure 4 and Figure 5.

- S5: Intermediate rules generation (per class): Intermediate rules are generated, per class, from the activation pattern. For each hidden layer and class, the most frequently activated neurons are selected, and a Boolean rule is induced using the Sum of Products (SoP) or OneR methods. Intermediate rules are Boolean and operate with logic values, interpreting binary activation value 1 as logic True and binary activation value −1 as logic False (Algorithm 1, lines 21–24). In this step, Hypothesis 2 is tested.

- S6: Intermediate rule sets pruning: The intermediate rule sets are pruned based on the probability ranking (likelihood of activation) of the terms that compose the Boolean function and by applying satisfiability (SAT) algorithms that check the consistency of the intermediate Boolean functions (Algorithm 1, line 23).

- S7: Features’ rule generation: The binary activation of the first hidden layer is used as the target label to induce the features’ rule set.

Explainable layers (ExpL) are hidden layers that are able, through their weights and activation, to explain the underlying neuron behavior [60]. ExpL layers can learn an activation threshold for the features, producing threshold-based partitions in the feature space according to the class label. However, the partitions on the input space are binary and cannot be extended to the multiclass problem. For this reason, ExpL as a method for feature rule induction is only suitable for the binary classification task. For the multiclass task, we employ algorithms such as decision trees, random forest, ID3, CART, C4.5, and OneR (Algorithm 1, line 25).

- S8: Final rule set generation: Per class, intermediate rule sets are merged with the initial_rule_set to produce the final_rule_set (Algorithm 1, line 26). In this step, Hypotheses 3 and 4 are tested. The substitution process carried out to produce the final rule set can be visualized in Figure 6.

- S9: Final rule-set evaluation: Once the final rule set has been generated, its predictions are evaluated against the true labels and against the DL predictor’s predictions to measure rule accuracy and fidelity, respectively (Algorithm 1, lines 28 and 30).
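The activation pattern identification of step S4 can be sketched as a per-class frequency ranking over the binary activations of one hidden layer. The data layout (one row of −1/+1 values per sample), the function name, and the tie-breaking by neuron index are assumptions made for illustration, not the DEXiRE implementation.

```python
from collections import Counter, defaultdict


def activation_patterns(binary_activations, predicted_labels):
    """Rank the neurons of one layer, per class, by their likelihood of being active (+1)."""
    n_neurons = len(binary_activations[0])
    active_counts = defaultdict(Counter)
    class_totals = Counter(predicted_labels)
    for row, label in zip(binary_activations, predicted_labels):
        for neuron, value in enumerate(row):
            if value == 1:
                active_counts[label][neuron] += 1
    patterns = {}
    for label, total in class_totals.items():
        ranking = [(neuron, active_counts[label][neuron] / total)
                   for neuron in range(n_neurons)]
        # Most likely active neurons first; ties broken by neuron index.
        ranking.sort(key=lambda item: (-item[1], item[0]))
        patterns[label] = ranking
    return patterns


# Hypothetical binary activations of a three-neuron layer for four samples.
acts = [[1, -1, 1], [1, -1, -1], [-1, 1, 1], [-1, 1, -1]]
labels = [0, 0, 1, 1]   # labels predicted by the DL model
patterns = activation_patterns(acts, labels)
```

In this toy example neuron 0 characterizes class 0 and neuron 1 characterizes class 1, while neuron 2 is equally likely to be active for both classes and therefore carries little class-specific information.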

6. Analysis and Discussion

This section elaborates on the obtained results and discusses the points characterizing DEXiRE and its results.

6.1. Analysis Scenario (SC1)

It is worth recalling that the length of the rules refers to the number of Boolean terms composing a rule set (see Definition 7). The average rule length obtained by DEXiRE in scenario SC1 is reported in Table 1 column 4. For the BCWD and Pima Indians diabetes datasets, the average rule length for DEXiRE’s rule sets is 4.8 and 8.4, respectively. Both results are shorter than those obtained by ECLAIRE (6.4 and 11.8, respectively). DEXiRE’s and ECLAIRE’s average rule lengths for the banknote dataset are mostly similar, with a value of 13.8.

On the one hand, rule length overlaps can be due to the influence of the dataset characteristics and the model’s internal structure (see Section 6.3.1). On the other hand, the difference (if any) in the average rule length stems from DEXiRE’s use of binarized hidden layers, explainable layers, and related activation patterns. In contrast, ECLAIRE uses continuous activations and decision trees to induce the feature and intermediate rule sets. DEXiRE and ECLAIRE reach the same objective (generating a rule set from a DL predictor) with different approaches, yet obtain comparable performance. For example, from an accuracy/fidelity point of view, DEXiRE noticeably outperforms ECLAIRE on the Pima Indians diabetes dataset, matches ECLAIRE on the banknote dataset, and noticeably underperforms on BCWD (see Table 1, columns 3 and 4). However, concerning the execution time, DEXiRE outperforms ECLAIRE on the BCWD (the best-case scenario) and Pima Indians datasets and is mostly identical on the banknote dataset.

Although the results obtained by DEXiRE and ECLAIRE are comparable, DEXiRE is able to provide additional insights about the internal decision process carried out by the neurons in the DL predictor (i.e., identify those neurons and their output values highly related with the predicted output). This benefit is provided due to the binarization process and activation pattern generated per class within DEXiRE.

6.2. Analysis Scenario (SC2)

Table 2 column 4 shows the average rule length obtained by DEXiRE in the scenario SC2.

It is worth recalling that DEXiRE controls the number of terms in each rule set (intermediate and final) by setting a minimum coverage threshold (Th) percentage and filtering out all the rules with coverage (Definition 6) below the threshold. In the digits dataset, the rule length is shorter than the one produced by ECLAIRE. However, the accuracy and fidelity obtained by DEXiRE are very poor. To approach the performance obtained by ECLAIRE, we have decreased the Th value. In particular, with a Th value of 25%, accuracy and fidelity still fall short of ECLAIRE’s performance, while the average rule length improves, being 57% shorter than that of ECLAIRE.
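The coverage-based filtering can be sketched as follows, using the coverage notion of Definition 6. Rules are represented as lists of predicates over a sample, and whether the threshold comparison is inclusive is an assumption of this sketch; the samples, rules, and function names are hypothetical.

```python
def coverage(rule, samples, labels, target_class):
    """Fraction of the samples of target_class for which every term of the rule holds."""
    in_class = [s for s, y in zip(samples, labels) if y == target_class]
    if not in_class:
        return 0.0
    fired = sum(1 for s in in_class if all(term(s) for term in rule))
    return fired / len(in_class)


def prune_by_coverage(rules, samples, labels, target_class, threshold):
    """Keep only the rules whose coverage reaches the threshold (inclusive, by assumption)."""
    return [rule for rule in rules
            if coverage(rule, samples, labels, target_class) >= threshold]


# Hypothetical samples (three of class 1, one of class 0).
samples = [
    {"x0": 0.9, "x1": 1.0},
    {"x0": 0.8, "x1": 3.0},
    {"x0": 0.7, "x1": 0.5},
    {"x0": 0.1, "x1": 0.2},
]
labels = [1, 1, 1, 0]

rule_a = [lambda s: s["x0"] > 0.5]                            # one term
rule_b = [lambda s: s["x0"] > 0.5, lambda s: s["x1"] > 2.0]   # two terms

kept = prune_by_coverage([rule_a, rule_b], samples, labels, target_class=1, threshold=0.5)
```

Raising the threshold removes more low-coverage rules and shortens the rule set, while lowering it retains more specific rules, which mirrors the trade-off between rule length and accuracy/fidelity discussed above.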

In the case of the Iris dataset, the average rule length obtained by DEXiRE is shorter than the one obtained by ECLAIRE, whereas in the case of the wine quality dataset, ECLAIRE’s average rule length is noticeably shorter than DEXiRE’s. The difference (if any) in the average rule length depends on two main factors: (i) the interrelation between the dataset characteristics and the DL predictor’s internal structure and (ii) the differences in the rule-extraction algorithms’ approaches. While DEXiRE employs binarized hidden layers and related activation patterns to extract rules, ECLAIRE uses continuous activations and decision trees to induce the feature, intermediate, and final rule sets.

Another benefit of DEXiRE’s approach is seen in the overall execution time. DEXiRE outperforms ECLAIRE on all datasets, including the Iris dataset (the best-case scenario) and the wine quality dataset. Additionally, in this scenario, the standard deviation of the execution time is low for DEXiRE (never exceeding 9 ms). Low variance levels are due to the use of the activation pattern to identify the most relevant neurons in each hidden layer, thus reducing the time for the generation of the intermediate datasets.

In this scenario, DEXiRE and ECLAIRE exhibit comparable results in terms of accuracy and fidelity. DEXiRE shows a tendency to produce shorter rule sets and shorter execution times due to the binarization process and the activation pattern generated per class. In addition, DEXiRE is able to provide insights about the internal decision process carried out by the neurons in the DL predictor and to filter out the neurons that are not relevant to that decision process.

6.3. Discussion

This section discusses the results obtained w.r.t. the Hypotheses 1–4 initially formulated.

6.3.1. Data and Models Interdependency

DEXiRE can produce rule sets of similar length to ECLAIRE’s, with (partially) overlapping terms. Such overlaps can occur since, although they use different procedures, both rely on the same inputs (the model and the training dataset) to induce the set of rules.

The final rule sets are logical formulations containing (the most relevant) data features integrating the intermediate rule sets. Both ECLAIRE and DEXiRE have similar procedures to induce (predict) intermediate rule sets, relying on the data features. DEXiRE can employ one of two methods (ExpL or decision trees) to generate the feature rule set (Step S7 in Section 3). Similarly, ECLAIRE employs decision trees to induce the features’ rule set.

To understand the effect of the input features on the final rule set, let us analyze the case of the BCWD dataset. This dataset has 30 input features. Although the final rule sets generated by ECLAIRE and DEXiRE differ in the number of terms and the composition of rules, most of them contain the feature concave_points_worst. This tendency raises a question: why is the feature concave_points_worst present in the majority of rule sets produced by different rule-extraction algorithms?

To answer this question, we must analyze the behavior of the feature concave_points_worst w.r.t the DL model’s preconditions and the other features in the dataset.

Figure 8 shows the feature ranking according to variance per class w.r.t. the DL predictor’s labels. The features in the upper area present lower variance per class and higher information gain value (see Table A8 and Table A9). Thus, there is a high probability that this feature is selected by the rule generation algorithms (ExpL, decision trees) and included in the final rule set.

Finally, we can infer that features with a high discrimination capability have high chances (yet no certainty) of being included in the final rule set, regardless of the rule induction algorithm employed—explaining the (partial) overlapping obtained in some datasets.
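The relation between per-class variance, information gain, and the chance of a feature being selected can be illustrated with a minimal sketch. It is not the ranking procedure behind Figure 8: the toy values, the threshold of 0.5, and the helper names `entropy`, `information_gain`, and `per_class_variance` are assumptions made only for illustration.

```python
import math
from collections import Counter
from statistics import pvariance


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    total = len(labels)
    return -sum((c / total) * math.log2(c / total) for c in Counter(labels).values())


def information_gain(values, labels, threshold):
    """Entropy reduction obtained by splitting on `value <= threshold`."""
    left = [y for x, y in zip(values, labels) if x <= threshold]
    right = [y for x, y in zip(values, labels) if x > threshold]
    if not left or not right:
        return 0.0  # a split that leaves one side empty separates nothing
    weighted = (len(left) * entropy(left) + len(right) * entropy(right)) / len(labels)
    return entropy(labels) - weighted


def per_class_variance(values, labels):
    """Population variance of the feature inside each predicted class."""
    return {
        c: pvariance([x for x, y in zip(values, labels) if y == c])
        for c in sorted(set(labels))
    }


# Hypothetical toy data (not taken from BCWD): labels are the predictor's outputs.
labels = [0, 0, 0, 1, 1, 1]
separable = [0.10, 0.12, 0.11, 0.90, 0.88, 0.91]  # low variance inside each class
noisy = [0.10, 0.90, 0.50, 0.20, 0.80, 0.50]      # high variance inside each class

gain_separable = information_gain(separable, labels, threshold=0.5)
gain_noisy = information_gain(noisy, labels, threshold=0.5)
```

In this toy case the feature with low per-class variance yields the larger information gain, so a greedy rule inducer would pick it first, which mirrors the argument made for concave_points_worst.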

Concerning the influence of the DL predictor’s internal structure (architecture, activations, weights) on the final rule set, it is worth noticing that both DEXiRE and ECLAIRE are decompositional rule-extraction algorithms. This implies that they use the DL predictor’s internal structure to induce rules and identify the neurons responsible for the decisions (verifying Hypotheses 1 and 2).

In conclusion, DL predictors and datasets are essential inputs for rule-extraction algorithms. For this reason, their composition and structure profoundly influence the final rule sets. Finally, it is worth highlighting the interdependence between the data and the DL predictors: the latter (being data-driven models) learn from the former, adjusting their structure and parameters to fit the task.

6.3.2. The Role of Binarization

Extracting rules from hidden layers in neural networks is still an open challenge. The hidden layer’s activations are continuous and non-linear, which is an excellent characteristic for learning parameters with the back-propagation algorithm. However, it makes their output values more difficult to interpret w.r.t. the inputs. To solve this challenge, several decompositional rule-extraction algorithms approximate continuous activations through discrete segments to induce rules on each segment [29]. Nevertheless, these approaches have some limitations. For example, they tend to generate long rule sets (with many terms/rules, and thus not human-readable) and require several phases of pruning and re-training. Moreover, most decompositional approaches are limited to one or a predefined number of hidden layers. DEXiRE overcomes these limitations and simplifies the identification of frequent activation patterns (verifying Hypotheses 1–3).

In particular, Hypothesis 1 states that it is possible to identify activation patterns more simply by binarizing the hidden layers of a DL predictor. To test Hypothesis 1, we binarized a trained DL predictor and then extracted the binary activations per hidden layer for each instance in the training set. Finally, using the binary activations, we calculated the kernel density estimation (KDE) for each neuron and visualized it in Figure 9 and Figure 10.

Figure 9 shows the KDE for the first hidden binary layer (hidden layer 0) discriminated by class (class 0 and 1). Moreover, we can observe the high level of polarization present in neurons h_0_0, h_0_7, and h_0_8. Empty plots in this figure indicate neurons with zero variance (the same value for all the instances). Neurons with zero variance do not provide discriminative information, while highly polarized neurons become effective discriminators. Thus, the polarization induced by binary hidden layers and binary neural networks (BNN) allows the identification of activation patterns as described in Algorithm 1.

Figure 10 shows the KDE for the second hidden binary layer (hidden layer 1) discriminated by class (class 0 and 1). Similar to the previous layer, we can identify the relevant neurons in the decision of the classifier (neuron h_1_0) and discard those with zero variance. It is worth noticing that the number of discriminative neurons decreases, and the polarization increases as we approach the output layer. Thus, we can consider Hypotheses 2 and 3 validated. Additional KDE analysis for different datasets and DL predictors can be found in Appendix C.
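The distinction between zero-variance and polarized neurons can be sketched in a few lines. The sketch below does not compute a KDE; as a simplifying assumption, it summarizes polarization as the gap between the per-class mean activations of a neuron, and the function name `neuron_report` and the toy activations are hypothetical.

```python
from statistics import mean


def neuron_report(activations, labels):
    """Summarize each neuron of one binarized hidden layer.

    activations: list of rows, one row of +1/-1 outputs per instance.
    Returns {neuron_index: None} for zero-variance neurons, otherwise the gap
    between the largest and smallest per-class mean activation (0 to 2).
    """
    classes = sorted(set(labels))
    report = {}
    for j in range(len(activations[0])):
        column = [row[j] for row in activations]
        if len(set(column)) == 1:
            report[j] = None  # same value for all instances: no discriminative information
            continue
        class_means = [
            mean([a for a, y in zip(column, labels) if y == c]) for c in classes
        ]
        report[j] = max(class_means) - min(class_means)
    return report


# Hypothetical binary activations of a three-neuron layer for four instances.
activations = [
    [1, -1, 1],
    [1, -1, 1],
    [1, 1, -1],
    [1, 1, 1],
]
labels = [0, 0, 1, 1]
report = neuron_report(activations, labels)
```

Here neuron 0 would correspond to an empty plot (zero variance), neuron 1 is fully polarized (gap of 2), and neuron 2 is only partially polarized (gap of 1).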

6.3.3. From Neurons to Decisions: Activation Pattern

As discussed above, binary activations in the hidden layers generate binary patterns representing the input examples transformed by each hidden layer. From those binary patterns, we can filter out the neurons with zero variance because they do not contribute discriminative information. With the filtered set of binary patterns, we can identify the most frequently active neurons and their activation values per class, generating an activation pattern.

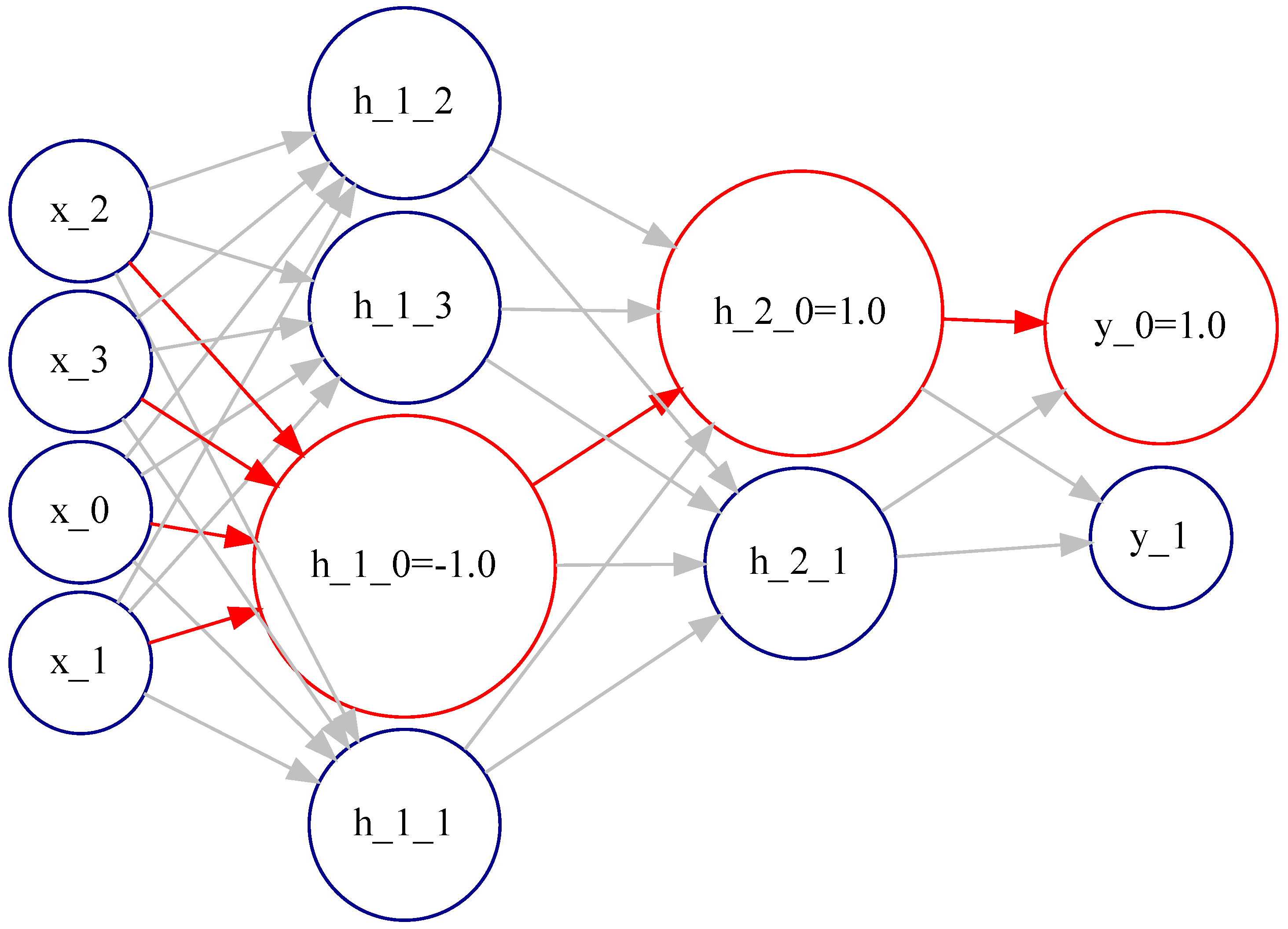

Figure 11 (automatically generated) shows the activation pattern for class 0 in the banknote dataset. The activation pattern is highlighted in red and involves neurons: h_1_0 with activation value

, h_2_0 with activation value 1, and the output neuron y_0_1 with activation value 1. The activation pattern reveals layer by layer the most likely decisions the network is making to reach the final decision.

Figure 12 describes the activation pattern for class 1 in the banknote dataset. The activation pattern is highlighted in red and involves neurons: h_1_1 with activation value 1, h_2_0 with activation value -1, and the output neuron y_1_1 with activation value 1. As for class 0, the activation pattern reveals layer by layer the most likely decisions the network makes to reach the final decision. Comparing the activation patterns for class 0 and class 1, we can gain insight into the internal decision process carried out by the binary DL predictor. For example, we know that the decision relies on neurons h_1_0 and h_1_1 in the first hidden layer. In the second hidden layer, the decision is taken by neuron h_2_0, which, acting as a switch, selects the output neuron to activate.

Finally, we can infer that the activation pattern is a valuable explainable artifact that complements the rule sets and provides additional insight into the internal decision process carried out by the binary DL predictor.
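A simplified reading of the per-layer step described in this section is sketched below: zero-variance neurons are dropped, and for each class the neuron whose most common binary value covers the largest share of that class is kept. This is not Algorithm 1 itself; the function name `activation_pattern`, the tie-breaking rule (first neuron wins), and the toy activations are assumptions.

```python
from collections import Counter


def activation_pattern(layer_activations, labels):
    """Return {class: (neuron_index, value, frequency)} for one binarized layer."""
    n_neurons = len(layer_activations[0])
    # Keep only neurons that take more than one value over the whole dataset.
    informative = [
        j for j in range(n_neurons)
        if len({row[j] for row in layer_activations}) > 1
    ]
    pattern = {}
    for c in sorted(set(labels)):
        rows = [row for row, y in zip(layer_activations, labels) if y == c]
        best = None
        for j in informative:
            # Most common binary value of neuron j among instances of class c.
            value, count = Counter(row[j] for row in rows).most_common(1)[0]
            candidate = (j, value, count / len(rows))
            if best is None or candidate[2] > best[2]:
                best = candidate
        pattern[c] = best
    return pattern


# Hypothetical binary activations: neuron 2 is constant and gets filtered out.
layer = [
    [1, -1, 1], [1, 1, 1], [1, -1, 1],   # instances predicted as class 0
    [-1, 1, 1], [1, 1, 1], [-1, 1, 1],   # instances predicted as class 1
]
labels = [0, 0, 0, 1, 1, 1]
pattern = activation_pattern(layer, labels)
```

In the toy data, class 0 is characterized by neuron 0 and class 1 by neuron 1, which is analogous to the roles of h_1_0 and h_1_1 in the banknote example.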

6.3.4. Rationales behind Rule Induction

This work employs two methods to induce the feature rules: explainable layers (ExpL) for binary classification tasks (SC1) and decision trees for multiclass classification (SC2).

In scenario SC1, an ExpL is added between the input layer and the first hidden layer. After fine-tuning with the rest of the layers frozen, the weights of the ExpL encode one threshold value per feature that splits the input space in two, indicating the point of maximum information gain for that feature. Using the learned threshold values, it is possible to induce simple rules per feature that compare the feature value against its threshold. Combining these simple rules with the intermediate rule set yields the final rule set [60]. ExpL layers are suitable for binary classification tasks, given their ability to split the space into binary buckets with complementary rules.
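How a single threshold gives rise to two complementary rules can be sketched as follows. The sketch takes the threshold as given and does not reproduce how ExpL learns it; the function name `induce_threshold_rules`, the feature name `x0`, the toy values, and the threshold of 0.0 are all hypothetical.

```python
from collections import Counter


def induce_threshold_rules(feature, values, labels, threshold):
    """Build the complementary rules implied by one threshold on one feature.

    Each side of the threshold is assigned the majority class of the
    instances that fall on that side.
    """
    below = Counter(y for x, y in zip(values, labels) if x <= threshold)
    above = Counter(y for x, y in zip(values, labels) if x > threshold)
    rules = []
    if below:
        rules.append((f"{feature} <= {threshold}", below.most_common(1)[0][0]))
    if above:
        rules.append((f"{feature} > {threshold}", above.most_common(1)[0][0]))
    return rules


# Hypothetical feature values and predictor labels for a binary task.
values = [-3.1, -1.2, -0.4, 0.9, 2.5, 3.8]
labels = [1, 1, 1, 0, 0, 0]
rules = induce_threshold_rules("x0", values, labels, threshold=0.0)
```

The two resulting rules partition the feature axis into complementary buckets, which is why this construction fits binary tasks naturally.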

Figure 13 shows the density function discriminated by class for the feature variance_of_the_wavelet_transform (class 0 in blue and class 1 in orange), and the learned threshold value (shown as a vertical red line) that splits the input space for class 0 and 1.

In the multiclass case, and given its binary rule induction characteristic, ExpL must employ a series of One-vs-Rest setups. However, One-vs-Rest setups imply a high computational cost because the number of models to train grows with the number of classes. Equation (9) provides the number of models to train in a One-vs-Rest setup. Applying this formula to a dataset with three target classes, the number of models required to induce the rule set is 3. However, if we extend the target classes to 10 (Digits dataset), the number of models to train rises to 45. Additionally, ExpL produces binary splits per class (One-vs-Rest), which are not expressive enough to model the complex decision boundaries of multiclass models, producing rule sets with low accuracy in multiclass tasks. For these reasons, in scenario SC2, we employed the decision tree algorithm (C4.5) as the feature rule inductor. Finally, through SC1 and SC2, we have shown that DEXiRE can be applied to both binary and multiclass classification, which verifies Hypothesis 4.
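Equation (9) is not reproduced in this section, so the following check rests on an assumption: both counts quoted above (3 models for three classes, 45 for ten) are consistent with the pairwise count n(n-1)/2. The helper name `pairwise_model_count` is hypothetical.

```python
from math import comb


def pairwise_model_count(n_classes):
    """Number of unordered class pairs, n * (n - 1) / 2 (assumed form of Eq. 9)."""
    return comb(n_classes, 2)


three_class_models = pairwise_model_count(3)   # three-class dataset
ten_class_models = pairwise_model_count(10)    # Digits dataset, 10 classes
```

Note that n(n-1)/2 is the count usually associated with pairwise (one-vs-one) decompositions, whereas a conventional one-vs-rest decomposition trains one model per class, so the exact form of Equation (9) should be checked against its original definition.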

6.3.5. Beyond Rule Sets

DEXiRE provides characteristics that go beyond other rule-extraction algorithms. In particular:

Tuneable threshold rule set granularity: DEXiRE provides a fine granularity control over the number of terms in the intermediate and final rule sets. While algorithms such as ECLAIRE control term expansion indirectly through decision tree parameters, DEXiRE provides fine control over the number of terms and the number of clauses through the coverage threshold (Th) parameter. It filters out terms and clauses with low coverage or those conflicting with other rules with higher coverage or confidence values.

Understand per class discriminative neurons: As discussed above, using binary activations and identifying the activation pattern allow us to obtain clues about the predictor’s decision process. It is also possible to identify those neurons discriminating by class and their value and concentrate the analysis on them, removing those neurons with zero variance.

Activation pattern supports knowledge injection: The activation pattern allows identifying the most frequently activated binary neurons per class in each hidden layer along with their activation values. This information helps to describe the DL estimator’s internal decision process. Additionally, the activation pattern can be used to inject symbolic knowledge into the neural network [69, 70, 71]. Symbolic artificial intelligence (AI) relies on high-level knowledge usually expressed as rules, logic predicates, or ontologies [22]. On the other hand, subsymbolic AI is data-driven, as in the case of DL predictors. Nevertheless, subsymbolic and symbolic AI paradigms can be combined following Hypothesis 2, which states that binary neurons behave as Boolean logic functions. Therefore, first-order logic predicates can be embedded into a Boolean function and then integrated as a binary neuron or layer into the binary DL predictor, with the additional advantage that both symbolic and subsymbolic neurons can co-exist and interact in the same network.
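The claim that a binary neuron can behave as a Boolean function can be made concrete with a minimal sketch. It assumes a +1/-1 encoding of truth values and a sign activation; the helper `binary_neuron` and the specific weights and biases are illustrative choices, not parameters taken from DEXiRE.

```python
from itertools import product


def binary_neuron(weights, bias):
    """Return a neuron with sign activation over +1/-1 inputs."""
    def fire(inputs):
        z = sum(w * x for w, x in zip(weights, inputs)) + bias
        return 1 if z >= 0 else -1
    return fire


# With two inputs, a bias of -1 yields logical AND and a bias of +1 yields logical OR.
and_neuron = binary_neuron([1, 1], bias=-1)
or_neuron = binary_neuron([1, 1], bias=1)

# Truth table over all +1/-1 input pairs: (AND output, OR output).
truth_table = {
    x: (and_neuron(x), or_neuron(x)) for x in product([-1, 1], repeat=2)
}
```

Because such hand-set neurons have the same form as learned binary neurons, a symbolic rule encoded this way could, in principle, sit alongside trained neurons in the same layer.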

6.3.6. Constraints and Limitations

Besides the advantages discussed above, as of today, DEXiRE is still subjected to some constraints (C) and limitations (L):

- C: To identify the activation pattern, DEXiRE requires that the output layer has as many neurons as classes and that the class labels are one-hot encoded.

- L: Currently, DEXiRE has been tested on the classification task (binary and multiclass classification) with comparable results to the state-of-the-art rule extractors. However, DEXiRE needs to be extended to perform additional tasks, such as rule extraction in the regression task and in the multi-labeling task. These additional capabilities are proposed as future work.

- L: To date, DEXiRE can work with feed-forward neural networks (with any number of hidden layers). DEXiRE has not been designed to work with convolutional neural networks (CNN), recurrent neural networks (RNN), or Transformers. However, based on the verified hypotheses, it can also be extended to work with these kinds of DL models.
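The constraint (C) above can be illustrated with a minimal one-hot encoding sketch in plain Python; the function name `one_hot` and the toy labels are hypothetical. The width of each encoded row equals the number of classes, which is the number of output neurons the constraint requires.

```python
def one_hot(labels):
    """Return the sorted class list and one 0/1 row per label."""
    classes = sorted(set(labels))
    index = {c: i for i, c in enumerate(classes)}
    encoded = [
        [1 if index[y] == i else 0 for i in range(len(classes))]
        for y in labels
    ]
    return classes, encoded


# Hypothetical labels for a three-class task.
classes, encoded = one_hot([0, 2, 1, 0])
n_output_neurons = len(classes)  # one output neuron per class
```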

7. Conclusions and Future Work

This paper presents DEXiRE, a decompositional rule-extraction algorithm based on hidden layers’ binarization, path identification, and layer-by-layer rule induction. DEXiRE has been developed following Hypotheses 1–4, and it has been tested in two scenarios, binary classification (SC1) and multiclass classification (SC2), with six publicly available datasets.

Attributes and performance of DEXiRE have been compared with ECLAIRE (state-of-the-art for decompositional rule extraction), leading to the conclusion that:

DEXiRE can be applied to extract rules from DL predictors with any number of hidden layers. The layer-by-layer generated rules neither affect nor depend on rules in other layers. The resulting independence reduces the complexity of the intermediate rule sets and eliminates the limitation of being able to process only one or a fixed predefined number of layers. Indeed, approaches such as FERNN [48] do not scale as they express the rules of a given layer as a function of those from other layers.

DEXiRE has been tested in binary and multiclass classification scenarios, obtaining performance (accuracy and fidelity) comparable to ECLAIRE.

Binary neurons with a high degree of polarization in each hidden layer become effective discriminators capable of identifying, through a binary pattern, instances that belong to a particular class. Binary neurons can be expressed as Boolean functions, from which the corresponding Boolean intermediate rule sets are derived.

The activation pattern identifies the most frequently activated binary neurons per class for each layer and filters out irrelevant or redundant neurons. The activation pattern provides additional explainable insights about the DL predictor’s internal decision process and reduces the size of the intermediate rule sets and the execution time.

As future work, we envision extending DEXiRE to (i) handle regression tasks, (ii) develop a module for convolutional neural networks, and (iii) develop a module for recurrent neural networks.