Enhancing Interaction with Augmented Reality through Mid-Air Haptic Feedback: Architecture Design and User Feedback

Abstract

1. Introduction

2. State of the Art

- Basic cutaneous devices. These devices are among the most popular, since they are simple and give good results. The user perceives the vibration of the device through cutaneous perception, in a way they are already accustomed to, such as when they receive an alert on a smartphone. However, this kind of device has some limitations; for example, users may find it difficult to understand complicated vibration patterns. Examples include vibration wristbands [19,27] (the device in [19] has been discontinued since 2018), fingertip devices [28], and the vibration of the smartphone itself.

- Active surfaces. These devices use physical components to render the feedback, for example, vertical bars. They are well suited to rendering surfaces, offering good resolution and accuracy. However, their functionality is limited by the actuators required to produce the feedback. Their biggest limitation is the lack of portability, since they usually need to be installed in a fixed setup. Examples of this kind of device are the Relief project [29] and Materiable [30].

- Mid-air devices. These devices provide haptic feedback without requiring the user to wear anything (in contrast with devices such as gloves, bracelets, or rings). The main difference from the previous group is that, there, the user comes into physical contact with the haptic device, while in this group the interaction occurs in the air (hence the name). There are different kinds of mid-air haptic devices, which can be divided into three groups according to the technology they use: air vortices [31], air jets [32], and ultrasound [33,34].

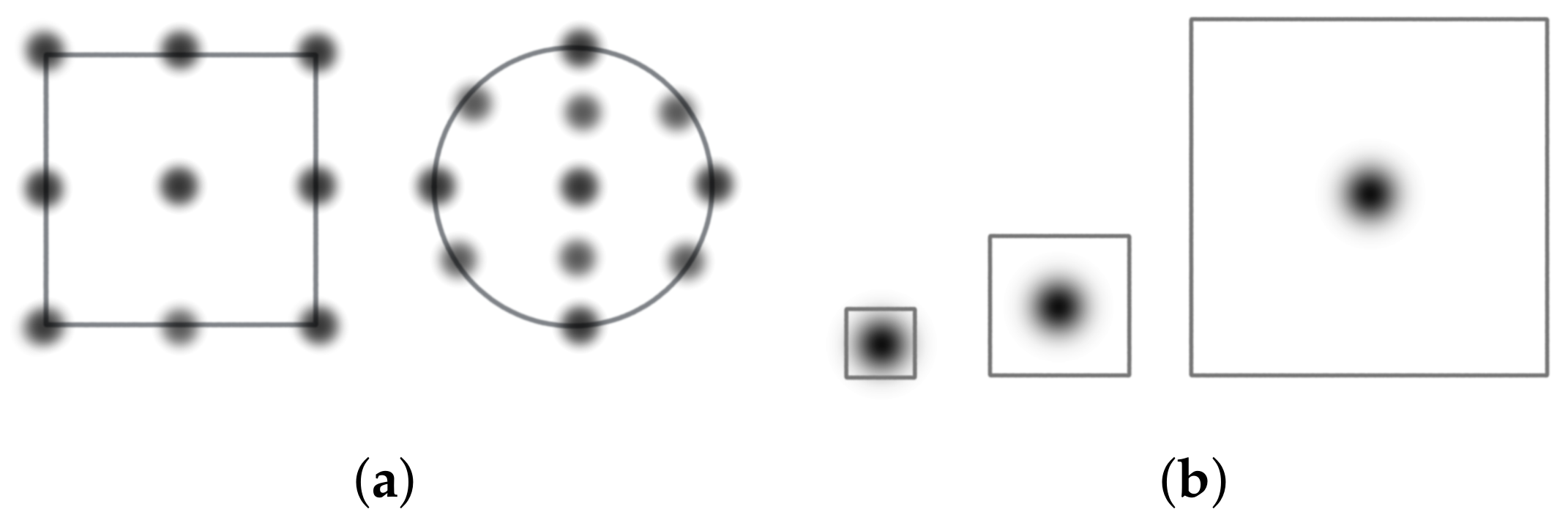

3. Haptic Representation of an Object’s Shape and Size by Using Haptic Devices

- High-fidelity representation: This type of representation seeks to give haptic feedback that resembles the physical characteristics of the represented object as closely as possible. More specifically, the characteristics that we consider in this paper are volume, size, and shape, leaving aside more advanced ones such as weight and texture due to technology limitations. Hence, the haptic device needs to reproduce the characteristics of the 3D model, mainly its vertices and faces. This type of representation is useful in situations in which the user needs to explore a model (e.g., to perceive its dimensions or to resize it). High-fidelity representations can be built using different strategies:

  - Vertex-based: represents only the vertices that shape the model, as scattered points.

  - Edge-based: represents only the edges between the vertices of the model. These edges are defined by the faces. By definition, vertices are also represented, since they are the end points of the edges.

  - Volume-based: in this case, vertices and edges are represented together with the surface of the faces and the interior of the figure.

- Single-feature-based representation: In this case, we seek to approximate a 3D object by one or several haptic points, leaving fidelity aside. This is especially appropriate for 3D models that are small enough that their details would go unnoticed in a high-fidelity representation. Instead of representing all the features that form the 3D model, the model may be reduced to a single point, calculated, for example, as the centroid of all its vertices. Figure 2 illustrates the limitation of this approach: the larger the 3D object is, the worse a single point approximates it.
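The strategies above can be illustrated with a minimal Python sketch. It is not the implementation used in HARP: the function names, the tetrahedron, and the sampling density (`steps`) are hypothetical choices made only for illustration. It assumes a mesh given as a list of vertex positions plus faces expressed as tuples of vertex indices, and it derives the points for the vertex-based, edge-based, and single-feature (centroid) representations.

```python
def centroid(vertices):
    """Single-feature representation: average of all vertex positions."""
    n = len(vertices)
    return tuple(sum(v[i] for v in vertices) / n for i in range(3))


def edges_from_faces(faces):
    """Edge-based representation, step 1: unique undirected edges of the faces."""
    edges = set()
    for face in faces:
        # Pair each vertex index with the next one, wrapping around the face.
        for a, b in zip(face, face[1:] + face[:1]):
            edges.add((min(a, b), max(a, b)))
    return sorted(edges)


def sample_edge(p, q, steps):
    """Edge-based representation, step 2: steps + 1 evenly spaced points from p to q."""
    return [
        tuple(p[i] + (q[i] - p[i]) * t / steps for i in range(3))
        for t in range(steps + 1)
    ]


# Hypothetical tetrahedron (units are arbitrary).
vertices = [(0, 0, 0), (4, 0, 0), (0, 4, 0), (0, 0, 4)]
faces = [(0, 1, 2), (0, 1, 3), (0, 2, 3), (1, 2, 3)]

vertex_points = list(vertices)            # vertex-based: the vertices themselves
edge_points = [                           # edge-based: points sampled along each edge
    point
    for a, b in edges_from_faces(faces)
    for point in sample_edge(vertices[a], vertices[b], steps=4)
]
single_point = centroid(vertices)         # single-feature: one point for the whole model
```

A volume-based representation would additionally sample the face surfaces and the interior, which is omitted here to keep the sketch short.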

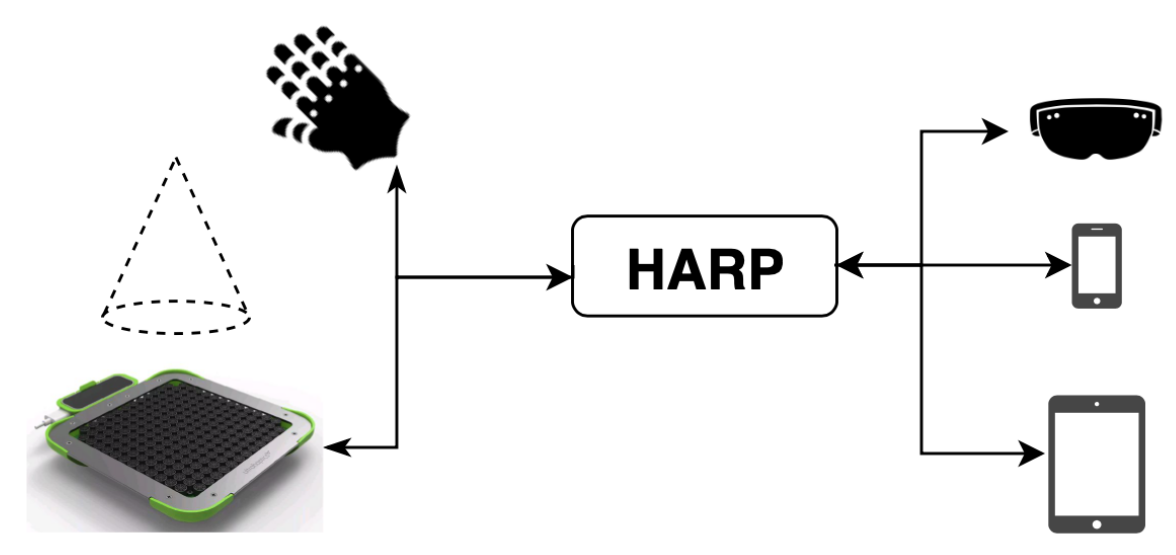

4. HARP: An Architecture for Haptically Enhanced AR Experiences

- The solution should be able to generate a haptic representation for any 3D model (taking into account the limitations of the technology in use), and it should be able to work with both haptic representation possibilities, high-fidelity and feature-based. Other types of representations should be easily incorporated into the system.

- The haptic representation of a given virtual object must be aligned with the visual representation of the digital element. In other words, given a 3D model with a certain appearance located at a certain position with respect to the real world, its haptic representation must occupy the same space as the model and adapt to its form.

- The solution must be multi-platform. Since each AR device works with its own platform and development frameworks, it is necessary to define a workflow to support all of them and to speed up the development process.

- The objective is to allow the integration of any type of haptic device, be it mid-air, a glove, or a bracelet. As a starting point and to show the viability of the solution, it should support the Ultrahaptics device, which we use as a representative of mid-air haptic devices. However, it should be extensible to other kinds of haptic devices. The main reason for focusing on mid-air technology is that it does not require the user to wear any device. Additionally, the availability of some recent devices and integrable technology that might become part of commercial equipment makes it interesting to explore both the performance and the acceptance of mid-air devices.

- To maximize compatibility with other platforms, the architecture should work with structures similar to those of the main development frameworks.

4.1. Data Model

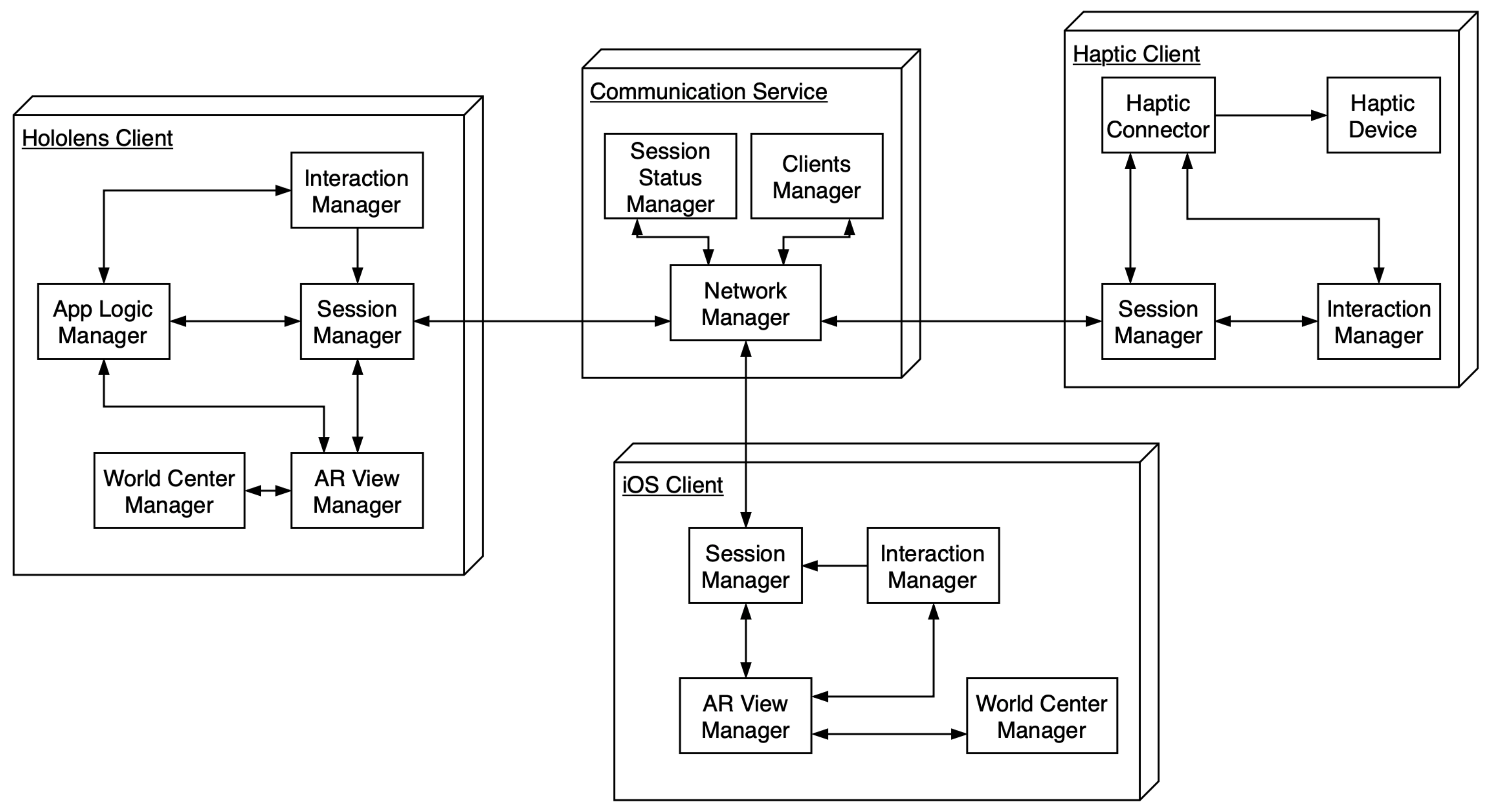

4.2. System Architecture

- HARP client: This block brings together the minimum functionality that a client must provide to satisfy the requirements mentioned in Section 3:

  - The Session Manager component acts as the entry point into the system. As its name indicates, it is in charge of managing the session information, which, as a brief reminder, includes information about the 3D objects to be represented by combining AR and haptics. It can receive the status of the session from the Communication Service, and it can also generate and update that status.

  - The AR View Manager has a very specific purpose. It is in charge of receiving the session status from the Session Manager and representing it using the AR framework of the device on which it is deployed. For example, when deployed to an iOS device, after receiving the session status, it has to generate the scene and create a node (SCNNode in SceneKit) in that scene for each element of the session. As status updates arrive, it must adapt the AR view to them. At this point, an issue arises: to which point in physical space should that AR content be attached?

  - The World Center Manager component addresses that issue. It must define the functionality required to set the origin of coordinates for the digital content and its relation to the real world. The final implementation is open to all kinds of possibilities. As an example, Section 5.1 describes how the alignment can be established manually or semiautomatically.

  - The App Logic Manager groups all the logic on which the application depends. For example, in the Simon example in Section 6.2, this component was integrated in the HoloLens client. Its main tasks were to keep track of the buttons that the user presses, to generate new color sequences, and to provide other related functionality.

  - The Interaction Manager component abstracts the interaction capabilities of the system. For example, the Simon prototype was deployed on two different devices: an iOS device and a HoloLens. On the one hand, the Interaction Manager of the iOS client is in charge of detecting touches on the screen, translating them into touches on digital objects, and generating the corresponding events. On the other hand, the implementation on the HoloLens client receives information about hand gestures and reacts to it. In any case, the resulting interaction should be able to trigger certain application logic and to cooperate with the Session Manager. In the Simon system, an example of this process occurs when the user pushes the haptic button with their hand and the HoloLens receives the interaction event. The HoloLens then processes the color of the button and checks whether it is the correct one. In this case, the logic is the check that compares the next color of the sequence with the one entered, but any other logic could be implemented here. Finally, the result of the interaction may generate a haptic response (e.g., changing the haptic pattern or disabling the haptic feedback). The device that provides this response may also deliver relevant information that can be processed (e.g., the Ultrahaptics device includes a Leap Motion that generates a representation of the user's hand).

- Communication Service: Most haptic devices require a wired connection to a computer, connections that smartphones and wearable AR devices cannot guarantee. Hence, this component will act as a bridge between different clients that collaborate in the process. Besides that, when multiple users are collaborating (e.g., the iOS and HoloLens scenario of Simon), it serves as a networking component. The Communication Service has three main parts:

  - A Network Manager component is in charge of handling the events on the network.

  - When an event contains updates of the session status, the Session Status Manager component updates its local representation, which may then be sent to other clients that connect later.

  - Finally, the Clients Manager component handles information about client connections (e.g., protocol used, addresses, etc.).

Listing 1: Example of the data model as it is stored in the communication service, presented in JSON format.
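Separately from the data model listing, the three roles of the Communication Service described above (handling network events, keeping the session status, and tracking clients) can be sketched as follows. This is a hypothetical, in-memory Python illustration, not the actual service: the class name, the message fields (`type`, `nodes`), the client identifiers, and the `outbox` list that stands in for real network sends are all assumptions made for the example.

```python
import json


class CommunicationService:
    """In-memory stand-in for the bridge between HARP clients (illustrative only)."""

    def __init__(self):
        self.session = {}   # node id -> properties (Session Status Manager role)
        self.clients = {}   # client id -> connection info (Clients Manager role)
        self.outbox = []    # (client id, message) pairs instead of real network sends

    def connect(self, client_id, info):
        """Register a client and send it the current session snapshot."""
        self.clients[client_id] = info
        snapshot = json.dumps({"type": "session", "nodes": self.session})
        self.outbox.append((client_id, snapshot))

    def handle_event(self, sender_id, raw):
        """Network Manager role: apply session updates, then forward the event."""
        event = json.loads(raw)
        if event["type"] == "session_update":
            for node_id, props in event["nodes"].items():
                self.session.setdefault(node_id, {}).update(props)
        for client_id in self.clients:
            if client_id != sender_id:
                self.outbox.append((client_id, raw))


# Usage: two clients are connected, one updates the session, a third joins later.
service = CommunicationService()
service.connect("hololens", {"protocol": "websocket"})
service.connect("haptic", {"protocol": "websocket"})
service.outbox.clear()

update = json.dumps(
    {"type": "session_update", "nodes": {"button-blue": {"position": [0.0, 0.17, 0.0]}}}
)
service.handle_event("hololens", update)
forwarded = list(service.outbox)

service.connect("ios", {"protocol": "websocket"})
late_snapshot = json.loads(service.outbox[-1][1])
```

Keeping the session status in the service is what allows a client that connects later (the iOS client in this example) to receive the same state that the earlier clients already share.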

5. Practical Issues on Haptic Representation

5.1. Alignment Method between the Physical and the Virtual World

- Manual: We have equipped the Ultrahaptics board with three stickers on three of its corners, as Figure 7 shows. In order to perform the alignment, the first task of the user is to move three digital cube anchors using gestures and to place them on top of those stickers. Once this is done, the system is able to calculate a local coordinate system centered on the device. This coordinate system is then used to locate the digital representation of the Ultrahaptics itself on top of which the digital elements will be attached. From this moment on, the haptic representation and the AR content should share the same space. The main problem with this method is that the user may make mistakes when positioning the anchors. Figure 7 illustrates this problem, since the user has positioned some elements on top of the device and some inside it. The more accurate the alignment is, the more precisely the haptic feedback will match the AR content.

- Automatic: In this strategy, the application establishes the coordinate system using marker detection. A QR code or an image is placed next to the Ultrahaptics in a specific position (i.e., at a known distance and orientation). The device will always be situated in the same position with respect to the marker. Since the spatial relation between the marker and the device is known, transformations between the two are trivial. Marker detection is performed with the Vuforia SDK for Unity [49]. Once the system has detected the marker, it shows a transparent red plane over it, as will be shown later in Section 6.1. Under certain circumstances (e.g., low illumination or lack of space near the physical device), marker detection may not work as well as expected.
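As an illustration of the manual method, a local coordinate system can be derived from three anchor positions. The sketch below is an assumption about how such a frame could be computed, not the calculation used in the paper: it takes one anchor as the origin, a second one as lying along the x axis, and a third one as lying roughly along the y axis, and it orthogonalizes them with a Gram-Schmidt step. All function names and coordinates are hypothetical.

```python
import math


def sub(a, b):
    return tuple(a[i] - b[i] for i in range(3))


def dot(a, b):
    return sum(a[i] * b[i] for i in range(3))


def cross(a, b):
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def normalize(v):
    length = math.sqrt(dot(v, v))
    return tuple(c / length for c in v)


def frame_from_anchors(origin, on_x, on_y):
    """Build an orthonormal frame from three anchors placed by the user."""
    x = normalize(sub(on_x, origin))
    y_raw = sub(on_y, origin)
    # Gram-Schmidt: remove the part of y_raw that lies along x.
    along_x = dot(y_raw, x)
    y = normalize(tuple(y_raw[i] - along_x * x[i] for i in range(3)))
    z = cross(x, y)
    return origin, (x, y, z)


def world_to_local(point, origin, axes):
    """Express a world-space point in the device-centered frame."""
    d = sub(point, origin)
    return tuple(dot(d, axis) for axis in axes)


# Hypothetical anchor positions in meters; the third one is slightly misplaced.
origin, axes = frame_from_anchors((1.0, 2.0, 0.0), (3.0, 2.0, 0.0), (1.2, 5.0, 0.0))
local = world_to_local((2.0, 3.0, 0.5), origin, axes)
```

The orthogonalization step absorbs part of the user's placement error in the third anchor, but errors in the first two anchors still shift or rotate the whole frame, which is consistent with the accuracy problem described for the manual method.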

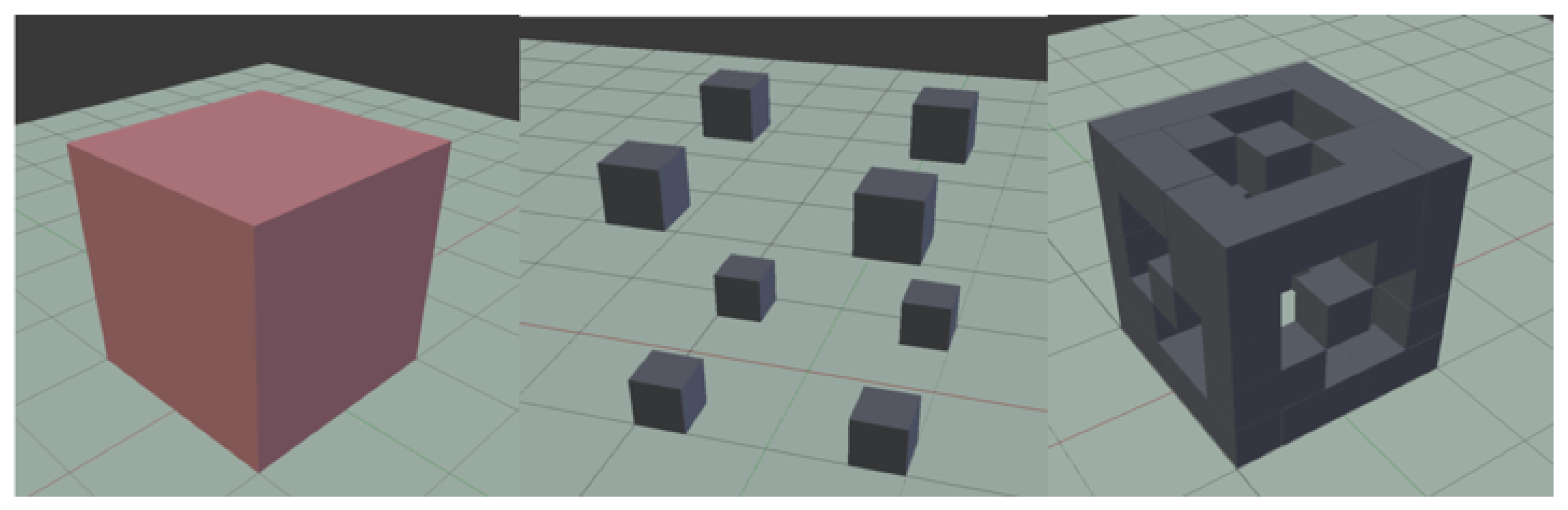

5.2. Haptic Representation of a 3D Model in Mid-Air Haptic Scenario

6. Development over HARP: Process and Prototypes

6.1. Building a New Application: The Haptic Inspector

6.2. Building Haptically Interactive Applications: The Example of the HARptic Simon Game

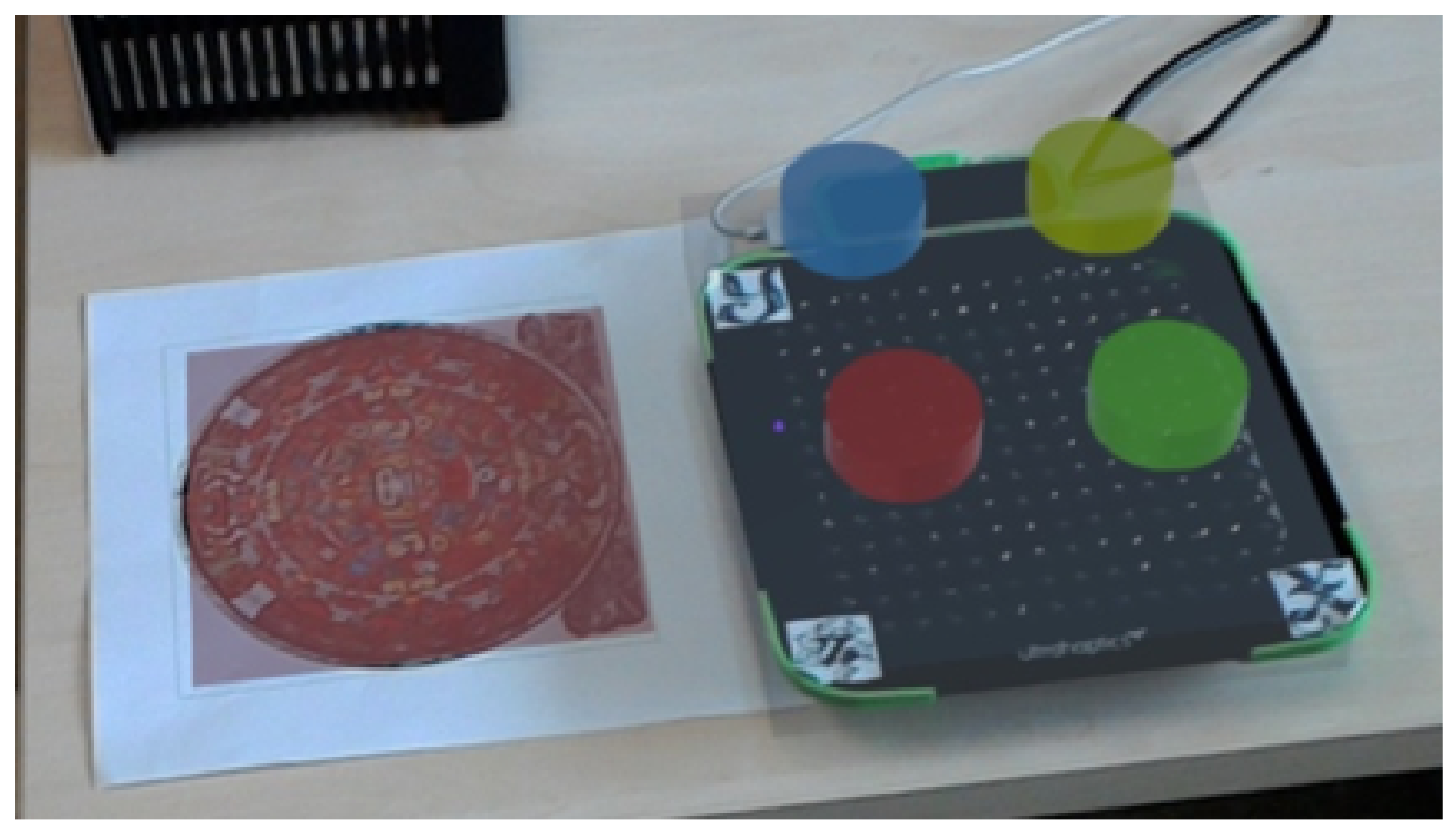

- The haptic representation of the 3D buttons is generated through their centroids, using the feature-based representation. This is possible because buttons are small and haptics are used to enhance their perception, i.e., to locate them and to tangibly show the resistance that a non-pressed, real button naturally shows.

- The position of the user’s hand is tracked with the Leap Motion integrated in the Ultrahaptics. This allows us to make the buttons react to the position of the hand. As the user presses a button, the button moves closer to the device and, to simulate the resistance of a physical button, the ultrasound intensity rises. When the user effectively presses the button, it generates an event that includes the color information. Once the user releases it, the button “returns” to its original position and the ultrasound intensity goes back to its normal level. In this case, the status of the session is updated from the haptic client and the changes are notified to the HoloLens.

- When the user pushes a button, an interaction event is generated. This event is just a message that encapsulates certain information about the user having interacted with a digital element (e.g., the object that generates the event, the time, and the session to which it is attached). This event is then sent to the communication service and forwarded to all the participating devices. This kind of event is not exclusive to the haptic client, as it could be generated anywhere in the architecture. Let us suppose that the user has pressed the blue button. The haptic client encodes that information within an interaction event, which is sent to the HoloLens client. When the HoloLens receives that message, it decodes which element of the scene it refers to (each node has a unique ID) and checks whether any reaction is expected. If so, it checks whether the next element of the sequence that the user must enter is the blue color. If it is, the user is one step closer to matching the sequence. Otherwise, there has been an error, so the system alerts the player and shows them the same or a different sequence. In summary, this kind of event allows great flexibility when integrating different devices in the architecture ecosystem.

- The logic of the whole application is located on the HoloLens client. The haptic client is able to receive the 3D elements and to generate proper haptic representation without obstructing the logic of the application. This feature is highly desired, since at any time it is possible to replace the Ultrahaptics with any other haptic device.

- A second participant using the mobile iOS client can receive the status of the session as seen by the HoloLens user. Going one step further, this user can interact with the buttons by touching them on the device’s screen. This interaction is integrated in a seamless way with the rest of the components.

- For creating the iOS client, we followed the same steps explained in Section 6.1 but, this time, adapted them to the mobile system. As a result, we can offer a library similar to the prefabs mentioned in Section 6.1 but for the iOS platform.
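The event flow described above can be sketched in Python as follows. This is a simplified illustration under stated assumptions, not the code of the prototype: the event fields, the node identifiers, the normalized intensity range (`base`, `peak`), and the `SimonLogic` class are hypothetical, and the new sequence generated after a success or an error is simply drawn at random.

```python
import json
import random
from dataclasses import dataclass, asdict

COLORS = ("red", "green", "blue", "yellow")


@dataclass
class InteractionEvent:
    node_id: str      # unique ID of the scene node that was pressed
    session_id: str
    timestamp: float

    def encode(self):
        return json.dumps(asdict(self))

    @staticmethod
    def decode(raw):
        return InteractionEvent(**json.loads(raw))


def intensity_for_press(depth, travel, base=0.5, peak=1.0):
    """Raise the intensity linearly from base to peak as the button is pushed down."""
    ratio = min(max(depth / travel, 0.0), 1.0)
    return base + (peak - base) * ratio


class SimonLogic:
    """Application logic as it could run on the client that owns the game."""

    def __init__(self, node_colors, rng=None):
        self.node_colors = node_colors   # node id -> color
        self.rng = rng or random.Random()
        self.completed = 0
        self.failures = 0
        self._new_sequence(1)

    def _new_sequence(self, length):
        self.sequence = [self.rng.choice(COLORS) for _ in range(length)]
        self.position = 0

    def on_event(self, event):
        color = self.node_colors.get(event.node_id)
        if color is None:
            return "ignored"             # no reaction expected for this node
        if color != self.sequence[self.position]:
            self.failures += 1
            self._new_sequence(len(self.sequence))      # same length, new colors
            return "error"
        self.position += 1
        if self.position == len(self.sequence):
            self.completed += 1
            self._new_sequence(len(self.sequence) + 1)  # one more color
            return "sequence_complete"
        return "correct"


# Usage: the haptic client encodes a press, the logic owner decodes and checks it.
logic = SimonLogic({"btn-blue": "blue", "btn-red": "red"}, rng=random.Random(0))
logic.sequence, logic.position = ["blue"], 0
raw = InteractionEvent("btn-blue", "session-1", 12.5).encode()
result = logic.on_event(InteractionEvent.decode(raw))
```

Because the haptic client only emits events and never inspects the sequence, the same logic can react to presses coming from any other client, which mirrors the separation between haptic rendering and application logic described above.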

7. Validation with Users

7.1. User Study Objectives

7.2. Participants and Apparatus

7.3. Procedure, Tasks and Experimental Design

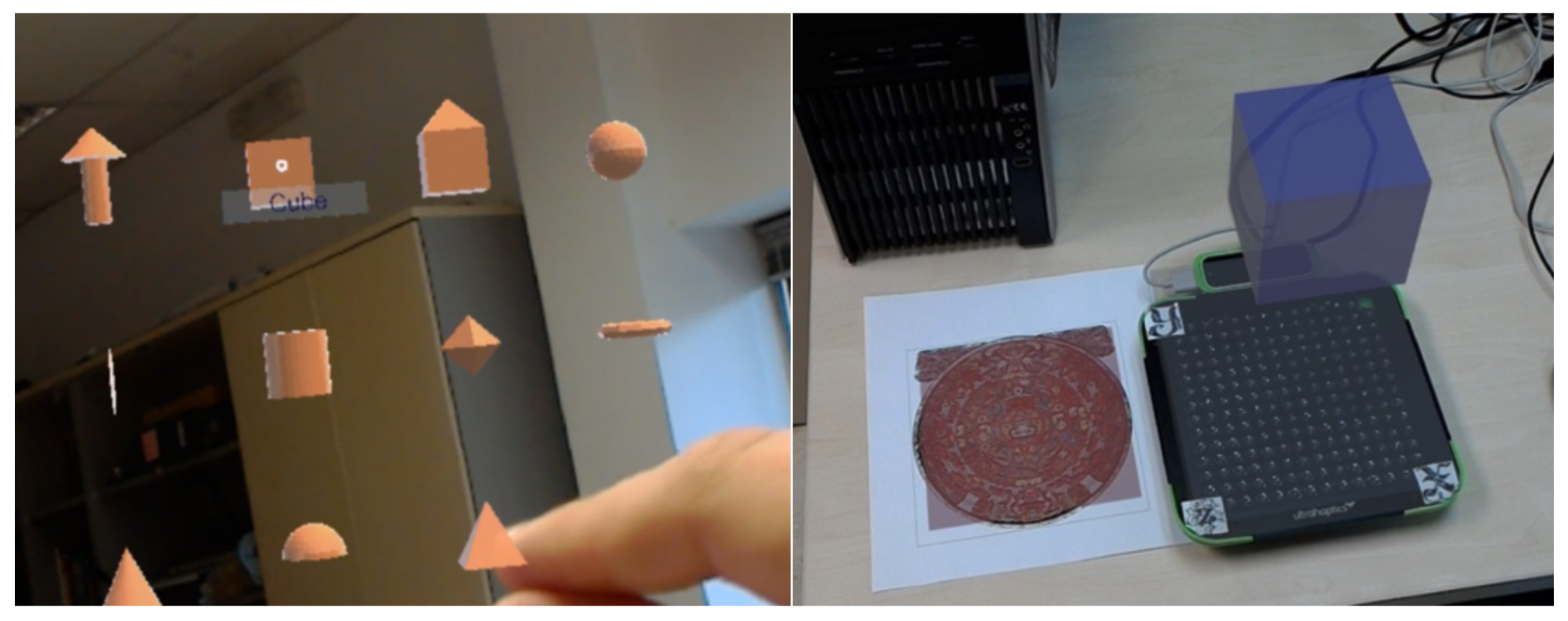

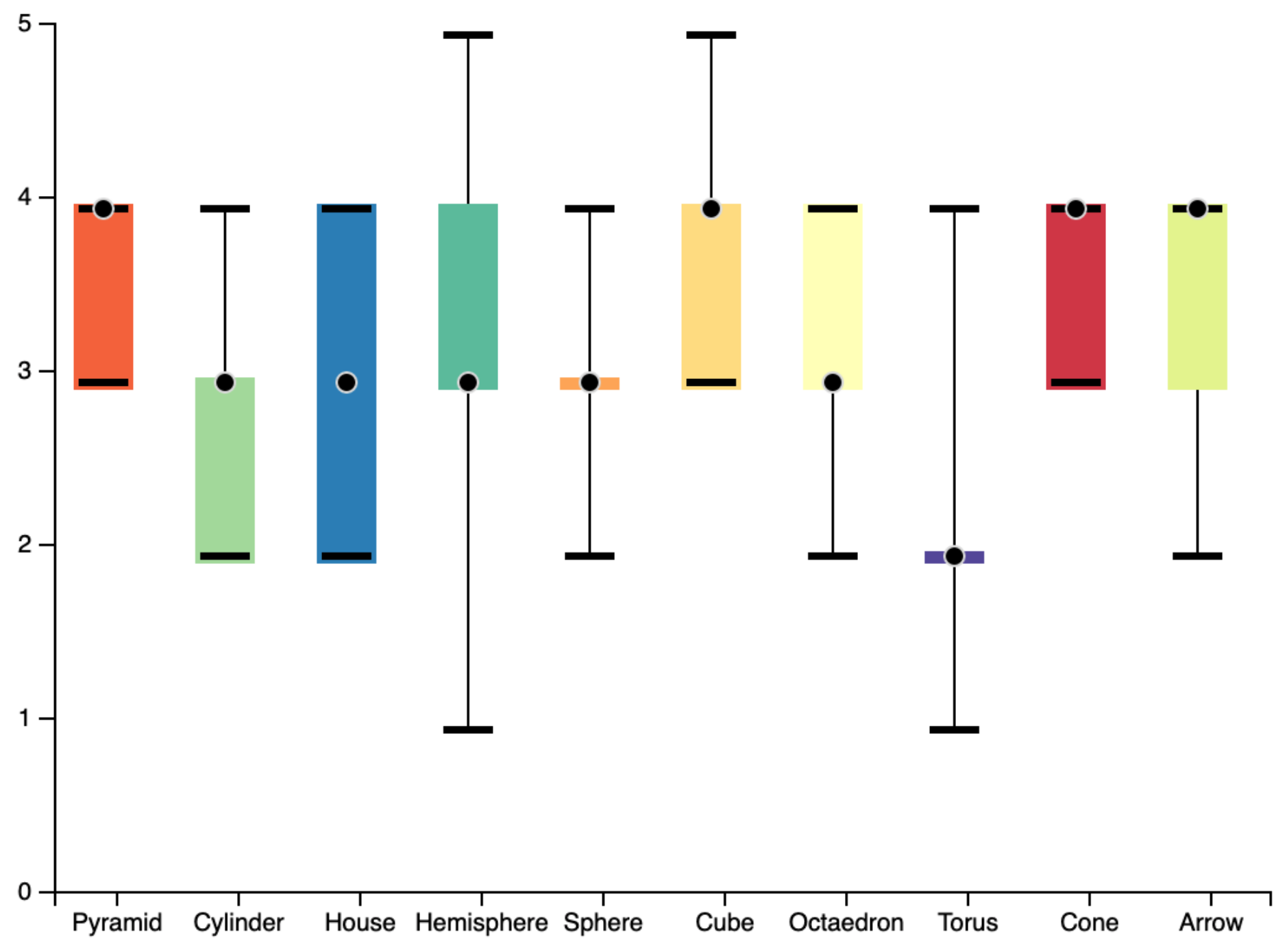

- Task 1, perception of the contribution of haptic feedback over AR visualization for inspection tasks: This task has been designed to offer the user a first contact with the combination of haptic feedback and AR, i.e., with HARptic. The basics of the system are introduced to the participants: they will be able to haptically inspect different geometrical figures that are visible through the HoloLens and that they can explore with their hand. Participants are not told how to actually inspect the figures; their own interpretation of the action conditions the way they interact with the views. This process is repeated for the 10 figures in Figure 11. For each figure, the exploration time is saved. Additionally, the user is asked to rate from 1 (very difficult) to 5 (very easy) to what extent the figure would be guessable through the delivered haptics alone (no visualization available). The figures chosen for the test (for Tasks 1–3) are divided into the following groups: a first group of basic geometries (pyramid, cone, sphere, hemisphere, cube, and cylinder), a second group of more complex geometries (octahedron and torus), and a last group of compound figures (house and arrow) intended to simulate objects. Figure 11 shows how the figures are presented to the users.

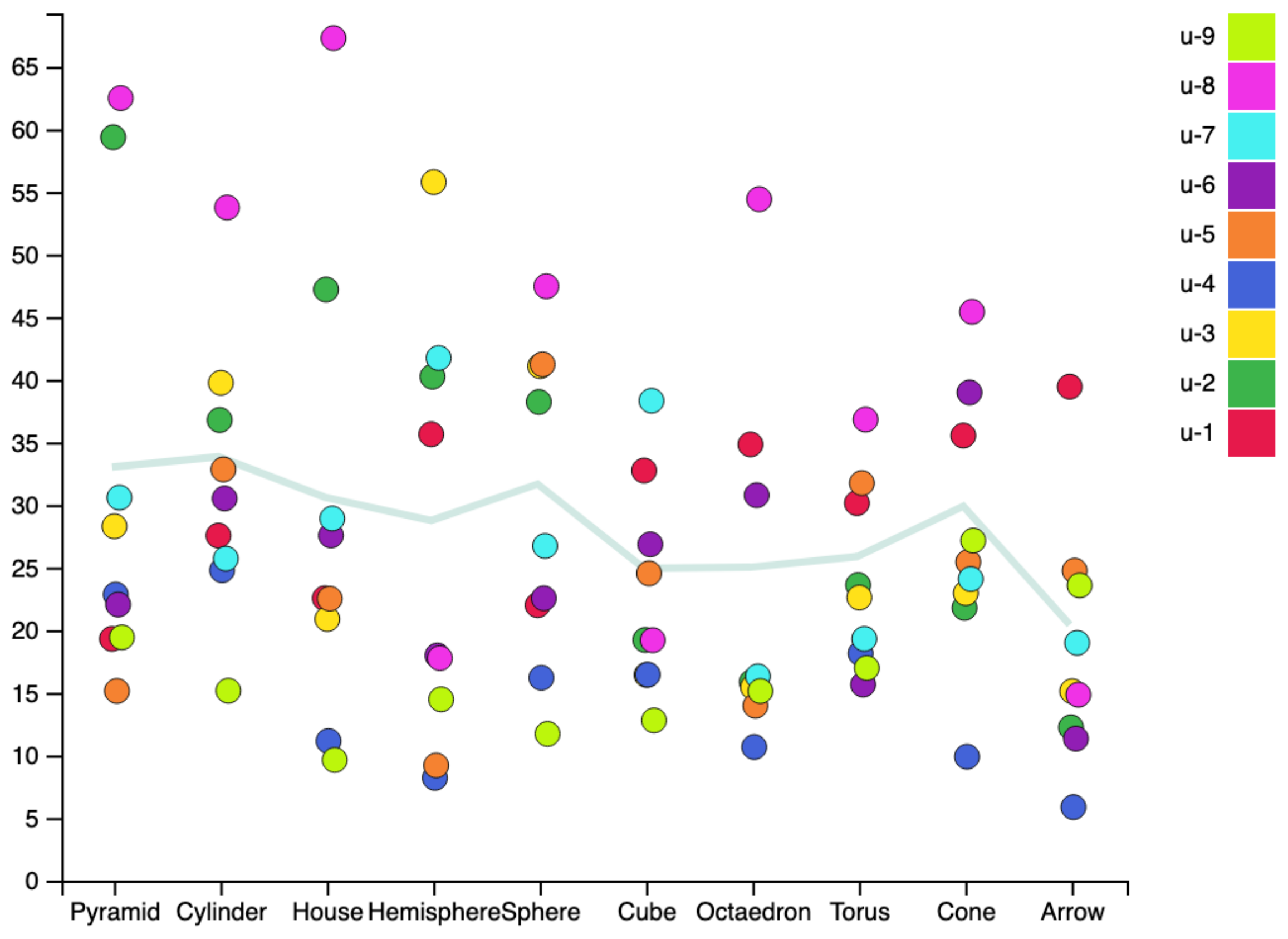

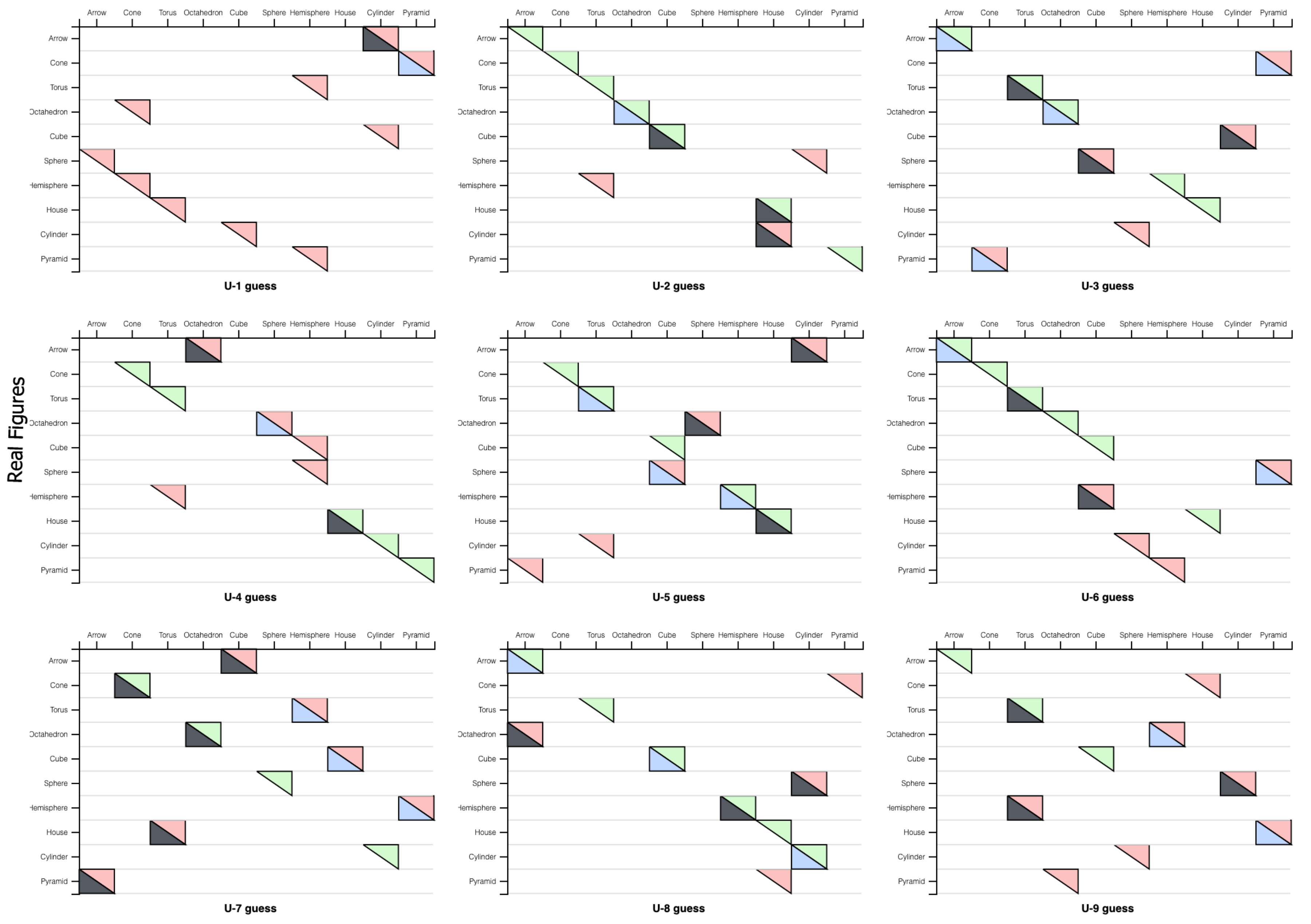

- Task 2, Ultrahaptics-based haptics expressiveness for shape identification: In this task, the user has to guess the shape of the object under exploration relying only on haptic feedback. The system provides the haptic representation of different 3D figures, and the user has to guess the identity of the object from those available in a list. The participant’s guesses are stored, as well as the time required to give an answer. The number of hits is not revealed to the user until the entire task has been completed, since a questionnaire on their perception of the task difficulty is administered after the task. The figures are the same as those in Task 1, without repetitions. Figure 12 shows three participants while they are exploring the HARptic figures. The captures have been taken using the HoloLens client.

- Task 3, usefulness of haptic aids for AR resizing tasks: For this task, visual and haptic representations are again merged and delivered. The system presents the participant with a certain figure with preset dimensions. We have preset a virtual ground at 17 cm from the surface of the Ultrahaptics; thus, every figure stands on this ground. With this information in mind, participants are asked to resize the figure to reach a given dimension (height). A haptic anchor at the target height is delivered through the haptic device. To perform the task, the participant must put their hand over the Ultrahaptics and move it up and down as required. The process should be supported by estimations made using the augmented reality view. Once a participant determines that the target height has been reached, the actual size of the model is recorded, as well as the time required to perform the process.

- Task 4, mimicking real-life interaction metaphors for AR with mid-air haptics: Both this task and Task 5 are based on the Simon prototype. Again, the user wears the HoloLens, which shows four colored buttons over the Ultrahaptics. Once the task starts, the user has two and a half minutes to complete as many sequences as possible. The participants are told to press the buttons, with no indication of how to do it. If the user hits the sequence, the system generates a new one with one more color. Otherwise, if the user fails, the system generates a new sequence of the same size but with different colors, and the failure is recorded. In addition, the number of correct sequences is stored.

- Task 5, acceptance of AR with mid-air haptics with respect to a standard mobile baseline: At this stage of the test, the participant is already familiar with the system. In this task, the user is asked to participate in a collaborative Simon game in two rounds. In the first round, the user interacts with the game through the HoloLens and the UHK, while the facilitator uses an AR iOS version of the game. The goal is the same as in Task 4, i.e., to hit as many correct sequences as possible within two and a half minutes. However, in this case, the user and the facilitator must act in turns. First, the HoloLens client has to push a button; then, the iOS client has to push the next one, and so on. Both users receive the sequence at the same time, each one on their own device; at any time, it is possible to ask the other user for help or instructions. Once this round is finished, both users swap positions: the facilitator works with the HoloLens and the Ultrahaptics, and the participant uses the iOS application. A new round then begins, just like the previous one.

8. Experimental Results

8.1. Perception of the Contribution of Haptic Feedback over AR Visualization

8.2. UHK-Based Haptic Expressiveness for Shape/Volume Identification

8.3. Usefulness of Haptic Aids for AR Resizing Tasks

8.4. Mimicking Real-Life Interaction Metaphors for AR with Mid-Air Haptics

8.5. Acceptance of AR with Mid-Air Haptics with Respect to a Standard Mobile Baseline

8.6. Discussion and Design Notes

9. Conclusions and Future Work

Author Contributions

Acknowledgments

Conflicts of Interest

References

1. Pokémon GO. Available online: https://pokemongolive.com/ (accessed on 22 November 2019).

2. Azuma, R.T. A survey of augmented reality. Presence Teleoper. Virtual Environ. 1997, 6, 355–385.

3. Simon Game. Available online: https://en.wikipedia.org/wiki/Simon_(game) (accessed on 22 November 2019).

4. Chu, M.; Begole, B. Natural and Implicit Information-Seeking Cues in Responsive Technology. In Human-Centric Interfaces for Ambient Intelligence; Elsevier: Amsterdam, The Netherlands, 2010; pp. 415–452.

5. Nilsson, S. Interaction Without Gesture or Speech—A Gaze Controlled AR System. In Proceedings of the 17th International Conference on Artificial Reality and Telexistence (ICAT 2007), Esbjerg, Denmark, 28–30 November 2007; pp. 280–281.

6. McNamara, A.; Kabeerdoss, C. Mobile Augmented Reality: Placing Labels Based on Gaze Position. In Proceedings of the 2016 IEEE International Symposium on Mixed and Augmented Reality (ISMAR-Adjunct), Merida, Mexico, 19–23 September 2016; pp. 36–37.

7. Wang, P.; Zhang, S.; Bai, X.; Billinghurst, M.; He, W.; Wang, S.; Zhang, X.; Du, J.; Chen, Y. Head Pointer or Eye Gaze: Which Helps More in MR Remote Collaboration? In Proceedings of the 2019 IEEE Conference on Virtual Reality and 3D User Interfaces (VR), Osaka, Japan, 23–27 March 2019; pp. 1219–1220.

8. Mardanbegi, D.; Pfeiffer, T. EyeMRTK: A toolkit for developing eye gaze interactive applications in virtual and augmented reality. In Proceedings of the 11th ACM Symposium on Eye Tracking Research & Applications, Denver, CO, USA, 25–28 June 2019; p. 76.

9. Khamis, M.; Alt, F.; Bulling, A. The past, present, and future of gaze-enabled handheld mobile devices: Survey and lessons learned. In Proceedings of the 20th International Conference on Human-Computer Interaction with Mobile Devices and Services, Barcelona, Spain, 3–6 September 2018; p. 38.

10. Lee, L.H.; Hui, P. Interaction methods for smart glasses: A survey. IEEE Access 2018, 6, 28712–28732.

11. Billinghurst, M.; Kato, H.; Myojin, S. Advanced interaction techniques for augmented reality applications. In Proceedings of the International Conference on Virtual and Mixed Reality, San Diego, CA, USA, 19–24 July 2009; pp. 13–22.

12. Lee, J.Y.; Rhee, G.W.; Seo, D.W. Hand gesture-based tangible interactions for manipulating virtual objects in a mixed reality environment. Int. J. Adv. Manuf. Technol. 2010, 51, 1069–1082.

13. Shotton, J.; Fitzgibbon, A.W.; Cook, M.; Sharp, T.; Finocchio, M.; Moore, R.; Kipman, A.; Blake, A. Real-time human pose recognition in parts from single depth images. In Proceedings of the CVPR 2011, Providence, RI, USA, 20–25 June 2011; Volume 2, p. 3.

14. Marin, G.; Dominio, F.; Zanuttigh, P. Hand gesture recognition with leap motion and kinect devices. In Proceedings of the 2014 IEEE International Conference on Image Processing (ICIP), Paris, France, 27–30 October 2014; pp. 1565–1569.

15. Piumsomboon, T.; Clark, A.; Billinghurst, M.; Cockburn, A. User-defined gestures for augmented reality. In Proceedings of the IFIP Conference on Human-Computer Interaction, Cape Town, South Africa, 2–6 September 2013; pp. 282–299.

16. Morris, D.; Tan, H.; Barbagli, F.; Chang, T.; Salisbury, K. Haptic feedback enhances force skill learning. In Proceedings of the Second Joint EuroHaptics Conference and Symposium on Haptic Interfaces for Virtual Environment and Teleoperator Systems (WHC’07), Tsukuba, Japan, 22–24 March 2007; pp. 21–26.

17. Massie, T.H.; Salisbury, J.K. The phantom haptic interface: A device for probing virtual objects. In Proceedings of the ASME Winter Annual Meeting, Symposium on Haptic Interfaces for Virtual Environment and Teleoperator Systems, Chicago, IL, USA, November 1994; Volume 55, pp. 295–300.

18. Aleotti, J.; Micconi, G.; Caselli, S. Programming manipulation tasks by demonstration in visuo-haptic augmented reality. In Proceedings of the 2014 IEEE International Symposium on Haptic, Audio and Visual Environments and Games (HAVE) Proceedings, Richardson, TX, USA, 10–11 October 2014; pp. 13–18.

19. MYO Armband. Available online: https://support.getmyo.com/hc/en-us (accessed on 22 November 2019).

20. Cœugnet, S.; Dommes, A.; Panëels, S.; Chevalier, A.; Vienne, F.; Dang, N.T.; Anastassova, M. A vibrotactile wristband to help older pedestrians make safer street-crossing decisions. Accid. Anal. Prev. 2017, 109, 1–9.

21. Pezent, E.; Israr, A.; Samad, M.; Robinson, S.; Agarwal, P.; Benko, H.; Colonnese, N. Tasbi: Multisensory squeeze and vibrotactile wrist haptics for augmented and virtual reality. In Proceedings of the 2019 IEEE World Haptics Conference (WHC), Tokyo, Japan, 9–12 July 2019.

22. Nunez, O.J.A.; Lubos, P.; Steinicke, F. HapRing: A Wearable Haptic Device for 3D Interaction. In Mensch & Computer; De Gruyter: Berlin, Germany; Boston, MA, USA, 2015; pp. 421–424.

23. Hussain, I.; Spagnoletti, G.; Pacchierotti, C.; Prattichizzo, D. A wearable haptic ring for the control of extra robotic fingers. In Proceedings of the International AsiaHaptics Conference, Chiba, Japan, 29 November–1 December 2016; pp. 323–325.

24. Blake, J.; Gurocak, H.B. Haptic glove with MR brakes for virtual reality. IEEE/ASME Trans. Mechatron. 2009, 14, 606–615.

25. HaptX Gloves. Available online: https://haptx.com/technology/ (accessed on 22 November 2019).

26. VRgluv haptic Gloves. Available online: https://www.vrgluv.com (accessed on 22 November 2019).

27. Carcedo, M.G.; Chua, S.H.; Perrault, S.; Wozniak, P.; Joshi, R.; Obaid, M.; Fjeld, M.; Zhao, S. Hapticolor: Interpolating color information as haptic feedback to assist the colorblind. In Proceedings of the 2016 CHI Conference on Human Factors in Computing Systems, San Jose, CA, USA, 7–12 May 2016; pp. 3572–3583.

28. Gabardi, M.; Solazzi, M.; Leonardis, D.; Frisoli, A. A new wearable fingertip haptic interface for the rendering of virtual shapes and surface features. In Proceedings of the 2016 IEEE Haptics Symposium (HAPTICS), Philadelphia, PA, USA, 8–11 April 2016; pp. 140–146.

29. Leithinger, D.; Ishii, H. Relief: A scalable actuated shape display. In Proceedings of the Fourth International Conference on Tangible, Embedded, and Embodied Interaction, Cambridge, MA, USA, 25–27 January 2010; pp. 221–222.

30. Nakagaki, K.; Vink, L.; Counts, J.; Windham, D.; Leithinger, D.; Follmer, S.; Ishii, H. Materiable: Rendering dynamic material properties in response to direct physical touch with shape changing interfaces. In Proceedings of the 2016 CHI Conference on Human Factors in Computing Systems, San Jose, CA, USA, 7–12 May 2016; pp. 2764–2772.

31. Sodhi, R.; Poupyrev, I.; Glisson, M.; Israr, A. AIREAL: Interactive tactile experiences in free air. ACM Trans. Graph. (TOG) 2013, 32, 134.

32. Tsalamlal, M.Y.; Issartel, P.; Ouarti, N.; Ammi, M. HAIR: HAptic feedback with a mobile AIR jet. In Proceedings of the 2014 IEEE International Conference on Robotics and Automation (ICRA), Hong Kong, China, 31 May–7 June 2014; pp. 2699–2706.

33. Carter, T.; Seah, S.A.; Long, B.; Drinkwater, B.; Subramanian, S. UltraHaptics: Multi-Point Mid-Air Haptic Feedback for Touch Surfaces. In Proceedings of the 26th Annual ACM Symposium on User Interface Software and Technology, St. Andrews, UK, 8–11 October 2013; pp. 505–514.

34. Iwamoto, T.; Tatezono, M.; Shinoda, H. Non-contact method for producing tactile sensation using airborne ultrasound. In Proceedings of the International Conference on Human Haptic Sensing and Touch Enabled Computer Applications, Madrid, Spain, 10–13 June 2008; pp. 504–513.

35. UltraHaptics. Available online: https://www.ultrahaptics.com/ (accessed on 22 November 2019).

36. Long, B.; Seah, S.A.; Carter, T.; Subramanian, S. Rendering volumetric haptic shapes in mid-air using ultrasound. ACM Trans. Graph. (TOG) 2014, 33, 181.

37. Shtarbanov, A.; Bove, V.M., Jr. Free-Space Haptic Feedback for 3D Displays via Air-Vortex Rings. In Proceedings of the Extended Abstracts of the 2018 CHI Conference on Human Factors in Computing Systems—CHI ’18, Montreal, QC, Canada, 21–26 April 2018.

38. Sand, A.; Rakkolainen, I.; Isokoski, P.; Kangas, J.; Raisamo, R.; Palovuori, K. Head-mounted display with mid-air tactile feedback. In Proceedings of the 21st ACM Symposium on Virtual Reality Software and Technology—VRST ’15, Beijing, China, 13–15 November 2015; pp. 51–58.

39. Monnai, Y.; Hasegawa, K.; Fujiwara, M.; Yoshino, K.; Inoue, S.; Shinoda, H. HaptoMime: Mid-Air Haptic Interaction with a Floating Virtual Screen. In Proceedings of the 27th Annual ACM Symposium on User Interface Software and Technology (UIST ’14), Honolulu, HI, USA, 5–8 October 2014; pp. 663–667.

40. Kamuro, S.; Minamizawa, K.; Kawakami, N.; Tachi, S. Ungrounded kinesthetic pen for haptic interaction with virtual environments. In Proceedings of the IEEE International Workshop on Robot and Human Interactive Communication, Toyama, Japan, 27 September–2 October 2009; pp. 436–441.

41. Eck, U.; Sandor, C. HARP: A framework for visuo-haptic augmented reality. In Proceedings of the IEEE Virtual Reality, Lake Buena Vista, FL, USA, 18–20 March 2013; pp. 145–146.

42. Leithinger, D. Haptic Aura: Augmenting Physical Objects Through Mid-Air Haptics; ACM: Montreal, QC, Canada, 2018.

43. Goncu, C.; Kroll, A. Accessible Haptic Objects for People with Vision Impairment; 2018; pp. 2–3. Available online: https://www.ultrahaptics.com/wp-content/uploads/2018/09/Accessible-Haptic-Objects-for-People-with-Vision-Impairment-RaisedPixels.pdf (accessed on 22 November 2019).

44. Song, P.; Goh, W.B.; Hutama, W.; Fu, C.W.; Liu, X. A Handle Bar Metaphor for Virtual Object Manipulation with Mid-Air Interaction; ACM: Austin, TX, USA, 2012; p. 1297.

45. Geerts, D. User Experience Challenges of Virtual Buttons with Mid-Air Haptics; ACM: Montreal, QC, Canada, 2018.

46. Dzidek, B.; Frier, W.; Harwood, A.; Hayden, R. Design and Evaluation of Mid-Air Haptic Interactions in an Augmented Reality Environment. In Proceedings of the International Conference on Human Haptic Sensing and Touch Enabled Computer Applications, Pisa, Italy, 13–16 June 2018; pp. 489–499.

47. Frutos-Pascual, M.; Harrison, J.M.; Creed, C.; Williams, I. Evaluation of Ultrasound Haptics as a Supplementary Feedback Cue for Grasping in Virtual Environments. In Proceedings of the 2019 International Conference on Multimodal Interaction, Suzhou, China, 14–18 October 2019; pp. 310–318.

48. Kervegant, C.; Raymond, F.; Graeff, D.; Castet, J. Touch Hologram in Mid-Air; ACM: Los Angeles, CA, USA, 2017; pp. 1–2.

49. Vuforia SDK. Available online: https://www.vuforia.com/ (accessed on 22 November 2019).

50. Node.js. Available online: https://nodejs.org/ (accessed on 22 November 2019).

| Hardest Figure | Number of Votes | Easiest Figure | Number of Votes |
|---|---|---|---|
| Torus | 7 | Cube | 6 |
| Arrow | 4 | Cone | 5 |
| Sphere | 4 | Arrow | 4 |
| House | 3 | Sphere | 3 |
| Cone | 2 | Pyramid | 3 |
| Cube | 1 | House | 2 |
| Pyramid | 1 | Cylinder | 1 |
| Cylinder | 1 | Hemisphere | 1 |
| Hemisphere | 1 | Octahedron | 1 |
| Octahedron | 0 | Torus | 0 |

Error in cm

| | F1 | F2 | F3 | F4 | F5 | Avg | Std. Dev. |
|---|---|---|---|---|---|---|---|
| U1 | 3 | 3 | 1 | 1 | 1 | 1.8 | 1.09 |
| U2 | 0 | 2 | 0 | 0 | 0 | 0.4 | 0.89 |
| U3 | 0 | 0 | 1 | 1 | 0 | 0.4 | 0.54 |
| U4 | −1 | 1 | 2 | 3 | 5 | 2 | 2.23 |
| U5 | −1 | 0 | 0 | 0 | 0 | −0.2 | 0.44 |
| U6 | 1 | 1 | 0 | 1 | 0 | 0.6 | 0.54 |
| U7 | 3 | 6 | 2 | 5 | 4 | 4 | 1.58 |
| U8 | 0 | 1 | 1 | 0 | 0 | 0.4 | 0.54 |
| U9 | −1 | 2 | 1 | −1 | 0 | 0.4 | 1.30 |
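The Avg and Std. Dev. columns can be recomputed from the per-figure errors. The short Python sketch below does this for two rows of the table. It assumes that the deviation reported is the sample standard deviation (denominator n − 1); that assumption is not stated in the table, although it appears consistent with rows such as U1 and U7 when the result is cut to two decimals.

```python
from statistics import mean, stdev

# Per-figure errors in cm (F1 to F5), copied from rows U1 and U7 of the table.
errors_cm = {
    "U1": [3, 3, 1, 1, 1],
    "U7": [3, 6, 2, 5, 4],
}

# statistics.stdev uses the n - 1 denominator (sample standard deviation).
summary = {user: (mean(values), stdev(values)) for user, values in errors_cm.items()}

for user, (average, deviation) in summary.items():
    print(f"{user}: avg = {average:.2f} cm, std. dev. = {deviation:.3f} cm")
```

Positive values in the table would then correspond to figures left larger than the target and negative values to figures left smaller, if the error is taken as the recorded size minus the target height; that sign convention is also an assumption.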

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Vaquero-Melchor, D.; Bernardos, A.M. Enhancing Interaction with Augmented Reality through Mid-Air Haptic Feedback: Architecture Design and User Feedback. Appl. Sci. 2019, 9, 5123. https://doi.org/10.3390/app9235123
