A Channel Correction and Spatial Attention Framework for Anterior Cruciate Ligament Tear with Ordinal Loss

Abstract

1. Introduction

- (i) A channel correction module is applied to the MRI dataset to attenuate the negative effects of ACL MRI image distributions that differ from patient to patient.

- (ii) An ordinal loss function is introduced because ACL tear grades are ordered, as knee injury grades are. It also eases the training difficulties caused by label imbalance in a small-sample dataset.

- (iii) The designed network structure is tested on different backbone networks, which demonstrates that the framework generalizes and can be added to many backbones.

- (iv) Ablation experiments on ResNet-18 show that the channel correction module, spatial attention module, and ordinal loss function are effective and together raise accuracy by 6.6 percentage points over the baseline (from 0.767 to 0.833).
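To make the idea behind contribution (ii) concrete, the following is a minimal sketch of a distance-weighted ordinal loss for three ordered tear grades. It is an illustration under stated assumptions, not the loss defined in this paper: the function names, the penalty matrix `W[i, j] = |i - j|`, and the exact combination of terms are all hypothetical choices made for this example.

```python
import numpy as np


def ordinal_penalty_matrix(num_classes: int) -> np.ndarray:
    """Hypothetical weights: W[i, j] = |i - j|, so distant grades cost more."""
    grades = np.arange(num_classes)
    return np.abs(grades[:, None] - grades[None, :]).astype(float)


def ordinal_loss(probs: np.ndarray, label: int, weights: np.ndarray,
                 eps: float = 1e-12) -> float:
    """Cross-entropy on the true grade plus a distance-weighted penalty on
    the probability assigned to each wrong grade (assumed form)."""
    probs = np.clip(probs, eps, 1.0 - eps)
    true_term = -np.log(probs[label])
    # weights[label, label] is 0, so the true grade adds nothing here.
    wrong_term = -np.sum(weights[label] * np.log(1.0 - probs))
    return float(true_term + wrong_term)


W = ordinal_penalty_matrix(3)
# True grade is 0; both predictions give it the same probability (0.1).
near_miss = ordinal_loss(np.array([0.1, 0.7, 0.2]), 0, W)  # mass on grade 1
far_miss = ordinal_loss(np.array([0.1, 0.2, 0.7]), 0, W)   # mass on grade 2
print(near_miss < far_miss)
```

A plain cross-entropy loss would score the two predictions identically, because the true grade receives the same probability in both. The weighted term is what separates a near miss from a far miss.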

2. Related Work

2.1. Medical Image Classification

2.2. Attention Mechanism

2.3. Loss Function

3. Method

3.1. Framework

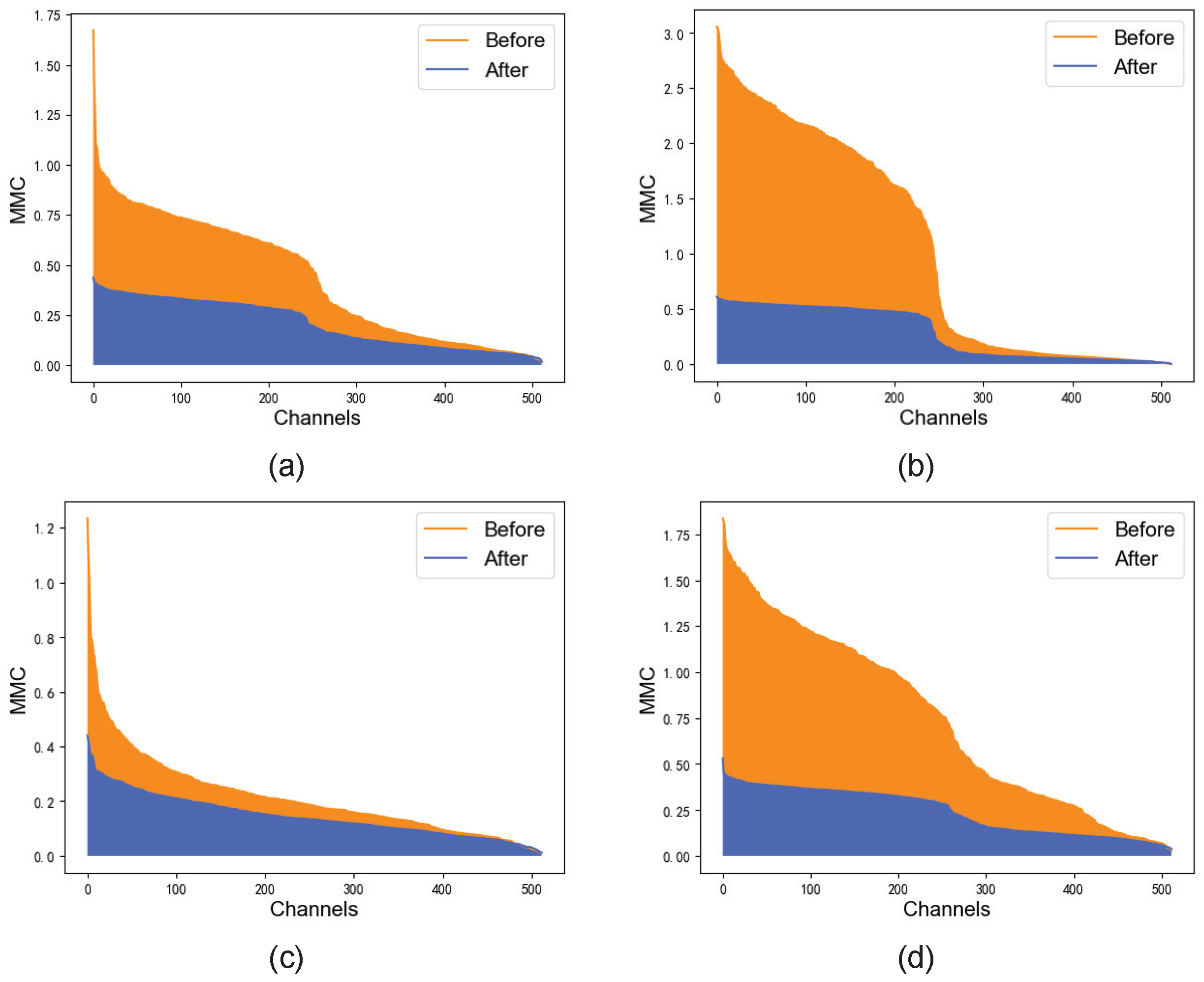

3.2. Channel Correction Module

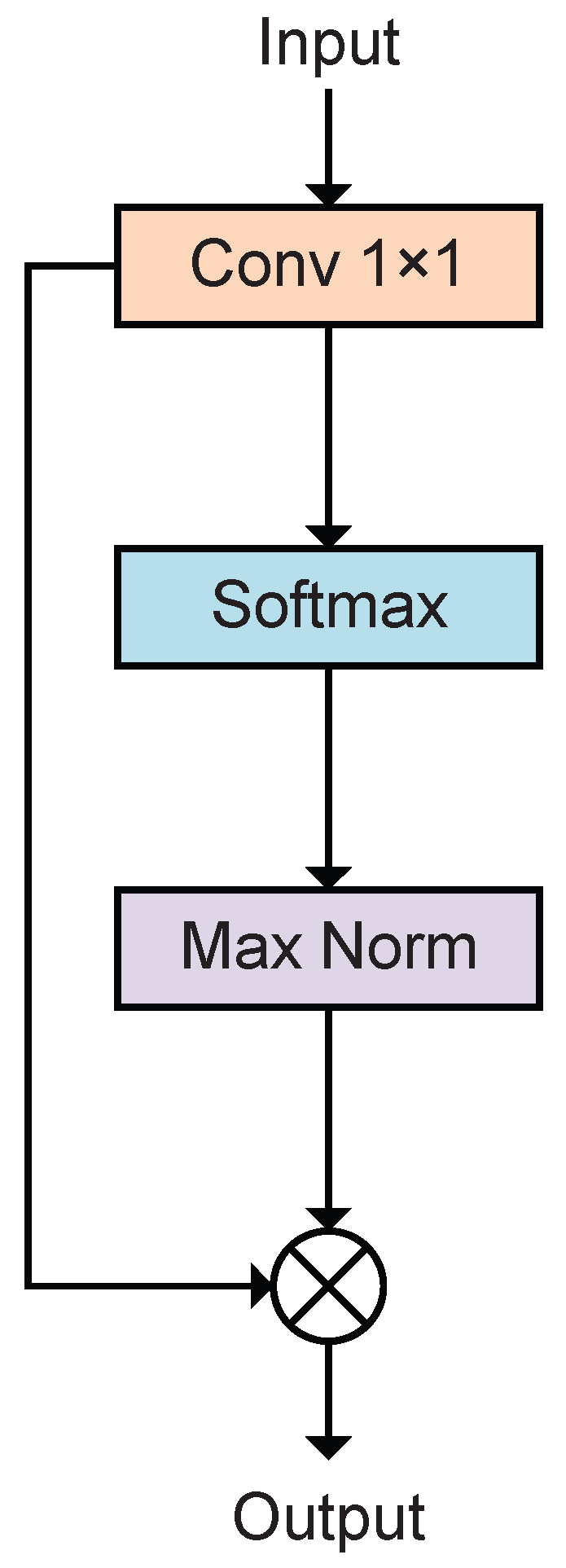

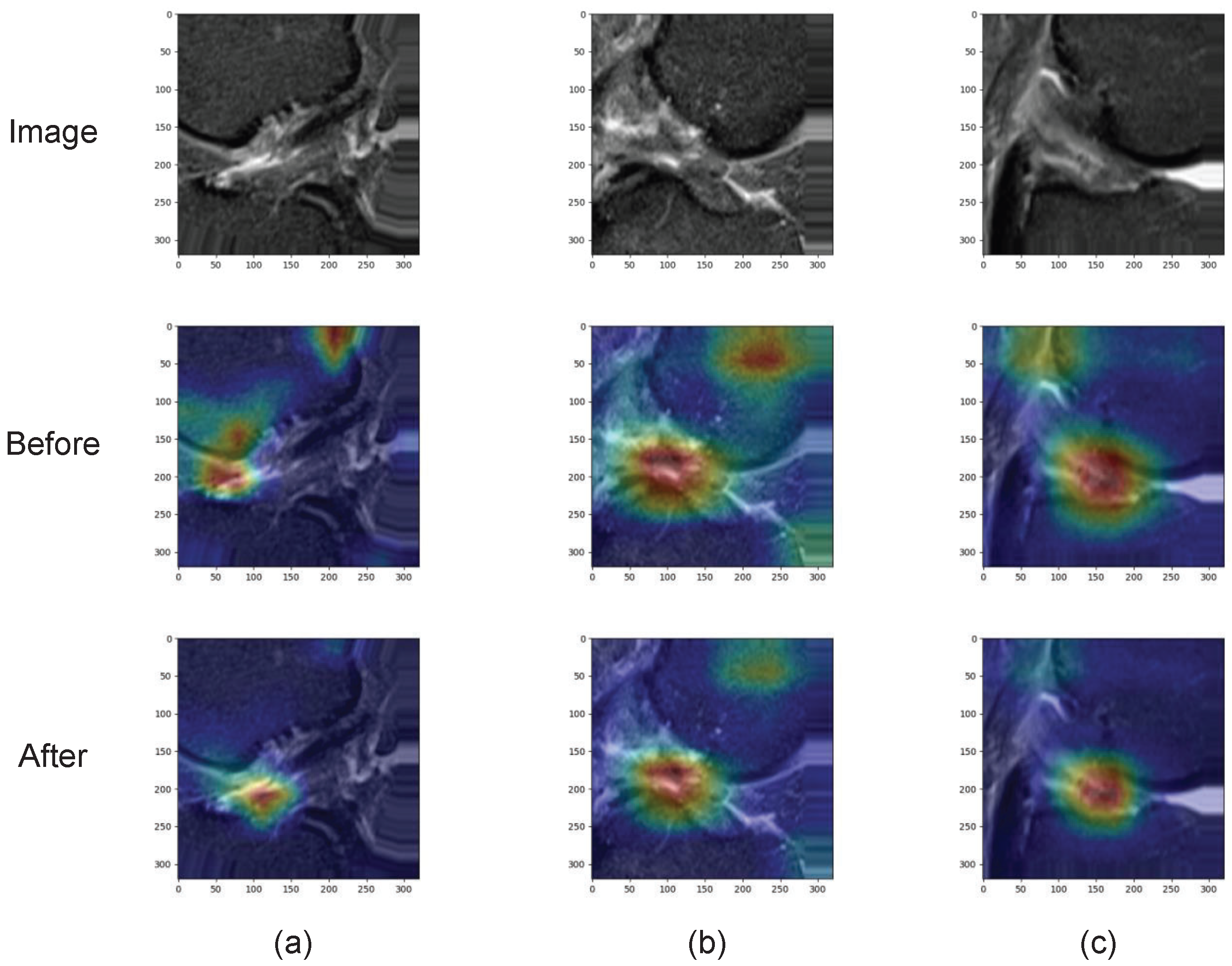

3.3. Spatial Attention

3.4. Ordinal Loss

4. Experiment

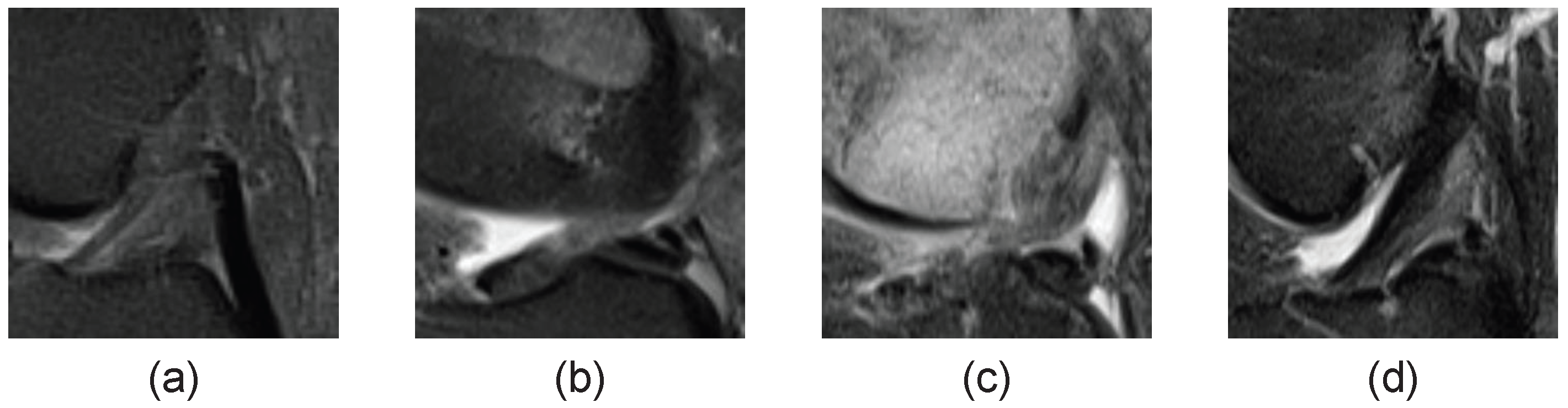

4.1. Data and Task

4.2. Implementation Details and Metrics

4.2.1. Training

4.2.2. Evaluation Metrics

4.3. Results

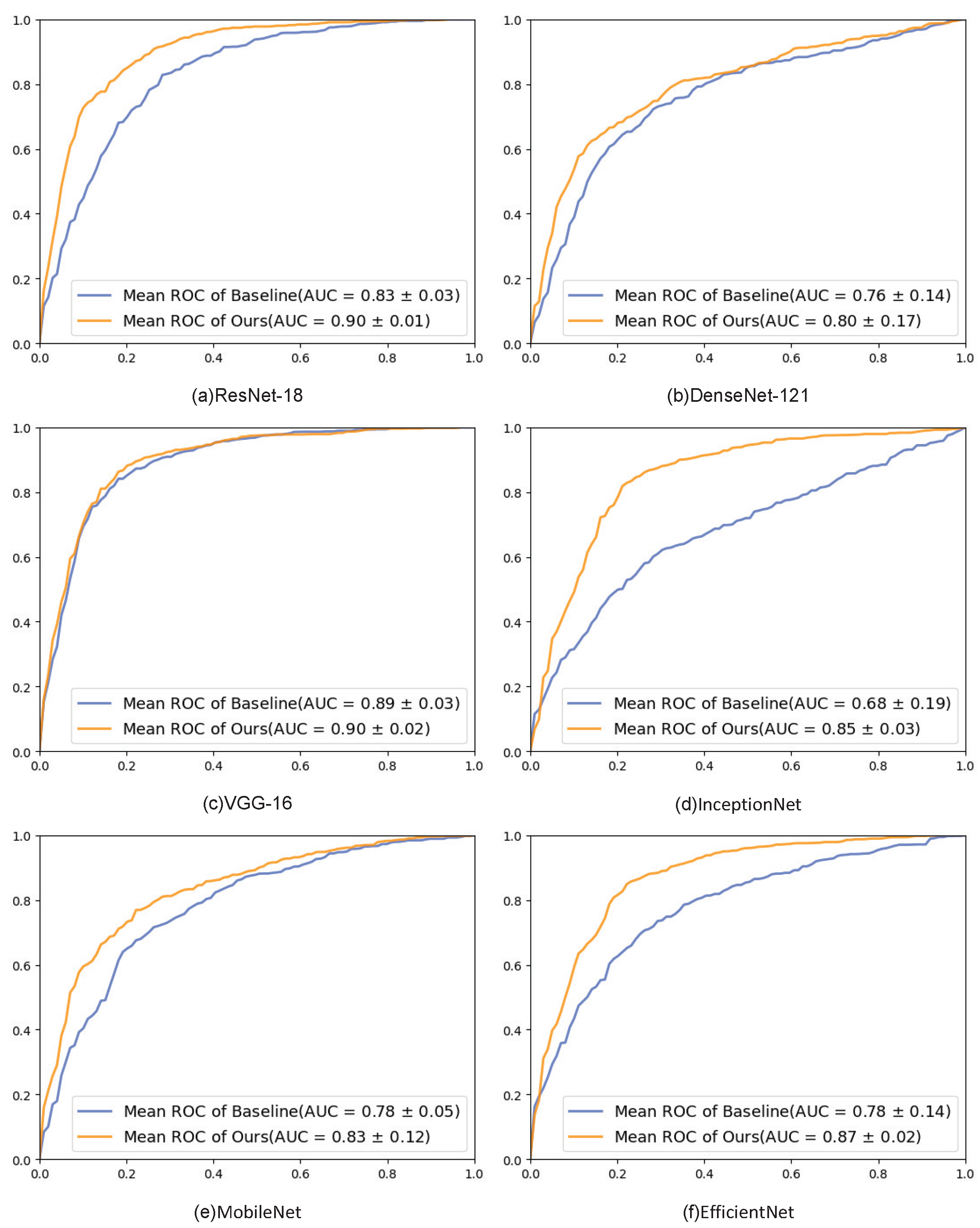

4.3.1. Different Backbone

4.3.2. Ablation Experiments

Channel Correction

Spatial Attention

Ordinal Loss

5. Discussion

- (1) A simple function is used for channel correction, but its parameter is set by hand from experience. An algorithm could instead be used to obtain the hyperparameter and to design a function better suited to this task.

- (2) The ordinal loss is used as the classification loss, and its initial weight matrix is set by hand from experience. A more principled heuristic could be considered for deriving suitable initial weights from the data.

- (3) The proposed method is applied to a three-class classification task for ACL tears, but its modules are applicable to many other datasets: channel correction can be used where image distributions differ, and the ordinal loss can be used where the labels are ordered. These extensions merit further study.
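Item (1) refers to a simple, one-parameter correction function. As a rough illustration of what such a function can look like, the sketch below applies a log-based transform of the kind used for channel-importance rebalancing in few-shot classification. Whether this matches the function used in this paper is an assumption, and the name `channel_correction`, the default `k = 1.3`, and the `eps` guard are hypothetical.

```python
import numpy as np


def channel_correction(features: np.ndarray, k: float = 1.3,
                       eps: float = 1e-6) -> np.ndarray:
    """Apply phi_k(x) = 1 / ln(1/x + 1)**k element-wise (assumed form).

    `features` holds non-negative per-channel activations, for example
    globally pooled feature maps. `k` is the hand-set hyperparameter.
    """
    x = np.maximum(features, 0.0) + eps  # keep 1/x finite for zero channels
    return 1.0 / np.log(1.0 / x + 1.0) ** k


# Hypothetical pooled activations spanning two orders of magnitude.
pooled = np.array([0.01, 0.1, 1.0])
corrected = channel_correction(pooled, k=1.0)

ratio_before = pooled[-1] / pooled[0]
ratio_after = corrected[-1] / corrected[0]
print(ratio_before, ratio_after)
```

The transform keeps the ordering of the channels but narrows the gap between weak and dominant ones, which is one way a correction step could reduce sensitivity to per-patient intensity differences. Choosing `k` automatically, as item (1) suggests, would replace the hand-set default.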

6. Conclusions

Author Contributions

Funding

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

| Backbone | Method | Accuracy | Precision | Recall | Specificity | F1 |
|---|---|---|---|---|---|---|
| ResNet-18 | Baseline | 0.767 | 0.499 | 0.483 | 0.821 | 0.480 |
| | Ours | 0.833 | 0.488 | 0.512 | 0.852 | 0.498 |
| DenseNet-121 | Baseline | 0.780 | 0.419 | 0.438 | 0.780 | 0.423 |
| | Ours | 0.816 | 0.471 | 0.505 | 0.846 | 0.486 |
| VGG-16 | Baseline | 0.795 | 0.456 | 0.493 | 0.839 | 0.471 |
| | Ours | 0.823 | 0.477 | 0.502 | 0.846 | 0.488 |
| InceptionNet | Baseline | 0.748 | 0.409 | 0.473 | 0.757 | 0.434 |
| | Ours | 0.784 | 0.454 | 0.501 | 0.834 | 0.471 |
| MobileNet | Baseline | 0.742 | 0.501 | 0.414 | 0.753 | 0.405 |
| | Ours | 0.807 | 0.565 | 0.454 | 0.789 | 0.450 |
| EfficientNet | Baseline | 0.761 | 0.511 | 0.466 | 0.752 | 0.439 |
| | Ours | 0.787 | 0.415 | 0.447 | 0.790 | 0.428 |
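The backbone comparison reports accuracy, precision, recall, specificity, and F1. As a reminder of how these quantities relate for a three-class problem, here is a minimal sketch that derives them from a confusion matrix. The macro (per-class, then averaged) convention and the confusion-matrix counts are assumptions made for illustration. They are not taken from the paper and do not reproduce the reported values.

```python
import numpy as np


def macro_metrics(confusion: np.ndarray) -> dict:
    """confusion[i, j] = number of samples of true class i predicted as j."""
    total = confusion.sum()
    tp = np.diag(confusion).astype(float)
    fp = confusion.sum(axis=0) - tp   # predicted as the class, but wrong
    fn = confusion.sum(axis=1) - tp   # belong to the class, but missed
    tn = total - tp - fp - fn

    def safe_div(num, den):
        # Return 0 where a class has no samples in the denominator.
        return np.divide(num, den, out=np.zeros_like(num), where=den > 0)

    precision = safe_div(tp, tp + fp)
    recall = safe_div(tp, tp + fn)
    specificity = safe_div(tn, tn + fp)
    f1 = safe_div(2 * precision * recall, precision + recall)
    return {
        "accuracy": float(tp.sum() / total),
        "precision": float(precision.mean()),
        "recall": float(recall.mean()),
        "specificity": float(specificity.mean()),
        "f1": float(f1.mean()),
    }


# Hypothetical counts for three ordered grades (rows: true, columns: predicted).
example = np.array([[50, 5, 0],
                    [8, 20, 4],
                    [1, 3, 9]])
metrics = macro_metrics(example)
print(metrics)
```

With imbalanced classes, overall accuracy can sit well above macro-averaged recall or F1, because the majority class dominates the accuracy figure while each class counts equally in the macro averages.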

| Backbone | Channel Correction | Ordinal Loss | Spatial Attention | Accuracy |
|---|---|---|---|---|
| ResNet-18 | | | | 0.767 |
| | ✓ | | | 0.775 |
| | | ✓ | | 0.768 |
| | | | ✓ | 0.807 |
| | ✓ | ✓ | | 0.782 |
| | ✓ | | ✓ | 0.819 |
| | | ✓ | ✓ | 0.811 |
| | ✓ | ✓ | ✓ | 0.833 |
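The gain from the baseline row to the full configuration in the ablation table can be read in two ways, as an absolute difference in accuracy or as a change relative to the baseline. The short calculation below shows both, using only the two accuracy values from the table. The variable names are illustrative.

```python
baseline_acc = 0.767  # ResNet-18 with no added component
full_acc = 0.833      # channel correction + spatial attention + ordinal loss

absolute_gain_points = (full_acc - baseline_acc) * 100
relative_gain_percent = (full_acc - baseline_acc) / baseline_acc * 100

print(f"absolute: {absolute_gain_points:.1f} points, "
      f"relative: {relative_gain_percent:.1f}%")
# prints "absolute: 6.6 points, relative: 8.6%"
```

The 6.6 figure is therefore a difference in percentage points, not a relative improvement.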

| Backbone | Loss | Accuracy | Precision | Recall | Specificity | F1 |
|---|---|---|---|---|---|---|
| ResNet-18 | Cross-entropy Loss | 0.767 | 0.499 | 0.483 | 0.821 | 0.480 |
| | Ordinal Loss | 0.768 | 0.552 | 0.519 | 0.835 | 0.508 |

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Lin, W.; Miao, K. A Channel Correction and Spatial Attention Framework for Anterior Cruciate Ligament Tear with Ordinal Loss. Appl. Sci. 2023, 13, 5005. https://doi.org/10.3390/app13085005
