Predicting the Mine Friction Coefficient Using the GSCV-RF Hybrid Approach

Abstract

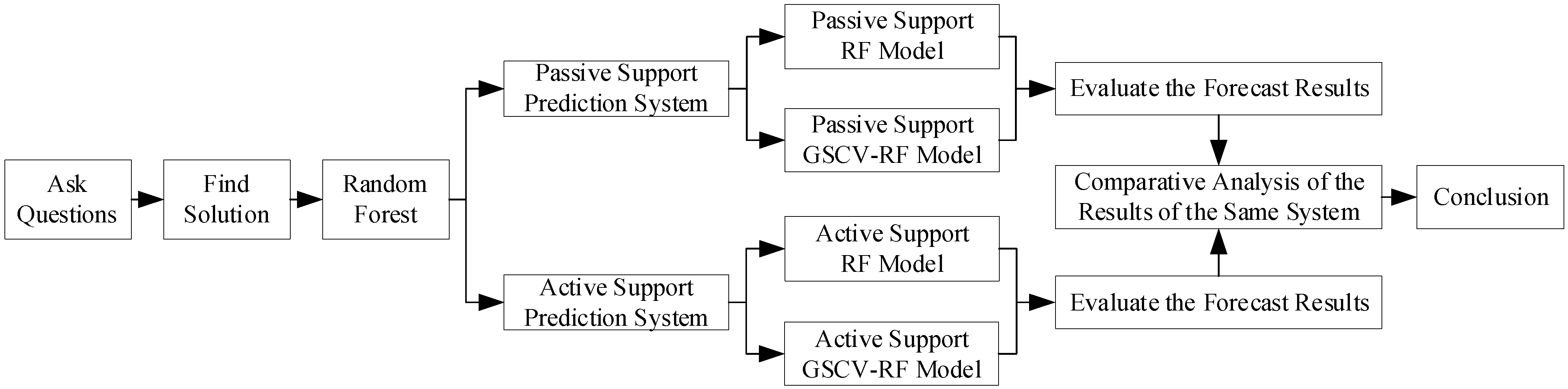

1. Introduction

2. Predictive Model Construction

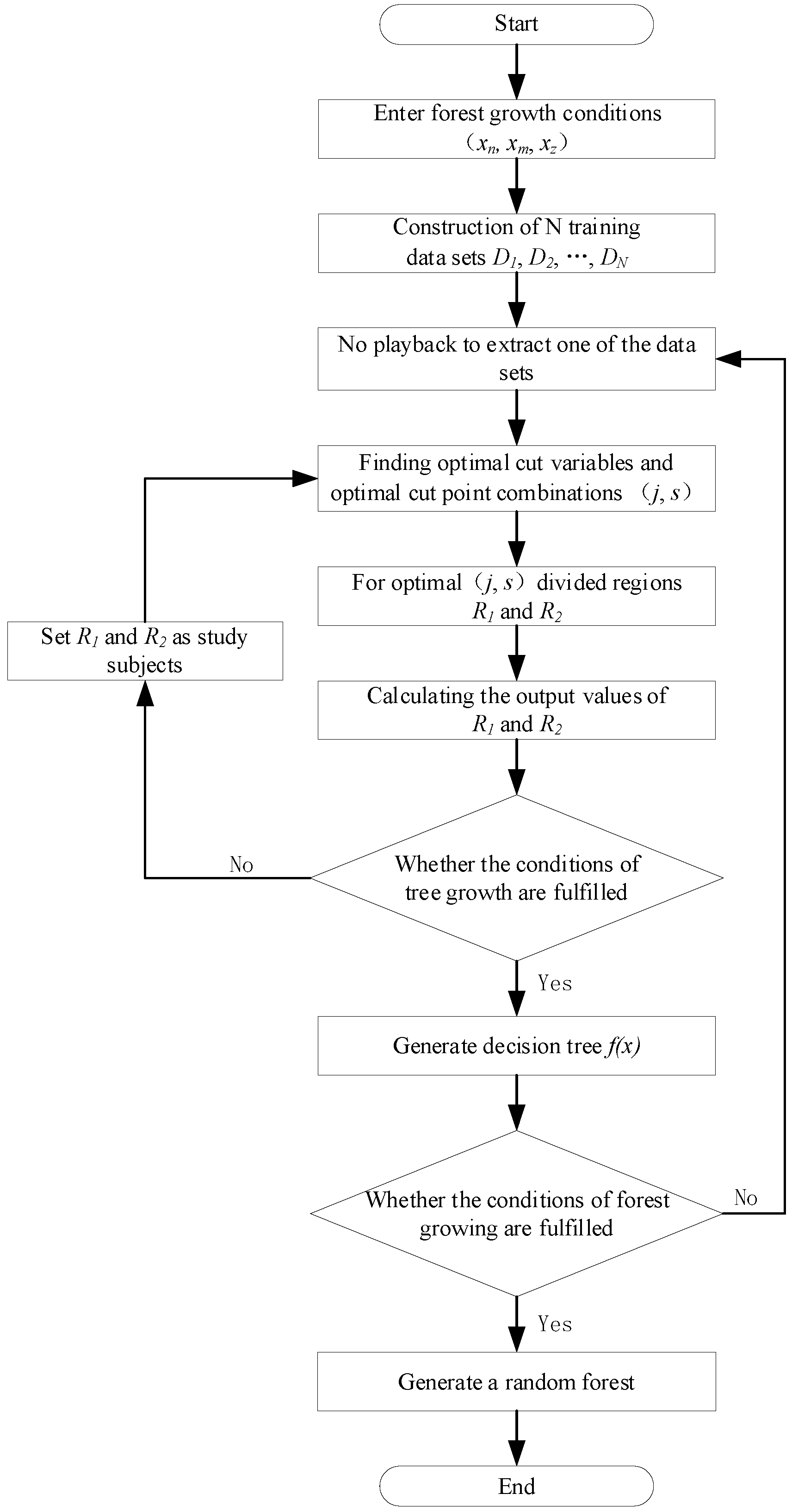

2.1. RF Prediction Models

1. Input conditions for forest growth: RF prediction results are heavily influenced by three hyperparameters, namely the number of decision trees, the maximum number of features and the maximum depth of the decision tree. These are defined as x1, x2 and x3, respectively, and used as the input growth conditions (x1, x2, x3).

2. The dataset containing N samples with O input features is sampled N times with replacement (bootstrap sampling), and o features are selected at random to serve as the input features. This process is repeated N times to generate N training datasets, each containing N samples with o input features (o ≤ O):

   $$D_1 = \{(x_{11}, y_{11}), \ldots, (x_{1N}, y_{1N})\}, \quad \ldots, \quad D_N = \{(x_{N1}, y_{N1}), \ldots, (x_{NN}, y_{NN})\}$$

   where $D_N$ is the Nth training dataset; $x_{NN}$ is the input data of the Nth sample in the Nth training dataset; and $y_{NN}$ is the output data of the Nth sample in the Nth training dataset.

3. Choose one of the datasets, select the appropriate cut variable j and cut point s, and evaluate the split using Equation (3):

   $$\min_{j,s}\left[\min_{c_1}\sum_{x_i \in R_1(j,s)} (y_i - c_1)^2 + \min_{c_2}\sum_{x_i \in R_2(j,s)} (y_i - c_2)^2\right] \qquad (3)$$

   where $y_i$ is the output data for the ith sample in the dataset; $c_1$ is the mean of all $y_i$ in the partitioned region $R_1$; and $c_2$ is the mean of all $y_i$ in the partitioned region $R_2$.

4. Use the optimal (j, s) to partition the data into regions $R_1$ and $R_2$, and determine the output value of each region:

   $$R_1(j,s) = \{x \mid x^{(j)} \le s\}, \qquad R_2(j,s) = \{x \mid x^{(j)} > s\}$$

   $$\hat{c}_m = \frac{1}{N_m}\sum_{x_i \in R_m(j,s)} y_i, \qquad x \in R_m,\ m = 1, 2$$

   where $R_1$ and $R_2$ are the data regions divided according to (j, s); $x^{(j)}$ is the selected optimal division variable; and $N_m$ is the number of samples in the delimited region $R_m$.

5. Repeat steps 3 and 4 for the divided subregions until the requirements for the decision tree's growth are satisfied.

6. To construct a decision tree, divide the input space into M regions, $R_1, R_2, \ldots, R_M$:

   $$f(x) = \sum_{m=1}^{M} \hat{c}_m \, I(x \in R_m)$$

   where f(x) is the resulting decision tree and $I(\cdot)$ is the indicator function.

7. Repeat steps 2, 3, 4 and 5 until the forest's growth requirements are satisfied, that is, until the specified number of decision trees has been formed; together, these trees constitute the random forest.
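The tree-growing and bagging steps above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the authors' code: the helper names (`sse`, `best_split`, `build_tree`, `predict_tree`, `fit_forest`, `predict_forest`) and the `min_samples` stopping rule are hypothetical, and averaging the trees' outputs is assumed as the forest's regression output, which the steps do not spell out. Following the text, one random subset of o features is drawn per bootstrap dataset; Breiman's original random forest (and scikit-learn's `RandomForestRegressor`) instead re-draws the candidate features at every split.

```python
import random
from statistics import mean


def sse(values):
    """Sum of squared deviations from the region mean: the inner min over c in Eq. (3)."""
    c = mean(values)
    return sum((v - c) ** 2 for v in values)


def best_split(X, y, features):
    """Try every (j, s) over the allowed features; return (loss, j, s) minimising Eq. (3)."""
    best = None
    for j in features:
        for s in sorted({row[j] for row in X}):
            left = [yi for row, yi in zip(X, y) if row[j] <= s]   # region R1(j, s)
            right = [yi for row, yi in zip(X, y) if row[j] > s]   # region R2(j, s)
            if not left or not right:
                continue
            loss = sse(left) + sse(right)
            if best is None or loss < best[0]:
                best = (loss, j, s)
    return best  # None if no split separates the samples


def build_tree(X, y, features, depth, max_depth, min_samples=2):
    """Grow a regression tree; a leaf stores c_m, the mean of y in its region R_m."""
    split = None
    if depth < max_depth and len(y) >= min_samples:
        split = best_split(X, y, features)
    if split is None:
        return mean(y)
    _, j, s = split
    left = [i for i, row in enumerate(X) if row[j] <= s]
    right = [i for i, row in enumerate(X) if row[j] > s]
    return (
        j,
        s,
        build_tree([X[i] for i in left], [y[i] for i in left], features, depth + 1, max_depth, min_samples),
        build_tree([X[i] for i in right], [y[i] for i in right], features, depth + 1, max_depth, min_samples),
    )


def predict_tree(node, x):
    """Evaluate f(x) = sum_m c_m * I(x in R_m) by descending to the leaf region containing x."""
    while isinstance(node, tuple):
        j, s, left, right = node
        node = left if x[j] <= s else right
    return node


def fit_forest(X, y, n_trees, n_features, max_depth, seed=0):
    """Per tree: draw N samples with replacement and o = n_features random features."""
    rng = random.Random(seed)
    n_samples, n_total = len(X), len(X[0])
    forest = []
    for _ in range(n_trees):
        idx = [rng.randrange(n_samples) for _ in range(n_samples)]
        feats = rng.sample(range(n_total), n_features)
        forest.append(build_tree([X[i] for i in idx], [y[i] for i in idx], feats, 0, max_depth))
    return forest


def predict_forest(forest, x):
    """Average the individual tree outputs (assumed regression rule)."""
    return mean(predict_tree(tree, x) for tree in forest)


# Toy usage: an alpha-like target driven by the first feature only.
X = [[i, (7 * i) % 5] for i in range(20)]
y = [0.05 + 0.01 * i for i in range(20)]
forest = fit_forest(X, y, n_trees=10, n_features=2, max_depth=4, seed=1)
print(round(predict_forest(forest, [10, 0]), 3))
```

Scanning every observed value as a cut point s keeps the sketch close to Equation (3), but it is slow for large datasets.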

- Select the appropriate input and output properties to build a prediction indicator system.

- Use numericalization and clustering methods to process the data so that it satisfies the RF model's requirements.

- Divide the dataset, in a given proportion, into a training set for training the model and a test set for testing the predictions.

- Input the model parameters (growth conditions): the number of decision trees, the maximum number of features and the maximum depth of the decision tree.

- Train the α prediction model on the training set.

- Predict α for the test set.

- Evaluate the constructed RF forecasting model according to the predictions. The evaluation indicators are the mean absolute error (MAE), the root mean square error (RMSE) and the goodness of fit (R2):

  $$\mathrm{MAE} = \frac{1}{n}\sum_{i=1}^{n} \left|\hat{y}_i - y_i\right|$$

  $$\mathrm{RMSE} = \sqrt{\frac{1}{n}\sum_{i=1}^{n} \left(\hat{y}_i - y_i\right)^2}$$

  $$R^2 = 1 - \frac{\sum_{i=1}^{n} \left(y_i - \hat{y}_i\right)^2}{\sum_{i=1}^{n} \left(y_i - \bar{y}\right)^2}$$

  where n is the test set sample size; $\hat{y}_i$ is the predicted value of the ith test set sample; $y_i$ is the true value of the ith test set sample; and $\bar{y}$ is the mean of the sample true values.
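The three evaluation indicators translate directly into code. The following is a minimal sketch using hypothetical α values (not data from this study):

```python
from math import sqrt


def mae(y_true, y_pred):
    """Mean absolute error over the n test samples."""
    return sum(abs(p - t) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    """Root mean square error over the n test samples."""
    return sqrt(sum((p - t) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def r2(y_true, y_pred):
    """Goodness of fit: 1 - residual sum of squares / total sum of squares about the mean."""
    y_bar = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - y_bar) ** 2 for t in y_true)
    return 1 - ss_res / ss_tot


# Hypothetical alpha values, for illustration only.
y_true = [0.10, 0.12, 0.15, 0.20]
y_pred = [0.11, 0.12, 0.14, 0.19]
print(f"MAE={mae(y_true, y_pred):.4f} RMSE={rmse(y_true, y_pred):.4f} R2={r2(y_true, y_pred):.4f}")
# prints: MAE=0.0075 RMSE=0.0087 R2=0.9471
```

Unlike MAE and RMSE, R2 is not bounded below: a model that predicts worse than the constant $\bar{y}$ yields a negative value.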

2.2. GSCV Optimization Algorithm

- Set the range of each hyperparameter, taking the RF hyperparameters (growth conditions) as an example:

  $$x_1 \in [1, n], \qquad x_2 \in [1, m], \qquad x_3 \in [1, z]$$

  where n is the upper limit of hyperparameter x1; m is the upper limit of hyperparameter x2; and z is the upper limit of hyperparameter x3.

- Combine the values of the hyperparameters to obtain the hyperparameter combinations. Assuming a step size of 1 for each hyperparameter, n × m × z hyperparameter combinations (xn, xm, xz) are created, each representing one RF growth condition.

- To prevent the results from depending on one particular partition of the dataset, the dataset is divided into K mutually exclusive subsets of equal size, d1, d2, …, dK. Each subset is used once as the validation set while the remaining K − 1 subsets form the training set, producing K new datasets.

- Each hyperparameter combination (xn, xm, xz) is trained once on each of the K new datasets, the goodness of fit on each dataset is computed, and the output of the combination is the mean goodness of fit over the K datasets.

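- Repeat the previous step for every hyperparameter combination to identify the optimal one, which is used as the input parameter of the algorithm combined with GSCV. The optimal output is expressed as:

  $$(x_1^*, x_2^*, x_3^*) = \arg\max_{(x_1, x_2, x_3)} \frac{1}{K}\sum_{k=1}^{K} R_k^2(x_1, x_2, x_3)$$

  where $R_k^2(x_1, x_2, x_3)$ is the goodness of fit of the combination on the kth new dataset.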
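The GSCV steps can be sketched as a generic loop: enumerate every combination with `itertools.product`, score each on K mutually exclusive folds, and keep the combination with the highest mean R2. To stay self-contained, the sketch uses a hypothetical 1-D k-nearest-neighbour model (`knn_fit_predict`, hyperparameter `n_neighbors`) as a stand-in for RF; the round-robin fold assignment without shuffling is also an assumption (scikit-learn's `KFold` uses contiguous folds by default).

```python
from itertools import product
from statistics import mean


def r2(y_true, y_pred):
    """Goodness of fit used as the score of one fold."""
    y_bar = mean(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - y_bar) ** 2 for t in y_true)
    return 1 - ss_res / ss_tot


def k_folds(n, k):
    """K mutually exclusive index subsets d_1..d_K of (near) equal size, assigned round-robin."""
    return [list(range(start, n, k)) for start in range(k)]


def grid_search_cv(fit_predict, X, y, grid, k=5):
    """Score every combination by its mean R2 over the K folds and return the best one."""
    names = list(grid)
    folds = k_folds(len(X), k)
    best_params, best_score = None, float("-inf")
    for values in product(*(grid[name] for name in names)):
        params = dict(zip(names, values))
        scores = []
        for val_idx in folds:
            held_out = set(val_idx)
            train_idx = [i for i in range(len(X)) if i not in held_out]
            preds = fit_predict(params,
                                [X[i] for i in train_idx], [y[i] for i in train_idx],
                                [X[i] for i in val_idx])
            scores.append(r2([y[i] for i in val_idx], preds))
        score = mean(scores)  # mean goodness of fit over the K new datasets
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score


def knn_fit_predict(params, X_train, y_train, X_val):
    """Hypothetical stand-in model (k-nearest-neighbour mean on 1-D inputs), not RF."""
    k = params["n_neighbors"]
    preds = []
    for x in X_val:
        nearest = sorted(zip(X_train, y_train), key=lambda pair: abs(pair[0][0] - x[0]))[:k]
        preds.append(mean(yi for _, yi in nearest))
    return preds


X = [[float(i)] for i in range(30)]
y = [0.01 * i for i in range(30)]
best_params, best_score = grid_search_cv(knn_fit_predict, X, y, {"n_neighbors": [1, 3, 25]}, k=5)
print(best_params, round(best_score, 3))
```

Because `fit_predict` is a parameter, the same loop accepts any model and any grid dictionary, including RF's three growth conditions.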

2.3. GSCV-RF Prediction Model

- The range and step size are set for the three hyperparameters: the number of decision trees, the maximum number of features and the maximum depth of the decision tree.

- Combining the values of the hyperparameters one by one yields all possible hyperparameter combinations.

- The α dataset is divided into K equal parts, with K − 1 parts serving as the training set and the remaining part serving as the validation set. After K repetitions, each part has served once as the validation set, resulting in K new datasets.

- Each hyperparameter combination is evaluated by K-fold cross-validation on the K new datasets.

- The results for each hyperparameter combination are scored, and the combination with the highest score is used as the model's input parameters.
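In practice the GSCV-RF procedure maps onto a grid search with cross-validation around a random forest regressor. The sketch below assumes scikit-learn: the parameter names in the RF tables (n_estimators, max_features, max_depth) match `RandomForestRegressor`, but the section does not name the library. The data are synthetic placeholders, the grid is a coarse subset of the search ranges in the hyperparameter range table, and cv=5 is an assumed K.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import GridSearchCV, train_test_split

# Synthetic placeholder data: 120 samples, 4 indicators, alpha-like target.
rng = np.random.default_rng(0)
X = rng.uniform(size=(120, 4))
y = 0.01 + 0.03 * X[:, 0] + 0.002 * rng.normal(size=120)

# Hold out a test set; the grid search only ever sees the training part.
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=0)

# Coarse subset of the search ranges for the three growth conditions.
param_grid = {
    "n_estimators": [50, 100],
    "max_features": [0.5, 1.0],
    "max_depth": [3, 9],
}

# K-fold cross-validation (K = 5 assumed), scored by R2, for every combination.
search = GridSearchCV(RandomForestRegressor(random_state=0), param_grid, cv=5, scoring="r2")
search.fit(X_train, y_train)

best_rf = search.best_estimator_  # refit on the whole training set with the best combination
test_r2 = best_rf.score(X_test, y_test)
print(search.best_params_, round(search.best_score_, 4), round(test_r2, 4))
```

In scikit-learn 1.3 and later the string "auto" is no longer accepted for `max_features`; for regression it corresponded to using all features, i.e. `max_features=1.0`.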

3. Example Analysis

3.1. Constructing a Forecasting Indicator System

3.2. Data Selection and Processing

| No. | Indicator | Data Type |
|---|---|---|
| 1 | Support Type | Character type |
| 2 | Cross-Section Profile | Character type |
| 3 | Bracket Size | Numerical type |
| 4 | Roadway Cross-Sectional Area | Numerical type |
| 5 | Lane Circumference | Numerical type |
| 6 | Perimeter of Unsupported Section | Numerical type |
| 7 | Bracket Longitudinal Bore | Numerical type |
| 8 | Cross-Bore of Bracket | Numerical type |
| 9 | Equivalent Radius | Numerical type |
| 10 | Effective Ventilation Area Factor | Numerical type |
| 11 | Coefficient of Frictional Resistance (α) | Numerical type |

3.3. Data Statistics

| Indicators | Min. | Max. | Avg. | St. D. | Med. | S. Var. | St. E. | Kurt. | Skew. | Range | Mode |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Training Datasets (Passive Support) | | | | | | | | | | | |
| Support Type | 1 | 2 | 1.5 | 0.503 | 1.5 | 0.253 | 0.05 | −2.041 | 0 | 1 | |
| Bracket Size | 10 | 26 | 15.68 | 4.126 | 15 | 17.028 | 0.413 | 0.002 | 0.592 | 16 | 10 |
| Roadway Cross-Sectional Area | 4 | 10 | 6.94 | 2.247 | 6 | 5.047 | 0.225 | −1.366 | 0.033 | 6 | 4 |
| Lane Circumference | 8.32 | 13.16 | 10.812 | 1.816 | 10.19 | 3.297 | 0.182 | −1.358 | −0.13 | 4.84 | 8.32 |
| Perimeter of Unsupported Section | 2.13 | 3.37 | 2.712 | 0.509 | 2.61 | 0.259 | 0.051 | −1.668 | 0.021 | 1.24 | 2.13 |
| Bracket Longitudinal Bore | 3 | 8 | 4.97 | 1.85 | 5 | 3.423 | 0.185 | −1.218 | 0.416 | 5 | 3 |
| Cross-Bore of Bracket | 0.033 | 0.135 | 0.065 | 0.021 | 0.062 | 0 | 0.002 | 0.69 | 0.816 | 0.102 | 0.059 |
| α | 0.071 | 0.261 | 0.121 | 0.042 | 0.106 | 0.002 | 0.004 | 1.049 | 1.291 | 0.19 | 0.137 |
| Testing Datasets (Passive Support) | | | | | | | | | | | |
| Support Type | 1 | 2 | 1.667 | 0.482 | 2 | 0.232 | 0.098 | −1.568 | −0.755 | 1 | 2 |
| Bracket Size | 10 | 24 | 15.708 | 4.059 | 16 | 16.476 | 0.829 | −0.294 | 0.32 | 14 | 16, 18 |
| Roadway Cross-Sectional Area | 4 | 10 | 6.542 | 1.865 | 6 | 3.476 | 0.381 | −0.927 | 0.39 | 6 | 5 |
| Lane Circumference | 8.32 | 13.16 | 10.538 | 1.51 | 10.19 | 2.281 | 0.308 | −1.058 | 0.196 | 4.84 | 9.30 |
| Perimeter of Unsupported Section | 2.13 | 3.37 | 2.702 | 0.386 | 2.61 | 0.149 | 0.079 | −1.052 | 0.188 | 1.24 | 2.39 |
| Bracket Longitudinal Bore | 3 | 8 | 4.75 | 1.622 | 4 | 2.63 | 0.331 | −0.049 | 0.976 | 5 | 4 |
| Cross-Bore of Bracket | 0.035 | 0.094 | 0.066 | 0.018 | 0.066 | 0 | 0.004 | −1.347 | −0.164 | 0.059 | 0.084 |
| α | 0.092 | 0.273 | 0.134 | 0.047 | 0.118 | 0.002 | 0.01 | 1.735 | 1.407 | 0.181 | 0.916, 0.118, 0.143 |

| Indicators | Min. | Max. | Avg. | St. D. | Med. | S. Var. | St. E. | Kurt. | Skew. | Range | Mode |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Training Datasets (Active Support) | | | | | | | | | | | |
| Support Type | 1 | 2 | 1.367 | 0.49 | 1 | 0.24 | 0.089 | −1.784 | 0.583 | 1 | 1 |
| Cross-Section Profile | 1 | 2 | 1.433 | 0.504 | 1 | 0.254 | 0.092 | −2.062 | 0.283 | 1 | 1 |
| Equivalent Radius | 0.828 | 2.3 | 1.389 | 0.505 | 1.158 | 0.255 | 0.092 | −1.372 | 0.539 | 1.472 | 0.835, 2.000 |
| Effective Ventilation Area Factor | 0.84 | 1 | 0.954 | 0.044 | 0.96 | 0.002 | 0.008 | 0.927 | −1.122 | 0.16 | 1.00 |
| α | 0.01 | 0.045 | 0.021 | 0.01 | 0.017 | 0 | 0.002 | −0.139 | 0.91 | 0.035 | 0.029 |
| Testing Datasets (Active Support) | | | | | | | | | | | |
| Support Type | 1 | 2 | 1.333 | 0.516 | 1 | 0.267 | 0.211 | −1.875 | 0.968 | 1 | 1 |
| Cross-Section Profile | 1 | 2 | 1.5 | 0.548 | 1.5 | 0.3 | 0.224 | −3.333 | 0 | 1 | |
| Equivalent Radius | 0.884 | 2.05 | 1.283 | 0.482 | 1.048 | 0.232 | 0.197 | −0.697 | 1.064 | 1.166 | |
| Effective Ventilation Area Factor | 0.87 | 1 | 0.962 | 0.047 | 0.975 | 0.002 | 0.019 | 4.225 | −1.967 | 0.13 | |
| α | 0.01 | 0.042 | 0.023 | 0.012 | 0.02 | 0 | 0.005 | −0.089 | 0.802 | 0.032 | |
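The statistics in these tables appear to follow the conventions of spreadsheet descriptive-statistics tools: sample variance with n − 1, standard error St. D./√n, and the bias-corrected skewness and excess-kurtosis formulas of Excel's SKEW and KURT functions. As a consistency check, a hypothetical balanced sample of 50 ones and 50 twos reproduces the first table's training-set Support Type row (Avg. 1.5, St. D. 0.503, S. Var. 0.253, St. E. 0.05, Kurt. −2.041, Skew. 0); the sample size of 100 is our inference from St. D./St. E., not a figure stated in this section.

```python
from math import sqrt
from statistics import mean, median, multimode


def describe(xs):
    """Spreadsheet-style descriptive statistics for one indicator column (needs n >= 4)."""
    n = len(xs)
    avg = mean(xs)
    var = sum((x - avg) ** 2 for x in xs) / (n - 1)  # sample variance (S. Var.)
    sd = sqrt(var)                                   # sample standard deviation (St. D.)
    z = [(x - avg) / sd for x in xs]
    skew = n / ((n - 1) * (n - 2)) * sum(v ** 3 for v in z)
    kurt = (n * (n + 1) / ((n - 1) * (n - 2) * (n - 3)) * sum(v ** 4 for v in z)
            - 3 * (n - 1) ** 2 / ((n - 2) * (n - 3)))  # bias-corrected excess kurtosis
    return {
        "Min.": min(xs), "Max.": max(xs), "Avg.": avg, "St. D.": sd,
        "Med.": median(xs), "S. Var.": var, "St. E.": sd / sqrt(n),
        "Kurt.": kurt, "Skew.": skew, "Range": max(xs) - min(xs),
        "Mode": multimode(xs),  # ties are returned as a list
    }


support_type = [1] * 50 + [2] * 50  # hypothetical balanced sample
stats = describe(support_type)
print({k: (round(v, 3) if isinstance(v, float) else v) for k, v in stats.items()})
```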

3.4. RF Model Prediction

| No. | Parameter Name | Value (Passive Support) | Value (Active Support) |
|---|---|---|---|
| 1 | n_estimators | 100 | 100 |
| 2 | max_features | Auto | Auto |
| 3 | max_depth | None | None |

3.5. GSCV-RF Model Predictions

3.5.1. Searching for the Optimal Input Parameters

3.5.2. Model Training and Prediction

3.6. BP Model Prediction

| No. | Parameter Name | Value (Passive Support) | Value (Active Support) |
|---|---|---|---|
| 1 | Number of nodes in the input layer | 7 | 4 |
| 2 | Number of nodes in the hidden layer | 15 | 9 |
| 3 | Number of nodes in the output layer | 1 | 1 |
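Because the network predicts a single quantity, α, its output layer has one node, and the values 15 and 9 in the BP table correspond to the hidden layer. They equal 2n + 1 for n = 7 and n = 4 inputs, a common rule of thumb, although the section does not state which rule the authors used. The sketch below builds such a 7-15-1 network with scikit-learn's `MLPRegressor`, where `solver="sgd"` approximates classic gradient-descent back-propagation; the activation, learning rate, iteration limit and synthetic data are all assumptions.

```python
import numpy as np
from sklearn.neural_network import MLPRegressor

n_inputs = 7                 # passive-support indicators
n_hidden = 2 * n_inputs + 1  # 15; with 4 active-support inputs this rule gives 9

# Synthetic placeholder data with an alpha-like target.
rng = np.random.default_rng(0)
X = rng.uniform(size=(100, n_inputs))
y = 0.07 + 0.19 * X[:, 0] + 0.01 * rng.normal(size=100)

bp = MLPRegressor(hidden_layer_sizes=(n_hidden,), activation="logistic",
                  solver="sgd", learning_rate_init=0.05, max_iter=1000, random_state=0)
bp.fit(X, y)
print([w.shape for w in bp.coefs_])  # [(7, 15), (15, 1)]: input->hidden, hidden->output
```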

3.7. Prediction Result Comparison

| Predictive Models | MAE | RMSE | R2 | |

|---|---|---|---|---|

| RF | Passive Support Training Set | 0.0019 | 0.0029 | 0.9952 |

| GSCV-RF | 0.0018 | 0.0025 | 0.9965 | |

| BP | 0.0111 | 0.0136 | 0.8945 | |

| RF | Passive Support Test Set | 0.0112 | 0.0159 | 0.8814 |

| GSCV-RF | 0.0112 | 0.0157 | 0.8845 | |

| BP | 0.0127 | 0.0182 | 0.8455 | |

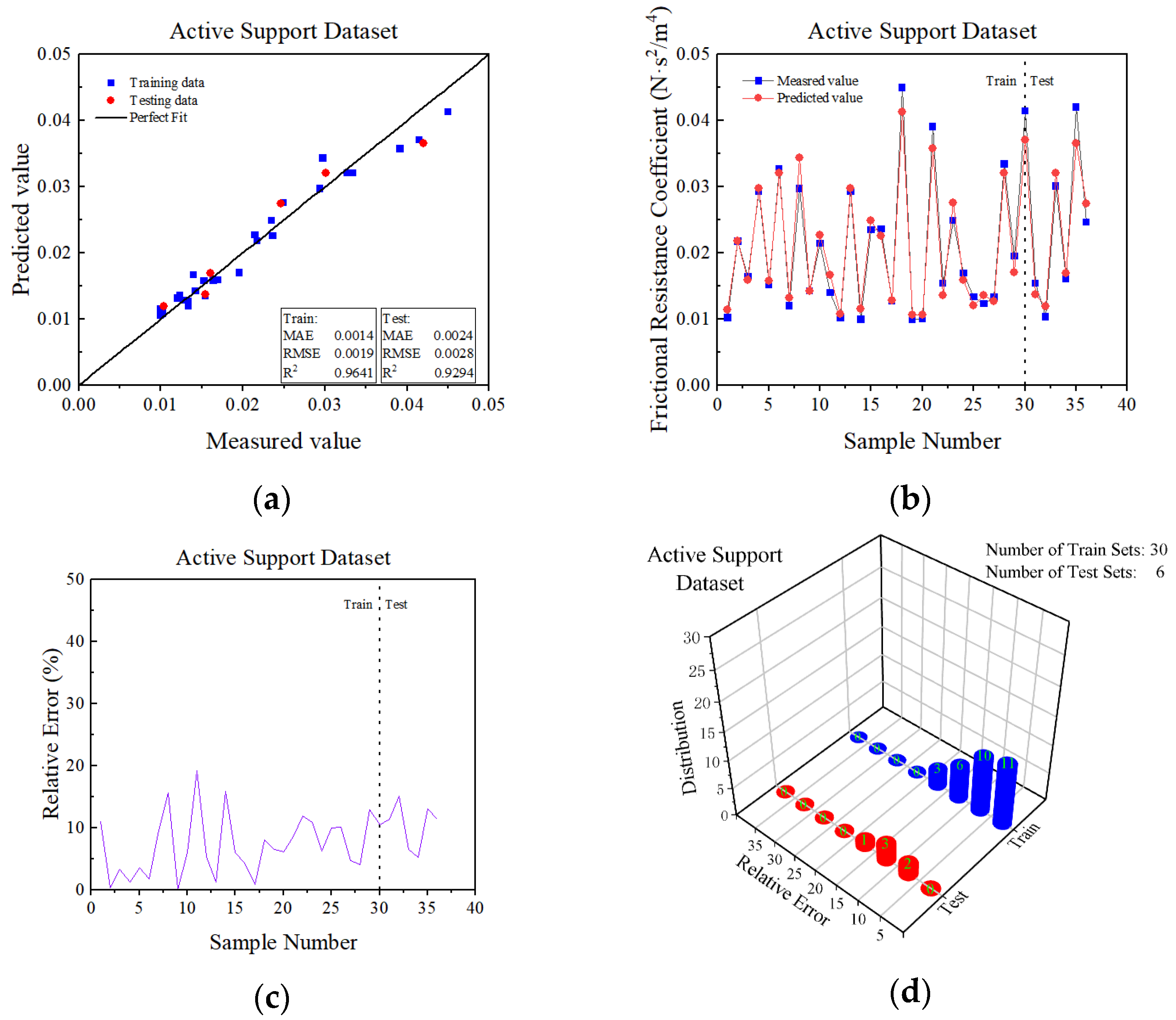

| RF | Active Support Training Set | 0.0010 | 0.0015 | 0.9775 |

| GSCV-RF | 0.0014 | 0.0019 | 0.9641 | |

| BP | 0.0038 | 0.0050 | 0.7533 | |

| RF | Active Support Test Set | 0.0027 | 0.0031 | 0.9165 |

| GSCV-RF | 0.0024 | 0.0028 | 0.9294 | |

| BP | 0.0041 | 0.0056 | 0.7235 | |
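The ranking drawn in the conclusions can be checked directly against the reported test-set rows. The short script below (the dictionary layout and the `summarize` helper are illustrative) ranks the models by R2 and computes the R2 gain and relative RMSE reduction of GSCV-RF over the untuned RF. Test sets are used because on the active support training set the untuned RF scores higher (R2 = 0.9775 versus 0.9641).

```python
# Test-set rows of the prediction result comparison table: (MAE, RMSE, R2).
RESULTS = {
    "passive support test set": {
        "RF": (0.0112, 0.0159, 0.8814),
        "GSCV-RF": (0.0112, 0.0157, 0.8845),
        "BP": (0.0127, 0.0182, 0.8455),
    },
    "active support test set": {
        "RF": (0.0027, 0.0031, 0.9165),
        "GSCV-RF": (0.0024, 0.0028, 0.9294),
        "BP": (0.0041, 0.0056, 0.7235),
    },
}


def summarize(models):
    """Rank models by R2 (descending) and compare GSCV-RF with the untuned RF."""
    ranking = sorted(models, key=lambda name: models[name][2], reverse=True)
    r2_gain = models["GSCV-RF"][2] - models["RF"][2]
    rf_rmse, tuned_rmse = models["RF"][1], models["GSCV-RF"][1]
    rmse_cut_pct = 100 * (rf_rmse - tuned_rmse) / rf_rmse
    return ranking, r2_gain, rmse_cut_pct


for dataset, models in RESULTS.items():
    ranking, gain, cut = summarize(models)
    print(f"{dataset}: {' > '.join(ranking)}; R2 {gain:+.4f}; RMSE -{cut:.1f}%")
```

For both test sets the script prints the ranking GSCV-RF > RF > BP, with R2 gains of +0.0031 (passive) and +0.0129 (active) and RMSE reductions of about 1.3% and 9.7%.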

4. Conclusions

- This paper started from the roadway support type: after the roadways were classified as passive support or active support, a passive support α prediction indicator system and an active support α prediction indicator system were developed. The study demonstrated that both indicator systems, combined with machine learning, can successfully predict α, and that the prediction accuracy depends on the algorithm employed.

- The paper introduced the RF algorithm to solve the problem of determining α. To reduce the influence of hyperparameter selection, the GSCV algorithm was also introduced, and the GSCV-RF prediction model was constructed. On the passive support training set, the model achieved MAE = 0.0018, RMSE = 0.0025 and R2 = 0.9965; on the passive support test set, MAE = 0.0112, RMSE = 0.0157 and R2 = 0.8845; on the active support training set, MAE = 0.0014, RMSE = 0.0019 and R2 = 0.9641; and on the active support test set, MAE = 0.0024, RMSE = 0.0028 and R2 = 0.9294. The small MAE and RMSE values and the large R2 values indicate that the GSCV-RF model predicts α accurately and reliably.

- A comparison of the three models showed that the GSCV-RF model performed best in α prediction, with the highest test-set R2 for both support types, followed by the RF model and then the BP model. The BP model's R2 values (0.7235 to 0.8945) were markedly lower than those of the two RF-based models.

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| No. | Parameter Name | Parameter Range | Step Size |
|---|---|---|---|
| 1 | n_estimators | [10, 150] | 1 |
| 2 | max_features | [0.1, 1.0] | 0.1 |
| 3 | max_depth | [3, 50] | 1 |

| No. | Parameter Name | Optimum Value (Passive Support) | Optimum Value (Active Support) |
|---|---|---|---|
| 1 | n_estimators | 67 | 110 |
| 2 | max_features | 0.9 | 1.0 |
| 3 | max_depth | 9 | 3 |
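The size of the search space follows from the search ranges and step sizes in the first of the two tables above. The sketch counts the candidate values and combinations; `grid_values` is a hypothetical helper, and because the fold count K is not given in this section, K = 5 is assumed.

```python
def grid_values(low, high, step):
    """Evenly spaced candidates from low to high inclusive; rounding removes float drift."""
    count = round((high - low) / step) + 1
    return [round(low + i * step, 10) for i in range(count)]


n_estimators = grid_values(10, 150, 1)     # 141 values
max_features = grid_values(0.1, 1.0, 0.1)  # 10 values
max_depth = grid_values(3, 50, 1)          # 48 values

n_combinations = len(n_estimators) * len(max_features) * len(max_depth)
K = 5  # hypothetical fold count; the section does not state K
print(n_combinations, n_combinations * K)  # 67680 338400
```

Both optimum settings lie inside the searched grid, although the active support optimum max_depth = 3 sits on the lower boundary of its range.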

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Guo, C.; Wang, X.; He, D.; Liu, J.; Li, H.; Jiang, M.; Zhang, Y. Predicting the Mine Friction Coefficient Using the GSCV-RF Hybrid Approach. Appl. Sci. 2022, 12, 12487. https://doi.org/10.3390/app122312487
