FNNS: An Effective Feedforward Neural Network Scheme with Random Weights for Processing Large-Scale Datasets

Abstract

1. Introduction

2. The Related Work

3. Our Proposed FNNS

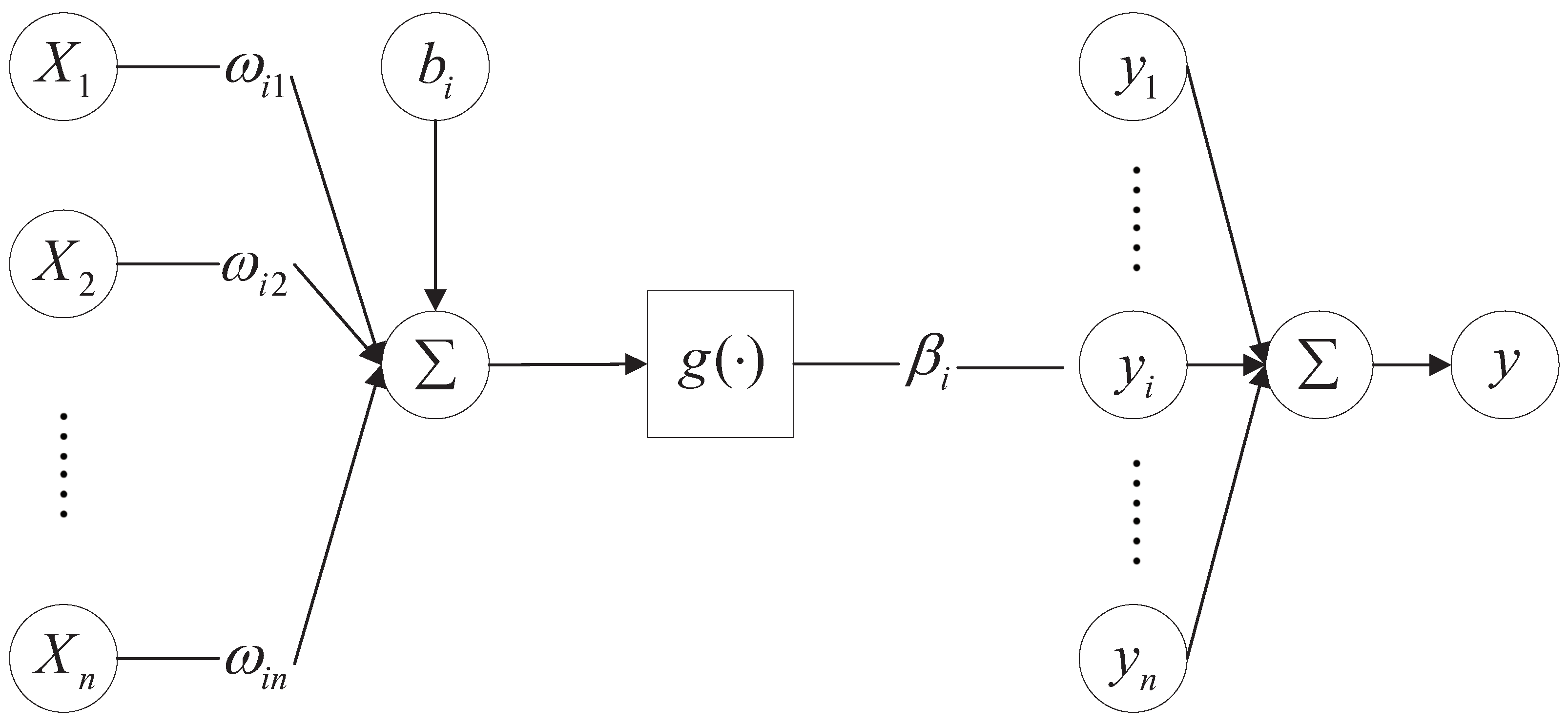

3.1. FNNRW Learning Algorithm

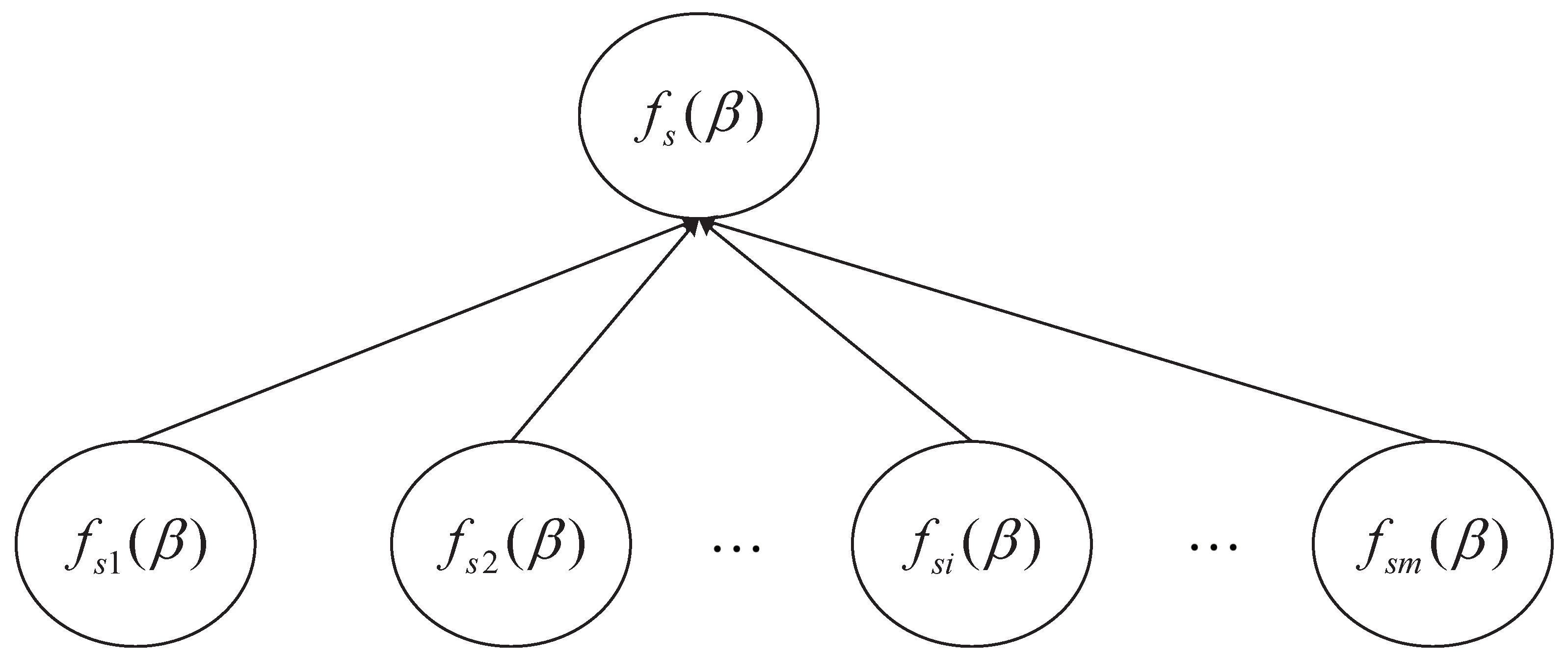

3.2. Improved FNNRW Learning Algorithm

| Algorithm 1 Improved FNNRW learning algorithm. |
|---|
| Step 1: Randomly divide the sample set into m subsets. For each subset, derive the corresponding submodel. |
| Step 2: Determine the range of input weights and biases that is optimal for the activation function. |
| Step 3: Randomly initialize the same input weights and biases within this range. |
| Step 4: Calculate the hidden-layer output matrix and randomly initialize the output matrix. |
| Step 5: Calculate the required components, such as the local gradients and the global gradients. |
| Step 6: Calculate the output weights. |
| Step 7: If the stopping condition is met or the maximum number of iterations is reached, stop; otherwise, repeat Steps 5 and 6. |
| Step 8: Train the network using the calculated weights. |
| Step 9: Return the result. |
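The steps of Algorithm 1 can be illustrated with a minimal NumPy sketch. The update formulas of Steps 2, 5, 6, and 7 are not reproduced in the listing above, so the sketch substitutes simple stand-ins that are assumptions rather than the authors' method: a symmetric uniform range `weight_range` for the input weights and biases, a sigmoid activation, plain gradient descent on a ridge-regularized least-squares loss (local gradients per subset, summed into a global gradient), and a gradient-norm stopping test. The names `train_improved_fnnrw`, `predict`, `reg`, and `tol` are hypothetical.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def train_improved_fnnrw(X, T, n_hidden=50, m=4, weight_range=1.0,
                         reg=1e-3, max_iter=500, tol=1e-6, seed=0):
    """Sketch of Algorithm 1; X is (n_samples, n_features), T is (n_samples, n_outputs)."""
    rng = np.random.default_rng(seed)
    n_samples, n_features = X.shape

    # Step 1: randomly divide the samples into m subsets.
    parts = np.array_split(rng.permutation(n_samples), m)

    # Steps 2-3: draw one set of input weights and biases from an ASSUMED
    # symmetric range and share it across all submodels.
    W = rng.uniform(-weight_range, weight_range, size=(n_features, n_hidden))
    b = rng.uniform(-weight_range, weight_range, size=n_hidden)

    # Step 4: hidden-layer output matrix per subset; random initial output weights.
    H = [sigmoid(X[idx] @ W + b) for idx in parts]
    Y = [T[idx] for idx in parts]
    beta = rng.normal(scale=0.01, size=(n_hidden, T.shape[1]))

    # ASSUMED step size: inverse of the largest curvature of the loss.
    gram = sum(h.T @ h for h in H)
    step = 1.0 / (np.linalg.eigvalsh(gram)[-1] + reg)

    history = []
    for _ in range(max_iter):
        residuals = [h @ beta - y for h, y in zip(H, Y)]
        loss = 0.5 * sum(np.sum(r ** 2) for r in residuals)
        loss += 0.5 * reg * np.sum(beta ** 2)
        history.append(float(loss))

        # Step 5: local gradient on each subset, summed into a global gradient.
        local_grads = [h.T @ r for h, r in zip(H, residuals)]
        global_grad = sum(local_grads) + reg * beta

        # Step 7: stop when the global gradient is small (ASSUMED criterion).
        if np.linalg.norm(global_grad) < tol:
            break

        # Step 6: update the output weights (ASSUMED gradient-descent rule).
        beta = beta - step * global_grad

    # Steps 8-9: return the shared random weights and the learned output weights.
    return W, b, beta, history


def predict(X, W, b, beta):
    return sigmoid(X @ W + b) @ beta
```

Because every subset shares the same `W` and `b`, the local gradients all refer to the same output weights and can simply be summed. This is what would let the subsets be processed independently in the sketch; a real implementation would follow the paper's own formulas for the gradients and the output-weight update.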

4. Performance Analysis

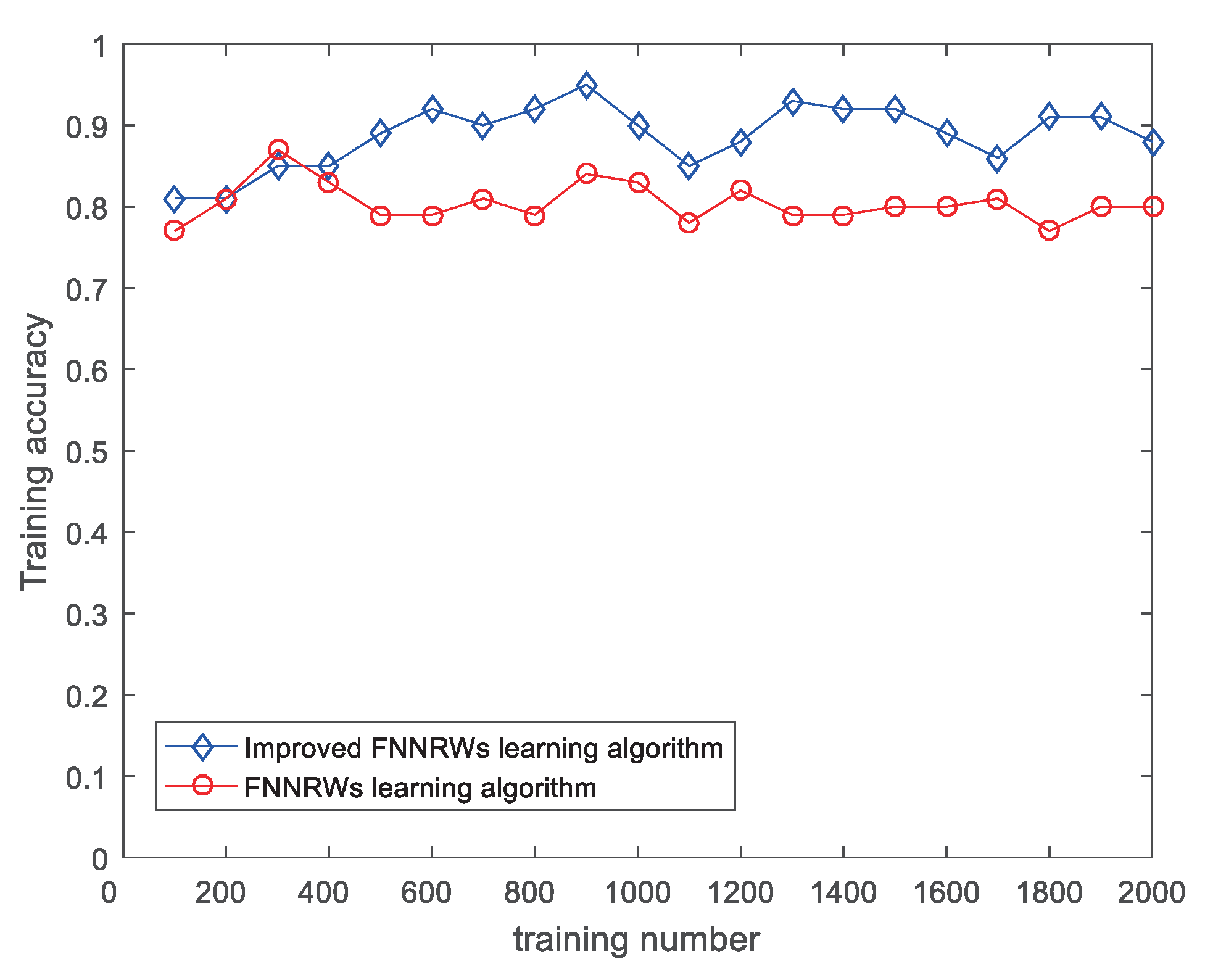

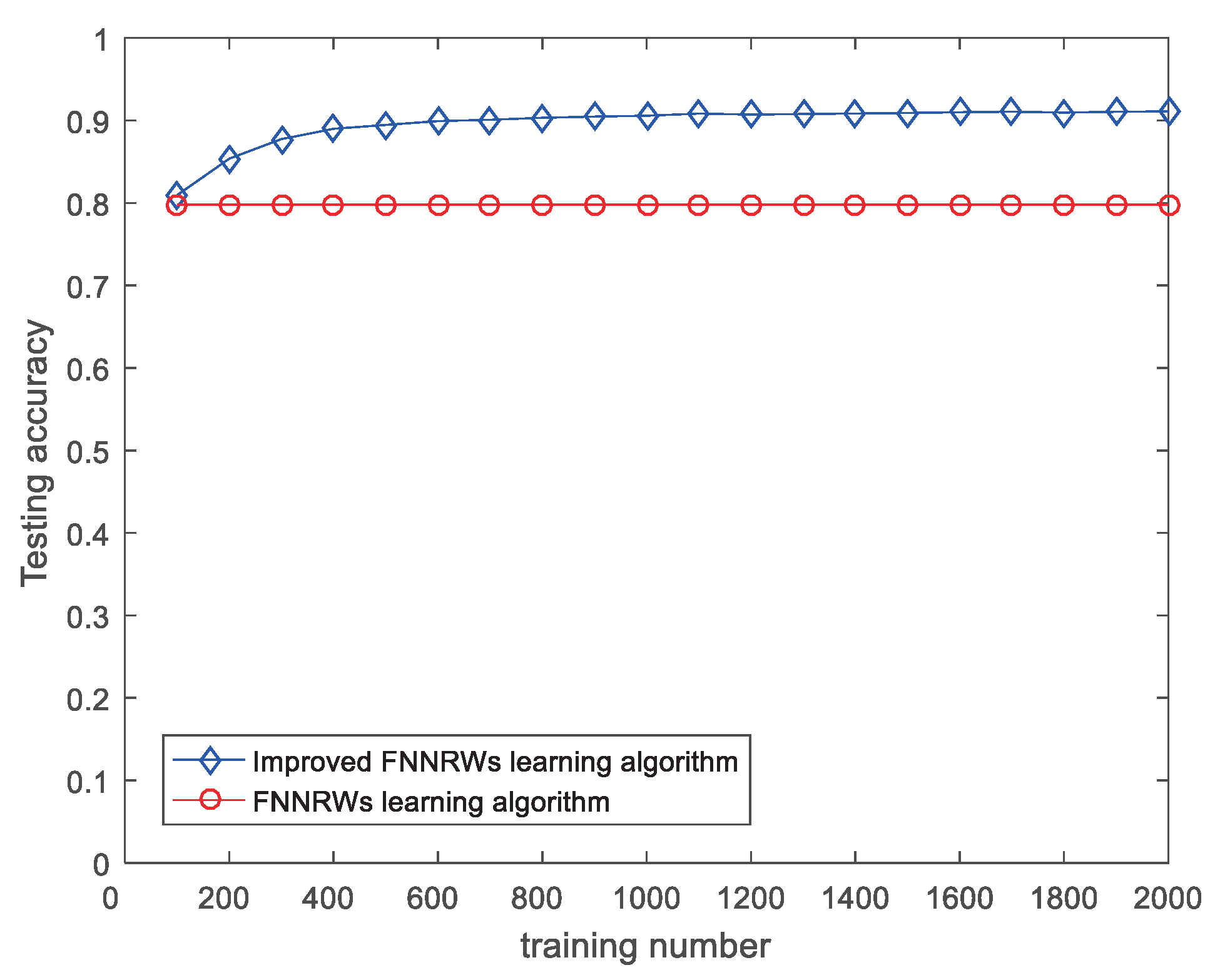

4.1. Results and Discussion

4.2. Engineering Applications

5. Conclusions and the Future Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Nodes | FNNRW Training Accuracy | FNNRW Testing Accuracy | Improved FNNRW Training Accuracy | Improved FNNRW Testing Accuracy |
|---|---|---|---|---|
| 400 | 0.8300 | 0.7980 | 0.8200 | 0.8500 |
| 800 | 0.8427 | 0.7786 | 0.8786 | 0.8700 |
| 1200 | 0.8583 | 0.7977 | 0.9011 | 0.8935 |
| 1600 | 0.8600 | 0.8034 | 0.9300 | 0.9084 |
| 2000 | 0.8621 | 0.8022 | 0.9406 | 0.9099 |

| Nodes | FNNRW Training Accuracy | FNNRW Testing Accuracy | Improved FNNRW Training Accuracy | Improved FNNRW Testing Accuracy |
|---|---|---|---|---|
| 400 | 0.8058 | 0.7323 | 0.8129 | 0.7572 |
| 800 | 0.8183 | 0.7885 | 0.8238 | 0.7704 |
| 1200 | 0.8595 | 0.8007 | 0.8693 | 0.8331 |
| 1600 | 0.8600 | 0.8042 | 0.9021 | 0.8947 |
| 2000 | 0.8679 | 0.8145 | 0.9130 | 0.9249 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Zhang, Z.; Feng, F.; Huang, T. FNNS: An Effective Feedforward Neural Network Scheme with Random Weights for Processing Large-Scale Datasets. Appl. Sci. 2022, 12, 12478. https://doi.org/10.3390/app122312478
