1. Introduction

Facial Expression Recognition (FER) has a number of different applications, including service robots [1], driver fatigue monitoring [2], mood prediction [3], sentiment analysis in customer reviews [4], and many others. For humans, recognizing and interpreting facial expressions comes naturally and is a basic form of communication. In some cases, facial expressions are the only means of communication, such as in newborns and unconscious patients in the ICU [5]. However, facial expression recognition is still challenging for computer systems due to factors such as camera view angles, occlusions, image noise, and changes in scene illumination. All these issues must be accounted for when developing a robust system for a given application. In the past decade, much work has been done to recognize a group of expressions, which are often related to prototypical facial expressions associated with basic emotions [6]. A FER system usually operates in three stages: in the first stage, a face region is located, then facial features are extracted in the second stage, and finally, an expression is classified in the third stage.

FER systems can be categorized as either frame-based or sequence-based, depending on whether a static image or a video sequence is used to extract facial features for classification [7]. In frame-based approaches, only spatial information, such as the appearance and geometry of the facial image, can be used to describe the expression. In contrast, the sequence-based approach can extract spatial and temporal features to describe the evolution of expressions during a video sequence. Frame-based approaches are often preferred for their simplicity and because they make no assumption about how the expression evolves (an assumption required by many sequence-based approaches). Therefore, much attention has been given to developing FER systems based on static images. In this category, we can identify two main trends in the development of FER systems. On the one hand, there are FER systems based on the extraction and classification of handcrafted features, and on the other, there are approaches based on deep learning. Handcrafted techniques rely on domain-specific knowledge about the human face, such as facial muscle deformations and the movement of facial components (e.g., raising the eyes and opening or closing the mouth) during emotion elicitation.

Within the handcrafted techniques, the geometrical features were developed to describe the shape and location of facial components [8]. Some work was also undertaken to reconstruct Action Units and interpret their combinations as facial expressions [9]. Geometric-based FER systems were difficult to develop because they required the accurate labeling of Action Units [6] and landmarks, which is time-consuming and tedious. During this time, appearance-based FER systems were being developed, giving rise to local descriptors for facial expression representation. Local descriptors were used to extract texture information such as edges, corners, and spots that make up facial expressions and achieved the same or better results while being less tedious to develop than the geometric features.

Recently, deep neural networks have been studied for facial expression recognition [10, 11]. Unlike traditional techniques, deep learning incorporates little prior knowledge about facial structure and appearance. Deep learning systems automatically detect and extract facial features through a series of layers, such as convolution, pooling, and fully-connected layers. This field is attracting more and more attention due to the improved recognition capacity offered by deep neural networks for static and sequence-based recognition. In particular, Convolutional Neural Networks (CNNs) are the basic models for extracting discriminative spatial features from face images, while Recurrent Neural Networks (RNNs) and Long Short-Term Memory (LSTM) networks are suitable for characterizing the spatio-temporal features of sequences [12].

In the literature, there have been a few attempts to compare the different methods based on local descriptors and traditional classifiers for facial expression recognition. In 2018, Turan and Lam [13] studied 27 local descriptors under different conditions, such as varying image resolutions and the number of sub-regions. Two classifiers were used to recognize different numbers of expressions on four facial expression databases. Their results were comparable to the state-of-the-art deep learning approaches on well-known datasets. Slimani et al. [14] studied the independent performance of 46 LBP variants for facial expression recognition. A single classification technique (i.e., SVM) was used to classify seven expressions. The study showed that several local descriptors, initially proposed for other classification problems, outperformed the state of the art on four databases.

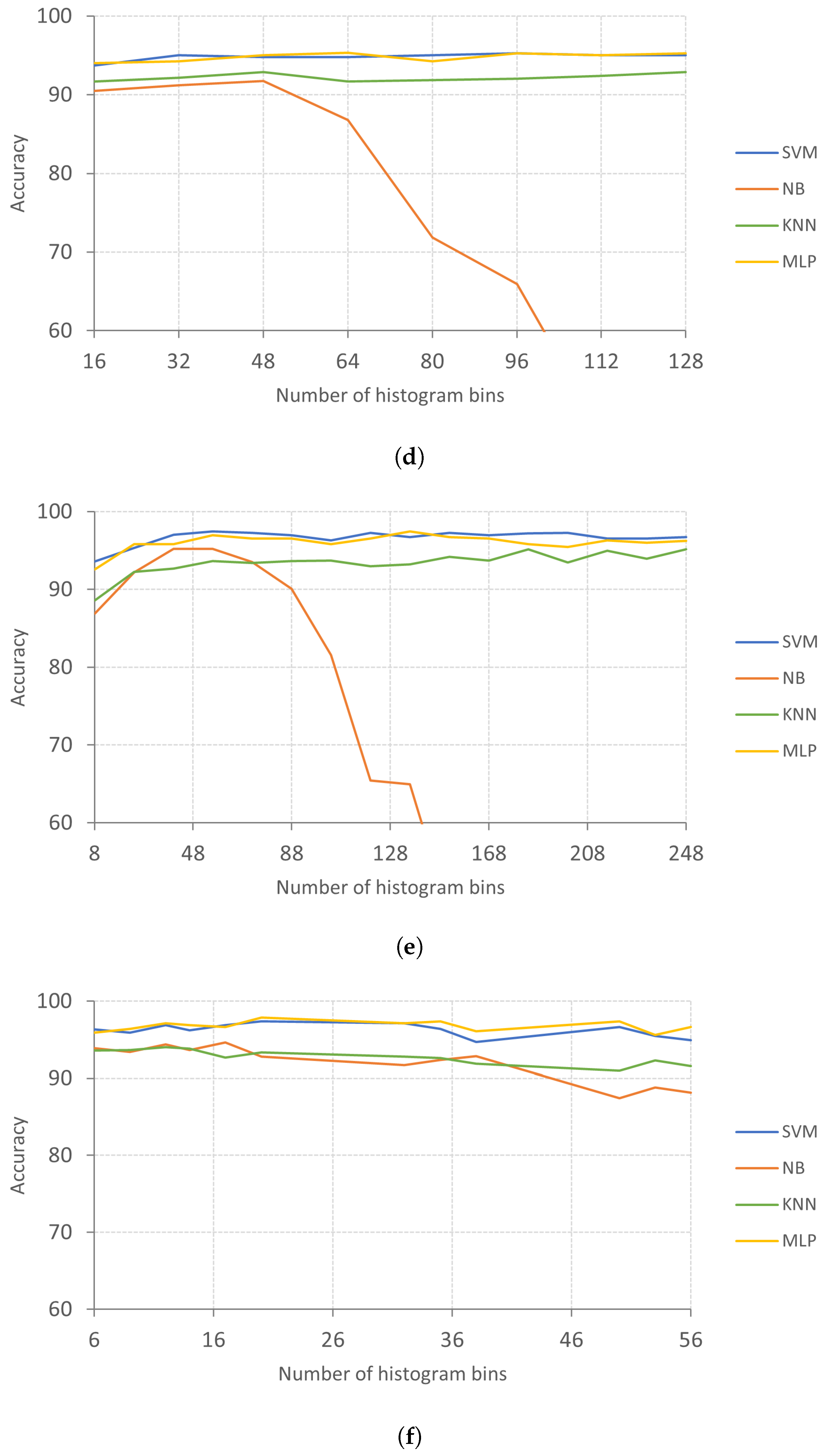

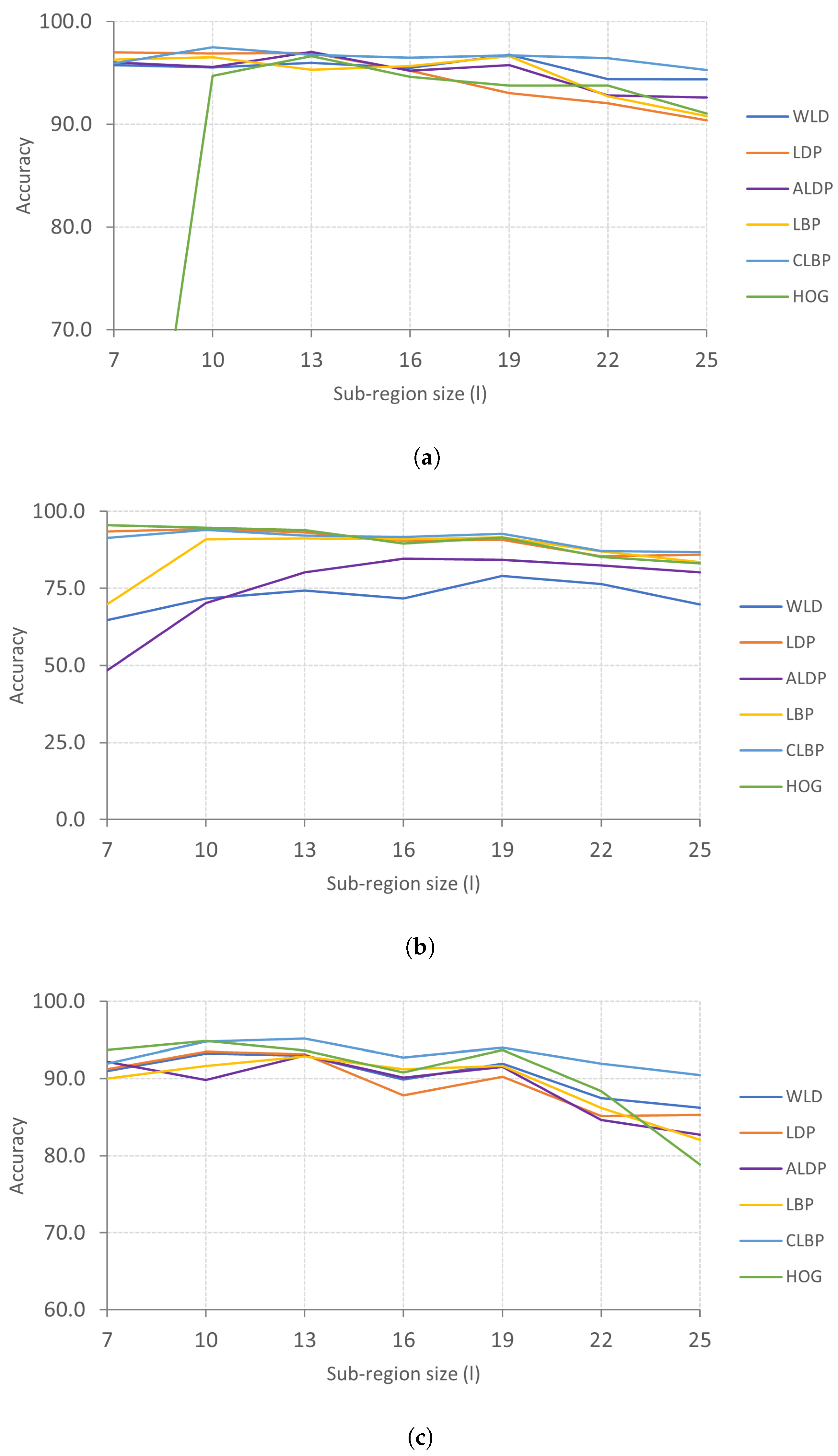

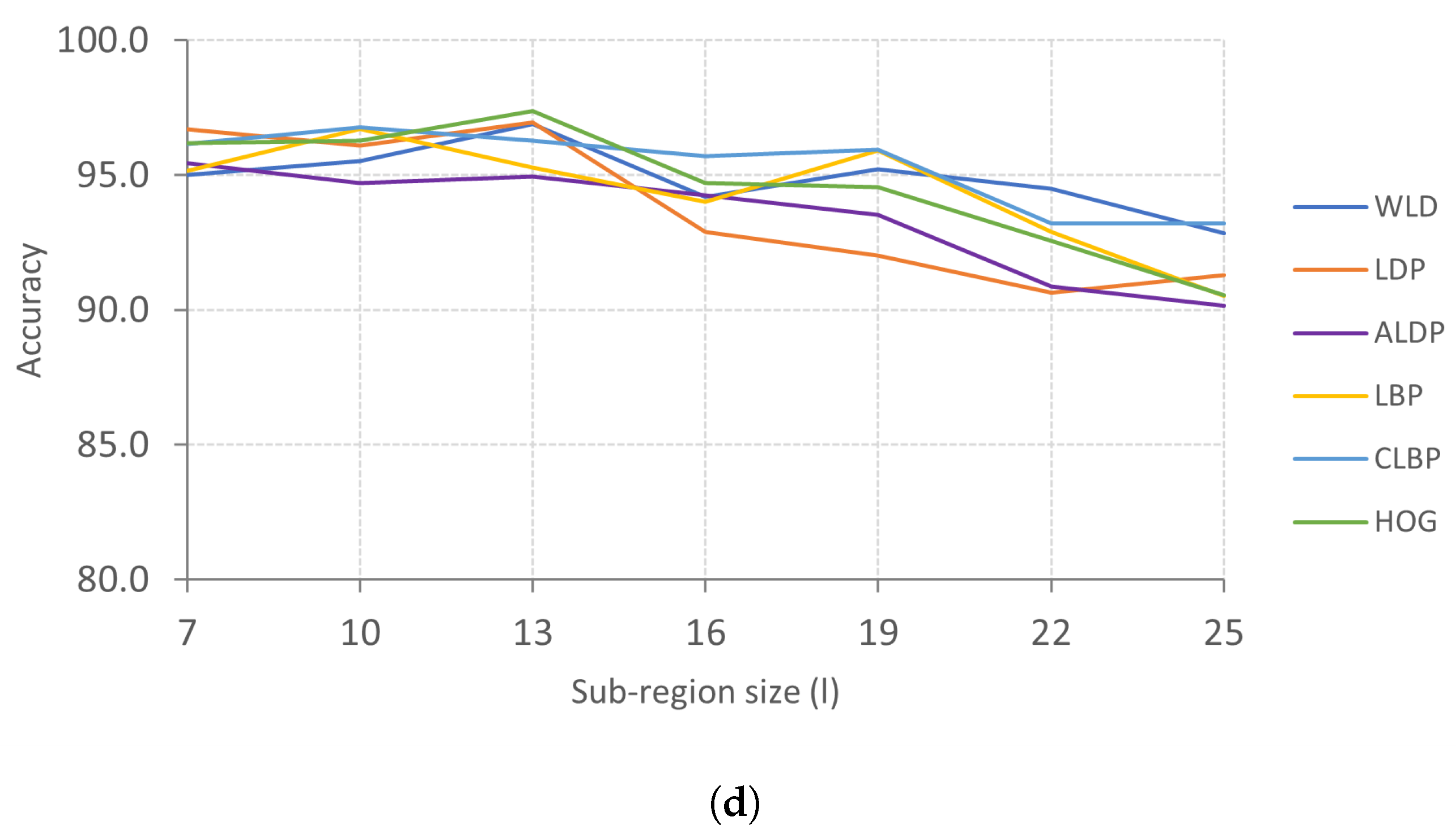

This study investigates the performances of six local descriptors and four machine-learning techniques for automatic facial expression recognition. The novelty of this approach is in using a variable sub-region size and a variable number of histogram bins to optimize the performance of histogram-based local descriptors. Face registration and feature vector normalization also contributed significantly to the results obtained in this work compared to previous works. Many experiments were conducted under different settings, such as varied extraction parameters, different numbers of expressions, and two datasets, to discover the best combinations of local descriptors and classifiers for facial expression recognition. The rest of this paper is organized as follows:

Section 2 reviews local descriptors and classifiers used in facial expression recognition,

Section 3 describes the materials and methods,

Section 4 describes the datasets,

Section 5 presents the experimental results and discussion, and

Section 6 concludes the paper.

3. An Approach for Optimized Facial Expression Recognition

A simple and effective method for facial expression recognition based on local descriptors and conventional classifiers was defined by Slimani et al. [14]. The method involves dividing the face region into several non-overlapping regions and extracting local descriptors from each sub-region. The histogram of each sub-region is calculated separately and concatenated to form a single feature vector for classification. The current work extends Slimani et al.’s [14] approach by optimizing the feature extraction process.

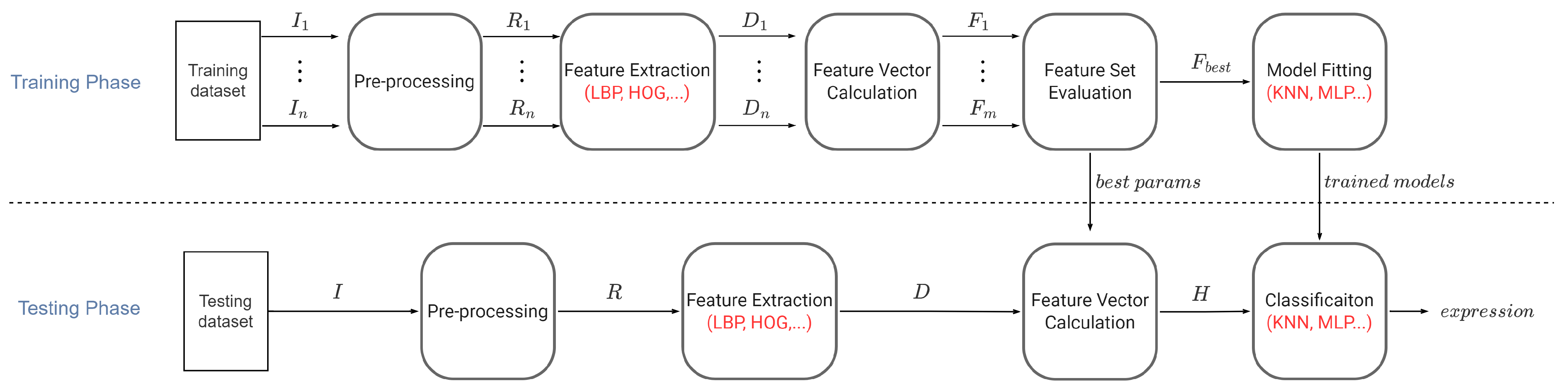

Figure 2 summarizes the proposed approach for optimized facial expression recognition, consisting of two phases: a training phase and a testing phase. In the training phase, images are pre-processed using face detection and registration. Next, a local descriptor is applied to the registered faces to extract facial features. Subsequently, feature vectors are generated by dividing a face region into several sub-regions and calculating the histograms of each sub-region. The feature vectors are normalized based on the type of local descriptor used (see Section 3.2). To optimize the process of feature vector calculation, the extraction parameters (i.e., the sub-region size and the number of histogram bins) are varied, producing several feature sets. Then, the best extraction parameter settings are found by comparing the classification performances produced by each feature set. Finally, the best feature set and corresponding expressions are used to train a machine-learning model.

In the testing phase, images are processed, and the feature vectors are generated based on the best extraction parameter values obtained from the training phase. After that, the feature vectors are classified as facial expressions using the trained classifier. Additionally, the classifier’s performance is evaluated by 10-fold cross-validation. The novelty of this approach is in using a variable sub-region size and a variable number of histogram bins to optimize the performance of each local descriptor.

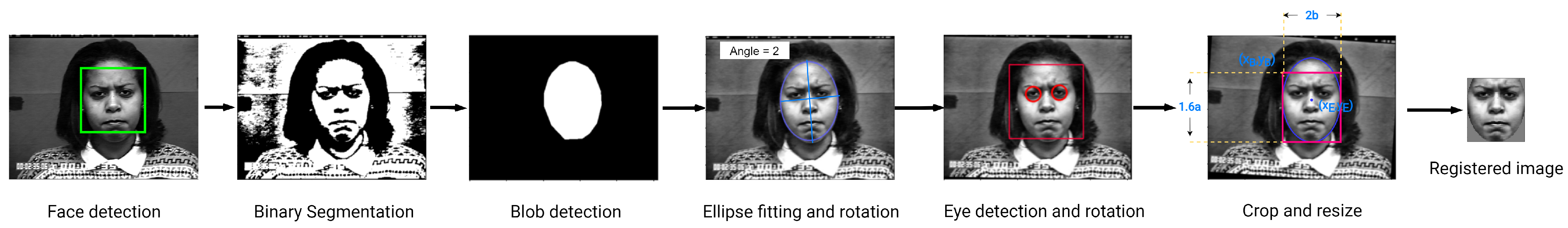

3.1. Face Detection and Registration

Faces were detected using a frontal face detector implemented by the OpenCV library for the Python programming language (OpenCV is available at https://pypi.org/project/opencv-contrib-python/, accessed on 24 July 2021). The faces were then registered, giving them a predefined pose, shape, and size. First, an input image was converted to gray-scale, and then Contrast Limited Adaptive Histogram Equalization (CLAHE) was applied using Equation (13). Next, a face blob was obtained by performing a thresholding operation followed by filtration techniques. An ellipse-fitting algorithm (also implemented by the Python OpenCV library) then estimated the shape and angle of inclination of the face blob, and the ellipse’s angle was used to rotate the face to a vertical position. Because this process is not always perfect, the eye locations were used to rotate the image a second time. Finally, an elliptical crop followed by a rectangular crop was employed to produce an image where the eyes are in a predefined position relative to the sides of the image. Figure 3 illustrates face detection and registration.

where

is defined as

and

is defined as

3.2. Feature Extraction and Feature Vector Calculation

In this study, six local descriptors were investigated: Local Binary Patterns (LBP), Compound Local Binary Patterns (CLBP), Local Directional Patterns (LDP), Angled Local Directional Patterns (ALDP), Weber’s Local Descriptor (WLD), and Histogram of Oriented Gradients (HOG). Feature extraction and feature vector calculation are key stages of this FER method. Although the two processes were portrayed separately in the preamble of this section, they are closely interlinked. The method used in this study divides the face image into several equally sized non-overlapping sub-regions, and a local descriptor is applied to each sub-region, producing several histograms. The sub-region histograms are calculated separately and normalized such that the frequencies sum to one (this rule is applied to all the considered descriptors except the HOG descriptor, where the blocks are normalized in groups using L2-Hys [26]). The normalized histograms are then concatenated to form the final 1D feature vector. Suppose

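$R_j$ is a $w \times h$ matrix of intensities representing the $j$-th sub-region in the image, and the function $f(x, y)$ computes the descriptor at a position $(x, y)$ in $R_j$; then the histogram associated with this sub-region is defined as

$$H_j(k) = \sum_{(x, y) \in R_j} \left[ \left\lfloor \frac{b \, f(x, y)}{n} \right\rfloor = k \right], \quad k = 0, 1, \ldots, b - 1,$$

where $n$ is the number of possible descriptor values, $b$ is the number of histogram bins, and $[\cdot]$ equals one when its argument is true and zero otherwise.

The final feature vector is given by

$$F = [\hat{H}_1, \hat{H}_2, \ldots, \hat{H}_m],$$

where $\hat{H}_j$ denotes the normalized histogram of the $j$-th sub-region and $m$ is the number of sub-regions.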
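The feature vector calculation described above can be sketched in NumPy as follows. The sketch uses the basic 3×3 LBP operator as the example descriptor; the function names `lbp_codes` and `region_histogram_features`, the uniform binning of descriptor values, and the parameter values in the usage lines are assumptions made for illustration rather than the settings used in this study.

```python
import numpy as np


def lbp_codes(gray):
    """Basic 3x3 LBP: one 8-bit code for every interior pixel."""
    g = np.asarray(gray, dtype=np.int32)
    center = g[1:-1, 1:-1]
    # Eight neighbours, visited clockwise starting at the top-left pixel.
    offsets = [(-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1)]
    codes = np.zeros_like(center)
    for bit, (dy, dx) in enumerate(offsets):
        neighbour = g[1 + dy:g.shape[0] - 1 + dy, 1 + dx:g.shape[1] - 1 + dx]
        codes |= (neighbour >= center).astype(np.int32) << bit
    return codes


def region_histogram_features(codes, region_size, n_bins, n_values=256):
    """Concatenate normalized histograms of non-overlapping sub-regions."""
    region_h, region_w = region_size
    rows, cols = codes.shape[0] // region_h, codes.shape[1] // region_w
    histograms = []
    for r in range(rows):
        for c in range(cols):
            block = codes[r * region_h:(r + 1) * region_h,
                          c * region_w:(c + 1) * region_w]
            # n_values descriptor values are grouped uniformly into n_bins bins.
            hist, _ = np.histogram(block, bins=n_bins, range=(0, n_values))
            histograms.append(hist / hist.sum())  # frequencies sum to one
    return np.concatenate(histograms)


# Usage on a random stand-in image (34x34 pixels gives 32x32 LBP codes).
rng = np.random.default_rng(0)
image = rng.integers(0, 256, size=(34, 34))
codes = lbp_codes(image)
features = region_histogram_features(codes, region_size=(8, 8), n_bins=16)
```

Varying `region_size` and `n_bins` changes both the length of the feature vector and how much spatial and descriptor detail it retains, which is the trade-off the extraction parameters control.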

3.3. Feature Set Evaluation

In this step, a model is trained with different feature sets, and the best feature set is selected based on its classification performance. The performances were ranked using the average recall during 10-fold cross-validation. Then, the best feature set and the corresponding best parameter setting are identified. Algorithm 1 defines the process of feature set evaluation in more detail.

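Algorithm 1 Feature Set Evaluation

Inputs: feature sets $F_1, \ldots, F_k$, the corresponding extraction parameter settings $P_1, \ldots, P_k$, and the expression labels $y$

Outputs: the best feature set $F^*$ and the best parameter setting $P^*$

- 1: $F^* \leftarrow F_1$ // Initialize the best feature set
- 2: $P^* \leftarrow P_1$ // Initialize the best parameters
- 3: $M \leftarrow$ NewModel() // Construct a new model e.g., SVM, KNN etc.
- 4: $s^* \leftarrow$ CrossValidate($M$, $F_1$, $y$) // Obtain a cross-validation score
- 5: for $i$ in $2, \ldots, k$ do // Train new models with the remaining feature sets
  - 6: $M \leftarrow$ NewModel()
  - 7: $s \leftarrow$ CrossValidate($M$, $F_i$, $y$)
  - 8: if $s > s^*$ then // Find the maximum score
    - 9: $s^* \leftarrow s$
    - 10: $F^* \leftarrow F_i$
    - 11: $P^* \leftarrow P_i$
  - 12: end if
- 13: end for
- 14: return $F^*$, $P^*$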
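A minimal scikit-learn sketch of this feature set evaluation is given below. It assumes that "average recall" means macro-averaged recall (`scoring="recall_macro"`) and that stratified 10-fold splitting is acceptable. The function name `evaluate_feature_sets`, the `make_model` factory, the synthetic data, and the parameter dictionaries are hypothetical stand-ins, not the study's code or settings.

```python
import numpy as np
from sklearn.model_selection import cross_val_score
from sklearn.svm import SVC


def evaluate_feature_sets(feature_sets, param_settings, labels, make_model, folds=10):
    """Return the feature set, parameter setting, and score with the best mean recall."""

    def score(features):
        # A fresh model per feature set, scored by macro-averaged recall over the folds.
        return cross_val_score(make_model(), features, labels,
                               cv=folds, scoring="recall_macro").mean()

    best_features, best_params = feature_sets[0], param_settings[0]
    best_score = score(best_features)
    for features, params in zip(feature_sets[1:], param_settings[1:]):
        current = score(features)
        if current > best_score:  # keep the maximum score seen so far
            best_score, best_features, best_params = current, features, params
    return best_features, best_params, best_score


# Synthetic stand-in data: three classes, one uninformative and one informative set.
rng = np.random.default_rng(0)
labels = np.repeat([0, 1, 2], 20)
noise_set = rng.normal(size=(60, 4))
informative_set = labels[:, None] * 3.0 + rng.normal(size=(60, 4))
settings = [
    {"region_size": (8, 8), "n_bins": 16},    # hypothetical parameter setting
    {"region_size": (16, 16), "n_bins": 32},  # hypothetical parameter setting
]
_, best_params, best_score = evaluate_feature_sets(
    [noise_set, informative_set], settings, labels, lambda: SVC(kernel="linear")
)
```

The sketch also returns the best score, which Algorithm 1 does not list as an output, because it is convenient when comparing descriptors and classifiers.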

3.4. Facial Expression Classification

This research is focused on the recognition of prototypic facial expressions of emotion from facial images. The classification stage aims at training a machine learning model using labeled feature vectors extracted from the facial images. Once trained, the model can make predictions on new data. The current work considers four classification methods: Support Vector Machines (SVM), K-Nearest Neighbors (KNN), Naive Bayes (NB) (this work used scikit-learn’s [29] implementation of the Gaussian Naive Bayes classifier), and Multi-layer Perceptron (MLP). Table 1 gives the hyper-parameter settings used to train each classifier.
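As a rough illustration, the four classifiers can be instantiated and compared with scikit-learn as sketched below. The hyper-parameters shown are library defaults (plus `max_iter` and `random_state` for the MLP) and are assumptions, not the Table 1 settings. The features are synthetic stand-ins for real descriptor histograms, and macro-averaged recall is assumed as the score.

```python
import numpy as np
from sklearn.model_selection import cross_val_score
from sklearn.naive_bayes import GaussianNB
from sklearn.neighbors import KNeighborsClassifier
from sklearn.neural_network import MLPClassifier
from sklearn.svm import SVC

# Default settings are placeholders; they are not the hyper-parameters of Table 1.
classifiers = {
    "SVM": SVC(),
    "KNN": KNeighborsClassifier(),
    "NB": GaussianNB(),
    "MLP": MLPClassifier(max_iter=2000, random_state=0),
}

# Synthetic, well-separated three-class data standing in for feature vectors.
rng = np.random.default_rng(0)
labels = np.repeat([0, 1, 2], 20)
features = labels[:, None] * 3.0 + rng.normal(size=(60, 4))

# Mean macro-averaged recall over 10 stratified folds for each classifier.
recalls = {
    name: cross_val_score(model, features, labels, cv=10, scoring="recall_macro").mean()
    for name, model in classifiers.items()
}
```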

4. Datasets

This study conducted experiments using two well-known FER datasets: the Extended Cohn–Kanade (CK+) dataset and the Radboud Faces Database (RFD).

CK+ gathers facial expression images of subjects from various ethnicities, ages, and genders. This database consists of 593 image sequences from 123 subjects. The database contains posed and non-posed images taken under lab-controlled conditions. To form the first dataset for our study, we followed the guidelines in [35] by selecting our samples as follows: the last images in the sequences for anger, disgust, and happiness; the last and fourth images of the first 68 sequences for surprise; and the last and fourth-from-last images of the sequences for fear and sadness. A second dataset was created by adding images for the neutral expression. The number of images collected amounted to 347 and 407 for the first and the second datasets, respectively.

The Radboud Faces Database contains pictures of 67 subjects (adults and children) displaying eight facial expressions of emotion. The subjects are of Caucasian ethnicity, and the images are taken in different gaze directions and head orientations [36]. Three subsets were created: the first subset consisted of 402 images labeled with six expressions (anger, disgust, fear, happiness, sadness, and surprise); the second subset was formed by augmenting the first subset with 67 images labeled as contempt; and the third subset was formed by adding 67 images labeled as neutral to the second subset. The total number of image samples was 536. Table 2 summarizes the number of samples and expressions in each dataset.

6. Conclusions

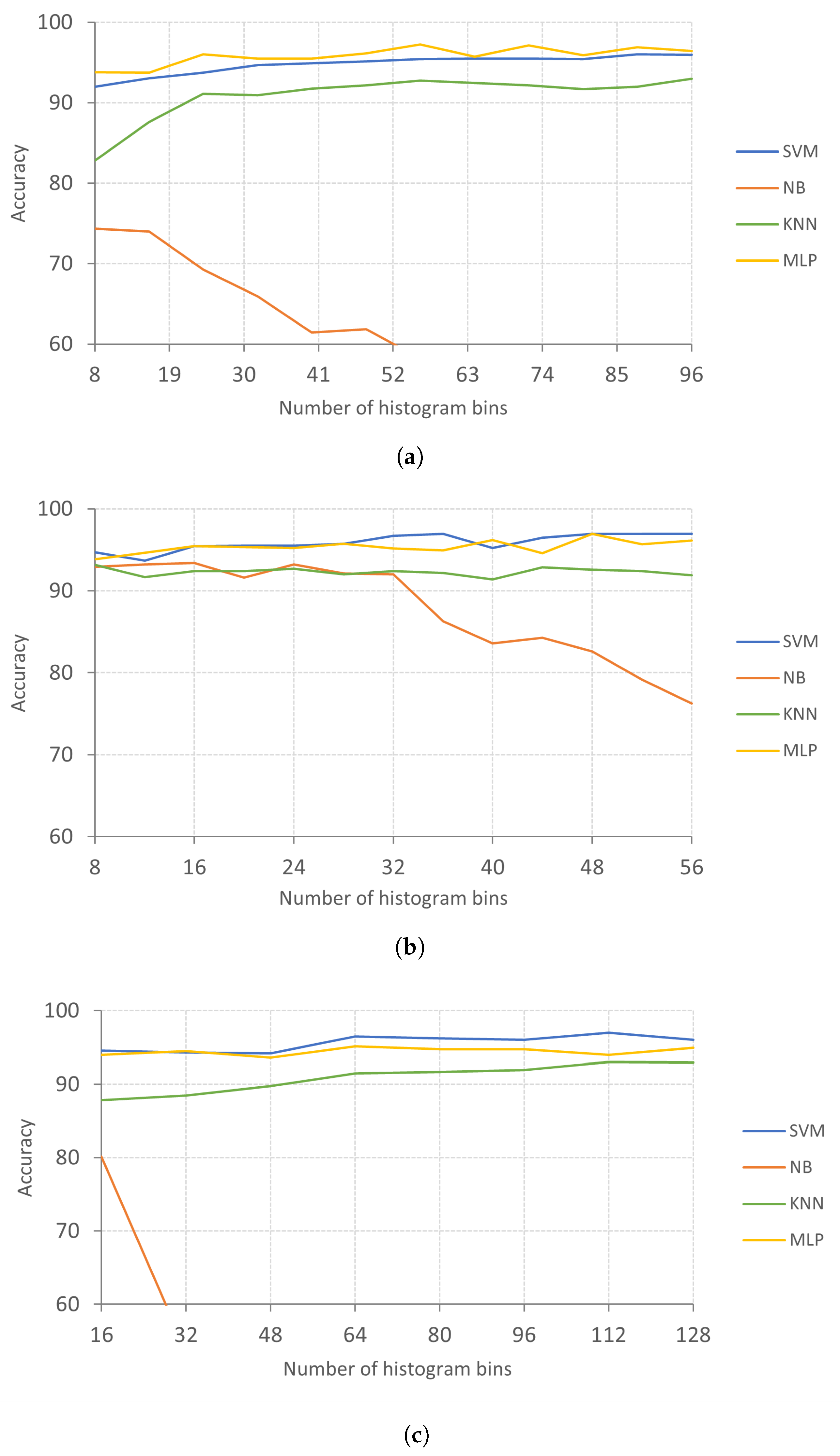

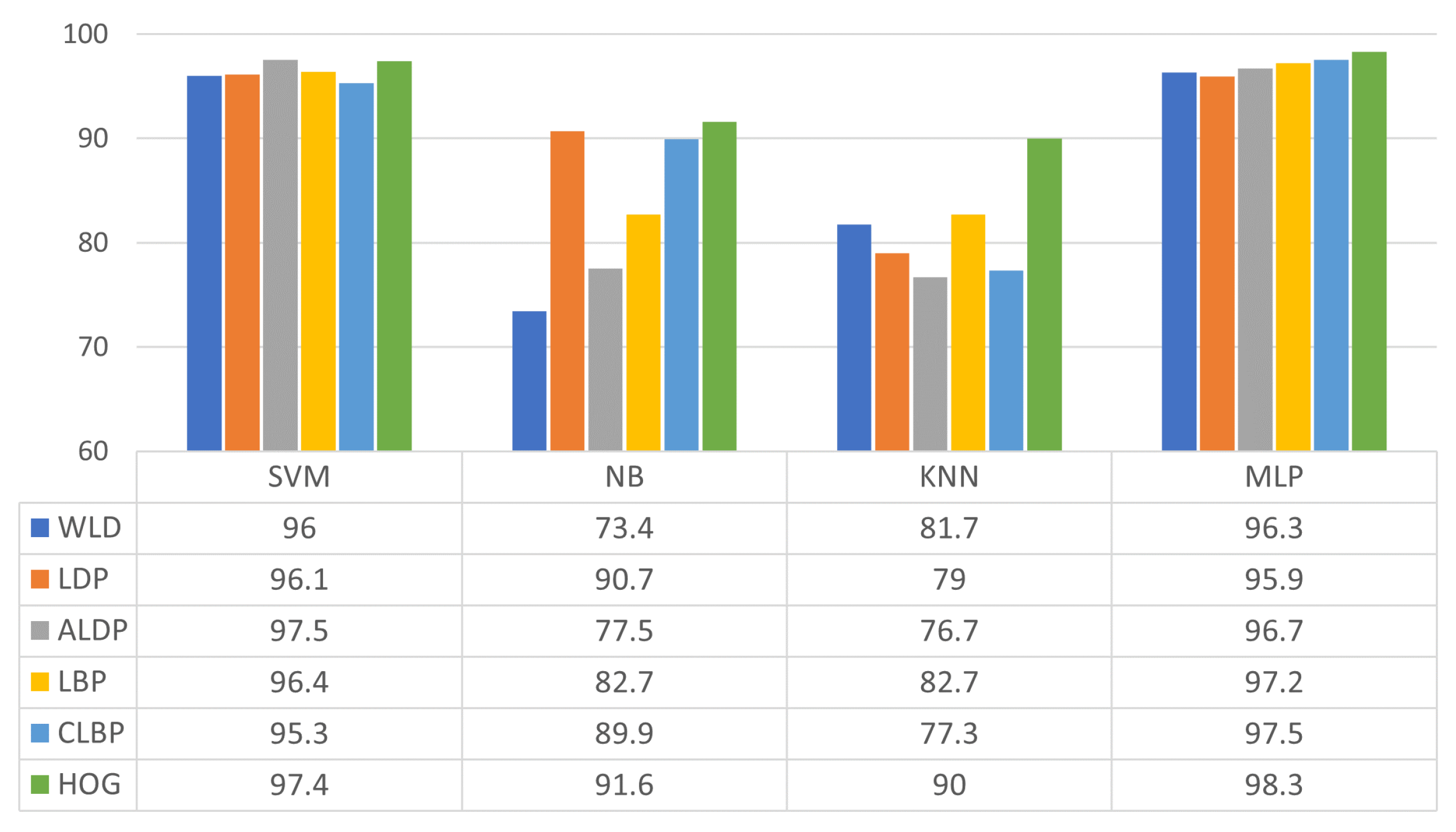

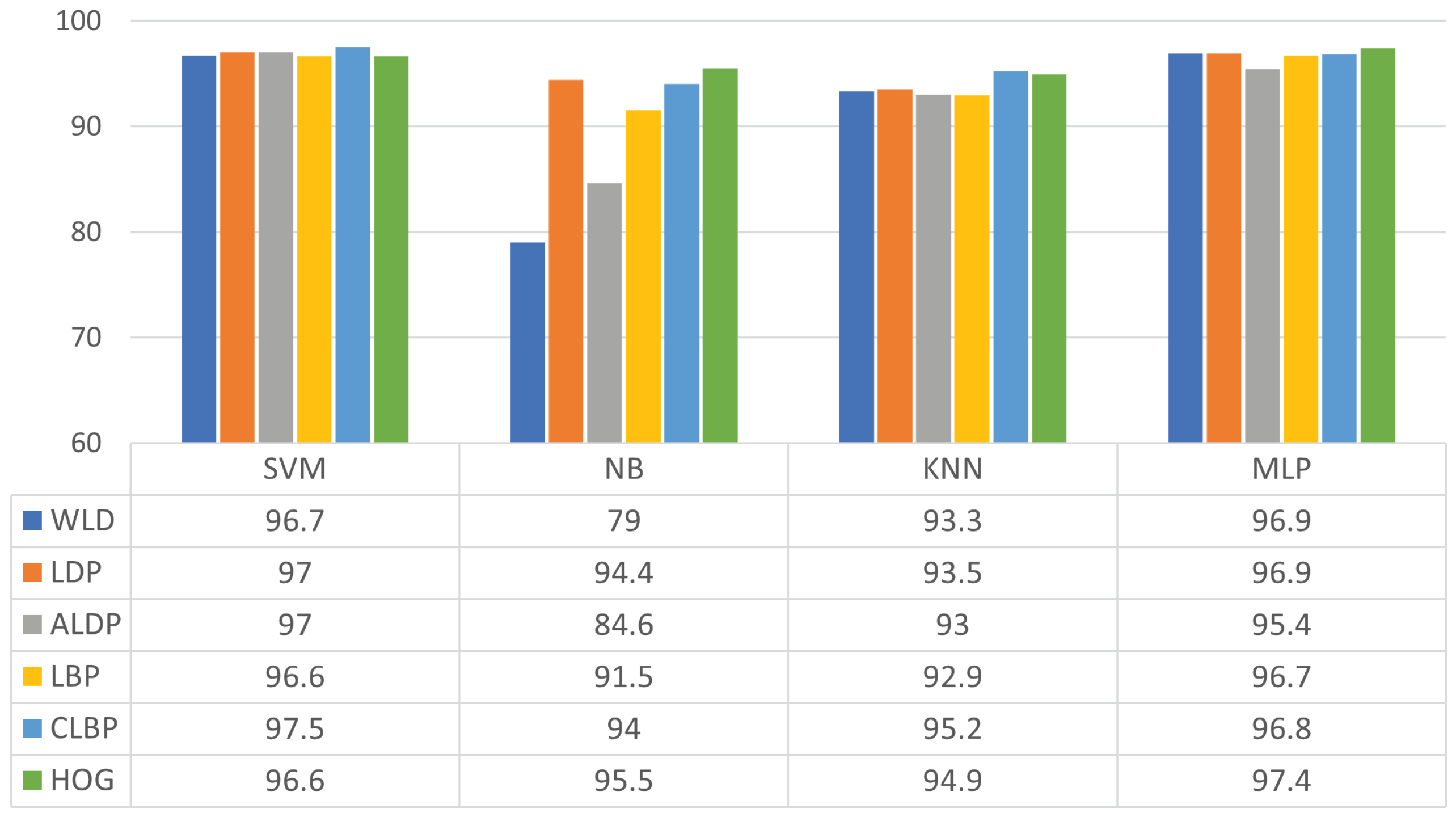

This study evaluated different combinations of local descriptors and classifiers for facial expression recognition. The experiments comprehensively evaluated six descriptors and four classifiers on two well-known datasets, CK+ and RFD. Notably, an appropriate choice of extraction parameters improved the performance of several descriptors. HOG stood out as the descriptor that achieved the most consistent performance across all the datasets. The computational costs of each classifier and descriptor were also studied, and we found that the NB and MLP classifiers provided the fastest predictions. However, MLP can be time-consuming to train compared to the other classifiers. In terms of recognition performance, SVM and MLP achieved the best results.

Out of the six considered descriptors, HOG and ALDP are among the most promising, while SVM and MLP rank at the top of the considered classifiers. We also found that SVM was faster to train and required less model tuning than MLP. On the other hand, MLP offers notably faster prediction, which could be an advantage in real-time applications.