Virtual Humans in Museums and Cultural Heritage Sites

Abstract

1. Introduction

2. Methods and Context of the Study

2.1. Related Work

2.2. Research Questions

- (i) What types and roles of virtual agents and avatars are used in the cultural heritage sector, and how have they evolved over time?

- (ii) What are the trends in the use of technologies such as VR and AR, and how has their use evolved over time?

- (iii) What are the trends in the use of virtual agents and avatars with respect to onsite versus online applications, and what are the characteristics of each?

- (iv) What conclusions can be drawn for future research and practice regarding the use of digital human agents and avatars in cultural heritage?

2.3. Search Strategy

3. Results

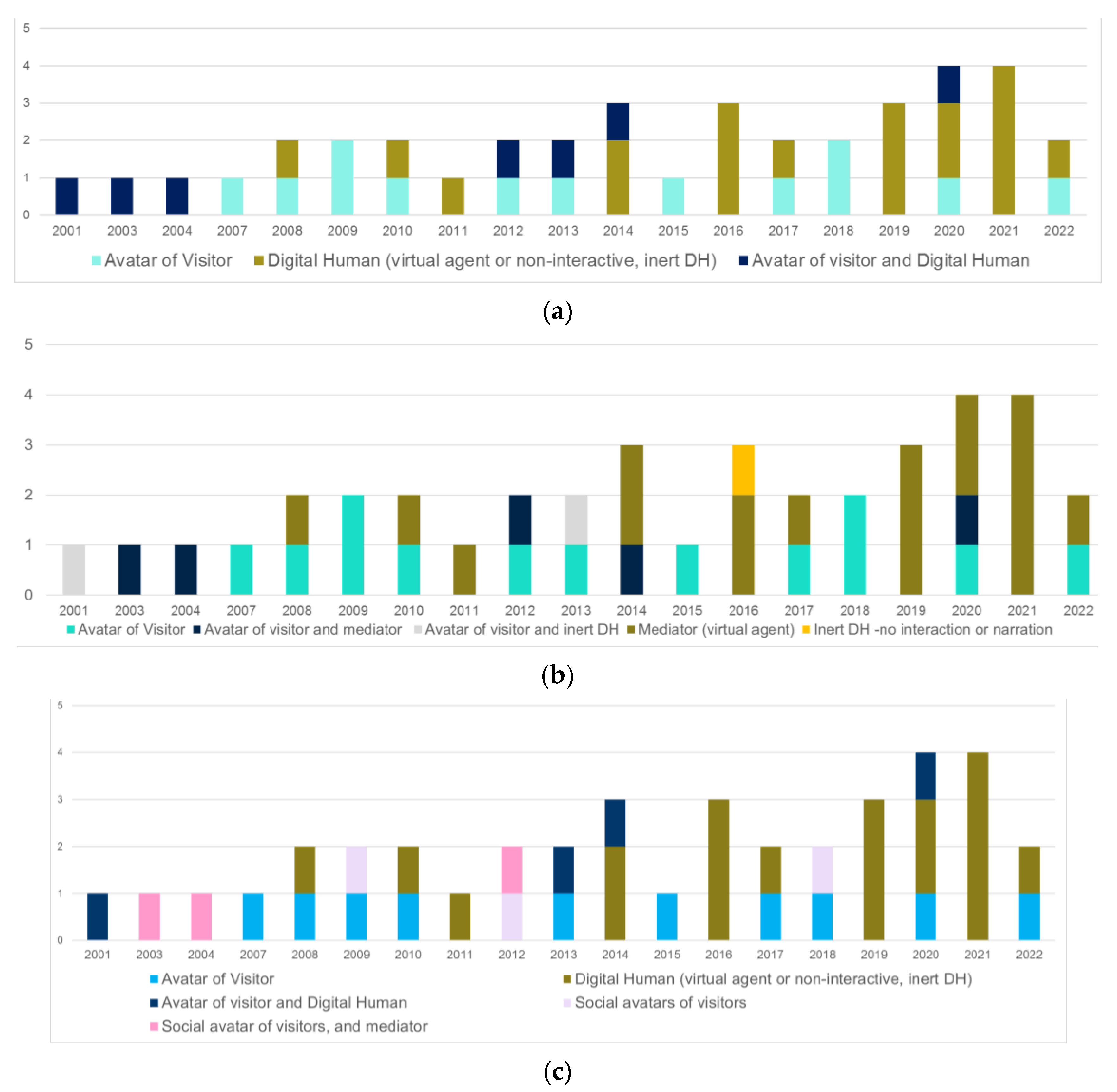

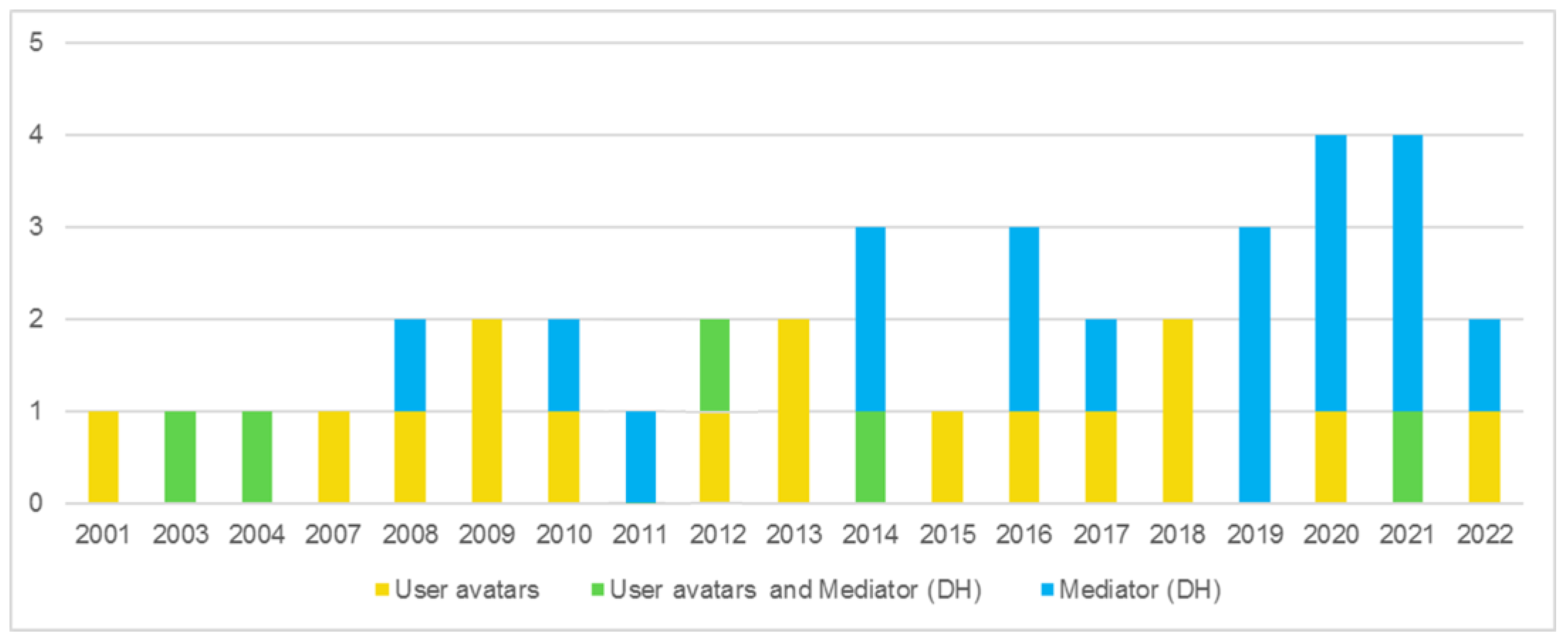

3.1. Typology of Virtual Agents and Avatars and Trends across Time

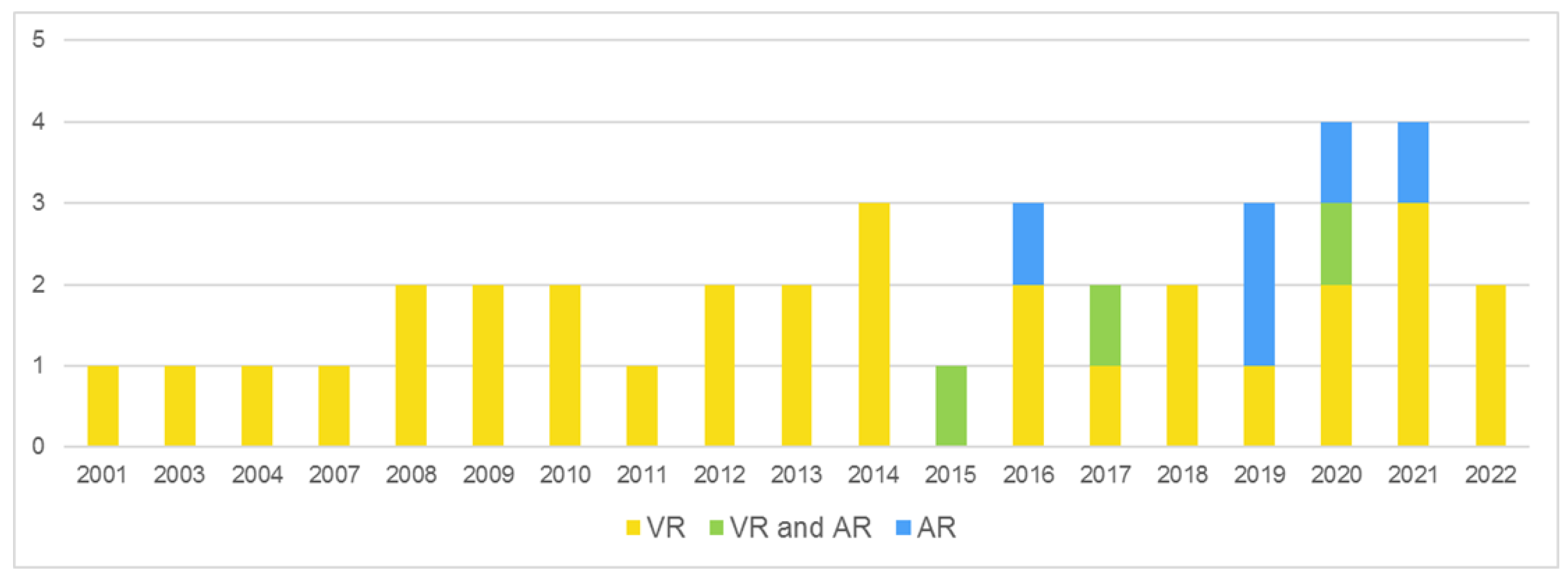

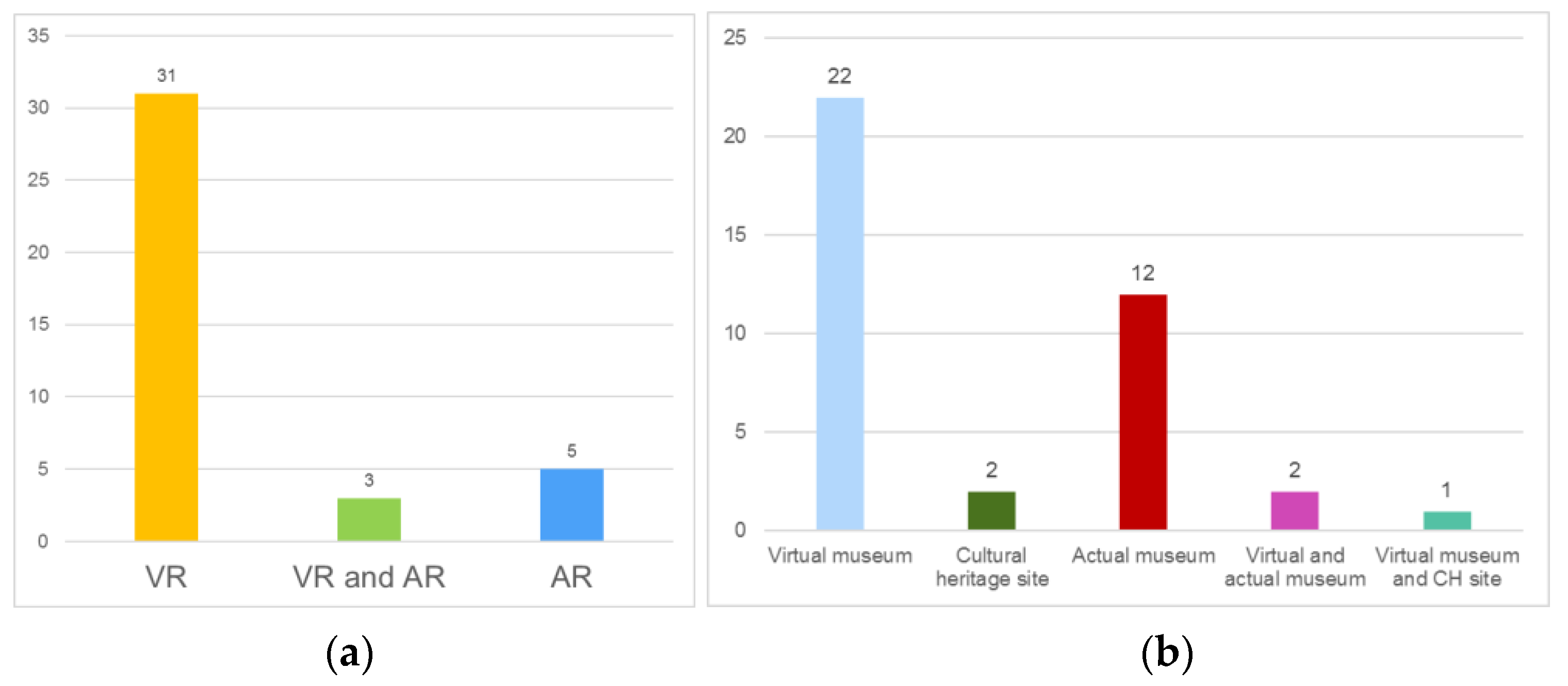

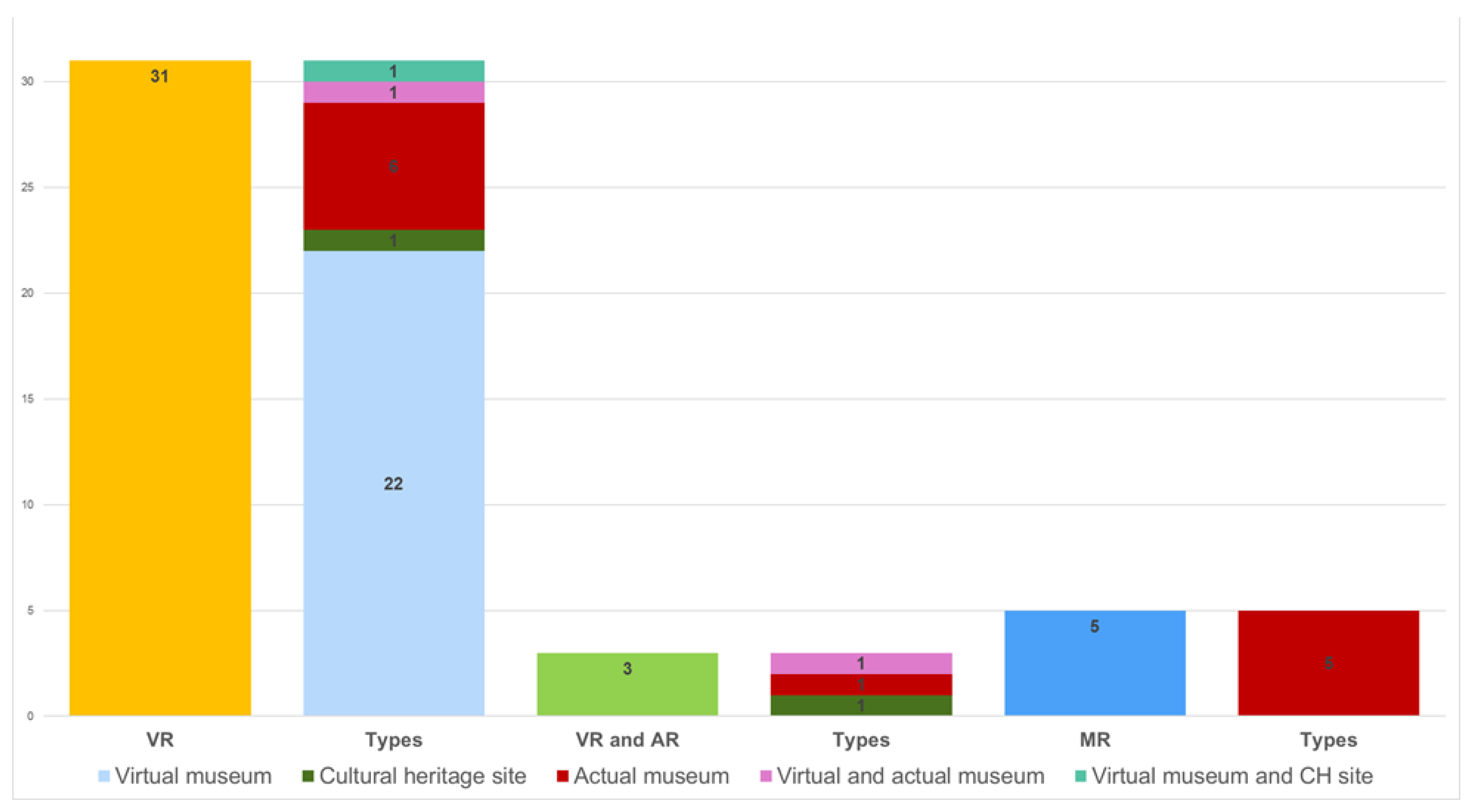

3.2. Uses of VR and MR

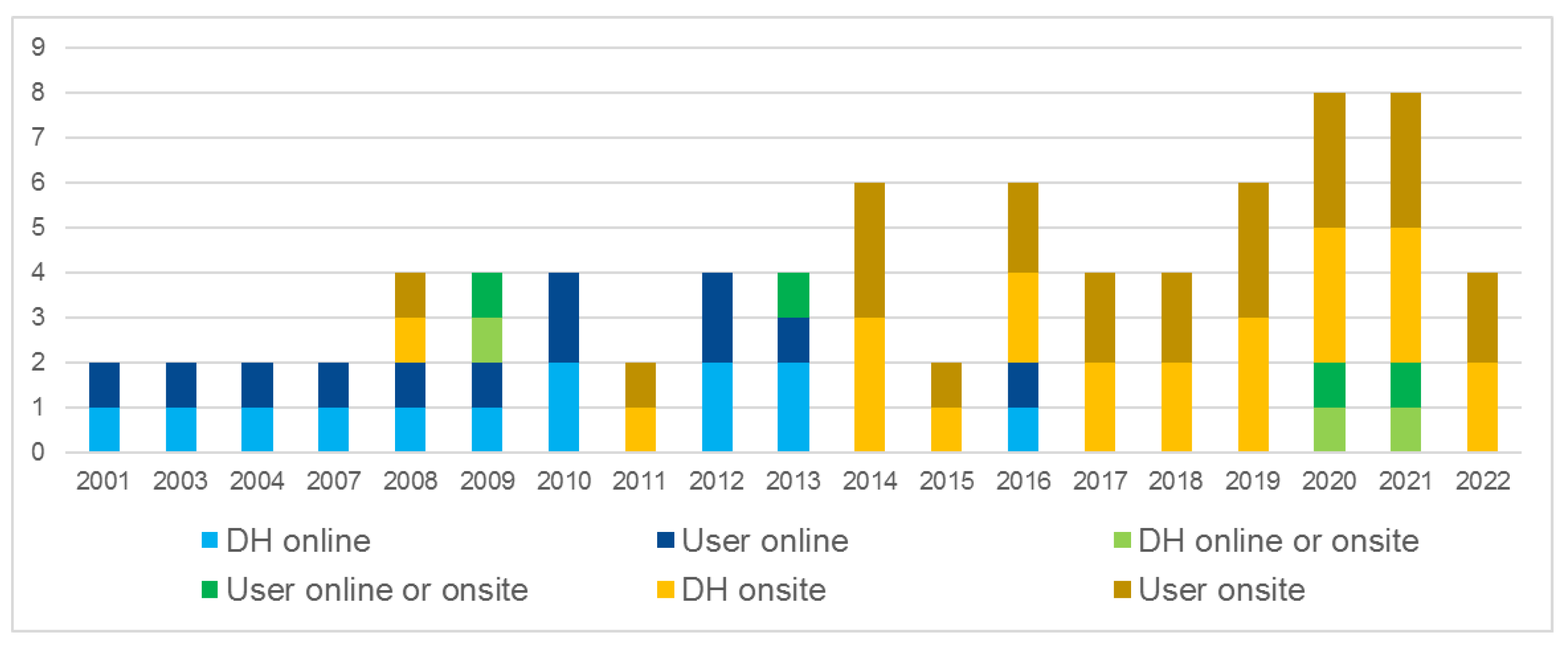

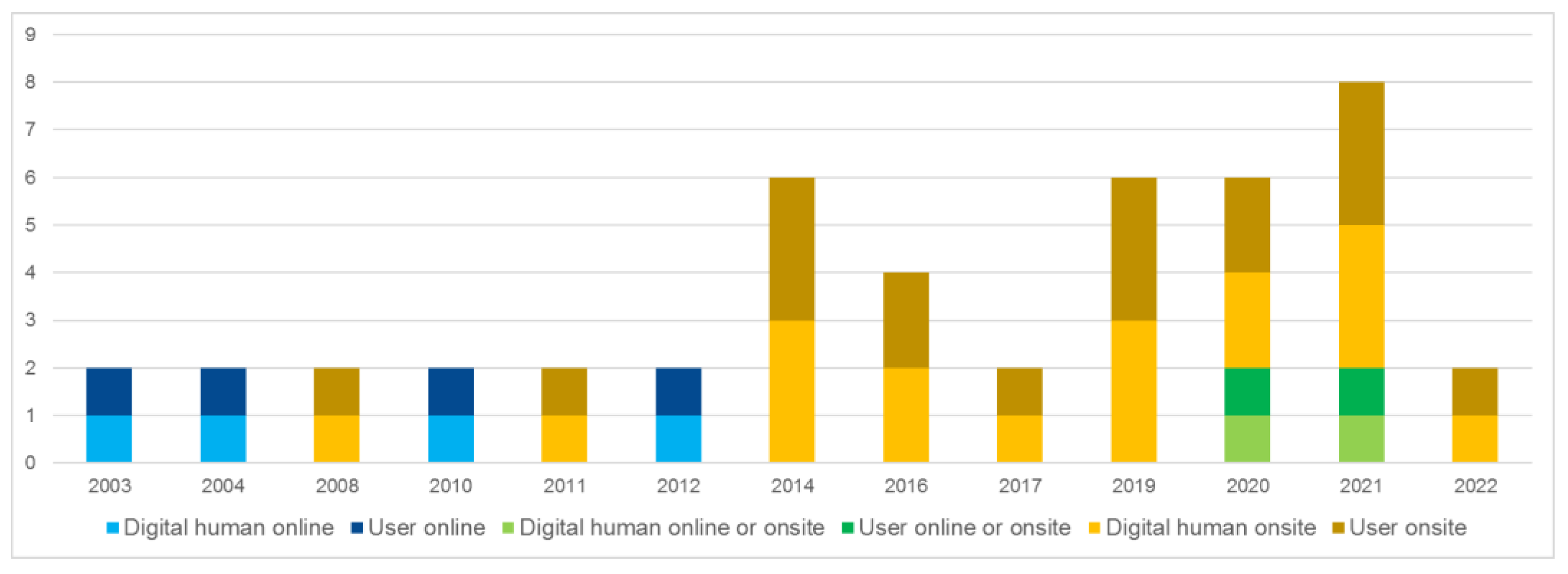

3.3. Uses of Digital Humans Onsite and Online

4. Discussion

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Kolesnichenko, A.; McVeigh-Schultz, J.; Isbister, K. Understanding Emerging Design Practices for Avatar Systems in the Commercial Social VR Ecology. In Proceedings of the DIS ’19: Designing Interactive Systems Conference, San Diego, CA, USA, 23–28 June 2019. [Google Scholar] [CrossRef]

- Nowak, K.L.; Rauh, C. The influence of the avatar on online perceptions of anthropomorphism, androgyny, credibility, homophily, and attraction. J. Comput.-Mediat. Commun. 2005, 11, 153–178. [Google Scholar] [CrossRef]

- Nowak, K.L.; Fox, J. Avatars and computer-mediated communication: A review of the definitions, uses, and effects of digital representations on communication. Rev. Commun. Res. 2018, 6, 30–53. [Google Scholar] [CrossRef]

- Machidon, O.M.; Duguleana, M.; Carrozzino, M. Virtual humans in cultural heritage ICT applications: A review. J. Cult. Herit. 2018, 33, 249–260. [Google Scholar] [CrossRef]

- Carrozzino, M.A.; Galdieri, R.; Machidon, O.M.; Bergamasco, M. Do Virtual Humans Dream of Digital Sheep? IEEE Comput. Graph. Appl. 2020, 40, 71–83. [Google Scholar] [CrossRef] [PubMed]

- Sylaiou, S.; Fidas, C. First results of a survey concerning the use of digital human avatars in museums and cultural heritage sites. In Proceedings of the 2nd International Conference on Interactive Media, Smart Systems and Emerging Technologies (IMET 2022), Nicosia, Cyprus, 4–7 October 2022. [Google Scholar]

- Kico, I.; Zelníček, D.; Liarokapis, F. Assessing the Learning of Folk Dance Movements Using Immersive Virtual Reality. In Proceedings of the 24th International Conference Information Visualisation (IV), Melbourne, Australia, 7–11 September 2020; pp. 587–592. [Google Scholar] [CrossRef]

- Stergiou, M.; Vosinakis, S. Exploring costume-avatar interaction in digital dance experiences. In Proceedings of the 8th International Conference on Movement and Computing (MOCO ’22), Chicago, IL, USA, 22–25 June 2022. [Google Scholar] [CrossRef]

- Trajkova, M.; Alhakamy, A.; Cafaro, F.; Mallappa, R.; Kankara, S.R. Move Your Body: Engaging Museum Visitors with Human-Data Interaction. In Proceedings of the Conference on Human Factors in Computing Systems (CHI ’20), Honolulu, HI, USA, 25–30 April 2020. [Google Scholar] [CrossRef]

- Teixeira, N.; Lahm, B.; Peres, F.F.F.; Mauricio, C.R.M.; Xavier Natario Teixeira, J.M. Augmented Reality on Museums: The Ecomuseu Virtual Guide. In Proceedings of the Symposium on Virtual and Augmented Reality (SVR’21), Virtual Event, Brazil, 18–21 October 2021. [Google Scholar] [CrossRef]

- Breuss-Schneeweis, P. The speaking celt. In Proceedings of the 2016 ACM International Joint Conference on Pervasive and Ubiquitous Computing (UbiComp ’16), Heidelberg, Germany, 12–16 September 2016. [Google Scholar] [CrossRef]

- Geigel, J.; Shitut, K.S.; Decker, J.; Doherty, A.; Jacobs, G. The Digital Docent: XR storytelling for a Living History Museum. In Proceedings of the 26th ACM Symposium on Virtual Reality Software and Technology (VRST ’20), Virtual Event, Canada, 1–4 November 2020. [Google Scholar] [CrossRef]

- Rivera-Gutierrez, D.; Ferdig, R.; Li, J.; Lok, B. Getting the Point Across: Exploring the Effects of Dynamic Virtual Humans in an Interactive Museum Exhibit on User Perceptions. IEEE Trans. Vis. Comput. Graph. 2014, 20, 636–643. [Google Scholar] [CrossRef] [PubMed]

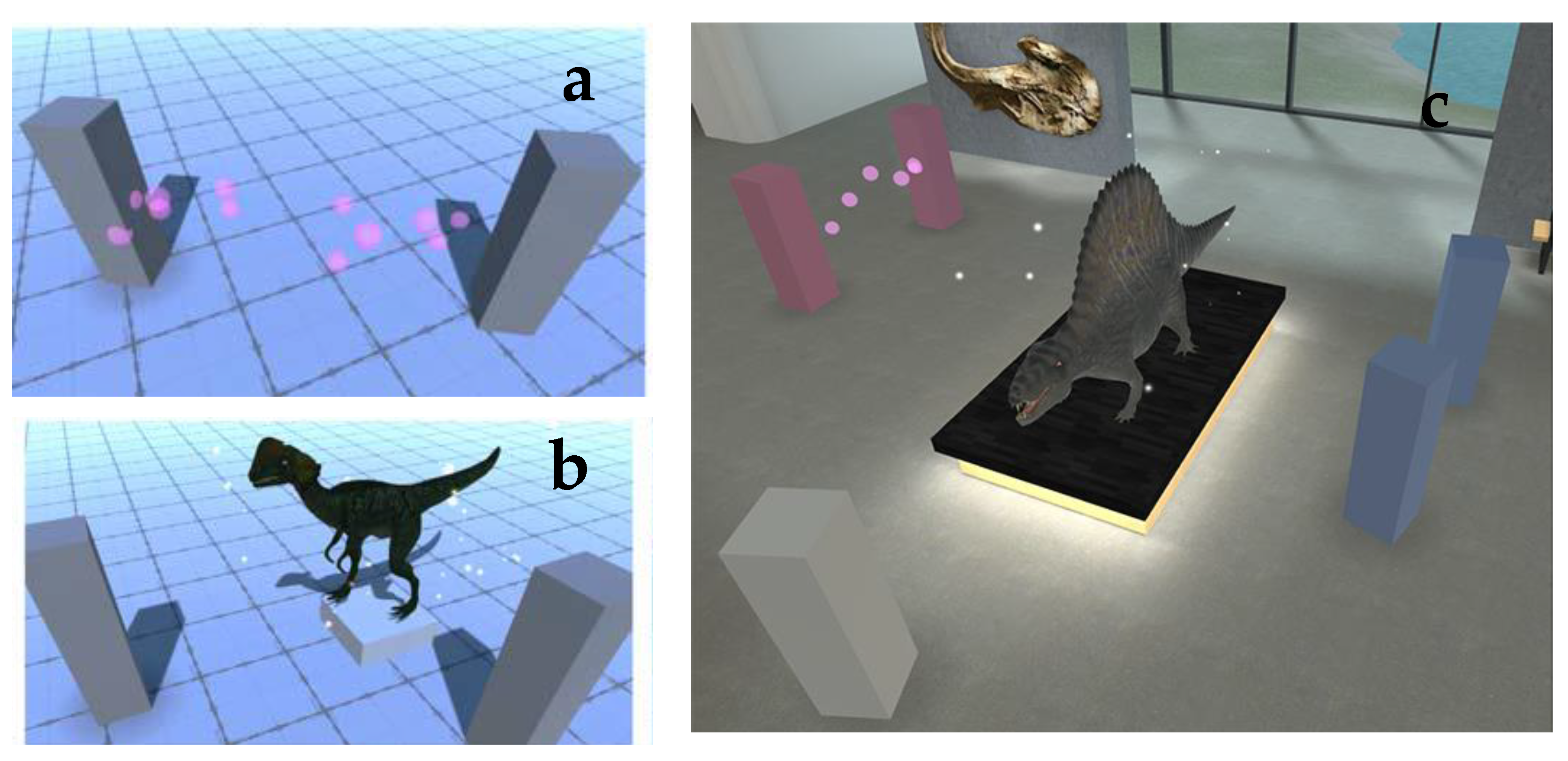

- Sylaiou, S.; Kasapakis, V.; Gavalas, D.; Djardanova, E. Avatars as Storytellers: Affective Narratives in Virtual Museums. Pers. Ubiquitous Comput. 2020, 24, 829–841. [Google Scholar] [CrossRef]

- Stylianidis, E.; Evangelidis, K.; Vital, R.; Dafiotis, P.; Sylaiou, S. 3D Documentation and Visualization of Cultural Heritage Buildings through the Application of Geospatial Technologies. Heritage 2022, 5, 2818–2832. [Google Scholar] [CrossRef]

- Rzayev, R.; Karaman, G.; Wolf, K.; Henze, N.; Schwind, V. The Effect of Presence and Appearance of Guides in Virtual Reality Exhibitions. In Proceedings of the Mensch und Computer 2019 (MuC’19), Hamburg, Germany, 8–11 September 2019. [Google Scholar] [CrossRef]

- Rzayev, R.; Karaman, G.; Henze, N.; Schwind, V. Fostering Virtual Guide in Exhibitions. In Proceedings of the 21st International Conference on Human-Computer Interaction with Mobile Devices and Services (MobileHCI ’19), Taipei, Taiwan, 1–4 October 2019. [Google Scholar] [CrossRef]

- Kim, Y.; Kesavadas, T.; Paley, S.M.; Sanders, D.H. Real-time animation of King Ashur-nasir-pal II (883–859 BC) in the virtual recreated Northwest Palace. In Proceedings of the Seventh International Conference on Virtual Systems and Multimedia, Berkeley, CA, USA, 25–27 October 2001; pp. 128–136. [Google Scholar] [CrossRef]

- Chen, J.X.; Yang, Y.; Loftin, B. MUVEES: A PC-based multi-user virtual environment for learning. In Proceedings of the IEEE Virtual Reality, Los Angeles, CA, USA, 22–26 March 2003; pp. 163–170. [Google Scholar] [CrossRef]

- Tavares, T.A.; Oliveira, S.A.; Canuto, A.; Goncalves, L.M.; Filho, G.S. An infrastructure for providing communication among users of virtual cultural spaces. In Proceedings of the WebMedia and LA-Web, Ribeirao Preto, Brazil, 15 October 2004; pp. 54–61. [Google Scholar] [CrossRef]

- Sari, R.F. Interactive Object and Collision Detection Algorithm Implementation on a Virtual Museum based on Croquet. In Proceedings of the Innovations in Information Technologies (IIT), Dubai, United Arab Emirates, 18–20 November 2007; pp. 685–689. [Google Scholar] [CrossRef]

- Schulman, D.; Sharma, M.; Bickmore, T.W. The identification of users by relational agents. In Proceedings of the 7th International Joint Conference on Autonomous Agents and Multiagent Systems (AAMAS), Estoril, Portugal, 12–16 May 2008; pp. 105–111. [Google Scholar]

- Xinyu, D.; Pin, J. Three Dimension Human Body Format and Its Virtual Avatar Animation Application. In Proceedings of the Second International Symposium on Intelligent Information Technology Application, Shanghai, China, 21–22 December 2008; pp. 1016–1019. [Google Scholar] [CrossRef]

- Pan, Z.; Chen, W.; Zhang, M.; Liu, J.; Wu, G. Virtual Reality in the Digital Olympic Museum. IEEE Comput. Graph. Appl. 2009, 29, 91–95. [Google Scholar] [CrossRef] [PubMed]

- Mu, B.; Yang, Y.; Zhang, J. Implementation of the Interactive Gestures of Virtual Avatar Based on a Multi-user Virtual Learning Environment. In Proceedings of the International Conference on Information Technology and Computer Science, Beijing, China, 8–11 August 2009; pp. 613–617. [Google Scholar] [CrossRef]

- Nimnual, R.; Chaisanit, S.; Suksakulchai, S. Interactive virtual reality museum for material packaging study. In Proceedings of the ICCAS 2010, Gyeonggi-do, Korea, 27–30 October 2010; pp. 1789–1792. [Google Scholar] [CrossRef]

- Dantas, R.R.; de Melo, J.C.P.; Lessa, J.; Schneider, C.; Teodósio, H.; Gonçalves, L.M.G. A path editor for virtual museum guides. In Proceedings of the IEEE International Conference on Virtual Environments, Human-Computer Interfaces and Measurement Systems, Taranto, Italy, 6–8 September 2010; pp. 136–140. [Google Scholar] [CrossRef]

- Pan, Z.; Jiang, R.; Liu, G.; Shen, C. Animating and Interacting with Ancient Chinese Painting—Qingming Festival by the Riverside. In Proceedings of the Second International Conference on Culture and Computing, Kyoto, Japan, 20–22 October 2011; pp. 3–6. [Google Scholar] [CrossRef]

- Oliver, I.; Miller, A.; Allison, C. Mongoose: Throughput Redistributing Virtual World. In Proceedings of the 21st International Conference on Computer Communications and Networks (ICCCN), Munich, Germany, 30 July–2 August 2012; pp. 1–9. [Google Scholar] [CrossRef]

- Hill, V.; Mystakidis, S. Maya Island virtual museum: A virtual learning environment, museum, and library exhibit. In Proceedings of the 18th International Conference on Virtual Systems and Multimedia, Milan, Italy, 2–5 September 2012; pp. 565–568. [Google Scholar] [CrossRef]

- Kyriakou, P.; Hermon, S. Building a dynamically generated virtual museum using a game engine. In Proceedings of the 2013 Digital Heritage International Congress (DigitalHeritage), Marseille, France, 28 October–1 November 2013; p. 443. [Google Scholar] [CrossRef]

- Dawson, T.; Vermehren, A.; Miller, A.; Oliver, I.; Kennedy, S. Digitally enhanced community rescue archaeology. In Proceedings of the 2013 Digital Heritage International Congress (DigitalHeritage), Marseille, France, 28 October–1 November 2013; pp. 29–36. [Google Scholar] [CrossRef]

- Tsoumanis, G.; Kavvadia, E.; Oikonomou, K. Changing the look of a city: The v-Corfu case. In Proceedings of the 5th International Conference on Information, Intelligence, Systems and Applications (IISA 2014), Chania, Greece, 7–9 July 2014; pp. 419–424. [Google Scholar] [CrossRef]

- Aguirrezabal, P.; Peral, R.; Pérez, A.; Sillaurren, S. Designing history learning games for museums. In Proceedings of the Virtual Reality International Conference (VRIC ’14), Laval, France, 9–11 April 2014. [Google Scholar] [CrossRef]

- Moreno, I.; Prakash, E.C.; Loaiza, D.F.; Lozada, D.A.; Navarro-Newball, A.A. Marker-less feature and gesture detection for an interactive mixed reality avatar. In Proceedings of the 20th Symposium on Signal Processing, Images and Computer Vision (STSIVA), Bogotá, Colombia, 2–4 September 2015; pp. 1–7. [Google Scholar] [CrossRef]

- Cafaro, A.; Vilhjálmsson, H.H.; Bickmore, T. First Impressions in Human-Agent Virtual Encounters. ACM Trans. Comput.-Hum. Interact. 2016, 23, 1–40. [Google Scholar] [CrossRef]

- Ghani, I.; Rafi, A.; Woods, P. Sense of place in immersive architectural virtual heritage environment. In Proceedings of the 2016 22nd International Conference on Virtual System & Multimedia (VSMM), Kuala Lumpur, Malaysia, 17–21 October 2016; pp. 1–8. [Google Scholar] [CrossRef]

- Bruno, F.; Lagudi, A.; Ritacco, G.; Agrafiotis, P.; Skarlatos, D.; Cejka, J.; Kouril, P.; Liarokapis, F.; Philpin-Briscoe, O.; Poullis, C.; et al. Development and integration of digital technologies addressed to raise awareness and access to European underwater cultural heritage. An overview of the H2020 i-MARECULTURE project. In Proceedings of the OCEANS 2017, Aberdeen, Scotland, 19–22 June 2017. [Google Scholar] [CrossRef]

- Linssen, J.; Theune, M. R3D3: The Rolling Receptionist Robot with Double Dutch Dialogue. In Proceedings of the Companion of the 2017 ACM/IEEE International Conference on Human-Robot Interaction (HRI ’17), Vienna, Austria, 6–9 March 2017. [Google Scholar] [CrossRef]

- Roth, D.; Kleinbeck, C.; Feigl, T.; Mutschler, C.; Latoschik, M.E. Beyond Replication: Augmenting Social Behaviors in Multi-User Virtual Realities. In Proceedings of the IEEE Conference on Virtual Reality and 3D User Interfaces (VR), Reutlingen, Germany, 18–22 March 2018; pp. 215–222. [Google Scholar] [CrossRef]

- Sorce, S.; Gentile, V.; Oliveto, D.; Barraco, R.; Malizia, A.; Gentile, A. Exploring Usability and Accessibility of Avatar-based Touchless Gestural Interfaces for Autistic People. In Proceedings of the 7th ACM International Symposium on Pervasive Displays (PerDis ’18), Munich, Germany, 6–8 June 2018. [Google Scholar] [CrossRef]

- Ali, G.; Le, H.-Q.; Kim, J.; Hwang, S.-W.; Hwang, J.-I. Design of Seamless Multi-modal Interaction Framework for Intelligent Virtual Agents in Wearable Mixed Reality Environment. In Proceedings of the 32nd International Conference on Computer Animation and Social Agents (CASA ’19), Paris, France, 1–3 July 2019. [Google Scholar] [CrossRef]

- Ye, Z.-M.; Chen, J.-L.; Wang, M.; Yang, Y.-L. PAVAL: Position-Aware Virtual Agent Locomotion for Assisted Virtual Reality Navigation. In Proceedings of the IEEE International Symposium on Mixed and Augmented Reality (ISMAR), Bari, Italy, 4–8 October 2021; pp. 239–247. [Google Scholar] [CrossRef]

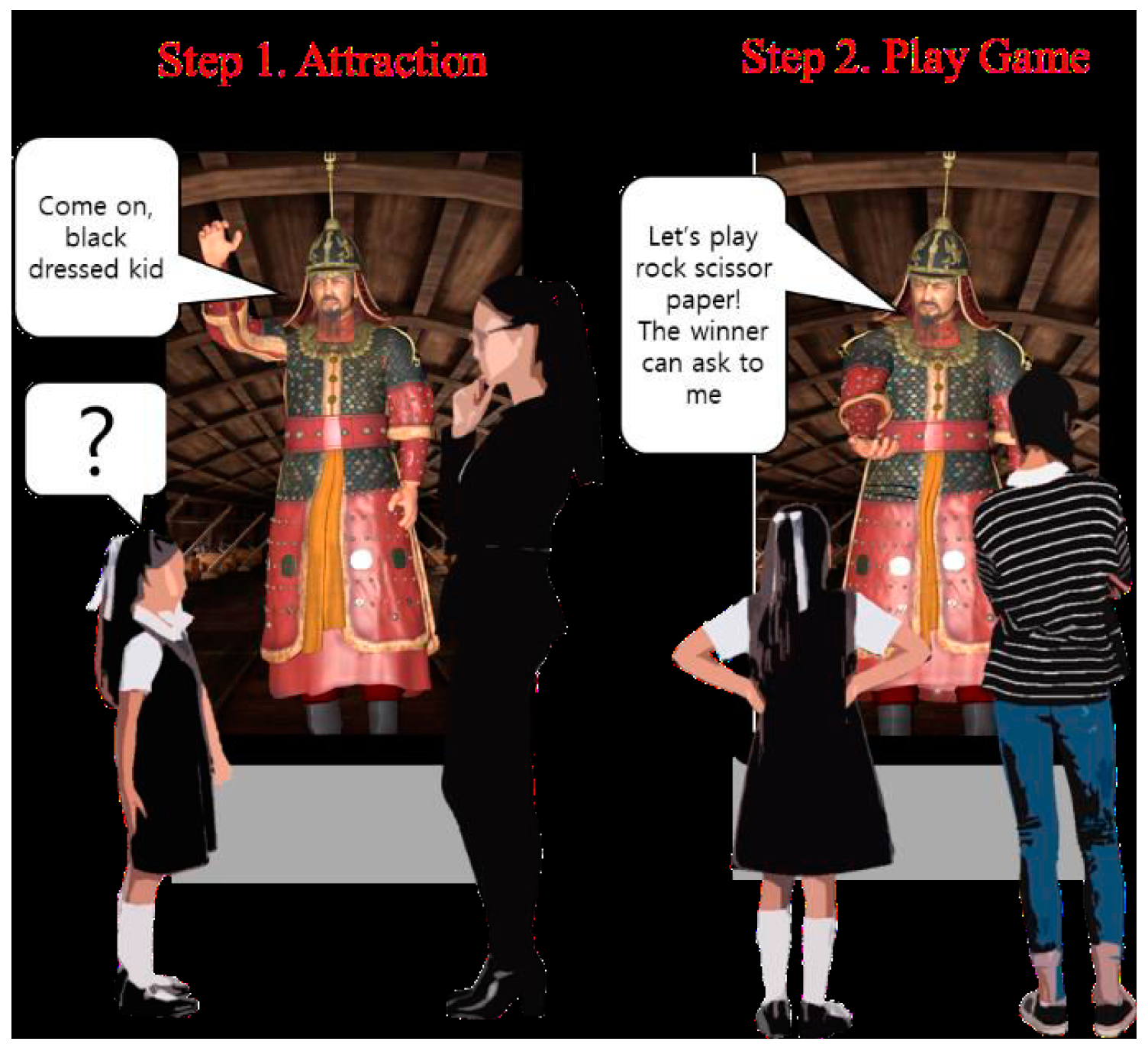

- Ko, J.-K.; Koo, D.W.; Kim, M.S. A Novel Affinity Enhancing Method for Human Robot Interaction—Preliminary Study with Proactive Docent Avatar. In Proceedings of the 21st International Conference on Control, Automation and Systems (ICCAS), Jeju, Korea, 12–15 October 2021; pp. 1007–1011. [Google Scholar] [CrossRef]

- Bönsch, A.; Hashem, D.; Ehret, J.; Kuhlen, T.W. Being Guided or Having Exploratory Freedom. In Proceedings of the 21st ACM International Conference on Intelligent Virtual Agents (IVA ’21), Fukuchiyama, Kyoto, Japan, 14–17 September 2021. [Google Scholar] [CrossRef]

- Li, P.; Wei, A.; Peng, F.; Zhang, N.; Chen, C.; Wei, Q. Virtual Reality Roaming System Design Based on Motor Imagery-Based Brain-Computer Interface. In Proceedings of the IEEE 6th Information Technology and Mechatronics Engineering Conference (ITOEC), Chongqing, China, 4–6 March 2022; pp. 1619–1623. [Google Scholar] [CrossRef]

- Bente, G.; Rüggenberg, S.; Krämer, N.C.; Eschenburg, F. Avatar-Mediated Networking: Increasing Social Presence and Interpersonal Trust in Net-Based Collaborations. Hum. Commun. Res. 2008, 34, 287–318. [Google Scholar] [CrossRef]

- Latoschik, M.E.; Roth, D.; Gall, D.; Achenbach, J.; Waltemate, T.; Botsch, M. The effect of avatar realism in immersive social virtual realities. In Proceedings of the 23rd ACM Symposium on Virtual Reality Software and Technology (VRST), Gothenburg, Sweden, 8–10 November 2017. [Google Scholar]

- Kopp, S.; Gesellensetter, L.; Krämer, N.C.; Wachsmuth, I. A conversational agent as museum guide—Design and evaluation of a real-world application. In IVA 2005. LNCS (LNAI); Panayiotopoulos, T., Gratch, J., Aylett, R., Ballin, D., Olivier, P., Rist, T., Eds.; Springer: Berlin/Heidelberg, Germany, 2005; Volume 3661, pp. 329–343. [Google Scholar] [CrossRef]

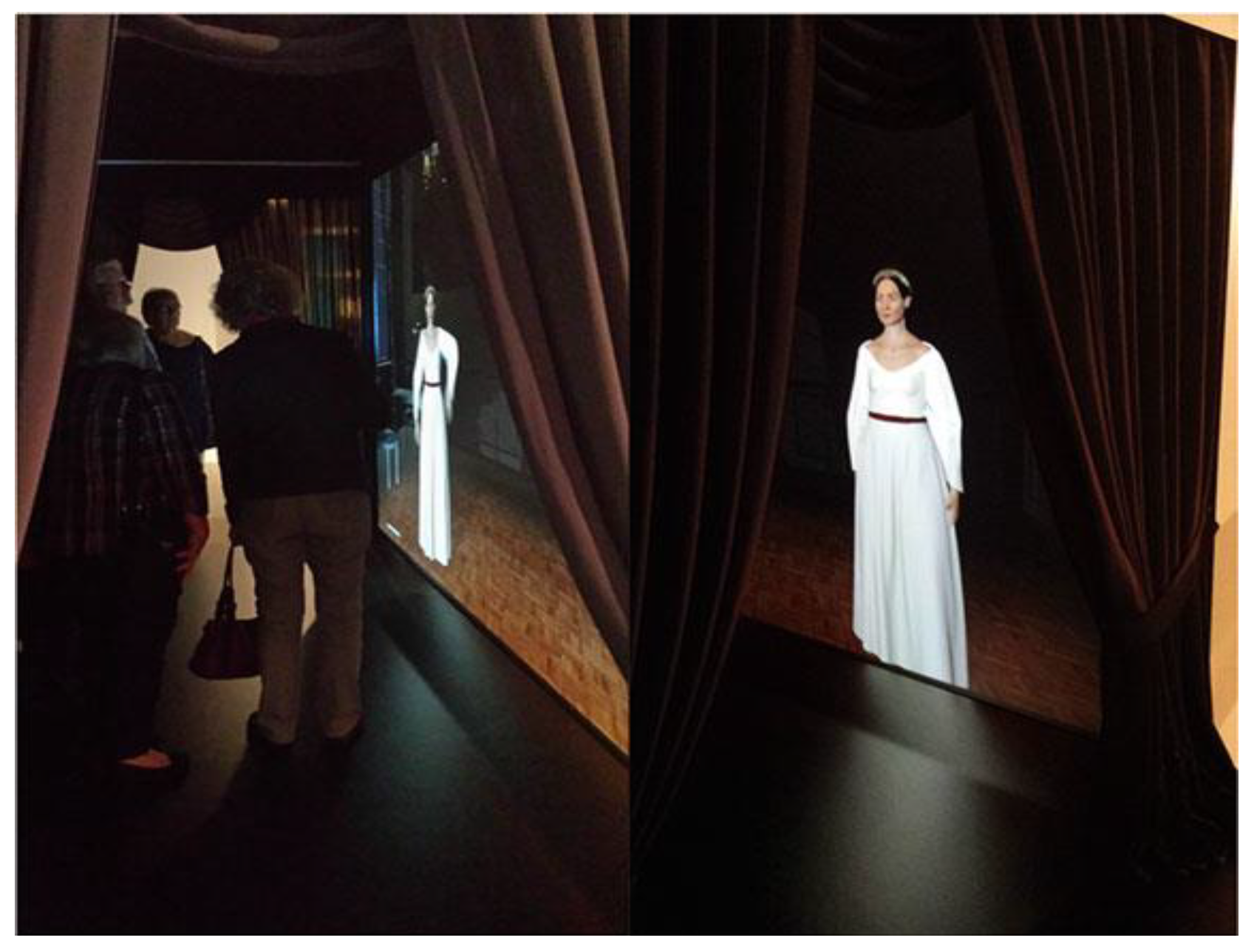

- Swartout, W.; Traum, D.; Artstein, R.; Noren, D.; Debevec, P.; Bronnenkant, K.; Williams, J.; Leuski, A.; Narayanan, S.; Piepol, D.; et al. Ada and Grace: Toward Realistic and Engaging Virtual Museum Guides. In Intelligent Virtual Agents. IVA 2010. Lecture Notes in Computer Science; Allbeck, J., Badler, N., Bickmore, T., Pelachaud, C., Safonova, A., Eds.; Springer: Berlin/Heidelberg, Germany, 2010; Volume 6356. [Google Scholar] [CrossRef]

- Sénécal, S.; Cadi, N.; Arévalo, M.; Magnenat-Thalmann, N. Modelling Life Through Time: Cultural Heritage Case Studies. In Mixed Reality and Gamification for Cultural Heritage; Ioannides, M., Magnenat-Thalmann, N., Papagiannakis, G., Eds.; Springer: Cham, Switzerland, 2017. [Google Scholar] [CrossRef]

- Yee, N.; Bailenson, J. The Proteus Effect: The Effect of Transformed Self-Representation on Behavior. Hum. Commun. Res. 2007, 33, 271–290. [Google Scholar] [CrossRef]

| # | Publication | VR | AR | Online | Onsite | Avatar of User | Digital Human | Virtual Museum | CH Site | Actual Museum | Lab or Museum/CH Site |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | Kim Y. et al. (2001) [18] | X | X | X | X Inert | X | Lab | ||||
| 2 | Chen J. X. et al. (2003) [19] | X | X | X Social | X | X | Lab | ||||
| 3 | Tavares T. A. et al. (2004) [20] | X | X | X Social | X | X | Lab | ||||
| 4 | Sari R. F. and Muliawan (2007) [21] | X | X | X | X | Lab | |||||
| 5 | Schulman D. et al. (2008) [22] | X | X | X | X | M/CHs | |||||
| 6 | Xinyu D. and Pin J. (2008) [23] | X | X | X | X | Lab | |||||
| 7 | Pan Z. et al. (2009) [24] | X | X | X | X | M/CHs | |||||
| 8 | Mu B. et al. (2009) [25] | X | X | X | X Social | X | Lab | ||||
| 9 | Nimnual R. et al. (2010) [26] | X | X | X | X | Lab | |||||
| 10 | Dantas R. R. et al. (2010) [27] | X | X | X | X | Lab | |||||
| 11 | Pan Z. et al. (2011) [28] | X | X | X | X | M/CHs | |||||
| 12 | Oliver I. et al. (2012) [29] | X | X | X Social | X | Lab | |||||
| 13 | Hill V. and Mystakidis S. (2012) [30] | X | X | X Social | X | X | M/CHs | ||||
| 14 | Kyriakou P. & Hermon S. (2013) [31] | X | X | X | X | X | Lab | ||||
| 15 | Dawson T. et al. (2013) [32] | X | X | X | X | X Inert | X | X | M/CHs | ||
| 16 | Tsoumanis G. et al. (2014) [33] | X | X | X | X | M/CHs | |||||
| 17 | Aguirrezabal P. et al. (2014) [34] | X | X | X | X | X | Lab | ||||
| 18 | Rivera-Gutierrez D. et al. (2014) [13] | X | X | X | X | Museum | |||||
| 19 | Moreno I. et al. (2015) [35] | X | X | X | X | X | M/CHs | ||||
| 20 | Cafaro A. et al. (2016) [36] | X | X | X | X | Lab | |||||
| 21 | Breuss-Schneeweis P. (2016) [11] | X | X | X | X | M/CHs | |||||
| 22 | Ghani I. et al. (2016) [37] | X | X | X Inert | X | X | Lab | ||||
| 23 | Bruno F. et al. (2017) [38] | X | X | X | X | X | M/CHs | ||||
| 24 | Linssen J. & Theune M. (2017) [39] | X | X | X | X | Lab | |||||
| 25 | Roth D. et al. (2018) [40] | X | X | X Social | X | Lab | |||||
| 26 | Sorce S. et al. (2018) [41] | X | X | X | X | Lab | |||||
| 27 | Rzayev R. et al. (2019a) [16] | X | X | X | X | Lab | |||||
| 28 | Rzayev R. et al. (2019b) [17] | X | X | X | X | Lab | |||||
| 29 | Ali G. et al. (2019) [42] | X | X | X | X | Lab | |||||
| 30 | Geigel J. et al. (2020) [12] | X | X | X | X | X | X | X | M/CHs | ||
| 31 | Sylaiou S. et al. (2020) [14] | X | X | X | X | Lab | |||||
| 32 | Trajkova M. et al. (2020) [9] | X | X | X | X | M/CHs | |||||
| 33 | Kico I. et al. (2020) [7] | X | X | X | X | X | Lab | ||||
| 34 | Teixeira N. et al. (2021) [10] | X | X | X | X | M/CHs | |||||
| 35 | Ye Z.-M. et al. (2021) [43] | X | X | X | X | X | Lab | ||||
| 36 | Ko J.-K. et al. (2021) [44] | X | X | X | X | M/CHs | |||||
| 37 | Bönsch A. et al. (2021) [45] | X | X | X | X | Lab | |||||
| 38 | Li P. et al. (2022) [46] | X | X | X | X | Lab | |||||
| 39 | Stergiou M. & Vosinakis S. (2022) [8] | X | X | X | Lab |

| # | Publication | Interface Device and Type of Technology | Description of Use and Interaction Type |
|---|---|---|---|
| 1 | Kim Y. et al. (2001) [18] | VR, stereo glasses, large screen/CAVE | User interacts with content; inert DH |
| 2 | Chen J.X. et al. (2003) [19] | VR, PC screen, 3D VE | Multi-user learning VE |
| 3 | Tavares T.A. et al. (2004) [20] | VR, PC screen, 3D VE | Multi-user learning VE, virtual guide |
| 4 | Sari R.F. and Muliawan (2007) [21] | VR, PC screen, 3D VE | User interacts with content |
| 5 | Schulman D. et al. (2008) [22] | VR, large screen, onsite installation | User interacts with virtual guide |
| 6 | Xinyu D. and Pin J. (2008) [23] | VR, PC screen, 3D VE | Crowd user avatar; no interaction |
| 7 | Pan Z. et al. (2009) [24] | VR, PC screen, 3D VE | User interacts with content |
| 8 | Mu B. et al. (2009) [25] | VR, PC screen, 3D VE | User avatars’ gestural interaction |
| 9 | Nimnual R. et al. (2010) [26] | VR, PC screen, 3D VE | User interacts with content |
| 10 | Dantas R.R. et al. (2010) [27] | VR museum, PC screen | DH agent guides users |
| 11 | Pan Z. et al. (2011) [28] | VR, multi-screen projection, VE | Gesture interaction with system and DH |
| 12 | Oliver I. et al. (2012) [29] | VR, PC screen, 3D VE | Multi-user learning VE |
| 13 | Hill V. and Mystakidis S. (2012) [30] | VR, PC screen, 3D VE | DH agent provides information |
| 14 | Kyriakou P. & Hermon S. (2013) [31] | VR, PC screen, 3D VE | User interacts with content |
| 15 | Dawson T. et al. (2013) [32] | VR, multiple screens, 3D VE | Non-interactive VHs (illustrative) |
| 16 | Tsoumanis G. et al. (2014) [33] | VR, PC or projection screen, 3D VE | User interacts with content |
| 17 | Aguirrezabal P. et al. (2014) [34] | VR, PC or projection screen, 3D VE | User interacts with content |
| 18 | Rivera-Gutierrez D. et al. (2014) [13] | VR, large screen, onsite installation | DH agent provides information to users |
| 19 | Moreno I. et al. (2015) [35] | VR/AR headset, Kinect sensor | User interacts with content |
| 20 | Cafaro A. et al. (2016) [36] | Tablet, large screen, VE | User responds to DH questions |
| 21 | Breuss-Schneeweis P. (2016) [11] | AR app, smartphone | Visitors prompt VH narrations |
| 22 | Ghani I. et al. (2016) [37] | VR headset, 3D VE | User interacts with content |
| 23 | Bruno F. et al. (2017) [38] | VR headset/controller and tablet | User interacts with content |
| 24 | Linssen J. & Theune M. (2017) [39] | Screen carried by a physical robot | User interacts with VH on screen |
| 25 | Roth D. et al. (2018) [40] | VR headset, immersive 3D VE | User interacts with content |
| 26 | Sorce S. et al. (2018) [41] | Projection on screen, sensors | User interacts with content |
| 27 | Rzayev R. et al. (2019a) [16] | VR headset, immersive 3D VE | DH provides information |
| 28 | Rzayev R. et al. (2019b) [17] | AR headset | DH provides information |
| 29 | Ali G. et al. (2019) [42] | AR headset | User interacts with content |
| 30 | Geigel J. et al. (2020) [12] | AR headset/mobile, VR headset/PC | Users prompt VH narrations |
| 31 | Sylaiou S. et al. (2020) [14] | VR headset, immersive 3D VE | Users prompt VH narrations |
| 32 | Trajkova M. et al. (2020) [9] | Large screen, PC, sensors, camera | Users interact with content via gesture commands |
| 33 | Kico I. et al. (2020) [7] | VR headset, 3D VE | Users watch and mimic DH |
| 34 | Teixeira N. et al. (2021) [10] | AR app, portable device | Users interact with content, DH |
| 35 | Ye Z.-M. et al. (2021) [43] | VR headset, 3D VE | Users interact with content, DH |
| 36 | Ko J.-K. et al. (2021) [44] | Large screen, PC, sensors, camera | Users interact with content, DH |
| 37 | Bönsch A. et al. (2021) [45] | VR HMD, 3D VE, sensors | Users interact with content, DH |
| 38 | Li P. et al. (2022) [46] | BCI equipment/sensors, PC screen | Users interact with content |
| 39 | Stergiou M. & Vosinakis S. (2022) [8] | VR headset, 3D VE | Users watch and mimic DH |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Sylaiou, S.; Fidas, C. Virtual Humans in Museums and Cultural Heritage Sites. Appl. Sci. 2022, 12, 9913. https://doi.org/10.3390/app12199913
