XAI Systems Evaluation: A Review of Human and Computer-Centred Methods

Abstract

1. Introduction

2. Background

2.1. Types of ML Explanations

- Attribution-based explanations: This type of explanation aims to rank input features, or to assign each of them an importance value, according to its relevance to the final prediction. These are arguably among the explanations most commonly evaluated in the literature.

- Model-based explanations: These explanations are themselves models used to interpret the task model. They can be the task model itself or more interpretable post-hoc models created for that purpose. Common metrics for evaluating them relate to model size (e.g., the depth of a decision tree or the number of non-zero weights in a linear model).

- Example-based explanations: As the name implies, example-based explanations provide an understanding of predictive models through representative examples or high-level concepts. When analysing a specific instance, these methods can return either examples with the same prediction or examples with a different one (counterfactual examples).
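
The model-size metrics mentioned for model-based explanations can be illustrated with a minimal sketch. The nested-dictionary tree layout, the feature names, and the helper names `tree_depth` and `count_nonzero_weights` are assumptions made for this example only; they do not come from any of the reviewed works.

```python
# Illustrative sketch only: the tree layout and helper names are hypothetical.

def tree_depth(node):
    """Return the depth of a decision tree stored as nested dicts.

    A node with neither a "left" nor a "right" child is a leaf (depth 0).
    """
    children = [node[key] for key in ("left", "right") if key in node]
    if not children:
        return 0
    return 1 + max(tree_depth(child) for child in children)


def count_nonzero_weights(weights, tol=0.0):
    """Count the weights of a linear model whose magnitude exceeds tol."""
    return sum(1 for w in weights if abs(w) > tol)


# Toy surrogate tree: the root splits on "age", its right child on "income".
toy_tree = {
    "feature": "age", "threshold": 40,
    "left": {"prediction": 0},
    "right": {
        "feature": "income", "threshold": 50000,
        "left": {"prediction": 0},
        "right": {"prediction": 1},
    },
}

# Toy sparse linear surrogate with five input features.
toy_weights = [0.0, 1.3, 0.0, -0.7, 0.0]

depth = tree_depth(toy_tree)                  # two levels of splits
nonzero = count_nonzero_weights(toy_weights)  # two active features

print(f"tree depth: {depth}, non-zero weights: {nonzero}")
```

Under these assumptions, smaller values of both quantities would indicate a more compact explanation model.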

2.2. Importance of XAI Evaluation

2.3. Current Taxonomies for XAI Evaluation

2.4. Shortfalls of Current XAI Evaluation

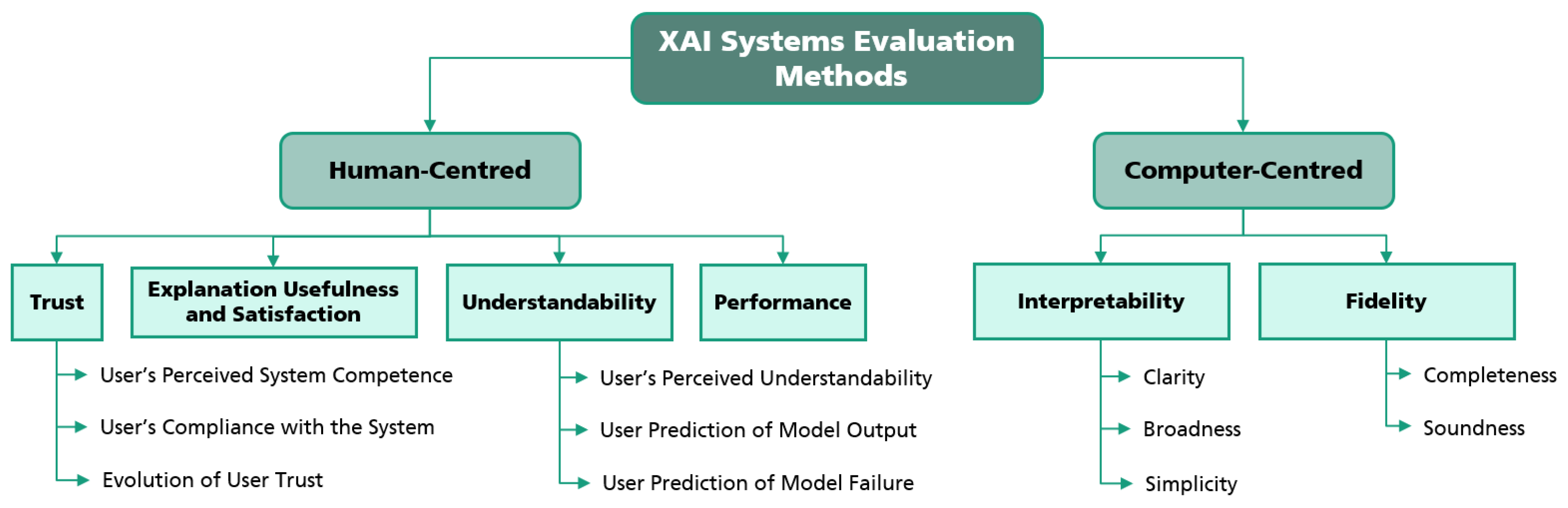

3. Taxonomy for XAI Systems Evaluation Methods

4. Human-Centred Evaluation Methods

4.1. Trust

4.2. Understandability

4.3. Explanation Usefulness and Satisfaction

4.4. Performance

4.5. Summary

5. Computer-Centred Evaluation Methods

5.1. Interpretability

5.2. Fidelity

5.3. Summary

6. Discussion

Limitations

7. Conclusions and Future Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Meaning |
|---|---|
| XAI | eXplainable Artificial Intelligence |
| ML | Machine Learning |
| HCI | Human–Computer Interaction |

References

1. Vilone, G.; Longo, L. Notions of explainability and evaluation approaches for explainable artificial intelligence. Inf. Fusion 2021, 76, 89–106.

2. General Data Protection Regulation (GDPR)–Official Legal Text. Available online: https://gdpr-info.eu/ (accessed on 15 May 2022).

3. Mohseni, S.; Zarei, N.; Ragan, E.D. A multidisciplinary survey and framework for design and evaluation of explainable AI systems. ACM Trans. Interact. Intell. Syst. (TiiS) 2021, 11, 1–45.

4. Miller, T. Explanation in Artificial Intelligence: Insights from the Social Sciences. Artif. Intell. 2019, 267, 1–38.

5. Liao, Q.V.; Varshney, K.R. Human-Centered Explainable AI (XAI): From Algorithms to User Experiences. arXiv 2022, arXiv:2110.10790.

6. Markus, A.F.; Kors, J.A.; Rijnbeek, P.R. The role of explainability in creating trustworthy artificial intelligence for health care: A comprehensive survey of the terminology, design choices, and evaluation strategies. J. Biomed. Inform. 2021, 113, 103655.

7. Eiband, M.; Buschek, D.; Kremer, A.; Hussmann, H. The Impact of Placebic Explanations on Trust in Intelligent Systems. In Extended Abstracts of the 2019 CHI Conference on Human Factors in Computing Systems; ACM: Glasgow, UK, 2019; pp. 1–6.

8. Herman, B. The promise and peril of human evaluation for model interpretability. arXiv 2017, arXiv:1711.07414.

9. Doshi-Velez, F.; Kim, B. Towards a rigorous science of interpretable machine learning. arXiv 2017, arXiv:1702.08608.

10. Zhou, J.; Gandomi, A.H.; Chen, F.; Holzinger, A. Evaluating the quality of machine learning explanations: A survey on methods and metrics. Electronics 2021, 10, 593.

11. Van der Waa, J.; Nieuwburg, E.; Cremers, A.; Neerincx, M. Evaluating XAI: A Comparison of Rule-Based and Example-Based Explanations. Artif. Intell. 2021, 291, 103404.

12. Adadi, A.; Berrada, M. Peeking Inside the Black-Box: A Survey on Explainable Artificial Intelligence (XAI). IEEE Access 2018, 6, 52138–52160.

13. Hedstrom, A.; Weber, L.; Bareeva, D.; Motzkus, F.; Samek, W.; Lapuschkin, S.; Hohne, M.M.C. Quantus: An Explainable AI Toolkit for Responsible Evaluation of Neural Network Explanations. arXiv 2022, arXiv:2202.06861.

14. Kahneman, D. Thinking, Fast and Slow; Macmillan: New York, NY, USA, 2011.

15. Gunning, D.; Aha, D. DARPA’s Explainable Artificial Intelligence (XAI) Program. AI Mag. 2019, 40, 44–58.

16. Bhatt, U.; Xiang, A.; Sharma, S.; Weller, A.; Taly, A.; Jia, Y.; Ghosh, J.; Puri, R.; Moura, J.M.F.; Eckersley, P. Explainable Machine Learning in Deployment. arXiv 2020, arXiv:1909.06342.

17. Bussone, A.; Stumpf, S.; O’Sullivan, D. The Role of Explanations on Trust and Reliance in Clinical Decision Support Systems. In Proceedings of the 2015 International Conference on Healthcare Informatics, Dallas, TX, USA, 21–23 October 2015; pp. 160–169.

18. Cahour, B.; Forzy, J.F. Does Projection into Use Improve Trust and Exploration? An Example with a Cruise Control System. Saf. Sci. 2009, 47, 1260–1270.

19. Berkovsky, S.; Taib, R.; Conway, D. How to Recommend? User Trust Factors in Movie Recommender Systems. In Proceedings of the 22nd International Conference on Intelligent User Interfaces, Limassol, Cyprus, 13–16 March 2017; Association for Computing Machinery: New York, NY, USA, 2017; pp. 287–300.

20. Nourani, M.; Kabir, S.; Mohseni, S.; Ragan, E.D. The Effects of Meaningful and Meaningless Explanations on Trust and Perceived System Accuracy in Intelligent Systems. In Proceedings of the AAAI Conference on Human Computation and Crowdsourcing, Washington, DC, USA, 28–30 October 2019; Volume 7, pp. 97–105.

21. Yin, M.; Wortman Vaughan, J.; Wallach, H. Understanding the Effect of Accuracy on Trust in Machine Learning Models. In Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems, Glasgow, UK, 4–9 May 2019; Association for Computing Machinery: New York, NY, USA, 2019; pp. 1–12.

22. Kunkel, J.; Donkers, T.; Michael, L.; Barbu, C.M.; Ziegler, J. Let Me Explain: Impact of Personal and Impersonal Explanations on Trust in Recommender Systems. In Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems, Glasgow, UK, 4–9 May 2019; ACM: Glasgow, UK, 2019; pp. 1–12.

23. Holliday, D.; Wilson, S.; Stumpf, S. User Trust in Intelligent Systems: A Journey Over Time. In Proceedings of the 21st International Conference on Intelligent User Interfaces, Sonoma, CA, USA, 7–10 March 2016; Association for Computing Machinery: New York, NY, USA, 2016; pp. 164–168.

24. Yang, X.J.; Unhelkar, V.V.; Li, K.; Shah, J.A. Evaluating Effects of User Experience and System Transparency on Trust in Automation. In Proceedings of the 2017 ACM/IEEE International Conference on Human-Robot Interaction, Vienna, Austria, 6–9 March 2017; ACM: Vienna, Austria, 2017; pp. 408–416.

25. Zhang, Y.; Liao, Q.V.; Bellamy, R.K.E. Effect of Confidence and Explanation on Accuracy and Trust Calibration in AI-assisted Decision Making. In Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency, Barcelona, Spain, 27–30 January 2020; ACM: Barcelona, Spain, 2020; pp. 295–305.

26. Gleicher, M. A Framework for Considering Comprehensibility in Modeling. Big Data 2016, 4, 75–88.

27. Madsen, M.; Gregor, S. Measuring Human-Computer Trust. In Proceedings of the 11th Australasian Conference on Information Systems, Brisbane, Australia, 6–8 December 2000; pp. 6–8.

28. Rader, E.; Gray, R. Understanding User Beliefs About Algorithmic Curation in the Facebook News Feed. In Proceedings of the 33rd Annual ACM Conference on Human Factors in Computing Systems, Seoul, Korea, 18–23 April 2015; ACM: Seoul, Korea, 2015; pp. 173–182.

29. Lim, B.Y.; Dey, A.K. Assessing Demand for Intelligibility in Context-Aware Applications. In Proceedings of the 11th International Conference on Ubiquitous Computing, Orlando, FL, USA, 30 September–3 October 2009; Association for Computing Machinery: New York, NY, USA, 2009; pp. 195–204.

30. Nothdurft, F.; Richter, F.; Minker, W. Probabilistic Human-Computer Trust Handling. In Proceedings of the 15th Annual Meeting of the Special Interest Group on Discourse and Dialogue (SIGDIAL), Philadelphia, PA, USA, 18–20 June 2014; Association for Computational Linguistics: Philadelphia, PA, USA, 2014; pp. 51–59.

31. Ribeiro, M.T.; Singh, S.; Guestrin, C. “Why should I trust you?” Explaining the predictions of any classifier. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, San Francisco, CA, USA, 13–17 August 2016; pp. 1135–1144.

32. Ribeiro, M.T.; Singh, S.; Guestrin, C. Anchors: High Precision Model-Agnostic Explanations. In Proceedings of the AAAI Conference on Artificial Intelligence, New Orleans, LA, USA, 2–7 February 2018; Volume 32.

33. Bansal, G.; Nushi, B.; Kamar, E.; Weld, D.S.; Lasecki, W.S.; Horvitz, E. Updates in Human-AI Teams: Understanding and Addressing the Performance/Compatibility Tradeoff. In Proceedings of the AAAI Conference on Artificial Intelligence, Honolulu, HI, USA, 27 January–1 February 2019; Volume 33, pp. 2429–2437.

34. Bansal, G.; Nushi, B.; Kamar, E.; Lasecki, W.; Weld, D.S.; Horvitz, E. Beyond Accuracy: The Role of Mental Models in Human-AI Team Performance. In Proceedings of the Seventh AAAI Conference on Human Computation and Crowdsourcing, Washington, DC, USA, 28 October 2019; Volume 7, p. 10.

35. Nushi, B.; Kamar, E.; Horvitz, E. Towards Accountable AI: Hybrid Human-Machine Analyses for Characterizing System Failure. arXiv 2018, arXiv:1809.07424.

36. Shen, H.; Huang, T.H.K. How Useful Are the Machine-Generated Interpretations to General Users? A Human Evaluation on Guessing the Incorrectly Predicted Labels. arXiv 2020, arXiv:2008.11721.

37. Kulesza, T.; Stumpf, S.; Burnett, M.; Wong, W.K.; Riche, Y.; Moore, T.; Oberst, I.; Shinsel, A.; McIntosh, K. Explanatory Debugging: Supporting End-User Debugging of Machine-Learned Programs. In Proceedings of the 2010 IEEE Symposium on Visual Languages and Human-Centric Computing, Leganes, Spain, 21–25 September 2010; pp. 41–48.

38. Binns, R.; Van Kleek, M.; Veale, M.; Lyngs, U.; Zhao, J.; Shadbolt, N. ‘It’s Reducing a Human Being to a Percentage’; Perceptions of Justice in Algorithmic Decisions. In Proceedings of the 2018 CHI Conference on Human Factors in Computing Systems, Montreal, QC, Canada, 21–26 April 2018; pp. 1–14.

39. Kim, B.; Wattenberg, M.; Gilmer, J.; Cai, C.; Wexler, J.; Viegas, F.; Sayres, R. Interpretability Beyond Feature Attribution: Quantitative Testing with Concept Activation Vectors (TCAV). In Proceedings of the 35th International Conference on Machine Learning, Stockholm, Sweden, 10–15 July 2018; Volume 80, pp. 2668–2677.

40. Lakkaraju, H.; Bach, S.H.; Leskovec, J. Interpretable decision sets: A joint framework for description and prediction. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, San Francisco, CA, USA, 13–17 August 2016; pp. 1675–1684.

41. Gedikli, F.; Jannach, D.; Ge, M. How Should I Explain? A Comparison of Different Explanation Types for Recommender Systems. Int. J. Hum.-Comput. Stud. 2014, 72, 367–382.

42. Lim, B.Y.; Dey, A.K.; Avrahami, D. Why and Why Not Explanations Improve the Intelligibility of Context-Aware Intelligent Systems. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, Boston, MA, USA, 4–9 April 2009; Association for Computing Machinery: New York, NY, USA, 2009; pp. 2119–2128.

43. Kahng, M.; Andrews, P.Y.; Kalro, A.; Chau, D.H. ActiVis: Visual Exploration of Industry-Scale Deep Neural Network Models. arXiv 2017, arXiv:1704.01942.

44. Strobelt, H.; Gehrmann, S.; Pfister, H.; Rush, A.M. LSTMVis: A Tool for Visual Analysis of Hidden State Dynamics in Recurrent Neural Networks. arXiv 2017, arXiv:1606.07461.

45. Coppers, S.; Van den Bergh, J.; Luyten, K.; Coninx, K.; van der Lek-Ciudin, I.; Vanallemeersch, T.; Vandeghinste, V. Intellingo: An Intelligible Translation Environment. In Proceedings of the 2018 CHI Conference on Human Factors in Computing Systems, CHI ’18, Montreal, QC, Canada, 21–26 April 2018; Association for Computing Machinery: New York, NY, USA, 2018; pp. 1–13.

46. Poursabzi-Sangdeh, F.; Goldstein, D.G.; Hofman, J.M.; Wortman Vaughan, J.W.; Wallach, H. Manipulating and Measuring Model Interpretability. In Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems, Yokohama, Japan, 8–13 May 2021; Association for Computing Machinery: New York, NY, USA, 2021; pp. 1–52.

47. Bunt, A.; Lount, M.; Lauzon, C. Are Explanations Always Important?: A Study of Deployed, Low-Cost Intelligent Interactive Systems. In Proceedings of the 2012 ACM International Conference on Intelligent User Interfaces, Lisbon, Portugal, 14–17 February 2012; Association for Computing Machinery: New York, NY, USA, 2012; pp. 169–178.

48. Samuel, S.Z.S.; Kamakshi, V.; Lodhi, N.; Krishnan, N.C. Evaluation of Saliency-based Explainability Method. arXiv 2021, arXiv:2106.12773.

49. ElShawi, R.; Sherif, Y.; Al-Mallah, M.; Sakr, S. Interpretability in healthcare: A comparative study of local machine learning interpretability techniques. Comput. Intell. 2021, 37, 1633–1650.

50. Honegger, M. Shedding light on black box machine learning algorithms: Development of an axiomatic framework to assess the quality of methods that explain individual predictions. arXiv 2018, arXiv:1808.05054.

51. Nguyen, A.; Martínez, M. On Quantitative Aspects of Model Interpretability. arXiv 2020, arXiv:2007.07584.

52. Slack, D.; Friedler, S.A.; Scheidegger, C.; Roy, C.D. Assessing the local interpretability of machine learning models. arXiv 2019, arXiv:1902.03501.

53. Hara, S.; Hayashi, K. Making tree ensembles interpretable. arXiv 2016, arXiv:1606.05390.

54. Deng, H. Interpreting tree ensembles with inTrees. Int. J. Data Sci. Anal. 2019, 7, 277–287.

55. Lakkaraju, H.; Kamar, E.; Caruana, R.; Leskovec, J. Interpretable & explorable approximations of black box models. arXiv 2017, arXiv:1707.01154.

56. Bhatt, U.; Weller, A.; Moura, J.M. Evaluating and aggregating feature-based model explanations. arXiv 2020, arXiv:2005.00631.

57. Bau, D.; Zhou, B.; Khosla, A.; Oliva, A.; Torralba, A. Network dissection: Quantifying interpretability of deep visual representations. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 6541–6549.

58. Zhang, Q.; Wu, Y.N.; Zhu, S.C. Interpretable convolutional neural networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 8827–8836.

59. Zhang, Q.; Cao, R.; Shi, F.; Wu, Y.N.; Zhu, S.C. Interpreting CNN knowledge via an explanatory graph. In Proceedings of the AAAI Conference on Artificial Intelligence, New Orleans, LA, USA, 2–7 February 2018; Volume 32.

60. Laugel, T.; Lesot, M.J.; Marsala, C.; Renard, X.; Detyniecki, M. The dangers of post-hoc interpretability: Unjustified counterfactual explanations. arXiv 2019, arXiv:1907.09294.

61. Plumb, G.; Al-Shedivat, M.; Cabrera, A.A.; Perer, A.; Xing, E.; Talwalkar, A. Regularizing black-box models for improved interpretability. arXiv 2019, arXiv:1902.06787.

62. Alvarez Melis, D.; Jaakkola, T. Towards robust interpretability with self-explaining neural networks. Adv. Neural Inf. Process. Syst. 2018, 31, 1087–1098.

63. Alvarez-Melis, D.; Jaakkola, T.S. On the robustness of interpretability methods. arXiv 2018, arXiv:1806.08049.

64. Sundararajan, M.; Taly, A.; Yan, Q. Axiomatic attribution for deep networks. In Proceedings of the 34th International Conference on Machine Learning, Sydney, NSW, Australia, 6–11 August 2017; Volume 70, pp. 3319–3328.

65. Montavon, G.; Samek, W.; Müller, K.R. Methods for interpreting and understanding deep neural networks. Digit. Signal Process. 2018, 73, 1–15.

66. Kindermans, P.J.; Hooker, S.; Adebayo, J.; Alber, M.; Schütt, K.T.; Dähne, S.; Erhan, D.; Kim, B. The (un)reliability of saliency methods. In Explainable AI: Interpreting, Explaining and Visualizing Deep Learning; Springer: Berlin/Heidelberg, Germany, 2019; pp. 267–280.

67. Ylikoski, P.; Kuorikoski, J. Dissecting explanatory power. Philos. Stud. 2010, 148, 201–219.

68. Yeh, C.K.; Hsieh, C.Y.; Suggala, A.; Inouye, D.I.; Ravikumar, P.K. On the (in)fidelity and sensitivity of explanations. Adv. Neural Inf. Process. Syst. 2019, 32, 10967–10978.

69. Deng, H.; Zou, N.; Du, M.; Chen, W.; Feng, G.; Hu, X. A Unified Taylor Framework for Revisiting Attribution Methods. In Proceedings of the AAAI Conference on Artificial Intelligence, Vancouver, DC, USA, 2–9 February 2021; Volume 35, pp. 11462–11469.

70. Kohlbrenner, M.; Bauer, A.; Nakajima, S.; Binder, A.; Samek, W.; Lapuschkin, S. Towards best practice in explaining neural network decisions with LRP. In Proceedings of the 2020 International Joint Conference on Neural Networks (IJCNN), Glasgow, UK, 19–24 July 2020; pp. 1–7.

71. Hooker, S.; Erhan, D.; Kindermans, P.J.; Kim, B. A benchmark for interpretability methods in deep neural networks. arXiv 2018, arXiv:1806.10758.

72. Samek, W.; Binder, A.; Montavon, G.; Lapuschkin, S.; Müller, K.R. Evaluating the visualization of what a deep neural network has learned. IEEE Trans. Neural Netw. Learn. Syst. 2016, 28, 2660–2673.

73. Adebayo, J.; Gilmer, J.; Muelly, M.C.; Goodfellow, I.; Hardt, M.; Kim, B. Sanity Checks for Saliency Maps. Adv. Neural Inf. Process. Syst. 2018, 31, 9505–9515.

74. Ignatiev, A. Towards Trustable Explainable AI. In Proceedings of the 29th International Joint Conference on Artificial Intelligence, Yokohama, Japan, 7–15 January 2021; pp. 5154–5158.

75. Langer, E.; Blank, A.; Chanowitz, B. The Mindlessness of Ostensibly Thoughtful Action: The Role of “Placebic” Information in Interpersonal Interaction. J. Personal. Soc. Psychol. 1978, 36, 635–642.

76. Buçinca, Z.; Lin, P.; Gajos, K.Z.; Glassman, E.L. Proxy Tasks and Subjective Measures Can Be Misleading in Evaluating Explainable AI Systems. In Proceedings of the 25th International Conference on Intelligent User Interfaces, Cagliari, Italy, 17–20 March 2020; pp. 454–464.

77. Kaklauskas, A.; Jokubauskas, D.; Cerkauskas, J.; Dzemyda, G.; Ubarte, I.; Skirmantas, D.; Podviezko, A.; Simkute, I. Affective analytics of demonstration sites. Eng. Appl. Artif. Intell. 2019, 81, 346–372.

| Category | Sub-Category |
|---|---|
| Trust: a variable factor shaped by user interaction across time and usage, which affects how comfortable the user is when using the XAI system. User perception influences their beliefs about the XAI system outputs. | User’s Perceived System Competence: describes the user’s view of how capable an ML system is at solving a particular task. |
| | User’s Compliance with the System: focused on understanding whether the user would rely on the system’s decision to act upon a task. |
| | Evolution of User Trust: represents how a user’s Trust can vary across time and usage of a particular ML system. |
| Explanation Usefulness and Satisfaction: two inherently connected aspects relevant to assessing user experience. The same explanation can imply different levels of Usefulness, depending on the information revealed, and can also lead to a different level of user Satisfaction. | |
| Understandability: the ability to outline the relation between the input and output of a particular system with respect to its parameters. It is usually defined as a user’s mental model of the system and its underlying functions. | User’s Perceived Understandability: describes the user’s understanding of the system’s underlying functions. |
| | User Prediction of Model Output: focused on understanding whether the user is able to define model behaviour on a particular instance or kind of data. |
| | User Prediction of Model Failure: focused on understanding whether the user is able to correctly identify the scenarios where the system fails a particular task. |
| Performance: the performance of ML systems usually depends not only on the models but also on their respective users. Evaluating the performance of both agents and their interaction is essential to assess the expected performance in real scenarios. | |

| Category | Sub-Category |
|---|---|
| Interpretability: implies that the explanation should be understandable to humans, which is important for managing the social interaction of explainability. | Clarity: implies that the explanation should be unambiguous. |
| | Broadness: describes how generally applicable an explanation is. |
| | Simplicity: implies that the explanation is presented in a simple and compact form. |
| Fidelity: implies that the explanations should accurately describe model behaviour in the entire feature space, which is important for verifying other model desiderata or discovering new insights of explainability. | Completeness: implies that the explanation describes the entire dynamic of the ML model. |
| | Soundness: describes how correct and truthful an explanation is. |

| Reference | Category | Sub-Category | Research Question | Methods and/or Metrics | XAI Type |
|---|---|---|---|---|---|
| Coppers et al., 2018 [45] | Explanation Usefulness and Satisfaction | - | What is the impact of intelligibility on the Perceived Value, Trust, Usage, Performance, and User Satisfaction for different translation aids? | SUS questionnaire and 5-point Likert scale to evaluate Perceived Usefulness, general Usability and the perception of the following features: understandable, useful, enjoyable, trustworthy, improves quality and efficiency. | Attribution-based |
| Bunt et al., 2012 [47] | Explanation Usefulness and Satisfaction | - | To what extent does the participant want to know more about how the system generates its intelligent behaviour? | Qualitative interviews, 2-week diary study and 7-point Likert scale to evaluate perceived utility, perceived accuracy and matching expectations. | Example-based |
| Lim et al., 2009 [42] | Explanation Usefulness and Satisfaction | - | How to evaluate if different types of explanations improve the users’ perception of the system’s Usefulness and Satisfaction? | Survey that asked users to explain how the system works and to report their perceptions of the explanations and system in terms of Usefulness, Satisfaction, Understandability, and Trust. | Model-based |
| Ribeiro et al., 2016 [31] | Performance | - | Can non-experts improve a classifier through explanations? | Iterative model Performance assessment through explanations based on feature importance. | Attribution-based |
| Ribeiro et al., 2018 [32] | Performance | - | How do explanations influence user Performance when trying to predict model behaviour on unseen instances? | Coverage: fraction of instances predicted after seeing the explanation; Precision: fraction of correct predictions; Time: seconds the user took to complete the task per prediction. | Attribution-based |
| Bansal et al., 2019 [34] | Performance | - | How can updates to an AI system affect human-AI team Performance? | ROC: team Performance metric; Compatibility score: fraction of the examples on which the older model version recommends the correct action for which the new model version also recommends the correct action; Locally-compatible update: whether the action given by the new model version affects the user’s mental model created while using an older model version; Globally-compatible update: update is compatible for all mental models (users). | Model-based |
| Lim et al., 2009 [42] | Performance | - | How to evaluate if different types of explanations lead to better task Performance? | Task Performance: evaluated total learning time and average completion time. | Model-based |
| Holliday et al., 2016 [23] | Trust | Evolution of User Trust | How does user trust evolve over time? | 7-point Likert scale to indicate the extent of agreement with a statement of trust in the system before and after performing a task assisted by the system; Think-aloud protocol: through which the authors identified four factors of trust: perceived system ability, perceived control, perceived predictability, and perceived transparency. | Attribution-based |
| Yang et al., 2017 [24] | Trust | Evolution of User Trust | How does user trust evolve and stabilize over time as humans gain more experience interacting with automation? | TrustEND: trust rating elicited after the terminal trial T; TrustAUTC: Area Under the Trust Curve; and Truste: Trust of entirety; Response Rates (RR) and Response Times (RT) of system Reliance (trusting the automation in the absence of threat alarms) and system compliance (trusting the automation in the presence of one or more threats). | Model-based |
| Eiband et al., 2019 [7] | Trust | User’s Compliance with the System | Do placebic explanations invoke similar levels of Trust as real explanations? | 5-point Likert scale questionnaire to evaluate user’s perception of Trust. | Attribution-based |
| Nourani et al., 2019 [20] | Trust | User’s Perceived System Competence | How do local explanations influence user perception of the model’s accuracy? | Implicit perceived accuracy: percentage of responses where participants predicted correct system classifications; Explicit perceived accuracy: users’ numerical estimate of the system’s accuracy on a scale of 0 to 100%. | Attribution-based |
| Kunkel et al., 2019 [22] | Trust | User’s Perceived System Competence | How to evaluate the impact of explanations on user Trust in recommender systems? | 5-point rating scale to evaluate explanation quality. | Example-based |
| Yin et al., 2019 [21] | Trust | User’s Perceived System Competence | How does a model’s stated accuracy affect Trust? | Agreement fraction: percentage of tasks in which users’ final prediction matched the model’s predictions; Switch fraction: percentage of tasks in which the users revised their predictions to match the model’s predictions. | Model-based |
| Zhang et al., 2020 [25] | Trust | User’s Perceived System Competence | How are Trust, Accuracy and Confidence score on Trust calibration affected by: (1) showing the AI’s prediction versus not showing it, and (2) knowing that one has more domain knowledge than the AI? | Switch percentage: how often participants chose the AI’s predictions as their final predictions; Agreement percentage: trials in which the participant’s final prediction agreed with the AI’s prediction. | Model-based |
| Samuel et al., 2021 [48] | Trust, Understandability | User Prediction of Model Output | What is the impact of showing AI Performance and predictions’ explanations on people’s Trust in the AI and the decision outcome? | Human subject experiments with 5-point Likert scale surveys to evaluate predictability, reliability and consistency. | Attribution-based |
| Kim et al., 2018 [39] | Trust, Understandability | User Prediction of Model Output | How to quantitatively evaluate what information saliency maps are able to communicate to humans? | 10-point Likert scale to evaluate how important they thought the image and the caption were to the model. 5-point Likert scale for evaluating how confident they were in their answers. Evaluated accessibility, customization, plug-in readiness and global quantification. | Model-based |
| Shen et al., 2020 [36] | Understandability | User Prediction of Model Failure | How useful is showing machine-generated visual interpretations in helping users understand automated system errors? | Accuracy of human inferences on model misclassification. | Attribution-based |
| Nushi et al., 2018 [35] | Understandability | User Prediction of Model Failure | How can detailed Performance views be beneficial for analysis and debugging? | Human Satisfaction: indicates whether the user agrees with the image/caption pair presented; System Performance/Prediction Accuracy: accuracy of the system prediction compared to ground truth (defined by human Satisfaction). | Model-based |
| Ribeiro et al., 2016 [31] | Understandability | User Prediction of Model Output | Do explanations lead to insights? | A counter of how many models each human subject trusts, and open-ended questions to indicate their reasoning behind their decision. | Attribution-based |
| Ribeiro et al., 2018 [32] | Understandability | User Prediction of Model Output | How do explanations influence user Understandability when trying to predict model behaviour on unseen instances? | Coverage: fraction of instances predicted after seeing the explanation; Precision: fraction of correct predictions; Time: seconds the user took to complete the task per prediction. | Attribution-based |
| Nourani et al., 2019 [20] | Understandability | User’s Perceived Understandability | How does the human-perceived meaningfulness of explanations affect users’ perception of model accuracy? | Post-study questionnaire and think-aloud approach to evaluate implicit perceived accuracy and explicit perceived accuracy. | Attribution-based |
| Nothdurft et al., 2014 [30] | Understandability | User’s Perceived Understandability | How do different explanation goals affect human-computer Trust? | Evaluate Perceived Understandability, Perceived Technical Competence, Perceived Reliability, Personal Attachment and Faith. | Attribution-based |
| Lim et al., 2009 [42] | Understandability | User’s Perceived Understandability | How to evaluate if different types of explanations help users better understand the system? | User understanding: evaluated correctness and detail of reasons provided by participants in a Fill-in-the-Blanks test and a Reasoning test. | Model-based |
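
Several of the behavioural metrics in the table above reduce to simple ratios over logged predictions. The following is a minimal sketch of the agreement fraction, the switch fraction, and the compatibility score. The function names and toy data are hypothetical, and the choice of denominator for the switch fraction (only tasks where the user initially disagreed with the model) is an assumption of this sketch rather than something stated in the table.

```python
# Illustrative sketch only: function names and toy data are hypothetical.

def _ratio(hits, total):
    """Return hits / total, refusing to divide by an empty denominator."""
    if total == 0:
        raise ValueError("metric is undefined for an empty set of tasks")
    return hits / total


def agreement_fraction(final_preds, model_preds):
    """Share of tasks where the user's final prediction matches the model's."""
    hits = sum(f == m for f, m in zip(final_preds, model_preds))
    return _ratio(hits, len(model_preds))


def switch_fraction(initial_preds, final_preds, model_preds):
    """Share of initial disagreements where the user switched to the model.

    Assumption: only tasks where the initial prediction differed from the
    model's prediction are counted in the denominator.
    """
    disagreements = [
        (f, m)
        for i, f, m in zip(initial_preds, final_preds, model_preds)
        if i != m
    ]
    hits = sum(f == m for f, m in disagreements)
    return _ratio(hits, len(disagreements))


def compatibility_score(old_preds, new_preds, labels):
    """Share of examples the old model got right that the new one also gets right."""
    old_correct = [(n, y) for o, n, y in zip(old_preds, new_preds, labels) if o == y]
    hits = sum(n == y for n, y in old_correct)
    return _ratio(hits, len(old_correct))


# Toy logs for six binary tasks.
initial = [1, 0, 1, 1, 0, 0]   # user's prediction before seeing the model
model = [1, 1, 0, 1, 1, 0]     # model's prediction shown to the user
final = [1, 1, 1, 1, 0, 0]     # user's prediction after seeing the model

agreement = agreement_fraction(final, model)   # 4 of 6 tasks
switch = switch_fraction(initial, final, model)  # 1 of 3 disagreements

# Toy predictions of two model versions against ground-truth labels.
labels = [1, 1, 0, 1, 0, 0]
old_model = [1, 1, 1, 1, 0, 1]
new_model = [1, 0, 0, 1, 0, 0]

compatibility = compatibility_score(old_model, new_model, labels)  # 3 of 4

print(agreement, switch, compatibility)
```

In this toy example the updated model is more accurate overall, yet its compatibility score is below 1 because it errs on one example that the older version handled correctly.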

| Reference | Category | Sub-Category | Research Question | Methods and/or Metrics | XAI Type |

|---|---|---|---|---|---|

| Lakkaraju et al., 2016 [40] | Fidelity | Completeness | How to evaluate Completeness of rule-based models? | Fraction of classes: Measures what fraction of the class labels in the data are predicted by at least one rule (optimal value is 1 - every class is described by some rule). | Model-based |

| Ignatiev, 2020 [74] | Fidelity | Completeness | How to evaluate if explanations hold in the entire instance space? | Correctness: percentage of correct, incorrect and redundant explanations. | Model-based |

| Nguyen et al., 2007 [51] | Fidelity | Soundness | How to measure the strength and direction of association between attributes and explanations? | Monotonicity: Spearman’s correlation between feature’s absolute Performance measure of interest and corresponding expectations. | Attribution-based |

| Nguyen et al., 2007 [51] | Fidelity | Soundness | How data processing changed the information content of the original samples (target-level analysis)? | Target Mutual Information: Measured between extracted features and corresponding target values (e.g., class labels). | Attribution-based |

| Nguyen et al., 2007 [51]; Ylikoski et al., 2010 [67] | Fidelity | Soundness | How robust is an explanation to unimportant details? | Non-sensitivity: Cardinality of the symmetric difference between features with assigned zero attribution and features on which the model is not functionally dependent. | Attribution-based |

| Bhatt et al., 2020 [56] | Fidelity | Soundness | Does the explanation capture which features the predictor used to generate an output? | Faithfulness: Correlation between the differences in function outputs and the sum of random attribution subsets replaced with baseline values. | Attribution-based |

| Bhatt et al., 2020 [56] | Fidelity | Soundness | How sensitive are explanation functions to perturbations in the model inputs? | Sensitivity: If inputs are near each other and their model outputs are similar, then their explanations should be close to each other. | Attribution-based |

| Alvarez-Melis et al., 2018 [62] | Fidelity | Soundness | Are feature relevance scores indicative of “true” importance? | Faithfulness: Correlation between the model’s Performance drops when removing certain features and the attributions. | Attribution-based |

| Alvarez-Melis et al., 2018 [63]; Plumb et al., 2019 [61] | Fidelity | Soundness | How consistent are the explanations for similar/neighboring examples? | Robustness: Local Lipschitz estimate. | Attribution-based |

| Sundararajan et al., 2017 [64] | Fidelity | Soundness | How sensitive are explanation functions to small perturbations in the model inputs? | Sensitivity: Measures the degree to which the explanation is affected by insignificant perturbations from the test point. | Attribution-based |

| Sundararajan et al., 2017 [64] | Fidelity | Soundness | Are attributions identical for functionally equivalent networks with different implementations? | Implementation invariance: Measures the similarity between explanations provided by two functionally equivalent networks. | Attribution-based |

| Montavon et al., 2018 [65] | Fidelity | Soundness | How fast does the prediction value go down when removing features with the highest relevance scores? | Selectivity: Measures the ability of an explanation to give relevance to variables that have the strongest impact on the prediction value. | Attribution-based |

| Kindermans et al., 2019 [66] | Fidelity | Soundness | Is the explanation method input invariant, i.e., does it mirror the behaviour of the predictive model with respect to transformations of the input? | Input invariance: Method that demonstrates that there is at least one input transformation that causes a target explanation method to attribute incorrectly. | Attribution-based |

| Yeh et al., 2019 [68] | Fidelity | Soundness | How sensitive are explanation functions to small perturbations in the model inputs? | Max-sensitivity: Calculated based on the maximum change in the explanation when adding small perturbations to the input. | Attribution-based |

| Yeh et al., 2019 [68] | Fidelity | Soundness | Does the explanation method capture how the predictor function changes in the face of perturbations? | Explanation infidelity: Expected difference between the dot product of the input perturbation to the explanation and the output perturbation (i.e., the difference in function values after significant perturbations on the input). | Attribution-based |

| Kohlbrenner et al., 2020 [70] | Fidelity | Soundness | Does the explanation method reflect the object understanding of the model closely, i.e., both predictions and explanations are only based on the object itself? | Attribution localization: Ratio between the sum of positive relevance inside a bounding box and the total positive sum of relevance in the image. | Attribution-based |

| Hooker et al., 2018 [71] | Fidelity | Soundness | How to prevent distribution shift from influencing the estimated feature importance? | Remove and retrain (ROAR): Remove the data points estimated to be most important and retrain the model to measure the degradation of model Performance. | Attribution-based |

| Samek et al., 2016 [72] | Fidelity | Soundness | How do predictions change when the most relevant data points are progressively removed? | Region perturbation via MoRF (Most Relevant First): Measure how the class encoded in the image disappears when the information is progressively removed from the image using an ordered sequence of locations by relevance. | Attribution-based |

| Adebayo et al., 2018 [73] | Fidelity | Soundness | How to assess similarity between two visual explanations? | Spearman rank correlation with absolute value (absolute value), and without absolute value (diverging); SSIM: The structural similarity index; Pearson correlation: Correlation of the histogram of gradients (HOGs) derived from two maps. | Attribution-based |

| Kim et al., 2018 [39] | Fidelity | Soundness | How to quantify the concept importance of a particular class? | TCAV score (TCAVq): measures the positive and negative influence of a defined concept on a particular activation layer of a model. | Attribution-based |

| Laugel et al., 2019 [60] | Fidelity | Soundness | How to evaluate the behaviour of a post-hoc Interpretability method in the presence of counterfactual explanation? | Justification score: Binary score that equals 1 if the counterfactual explanation is justified, 0 if unjustified; Average justification score: Average value of the justification score computed over multiple instances and multiple runs. | Example-based |

| Ribeiro et al., 2016 [31] | Fidelity | Soundness | How to evaluate if explanations retrieve the most important features for the model? | Recall of important features: Measures the amount of a gold set of features considered important by the model that are recovered by the explanations. | Model-based |

| Ribeiro et al., 2016 [31] | Fidelity | Soundness | How can explanations be used to select between competing models with similar Performance? | Trustworthiness: Prediction analysis after adding noisy and untrustworthy features. | Model-based |

| Lakkaraju et al., 2017 [55] | Fidelity | Soundness | How to evaluate if transparent approximations used as explanations capture the black-box model behaviour in all parts of the feature space? | Disagreement: Percentage of predictions in which the label assigned by the model explanation does not match the label assigned by the black box model. | Model-based |

| Plumb et al., 2019 [61] | Fidelity | Soundness | How does each feature influence the model’s prediction in a certain neighborhood? | Neighborhood-fidelity (NF): Accuracy of the model in a certain neighborhood. | Model-based |

| Nguyen et al., 2007 [51] | Fidelity | Completeness | How to measure the representativeness of Example-based explanations? | Non-representativeness: Performance measure of interest (e.g., cross-entropy) between the predictions of interest and model outputs, divided by the number of examples. | Example-based |

| Nguyen et al., 2007 [51] | Interpretability | Broadness and Simplicity | How has data processing changed the information content of the original samples (feature-level analysis)? | Feature mutual information: Measured between original samples and corresponding features extracted for explanations. | Attribution-based |

| Nguyen et al., 2007 [51] | Interpretability | Broadness and Simplicity | How to assess the effects of non-important features? | Effective complexity: Minimum number of attribution-ordered features that can meet an expected Performance measure of interest. | Attribution-based |

| Montavon et al., 2018 [65] | Interpretability | Clarity | If the model’s responses to certain data points are nearly equivalent, are the respective explanations also nearly equivalent? | Continuity: Measures the strongest variation of the explanation in the input domain. | Attribution-based |

| Bau et al., 2017 [57] | Interpretability | Clarity | How to evaluate explanations’ Clarity using human-labeled visual concepts? | Network Dissection: Measure the intersection between the internal convolutional units and pixel-level semantic concepts previously annotated in an image. | Attribution-based |

| Zhang et al., 2018 [58] | Interpretability | Clarity | How to evaluate explanations’ Clarity through the semantic meaningfulness of CNN filters? | Location instability: Measured by the distance between known image landmarks and inference locations (areas with highest activation scores). | Attribution-based |

| Lakkaraju et al., 2017 [55] | Interpretability | Clarity | How to measure the unambiguity of transparent approximations used to explain rule-based models? | Rule overlap: For every pair of rules, sum up all the instances which satisfy both rules’ conditions simultaneously (optimal value is zero); Cover: The number of instances which satisfy the condition of the target rule (optimal value is the size of the entire dataset). | Model-based |

| Lakkaraju et al., 2016 [40] | Interpretability | Clarity | How to evaluate Clarity of rule-based models? | Fraction uncovered: Computes the fraction of data points which are not covered by any rule. | Model-based |

| Bhatt et al., 2020 [56] | Interpretability | Simplicity | How complex is the explanation? | Complexity: Entropy of the fractional contribution of every feature to the total magnitude of the attribution. | Attribution-based |

| Nguyen et al., 2007 [51] | Interpretability | Simplicity | How to measure the diversity of Example-based explanations? | Diversity: Distance function in the input space between different examples, divided by the number of examples. | Example-based |

| Ribeiro et al., 2016 [31] | Interpretability | Simplicity | How to measure the complexity of explanations? | Complexity: Decision tree depth, number of non-zero weights in linear models. | Model-based |

| Hara et al., 2016 [53] | Interpretability | Simplicity | How to measure the Interpretability of tree ensembles? | Tree ensembles complexity: Measured by the number of regions in which the input space is divided. | Model-based |

| Deng, 2019 [54] | Interpretability | Simplicity | How to measure the quality of rules extracted from tree ensembles? | Rule complexity: Measured by the length of the rule condition, defined as the number of variable-value pairs in the condition; Rule frequency: Proportion of data instances satisfying the rule condition; Rule error: Number of incorrectly classified instances determined by the rule divided by the number of instances satisfying the rule condition. | Model-based |

| Lakkaraju et al., 2017 [55] | Interpretability | Simplicity | How to measure the complexity and intuitive representation of transparent approximations used to explain rule-based models? | Size: The number of model rules; Max width: The maximum width computed over all the elements; No. of predicates: Number of predicates (appearing in both the decision logic rules and neighborhood descriptors); No. of descriptors: The number of unique neighborhood descriptors; Feature overlap: For every pair of a unique neighborhood descriptor and decision logic rule, number of features that occur in both. | Model-based |

| Lakkaraju et al., 2016 [40] | Interpretability | Simplicity | How to evaluate Simplicity of rule-based models? | Average rule length: The average number of predicates a human reader must parse to understand a rule. | Model-based |

| Slack et al., 2019 [52] | Interpretability | Simplicity | How to evaluate simulatability, i.e., user’s ability to run an explainable model on a given input? | Runtime operation counts: Measure the number of Boolean and arithmetic operations needed to run the explainable model for a given input. | Model-based |
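
Several of the computer-centred metrics in the table above reduce to short computations. The following is a minimal Python sketch of four of them (Complexity [56], Disagreement [55], Attribution localization [70] and Fraction uncovered [40]), written directly from the one-line descriptions in the table rather than from the original implementations. All function names, argument layouts, and the handling of degenerate cases (e.g., all-zero attributions) are assumptions made for illustration.

```python
import math
from typing import Callable, Sequence


def attribution_complexity(attributions: Sequence[float]) -> float:
    """Complexity: entropy of each feature's fractional share of the
    total attribution magnitude (natural logarithm)."""
    total = sum(abs(a) for a in attributions)
    if total == 0:
        return 0.0  # assumption: all-zero attributions count as zero entropy
    shares = [abs(a) / total for a in attributions]
    return sum(p * math.log(1.0 / p) for p in shares if p > 0)


def disagreement(explanation_labels: Sequence, black_box_labels: Sequence) -> float:
    """Disagreement: percentage of predictions where the explanation model's
    label differs from the black-box model's label."""
    if not explanation_labels or len(explanation_labels) != len(black_box_labels):
        raise ValueError("label sequences must be non-empty and of equal length")
    mismatches = sum(e != b for e, b in zip(explanation_labels, black_box_labels))
    return 100.0 * mismatches / len(explanation_labels)


def attribution_localization(relevance: Sequence[Sequence[float]],
                             box: tuple) -> float:
    """Attribution localization: positive relevance inside a bounding box
    divided by the total positive relevance in the image.
    `box` is (row_start, row_end, col_start, col_end), end-exclusive
    (a hypothetical layout chosen for this sketch)."""
    r0, r1, c0, c1 = box
    total = sum(v for row in relevance for v in row if v > 0)
    if total == 0:
        return 0.0  # assumption: no positive relevance means nothing is localized
    inside = sum(v for row in relevance[r0:r1] for v in row[c0:c1] if v > 0)
    return inside / total


def fraction_uncovered(instances: Sequence,
                       rules: Sequence[Callable[[object], bool]]) -> float:
    """Fraction uncovered: share of data points not covered by any rule.
    Each rule is modelled as a predicate returning True when it applies."""
    if not instances:
        raise ValueError("instances must be non-empty")
    uncovered = sum(1 for x in instances if not any(rule(x) for rule in rules))
    return uncovered / len(instances)


if __name__ == "__main__":
    # Toy inputs, invented purely to exercise the functions.
    print(attribution_complexity([0.7, 0.2, 0.1]))
    print(disagreement(["a", "b", "a", "b"], ["a", "b", "b", "b"]))
    print(attribution_localization([[1.0, 2.0], [-1.0, 1.0]], (0, 1, 0, 2)))
    print(fraction_uncovered([1, 5, 10, 20], [lambda x: x < 3, lambda x: x > 15]))
```

Lower Complexity, Disagreement and Fraction uncovered values, and higher Attribution localization values, correspond to the more desirable explanations under the descriptions given in the table.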

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Lopes, P.; Silva, E.; Braga, C.; Oliveira, T.; Rosado, L. XAI Systems Evaluation: A Review of Human and Computer-Centred Methods. Appl. Sci. 2022, 12, 9423. https://doi.org/10.3390/app12199423
