How to Correctly Detect Face-Masks for COVID-19 from Visual Information?

Abstract

1. Introduction

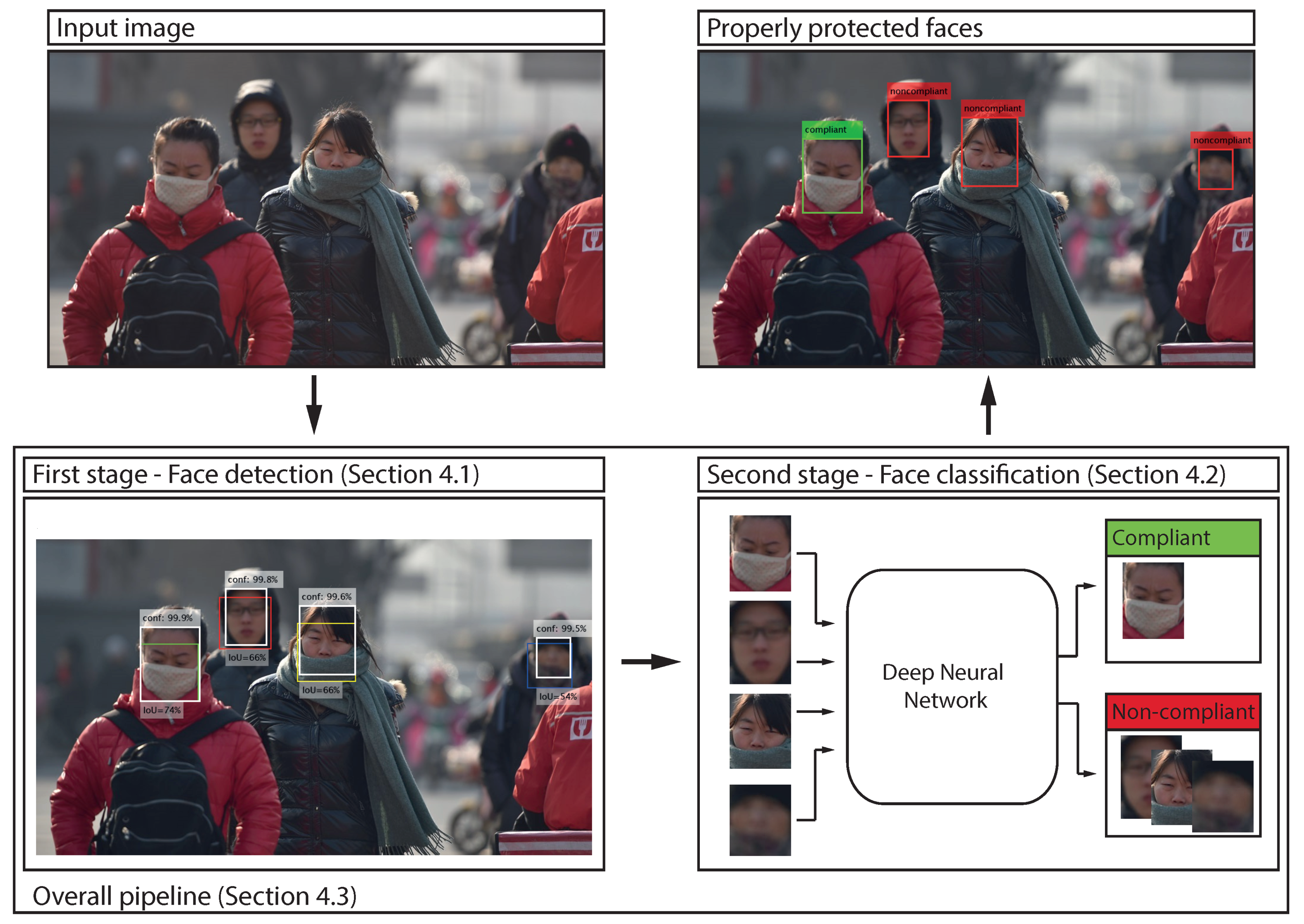

- We compile a dataset of facial images for the problem of predicting correct face-mask placement in the context of the COVID-19 pandemic and annotate it with respect to placement correctness, gender, ethnicity and pose. We make the list of images and labels publicly available as a dataset of annotations (annotation dataset hereafter) called the Face-Mask Label Dataset (FMLD). FMLD can be downloaded from: https://github.com/borutb-fri/FMLD (accessed on 25 February 2021).

- We investigate the performance of various face-detection models with masked as well as mask-free face images and study the impact of face-masks on existing face detectors. Although the impact of face-masks has recently been studied for the problem of face recognition, e.g., in [23,24], this problem has not been investigated widely for face detectors.

- Using off-the-shelf deep-learning models, we implement and train a highly accurate and effective pipeline for detecting properly worn face-masks and show that existing solutions advocated in the literature for this task are in fact designed for a different problem and are of only limited use for the task studied in this work.

2. Related Work

2.1. Face-Mask Datasets

2.2. Mask-Detection Methods

3. The Face-Mask Label Dataset (FMLD)

3.1. Dataset Construction

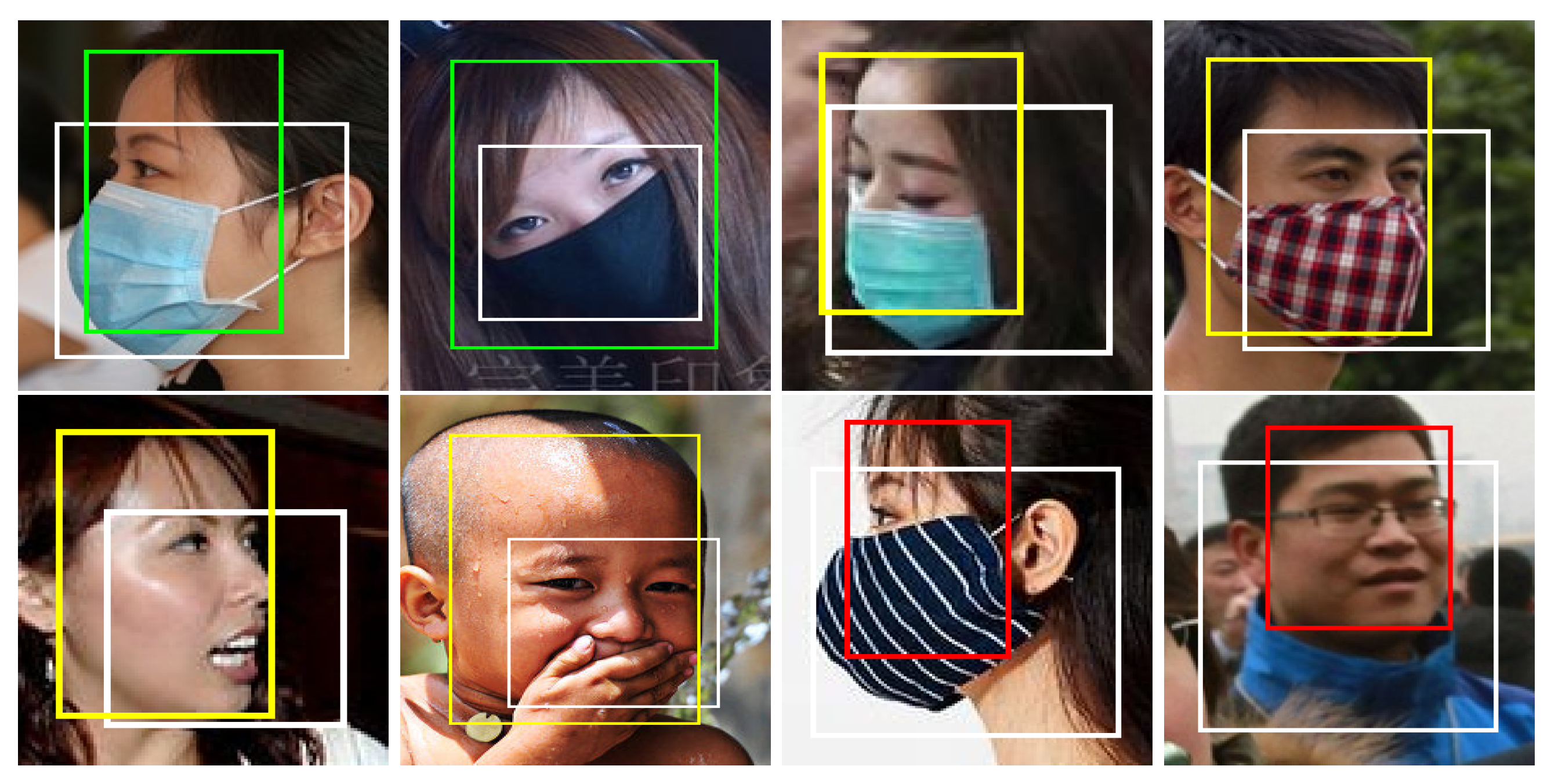

- Selection of images with masked faces: Masked faces are taken from the MAFA dataset only. MAFA has a total of 30,811 images that contain 35,806 occluded faces and 991 unoccluded faces. Faces in the dataset come in various poses, and at least one part of each face is typically occluded, mostly by some sort of mask. A few example images from MAFA are shown in Table 2, including faces with different types and degrees of occlusion, both of which are labeled in the dataset. As can be seen, some faces are occluded by hands or other objects rather than physical masks and are, hence, not useful for studying face-mask detection problems. For FMLD, we first identify candidate images for the group with correctly placed masks. For this group, we consider faces that have an occlusion of type 1 or 2 and an occlusion degree of 3 (illustrated by the first two images in the last row of Table 2, marked with a green border). Such faces typically have masks that cover the mouth, nose and chin. For the group of incorrectly worn masks, we consider faces with occlusions of type 1 and an occlusion degree of 1 or 2. The rest of the MAFA faces are added to the group of faces without masks. This prefiltering generates a fairly clean list of candidate images for FMLD, but still results in several incorrectly classified faces. We, therefore, inspect all images manually and correct wrong annotations. The main criterion for annotating faces as compliant was that the nose, mouth, and chin were covered, even if the occluding object was not necessarily a mask, but something akin to a scarf or buff. The complete statistics of the MAFA part of FMLD are given in Table 3.

- Selection of face images without masks: Because MAFA contains mostly faces with masks, additional images of faces without masks are needed to balance the classes. Thus, we include images from the Wider Face detection benchmark [22] in FMLD. Specifically, 11,123 images are selected that contain 26,278 faces with a high degree of variability in scale, pose and occlusion. No bounding-box ground truth is available for the test images, so we only consider images from the training and validation sets of Wider Face. We select images with faces that are at least 40 pixels in size and exclude faces that are marked as invalid, are heavily blurred, have heavy occlusions or have atypical poses. We also omit the entire “30--Surgeons” group because most of its faces are masked. Through this prefiltering we generate an initial candidate list of faces without masks, which are then manually inspected for the presence of facial masks. This procedure results in the overall statistics presented in Table 3 for the Wider Face part of FMLD.
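
The prefiltering rules described in the two items above can be sketched in a few lines of Python. This is a minimal illustration only, not the authors' tooling: the label strings, the `WiderFace` record, its field names and the attribute encodings (2 = heavy blur or heavy occlusion, 1 = atypical pose, 1 = invalid) are assumptions, as is the interpretation of "at least 40 pixels in size" as applying to the shorter side of the bounding box. The output is only a candidate list, which still requires the manual inspection described in the text.

```python
from dataclasses import dataclass

# Hypothetical label names for the three candidate groups.
CORRECT, INCORRECT, NO_MASK = "correct", "incorrect", "no_mask"


def mafa_candidate_group(occ_type: int, occ_degree: int) -> str:
    """Map MAFA occlusion type/degree labels to a candidate group."""
    if occ_type in (1, 2) and occ_degree == 3:
        return CORRECT        # mouth, nose and chin are typically covered
    if occ_type == 1 and occ_degree in (1, 2):
        return INCORRECT      # simple mask with only partial coverage
    return NO_MASK            # all remaining MAFA faces


@dataclass
class WiderFace:
    """One Wider Face box; field names and encodings are assumptions."""
    event: str                # event category, e.g. "30--Surgeons"
    width: int
    height: int
    invalid: int = 0          # 1 = marked as invalid
    blur: int = 0             # 2 = heavily blurred
    occlusion: int = 0        # 2 = heavy occlusion
    pose: int = 0             # 1 = atypical pose


EXCLUDED_EVENTS = {"30--Surgeons"}   # assumed spelling of the category name


def keep_wider_face(face: WiderFace, min_size: int = 40) -> bool:
    """Return True if the face stays on the mask-free candidate list."""
    if face.event in EXCLUDED_EVENTS:
        return False
    if min(face.width, face.height) < min_size:
        return False
    return not (face.invalid == 1 or face.blur == 2
                or face.occlusion == 2 or face.pose == 1)


print(mafa_candidate_group(2, 3))                      # correct
print(keep_wider_face(WiderFace("0--Parade", 64, 80)))  # True
```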

3.2. Available Annotations

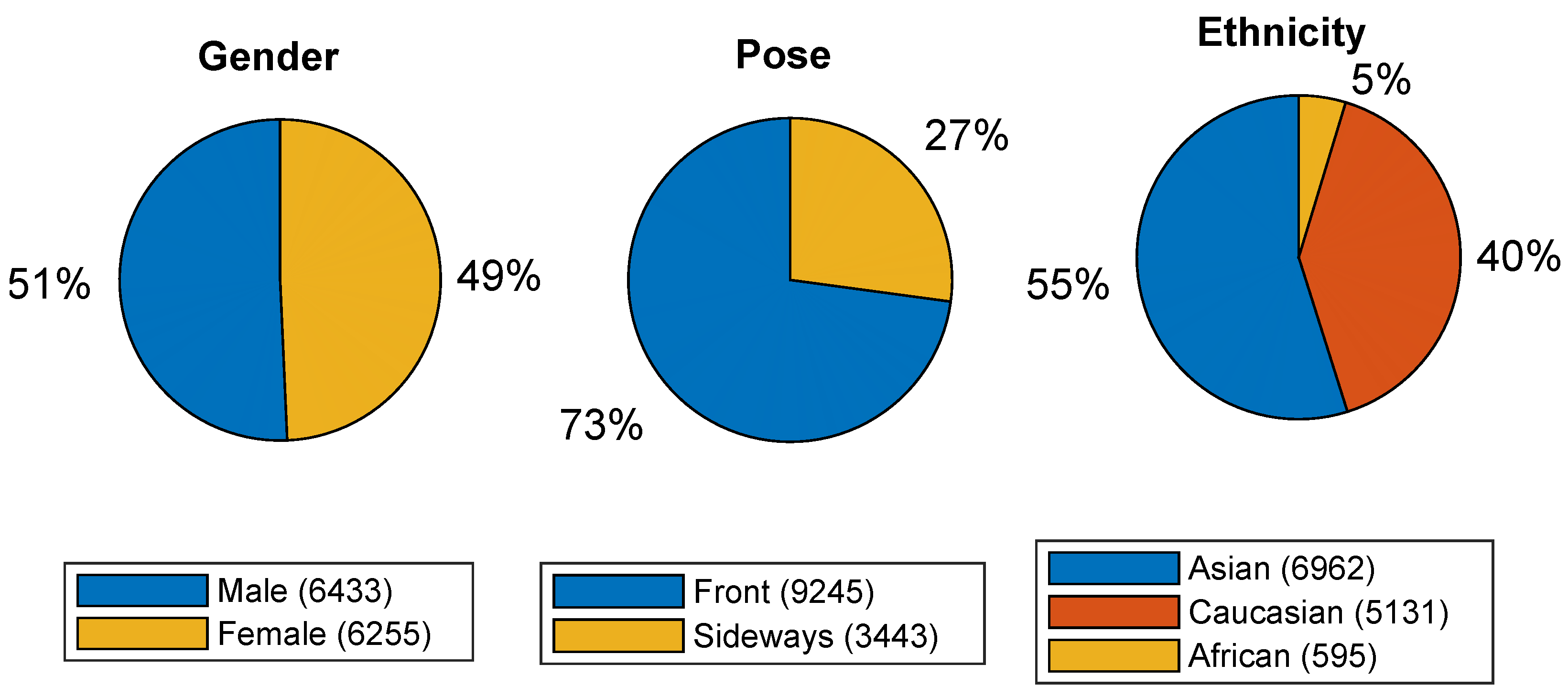

- Gender information: Each face bounding box is annotated with information on whether a male (6433 faces) or a female subject (6255 faces) is shown. The complete dataset is approximately gender balanced as shown in the corresponding pie chart in Figure 3.

- Pose information: Pose is annotated at a coarse level with respect to whether faces are frontal or in profile view. As illustrated by the pie chart in the middle of Figure 3, most faces (9245) are frontal, and 3443 faces are shown in profile.

- Ethnicity: Three categories are considered to describe ethnic origin, i.e., Caucasian, Asian, and African. FMLD is dominated by faces of Asian origin (6962), followed by Caucasian (5131) and African (595) faces. The label distribution across ethnicity is shown in the last pie chart of Figure 3.

3.3. FMLD Availability

4. Evaluation Methodology

4.1. First Stage—Face Detection

- Multi-Task Cascaded Convolutional Neural Network (MTCNN) [36]: MTCNN is a carefully designed cascaded CNN-based framework for joint face detection and alignment. The detection process of MTCNN consists of three stages of convolutional networks that predict face and landmark locations in a coarse-to-fine manner. A publicly available version of MTCNN is used for the experiments (https://github.com/matlab-deep-learning/mtcnn-face-detection/releases/tag/v1.2.3, accessed on 25 February 2021).

- The OpenCV single-shot detector (OCVSSD): OCVSSD is a Single-Shot Detector (SSD) framework with a ResNet base network [37]. The detector is Caffe-based and has been part of OpenCV since OpenCV 3.3 (https://github.com/opencv/opencv/tree/master/samples/dnn/face_detector, accessed on 25 February 2021).

- The Dual Shot Face Detector (DSFD) [38]: DSFD is an extension of the standard single-shot face detector (SSD) that improves upon SSD in terms of more descriptive feature maps, a more efficient learning objective (loss) and an improved strategy for matching predictions to the faces in the input images. We use an improved PyTorch implementation of the original algorithm (https://github.com/hukkelas/DSFD-Pytorch-Inference, accessed on 25 February 2021).

- RetinaFace [29]: RetinaFace is a single-shot multi-level face localization method that performs pixel-wise face localization on various scales by taking advantage of multi-task learning. It jointly performs three different face localization tasks, i.e., face detection, 2D face alignment and 3D face reconstruction, within a single framework (https://github.com/biubug6/Pytorch_Retinaface, accessed on 25 February 2021).

- Baidu detector: The Baidu detector is based on PyramidBox, a context-assisted single-shot face detector [35], and is used as part of Baidu’s face-mask detector. PyramidBox implements multiple strategies that use context information to improve the face-detection results.

- AntiCov: The RetinaFace AntiCov is a customized one-stage face detector based on RetinaFace [29]. The model is publicly available as part of InsightFace, an open-source 2D and 3D deep face analysis toolbox (https://github.com/deepinsight/insightface/tree/master/detection/RetinaFaceAntiCov, accessed on 25 February 2021). It uses a RetinaFace MobileNet as the backbone network.

- The mask detector by AIZooTech: The AIZoo face-mask detector is an open-source detector that is also based on the SSD framework [39] (https://github.com/AIZOOTech/FaceMaskDetection, accessed on 25 February 2021). It uses a lightweight backbone network to ensure low computational complexity.
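
Single-shot detectors such as those listed above usually emit one row per candidate face, holding a confidence score and box corners normalized to the image size. The sketch below shows how such rows might be turned into pixel boxes. It assumes the row layout `[image_id, class_id, confidence, x1, y1, x2, y2]`, which is the layout produced by the detection-output layer of OpenCV's DNN module; the function name and the 0.5 confidence threshold are hypothetical choices and are not taken from the paper.

```python
from typing import List, Sequence, Tuple

Box = Tuple[int, int, int, int, float]  # x1, y1, x2, y2, confidence


def clip01(value: float) -> float:
    """Clamp a normalized coordinate to the range [0, 1]."""
    return max(0.0, min(1.0, value))


def decode_ssd_detections(rows: Sequence[Sequence[float]],
                          image_width: int,
                          image_height: int,
                          min_confidence: float = 0.5) -> List[Box]:
    """Convert normalized SSD-style rows into pixel boxes.

    Rows below min_confidence are dropped; corners are clipped to the image.
    """
    boxes: List[Box] = []
    for _image_id, _class_id, confidence, x1, y1, x2, y2 in rows:
        if confidence < min_confidence:
            continue
        boxes.append((
            int(round(clip01(x1) * image_width)),
            int(round(clip01(y1) * image_height)),
            int(round(clip01(x2) * image_width)),
            int(round(clip01(y2) * image_height)),
            confidence,
        ))
    return boxes


# Synthetic detector output for a 400 x 200 image.
raw_rows = [
    [0, 1, 0.9, 0.25, 0.5, 0.75, 1.0],   # confident detection
    [0, 1, 0.3, 0.10, 0.1, 0.20, 0.2],   # dropped: low confidence
    [0, 1, 0.8, -0.1, 0.0, 0.50, 1.2],   # partly outside the image
]
face_boxes = decode_ssd_detections(raw_rows, 400, 200)
print(face_boxes)
```

The resulting boxes are what a second-stage classifier would crop from the image.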

4.2. Second Stage—Face Classification

- AlexNet [54]: AlexNet is one of the first large-scale convolutional neural networks that showed the potential of deep-learning models on ImageNet. The model consists of five convolutional layers of decreasing filter size followed by three fully connected layers upon which a learning objective can be defined [55]. One of the main characteristics of the model is the very rapid downsampling of the intermediate representations through strided convolutions and max-pooling layers.

- VGG-19 [56]: The VGG network is a 19-layer CNN model that uses a series of convolutional layers with small filter sizes (3 × 3) to facilitate training. As with AlexNet, the last three layers of VGG-19 are again fully connected and used to define the learning objective for the model.

- ResNet [57]: The model is built around the residual CNN architecture and uses skip connections to allow for training of very deep CNN models. We use four ResNet variants with 18 (ResNet-18), 34 (ResNet-34), 50 (ResNet-50) and 101 (ResNet-101) layers. The skip connections allow for efficient training by ensuring appropriate gradient flow.

- SqueezeNet [58]: SqueezeNet is a convolutional neural network that employs design strategies to reduce the number of parameters, notably with the use of fire modules that “squeeze” parameters using 1 × 1 convolutions. In general, SqueezeNet is a special variant of ResNet, optimized for efficiency (in space and time). We use two versions of the model for the experiments: the initial SqueezeNet v1.0 from [58] and SqueezeNet v1.1 (https://github.com/forresti/SqueezeNet/tree/master/SqueezeNet_v1.1, accessed on 25 February 2021), an improved version that requires fewer computations and slightly fewer parameters than SqueezeNet v1.0.

- DenseNet [59]: DenseNet is a powerful recent CNN model that reuses features from preceding layers in all subsequent layers within a DenseNet block. This architecture makes it possible to learn highly descriptive features that are very different from those of competing models. Several dense blocks are then concatenated in a ResNet manner to allow for gradient flow and efficient training.

- GoogLeNet [60]: GoogLeNet is another type of CNN model based on the Inception architecture. It uses so-called Inception modules, which allow the network to analyze images at different scales within each block. GoogLeNet stacks these modules in a feed-forward architecture, with occasional max-pooling layers in between to reduce data dimensionality along the model.

- MobileNet [61]: MobileNet is a convolutional neural network architecture optimized for mobile devices. It is based on an inverted residual structure where the residual connections are between bottleneck layers. The intermediate expansion layer uses lightweight depthwise convolutions to filter features as a source of non-linearity. MobileNet version 2 is used in our experiments.
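
To make the parameter savings of SqueezeNet's fire modules concrete, the following sketch counts the weights of one fire module (a 1 × 1 squeeze layer feeding parallel 1 × 1 and 3 × 3 expand layers) and compares it with a plain 3 × 3 convolution that produces the same number of output channels. The channel sizes are illustrative assumptions, biases are ignored, and the helper names are hypothetical.

```python
def conv_params(in_channels: int, out_channels: int, kernel: int) -> int:
    """Number of weights in a kernel x kernel convolution (no biases)."""
    return in_channels * out_channels * kernel * kernel


def fire_params(in_channels: int, squeeze: int,
                expand1x1: int, expand3x3: int) -> int:
    """Weights of a fire module: 1x1 squeeze, then 1x1 and 3x3 expand."""
    return (conv_params(in_channels, squeeze, 1)
            + conv_params(squeeze, expand1x1, 1)
            + conv_params(squeeze, expand3x3, 3))


# Illustrative sizes: 96 input channels squeezed to 16, expanded to 64 + 64.
fire = fire_params(96, squeeze=16, expand1x1=64, expand3x3=64)
plain = conv_params(96, 64 + 64, 3)

print(fire)                     # 11776
print(plain)                    # 110592
print(round(plain / fire, 1))   # 9.4
```

Under these assumed sizes the fire module needs roughly nine times fewer weights, because the expensive 3 × 3 filters only see the 16 squeezed channels instead of all 96 input channels.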

4.3. Overall Pipeline
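
As a rough illustration of how the two stages can be combined, the sketch below wires a face detector (first stage) to a compliance classifier (second stage). It is a minimal sketch under stated assumptions and not the authors' implementation: `run_pipeline`, `MaskPrediction`, the toy detector and the toy classifier are hypothetical stand-ins, and a real system would plug in one of the detectors from Section 4.1 and one of the CNN classifiers from Section 4.2.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

Image = List[List[int]]            # toy grayscale image: rows of pixel values
Box = Tuple[int, int, int, int]    # x1, y1, x2, y2 in pixels


@dataclass
class MaskPrediction:
    box: Box
    compliant: bool                # True if the mask is judged correctly worn


def crop(image: Image, box: Box) -> Image:
    """Cut the face region out of the image."""
    x1, y1, x2, y2 = box
    return [row[x1:x2] for row in image[y1:y2]]


def run_pipeline(image: Image,
                 detect_faces: Callable[[Image], List[Box]],
                 is_compliant: Callable[[Image], bool]) -> List[MaskPrediction]:
    """Stage 1: detect faces. Stage 2: classify every detected face crop."""
    return [MaskPrediction(box, is_compliant(crop(image, box)))
            for box in detect_faces(image)]


# Toy stand-ins so that the sketch runs without any trained model.
def toy_detector(image: Image) -> List[Box]:
    return [(0, 0, 2, 2), (2, 0, 4, 2)]


def toy_classifier(face: Image) -> bool:
    return sum(sum(row) for row in face) > 0


toy_image = [[1, 1, 0, 0],
             [1, 1, 0, 0]]
results = run_pipeline(toy_image, toy_detector, toy_classifier)
print([r.compliant for r in results])   # [True, False]
```

Keeping the two stages behind plain callables makes it straightforward to swap detectors or classifiers when comparing configurations.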

5. Results and Discussion

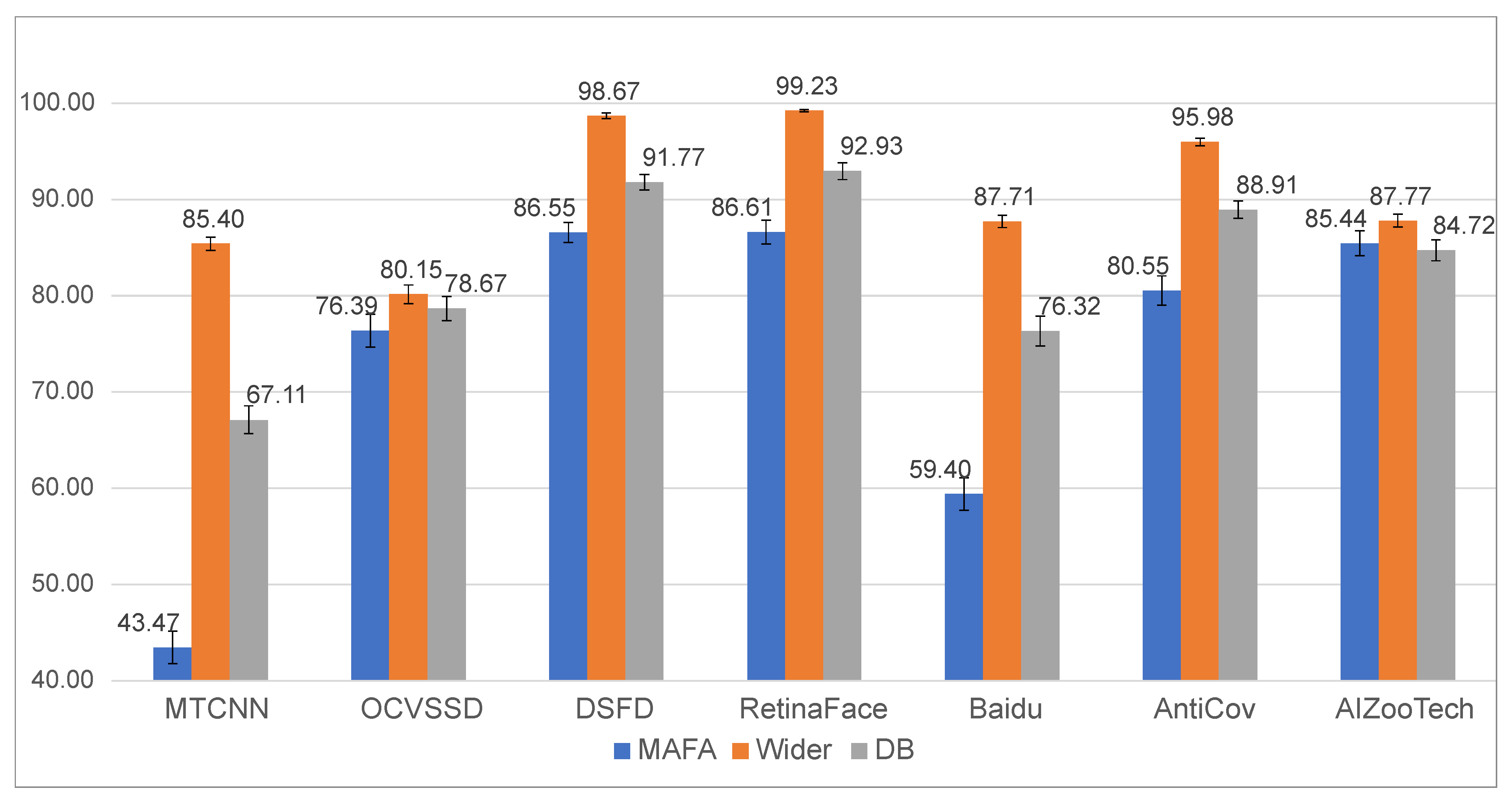

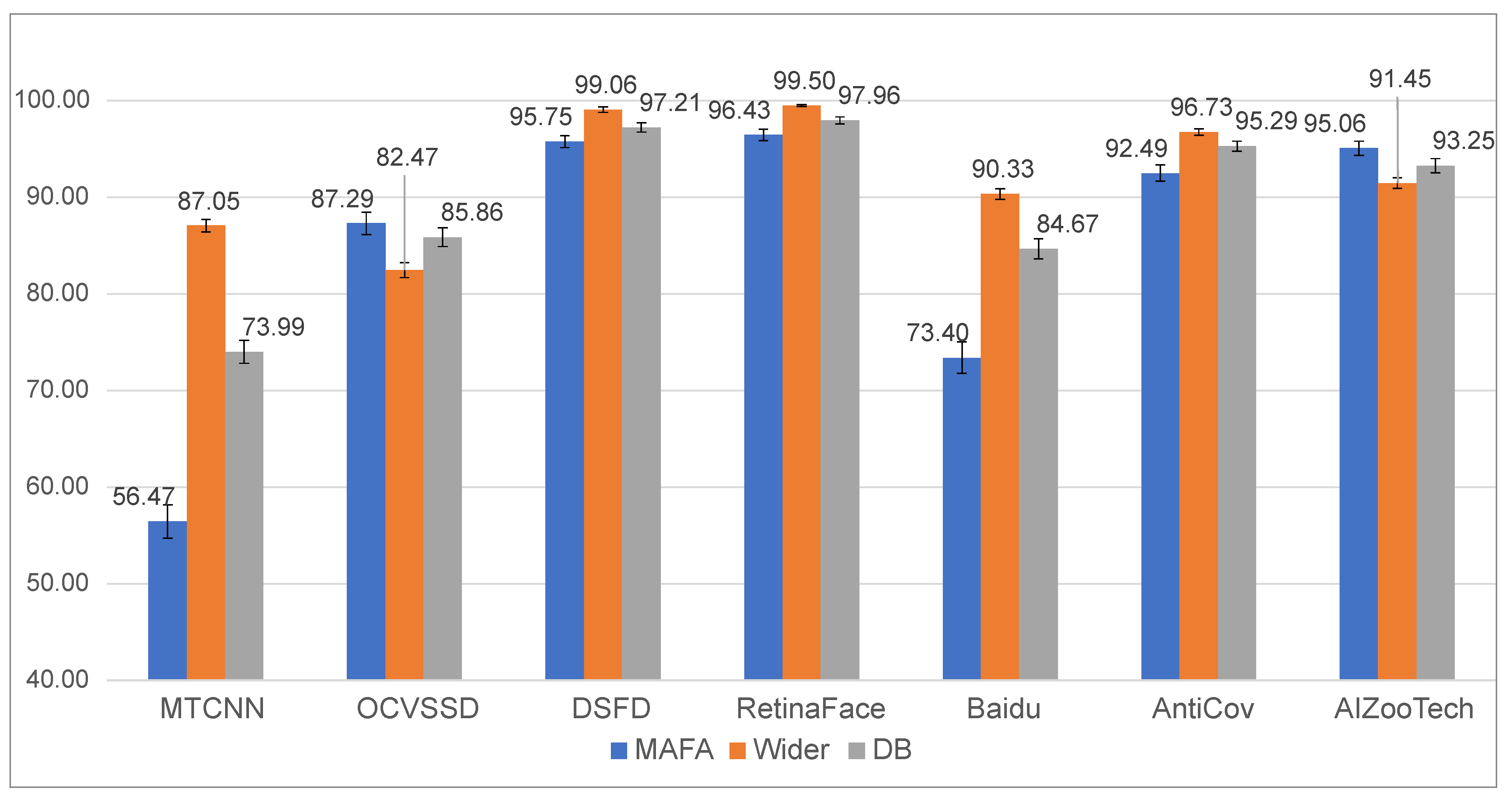

5.1. Face-Detection Results

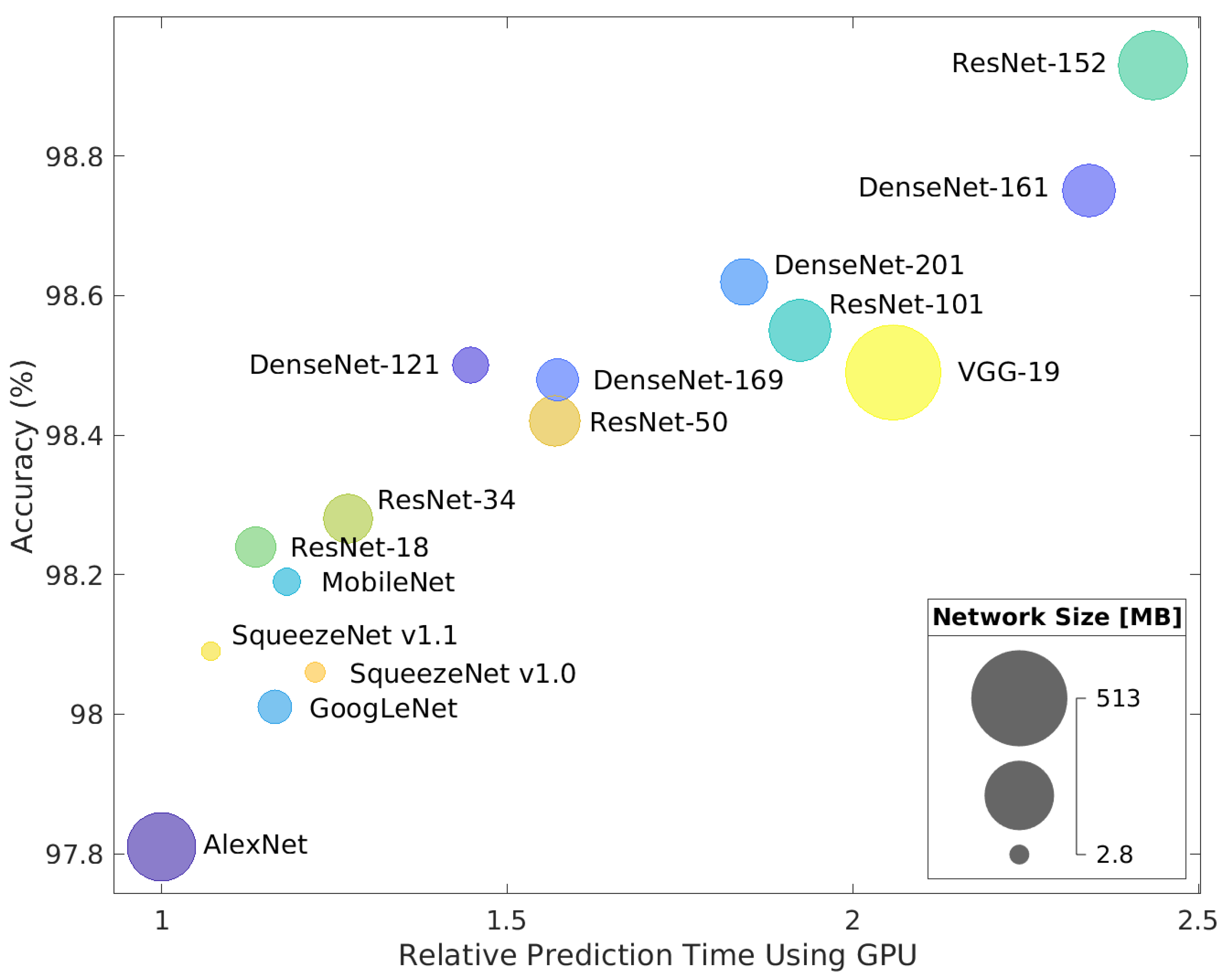

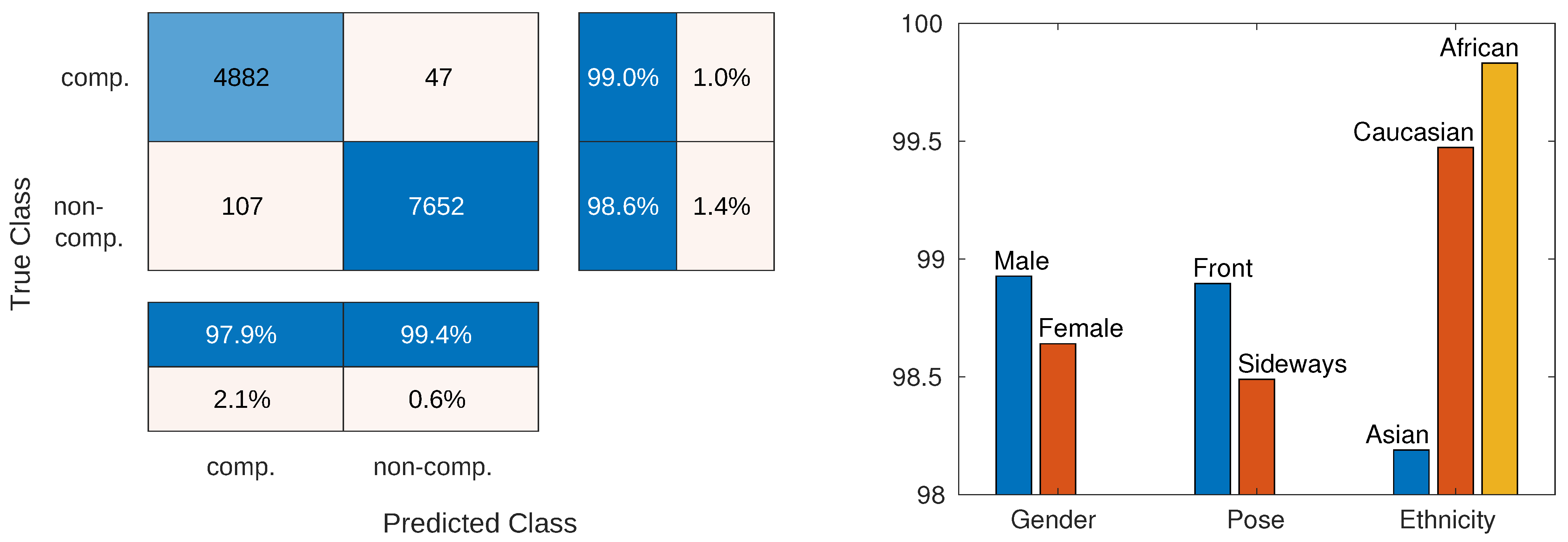

5.2. Classification Results

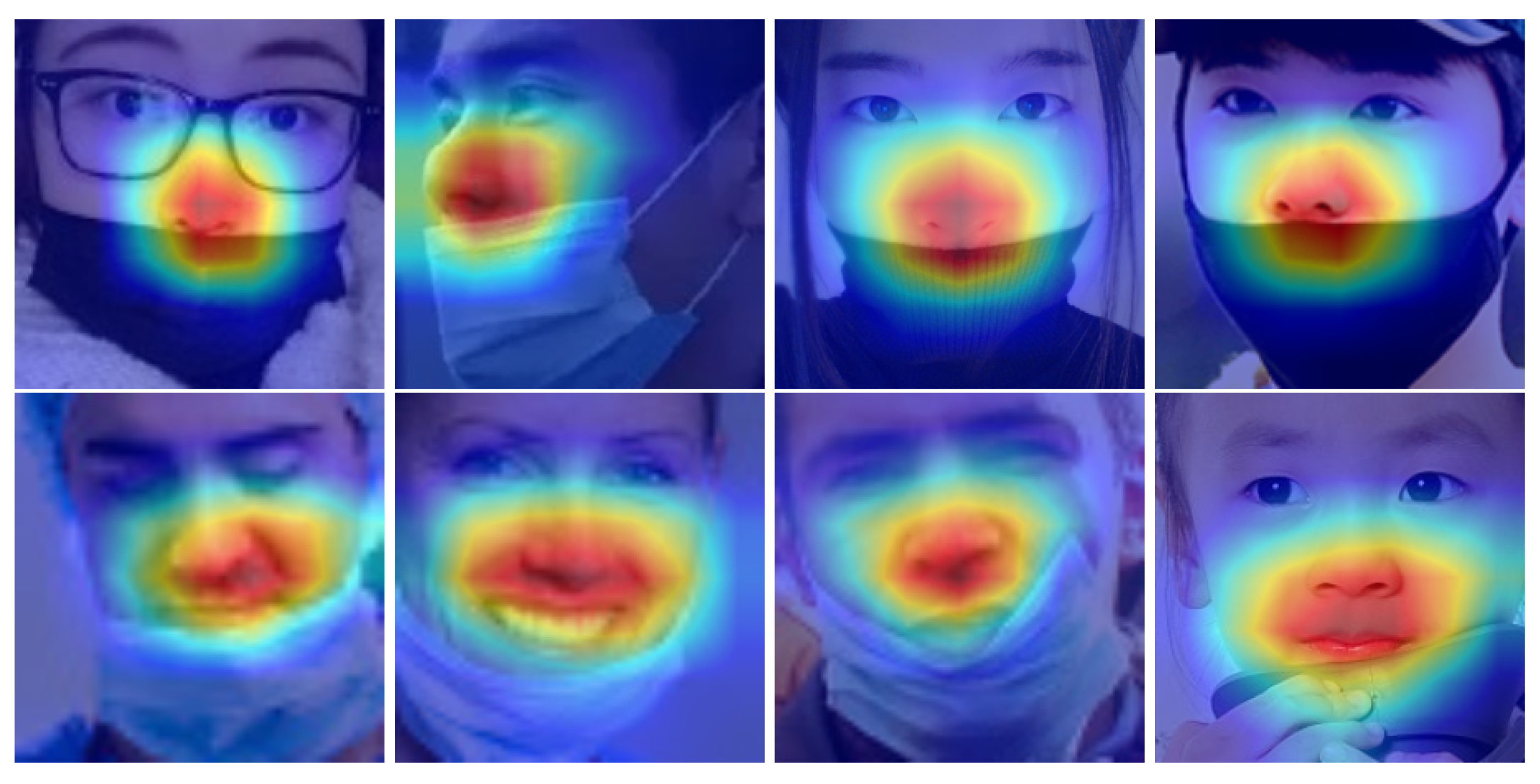

5.2.1. Analysis of Results

5.2.2. Error Analysis

5.3. Evaluation of Overall Pipeline

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- World Health Organization. Coronavirus Disease (COVID-19) Advice for the Public. Available online: https://www.who.int/emergencies/diseases/novel-coronavirus-2019/advice-for-public (accessed on 1 December 2020).

- Centers for Disease Control and Prevention. Considerations for Wearing Masks. USA; 2020. Available online: https://www.cdc.gov/coronavirus/2019-ncov/prevent-getting-sick/cloth-face-cover-guidance.html (accessed on 12 November 2020).

- Leung, N.H.; Chu, D.K.; Shiu, E.Y.; Chan, K.H.; McDevitt, J.J.; Hau, B.J.; Yen, H.L.; Li, Y.; Ip, D.K.; Peiris, J.M.; et al. Respiratory virus shedding in exhaled breath and efficacy of face masks. Nat. Med. 2020, 26, 676–680.

- Feng, S.; Shen, C.; Xia, N.; Song, W.; Fan, M.; Cowling, B.J. Rational use of face masks in the COVID-19 pandemic. Lancet Resp. Med. 2020, 8, 434–436.

- Wang, Z.; Wang, G.; Huang, B.; Xiong, Z.; Hong, Q.; Wu, H.; Yi, P.; Jiang, K.; Wang, N.; Pei, Y.; et al. Masked face recognition dataset and application. arXiv 2020, arXiv:2003.09093.

- Zafeiriou, S.; Zhang, C.; Zhang, Z. A survey on face detection in the wild: Past, present and future. Comput. Vis. Image Underst. 2015, 138, 1–24.

- Wang, Y. Which Mask Are You Wearing? Face Mask Type Detection with TensorFlow and Raspberry Pi. Available online: https://towardsdatascience.com/which-mask-are-you-wearing-face-mask-type-detection-with-tensorflow-and-raspberry-pi-1c7004641f1 (accessed on 26 November 2020).

- Pranilshinde. Face-Mask Detector Using TensorFlow-Object Detection (SSD_MobileNet). Available online: https://medium.com/pranil-shinde/face-mask-detector-using-tensorflow-object-detection-ssd-mobilenet-37f233202c67 (accessed on 26 November 2020).

- Bhandary, P. Mask Classifier. Available online: https://github.com/prajnasb/observations (accessed on 26 November 2020).

- Rosebrock, A. COVID-19: Face Mask Detector with OpenCV, Keras/TensorFlow, and Deep Learning. Available online: https://www.pyimagesearch.com/2020/05/04/covid-19-face-mask-detector-with-opencv-keras-tensorflow-and-deep-learning (accessed on 26 November 2020).

- Khanna, R. COVID-19-Authorized-Entry-Using-Face-Mask-Detection. Available online: https://github.com/Rahul24-06/COVID-19-Authorized-Entry-using-Face-Mask-Detection (accessed on 26 November 2020).

- Bornstein, A.A. Personal Face Mask Detection with Custom Vision and Tensorflow.js. Available online: https://medium.com/microsoftazure/corona-face-mask-detection-with-custom-vision-and-tensorflow-js-86e5fff84373 (accessed on 26 November 2020).

- Yicong, O. Python Face Masks Detection Project. Available online: https://github.com/ohyicong/masksdetection (accessed on 26 November 2020).

- K, G.M. COVID-19: Face Mask Detection Using TensorFlow and OpenCV. Available online: https://towardsdatascience.com/covid-19-face-mask-detection-using-tensorflow-and-opencv-702dd833515b (accessed on 26 November 2020).

- Song, W. COVID19 Face Mask Detection Using Deep Learning. Available online: https://www.mathworks.com/matlabcentral/fileexchange/76758-covid19-face-mask-detection-using-deep-learning (accessed on 26 November 2020).

- Loey, M.; Manogaran, G.; Taha, M.H.N.; Khalifa, N.E.M. A hybrid deep transfer learning model with machine learning methods for face mask detection in the era of the COVID-19 pandemic. Measurement 2021, 167, 108288.

- Chowdary, G.J.; Punn, N.S.; Sonbhadra, S.K.; Agarwal, S. Face mask detection using transfer learning of inceptionv3. In Proceedings of the International Conference on Big Data Analytics, Sonepat, India, 15–18 December 2020; Springer: Berlin/Heidelberg, Germany, 2020; pp. 81–90.

- Ge, S.; Li, J.; Ye, Q.; Luo, Z. Detecting masked faces in the wild with LLE-CNNs. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 2682–2690.

- RetinaFace Anti Cov Face Detector. Available online: https://github.com/deepinsight/insightface/tree/master/detection/RetinaFaceAntiCov (accessed on 26 November 2020).

- Daniell Chiang, Y.F. Detect Faces and Determine Whether they are Wearing Mask. Available online: https://github.com/AIZOOTech/FaceMaskDetection (accessed on 26 November 2020).

- Jiang, M.; Fan, X. RetinaMask: A Face Mask detector. arXiv 2020, arXiv:2005.03950.

- Yang, S.; Luo, P.; Loy, C.C.; Tang, X. Wider face: A face detection benchmark. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 5525–5533.

- Ngan, M.L.; Grother, P.J.; Hanaoka, K.K. Ongoing face recognition vendor test (FRVT) Part 6A: Face recognition accuracy with masks using pre-COVID-19 algorithms. In NIST Interagency/Internal Report (NISTIR); NIST: Gaithersburg, MD, USA, 24 July 2020.

- Damer, N.; Grebe, J.H.; Chen, C.; Boutros, F.; Kirchbuchner, F.; Kuijper, A. The effect of wearing a mask on face recognition performance: An exploratory study. In Proceedings of the 2020 International Conference of the Biometrics Special Interest Group (BIOSIG), Darmstadt, Germany, 16–18 September 2020; pp. 1–6.

- Wang, J.; Yuan, Y.; Yu, G. Face attention network: An effective face detector for the occluded faces. arXiv 2017, arXiv:1711.07246.

- Larxel. Face Mask Detection, 853 Images Belonging to 3 Classes. Available online: https://www.kaggle.com/andrewmvd/face-mask-detection (accessed on 26 November 2020).

- Wobot Intelligence. Face Mask Detection Dataset. Available online: https://www.kaggle.com/wobotintelligence/face-mask-detection-dataset (accessed on 26 November 2020).

- Humans in the Loop. Medical Mask Dataset. Available online: https://humansintheloop.org/medical-mask-dataset (accessed on 26 November 2020).

- Deng, J.; Guo, J.; Ververas, E.; Kotsia, I.; Zafeiriou, S. RetinaFace: Single-Shot Multi-Level Face Localisation in the Wild. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR 2020), Seattle, WA, USA (Virtual), 14–19 June 2020; pp. 5203–5212.

- Khandelwal, P.; Khandelwal, A.; Agarwal, S. Using Computer Vision to enhance Safety of Workforce in Manufacturing in a Post COVID World. arXiv 2020, arXiv:2005.05287.

- Qin, B.; Li, D. Identifying Facemask-wearing Condition Using Image Super-Resolution with Classification Network to Prevent COVID-19. Sensors 2020, 20, 5236.

- Trident. Face Mask Detection System Using Artificial Intelligence. Available online: https://www.tridentinfo.com/face-mask-detection-systems (accessed on 26 November 2020).

- Leewayhertz. Face Mask Detection System Using Artificial Intelligence. Available online: https://www.leewayhertz.com/face-mask-detection-system (accessed on 26 November 2020).

- Udemans, C. Baidu Releases Open-Source Tool to Detect Faces without Masks. Available online: https://technode.com/2020/02/14/baidu-open-source-face-masks (accessed on 26 November 2020).

- Tang, X.; Du, D.K.; He, Z.; Liu, J. Pyramidbox: A context-assisted single shot face detector. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018; pp. 797–813.

- Zhang, K.; Zhang, Z.; Li, Z.; Qiao, Y. Joint face detection and alignment using multitask cascaded convolutional networks. IEEE Signal Process. Lett. 2016, 23, 1499–1503.

- Villán, A.F. Mastering OpenCV 4 with Python: A Practical Guide Covering Topics from Image Processing, Augmented Reality to Deep Learning with OpenCV 4 and Python 3.7; Packt Publishing Ltd.: Birmingham, UK, 2019.

- Li, J.; Wang, Y.; Wang, C.; Tai, Y.; Qian, J.; Yang, J.; Wang, C.; Li, J.; Huang, F. DSFD: Dual shot face detector. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 16–20 June 2019; pp. 5060–5069.

- Liu, W.; Anguelov, D.; Erhan, D.; Szegedy, C.; Reed, S.; Fu, C.Y.; Berg, A.C. Ssd: Single shot multibox detector. In Proceedings of the European Conference on Computer Vision, Amsterdam, The Netherlands, 8–16 October 2016; Springer: Berlin/Heidelberg, Germany, 2016; pp. 21–37.

- Everingham, M.; Eslami, S.A.; Van Gool, L.; Williams, C.K.; Winn, J.; Zisserman, A. The pascal visual object classes challenge: A retrospective. Int. J. Comput. Vis. 2015, 111, 98–136.

- Deng, J.; Dong, W.; Socher, R.; Li, L.J.; Li, K.; Fei-Fei, L. Imagenet: A large-scale hierarchical image database. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Miami, FL, USA, 20–25 June 2009; pp. 248–255.

- Lin, T.Y.; Maire, M.; Belongie, S.; Hays, J.; Perona, P.; Ramanan, D.; Dollár, P.; Zitnick, C.L. Microsoft coco: Common objects in context. In Proceedings of the European Conference on Computer Vision (ECCV), Zurich, Switzerland, 6–12 September 2014; Springer: Berlin/Heidelberg, Germany, 2014; pp. 740–755.

- Cartucho, J.; Ventura, R.; Veloso, M. Robust Object Recognition Through Symbiotic Deep Learning In Mobile Robots. In Proceedings of the 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Madrid, Spain, 1–5 October 2018; pp. 2336–2341.

- LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444.

- Valueva, M.V.; Nagornov, N.; Lyakhov, P.A.; Valuev, G.V.; Chervyakov, N.I. Application of the residue number system to reduce hardware costs of the convolutional neural network implementation. Math. Comput. Simulat. 2020, 177, 232–243.

- Van den Oord, A.; Dieleman, S.; Schrauwen, B. Deep content-based music recommendation. Adv. Neural Inform. Process. Syst. 2013, 26, 2643–2651.

- Collobert, R.; Weston, J. A unified architecture for natural language processing: Deep neural networks with multitask learning. In Proceedings of the 25th International Conference on Machine Learning, Helsinki, Finland, 5–9 July 2008; pp. 160–167.

- Kwon, O.Y.; Lee, M.H.; Guan, C.; Lee, S.W. Subject-independent brain-computer interfaces based on deep convolutional neural networks. IEEE Trans. Neural Netw. Learn. Syst. 2019, 31, 3839–3852.

- Tsantekidis, A.; Passalis, N.; Tefas, A.; Kanniainen, J.; Gabbouj, M.; Iosifidis, A. Forecasting stock prices from the limit order book using convolutional neural networks. In Proceedings of the 2017 IEEE 19th Conference on Business Informatics (CBI), Thessaloniki, Greece, 24–27 July 2017; Volume 1, pp. 7–12.

- Bortolato, B.; Ivanovska, M.; Rot, P.; Križaj, J.; Terhorst, P.; Damer, N.; Peer, P.; Štruc, V. Learning privacy-enhancing face representations through feature disentanglement. In Proceedings of the FG 2020, IEEE International Conference on Automatic Face & Gesture Recognition, Buenos Aires, Argentina (Virtual), 16–20 May 2020; pp. 495–502.

- Štepec, D.; Emeršič, Ž.; Peer, P.; Štruc, V. Constellation-Based Deep Ear Recognition. In Deep Biometrics: Unsupervised and Semi-Supervised Learning; Jiang, R., Li, C., Crookes, D., Meng, W., Rosenberger, C., Eds.; Springer: Berlin/Heidelberg, Germany, 2020.

- Vitek, M.; Rot, P.; Štruc, V.; Peer, P. A comprehensive investigation into sclera biometrics: A novel dataset and performance study. Neural Comput. Appl. 2020, 1–15.

- Grm, K.; Štruc, V. Deep face recognition for surveillance applications. IEEE Intell. Syst. 2018, 33, 46–50.

- Krizhevsky, A. One weird trick for parallelizing convolutional neural networks. arXiv 2014, arXiv:1404.5997.

- Grm, K.; Štruc, V.; Artiges, A.; Caron, M.; Ekenel, H.K. Strengths and weaknesses of deep learning models for face recognition against image degradations. IET Biometrics 2017, 7, 81–89.

- Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv 2014, arXiv:1409.1556.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778.

- Iandola, F.N.; Han, S.; Moskewicz, M.W.; Ashraf, K.; Dally, W.J.; Keutzer, K. SqueezeNet: AlexNet-level accuracy with 50x fewer parameters and <0.5 MB model size. arXiv 2016, arXiv:1602.07360.

- Huang, G.; Liu, Z.; Van Der Maaten, L.; Weinberger, K.Q. Densely connected convolutional networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 4700–4708.

- Szegedy, C.; Liu, W.; Jia, Y.; Sermanet, P.; Reed, S.; Anguelov, D.; Erhan, D.; Vanhoucke, V.; Rabinovich, A. Going deeper with convolutions. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015; pp. 1–9.

- Sandler, M.; Howard, A.; Zhu, M.; Zhmoginov, A.; Chen, L.C. Mobilenetv2: Inverted residuals and linear bottlenecks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 4510–4520.

- Russakovsky, O.; Deng, J.; Su, H.; Krause, J.; Satheesh, S.; Ma, S.; Huang, Z.; Karpathy, A.; Khosla, A.; Bernstein, M.; et al. Imagenet large scale visual recognition challenge. Int. J. Comput. Vis. 2015, 115, 211–252.

- Pinkney, J. MTCNN Face Detection. Available online: https://github.com/matlab-deep-learning/mtcnn-face-detection/releases/tag/v1.2.3 (accessed on 26 November 2020).

- Rosebrock, A. Face Detection with OpenCV and Deep Learning. Available online: https://www.pyimagesearch.com/2018/02/26/face-detection-with-opencv-and-deep-learning/ (accessed on 26 November 2020).

- Hukkelås, H. DSFD-Pytorch-Inference. Available online: https://github.com/hukkelas/DSFD-Pytorch-Inference (accessed on 26 November 2020).

- biubug6. RetinaFace in PyTorch. Available online: https://github.com/biubug6/Pytorch_Retinaface (accessed on 26 November 2020).

- Selvaraju, R.R.; Cogswell, M.; Das, A.; Vedantam, R.; Parikh, D.; Batra, D. Grad-cam: Visual explanations from deep networks via gradient-based localization. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; pp. 618–626.

| Dataset | Type | Mask Type | #Images | #Faces | Reported labels: #Mask | Reported labels: #No Mask | Reported labels: #Inc. Worn | Reported labels: Other |
|---|---|---|---|---|---|---|---|---|
| MFDD [5] | IM | Real-world | 4343 | n/a | 24,771 | 0 | 0 | n/a |
| RMFRD [5] | IM | Real-world | 92,671 | 92,671 | 2203 | 90,000 | 0 | n/a |
| SMFRD [5] | IM | Simulated | 500,000 | 500,000 | 500,000 | 0 | 0 | n/a |
| MAFA [18] | IM | Real-world | 30,811 | 36,797 | 35,806 | 991 | 0 | G, E, P |
| FMD [26] | IM | Real-world | 853 | 4072 | 3232 | 717 | 123 | n/a |
| MMD [27,28] | IM | Real-world | 6024 | 9067 | 6758 | 2085 | 224 | n/a |
| FMLD (ours) | AN | Real-world | 41,934 | 63,072 | 29,532 | 32,012 | 1528 | G, E, P |

| Degree | Occlusion Type 1 (Simple) | Occlusion Type 2 (Complex) | Occlusion Type 3 (Human Body) |
|---|---|---|---|
| 1 |  |  |  |
| 2 |  |  |  |
| 3 |  |  |  |

| Donor Dataset | Purpose | #Images | #Faces | With Mask: Correctly Worn | With Mask: Incorrectly Worn | Without Mask |
|---|---|---|---|---|---|---|
| MAFA | Training | 25,876 | 29,452 | 24,603 | 1204 | 3645 |
| MAFA | Testing | 4935 | 7342 | 4929 | 324 | 2089 |
| Wider Face | Training | 8906 | 20,932 | 0 | 0 | 20,932 |
| Wider Face | Testing | 2217 | 5346 | 0 | 0 | 5346 |
| FMLD | Training | 34,782 | 50,384 | 24,603 | 1204 | 24,577 |
| FMLD | Testing | 7152 | 12,688 | 4929 | 324 | 7435 |
| FMLD | Totals | 41,934 | 63,072 | 29,532 | 1528 | 32,012 |
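
The FMLD rows of the statistics table follow directly from the two donor datasets. The short check below re-derives them from the MAFA and Wider Face rows; the `Split` record and helper names are hypothetical, and the numbers are copied from the table.

```python
from collections import namedtuple

# images, faces, correctly worn, incorrectly worn, without mask
Split = namedtuple("Split", "images faces correct incorrect no_mask")

MAFA = {
    "train": Split(25876, 29452, 24603, 1204, 3645),
    "test": Split(4935, 7342, 4929, 324, 2089),
}
WIDER = {
    "train": Split(8906, 20932, 0, 0, 20932),
    "test": Split(2217, 5346, 0, 0, 5346),
}


def add(a: Split, b: Split) -> Split:
    """Element-wise sum of two splits."""
    return Split(*(x + y for x, y in zip(a, b)))


# FMLD is the union of the MAFA and Wider Face parts, split by split.
fmld = {name: add(MAFA[name], WIDER[name]) for name in MAFA}
totals = add(fmld["train"], fmld["test"])

for name, split in fmld.items():
    # Every face carries exactly one of the three labels.
    assert split.faces == split.correct + split.incorrect + split.no_mask
    print(name, split)
print("totals", totals)
```

The totals show that faces with correctly worn masks (29,532) and faces without masks (32,012) are roughly balanced, while incorrectly worn masks (1528) remain a small minority.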

| Detector | MAFA | Wider | DB | MAFA | Wider | DB |
|---|---|---|---|---|---|---|
| MTCNN [63] |  |  |  |  |  |  |
| OCVSSD [64] |  |  |  |  |  |  |
| DSFD [65] |  |  |  |  |  |  |
| RetinaFace [66] |  |  |  |  |  |  |
| Baidu [34] |  |  |  |  |  |  |
| AntiCov [19] |  |  |  |  |  |  |
| AIZooTech [20] |  |  |  |  |  |  |

| Model | Model Size [MB] | Overall Time [s] | Time per Image [ms] | Accuracy [%] |
|---|---|---|---|---|
| ResNet-152 | 223 | 79 | 6.23 |  |
| DenseNet-161 | 103 | 76 | 5.99 |  |
| DenseNet-201 | 71 | 60 | 4.73 |  |
| ResNet-101 | 163 | 64 | 5.04 |  |
| DenseNet-121 | 28 | 51 | 4.02 |  |
| VGG-19 | 513 | 62 | 4.89 |  |
| DenseNet-169 | 49 | 54 | 4.26 |  |
| ResNet-50 | 90 | 51 | 4.02 |  |
| ResNet-34 | 82 | 41 | 3.23 |  |
| ResNet-18 | 43 | 38 | 2.99 |  |
| MobileNet | 8.8 | 38 | 2.99 |  |
| SqueezeNet v1.1 | 2.8 | 35 | 2.76 |  |
| SqueezeNet v1.0 | 2.9 | 39 | 3.07 |  |
| GoogLeNet | 22 | 38 | 2.99 |  |
| AlexNet | 218 | 33 | 2.60 |  |
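
The per-image times in the table above are consistent with dividing the overall time by the 12,688 faces of the FMLD test set. This relationship is inferred from the numbers and is not stated explicitly in the text, so the sketch below should be read as a plausibility check; the constant and function names are hypothetical.

```python
TEST_FACES = 12_688  # assumption: overall time covers all FMLD test faces


def per_image_ms(overall_seconds: float, n_faces: int = TEST_FACES) -> float:
    """Average processing time per face crop in milliseconds."""
    return round(overall_seconds * 1000 / n_faces, 2)


# (overall time in s, reported per-image time in ms) copied from the table
reported = {
    "ResNet-152": (79, 6.23),
    "VGG-19": (62, 4.89),
    "MobileNet": (38, 2.99),
    "SqueezeNet v1.1": (35, 2.76),
    "AlexNet": (33, 2.60),
}

for model, (overall_s, table_ms) in reported.items():
    print(f"{model}: computed {per_image_ms(overall_s):.2f} ms, "
          f"table {table_ms:.2f} ms")
```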

|  | Gender: Male | Gender: Female | Pose: Front | Pose: Sideways | Ethnicity: Asian | Ethnicity: Caucasian | Ethnicity: African |
|---|---|---|---|---|---|---|---|
| Faces | 6433 | 6255 | 9245 | 3443 | 6962 | 5131 | 595 |
| #Errors | 69 | 85 | 102 | 52 | 126 | 27 | 1 |
| Acc [%] | 98.93 | 98.64 | 98.90 | 98.49 | 98.19 | 99.47 | 99.83 |
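
The accuracy row of the table above can be reproduced from the face and error counts as the share of faces that were not misclassified. The sketch below recomputes it for each subgroup; the function and variable names are hypothetical, and the counts are copied from the table.

```python
def accuracy_pct(faces: int, errors: int) -> float:
    """Share of correctly classified faces, in percent."""
    return round(100 * (faces - errors) / faces, 2)


# subgroup -> (number of faces, number of errors), copied from the table
subgroups = {
    "Male": (6433, 69),
    "Female": (6255, 85),
    "Front": (9245, 102),
    "Sideways": (3443, 52),
    "Asian": (6962, 126),
    "Caucasian": (5131, 27),
    "African": (595, 1),
}

for name, (faces, errors) in subgroups.items():
    print(f"{name}: {accuracy_pct(faces, errors):.2f}%")

# Each covariate partitions the same faces, so the error counts agree.
assert 69 + 85 == 102 + 52 == 126 + 27 + 1 == 154
```

Note that the African subgroup contains only 595 faces, so its accuracy rests on far fewer samples than the other groups.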

| Method | Compliant | Non-Compliant |
|---|---|---|
| Baidu |  |  |
| AntiCov |  |  |
| AIZooTech |  |  |
| This paper |  |  |


Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Batagelj, B.; Peer, P.; Štruc, V.; Dobrišek, S. How to Correctly Detect Face-Masks for COVID-19 from Visual Information? Appl. Sci. 2021, 11, 2070. https://doi.org/10.3390/app11052070
