Abstract

As one of the major sources of global carbon emissions, the manufacturing industry urgently requires green transformation. Using renewable energy in production workshops offers a promising route toward zero-carbon manufacturing. However, renewable energy fluctuations and dynamic workshop events make efficient scheduling increasingly challenging. This paper introduces a low-carbon and energy-efficient dynamic flexible job shop scheduling problem with renewable energy integration, and develops a multi-agent deep reinforcement learning framework for dynamic and intelligent production scheduling. Building on the Proximal Policy Optimization (PPO) algorithm, a routing agent and a sequencing agent are designed for machine assignment and job sequencing, respectively. Customized state representations and reward functions are designed to enhance learning performance and scheduling efficiency. Simulation results demonstrate that the proposed method achieves superior performance in multi-objective optimization, effectively balancing production efficiency, energy consumption, and carbon emission reduction across various job shop scheduling scenarios.

1. Introduction

Globally, energy security and environmental pollution have become increasingly prominent issues, posing significant constraints on sustainable economic and social development. In recent years, global greenhouse gas emissions have remained at a high level and continued to increase. Statistics show that global fossil fuel CO2 emissions reached approximately 36.8 GtCO2 in 2023, representing an increase of about 1.4% compared with 2022, and are projected to further rise to around 37.4 GtCO2 in 2024, setting a new historical record [1]. Existing studies indicate that global carbon emissions have not yet shown clear signs of peaking, underscoring the growing urgency of low-carbon transitions and emission-reduction efforts [2]. As one of the major contributors to global energy consumption and carbon emissions, the manufacturing sector is highly dependent on energy supply; under energy structures dominated by fossil fuels, its carbon emissions remain particularly significant. In this context, reducing carbon emissions while ensuring production efficiency and system stability has become a critical challenge for the transformation and upgrading of the manufacturing sector [3,4,5].

To achieve sustainable development, the concept of green manufacturing has gradually become a mainstream direction in industrial development. In this context, the integration of renewable energy presents a significant opportunity to reduce carbon emissions in the manufacturing sector. Clean energy sources such as wind, solar, hydro, and biomass are not only renewable and low-emission but also effectively reduce dependence on fossil fuels [6,7]. With the maturity of photovoltaic and wind power technologies, an increasing number of manufacturing enterprises have begun to adopt clean energy for production. However, renewable energy is inherently intermittent and fluctuating. When utilizing such energy sources, manufacturers must ensure both supply stability and continuity of the production process. This imposes higher demands on production planning and scheduling strategies, particularly in terms of multi-objective trade-offs and adaptation to dynamic environments.

The flexible job shop scheduling problem (FJSP) provides an important theoretical foundation for optimization and scheduling research in manufacturing systems. Compared with the traditional job shop scheduling problem (JSP), FJSP offers greater flexibility in resource allocation and task execution routing, enabling better adaptation to the diverse demands of complex manufacturing environments [8]. This characteristic has led to the widespread application of FJSP in industries such as semiconductor manufacturing, aerospace, and precision machinery. In recent years, researchers have begun to focus on green FJSP, aiming to incorporate energy consumption and carbon emission metrics in addition to traditional objectives such as makespan and tardiness [9]. Such studies have played a positive role in promoting green manufacturing; however, most are based on static scenarios and give limited consideration to the uncertainty of energy supply.

In actual manufacturing systems, the environment is often dynamic. Random job arrivals, temporary changes in delivery deadlines, equipment failures and maintenance, as well as the insertion of high-priority jobs, can all disrupt the original scheduling plan [10]. In the context of integrating renewable energy, the complexity of the problem increases further. On the one hand, production loads need to be matched in real time with the fluctuating supply of clean energy; on the other hand, the uncertainty of energy supply often coincides with production disturbances, making it difficult for traditional scheduling methods to generate high-quality schedules within a limited time [11]. Furthermore, existing green FJSP studies have largely focused on energy consumption optimization and lack systematic modeling of the fluctuation characteristics of renewable energy, resulting in limited robustness of scheduling solutions under dynamic environments [12]. In practice, this manifests as reduced production efficiency, insufficient energy utilization, and difficulty in achieving carbon reduction targets.

Therefore, an intelligent scheduling approach capable of simultaneously perceiving both production and energy states is required to achieve coordinated optimization of multiple objectives such as production efficiency, energy consumption, and carbon emissions. In recent years, deep reinforcement learning (DRL), with its adaptability in uncertain environments and its ability for policy learning, has received growing attention in complex scheduling problems. In particular, multi-agent reinforcement learning methods, which model different scheduling components through distributed decision-making, have shown promising potential in dynamic FJSP (DFJSP) involving stochastic job arrivals and machine status variations.

However, as renewable energy is increasingly integrated into manufacturing power systems, the time-varying and fluctuating nature of energy supply begins to directly affect scheduling feasibility and optimization outcomes. Although existing studies have made progress in handling production-side disturbances, uncertainties on the energy side are still largely overlooked. Most existing DRL-based FJSP approaches assume a stable energy supply, lacking systematic modeling of renewable energy variability and a unified decision-making framework that can jointly address production disturbances and energy fluctuations. This research gap limits the full potential of DRL in dynamic green scheduling scenarios where energy availability plays a significant role.

Motivated by these challenges, this study focuses on DRL-based FJSP under renewable energy fluctuations and formulates a low-carbon and energy-efficient renewable-energy-integrated dynamic FJSP (LEDFJSP-RE). The main contributions of this work are as follows:

- A dynamic flexible job shop scheduling model with renewable energy integration (LEDFJSP-RE) is formulated. The model establishes a real-time coupling mechanism between production workloads and renewable energy supply, enabling energy-aware and low-carbon decision-making under dynamic job arrivals.

- A multi-agent PPO framework is developed to solve the LEDFJSP-RE. The framework supports variable-length state inputs and incorporates a state representation compatible with dynamic events. The state space, action space, and reward mechanism are specifically designed to capture both production dynamics and renewable energy fluctuations.

- Numerical experiments with different scales of job insertions are conducted to assess the adaptability and performance of the proposed approach. In addition to comparisons with conventional dispatching rules, further experiments against representative DRL-based and evolutionary scheduling methods, as well as an energy-oriented scheduling rule, are carried out to provide a more comprehensive evaluation. The results indicate that the proposed approach achieves consistently superior performance in terms of scheduling efficiency, energy consumption, carbon emissions, and overall cost across diverse problem settings.

The structure of this paper is organized as follows: Section 2 reviews the research progress on green FJSP and DRL-based FJSP; Section 3 introduces the mathematical modeling of the LEDFJSP-RE; Section 4 presents the multi-agent reinforcement learning solution framework; Section 5 describes the experimental design and results analysis; and Section 6 concludes the study and discusses future research directions.

2. Literature Review

This section reviews recent studies on green FJSP and DRL-based FJSP, and then summarizes the main research gaps in existing work.

2.1. Green FJSP

As the pressure from energy consumption and carbon emissions increases, the manufacturing industry urgently needs to transition towards green, low-carbon, and intelligent systems for sustainable development [13,14]. Therefore, green scheduling has gradually become an important research direction in FJSP.

Early scheduling research mainly focused on production efficiency, using metaheuristic methods such as genetic algorithms and simulated annealing to minimize makespan and balance machine loads [15]. With the rise of green manufacturing, scheduling objectives have expanded to include energy consumption, carbon emissions, and other green factors [4,16]. These methods break the static load assumptions of traditional scheduling models, promoting the coupling of production scheduling with energy management systems.

In recent years, researchers have gradually proposed low-carbon scheduling and multi-objective optimization models aimed at reducing carbon emissions and optimizing production scheduling. For example, Ref. [13] introduced makespan, delay time, bottleneck load rate, and carbon emissions into a low-carbon multi-objective scheduling model and proposed an improved multi-objective evolutionary algorithm (IMOEA/D-HS). Ref. [17] proposed a model based on an improved gray wolf optimization algorithm (SC-GWO) to optimize the weighted sum of carbon emissions and makespan. However, these studies, while effectively reducing carbon emissions, do not account for fluctuations in renewable energy supply or dynamic events on the shop floor.

The introduction of renewable energy offers a new perspective for green FJSP. Research shows that incorporating renewable energy into flexible manufacturing systems not only reduces carbon emissions but also enhances energy efficiency and reduces energy costs [18]. For instance, Ref. [19] proposed an “energy self-sufficient manufacturing system” that integrates renewable distributed energy to replace traditional grid power, reducing grid dependence and electricity costs. Additionally, Ref. [20] introduced distributed energy and energy storage systems into re-entrant hybrid flow shop scheduling, demonstrating energy-saving, carbon reduction, and cost control advantages. These studies show that renewable energy holds significant potential in green manufacturing, but managing its instability in dynamic scheduling while meeting production needs remains a challenge.

2.2. DRL-Related FJSP

With the development of DRL technologies, DFJSPs have gradually become a research hotspot. DRL allows self-learning in dynamic environments, optimizing task allocation, resource scheduling, and responses to unexpected events, thereby enhancing the adaptability and robustness of production systems.

In DFJSP research, DRL methods typically model job scheduling and resource allocation as a Markov decision process (MDP) to achieve decision optimization. Common DRL algorithms include deep Q-networks (DQN), Double DQN (DDQN) [21,22], and proximal policy optimization (PPO), which can effectively handle complex state spaces and high-dimensional action spaces and support high-quality decisions for production scheduling [23,24]. For instance, PPO, known for its stability and support for continuous action spaces, has become a common optimization method in DFJSP. DQN and DDQN, in turn, adjust their action-selection strategies through learned value estimates, effectively reducing job delays and improving resource utilization. Ref. [25] proposed a deep multi-agent reinforcement learning scheduling framework based on DDQN for high-variance dynamic job shops. The framework treats each machine as an independent agent and uses a centralized-training, decentralized-execution approach with parameter sharing to mitigate non-stationarity in the environment. By combining knowledge-driven reward shaping with a scalable state representation, this approach reduces job delays while improving resource utilization.

To address high-dimensional, multi-objective scheduling problems, recent research has also introduced multi-agent DRL into DFJSP. By assigning job scheduling and machine allocation tasks to multiple agents for parallel learning, multi-agent reinforcement learning (MARL) enhances the flexibility and efficiency of scheduling systems, reducing scheduling conflicts and improving overall scheduling performance [26]. For example, Ref. [9] proposed an improved multi-agent PPO (MMAPPO) algorithm to solve the “partial re-entry-dynamic disturbance-multi-objective” hybrid flow shop scheduling problem. This algorithm effectively reduces job delays, lowers energy consumption, and improves resource utilization.

In summary, while DRL has made significant progress in handling dynamic factors (such as processing time variations and equipment failures) in traditional DFJSP, existing studies have not considered the impact of clean energy, particularly the fluctuations of renewable energy, on scheduling. Our research innovatively combines multi-agent reinforcement learning with the dynamic characteristics of renewable energy to address the scheduling challenges posed by energy fluctuations, thus further enhancing the adaptability and energy efficiency of scheduling systems. Table 1 offers a detailed comparison between this study and prior DRL-oriented scheduling research.

Table 1. Some studies on DRL-related flexible job shop scheduling.

2.3. Research Gaps

Based on the review of existing studies, two research gaps can be identified:

- Most existing studies on green FJSP primarily adopt metaheuristic approaches such as genetic algorithms and typically assume that energy supply conditions are stable or exogenously given. Although distributed renewable energy is introduced in [20], the corresponding scheduling decisions remain largely production-oriented, with limited consideration of the temporal variability of renewable energy output. In particular, the energy supply state has not been modeled as an endogenous dynamic factor influencing scheduling decisions. To address this limitation, this study explicitly incorporates renewable energy generation states into the scheduling decision process and develops an energy-aware hierarchical and distributed multi-agent scheduling framework, enabling dynamic coordination between production scheduling and fluctuating renewable energy supply.

- As summarized in Table 1, existing works mainly focus on local dynamic events such as new job arrivals or changes in processing times, and carbon emissions are usually not included as optimization objectives. Meanwhile, under green scheduling settings, production dynamics and energy dynamics often occur simultaneously, whereas the volatility of renewable energy supply is still widely overlooked in the literature. In response to these gaps, this study considers a broader range of dynamic job events together with fluctuating renewable energy supply, incorporates carbon emissions into the optimization objectives, and establishes an energy-aware scheduling model for fully dynamic environments.

3. Problem Formulation

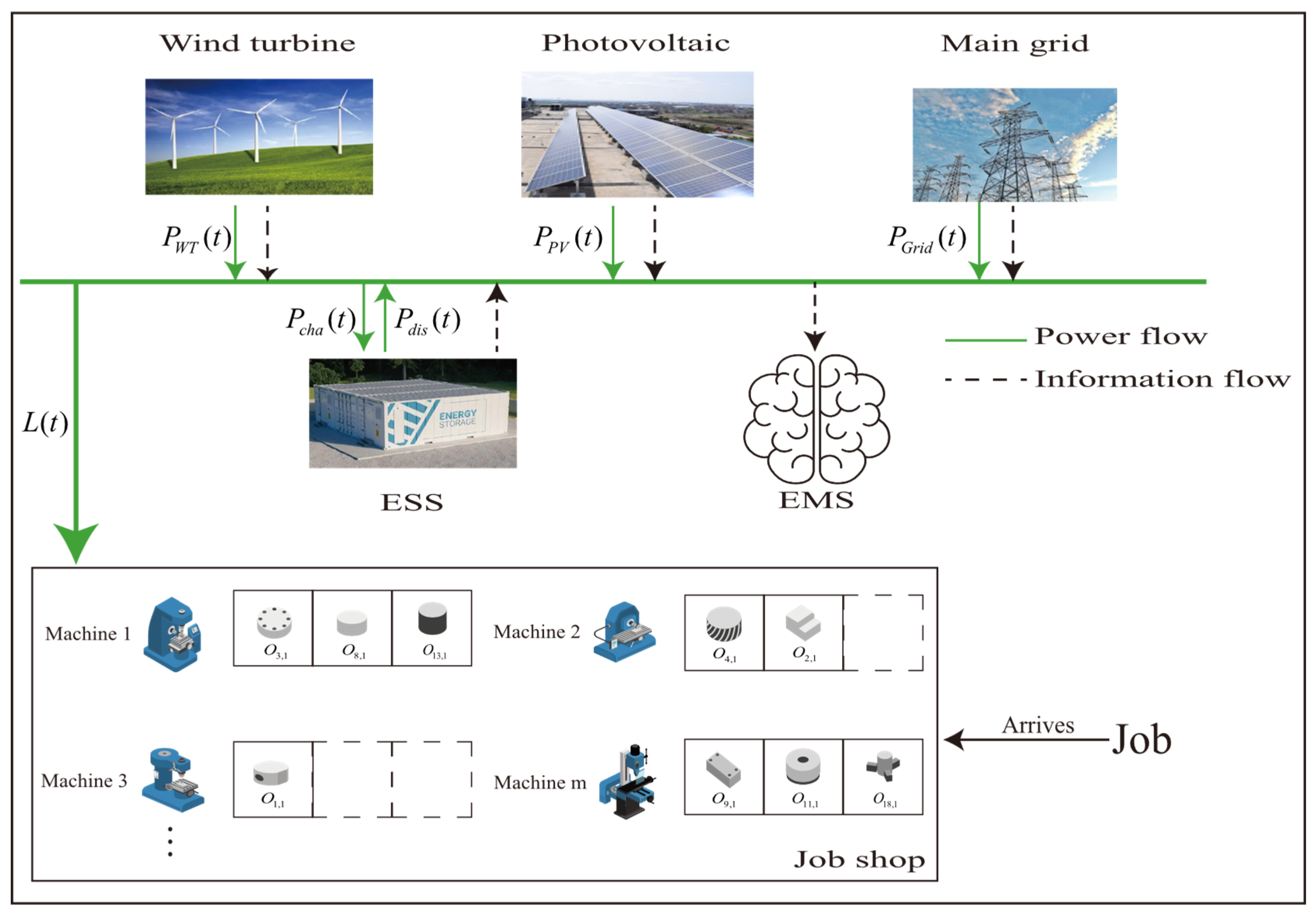

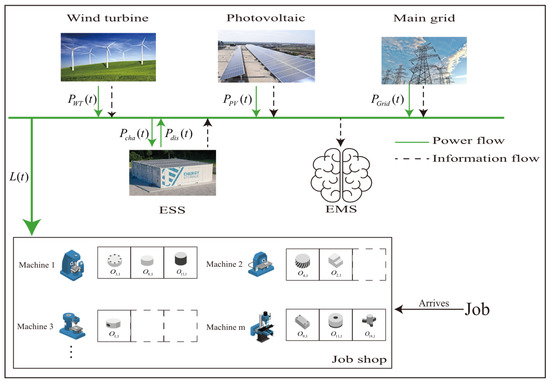

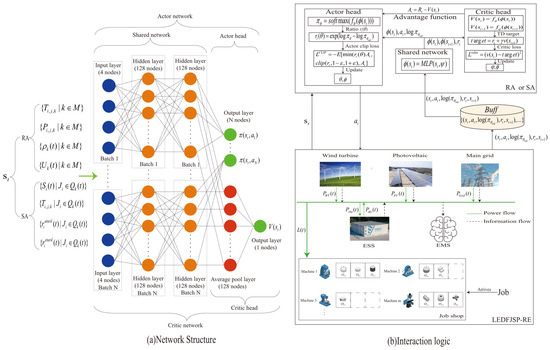

As shown in Figure 1, the LEDFJSP-RE model consists of photovoltaic (PV) units, wind turbines (WT), an energy storage system (ESS), an energy management system (EMS), and a job shop. The job shop, serving as the load unit of the LEDFJSP-RE model, is composed of $m$ heterogeneous machines that process the operations of arriving jobs. Each job consists of multiple operations, and each operation can be processed on any of the $m$ machines, with processing times and energy consumption varying across machines.

Figure 1. LEDFJSP-RE model.

During the production process, the PV and WT units can directly supply energy to the job shop. Let $P_{PV}(t)$ and $P_{WT}(t)$ denote the power outputs of the PV and WT units at time $t$, and let $P_{load}(t)$ represent the load demand of the job shop at time $t$. When $P_{PV}(t) + P_{WT}(t) > P_{load}(t)$, the EMS stores the surplus energy in the ESS. Conversely, when $P_{PV}(t) + P_{WT}(t) < P_{load}(t)$, the ESS is first used to cover the shortage; if the remaining energy in the ESS is insufficient, additional power is purchased from the main grid to meet the load demand. This energy management logic defines the real-time energy balance constraints under which scheduling decisions are executed in the job shop.
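The dispatch priority described above (renewables first, then the ESS, then the main grid) can be sketched as a single time-step function. This is a minimal illustration rather than the paper's EMS: the name `ems_step`, the lossless ESS, the absence of charge and discharge power limits, and the curtailment of surplus beyond ESS capacity are all assumptions made here.

```python
from dataclasses import dataclass


@dataclass
class DispatchResult:
    ess_energy: float       # energy stored in the ESS after the step (kWh)
    grid_power: float       # power purchased from the main grid (kW)
    charge_power: float     # power sent into the ESS (kW)
    discharge_power: float  # power drawn from the ESS (kW)


def ems_step(p_pv, p_wt, p_load, ess_energy, ess_capacity, dt_hours):
    """One EMS step: surplus charges the ESS, shortage drains it before the grid.

    Assumptions (not from the paper): lossless ESS, no power limits,
    and any surplus that does not fit in the ESS is curtailed.
    """
    net = p_pv + p_wt - p_load
    if net >= 0:
        # Surplus: store as much as the remaining ESS headroom allows.
        charge = min(net, (ess_capacity - ess_energy) / dt_hours)
        return DispatchResult(ess_energy + charge * dt_hours, 0.0, charge, 0.0)
    # Shortage: discharge the ESS first, then buy the remainder from the grid.
    shortage = -net
    discharge = min(shortage, ess_energy / dt_hours)
    grid = shortage - discharge
    return DispatchResult(ess_energy - discharge * dt_hours, grid, 0.0, discharge)
```

Charging and discharging never occur in the same step in this sketch, which mirrors the mutual-exclusivity requirement that the model places on the ESS.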

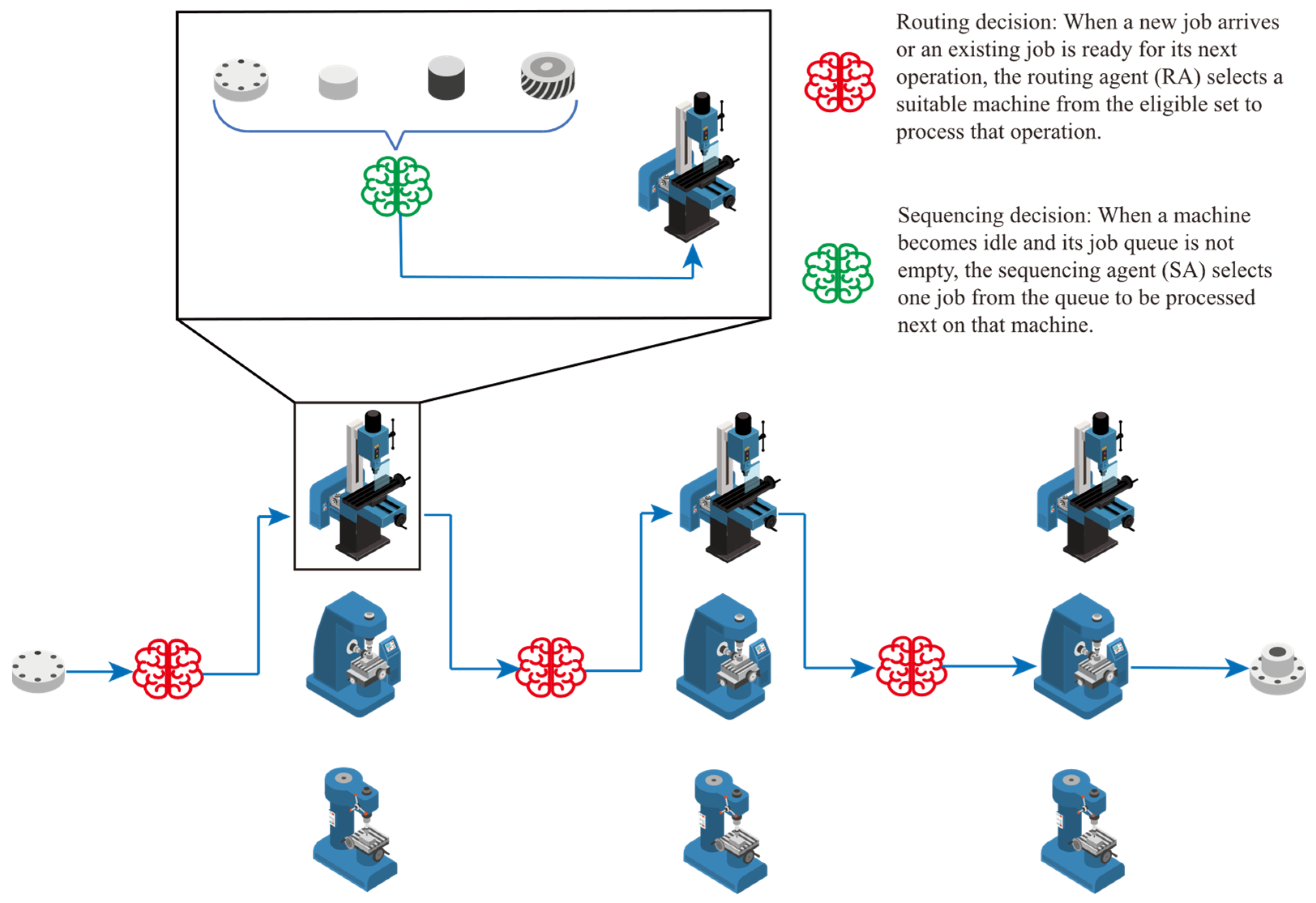

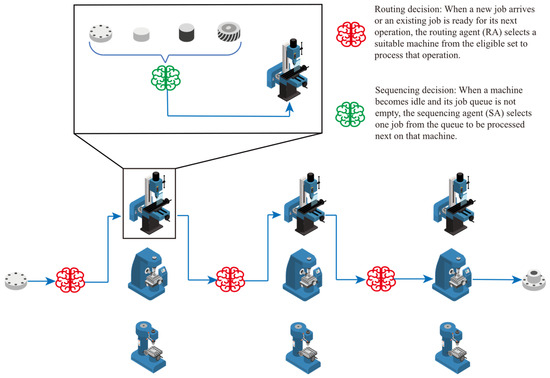

Under this energy structure, different scheduling decisions can significantly affect both the production performance and the energy-related outcomes of the job shop. Therefore, the scheduling system must optimize traditional objectives while simultaneously pursuing low-carbon operation under fluctuating renewable energy supply. To this end, an energy-aware scheduling strategy driven by two cooperative intelligent agents is designed. As shown in Figure 2, the routing agent (RA) determines the assignment of operations to machines, while the sequencing agent (SA) selects the next job to be processed from the waiting queue when a machine becomes idle. At each decision epoch triggered by dynamic events such as job arrivals or machine idle states, the agents observe both production states and energy-related information and generate corresponding scheduling actions. Through this hierarchical decision structure, job routing and sequencing decisions are jointly optimized, enabling the system to dynamically adapt to variations in job arrivals and renewable energy supply while balancing scheduling efficiency and carbon emission control. In this way, the LEDFJSP-RE is formulated as a sequential decision-making problem under coupled production and energy dynamics.

Figure 2. Framework of the routing and sequencing scheduling system.

To reduce modeling complexity and focus on the scheduling and energy-aware decision-making mechanisms, a set of commonly adopted assumptions is introduced in this study, following related research on flexible job shop scheduling and energy-aware production scheduling. Several of these assumptions omit operational constraints that are frequently encountered in practical manufacturing systems but are not central to the decision logic investigated in this work. Neglecting these constraints allows clearer analysis and comparison of scheduling strategies without affecting the core scheduling mechanisms:

- Each machine can process at most one operation at any given time.

- The operations of each job must be executed sequentially according to a predefined technological order.

- Once an operation starts processing, it cannot be interrupted.

- Job transportation times between machines are not considered.

- Finite buffer constraints between consecutive operations are not considered, and unlimited intermediate buffers are assumed.

- Sequence-dependent setup times, such as tool changes or product changeovers, are not considered.

- Random machine failures and maintenance downtime are not taken into account.

- Transitions between idle and processing states of machines are assumed not to incur additional time delays.

These assumptions are widely adopted in the flexible job shop scheduling literature to maintain model tractability while preserving the essential characteristics of scheduling decision-making. Incorporating the omitted constraints into the proposed framework is an important direction for future research.

The parameters and key variables of the LEDFJSP-RE model are presented in Table 2.

Table 2. Notations and descriptions for the LEDFJSP-RE model.

Mathematical Model

Objective functions:

Equations (1)–(4) represent the optimization objectives of this study: makespan, average job tardiness, total carbon emissions, and total energy consumption. In Equation (3), denotes the unit carbon emission coefficient, denotes the power of main grid at time , min. In Equation (4), , min. This study aims to minimize these objectives.

Cost:

Equations (5)–(7) define the total cost model used to evaluate the economic performance of a scheduling solution. In Equation (5), Cost represents the total cost incurred during the scheduling process, which consists of the tardiness cost and the electricity cost . Equation (6) formulates the tardiness cost . Here, and denote the completion time and due date of job , respectively. The term represents the tardiness of job , and the total tardiness is weighted by the unit tardiness penalty coefficient . Equation (7) describes the electricity cost , where denotes the unit electricity price and represents the power purchased from the main grid at time . The electricity cost is obtained by accumulating the grid power consumption over the scheduling horizon with a time interval of .
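The cost structure described above, together with the grid-based carbon accounting of Equation (3), can be illustrated with a short sketch. All function and parameter names are hypothetical, and the sketch assumes a uniform time step `dt` expressed in units consistent with the price and emission coefficients (for example, hours if power is in kW and the price is per kWh).

```python
def carbon_emissions(grid_power, carbon_coeff, dt):
    """Emissions attributed to grid purchases: coefficient x purchased energy."""
    return carbon_coeff * sum(grid_power) * dt


def total_cost(completion, due, grid_power, tardiness_penalty, price, dt):
    """Total cost = tardiness cost + electricity cost.

    completion, due: per-job completion times and due dates (same order).
    grid_power: power bought from the main grid at each time step.
    """
    # Tardiness of a job is max(completion - due, 0); early jobs cost nothing.
    tardiness_cost = tardiness_penalty * sum(
        max(c - d, 0.0) for c, d in zip(completion, due)
    )
    # Electricity cost accumulates purchased power over the horizon.
    electricity_cost = price * sum(grid_power) * dt
    return tardiness_cost + electricity_cost
```

Because only grid purchases enter both the electricity cost and the emissions, shifting load toward periods with renewable surplus lowers both terms at once in this formulation.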

System constraints:

Equations (8)–(17) represent the constraints of this study. Equation (8) indicates that each operation can only be assigned to one machine. If , it means that is unassigned at time . Equation (9) enforces the machine capacity constraint, indicating that two different operations assigned to the same machine cannot be processed simultaneously, and their processing intervals must not overlap. Equation (10) represents the technological precedence constraint, which ensures that the operations of each job are executed sequentially in the predefined order. Equation (11) defines the completion time of each operation as the sum of its start time and the processing time on the selected machine, thereby reflecting the non-preemptive processing assumption. Equation (12) ensures that the power consumption of each machine does not fall below its idle power , reflecting the baseline energy consumption of machines even when they are not actively processing. Equation (13) defines the power balance within the job shop. The total load demand at time must be met by the sum of renewable generation from PV and WT units, the discharging power of ESS, and supplementary power purchased from the main grid, minus the charging power of ESS. Equation (14) describes the state update of ESS. The energy stored in ESS at time , denoted by , depends on the previous energy level, the effective charging power, and the effective discharging power. Equations (15) and (16) set the feasible ranges of charging and discharging power. Equation (17) imposes the operational constraint that ESS cannot charge and discharge simultaneously. The binary variables and are introduced to indicate the charging and discharging states, ensuring mutual exclusivity.
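The production-side constraints (machine capacity, technological precedence, and non-preemptive completion times) lend themselves to a simple feasibility check. The sketch below is illustrative only; the dictionary-based schedule layout and the name `check_schedule` are assumptions, not the paper's data structures.

```python
from collections import defaultdict


def check_schedule(ops):
    """Return True if a schedule respects capacity and precedence.

    ops: list of dicts with keys job, index (position within the job),
    machine, start, duration. Each operation appears exactly once,
    i.e. it is assigned to a single machine.
    """
    by_machine = defaultdict(list)
    by_job = defaultdict(list)
    for op in ops:
        end = op["start"] + op["duration"]  # completion = start + processing time
        by_machine[op["machine"]].append((op["start"], end))
        by_job[op["job"]].append((op["index"], op["start"], end))

    # Machine capacity: intervals on one machine must not overlap.
    for intervals in by_machine.values():
        intervals.sort()
        for (_, e1), (s2, _) in zip(intervals, intervals[1:]):
            if s2 < e1:
                return False

    # Precedence: operation k+1 of a job cannot start before operation k ends.
    for steps in by_job.values():
        steps.sort()
        for (_, _, e1), (_, s2, _) in zip(steps, steps[1:]):
            if s2 < e1:
                return False
    return True
```

Sorting intervals by start time means that checking neighbouring pairs is enough to detect any overlap on a machine.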

4. Proposed Approach

4.1. DRL and PPO

In reinforcement learning (RL), the interaction process between an agent and the environment is typically modeled as a Markov decision process (MDP), which is widely used for sequential decision-making problems. An MDP is usually defined as a five-tuple $(\mathcal{S}, \mathcal{A}, P, R, \gamma)$, where $\mathcal{S}$ is the state space, $\mathcal{A}$ is the action space, $P$ is the state transition probability function, $R$ is the reward function, and $\gamma$ is the discount factor.

At each time step $t$, the agent observes the current state $s_t$, selects an action $a_t$, receives an immediate reward $r_t$, and transitions to the next state $s_{t+1}$. The goal is to learn an optimal policy $\pi^*$ that maximizes the expected cumulative discounted reward $J(\pi)$, as defined in Equation (18):

$$J(\pi) = \mathbb{E}_{\pi}\left[\sum_{t=0}^{T} \gamma^{t} r_{t}\right] \tag{18}$$

where $\gamma \in [0, 1]$. To achieve this objective, policy gradient (PG) methods are widely applied, especially in complex environments with high-dimensional or continuous state-action spaces. PG optimizes the policy by computing the gradient of the expected return with respect to the policy parameters and iteratively updating the policy toward optimality. However, traditional PG methods face challenges in selecting appropriate policy update step sizes, which can lead to instability during training.
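As a small worked illustration of the cumulative discounted reward, the return of a finite reward sequence can be computed by a backward recursion. The function name is hypothetical and the example is generic RL bookkeeping, not code from the paper.

```python
def discounted_return(rewards, gamma):
    """Sum of gamma**t * rewards[t], computed backwards to avoid powers."""
    g = 0.0
    for r in reversed(rewards):
        g = r + gamma * g
    return g


# Three unit rewards with a discount factor of 0.5: 1 + 0.5 + 0.25
print(discounted_return([1.0, 1.0, 1.0], 0.5))  # 1.75
```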

To address this, trust region policy optimization (TRPO) was proposed. It introduces a Kullback–Leibler (KL) divergence constraint between the new and old policies to limit the magnitude of policy updates, thereby improving learning stability [27]. Building on this, PPO further replaces the KL divergence constraint with a clipping mechanism in the objective function, simplifying the optimization process and improving training efficiency and stability in large-scale tasks [28]. Unlike fixed exploration probability strategies, PPO employs a sampling mechanism based on the policy’s probability distribution, allowing it to automatically adjust the exploration intensity during training. This approach notably enhances policy robustness and convergence efficiency, especially in the later stages of learning.

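The optimization objective of PPO is formulated as $L^{CLIP}(\theta)$, as defined in Equation (19):

$$L^{CLIP}(\theta) = \mathbb{E}_{t}\left[\min\left(r_t(\theta)\hat{A}_t,\ \mathrm{clip}\left(r_t(\theta),\, 1-\epsilon,\, 1+\epsilon\right)\hat{A}_t\right)\right] \tag{19}$$

where $r_t(\theta) = \pi_{\theta}(a_t \mid s_t) / \pi_{\theta_{old}}(a_t \mid s_t)$ represents the probability ratio of the new and old policies for the current action, $\epsilon$ is the hyperparameter for the clipping range, and $\hat{A}_t$ is the estimated advantage function, commonly computed via generalized advantage estimation (GAE), as defined in Equation (20):

$$\hat{A}_t = \sum_{l=0}^{\infty} (\gamma\lambda)^{l}\, \delta_{t+l}, \qquad \delta_t = r_t + \gamma V(s_{t+1}) - V(s_t) \tag{20}$$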
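The clipped surrogate objective and GAE can be sketched in a few lines of plain Python. This follows the standard formulations from the PPO and GAE literature rather than the authors' implementation; the function names and the default values of `gamma`, `lam`, and `eps` are assumptions chosen for illustration.

```python
import math


def gae(rewards, values, gamma=0.99, lam=0.95):
    """Generalized advantage estimation over one trajectory.

    values must hold len(rewards) + 1 entries; the last one is the
    bootstrap value of the state reached after the final reward.
    """
    adv = [0.0] * len(rewards)
    running = 0.0
    for t in reversed(range(len(rewards))):
        # One-step temporal-difference error.
        delta = rewards[t] + gamma * values[t + 1] - values[t]
        running = delta + gamma * lam * running
        adv[t] = running
    return adv


def ppo_clip_objective(new_logp, old_logp, adv, eps=0.2):
    """Mean clipped surrogate objective (to be maximized)."""
    total = 0.0
    for lp_new, lp_old, a in zip(new_logp, old_logp, adv):
        ratio = math.exp(lp_new - lp_old)  # new/old action probability
        clipped = min(max(ratio, 1.0 - eps), 1.0 + eps)
        # The minimum removes the incentive to move the ratio outside the clip range.
        total += min(ratio * a, clipped * a)
    return total / len(adv)
```

With a ratio of 2 and `eps = 0.2`, a positive advantage contributes only 1.2 times the advantage, while a negative advantage is penalized with the full ratio; this asymmetry is what limits the size of each policy update.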

In this study, considering the characteristics of the job shop scheduling problem (dynamic state scales, heterogeneous constraints, and long-term dependencies), we adopt a unified PPO-based policy optimization approach to train both RA and SA. This method is well-suited to handling variable input structures and offers strong policy sampling capabilities and convergence stability. By combining the clipping mechanism with advantage function estimation, PPO effectively controls the magnitude of policy updates, preventing policy oscillation and degradation, thereby enabling more efficient scheduling decisions in complex scheduling systems.

4.2. State

Before detailing the state modeling of RA, we introduce the concept of the power purchase ratio, denoted as . The power purchase ratio is defined as the ratio of actual purchased power to the total energy consumption, serving as a key indicator for the system to perceive changes in energy availability. The power purchase ratio aggregates the expected dependence of a job–machine assignment on grid electricity, reflecting the overall balance between energy demand and renewable supply during the upcoming operation.

The state of RA is composed of four categories of information sets, detailed as follows:

- The set of estimated utilization rates for all machines in the system.

- The set of estimated processing times of job on each machine.

- The set of estimated processing power consumptions of job on each machine.

- The set of estimated power purchase ratios of job on each machine.

We first define the expected utilization rate of at scheduling time t as , as defined in Equation (21):

where denotes the estimated idle time of machine at rescheduling time . It is important to note that the machine utilization rate calculated here is forward-looking, taking into account the jobs in the machine’s queue that have not yet started processing.

We further define the expected energy consumption of the system at rescheduling time as , as defined in Equation (22):

where denotes the power consumption of machine at time . If , then represents the actual power consumption, which includes both idle and processing power, depending on the machine’s operational state. For instance, if is processing operation at time , then . If , then represents the expected power, which is estimated based on the job queue . Jobs are assumed to be processed sequentially according to the queue, and the corresponding power usage is projected accordingly.

Furthermore, to characterize the energy consumption and carbon emissions associated with assigning a job to different machines, we define the expected total power purchase rate when is assigned to , as defined in Equation (23):

where denotes the estimated maximum machine idle end time across all machines; the numerator represents the expected amount of electricity to be purchased from the grid over the upcoming processing period—i.e., the net power demand, calculated as the machine’s power requirement minus the available renewable energy supply, and the denominator corresponds to the expected energy consumption required to process the assigned job.
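The verbal definition of the power purchase ratio (expected grid purchase divided by expected energy consumption for a candidate assignment) can be illustrated as follows. This sketch makes several simplifying assumptions that are not taken from the paper: the machine draws a constant power while processing, `renewable_forecast` is the renewable power left over for this operation at each step, net demand is floored at zero, and ESS contributions are ignored.

```python
def power_purchase_ratio(machine_power, renewable_forecast, dt):
    """Share of an operation's energy expected to come from the grid.

    machine_power: power drawn while processing (kW), assumed constant.
    renewable_forecast: available renewable power per time step (kW),
    one entry for each step of the processing window.
    dt: length of a time step.
    """
    # Net demand: machine requirement minus renewable supply, never negative.
    purchased = sum(max(machine_power - r, 0.0) for r in renewable_forecast) * dt
    consumed = machine_power * len(renewable_forecast) * dt
    return purchased / consumed if consumed > 0 else 0.0
```

A ratio near 0 indicates that the assignment can be served almost entirely by renewable supply, whereas a ratio near 1 indicates heavy reliance on grid electricity.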

Although short-term renewable variations are not explicitly included in the state, the use of still enables adaptive behavior. When renewable output drops, the effective grid purchase for certain assignments increases, leading to lower rewards under the grid-purchase penalty. Through training, the routing agent (RA) learns to avoid such assignments and favor those with lower . Thus, RA responds to renewable fluctuations implicitly without relying on high-frequency renewable measurements. This design is consistent with existing multi-agent scheduling studies that use aggregated process-level indicators to maintain tractability while preserving sensitivity to dynamic energy conditions [29,30].

The state of RA is modeled as a matrix with dimensions $4 \times m$, where each row corresponds to one of the four categories of information described above, and each column represents a specific machine. The detailed composition of the state set is presented in Table 3.

Table 3. State features for RA.
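As a rough illustration of how the four feature sets form the RA state, the sketch below stacks them into a matrix with one row per feature category and one column per machine. The function name and the list-of-lists representation are assumptions; the paper's actual feature scaling and tensor layout are not reproduced here.

```python
def build_ra_state(utilization, proc_times, proc_power, purchase_ratios):
    """Stack four per-machine feature lists into a 4 x m state matrix."""
    rows = [utilization, proc_times, proc_power, purchase_ratios]
    m = len(utilization)
    if any(len(row) != m for row in rows):
        raise ValueError("every feature row needs one entry per machine")
    # Row order follows the four categories listed for the RA state.
    return [[float(x) for x in row] for row in rows]


state = build_ra_state([0.5, 0.8], [10, 12], [3, 4], [0.2, 0.0])
print(len(state), len(state[0]))  # 4 2
```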

We assign one SA to each machine in the system. The state information for each SA also consists of four categories:

- The set of processing times for each job.

- The set of slack times for each job.

- The set of expected tardiness rates for each job.

- The set of actual tardiness rates for each job.

The slack time of at time is defined as the ratio between its remaining time until the due date and the estimated remaining processing time, as defined in Equation (24):

where is the estimated processing time of operation .
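The slack definition above, a ratio of remaining time to remaining work, can be sketched as a small helper. The function name and the handling of a job with no remaining work are assumptions made for this illustration.

```python
def slack_ratio(due_date, now, remaining_proc_times):
    """Remaining time until the due date divided by estimated remaining work.

    remaining_proc_times: estimated processing times of the job's
    operations that have not been completed yet.
    """
    remaining_work = sum(remaining_proc_times)
    if remaining_work <= 0:
        # Assumption: a job with no remaining work is treated as unconstrained.
        return float("inf")
    return (due_date - now) / remaining_work


print(slack_ratio(100.0, 40.0, [10.0, 20.0]))  # 2.0
```

Under this definition, a value below 1 means the job would miss its due date even if processed without any further waiting, and a negative value means the due date has already passed.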

To calculate the tardiness rate, we first introduce the tardiness indicator function: . During each decision-making step, SA on selects a job from the queue and places it at the front, forming a new job queue: . For each job in the new queue , its waiting time is calculated as , as defined in Equation (25):

Based on this, we define the expected tardiness rate and the actual tardiness rate of the selected job , as defined in Equations (26) and (27), respectively:

In the actual scheduling process, the job queue on each machine dynamically evolves as jobs arrive and are completed. Accordingly, the state of the sequencing agent (SA) is explicitly modeled as a job-level matrix with dimensions $4 \times n_k$, where $n_k$ denotes the current number of jobs waiting in the queue of machine $M_k$, and each column corresponds to the feature vector of one queued job. The four state features describe the dynamic attributes of each job and are detailed in Table 4.

Table 4. State features for SA.

4.3. Action

The action space of RA is a discrete set representing the candidate machines to which the current operation can be assigned. If there are $m$ machines available in the system, the action space is defined as $A_{RA} = \{1, 2, \dots, m\}$, where each action $a \in A_{RA}$ corresponds to assigning the current operation to the machine with index $a$.

For SA on machine $M_k$, the action space consists of the indices of all jobs waiting in the current queue. It is defined as $A_{SA}^{k} = \{1, 2, \dots, n_k\}$, where $n_k$ is the current queue length and each action selects the job at the corresponding position in the queue for processing next.
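Because the number of candidate actions changes with the number of machines or queued jobs, one common way to handle a variable-length action set is to score each candidate and normalize the scores with a softmax. The sketch below assumes a hypothetical policy network that has already produced one score per candidate; it illustrates the sampling step only and is not the authors' network design.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of any length."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def select_action(scores, rng=None, greedy=False):
    """Pick a candidate index from per-candidate scores.

    greedy=True returns the highest-probability candidate (evaluation);
    otherwise the index is sampled from the policy distribution (training).
    """
    probs = softmax(scores)
    if greedy:
        return max(range(len(probs)), key=probs.__getitem__)
    rng = rng or random.Random()
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]
```

The returned index is zero-based, so it maps to a machine index for RA or a queue position for SA after adding one. Sampling during training gives the policy-driven exploration attributed to PPO, while the greedy mode suits deployment.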

4.4. Reward

To guide RA to make decisions that balance energy efficiency and scheduling quality whenever an operation arrives in the system, we design an immediate reward feedback mechanism based on the state-action pair. The instant reward for RA upon completing an action is defined as the sum of three components, as defined in Equation (28):

Firstly, RA is encouraged to prioritize jobs with lower power purchase ratios. The corresponding reward is defined as in Equation (29):

In addition to controlling the power purchase intensity, further reducing the processing energy consumption of jobs also helps decrease the total purchased electricity, thereby indirectly enhancing the utilization of clean energy and reducing carbon dioxide emissions. Therefore, we introduce a reward component based on energy consumption deviation. This term compares the average processing energy consumption of the target operation across all machines with its processing energy consumption on the currently selected machine, and is defined as in Equation (30):

The third component concerns machine utilization. Based on the average expected machine utilization before and after the current scheduling action, the machine utilization reward deviation term is defined as in Equation (31):

SA is triggered when its machine becomes idle and selects a job from the queue to process. To guide SA to consider both scheduling urgency and queue disruption risk when selecting jobs, we formulate the immediate reward function defined in Equation (32):

where the slackness guidance term is used to encourage prioritizing jobs with smaller slack times. Consider the normalized slack time of each job and the set of normalized slack times in the queue. If the currently selected job satisfies:

then the slackness reward is defined as in Equation (34):

Here, a tolerance threshold for slackness is used. The values 0.7 and −0.1 are assigned to establish slackness as the dominant priority signal in the reward structure. A relatively strong positive value (0.7) ensures that jobs with the smallest slack are consistently prioritized, whereas a mild penalty (−0.1) discourages non-urgent selections without destabilizing learning. The magnitude difference reflects the importance of slackness while allowing sufficient exploration.

Consider likewise the normalized processing time of each job and the set of normalized processing times. If, within the slackness neighborhood, the currently selected job’s processing time satisfies:

then the processing time reward is defined as in Equation (36):

Here, a tolerance threshold for processing time is used. The processing time reward is intentionally smaller than the slack reward (0.7), reflecting that shorter processing time is a secondary preference used only to refine decisions within the urgent-job neighborhood. The zero reward for non-qualifying jobs avoids imposing unnecessary negative signals and helps maintain policy diversity.

The expected and actual tardiness interference terms are defined in Equations (37) and (38), respectively:

The penalty magnitudes follow a rational hierarchy: a mild penalty (−0.1) is applied to expected tardiness, as it is an estimated risk, whereas a stronger penalty (−0.3) is applied to actual tardiness, which directly harms scheduling performance. Keeping both penalties moderate avoids destabilizing the learning dynamics while ensuring that the agent consistently avoids tardiness-inducing decisions.
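The structure of the SA reward described above can be sketched as follows. This is an illustrative reading only: the additive combination, the form of the two neighborhood tests, the tolerance values, the processing time reward value `r_proc`, and the boolean tardiness flags are all assumptions. Only the magnitudes 0.7, −0.1 (slack), −0.1 (expected tardiness), and −0.3 (actual tardiness) come from the text.

```python
def sa_reward(idx, slack, proc, exp_tardy, act_tardy,
              eps_slack=0.1, eps_proc=0.1, r_proc=0.3):
    """Illustrative SA reward for selecting the job at queue position idx.

    slack, proc: normalized slack and processing times of the queued jobs.
    exp_tardy, act_tardy: whether the choice triggers the expected or the
    actual tardiness penalty (how this is detected is not modeled here).
    eps_slack, eps_proc, r_proc: hypothetical values, not from the paper.
    """
    # Jobs whose slack lies within the tolerance of the smallest slack.
    min_slack = min(slack)
    urgent = [i for i, s in enumerate(slack) if s - min_slack <= eps_slack]

    # Slackness term: strong reward inside the neighborhood, mild penalty outside.
    r_slack = 0.7 if idx in urgent else -0.1

    # Processing time term: only refines choices inside the urgent neighborhood.
    r_pt = 0.0
    if idx in urgent:
        best_proc = min(proc[i] for i in urgent)
        if proc[idx] - best_proc <= eps_proc:
            r_pt = r_proc

    # Tardiness interference terms.
    r_exp = -0.1 if exp_tardy else 0.0
    r_act = -0.3 if act_tardy else 0.0
    return r_slack + r_pt + r_exp + r_act
```

With `slack = [0.0, 0.5, 1.0]`, selecting position 0 earns both positive terms, whereas selecting position 2 while causing both kinds of tardiness accumulates all three penalties.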

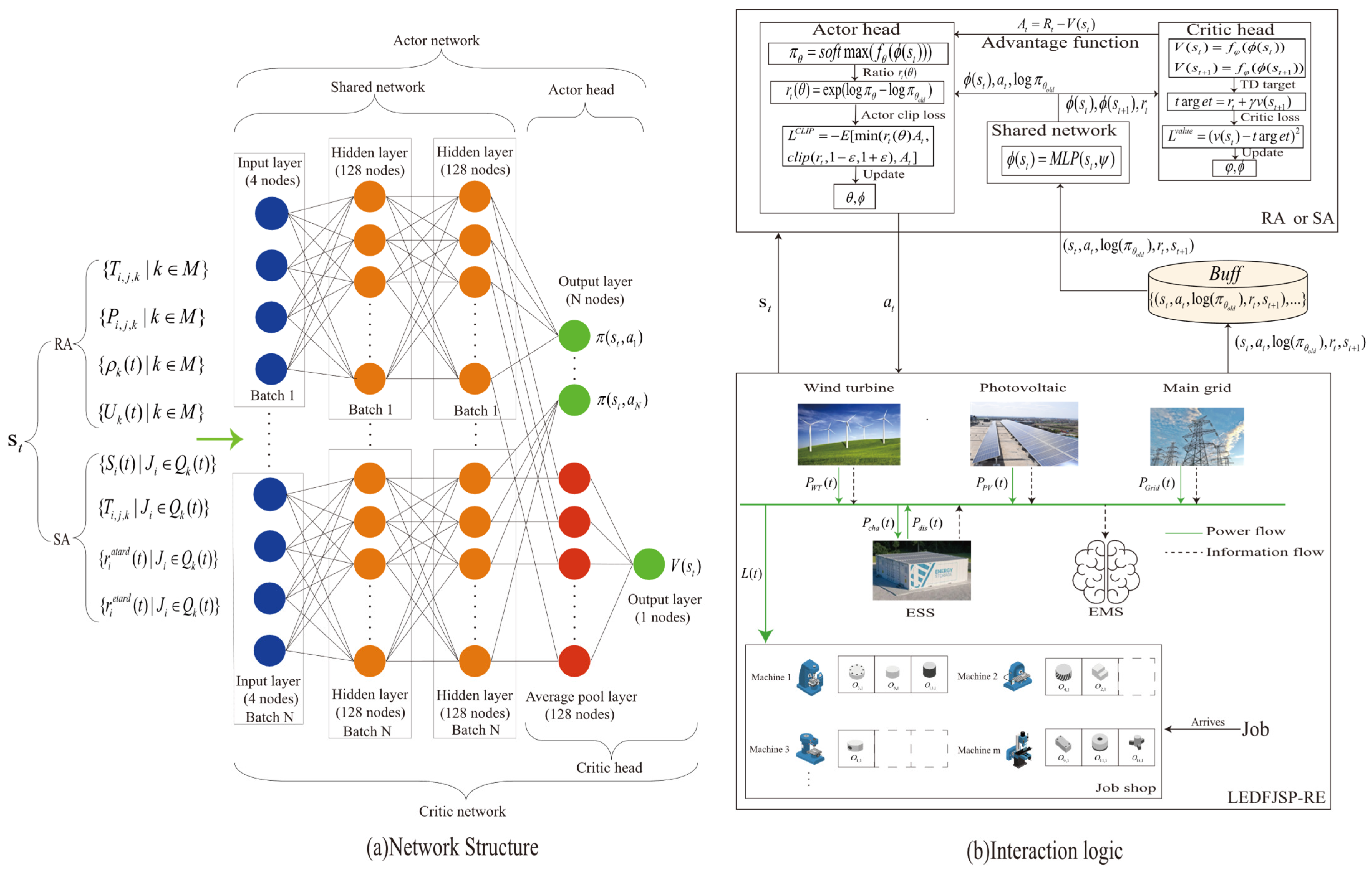

4.5. Process and Structure of Network

To address the dynamic job queue length faced by SA at each decision point, we design a variable-length job feature sequence-based policy modeling approach (this method is also applicable to RA). As shown in Figure 3, both RA and SA adopt the same Actor–Critic architecture and train their scheduling policies based on the PPO algorithm.

Figure 3.

Process and structure of the network.

This architecture consists of two subnetworks: the Actor network and the Critic network. At each scheduling decision point $t$, the agent receives the current system state $s_t$, a variable-length feature sequence, as input. The policy network outputs the probability distribution over all candidate actions, from which an action $a_t$ is sampled. After executing $a_t$, the agent obtains an immediate reward $r_t$ and transitions to the next state $s_{t+1}$.

Simultaneously, we record $\log \pi_{\theta_{\text{old}}}(a_t \mid s_t)$, the log-probability of the chosen action under the old policy. This is crucial because in PPO the policy ratio used for updates is $\pi_{\theta}(a_t \mid s_t) / \pi_{\theta_{\text{old}}}(a_t \mid s_t)$, where $\pi_{\theta_{\text{old}}}$ is the policy used to sample the current action. Hence, this log-probability must be stored at action sampling time so that the ratio can be computed during subsequent training. The resulting transition tuple is recorded and stored in a centralized replay buffer as the data foundation for future policy updates. We compute the advantage vector to update the Actor network and use the mean squared error loss between the return and the state value estimate to update the Critic network.
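The role of the stored log-probability can be illustrated with a minimal, dependency-free sketch of the clipped PPO objective. It assumes a simple advantage estimate (discounted return minus value estimate), since the advantage estimator is not specified here. The function names are hypothetical, and the clipping ratio of 0.2 follows the setting reported in Section 5.3.

```python
import math


def discounted_returns(rewards, gamma):
    """Discounted return G_t for every step of one episode."""
    returns, g = [], 0.0
    for r in reversed(rewards):
        g = r + gamma * g
        returns.append(g)
    return returns[::-1]


def ppo_losses(new_logp, old_logp, returns, values, clip=0.2):
    """Clipped surrogate actor loss and MSE critic loss.

    old_logp holds the log-probabilities stored at sampling time;
    new_logp holds the log-probabilities under the policy being updated.
    """
    # Assumed advantage estimate: return minus critic value.
    advantages = [g - v for g, v in zip(returns, values)]

    surrogate = []
    for lp_new, lp_old, adv in zip(new_logp, old_logp, advantages):
        ratio = math.exp(lp_new - lp_old)            # pi_new / pi_old
        clipped = min(max(ratio, 1.0 - clip), 1.0 + clip)
        surrogate.append(min(ratio * adv, clipped * adv))

    actor_loss = -sum(surrogate) / len(surrogate)
    critic_loss = sum((g - v) ** 2 for g, v in zip(returns, values)) / len(values)
    return actor_loss, critic_loss
```

When the new and old policies coincide, the ratio is 1 and the actor loss reduces to the negative mean advantage. Clipping only takes effect once the updated policy drifts away from the policy that generated the data.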

The entire neural network consists of three main components:

- Shared network: This part consists of two fully connected layers with 128 nodes each, both using the ReLU activation function. It extracts state features and constructs a unified feature embedding. This module is shared between the Actor and Critic networks to enhance representation consistency and training efficiency.

- Actor head: After obtaining the embedding for each state feature, a linear transformation layer (dimension 128 → 1) generates a score for each feature. Then, a Softmax function normalizes these scores into a probability distribution, representing the action-selection policy under the current state.

- Critic head: First, average pooling is applied to all state feature embeddings to obtain a compact representation of the global state. Then, a linear layer (128 → 1) estimates the value function of this state.
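The three components above can be sketched as a forward pass over a variable-length candidate set. The sketch uses NumPy rather than the PyTorch implementation reported in Section 5.3, omits training, and uses random weights. The class name, the weight initialization, and the row-per-candidate layout (the transpose of the column-per-job state matrix) are assumptions made for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)


def linear(in_dim, out_dim):
    """Randomly initialized weight matrix and zero bias (illustrative only)."""
    return rng.normal(0.0, 0.1, size=(in_dim, out_dim)), np.zeros(out_dim)


class ActorCriticSketch:
    def __init__(self, feat_dim=4, hidden=128):
        self.l1 = linear(feat_dim, hidden)   # shared layer 1
        self.l2 = linear(hidden, hidden)     # shared layer 2
        self.actor = linear(hidden, 1)       # one score per candidate
        self.critic = linear(hidden, 1)      # value of the pooled state

    def forward(self, state):
        """state: array of shape (n_candidates, feat_dim); n may vary per call."""
        h = np.maximum(state @ self.l1[0] + self.l1[1], 0.0)   # ReLU
        h = np.maximum(h @ self.l2[0] + self.l2[1], 0.0)       # ReLU

        # Actor head: score each candidate, then softmax over candidates.
        scores = (h @ self.actor[0] + self.actor[1]).ravel()
        exp_scores = np.exp(scores - scores.max())
        probs = exp_scores / exp_scores.sum()

        # Critic head: average-pool the embeddings, then a linear value layer.
        pooled = h.mean(axis=0)
        value = float((pooled @ self.critic[0] + self.critic[1])[0])
        return probs, value


net = ActorCriticSketch()
probs_3, value_3 = net.forward(rng.random((3, 4)))   # queue with 3 jobs
probs_7, value_7 = net.forward(rng.random((7, 4)))   # queue with 7 jobs
```

Because the same per-candidate layers are applied to every row and the critic pools over rows, a single set of weights handles queues of any length, which is the property the variable-length design relies on.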

Algorithm 1 presents the details of the PPO algorithm.

Algorithm 1: PPO algorithm

5. Numerical Experiments

This section presents the experimental design and performance evaluation of the proposed framework. Specifically, Section 5.1 describes the parameter settings of the numerical test instances, followed by the evaluation metrics for multi-objective optimization in Section 5.2. The training details of the RA and SA are then introduced in Section 5.3. Section 5.4 and Section 5.5 report the experimental results on static and dynamic instances, respectively, including comparisons with rule-based heuristics as well as representative DRL-based and evolutionary scheduling methods. Finally, Section 5.6 provides a case study to analyze the scheduling behaviors of different methods in energy-aware scheduling scenarios.

5.1. Parameters of Numerical Instances

Following [31], we randomly generate numerical simulation instances to train and evaluate the proposed RA and SA. Job inter-arrival times are assumed to follow an exponential distribution whose mean is the average inter-arrival time of new jobs. Each job consists of multiple operations, and the number of operations is randomly sampled within a predefined range to represent manufacturing tasks of varying complexity.

Each operation can be processed on a set of candidate machines. To capture heterogeneous processing capabilities and energy consumption differences, the processing time and power consumption of each operation on each machine are randomly generated. Additionally, the idle power of each machine is assigned varying values to highlight the scheduling challenges under energy constraints. The due date of each job is calculated by scaling the total average processing time of its operations with a tightness factor and adding the current system time offset, creating a mix of high-urgency and low-urgency tasks:

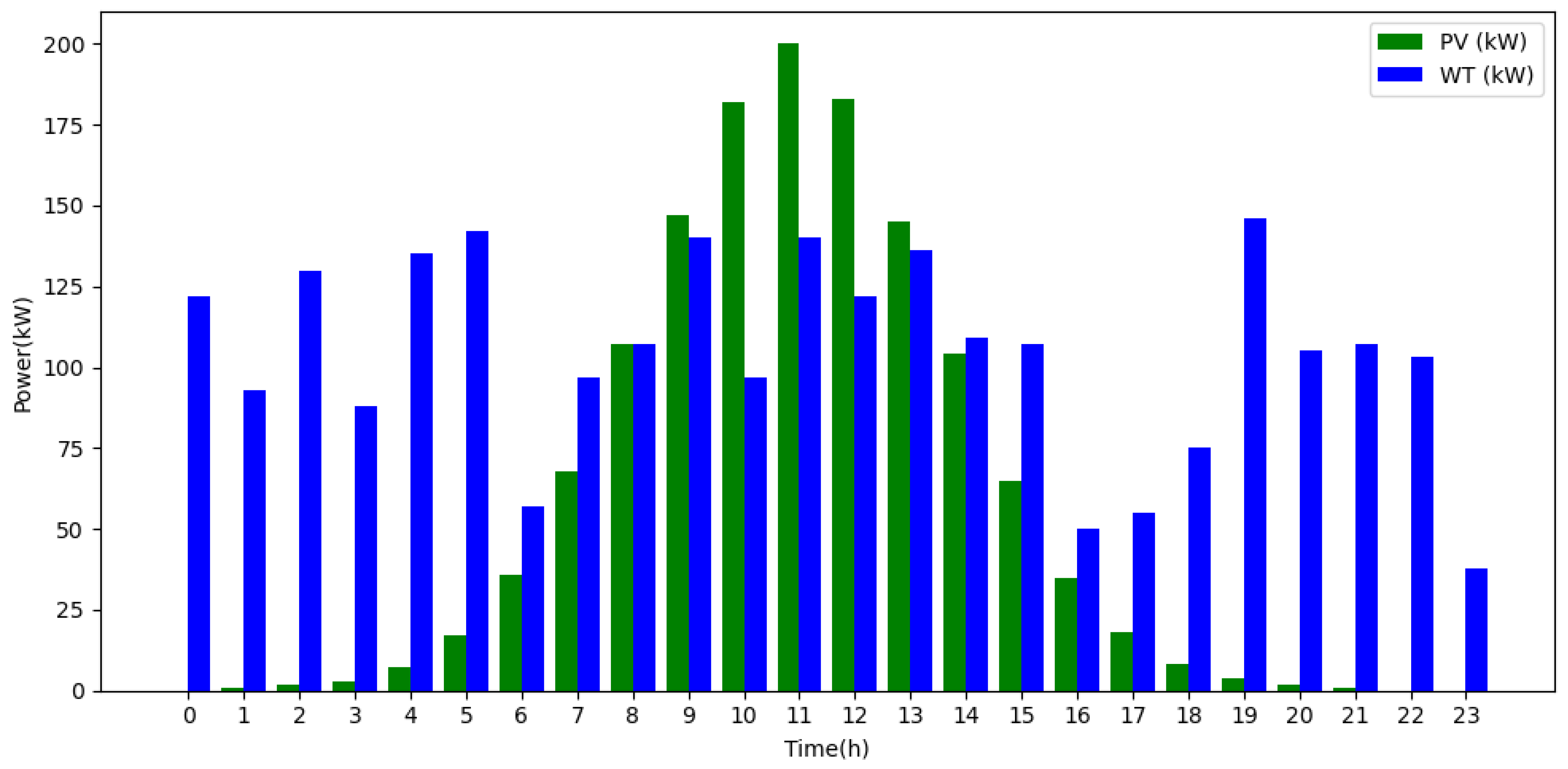

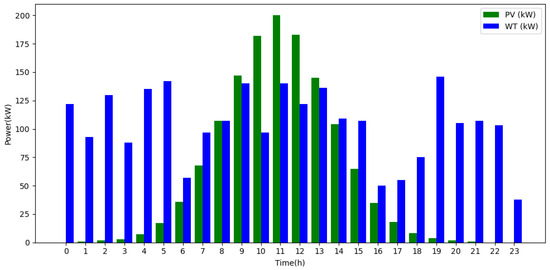
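The instance generation steps described above (exponential inter-arrival times and due dates scaled by a tightness factor) can be sketched as follows. The function names and the nested-list layout of processing times are assumptions; the seed value 42 mirrors the seed reported for training but is otherwise arbitrary here.

```python
import random


def sample_arrival_times(n_jobs, mean_interarrival, seed=42):
    """Cumulative arrival times with exponential inter-arrival gaps."""
    rng = random.Random(seed)
    t, times = 0.0, []
    for _ in range(n_jobs):
        # expovariate takes the rate, i.e. 1 / mean inter-arrival time.
        t += rng.expovariate(1.0 / mean_interarrival)
        times.append(t)
    return times


def due_date(arrival_time, proc_times, tightness):
    """Arrival time plus tightness times the total average processing time.

    proc_times[j] lists the processing times of operation j on each of its
    candidate machines (hypothetical data layout).
    """
    total_avg = sum(sum(op) / len(op) for op in proc_times)
    return arrival_time + tightness * total_avg


arrivals = sample_arrival_times(5, mean_interarrival=10.0)
# Two operations: averages 3.0 and 6.0, so total average work is 9.0.
d = due_date(10.0, [[2.0, 4.0], [6.0]], tightness=1.5)   # 10 + 1.5 * 9
```

A smaller tightness factor yields due dates closer to the arrival time and therefore more urgent jobs.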

Regarding energy modeling, the outputs of PV and WT are subject to natural variability and are modeled as stochastic time series. Wind speed follows a Weibull distribution [32], and the wind power output is expressed as a function of the actual wind speed, the rated wind speed, the cut-in and cut-out speeds, and the rated output power.

PV power generation primarily depends on solar irradiance and panel efficiency [33], and can be approximated as $P_{PV} = A \cdot G \cdot \eta$, where $A$ is the PV panel area, $G$ is the solar irradiance, and $\eta$ is the PV conversion efficiency. The daily outputs of PV and WT over 24 h are illustrated in Figure 4 [32] and serve as input for scheduling simulations.

Figure 4.

Energy output of PV and WT in 24 h.
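A minimal sketch of the two generation models follows. The wind curve uses a piecewise-linear ramp between the cut-in and rated speeds, which is one common simplification and an assumption here (cubic ramps are also widely used, and the exact form is not recoverable from the text). The function names, the Weibull parameters, and all numeric inputs are illustrative.

```python
import random


def sample_wind_speed(scale, shape, rng):
    """Draw one wind speed from a Weibull distribution."""
    return rng.weibullvariate(scale, shape)


def wind_power(v, v_in, v_rated, v_out, p_rated):
    """Turbine output for wind speed v (assumed piecewise-linear ramp)."""
    if v < v_in or v > v_out:
        return 0.0                                    # below cut-in or above cut-out
    if v < v_rated:
        return p_rated * (v - v_in) / (v_rated - v_in)
    return p_rated                                    # rated region


def pv_power(area, irradiance, efficiency):
    """PV output as panel area x solar irradiance x conversion efficiency."""
    return area * irradiance * efficiency


rng = random.Random(0)
speed = sample_wind_speed(scale=8.0, shape=2.0, rng=rng)
wt_out = wind_power(speed, v_in=3.0, v_rated=12.0, v_out=25.0, p_rated=100.0)
pv_out = pv_power(area=10.0, irradiance=800.0, efficiency=0.2)
```

Evaluating both functions hour by hour with sampled wind speeds and an irradiance profile would yield 24 h supply curves of the kind used as simulation input.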

The value ranges and generation methods of all parameters are summarized in Table 5, which uses both uniform integer sampling and uniform floating-point sampling. This instance generation framework simulates dynamic job arrivals, heterogeneous machine capabilities, and renewable energy fluctuations, providing a basis for training and evaluating multi-agent scheduling strategies.

Table 5.

Parameter settings of training and testing benchmarks.

5.2. Evaluation Metrics

To evaluate the overall performance of RA and SA in the multi-objective scheduling task, we adopt three comprehensive performance metrics: Generational Distance (GD) [34], Inverted Generational Distance Plus (IGD+) [35], and Hypervolume (HV) [36]. Among these, GD measures the convergence of the solution set. IGD+ evaluates both convergence and distribution uniformity, using a modified distance calculation to more accurately reflect the quality of the solution set relative to the reference Pareto front. HV indicates the dominance extent and distribution quality of the solution set within the objective space.

Before calculating these metrics, all solution sets are normalized using min-max scaling.

(1) GD

GD measures the average distance from the obtained solution set to the true Pareto front, as defined in Equation (42). The distance used is the Euclidean distance between each solution and its closest point on the reference Pareto front. A smaller GD value indicates that the solution set is closer to the true Pareto front.

(2) IGD+

IGD+ is a commonly used comprehensive metric in multi-objective optimization for evaluating the quality of a solution set, taking into account both convergence and distribution uniformity, as defined in Equation (43). It is computed from the solution set to be evaluated, the reference Pareto front, and a direction-sensitive modified distance function. This function only accounts for the distance in objectives where the solution is worse than the reference point, thereby emphasizing the penalty for solutions that are dominated relative to the Pareto front.

Compared to GD, which measures from the solution set’s perspective, IGD+ is based on the reference Pareto front, allowing it to comprehensively assess both how well the current solution set approximates and covers the true Pareto boundary. A smaller IGD+ value indicates that the solution set is more uniformly distributed along the reference front and closer to the Pareto optimum, reflecting better overall performance.
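The difference between the two distance-based metrics can be made concrete with a short sketch for minimization problems. GD is implemented here as the plain mean of nearest-neighbor Euclidean distances, which is one common variant and may differ from Equation (42) in its exponent. The function names are assumptions, and the normalization helper assumes all compared sets are scaled jointly.

```python
import math


def min_max_normalize(points):
    """Scale every objective to [0, 1] over the given points."""
    lo = [min(col) for col in zip(*points)]
    hi = [max(col) for col in zip(*points)]
    return [[(x - l) / (h - l) if h > l else 0.0
             for x, l, h in zip(p, lo, hi)] for p in points]


def gd(solutions, reference):
    """Mean distance from each solution to its closest reference point."""
    dists = [min(math.dist(a, z) for z in reference) for a in solutions]
    return sum(dists) / len(dists)


def igd_plus(solutions, reference):
    """Mean modified distance from each reference point to the solution set."""
    def d_plus(a, z):
        # Only objectives where the solution is worse than z contribute.
        return math.sqrt(sum(max(ai - zi, 0.0) ** 2 for ai, zi in zip(a, z)))

    dists = [min(d_plus(a, z) for a in solutions) for z in reference]
    return sum(dists) / len(dists)


reference_front = [[0.0, 1.0], [1.0, 0.0]]
obtained = [[0.0, 1.0], [1.0, 1.0]]
gd_value = gd(obtained, reference_front)
igd_plus_value = igd_plus(obtained, reference_front)
```

Note the change of perspective: `gd` loops over the obtained solutions, whereas `igd_plus` loops over the reference front, so a solution set that misses part of the front is penalized by IGD+ even if every individual solution lies close to the front.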

(3) HV

HV measures the volume covered by the solution set in the objective space and is defined as in Equation (44). The volume is measured relative to a reference point (typically chosen beyond the maximum values of all objectives in the solution set), and its dimension equals the number of objectives. A larger HV value indicates that the solution set dominates a larger region in the objective space, reflecting higher solution quality.
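For intuition, the hypervolume can be computed exactly with a simple sweep when there are only two minimization objectives. The sketch below is restricted to that two-objective case as an illustrative simplification; the problem studied here has more objectives, for which dedicated algorithms or libraries are normally used. The function name is an assumption.

```python
def hypervolume_2d(points, ref):
    """Area dominated by `points` and bounded by `ref` (both objectives minimized)."""
    # Keep only points that are strictly better than the reference point.
    pts = sorted(p for p in points if p[0] < ref[0] and p[1] < ref[1])

    hv, best_f2 = 0.0, ref[1]
    for f1, f2 in pts:
        # A point adds area only if it improves the second objective
        # over every point with a smaller first objective.
        if f2 < best_f2:
            hv += (ref[0] - f1) * (best_f2 - f2)
            best_f2 = f2
    return hv


front = [(1.0, 3.0), (2.0, 2.0), (3.0, 1.0)]
area = hypervolume_2d(front, ref=(4.0, 4.0))
# Adding a dominated point leaves the hypervolume unchanged.
area_with_dominated = hypervolume_2d(front + [(3.0, 3.0)], ref=(4.0, 4.0))
```

The three-point front covers rectangles of area 3, 2, and 1 relative to the reference point (4, 4), giving a total of 6.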

5.3. The Training Details of RA and SA

We first present the well-known routing and sequencing rules in Table 6 and Table 7. In this study, we adopt a separated asynchronous learning architecture: RA is independently trained using the PPO method under the FIFO sequencing baseline rule, while SA is independently trained using PPO under the EA routing baseline rule. Both agents are trained in a job shop with 10 machines. The training simulates 100 new job insertions, with the urgency factor for each job set to 1.

Table 6.

Benchmark routing rules.

Table 7.

Benchmark sequencing rules.

The training tasks are implemented using the SimPy discrete-event simulation framework (SimPy 4.0.1) [37] and executed on a locally configured computing platform equipped with an Intel i7 processor, 16 GB RAM, and an NVIDIA RTX 3060 GPU. To ensure experimental reproducibility, the Python environment (Python 3.9.0) and deep learning framework (PyTorch 2.1) are explicitly specified, a fixed random seed (seed = 42) is applied to all training runs, and the entire training duration is recorded.

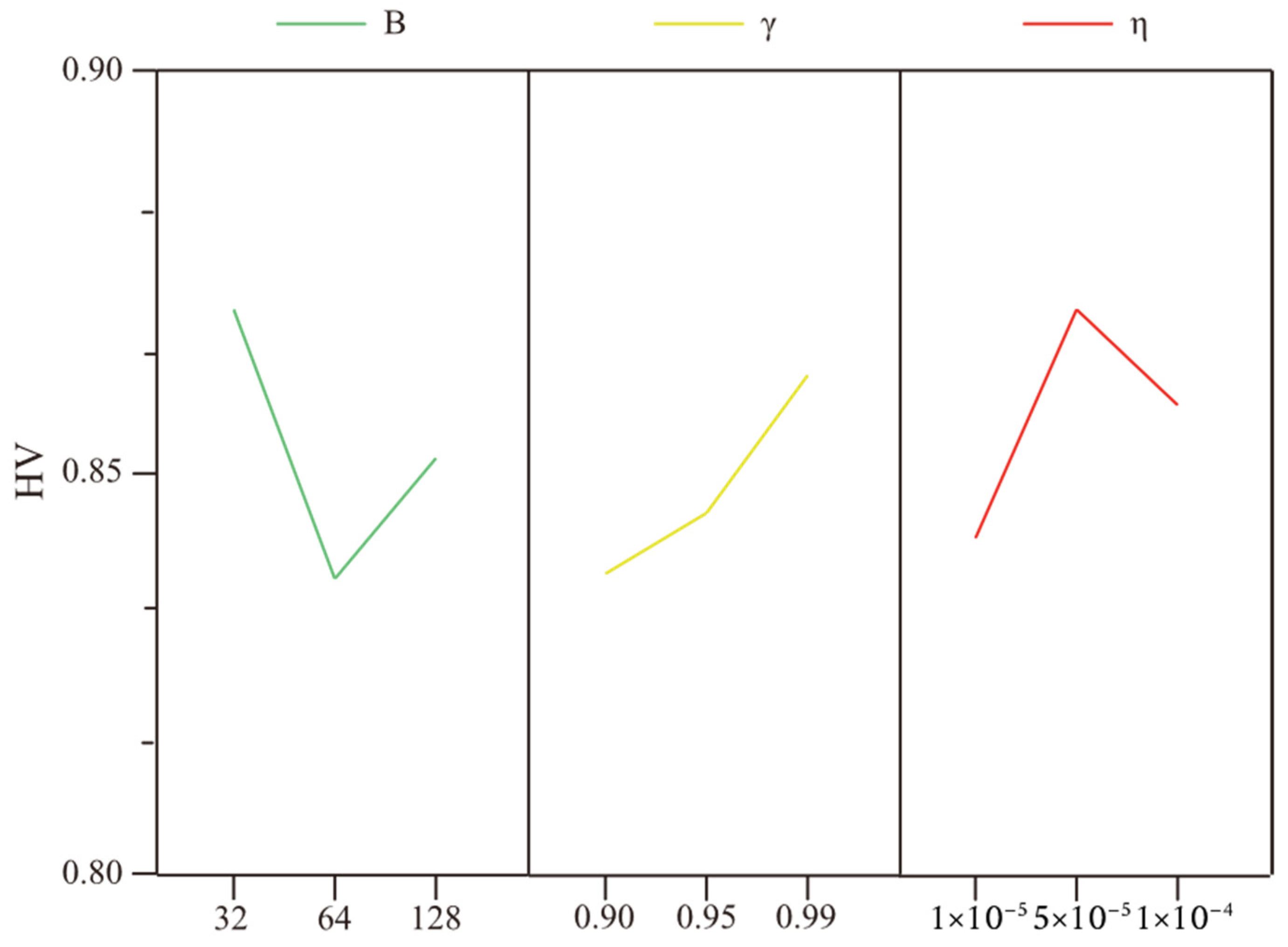

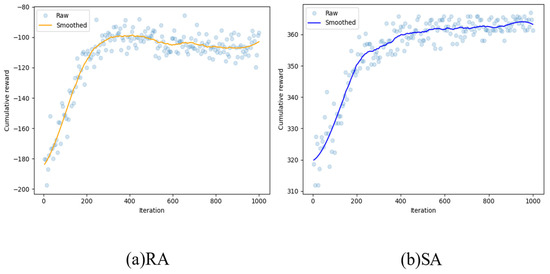

The main PPO parameters include the learning rate, mini-batch size, and discount factor. In addition, the recommended clipping ratio of 0.2 is adopted, and the Adam optimizer is used to improve gradient-based optimization efficiency. Considering the significant influence of these parameters on training performance, an orthogonal experimental design is employed to determine their combinations, with the specific parameter levels provided in Table 8. For each parameter configuration, 10 independent training runs are conducted for every instance, with the number of training episodes fixed at 1500. The averaged HV values obtained from these experiments are subsequently compared and analyzed, and the trends for the three parameters are illustrated in Figure 5.

Table 8.

Levels of each parameter in PPO.

Figure 5.

The trend plots for the three parameters.

Based on the experimental results, the best-performing combination of learning rate, mini-batch size, and discount factor is selected. The final PPO parameter configurations are summarized in Table 9.

Table 9.

Parameter settings for the proposed PPO.

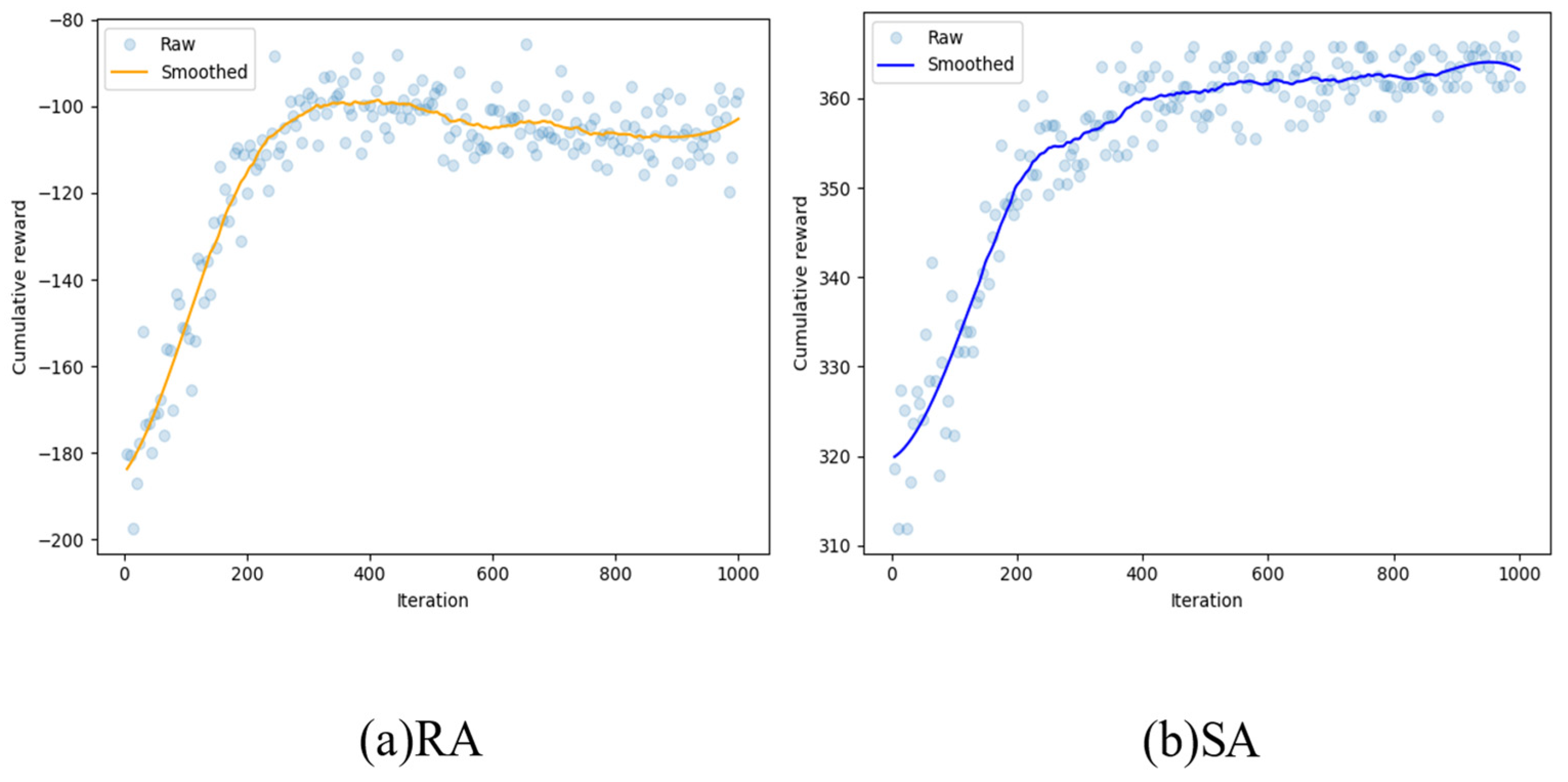

The training results are shown in Figure 6. As training episodes increase, the cumulative rewards of both RA and SA show an overall upward trend, indicating that the agents have successfully learned effective policies.

Figure 6.

Convergence curve of cumulative reward during training.

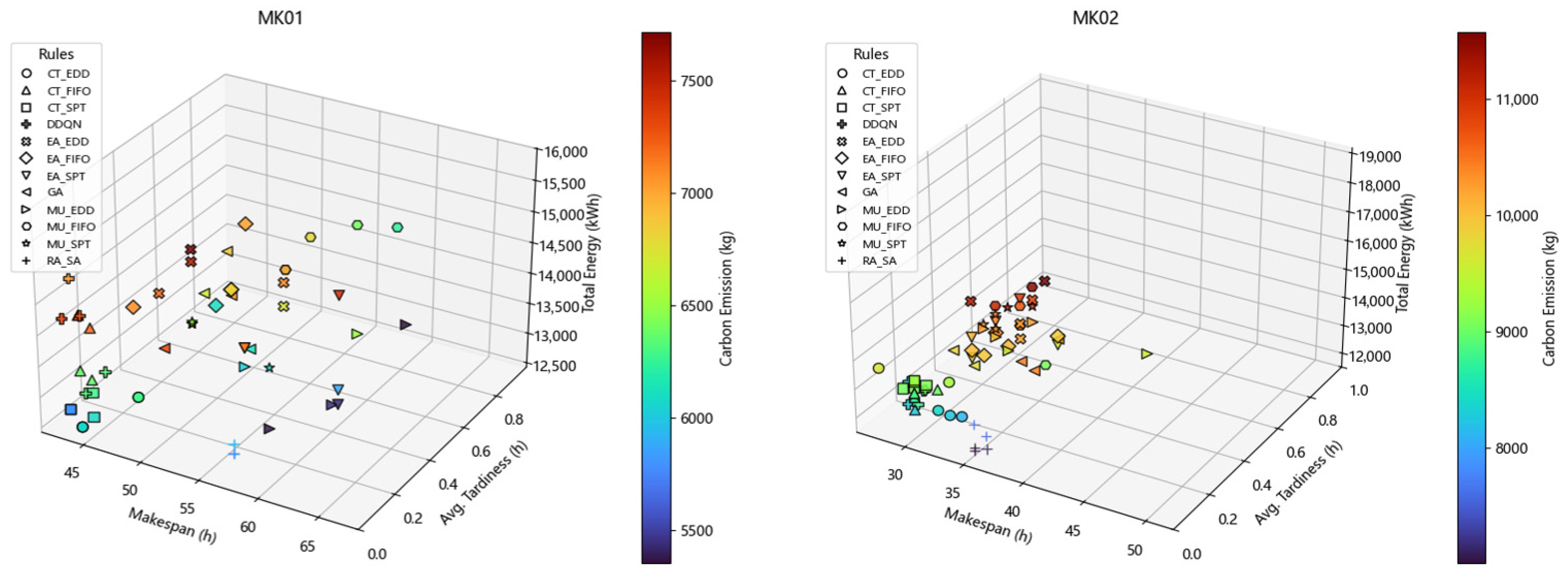

5.4. Experimental Results on Static Instances

To comprehensively evaluate the generalization ability and robustness of the proposed RA_SA method for solving the LEDFJSP-RE problem, this section compares it with eleven representative scheduling approaches, including the DDQN-based method proposed in [31], the classical genetic algorithm (GA), and nine rule-based strategies listed in Table 10. To ensure fairness and comparability among different solution paradigms, all experiments were conducted on the widely used Brandimarte benchmark instances. In the static experimental setting, job arrival dynamics and renewable energy fluctuations are not considered. The machine power levels and job due-date parameters are set according to [31], thereby ensuring the rationality and consistency of the experimental configuration. Each instance is independently executed ten times, and the average values of all evaluation metrics are reported to mitigate the influence of randomness.

Table 10.

Combined dispatching rules.

Table 11, Table 12 and Table 13 present the comparative results of different algorithms under multiple performance metrics, where the best results are highlighted in bold. The experimental results show that RA_SA achieves the lowest IGD+ values in most benchmark instances, particularly in Mk02, Mk03, and Mk04, indicating its superior convergence performance. Meanwhile, RA_SA attains the minimum GD values in several instances, including Mk02, Mk04, Mk05, and Mk10, suggesting that the obtained solution sets are closer to the true Pareto front and exhibit higher overall solution quality. Even in cases where RA_SA does not achieve the best IGD+ or GD values, it still demonstrates a clear advantage in terms of HV, reflecting stronger stability and consistency in balancing multiple objectives.

Table 11.

Comparison of IGD+ values between RA_SA and other rules on static instances.

Table 12.

Comparison of GD values between RA_SA and other rules on static instances.

Table 13.

Comparison of HV values between RA_SA and other rules on static instances.

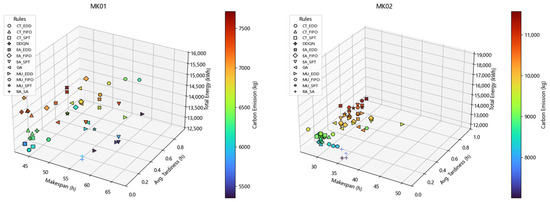

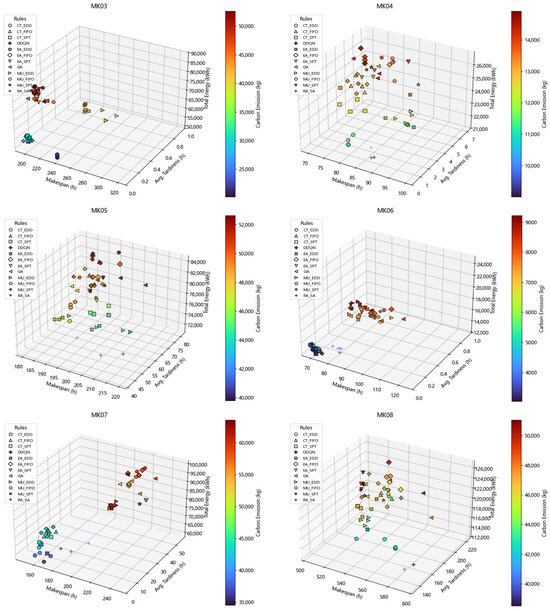

Furthermore, as illustrated by the Pareto fronts in Figure 7, RA_SA is able to obtain solution sets that are closer to the ideal front than those produced by DDQN and GA in most benchmark instances. This observation indicates that scheduling methods based on fixed or predefined rules are limited in their ability to fully capture system state characteristics at each decision stage and to adapt effectively to environmental changes, leading to performance degradation in complex scheduling scenarios. In contrast, reinforcement learning–based agents can dynamically perceive environmental states through continuous interaction and make adaptive decisions accordingly, thereby exhibiting superior optimization capability in multi-objective scheduling problems.

Figure 7.

Pareto fronts obtained by RA_SA and other dispatching rules on static instances.

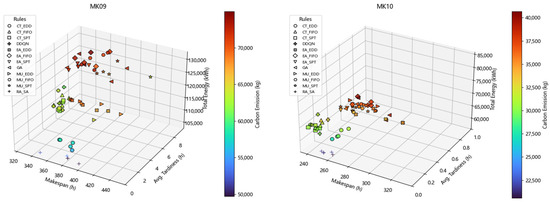

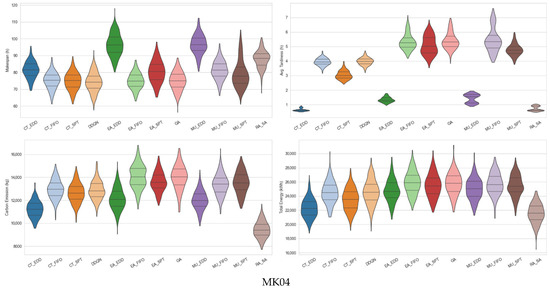

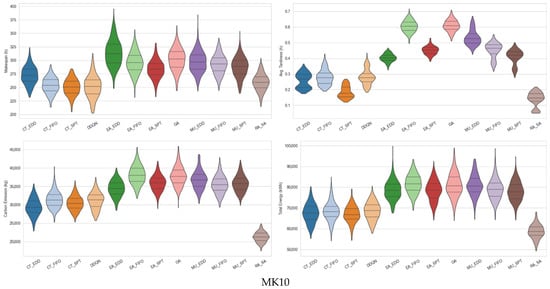

Further analysis indicates that although the single-agent DDQN method exhibits a certain degree of convergence in some instances, it still suffers from notable limitations in terms of solution diversity and stability. This limitation mainly arises from its single-agent decision-making structure, in which job assignment and sequencing decisions are coupled within a unified policy, thereby lacking sufficient flexibility and coordination when handling conflicting multiple objectives. Figure 8 presents violin plots of the four optimization objectives for the Mk04 and Mk10 instances, from which it can be observed that the solution sets obtained by RA_SA are clearly superior to those of the other comparative methods in terms of both distribution uniformity and numerical performance.

Figure 8.

Violin plot of non-dominated solutions across dispatching rules for four objectives.

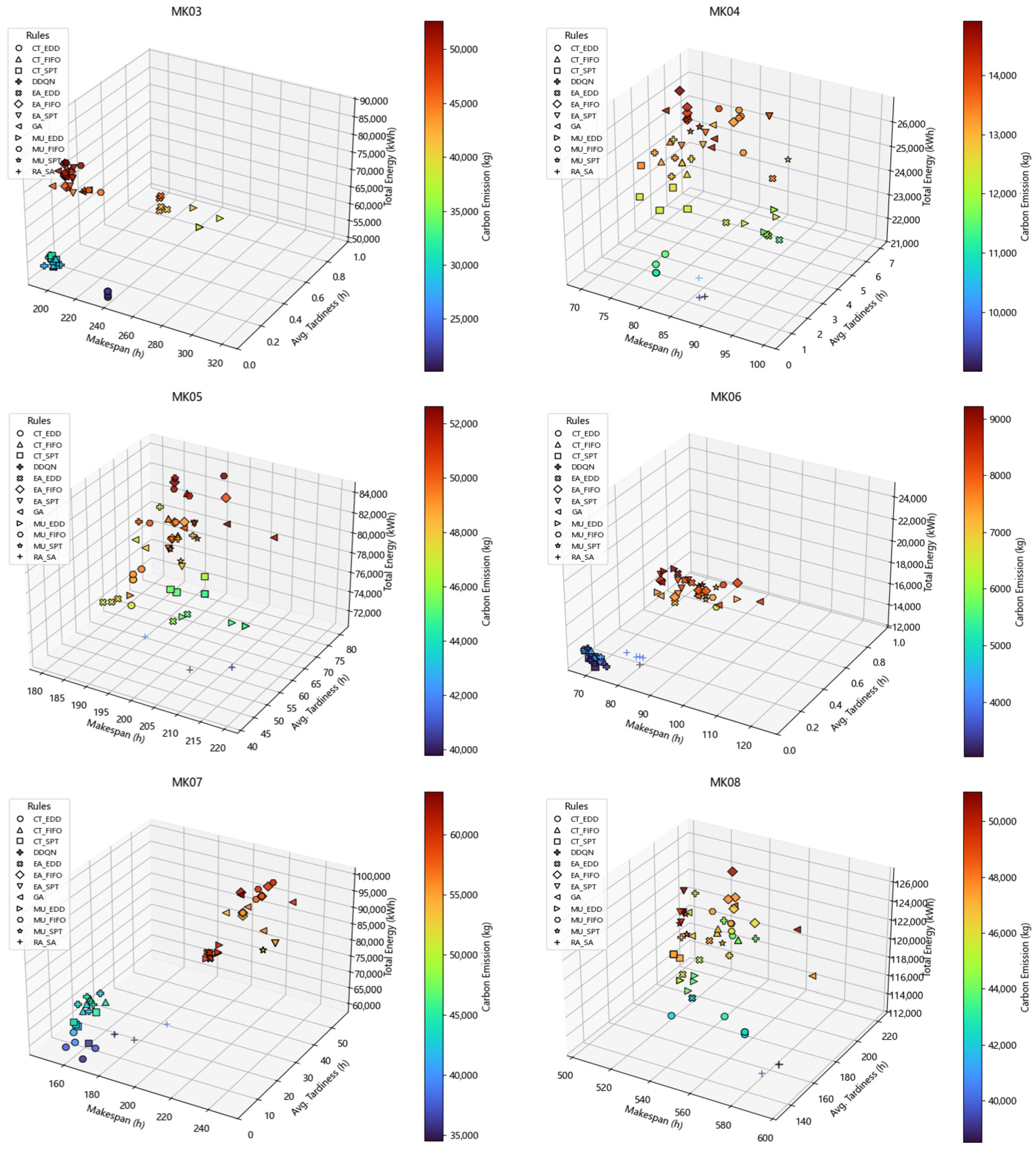

5.5. Experimental Results on Dynamic Instances

To comprehensively evaluate the performance of the proposed RA_SA framework under dynamic scheduling scenarios, a series of systematic experiments were conducted on multiple dynamic test instances. All experiments were carried out under conditions involving dynamic job arrivals and scheduling disturbances, in order to more realistically characterize the uncertainties encountered in practical production environments.

Table 14, Table 15 and Table 16 present the comparative results of different algorithms under multiple performance metrics, where the best results are highlighted in bold. The experimental results indicate that RA_SA achieves the lowest IGD+ values in most instances, attains the minimum GD values in several representative cases, and shows clear advantages in terms of the HV metric for the majority of instances. Overall, across all dynamic test instances, RA_SA achieves the best IGD+ values in approximately 55.6% of the cases, the best GD values in about 61.1% of the cases, and the best HV values in roughly 88.8% of the cases. These quantitative results support the qualitative observations regarding the advantages of RA_SA presented earlier, and demonstrate its superior convergence performance, higher solution quality, and stronger stability and consistency in balancing multiple objectives.

Table 14.

IGD+ values for the Pareto fronts obtained by RA_SA and other rules on dynamic instances.

Table 15.

GD values for the Pareto fronts obtained by RA_SA and other rules on dynamic instances.

Table 16.

HV values for the Pareto fronts obtained by RA_SA and other rules on dynamic instances.

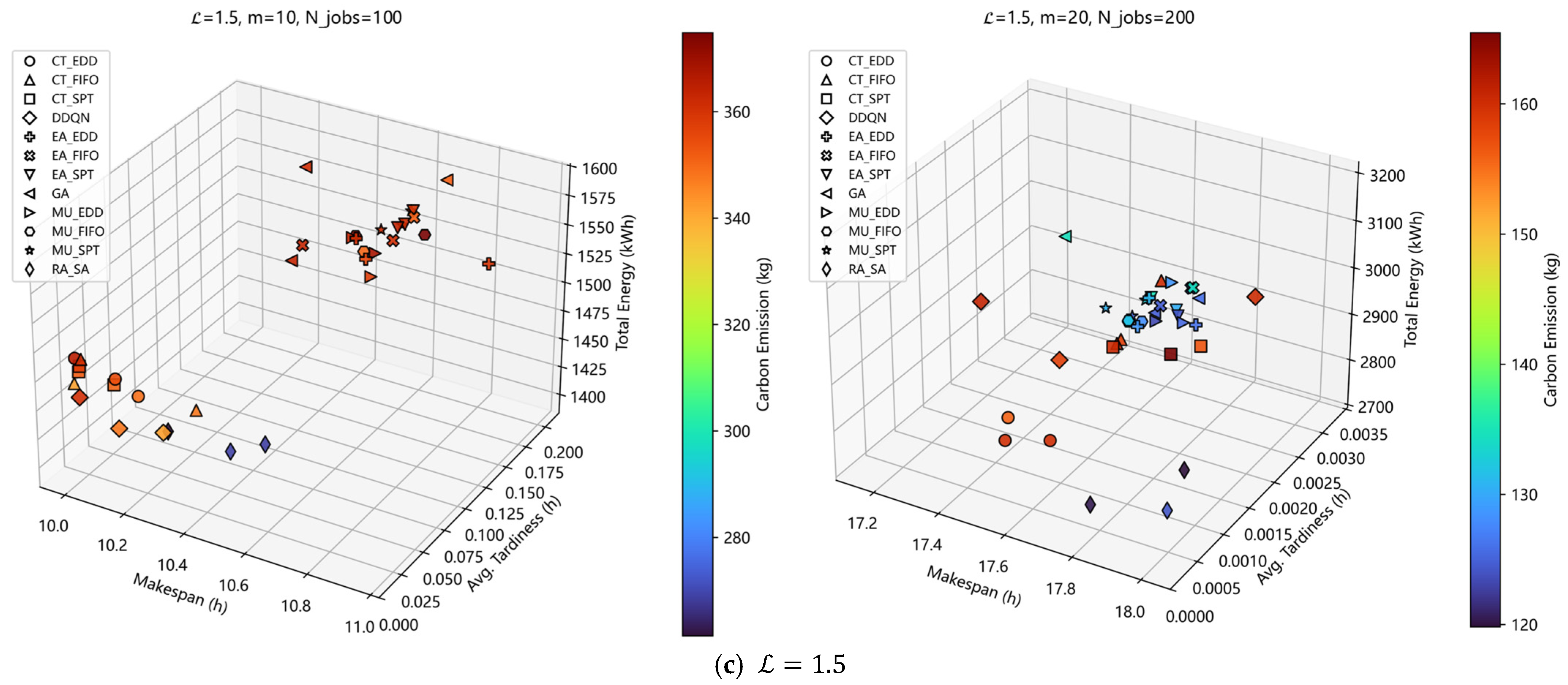

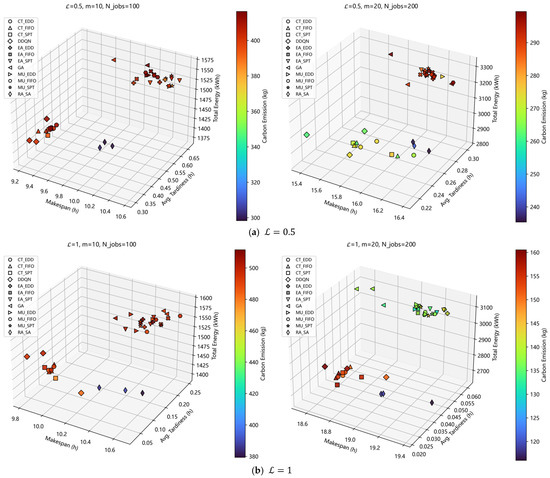

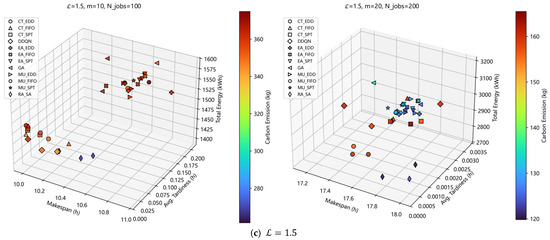

Furthermore, the Pareto fronts obtained by RA_SA and other competing algorithms for several representative instances are illustrated in Figure 9. It can be observed that RA_SA is able to generate solution sets that are closer to the ideal Pareto front and exhibit a more balanced distribution among different objectives, highlighting its comprehensive advantages in terms of convergence and diversity. These results indicate that RA_SA can effectively coordinate conflicting objectives such as makespan, energy consumption, and carbon emissions in dynamic environments.

Figure 9.

Pareto fronts obtained by RA_SA and other dispatching rules on dynamic instances.

Further analysis from the perspective of problem scale reveals that RA_SA performs particularly well on medium- and large-scale instances, especially those with high job volumes and system complexity, such as the 1.5 × 20 × 200 case. Such instances typically involve higher decision dimensionality and more complex job–resource coupling relationships, posing greater challenges to scheduling strategies. The experimental results demonstrate that RA_SA is able to maintain good convergence behavior and solution quality under these conditions, reflecting its strong robustness and scalability.

Overall, the dynamic experimental results demonstrate that RA_SA can effectively handle job insertions, renewable energy fluctuations, and multi-objective conflicts. Compared with traditional rule-based methods and single-agent approaches, RA_SA exhibits stronger adaptability and superior overall optimization performance in dynamic environments, confirming its effectiveness and practicality for complex green scheduling problems.

5.6. Case Study

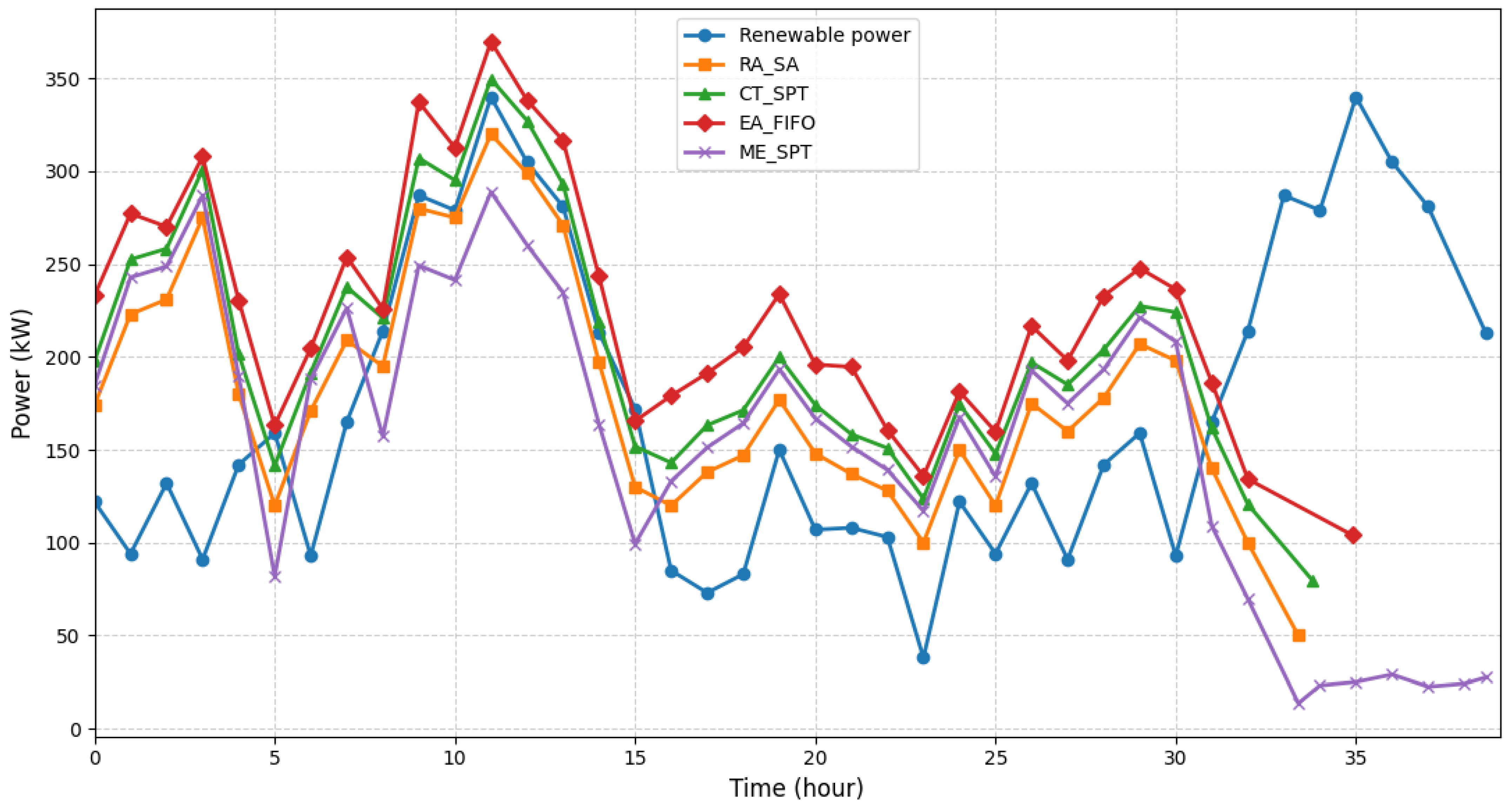

It should be noted that the primary objective of this case study is not to directly demonstrate the immediate industrial deployability of the proposed framework, but rather to analyze and illustrate the decision-making behaviors of different scheduling paradigms under coupled job–energy dynamics. By constructing a scheduling scenario with renewable energy participation, this case study aims to provide insights into how different scheduling strategies respond to interactions among production load, energy utilization, and carbon emission control, thereby offering an analytical basis for future extensions toward practical industrial applications.

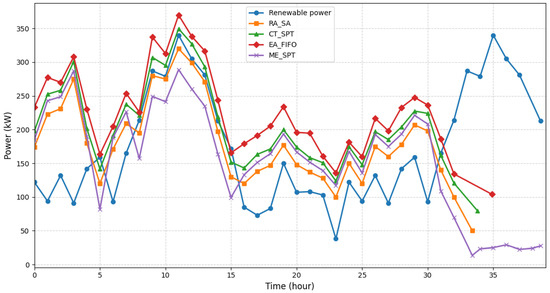

A test instance with a scale of 1.5 × 20 × 200 is selected to evaluate the scheduling performance of RA_SA in terms of carbon emission control and operational cost under scenarios involving renewable energy participation. In addition to RA_SA, three comparative scheduling strategies are considered in the case study: the EA_FIFO rule, the CT_SPT rule that ranked second overall in the previous experiments, and an energy-oriented scheduling method that combines a minimum energy routing rule (denoted as ME) with the SPT sequencing rule. Specifically, the ME routing rule prioritizes the assignment of each operation to the machine with the lowest processing energy consumption at the machine-selection stage.

Figure 10 presents a comparative illustration of the production load and renewable energy supply curves obtained by the different scheduling strategies under the same renewable energy scenario. It can be observed that the ME routing rule consistently favors low-energy-consumption machines during machine selection, while neither explicitly accounting for the temporal fluctuations of renewable energy supply nor imposing constraints on job completion time and tardiness risk. As a result, jobs tend to be concentrated on a small number of low-energy machines, leading to load congestion in the time dimension and a significant extension of the makespan. Meanwhile, traditional rules such as EA_FIFO and CT_SPT, which lack a joint consideration of energy states and overall system load, struggle to achieve a balanced trade-off among makespan, energy consumption, carbon emissions, and job tardiness. In contrast, RA_SA not only accounts for machine-level energy consumption differences but also incorporates explicit awareness of renewable energy generation states. Through cooperative multi-agent decision-making, RA_SA dynamically adjusts job assignments and processing sequences, enabling the production load to better align with the renewable energy supply curve over time. This allows RA_SA to effectively reduce energy consumption and carbon emissions while simultaneously restraining the growth of makespan and job tardiness.

Figure 10.

Hourly-averaged production load and renewable energy supply under different scheduling strategies for the 1.5 × 20 × 200 test case.
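The notion of aligning production load with renewable supply can be quantified with a small sketch. It assumes that, in each hour, renewable energy is consumed first and any shortfall is purchased from the grid, with no storage and no feed-in of surplus. The function name and the sample profiles are illustrative and are not data from the case study.

```python
def grid_purchase(load, renewable):
    """Hourly grid purchase and the share of load covered by renewables.

    load, renewable: equal-length lists of hourly energy values.
    Assumes no storage and no selling of surplus (illustrative simplification).
    """
    purchased = [max(l - r, 0.0) for l, r in zip(load, renewable)]
    covered = [min(l, r) for l, r in zip(load, renewable)]
    total_load = sum(load)
    share = sum(covered) / total_load if total_load > 0 else 0.0
    return purchased, share


supply = [15.0, 20.0, 10.0]
# Same total load (60), scheduled in two different ways.
misaligned, share_misaligned = grid_purchase([10.0, 20.0, 30.0], supply)
aligned, share_aligned = grid_purchase([20.0, 25.0, 15.0], supply)
```

In this toy example both schedules carry the same total load, but the second one follows the supply curve more closely, so less energy is purchased and a larger share of the load is covered by renewable generation.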

Furthermore, as shown in Table 17, additional experiments conducted on multiple randomly selected test instances yield consistent results with the above observations. Across different problem instances, RA_SA is able to achieve a more balanced trade-off between carbon emission reduction and operational cost, demonstrating its effectiveness and robustness in complex scheduling environments with renewable energy participation.

Table 17.

Comparison of total cost and carbon emissions under different scheduling strategies across test cases.

6. Conclusions and Future Work

This study develops an LEDFJSP-RE scheduling framework to support low-carbon and efficient operation in smart manufacturing environments. To address dynamic job arrivals and fluctuations in renewable energy supply, two cooperative agents—RA and SA—are designed and independently trained using PPO. With problem-specific state representations, tailored reward mechanisms, and a self-adaptive job-selection strategy, both agents maintain stable decision-making performance under dynamic conditions. Experimental results show that the proposed multi-agent framework consistently outperforms a wide range of baseline scheduling strategies under different disturbance scenarios. Case analyses further indicate that the coordinated RA_SA mechanism provides greater scheduling flexibility and enables better temporal alignment between production load and renewable energy generation profiles. Overall, the framework offers an effective intelligent solution for energy-aware scheduling in flexible job-shop environments.

Several limitations should be acknowledged. The framework is validated in a simulation environment, which enables controlled modeling of dynamic events and systematic analysis of scheduling behaviors, but inevitably abstracts certain complexities of real production systems. Factors such as transportation delays, sequence-dependent setup operations, finite buffer capacities, stochastic machine failures, and detailed machine-level energy behaviors (e.g., start-up peaks, shutdown policies, and idle-to-active transition costs) are not included. These simplifications reduce modeling complexity but may constrain direct applicability in certain industrial scenarios.

As manufacturing systems continue to evolve toward higher uncertainty and tighter coupling between production and energy resources, incorporating the omitted operational constraints will be essential. This includes modeling equipment failures, material-handling delays, buffer limitations, and setup operations, as well as integrating the scheduling framework more closely with energy management functions such as renewable energy forecasting, energy storage operation, and time-dependent electricity pricing. In addition, constructing standardized dynamic benchmark sets would facilitate more systematic and comprehensive comparisons across different classes of scheduling methods. Furthermore, recent advances in digital-twin-assisted dynamic rescheduling provide promising directions for enabling real-time state synchronization, disturbance prediction, and adaptive schedule adjustment [38]. Integrating digital twin mechanisms into the proposed MARL framework may further enhance its responsiveness and robustness in complex industrial environments.

Overall, the LEDFJSP-RE framework establishes a solid foundation for real-time, energy-aware scheduling in flexible job-shop systems. With the progressive integration of more realistic operational constraints, advanced energy management strategies, and real-time feedback mechanisms, the framework has the potential to evolve into a practical and scalable solution for more complex and large-scale manufacturing settings.

Author Contributions

Conceptualization, Q.Z. and Y.L.; methodology, Y.L.; software, Q.Z.; validation, Q.Z. and Y.L.; formal analysis, Q.Z. and Y.L.; investigation, Q.Z.; resources, Y.L.; data curation, Q.Z.; writing—original draft preparation, Q.Z.; writing—review and editing, Y.L., C.T., T.Z., E.H. and Y.L.; visualization, Q.Z.; supervision, Y.L., C.T., T.Z., E.H. and Y.L.; project administration, Q.Z.; funding acquisition, Y.L. All authors have read and agreed to the published version of the manuscript.

Funding

The research reported in this paper is financially supported by the Guizhou Provincial Basic Research Program (Natural Science) (Grant No. Qian Ke He Ji Chu-ZK [2024] General 439).

Data Availability Statement

All data generated or analyzed during this study are included in this paper.

Conflicts of Interest

The authors declare no conflicts of interest.

References

- Friedlingstein, P.; O’SUllivan, M.; Jones, M.W.; Andrew, R.M.; Hauck, J.; Landschützer, P.; Le Quéré, C.; Li, H.; Luijkx, I.T.; Olsen, A.; et al. Global Carbon Budget 2024. Earth Syst. Sci. Data 2025, 17, 965–1039. [Google Scholar] [CrossRef]

- Deng, Z.; Zhu, B.; Davis, S.J.; Ciais, P.; Guan, D.; Gong, P.; Liu, Z. Global carbon emissions and decarbonization in 2024. Nat. Rev. Earth Environ. 2025, 6, 231–233. [Google Scholar] [CrossRef]

- Ghorbanzadeh, M.; Davari, M.; Ranjbar, M. Energy-aware flow shop scheduling with uncertain renewable energy. Comput. Oper. Res. 2024, 170, 106741. [Google Scholar] [CrossRef]

- Meng, L.; Zhang, C.; Shao, X.; Ren, Y. MILP models for energy-aware flexible job shop scheduling problem. J. Clean. Prod. 2019, 210, 710–723. [Google Scholar] [CrossRef]

- Xin, X.; Jiang, Q.; Li, S.; Gong, S.; Chen, K. Energy-efficient scheduling for a permutation flow shop with variable transportation time using an improved discrete whale swarm optimization. J. Clean. Prod. 2021, 293, 126121. [Google Scholar] [CrossRef]

- Meeks, R.C.; Thompson, H.; Wang, Z. Decentralized renewable energy to grow manufacturing? Evidence from microhydro mini-grids in Nepal. J. Environ. Econ. Manag. 2025, 130, 103092. [Google Scholar] [CrossRef]

- Subramanyam, V.; Jin, T.; Novoa, C. Sizing a renewable microgrid for flow shop manufacturing using climate analytics. J. Clean. Prod. 2020, 252, 119829. [Google Scholar] [CrossRef]

- Destouet, C.; Tlahig, H.; Bettayeb, B.; Mazari, B. Flexible job shop scheduling problem under Industry 5.0: A survey on human reintegration, environmental consideration and resilience improvement. J. Manuf. Syst. 2023, 67, 155–173. [Google Scholar] [CrossRef]

- Fazli Khalaf, A.; Wang, Y. Energy-cost-aware flow shop scheduling considering intermittent renewables, energy storage, and real-time electricity pricing. Int. J. Energy Res. 2018, 42, 3928–3942. [Google Scholar] [CrossRef]

- Wu, J.; Liu, Y. A modified multi-agent proximal policy optimization algorithm for multi-objective dynamic partial re-entrant hybrid flow shop scheduling problem. Eng. Appl. Artif. Intell. 2025, 140, 109688. [Google Scholar] [CrossRef]

- Islam, M.M.; Rahman, M.; Heidari, F.; Gude, V. Optimal onsite microgrid design for net-zero energy operation in manufacturing industry. Procedia Comput. Sci. 2021, 185, 81–90. [Google Scholar] [CrossRef]

- Hao, L.; Zou, Z.; Liang, X. Solving multi-objective energy-saving flexible job shop scheduling problem by hybrid search genetic algorithm. Comput. Ind. Eng. 2025, 200, 110829. [Google Scholar] [CrossRef]

- Wang, Z.; He, M.; Wu, J.; Chen, H.; Cao, Y. An improved MOEA/D for low-carbon many-objective flexible job shop scheduling problem. Comput. Ind. Eng. 2024, 188, 109926. [Google Scholar] [CrossRef]

- Wu, X.; Sun, Y. A green scheduling algorithm for flexible job shop with energy-saving measures. J. Clean. Prod. 2018, 172, 3249–3264.

- Shahsavari-Pour, N.; Ghasemishabankareh, B. A novel hybrid meta-heuristic algorithm for solving multi objective flexible job shop scheduling. J. Manuf. Syst. 2013, 32, 771–780.

- Yin, L.; Li, X.; Gao, L.; Lu, C.; Zhang, Z. A novel mathematical model and multi-objective method for the low-carbon flexible job shop scheduling problem. Sustain. Comput. Inform. Syst. 2017, 13, 15–30.

- Zhou, K.; Tan, C.; Wu, Y.; Yang, B.; Long, X. Research on low-carbon flexible job shop scheduling problem based on improved Grey Wolf Algorithm. J. Supercomput. 2024, 80, 12123–12153.

- Beier, J.; Thiede, S.; Herrmann, C. Energy flexibility of manufacturing systems for variable renewable energy supply integration: Real-time control method and simulation. J. Clean. Prod. 2017, 141, 648–661.

- Schulz, J.; Scharmer, V.M.; Zaeh, M.F. Energy self-sufficient manufacturing systems—Integration of renewable and decentralized energy generation systems. Procedia Manuf. 2020, 43, 40–47.

- Dong, J.; Ye, C. Green scheduling of distributed two-stage reentrant hybrid flow shop considering distributed energy resources and energy storage system. Comput. Ind. Eng. 2022, 169, 108146.

- Lu, S.; Wang, Y.; Kong, M.; Wang, W.; Tan, W.; Song, Y. A Double Deep Q-Network framework for a flexible job shop scheduling problem with dynamic job arrivals and urgent job insertions. Eng. Appl. Artif. Intell. 2024, 133, 108487.

- Li, Y.; Gu, W.; Yuan, M.; Tang, Y. Real-time data-driven dynamic scheduling for flexible job shop with insufficient transportation resources using hybrid deep Q network. Robot. Comput.-Integr. Manuf. 2022, 74, 102283.

- Zhang, L.; Feng, Y.; Xiao, Q.; Xu, Y.; Li, D.; Yang, D.; Yang, Z. Deep reinforcement learning for dynamic flexible job shop scheduling problem considering variable processing times. J. Manuf. Syst. 2023, 71, 257–273.

- Zhang, W.; Zhao, F.; Li, Y.; Du, C.; Feng, X.; Mei, X. A novel collaborative agent reinforcement learning framework based on an attention mechanism and disjunctive graph embedding for flexible job shop scheduling problem. J. Manuf. Syst. 2024, 74, 329–345.

- Liu, R.; Piplani, R.; Toro, C. A deep multi-agent reinforcement learning approach to solve dynamic job shop scheduling problem. Comput. Oper. Res. 2023, 159, 106294.

- Wan, L.; Fu, L.; Li, C.; Li, K. An effective multi-agent-based graph reinforcement learning method for solving flexible job shop scheduling problem. Eng. Appl. Artif. Intell. 2025, 139, 109557.

- Schulman, J.; Levine, S.; Moritz, P.; Jordan, M.I.; Abbeel, P. Trust region policy optimization. arXiv 2015, arXiv:1502.05477.

- Schulman, J.; Wolski, F.; Dhariwal, P.; Radford, A.; Klimov, O. Proximal policy optimization algorithms. arXiv 2017, arXiv:1707.06347.

- Xiao, J.; Zhang, Z.; Terzi, S.; Tao, F.; Anwer, N.; Eynard, B. Multi-scenario digital twin-driven human-robot collaboration multi-task disassembly process planning based on dynamic time petri-net and heterogeneous multi-agent double deep Q-learning network. J. Manuf. Syst. 2025, 83, 284–305.

- Xiao, J.; Gao, J.; Anwer, N.; Eynard, B. Multi-Agent Reinforcement Learning Method for Disassembly Sequential Task Optimization Based on Human–Robot Collaborative Disassembly in Electric Vehicle Battery Recycling. J. Manuf. Sci. Eng. 2023, 145, 121001.

- Luo, S. Dynamic scheduling for flexible job shop with new job insertions by deep reinforcement learning. Appl. Soft Comput. 2020, 91, 106208.

- Yuan, G.; Gao, Y.; Ye, B.; Huang, R. Real-time pricing for smart grid with multi-energy microgrids and uncertain loads: A bilevel programming method. Int. J. Electr. Power Energy Syst. 2020, 123, 106206.

- Tan, Z.; Fan, W.; Li, H.; De, G.; Ma, J.; Yang, S.; Ju, L.; Tan, Q. Dispatching optimization model of gas-electricity virtual power plant considering uncertainty based on robust stochastic optimization theory. J. Clean. Prod. 2020, 247, 119106.

- Zitzler, E.; Deb, K.; Thiele, L. Comparison of multiobjective evolutionary algorithms: Empirical results. Evol. Comput. 2000, 8, 173–195.

- Ishibuchi, H.; Masuda, H.; Tanigaki, Y.; Nojima, Y. Modified distance calculation in generational distance and inverted generational distance. In Evolutionary Multi-Criterion Optimization; Gaspar-Cunha, A., Henggeler Antunes, C., Coello, C.C., Eds.; Springer International Publishing: Cham, Switzerland, 2015; pp. 110–125.

- Zitzler, E.; Thiele, L. Multiobjective evolutionary algorithms: A comparative case study and the strength Pareto approach. IEEE Trans. Evol. Comput. 1999, 3, 257–271.

- Matloff, N. Introduction to Discrete-Event Simulation and the SimPy Language; University of California, Davis: Davis, CA, USA, 2008.

- Yang, Y.; Yang, M.; Anwer, N.; Eynard, B.; Shu, L.; Xiao, J. A novel digital twin-assisted prediction approach for optimum rescheduling in high-efficient flexible production workshops. Comput. Ind. Eng. 2023, 182, 109398.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.