Particle Swarm Optimization Embedded in UAV as a Method of Territory-Monitoring Efficiency Improvement

Abstract

1. Introduction

2. Related Work

2.1. Monitoring with UAVs

2.2. Computer Vision and Pattern Recognition

- Image preprocessing: The removal of visible defects of the input image (noise, blur) [20];

- Image preparation for the pattern-recognition process: The extraction of image features (texture, patterns, contours) [21];

- Pattern recognition: The detection and classification of particular objects [22].

2.2.1. Image Preprocessing

- Denoising;

- Deblurring;

- Brightness equalization.

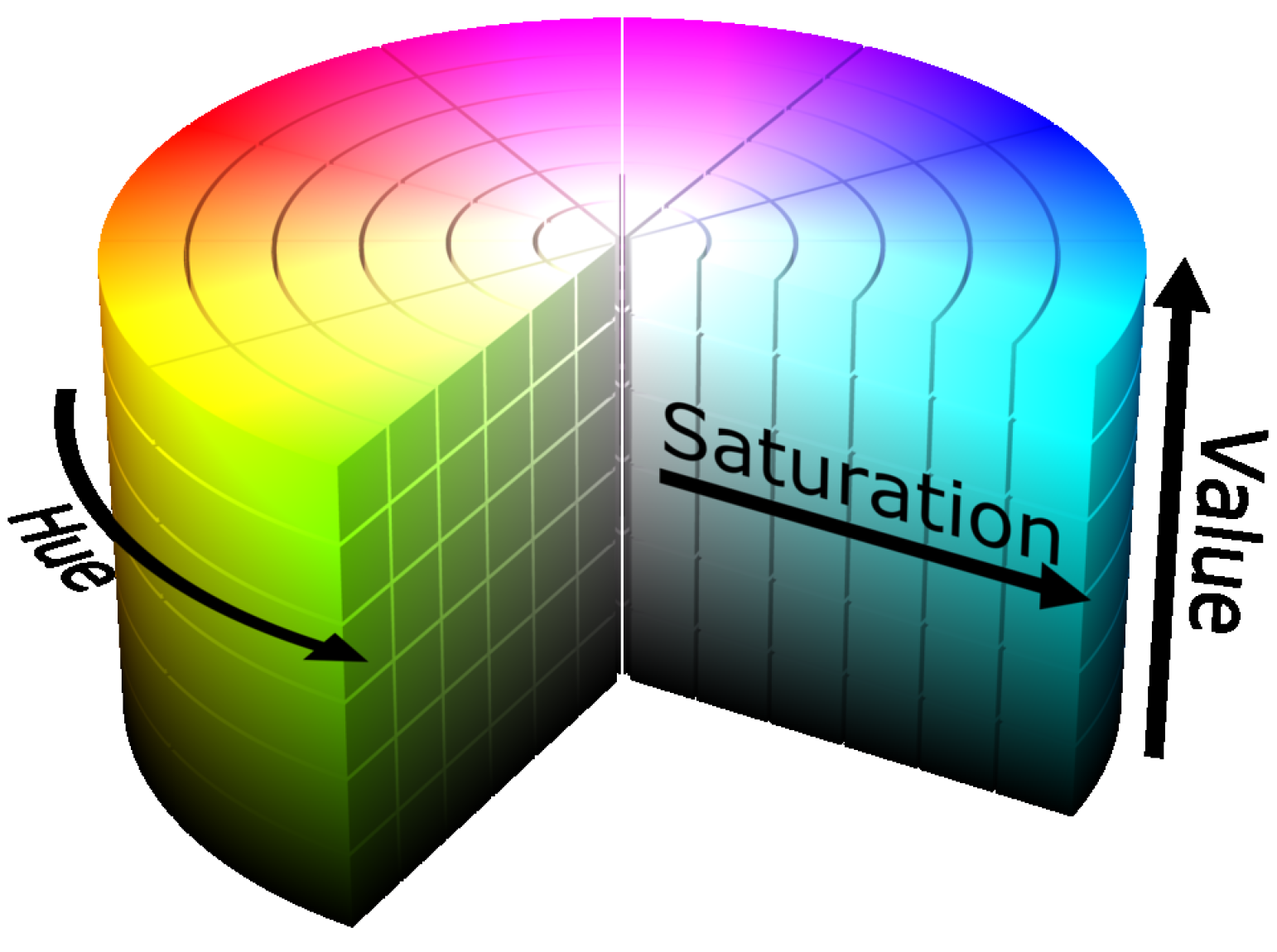

2.2.2. Image Preparation for Pattern-Recognition Process

- Pixel-based methods;

- Edge-based methods;

- Region-based methods.

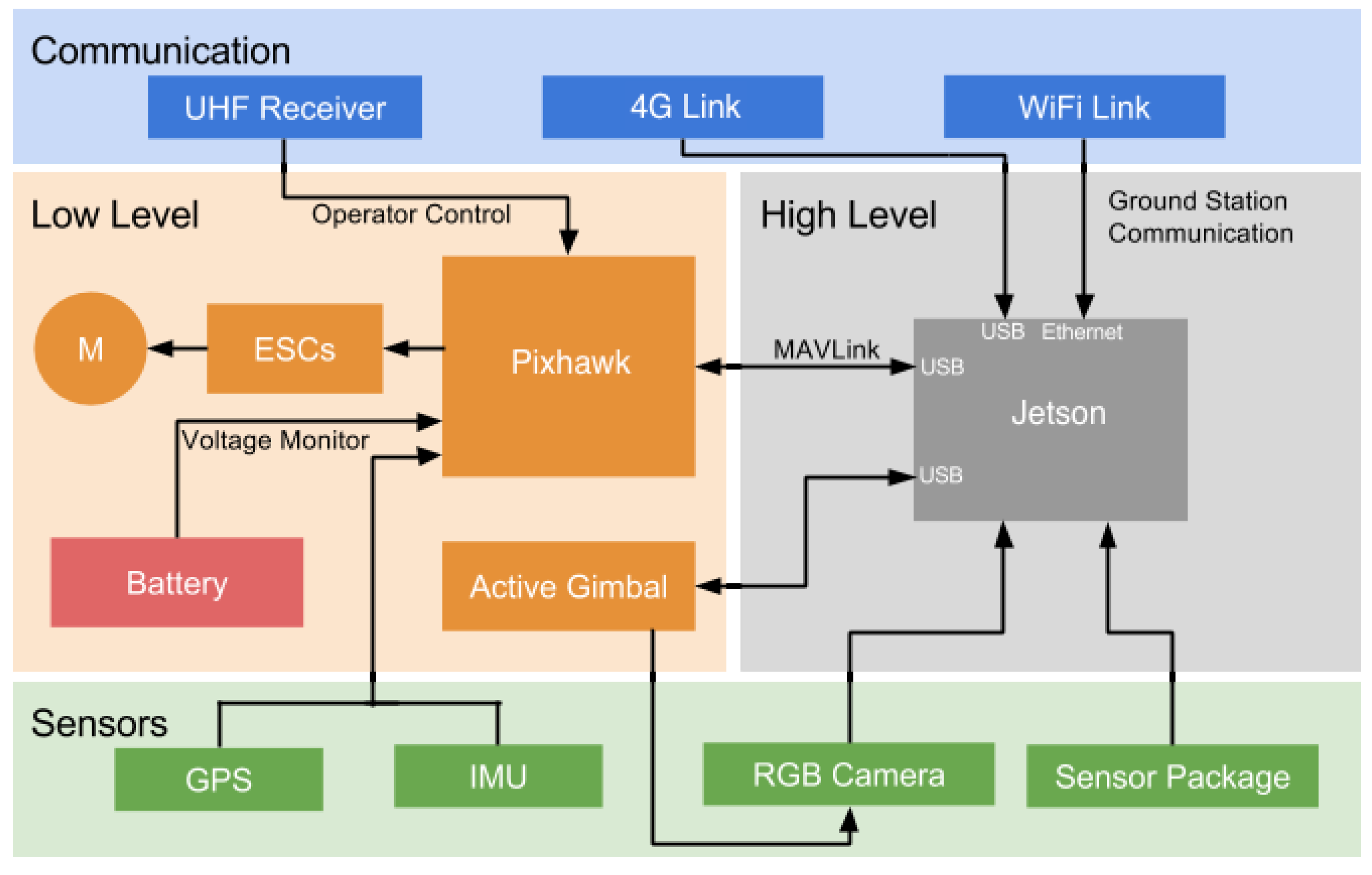

3. System Description

3.1. Hardware

- A Pixhawk flight controller running ArduPilot controls low-level operation. It contains an IMU and a GPS receiver that provide the UAV's orientation and position; the Pixhawk is also connected to the UAV's battery, to the Jetson Nano embedded computer via the MAVLink protocol, and to a UHF receiver used to control the UAV's motors;

- A Jetson Nano controls high-level operation. It receives data from the distance sensors (depth cameras) and the RGB camera, and it communicates with external devices over a Wi-Fi link;

- An RGB camera with a gimbal stabilizer is installed to capture onboard images at a resolution of 640 × 480 pixels;

- The camera and lens specifications are known, allowing the field of view (FOV) and the pixel size in meters to be computed.
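The FOV and pixel-size computation mentioned above can be sketched with a pinhole-camera model. This is an illustration under assumptions, not the authors' code: the function names are hypothetical, the 6.4 mm sensor width, 3.2 mm focal length and 1.5 m altitude are placeholder values (not the specifications of the camera used here), and the camera is assumed to point straight down.

```python
import math


def field_of_view(sensor_size_mm, focal_length_mm):
    """Angular field of view (radians) along one sensor axis, pinhole model."""
    return 2.0 * math.atan(sensor_size_mm / (2.0 * focal_length_mm))


def ground_pixel_size(altitude_m, fov_rad, pixels):
    """Ground footprint of one pixel (meters) for a nadir-looking camera."""
    footprint_m = 2.0 * altitude_m * math.tan(fov_rad / 2.0)  # width seen on the ground
    return footprint_m / pixels


# Hypothetical example: 640-pixel-wide image, placeholder optics, 1.5 m altitude.
fov = field_of_view(6.4, 3.2)
pixel_m = ground_pixel_size(1.5, fov, 640)
```

Because the footprint grows linearly with altitude, the same function also shows how the ground size of a pixel changes across the one-to-two-meter flight range.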

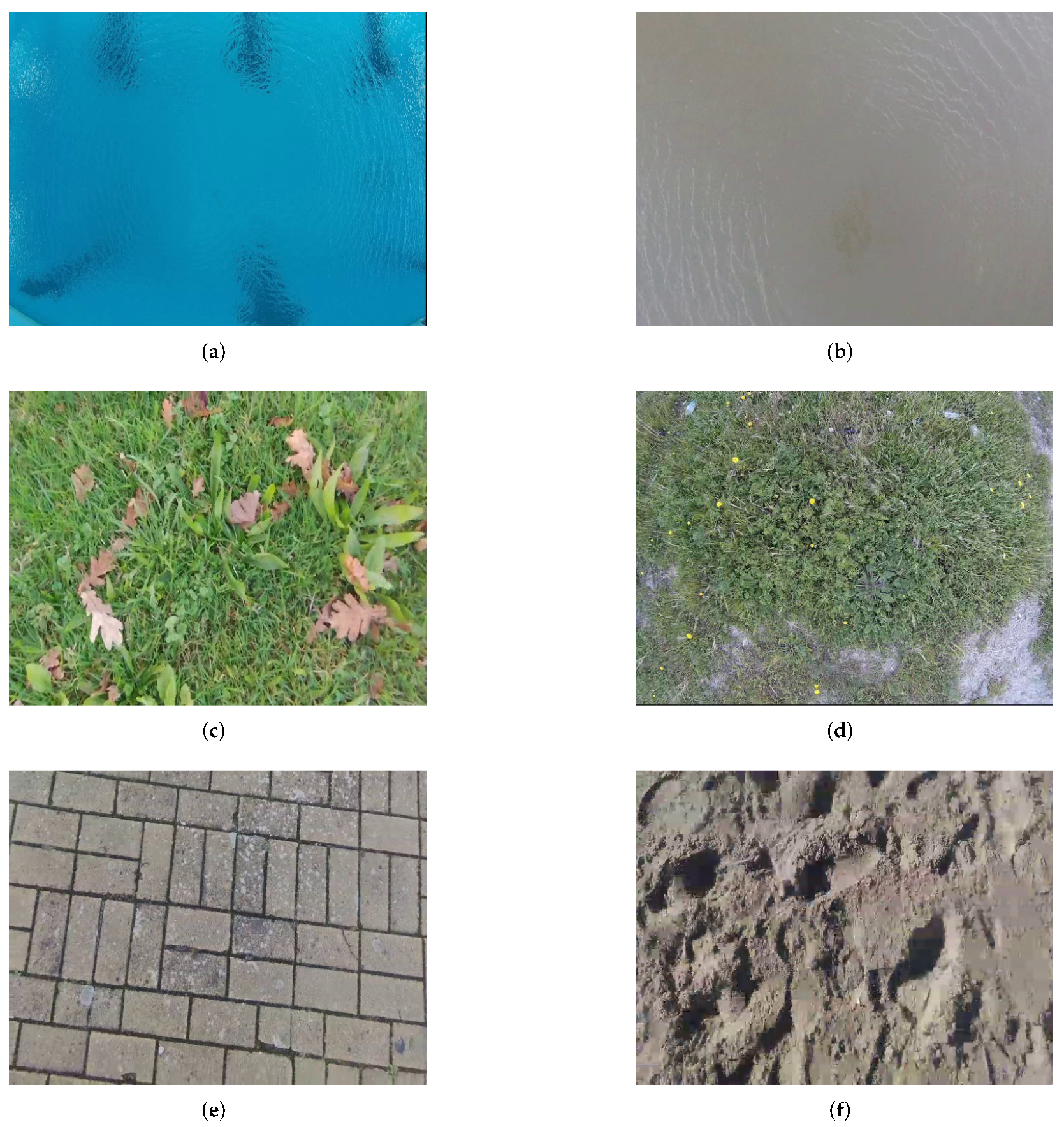

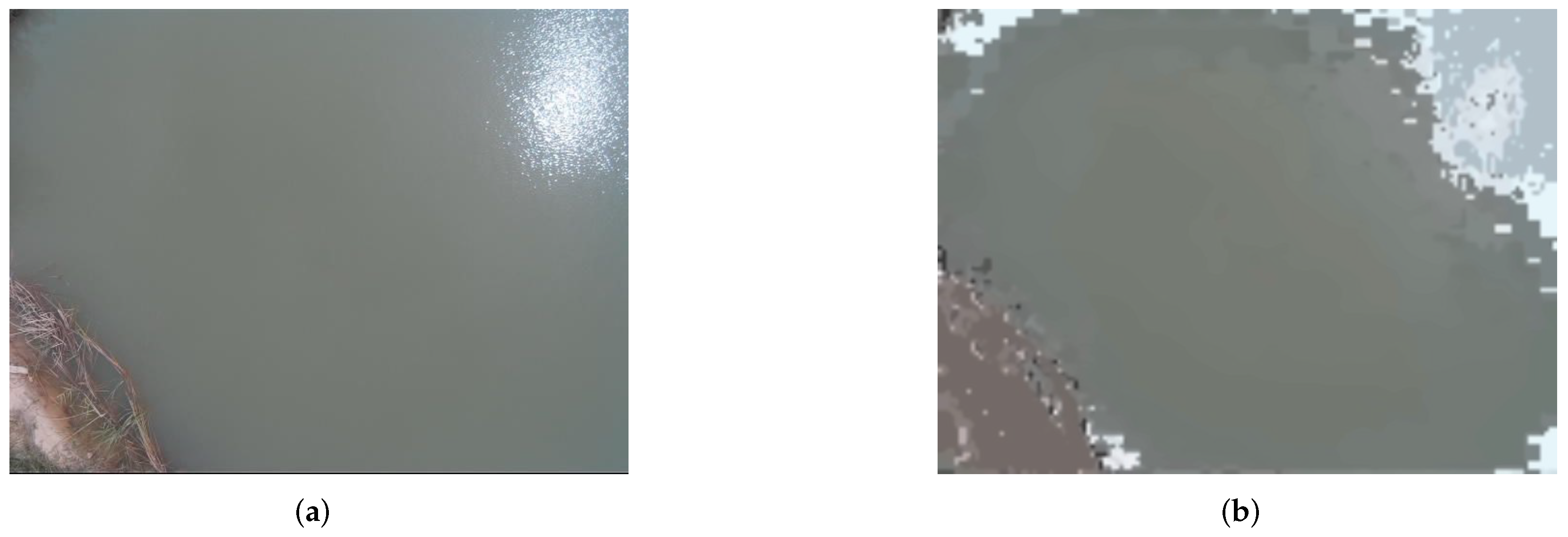

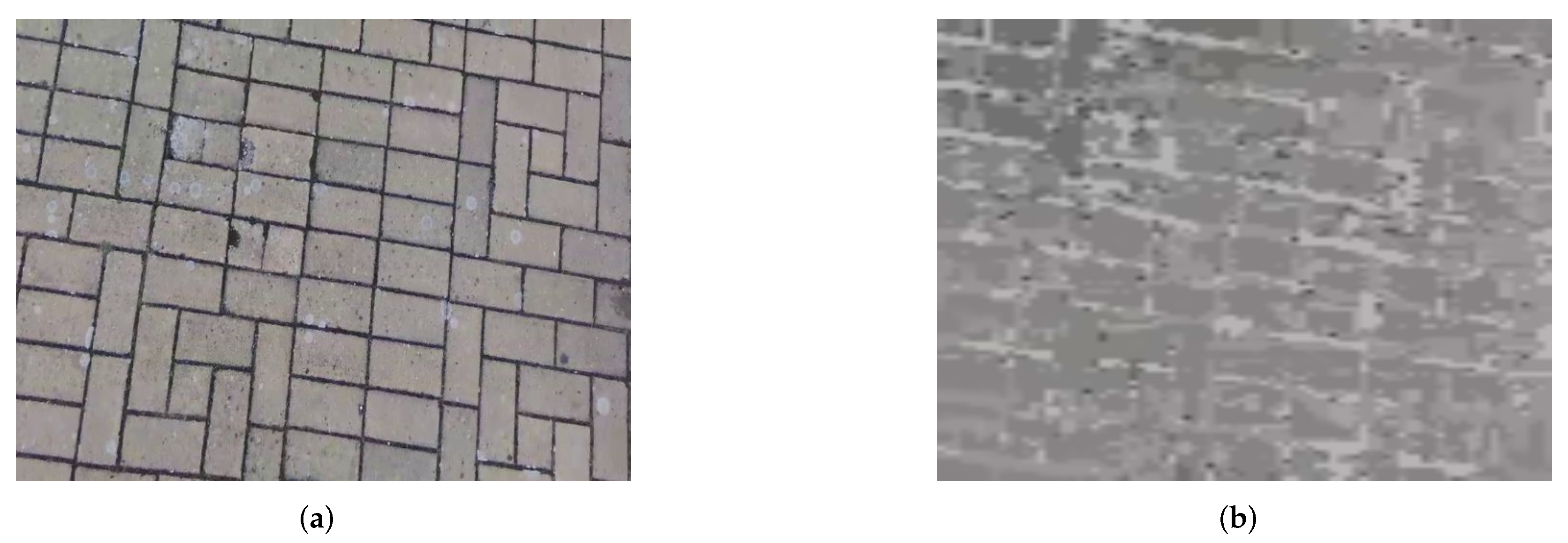

3.2. Data Gathering

3.3. Pattern-Recognition Methods

3.3.1. Image Preparation

3.3.2. Particle Swarm Optimization

- Sensitivity to outliers;

- Need to specify clustering parameters beforehand;

- Uncertainty of actions with objects that possess the properties of two different clusters simultaneously.

- 1.

- There is a swarm $S$ of particles, each with a position $x_i \in \mathbb{R}^n$ and a velocity $v_i \in \mathbb{R}^n$; $f$ is the function to be minimized; $p_i$ is the best-known position of particle $i$; and $g$ is the best-known position of the entire swarm;

- 2.

- For each particle $i \in S$, it is necessary to:

- Generate an initial position $x_i$;

- Initialize the particle's best-known position to its initial position: $p_i \leftarrow x_i$;

- If $f(p_i) < f(g)$, update the swarm's best-known position: $g \leftarrow p_i$;

- Generate an initial particle velocity $v_i$.

- 3.

- Until the stopping criterion is reached, perform the following for each particle $i$:

- Generate random vectors $r_p$ and $r_g$ whose components are uniformly distributed between 0 and 1;

- Update the particle velocity according to Equation (2):

  $$v_i \leftarrow w\,v_i + \varphi_p\, r_p \odot (p_i - x_i) + \varphi_g\, r_g \odot (g - x_i) \qquad (2)$$

  where $\odot$ denotes element-wise multiplication, and $w$, $\varphi_p$, and $\varphi_g$ are user-specified parameters;

- Update the particle position according to Equation (3):

  $$x_i \leftarrow x_i + v_i \qquad (3)$$

- Compare $f(x_i)$ with $f(p_i)$: if $f(x_i) < f(p_i)$, replace the particle's best-known position, $p_i \leftarrow x_i$; if, in addition, $f(p_i) < f(g)$, set $g \leftarrow p_i$.

- 4.

- In the end, parameter g contains the best solution.
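The four PSO steps above can be sketched as a basic global-best swarm in Python. This is a minimal, hypothetical implementation, not the authors' embedded code: it assumes a fixed iteration budget as the stopping criterion, random initialization inside a user-given box, and illustrative values for the inertia weight w and the two acceleration coefficients phi_p and phi_g.

```python
import numpy as np


def pso(f, lower, upper, n_particles=30, n_iter=100,
        w=0.7, phi_p=1.5, phi_g=1.5, seed=0):
    """Minimize f over the box [lower, upper] with a basic global-best PSO."""
    rng = np.random.default_rng(seed)
    lower = np.asarray(lower, dtype=float)
    upper = np.asarray(upper, dtype=float)
    dim = lower.size
    span = upper - lower

    # Step 2: random initial positions and velocities; p_i starts at x_i.
    x = rng.uniform(lower, upper, size=(n_particles, dim))
    v = rng.uniform(-span, span, size=(n_particles, dim))
    p = x.copy()
    p_val = np.array([f(xi) for xi in x])
    best = np.argmin(p_val)
    g, g_val = p[best].copy(), p_val[best]

    # Step 3: a fixed iteration budget serves as the stopping criterion.
    for _ in range(n_iter):
        r_p = rng.uniform(0.0, 1.0, size=(n_particles, dim))
        r_g = rng.uniform(0.0, 1.0, size=(n_particles, dim))
        v = w * v + phi_p * r_p * (p - x) + phi_g * r_g * (g - x)  # Equation (2)
        x = x + v                                                  # Equation (3)
        val = np.array([f(xi) for xi in x])
        improved = val < p_val                  # update personal bests p_i
        p[improved] = x[improved]
        p_val[improved] = val[improved]
        best = np.argmin(p_val)                 # update the swarm best g
        if p_val[best] < g_val:
            g, g_val = p[best].copy(), p_val[best]

    # Step 4: g holds the best solution found.
    return g, g_val


# Usage: minimize the 2-D sphere function on [-5, 5] x [-5, 5].
g, g_val = pso(lambda z: float(np.sum(z ** 2)), [-5.0, -5.0], [5.0, 5.0])
```

Element-wise products (`r_p * (p - x)`) are used because each coordinate of a particle receives its own random factor.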

- 1.

- Image unification: The numbers of pixels horizontally and vertically in the normalized image are rounded to the nearest multiples of 10, W and H, respectively;

- 2.

- Initial cluster distribution: The sequential selection of 10-by-10 regions in the image;

- 3.

- Automated initial centroid allocation: In each region, the pixel with the maximum average intensity is chosen as the centroid; the average intensity is calculated as in Equation (4):

  $$I = \frac{R + G + B}{3} \qquad (4)$$

  where $R$, $G$, and $B$ are the normalized values of the pixel's RGB vector;

- 4.

- Cluster merging: The rounded average intensity values of the centroids of neighboring regions are compared, both vertically and horizontally relative to each cluster. If the rounded values are equal, the two neighboring clusters are merged into one, whose centroid is the pixel with the maximum average intensity. This step is repeated until there are $M$ clusters $C_j$, $j \in \{1, \dots, M\}$, whose centroids have pairwise distinct rounded average intensity values. In PSO terms, the stopping criterion is the state in which no further merging is possible and all rounded average intensity values are distinct;

- 5.

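- For each pixel $i$, the distance function $d$ and the color function $f$ relative to each centroid $c_j$ are calculated as in Equations (5) and (6), respectively:

  $$d(i, c_j) = \sqrt{(x_i - x_{c_j})^2 + (y_i - y_{c_j})^2} \qquad (5)$$

  where $x_i$ and $y_i$ are the coordinates of pixel $i$ along the image width and height, and $x_{c_j}$ and $y_{c_j}$ are the coordinates of the centroid along the image width and height;

  $$f(i, c_j) = \sqrt{(R_i - R_{c_j})^2 + (G_i - G_{c_j})^2 + (B_i - B_{c_j})^2} \qquad (6)$$

  where $R_i$, $G_i$, and $B_i$ are the normalized values of the RGB vector of pixel $i$; $c_j$ is the centroid of cluster $C_j$; and $R_{c_j}$, $G_{c_j}$, and $B_{c_j}$ are the normalized values of the RGB vector of centroid $c_j$;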

- 6.

- For each pixel, find the centroid relative to which the distance function value is minimal and the centroid relative to which the color function value is minimal;

- 7.

- 8.

- The function with the smallest difference is chosen as the priority function (if the two differences are equal, the distance function takes priority, because pixels that are close to each other are more likely to belong to the same object than pixels that merely have similar colors);

- 9.

- Pixels are allocated to clusters according to the priority function, i.e., a pixel is assigned to the cluster whose centroid yields the minimum priority-function value for that pixel. Thus, the objective function of the proposed clustering method can be expressed by Equation (9).

- 10.

- Denoising by the non-local means method [37]: The non-local means algorithm was chosen for its ability to preserve image texture details [38]. The method is given by Equation (10):

  $$u(p) = \frac{1}{Z(p)} \sum_{q \in \Omega} v(q)\, \omega(p, q) \qquad (10)$$

  where $u(p)$ is the filtered intensity of a pixel color component at point $p$; $v(q)$ is the unfiltered intensity of a pixel color component at point $q$; $\Omega$ is the image domain; $\omega(p, q)$ is the weighting function; and $Z(p)$ is the normalizing factor. A Gaussian function is used as the weighting function, as shown in Equation (11):

  $$\omega(p, q) = \exp\!\left(-\frac{\lvert B(q) - B(p) \rvert^2}{h^2}\right) \qquad (11)$$

  where $h$ is the filter parameter (for RGB images, $h = 3$); $B(p)$ is the local average intensity of pixel color components around point $p$; and $B(q)$ is the local average intensity of pixel color components around point $q$. The normalizing factor is calculated as in Equation (12):

  $$Z(p) = \sum_{q \in \Omega} \omega(p, q) \qquad (12)$$
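Steps 2 to 4 of the clustering procedure can be illustrated with the following sketch. It is a hypothetical interpretation, not the authors' implementation: it assumes an image already normalized to RGB values in [0, 1] whose sides are multiples of 10 (step 1), rounds centroid intensities to one decimal place (the rounding precision is not stated, so `decimals=1` is an assumption), and merges one horizontally or vertically adjacent pair at a time until no adjacent clusters share a rounded centroid intensity. Function names are hypothetical.

```python
import numpy as np


def neighbor_pairs(labels):
    """Sorted label pairs of horizontally or vertically adjacent cells
    that currently belong to different clusters."""
    pairs = set()
    horizontal = zip(labels[:, :-1].ravel(), labels[:, 1:].ravel())
    vertical = zip(labels[:-1, :].ravel(), labels[1:, :].ravel())
    for a, b in list(horizontal) + list(vertical):
        if a != b:
            pairs.add((int(min(a, b)), int(max(a, b))))
    return sorted(pairs)


def initial_clusters(img, block=10, decimals=1):
    """Sketch of steps 2-4. img: H x W x 3 array of normalized RGB values,
    with H and W already multiples of `block` (step 1)."""
    h, w, _ = img.shape
    assert h % block == 0 and w % block == 0
    intensity = img.mean(axis=2)              # Equation (4): (R + G + B) / 3
    rows, cols = h // block, w // block

    # Step 2: each block x block region starts as its own cluster.
    labels = np.arange(rows * cols).reshape(rows, cols)

    # Step 3: the centroid of each region is its brightest pixel.
    centroid = {}
    for r in range(rows):
        for c in range(cols):
            tile = intensity[r * block:(r + 1) * block, c * block:(c + 1) * block]
            dy, dx = np.unravel_index(np.argmax(tile), tile.shape)
            centroid[int(labels[r, c])] = (float(tile[dy, dx]),
                                           r * block + int(dy), c * block + int(dx))

    # Step 4: merge adjacent clusters whose centroids have equal rounded
    # intensity; the merged cluster keeps the brighter centroid.
    merged = True
    while merged:
        merged = False
        for a, b in neighbor_pairs(labels):
            if round(centroid[a][0], decimals) == round(centroid[b][0], decimals):
                keep, drop = (a, b) if centroid[a][0] >= centroid[b][0] else (b, a)
                labels[labels == drop] = keep
                del centroid[drop]
                merged = True
                break  # adjacency changed, so rescan from scratch
    return labels, centroid
```

Rescanning after every merge is slow but keeps the sketch simple; each merge removes one cluster, so the loop always terminates.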

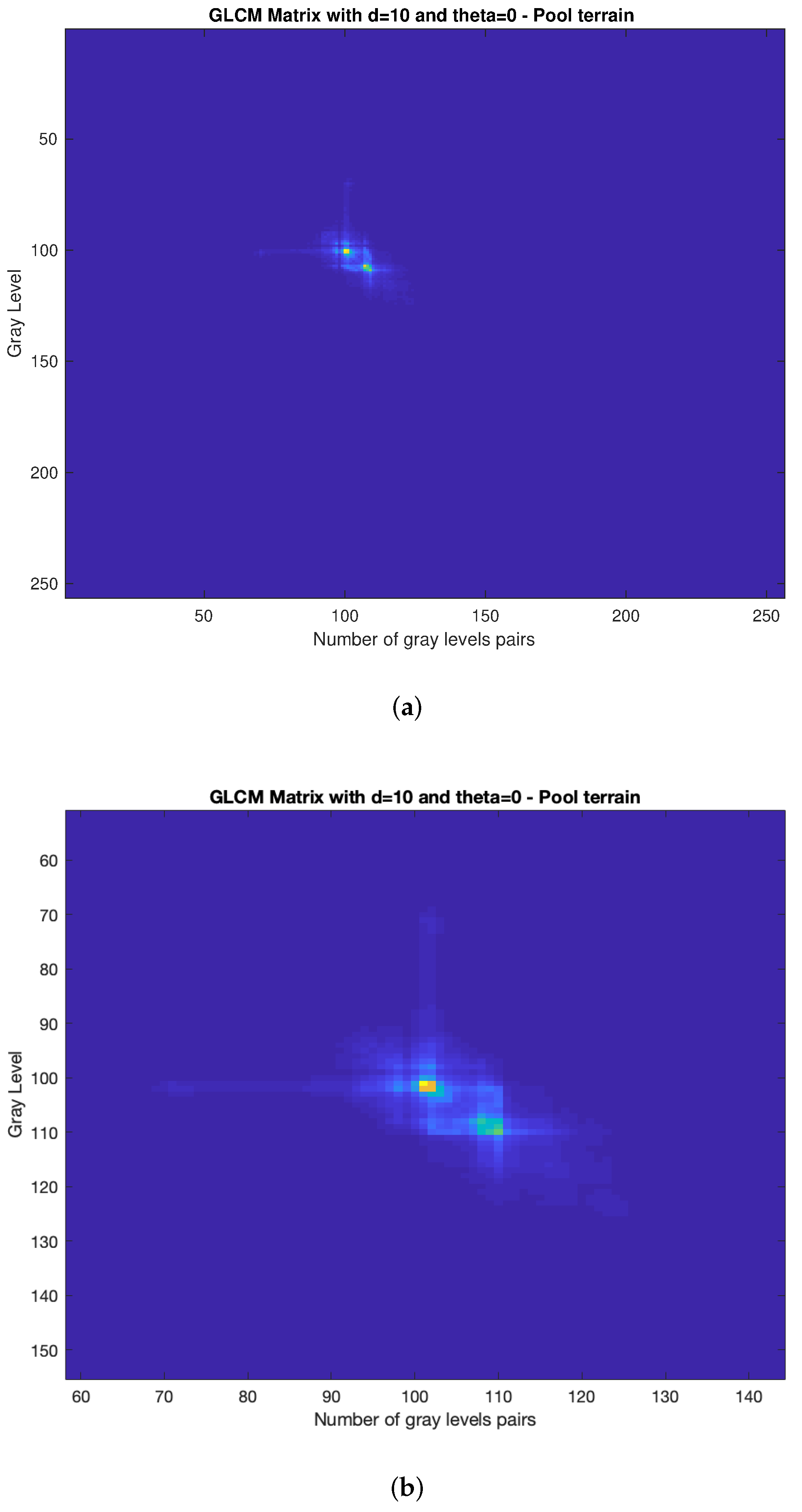
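Equations (10) to (12) can be illustrated with a direct, unoptimized implementation for one color channel. This is a minimal sketch under assumptions: intensities on a 0 to 255 scale, the local average intensity around each point taken as a 3 × 3 mean, and the sum restricted to an 11 × 11 search window around each pixel (a common practical restriction; the equations sum over the whole image). Function names are hypothetical. For real use, OpenCV's `cv2.fastNlMeansDenoisingColored` offers an optimized, patch-based variant of the method.

```python
import numpy as np


def local_mean(img, radius):
    """Local average intensity: mean over the (2r+1) x (2r+1) neighborhood
    of every pixel, with edge padding at the borders."""
    k = 2 * radius + 1
    padded = np.pad(img, radius, mode="edge")
    out = np.zeros(img.shape, dtype=float)
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + img.shape[0], dx:dx + img.shape[1]]
    return out / (k * k)


def nlm_channel(v, h=3.0, patch_radius=1, search_radius=5):
    """Non-local means for one color channel v (2-D array, 0-255 scale)."""
    v = np.asarray(v, dtype=float)
    B = local_mean(v, patch_radius)
    H, W = v.shape
    u = np.empty_like(v)
    for y in range(H):
        for x in range(W):
            y0, y1 = max(0, y - search_radius), min(H, y + search_radius + 1)
            x0, x1 = max(0, x - search_radius), min(W, x + search_radius + 1)
            weights = np.exp(-((B[y0:y1, x0:x1] - B[y, x]) ** 2) / h ** 2)  # Eq. (11)
            Z = weights.sum()                                              # Eq. (12)
            u[y, x] = (weights * v[y0:y1, x0:x1]).sum() / Z               # Eq. (10)
    return u
```

An RGB image can be filtered channel by channel, e.g. `np.dstack([nlm_channel(img[..., c]) for c in range(3)])`. Each output value is a weighted average of input values, so it never leaves the input range.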

3.3.3. Gray-Level Co-Occurrence Matrix

- 1.

- GLCM mask design;

- 2.

- Calculation of 27 features.

Algorithm 1. GLCM mask design.

for to do for to do += 1 end for end for

Algorithm 2. GLCM transpose mask design.

for to do for to do += end for end for

Algorithm 3. GLCM transpose mask design.

for to do for to do += end for end for
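The bodies of Algorithms 1 to 3 are only partially legible, so the following is a hypothetical sketch of a standard GLCM construction rather than a reproduction of them. Pixel pairs at a fixed offset are counted (the "+= 1" step of Algorithm 1), the transposed counts are added to make the matrix symmetric (which the "transpose mask" titles suggest), and the result is normalized to joint probabilities. The function name and the default horizontal offset of one pixel are assumptions.

```python
import numpy as np


def glcm(gray, levels, dx=1, dy=0, symmetric=True):
    """Normalized gray-level co-occurrence matrix for a non-negative pixel
    offset (dx, dy). gray: 2-D integer array with values in [0, levels)."""
    gray = np.asarray(gray)
    h, w = gray.shape
    ref = gray[0:h - dy, 0:w - dx]      # reference pixels
    nbr = gray[dy:h, dx:w]              # their neighbors at offset (dx, dy)
    P = np.zeros((levels, levels), dtype=float)
    # Count every (reference, neighbor) pair; np.add.at handles repeated pairs.
    np.add.at(P, (ref.ravel(), nbr.ravel()), 1)
    if symmetric:
        P = P + P.T                     # add the transposed counts
    return P / P.sum()                  # joint probabilities p(i, j)


# Usage: a tiny 3-level image, horizontal neighbor at distance 1.
img = np.array([[0, 0, 1],
                [1, 2, 2]])
P = glcm(img, levels=3)
```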

- Auto-correlation: A measure of the magnitude of the fineness and coarseness of texture;

- Joint Average: The gray-level weighted sum of joint probabilities;

- Cluster Prominence: It measures the asymmetry of the GLCM; higher values indicate more asymmetry about the mean, whereas lower values indicate a peak near the mean and less variation around it;

- Cluster Shade: It measures the skewness of the GLCM; higher values indicate greater asymmetry about the mean;

- Cluster Tendency: It measures heterogeneity, placing higher weights on neighboring intensity-level pairs that deviate more from the mean;

- Contrast: It measures the intensity contrast between a pixel and its neighbor over the entire image;

- Correlation: It measures how correlated a pixel is with its neighbor over the entire image;

- Difference Average: It measures the mean of the gray-level difference distribution of the current frame;

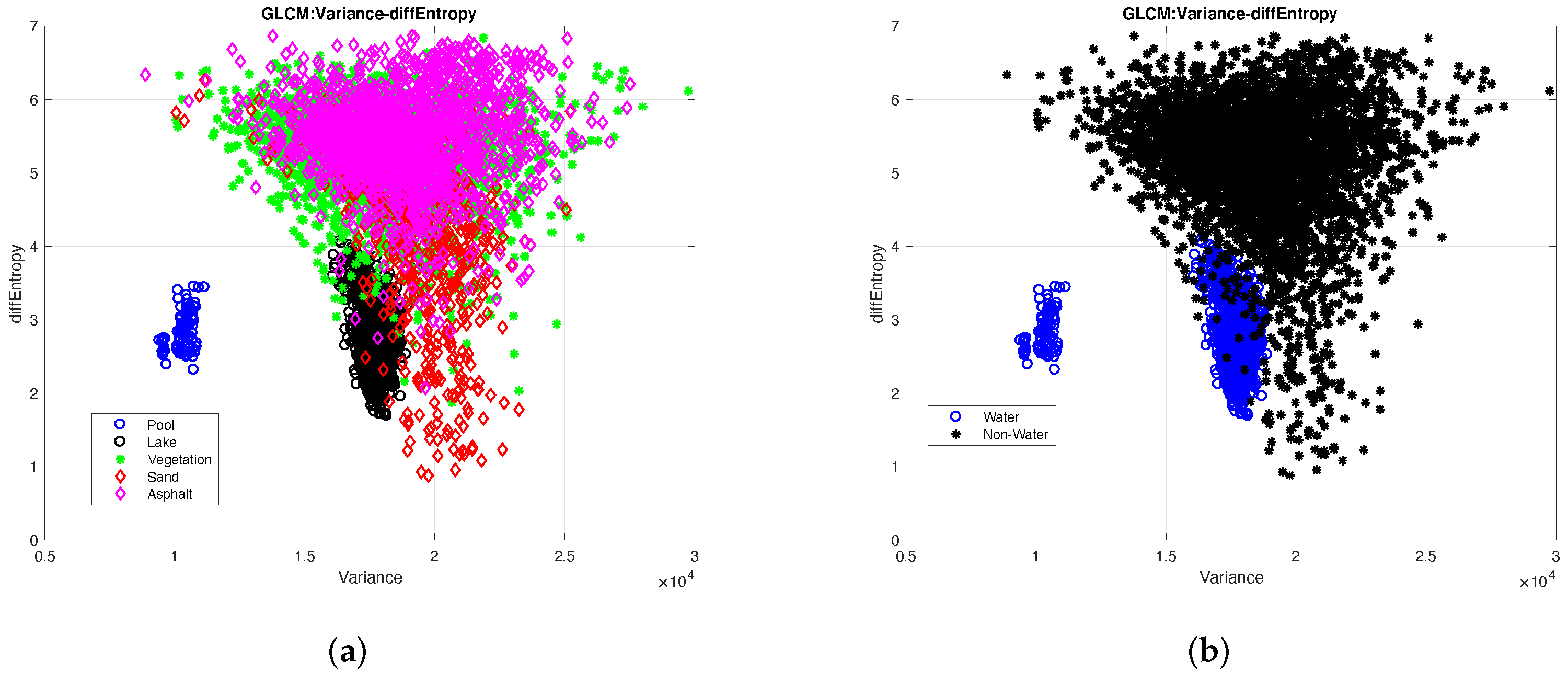

- Difference Entropy: It measures the disorder related to the gray-level difference distribution of the current frame;

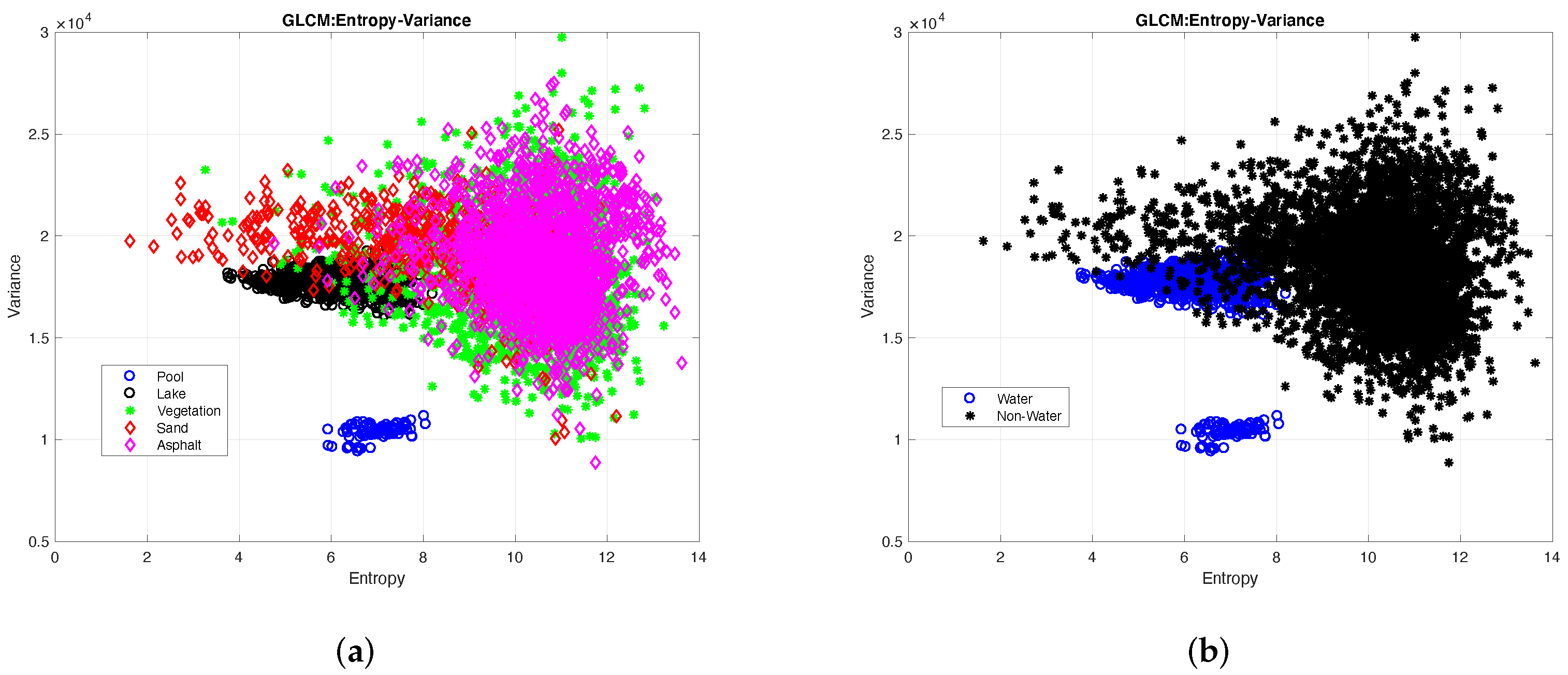

- Entropy: This feature measures the randomness of the intensity distribution. The more heterogeneous the information in an image, the higher the entropy; as homogeneity increases, the entropy tends to 0;

- Homogeneity: Also known as the Inverse Difference Moment, it equals 1 when the GLCM is diagonal;

- Homogeneity Moment: It measures the local homogeneity of an image; the result is a low Homogeneity Moment value for heterogeneous images and a relatively higher value for homogeneous images;

- Homogeneity Moment Normalized: It has the same goal as the Homogeneity Moment, but the squared differences between neighboring intensity values are normalized by the square of the highest intensity value;

- Homogeneity Normalized: It has the same goal as the Homogeneity Moment, but the differences between neighboring intensity values are normalized by the highest intensity value;

- Sum Average: It measures the mean of the intensity level sum distribution of the current frame;

- Sum Entropy: It measures the disorder related to the intensity value sum distribution of the current frame;

- Sum Variance: It measures the dispersion of the intensity-level sum distribution of the current frame;

- Variance: It represents the measure of the dispersion of the values around the mean.
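Several of the features listed above can be computed directly from a normalized GLCM. The sketch below uses common definitions (such as those documented by the PyRadiomics library), with gray levels indexed from 1 and a symmetric GLCM so that the row and column means coincide. The paper's exact formulas are not reproduced here, and the function name is hypothetical.

```python
import numpy as np


def glcm_features(P):
    """A subset of the GLCM texture features listed above.
    P: normalized, symmetric GLCM (entries sum to 1); gray levels indexed from 1."""
    P = np.asarray(P, dtype=float)
    n = P.shape[0]
    i, j = np.meshgrid(np.arange(1, n + 1), np.arange(1, n + 1), indexing="ij")
    mu = np.sum(i * P)                 # Joint Average (row mean = column mean)
    nz = P[P > 0]                      # skip zeros so that log2 is defined
    return {
        "auto_correlation": np.sum(i * j * P),
        "joint_average": mu,
        "cluster_prominence": np.sum((i + j - 2 * mu) ** 4 * P),
        "cluster_shade": np.sum((i + j - 2 * mu) ** 3 * P),
        "cluster_tendency": np.sum((i + j - 2 * mu) ** 2 * P),
        "contrast": np.sum((i - j) ** 2 * P),
        "entropy": -np.sum(nz * np.log2(nz)),
        "homogeneity": np.sum(P / (1 + (i - j) ** 2)),  # Inverse Difference Moment
        "variance": np.sum((i - mu) ** 2 * P),
    }


# Usage: a diagonal GLCM (every pixel equals its neighbor) over 4 gray levels.
feats = glcm_features(np.eye(4) / 4)
```

For the diagonal example, contrast is 0 and homogeneity is 1, matching the descriptions of Contrast and Homogeneity above.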

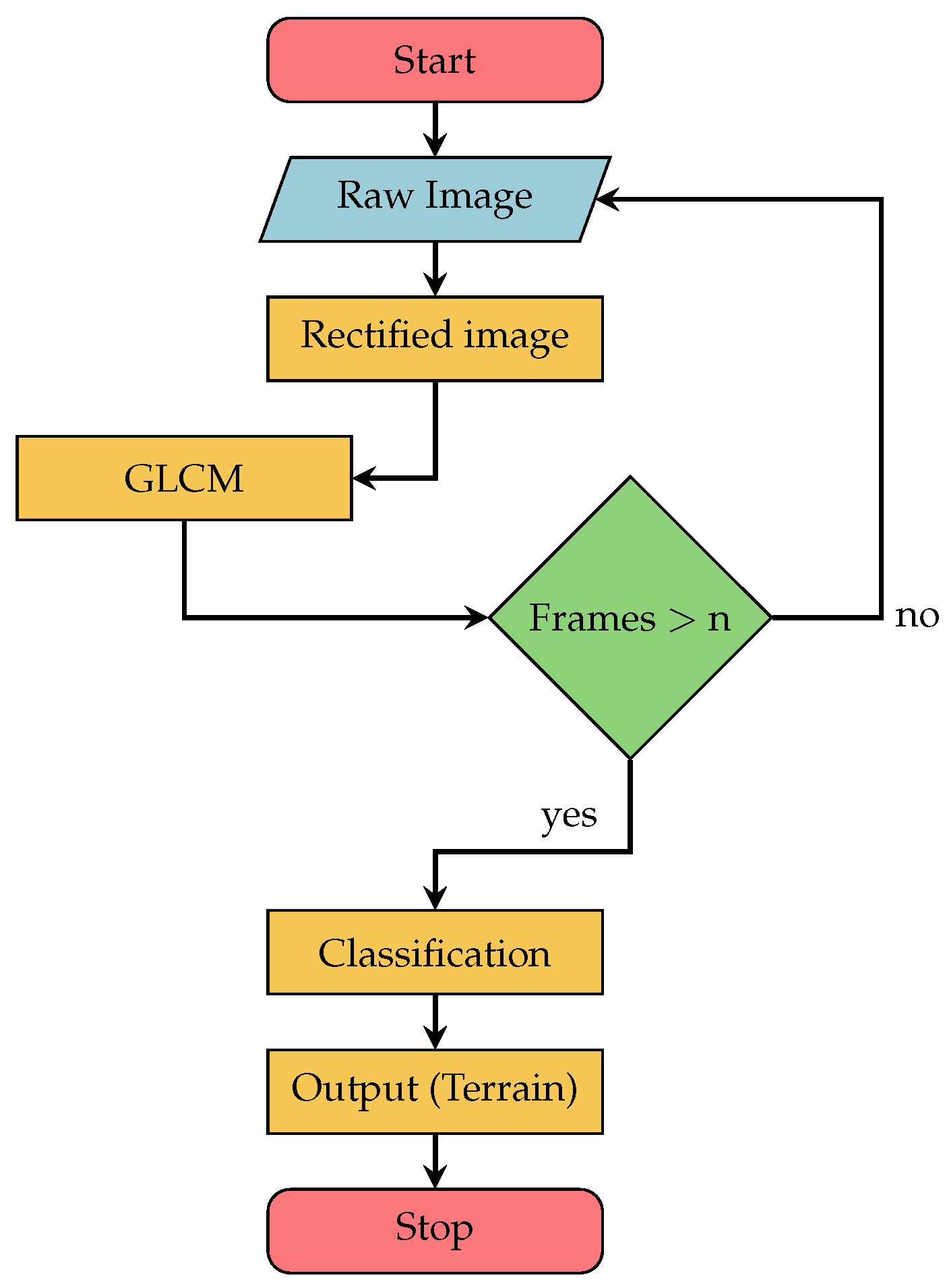

3.4. System Architecture

- Raw Image: The images obtained by the RGB camera have a resolution of 640 by 480 pixels; after obtaining these images, the RGB information is sent to the next layer;

- Rectified Image: For the same algorithm to work with any RGB camera, the cameras must be calibrated; Section 3.5 describes the calibration procedure;

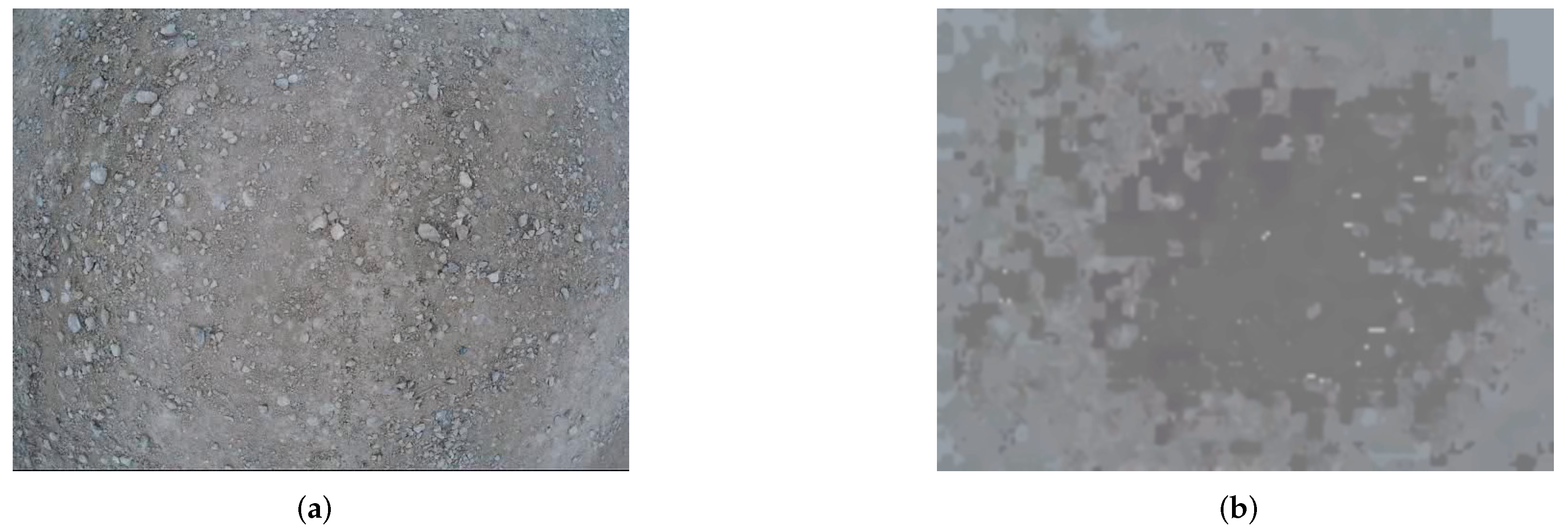

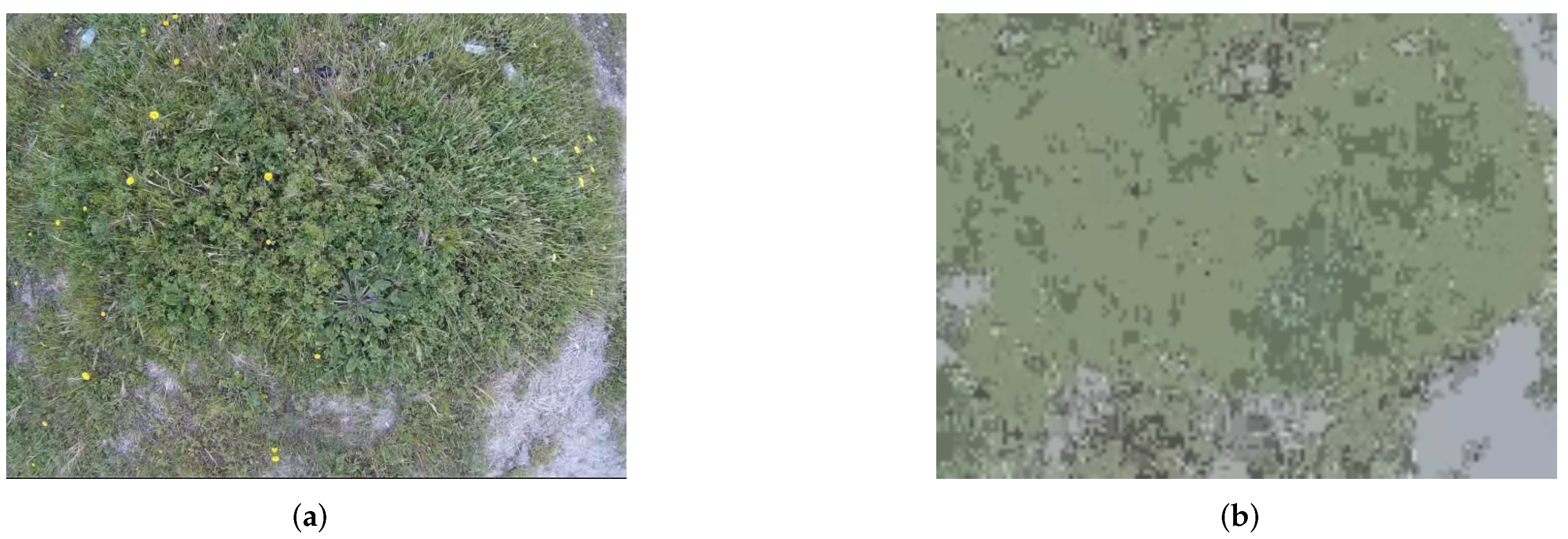

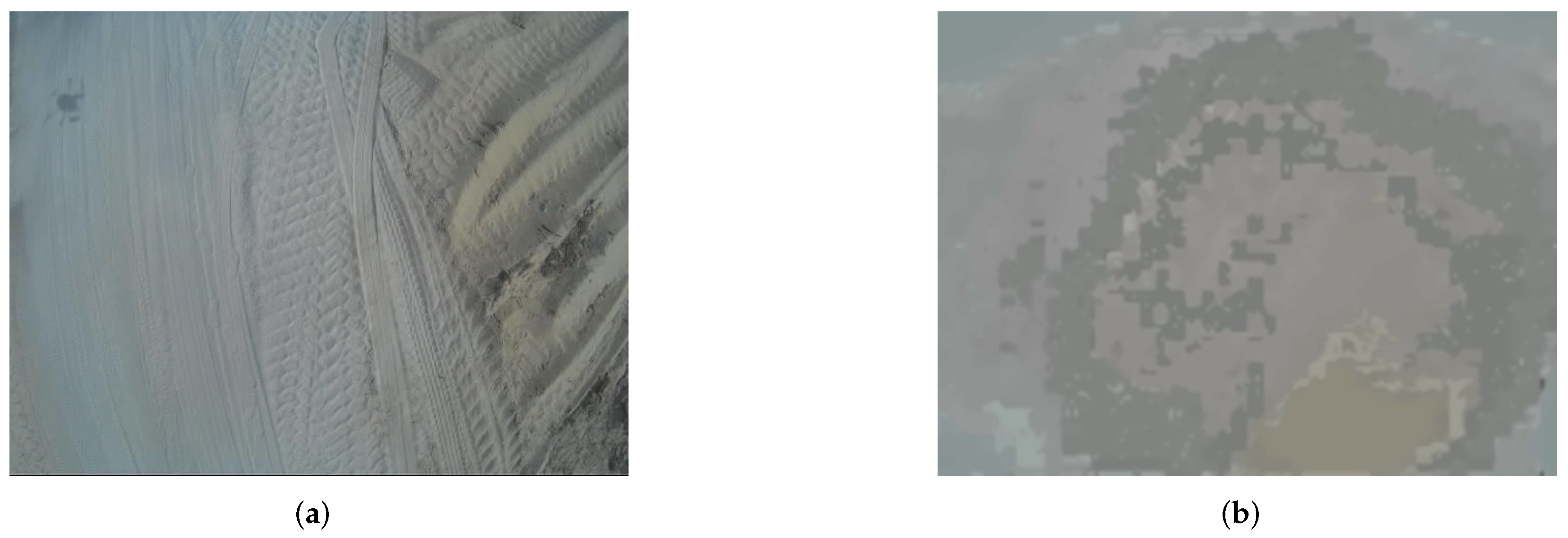

- Swarm Optimization: This layer segments the terrain captured by the RGB camera and passes the segmented image to the next layer (GLCM);

- Gray-Level Co-Occurrence Matrix: This layer receives the segmented images from the PSO layer and transforms each of them into a GLCM, from which the features described in Section 3.3.3 are calculated;

- Classification: The outputs of the GLCM layer are fed as inputs to a neural network (NN) that classifies the terrain. A Multilayer Perceptron (MLP) architecture is used in this layer. The network consists of three layers: the input layer, a hidden layer with 10 neurons, and an output layer with one neuron per terrain type under study. Each neuron uses a sigmoid activation function, and the layers form a fully connected feed-forward network. Of the total data, 70% are used for training, 15% for testing, and 15% for validation.
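A forward pass of the described MLP (GLCM features in, 10 sigmoid hidden neurons, one sigmoid output per terrain type) and the 70/15/15 data split can be sketched as follows. The training algorithm is not specified in the text, so the weights here are random placeholders; the class and function names, the initialization scale, and the example sizes are hypothetical.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


class TerrainMLP:
    """Fully connected feed-forward network: n_features inputs ->
    n_hidden sigmoid neurons -> n_classes sigmoid outputs (untrained)."""

    def __init__(self, n_features, n_classes, n_hidden=10, seed=0):
        rng = np.random.default_rng(seed)
        self.W1 = rng.normal(0.0, 0.1, (n_features, n_hidden))
        self.b1 = np.zeros(n_hidden)
        self.W2 = rng.normal(0.0, 0.1, (n_hidden, n_classes))
        self.b2 = np.zeros(n_classes)

    def forward(self, X):
        hidden = sigmoid(X @ self.W1 + self.b1)
        return sigmoid(hidden @ self.W2 + self.b2)

    def predict(self, X):
        return np.argmax(self.forward(X), axis=1)   # index of the terrain type


def split_70_15_15(n_samples, seed=0):
    """Shuffle sample indices and split them 70/15/15 into train/test/validation."""
    idx = np.random.default_rng(seed).permutation(n_samples)
    n_train = (70 * n_samples) // 100
    n_test = (15 * n_samples) // 100
    return idx[:n_train], idx[n_train:n_train + n_test], idx[n_train + n_test:]


# Usage with hypothetical sizes: 27 GLCM features, 3 terrain types.
model = TerrainMLP(n_features=27, n_classes=3)
train_idx, test_idx, val_idx = split_70_15_15(100)
```

Integer arithmetic is used for the split sizes so that rounding of fractions such as 0.15 cannot shift a sample between subsets.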

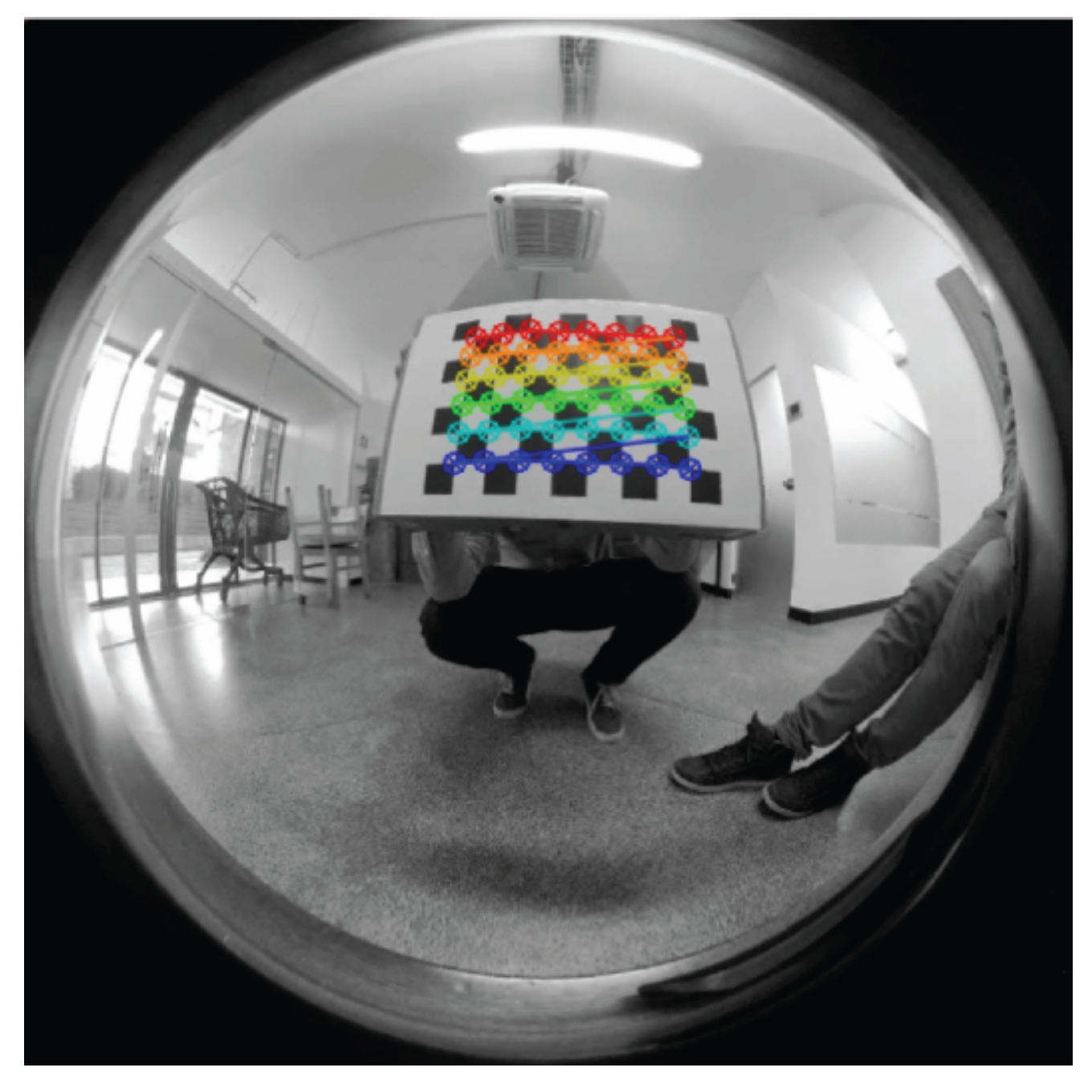

3.5. Camera Calibration

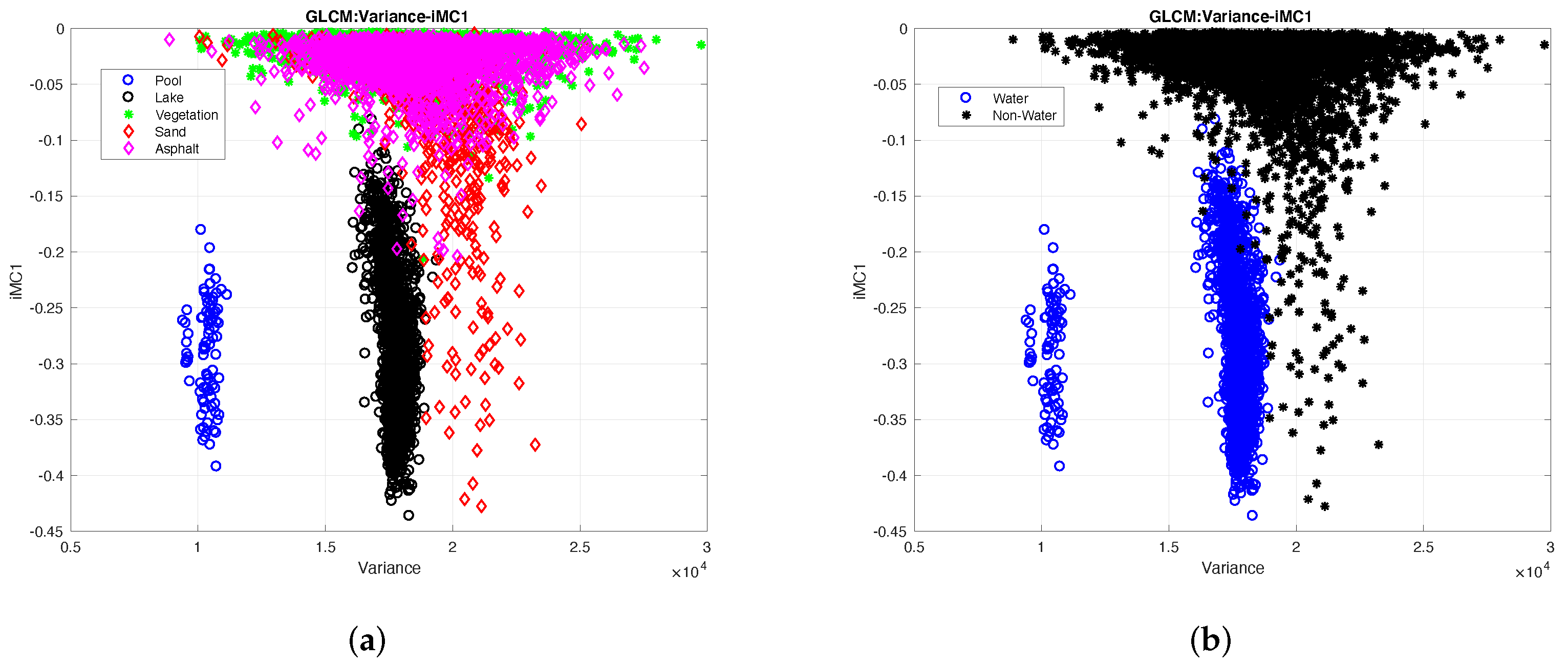

4. Empirical Part and Discussion of Results

- Variance—41.67% of impact;

- IMC2—33.33% of impact;

- IMC1—25.00% of impact;

- Sum Average—25.00% of impact;

- Joint Average—16.67% of impact;

- Difference Entropy—16.67% of impact;

- Auto-Correlation—16.67% of impact;

- Correlation—8.33% of impact;

- Entropy—8.33% of impact;

- Homogeneity—8.33% of impact.

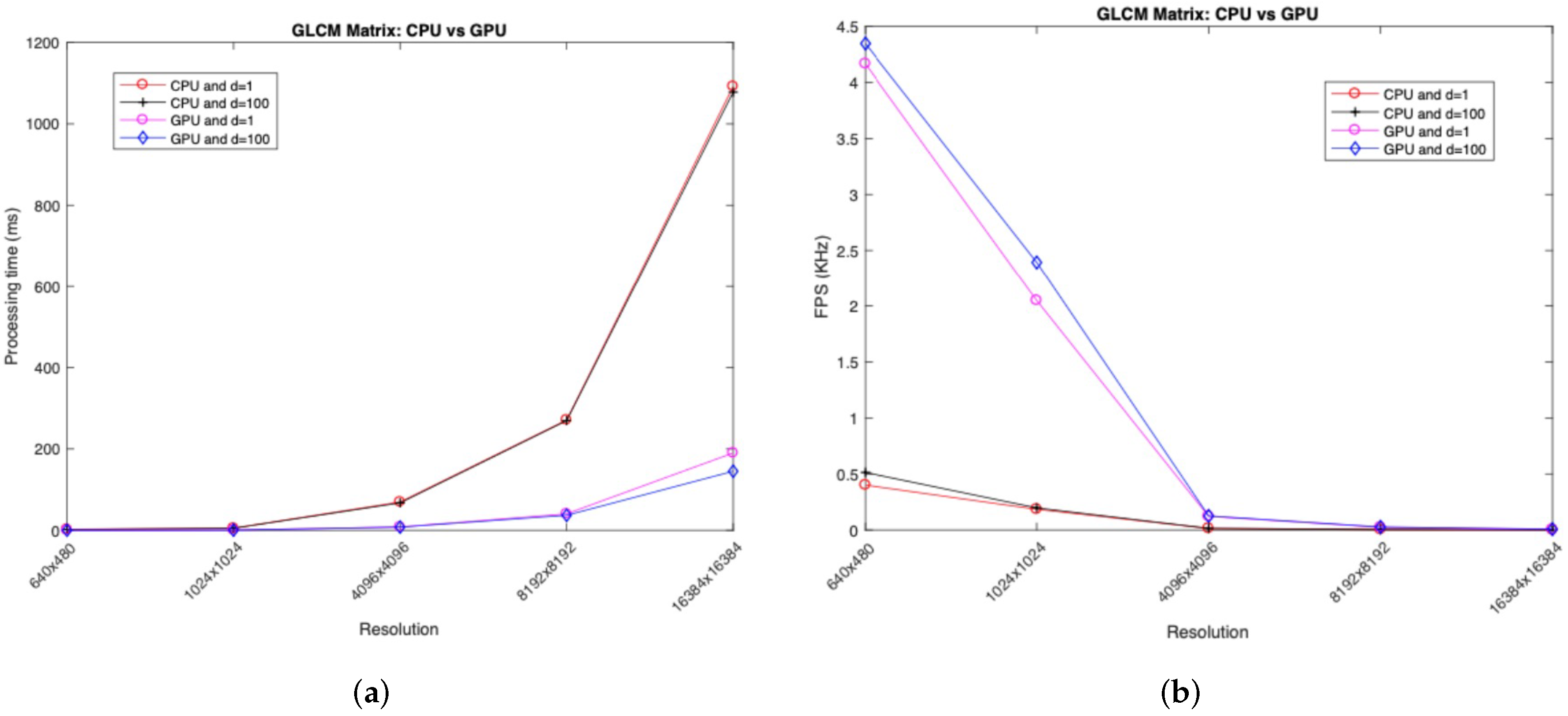

- Advantage: Atomic operations ensure that no values are left undefined and that the final result is correct;

- Disadvantage: Parallelism is lost whenever several threads must write to the same memory location, because those writes are serialized; this reduces the achievable speedup.
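The trade-off described above can be reproduced on the CPU with a NumPy analogy (this is not the authors' GPU code). When several updates target the same histogram bin, as happens when many threads increment the same GLCM cell, a buffered fancy-index increment keeps only one of them, whereas `np.add.at` applies every increment, much like an atomic add; the price is that updates to a shared cell are effectively serialized.

```python
import numpy as np

# Gray levels produced by several "threads"; bins 0 and 2 are hit repeatedly.
levels = np.array([0, 0, 0, 1, 2, 2])

racy = np.zeros(3, dtype=int)
racy[levels] += 1              # buffered: duplicate indices are written once
# racy -> [1, 1, 1]            (increments to shared bins are lost)

atomic_like = np.zeros(3, dtype=int)
np.add.at(atomic_like, levels, 1)   # unbuffered: every increment is applied
# atomic_like -> [3, 1, 2]     (correct counts)
```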

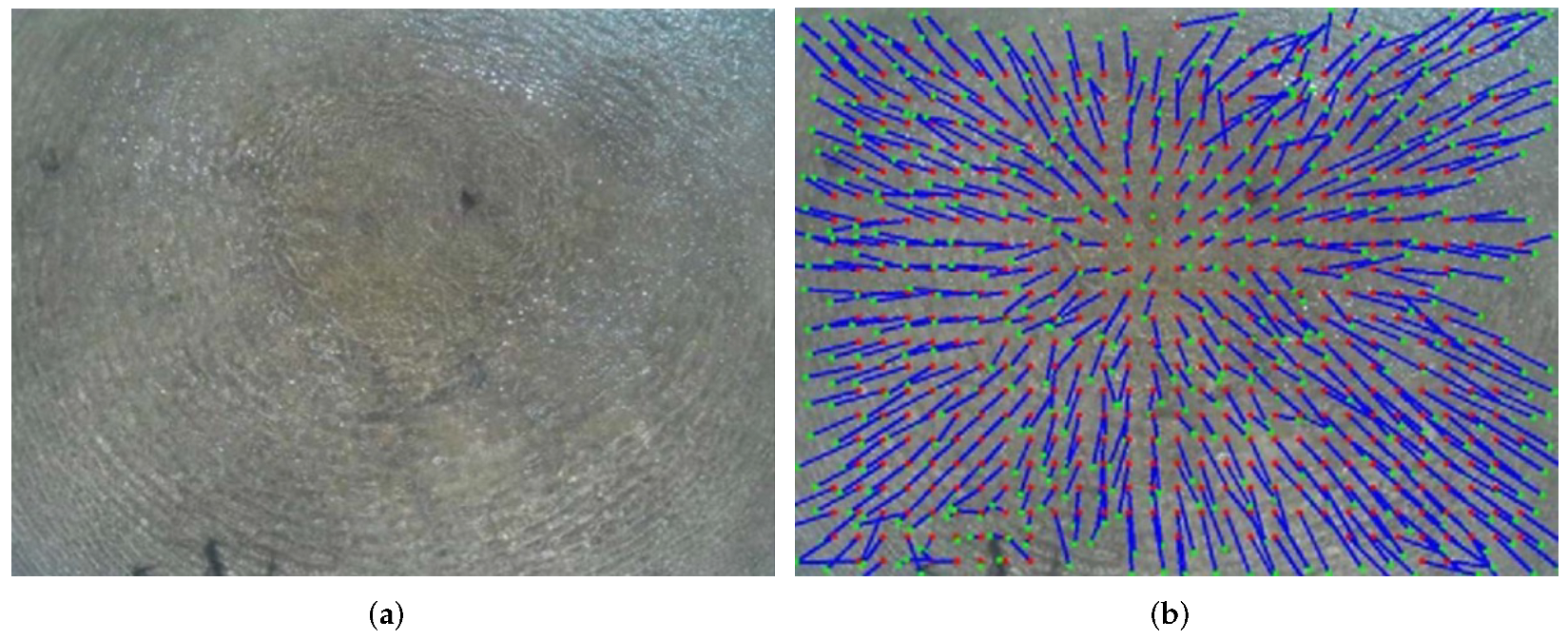

- 1.

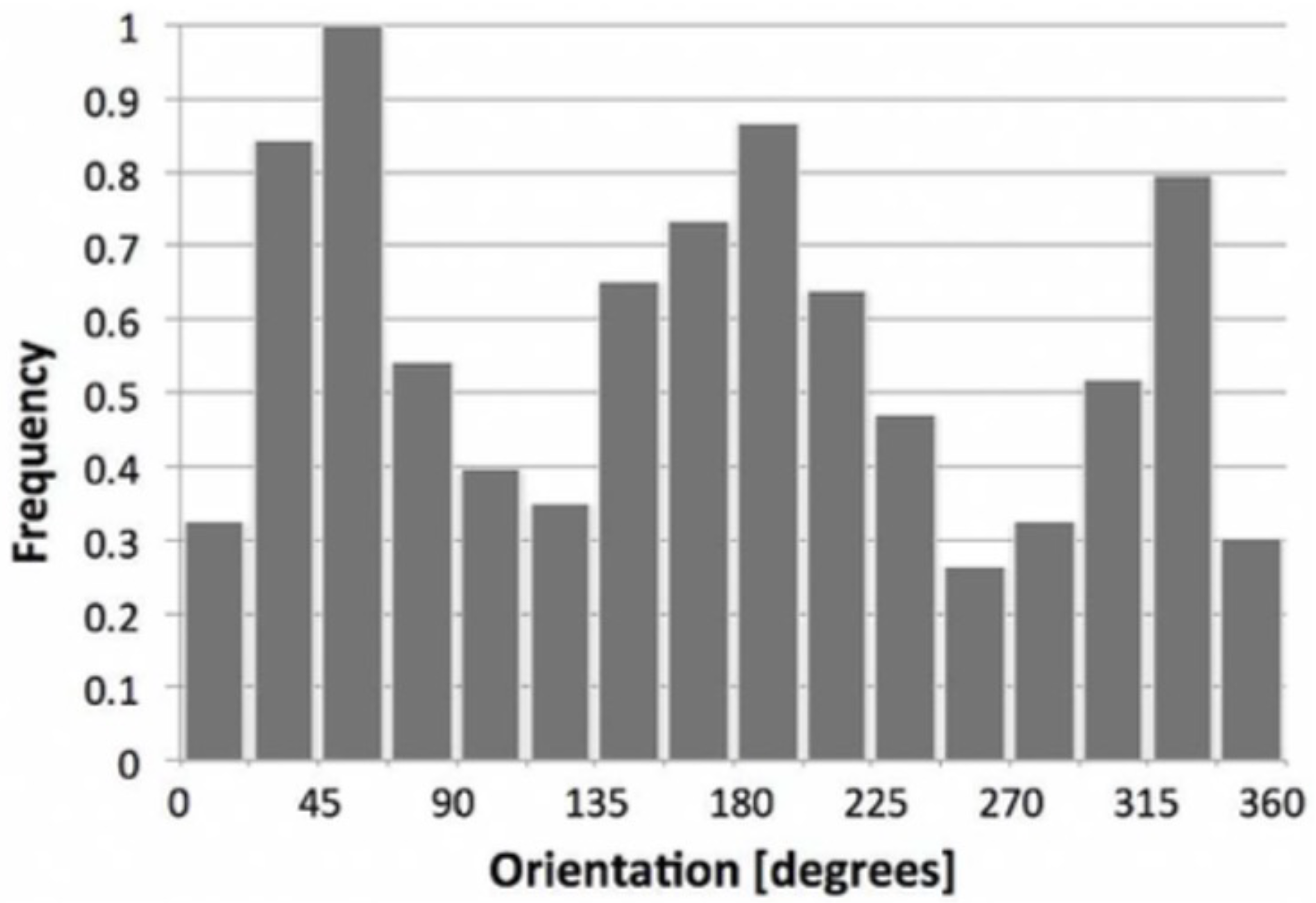

- Robustness of the algorithm: Since the movement over water-type terrain is circular, dispersion naturally appears at all angles of the captured image; however, if the center of the downwash is not in the center of the image, this dispersion may not appear at all angles even over water-type terrain. The algorithm may then erroneously conclude that the UAV is not over water-type terrain when in fact it is; consequently, the drone would land and could damage its hardware;

- 2.

- Processing time: The algorithm needs at least four seconds to classify whether the terrain under study is water-type, which means that the UAV must hover in place for at least four seconds to determine the type of terrain it is flying over.

5. Mapping

- 1.

- Black: It represents the water-type terrain;

- 2.

- White: It represents the non-water-type terrain;

- 3.

- Gray: It represents the unknown-type terrain.

6. Conclusions

6.1. Results

- A focus on the PSO-based preprocessing stage, which contributes to improved recognition accuracy and can be useful for systems that operate in dynamic environments and under changing conditions;

- The downwash effect is used as the basis for terrain-type recognition, as it produces significant features that provide high classification accuracy.

6.2. Future Work

- Perform a more in-depth study of changing environment colors and improve the robustness of the algorithm. One of the method's limitations is that color variations can produce false positives/negatives; although the dynamic texture is not much affected by such changes, owing to the similar way the terrain moves, the static texture could be strongly affected;

- Since the algorithm was designed for a UAV flying at an altitude of one to two meters, the UAV may collide with objects in the environment at that altitude. One of the authors of this paper developed an algorithm [60] that avoids obstacles using images from a depth camera; however, it currently works only with static objects. An improved version of this algorithm could support the autonomous navigation of the proposed system;

- It is also important to conduct a more in-depth study into different camera resolutions in order to improve the robustness of the proposed system; however, the system performance may be affected by higher resolutions.

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Meaning |
|---|---|
| GLCM | Gray-Level Co-Occurrence Matrix |
| HSV | Hue Saturation Value |
| PSO | Particle Swarm Optimization |
| ROS | Robot Operating System |
| HEIFU | Hexa Exterior Intelligent Flying Unit |
| BEV | Beyond Vision |
| PDMFC | Projeto Desenvolvimento Manutenção Formação e Consultadoria |
| UAV | Unmanned Aerial Vehicle |

References

- Kim, S.H. Choice model based analysis of consumer preference for drone delivery service. J. Air Transp. Manag. 2020, 84, 101785.

- Koslowski, R. Drones and border control: An examination of state and non-state actor use of UAVs along borders. In Research Handbook on International Migration and Digital Technology; Edward Elgar Publishing: Cheltenham, UK, 2021.

- Stokes, D.; Apps, K.; Butcher, P.A.; Weiler, B.; Luke, H.; Colefax, A.P. Beach-user perceptions and attitudes towards drone surveillance as a shark-bite mitigation tool. Mar. Policy 2020, 120, 104127.

- Correia, S.D.; Fé, J.; Tomic, S.; Beko, M. Drones as Sound Sensors for Energy-Based Acoustic Tracking on Wildfire Environments. Internet Things. Technol. Appl. 2022, 643, 109–125.

- Kim, I.; Matos-Carvalho, J.P.; Viksnin, I.; Campos, L.M.; Fonseca, J.M.; Mora, A.; Chuprov, S. Use of Particle Swarm Optimization in Terrain Classification based on UAV Downwash. In Proceedings of the IEEE Congress on Evolutionary Computation (CEC), Wellington, New Zealand, 10–13 June 2019; pp. 604–610.

- Shang, Z.; Shen, Z. Vision Model-Based Real-Time Localization of Unmanned Aerial Vehicle for Autonomous Structure Inspection under GPS-Denied Environment. In Computing in Civil Engineering 2019: Smart Cities, Sustainability, and Resilience; American Society of Civil Engineers: Reston, VA, USA, 2019; pp. 292–298.

- Hu, T.; Sun, X.; Su, Y.; Guan, H.; Sun, Q.; Kelly, M.; Guo, Q. Development and performance evaluation of a very low-cost UAV-LiDAR system for forestry applications. Remote Sens. 2020, 13, 77.

- Chen, H.; Lan, Y.; Fritz, B.K.; Hoffmann, W.C.; Liu, S. Review of agricultural spraying technologies for plant protection using unmanned aerial vehicle (UAV). Int. J. Agric. Biol. Eng. 2021, 14, 38–49.

- Han, D.; Lee, S.B.; Song, M.; Cho, J.S. Change detection in unmanned aerial vehicle images for progress monitoring of road construction. Buildings 2021, 11, 150.

- Sibanda, M.; Mutanga, O.; Chimonyo, V.G.; Clulow, A.D.; Shoko, C.; Mazvimavi, D.; Dube, T.; Mabhaudhi, T. Application of drone technologies in surface water resources monitoring and assessment: A systematic review of progress, challenges, and opportunities in the global south. Drones 2021, 5, 84.

- Solvin, T.M.; Puliti, S.; Steffenrem, A. Use of UAV photogrammetric data in forest genetic trials: Measuring tree height, growth, and phenology in Norway spruce (Picea abies L. Karst.). Scand. J. For. Res. 2020, 35, 322–333.

- Dainelli, R.; Toscano, P.; Di Gennaro, S.F.; Matese, A. Recent advances in unmanned aerial vehicle forest remote sensing—A systematic review. Part I: A general framework. Forests 2021, 12, 327.

- Zaporozhets, A. Overview of quadrocopters for energy and ecological monitoring. In Systems, Decision and Control in Energy I; Springer: Berlin/Heidelberg, Germany, 2020; pp. 15–36.

- D’Oleire-Oltmanns, S.; Marzolff, I.; Peter, K.D.; Ries, J.B. Unmanned aerial vehicle (UAV) for monitoring soil erosion in Morocco. Remote Sens. 2012, 4, 3390–3416.

- Gonçalves, J.; Henriques, R. UAV photogrammetry for topographic monitoring of coastal areas. ISPRS J. Photogramm. Remote Sens. 2015, 104, 101–111.

- Choi, K.; Lee, I. A UAV based close-range rapid aerial monitoring system for emergency responses. ISPAr 2011, 3822, 247–252.

- Zheng, X.; Yang, X.; Ma, H.; Ren, G.; Yu, Z.; Yang, F.; Zhang, H.; Gao, W. Integrative Landslide Emergency Monitoring Scheme Based on GB-INSAR Interferometry, Terrestrial Laser Scanning and UAV Photography. J. Phys. Conf. Ser. 2019, 1213, 052069.

- Al-Kaff, A.; Madridano, Á.; Campos, S.; García, F.; Martín, D.; de la Escalera, A. Emergency Support Unmanned Aerial Vehicle for Forest Fire Surveillance. Electronics 2020, 9, 260.

- Domnitskya, E.; Mikhailova, V.; Zoloedova, E.; Alyukova, D.; Chuprova, S.; Marinenkova, E.; Viksnina, I. Software Module for Unmanned Autonomous Vehicle’s On-Board Camera Faults Detection and Correction. In Proceedings of the CEUR Workshop, Saint Petersburg, Russia, 10–11 December 2020.

- Stojnev Ilić, A.; Stojnev, D. Preprocessing Image Data for Deep Learning. In Sinteza 2020—International Scientific Conference on Information Technology and Data Related Research; Singidunum University: Belgrade, Serbia, 2020; pp. 312–317.

- Li, Z.; Han, X.; Wang, L.; Zhu, T.; Yuan, F. Feature Extraction and Image Retrieval of Landscape Images Based on Image Processing. Trait. Signal 2020, 37, 1009–1018.

- Patil, A.; Rane, M. Convolutional neural networks: An overview and its applications in pattern recognition. In International Conference on Information and Communication Technology for Intelligent Systems; Springer: Berlin/Heidelberg, Germany, 2020; pp. 21–30.

- Xu, Z.; Song, H.; Wu, Z.; Xu, Z.; Wang, S. Research on Crop Information Extraction of Agricultural UAV Images Based on Blind Image Deblurring Technology And SVM. INMATEH-Agric. Eng. 2021, 64, 33–42.

- Li, Y.; Qian, M.; Liu, P.; Cai, Q.; Li, X.; Guo, J.; Yan, H.; Yu, F.; Yuan, K.; Yu, J.; et al. The recognition of rice images by UAV based on capsule network. Clust. Comput. 2019, 22, 9515–9524.

- Zhang, Z.; Yao, M. Illumination Invariant Face Recognition By Expected Patch Log Likelihood. In Proceedings of the SoutheastCon, Online, 10–14 March 2021; pp. 1–4.

- Heusch, G.; Rodriguez, Y.; Marcel, S. Local binary patterns as an image preprocessing for face authentication. In Proceedings of the 7th International Conference on Automatic Face and Gesture Recognition (FGR06), Southampton, UK, 10–16 April 2006; p. 6.

- Ramadan, H.; Lachqar, C.; Tairi, H. A survey of recent interactive image segmentation methods. Comput. Vis. Media 2020, 6, 355–384.

- Minaee, S.; Boykov, Y.Y.; Porikli, F.; Plaza, A.J.; Kehtarnavaz, N.; Terzopoulos, D. Image segmentation using deep learning: A survey. IEEE Trans. Pattern Anal. Mach. Intell. 2021, PP, 33596172.

- Zhang, Y.; Liu, M.; Zhang, H.; Sun, G.; He, J. Adaptive Fusion Affinity Graph with Noise-free Online Low-rank Representation for Natural Image Segmentation. arXiv 2021, arXiv:2110.11685.

- Lian, X.; Pang, Y.; Han, J.; Pan, J. Cascaded hierarchical atrous spatial pyramid pooling module for semantic segmentation. Pattern Recognit. 2021, 110, 107622.

- Dubey, S.; Gupta, Y.K.; Soni, D. Comparative study of various segmentation techniques with their effective parameters. Int. J. Innov. Res. Comput. Commun. Eng. 2016, 4, 17223–17228.

- Liu, J.; Wang, D.; Yu, S.; Li, X.; Han, Z.; Tang, Y. A survey of image clustering: Taxonomy and recent methods. In Proceedings of the 2021 IEEE International Conference on Real-time Computing and Robotics (RCAR), Xining, China, 15–19 July 2021; pp. 375–380.

- Dasgupta, S.; Frost, N.; Moshkovitz, M.; Rashtchian, C. Explainable k-means and k-medians clustering. arXiv 2020, arXiv:2002.12538.

- Zhang, L.; Luo, M.; Liu, J.; Li, Z.; Zheng, Q. Diverse fuzzy c-means for image clustering. Pattern Recognit. Lett. 2020, 130, 275–283.

- Freitas, D.; Lopes, L.G.; Morgado-Dias, F. Particle swarm optimisation: A historical review up to the current developments. Entropy 2020, 22, 362.

- Kim, I.; Matveeva, A.; Viksnin, I.; Patrikeev, R. Methods of Semantic Integrity Preservation in the Pattern Recognition Process. Int. J. Embed. Real-Time Commun. Syst. (IJERTCS) 2019, 10, 118–140.

- Kartsov, S.; Kupriyanov, D.Y.; Polyakov, Y.A.; Zykov, A. Non-Local Means Denoising Algorithm Based on Local Binary Patterns. In Computer Vision in Control Systems—6; Springer: Berlin/Heidelberg, Germany, 2020; pp. 153–164.

- Huang, F.; Lan, B.; Tao, J.; Chen, Y.; Tan, X.; Feng, J.; Ma, Y. A parallel nonlocal means algorithm for remote sensing image denoising on an intel xeon phi platform. IEEE Access 2017, 5, 8559–8567.

- Study and Analysis of Different Camera Calibration Methods. Available online: http://repositorio.roca.utfpr.edu.br/jspui/handle/1/6454 (accessed on 12 April 2020).

- Ntouskos, V.; Kalisperakis, I.; Karras, G. Automatic calibration of digital cameras using planar chess-board patterns. In Optical 3-D Measurement Techniques VIII; ETH Zurich: Zurich, Switzerland, 2007; Volume 1.

- Pombeiro, R.; Mendonça, R.; Rodrigues, P.; Marques, F.; Lourenço, A.; Pinto, E.; Santana, P.; Barata, J. Water detection from downwash-induced optical flow for a multirotor UAV. In Proceedings of the OCEANS 2015-MTS/IEEE Washington, Washington, DC, USA, 19–22 October 2015; pp. 1–6.

- Lookingbill, A.; Rogers, J.; Lieb, D.; Curry, J.; Thrun, S. Reverse optical flow for self-supervised adaptive autonomous robot navigation. Int. J. Comput. Vis. 2007, 74, 287–302.

- Campos, I.S.; Nascimento, E.R.; Chaimowicz, L. Terrain Classification from UAV Flights Using Monocular Vision. In Proceedings of the 2015 12th Latin American Robotics Symposium and 2015 3rd Brazilian Symposium on Robotics (LARS-SBR), Uberlandia, Brazil, 28 October–1 November 2015; pp. 271–276.

- Lucas, B.D.; Kanade, T. An iterative image registration technique with an application to stereo vision. In Proceedings of the 7th International Joint Conference on Artificial Intelligence, Vancouver, BC, Canada, 24–28 August 1981.

- Caridade, C.; Marçal, A.R.; Mendonça, T. The use of texture for image classification of black & white air photographs. Int. J. Remote Sens. 2008, 29, 593–607.

- Mboga, N.; Persello, C.; Bergado, J.R.; Stein, A. Detection of informal settlements from VHR images using convolutional neural networks. Remote Sens. 2017, 9, 1106.

- Woods, M.; Guivant, J.; Katupitiya, J. Terrain classification using depth texture features. In Proceedings of the Australian Conference of Robotics and Automation, Sydney, Australia, 5–7 December 2013; pp. 1–8.

- Sofman, B.; Bagnell, J.A.; Stentz, A.; Vandapel, N. Terrain classification from aerial data to support ground vehicle navigation. Comput. Sci. 2006. Available online: https://www.ri.cmu.edu/pub_files/pub4/sofman_boris_2006_1/sofman_boris_2006_1.pdf (accessed on 1 April 2022).

- de Troia Salvado, A.B. Aerial Semantic Mapping for Precision Agriculture using Multispectral Imagery. Ph.D. Thesis, Faculdade de Ciencias e Tecnologia (FCT), Lisboa, Portugal, 2018.

- Wang, L.; Peng, J.; Sun, W. Spatial–spectral squeeze-and-excitation residual network for hyperspectral image classification. Remote Sens. 2019, 11, 884.

- Ebadi, F.; Norouzi, M. Road Terrain detection and Classification algorithm based on the Color Feature extraction. In Proceedings of the 2017 Artificial Intelligence and Robotics (IRANOPEN), Qazvin, Iran, 9 April 2017; pp. 139–146.

- Sharma, M.; Ghosh, H. Histogram of gradient magnitudes: A rotation invariant texture-descriptor. In Proceedings of the 2015 IEEE International Conference on Image Processing (ICIP), Quebec, QC, Canada, 27–30 September 2015; pp. 4614–4618.

- Zhao, Q.; Wang, Y.; Li, Y. Voronoi tessellation-based regionalised segmentation for colour texture image. IET Comput. Vis. 2016, 10, 613–622.

- Khan, Y.N.; Komma, P.; Zell, A. High resolution visual terrain classification for outdoor robots. In Proceedings of the 2011 IEEE International Conference on Computer Vision Workshops (ICCV Workshops), Barcelona, Spain, 6–13 November 2011; pp. 1014–1021.

- Ma, X.; Hao, S.; Cheng, Y. Terrain classification of aerial image based on low-rank recovery and sparse representation. In Proceedings of the 2017 20th International Conference on Information Fusion (Fusion), Xi’an, China, 10–13 July 2017; pp. 1–6.

- Shen, K.; Kelly, M.; Le Cleac’h, S. Terrain Classification for Off-Road Driving; Stanford University: Stanford, CA, USA, 2017.

- Otte, S.; Laible, S.; Hanten, R.; Liwicki, M.; Zell, A. Robust visual terrain classification with recurrent neural networks. In Proceedings; Presses Universitaires de Louvain: Bruges, Belgium, 2015; pp. 451–456.

- Glowacz, A. Thermographic fault diagnosis of ventilation in BLDC motors. Sensors 2021, 21, 7245.

- Salvado, A.B.; Mendonça, R.; Lourenço, A.; Marques, F.; Matos-Carvalho, J.P.; Miguel Campos, L.; Barata, J. Semantic Navigation Mapping from Aerial Multispectral Imagery. In Proceedings of the 2019 IEEE 28th International Symposium on Industrial Electronics (ISIE), Vancouver, BC, Canada, 12–14 June 2019; pp. 1192–1197.

- Carvalho, J.; Pedro, D.F.; Campos, L.; Fonseca, J.; Mora, A. Terrain Classification Using W-K Filter and 3D Navigation with Static Collision Avoidance; UNINOVA-Instituto de Desenvolvimento de Novas Tecnologias: Lisboa, Portugal, 2020; pp. 1122–1137.

| Feature | Computation |
|---|---|
| Auto-correlation | |
| Cluster Prominence | |
| Cluster Shade | |
| Cluster Tendency | |
| Contrast | |
| Correlation | |
| Difference Average | |
| Difference Entropy | |
| Difference Variance | |
| Energy | |
| Entropy | |
| Homogeneity | |
| Homogeneity Moment | |
| Homogeneity Moment Normalized | |
| Homogeneity Normalized | |
| Inverse Variance | |
| Informational Measure of Correlation 1 | |
| Informational Measure of Correlation 2 | |
| Joint Average | |
| Joint Maximum | |
| Kurtosis | |
| Skewness | |
| Sum Average | |
| Sum Entropy | |
| Sum Variance | |
| Variance | |

| Width (px) | Height (px) | Distance | CPU Working Time (ms) | GPU Working Time (ms) | Acceleration (CPU/GPU) |
|---|---|---|---|---|---|
| 640 | 480 | 1 | 2.491 | 0.240 | 10.379 |
| 640 | 480 | 100 | 1.944 | 0.230 | 8.452 |
| 1024 | 1024 | 1 | 5.408 | 0.488 | 11.082 |
| 1024 | 1024 | 100 | 5.067 | 0.418 | 12.122 |
| 4096 | 4096 | 1 | 69.617 | 8.194 | 8.496 |
| 4096 | 4096 | 100 | 67.392 | 7.965 | 8.461 |
| 8192 | 8192 | 1 | 271.039 | 40.576 | 6.680 |
| 8192 | 8192 | 100 | 269.634 | 36.995 | 7.288 |
| 16,384 | 16,384 | 1 | 1092.070 | 190.093 | 5.745 |
| 16,384 | 16,384 | 100 | 1078.060 | 145.195 | 7.425 |
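The Acceleration column equals the CPU working time divided by the GPU working time. The short check below recomputes it for the distance-1 rows from the values in the table; the dictionary layout is just an illustrative choice.

```python
# CPU and GPU working times (ms) from the table above, distance = 1 rows.
times = {
    (640, 480): (2.491, 0.240),
    (1024, 1024): (5.408, 0.488),
    (4096, 4096): (69.617, 8.194),
    (8192, 8192): (271.039, 40.576),
    (16384, 16384): (1092.070, 190.093),
}

# Acceleration = CPU time / GPU time, rounded to three decimals as in the table.
speedup = {size: round(cpu / gpu, 3) for size, (cpu, gpu) in times.items()}
```

The recomputed values match the table, and they show the acceleration peaking at 1024 × 1024 and decreasing for the largest images.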

| Method | Terrain Recognition Accuracy (%) |
|---|---|
| GLCM [45] | 81.90 |
| GLCM [46] | 86.60 |
| LBP [46] | 90.48 |
| Depth Texture Features [47] | 92.30 |
| LiDAR [48] | 75.55 |
| Multispectral Camera [49] | 80.00 |
| Hyperspectral Camera [50] | 81.18 |
| Normalized Color [51] | 67.00 |
| HOG [52] | 86.54 |
| Voronoi [53] | 82.30 |
| LBP [54] | 96.90 |
| LTP [54] | 98.10 |
| LATP [54] | 97.20 |
| Gabor [55] | 96.11 |
| Optical Flow [41] | 87.00 |
| CNNs [56] | 86.25 |
| RNN [57] | 83.49 |
| PSO + GLCM | 88.70 |


© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Kim, I.; Matos-Carvalho, J.P.; Viksnin, I.; Simas, T.; Correia, S.D. Particle Swarm Optimization Embedded in UAV as a Method of Territory-Monitoring Efficiency Improvement. Symmetry 2022, 14, 1080. https://doi.org/10.3390/sym14061080
