Cross-Language End-to-End Speech Recognition Research Based on Transfer Learning for the Low-Resource Tujia Language

Abstract

1. Introduction

2. Review of Related Work

2.1. Feature Extraction Based on CNN

2.2. End-to-End Speech Recognition Based on LSTM-CTC

3. Proposed Method

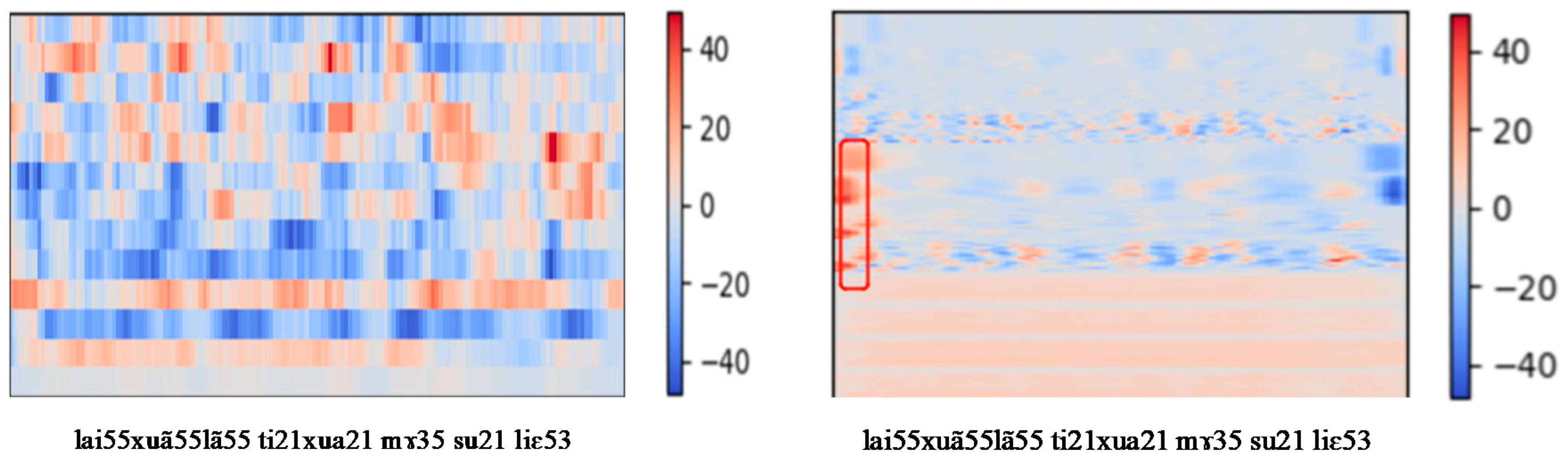

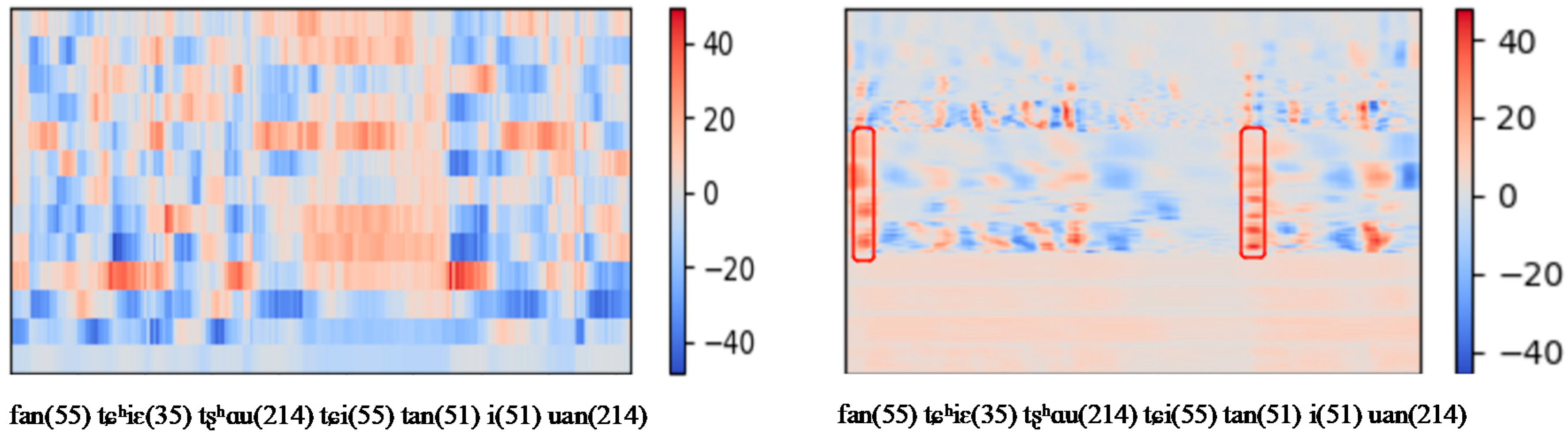

3.1. Cross-Language Data Pre-processing and Feature Extraction

3.1.1. Tujia Language Corpus

3.1.2. Extended Speech Corpus

3.1.3. Feature Extraction

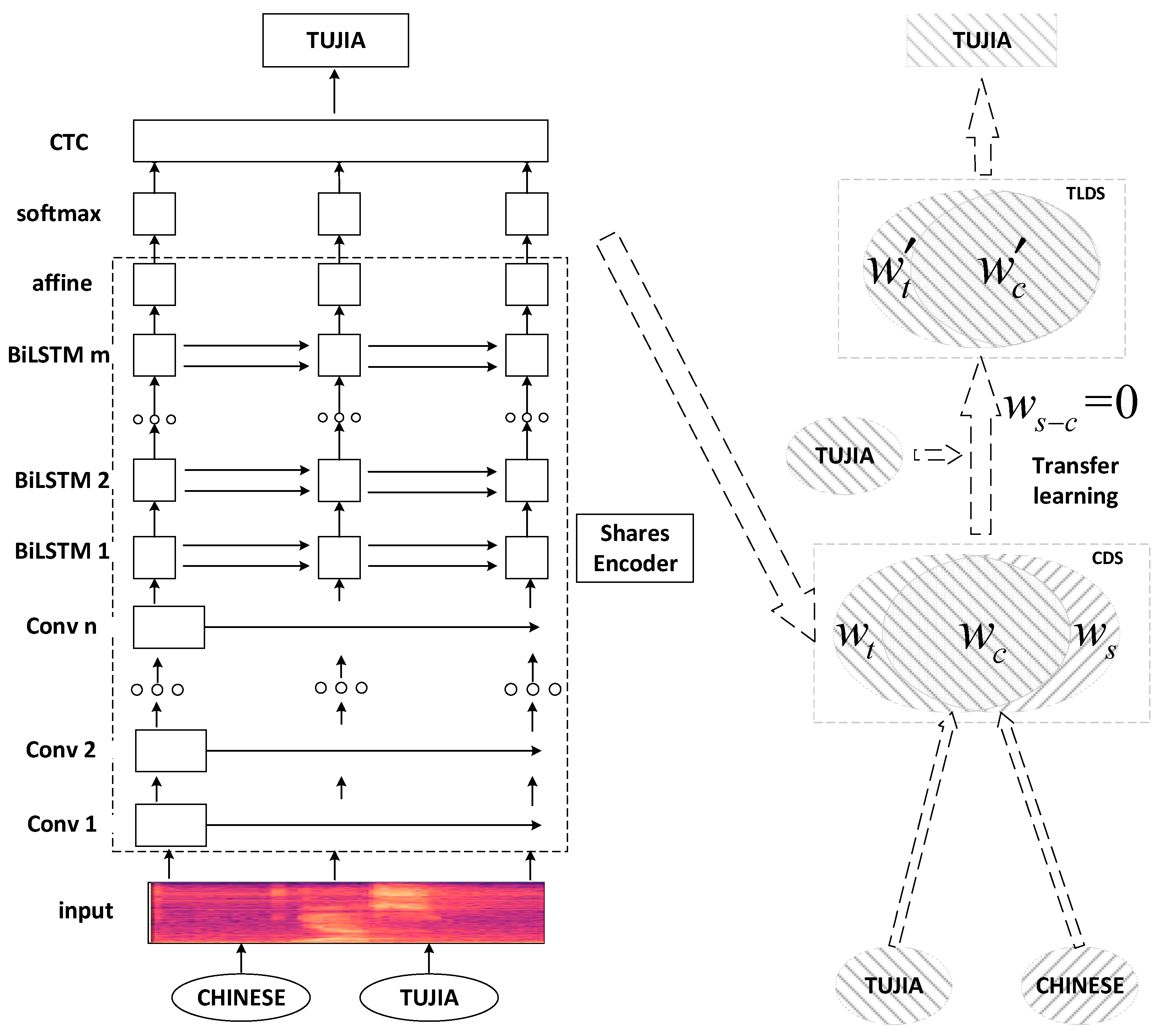

3.2. End-to-End Speech Recognition Model Based on Transfer Learning

Algorithm 1. Training the end-to-end speech recognition model based on transfer learning

Input: …, training set …, input features …, output labels …, learning rate …

Output: …, CDS parameters …, TLDS parameters …

- Initialize the CDS parameters.
- While the model does not converge, do:
  - Read a SortaGrad batch from ….
  - Train the model using …, ….
  - Calculate … of the softmax layer using Equation (5).
  - Calculate … using Equation (3) for ….
- End while.
- Initialize the TLDS parameters.
- While the model does not converge, do:
  - Read a SortaGrad batch from ….
  - Train the model using …, ….
  - Calculate … of the softmax layer using Equation (5).
  - Calculate … using Equation (3) for ….
- End while.
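
Algorithm 1 reads SortaGrad batches in both training stages. The following is a minimal sketch of that batch ordering as it is described for Deep Speech 2: minibatches are visited in order of increasing utterance length during the first epoch and in random order afterwards. The function name `sortagrad_batches`, the `(utterance_id, num_frames)` tuples, and the `seed` argument are illustrative assumptions, not part of the original algorithm.

```python
import random


def sortagrad_batches(utterances, batch_size, epoch, seed=0):
    """Return a list of minibatches of (utterance_id, num_frames) pairs.

    Epoch 0: batches are ordered by increasing utterance length.
    Later epochs: the same length-bucketed batches, visited in random order
    (keeping the buckets is an assumption of this sketch).
    """
    # Sort by length so each batch holds utterances of similar duration.
    ordered = sorted(utterances, key=lambda u: u[1])
    batches = [ordered[i:i + batch_size]
               for i in range(0, len(ordered), batch_size)]
    if epoch > 0:
        # After the first epoch, shuffle the order of the minibatches.
        random.Random(seed + epoch).shuffle(batches)
    return batches


# Toy data: hypothetical utterance ids with their frame counts.
toy = [("a", 50), ("b", 10), ("c", 30), ("d", 20)]
first_epoch = sortagrad_batches(toy, batch_size=2, epoch=0)
later_epoch = sortagrad_batches(toy, batch_size=2, epoch=1)
```

Starting with short utterances keeps early gradients small and stable, which is the usual motivation given for this curriculum.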

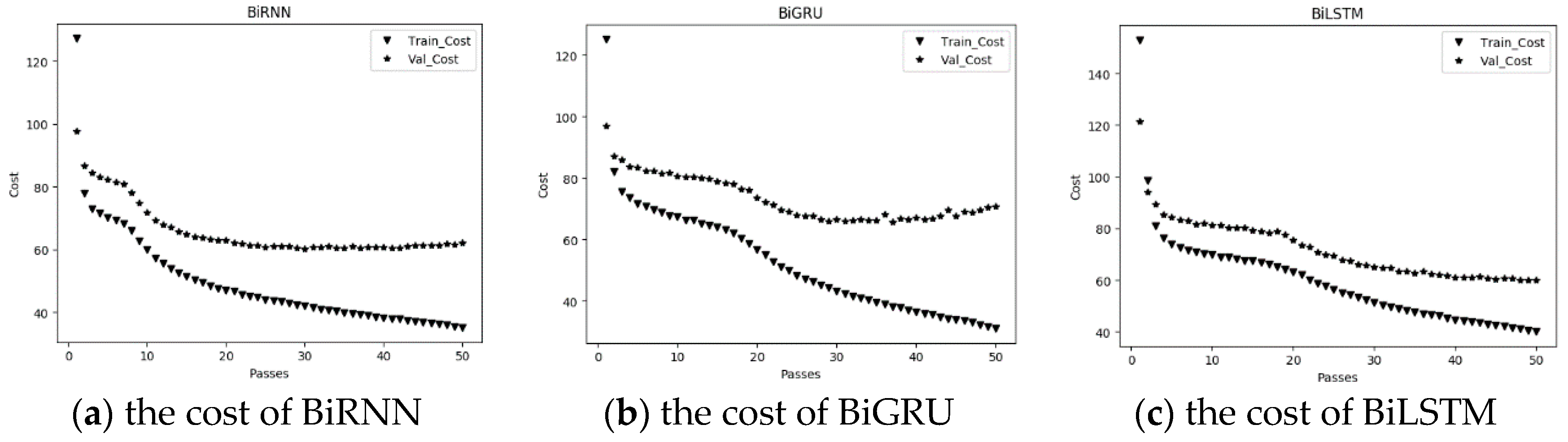

4. Experiments and Results

4.1. Experimental Environment

4.2. Parameters of the Models

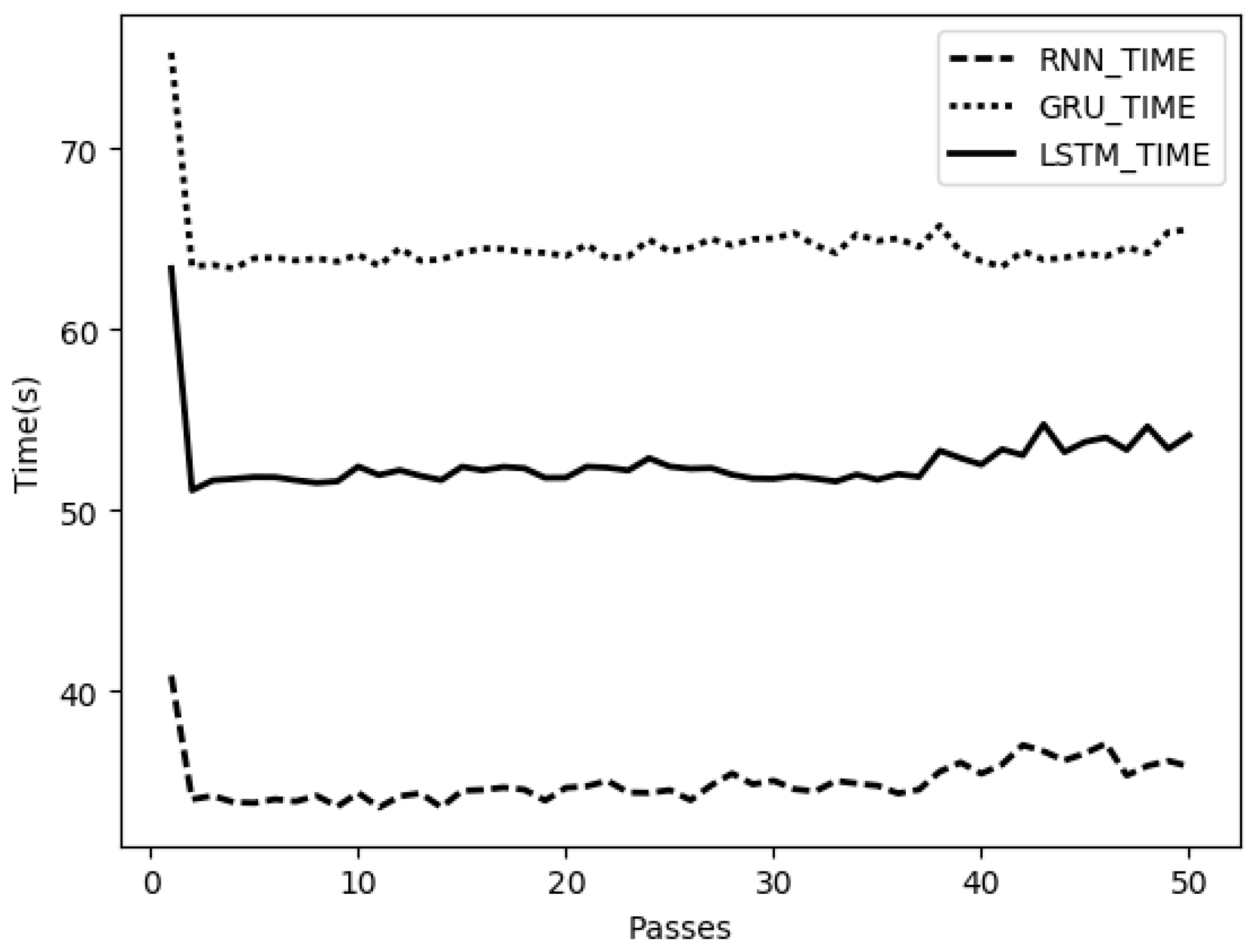

4.3. Experimental Results

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| Label Type | Label Content |
|---|---|
| Broad IPA | lai55 xuã55 lã55 ti21 xua21, mɨe35 su21 le53 |
| Chinese one-to-one translation | 今天早晨(的话)天亮(过) |
| Chinese translation | 今天早上天亮以后 |

| Label Type | Label Content |
|---|---|
| Chinese Character | 菜 做好 了 一碗 清蒸 武昌鱼 一碗 蕃茄 炒鸡蛋 一碗 榨菜 干 子 炒肉丝 |
| Chinese Pinyin | cai4 zuo4 hao3 le5 yi4 wan3 qing1 zheng1 wu3 chang1 yu2 yi4 wan3 fan1 qie2 chao3 ji1 dan4 yi4 wan3 zha4 cai4 gan1 zi3 chao3 rou4 si1 |
| Narrow IPA | tsʰai(51) tsuo(51) xɑu(214) lɤ i(51) uan(214) tɕʰiŋ(55) tʂəŋ(55) u(214) tʂʰɑŋ(55) y(35) i(51) uan(214) fan(55) tɕʰiɛ(35) tʂʰɑu(214) tɕi(55) tan(51) i(51) uan(214) tʂa(51) tsʰai(51) kan(55) tsɿ(214) tʂʰɑu(214) rou(51) sɿ(55) |
| Broad IPA | tsʰai(51) tsuo(51) xau(214) lɤ i(51) uan(214) tɕʰiŋ(55) tʂəŋ(55) u(214) tʂʰaŋ(55) y(35) i(51) uan(214) fan(55) tɕʰiɛ(35) tʂʰau(214) tɕi(55) tan(51) i(51) uan(214) tʂa(51) tsʰai(51) kan(55) tsi(214) tʂʰau(214) rou(51) si(55) |

| Narrow IPA | Broad IPA |
|---|---|
| ɑ | a |
| iou | iu |
| uei | ui |
| iɛn | ian |
| ɿ | i |
| ʅ | i |
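
The narrow-to-broad IPA conversion in the table above amounts to a small substitution map. The sketch below applies it to a label string; the names `NARROW_TO_BROAD` and `narrow_to_broad`, and the choice to apply the longest patterns first, are assumptions made for illustration and may differ from the authors' actual tooling.

```python
# Narrow-to-broad IPA substitutions copied from the table above.
NARROW_TO_BROAD = {
    "iou": "iu",
    "uei": "ui",
    "iɛn": "ian",
    "ɑ": "a",
    "ɿ": "i",
    "ʅ": "i",
}


def narrow_to_broad(label: str) -> str:
    """Rewrite a narrow-IPA label string into broad IPA."""
    # Longest patterns first (an assumption of this sketch), so that
    # multi-character finals are rewritten before single-character rules.
    for narrow in sorted(NARROW_TO_BROAD, key=len, reverse=True):
        label = label.replace(narrow, NARROW_TO_BROAD[narrow])
    return label


# Syllables taken from the THCHS-30 example row; tone marks are untouched.
converted = narrow_to_broad("xɑu(214) tʂʰɑŋ(55) tsɿ(214) sɿ(55)")
```

Merging these symbols shrinks the output label set, so the two languages share more modeling units.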

| Parameter | First Convolutional Layer | Second Convolutional Layer |
|---|---|---|
| Filter size | 11 × 41 | 11 × 21 |
| Number of input channels | 1 | 1 |
| Number of output channels | 32 | 32 |
| Stride size | 3 × 2 | 1 × 2 |
| Padding size | 5 × 20 | 5 × 10 |
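
To see how these settings shrink the input, the standard convolution size formula, out = floor((in + 2 × padding − filter) / stride) + 1, can be applied layer by layer. The sketch below assumes that each pair in the table is ordered time × frequency and that padding is symmetric; the 300-frame, 161-bin input is a hypothetical example, not a value from the paper.

```python
def conv_output_size(size, kernel, stride, padding):
    """Output length of one convolution axis with symmetric zero padding."""
    return (size + 2 * padding - kernel) // stride + 1


# Layer settings from the table, read as (time, frequency) pairs (assumed).
LAYERS = [
    {"kernel": (11, 41), "stride": (3, 2), "padding": (5, 20)},
    {"kernel": (11, 21), "stride": (1, 2), "padding": (5, 10)},
]


def feature_map_shape(time_steps, freq_bins):
    """Propagate a (time, frequency) shape through both layers."""
    shape = (time_steps, freq_bins)
    for layer in LAYERS:
        shape = tuple(
            conv_output_size(n, k, s, p)
            for n, k, s, p in zip(shape, layer["kernel"],
                                  layer["stride"], layer["padding"])
        )
    return shape


# Hypothetical input: 300 frames of a 161-bin spectrogram.
print(feature_map_shape(300, 161))  # (100, 41)
```

Under these assumptions the first layer reduces the time axis by a factor of three, which shortens the sequence the recurrent layers must process.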

| Network | Parameter Type | Parameter Content |
|---|---|---|
| BiLSTM | Minibatch size | 16 |
| BiLSTM | LSTM layers | 3 |
| BiLSTM | Cells per layer | 512 |
| BiLSTM | Learning rate | 0.001 |
| CTC | Decoder | Beam search |
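
The table names beam search as the CTC decoder but does not give a beam width or say whether a language model is used. As a rough illustration only, the following is a minimal CTC prefix beam search over per-frame softmax outputs, without a language model; the function name, `beam_size=8`, and the blank index `0` are assumptions.

```python
from collections import defaultdict


def ctc_prefix_beam_search(probs, beam_size=8, blank=0):
    """Return (best_label_sequence, probability) for per-frame softmax outputs.

    probs[t][s] is the probability of symbol s at frame t; index `blank`
    is the CTC blank. No language model is applied in this sketch.
    """
    # Each prefix keeps two masses: paths ending in blank, and in non-blank.
    beams = {(): (1.0, 0.0)}
    for frame in probs:
        candidates = defaultdict(lambda: [0.0, 0.0])
        for prefix, (p_blank, p_non_blank) in beams.items():
            total = p_blank + p_non_blank
            for symbol, p in enumerate(frame):
                if symbol == blank:
                    # Blank keeps the prefix unchanged.
                    candidates[prefix][0] += total * p
                elif prefix and symbol == prefix[-1]:
                    # Repeat without a blank in between collapses...
                    candidates[prefix][1] += p_non_blank * p
                    # ...while a repeat after a blank extends the prefix.
                    candidates[prefix + (symbol,)][1] += p_blank * p
                else:
                    candidates[prefix + (symbol,)][1] += total * p
        ranked = sorted(candidates.items(),
                        key=lambda kv: sum(kv[1]), reverse=True)
        beams = {prefix: tuple(mass) for prefix, mass in ranked[:beam_size]}
    best_prefix, mass = max(beams.items(), key=lambda kv: sum(kv[1]))
    return list(best_prefix), sum(mass)


# Two frames over {blank, symbol 1}: the blank is the single most likely
# output per frame, yet the label sequence [1] has the larger total mass.
labels, prob = ctc_prefix_beam_search([[0.6, 0.4], [0.6, 0.4]])
```

The toy input shows why summing over alignments matters: picking the most likely symbol per frame would return the empty sequence, whereas the prefix search returns `[1]`.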

| RNN Cell | Dev | Test |
|---|---|---|
| BiRNN (Bi-directional recurrent neural network) | 42.37% | 53.37% |
| BiGRU (Bi-directional gated recurrent unit) | 37.09% | 51.95% |
| BiLSTM (Bi-directional long short-term memory) | 35.82% | 48.30% |

| Model | Dev | Test |
|---|---|---|
| IDS (Improved Deep Speech 2) | 35.82% | 48.30% |
| CDS (Cross-language Deep Speech 2) | 40.11% | 50.26% |
| TLDS (Transfer Learning Deep Speech 2) | 31.11% | 46.19% |
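
The gap between models can be read either as an absolute difference or as a relative reduction against the IDS baseline. The short calculation below uses only the figures in the table and assumes the percentages are error rates, so lower is better; the helper name `relative_reduction` is illustrative.

```python
# Dev/test figures copied from the table above (percent).
RESULTS = {
    "IDS": {"dev": 35.82, "test": 48.30},
    "CDS": {"dev": 40.11, "test": 50.26},
    "TLDS": {"dev": 31.11, "test": 46.19},
}


def relative_reduction(baseline, value):
    """Relative reduction in percent of `value` with respect to `baseline`."""
    return 100.0 * (baseline - value) / baseline


for split in ("dev", "test"):
    base = RESULTS["IDS"][split]
    tlds = RESULTS["TLDS"][split]
    print(split,
          f"absolute: {base - tlds:.2f} points,",
          f"relative: {relative_reduction(base, tlds):.2f}%")
```

Under that reading, TLDS lowers the IDS figure by 4.71 points on the development set and 2.11 points on the test set, roughly 13.15% and 4.37% in relative terms.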

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Yu, C.; Chen, Y.; Li, Y.; Kang, M.; Xu, S.; Liu, X. Cross-Language End-to-End Speech Recognition Research Based on Transfer Learning for the Low-Resource Tujia Language. Symmetry 2019, 11, 179. https://doi.org/10.3390/sym11020179
