Highlights

What are the main findings?

- Innovative Fusion of Remote Sensing and AI for Downscaling: The TAUT model successfully demonstrates a novel deep learning framework that effectively fuses high-resolution topographic remote sensing data with coarse-scale numerical weather prediction (NWP) outputs. The core finding is that its dedicated Multi-branch Terrain-Adaptive (MBTA) module significantly enhances spatiotemporal downscaling accuracy for 2 m temperature over complex terrain by effectively capturing the complex nonlinear relationship between elevation and surface temperature.

- Superiority of a Hybrid CNN-Transformer Architecture: Experimental results confirm that the hybrid architecture, which integrates the local feature extraction of 3D CNNs with the global contextual modeling of Swin Transformers, outperforms comparison models (e.g., U-Net, EDVR). This design is particularly adept at reconstructing realistic spatial textures of the temperature field influenced by complex terrain.

What are the implications of the main findings?

- A Novel Pathway for Enhancing Mesoscale Data Utilization: This study provides an efficient AI-based “post-processing” tool to generate high-spatiotemporal-resolution data from standard NWP/reanalysis products. It offers a practical and computationally efficient alternative to dynamical downscaling, enhancing the value of existing mesoscale data for regional applications.

- Paradigm for Integrating Remote Sensing with AI Weather Models: The research underscores the critical value of intelligently integrating static remote sensing data into AI models to dramatically improve the physical consistency and regional adaptability of meteorological variable estimation, especially in data-sparse regions with complex terrain.

Abstract

High-precision temperature prediction is crucial for responding to extreme weather events under global warming. However, because of limited computing resources, numerical weather prediction (NWP) models struggle to directly provide data at the high spatiotemporal resolution that specific regional applications require. This problem is particularly prominent in areas with complex terrain. Remote sensing data, especially high-resolution terrain data, provide key information for understanding and simulating land–atmosphere interactions over complex terrain, which makes the integration of remote sensing and NWP outputs for high-precision downscaling of meteorological elements a core challenge. To address temperature downscaling over the complex terrain of Southwest China, this paper proposes a novel deep learning model, the Terrain-Adaptive U-Net Transformer (TAUT). The model uses the encoder–decoder structure of U-Net as its backbone, integrates the global attention mechanism of the Swin Transformer with the local spatiotemporal feature extraction of three-dimensional convolution, and introduces a Multi-Branch Terrain-Adaptive (MBTA) module that adaptively fuses terrain remote sensing data with meteorological fields such as temperature and wind. With this design, 2 m temperature over the complex terrain of Southwest China is downscaled in both space (from 0.1° to 0.01°) and time (from 3 h to 1 h intervals). The experimental results show that, within the 48 h downscaling window, TAUT outperforms the comparison models (bilinear interpolation, SRCNN, U-Net, and EDVR) on all evaluation metrics (MAE, RMSE, COR, ACC, PSNR, and SSIM). Systematic ablation experiments verified the independent contributions and synergistic effects of the Swin Transformer module, the 3D convolution module, and the MBTA module in improving model performance. In addition, regional terrain verification shows that the model adapts well and remains stable across different terrain types (mountains, plateaus, and basins). In high-temperature extreme weather cases in particular, it restores the terrain-affected temperature distribution details and spatial textures more precisely, confirming the significant impact of terrain remote sensing data on the accuracy of temperature downscaling. The core contribution of this study is a hybrid architecture that jointly leverages the local feature extraction of CNNs and the global context modeling of Transformers and integrates key terrain remote sensing data through a dedicated module. The TAUT model offers an effective deep learning solution for precise temperature prediction in complex terrain areas and provides a reference framework for integrating remote sensing data and numerical model data in deep learning models.

1. Introduction

Weather and climate change have a profound impact on human production and life. In recent years, extreme temperature events, such as heat waves and cold waves, have become the focus of weather prediction and disaster prevention at both global and regional scales [1,2]. Temperature prediction, as a core topic in atmospheric science, plays a crucial role in various fields such as daily life, industrial and agricultural production, and public health [3,4]. Currently, temperature forecasting mainly relies on numerical weather prediction models. However, due to limitations in computing power, NWP models usually cannot directly provide high spatial and temporal resolution data that meet the needs of regional research. This problem is particularly prominent in areas with complex terrain, as the distribution of surface temperature is mainly controlled by topographic undulations. In this context, the use of remote sensing data, especially the integration of high-resolution terrain data, has become an indispensable means for understanding and simulating the interaction between land and atmosphere. Therefore, effectively integrating the terrain information derived from remote sensing inversion with NWP output data is crucial for achieving high-precision spatial and temporal downscaling of meteorological elements such as near-surface temperature.

In practical applications, numerous fields demand enhanced precision in temperature forecasts and other meteorological elements, particularly in regions with complex terrain. Consequently, various downscaling techniques have been developed to derive high-resolution details from coarse-resolution Numerical Weather Prediction (NWP) model outputs. Traditionally, downscaling approaches are categorized into two primary types: dynamical downscaling and statistical downscaling. Dynamical downscaling employs low-resolution boundary conditions to drive high-resolution regional models, yielding detailed information on small-scale features [5]. Nevertheless, this method requires substantial computational resources while offering limited accuracy and high sensitivity to boundary conditions [6]. Conversely, statistical downscaling is data-driven, leveraging historical datasets to establish relationships between low-resolution model outputs and observations. Its key advantage lies in computational efficiency. However, it depends heavily on long-term, high-quality observational data, and conventional statistical methods may inadequately capture complex nonlinear relationships and spatial details, occasionally resulting in overly smooth or physically implausible outcomes [7].

In recent years, the application of machine learning, particularly deep learning methods, has become increasingly prevalent in the field of meteorological forecasting, offering novel technical pathways for tasks such as temperature prediction and downscaling. Unlike traditional machine learning, which places greater emphasis on data preprocessing, feature engineering, and model selection, deep learning, owing to the complexity of its models, focuses more on data augmentation, network architecture design, regularization techniques, and optimization methods. This often results in superior performance when handling large datasets and complex tasks [8].

Convolutional Neural Networks (CNNs) and Generative Adversarial Networks (GANs) represent two foundational and extensively utilized architectures in image super-resolution (SR). CNNs excel at extracting both local and global image features due to their inherent properties of local connectivity and parameter sharing. The pioneering work by Dong et al. introduced the SRCNN model, which applied a CNN to single-image super-resolution [9]. SRCNN first employs upsampling on the low-resolution (LR) input, followed by reconstruction through a three-layer convolutional network, demonstrating superior performance compared to traditional interpolation methods, albeit at a higher computational cost. To enhance efficiency, Dong et al. subsequently proposed FSRCNN, which learns the mapping directly from the LR image and performs upsampling at the final network stage, thereby significantly improving computational efficiency [10]. This was followed by models incorporating residual learning concepts, such as VDSR [11], LapSRN [12], and MDSR [13], which collectively contributed to continual performance gains. GANs facilitate the generation of highly realistic details through an adversarial training process involving a generator and a discriminator. A landmark model, SRGAN, was introduced by Ledig et al., marking the first successful integration of GANs into SR tasks [14]. By incorporating a perceptual loss function, SRGAN achieved breakthrough performance in the visual quality of reconstructed images. However, GAN-based approaches are often challenged by inherent issues like training instability and mode collapse. To improve training stability, Karras et al. proposed ProGAN, which utilizes a progressive growing strategy for both the generator and discriminator [15]. Additionally, to address multi-scale generation, Karnewar et al. developed MSG-GAN, enabling image synthesis at multiple scales within a single model framework [16]. 
In recent developments, Transformer and Diffusion models have emerged as prominent new paradigms in SR research. Transformers offer enhanced capability for modeling long-range dependencies via advanced attention mechanisms, often with improved efficiency [17], while Diffusion models demonstrate exceptional generative capabilities, leading to breakthroughs in reconstruction fidelity [18]. Hybrid architectures that fuse these two approaches are also gaining traction as a new research direction [19]. For example, the DiT4SR model integrates generative priors and multi-scale feature fusion, further elevating the visual fidelity and physical consistency of the output images [20].

Spatiotemporal downscaling in meteorology exhibits strong parallels with video super-resolution in computer vision, as both fundamentally aim to enhance resolution across spatial and temporal domains [21]. Spatially, they operate by establishing functional mappings between high- and low-resolution variables on discrete grids to reconstruct detailed spatial patterns of meteorological fields. Temporally, they employ techniques such as frame interpolation or sequence modeling to increase the temporal resolution, thereby effectively capturing the nuances of dynamic evolution. Capitalizing on this conceptual synergy, deep learning models originally developed for video super-resolution have emerged as a powerful and increasingly prevalent pathway for obtaining high-precision, spatiotemporally downscaled data for elements like surface temperature. The application of Convolutional Neural Networks (CNNs) to meteorological downscaling was pioneered by Vandal et al. (2017), who introduced DeepSD, a model based on the Super-Resolution Convolutional Neural Network (SRCNN) architecture [22]. DeepSD enhanced predictability in statistical downscaling by incorporating multiple variable input channels. Its applicability was further corroborated by Kumar et al. [23] through a comparative study of SRCNN, stacked SRCNN, and DeepSD for downscaling Indian summer monsoon rainfall. Subsequent research has yielded various CNN-based refinements. For example, Pan et al. [24] enhanced physical consistency by integrating physical constraints, restricting predictors in daily precipitation downscaling to variables derived directly from atmospheric dynamics equations. Mu et al. [25] proposed the Climate Downscaling Network (CDN) to capture the chaotic nature of the climate system by incorporating multi-scale spatial correlations and meteorological priors. Other notable advances include the use of lightweight CNNs for high-precision precipitation downscaling in complex terrain by Rampal et al. 
[26] and the improvement of seasonal precipitation downscaling in China via transfer learning and circulation field inputs by Jin et al. [27]. Concurrently, U-Net and its variants (e.g., U-Net AE [28], Nest U-Net [29], AU U-Net [30]) have demonstrated exceptional performance in downscaling tasks, benefiting from their symmetric encoder–decoder structure, which excels at preserving spatial details and consistency. Generative Adversarial Networks (GANs) have also significantly advanced meteorological downscaling. A landmark work, the PhIRE model [31], adapted the Super-Resolution Generative Adversarial Network (SRGAN) framework, incorporating an adversarial perceptual loss to achieve a 50-fold downscaling of wind field data. Similarly, Cheng et al. [32] generated high-quality precipitation downscaling products by leveraging SRGAN alongside fused multi-source meteorological variables. More recently, attention mechanisms have been widely integrated to enhance model performance. Instances include the CLDASSD model by Tie et al. [33] for fine downscaling of 2 m temperature using feature-weighted fusion, the channel attention mechanism embedded in a residual network by Liu et al. [34] for improved wind field downscaling accuracy, and the innovative axial attention mechanism (TIGAM) proposed by Yu et al. [35], which leverages latitudinal and longitudinal correlations for synergistic downscaling of multiple meteorological variables.

Although deep learning has driven significant advances in meteorological downscaling, prevailing approaches still confront several critical challenges. A primary limitation is the scarcity of hybrid architectures that effectively harness the local feature extraction prowess of Convolutional Neural Networks (CNNs) and the global contextual modeling strengths of Transformers. Another persistent difficulty involves integrating crucial auxiliary data, such as topographic information, via more sophisticated mechanisms to improve downscaling accuracy over regions with complex underlying surfaces. To address these challenges, this study proposes a novel framework that integrates the Swin Transformer with the U-Net architecture. A dedicated multi-scale meteorological element adaptive fusion module is designed to effectively incorporate auxiliary data, thereby facilitating an analysis of complex terrain’s influence on temperature downscaling. We conducted a comparative assessment of the downscaling performance of the proposed U-Net-based model against other architectures, including CNN-based networks. The results demonstrate the superior performance of our U-Net-based architecture. Furthermore, our methodology achieves downscaling in both temporal and spatial dimensions, yielding high-resolution spatiotemporal results. The effectiveness of the model is rigorously validated through multiple evaluation metrics, all of which confirm its robust downscaling capabilities.

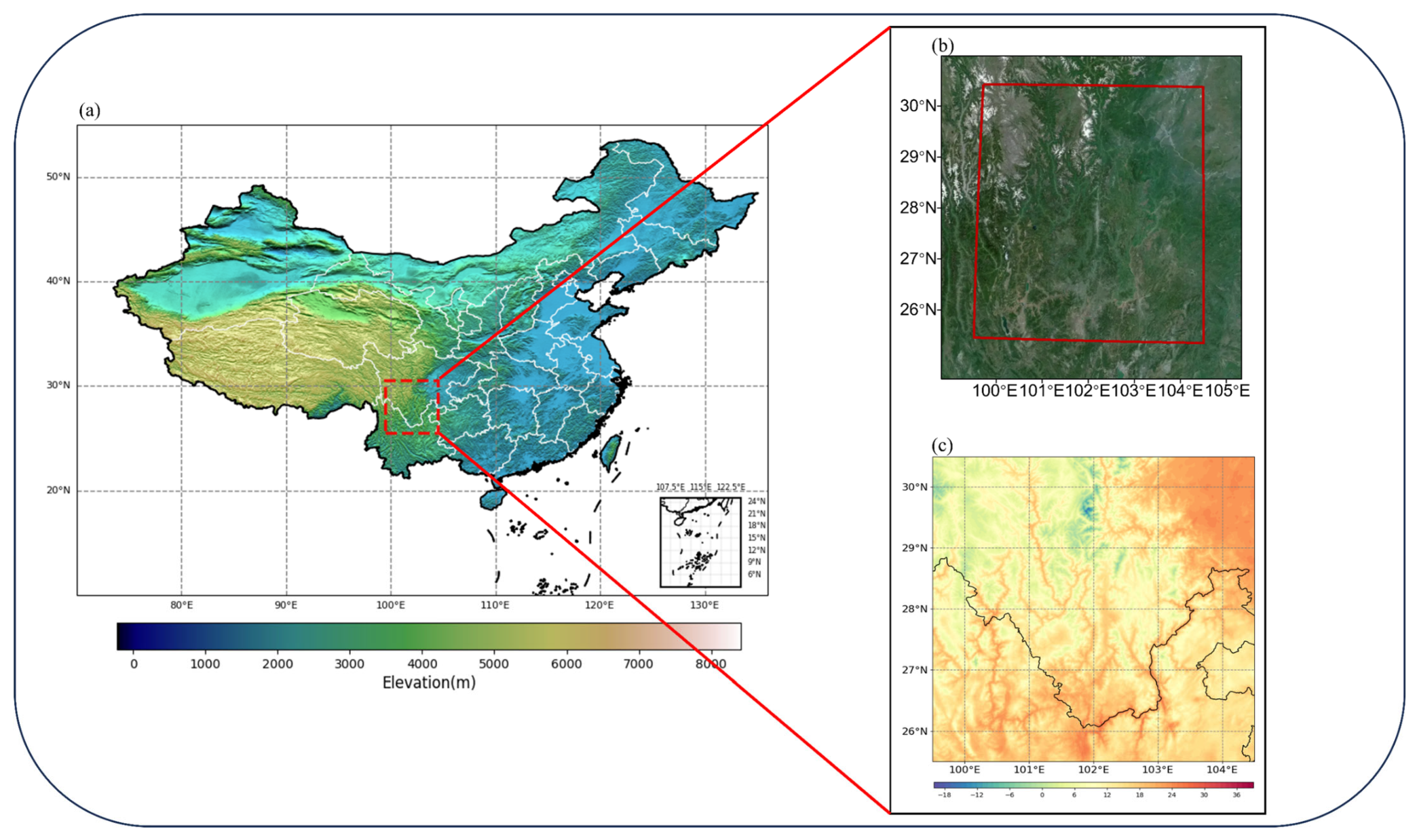

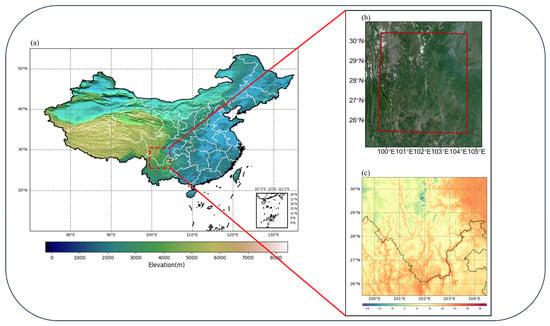

2. Data Sources and Data Preprocessing

The study area spans 25.5°N to 30.5°N and 99.5°E to 104.5°E, as shown in Figure 1a. It is centered on Xichang City, China, and covers most of the Hengduan Mountains, the northwestern part of the Yunnan-Guizhou Plateau, the western part of the Sichuan Basin, and other complex terrain. Because the area lies in the transitional zone between China’s topographic steps, the decrease of temperature with elevation plays a dominant role, and the large differences in terrain height have a decisive effect on the temperature distribution. Figure 1b shows the terrain remote sensing image of the study area, and Figure 1c shows the 2 m temperature distribution from the HRCLDAS dataset at a specific moment. The two fields have similar spatial structures. The area is therefore highly representative, and elevation remote sensing data are a necessary model input. Table 1 summarizes the basic information of the datasets used in this paper.

Figure 1.

(a) Topographic elevation distribution across China. The red dashed rectangle delineates the study area selected for this research. (b) The topographic map of the remote sensing data of the selected research area. (c) Spatial distribution of the surface 2 m temperature dataset within the study area.

Table 1.

Summary of the data sets used in this study.

2.1. ECMWF HRES

The High-Resolution Forecast (HRES) produced by the Integrated Forecasting System (IFS) of the European Centre for Medium-Range Weather Forecasts (ECMWF) provides global numerical weather predictions. Since 1 February 2024, the resolution of its open data has increased from 0.4° to 0.25°, with some areas reaching 0.1°, which greatly improves simulation accuracy over complex terrain. The high-resolution deterministic forecast is issued four times a day (every 6 h), and each run covers forecast lead times from 0 to 240 h. This study uses the 2 m temperature and the 10 m wind field variables as input. Samples from both the 00:00 and the 12:00 initialization times were selected. The downscaling period was set to 48 h, and the data cover 1 January 2022 to 31 December 2024.

2.2. HRCLDAS

HRCLDAS (High-Resolution China Meteorological Administration Land Data Assimilation System) is a high-resolution land surface data assimilation system developed by the National Meteorological Information Center (NMIC) of China. The system fuses automatic ground station observations, numerical prediction data, and satellite remote sensing data using a multi-grid variational analysis technique and a terrain correction algorithm. It covers 70°E to 140°E and 15°N to 60°N at a spatial resolution of 0.01° × 0.01° and a temporal resolution of 1 h [36]. In this study, the 2 m temperature variable was used as the ground truth, and the data cover 1 January 2022 to 31 December 2024.

2.3. GDEMV3

The ASTER GDEM V3 (Advanced Spaceborne Thermal Emission and Reflection Radiometer Global Digital Elevation Model Version 3) is a global digital elevation model jointly released by the United States National Aeronautics and Space Administration (NASA) and Japan’s Ministry of Economy, Trade, and Industry (METI). This openly available remote sensing elevation dataset covers the global surface from 83°N to 83°S, with a spatial resolution of 1 arc second and a vertical accuracy of 1 m. Because elevation strongly affects the temperature distribution in the study area, terrain is included as a dedicated model input so that the influence of the complex terrain of Southwest China on temperature downscaling is taken into account.

2.4. Data Preprocessing

The ECMWF and HRCLDAS datasets use different time references and have different spatiotemporal resolutions. To resolve these inconsistencies and construct an effective training sample set, we applied a four-step preprocessing procedure. First, the time stamps of the HRCLDAS data are converted from Beijing Time (CST) to Coordinated Universal Time (UTC). Second, both datasets are cropped to the latitude and longitude range of the study area so that each sample has a unified spatial coverage. Third, a 48 h downscaling window is set, and time points are sampled within it at each dataset’s native temporal resolution. Finally, the spatially and temporally aligned data are Z-score standardized to remove the differences in scale among temperature, wind speed, and elevation. The specific operations are as follows.

Given an initial time $t_0$, a 48 h downscaling window is defined as $W = [t_0,\ t_0 + 48\,\mathrm{h}]$. Sampling functions were designed for the data at each resolution:

ECMWF (3 h interval):

Data are sampled at 3 h intervals, giving 17 time points and an input tensor whose channel dimension C = 4 corresponds to the four input variables: 2 m temperature, 10 m U-component wind speed, 10 m V-component wind speed, and terrain remote sensing data.

HRCLDAS (1 h interval):

Data are sampled at 1 h intervals, giving 49 time points and a target tensor whose channel dimension C = 1 corresponds to the single target variable: 2 m temperature.
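The time alignment and window sampling described above can be sketched in a few lines. This is a minimal illustration using only the Python standard library, not the authors' preprocessing code; the function names `to_utc` and `window_times` and the example initialization date are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Beijing Time is a fixed UTC+8 offset (no daylight saving time).
BEIJING = timezone(timedelta(hours=8))


def to_utc(beijing_time: datetime) -> datetime:
    """Convert a naive Beijing-time stamp to a timezone-aware UTC stamp."""
    return beijing_time.replace(tzinfo=BEIJING).astimezone(timezone.utc)


def window_times(t0: datetime, step_hours: int, window_hours: int = 48) -> list:
    """Return every time stamp in [t0, t0 + window_hours] at the given step."""
    n_steps = window_hours // step_hours
    return [t0 + timedelta(hours=step_hours * i) for i in range(n_steps + 1)]


# Hypothetical 00:00 UTC initialization time.
t0 = datetime(2024, 6, 1, 0, tzinfo=timezone.utc)
ecmwf_times = window_times(t0, step_hours=3)     # 3 h sampling -> 17 time points
hrcldas_times = window_times(t0, step_hours=1)   # 1 h sampling -> 49 time points
```

Both endpoints of the window are included, which is why 48 h at 3 h spacing gives 17 rather than 16 points, and every coarse time stamp coincides with one of the hourly stamps.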

For Z-score standardization, the mean $\mu^{(c)}$ and standard deviation $\sigma^{(c)}$ were calculated independently for each channel $c$ across the entire dataset. An input sample $X$ was then transformed as follows:

$$\hat{X}^{(c)} = \frac{X^{(c)} - \mu^{(c)}}{\sigma^{(c)}}$$
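Per-channel Z-score standardization can be illustrated with NumPy. The sketch below is an assumption-laden example rather than the authors' code: the array layout (samples, time, channels, height, width), the synthetic data, and the small `eps` guard are all choices made for illustration.

```python
import numpy as np


def channel_stats(data: np.ndarray):
    """Per-channel mean and standard deviation over samples, time, and space.

    `data` is assumed to have the layout (N, T, C, H, W).
    """
    axes = (0, 1, 3, 4)
    mu = data.mean(axis=axes, keepdims=True)
    sigma = data.std(axis=axes, keepdims=True)
    return mu, sigma


def zscore(x: np.ndarray, mu: np.ndarray, sigma: np.ndarray, eps: float = 1e-8):
    """Standardize each channel; eps guards against a zero standard deviation."""
    return (x - mu) / (sigma + eps)


rng = np.random.default_rng(0)
# Synthetic stand-in for temperature, U wind, V wind, and elevation channels.
scales = np.array([10.0, 3.0, 3.0, 800.0]).reshape(1, 1, 4, 1, 1)
offsets = np.array([290.0, 0.0, 0.0, 2500.0]).reshape(1, 1, 4, 1, 1)
data = rng.normal(size=(8, 17, 4, 16, 16)) * scales + offsets

mu, sigma = channel_stats(data)
normalized = zscore(data, mu, sigma)
```

After the transform, every channel has approximately zero mean and unit variance, so variables with very different physical units contribute on a comparable scale.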

3. Methodology

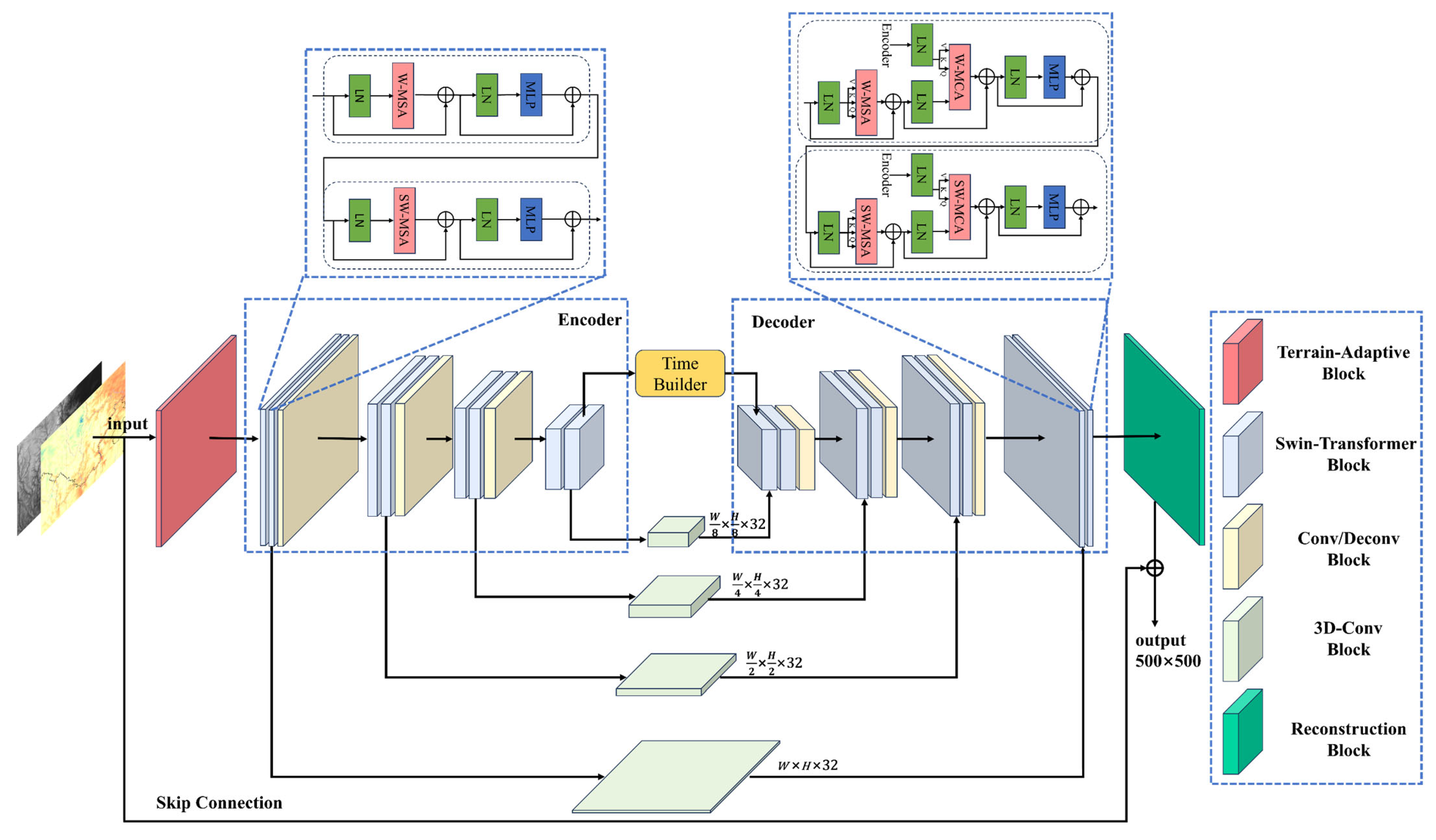

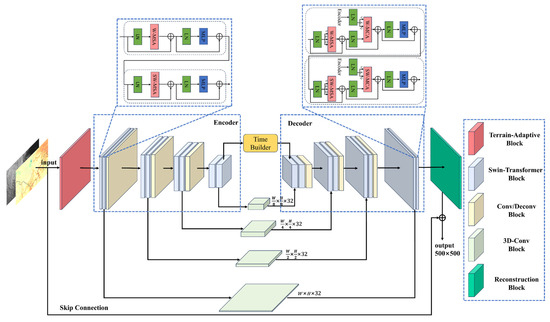

In response to the challenges posed by significant terrain effects in downscaling 2 m temperature, this study proposes a multimodal fusion temperature prediction architecture named the Terrain-Adaptive U-Net Transformer (TAUT). Built upon the U-Net encoder–decoder structure, the model achieves high-precision temperature sequence downscaling through a multi-stage feature abstraction and reconstruction mechanism. The detailed architecture of TAUT is presented in Figure 2.

Figure 2.

Schematic diagram of the overall workflow and architecture of the proposed TAUT model. The right side of the panel illustrates the key modules employed in the model, while the top section details the internal structure of the Swin Transformer block.

The TAUT framework comprises three core components: an encoder, a decoder, and a time builder. The encoder extracts multi-level features from the input data, whereas the decoder reconstructs these features to generate the final output. Both the encoder and decoder employ a series of Swin Transformer blocks alongside convolutional layers, with the encoder using standard convolutions and the decoder using deconvolutions. Specifically, the Swin Transformer is responsible for hierarchical feature extraction and reconstruction, while the convolutional and deconvolutional layers perform downsampling and upsampling, respectively. Features from multiple encoder stages are further integrated via 3D convolutions before being passed to the decoder. Meanwhile, the time builder generates time-aware tensors and injects them into the decoder to support sequence-aware reconstruction. The following section details each module and the training procedure.

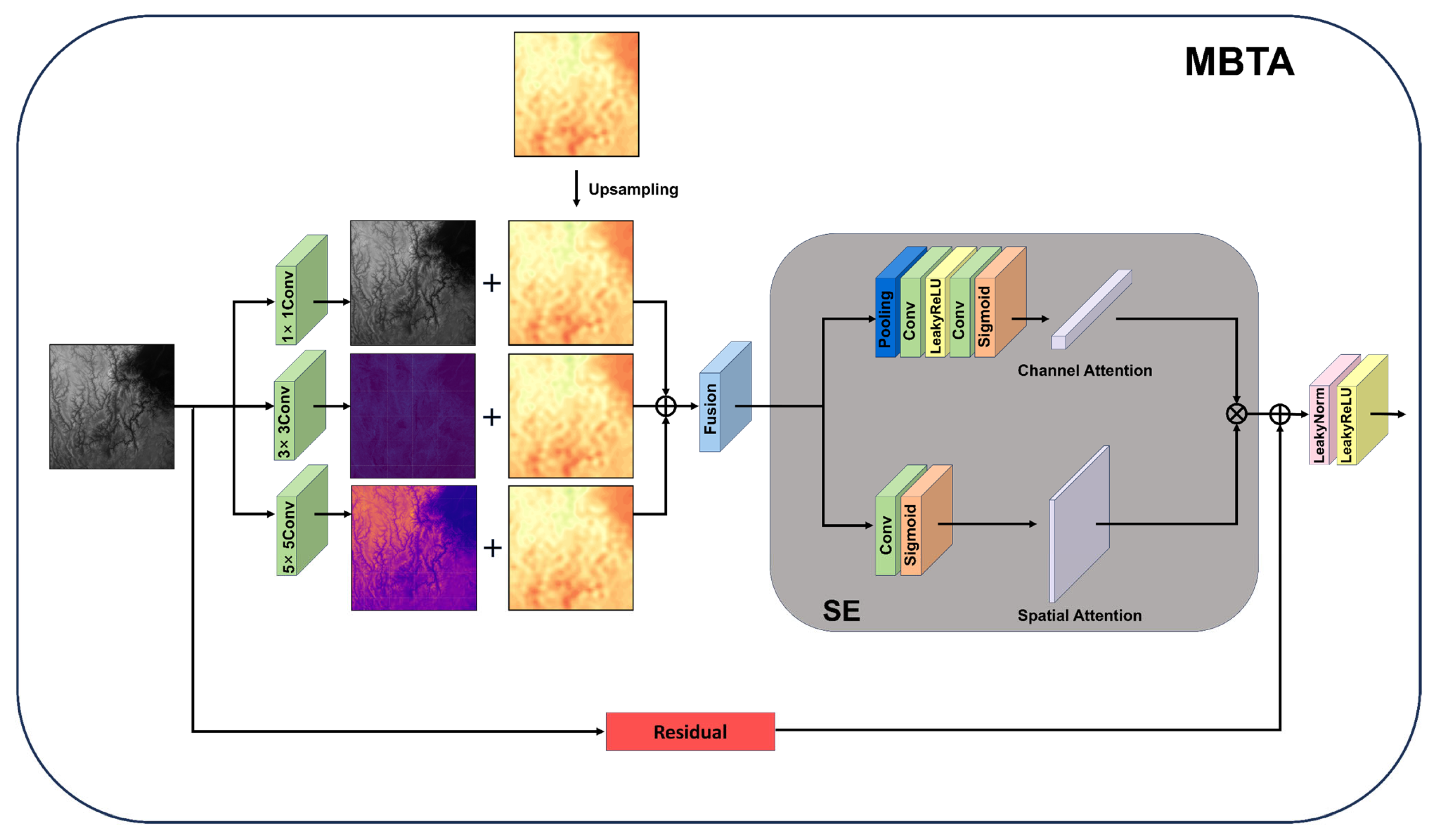

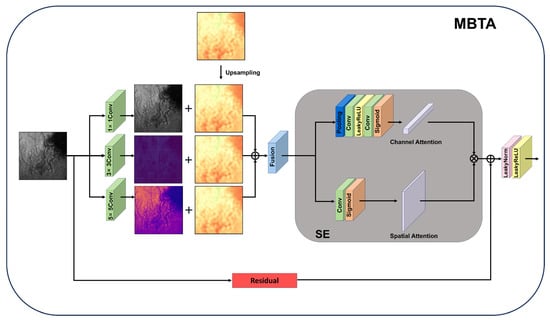

3.1. Terrain-Adaptive Block

To fuse multi-scale features of meteorological variables and terrain remote sensing data, this paper proposes a Multi-Branch Terrain-Adaptive (MBTA) module. As shown in Figure 3, the module first convolves the elevation data with three convolution kernels of different sizes to extract preliminary terrain features. The numerical model data are then upsampled to match the extracted terrain features, and the two sets of features are fused by channel concatenation. The fused features are passed to a modified SE module [37] that consists of two parallel branches. The Channel Attention Branch applies AdaptiveAvgPool3d for global spatial pooling, followed by two 3D convolutional layers with LeakyReLU and sigmoid activations to produce channel attention weights. The Spatial Attention Branch employs a 3D convolutional layer with a sigmoid activation to generate spatial attention weights. Finally, the outputs of the two branches are fused through element-wise multiplication and passed to the next part of the model.

Figure 3.

Schematic architecture of the proposed Multi-Branch Terrain-Adaptive (MBTA) module.
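The two-branch attention stage of the MBTA module can be illustrated with a framework-agnostic NumPy sketch. Several details here are assumptions, since the text does not specify them: all convolutions are taken to be 1×1×1, the channel branch uses an SE-style reduction ratio, the weights are random rather than trained, and the fusion is assumed to multiply the input features by both weight maps. The function names are hypothetical.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def leaky_relu(x, slope=0.01):
    return np.where(x > 0, x, slope * x)


def pointwise_conv3d(x, weight):
    """1x1x1 3D convolution: mixes channels independently at every (t, h, w).

    x: (C_in, T, H, W), weight: (C_out, C_in).
    """
    return np.einsum("oc,cthw->othw", weight, x)


def dual_attention_gate(x, w_reduce, w_expand, w_spatial):
    # Channel branch: global average pool -> conv -> LeakyReLU -> conv -> sigmoid.
    pooled = x.mean(axis=(1, 2, 3), keepdims=True)             # (C, 1, 1, 1)
    hidden = leaky_relu(pointwise_conv3d(pooled, w_reduce))    # (C // r, 1, 1, 1)
    channel_w = sigmoid(pointwise_conv3d(hidden, w_expand))    # (C, 1, 1, 1)
    # Spatial branch: conv down to a single map -> sigmoid.
    spatial_w = sigmoid(pointwise_conv3d(x, w_spatial))        # (1, T, H, W)
    # Fusion by element-wise multiplication (broadcast over the singleton axes).
    return x * channel_w * spatial_w, channel_w, spatial_w


rng = np.random.default_rng(0)
channels, reduction = 8, 4                      # assumed sizes for illustration
x = rng.normal(size=(channels, 5, 12, 12))      # fused features, layout (C, T, H, W)
w_reduce = rng.normal(size=(channels // reduction, channels))
w_expand = rng.normal(size=(channels, channels // reduction))
w_spatial = rng.normal(size=(1, channels))
out, channel_w, spatial_w = dual_attention_gate(x, w_reduce, w_expand, w_spatial)
```

Because both weight maps lie between 0 and 1, the gate can only attenuate features, which lets the module suppress channels or locations where terrain information is less relevant.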

3.2. Swin Transformer Block

The encoder–decoder framework constructed in this paper combines the hierarchical attention mechanism of the Swin Transformer with the local feature extraction of convolution operations. The core operation of the Transformer is the scaled dot-product attention mechanism [38], which is expressed as follows:

$$\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\left(\frac{QK^{\top}}{\sqrt{d_k}}\right)V$$

Here, $Q$, $K$, and $V$ are the query, key, and value matrices, and the dot products are scaled by a factor of $1/\sqrt{d_k}$, where $d_k$ is the key dimension, to prevent vanishing gradients during training. Building upon this, the Swin Transformer employs a hierarchical structure that optimizes attention computation by alternating between two distinct partitioning strategies: regular window partitioning and shifted window partitioning [39]. As shown in Figure 2, the input features to a Swin Transformer block within the encoder first undergo Layer Normalization (LN). They are then processed through an alternating sequence of Window-based Multi-head Self-Attention (W-MSA) and Shifted Window-based Multi-head Self-Attention (SW-MSA) modules. The number of attention heads follows a symmetric pyramidal configuration across network stages. Finally, the features are non-linearly transformed by a Multilayer Perceptron (MLP). Residual connections are incorporated around both the attention and MLP components to facilitate feature refinement:

$$\hat{z}^{l} = \text{W-MSA}\left(\mathrm{LN}(z^{l-1})\right) + z^{l-1}, \qquad z^{l} = \mathrm{MLP}\left(\mathrm{LN}(\hat{z}^{l})\right) + \hat{z}^{l}$$

$$\hat{z}^{l+1} = \text{SW-MSA}\left(\mathrm{LN}(z^{l})\right) + z^{l}, \qquad z^{l+1} = \mathrm{MLP}\left(\mathrm{LN}(\hat{z}^{l+1})\right) + \hat{z}^{l+1}$$

where $z^{l-1}$ is the input to block $l$, and $\hat{z}^{l}$ and $z^{l}$ are the outputs of its attention and MLP sub-layers, respectively.

This structure significantly reduces the computational cost of conventional global attention: because attention is computed independently within each fixed-size window, the cost grows linearly rather than quadratically with the number of tokens. The shifted-window strategy establishes cross-window connections, and stacking blocks in a deep network allows long-range dependencies to be captured. Consequently, the model achieves a complementary fusion between the local feature extraction strengths of convolutional operations and the global modeling capabilities of the attention mechanism.
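A small NumPy sketch can make the window mechanism concrete. It is a simplified 2D illustration under stated assumptions, not the model's implementation: the learned query, key, and value projections, multiple heads, relative position bias, and the attention mask that Swin applies after the cyclic shift are all omitted, and the feature-map size and window size are arbitrary.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)   # subtract the max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def attention(q, k, v):
    """Scaled dot-product attention over the last two axes (tokens, d_k)."""
    d_k = q.shape[-1]
    scores = q @ np.swapaxes(k, -1, -2) / np.sqrt(d_k)
    return softmax(scores, axis=-1) @ v


def window_partition(x, m):
    """Split an (H, W, C) map into non-overlapping m x m windows of tokens."""
    h, w, c = x.shape
    x = x.reshape(h // m, m, w // m, m, c).transpose(0, 2, 1, 3, 4)
    return x.reshape(-1, m * m, c)             # (num_windows, m * m, C)


def shift(x, m):
    """Cyclic shift by half a window, applied before shifted-window attention."""
    return np.roll(x, shift=(-(m // 2), -(m // 2)), axis=(0, 1))


rng = np.random.default_rng(0)
x = rng.normal(size=(8, 8, 16))                # assumed 8 x 8 map with 16 channels
windows = window_partition(x, 4)               # regular partition: 4 windows of 16 tokens
out = attention(windows, windows, windows)     # attention stays inside each window
shifted_windows = window_partition(shift(x, 4), 4)

global_scores = (8 * 8) ** 2                            # score entries for global attention
windowed_scores = windows.shape[0] * (4 * 4) ** 2       # score entries for windowed attention
```

For this toy map, windowed attention evaluates 1024 score entries instead of 4096, and the gap widens as the map grows because the window size stays fixed.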

3.3. Encoder Module

The proposed encoder employs a hierarchical architecture designed for the progressive abstraction and compression of spatiotemporal features through multiple processing stages. It comprises four sequential feature-processing units, each of which uses a collaborative Transformer-Convolution mechanism to perform feature transformation and resolution control, formulated as follows:

$$S_k = \mathrm{ST}_{\mathrm{enc}}(E_{k-1}), \qquad E_k = \mathrm{Down}\left(\mathrm{Conv3D}(S_k)\right)$$

Here, $\mathrm{ST}_{\mathrm{enc}}$ denotes the Swin Transformer encoder module, $\mathrm{Conv3D}$ represents the 3D convolutional module, $\mathrm{Down}$ refers to the downsampling module (applied for $k = 1, 2, 3$ and omitted in the fourth stage), $E_k$ signifies the output of the $k$-th encoder layer, and $S_k$ indicates the output from the Swin Transformer module.

The input features are first processed by the Swin Transformer module to capture global spatiotemporal dependencies. This module employs a window-based multi-head self-attention mechanism, which establishes inter-frame pixel correlations within localized windows. The resulting features are subsequently refined via a 3D convolutional layer, where kernels slide across both spatial and temporal dimensions to extract localized motion patterns. This step compensates for the limitations of pure attention mechanisms in capturing fine-grained details, thereby generating the enhanced features $\mathrm{Conv3D}(S_k)$.

Downsampling modules are incorporated at the end of the first three processing stages, implemented using convolutions with a stride of 2. These reduce the spatial resolution of the feature maps by half while preserving the channel dimensionality to maintain representational capacity. The fourth stage, serving as the top layer of the encoder, retains the original resolution in its output to prevent excessive information loss that may result from over-compression in deeper layers.
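The resolution bookkeeping described above can be checked with the standard convolution output-size formula. In this sketch the kernel size of 3, the padding of 1, and the 64 × 64 input patch are assumptions chosen for illustration; only the stride of 2 and the four-stage layout come from the text.

```python
def conv_out(size: int, kernel: int = 3, stride: int = 2, padding: int = 1) -> int:
    """Output length of a convolution along one axis (standard formula)."""
    return (size + 2 * padding - kernel) // stride + 1


def encoder_shapes(height: int, width: int, stages: int = 4):
    """Spatial size after each encoder stage; the last stage does not downsample."""
    shapes = []
    for stage in range(1, stages + 1):
        if stage < stages:   # stages 1-3 end with a stride-2 convolution
            height, width = conv_out(height), conv_out(width)
        shapes.append((height, width))
    return shapes


shapes = encoder_shapes(64, 64)   # hypothetical 64 x 64 input patch
```

With these assumed settings each of the first three stages halves the grid exactly, and the fourth stage leaves the 8 × 8 map untouched.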

This hierarchical design enables the model to focus on local motion details at lower levels while modeling global spatiotemporal context at higher levels, forming an integrated framework for hierarchical spatiotemporal feature representation.

3.4. Time Builder Block

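The Time Builder Block reconstructs the feature sequence output by the encoder into the required hourly time series through temporal interpolation. Given that the input meteorological data has a temporal resolution of 3 h, the final feature output from the encoder module can be represented along the time dimension as follows:

$$F = [f_0, f_1, \ldots, f_{n-1}]$$

where $n$ denotes the number of time steps in $F$. To expand the temporal dimension to match the hourly resolution of the label data, we construct a temporal query tensor $T$ and employ the following strategy:

$$T_{3i} = f_i, \qquad T_{3i+1} = \tfrac{2}{3} f_i + \tfrac{1}{3} f_{i+1}, \qquad T_{3i+2} = \tfrac{1}{3} f_i + \tfrac{2}{3} f_{i+1}$$

for $i = 0, \ldots, n-2$, with $T_{3(n-1)} = f_{n-1}$, so that $n = 17$ input steps yield $3(n-1) + 1 = 49$ hourly steps.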

The purpose of the Time Builder Block is to provide physically reasonable initial estimates at a step size of one hour for the highly abstract feature maps generated by the Swin Transformer and 3D convolution layers in the encoder. These initial estimates serve as the starting point for the subsequent nonlinear transformations in the decoder. The coefficients (2/3, 1/3) merely provide a reasonable prior: features closer in time should have a stronger influence (for example, t + 1 h is closer to t + 0 h than to t + 3 h). This prior guides the initialization closer to the true manifold and thereby reduces the learning burden on the decoder.
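The fixed-weight blending with coefficients (2/3, 1/3) can be sketched with NumPy slicing. The function name `build_hourly_query` and the toy feature tensor are hypothetical; the sketch assumes time is the leading axis and that 17 three-hourly steps are expanded to 49 hourly steps.

```python
import numpy as np


def build_hourly_query(features: np.ndarray) -> np.ndarray:
    """Expand 3-hourly features (time on axis 0) to hourly steps by fixed-weight blending."""
    n = features.shape[0]
    hourly = np.empty((3 * (n - 1) + 1,) + features.shape[1:], dtype=features.dtype)
    hourly[0::3] = features                                                    # t + 0 h: copy
    hourly[1::3] = (2.0 / 3.0) * features[:-1] + (1.0 / 3.0) * features[1:]    # t + 1 h
    hourly[2::3] = (1.0 / 3.0) * features[:-1] + (2.0 / 3.0) * features[1:]    # t + 2 h
    return hourly


# Toy features whose value equals the lead time in hours (0, 3, ..., 48).
features = (np.arange(17.0) * 3.0).reshape(17, 1, 1)
hourly = build_hourly_query(features)
```

On this toy input the hourly sequence recovers the lead times 0 to 48 h, which confirms that the weighting is ordinary linear interpolation between neighboring 3 h steps.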

3.5. Decoder Module

Following the encoder’s feature extraction and the Time Builder Block’s temporal interpolation, the decoder of the TAUT model adopts a hierarchical feature reconstruction architecture to generate the final high-resolution output. It restores the resolution and enhances the detail of spatiotemporal features through multi-level processing. At each decoder layer, the Swin Transformer decoder module generates output features by applying a temporal query to a key-value dictionary. Each Swin Transformer decoder block has two inputs: features from the encoder layer at the same level and a temporal query. A 3D convolutional module processes the encoder features before they enter the decoder, which exploits the local feature extraction capability of convolution. The temporal query comes from the Time Builder Block for the first decoder layer and from the output of the previous decoder layer for the other layers. The output of the Swin Transformer decoder block is then upsampled by an upsampling block composed of deconvolutions. The specific formula is as follows:

$$D_1 = \mathrm{Up}\left(\mathrm{ST}_{\mathrm{dec}}\left(\mathrm{Conv3D}(S_1),\, T\right)\right), \qquad D_k = \mathrm{Up}\left(\mathrm{ST}_{\mathrm{dec}}\left(\mathrm{Conv3D}(S_k),\, D_{k-1}\right)\right), \quad k > 1$$

Here, $S_k$ denotes the output from the corresponding Swin Transformer encoder module, $\mathrm{Conv3D}$ is the 3D convolutional module, $T$ is the temporal query tensor, $\mathrm{ST}_{\mathrm{dec}}$ represents the Swin Transformer decoder module, $\mathrm{Up}$ is the upsampling module, and $D_k$ is the output of the $k$-th decoder layer.

As illustrated in Figure 2, within the decoder module, the temporal query vector is first processed by a Window-based Multi-head Self-Attention (W-MSA) mechanism. The output features from the corresponding encoder layer serve as the key-value dictionary. This dictionary, together with the query, is then fed into a Window-based Multi-head Cross-Attention (W-MCA) module. Here, the temporal query interacts with the encoder-derived key-value pairs to generate task-specific features tailored for the final objective. Shifted Window-based Multi-head Self-Attention (SW-MSA) and Shifted Window-based Multi-head Cross-Attention (SW-MCA) perform analogous operations within shifted window configurations for broader contextual capture.
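The role of the temporal query in cross-attention can be shown with a minimal NumPy sketch. It is deliberately simplified and rests on assumptions: tokens are indexed by time only, the channel width of 32 is arbitrary, the learned projections and windowing are omitted, and the encoder features serve directly as both keys and values.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)   # subtract the max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def cross_attention(query, memory):
    """Queries attend over encoder features used as both keys and values."""
    d_k = query.shape[-1]
    weights = softmax(query @ memory.T / np.sqrt(d_k), axis=-1)   # (n_query, n_memory)
    return weights @ memory, weights


rng = np.random.default_rng(0)
memory = rng.normal(size=(17, 32))   # 17 three-hourly encoder tokens (assumed 32 channels)
query = rng.normal(size=(49, 32))    # 49 hourly temporal-query tokens
out, weights = cross_attention(query, memory)
```

The output inherits its length from the query, so a 49-step query reading from 17 encoder steps yields 49 output steps, which is how the decoder can produce an hourly sequence from 3-hourly features.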

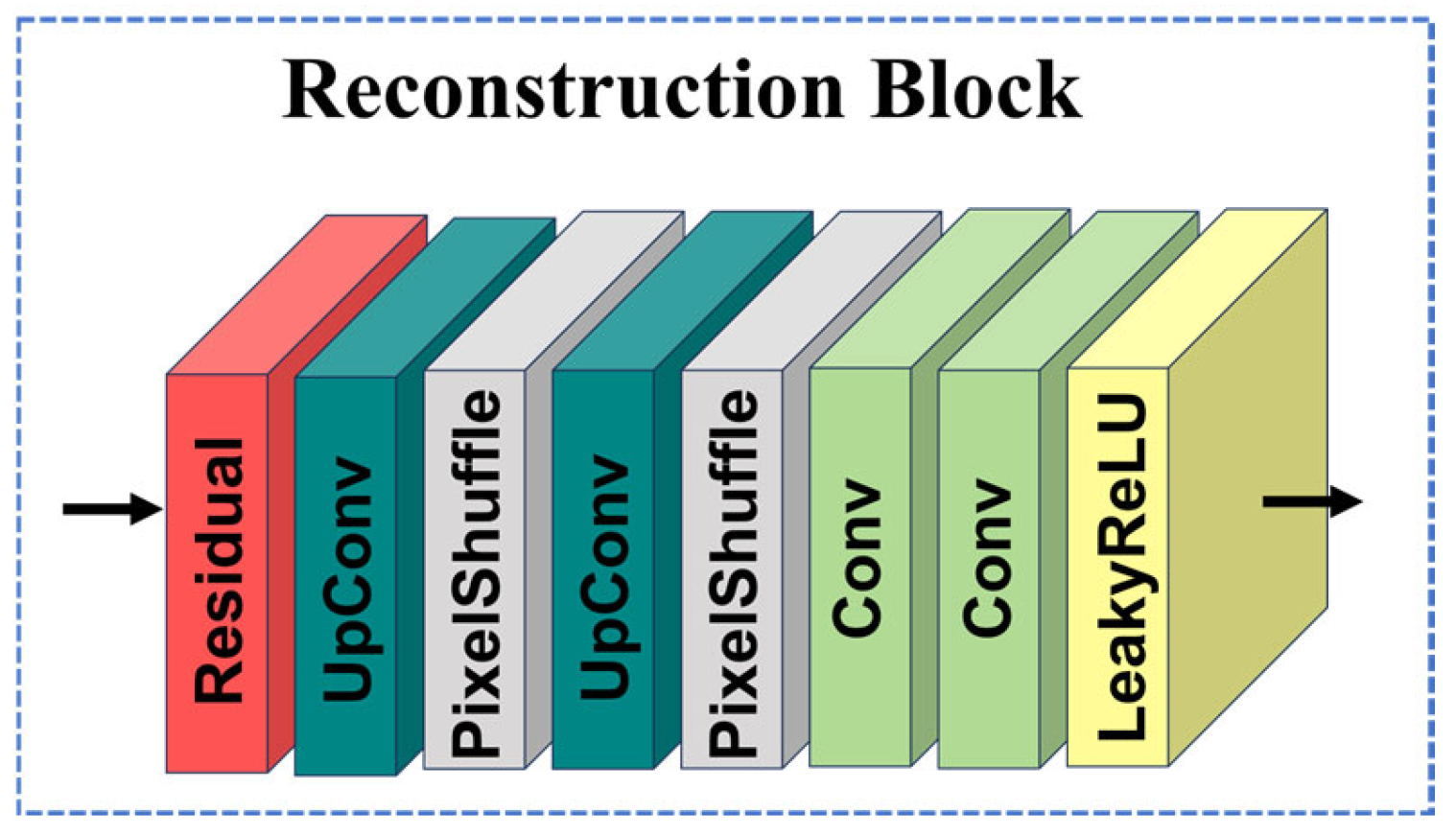

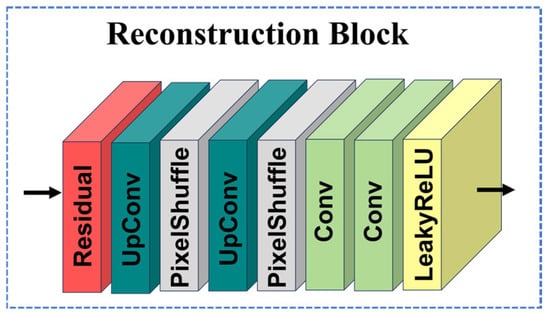

Finally, the features from the last layer of the Swin Transformer decoder blocks are passed through a reconstruction block to generate the final model output. As shown in Figure 4, this reconstruction block consists of a series of residual blocks.

Figure 4.

Schematic architecture of the proposed Reconstruction module.

4. Experiment

4.1. Data Split and Training Details

This study uses the 2 m temperature and 10 m wind field (U and V components) data described in Section 2, covering the complete period from 1 January 2022 to 31 August 2024. To strictly evaluate the generalization ability of the model and avoid potential data leakage in time series prediction, we divide the dataset into three independent parts in chronological order. For each prediction sample, the HRCLDAS data at the corresponding time serve as the ground truth. The division is as follows: data from 1 January 2022 to 31 December 2023 form the training set for fitting the model parameters; data from 1 January 2024 to 31 May 2024 form the validation set for hyperparameter optimization and early stopping; and data from 1 June 2024 to 31 August 2024 form the test set for the final independent evaluation of model performance.
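The chronological split can be sketched in a few lines of Python. The date boundaries come from the text; assigning each sample by its start time, and the function name `assign_split`, are assumptions made for illustration. A 48 h sample window that straddles a boundary would need additional handling that is not shown here.

```python
from datetime import datetime

# Half-open intervals [start, end) taken from the split described above.
SPLITS = {
    "train": (datetime(2022, 1, 1), datetime(2024, 1, 1)),
    "val": (datetime(2024, 1, 1), datetime(2024, 6, 1)),
    "test": (datetime(2024, 6, 1), datetime(2024, 9, 1)),
}


def assign_split(sample_time: datetime) -> str:
    """Return the subset that a sample belongs to, based on its timestamp."""
    for name, (start, end) in SPLITS.items():
        if start <= sample_time < end:
            return name
    raise ValueError(f"{sample_time} lies outside the study period")


# Example: the last training hour and the first validation hour.
last_train = assign_split(datetime(2023, 12, 31, 23))
first_val = assign_split(datetime(2024, 1, 1, 0))
```

Because the intervals do not overlap and are ordered in time, no validation or test sample precedes any training sample.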

All model training in this study was conducted on a high-performance computing platform using the PyTorch framework, with PyTorch’s DataParallel providing multi-GPU parallel training to improve training efficiency. The software and hardware configuration is shown in Table 2.

Table 2.

Environment configuration.

Model training uses the Adam optimizer with an exponential learning rate decay strategy (decay coefficient gamma = 0.98), meaning that the learning rate is multiplied by 0.98 after each training epoch. The loss function is the mean squared error (MSE). The key hyperparameters for model training, including the batch size and the number of training epochs, are summarized in Table 3.

Table 3.

Model training parameter settings.
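As a small worked example of the decay schedule, the sketch below computes the learning rate after a given number of epochs with gamma = 0.98. The initial learning rate of 1e-3 is a hypothetical placeholder, since the actual value is given in Table 3 and not in the text; the helper names are also illustrative.

```python
import math


def exponential_lr(initial_lr: float, gamma: float, epoch: int) -> float:
    """Learning rate after `epoch` completed epochs of exponential decay."""
    return initial_lr * gamma ** epoch


def epochs_to_halve(gamma: float) -> int:
    """Smallest number of epochs after which the rate is at most half its start."""
    return math.ceil(math.log(0.5) / math.log(gamma))


INITIAL_LR = 1e-3  # hypothetical value, not taken from the paper
GAMMA = 0.98       # decay coefficient stated in the text

schedule = [exponential_lr(INITIAL_LR, GAMMA, epoch) for epoch in range(3)]
halving_epochs = epochs_to_halve(GAMMA)
```

With gamma = 0.98 the learning rate falls to half its initial value after 35 epochs. In PyTorch, the same sequence would be produced by `torch.optim.lr_scheduler.ExponentialLR(optimizer, gamma=0.98)` stepped once per epoch.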

4.2. Experiments for Comparison

To rigorously evaluate the performance of our proposed model in the meteorological downscaling task, we designed a systematic comparative experiment, benchmarking it against four widely used models. All experiments were conducted under identical conditions—using the same dataset, identical training-test splits, and the same evaluation metrics—to ensure the comparability and reliability of the results. The models included in the comparison are as follows:

Bilinear Interpolation: A classic image interpolation algorithm that estimates the value of a new pixel as a distance-weighted average of the four known pixels in the surrounding 2 × 2 neighborhood. This method is computationally very efficient, requires no training, and is deterministic. However, it is essentially a linear operation and cannot generate new high-frequency information. When applied to downscaling meteorological fields, it often produces excessive smoothing and severe loss of detail, and it struggles to restore the nonlinear structure and sharp features of complex meteorological fields.

SRCNN: As a pioneering work in the application of deep learning to the field of super-resolution, SRCNN learns end-to-end nonlinear mappings from low resolution to high resolution through a relatively lightweight three-layer convolutional network. Compared with traditional interpolation methods, SRCNN can learn more complex mapping relationships and has a stronger potential for detail recovery. However, its model structure is relatively simple, the receptive field is limited, and it is difficult to effectively model and utilize multi-scale information and the dependencies in spatio-temporal sequences.

U-Net: U-Net adopts a symmetric encoder–decoder architecture and uniquely incorporates skip connections to fuse high-resolution, detail-rich shallow features from the encoder path with compressed, semantically rich deep features from the decoder path. This design has proven excellent in image segmentation and generation tasks, enabling effective capture and restoration of multi-scale features. It has seen numerous successful applications in meteorological downscaling and nowcasting, establishing itself as a strong baseline model in the field.

EDVR: EDVR is a high-performance model specifically designed for video super-resolution. Its core innovations include a Pyramid, Cascading, and Deformable (PCD) convolution module for precise frame alignment and a Temporal and Spatial Attention (TSA) fusion module for adaptive feature integration. This design excels in handling dynamic motion blur and complex spatiotemporal degradation. For sequential meteorological data, EDVR can effectively align and fuse spatiotemporal information, mining temporal dependencies within sequences, and holds significant theoretical potential for meteorological downscaling tasks. However, its model complexity is high, resulting in substantial computational costs.
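To illustrate the simplest of the baselines above, the following NumPy sketch implements bilinear interpolation on a regular 2D grid. It is a generic implementation rather than the exact routine used in the experiments; the function name `bilinear_upsample` and the choice to keep the original grid points fixed at the corners are assumptions.

```python
import numpy as np


def bilinear_upsample(field: np.ndarray, factor: int) -> np.ndarray:
    """Upsample a 2D field by an integer factor with bilinear interpolation.

    The original grid points are preserved, so an (h, w) input yields a
    ((h - 1) * factor + 1, (w - 1) * factor + 1) output. Requires h, w >= 2.
    """
    h, w = field.shape
    ys = np.linspace(0, h - 1, (h - 1) * factor + 1)
    xs = np.linspace(0, w - 1, (w - 1) * factor + 1)
    # Index of the upper-left neighbour, clipped so that index + 1 stays valid.
    y0 = np.clip(np.floor(ys).astype(int), 0, h - 2)
    x0 = np.clip(np.floor(xs).astype(int), 0, w - 2)
    # Fractional distances to the upper-left neighbour.
    wy = (ys - y0)[:, None]
    wx = (xs - x0)[None, :]
    top = field[y0][:, x0] * (1 - wx) + field[y0][:, x0 + 1] * wx
    bottom = field[y0 + 1][:, x0] * (1 - wx) + field[y0 + 1][:, x0 + 1] * wx
    return top * (1 - wy) + bottom * wy


coarse = np.array([[0.0, 10.0],
                   [20.0, 30.0]])
fine = bilinear_upsample(coarse, factor=2)
```

Every interpolated value is a convex combination of its four neighbours, so the output can never exceed the range of the coarse input. This is the smoothing behaviour noted above: no new extremes or high-frequency detail can appear.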

4.3. Ablation Experiment

In the previous section, the proposed model was compared with other downscaling models and algorithms. To strictly verify the independent contributions and synergistic effects of each core module in the TAUT architecture proposed in this study, we designed a systematic ablation experiment based on the controlled-variable principle, as shown in Table 4.

Table 4.

Ablation experiment design (“√” indicates the module is used).

The ablation experiment takes U-Net as the baseline model, since its encoder–decoder architecture and skip connections form the basic framework of our model. For downscaling in both time and space, a three-dimensional convolution module is introduced first. Because meteorological data are essentially spatio-temporal sequences, this module applies three-dimensional convolutions across the temporal and spatial dimensions to extract local spatio-temporal features between adjacent frames and capture the short-term evolution of the weather system. Next, the Swin Transformer module is introduced to overcome the limited receptive field of convolution, and its effect is observed. Then, DEM elevation data are added to the model as an auxiliary input. Finally, the Multi-Branch Terrain-Adaptive (MBTA) module proposed in this paper is introduced to fuse the DEM elevation data efficiently as a multimodal input, and its effect is observed.

4.4. Evaluation Metrics

In this study, six evaluation metrics were employed to comprehensively assess the performance of the proposed model: Root Mean Square Error (RMSE), Mean Absolute Error (MAE), Correlation Coefficient (COR), Accuracy (ACC), Peak Signal-to-Noise Ratio (PSNR), and Structural Similarity Index (SSIM). These metrics provide a multi-faceted analysis of the pixel-level performance characteristics of different super-resolution methods. The core metrics and their underlying principles are detailed below.

First, RMSE and MAE, as fundamental error measures, quantify the reconstruction accuracy of the downscaling results. The lower the two values are, the smaller the downscaling error. Specifically, MAE is less sensitive to outlying errors because it averages absolute values, whereas RMSE squares the errors before averaging and is therefore more sensitive to large deviations. The two are reported together so that large outlying errors can be identified.

Next, COR captures the linear correlation between the downscaled images and the actual images, with values in [−1, 1] representing a continuous change from complete negative correlation to complete positive correlation. A COR value of 1 indicates a perfect positive match between images, while 0 indicates no linear relationship. ACC is usually a metric for classification tasks; for regression tasks, it can be adapted by setting a threshold. Here, in the downscaling task, it measures the proportion of all predictions whose error falls within the threshold.

Furthermore, PSNR, as a direct reflection of signal quality, compares the peak signal level and noise level in the image. A higher PSNR value indicates that the reconstruction error between the downscaled image and the real image is very small, reflecting excellent restoration quality.

Lastly, SSIM provides a comprehensive assessment of overall image quality by evaluating three components: luminance, contrast, and structural similarity. The closer the SSIM value is to 1, the more visually similar the downscaled image is to the actual temperature image, and the higher the structural consistency.

These multi-dimensional indicators together form a systematic evaluation framework, ensuring the comprehensiveness and depth of downscaling performance evaluation. The formulas for these indicators are provided below.

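$$
\mathrm{RMSE} = \sqrt{\frac{1}{N}\sum_{i=1}^{N}\left(\hat{y}_i - y_i\right)^2}
$$

$$
\mathrm{MAE} = \frac{1}{N}\sum_{i=1}^{N}\left|\hat{y}_i - y_i\right|
$$

$$
\mathrm{COR} = \frac{\sum_{i=1}^{N}\left(\hat{y}_i - \bar{\hat{y}}\right)\left(y_i - \bar{y}\right)}{\sqrt{\sum_{i=1}^{N}\left(\hat{y}_i - \bar{\hat{y}}\right)^2}\,\sqrt{\sum_{i=1}^{N}\left(y_i - \bar{y}\right)^2}}
$$

$$
\mathrm{ACC} = \frac{TP}{TP + FP}
$$

$$
\mathrm{PSNR} = 10\log_{10}\left(\frac{MAX^2}{\frac{1}{N}\sum_{i=1}^{N}\left(\hat{y}_i - y_i\right)^2}\right)
$$

$$
\mathrm{SSIM} = \frac{\left(2\mu_{\hat{y}}\mu_{y} + C_1\right)\left(2\sigma_{\hat{y}y} + C_2\right)}{\left(\mu_{\hat{y}}^2 + \mu_{y}^2 + C_1\right)\left(\sigma_{\hat{y}}^2 + \sigma_{y}^2 + C_2\right)}
$$

Here, $\hat{y}_i$ denotes the downscaled prediction for the $i$-th sample, $y_i$ represents the corresponding ground truth observation, $N$ is the number of samples, $\bar{\hat{y}}$ is the mean of the predicted values across all samples, and $\bar{y}$ is the mean of the ground truth observations. Following established practice [40], the threshold $\varepsilon$ is set to 2. A sample is considered a true positive, counted as $TP$, if $|\hat{y}_i - y_i| < \varepsilon$, and a false positive, counted as $FP$, if $|\hat{y}_i - y_i| \geq \varepsilon$. $MAX$ denotes the maximum possible signal intensity, which corresponds to the maximum plausible temperature value in this context. $\mu_{\hat{y}}$ and $\mu_{y}$ are the pixel-wise means of the downscaled prediction and the ground truth image, respectively. $\sigma_{\hat{y}}^2$ and $\sigma_{y}^2$ represent the pixel-wise variances of the downscaled prediction and the ground truth image, respectively. $\sigma_{\hat{y}y}$ is the covariance between the downscaled prediction and the ground truth image, and $C_1$ and $C_2$ are the small constants of the standard SSIM definition that stabilize the division.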
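The six metrics can be sketched in NumPy as follows. This is an illustrative implementation under stated assumptions rather than the evaluation code used in the study: the SSIM here is computed once over the whole image instead of over sliding windows, the constants `k1 = 0.01` and `k2 = 0.03` are the conventional SSIM defaults and are not taken from the paper, and `max_val = 40.0` in the example is a hypothetical maximum temperature.

```python
import numpy as np


def mae(pred, truth):
    return float(np.mean(np.abs(pred - truth)))


def rmse(pred, truth):
    return float(np.sqrt(np.mean((pred - truth) ** 2)))


def cor(pred, truth):
    """Pearson correlation coefficient between prediction and truth."""
    p = pred - pred.mean()
    t = truth - truth.mean()
    return float(np.sum(p * t) / np.sqrt(np.sum(p ** 2) * np.sum(t ** 2)))


def acc(pred, truth, threshold=2.0):
    """Fraction of samples whose absolute error is below the threshold."""
    return float(np.mean(np.abs(pred - truth) < threshold))


def psnr(pred, truth, max_val):
    mse = np.mean((pred - truth) ** 2)
    return float(10.0 * np.log10(max_val ** 2 / mse))


def ssim_global(pred, truth, max_val, k1=0.01, k2=0.03):
    """Simplified SSIM using whole-image statistics (no sliding window)."""
    c1, c2 = (k1 * max_val) ** 2, (k2 * max_val) ** 2
    mu_p, mu_t = pred.mean(), truth.mean()
    cov = np.mean((pred - mu_p) * (truth - mu_t))
    num = (2 * mu_p * mu_t + c1) * (2 * cov + c2)
    den = (mu_p ** 2 + mu_t ** 2 + c1) * (pred.var() + truth.var() + c2)
    return float(num / den)


# Toy 2 x 2 temperature fields with errors of +1, -1, +3, -3.
truth = np.array([[10.0, 12.0], [14.0, 16.0]])
pred = truth + np.array([[1.0, -1.0], [3.0, -3.0]])

scores = {
    "MAE": mae(pred, truth),
    "RMSE": rmse(pred, truth),
    "ACC": acc(pred, truth, threshold=2.0),
    "PSNR": psnr(pred, truth, max_val=40.0),
}
```

In this toy case MAE is 2.0 while RMSE is √5 ≈ 2.24, which shows how squaring gives the two larger errors more weight, and ACC is 0.5 because only two of the four errors are below the threshold.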

5. Result

5.1. Comprehensive Evaluation of Benchmark Models and Ablation Results

Table 5 presents the overall evaluation results of the temperature downscaling methods, with HRCLDAS data as the ground truth. The following conclusions can be drawn. First, U-Net performs best among the basic models, with clear improvements over both bilinear interpolation and SRCNN, which indicates that the U-Net encoder–decoder structure is well suited to this problem. Second, the U-Net model with the three-dimensional convolution module outperforms the ordinary U-Net, which indicates that spatiotemporal downscaling benefits from three-dimensional convolution that extracts features along the temporal dimension as well as the spatial ones. Third, the model with the Swin Transformer module outperforms the model without it, which indicates that combining the local feature extraction of convolution with the attention mechanism further enhances performance. Fourth, introducing terrain remote sensing data as auxiliary information improves model performance to a certain extent, indicating that terrain has a notable influence on the temperature distribution within the study area. Finally, the MBTA module proposed in this paper adapts better to terrain and other auxiliary inputs, and its effect is superior to feeding in the terrain features directly. In addition, compared with the super-resolution model EDVR, the proposed TAUT model achieves higher accuracy and better overall performance.

Table 5.

Comparison of different downscaling models for T2m using testing data (bold indicates the optimal metrics).

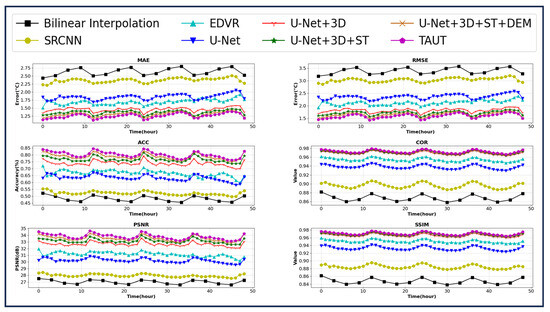

Figure 5 shows how the hourly mean values of the evaluation metrics of each model change over the 48 h downscaling period. The TAUT model maintains the smallest mean absolute error (MAE) and root mean square error (RMSE) at all time steps, and its other evaluation metrics are also consistently better than those of the comparison models. The temporal behavior of each model’s metrics is broadly consistent with the overall averages presented in Table 5; only the U-Net and EDVR curves cross at a few time steps.

Figure 5.

Comparison of the hourly average sequences of all evaluation metrics in all models within a 48 h downscaling time range.

It is worth noting that the error does not increase monotonically over time but fluctuates within each 24 h period. In addition, the peaks and troughs of the error do not coincide directly with the times of the highest and lowest temperatures. We speculate that this behavior is related to the diurnal temperature cycle: the terrain in the study area is complex, and the basin is prone to heat accumulation during the day, leading to rapid warming. Downscaled fields, however, tend to be overly smooth and have limited ability to capture such extreme warming, which increases the error. By around 09:00 UTC (17:00 Beijing Time), as the solar altitude angle decreases and solar radiation weakens, temperature changes level off, the downscaling becomes more stable, and the error decreases accordingly. After 12:00 UTC (20:00 Beijing Time), once solar radiation has ceased, the land surface cools rapidly through long-wave radiation, and the error rises again. Despite these diurnal fluctuations, the overall error in the last 24 h of the 48 h downscaling period is still higher than in the first 24 h, indicating that the downscaling error shows an overall upward trend as the lead time increases.

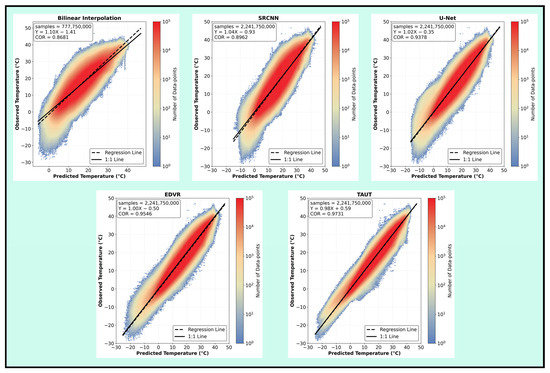

5.2. Comparative Analysis of Benchmark Models

Figure 6 shows the comparison between the predicted values and the actual values of several comparison models in the test set. The horizontal axis represents the predicted temperature values obtained by the comparison models, while the vertical axis represents the actual temperature observed by HRCLDAS. The colors in the scatter plot correspond to the number of scatter points. Through the analysis of scatter plots and regression line trends, the accuracy of predictions and their consistency with observed data can be evaluated. The denser the data points and the closer they are to the 45° dotted line (the black dotted line in Figure 6), the higher the prediction accuracy. The bilinear interpolation model has fewer sample points than other comparison models because it does not downscale in the time dimension.

Figure 6.

Comparison of 2 m temperature downscaling from the baseline models with HRCLDAS data using testing data. The color indicates the density of data points.

Overall, the predictions of the TAUT model are the closest to the observed values. The scatter distribution of the bilinear interpolation model deviates the most from the 45° reference line and has the lowest correlation coefficient, indicating a relatively large prediction error. The scatter distribution of the SRCNN model is more concentrated than that of bilinear interpolation, but the red area near the 45° line is wider, indicating that the predicted values are relatively dispersed and deviate from the true values to some extent. The scattered points of the U-Net model are more concentrated than those of the previous two methods. Its red high-density area is relatively compact, but its yellow area is larger than that of the remaining two models (EDVR and TAUT), reflecting some fluctuation in the predictions.

All three models shown in the first row of Figure 6 performed poorly in the low-temperature region. Because of the complex terrain of the study area, where the 2 m temperature can drop to around −25 °C, these three models generally gave predictions that were too high for such low temperatures, which led to a significant deviation from the 45° line in the low-temperature region. Compared with these three models, the EDVR model markedly improves both the density of the scattered points and their closeness to the 45° line, and it also makes more accurate predictions for low-temperature samples. The TAUT model improves on EDVR further. In its scatter plot, the red area is more concentrated, and the data points are more densely distributed and closer to the 1:1 line. It has the highest correlation coefficient among all the models compared, and for low-temperature samples it provides the closest predicted values. This performance indicates that by effectively integrating topographic information, TAUT can, to some extent, better capture the nonlinear mechanisms behind low temperatures.

5.3. Model Performance in Complex Terrain

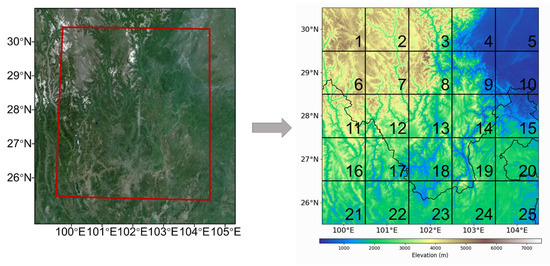

To evaluate the application of topographic remote sensing data in the model, this experiment compared model outputs under different terrain conditions in the selected study area. The comparison focused on three metrics: MAE, RMSE, and PSNR. The first two metrics were used to analyze error distribution across various terrains, while the latter assessed the model’s utilization of topographic remote sensing data and its reconstruction of temperature elements. As shown in the right picture of Figure 7, the specific operation steps include: dividing the selected research area into 1° × 1° grid areas and assigning identifiers to each area. Based on the elevation distribution within each grid block, the terrain was classified into one of three types: mountain, plateau, or basin.

Figure 7.

The remote sensing digital elevation data map of the study area. We divided it into a 5 × 5 chessboard and assigned an ID to each part.

Table 6 details the specific composition of each terrain category. The study area is located in southwestern China, encompassing complex topographical features such as most of the Hengduan Mountains, the northwestern part of the Yunnan-Guizhou Plateau, and the western Sichuan Basin. Accordingly, the terrain was classified into three primary types: mountains, plateaus, and basins. Although water bodies like rivers are present within the area, they were not considered in this classification as they do not form extensive, contiguous features such as large lakes.

Table 6.

Classification of the terrains in Figure 7. We divided them into three types, i.e., mountain, plateau, and basin.

Table 7 details the differences in the three evaluation metrics for the comparative models across various terrain types. For the error metrics, lower MAE and RMSE values indicate smaller temperature downscaling errors achieved by the model when integrated with topographic remote sensing data in that particular terrain. A higher PSNR value signifies that the spatial texture distribution of the downscaled temperature better aligns with reality, contains less noise, and thus reflects a more effective integration and output of numerical model data and topographic remote sensing data within the model.

Table 7.

Performance metrics of different downscaling models across three terrain types (bold indicates the optimal metrics).

Analysis of the table reveals that all models, after incorporating topographic remote sensing data, achieved the best downscaling results in basin areas, exhibited larger errors and poorer spatial texture reconstruction in plateau regions, and delivered moderate performance in the most complex mountainous terrain. Among all models compared, TAUT consistently achieved the best performance across all three terrain types. It produced the smallest temperature downscaling errors, particularly in basin topography, along with the finest temperature distribution details and spatial texture reconstruction.
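The terrain-wise evaluation described above can be sketched as a block-wise error computation followed by grouping by terrain label. The sketch below is illustrative only: the 5 × 5 partition follows Figure 7, but the assignment of blocks to terrain types is given in Table 6 and is replaced here by a hypothetical label array, and the function names are assumptions.

```python
import numpy as np


def blockwise_mae(pred, truth, n_blocks=5):
    """MAE of each block when the domain is cut into n_blocks x n_blocks tiles."""
    h, w = truth.shape
    bh, bw = h // n_blocks, w // n_blocks
    # Trim any remainder so the field divides evenly into blocks.
    err = np.abs(pred - truth)[: bh * n_blocks, : bw * n_blocks]
    return err.reshape(n_blocks, bh, n_blocks, bw).mean(axis=(1, 3))


def mae_by_terrain(block_mae, terrain_of_block):
    """Average the block MAEs over all blocks that share a terrain label."""
    return {
        str(terrain): float(block_mae[terrain_of_block == terrain].mean())
        for terrain in np.unique(terrain_of_block)
    }


# Toy 10 x 10 fields: the only error (4 degrees) sits in the top-left block.
truth = np.zeros((10, 10))
pred = truth.copy()
pred[:2, :2] = 4.0

# Hypothetical terrain labels per block; the real mapping is in Table 6.
labels = np.full((5, 5), "mountain")
labels[0, :] = "basin"
labels[1, :] = "plateau"

blocks = blockwise_mae(pred, truth)
terrain_scores = mae_by_terrain(blocks, labels)
```

Because all blocks have the same size, averaging block MAEs within a terrain type gives the same value as computing the MAE over all pixels of that type. RMSE and PSNR would have to be derived from block-wise mean squared errors instead, since they do not average linearly.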

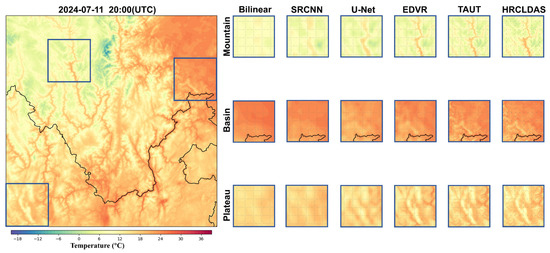

The spatial details of the temperature downscaling are shown in Figure 8. The images make it evident that bilinear interpolation only increases the density of the temperature grid points, and the resulting spatial texture is relatively blurry. Because SRCNN is a shallow network, its spatial representation of the temperature distribution remains coarse even when terrain remote sensing data are provided as input. Across the three terrain types, U-Net begins to show some spatial texture after terrain data are incorporated, but it still lacks fine detail. EDVR, drawing on its super-resolution design, produces better visual results than the preceding models. By contrast, the visual results of the TAUT model proposed in this paper are the closest to the HRCLDAS dataset over mountains, plateaus, and basins alike. This indicates that TAUT integrates terrain remote sensing data more effectively and reconstructs the spatial structure of the downscaled temperature field better.

Figure 8.

Visual comparison of the results of each method at 20:00 UTC on 11 July 2018. The large image on the left represents the temperature image of the HRCLDAS dataset, while the right shows the detailed representation of each comparison model under different terrains.

In the next section, we will use a specific high-temperature case to demonstrate the spatial texture of the temperature field reconstructed by the model under the guidance and integration of topographic remote sensing data.

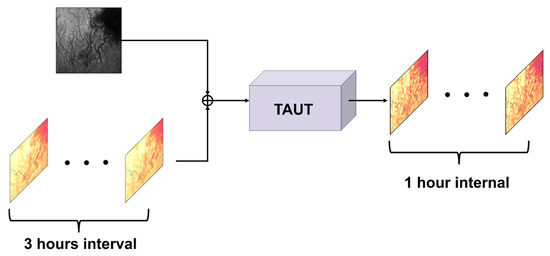

5.4. Case Study: A High-Temperature Event

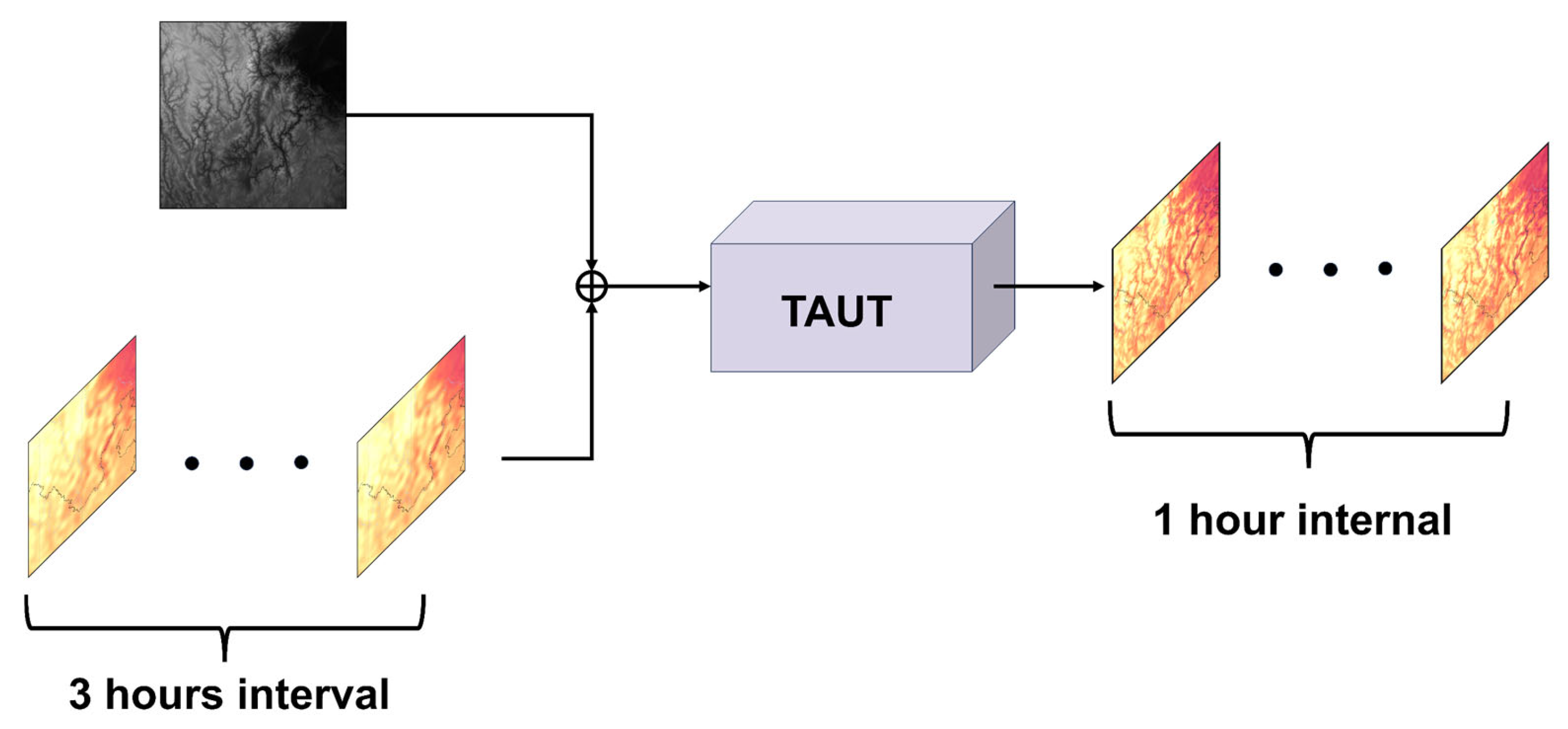

In mid-to-late August 2024, a prolonged period of high-temperature weather occurred in Southwest China, and the Meteorological Observatory of Sichuan Province issued orange high-temperature warnings for several consecutive days. This study selects the typical high-temperature period from 19 to 30 August 2024 as the analysis case and focuses on the performance of the proposed model during this event. Figure 9 is a schematic diagram of the model downscaling process. Using only numerical model data with low spatial resolution and a coarse 3 h interval within this period, combined with terrain remote sensing data, we aimed to obtain hourly downscaling results with high spatial resolution.

Figure 9.

Schematic diagram of the process of using the downscaling model.

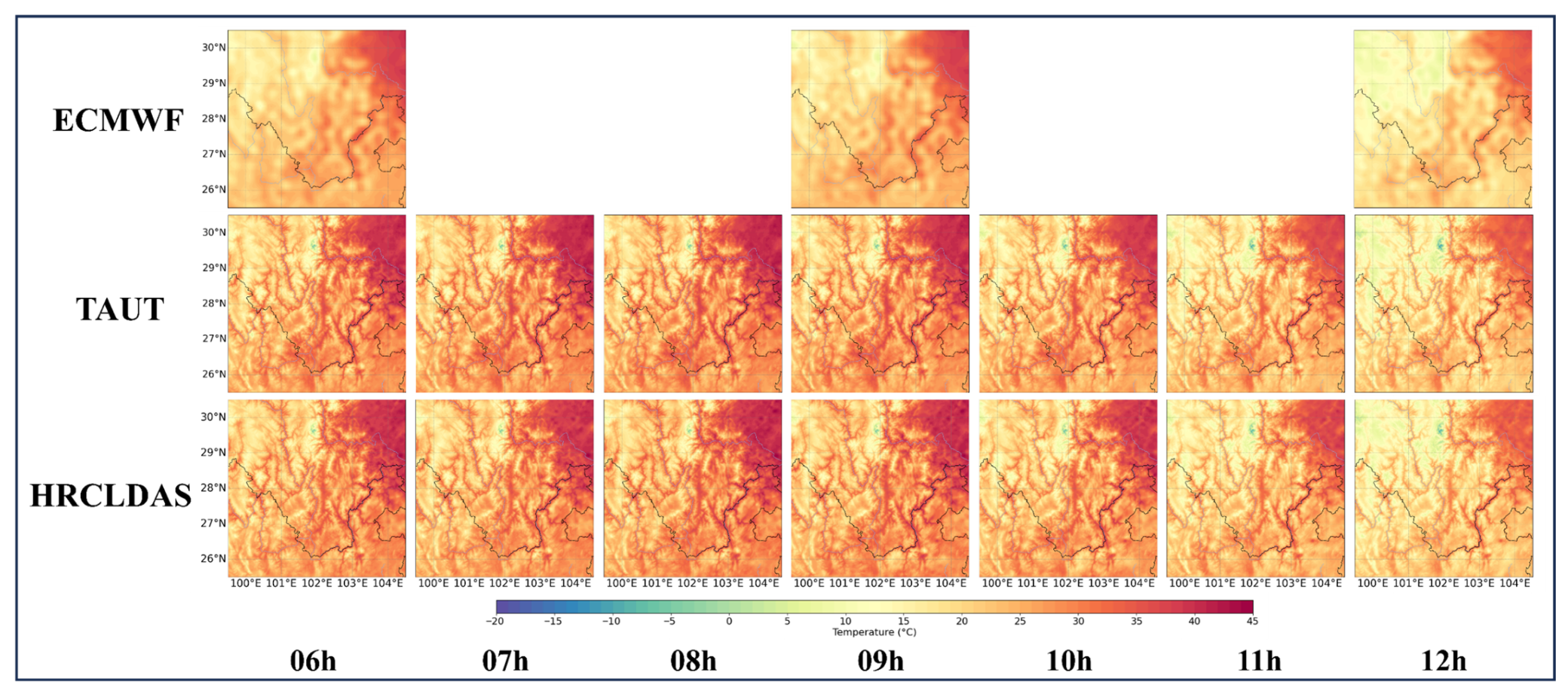

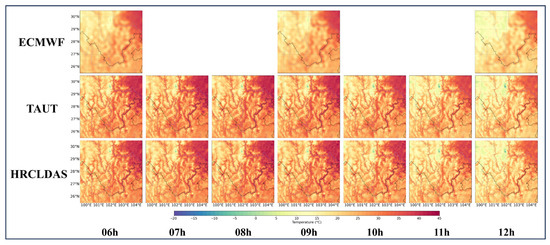

Figure 10 shows the spatiotemporal downscaling results for a representative period during this high-temperature event. The ECMWF row shows the numerical forecast data at 06:00, 09:00, and 12:00 on 27 August 2024. The TAUT row shows the spatio-temporal downscaling results of the proposed model from 06:00 to 12:00. The HRCLDAS row shows the real-time HRCLDAS data from the National Meteorological Information Center for 06:00 to 12:00, which serve as the verification benchmark. The comparison shows that the model reconstructs spatial resolution well: compared with the original numerical forecast output, it describes the temperature distribution details and the terrain-influenced spatial texture more accurately. In the time dimension, the reconstructed results are also consistent with the real-time data. It is worth noting that in regions experiencing extremely high temperatures, the TAUT results give higher temperatures than the direct numerical model output and are more in line with the actual extreme high-temperature conditions.

Figure 10.

The distributions of 2 m temperature on the downscaling time of 06–12 h, including ECMWF, HRCLDAS, and the downscaling results obtained by TAUT.

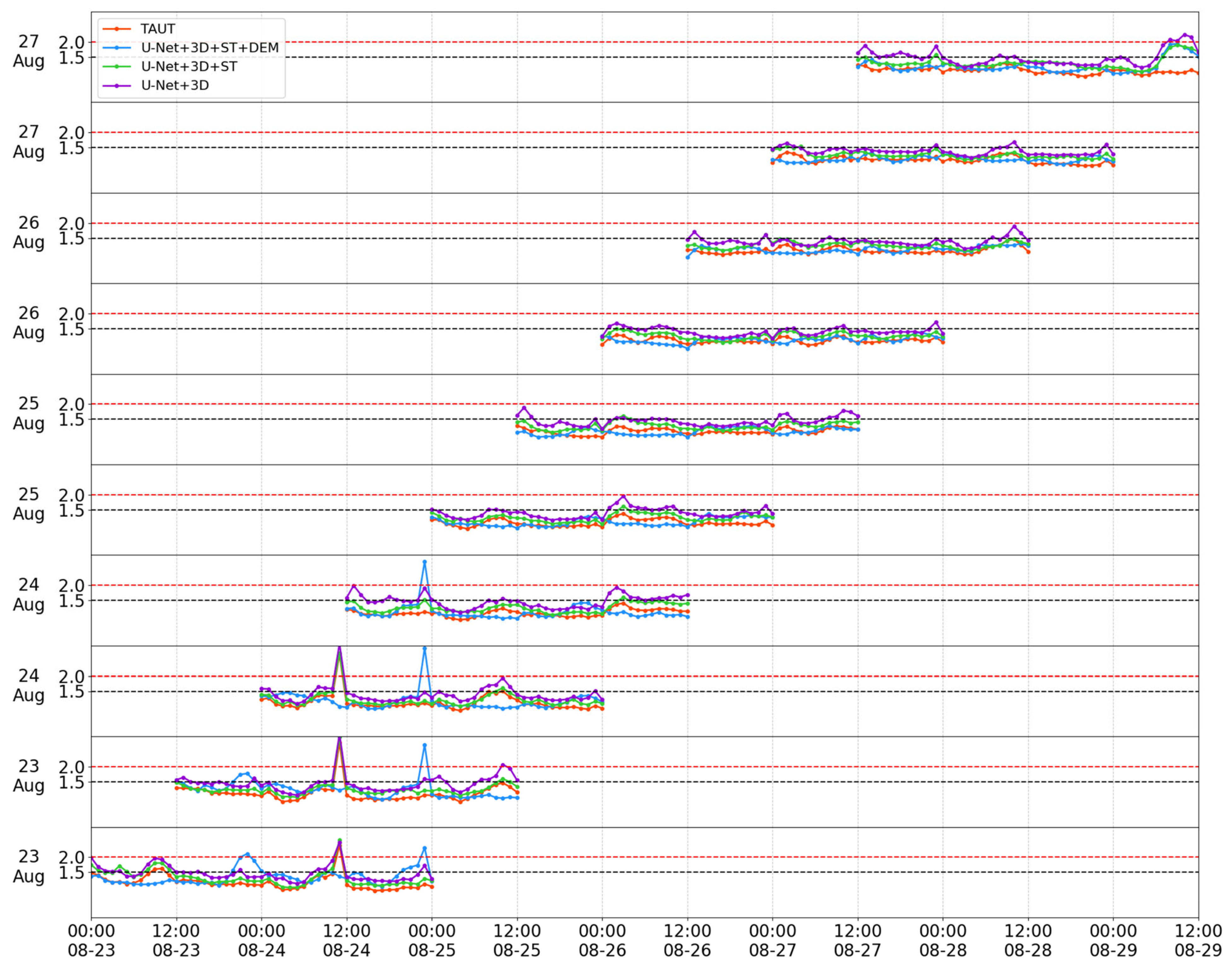

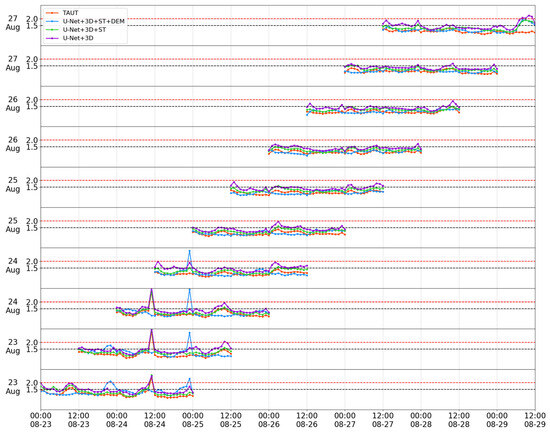

Figure 11 shows the time series of the mean absolute error (MAE) for 48 h 2 m temperature downscaling by each ablation model from 23 to 27 August 2024. Each point in the figure represents the average error within the study area during that hour. The accuracy threshold settings are the same as those described earlier. In the figure, an MAE above 2.0 °C (red dotted line) is regarded as a relatively large error, while an MAE below 1.5 °C (black dotted line) is regarded as a relatively small error. In this high-temperature case, the model that only introduces 3D convolution (purple line) has the largest error and the least satisfactory downscaling performance. After the Swin Transformer module is added (green line), the error is significantly reduced and largely stays below the 1.5 °C threshold, but the performance is still inferior to that of the two subsequent model configurations. After terrain is further introduced as an auxiliary variable, the downscaling performance improves in some periods (mainly the last three days), but the overall performance is unstable; for example, the error fluctuates strongly in the downscaling results for 23 August. After the MBTA module is added, the performance in the later days differs from that of the model without this module, but the error remains largely below the 1.5 °C line, and in the early part of the period the model with the MBTA module shows better stability and lower error values. In conclusion, for the downscaling of extreme high temperatures in this study area, the fully constructed TAUT model achieved the best overall performance.

Figure 11.

Temporal evolution of 48 h 2 m temperature downscaling MAEs from ablation models during the high-temperature case from 23 to 27 August 2024.

6. Conclusions and Discussion

This paper addresses the accuracy of spatio-temporal temperature downscaling in Southwest China and proposes a deep learning model, TAUT, which is built on the U-Net structure with the Swin Transformer module as the main component and the CNN module as an auxiliary component. The encoder performs feature extraction and downsampling on the input. The time building module downscales the input in the time dimension and passes the result to the decoder, which uses it as the decoder’s features to generate the output. Meanwhile, to verify the significant impact of complex terrain on temperature distribution, this paper incorporates elevation remote sensing data and constructs an MBTA module that adaptively and efficiently integrates temperature, U and V wind components, and terrain remote sensing data, which together serve as the multimodal input to the model.

To verify the downscaling performance of the model, this paper used ECMWF data and HRCLDAS data from January 2022 to August 2024, with terrain remote sensing data as an important input, and set up a variety of comparison models and ablation experiments. First, the evaluation of multiple averaged metrics established the overall superiority of the TAUT model. It had the smallest MAE and RMSE and also obtained the best correlation coefficient and accuracy. Its PSNR was the largest, and its SSIM was the closest to 1, indicating that the temperature images reconstructed by downscaling have smaller reconstruction errors and better visual similarity to the real temperature images. In the hourly averages over the 48 h downscaling period, TAUT also has clear advantages over the other models, with the best metric values at almost every time step. Second, the scatter plots of the comparison models show that the TAUT results are significantly closer to the actual values and that, to a certain extent, the model can also reproduce extremely low temperatures. Third, this paper conducted zonal verification for different terrains, further demonstrating the model’s adaptability under various levels of terrain complexity. The model achieved satisfactory results in basin terrain and moderate results in complex mountain terrain, and TAUT achieved the best results in every terrain category.
Finally, the high-temperature case study provided qualitative visual evidence and a quantitative time series analysis, demonstrating how TAUT integrates terrain remote sensing data, improves the details and spatial texture of the temperature distribution under high-temperature conditions, and remains stable during an extreme weather event. Given coarse numerical prediction data and high-precision remote sensing terrain data as input, the model outputs spatio-temporal temperature downscaling results with excellent spatial texture. During this high-temperature case, the full model also showed better stability and lower errors than the other ablation configurations, which verifies the advantages of the hierarchical attention mechanism of the Swin Transformer module, the local feature extraction of the convolution operation, and the significant role of terrain remote sensing data in temperature downscaling reconstruction.

In conclusion, the TAUT model constructed in this paper provides high-resolution spatio-temporal downscaling results, with a spatial resolution of 0.01° × 0.01° and hourly temporal resolution. The approach is based on spatio-temporal modeling and combines the Swin Transformer module with the U-Net framework. The study also integrated terrain remote sensing data of the complex study area as a multimodal input, verifying the influence of the complex terrain in Southwest China on the downscaled temperature distribution. The proposed model can be applied to a wide range of numerical model outputs. It is important to note that the current study primarily benchmarks TAUT against advanced computer vision-based super-resolution models to rigorously evaluate its capability in spatiotemporal detail reconstruction. A comprehensive comparison with physics-informed meteorological downscaling methods (e.g., DeepSD) remains a valuable direction for future work, which would provide further insights into the relative strengths of data-driven and physics-aware approaches. In the future, we will also attempt to apply the model to other complex terrain scenarios to analyze how terrain characteristics affect the downscaling performance and to further enhance the generality of the conclusions. We will also consider additional input variables, such as sensible heat flux and latent heat flux, to strengthen the physical foundation of the model.

Author Contributions

Conceptualization, Z.C. and L.X.; methodology, Z.C.; software, Z.C.; validation, Z.C. and J.G.; formal analysis, Z.C.; investigation, Z.C., L.X., and J.W.; resources, J.G. and J.X.; data curation, Z.C.; writing—original draft preparation, Z.C.; writing—review and editing, Z.C., J.G. and J.X.; visualization, Z.C.; supervision, J.G.; project administration, J.G.; funding acquisition, J.G. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded by the National Natural Science Foundation of China, grant numbers 42575071 and 42505067.

Data Availability Statement

The ECMWF data used in this study are available from the European Centre for Medium-Range Weather Forecasts at https://www.ecmwf.int/en/forecasts/datasets/set-i (accessed on 25 January 2025). The ASTER GDEM V3 remote sensing dataset can be obtained from the Geospatial Data Cloud at https://www.gscloud.cn/search (accessed on 12 July 2025). The HRCLDAS data are not publicly available due to licensing restrictions imposed by the National Meteorological Information Center, but they can be obtained by applying to the Center.

Acknowledgments

We thank the reviewers for their comments, which helped us improve the manuscript.

Conflicts of Interest

The authors declare no conflicts of interest.

References

- Liu, J.; Li, M.; Liu, T.; Ren, Y.; Hu, Q.; Shalamzari, M.J.; Pham, Q.B.; He, P. Comprehensive Analysis of Compound Drought and Heatwave Events in China over Recent Decades Based on Regional Event Identification Algorithm. Int. J. Digit. Earth 2025, 18, 2514709.

- Qing, Y.; Wu, J.; Luo, J.-J. Characteristics and Subseasonal Prediction of Four Types of Cold Waves in China. Theor. Appl. Climatol. 2025, 156, 192.

- Abdel-Aal, R.E. Hourly Temperature Forecasting Using Abductive Networks. Eng. Appl. Artif. Intell. 2004, 17, 543–556.

- Wang, Q.; Chen, C.; Xu, H.; Liu, Y.; Zhong, Y.; Liu, J.; Wang, M.; Zhang, M.; Liu, Y.; Li, J.; et al. The Graded Heat-Health Risk Forecast and Early Warning with Full-Season Coverage across China: A Predicting Model Development and Evaluation Study. Lancet Reg. Health-West. Pac. 2025, 54, 101266.

- Politi, N.; Nastos, P.T.; Sfetsos, A.; Vlachogiannis, D.; Dalezios, N.R. Evaluation of the AWR-WRF Model Configuration at High Resolution over the Domain of Greece. Atmos. Res. 2018, 208, 229–245.

- Risser, M.D.; Rahimi, S.; Goldenson, N.; Hall, A.; Lebo, Z.J.; Feldman, D.R. Is Bias Correction in Dynamical Downscaling Defensible? Geophys. Res. Lett. 2024, 51, e2023GL105979.

- Tefera, G.W.; Ray, R.L.; Wootten, A.M. Evaluation of Statistical Downscaling Techniques and Projection of Climate Extremes in Central Texas, USA. Weather Clim. Extrem. 2024, 43, 100637.

- Sun, Y.; Deng, K.; Ren, K.; Liu, J.; Deng, C.; Jin, Y. Deep Learning in Statistical Downscaling for Deriving High Spatial Resolution Gridded Meteorological Data: A Systematic Review. ISPRS J. Photogramm. Remote Sens. 2024, 208, 14–38.

- Dong, C.; Loy, C.C.; He, K.; Tang, X. Learning a Deep Convolutional Network for Image Super-Resolution. In Computer Vision–ECCV 2014; Fleet, D., Pajdla, T., Schiele, B., Tuytelaars, T., Eds.; Lecture Notes in Computer Science; Springer International Publishing: Cham, Switzerland, 2014; Volume 8692, pp. 184–199.

- Dong, C.; Loy, C.C.; Tang, X. Accelerating the Super-Resolution Convolutional Neural Network. In Computer Vision–ECCV 2016; Leibe, B., Matas, J., Sebe, N., Welling, M., Eds.; Lecture Notes in Computer Science; Springer International Publishing: Cham, Switzerland, 2016; Volume 9906, pp. 391–407.

- Kim, J.; Lee, J.K.; Lee, K.M. Accurate Image Super-Resolution Using Very Deep Convolutional Networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition; IEEE Computer Society: Washington, DC, USA, 2016.

- Lai, W.-S.; Huang, J.-B.; Ahuja, N.; Yang, M.-H. Deep Laplacian Pyramid Networks for Fast and Accurate Super-Resolution. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 5835–5843.

- Lim, B.; Son, S.; Kim, H.; Nah, S.; Lee, K.M. Enhanced Deep Residual Networks for Single Image Super-Resolution. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Honolulu, HI, USA, 21–26 July 2017; pp. 1132–1140.

- Ledig, C.; Theis, L.; Huszar, F.; Caballero, J.; Cunningham, A.; Acosta, A.; Aitken, A.; Tejani, A.; Totz, J.; Wang, Z.; et al. Photo-Realistic Single Image Super-Resolution Using a Generative Adversarial Network. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 105–114.

- Karras, T.; Aila, T.; Laine, S.; Lehtinen, J. Progressive Growing of GANs for Improved Quality, Stability, and Variation. arXiv 2018, arXiv:1710.10196.

- Karnewar, A.; Wang, O. MSG-GAN: Multi-Scale Gradients for Generative Adversarial Networks Supplementary Material. arXiv 2019, arXiv:1903.06048.

- Wang, J.; Chen, M.; Karaev, N.; Vedaldi, A.; Rupprecht, C.; Novotny, D. VGGT: Visual Geometry Grounded Transformer. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE Computer Society: Washington, DC, USA, 2025.

- Wang, Y.; Yang, W.; Chen, X.; Wang, Y.; Guo, L.; Chau, L.-P.; Liu, Z.; Qiao, Y.; Kot, A.C.; Wen, B. SinSR: Diffusion-Based Image Super-Resolution in a Single Step. In Proceedings of the 2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 16 June 2024; pp. 25796–25805.

- Peebles, W.; Xie, S. Scalable Diffusion Models with Transformers. In Proceedings of the IEEE/CVF International Conference on Computer Vision; IEEE Computer Society: Washington, DC, USA, 2023.

- Duan, Z.-P.; Zhang, J.; Jin, X.; Zhang, Z.; Xiong, Z.; Zou, D.; Ren, J.S.; Guo, C.-L.; Li, C. DiT4SR: Taming Diffusion Transformer for Real-World Image Super-Resolution. In Proceedings of the IEEE/CVF International Conference on Computer Vision; IEEE Computer Society: Washington, DC, USA, 2025.

- Xiang, L.; Guan, J.; Xiang, J.; Zhang, L.; Zhang, F. Spatiotemporal Model Based on Transformer for Bias Correction and Temporal Downscaling of Forecasts. Front. Environ. Sci. 2022, 10, 1039764.

- Vandal, T.; Kodra, E.; Ganguly, S.; Michaelis, A.; Nemani, R.; Ganguly, A.R. DeepSD: Generating High Resolution Climate Change Projections through Single Image Super-Resolution. In Proceedings of the 23rd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining; ACM: New York, NY, USA, 2017.

- Kumar, B.; Chattopadhyay, R.; Singh, M.; Chaudhari, N.; Kodari, K.; Barve, A. Deep Learning–Based Downscaling of Summer Monsoon Rainfall Data over Indian Region. Theor. Appl. Climatol. 2021, 143, 1145–1156.

- Pan, B.; Hsu, K.; AghaKouchak, A.; Sorooshian, S. Improving Precipitation Estimation Using Convolutional Neural Network. Water Resour. Res. 2019, 55, 2301–2321.

- Mu, B.; Qin, B.; Yuan, S.; Qin, X. A Climate Downscaling Deep Learning Model Considering the Multiscale Spatial Correlations and Chaos of Meteorological Events. Math. Probl. Eng. 2020, 2020, 7897824.

- Rampal, N.; Gibson, P.B.; Sood, A.; Stuart, S.; Fauchereau, N.C.; Brandolino, C.; Noll, B.; Meyers, T. High-Resolution Downscaling with Interpretable Deep Learning: Rainfall Extremes over New Zealand. Weather Clim. Extrem. 2022, 38, 100525.

- Lu, P.; Deng, Q.; Zhao, S.; Wang, Y.; Wang, W. Deep Learning for Seasonal Prediction of Summer Precipitation Levels in Eastern China. Earth Space Sci. 2023, 10, e2023EA003129.

- Sha, Y.; Gagne, I.D.J.; West, G.; Stull, R. Deep-Learning-Based Gridded Downscaling of Surface Meteorological Variables in Complex Terrain. Part I: Daily Maximum and Minimum 2-m Temperature. J. Appl. Meteorol. Climatol. 2020, 59, 2057–2073.

- Sha, Y.; Gagne, I.D.J.; West, G.; Stull, R. Deep-Learning-Based Gridded Downscaling of Surface Meteorological Variables in Complex Terrain. Part II: Daily Precipitation. J. Appl. Meteorol. Climatol. 2020, 59, 2075–2092.

- Sun, A.Y.; Tang, G. Downscaling Satellite and Reanalysis Precipitation Products Using Attention-Based Deep Convolutional Neural Nets. Front. Water 2020, 2, 536743.

- Stengel, K.; Glaws, A.; Hettinger, D.; King, R.N. Adversarial Super-Resolution of Climatological Wind and Solar Data. Proc. Natl. Acad. Sci. USA 2020, 117, 16805–16815.

- Cheng, J.; Liu, J.; Kuang, Q.; Xu, Z.; Shen, C.; Liu, W.; Zhou, K. DeepDT: Generative Adversarial Network for High-Resolution Climate Prediction. IEEE Geosci. Remote Sens. Lett. 2022, 19, 1–5.

- Tie, R.; Shi, C.; Wan, G.; Hu, X.; Kang, L.; Ge, L. CLDASSD: Reconstructing Fine Textures of the Temperature Field Using Super-Resolution Technology. Adv. Atmos. Sci. 2022, 39, 117–130.

- Liu, J.; Sun, Y.; Ren, K.; Zhao, Y.; Deng, K.; Wang, L. A Spatial Downscaling Approach for WindSat Satellite Sea Surface Wind Based on Generative Adversarial Networks and Dual Learning Scheme. Remote Sens. 2022, 14, 769.

- Yu, T.; Yang, R.; Huang, Y.; Gao, J.; Kuang, Q. Terrain-Guided Flatten Memory Network for Deep Spatial Wind Downscaling. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2022, 15, 9468–9481.

- Jiang, Y.; Han, S.; Shi, C.; Gao, T.; Zhen, H.; Liu, X. Evaluation of HRCLDAS and ERA5 Datasets for Near-Surface Wind over Hainan Island and South China Sea. Atmosphere 2021, 12, 766.

- Hu, J.; Shen, L.; Albanie, S.; Sun, G.; Wu, E. Squeeze-and-Excitation Networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition; IEEE Computer Society: Washington, DC, USA, 2019.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, L.; Polosukhin, I. Attention Is All You Need. In NIPS’17: Proceedings of the 31st International Conference on Neural Information Processing Systems; ACM: New York, NY, USA, 2023.

- Liu, Z.; Lin, Y.; Cao, Y.; Hu, H.; Wei, Y.; Zhang, Z.; Lin, S.; Guo, B. Swin Transformer: Hierarchical Vision Transformer Using Shifted Windows. In Proceedings of the 2021 IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 10–17 October 2021; pp. 9992–10002.

- Chen, K.; Wang, P.; Yang, X.; Zhang, N.; Wang, D. A Model Output Deep Learning Method for Grid Temperature Forecasts in Tianjin Area. Appl. Sci. 2020, 10, 5808.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.