Highlights

What are the main findings?

- A multi-level hybrid deep learning-based object detection model precisely detects and classifies water bodies from remote sensing images.

- The proposed model exhibits enhanced detection results compared with recent state-of-the-art methods in terms of accuracy, robustness, and classification reliability on remote sensing imagery.

What are the implications of the main findings?

- The proposed model provides an effective and large-scale water resource monitoring system without manual intervention, supporting timely environmental assessment.

- The proposed deep learning model presents a scalable tool for decision-making in water management, drought monitoring, and sustainable resource planning.

Abstract

Water resource monitoring can provide useful information to support water management; however, present operational systems are limited in scale and provide only a subset of the information needed. Key advancements include the explicit representation of water redistribution and water use from groundwater and river systems, achieving better spatial detail and increased precision as evaluated against hydrometric observations. Meanwhile, Earth Observation (EO) satellite systems continuously generate extensive data, which are now essential for applications in many fields. With readily available open-source satellite imagery, aerial remote sensing is progressively becoming a quick and efficient tool for monitoring land and water resource development activities, offering time and cost savings. Deep learning (DL) models are therefore well suited to monitoring water resources and EO using remote sensing. In this paper, a Deep Neural Network-Based Object Detection for Water Resource Monitoring and Earth Observation (DNNOD-WRMEO) model is introduced. The main aim is to develop an effective monitoring and analysis framework for water resources and Earth surface observations using aerial remote sensing images. First, the Wiener filter (WF) is used for image pre-processing. For object detection, the YOLOv12 method is used to identify, locate, and classify objects within an image, after which the DNNOD-WRMEO methodology implements the ResNet-CapsNet model for backbone feature extraction. Finally, the temporal convolutional network (TCN) model is used to classify water resources. In a comparative analysis on the AIWR dataset, the DNNOD-WRMEO methodology achieved a superior accuracy of 98.61% compared with existing models.

1. Introduction

Only a limited portion of the Earth’s water is accessible as freshwater, constituting an essential resource in various economic sectors, from agriculture to industrial processes. Currently, water resources are under significant pressure and are especially limited in dry areas of the world. In several arid and semi-arid zones, a lack of water is a primary barrier to sustainable development and poverty reduction and has led to major disputes between nations [1]. Water scarcity in such regions may worsen due to global climate shifts expected to affect these areas heavily. Therefore, identifying, mapping, and observing water resources is vital for ensuring availability, access, equitable use, and effective management [2]. Though high-resolution imagery through aerial photography has existed for decades in satellite remote sensing (RS), with multispectral digital images now nearing the quality of small- to medium-scale photography, we are entering a new era of practical developments. Water plays a role in every aspect of economic and social progress [3]. Particularly in underdeveloped nations, water supply, sanitation, and a healthy environment are the foundation of effective poverty control and inclusive development plans. The application of remote observation in hydrology and water resources through aerial images, while not recent, is a rapidly expanding area [4].

The advancement of modern sensors and the increasing availability of data obtained through RS methods are encouraging data-driven research and the creation of a novel analytical algorithm. In this scenario, Earth Observation (EO) satellite systems are continually generating extensive data [5]. EO satellites observe the Earth’s surface, ocean, air, ice zones, and carbon movement from orbit in real time and continuously send this data to ground stations [6]. The insights collected by EO satellites are extensively applied across numerous academic areas, particularly those connected to environmental studies, where the readings acquired from EO satellites are crucial [7]. A fundamental aspect of the RS of groundwater is understanding that shallow groundwater flow is frequently influenced by surface forces interpreted through geological features identified from surface-level data [8]. Furthermore, machine learning (ML) has held a crucial position in examining EO data for more than thirty years, and its relevance has steadily increased. Likewise, DL methods have brought significant advancements in numerous scientific areas, particularly in those focused on image data analysis, such as computer vision (CV) or RS [9]. While shallow learning methods could be trained on relatively small data collections, DL needs large-scale datasets to achieve the required precision and generalisation capability. Hence, the presence of labelled datasets has become a key element in many instances of current Earth observation data processing applications that design and assess efficient, DL-driven methods for the automatic analysis of RS information [10]. Environmental monitoring and resource management can be improved by water body analysis, providing more precise and adaptable insights across varied landscapes and conditions.

Wang et al. [11] presented a model for evaluating the water scarcity of OFR in irrigation regions by combining multispectral drone data, higher-resolution RS data, and ground observations. The water surface region is extracted from the multispectral drone data through a threshold segmentation scheme and a Gaussian Mixture Model (GMM). Sri Bala et al. [12] examined the application of RS technologies in estuarine aquaculture for monitoring land use changes and evaluating water quality. Such techniques make it possible to understand and reduce the environmental impacts of shrimp farming. Sun et al. [13] presented WaterDeep, a new DL architecture based on the DeepLabV3+ framework with a novel fusion module for low- and high-level features. The approach first builds a comprehensive dataset of high-resolution RS imagery and then relies on Xception baseline networks.

Fu et al. [14] presented a dual-stream hybrid network (DSHNet) that extracts boundary and semantic characteristics from RS imagery simultaneously and enhances semantic segmentation performance by thoroughly combining the dual-stream data. Lin et al. [15] proposed an unsupervised change detection (CD) methodology, termed trans-MAD (transformer-driven multivariate alteration detection). It leverages a pre-detection strategy that integrates compressed change vector analysis and IR-MAD to generate dependable pseudo-training samples, and it attains more precise and robust CD results than IR-MAD when detecting insignificant changes. Zhang et al. [16] suggested LS-YOLO, an efficient approach to landslide recognition in RS imagery that introduces random seeds in data augmentation to increase data robustness. To account for the multiscale landslide features in RS images, a multiscale feature extraction technique was developed based on efficient average pooling, channel attention, and spatially separable convolution. Mia et al. [17] examined the ability of multimodal DL to improve rice yield estimation using UAV multispectral images at the heading stage, alongside weather information. The effects of the CNN architectures, layer depths, and weather data integration approaches on prediction accuracy were assessed. In general, the multimodal DL approach combining UAV multispectral images and weather data can make more accurate forecasts. In [18], lightweight thermal and optical sensors mounted on UAVs are used extensively for several applications and are presented by some as the best available method.

Despite the advances in existing studies, limitations remain owing to high computational costs and reliance on large, labelled datasets, which reduce practical applicability in resource-constrained regions. Multimodal and multispectral techniques that integrate heterogeneous data face fusion challenges, resulting in sub-optimal performance. Lightweight sensor approaches enhance efficiency but may compromise accuracy. The research gap lies in developing robust, efficient, and generalisable models that can accurately handle multi-source RS data while minimising computational overhead and data dependency. This paper develops a Deep Neural Network-Based Object Detection for Water Resource Monitoring and Earth Observation (DNNOD-WRMEO) model using aerial remote sensing imagery. The key contributions of this paper are summarised as follows:

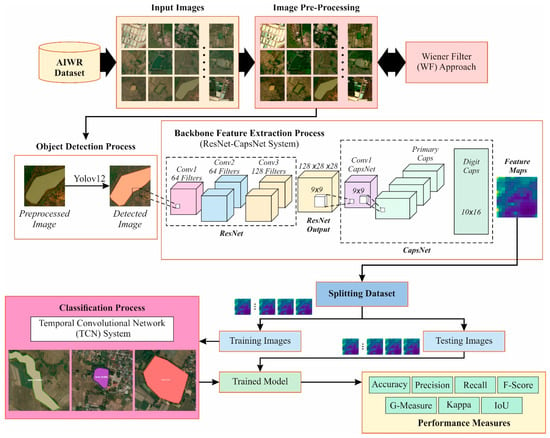

- A DNNOD-WRMEO model is proposed that uses aerial remote sensing images for effective monitoring and analysis of water resources and Earth surface observations.

- The WF is first employed in the image pre-processing stage to improve image quality.

- The YOLOv12 method is deployed in the object detection step to identify, locate, and classify objects within the images.

- The DNNOD-WRMEO model uses the ResNet-CapsNet method for backbone feature extraction.

- The TCN model is used for water resource classification.

- Comparative results demonstrate the improved performance of the DNNOD-WRMEO model.

2. Materials and Methods

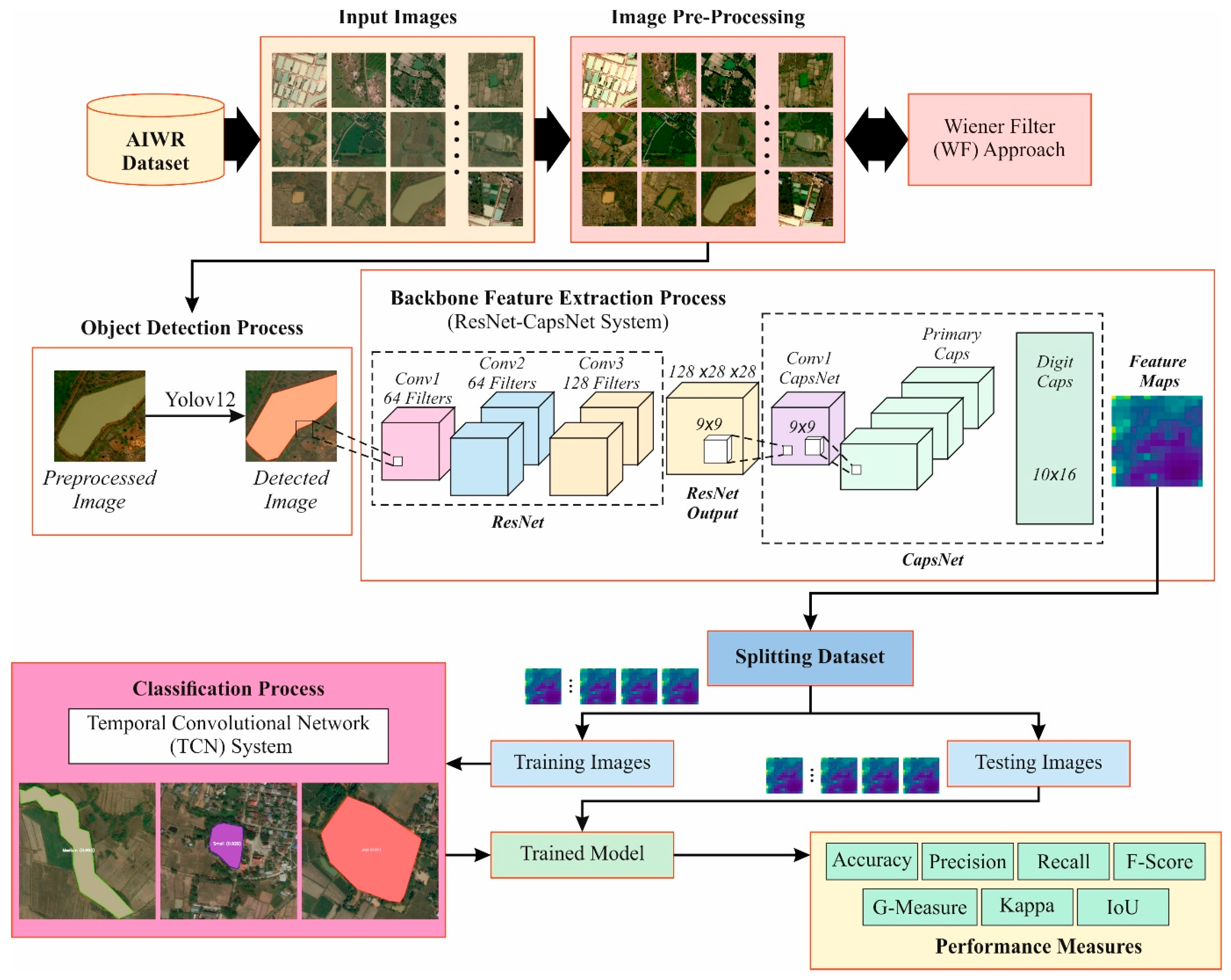

This paper develops a DNNOD-WRMEO method using aerial remote sensing imagery. The aim is to develop effective monitoring and analysis of water resources and Earth surface observations using aerial remote sensing images. It comprises image pre-processing, object detection, a feature extractor model, and water resource classification. Figure 1 represents the complete process of the DNNOD-WRMEO model.

Figure 1.

Complete process of DNNOD-WRMEO model.

2.1. WF-Based Image Pre-Processing Model

The WF was first applied in image pre-processing to improve image quality by eliminating noise [19]. This model was chosen for its robust capability to suppress sensor noise, atmospheric noise, and Gaussian noise, which are common in remote sensing images owing to sensor limitations and environmental conditions. Because of its adaptive, statistically optimal filtering, the WF minimises the mean squared error (MSE) between the restored and original images; it is therefore effective when the noise characteristics can be estimated. Furthermore, the WF preserves radiometric consistency better than wavelet-based methods and, unlike non-local means, has significantly lower computational complexity, making it appropriate for large-scale aerial image processing. Its balance between noise reduction and edge preservation also benefits downstream feature extraction and accuracy. The WF is a linear optimal filtering technique that assumes a statistical model for both the signal and the noise and aims to minimise the MSE between the unknown original image and the restored image. It is usually applied in the frequency domain, where the filter accounts for both the degradation function and the power spectral densities of the noise and signal. The WF in the frequency domain is specified by Equation (1):

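$$\hat{F}(u,v) = \left[\frac{H^{*}(u,v)\,S_{f}(u,v)}{|H(u,v)|^{2}\,S_{f}(u,v) + S_{n}(u,v)}\right] G(u,v) \quad (1)$$

where $\hat{F}(u,v)$ denotes the estimated original image, $G(u,v)$ denotes the degraded image, $H(u,v)$ stands for the frequency response of the degradation function (for example, blur), $H^{*}(u,v)$ is its complex conjugate, $S_{f}(u,v)$ refers to the power spectral density of the original image, and $S_{n}(u,v)$ symbolises the power spectral density of the noise.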
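As an illustration of the frequency-domain filtering described above, the following is a minimal NumPy sketch, not the authors' implementation. It assumes the blur kernel and the noise-to-signal power ratio are known; the function name `wiener_deconvolve` and its parameters are hypothetical.

```python
import numpy as np

def wiener_deconvolve(degraded, psf, nsr):
    """Frequency-domain Wiener filter (illustrative sketch).

    degraded : 2-D array, the observed (degraded) image
    psf      : 2-D array of the same shape, the degradation kernel with its
               origin at index [0, 0] (assumed known)
    nsr      : float or 2-D array, noise-to-signal power ratio S_n / S_f
               (assumed known or estimated beforehand)
    """
    G = np.fft.fft2(degraded)
    H = np.fft.fft2(psf)
    # conj(H) / (|H|^2 + S_n / S_f): the Wiener transfer function with the
    # signal power spectral density divided out of numerator and denominator
    W = np.conj(H) / (np.abs(H) ** 2 + nsr)
    return np.real(np.fft.ifft2(W * G))
```

With a delta kernel (no blur) and `nsr = 0`, the filter reduces to the identity; a larger `nsr` attenuates the frequencies where the noise dominates.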

2.2. Object Detection Using YOLOv12 Method

For object detection, the YOLOv12 method is employed to identify, locate, and classify objects within an image [20]. This method was chosen because it balances detection accuracy, inference speed, and architectural stability, all of which matter for real-time aerial remote sensing applications. Moreover, compared with earlier YOLO versions, YOLOv12 provides enhanced small-object detection and multiscale feature fusion, which are crucial for detecting water bodies with irregular shapes. Although YOLOv13 is the latest release, YOLOv12 was chosen because it is mature, extensively benchmarked, and computationally efficient, with stable training behaviour and wider framework support. Thus, YOLOv12 is more reliable for large-scale RS datasets, where consistency and reproducibility are prioritised over incremental performance gains.

Object detection (OD) faces persistent challenges in remote sensing water body detection, such as colour distortion caused by turbidity, spectral confusion between water and shadows or dark surfaces, and the presence of small, irregularly shaped, or partially occluded water bodies. Unlike two-stage detectors, which sacrifice speed for precision, or conventional single-stage methods, the attention-centric design of YOLOv12 overcomes historical performance limitations while preserving high accuracy. The architecture follows the standard object detector layout, comprising a Backbone for feature extraction, a Neck for feature fusion, and a Head for prediction.

2.2.1. Residual Efficient Layer Aggregation (R-ELAN)

The R-ELAN blocks form the basis of YOLOv12's ability to tackle the severe signal degradation and training complexity inherent in attention-driven frameworks. In deep-water or turbid settings, colours distort and fine textures are obscured owing to wavelength-dependent attenuation. Deeper computation paths with Area Attention blocks learn to detect objects by structural forms and abstract silhouettes, rendering the method largely invariant to low-contrast conditions and chromatic shifts. This colour-invariant behaviour allows the subject, namely a starfish, to be identified by shape instead of perceived colour, which varies significantly with depth.

The R-ELAN block's operational flow begins when the input feature map enters and is immediately divided into parallel paths. A residual connection bypasses the main computation block, preserving the original features. The input is transformed by the initial convolution layers and fed into several parallel streams, each passing through a different sequence of A2 blocks. The outputs of all parallel streams are concatenated along the channel dimension. The aggregated feature maps then pass through a scaling layer, which applies a learnable weight $\alpha$. Finally, the scaled features are fused element-wise with the preserved features from the residual path:

$$Y = X + \alpha \cdot \mathrm{Concat}\big(P_{1}(\mathrm{Conv}(X)), \ldots, P_{n}(\mathrm{Conv}(X))\big)$$

Here, $X$ and $Y$ are the input and output feature maps, $P_{i}$ depicts the $i$-th parallel path with its specific A2 blocks, $\alpha$ denotes the scaling factor, and $\mathrm{Conv}$ refers to the initial convolution layers.
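The aggregation flow described above can be sketched in a few lines of NumPy. This is a simplified illustration under stated assumptions rather than the YOLOv12 implementation: 1x1 convolutions are modelled as matrix products over the channel axis, the parallel paths are arbitrary callables standing in for A2 blocks, and the output projection `w_out` is a hypothetical addition that restores the channel count so the residual sum is well defined.

```python
import numpy as np

def r_elan(x, paths, w_in, w_out, alpha):
    """Residual scaled aggregation (illustrative sketch).

    x     : [N, C] feature points (spatial positions flattened)
    paths : list of callables mapping [N, C_h] -> [N, C_h]
            (stand-ins for sequences of A2 blocks)
    w_in  : [C, C_h] initial 1x1 convolution, modelled as a matrix
    w_out : [len(paths) * C_h, C] projection back to C channels (assumed)
    alpha : scaling factor (learnable in a real network)
    """
    h = x @ w_in                                   # initial convolution
    streams = [path(h) for path in paths]          # parallel streams
    aggregated = np.concatenate(streams, axis=-1)  # concat along channels
    return x + alpha * (aggregated @ w_out)        # scaled residual fusion
```

Setting `alpha` to zero returns the input unchanged, which is why a small initial scaling factor can keep early training of the attention paths stable.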

2.2.2. Area Attention-A2

OD faces persistent challenges with turbidity and occlusion, which degrade traditional attention modules. A2 addresses these limitations through a multi-head attention structure with spatial partitioning. The input feature map undergoes parallel transformations to create the query, key, and value representations, each with dimension [B, (W × H)/area, C]. The principal concept is the spatial partitioning approach, in which every head's feature map is partitioned into non-overlapping areas, decreasing the spatial resolution from (W × H) to (W × H)/area.

Here, every attention head works on partitioned regions:

$$O_{i} = \mathrm{Attn}\big(Q_{i}, K_{R(i)}, V_{R(i)}\big), \qquad \mathrm{Attn}(Q, K, V) = \mathrm{softmax}\!\left(\frac{QK^{\top}}{\sqrt{C}}\right)V$$

where $\mathrm{Attn}$ depicts the scaled dot-product attention function, and $R(i)$ signifies the partitioned region comprising position $i$. The result is reshaped to restore the original spatial dimensions [B, C, H, W], preserving compatibility with the downstream layers. This design decreases the computational complexity from quadratic, $O((HW)^{2})$, to $O((HW)^{2}/\mathrm{area})$, while maintaining a large receptive field relative to the image size.
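The partitioning idea can be illustrated with a single-head NumPy sketch. It is a simplification under stated assumptions, not the YOLOv12 code: tokens are the flattened spatial positions, the areas are contiguous token ranges, and the names `area_attention` and `softmax` are hypothetical helpers.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def area_attention(q, k, v, area):
    """Single-head area attention (illustrative sketch).

    q, k, v : arrays of shape [B, N, C] with N = H * W flattened positions
    area    : number of non-overlapping areas; must divide N
    """
    B, N, C = q.shape
    assert N % area == 0, "area must divide the number of positions"

    def split(t):
        # Fold the areas into the batch axis: [B, N, C] -> [B*area, N/area, C]
        return t.reshape(B * area, N // area, C)

    qa, ka, va = split(q), split(k), split(v)
    scores = qa @ ka.transpose(0, 2, 1) / np.sqrt(C)  # attention within an area
    out = softmax(scores) @ va
    return out.reshape(B, N, C)                       # restore [B, N, C]
```

Each area computes an (N/area) × (N/area) score matrix, so the total number of scores is N²/area instead of N²; with `area=1` the function reduces to ordinary full attention.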

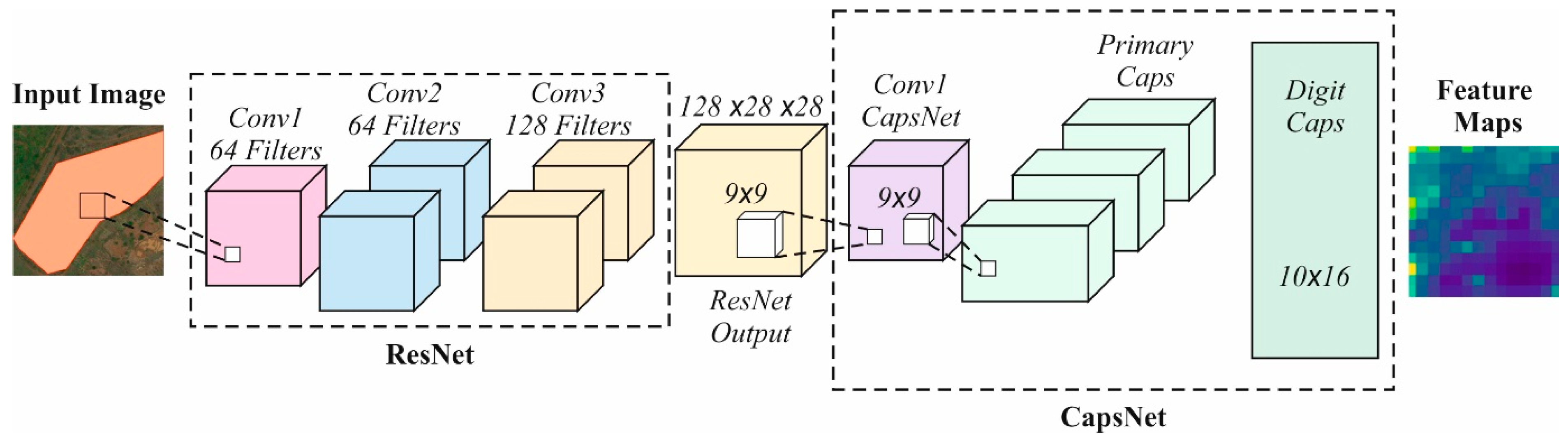

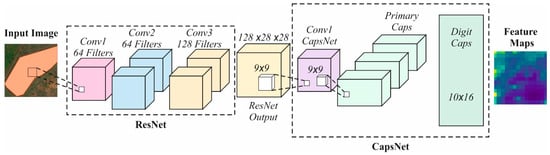

2.3. ResNet-CapsNet-Based Feature Extraction Process

This is followed by the DNNOD-WRMEO model, which implements ResNet-CapsNet as the backbone feature extraction process [21]. ResNet achieves robust extraction of high-level semantic features from complex aerial images and provides deep hierarchical feature representation through residual connections. Furthermore, the CapsNet method preserves spatial hierarchies and relationships between features, which is crucial for detecting irregularly shaped or partially occluded water bodies. Compared with standard CNNs, the hybrid model also improves robustness to rotation, scale variations, and occlusion. By integrating ResNet's depth with CapsNet's structural awareness, it outperforms single-model approaches, resulting in more accurate and reliable feature extraction for downstream object detection. Figure 2 specifies the architecture of the ResNet-CapsNet model.

Figure 2.

Architecture of the ResNet-CapsNet model.

After object detection, the ResNet-CapsNet DL method is applied for automatic feature extraction. In the presented method, the average pooling in ResNet is replaced by the CapsNet, which describes the direction and relative position of features. Redundant features are then removed, and deeper features are extracted by the threshold-based convolution.

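ResNet: For the residual block, the residual term and the corresponding mapping are denoted as $F(x)$ and $H(x)$. In addition, the residual learning is $F(x) = H(x) - x$, and the identity mapping $H(x) = x$ is obtained when

$$F(x) = 0$$

where $x$ denotes the input of the residual block.

If the shortcuts map the same size, the formulation of the residual term is provided as follows:

$$y = F(x, \{W_{i}\}) + x$$

If the shortcuts map changing sizes, the formulation of the residual term is provided as follows:

$$y = F(x, \{W_{i}\}) + W_{s}\,x$$

Here, $y$ is the output of the block, $W_{i}$ are the weights of the residual layers, and $W_{s}$ is a linear projection that matches the dimensions of the shortcut to those of $F$.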
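The two shortcut cases can be made concrete with a small NumPy sketch. It is a deliberate simplification, assuming dense layers in place of convolutions and omitting batch normalisation; `residual_block` and its parameter names are hypothetical.

```python
import numpy as np

def residual_block(x, w1, w2, w_s=None):
    """Residual block with identity or projection shortcut (sketch).

    x   : [N, C_in] inputs
    w1  : [C_in, C_mid] first layer weights
    w2  : [C_mid, C_out] second layer weights
    w_s : optional [C_in, C_out] projection, needed when C_in != C_out
    """
    residual = np.maximum(x @ w1, 0.0) @ w2   # F(x, {W_i}) with a ReLU inside
    shortcut = x if w_s is None else x @ w_s  # identity or projection shortcut
    return residual + shortcut
```

When the residual weights are zero, the block returns its input unchanged, which is the identity mapping that makes very deep stacks easier to optimise.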

CapsNet: CapsNet is built from capsules rather than individual neurons: the output of a capsule is a vector, whereas the output of a neuron is a scalar. Classic CNNs can lose essential features in the pooling layer during feature extraction, which reduces precision. CapsNet uses dynamic routing to address this problem. If the prediction of a lower-level capsule agrees with the output of a DigitCaps capsule, the coupling between them is promoted; if the two are not close, the coupling is not promoted. The dynamic routing is calculated using the prediction vector $\hat{u}_{j|i}$ of the capsule layer output, where $u_{i}$ is the output of lower-level capsule $i$ and $W_{ij}$ is the weighting matrix:

$$\hat{u}_{j|i} = W_{ij}\,u_{i}$$

Next, the relationship among the neighbouring capsule layers, $c_{ij}$, is provided as follows:

$$c_{ij} = \frac{\exp(b_{ij})}{\sum_{k}\exp(b_{ik})}$$

where $b_{ij}$ denotes the likelihood values of the $i$ and $j$ capsule layers. The dynamic routing procedure is provided as follows:

$$s_{j} = \sum_{i} c_{ij}\,\hat{u}_{j|i}$$

Here, $s_{j}$ denotes the capsule's input vector, and the capsule's output vector is specified as follows:

$$v_{j} = \frac{\lVert s_{j}\rVert^{2}}{1 + \lVert s_{j}\rVert^{2}}\,\frac{s_{j}}{\lVert s_{j}\rVert}$$

Finally, the loss applied in the CapsNet is the combination of reconstruction and margin losses. The margin loss for class $k$ is

$$L_{k} = T_{k}\,\max(0,\, m^{+} - \lVert v_{k}\rVert)^{2} + \lambda\,(1 - T_{k})\,\max(0,\, \lVert v_{k}\rVert - m^{-})^{2}$$

where $T_{k}$ denotes the indicator function (1 when class $k$ is present and 0 otherwise), $m^{+}$ refers to the upper bound below which a false negative is penalised, $m^{-}$ denotes the lower bound above which a false positive is penalised, and $\lambda$ represents a hyperparameter that balances the two terms.
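The routing procedure and margin loss described above can be sketched in NumPy as follows. This is an illustrative sketch and not the authors' code: the three routing iterations and the defaults `m_plus=0.9`, `m_minus=0.1`, and `lam=0.5` are the values commonly used in the original capsule network formulation and are assumptions here, since the source does not state them.

```python
import numpy as np

def squash(s, axis=-1, eps=1e-9):
    """Shrink vector length into [0, 1) while keeping direction."""
    sq = np.sum(s ** 2, axis=axis, keepdims=True)
    return (sq / (1.0 + sq)) * s / np.sqrt(sq + eps)

def dynamic_routing(u_hat, iterations=3):
    """Routing by agreement.

    u_hat : [num_in, num_out, dim] prediction vectors
    returns (v, c): output capsules [num_out, dim] and the coupling
    coefficients [num_in, num_out] from the last iteration
    """
    num_in, num_out, _ = u_hat.shape
    b = np.zeros((num_in, num_out))                 # routing logits
    for _ in range(iterations):
        e = np.exp(b - b.max(axis=1, keepdims=True))
        c = e / e.sum(axis=1, keepdims=True)        # softmax over output capsules
        s = np.einsum("ij,ijd->jd", c, u_hat)       # weighted sum of predictions
        v = squash(s)
        b = b + np.einsum("ijd,jd->ij", u_hat, v)   # reward agreement
    return v, c

def margin_loss(v_norm, targets, m_plus=0.9, m_minus=0.1, lam=0.5):
    """Margin loss summed over classes; v_norm and targets have shape [K]."""
    present = targets * np.maximum(0.0, m_plus - v_norm) ** 2
    absent = lam * (1.0 - targets) * np.maximum(0.0, v_norm - m_minus) ** 2
    return np.sum(present + absent, axis=-1)
```

The coupling coefficients of each input capsule sum to one, so routing redistributes a fixed amount of "vote" toward the output capsules whose outputs agree with its predictions.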

ResCapsNet: It combines CapsNet and ResNet-34 for feature extraction. The ResNet-34 method has identity blocks arranged in groups of 3, 2, 4, and 2 for extracting the essential features. In the standard network, an average pooling layer follows the residual blocks; here, this layer is replaced by CapsNet. The output of the convolution layer is passed to the primary capsules, of which 32 are used. The feature points contain several redundant features alongside semantic information.

If there are numerous redundant features, they can influence other feature points. To address this, this study applies a convolutional operator after thresholding to reduce the redundant features and obtain improved semantic features. The presented threshold-based convolutional operation uses the sigmoid function, which preserves the output features of the feature map. For every feature point, a weight value is generated. Additionally, this work considers two array sets with related feature maps. If the threshold value is greater than the weight produced by the sigmoid function, the feature point is set to $0$, thereby reducing redundant features.
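The thresholding step can be illustrated with a short NumPy sketch. It covers only the sigmoid gating, not the convolution applied afterwards, and the threshold value of 0.5 and the function name `threshold_features` are assumptions for illustration; the source does not report the threshold used.

```python
import numpy as np

def threshold_features(feature_map, threshold=0.5):
    """Zero out feature points whose sigmoid weight is below the threshold."""
    weights = 1.0 / (1.0 + np.exp(-feature_map))  # sigmoid weight per point
    # Points whose weight is smaller than the threshold are treated as
    # redundant and set to 0; the remaining points keep their values.
    return np.where(weights < threshold, 0.0, feature_map)
```

For instance, with the assumed threshold of 0.5, every feature point with a negative activation is suppressed, because the sigmoid of a negative value is below 0.5.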

2.4. Water Resource Classification Using TCN Model

Finally, the TCN model is employed to classify water resources [22]. This model was chosen for its capability to model sequential and temporal data dependencies. Unlike conventional CNNs, the model captures long-range patterns without recurrent connections, ensuring faster training and inference. Its causal and dilated convolutions allow the model to incorporate both local and global contexts, enhancing classification accuracy for dynamic water bodies. TCNs also present greater stability and parallelisation compared to RNNs, making them appropriate for large-scale aerial image datasets.

TCNs were initially presented as network architectures for action segmentation. They were later offered as a strong alternative to conventional recurrent neural network (RNN) frameworks, such as gated recurrent units (GRUs) and LSTMs, for different sequence modelling tasks. An essential feature of TCNs is their causal convolutional layer, in which each output relies only on the current and previous inputs. Causal convolution makes TCNs suitable for predictive tasks, as it respects the temporal order. Dilated convolutional layers with exponentially increasing dilations (for example, 1, 2, 4, 8, and so on) allow the receptive field to grow without increasing the filter size, permitting TCNs to execute dilated causal convolution. In addition, akin to Transformers and RNNs, TCNs can map an input sequence of arbitrary length to an output sequence of the same length. In this model, the regularisation of the NN was improved by adopting weight normalisation rather than batch normalisation (BN) or layer normalisation, as demonstrated below:

$$w = \frac{g}{\lVert v\rVert}\,v$$

where $g$ denotes a channel-wise vector, $v$ characterises a tensor of the same size as the weight $w$, and $\lVert v\rVert$ signifies the Euclidean norm of $v$. Weight normalisation is a reparameterisation that decouples the magnitude of the weight ($g$) from the weight direction ($v/\lVert v\rVert$) to speed up optimisation convergence. Additionally, TCNs combine residual links and dropout to alleviate vanishing gradients and boost training stability. Their flexibility with variable-length inputs and their capacity for parallel processing make TCNs a favourable choice for time-series data analysis in ML applications.
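The building blocks described above (weight normalisation, dilated causal convolution, and receptive field growth) can be sketched in NumPy. This is a minimal single-channel illustration under stated assumptions, not the model used in this study: `g` is a scalar for one filter rather than a channel-wise vector, and all function names are hypothetical.

```python
import numpy as np

def weight_norm(v, g):
    """Reparameterise one filter as w = g * v / ||v||."""
    return g * v / np.linalg.norm(v)

def causal_dilated_conv1d(x, w, dilation):
    """y[t] = sum_k w[k] * x[t - k * dilation], with zeros before t = 0.

    x : [T] input sequence, w : [K] filter taps
    """
    T, K = len(x), len(w)
    pad = (K - 1) * dilation
    xp = np.concatenate([np.zeros(pad), x])  # left padding only: no future leakage
    y = np.zeros(T)
    for k in range(K):
        start = pad - k * dilation
        y += w[k] * xp[start:start + T]
    return y

def receptive_field(kernel_size, dilations):
    """Receptive field of a stack with one dilated conv per layer."""
    return 1 + (kernel_size - 1) * sum(dilations)
```

With a kernel size of 2 and dilations 1, 2, 4, and 8, `receptive_field` gives 16 time steps, which shows how the exponential dilation schedule covers long histories with few layers.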

3. Results

The DNNOD-WRMEO methodology was validated using the Aerial Image Water Resource (AIWR) dataset [23]. The model was simulated using Python 3.6.5 on a PC with an i5-8600K CPU, a GeForce 1050 Ti 4 GB GPU, 16 GB RAM, a 250 GB SSD, and a 1 TB HDD. The parameters included a learning rate of 0.01, ReLU activation, 50 epochs, a dropout of 0.5, and a batch size of 5.

This paper aims to achieve precise land use classification within water body areas. Water bodies are classified into two types: natural bodies of water (W1) and artificial bodies of water (W2). Ground truth annotations are produced for every aerial image and then reviewed by remote sensing specialists to ensure precise classification of the water bodies. The AIWR dataset comprises 800 aerial images at three scales: small, medium, and large. Of these, 720 aerial images are employed for model training and development, and 80 images are reserved for testing. Table 1 presents full details of this dataset. Figure 3 illustrates the sample images. Figure 4 indicates the original and predicted images.

Table 1.

Details of the dataset.

Figure 3.

Sample images.

Figure 4.

(a) Original images and (b) predicted images.

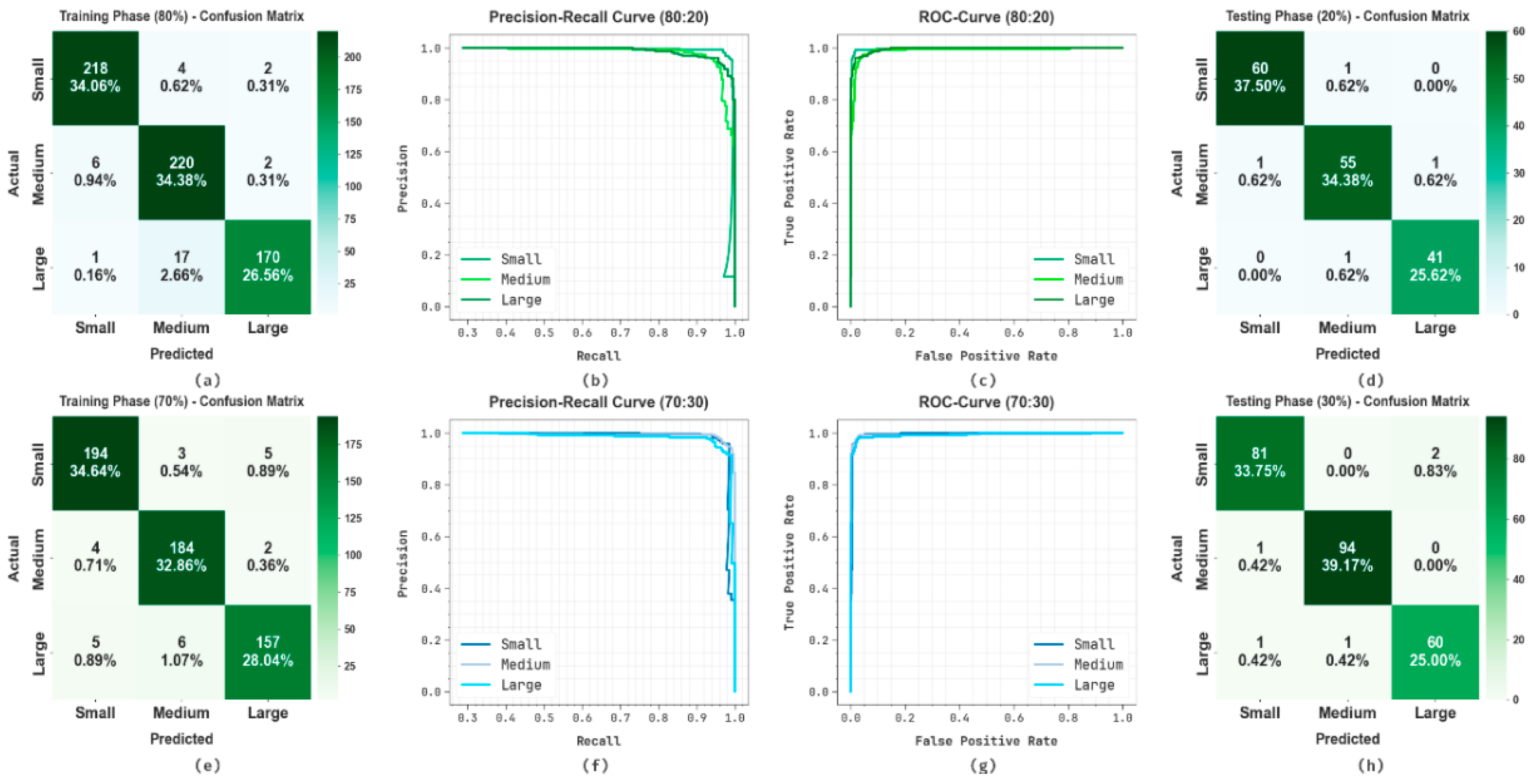

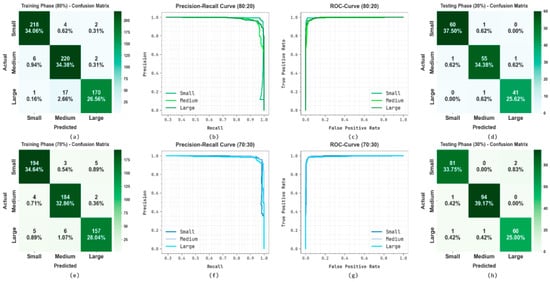

Figure 5 presents the classifier performance of the DNNOD-WRMEO methodology under the 80:20 and 70:30 splits. Figure 5a,d,e,h shows the confusion matrices, with accurate identification and detection of every class. Figure 5b,f shows the PR analysis, indicating maximal performance across every class label. Finally, Figure 5c,g illustrates the ROC analysis, showing high ROC values for the different classes.

Figure 5.

The 70:30 and 80:20 (a,d,e,h) confusion matrices and (b,c,f,g) the corresponding PR and ROC curves.

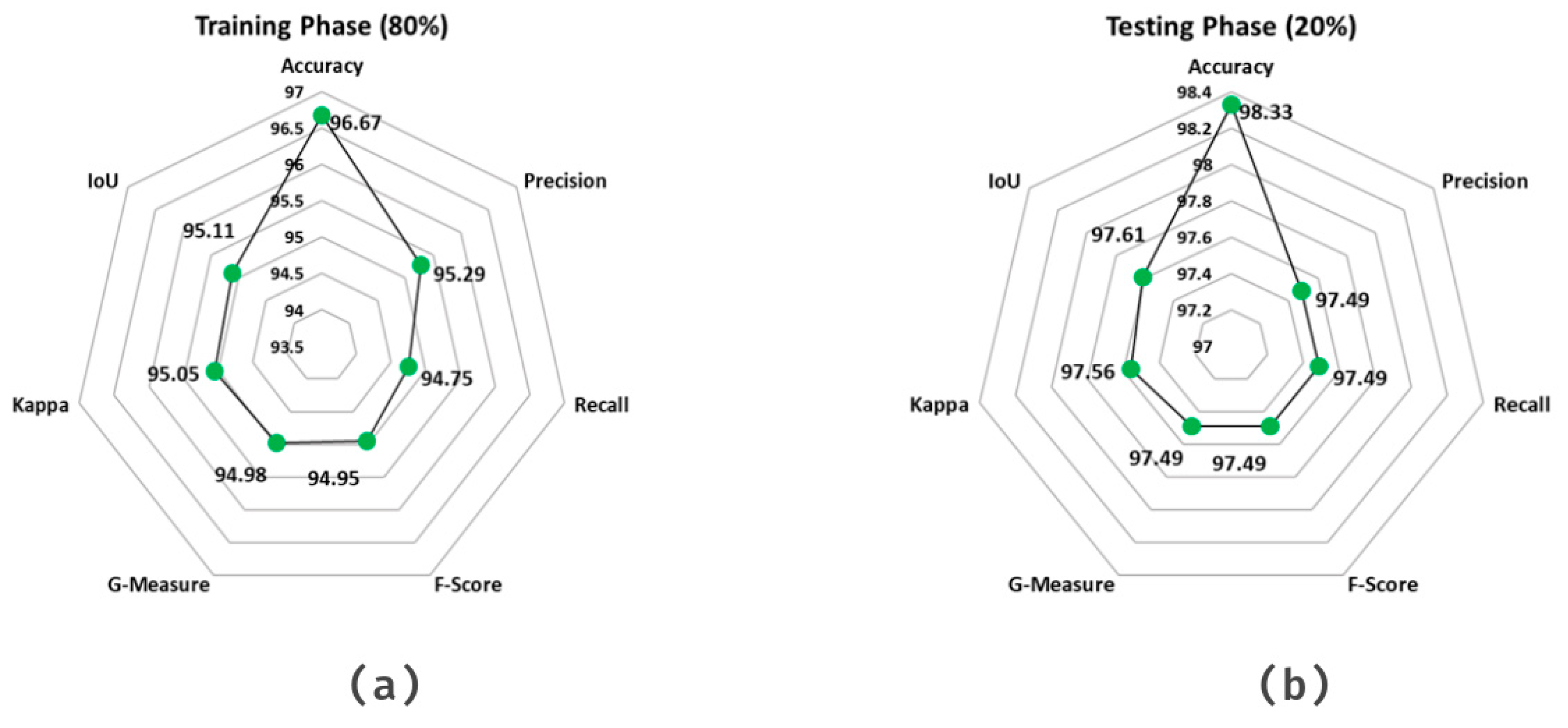

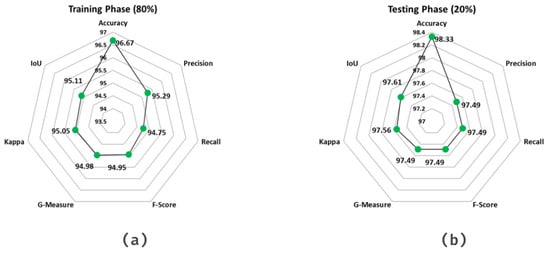

Table 2 and Figure 6 depict the classifier results of the DNNOD-WRMEO technique under the 80:20 split. On the 80% training phase (TRPHE), the DNNOD-WRMEO methodology reaches an average accuracy of 96.67%, four further averaged metrics of 95.29%, 94.75%, 94.95%, and 94.98% (listed in Table 2), a Kappa of 95.05%, and an IoU of 95.11%. Moreover, on the 20% testing phase, the DNNOD-WRMEO model achieves an average accuracy of 98.33%, four further averaged metrics of 97.49% each, a Kappa of 97.56%, and an IoU of 97.61%.
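For readers who wish to reproduce summary metrics of this kind, the following sketch computes accuracy, Cohen's Kappa, and mean IoU from a confusion matrix. It is a generic illustration, not the evaluation code of this study: the averaging scheme used in the paper is not stated, so macro-averaged IoU is an assumption, and the function name `summarise` is hypothetical.

```python
import numpy as np

def summarise(cm):
    """Accuracy, Cohen's Kappa, and mean IoU from a confusion matrix.

    cm[i, j] = number of samples of true class i predicted as class j.
    """
    cm = np.asarray(cm, dtype=float)
    total = cm.sum()
    tp = np.diag(cm)                      # correctly classified per class
    accuracy = tp.sum() / total
    # Chance agreement from the row and column marginals
    expected = (cm.sum(axis=0) * cm.sum(axis=1)).sum() / total ** 2
    kappa = (accuracy - expected) / (1.0 - expected)
    # Per-class IoU = TP / (TP + FP + FN), then macro-averaged
    iou = tp / (cm.sum(axis=0) + cm.sum(axis=1) - tp)
    return {"accuracy": accuracy, "kappa": kappa, "mean_iou": iou.mean()}
```

As a made-up two-class example, `summarise([[45, 5], [5, 45]])` gives an accuracy of 0.9, a Kappa of 0.8, and a mean IoU of about 0.818; these numbers are illustrative and unrelated to the results reported here.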

Table 2.

Classifier outcome of DNNOD-WRMEO model under 80:20.

Figure 6.

Average values of the DNNOD-WRMEO model: (a) 80% and (b) 20%.

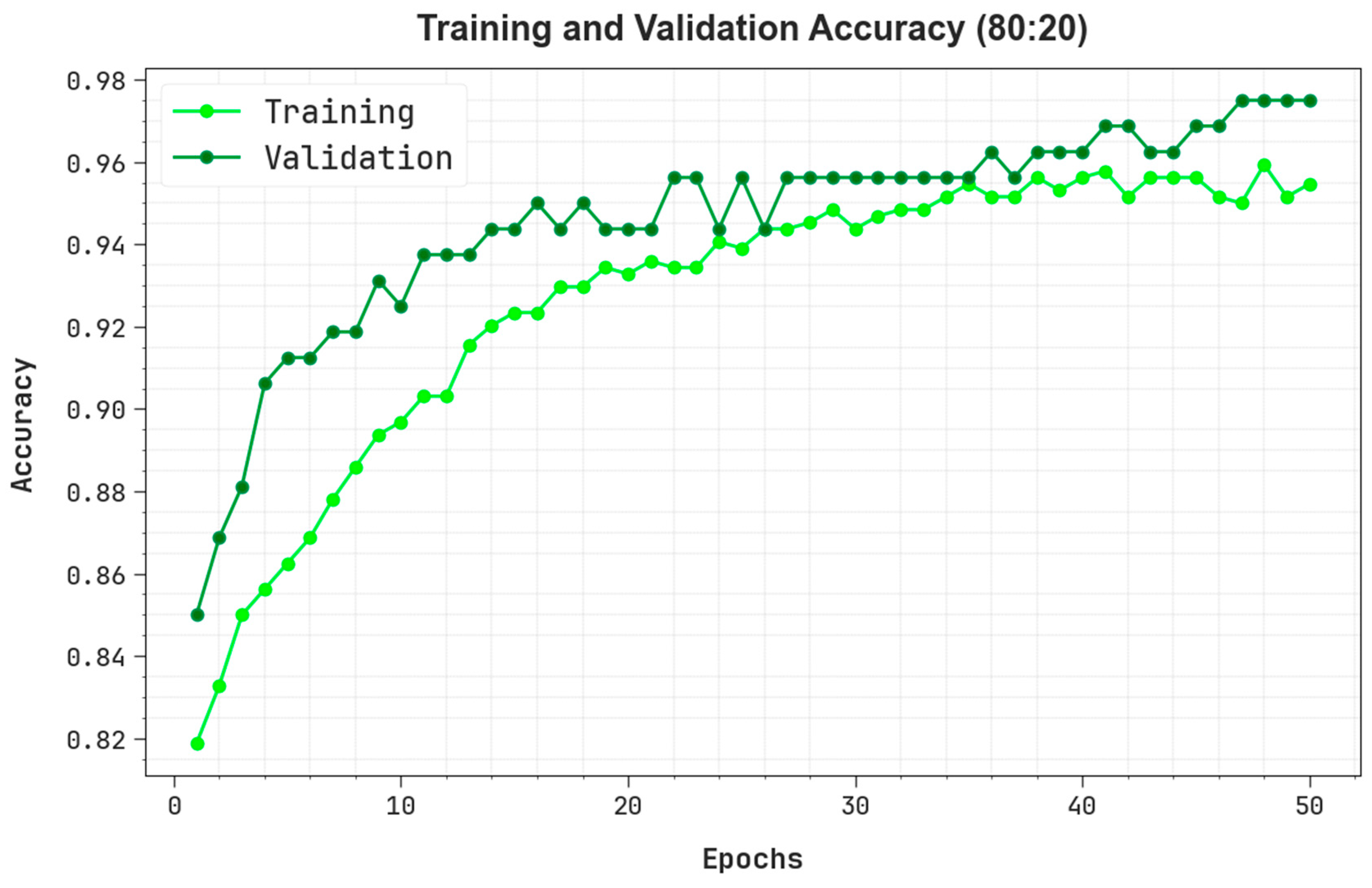

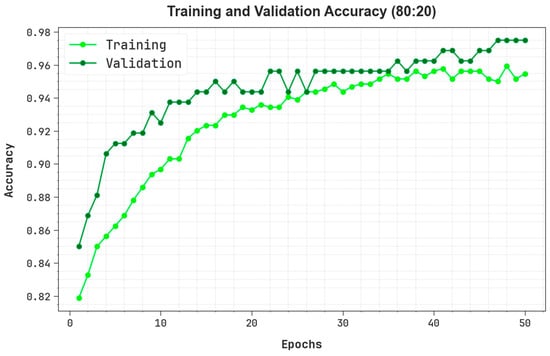

Figure 7 represents the training (TRAIN) and validation (VALID) accuracy of the DNNOD-WRMEO method under 80:20 over 50 epochs. Initially, both TRAIN and VALID accuracy rise quickly, indicating effective learning of patterns from the data. Partway through training, the VALID accuracy slightly exceeds the TRAIN accuracy, indicating good generalisation without overfitting. As training progresses, both curves improve and the gap between TRAIN and VALID stays small. The close alignment of the two curves throughout training suggests that the method is well regularised and generalises well. This indicates the strong capability of the methodology to learn and retain useful characteristics across both seen and unseen data.

Figure 7.

Accuracy curve of the DNNOD-WRMEO method under 80:20.

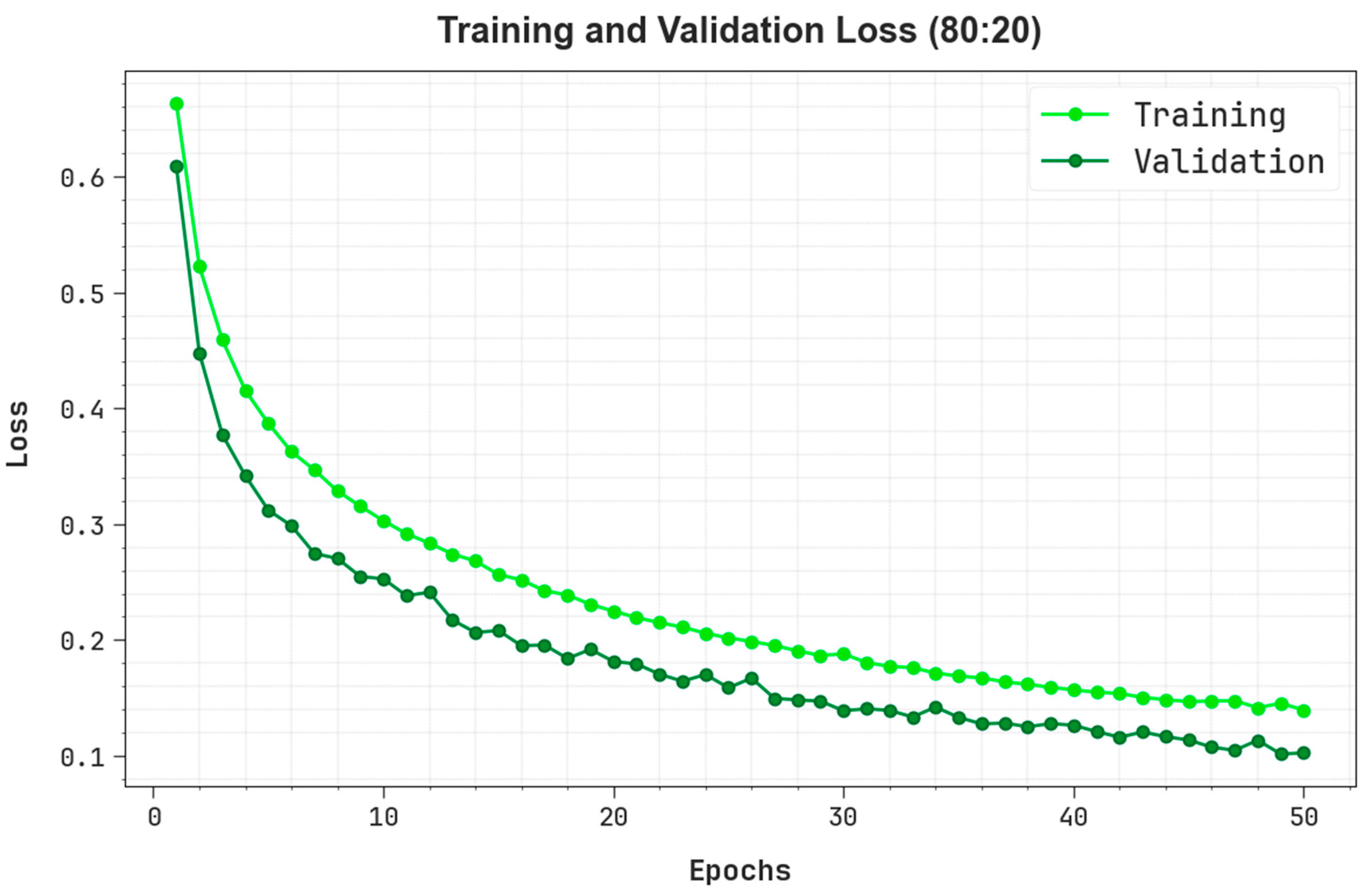

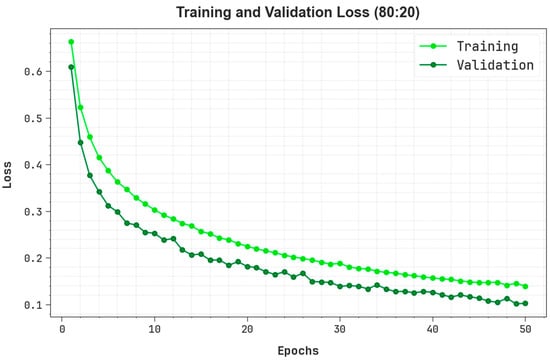

Figure 8 describes the TRAIN and VALID losses of the DNNOD-WRMEO methodology under 80:20 over 50 epochs. Initially, both TRAIN and VALID losses are high, indicating that the method begins with a limited understanding of the data. As training progresses, the losses decrease consistently, indicating that the technique learns effectively and optimises its parameters. The close alignment of the TRAIN and VALID loss curves throughout training implies that the methodology has not overfitted and generalises well to unseen data.

Figure 8.

Loss curve of the DNNOD-WRMEO method under 80:20.

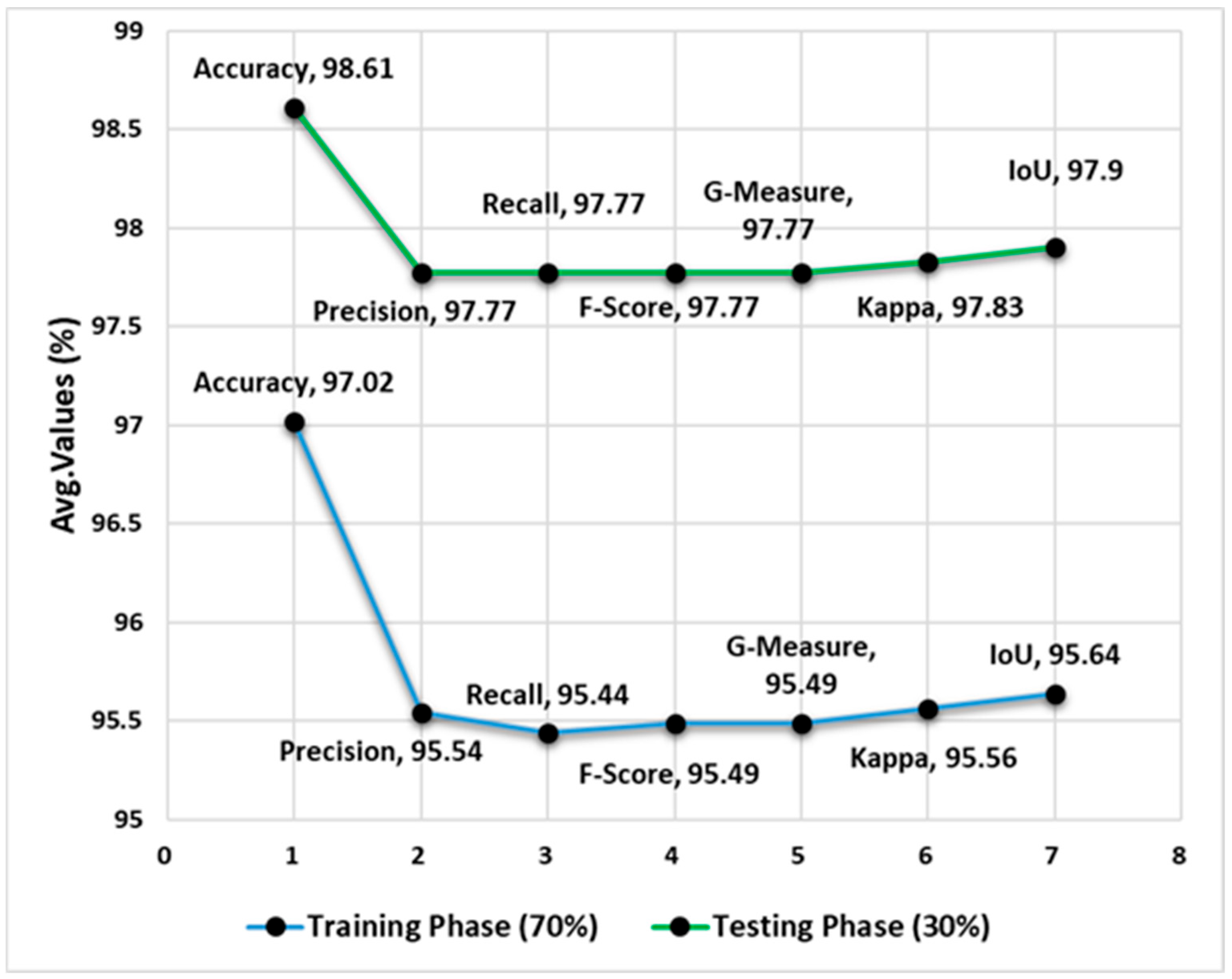

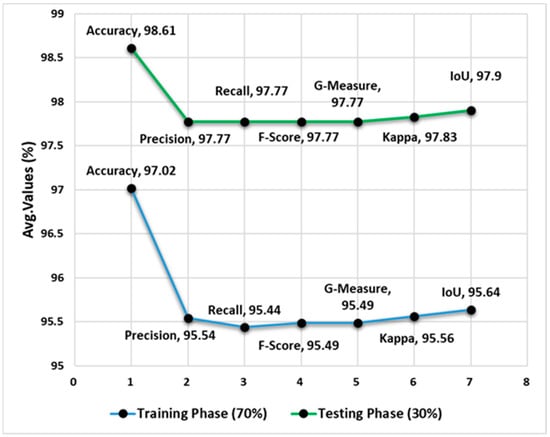

Table 3 and Figure 9 demonstrate the classifier performance of the DNNOD-WRMEO approach under the 70:30 split. On the 70% training phase (TRPHE), the DNNOD-WRMEO approach reaches an average accuracy of 97.02%, four further averaged metrics of 95.54%, 95.44%, 95.49%, and 95.49% (listed in Table 3), a Kappa of 95.56%, and an IoU of 95.64%. Moreover, on the 30% testing phase, the DNNOD-WRMEO model reaches an average accuracy of 98.61%, four further averaged metrics of 97.77% each, a Kappa of 97.83%, and an IoU of 97.90%.

Table 3.

Classifier outcome of the DNNOD-WRMEO approach under 70:30.

Figure 9.

Average values of the DNNOD-WRMEO approach at 70:30.

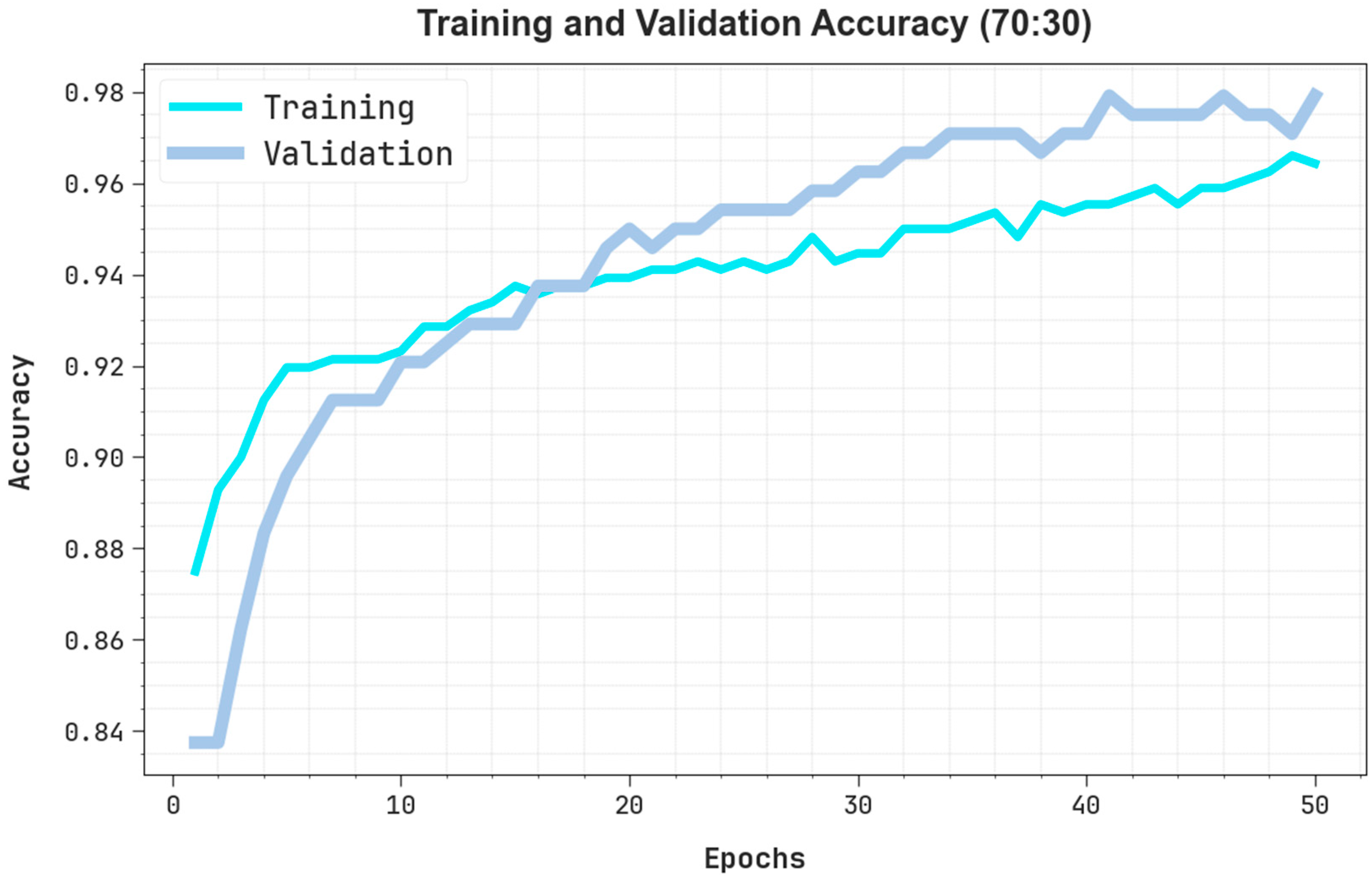

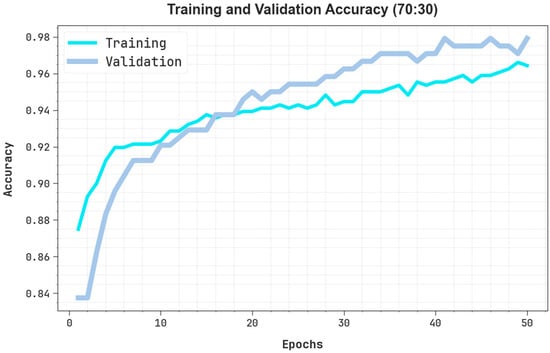

Figure 10 represents the TRAIN and VALID accuracy of the DNNOD-WRMEO model under 70:30 over 50 epochs. Initially, both TRAIN and VALID accuracy increase rapidly, signifying efficient learning of patterns in the data. Partway through training, the VALID accuracy moderately exceeds the TRAIN accuracy, indicating good generalisation without overfitting. As training progresses, both curves improve and the gap between TRAIN and VALID stays small. The close alignment of the two curves throughout training suggests that the method is well regularised and generalises well. This reflects the strong ability of the methodology to learn and retain useful features across both seen and unseen data.

Figure 10.

Accuracy curve of the DNNOD-WRMEO approach at 70:30.

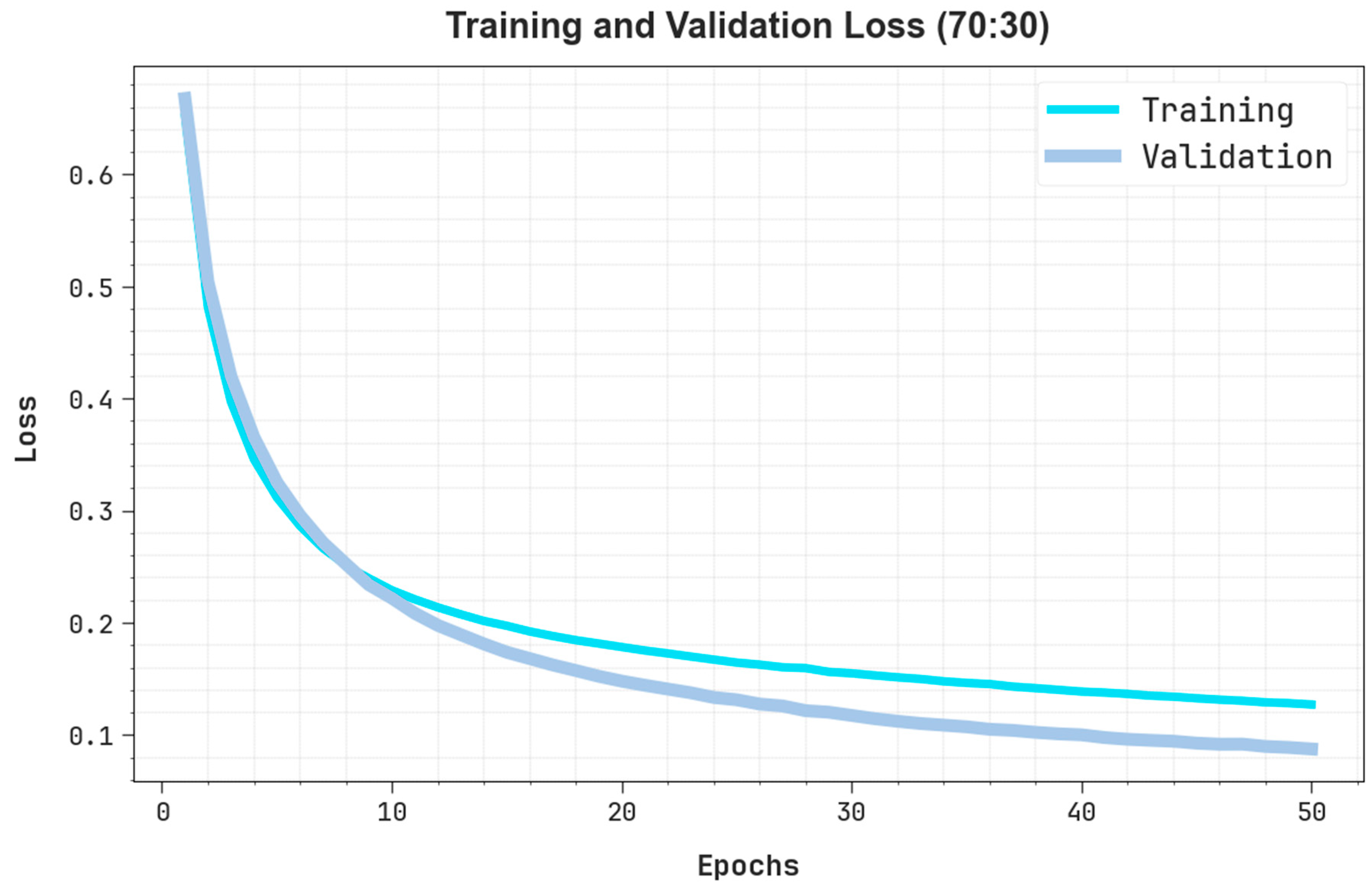

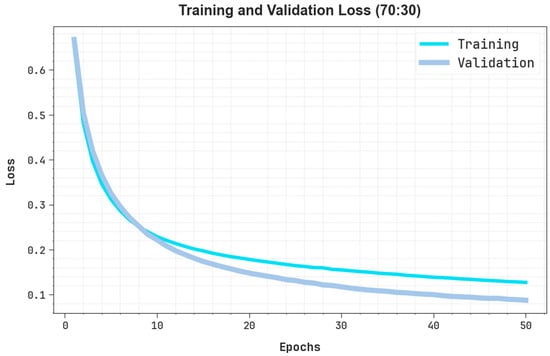

Figure 11 illustrates the TRAIN and VALID losses of the DNNOD-WRMEO method under 70:30 over 50 epochs. Initially, both TRAIN and VALID losses are high, indicating that the method starts with a limited understanding of the data. As training progresses, both losses steadily decrease, indicating that the technique efficiently learns and optimises its parameters. The close alignment of the TRAIN and VALID loss curves throughout training suggests that the method has not overfitted and generalises well to unseen data.

Figure 11.

Loss curve of the DNNOD-WRMEO method under 70:30.

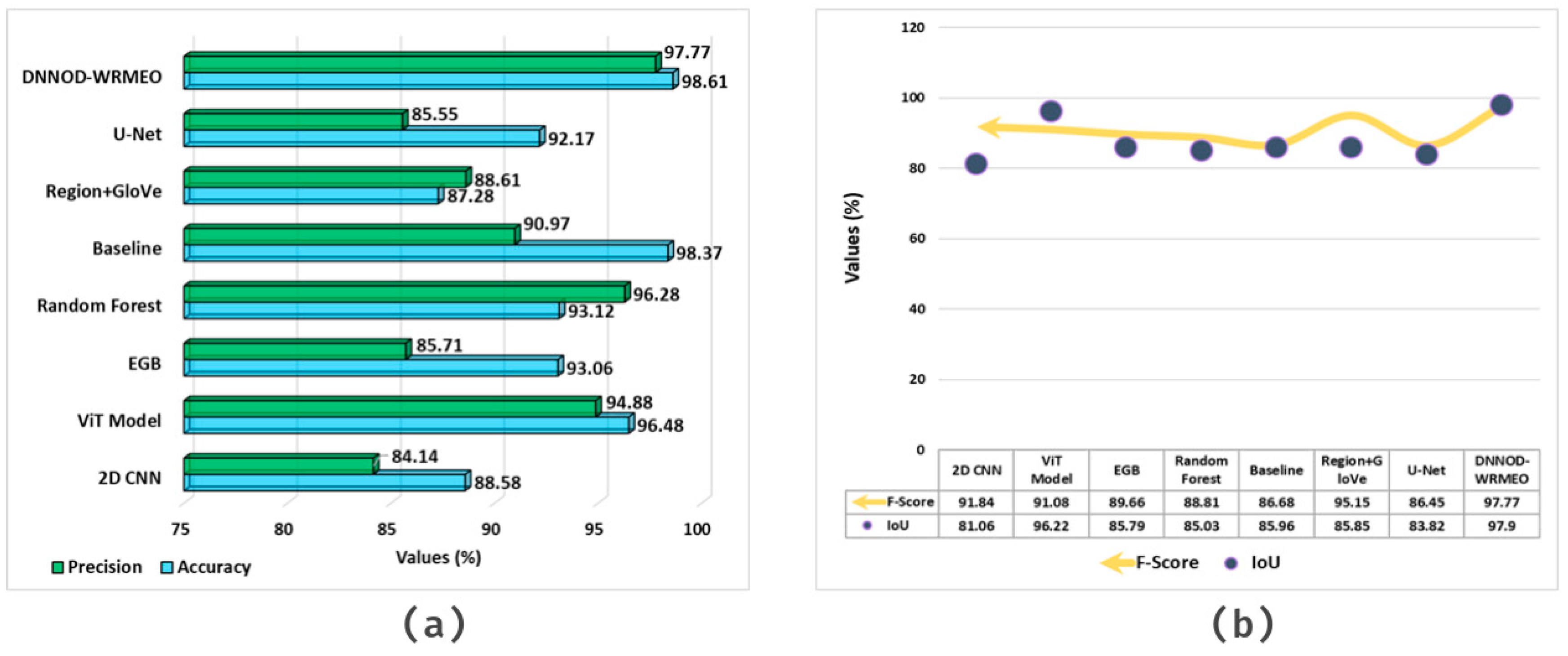

4. Discussion

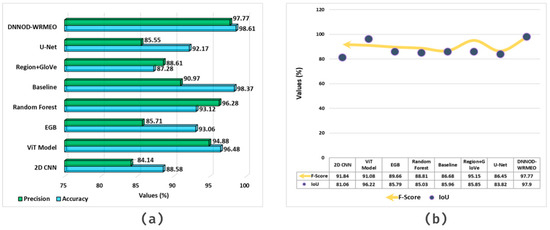

Table 4 and Figure 12 present a comparative study of DNNOD-WRMEO with existing techniques [24,25,26,27]. The results show that the DNNOD-WRMEO technique attained superior values of 98.61%, 97.77%, 97.77%, and 97.90% for accuracy, the two further metrics compared in Table 4, and IoU, respectively. By contrast, the existing models, including 2D CNN, ViT, EGB, Random Forest (RF), Baseline, Region + GloVe, and U-Net, attained lower values than the DNNOD-WRMEO technique.

Table 4.

Comparative analysis of the DNNOD-WRMEO approach with existing methods.

Figure 12.

Comparative analysis of the DNNOD-WRMEO model: (a) and (b) and IoU.

5. Conclusions

In this paper, a DNNOD-WRMEO method is developed using aerial remote sensing imagery. The aim is to create an effective framework for monitoring and analysing water resources and Earth surface observations using aerial remote sensing images. The WF is first employed in the image pre-processing stage to improve image quality by eliminating noise. For object detection, the YOLOv12 method is deployed to identify, locate, and classify objects within an image. Next, the DNNOD-WRMEO model implements the ResNet-CapsNet approach for backbone feature extraction. Finally, the TCN approach is used for water resource classification. The comparative analysis of the DNNOD-WRMEO methodology revealed a superior accuracy of 98.61% compared with existing models on the AIWR dataset. The limitations include the overly simplistic classification of water bodies into only natural and artificial categories, which does not reflect the complexity of real-world water resource monitoring. The ability to distinguish diverse water body types, namely rivers, lakes, reservoirs, ponds, and canals, each of which has unique spectral, shape, and contextual characteristics, was also limited. Moreover, this study does not account for seasonal or temporal variations in water extent, which can affect classification accuracy. The dataset size and diversity are also restricted, affecting the generalisability of the model. Future work can address these issues by integrating multi-class water body categorisation, incorporating temporal and seasonal dynamics, and expanding dataset coverage, as well as by exploring advanced data fusion techniques to improve robustness and practical applicability across diverse geographic regions.

Author Contributions

Conceptualisation: S.A. Data curation and formal analysis: M.M. and H.A. (Hadeel Alsolai). Investigation and methodology: S.A. Funding support: A.A.A. Project administration and resources: A.A.A. Discussion, review, and editing: H.A. (Hadeel Alsolai), M.B.R., H.A. (Hanadi Alkhudhayr), and S.A. Software and supervision: S.A. Validation and visualisation: H.A. (Hanadi Alkhudhayr). Writing—original draft: S.A. Writing—review and editing: A.A.A. All authors have read and agreed to the published version of the manuscript.

Funding

The authors extend their appreciation to the Deanship of Research and Graduate Studies at King Khalid University for funding this work through the Large Research Project under grant number RGP2/247/46. This work was also supported by the Ongoing Research Funding program (ORF-2026-787), King Saud University, Riyadh, Saudi Arabia, and by the Princess Nourah bint Abdulrahman University Researchers Supporting Project (number PNURSP2026R303), Princess Nourah bint Abdulrahman University, Riyadh, Saudi Arabia. The authors also extend their appreciation to the Deanship of Scientific Research at Northern Border University, Arar, KSA, for funding this research work through project number “NBU-FFR-2026-2913-01”, and thank the Deanship of Scientific Research at Shaqra University for supporting this work.

Data Availability Statement

The data that support the findings of this study are openly available at https://data.mendeley.com/datasets/d73mpc529b/3 (accessed on 20 August 2025), reference [23].

Conflicts of Interest

The authors declare no conflicts of interest.

References

- García, L.; Rodríguez, D.; Wijnen, M.; Pakulski, I. (Eds.) Earth Observation for Water Resources Management: Current Use and Future Opportunities for the Water Sector; World Bank Publications: Washington, DC, USA, 2016.

- Sheffield, J.; Wood, E.F.; Pan, M.; Beck, H.; Coccia, G.; Serrat-Capdevila, A.; Verbist, K. Satellite remote sensing for water resources management: Potential for supporting sustainable development in data-poor regions. Water Resour. Res. 2018, 54, 9724–9758.

- Jia, L.; Marco, M.; Bob, S.; Lu, J.; Massimo, M. Monitoring water resources and water use from earth observation in the belt and road countries. Bull. Chin. Acad. Sci. 2017, 32, 62–73.

- Makapela, L.; Newby, T.; Gibson, L.A.; Majozi, N.; Mathieu, R.; Ramoelo, A.; Mengistu, M.G.; Jewitt, G.P.W.; Bulcock, H.H.; Chetty, K.T.; et al. Review of the use of earth observations remote sensing in water resource management in South Africa. WRC Rep. No. KV 2015, 329, 15.

- Behera, M.D.; Gupta, A.K.; Barik, S.K.; Das, P.; Panda, R.M. Use of satellite remote sensing as a monitoring tool for land and water resources development activities in an Indian tropical site. Environ. Monit. Assess. 2018, 190, 401.

- Aldana-Martín, J.F.; García-Nieto, J.; del Mar Roldán-García, M.; Aldana-Montes, J.F. Semantic modelling of earth observation remote sensing. Expert Syst. Appl. 2022, 187, 115838.

- Zhao, Q.; Yu, L.; Du, Z.; Peng, D.; Hao, P.; Zhang, Y.; Gong, P. An overview of the applications of earth observation satellite data: Impacts and future trends. Remote Sens. 2022, 14, 1863.

- Chuvieco, E. Earth Observation of Global Change: The Role of Satellite Remote Sensing in Monitoring the Global Environment; Springer: Berlin/Heidelberg, Germany, 2008.

- Diner, D.J.; Asner, G.P.; Davies, R.; Knyazikhin, Y.; Muller, J.P.; Nolin, A.W.; Pinty, B.; Schaaf, C.B.; Stroeve, J. New directions in earth observing: Scientific applications of multiangle remote sensing. Bull. Am. Meteorol. Soc. 1999, 80, 2209–2228.

- Altaee, M.; Talib, A.; Jalil, M.A.; Ali, J.; Alalwani, T.A. Intelligent Multi-level Feature Fusion using Remote Sensing and CNN Image Classification Algorithm. J. Intell. Syst. Internet Things 2023, 9, 36.

- Wang, Y.; Yan, N.; Zhu, W.; Ma, Z.; Wu, B. A method to estimate the water storage of on-farm reservoirs by detecting slope gradients based on multispectral drone data. Agric. Water Manag. 2025, 307, 109241.

- Sri Bala, G.; Nagaraju, T.V.; Krishnam Raju, G.L.V.; Rambabu, T.; Harish Kumar Varma, G. Advancing Estuarine Aquaculture with Remote Sensing for Water Management. In Inland Aquaculture Sustainability and Effective Water Management Strategies; Springer: Cham, Switzerland, 2025; pp. 87–97.

- Sun, D.; Gao, G.; Huang, L.; Liu, Y.; Liu, D. Extraction of water bodies from high-resolution remote sensing imagery based on a deep semantic segmentation network. Sci. Rep. 2024, 14, 14604.

- Fu, Y.; Zhang, X.; Wang, M. DSHNet: A semantic segmentation model of remote sensing images based on dual stream hybrid network. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2024, 17, 4164–4175.

- Lin, Y.; Liu, S.; Zheng, Y.; Tong, X.; Xie, H.; Zhu, H.; Du, K.; Zhao, H.; Zhang, J. An unsupervised transformer-based multivariate alteration detection approach for change detection in VHR remote sensing images. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2024, 17, 3251–3261.

- Zhang, W.; Liu, Z.; Zhou, S.; Qi, W.; Wu, X.; Zhang, T.; Han, L. LS-YOLO: A novel model for detecting multiscale landslides with remote sensing images. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2024, 17, 4952–4965.

- Mia, M.S.; Tanabe, R.; Habibi, L.N.; Hashimoto, N.; Homma, K.; Maki, M.; Matsui, T.; Tanaka, T.S. Multimodal deep learning for rice yield prediction using UAV-based multispectral imagery and weather data. Remote Sens. 2023, 15, 2511.

- Ye, N.; Walker, J.P.; Gao, Y.; PopStefanija, I.; Hills, J. Comparison between thermal-optical and L-band passive microwave soil moisture remote sensing at farm scales: Towards UAV-based near-surface soil moisture mapping. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2023, 17, 633–642.

- Dowah, H.; Kirawan, K. Automated Boulder Localization and Recognition in Side-Scan Sonar Data Using Deep Learning Approach. 2025. Available online: http://www.diva-portal.org/smash/record.jsf?pid=diva2%3A1962566&dswid=6901 (accessed on 3 January 2026).

- Nguyen, T. Improve Underwater Object Detection through YOLOv12 Architecture and Physics-informed Augmentation. arXiv 2025, arXiv:2506.23505.

- Manoranjitham, D. Ancient Tamil Character Recognition Using Optimal Thresholding with Resnet-Capsnet. J. Theor. Appl. Inf. Technol. 2025, 103, 5312–5324.

- Ryu, C.H.; Han, T.H. BTCP: Binary Temporal Convolutional Network-based Data Prefetcher for Low Inference Latency and Storage Overhead. IEEE Access 2025, 13, 115048–115062.

- AIWR: Aerial Image Water Resource Dataset. Available online: https://data.mendeley.com/datasets/d73mpc529b/3 (accessed on 3 January 2026).

- Jia, J.; Wang, Y.; Zheng, X.; Yuan, L.; Li, C.; Cen, Y.; Si, F.; Lv, G.; Wang, C.; Wang, S.; et al. Design, performance, and applications of AMMIS: A novel airborne multimodular imaging spectrometer for high-resolution Earth observations. Engineering 2025, 47, 38–56.

- Zhao, M.; O’Loughlin, F. A Multiplatform Approach for Chlorophyll Level Estimation for Irish Lakes. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2025, 18, 8261–8274.

- Li, K.; Vosselman, G.; Yang, M.Y. HRVQA: A Visual Question Answering benchmark for high-resolution aerial images. ISPRS J. Photogramm. Remote Sens. 2024, 214, 65–81.

- He, Y.; Wang, J.; Zhang, Y.; Liao, C. Enhancement of urban floodwater mapping from aerial imagery with dense shadows via semisupervised learning. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2022, 15, 9086–9101.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.