Highlights

What are the main findings?

- This review synthesizes the evolution of LiDAR platforms, sensing modalities, and processing workflows for forest remote sensing.

- A standardized framework of LiDAR-derived forest structural and compositional metrics is consolidated across ecological, biomass, and wildfire applications.

- Advances in waveform processing, multispectral and hyperspectral LiDAR, multi-sensor data fusion, and AI-based approaches are critically assessed.

What are the implications of the main findings?

- Harmonized metric definitions enhance reproducibility and cross-domain comparability in forest structure and fuel characterization studies.

- Emerging multiplatform and AI-driven LiDAR approaches support scalable, multitemporal/multispectral forest monitoring for ecosystem management and wildfire risk assessment.

Abstract

Over the past two decades, Light Detection and Ranging (LiDAR) technology has evolved from early National Aeronautics and Space Administration (NASA)-led airborne laser altimetry into commercially mature systems that now underpin vegetation remote sensing across scales. Continuous advancements in laser engineering, signal processing, and complementary technologies, such as Inertial Measurement Units (IMU) and Global Navigation Satellite Systems (GNSS), have yielded compact, cost-effective, and highly sophisticated LiDAR sensors. Concurrently, innovations in carrier platforms, including uncrewed aerial systems (UAS), mobile laser scanning (MLS), and Simultaneous Localization and Mapping (SLAM) frameworks, have expanded LiDAR’s observational capacity from plot- to global-scale applications in forestry, precision agriculture, ecological monitoring, Above Ground Biomass (AGB) modeling, and wildfire science. This review synthesizes (a) LiDAR’s cross-domain capabilities for quantifying vegetation structure, function, and compositional dynamics; (b) recent sensor developments encompassing discrete-return and full-waveform airborne laser scanning (ALS), photon-counting LiDAR (PCL), and emerging multispectral LiDAR (MSL) and hyperspectral LiDAR (HSL) systems; and (c) state-of-the-art data processing and fusion workflows integrating optical and radar datasets. The synthesis demonstrates that many LiDAR-derived vegetation metrics are inherently transferable across domains when interpreted within a unified structural framework. The review further highlights the growing role of artificial-intelligence (AI)-driven approaches for segmentation, classification, and multitemporal analysis, enabling scalable assessments of vegetation dynamics at unprecedented spatial and temporal extents. By consolidating historical developments, current methodological advances, and emerging research directions, this review establishes a comprehensive state-of-the-art perspective on LiDAR’s transformative role and future potential in monitoring and modeling Earth’s vegetated ecosystems.

1. Introduction

Forests cover about 31% of the global land area and agricultural land almost 38%, together accounting for roughly 69% [1,2]. These overlapping forest and agricultural landscapes are major and diverse components of terrestrial ecosystems, dominated by vegetation communities distributed across the globe [3]. In the past 50 years, terrestrial ecosystems have undergone significant changes induced by natural and anthropogenic drivers [2]. Among the anthropogenic drivers, accelerating urbanization, deforestation, and extensive agriculture are the major contributors globally [3]. As a result, some ecosystems are on the verge of collapse, while others are coping with climate change impacts through adaptation and evolution [4,5]. In addition, the introduction of invasive alien and non-native species adversely affects forest and agricultural ecosystems [6,7].

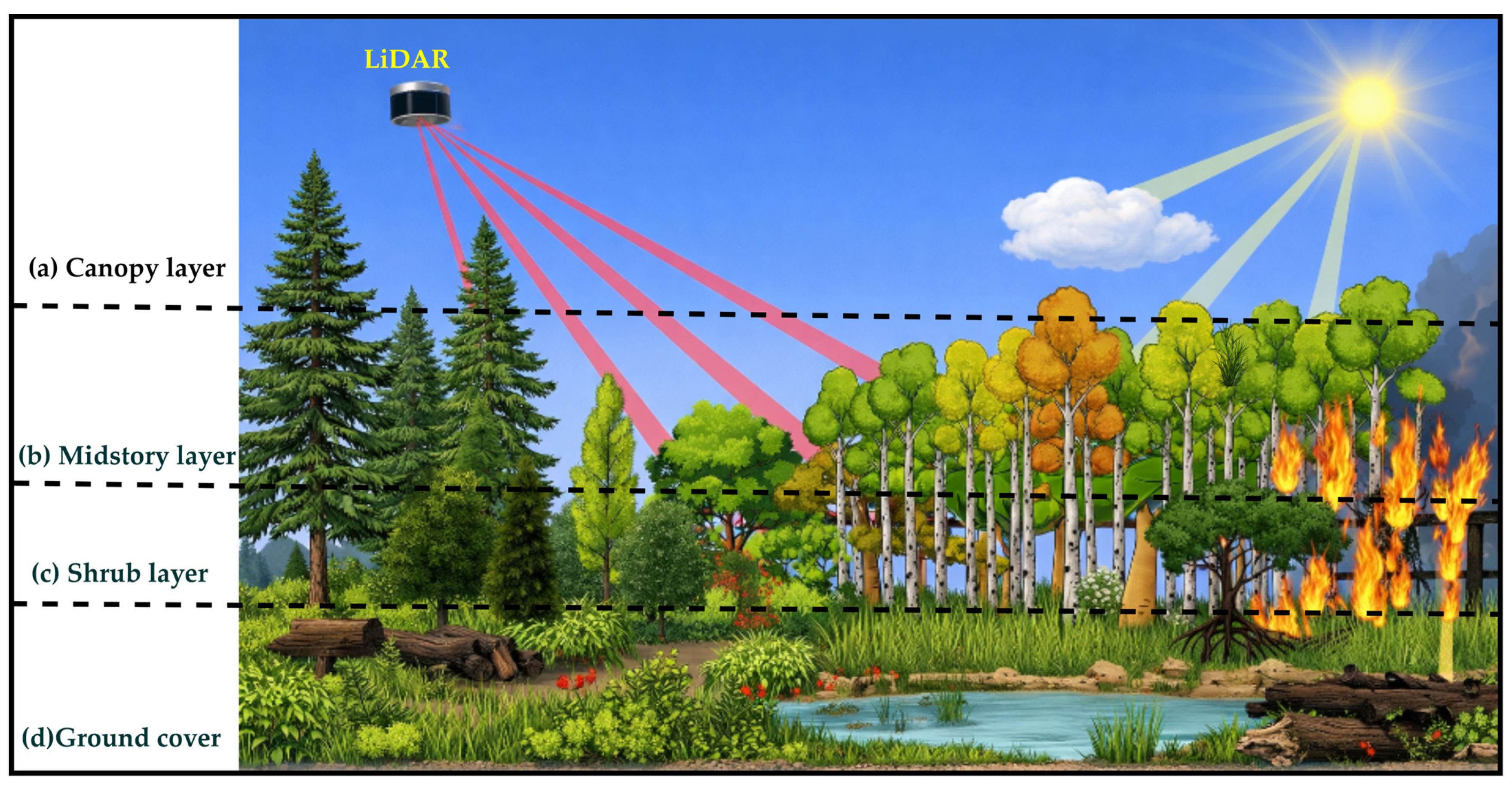

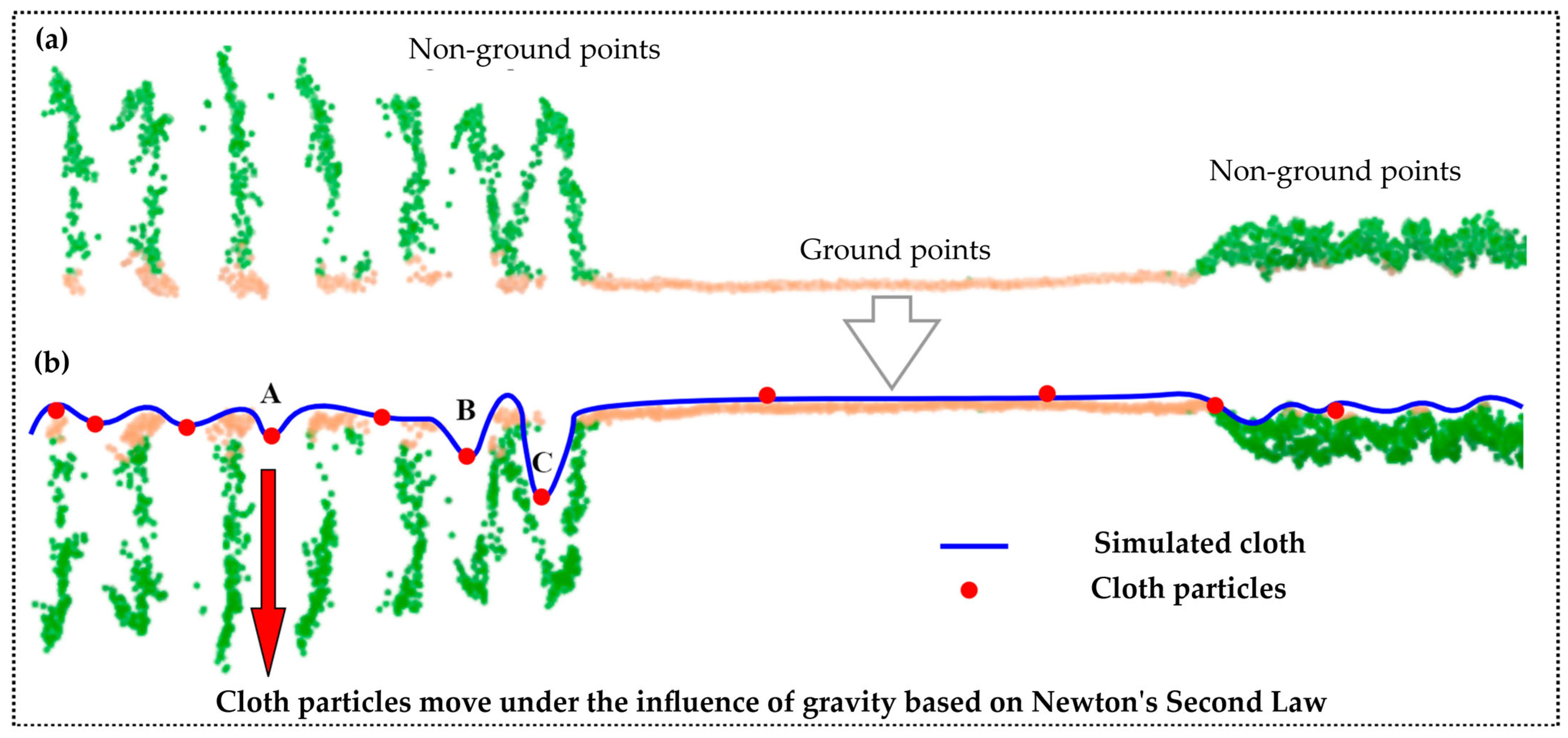

Information on composition, structure, productivity, and disturbance status is important for understanding the complexity and heterogeneity of vegetated ecosystems and for assessing their resilience and integrity [8,9]. Forest ecosystems vary greatly in their vertical and horizontal structure. They comprise a canopy layer (the tree crowns visible from above), a midstory layer (trees beneath the canopy layer), a shrub layer (small plants, herbs, forbs, shrubs, and grasses), and ground cover comprising downed woody debris, dry leaves, litter, moss, and lichen [9,10] (Figure 1). Quantifying the forest’s structural complexity and heterogeneity is important for understanding its role in above-ground biomass (AGB) modeling, biodiversity, species abundance, forest ecology, productivity, fuel loading, potential fire behavior, fire reduction practices, and forest biological disturbance agents such as insects, pathogens, and parasitic plants [3,9,11]. Forests and plant communities are anatomical, morphological, biochemical, physiological, and structural constructs distributed in 3D space and time [12,13]. Plant traits and trait variations are proxies of the conditions, abiotic and biotic constraints, processes, and stresses acting on plant species or communities [14].

Figure 1.

Vertical hierarchy of forest structural components. (a) Canopy layer of emergent trees. (b) Midstory layer of smaller trees. (c) Shrub layer. (d) Forest floor comprising downed woody debris and litter. The portions of the forest’s vertical stratification principally captured by optical and LiDAR sensing are depicted.

To address plant traits and trait variations over time, remote sensing has emerged as a widely adopted and cost-effective technique for providing large-scale, long-term, and spatiotemporally consistent scientific information on diverse vegetation biomes [15,16,17]. Consequently, in the past 50 years, low-to-medium-resolution satellite imagery (10–500 m/pixel) has shown great success in deriving a wide array of vegetation physiological, anatomical, and geochemical traits at global scales in logistically challenging biomes. Examples include the Moderate Resolution Imaging Spectroradiometer (MODIS) Vegetation Continuous Fields (VCF) products, which comprise tree cover, non-tree vegetation, and non-vegetated surfaces, including water, and Landsat time series for AGB [15,18,19]. However, while these systems provide seamless and consistent multispectral (MS) imagery useful for mapping vegetation traits and variations at global scales, they suffer from saturation effects when mapping fine-detailed 3D forest ecosystems [20,21].

High-resolution spaceborne imagery (≈5 m/pixel) and very-high-resolution (VHR) aerial imagery from crewed aircraft and uncrewed aerial systems (UAS) have greatly improved the resolution and representation of canopy structural information, e.g., fine-scaled Digital Surface Models (DSMs) [22,23]. Optical remote sensing relies on passive measurements of reflected solar radiation from forest canopies. In mountainous and topographically complex landscapes with dense vegetation, signal interpretation is challenged by terrain-induced illumination variability, canopy self-shadowing, and occlusion effects [24]. Additionally, spectral saturation in high-biomass conditions limits sensitivity to structural and compositional variability, reducing the accuracy of forest attribute retrievals [24,25,26]. The penetration of sunlight through the canopy to the midstory, shrub, and/or ground layer depends largely on the horizontal and vertical structural complexity and physiological characteristics of the vegetation (Figure 1), and on seasonality, e.g., phenological stages such as leaf-on and leaf-off conditions [27,28]. In addition, optical remote sensing suffers from occlusions and cloud cover [29]. For example, prairie and savanna ecosystems, largely comprising grasses, shrubs, and plant communities, have been mapped successfully using optical remotely sensed datasets, such as Landsat 30 m data products. In contrast, the 3D structure of dense tropical and boreal forests is difficult to resolve using optical remote sensing alone [30,31].

To address the forest’s 3D vertical stratification using optically sensed data, vertical structural characteristics are commonly established with empirical forest models [26], e.g., the Forest Vegetation Simulator (FVS), which is used to understand the growth and yield of forest ecosystems throughout the United States [32,33,34]. FVS simulates 3D forest ecosystems based on field-observed input attributes from the Forest Inventory and Analysis (FIA) program [33]. Recent findings show that FVS is highly sensitive to species composition, site index, and tree diameter factor, among other parameters [34]. Against this background, several studies have benchmarked the capability of laser remote sensing (LRS) to decipher 3D forest structural complexity (for AGB, forest management practices, forest ecology, and wildland fuel mapping) over wide areas against sparsely parameterized FVS-based simulations [35,36,37].

Scope and Outline of This Review

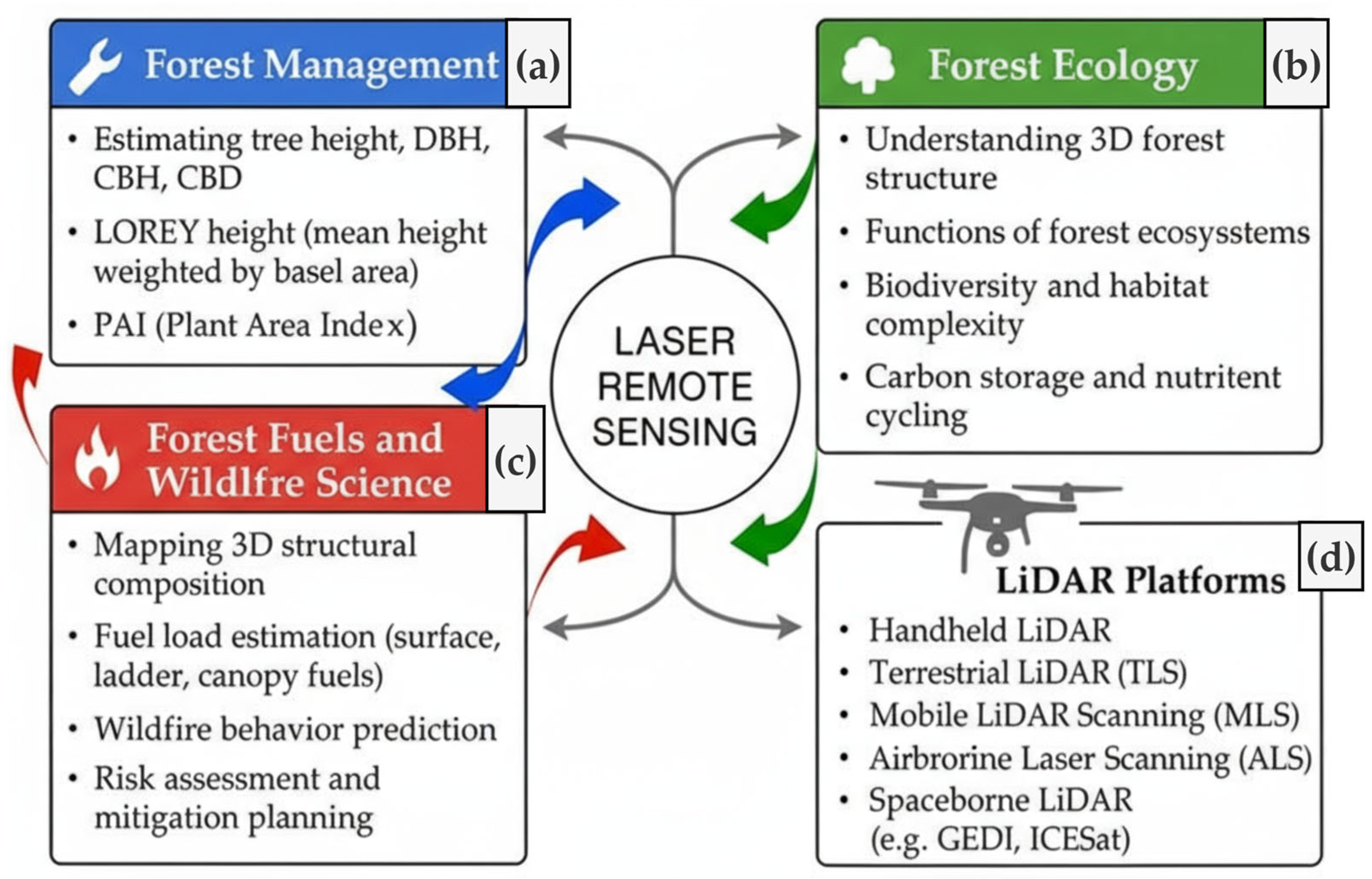

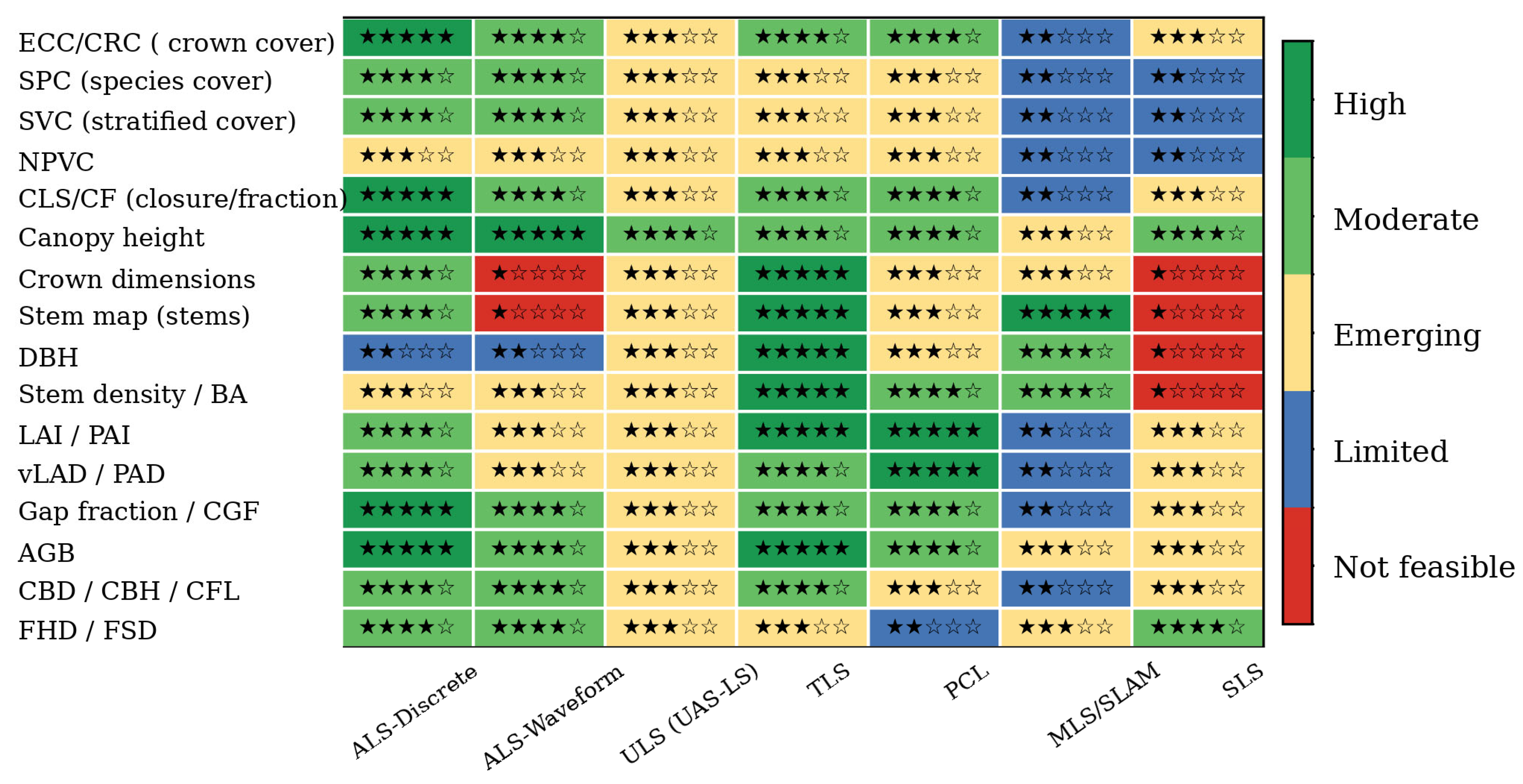

Ecological conservation monitoring programs operate at multiple organizational and spatial levels, from species to ecosystems. However, many suffer from a lack of clearly defined goals and hypotheses, as well as weaknesses in survey design, data quality, and statistical power at the outset [38,39]. At the same time, many forest structural and functional metrics derived from LRS extend beyond any single application domain, such as forest management [17,40,41]. These metrics are also essential for AGB estimation, fuel mapping, and forest ecology (Figure 2). Consequently, LiDAR datasets collected within one domain can often play a crucial role across multiple forestry domains [26,42]. Fully realizing LiDAR’s potential in interdisciplinary forest ecosystem research requires a comprehensive understanding of how forest structural, compositional, and functional attributes are intertwined and how LiDAR-derived products can be applied across domains; however, these capabilities remain insufficiently evaluated and utilized [43,44]. Moreover, as LiDAR technology has diversified, yielding an array of commercially viable sensors, a thorough grasp of each sensor’s capabilities, interdisciplinary application scope, and potential future advancements is of paramount importance, yet these aspects are not fully understood [17,37,45].

Figure 2.

Schematic overview of LiDAR applications across three major forest domains—(a) management, (b) ecology, and (c) wildfire science—and the corresponding (d) LiDAR platforms that enable data acquisition across these themes.

In light of existing research and review articles addressing LRS of vegetation, this review serves three main objectives: (a) to reinforce the cross-domain applicability of the many LiDAR-derived forest metrics that indicate structure, composition, function, and disturbance status and are essential for multiple forestry applications [37,42,44]; (b) to identify and synthesize recent technological advancements in LiDAR sensors, operational modalities, and sensor carrier platforms, and to evaluate their demonstrated effectiveness in characterizing forest attributes over large spatial extents [46,47,48], including the development of multispectral LiDAR (MSL) [49,50] and hyperspectral LiDAR (HSL) systems [51] and the integration of LiDAR with optical and Synthetic Aperture Radar (SAR) remote sensing technologies to enhance interdisciplinary investigations of vegetated ecosystems [25,52]; and (c) to examine LiDAR data processing workflows for quantifying forest attributes, encompassing both traditional approaches and modern artificial intelligence (AI)-driven techniques [53], with a focus on their practical application in vegetation remote sensing [25,54]. Finally, the discussion section provides a brief review of existing reviews and research papers that address the identified knowledge gaps and contribute to one or more application domains of LRS in precision agriculture and precision forestry.

2. Materials and Methods

A comprehensive literature search was conducted on the Web of Science Core Collection (version 5.32) and Scopus to capture peer-reviewed publications from late 1999 through 2025 (n = 619). To improve transparency, search strings combined LiDAR-specific keywords—“space-borne LiDAR (SLS),” ALS, UAS Laser Scanning (ULS), Terrestrial Laser Scanning (TLS), handheld LiDAR, Mobile Laser Scanner (MLS), Simultaneous Localization and Mapping (SLAM), smartphone LiDAR, waveform LiDAR, discrete-return LiDAR, single-photon LiDAR—with ecosystem and application descriptors such as forest*, plantation*, ecosystem*, tropical forest*, dry forest*, wildfire*, fuel mapping*, forest attribute*, and phenotype*. These representative keyword combinations formed the basis of the database queries and ensured broad coverage of vegetation-related LiDAR research. Publications from all major publishers (Elsevier, Springer, Wiley, Taylor & Francis, IEEE, MDPI) were retrieved.
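As a minimal sketch of how such keyword combinations can be assembled, the snippet below joins a group of LiDAR terms and a group of ecosystem descriptors into one Boolean string. The function name `build_query`, the shortened term lists, and the generic `(A OR B) AND (C OR D)` syntax are illustrative assumptions; the exact field tags and strings submitted to Web of Science and Scopus are not reproduced here.

```python
def build_query(lidar_terms, ecosystem_terms):
    """Join two keyword groups into a generic Boolean search string.

    Hypothetical helper for illustration only; real databases use
    their own field tags and quoting rules.
    """
    def group(terms):
        # Quote multi-word phrases; leave single tokens (incl. wildcards) bare.
        quoted = [f'"{t}"' if " " in t else t for t in terms]
        return "(" + " OR ".join(quoted) + ")"

    return group(lidar_terms) + " AND " + group(ecosystem_terms)


# Shortened, illustrative subsets of the keywords listed above.
lidar_terms = ["terrestrial laser scanning", "waveform LiDAR", "single-photon LiDAR"]
ecosystem_terms = ["forest*", "wildfire*", "fuel mapping*"]

query = build_query(lidar_terms, ecosystem_terms)
print(query)
```

Keeping the two keyword groups as separate lists makes it straightforward to log which combinations were queried, which supports the transparency aim stated above.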

Each record was screened using a simplified PRISMA-style workflow by examining abstracts, figure captions, methodological descriptions, and conclusions to assess relevance. To focus on studies that advanced LiDAR technology or its application in vegetation science, we applied the following inclusion criteria:

- Sensor innovation: New LiDAR hardware or waveform acquisition modes for vegetation remote sensing.

- Platform development: Novel carrier integrations, e.g., multi-sensor or multitemporal deployments of LiDAR sensors.

- Original research: Studies presenting new findings in the LRS of vegetation.

- Processing methods: Development of parametric algorithms or nonparametric (ML/DL) approaches for LiDAR data processing.

- Multi-scale applications: Demonstrations of multitemporal, multiplatform, multispectral, or hyperspectral-LiDAR integration in vegetation contexts.

Articles were excluded when they relied solely on established LiDAR data processing frameworks to extract structural attributes without introducing methodological or technological advances, or when they were not directly related to LiDAR applications in precision forestry (n = 285). Statistical distribution analyses and detailed bibliometric assessments were omitted to maintain a focused review of technological development in LiDAR sensor design, platform capabilities, and precision forestry-related applications. After applying the PRISMA-style screening with the inclusion and exclusion criteria stated above, a total of 334 of the 619 retrieved peer-reviewed articles were included in the present review.

3. Results

3.1. Overview

In the past 20 years or so, LRS has shown great success in deciphering the structural complexity and diversity of agricultural and forest ecosystems in detail, either stand-alone or through data fusion with multi-source remotely sensed datasets [25,55]. Currently, LiDAR sensors operate from various carrier platforms, including hand-held sensors based on Simultaneous Localization and Mapping (SLAM) [56], smartphones [57], TLS, MLS, ULS, ALS [58,59,60,61], and SLS missions such as the Ice, Cloud, and land Elevation Satellite (ICESat-1), ICESat-2 [62], and the Global Ecosystem Dynamics Investigation (GEDI) [63]. These platforms advance vegetation remote sensing by penetrating dense forest canopies and resolving 3D complexity at unprecedented spatiotemporal resolutions [11,64,65,66]. LRS has been widely applied in vegetation studies and wildfire science to quantify forest structural, functional, compositional, and disturbance attributes, e.g., species composition, aboveground biomass, and canopy layering, thereby advancing the understanding of 3D ecosystem functioning, disturbance dynamics, biodiversity, carbon storage, and nutrient cycling [45,65,67,68,69,70,71,72].

3.2. Application Domains

Broadly, LiDAR forest applications can be conceptually grouped into three overarching categories: forest ecosystem research [26,73], forest management and monitoring [74,75], and forest fuels and wildfire science [76,77]. LiDAR datasets collected within one domain are often applicable and can inform analyses across multiple thematic areas (Figure 2). Figure 2a–c illustrate these domains—encompassing management, ecology, and wildfire dynamics—while Figure 2d outlines the LiDAR sensing platforms—terrestrial, airborne, and spaceborne—that enable data acquisition across spatial and temporal scales [31,65,78]. The following sections elaborate on the role of LiDAR within each domain to establish a contextual foundation for this review.

3.2.1. Forest Ecology

Forest ecosystems are rich and dynamic. Blue carbon ecosystems (BCE), i.e., mangrove forests [79], tidal marsh ecosystems [80], and seagrass meadows [81], together with dry land ecosystems [82], grassland ecosystems [83], tropical rainforests [84], temperate deciduous [85], temperate coniferous [86], and boreal forests [87], tropical montane cloud forests (TMCFs) [72], forest plantations [88], urban forests [89], and savannas, are among the most diverse forest ecosystems globally [90]. The richness and diversity of terrestrial forest ecosystems are not fully understood due to a lack of extensive sampling in 3D space and time [38,78,91].

In this respect, LiDAR provides comprehensive mapping that can cover a state’s entire forest biome, with an increasing number of repeated surveys capturing forest structural composition and diversity; the United States 3D Elevation Program (3DEP), which serves various national and scientific objectives, is one such example [26,61,68]. Recent LiDAR-based investigations showed that structurally diverse and heterogeneous terrestrial forest ecosystems promote bird and animal diversity more than single-layer forest canopies [80]. Numerous studies have documented the success of LiDAR datasets from various carrier platforms (Figure 2) in providing new insight into forest ecological applications, such as transpiration [92], microhabitat diversity governed by the physical properties of leaves [93], leaf area density (LAD), leaf area index (LAI), canopy cover (CC), canopy closure (CLS), and tree species classification, thereby providing 3D attributes of forest environments and setting the baseline of “3D ecology” [94,95,96]. For example, a recent study used a novel dual-wavelength (1063 nm and 1545 nm) TLS, the Salford Advanced Laser Canopy Analyzer (SALCA), to capture species-specific spatial, spectral, and seasonal canopy characteristics, enabling high-resolution assessment of foliage distribution and dynamics beyond the limitations of conventional field surveys [97].

3.2.2. Forest Resource Management

Beyond forest ecosystem investigations (Section 3.2.1), forest resilience and productivity contribute substantially to economic growth and are assessed through national-level Forest Management Inventories (FMIs) [36]. FMIs depend on the availability of spatially explicit forest structural, compositional, and functional attributes, e.g., diameter at breast height (DBH), individual tree height, forest species composition, basal area (BA), stem density, plant area index (PAI), plant area density (PAD), and stock volumes [98]. Traditional forest inventories, such as the United States Forest Inventory and Analysis (FIA) program, are effective in characterizing forest ecosystems of national significance, yet they are spatiotemporally constrained to established forest field plots [99,100].

For that reason, extensive wall-to-wall mapping has been accomplished using remotely sensed data to support FMI objectives [65,98,101]. Field data quality, availability, and relevance depend on the instruments used and the skill of individual surveyors [33,34]. Moreover, the 3D stratification of complex global and national forest ecosystems cannot be fully characterized for sustainable FMI without integrating multi-sensor remotely sensed datasets at regional and global scales, as shown by several publications documenting the success of LiDAR technology in meeting FMI objectives [65]. Examples include the role of LiDAR in sustainable forest management [36], the operational implementation of a LiDAR inventory in Boreal Ontario [35], and forest age and height mapping using GEDI and ICESat-2 datasets [102]. Despite the operational success of LiDAR in FMI objectives, the application utility of these datasets in forest ecology and wildfire science is not yet fully realized [103,104].

3.2.3. Forest Fuel Structure and Wildfire Hazard Assessment

Globally, and particularly in the United States, wildfire regimes have shifted toward more extreme, frequent, and devastating events under the influence of climate change, wildfire suppression, and intensive human intervention in forest ecosystems [105,106]. Wildfire behavior is characterized by four major yet non-linear components, namely Rate of Spread (ROS), Intensity, Flame Length, and Flame Height, which are directly related to fuel load and topography [107]. Broadscale estimation of forest fuel load is therefore critical for wildfire hazard assessment and mitigation, since fuel remains the only fire-related component that can be modified through forest fuel reduction practices, including mechanical thinning, mastication, and prescribed burning [9,11,76,108].

Although wildfires are natural phenomena that generally initiate in the surface fuelbed, the average physical characteristics of relatively uniform combustible materials define distinct fire environments [108]. Fuelbed organization, i.e., its horizontal and vertical continuity, governs fire behavior: surface fuels encompass everything from dead and live herbaceous plants to downed woody debris and accumulations of litter, lichen, moss, and duff, while canopy fuels span the understory, midstory, and dominant canopy layers [9,76,109]. Wildfires typically consume surface fuels [9] and transition to crown fires depending on the quantity and continuity of surface and ladder fuels capable of sustaining a flaming front of sufficient height to ignite canopy fuels, namely foliage and live and dead tree branches [110]. Canopy fuel loading (CFL), the combustible fuel available in canopies, plays an important role in sustaining crown-to-crown wildfire transmission [111]. Combustible fuel is defined by its chemistry, continuity, and quantity in 3D space and time [112]. Therefore, quantitative estimation requires geometrical representation of the forest’s vertical strata through metrics such as CFL, canopy base height (CBH), and canopy bulk density (CBD) [69,106,112,113,114]. Accurate estimates of these metrics are an essential input to fire behavior and smoke simulation models, enabling prediction of fire-line intensity, flame height, surface-to-crown fire transition, and crown-to-crown propagation, e.g., active crown fire if CBD > 0.10–0.15 kg/m³ [108,112,115].
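To make the quoted CBD range concrete, the following sketch labels a CBD value relative to the 0.10–0.15 kg/m³ interval. The function name `cbd_crown_fire_flag` and its labels are hypothetical, and the rule uses CBD alone as a screening illustration; operational fire behavior models typically combine CBD with further inputs such as CBH and surface fire behavior.

```python
def cbd_crown_fire_flag(cbd_kg_m3, lower=0.10, upper=0.15):
    """Label a canopy bulk density (CBD) value against the quoted range.

    Illustrative assumption: only the CBD interval cited in the text
    is used; this is not a fire behavior model.
    """
    if cbd_kg_m3 < 0:
        raise ValueError("CBD must be non-negative")
    if cbd_kg_m3 > upper:
        return "above range"   # exceeds the upper end of the interval
    if cbd_kg_m3 > lower:
        return "within range"  # falls inside the quoted interval
    return "below range"       # at or below the lower end


# Hypothetical CBD values (kg/m^3) for three map cells.
for cbd in (0.05, 0.12, 0.20):
    print(cbd, cbd_crown_fire_flag(cbd))
```

Returning three labels instead of a single yes/no keeps the uncertainty of the quoted interval visible in any map produced from such a rule.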

Spatially explicit metrics such as CBH and CBD can be measured in the field or derived from national forest inventories (NFIs) using allometric equations [116,117]. Multiplatform, multispectral, multitemporal LiDAR datasets now enable high-fidelity mapping of both surface and canopy fuels in complex forest landscapes through forest metrics [40,111,118]. Terrestrial LiDAR systems, e.g., TLS, capture fine-scale surface-fuel heterogeneity beneath dense canopies with high geometric fidelity, supporting estimates of canopy and surface fuels through semi-empirical models [118,119,120], while aerial and spaceborne sensors (ULS, ALS, and SLS) provide extensive coverage of canopy fuel metrics and species composition over broad areas [69,121,122,123]. Integrating these platforms yields continuous, high-resolution representations of fuel continuity, heterogeneity, and quantity that exceed those of national fuel products derived primarily from optical remote sensing, e.g., LANDFIRE [121,124,125].

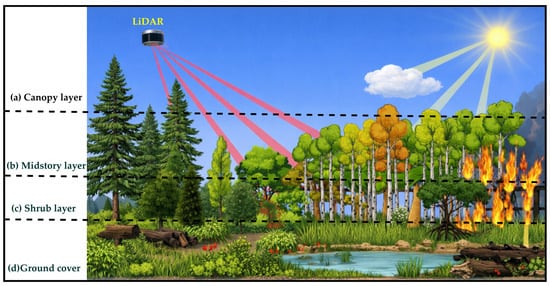

3.3. Structural and Compositional Metrics

To link forest composition, structure, productivity, and disturbance across the interdisciplinary application domains (Section 3.2), quantitative wide-area mapping of these vegetation attributes is essential [71], whether for forest ecology, wildland fuel mapping [123], or management applications [52]. These metrics depend on a consistent framework of acronyms, terminology, and precise definitions, as shown in Figure 2. Nevertheless, the naming conventions for forest ecosystem metrics have proliferated and sometimes diverged in subtle ways [14,39,126]. Establishing a unified nomenclature not only provides a clear baseline for advanced studies but also ensures that metrics of canopy architecture, vertical layering, and species composition can be interpreted and compared reliably, particularly when derived from optical and LRS observations [42,127]. Ultimately, an in-depth understanding of the metrics is essential for their full utilization in cross-domain application settings [71,104,128]. Therefore, this section defines their naming conventions, definitions, and application utility, establishing a baseline for further investigations [129].

3.3.1. Vegetation Cover Fraction (VCF)

VCF, or fractional vegetation cover (fCover), is the vertical projection of aboveground vegetation elements, e.g., stems, leaves, and branches, per unit horizontal ground surface [130], as defined in Equation (1). VCF is a dimensionless quantity ranging from 0 (bare ground) to 1 (dense vegetation cover) for a given area.

$$\mathrm{VCF} = \frac{A_{v}}{A_{g}} \qquad (1)$$

where $A_{v}$ is the vegetation area projected vertically onto the horizontal plane and $A_{g}$ is the horizontal ground area, expressed in the same units. Green Vegetation Fraction (GVF) and non-photosynthetic vegetation (NPV) are condition-specific fractional components of VCF [131] that vary with vegetation condition and type [132,133]. To address the forest’s structural complexity through species-wise distribution, VCF has gained more specific terms and variants, as listed in Table 1.

Table 1.

Definitions and applications of fCover-based metrics describing horizontal and vertical vegetation structure across forest strata, from canopy dominance to understory and non-photosynthetic components.

Despite the clear definitions of VCF and its variants (Table 1), most image-segmentation approaches applied to optical remotely sensed data still struggle to separate GVF from heterogeneous backgrounds comprising litter, understory (GVF and NPV), soil, and sky due to spectral variability, uneven illumination, and complex background composition [140,141]. Although ground-based digital photography and UAV imagery (typically 1–5 cm pixel size) can retrieve different variants of fCover with reasonable accuracy, performance declines sharply under shadow effects, specular reflection, or mixed pixels in heterogeneous canopies, and extensive calibration and harmonization with other sensors are then required [16,142]. Consequently, optical methods alone struggle to produce consistent fCover estimates across varying illumination, canopy structural complexity, and view angles [142,143]. LiDAR alleviates many of these limitations by directly capturing the 3D spatial distribution of vegetation elements at submeter to centimeter (cm) resolution [45,48,143]. ALS (~0.5–2 m) provides landscape-scale estimates of canopy and subcanopy structure; ULS (~2–10 cm) resolves fine-scale crown geometry; TLS (~1–5 mm) characterizes foliage, branches, and within-crown gaps; and MLS captures the forest understory and forest floor at fine resolution [114,144,145,146].
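One common way to approximate fCover from discrete-return LiDAR is the share of first returns that fall above a height threshold. The sketch below illustrates this idea under stated assumptions: the function name `fcover_from_returns`, the 2 m threshold, and the sample values are hypothetical, heights are assumed to be already normalized to ground level, and the estimator is not necessarily the one used in the studies cited above.

```python
def fcover_from_returns(heights_m, is_first_return, height_threshold_m=2.0):
    """Estimate fractional cover as the share of first returns above a threshold.

    heights_m          : height above ground of each return (m)
    is_first_return    : True where the return is the first of its pulse
    height_threshold_m : assumed cut-off separating canopy from ground/understory
    """
    first_heights = [h for h, first in zip(heights_m, is_first_return) if first]
    if not first_heights:
        return float("nan")  # no pulses in this cell
    n_canopy = sum(1 for h in first_heights if h > height_threshold_m)
    return n_canopy / len(first_heights)


# Hypothetical returns for one grid cell.
heights = [0.1, 5.2, 12.0, 0.3, 8.4, 1.0]
first = [True, True, True, True, False, True]
print(fcover_from_returns(heights, first))
```

Using first returns only ties the estimate to the number of emitted pulses, so cells with many multi-return pulses are not over-weighted; changing the threshold shifts the estimate between overstory cover and total vegetation cover.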

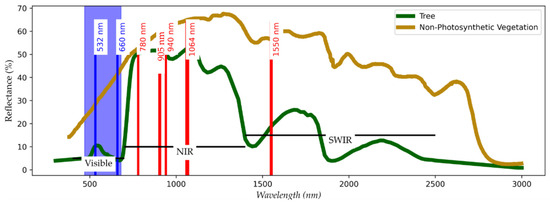

However, single-wavelength LiDAR (e.g., 1064 nm) alone does not capture spectral properties or photosynthetic status, making it complementary to optical and SAR observations [104]. Integrating LiDAR-derived structural metrics with optical VCF products and SAR observations that are sensitive to canopy volume and moisture conditions supports a more comprehensive and physically consistent characterization of vegetation cover, canopy architecture, and disturbance processes across spatial scales [40,62,147]. As emerging modalities, MSL and HSL have the potential to improve LRS-based characterization of, e.g., GVF and NPV by jointly capturing 3D structural information and reflectance properties [49,148], a capability that currently relies on data fusion with optical multispectral and hyperspectral datasets [47].

3.3.2. Stand Structural Metrics

Measurable ecosystem indicators are needed that match the scale and purpose at which forest ecosystems are studied, whether forest ecology, forest management, or fire behavior modeling [134,149]. Forest ecosystem metrics fall into two broad categories: key species-related structural metrics for area-based estimates [103], e.g., stand-level structural complexity [111], and more specific individual-tree-level structural estimates [150]. Stand-based structural attributes are instrumental for understanding ecosystem functionality, forest management practices, and wildfire fuel loadings [110]. However, the structural complexity of individual trees necessitates segmentation and classification at the plant or organ level (e.g., stem, branches, and foliage), making LiDAR instrumental for resolving 3D tree architecture [110,111]. A list of widely used tree/stand structural attributes is summarized in Table 2.

Table 2.

Structural and functional attributes used to quantify three-dimensional tree and canopy complexity, spanning stem geometry, height structure, foliage organization, AGB cross-domain, and canopy fuel properties relevant to ecosystem functioning, disturbance, and fire behavior.

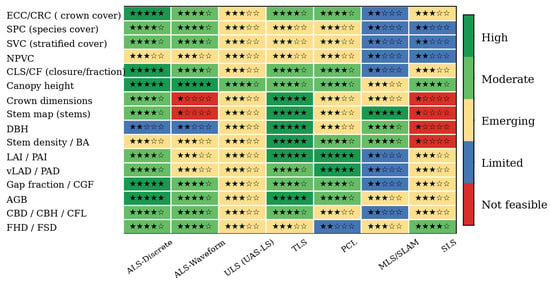

Together, the metrics in Table 1 and Table 2 provide a structured basis for quantifying forest composition, structural diversity, and disturbance status across local-to-global scales [9,66,74]. In general, size- and stocking-related attributes, e.g., DBH and AGB, are primarily driven by tree dimensions and wood properties, whereas canopy-functional and vertical-organizational attributes, e.g., LAD and vLAD, reflect foliage quantity, spatiotemporal arrangement, and the within-canopy light environment. These properties are difficult to characterize comprehensively using traditional FFI surveys alone and are often mapped or scaled using remotely sensed datasets [26,58,65,162]. LRS has proven highly effective for deriving these metrics, providing detailed vertical stratification at different scales and resolutions with substantially greater fidelity than optical datasets [31,40,96,113,124]. While CBH, CBD, and CFL are routinely applied in wildfire risk assessment [54,123], other LiDAR-derived structural metrics, such as FHD, LAD, and FSD, are commonly used in forest ecology and biodiversity research yet remain underexplored in fire behavior research [161], despite their potential relevance to wildfire behavior. This gap highlights opportunities for broader cross-domain applications of forest structural metrics (Table 1 and Table 2).
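As an illustration of how one of these vertical-organization metrics can be derived from a point cloud, the sketch below computes foliage height diversity (FHD) in its widely used Shannon-index form, taking the proportion of returns in each height bin as the layer weight. The function name `foliage_height_diversity`, the 5 m bin width, and the sample heights are assumptions made for illustration; the definition adopted in Table 2 may differ, for example by weighting layers with LAD rather than return counts.

```python
import math


def foliage_height_diversity(heights_m, bin_width_m=5.0):
    """Shannon-type FHD from return heights: sum of p_i * ln(1 / p_i).

    p_i is the proportion of returns in height bin i; the bin width is
    an assumed parameter, and negative heights are ignored.
    """
    counts = {}
    for h in heights_m:
        if h < 0:
            continue
        layer = int(h // bin_width_m)  # index of the height layer
        counts[layer] = counts.get(layer, 0) + 1
    total = sum(counts.values())
    if total == 0:
        return float("nan")
    return sum((c / total) * math.log(total / c) for c in counts.values())


# Hypothetical stands: one single-layered, one with two equally filled layers.
single_layer = [1.0, 2.0, 3.0, 4.0]
two_layers = [1.0, 2.0, 11.0, 12.0]
print(foliage_height_diversity(single_layer))
print(foliage_height_diversity(two_layers))
```

A single occupied layer yields zero diversity, while evenly filled layers maximize it, which is why FHD is often read as a compact descriptor of vertical layering.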

It should be noted that the metrics listed in Table 1 and Table 2 provide fundamental and widely used forest structural and functional attributes [31]. A wide range of derivative attributes—many of which build directly upon these core metrics—can be found in the literature to further delve into forest ecology, forest management, and wildland fuel management practices [163]. As one example, the book “Forest Stand Dynamics” offers a mechanistic understanding of forest growth and stand development that helps silviculturists, forest managers, and ecologists design site-specific treatments balancing timber production, biodiversity, and ecosystem resilience under increasing multi-use demands [39,164].

4. Advances in LiDAR Sensors and Carrier Platforms

Continued technological advances in laser, Inertial Measurement Unit (IMU), and Global Navigation Satellite System (GNSS) sensors, in static, mobile, aerial, and SLS platforms, and in efficient data storage and processing units have led to the development of an unprecedentedly wide array of LiDAR sensors [33,34,35,36].

4.1. Light Detection and Ranging: Conceptual Framework

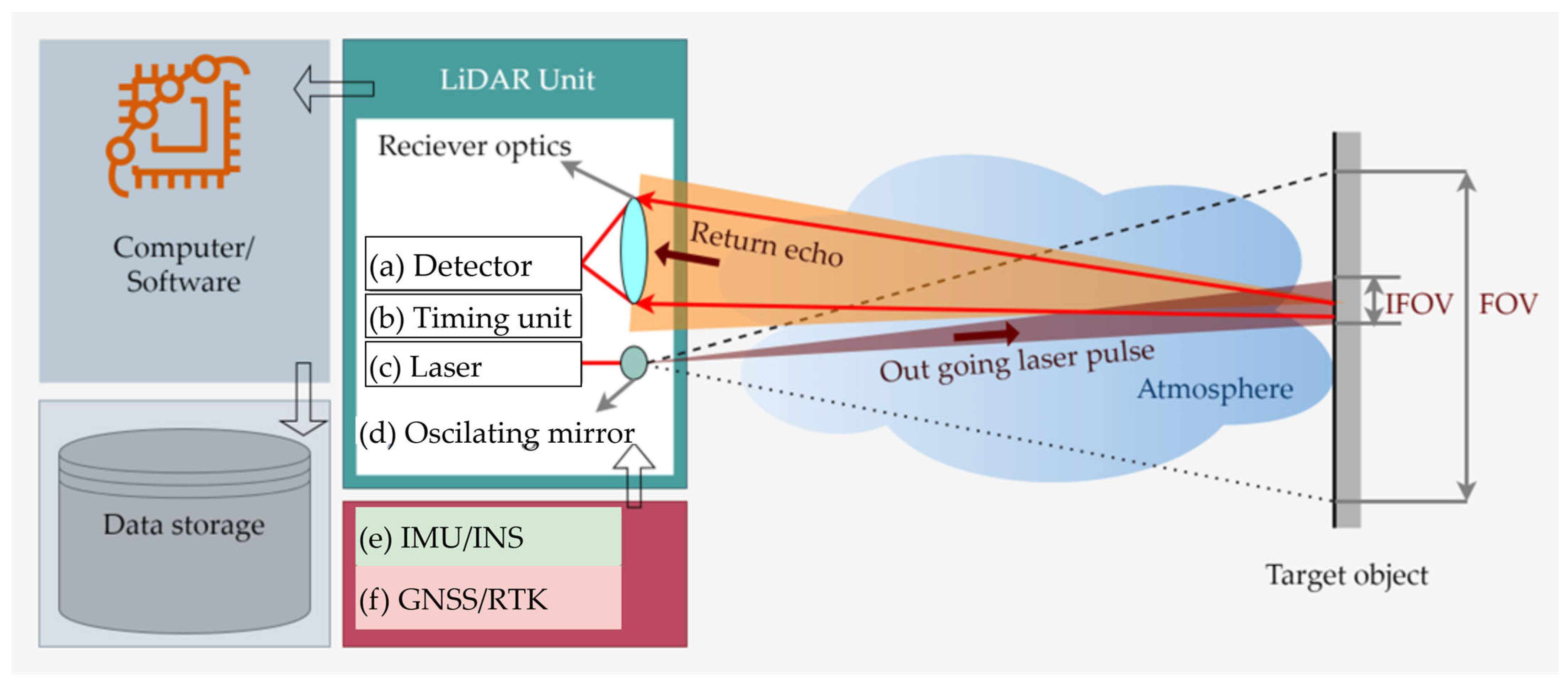

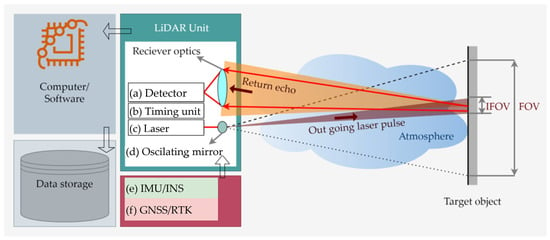

A LiDAR unit integrates laser(s), receiver optics, and a timing unit with an IMU/INS and GNSS (Figure 3) [165,166]. A precise internal timing unit records the time elapsed between the outgoing laser pulse and the backscattered echoes received at the sensor’s receiver–detector (Figure 3a,b) to calculate the range to the target objects. Range is calculated using two widely used methods: time of flight (TOF), which measures the time taken by an emitted laser pulse to return from the target, and the phase-shift (PS) method [167], in which the transmitted and received signals are superimposed and their phase difference provides the range to the target [168]. An oscillating mirror is used to cover the field of view (FOV), i.e., the maximum area that can be seen by a laser scanner (Figure 3d). The instantaneous field of view (IFOV) is the individual laser footprint, which depends on the laser beam divergence (Figure 3c), i.e., the spreading of laser light as it travels through the atmosphere, measured in milliradians (mrad). The footprint size depends on several factors, such as atmospheric conditions and distance from the target. For instance, LiDAR casts a larger IFOV when atmospheric conditions deteriorate or when target distance increases; consequently, SLS platforms like ICESat-2 produce a laser footprint of about 14 m in diameter, while airborne LiDAR yields a footprint of about 30 cm. Moreover, beam divergence varies with the wavelength at which the laser pulse is transmitted, i.e., lasers emitting at different wavelengths have different degrees of beam divergence [169]. Understanding these differences in the physical principles of LiDAR sensors is essential for quantifying forest ecosystems in a multi-sensor context [162].

Figure 3.

Schematic illustration of the operating principle of a LiDAR system. The LiDAR unit consists of (a) a detector, (b) a timing unit, (c) a laser source, (d) an oscillating mirror, (e) an IMU/INS, and (f) a GNSS/RTK unit. The outgoing laser pulse is transmitted toward the target, and the returned echo is received by the detector. The recorded signals are processed by the onboard computer/software and subsequently stored in the data storage unit.
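
The ranging principles described in Section 4.1 can be illustrated numerically. The following is a minimal sketch, assuming the textbook relations R = c·t/2 for TOF ranging, R = c·Δφ/(4πf) for an amplitude-modulated phase-shift ranger, and a small-angle footprint equal to range × divergence. The function names and the example values are hypothetical and are not taken from any sensor data sheet.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, m/s


def tof_range(round_trip_time_s: float) -> float:
    """Range from time of flight: the pulse travels to the target and back."""
    return C * round_trip_time_s / 2.0


def phase_shift_range(phase_shift_rad: float, modulation_freq_hz: float) -> float:
    """Range from the phase shift of an amplitude-modulated beam.

    The result is unambiguous only within half a modulation wavelength,
    i.e., c / (2 f).
    """
    return C * phase_shift_rad / (4.0 * math.pi * modulation_freq_hz)


def footprint_diameter(range_m: float, divergence_mrad: float) -> float:
    """Small-angle footprint diameter; the exit-aperture diameter is ignored."""
    return range_m * divergence_mrad * 1e-3


# Illustrative values only (assumptions, not sensor specifications).
print(tof_range(2000.0 / C))             # echo delay of a target 1000 m away
print(phase_shift_range(math.pi, 10e6))  # half-cycle shift at 10 MHz modulation
print(footprint_diameter(1000.0, 0.3))   # 0.3 mrad beam at 1 km range
```

Under these assumptions, a 0.3 mrad beam at 1 km range gives a footprint of roughly 0.3 m, whereas the same divergence from a 480 km orbit would give roughly 144 m, which illustrates why spaceborne sensors need much narrower beams to reach footprints of tens of meters.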

4.2. LiDAR Modalities

With recent advances in sensor optics, LiDAR sensors can be broadly classified, from a purely operational perspective, into two main categories: linear-mode LiDAR and photon-counting LiDAR (PCL) systems [170,171]. Because MSL and HSL rely on the same operational techniques applied at several wavelengths, they fall within these two broad classes [172,173,174]. LiDAR modalities are distinguished by the sensitivity of their purpose-designed receiver optics, which determines how reflected laser echoes are handled by the receiver, as shown in Figure 4. The higher the sensitivity of the receiver optics, the weaker the signal they can detect [175]. Linear-mode LiDAR systems are further subdivided into two types: discrete-return and waveform [175].

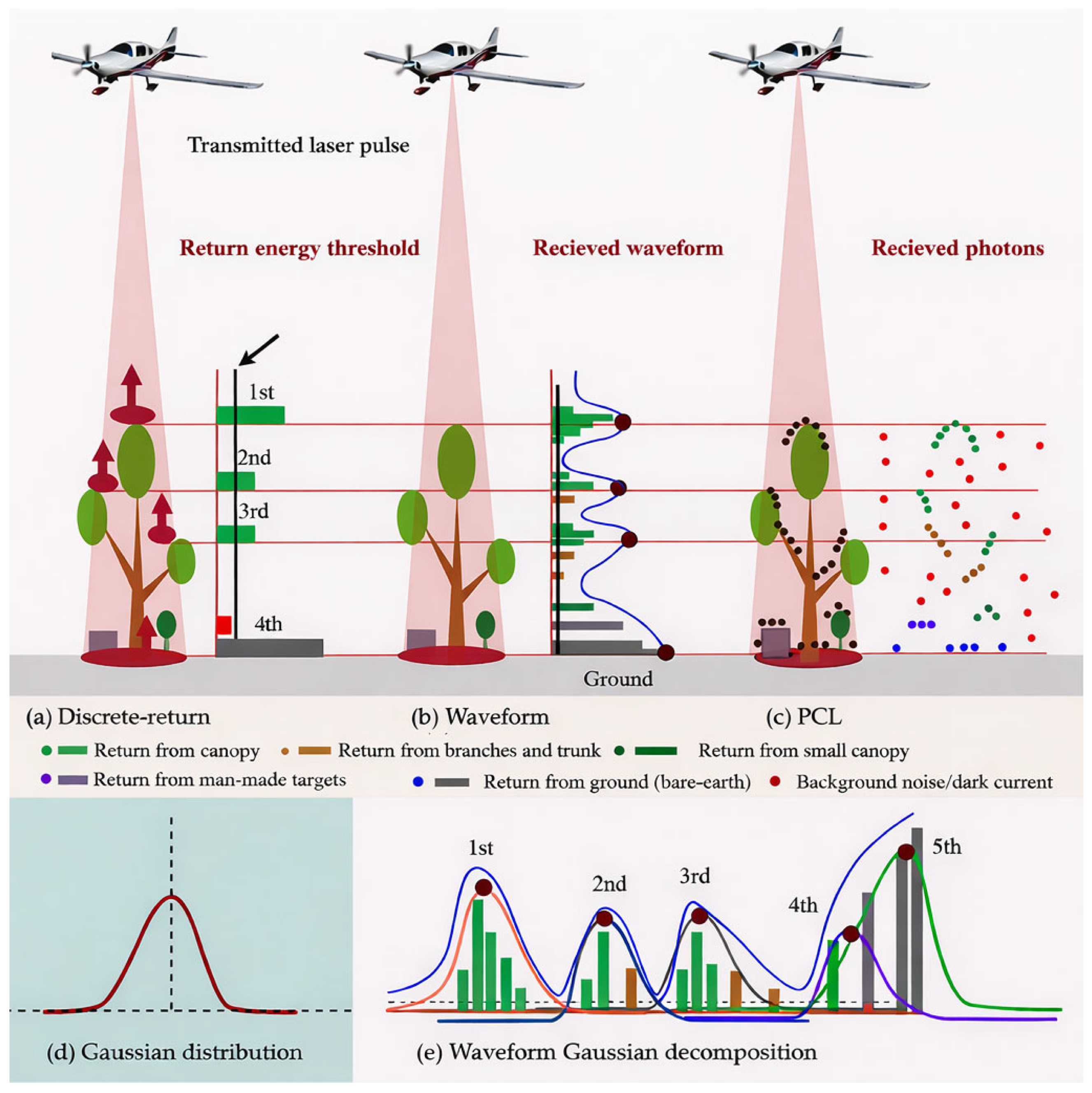

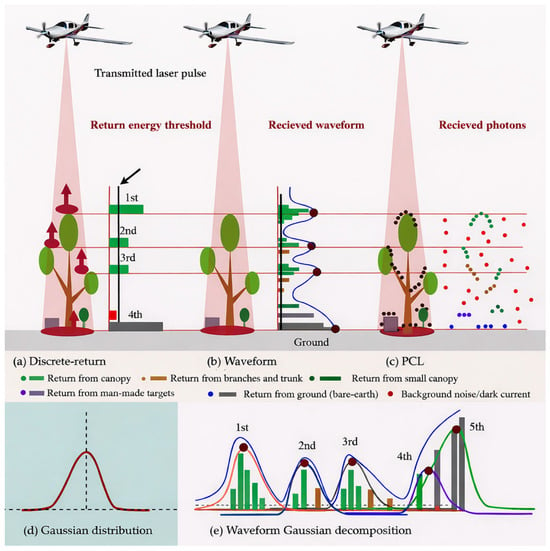

Figure 4.

Illustration of LiDAR modalities. (a) Linear-mode discrete-return. (b) Linear-mode waveform. (c) Photon-counting LiDAR (PCL). (d) Gaussian function for the energy distribution of a laser spot. (e) Gaussian decomposition of LiDAR waveforms to resolve different reflecting surfaces within the footprint of backscattered laser echoes.

4.2.1. Discrete Return

Discrete-return systems record the backscattered laser echoes that meet the receiver’s energy sensitivity threshold [64] (see Figure 4a). They act as gated systems: only significant returns are allowed through the receiver optics. For example, the portion of energy reflected from the top of the canopy (or from bare ground in open areas) is recorded as the first return, energy reflected from the middle canopy as the second and/or third return, and energy reflected from the understory, shrubs, or the ground as the fourth return [61]. Most discrete-return systems provide three or more returns, presenting the simplest geometry of terrestrial objects. Discrete-return systems produce a 3D cloud of point measurements referred to as a “point cloud” [175]. Over the past two decades, discrete return has been the most widely used linear-mode system, resulting in massive point clouds of forest ecosystems [18,65,150,162]. Early discrete-return systems offered easier onboard data storage, processing, and analysis, addressing technological constraints at a time when massive data processing and storage were computationally challenging [61]. At that time, point densities, i.e., the total number of returns per unit area (m²), were in the range of 1 to 1.5 points (pts)/m² [176]. More recently, discrete-return systems have undergone significant development in terms of onboard data storage, near-real-time data processing, and receiver optics [61,177]. Hence, massive data collection is possible with significantly higher point densities. Modern discrete-return systems can register more than 10 returns with point densities ranging from 5 to 360 pts/m², originating from terrestrial and aerial platforms [94,176,178].
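
Point density, as defined above, can be computed directly from the horizontal coordinates of a point cloud. The snippet below is a minimal sketch, assuming planar x/y coordinates in meters and a regular grid; the function name `point_density`, the 20 m plot, and the simulated returns are hypothetical and serve only as an illustration.

```python
import numpy as np


def point_density(x, y, cell_size=1.0):
    """Return a 2D grid with the number of returns per square metre."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    # Number of cells needed to span the data extent (at least one per axis).
    nx = max(1, int(np.ceil((x.max() - x.min()) / cell_size)))
    ny = max(1, int(np.ceil((y.max() - y.min()) / cell_size)))
    x_edges = x.min() + cell_size * np.arange(nx + 1)
    y_edges = y.min() + cell_size * np.arange(ny + 1)
    counts, _, _ = np.histogram2d(x, y, bins=[x_edges, y_edges])
    return counts / cell_size**2


# Simulated returns over a roughly 20 m x 20 m plot (illustrative only).
rng = np.random.default_rng(0)
xs = rng.uniform(0.0, 20.0, 4000)
ys = rng.uniform(0.0, 20.0, 4000)
density = point_density(xs, ys, cell_size=1.0)
print(density.shape, density.mean())
```

In practice, the grid would usually be aligned to the project coordinate system rather than to the data extent, and per-return or per-pulse densities would be reported separately.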

4.2.2. Waveform LiDAR

Waveform systems, also known as full-waveform laser scanning [64], register the entire backscattered signal with a much higher receiver sensitivity [179] (see Figure 4b). Consequently, waveform LiDAR provides richer information on vertical vegetation structural complexity than discrete-return systems [180,181].

To extract information from the waveform, Gaussian decomposition is commonly performed to convert the waveform into meaningful observations of terrestrial objects [182] (see Figure 4d,e). Waveform processing is a complex task that generally requires advanced signal processing pipelines to extract useful information about reflecting surfaces within the laser footprint [183]. A prerequisite of Gaussian decomposition is to define the number of curves to fit, i.e., the total number of reflecting surfaces to be resolved (Figure 4e). Over simple surfaces, e.g., flat ground, a single surface can be resolved by fitting a single Gaussian curve. Over complex surfaces, e.g., forest ecosystems, resolving all the reflecting surfaces within the laser footprint becomes challenging when the number of reflecting surfaces is unknown or when reflectance from different surfaces is diffuse [64] (see Figure 4e). Recent advances in LiDAR waveform decomposition include Linearly Approximated Iterative Gaussian Decomposition (LAIGD) [183], which iteratively detects weak laser echoes in complex waveforms; Bayesian decomposition [184], where waveform parameters are estimated within probabilistic frameworks; and B-spline and particle swarm optimization approaches [185]. Furthermore, the Fuzzy Mean-Shift approach [186], Gaussian mixture models [187], and DL approaches can resolve individual and diffuse reflecting surfaces from single-wavelength or MSL/HSL data [188,189,190]. In recent times, with computationally efficient systems, e.g., cloud computing, waveform LiDAR and advanced waveform decomposition methods have become increasingly popular in forest ecosystem studies [97,181,191,192,193,194].
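
The basic idea of Gaussian decomposition can be sketched as a nonlinear least-squares fit of a sum of Gaussians. The example below is only an illustration on a synthetic two-surface waveform, assuming the number of components and rough initial guesses are known in advance; it uses `scipy.optimize.curve_fit` and does not reproduce LAIGD or any of the other cited methods. The helper names `gaussian_sum` and `decompose` and all numeric values are hypothetical.

```python
import numpy as np
from scipy.optimize import curve_fit


def gaussian_sum(t, *params):
    """Sum of Gaussians; params = (amplitude, centre, width) per component."""
    t = np.asarray(t, dtype=float)
    total = np.zeros_like(t)
    for a, mu, sigma in zip(params[0::3], params[1::3], params[2::3]):
        total += a * np.exp(-0.5 * ((t - mu) / sigma) ** 2)
    return total


def decompose(t, waveform, initial_guess):
    """Fit len(initial_guess) / 3 Gaussian components by least squares."""
    popt, _ = curve_fit(gaussian_sum, t, waveform, p0=initial_guess)
    return popt.reshape(-1, 3)  # one row per component: amplitude, centre, width


# Synthetic waveform with a canopy echo and a ground echo (illustrative values).
rng = np.random.default_rng(42)
t = np.linspace(0.0, 100.0, 500)            # time bins, ns
truth = [1.0, 30.0, 4.0, 0.6, 70.0, 2.5]    # two (amplitude, centre, width) triplets
waveform = gaussian_sum(t, *truth) + rng.normal(0.0, 0.01, t.size)

components = decompose(t, waveform, initial_guess=[0.8, 28.0, 5.0, 0.5, 72.0, 3.0])
print(np.round(components, 2))
```

The fitted centres correspond to the ranges of the reflecting surfaces and the widths to their vertical spread; in real forest waveforms the number of components is usually unknown, which is the difficulty the iterative and probabilistic methods cited above are designed to address.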

4.2.3. Photon Counting LiDAR

PCL is an advanced LiDAR modality [195]. Unlike linear-mode systems, such as discrete-return and waveform, PCL technology is sensitive at the photon level, i.e., each photon reflected within the FOV of the sensor is recorded by single-photon detectors (SPDs) and counted as a valid observation, making it capable of recording thousands of returns to construct very high-density point clouds [153,196] (see Figure 4c). Photon-level sensitivity enables PCL systems, e.g., ICESat-2, to operate with reduced power at higher altitudes than linear-mode systems [193]. However, photon-level sensitivity also introduces background noise photons, originating from sunlight at the same spectral wavelength or from sensor thermal radiation (specifically dark current), as illustrated by the red dots in Figure 4c. Figure 4c indicates that signal photons are clustered, whereas noise photons are randomly distributed, which makes it feasible to separate noise photons from signal photons. With advanced noise filtering algorithms, a large share of the noise photons is removed from final deliverables [196,197]. Currently, PCL systems are operational at 1550 nm and 532 nm, given that PCL technology is mature at these wavelengths; an example is ICESat-2 [198]. With much higher point densities, PCL can capture forest structural complexities in more detail than its linear-mode counterparts [174,175]. PCL systems are viewed as the next-generation LiDAR for high-resolution forest ecosystem studies [195,199].
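
Because signal photons cluster while noise photons are spread randomly, even a simple density criterion separates most of them. The snippet below is a minimal sketch of a histogram-based filter, assuming a single along-track segment and a threshold of five times the median bin count; it is not the algorithm used in any operational product, and the function name, bin size, threshold, and simulated photon heights are all hypothetical.

```python
import numpy as np


def filter_noise_photons(heights, bin_size=1.0, k=5.0):
    """Flag photons that fall in height bins much denser than a typical bin.

    A bin is treated as signal when it holds more than k times the median
    bin count (the median is floored at 1 to handle sparse backgrounds).
    """
    heights = np.asarray(heights, dtype=float)
    h_min = heights.min()
    n_bins = max(1, int(np.ceil((heights.max() - h_min) / bin_size)))
    # Bin index of every photon; clip keeps the highest photon in the last bin.
    idx = np.clip(((heights - h_min) // bin_size).astype(int), 0, n_bins - 1)
    counts = np.bincount(idx, minlength=n_bins)
    threshold = k * max(float(np.median(counts)), 1.0)
    return counts[idx] > threshold


# Simulated segment: clustered surface returns plus uniform background noise.
rng = np.random.default_rng(0)
signal = rng.normal(20.0, 0.5, 500)       # photons from one reflecting surface
noise = rng.uniform(-50.0, 100.0, 200)    # randomly distributed noise photons
heights = np.concatenate([signal, noise])

keep = filter_noise_photons(heights)
print(f"signal kept: {keep[:500].mean():.2f}, noise kept: {keep[500:].mean():.2f}")
```

Noise photons that happen to fall inside the signal bins are retained by this criterion, which is why operational filters typically combine density measures in both the along-track and vertical directions.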

4.3. Carrier Platforms

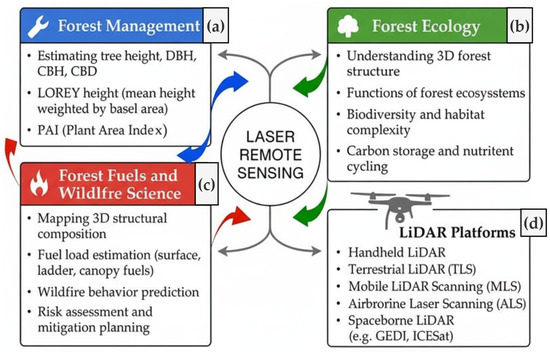

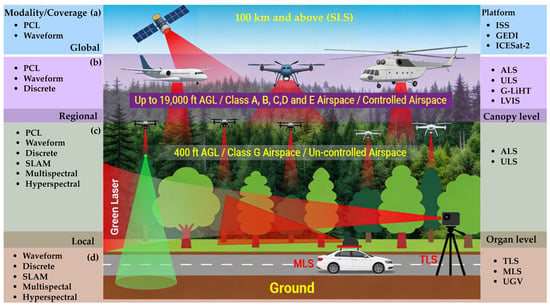

The choice of a LiDAR sensor modality (see Section 4.2) and carrier platform is influenced by several critical factors, including the characteristics of the operational environment, the scale and extent of the area to be mapped, logistical feasibility, and integration with supporting technologies, specifically IMUs and GNSS [200]. These diverse requirements have driven substantial advances in both LiDAR sensors (Section 4.2) and their carrier platforms over the past two decades [61,177]. This section provides a detailed overview of LiDAR sensors based on key aspects: (a) carrier platforms, (b) their payload capacities, (c) data quality and integrity, (d) spatiotemporal resolution, and (e) challenges in geometrical representation (Figure 5).

Figure 5.

Stratification of LiDAR sensor platforms by spatial coverage, acquisition mode, and operating altitude. (a) Spaceborne LiDAR systems (SLS), operating at orbital altitudes (≥~100 km above mean sea level), employ PCL or full-waveform techniques with meter-scale laser footprints to provide global observations (e.g., GEDI, ICESat-2). (b) Airborne LiDAR systems (ALS) deployed from crewed aircraft operate from approximately 120 m to 5800 m above ground level (AGL) within controlled airspace, supporting discrete-return, full-waveform, and photon-counting modalities with footprints ranging from centimeters (cm) to several meters (m), e.g., NASA’s Goddard LiDAR, Hyperspectral and Thermal Imager (G-LiHT), and the Land Vegetation and Ice Sensor (LVIS). (c) Low-altitude airborne and uncrewed systems, including commercial ALS and UAS LiDAR systems (ULS), operate below ~120 m AGL (often in uncontrolled airspace), enabling high-resolution canopy-level measurements with high pulse repetition frequencies. (d) TLS and MLS, mounted on ground-based platforms, e.g., Uncrewed Ground Vehicles (UGV) or crewed vehicles, acquire ultra-dense point clouds at the organ and surface-fuel scale, supporting detailed characterization of stems, branches, and understory vegetation.

4.3.1. Terrestrial Laser Scanning

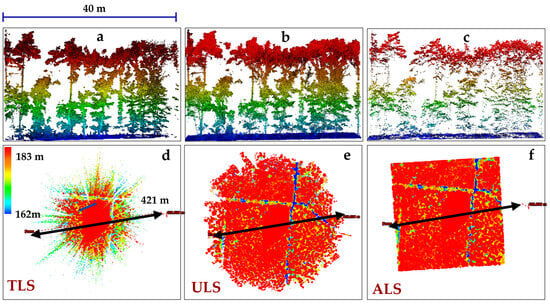

The term TLS is used when a LiDAR is operated in static mode, i.e., LiDAR unit(s) mounted on a fixed platform, e.g., a crane, tower, gantry, and/or tripod. Currently, TLS is operational in linear modes, specifically discrete-return and waveform [64,97,167,201]. TLS systems are primarily mounted on tripods with 360-degree scanning configurations in circular patterns [202]. Unlike ALS (Figure 6c,f) and ULS (Figure 6b,e), which scan vegetated landscapes from nadir and off-nadir positions, TLS point clouds are less affected by occlusions caused by branches, stems, and leaves [58,68] (see Figure 6a). However, the occlusion effect increases with distance from the scanner location, as objects near the scan station occlude those farther away [126,203]. To cover a larger geographic extent and mitigate the occlusion effect, several TLS stations are strategically distributed throughout the study area, and the point clouds originating from these stations are registered [58,204]. Point cloud registration is analogous to the georeferencing of satellite imagery to a single geographic coordinate system. In natural landscapes (see Figure 6), finding conjugate points, i.e., common reference points in point clouds originating from two different TLS stations, is a challenging task. Alternatively, co-registration is achieved using 12–18 retroreflective targets as control points or marker-free registration approaches [204]. TLS units integrated with GNSS can help to align TLS stations automatically. In a multiplatform setting (Figure 6), point clouds originating from aerial platforms, such as ALS and ULS, must be registered with concurrent TLS point clouds to obtain a detailed geometric representation of forest landscapes [203,205,206]. Under dense canopies, TLS captures the understory in more detail than ALS or ULS, whereas ALS and ULS capture the upper canopy in more detail than TLS [68,202,207]. However, the placement of TLS stations (Figure 6) requires theoretical and practical considerations, such as sensor type, range, and scanning configuration, for TLS surveys in diverse forest environments to obtain canopy structural details [208].
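
Target-based co-registration of two TLS stations reduces to estimating a rigid transformation from matched control points. The sketch below assumes that the same retroreflective targets have already been identified in both scans and uses the standard SVD-based (Kabsch) least-squares solution; it is an illustration rather than the procedure of any particular registration software, and the function name and simulated target coordinates are hypothetical.

```python
import numpy as np


def rigid_transform(source, target):
    """Least-squares rotation R and translation t with target ~ source @ R.T + t."""
    source = np.asarray(source, dtype=float)
    target = np.asarray(target, dtype=float)
    src_c = source.mean(axis=0)
    tgt_c = target.mean(axis=0)
    # 3 x 3 cross-covariance of the centred control points.
    H = (source - src_c).T @ (target - tgt_c)
    U, _, Vt = np.linalg.svd(H)
    # Guard against a reflection (determinant of -1) in the SVD solution.
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = tgt_c - R @ src_c
    return R, t


# Twelve simulated targets seen from two scan stations (illustrative values).
rng = np.random.default_rng(1)
targets_a = rng.uniform(-20.0, 20.0, size=(12, 3))
theta = np.deg2rad(30.0)
R_true = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                   [np.sin(theta),  np.cos(theta), 0.0],
                   [0.0,            0.0,           1.0]])
t_true = np.array([5.0, -3.0, 0.5])
targets_b = targets_a @ R_true.T + t_true

R, t = rigid_transform(targets_a, targets_b)
residual = np.abs(targets_a @ R.T + t - targets_b).max()
print(f"maximum residual: {residual:.2e} m")
```

With real targets, the residuals after the fit give a direct check on registration quality, and marker-free approaches typically refine such an initial alignment iteratively.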

TLS instruments, such as the Leica ScanStation, can capture up to 50,000 pts/s, with a maximum range of 134 m at ~18% surface reflectance and a narrow beam divergence of 0.14 mrad, operating in the visible portion of the electromagnetic spectrum (532 nm). The term 18% reflectance means that only 18% of the incident laser beam energy is reflected from the target object(s); the remaining 82% is either transmitted, reflected away from the sensor FOV, or absorbed by the target object(s) [209]. Figure 6a demonstrates the extensive TLS-based geometric representation of shrubs and of trees’ woody parts (stems and branches) and non-woody parts (leaves), which is essential for extracting forest structural attributes, e.g., DBH, tree height, stem density, PAI, LAI, and LAD [210]. TLS provides a time-series 3D representation of the forest’s vertical strata at a given locale, offering critical insights into forest ecosystem function and its response to physical changes driven by climate change [13,146,211]. To scale up 3D vertical stratification from the local to the landscape level, TLS has been used extensively in conjunction with other carrier platforms, such as ULS and ALS (Figure 6), and backpack laser scanners (BLS). A LiDAR unit in backpack configuration, carried by a person through the forest, is generally useful for compensating for the occlusion effects of TLS [100,212]. Recent reviews addressing TLS applications in forestry, e.g., separating tree wood and leaf components [213], an international TLS network for advancing 3D ecological insights into global tree systems [202], and expanding the horizon of TLS in forest ecology, are among the most significant contributions extending the knowledge on TLS applications [100,146].

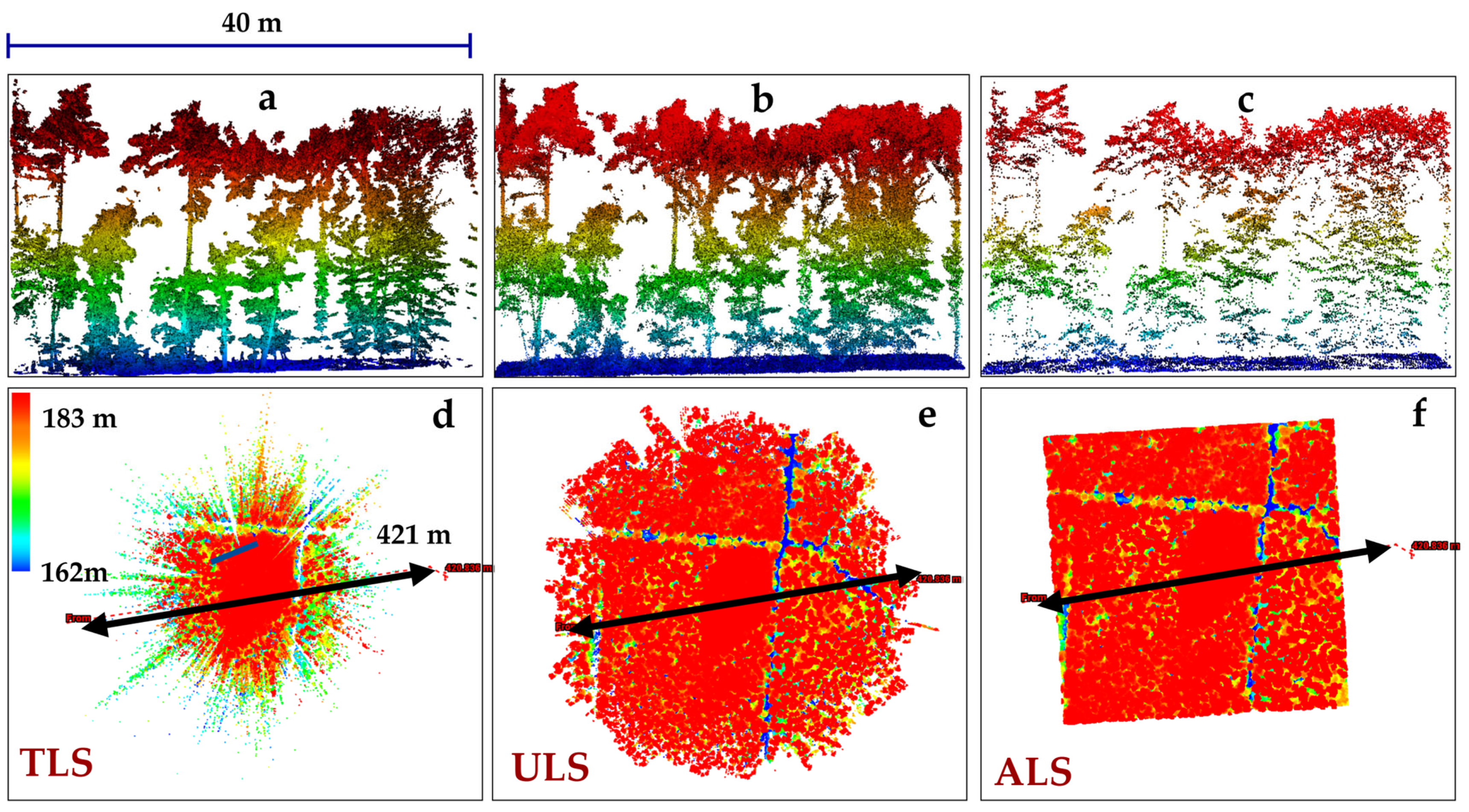

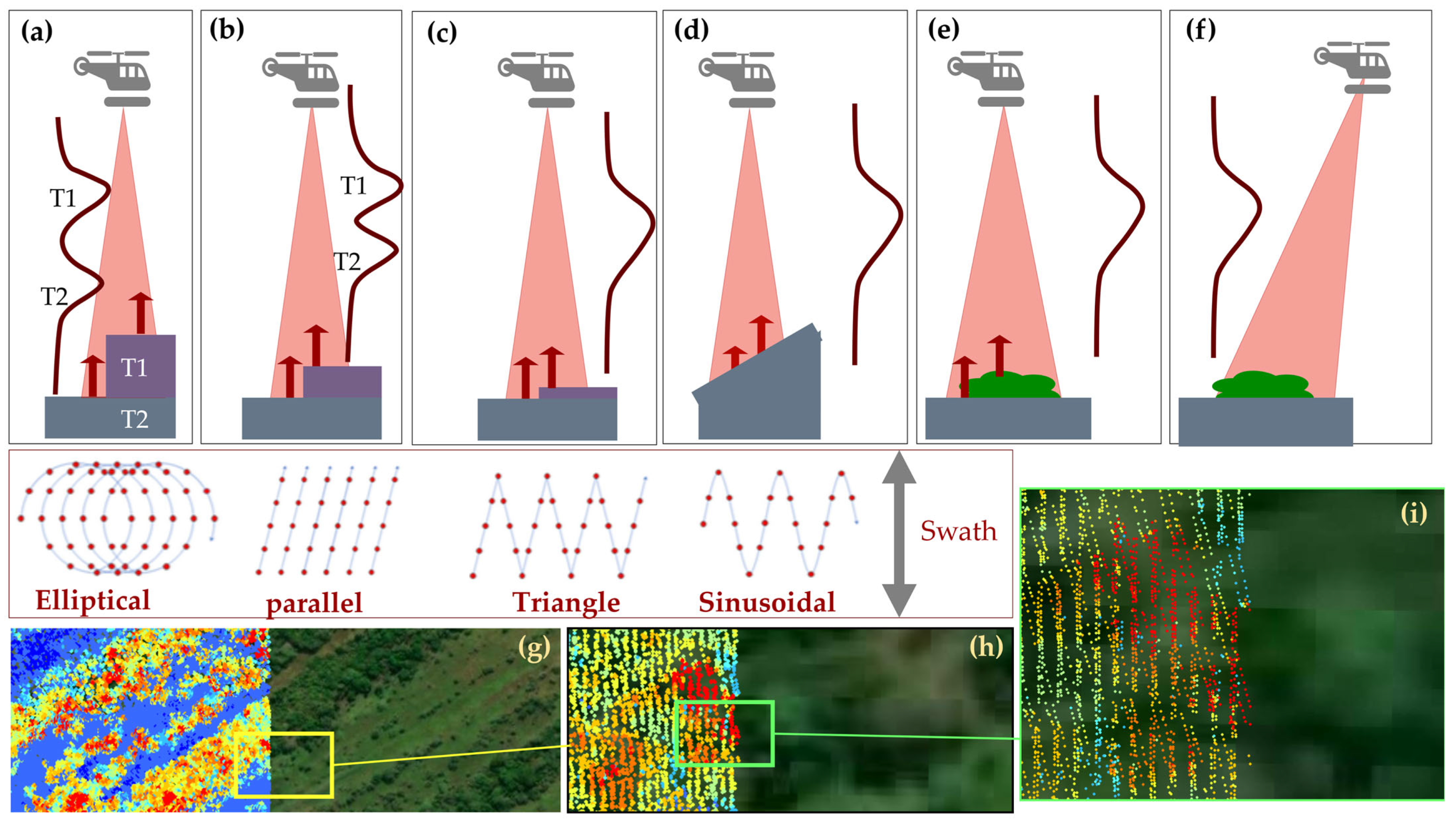

Figure 6.

Comparison of different LiDAR platforms for concurrent single surveys. (a–c) Side-view cross-sections (40 m transect, blue line in (d)) illustrating vertical canopy stratification, with point colors representing elevation above ground. (a) TLS captures detailed lower-canopy, stem, and understory structure but is affected by occlusion in the upper canopy. (b) ULS provides improved sampling of mid- and upper-canopy layers while partially resolving understory structure. (c) ALS primarily samples the upper canopy and ground surface, with limited representation of understory elements. (d–f) Plan-view representations of point-cloud density and sampling geometry for (d) TLS, (e) ULS, and (f) ALS, highlighting platform-dependent area-coverage in a single survey (black arrows). The concurrent open-source TLS, ULS, and ALS datasets were provided by Weiser et al. (2022) [214].

4.3.2. Mobile Laser Scanning

When a LiDAR is mounted on a moving platform, e.g., a crewed or uncrewed ground vehicle, a boat, or a robot, it is referred to as an MLS system. Hand-held MLS (HMLS) and BLS are also considered MLS [151]. MLS systems integrate several subsystems: laser unit(s), IMU(s), GNSS, and a control unit/onboard computer that operates all subsystems and handles the synchronization and integration of their measurements (Figure 3), data storage, near-real-time onboard data processing, and visualization [60,215].

Compared with TLS (Figure 6a,d), MLS provides continuous coverage along the carrier platform’s trajectory [215,216]. The geometric and positional accuracies of TLS and MLS are within millimeters (mm), provided that the LiDAR sensors are integrated with high-precision IMUs and GNSS. MLS is at the forefront of urban forestry, an emerging topic in modern cities and zero-emission ecosystems for combating climate change [217]. However, under dense forest canopy, GNSS positioning can be challenging due to signal obstruction, compromising the positional accuracy of MLS [166]. Classical MLS, e.g., mounted on vehicles, is challenging to deploy in complex forest ecosystems; however, collecting time-series LiDAR data along roads passing through the forest landscape provides an opportunistic window that has been successfully used for MLS applications [216]. Given the surveying challenges of complex forest landscapes, MLS is more favorable in urban forestry [217,218], while MLS in conjunction with TLS is more feasible in complex forested landscapes [100,119]. SLAM is also classified as an MLS system [217]. In addition to LiDAR-based SLAM systems, photogrammetry-based SLAM systems are available, which can effectively overcome GNSS-based positioning errors [56,166]. “Multiplatform MLS: Usability and Performance” provides further detail on different MLS carrier platforms and their applications in natural and urban environments [60].

4.3.3. UAS Laser Scanning

Unoccupied Aerial Vehicle (UAV), UAS, and Remotely Piloted Aerial System (RPAS) are the terms used for any aerial platform controlled by a remote pilot in command (PIC), including tethered systems, i.e., a UAS flying above the ground with a permanent physical link through a wire/cable, offering an unobstructed aerial view of large forested landscapes [122,212,219] (see Figure 6b,e). Moreover, LiDAR sensors onboard remotely controlled aerial platforms are also described by acronyms such as UAS-LiDAR [220] and drone LiDAR systems (DLS) [221]. In this article, we use the acronym “ULS,” which is more consistent with other laser scanning acronyms, e.g., MLS and ALS [61,215].

In recent times, UAS technology has matured significantly, with greater endurance, longer flight duration, autonomous flight, maneuverability, obstacle avoidance, terrain following, increased maximum take-off weight (MTOW), and vertical take-off and landing (VTOL) capabilities [222]. A high level of miniaturization of onboard computers, GNSS, INS, receivers, and antennas, i.e., integration of subsystems into a hybrid measurement unit (HMU), enables ULS operations in real time with cm-level positional accuracies [223,224]. Therefore, ULS are more versatile and easier to use for Photogrammetry and Remote Sensing (PaRS) applications over desired areas with multiple payload capabilities, including multispectral, hyperspectral, and thermal sensors [219,223,225]. ULS offers the added advantage of reduced operational costs, fuel, and crew size, and does not require the controlled-airspace clearance needed for ALS [143,226]. Moreover, ULS allows faster data collection and pre-/post-processing than ALS [227]. However, in the U.S., UAS are allowed in uncontrolled airspace (Class G; see Figure 5c) up to a maximum altitude of 400 ft (~120 m) AGL with an MTOW of 25 kg and a valid UAS pilot license [143]. Similar restrictions are applicable in many other countries. Such restrictions limit ULS data collection over wide areas compared with ALS [228,229].

TLS and MLS, which operate from the ground, pose navigational and logistical challenges in complex forest ecosystems [122]. Given the added advantage of aerial platforms, ULS has recently become more popular than TLS and MLS for LiDAR data collection over larger areas, while capturing similar geometric complexities of forest ecosystems (Figure 6). With off-nadir pointing and different flight directions, occlusion effects have been found to be reduced under dense forest canopies [59,226,230].

4.3.4. Airborne Laser Scanning

ALS systems have been operational since 1999 and are the leading technique for LiDAR data collection over larger extents in a wall-to-wall mapping configuration [65] (see Figure 6c,f). ALS have been deployed using various LiDAR modalities, including discrete-return, waveform, PCL, MSL, and HSL [61,174,177]. ALS, onboard crewed aircraft with an MTOW several-fold higher than that of ULS, is capable of covering much larger areas in a single flight [231]. The widespread adoption of ALS can be attributed to its operational maturity and development spanning over two decades (Figure 6c,f). ALS are employed at state or national scales, delivering high-resolution topographic data, e.g., the 3DEP program [18,65]. The extensive use of ALS is evidenced by numerous LiDAR-related publications, highlighting its success and reliability [18,150,174,232].

ALS are flown by commercial operators with funding from research institutions or the government to acquire LiDAR datasets over wide areas, generally covering hundreds of km² [65]. In developed countries, e.g., the United States, national-level ALS data are currently available for research and natural resource management [233]. However, multitemporal or bi-temporal (a site surveyed twice) datasets are only available over certain ecological regions in the U.S. and other developed regions, e.g., Europe and Oceania [96,234]. ALS surveys covering an entire country are costly, time-intensive, and may take years to complete. For example, USGS-3DEP production began in 2016, and by the end of 2023, approximately 94% of the area was covered [235]. Such surveys require airspace clearance, meticulous planning, and favorable weather, making multitemporal ALS data collection at national or continental scales challenging [236].

Instead, a transect-based approach is often adopted, where each ALS transect covers a minimum survey area, e.g., 10 km by 10 km, and can be repeatedly surveyed to obtain multitemporal data. For example, the National Ecological Observatory Network (NEON) has developed the Airborne Observation Platform (AOP) from an ecological perspective to understand socioecological systems and coupled human–natural systems in CONUS [66]. The AOP is purpose-designed to collect VHR spectroscopy (426 spectral bands; 380–2510 nm) and LiDAR concurrently over 81 sites, each with a minimum sampling area of 100 km². The AOP ensures higher spatial, temporal, spectral, and structural representation of unique terrestrial and aquatic ecosystems to understand their composition, structure, productivity, and disturbance status governed by natural and anthropogenic activities for the next 30 years [66]. ALS generates detailed vertical metrics for both area-based and single-tree-level approaches. Similarly, 901 ALS transects were randomly distributed across the Brazilian Amazon and surveyed in two consecutive campaigns (2016/2017 and 2017/2018) [237]. This approach enables efficient LiDAR data collection across distinct forest biomes within the available budget and timeframe. Nevertheless, MSL, HSL, and multitemporal ALS data over an entire country, state, or continent are challenging to acquire due to financial constraints [8].

4.3.5. Space-Borne LiDAR Systems (SLS)

With global coverage, SLS provides higher temporal resolution, with a monthly revisit time on average [105]. GLAS, onboard ICESat-1, was the first space altimeter to provide forest structural measurements using waveform LiDAR; it was followed by GEDI and ICESat-2, which have been operational since 2018 [155,238]. Research sensors such as LVIS are also used to support space-borne LiDAR efforts, including GEDI calibration and validation [183]. Currently, SLS is operational in two modalities: full waveform and PCL [155].

GEDI was designed to quantify forest ecosystem dynamics in 3D space and time [31], providing structural attributes such as canopy height, LAI, sub-canopy structure, and AGB through ~25 m footprint waveforms [155]. Onboard the International Space Station (ISS), GEDI aims to characterize forests and topography between 51.6°N and 51.6°S latitudes, producing eight laser tracks with an across-track separation of 600 m between consecutive tracks and covering a swath of ~4.2 km in a single pass, whereas the along-track separation between two consecutive laser shots on the ground is 60 m [73]. Compared with the waveform ICESat-1, which had a single ground track with a footprint diameter of 60 m, GEDI provides very high-resolution topographic mapping [73,102]. Conversely, ICESat-2 has global coverage with a footprint diameter of ~14 m, an along-track footprint separation of 0.74 m, and a total of six laser tracks on the ground with an across-track separation of 90 m between two consecutive tracks [73]. Unlike ICESat-1 and GEDI, ICESat-2, equipped with a PCL sensor, provides dense point cloud transects over terrestrial surfaces [239]. GEDI is specifically designed to study tropical forest ecosystems within 51.6°N and 51.6°S latitudes, a limitation stemming from the ISS orbital trajectory. Consequently, boreal forest ecosystems are not covered by GEDI footprints; however, ICESat-2 provides excellent spatiotemporal coverage from a polar low Earth orbit (LEO) at approximately 480 km above the ground [240]. Although the primary science target of ICESat-2 is the polar ice caps, its global coverage extends ICESat-2 data applications to forest ecosystem investigations similar to those of GEDI, e.g., forest canopy height [116].
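
The sampling figures quoted above can be cross-checked with simple geometry. The following sketch assumes evenly spaced parallel tracks and circular footprints; the function names are hypothetical, and the inputs are the values stated in the text rather than independent mission specifications.

```python
def swath_width(n_tracks: int, track_spacing_m: float) -> float:
    """Across-track distance between the outermost of n evenly spaced tracks."""
    return (n_tracks - 1) * track_spacing_m


def along_track_overlap(footprint_diameter_m: float, shot_spacing_m: float) -> float:
    """Fraction of a footprint diameter shared with the next shot along track.

    Returns 0.0 when consecutive footprints do not touch.
    """
    return max(0.0, 1.0 - shot_spacing_m / footprint_diameter_m)


# Values as quoted in the text above.
print(swath_width(8, 600.0))              # GEDI: eight tracks, 600 m apart
print(along_track_overlap(25.0, 60.0))    # GEDI: ~25 m footprints every 60 m
print(along_track_overlap(14.0, 0.74))    # ICESat-2: ~14 m footprints every 0.74 m
```

Eight tracks at 600 m spacing span 4200 m, consistent with the ~4.2 km swath. GEDI footprints of ~25 m spaced 60 m apart leave gaps along track, whereas ICESat-2 footprints overlap by roughly 95% of their diameter, which is what yields near-continuous photon transects.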

GEDI, ICESat-1, and ICESat-2 are profiling LiDAR systems that cannot cover the Earth’s entire surface, i.e., they do not provide wall-to-wall mapping. GEDI is estimated to sample about 4% of Earth’s land surface due to the sampling geometry of space-borne LiDAR. The SLS sampling geometry, particularly footprint size, shot spacing, and across-track configuration, directly controls the degree to which canopy heterogeneity is captured [62]. Moreover, cloud cover degrades the received laser echoes, as most of the energy is reflected from the cloud, further reducing data quality [241]. GEDI waveforms can also exhibit saturation behavior in very tall (>60 m) or structurally complex tropical canopies, where the returned energy may not fully represent the lower strata, introducing uncertainty in AGB retrieval; this can be compensated for by using GEDI power-beam and nighttime datasets [242]. In contrast, the ~14 m footprints and sub-meter along-track spacing of the ICESat-2 PCL sensor provide dense sampling transects but are affected by photon noise and background solar contamination and require temporal and spatial filtering to recover ground and canopy features reliably, particularly in dense forests or at high solar angles [241].

Recent studies have shown that these mission limitations can be mitigated by fusion frameworks that integrate SLS with multispectral imagery, Sentinel-1/2, Landsat, ICESat-2 ATL08 canopy metrics, and high-resolution ALS/ULS datasets [62]. Such hybrid approaches, ranging from statistical upscaling to advanced ML/DL models, greatly improve wall-to-wall AGB estimates, canopy height maps, and disturbance monitoring by transferring detailed structural information from ALS/ULS data to regions sampled sparsely by GEDI or ICESat-2 [40,232]. A growing body of literature demonstrates that incorporating contextual information (spectral, textural, topographic, and phenological predictors) reduces uncertainties in AGB, canopy cover, and height estimates, while also enabling cross-sensor harmonization [15,62,241]. Collectively, this integration framework has transformed SLS missions from stand-alone sampling systems into core components of multi-sensor global AGB and forest-structure mapping [63,239].

SLS has larger footprints, with a diameter ≥ 5 m. Consequently, individual tree-level structural attributes, e.g., DBH and individual tree height, are difficult to map. However, PAI, CBD, CBH, FHD, mean canopy height (MCH), and LAI have been successfully derived using area-based approaches at a 25 m resolution [20,31,192,243,244]. Currently, GEDI repeat footprints have been successfully used to measure forest disturbances, forest recovery, canopy cover (CC), the impact of fire severity on forest structure, vegetation coverage, the Clumping Index (CI; a measure of foliage grouping within the canopy that causes a non-random spatial distribution of leaves), forest canopy height, and AGB at regional, national, and global scales [63,105,155,232,243,245]. Moreover, the GEDI core science mission team has been processing GEDI data at the global level, resulting in global products: Level L1B (geolocated waveform); Level L2B (CCF, LAI, and LAI profile); Level L3 (waveform structural metrics); Level L4A (footprint level, ~25 m AGB); and Level L4B (AGB at 1 km resolution). GEDI data products have been extensively used to study forest ecosystem dynamics at scales and resolutions unprecedented in SLS history [102,155]. For countries with little or no ALS coverage, SLS data are an exceptional alternative for forest ecosystem investigations and forest management practices, supporting work on biodiversity conservation of national interest [213], carbon emissions, and forest fires [11,73,246].

The success of GEDI and ICESat-2 compared with their predecessor ICESat-1 is self-evident, with significant improvements over the past decade [73]. Among future missions, the Multifootprint Observation LiDAR and Imager (MOLI) is a candidate successor of GEDI that will be onboard the ISS with laser beams of varying footprints (currently, GEDI samples at a fixed footprint of ~25 m) [24]. With varying footprint sizes, MOLI is expected to estimate the slope effect on LiDAR waveforms, and its MS imager will provide concurrent vegetation indices to precisely determine the geolocation of the LiDAR footprints, thereby reducing the overall footprint geolocation error (GEDI has a nominal footprint geolocation error of ~10 m or more) [247]. Furthermore, the Earth Dynamics Geodetic Explorer (EDGE) is planned as the first global swath-imaging LiDAR satellite, featuring a U.S.-developed 40-beam system that will deliver unprecedented global coverage and rapid targeting of land, ice, and coastal regions; it is projected to launch in 2030 or 2032. EDGE will provide high-value, scientifically verified data for resource management, risk assessment, and strategic planning, while maintaining U.S. leadership in topographic LiDAR technology. Its laser altimetry will offer unmatched vertical accuracy and canopy penetration for monitoring three-dimensional changes in terrestrial and ice systems; however, EDGE is still in development and is not yet contributing data [248].

4.4. LiDAR Specifications

Quality Control (QC) is the first step in LiDAR data processing; it is generally carried out by the commercial operators responsible for LiDAR surveys [249]. QC checks the data against a standard protocol covering the horizontal and vertical accuracies of point clouds, coordinate systems, point density, and, in some cases, classification of point clouds into classes such as ground points (bare earth), trees, and buildings [250]. For small-footprint LiDAR systems (ALS, MLS, and TLS), waveform datasets are converted to high-density point clouds using waveform decomposition methods [250]. LiDAR performance is assessed against the LiDAR specifications, which apply to most sensors and are available in the user manual or from LiDAR operators [224,251]. These specifications are important for understanding sensor limitations as well as the quality of the data acquired. Furthermore, for multisensor LiDAR data acquisition, the specifications provide the sensors' operational parameters for data harmonization and calibration [162].

4.4.1. LiDAR Equation

A thorough understanding of the LiDAR equation (Equation (2)) is crucial, as it forms the basis for comprehending how most LiDAR system specifications are derived [252,253].

$$E_r = E_t \, \rho \, \frac{A_r}{\pi R^{2}} \, \eta_r \, T_{atm}^{2} \qquad (2)$$

Equation (2) is a simplified form of the LiDAR equation, where $E_r$ is the energy received at the detector with an effective aperture area $A_r$, $E_t$ is the total transmitted pulse energy, $R$ is the target range, $T_{atm}^{2}$ represents the two-way atmospheric transmission, $\eta_r$ is the receiver optical efficiency, and $\rho$ is the target reflectance. Here, $\rho$ represents an effective reflectance under the Lambertian approximation; for anisotropic targets, bidirectional reflectance distribution function (BRDF) effects modify the returned energy. The received energy is proportional to the receiver aperture area $A_r$; a larger aperture detects more reflected energy than a smaller one, thereby increasing the maximum detectable range. For example, the GEDI Receiver Telescope Assemblies (RTA) have a diameter of 0.8 m [247]. Moreover, higher target reflectance ($\rho$) results in increased backscattered energy, and $\rho$ depends on the sensor’s wavelength [254]. Currently, LiDAR systems commonly operate in the green and near-infrared (NIR) regions of the electromagnetic spectrum, where vegetation reflectance is relatively high and research maturity is well established (Section 4.1). Accordingly, topographic LiDAR systems deployed as ULS, ALS, MLS, and TLS platforms predominantly operate in the NIR region [136,254]. The received energy decreases approximately as $1/R^{2}$ due to geometric spreading and is further attenuated by the two-way atmospheric transmission $T_{atm}^{2}$, which depends on atmospheric conditions and path length; under haze, aerosols, or high water-vapor content, $T_{atm}^{2}$ decreases, resulting in reduced received energy [136].
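The scaling behavior described above can be checked numerically. The following is a minimal sketch, assuming the common extended Lambertian-target form of the simplified LiDAR equation, E_r = E_t · ρ · A_r / (πR²) · η_r · T, with T the two-way atmospheric transmission. The function name, parameter names, and all input values other than the 0.8 m aperture diameter are hypothetical and chosen only for illustration.

```python
import math


def received_energy(e_t, rho, aperture_diameter_m, range_m,
                    t_two_way=1.0, eta_r=1.0):
    """Received energy for an extended Lambertian target (assumed form).

    e_t                 transmitted pulse energy (any energy unit)
    rho                 effective target reflectance (0..1)
    aperture_diameter_m receiver aperture diameter in metres
    range_m             sensor-to-target range in metres
    t_two_way           two-way atmospheric transmission (0..1)
    eta_r               receiver optical efficiency (0..1)
    """
    a_r = math.pi * (aperture_diameter_m / 2.0) ** 2  # aperture area
    return e_t * rho * a_r / (math.pi * range_m ** 2) * t_two_way * eta_r


if __name__ == "__main__":
    # Hypothetical inputs: unit pulse energy, reflectance 0.5, 0.8 m aperture.
    near = received_energy(1.0, 0.5, 0.8, 1000.0)
    far = received_energy(1.0, 0.5, 0.8, 2000.0)
    hazy = received_energy(1.0, 0.5, 0.8, 1000.0, t_two_way=0.6)
    print(f"doubling the range reduces energy by a factor of {near / far:.1f}")
    print(f"two-way transmission of 0.6 scales energy by {hazy / near:.1f}")
```

Under these assumptions, doubling the range quarters the received energy, and any loss in atmospheric transmission scales the return linearly, which is why aperture size and wavelength choice dominate sensor design at orbital ranges.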

4.4.2. Range Precision, Range Resolution, Scanning Patterns

Range precision determines the vertical measurement uncertainty (z) of terrestrial objects. For example, a range precision of ±2 cm indicates that repeated LiDAR measurements of the same target exhibit a random uncertainty on the order of 2 cm, typically quantified using the root mean square error (RMSE) relative to high-precision GNSS observations or in situ physical measurements [249]. Range precision is therefore a measure of repeatability rather than systematic bias and is commonly assessed by acquiring multiple measurements over identical targets across successive frames.
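To make the distinction between repeatability and systematic bias concrete, the sketch below separates three quantities for repeated measurements of one target: the spread about the measurements' own mean (precision), the mean offset from a reference (bias), and the RMSE against that reference, which combines both. The function names and the sample values are hypothetical, and the reference range stands in for a GNSS or in situ measurement.

```python
import math
import statistics


def range_precision(measurements):
    """Sample standard deviation: spread of repeated ranges about their mean."""
    return statistics.stdev(measurements)


def range_bias(measurements, reference):
    """Mean offset of the measurements from the reference range."""
    return statistics.mean(measurements) - reference


def range_rmse(measurements, reference):
    """Root mean square error against the reference (mixes spread and bias)."""
    squared = [(m - reference) ** 2 for m in measurements]
    return math.sqrt(sum(squared) / len(squared))


if __name__ == "__main__":
    # Hypothetical repeated ranges (m) to one target; reference range is 50.00 m.
    ranges = [50.03, 50.05, 50.04, 50.06, 50.02]
    print(f"precision (std): {range_precision(ranges):.4f} m")
    print(f"bias:            {range_bias(ranges, 50.0):.4f} m")
    print(f"RMSE:            {range_rmse(ranges, 50.0):.4f} m")
```

In this illustrative case the RMSE is dominated by the offset from the reference rather than by the scatter, so reporting RMSE alone would overstate the random uncertainty of the sensor.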

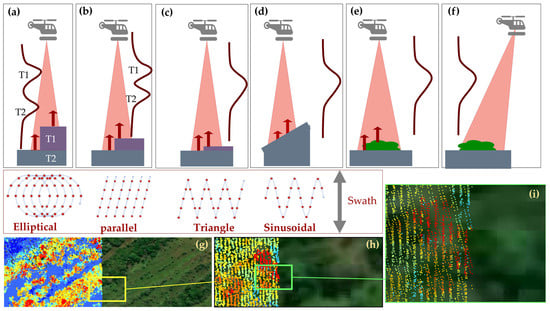

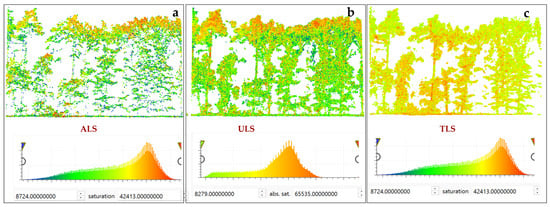

A related but distinct concept is range resolution, which refers to the minimum separable distance between two reflecting targets along the laser propagation direction [97]. LiDAR sensors are characterized by a predefined range resolution, determined by system parameters such as pulse duration and receiver bandwidth. When the range resolution is smaller than or equal to the vertical separation between two reflecting surfaces, the targets can be resolved as distinct returns (e.g., T1 and T2 in Figure 7a,b). Conversely, when the range resolution exceeds the separation between reflecting surfaces, the corresponding echoes overlap and cannot be resolved individually, resulting in a single broadened waveform (Figure 7c) [181]. Diffuse reflection also contributes to this broadening. Similar waveform broadening effects are observed over sloping terrain (Figure 7d), low vegetation such as shrubs (Figure 7e), and under off-nadir scanning geometries (Figure 7f). For example, the RIEGL miniVUX-1UAV sensor (RIEGL Laser Measurement Systems GmbH, Horn, Austria) has a nominal range resolution of approximately 0.90 m; consequently, vegetation layers with heights below ~0.90 m AGL cannot be reliably separated, whereas the RIEGL VUX-1UAV, with a finer range resolution of ~0.45 m, can resolve such low-stature vegetation [59,228]. Full-waveform decomposition techniques are particularly effective for separating overlapping echoes from closely spaced targets [181,185,189,191,196], as explained in Section 4.2.2. Accordingly, low vegetation is often difficult to identify and remove in DEMs derived from discrete-return LiDAR, whereas waveform decomposition has been shown to improve low-vegetation discrimination in DEM generation [191].

Pulse width (or pulse duration) is a key parameter influencing range resolution and is typically measured in nanoseconds (ns) [177]. For instance, the GEDI sensor operates with a pulse width of approximately 15 ns, quantified using the full width at half maximum (FWHM), defined as the temporal interval between the half-power points on the rising and falling edges of the pulse [255]. Using the FWHM, the theoretical range resolution can be estimated using Equation (3):

$$\Delta R = \frac{c \, \tau}{2} \qquad (3)$$

where $\Delta R$ is the range resolution, $c$ is the speed of light, and $\tau$ is the pulse width (FWHM). For the GEDI sensor, with $\tau$ = 15 ns, two target objects within the 25 m footprint must be separated vertically by at least ~2.25 m to be resolved. This is a coarser range resolution than that of ALS and ULS systems, which operate at FWHM values of 3–6 ns and therefore provide much finer range resolutions than their space-borne counterparts. SLS are designed to provide vertical stratification using area-based approaches, so a very fine range resolution is less important for them than for terrestrial and aerial LiDAR systems (TLS, ULS, and ALS), which provide vertical stratification at the individual tree/plant level [59,143,228] (see Figure 6).
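The relationship between pulse width and separability can be sketched numerically. The example below evaluates the nominal range resolution c·τ/2 for several FWHM values and then counts the peaks in a synthetic return from two targets. It is a simplified illustration under stated assumptions: a Gaussian pulse shape, two equal-strength targets, and a noise-free receiver; the function names are hypothetical. The exact separation at which two peaks appear depends on pulse shape and on the detection method, so the c·τ/2 value is a nominal figure rather than a hard limit.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, m/s


def range_resolution(fwhm_ns):
    """Nominal range resolution (m) for a pulse of the given FWHM (ns)."""
    return C * fwhm_ns * 1e-9 / 2.0


def gaussian(t_ns, centre_ns, fwhm_ns):
    """Unit-amplitude Gaussian pulse (assumed pulse shape)."""
    sigma = fwhm_ns / (2.0 * math.sqrt(2.0 * math.log(2.0)))
    return math.exp(-0.5 * ((t_ns - centre_ns) / sigma) ** 2)


def count_peaks(separation_m, fwhm_ns, dt_ns=0.05):
    """Count local maxima in the summed echo of two equal-strength targets."""
    delay_ns = 2.0 * separation_m / C * 1e9  # two-way travel-time difference
    first = 3.0 * fwhm_ns                    # leave room for the leading edge
    n = int((delay_ns + 6.0 * fwhm_ns) / dt_ns)
    wave = [gaussian(i * dt_ns, first, fwhm_ns)
            + gaussian(i * dt_ns, first + delay_ns, fwhm_ns)
            for i in range(n + 1)]
    return sum(1 for i in range(1, len(wave) - 1)
               if wave[i - 1] < wave[i] >= wave[i + 1])


if __name__ == "__main__":
    for fwhm in (3.0, 6.0, 15.0):
        print(f"FWHM {fwhm:4.1f} ns -> range resolution {range_resolution(fwhm):.2f} m")
    for sep in (1.0, 4.0):
        print(f"15 ns pulse, targets {sep} m apart -> {count_peaks(sep, 15.0)} peak(s)")
```

With a 15 ns pulse, targets 1 m apart merge into a single broadened echo, whereas targets 4 m apart produce two distinct peaks, which is consistent with the area-based use of SLS waveforms described above.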

Figure 7.

LiDAR range resolution and scanning patterns. (a–c) Effects of range resolution relative to target separation, illustrating elliptical, parallel, and triangular scan patterns. (d–f) Range resolution over sloped terrain, low vegetation–ground interactions, and off-nadir viewing geometry. (g) ALS point cloud overlaid on Google Satellite imagery [256]. (h,i) Zoomed views showing parallel scanning under different flight directions, yielding high-density point clouds; blue points indicate low elevation (ground/shrubs) and red points indicate high elevation (mangrove canopy) in the Zambezi Delta, Mozambique [257].

Transmitted laser pulses are characterized by their footprint size (a function of flight altitude), peak transmitted power, shape, beam divergence, and irradiance distribution over target objects. The received waveform is additionally influenced by target characteristics such as size, orientation, 3D arrangement of target constituents, and bidirectional reflectance distribution function (BRDF) characteristics [181]. Together, the implicit characteristics of the emitted pulse (peak power, shape, beam divergence, and duration or length) and the explicit characteristics of the backscatter (the structural complexity or simplicity of the surface) determine the range resolution [138,169]. Among the implicit characteristics, the ability to separate target objects, e.g., the different layers of a forest canopy (termed the “scatterer depth”), depends strongly on pulse duration or length [228,258]. In general, longer-duration laser pulses penetrate to the forest floor beneath taller canopies more reliably than shorter-duration pulses. This is because a shorter emitted pulse (in ns) decays quickly while traveling through the scatterer depth, such that the entire waveform is consumed before reaching the forest floor, or the reflected pulses from surface layers are too weak to reach the noise exceeding amplitude sequences (NEASs) [259]. NEASs define a threshold above which the amplitudes of reflected pulses are considered valid target reflections. Typically, to obtain higher confidence in target resolution, NEASs need to be five times higher than the noise threshold. This excludes weak backscatter and limits the LiDAR resolution, for instance when the backscatter length is larger than the LiDAR system’s maximum recording length [181,247].

To overcome these limiting factors, namely waveform energy and duration, a more powerful transmitted laser pulse of longer duration can sample the entire vertical 3D forest strata, thereby increasing the LiDAR resolution [181]. This solution has two facets: increased pulse energy helps resolve weak target backscatter by surpassing the NEASs, and a longer duration covers the entire backscatter length by receiving valid echoes from the forest canopy and ground simultaneously, yielding multiple returns (Equation (3)). In addition, sensitive receiver optics help to register even weaker backscatter, thereby increasing the LiDAR resolution. For a given increase in pulse energy, the behavior of the backscatter length depends on the LiDAR wavelength used. For that reason, bathymetric LiDAR operates at a visible wavelength (532 nm) to ensure penetration through the water column and a detectable return from the seafloor, which is challenging for Nd:YAG lasers operating at an NIR wavelength (e.g., 1064 nm) [181,259]. The impact of LiDAR wavelength on characterizing terrestrial surfaces is discussed in depth in Section 4.4.4.

Understanding the transmitted pulse length or duration is important when choosing a LiDAR sensor for forest surveys, because pulse duration is generally a built-in sensor parameter that cannot be programmed, unlike other operational parameters, e.g., PRR, pulse energy, flight altitude, and flight speed. For that reason, PRR, flight altitude, waveform energy, and flight speed are generally the parameters evaluated to assess the performance of LiDAR sensors [260].

Apart from range resolution, the scanning pattern also affects the sampled structural composition and target response. Along the flight path, the laser beam is oscillated within the given FOV (swath) to cover a larger area in a single flight (Figure 7). Mechanical oscillators steer the laser pulses across the sensor FOV in elliptical rings (Figure 7a), parallel lines (Figure 7b), or triangular (Figure 7c) and sinusoidal (Figure 7d) patterns. Unlike optical satellite imagery [133], densely sampled LiDAR point clouds (Figure 7g) are irregularly distributed, with an uneven number of points per unit area (Figure 7h,i). The uneven distribution is caused by occlusion: laser pulses cannot penetrate tree stems and branches [184]. Occlusion caused by directional blocking can be mitigated by flying several off-nadir-pointing passes over a given area. Figure 7i shows point clouds acquired from two different flight directions with a parallel laser scanning pattern [261].

4.4.3. Pulse Repetition Rate/Frequency

The pulse repetition rate (PRR) or pulse repetition frequency (PRF) of a LiDAR sensor refers to the number of laser pulses emitted per second. It is typically measured in hertz (Hz), where 1 Hz equals 1 pulse per second; modern LiDAR sensors operate at PRFs from a few hundred Hz to several hundred kHz (1 kHz = 1000 Hz) [262]. The PRF depends on the efficiency of the lasing material: a sensor with a more efficient lasing material generates more laser pulses per second. A laser scanner with a fixed PRF cannot fully resolve the forest vertical strata when the survey area transitions from savannas to dryland ecosystems or from boreal forests to dense tropical rainforests. Moreover, at a given PRR, forest vertical stratification is subject to pulse energy and flight speed (m/s) [263].

A general rule of thumb is that a higher PRF produces more backscattered laser echoes, thereby yielding higher point densities [264]. For sensors with a lower PRF, the desired point density can be achieved by reducing the speed of the carrier platform, although this is more challenging for fixed-wing aircraft than for helicopters [176]. ALS systems allow different PRFs to be programmed for data collection, e.g., the ALTM 3100 operates at 50 kHz and 100 kHz. ULSs provide PRFs comparable to ALS at much reduced pulse energy and altitudes [259]. For that reason, ULS provides much higher point densities than its counterpart, ALS (Figure 6). Increased PRR consumes more power from the carrier platform: ULS are operated at much reduced power, whereas ALS can afford the power consumption by drawing on manned aircraft, ensuring prolonged flight time and much larger area coverage. Modern LiDAR sensors come with higher PRFs, e.g., the RIEGL VQ-1560 versus the Optech ALTM 3100 (Teledyne Optech Inc., Vaughan, ON, Canada); see Table 3. A higher-PRF sensor yields higher point density and productivity; therefore, PRF remains a key selling point of LiDAR sensors [94,200].
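The interplay of PRF, platform speed, and altitude can be illustrated with a first-order estimate of pulse density for a single flight line: the PRF divided by the area swept per second (speed × swath width). This is a minimal sketch, assuming flat terrain, a swath width of 2·h·tan(FOV/2), uniform pulse spacing, and one return per pulse; the function names and all flight parameters below are hypothetical and do not describe any specific sensor in Table 3.

```python
import math


def swath_width(altitude_m, fov_deg):
    """Ground swath width (m) over flat terrain for a full scan angle FOV."""
    return 2.0 * altitude_m * math.tan(math.radians(fov_deg) / 2.0)


def pulse_density(prf_hz, speed_m_s, altitude_m, fov_deg):
    """Average emitted pulses per square metre for one flight line."""
    return prf_hz / (speed_m_s * swath_width(altitude_m, fov_deg))


if __name__ == "__main__":
    # Hypothetical configurations sharing the same 100 kHz PRF.
    als = pulse_density(100_000, speed_m_s=60.0, altitude_m=1000.0, fov_deg=40.0)
    uls = pulse_density(100_000, speed_m_s=5.0, altitude_m=80.0, fov_deg=40.0)
    print(f"ALS-like flight: {als:.1f} pulses/m^2")
    print(f"ULS-like flight: {uls:.1f} pulses/m^2")
```

Under these assumptions, a slow, low-flying platform achieves a far higher pulse density than a fast, high-flying one at the same PRF, and halving the platform speed doubles the density, which matches the trade-offs described above.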

Table 3.

A list of frequently used LiDAR sensors with their characteristics, platforms, flight altitudes, and pulse repetition rate (PRR) for 3D mapping of terrestrial ecosystems.