Development of an ESD Indicator for Teacher Training and the National Monitoring for ESD Implementation in Germany

Abstract

1. Introduction

2. Formal and Conceptual Context of the Study: National Monitoring of ESD Implementation

2.1. Broader Framework of National Monitoring for ESD

2.2. Theoretical Implications for the Indicator Development

3. Method and Data for ESD-Relevant TTs

3.1. Implications of Method for Indicator Development

3.2. Data Collection

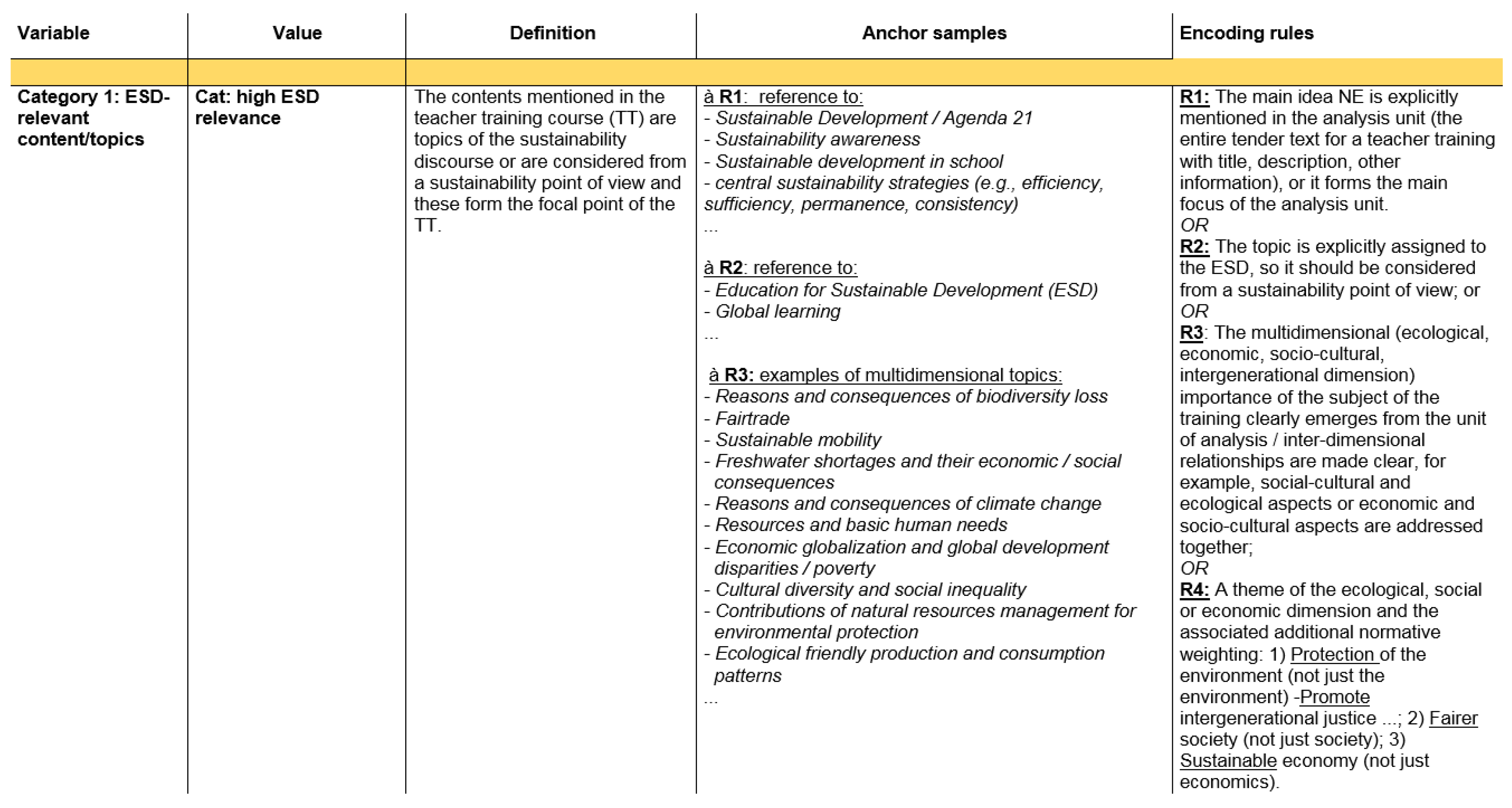

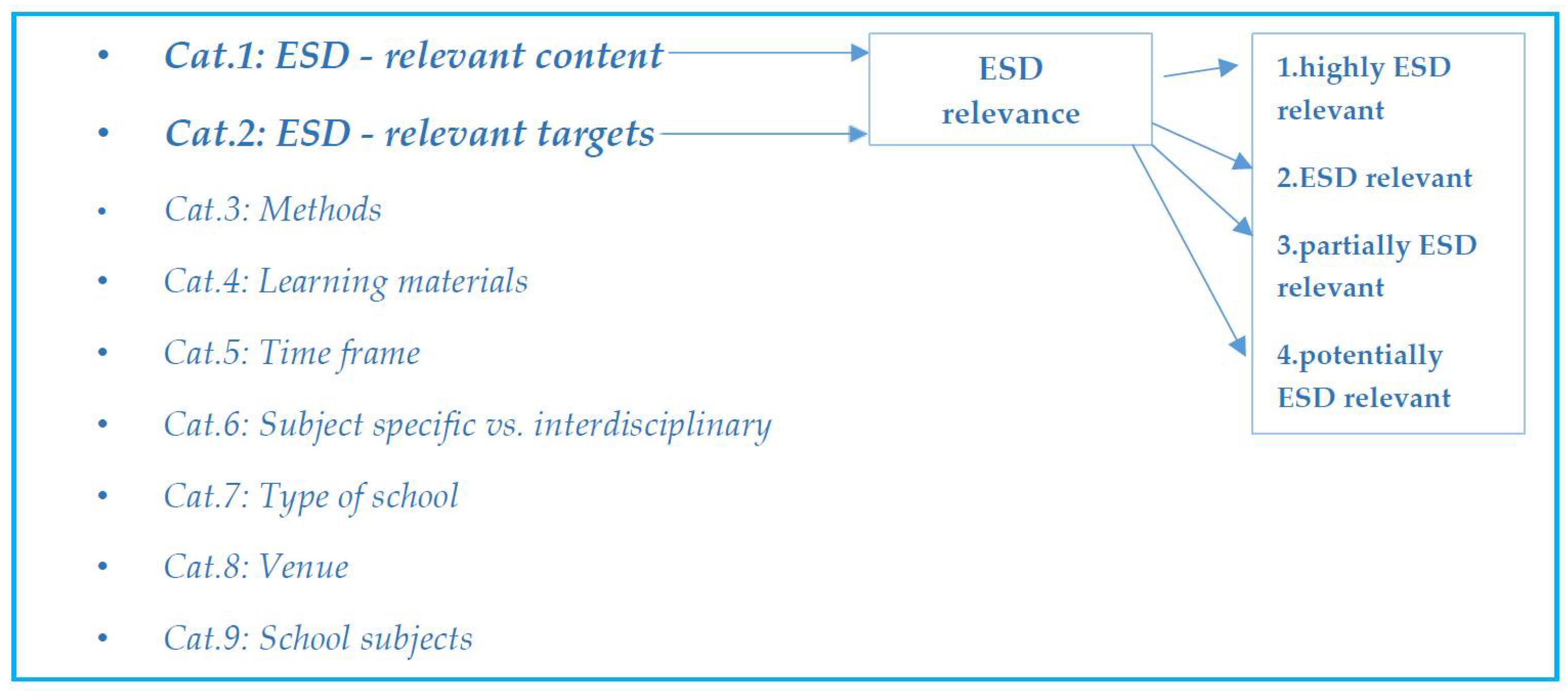

3.3. Data Analysis—Coding ESD-Relevant TT Using a Weighted Ordinal Coding System

4. Results

4.1. Total Numbers and Proportions for Germany: The Indicator FESD (Basic)

4.2. Rated ESD Relevance—FESD (Basic, Rated)

4.3. FESD(pro)—Qualitative Rating System for ESD Relevance with a Quantitative Aspect

4.4. Summary of the Results

5. Discussion

6. Conclusions & Outlook

Supplementary Materials

Author Contributions

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Meaning |
|---|---|
| BMBF | German Federal Ministry for Education and Research |
| ESD | Education for Sustainable Development |
| FESD | Formula for the ESD-indicator for TTs |
| GAP | Global Action Program |
| PISA | Program for International Student Assessment |
| TIMSS | Trends in International Mathematics and Science Study |
| TT | In-service teacher training accredited by the states |
| UNECE | United Nations Economic Commission for Europe |
| UNESCO | United Nations Educational, Scientific and Cultural Organization |
| SDGs | Sustainable Development Goals |

| Quantity | Value |
|---|---|
| TTtotal = total number of TTs accredited by the state(s) | 111,589 |
| N = number of teachers who worked in Germany during the evaluation period | 574,8932 |

| | highly ESD-relevant TT (rated by y4 = 1) | ESD-relevant TT (rated by y3 = 0.75) | partially ESD-relevant TT (rated by y2 = 0.5) | potentially ESD-relevant TT (rated by y1 = 0.25) | TTESD = total of ESD-relevant TT |
|---|---|---|---|---|---|
| TTESD = ESD-relevant TT accredited by the state(s) | 385 | 987 | 938 | 1508 | 3818 |

| | FESD (Basic) | FESD (Basic, Rated) | FESD (Pro) |
|---|---|---|---|
| Federal states’ min. | 1.08 | 0.63 | 0.01 |
| Federal states’ max. | 9.01 | 4.20 | 0.19 |
| National indicator ratio (for Germany) | 3.42 | 1.77 | 0.07 |
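
The national figures in the tables can be cross-checked with a short calculation. The sketch below is not the authors' stated formula; it assumes that FESD (Basic) is the percentage share of ESD-relevant TTs among all accredited TTs, and that FESD (Basic, Rated) is the same share with each TT weighted by its rating (y4 = 1, y3 = 0.75, y2 = 0.5, y1 = 0.25). The function and variable names are illustrative. Under these assumptions the tabulated counts give the national values 3.42 and 1.77. FESD (Pro) is not reproduced here because its additional quantitative inputs do not appear in the tables.

```python
# Values copied from the tables above.
TT_TOTAL = 111_589  # TTtotal: all state-accredited TTs

# Number of ESD-relevant TTs per rating weight (y4 = 1 ... y1 = 0.25).
TT_ESD_BY_WEIGHT = {1.0: 385, 0.75: 987, 0.5: 938, 0.25: 1508}


def fesd_basic(counts, tt_total):
    """ASSUMED formula: unweighted share of ESD-relevant TTs, in percent."""
    return 100 * sum(counts.values()) / tt_total


def fesd_basic_rated(counts, tt_total):
    """ASSUMED formula: rating-weighted share of ESD-relevant TTs, in percent."""
    weighted_sum = sum(weight * n for weight, n in counts.items())
    return 100 * weighted_sum / tt_total


basic = fesd_basic(TT_ESD_BY_WEIGHT, TT_TOTAL)
rated = fesd_basic_rated(TT_ESD_BY_WEIGHT, TT_TOTAL)

print(f"FESD (Basic):        {basic:.2f}")
print(f"FESD (Basic, Rated): {rated:.2f}")
```

Keeping the counts keyed by weight means the same dictionary could be filled per federal state to compare against the reported minimum and maximum values, provided the state-level counts are available.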

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Waltner, E.-M.; Rieß, W.; Brock, A. Development of an ESD Indicator for Teacher Training and the National Monitoring for ESD Implementation in Germany. Sustainability 2018, 10, 2508. https://doi.org/10.3390/su10072508
