Abstract

To address the insufficient capture of temporal dependence and the weak coupling of external factors in multivariate load forecasting, this paper proposes a Transformer model that integrates timestamp-based positional embedding and convolution-augmented attention. The model enhances temporal modeling capability through timestamp-based positional embedding, optimizes local contextual representation via convolution-augmented attention, and achieves deep fusion of load data with external factors such as temperature, humidity, and electricity price. Experiments on the 2018 full-year load dataset for a German region show that the proposed model outperforms single-factor and multi-factor LSTMs in both short-term (24 h) and long-term (cross-month) forecasting. The results verify the model’s accuracy and stability in multivariate load forecasting, providing technical support for smart grid load dispatching.

1. Introduction

With advancements in technology and the transformation of traditional power systems into modern ones, significant changes are expected in power generation structures, load characteristics, balance models, and market environments. At the same time, several pressing issues have emerged that require attention: the power generation structure and load patterns are undergoing substantial changes, leading to a significant increase in the randomness and volatility on both the generation and consumption sides. The integration of renewable energy, electric vehicles, and demand response resources has made power grid operation more complex. Moreover, the intertwining of internal and external environments, along with the compounded requirements of various policies, poses significant challenges to ensuring power supply security. Meanwhile, profound changes in the power market environment offer new opportunities for leveraging load-side resources. As a result, the precise forecasting of electricity load data has become a key research focus.

Taking the Guangdong Power Grid in China (the core load center of China Southern Power Grid) as an example, its maximum load exceeded 150 GW in the summer of 2024, a year-on-year increase of 7.8%. Driven by manufacturing reshoring, data center clusters, and surging EV charging load, the traditional “double-peak” load pattern has evolved into a “multi-peak + extended plateau” pattern. With a daily peak-valley load difference rate over 50%, the summer evening peak (18:00–20:00) sees a load change rate of 18–22% because zero PV output coincides with air conditioning load, and traditional prediction models have an error above 12% in this period. This directly causes real-time electricity prices to surge 300% above the day-ahead average, local overload risks from insufficient reserve capacity, and up to 13% energy waste in extreme cases. These figures illustrate the practical urgency of the three core challenges: “load non-stationarity, weak coupling of external factors, and poor generalization of sudden load”.

By forecasting electricity load data, power departments can better predict and plan load demand to meet the requirements during peak and off-peak periods. This helps avoid power shortages and oversupply, enhances the power system’s efficiency, alleviates pressure on the system, reduces energy waste, and decreases electricity costs.

Load forecasting is affected by multiple factors, such as temporal characteristics, meteorological conditions, and user behavior, presenting three core challenges. The first is the non-stationarity of load time series. Load data shows obvious daily and weekly periodicity but is also disturbed by random factors (e.g., sudden changes in temperature and temporary industrial production), leading to nonlinear fluctuations. The second is the weak coupling of external factors. Temperature, humidity, electricity prices, and other factors have complex nonlinear correlations with load, and it is difficult for traditional models to fully capture this coupling. The third is the poor generalization of sudden load. Load spikes caused by short-term centralized electricity consumption (e.g., air conditioning use in high temperatures) account for only a small part of the total samples, making it easy for models to underfit these extreme cases.

To address these challenges, researchers have proposed various solutions. Traditional statistical methods such as ARIMA rely on linear assumptions, while classical machine learning methods such as XGBoost depend on shallow, hand-crafted feature engineering, making it difficult for either to handle nonlinear time-series features. Recurrent neural networks (RNNs), such as LSTMs and GRUs, have made breakthroughs in capturing temporal dependencies, but they suffer from vanishing gradients when processing long sequences, limiting their ability to model long-term load patterns. Convolutional neural networks (CNNs) are effective at local feature extraction but lack the ability to model global temporal dependencies. Recent Transformer-based models leverage self-attention mechanisms to capture long-range dependencies, but most fail to fully integrate external factors and temporal position information, resulting in insufficient local feature modeling for sudden load changes.

To overcome the limitations of existing methods, this paper proposes an enhanced Transformer-based model for multivariate load forecasting. By introducing timestamp-based positional embeddings and convolution-augmented attention, the model enhances the capture of temporal information and local contextual features, enabling deep fusion of load data and external factors. The key contributions of this work are summarized as follows:

1. Development of an advanced Transformer-based deep neural network for multivariate load forecasting. The model integrates load data with external influencing factors, such as temperature, humidity, and electricity prices, to enhance prediction accuracy.

2. Introduction of a timestamp embedding mechanism to enhance temporal sequence modeling. Timestamp information is separately extracted and incorporated into the input data via position embedding, thereby strengthening the model’s ability to capture temporal dependencies and improve forecasting precision.

3. Incorporation of convolution-augmented attention to refine local contextual modeling. A convolution operation is introduced before the multi-head attention mechanism in the Transformer architecture to enhance the representation of local patterns. This reduces the attention mechanism’s focus on anomalous data, thereby minimizing its impact on prediction results and enhancing the accuracy of load forecasting.

4. Extensive empirical evaluation on a real-world dataset, utilizing electricity load data from a region in Germany. The proposed model demonstrates superior forecasting performance, achieving consistently better results across the MAPE, RMSE, MAE, $R^2$, SMAPE, and MSLE metrics, indicating improved prediction accuracy in both short-term and long-term load forecasting.

In summary, this paper focuses on core issues in multivariate load forecasting, such as insufficient temporal dependence capture and weak external factor coupling. The research core is to develop an enhanced Transformer algorithm with “timestamp embedding + convolution-augmented attention”, and the ultimate goal is to provide accurate prediction support for power grid load dispatching and supply–demand balance.

2. Related Works

In recent years, the structure and control methods of power systems have undergone significant changes. An increasing volume of power data is being accumulated in the grid, making the extraction of valuable information from large-scale datasets essential for the development of power systems. One of the most critical pieces of information in power data is users’ electricity consumption patterns. By analyzing load data to understand electricity usage in a specific region, power companies can gain valuable insights for planning and policymaking.

User electricity consumption often exhibits periodic variations over time, such as hourly, daily, or weekly. In this study, we analyze and investigate user load data on a daily basis. Depending on the sampling frequency, daily load data can be categorized into 24 data points (sampled hourly), 48 data points (sampled every half hour), or 96 data points (sampled every 15 min). These daily electricity consumption samples provide a real-time reflection of users’ power usage fluctuations throughout the day.

In modern intelligent power systems, such load data are utilized for analyzing electricity usage types (consumption patterns), forecasting future load data, and making intelligent decisions for demand response, among other applications. For instance, Hosking et al. [1] used user load curves for short-term electricity consumption forecasting. Rhodes et al. [2] analyzed users’ electricity demand by clustering their load data, while Hyun et al. [3] investigated user behavior for anomaly detection.

In the field of multivariate load forecasting, extensive research has been conducted, primarily focusing on traditional methods, novel neural network approaches, and their combinations. Fang et al. [4] applied singular spectrum analysis (SSA) to a multivariate input recurrent neural network (RNN). Lang et al. [5] applied random weights and kernel functions to neural networks for short-term load forecasting using historical load and temperature data. Unterluggauer et al. [6] proposed a multivariate multi-step model based on LSTM for predicting short-term charging load data. Bracale et al. [7] and Xing et al. [8] employed multivariate quantile regression for short-term load forecasting. Huang et al. [9] introduced a hybrid forecasting model based on multivariate empirical mode decomposition (MEMD) and support vector regression (SVR), with parameters optimized using particle swarm optimization (PSO) to accurately capture peak power loads. Xiao et al. [10] proposed the multi-scale dilated long short-term memory (MSD-LSTM) model for short-term load forecasting with multivariate data. Khan et al. [11] utilized SVR to develop a multivariate time-series prediction model for load forecasting. These algorithms and models are mainly based on regression methods and simple neural network structures. However, these approaches are primarily applicable to low-dimensional data, limiting their ability to leverage additional factors that are strongly correlated with load data. As a result, they are unable to perform long-term load forecasting effectively.

Convolutional neural networks (CNNs) [12], primarily used in image processing, are effective at extracting features by preserving spatial relationships between pixels. Since time-series data can be transformed into 2-D curves, CNNs can be applied to these datasets for efficient feature extraction. Consequently, many studies have integrated CNNs into their forecasting models. Bendaoud et al. [13] provided 2-D inputs to a CNN for quarter-hourly and 24 h load forecasting. Dong et al. [14] combined CNNs with k-means clustering to enhance the scalability of short-term load forecasting. Deng et al. [15] utilized multi-scale convolutional networks (MS-CNNs) to extract features at different levels for short-term load forecasting. Jin et al. [16] proposed a CNN-GRU hybrid model optimized for short-term load forecasting through parameter-based transfer learning. Perera et al. [17] proposed a hierarchical temporal convolutional neural network (HTCNN) to effectively leverage time-series power data and weather data.

However, as more and more users’ electricity consumption data are collected by the power grid, existing research has revealed certain limitations. Recurrent neural network (RNN) models, such as LSTMs, suffer from issues such as vanishing gradients and the inability to train in parallel. To overcome these issues, attention mechanisms and Transformer models [18], which are based on the encoder–decoder architecture, are gaining traction. Tang et al. [19] incorporated attention mechanisms into temporal convolutional networks (TCNs) for short-term load forecasting. Yang et al. [20] developed an attention-based multi-input long short-term memory (MI-LSTM) with additional input gates to extract valuable information from each sub-series. He et al. [21] introduced attention mechanisms alongside convolutional neural networks (CNNs) to improve multivariate load forecasting performance. However, in the field of load forecasting, there is still limited research utilizing the complete Transformer model for prediction tasks.

To address these challenges, this paper introduces the Transformer model for load forecasting and proposes improvements specifically for multivariate load forecasting, exploring its optimization and application in this context.

3. Problem Description

Power systems can optimize electricity distribution and demand response planning through load forecasting. Accurate load forecasting forms the foundation for load assessment, power regulation, demand response, and overall power system management. In real-world electricity usage scenarios, user behavior is prone to fluctuations due to various external factors, such as climate change, holiday activities, and electricity price fluctuations. Traditional time-series regression algorithms or recurrent models may struggle to achieve accurate predictions for load data with such characteristics.

In light of these considerations, this paper proposes a multivariate load forecasting deep neural network model based on the Transformer architecture. The model integrates load data with external factors such as temperature, humidity, and electricity prices to enable precise load forecasting. It extracts timestamp information separately and embeds it into the input data through positional encoding, aiming to enhance the model’s temporal sequence modeling capability and improve prediction accuracy.

Moreover, the model introduces convolution operations before the multi-head attention mechanism of the Transformer architecture to improve the modeling of local contextual information. This approach reduces the attention mechanism’s focus on anomalous data, thereby mitigating its impact on prediction results and enhancing the overall accuracy of load forecasting.

4. Algorithm Model Design

4.1. LSTM Network Principle

To address the issue in RNNs where early information tends to be forgotten and dependencies between distant time steps are difficult to capture, Hochreiter et al. [22] proposed the LSTM model in 1997. The structure of one unit is shown in Figure 1. The core design of LSTM is the introduction of gate structures into the hidden layer of the basic RNN model, including the forget gate $f_t$, the input gate $i_t$, and the output gate $o_t$, which determine which information is discarded (forgotten) and which is added. $\sigma$ and $\tanh$ are the activation functions, $x_t$ and $h_t$ are the input and output of the current memory unit, and $c_t$ is the current cell state. The gate structures enable a load forecasting model to capture long-term dependencies in time-series data, thereby achieving good predictive performance.

Figure 1.

Structure of LSTM memory unit.
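For reference, the gate computations described in this subsection are commonly written as below. This is the standard textbook LSTM formulation rather than a transcription of the notation in Figure 1: $f_t$, $i_t$, and $o_t$ denote the forget, input, and output gates, $x_t$ and $h_t$ the input and output of the unit, and $c_t$ the cell state, while the weight matrices $W_\ast$, $U_\ast$ and the biases $b_\ast$ are assumed symbols introduced only for illustration.

```latex
\begin{aligned}
f_t &= \sigma\left(W_f x_t + U_f h_{t-1} + b_f\right) \\
i_t &= \sigma\left(W_i x_t + U_i h_{t-1} + b_i\right) \\
o_t &= \sigma\left(W_o x_t + U_o h_{t-1} + b_o\right) \\
\tilde{c}_t &= \tanh\left(W_c x_t + U_c h_{t-1} + b_c\right) \\
c_t &= f_t \odot c_{t-1} + i_t \odot \tilde{c}_t \\
h_t &= o_t \odot \tanh\left(c_t\right)
\end{aligned}
```

The additive update of $c_t$ is what lets information persist over many time steps: when $f_t$ stays close to 1, the previous cell state passes through almost unchanged.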

4.2. Attention Mechanism

The attention mechanism is a widely used technique in deep learning for processing sequential data and establishing internal relationships within input data. Initially introduced to improve natural language processing (NLP) tasks, it has since been widely adopted in fields such as computer vision and machine translation [23,24]. In the attention mechanism, the model dynamically assigns importance weights to different parts of the input, allowing it to focus more on the relevant sections for the given task or problem. This enables the model to automatically learn dependencies between different input elements without requiring manual encoding of these relationships.

For predictive tasks, the attention mechanism helps the model capture long-range dependencies more effectively, addressing the vanishing gradient problem that traditional recurrent neural networks (RNNs) encounter when handling long sequences.
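The weighting described above can be sketched with scaled dot-product attention, the form used in the Transformer [18]. The snippet below is a minimal pure-Python illustration on a toy sequence; the function names and the toy vectors are assumptions made for the example and are not taken from the authors' code.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(queries, keys, values):
    """Return (outputs, weights) for lists of equal-length vectors."""
    d_k = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Similarity of this query with every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys
        ]
        w = softmax(scores)
        # Output is the attention-weighted average of the value vectors.
        out = [
            sum(w[j] * values[j][c] for j in range(len(values)))
            for c in range(len(values[0]))
        ]
        outputs.append(out)
        weights.append(w)
    return outputs, weights


# Toy self-attention: the same three 2-D vectors act as queries, keys, and values.
seq = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = scaled_dot_product_attention(seq, seq, seq)
```

Each row of `weights` sums to 1, so every output is a convex combination of the value vectors; this is the sense in which the model "assigns importance weights" to different parts of the input.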

4.3. Transformer Model

The Transformer model [18], proposed by Google in 2017, is a deep learning architecture originally designed for natural language processing (NLP) tasks. It introduces the self-attention mechanism to handle the dependencies between different parts of the input sequence, allowing the model to consider all positions in the input sequence simultaneously. This helps the model capture long-range dependencies. As shown in Figure 2, the Transformer is based on an encoder–decoder structure, composed of multiple encoder layers and multiple decoder layers. Each encoder and decoder contains structures such as multi-head attention and feed-forward networks. The self-attention mechanism identifies the internal relationships within the input sequence. In multivariate load forecasting, the input sequence consists of multivariate factors and load data, forming multidimensional data. Through the self-attention mechanism, the model can capture the dependencies and relationships between load data and multivariate factors, as well as temporal dependencies, thus enabling accurate forecasting.

Figure 2.

Basic structure of the Transformer model.

4.4. Transformer-Based Multivariate Load Forecasting Model

To adapt the standard Transformer model to the multivariate load forecasting scenario, several improvements and customizations were made. The enhanced Transformer-based multivariate load forecasting model is illustrated in Figure 3.

Figure 3.

Transformer-based multivariate load forecasting model.

This model combines the timestamp vectors after positional embedding with the time-series data matrix of load and external factors as input. It then introduces a convolution kernel before the multi-head attention structure in the Transformer architecture to adjust the attention mechanism. Finally, the model outputs the predicted load values. By using preprocessed load data and external factors, the Transformer-based multivariate load forecasting model can effectively learn the influence of external factors on load data while establishing a temporal model for the load data, ultimately achieving accurate forecasting results.
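The effect of placing a convolution in front of the attention scores can be illustrated on a toy series. The sketch below is an assumption-laden illustration, not the authors' implementation: it uses a scalar series, a fixed smoothing kernel (a trained model would learn its kernel), zero padding, and scalar dot-product scores, none of which are specified in the paper.

```python
import math


def conv1d_same(series, kernel):
    """1-D convolution along time with zero padding so the length is preserved.

    Assumes an odd kernel length. As in deep-learning frameworks, the kernel
    is applied without flipping (cross-correlation).
    """
    half = len(kernel) // 2
    padded = [0.0] * half + list(series) + [0.0] * half
    return [
        sum(kernel[j] * padded[t + j] for j in range(len(kernel)))
        for t in range(len(series))
    ]


def attention_weights(query, keys):
    """Softmax of scalar dot-product scores between one query and all keys."""
    scores = [query * k for k in keys]
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


load = [1.0, 1.0, 1.0, 5.0, 1.0, 1.0, 1.0]  # toy series with one spike at index 3
kernel = [0.25, 0.5, 0.25]                   # hypothetical fixed smoothing kernel

smoothed = conv1d_same(load, kernel)

# Attention of the first time step over the whole series, without and with
# the convolution applied to queries and keys.
raw_weights = attention_weights(load[0], load)
conv_weights = attention_weights(smoothed[0], smoothed)
```

In this toy case the weight placed on the spike drops noticeably once queries and keys are built from the convolved series, because each key then summarizes its local neighborhood instead of a single point. This is the intuition behind reducing the attention mechanism's focus on anomalous samples.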

The position embedding in the model is designed to help the Transformer capture positional information in the time-series data, specifically the order of the load samples. It is one of the core components of the Transformer-based multivariate load forecasting model. Following the sinusoidal encoding of the original Transformer [18], it is defined as

$$PE_{(pos,\,2i)} = \sin\left(\frac{pos}{10000^{2i/d}}\right), \qquad PE_{(pos,\,2i+1)} = \cos\left(\frac{pos}{10000^{2i/d}}\right)$$

where $pos$ is the position of a time step, $i$ indexes the encoding dimensions, and $d$ is the dimension of the input data, which in load forecasting corresponds to the step size of the input data. Through positional encoding, each time step of the input data is mapped to a unique code. Additionally, using sinusoidal functions for positional encoding allows the model to attend to relative positional information in the input data, thereby enabling it to understand temporal information. Finally, the positional encoding vector is combined with the time-series data matrix to form the final input data matrix.
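A minimal sketch of a sinusoidal positional encoding is given below, assuming the standard form from the original Transformer [18] in which even columns use sine and odd columns use cosine. The function name, the choice of `d_model=8`, and the use of plain integer positions in place of parsed timestamps are assumptions for illustration only.

```python
import math


def positional_encoding(num_steps, d_model):
    """Return a num_steps x d_model table of sinusoidal position codes."""
    table = []
    for pos in range(num_steps):
        row = []
        for k in range(d_model):
            # Columns 2i and 2i+1 share the same frequency 1 / 10000^(2i/d_model).
            angle = pos / (10000 ** ((k - k % 2) / d_model))
            row.append(math.sin(angle) if k % 2 == 0 else math.cos(angle))
        table.append(row)
    return table


# 7 days of hourly samples give 168 positions.
pe = positional_encoding(num_steps=168, d_model=8)
```

Every row of `pe` is distinct, which is the property the text relies on when it says each time step receives a unique code.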

If the timestamp vector extracted from the original time-series data is

the positional encoding vector after applying positional encoding to it is

the load data vector is

the external factors matrix is

the final input matrix is

Finally, the input matrix is fed into the Transformer-based multivariate load forecasting model for training and testing.
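One way to picture the assembly of the input matrix is a per-time-step concatenation of the positional-encoding values, the load value, and the external factors. Whether the model concatenates or adds the encoding is not stated here, so the column-wise concatenation below is an assumption, and the function name and all numeric values are hypothetical.

```python
def build_input_matrix(pos_enc, load, external):
    """Concatenate, per time step, position codes, load, and external factors."""
    if not (len(pos_enc) == len(load) == len(external)):
        raise ValueError("all inputs must cover the same time steps")
    return [list(p) + [l] + list(e) for p, l, e in zip(pos_enc, load, external)]


# Hypothetical values for three hourly time steps.
pos_enc = [[0.0, 1.0], [0.84, 0.54], [0.91, -0.42]]  # 2-D position codes
load = [5200.0, 5100.0, 5050.0]                       # load
external = [                                          # temperature, humidity, price
    [3.1, 80.0, 42.5],
    [2.9, 82.0, 40.1],
    [2.7, 83.0, 39.8],
]

X = build_input_matrix(pos_enc, load, external)
```

Each row of `X` then holds everything the model sees for one time step: two position columns, one load column, and three external-factor columns in this toy layout.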

5. Selection of Prediction Model Metrics

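For the forecasting task, this section uses the mean absolute percentage error ($MAPE$), root mean square error ($RMSE$), mean absolute error ($MAE$), coefficient of determination ($R^2$), symmetric mean absolute percentage error ($SMAPE$), and mean squared logarithmic error ($MSLE$) to evaluate the prediction model. Their definitions are as follows:

$$
\begin{aligned}
MAPE &= \frac{1}{n}\sum_{i=1}^{n}\left|\frac{y_i-\hat{y}_i}{y_i}\right| \\
RMSE &= \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(y_i-\hat{y}_i\right)^2} \\
MAE &= \frac{1}{n}\sum_{i=1}^{n}\left|y_i-\hat{y}_i\right| \\
R^2 &= 1-\frac{\sum_{i=1}^{n}\left(y_i-\hat{y}_i\right)^2}{\sum_{i=1}^{n}\left(y_i-\bar{y}\right)^2} \\
SMAPE &= \frac{1}{n}\sum_{i=1}^{n}\frac{\left|\hat{y}_i-y_i\right|}{\left(\left|y_i\right|+\left|\hat{y}_i\right|\right)/2} \\
MSLE &= \frac{1}{n}\sum_{i=1}^{n}\left(\log\left(1+y_i\right)-\log\left(1+\hat{y}_i\right)\right)^2
\end{aligned}
$$

where $\hat{y}_i$ represents the predicted value sequence, $y_i$ represents the actual value sequence, $\bar{y}$ represents the mean of the actual values, and $n$ is the number of samples. $MAPE$ and $RMSE$ are used to measure the magnitude of the error between the predicted and actual values, with smaller values indicating more accurate predictions. $MAE$ provides a robust measure of absolute error that is less sensitive to outliers compared to $RMSE$. $R^2$ reflects the degree of fit of the model to the data, with a range of $(-\infty, 1]$, where values closer to 1 indicate a better model fit. $SMAPE$ addresses $MAPE$’s instability near zero by symmetrically normalizing errors with the sum of predicted and actual values, making it suitable for data with near-zero observations. $MSLE$ focuses on relative errors and penalizes under-prediction more heavily than over-prediction.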
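The six metrics can be computed directly from their textbook definitions, as in the sketch below. MAPE and SMAPE are returned as fractions rather than percentages, and SMAPE uses the variant normalized by the mean of the absolute actual and predicted values; both choices are assumptions, as are the function names and the toy data.

```python
import math


def mape(y, y_hat):
    return sum(abs((a - p) / a) for a, p in zip(y, y_hat)) / len(y)


def rmse(y, y_hat):
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(y, y_hat)) / len(y))


def mae(y, y_hat):
    return sum(abs(a - p) for a, p in zip(y, y_hat)) / len(y)


def r2(y, y_hat):
    mean_y = sum(y) / len(y)
    ss_res = sum((a - p) ** 2 for a, p in zip(y, y_hat))
    ss_tot = sum((a - mean_y) ** 2 for a in y)
    return 1.0 - ss_res / ss_tot


def smape(y, y_hat):
    return sum(
        2.0 * abs(p - a) / (abs(a) + abs(p)) for a, p in zip(y, y_hat)
    ) / len(y)


def msle(y, y_hat):
    # log1p(v) = log(1 + v); requires non-negative values.
    return sum(
        (math.log1p(a) - math.log1p(p)) ** 2 for a, p in zip(y, y_hat)
    ) / len(y)


# Toy data, not taken from the experiments.
y_true = [100.0, 200.0, 300.0]
y_pred = [110.0, 190.0, 300.0]
scores = {
    "MAPE": mape(y_true, y_pred),
    "RMSE": rmse(y_true, y_pred),
    "MAE": mae(y_true, y_pred),
    "R2": r2(y_true, y_pred),
    "SMAPE": smape(y_true, y_pred),
    "MSLE": msle(y_true, y_pred),
}
```

The asymmetry of MSLE is easy to check: for an actual value of 100, predicting 50 yields a larger penalty than predicting 150, even though both miss by the same absolute amount.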

6. Experiment and Discussion

In this section, the Transformer-based multivariate load forecasting model is tested on a real dataset. The model’s superiority is demonstrated using the evaluation metrics defined in the previous section. First, the dataset used in the experiment is introduced, followed by an explanation of the comparative methods employed. Finally, the experimental results and their analysis are presented.

6.1. Environment and Dataset

The algorithm is tested on Ubuntu 22.04.2 LTS (Canonical Ltd., London, UK) with an Intel® Xeon® Platinum 8369B CPU @ 2.70 GHz (Intel, Santa Clara, CA, USA) and an NVIDIA GeForce RTX 3090 GPU (NVIDIA, Santa Clara, CA, USA). The code is written in Python 3.10.16.

The experiment uses load data for the entire year of 2018 from a region in Germany, along with external factors such as temperature, humidity, and electricity prices. All data are sampled at 24 time points per day. The load and electricity price data were published by a German institution, while the meteorological data, such as temperature and humidity, are sourced from the German Meteorological Service Data Center. Together, these two sources form the multivariate input for this experiment. This dataset was chosen because it contains comprehensive information and includes various multivariate factors, making it suitable for testing the proposed model. The information contained in the dataset is shown in Table 1. An example of a day’s load data and multivariate data curves is shown in Figure 4.

Table 1.

Data description.

Figure 4.

Example of load data and external factor data curves from the German dataset: (a) 24-point load data, (b) 24-point meteorological data.

6.2. Comparative Method

This section briefly introduces the comparison models used in the experiment. They were selected from deep neural network forecasting methods and multivariate load forecasting approaches. The models used in this experiment are as follows:

1. LSTM model with only the load data as input. This model does not consider external factors and serves as the baseline against which the benefit of multivariate input is measured.

2. LSTM model with load data and external factor data as input. Like the model proposed in this paper, it uses multivariate data as input for prediction, which demonstrates the role of external factors in improving load forecasting accuracy.

3. The Transformer-based multivariate load forecasting model proposed in this paper. It uses multivariate data for load forecasting and is compared with the previous two models to demonstrate its advantages.

6.3. Data Preprocessing and Embedding

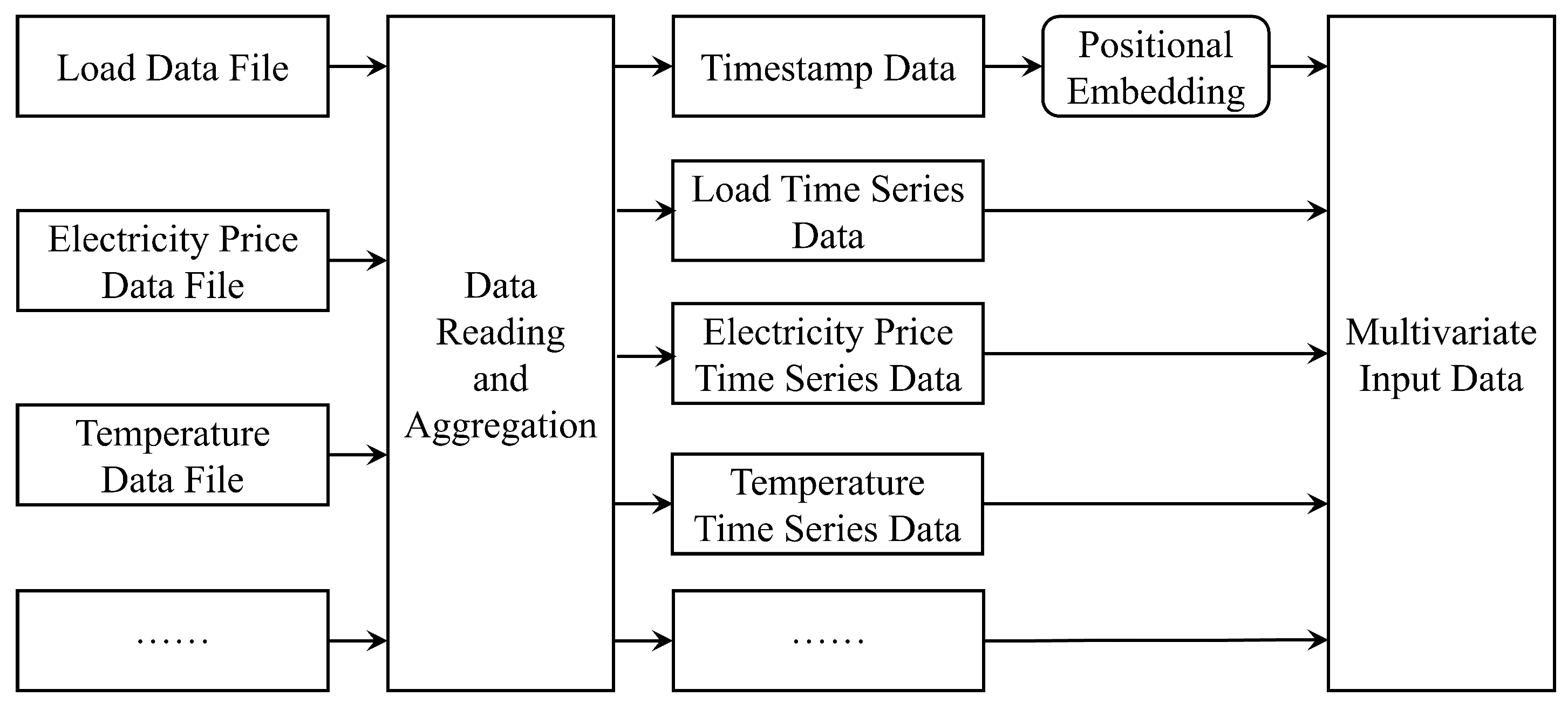

Before conducting the experiment, the data must be integrated and normalized. The multivariate data must be extracted from their respective data files and combined into an input matrix using the method described earlier, as shown in Figure 5.

Figure 5.

Schematic diagram of multivariate input data integration.
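The normalization step can be sketched as follows. The normalization method is not specified here, so min-max scaling is an assumption, as are the function names and the toy values. The scaling bounds are fitted on the training portion only, so that no information from the test period leaks into preprocessing.

```python
def min_max_fit(column):
    """Return the (min, max) bounds of a training column."""
    return min(column), max(column)


def min_max_scale(column, lo, hi):
    """Scale values to [0, 1] relative to the fitted bounds."""
    span = hi - lo
    if span == 0:
        return [0.0 for _ in column]
    return [(v - lo) / span for v in column]


def min_max_invert(scaled, lo, hi):
    """Map scaled values back to the original units."""
    return [v * (hi - lo) + lo for v in scaled]


# Hypothetical load values; bounds come from the training split only.
train_load = [4000.0, 5000.0, 6000.0]
test_load = [5500.0, 6500.0]

lo, hi = min_max_fit(train_load)
train_scaled = min_max_scale(train_load, lo, hi)
test_scaled = min_max_scale(test_load, lo, hi)
restored = min_max_invert(test_scaled, lo, hi)
```

Test values may fall outside [0, 1] when they exceed the training range, as the second test value does here. Predictions are mapped back to physical units with `min_max_invert` before the error metrics are computed.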

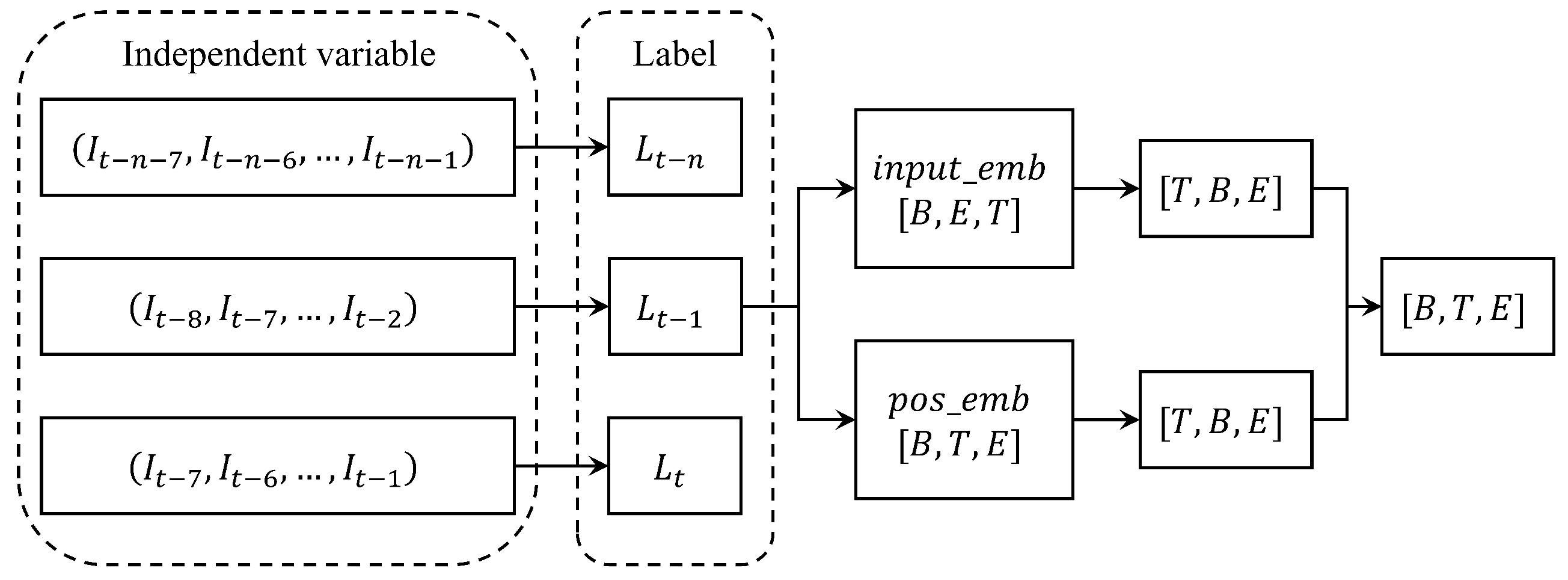

The dataset is divided into a training set and a testing set. Data from 1 January 2018 to 19 October 2018 (292 days) are used as the training set, while data from 20 October 2018 to 31 December 2018 (73 days) are used as the testing set, giving a ratio of 8:2. In both training and testing, the model uses the past 7 days of data (24 points per day) as input features (independent variables) and predicts the following day’s 24-point load data as the target (dependent variable) for supervised learning. In Figure 6, each input block represents the multivariate data for the 24 points of 1 day, and each target block represents the 24-point load data for 1 day. The model combines the multivariate 24-point data for the past 7 days into a matrix as input features and uses the 24-point load data for the 8th day as the target for training. During testing, the same approach is used: the past 7 days of multivariate data are used to predict the load data for the following day.

Figure 6.

Training and testing format for multivariate load forecasting.
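The split and the 7-day-in, 1-day-out sample format can be sketched as below. The one-day stride between consecutive samples, the function name, and the synthetic data are assumptions for illustration; only the dates, the 7-day input length, and the 24 points per day come from the text.

```python
from datetime import date


def make_windows(features, load, input_days=7, points_per_day=24):
    """Pair each block of `input_days` days of features with the next day's load."""
    span = input_days * points_per_day
    n_days = len(load) // points_per_day
    samples = []
    for day in range(input_days, n_days):
        start = (day - input_days) * points_per_day
        x = features[start:start + span]                              # 7 x 24 rows
        y = load[day * points_per_day:(day + 1) * points_per_day]     # 24 targets
        samples.append((x, y))
    return samples


# Day counts implied by the split dates (both ends inclusive).
train_days = (date(2018, 10, 19) - date(2018, 1, 1)).days + 1
test_days = (date(2018, 12, 31) - date(2018, 10, 20)).days + 1

# Synthetic 10-day example: one feature column whose value equals the hour index.
hours = 10 * 24
features = [[float(t)] for t in range(hours)]
load = [float(t) for t in range(hours)]
samples = make_windows(features, load)
```

With 10 days of data the function yields 3 samples (targets on days 8, 9, and 10), each with 168 input rows and 24 target values.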

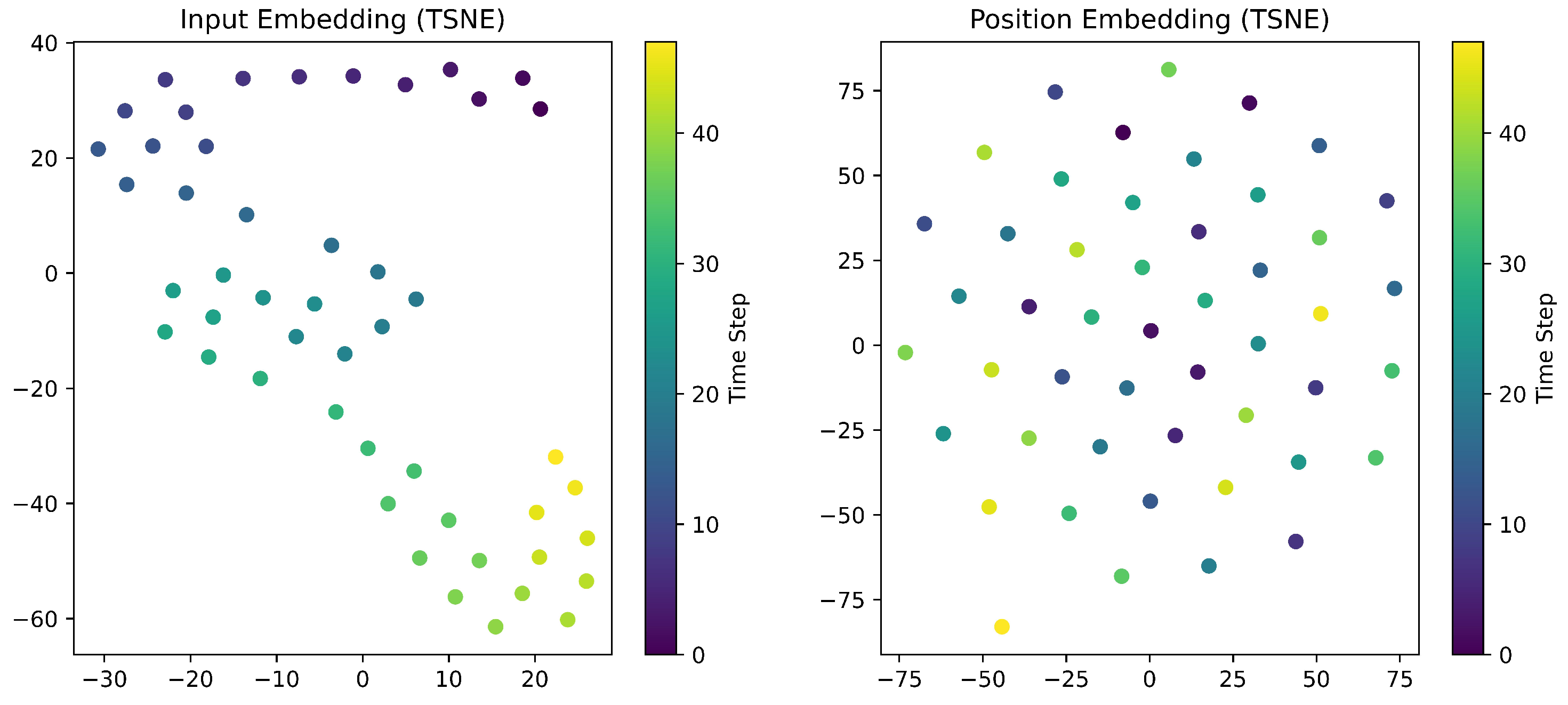

As illustrated by the model architecture, the model first encodes the multivariate data and the timestamps separately. Figure 7 shows the t-SNE visualization results from a specific training epoch. The left panel displays the encoded representations of the input data, while the right panel shows the encoded timestamp embeddings.

Figure 7.

t-SNE visualization of input and temporal embedding patterns.

From the left figure, it can be observed that the embedding points of adjacent time steps exhibit continuous transitions in the dimensionally reduced space, with colors forming a ribbon-like gradient distribution. The dispersed embedding patterns indicate that the model successfully extracts diversified feature representations from the raw data while effectively capturing local temporal dependencies.

The figure on the right shows that the positional embeddings of timestamps exhibit a uniform, grid-like pattern with a well-organized structure and a compact distribution. This suggests the model’s strong capability for compact temporal sequence encoding, enabling precise preservation of chronological order information.

6.4. Experiment Results and Comparative Analysis

After performing the preprocessing steps mentioned above, the data were input into the model for training, and the model’s predictions for each case were obtained.

To address the concern about the potential overfitting risk of the complex enhanced Transformer model (integrating multi-head attention, convolution, and embedding modules), we first present the learning curve (training loss vs. test loss) to verify the model’s generalization ability before analyzing the prediction results.

As shown in Figure 8, the training loss and test loss show a synchronous downward trend overall, with the training loss decreasing from 0.2506 (Epoch 0) to 0.0014 (Epoch 49) and the test loss dropping from 0.0463 to 0.0026. Because the test loss keeps decreasing alongside the training loss instead of diverging from it, the curve indicates that the model continues to learn valid load features without evident overfitting.

Figure 8.

Learning curve of the enhanced Transformer model (training loss vs. test loss).

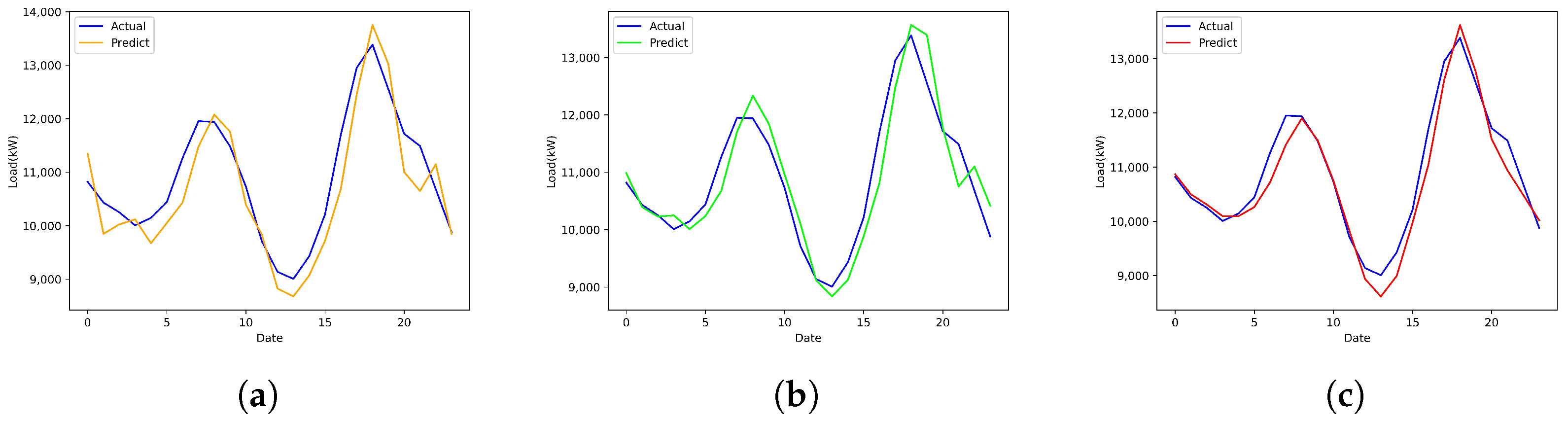

Figure 9 shows the comparison between the predicted load curve for the entire day (24 points) of 20 October 2018 and the actual load curve for each model.

Figure 9.

Load forecasting results for 20 October 2018. (a) Single-factor LSTM. (b) Multi-factor LSTM. (c) Enhanced Transformer (our method).

Table 2 lists the evaluation metrics for each model on 20 October 2018. Table 3 gives the period-specific performance evaluation.

Table 2.

Comparison of prediction result metrics for each model on 20 October 2018.

Table 3.

Period-specific performance evaluation indicators of multivariate load forecasting models on 20 October 2018.

As shown in Figures 9 and 10 and Table 2, for predictions close in time to the training set, all three models achieved good predictive performance. The results show that the multi-factor LSTM, compared to the single-factor LSTM, improved prediction accuracy by incorporating meteorological factors. Table 2 presents the comprehensive prediction performance of each model for the full 24 h load on 20 October 2018. Our enhanced Transformer model outperforms the single-factor LSTM and multi-factor LSTM on all evaluation metrics, with the lowest error values (298.620 and 0.021) and the highest $R^2$ (0.932), which supports the effectiveness of the proposed model in overall daily load forecasting.

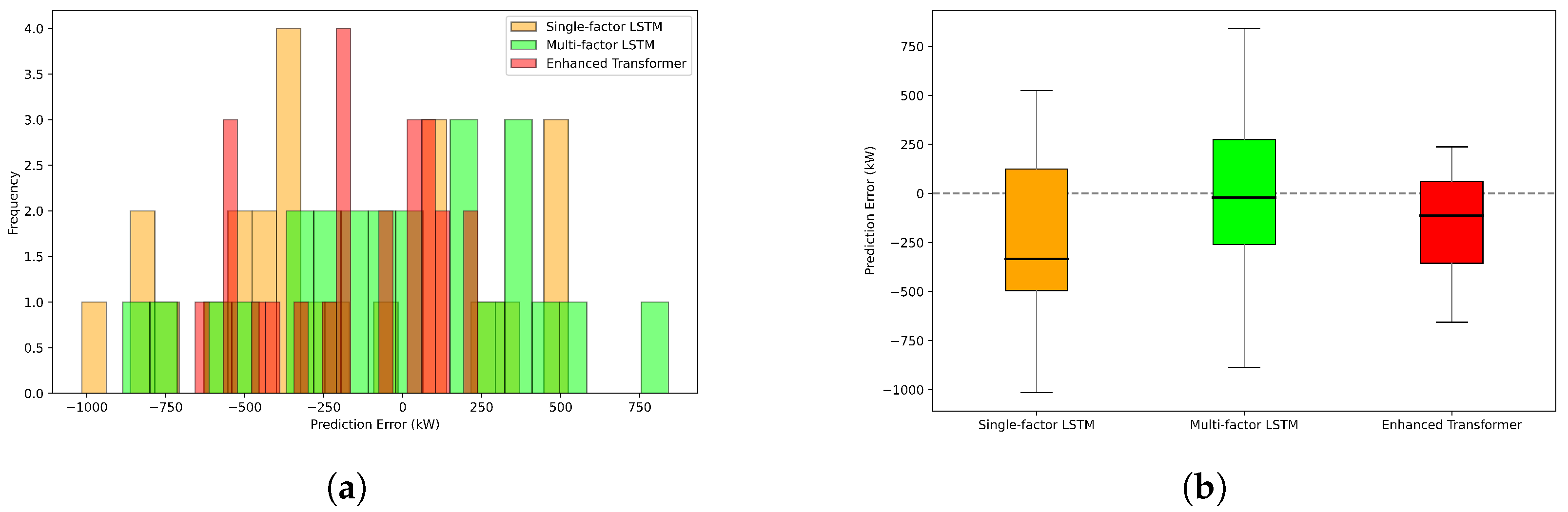

Figure 10.

Error distribution histogram and box plot for 20 October 2018. (a) Error distribution histogram. (b) Box plot.

To further explore the model’s performance in practical energy management scenarios, we conduct a period-specific performance analysis, and the results are shown in Table 3. Specifically, we focus on three critical load periods closely related to grid dispatching: the valley load period, the load change period, and the peak load period. During the valley load period, with low and stable load demand, the single-factor LSTM achieves the lowest RMSE and MAPE among the three models, while the multi-factor LSTM and our enhanced Transformer show slightly higher errors. This is because the valley load fluctuates minimally, and simple models can capture its trend without relying on complex multi-factor fusion or temporal modeling. All models nevertheless maintain low overall errors during this period, which meets the requirements of energy storage planning. For the load change period, which poses great challenges to grid stability, our enhanced Transformer exhibits clear advantages: it achieves the lowest values on three of the error metrics, as well as the highest $R^2$, demonstrating a strong ability to capture abrupt load variations. This advantage likely stems from the convolution-augmented attention mechanism, which extracts local contextual features and reduces the impact of load mutations. During the peak load period, the enhanced Transformer outperforms the other two models comprehensively, with better fitting of high-load variation patterns and superior performance across all evaluation metrics.

Additionally, the histogram and box plot of the error distribution reveal that the median error of the enhanced Transformer model is closer to zero, indicating a more stable error distribution. This further demonstrates the model’s improved robustness in load forecasting. In summary, the comprehensive 24 h results confirm the overall superiority of our model, and the period-specific analysis further verifies its clear advantages in the load change and peak load periods, two critical scenarios for energy management, while maintaining a reasonable error level in the valley load period. Such adaptability to different load scenarios demonstrates the practical value of the enhanced Transformer model for grid dispatching and energy management.

To further validate the prediction and long-term modeling capability of the enhanced Transformer model, this experiment also performed load forecasting for a later date, 30 December 2018. The results are shown in Figures 11 and 12 and Table 4.

Figure 11.

Load forecasting results for 30 December 2018. (a) Single-factor LSTM. (b) Multi-factor LSTM. (c) Enhanced Transformer (our method).

Figure 12.

Error distribution histogram and box plot for 30 December 2018. (a) Error distribution histogram. (b) Box plot.

Table 4.

Comparison of prediction result metrics for each model on 30 December 2018.

As shown in Figures 11 and 12 and Tables 4 and 5, in the longer-term prediction, the enhanced Transformer model demonstrates a more pronounced advantage. Compared with the 20 October 2018 results, the single-factor LSTM and multi-factor LSTM models show greater instability in their predictions than the enhanced Transformer model, failing to accurately capture the load trend and achieving lower prediction accuracy. In contrast, the enhanced Transformer model proposed in this paper continues to predict user electricity consumption trends effectively, with higher prediction accuracy.

Table 5.

Period-specific performance evaluation indicators of multivariate load forecasting models on 30 December 2018.

7. Conclusions

This paper takes multivariate load forecasting as its core research object and aims to address key challenges in the field by improving neural network algorithms, ultimately providing technical support for the optimal operation of power grids. It proposes a Transformer-based deep neural network model for multivariate load forecasting that integrates load data with external factors, such as temperature, humidity, and electricity price, to predict user electricity consumption accurately. The model extracts timestamp information separately and embeds it, together with the positional encoding, into the input data, which strengthens its ability to capture temporal patterns and improves forecasting accuracy. Furthermore, the model introduces convolution operations before the multi-head attention structure of the basic Transformer architecture to improve its ability to capture local contextual information. This design reduces the attention mechanism’s focus on anomalous data, thereby limiting the influence of such data on the forecasting results and enhancing load prediction accuracy.
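
The two design ideas summarized above can be illustrated with a toy Python sketch. It is not the implementation used in this paper. It assumes a cyclical encoding of hour and weekday as the timestamp features, a causal moving-average kernel as the convolution, and a single attention head over a scalar feature. All function names are hypothetical.

```python
import math
from datetime import datetime

def timestamp_features(ts):
    """Cyclical encoding of hour-of-day and day-of-week (assumed feature choice)."""
    hour_angle = 2 * math.pi * ts.hour / 24
    dow_angle = 2 * math.pi * ts.weekday() / 7
    return [math.sin(hour_angle), math.cos(hour_angle),
            math.sin(dow_angle), math.cos(dow_angle)]

def causal_conv1d(series, kernel):
    """1-D convolution that only sees the current and earlier time steps."""
    k = len(kernel)
    padded = [series[0]] * (k - 1) + list(series)  # left-pad with the first value
    return [sum(w * padded[t + i] for i, w in enumerate(kernel))
            for t in range(len(series))]

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def conv_attention(series, kernel):
    """Single-head attention whose queries and keys are convolved first.

    Values are the raw series; queries and keys are local-context summaries.
    """
    qk = causal_conv1d(series, kernel)
    weights = [softmax([q * key for key in qk]) for q in qk]
    outputs = [sum(w * v for w, v in zip(row, series)) for row in weights]
    return outputs, weights

# A normalized toy load series with one anomalous spike at index 3.
series = [1.0, 1.0, 1.0, 5.0, 1.0, 1.0]
_, pointwise = conv_attention(series, [1.0])             # plain dot-product keys
_, convolved = conv_attention(series, [1/3, 1/3, 1/3])   # locally averaged keys
spike_weight_drop = pointwise[0][3] - convolved[0][3]    # positive in this example
```

In this toy example the attention weight that the first time step places on the spike is lower with convolved keys than with pointwise keys, which matches the intuition that local context reduces the focus on isolated anomalies. In a full model, the output of `timestamp_features` would be combined with the positional encoding of each input step.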

Experimental results demonstrate that, compared with traditional LSTM models, the Transformer-based multivariate load forecasting model performs significantly better in both prediction accuracy and stability, especially for long-term and multivariate forecasting. Comparative experiments show that the proposed model accurately predicts user electricity consumption trends and reduces prediction errors. With the widespread adoption of smart grids and smart homes, the Transformer-based load forecasting model is expected to play a significant role in energy management, power supply optimization, and the reduction of overall societal energy consumption.

Author Contributions

Conceptualization, W.S. and D.J.; methodology, W.S., X.Y., and K.L.; software, W.S. and Z.W.; validation, W.S., X.Y., and D.J.; formal analysis, W.S., X.Y., and K.L.; investigation, W.S., Z.W., and R.L.; resources, D.J., K.L., and R.L.; data curation, W.S., D.J., K.L., Z.W., and R.L.; writing—original draft preparation, W.S.; writing—review and editing, X.Y., D.J., K.L., Z.W., and R.L.; visualization, W.S., X.Y., and D.J.; supervision, K.L. and R.L.; project administration, K.L. and R.L.; funding acquisition, W.S. All authors have read and agreed to the published version of the manuscript.

Funding

This work was supported by the State Grid Corporation of China (5400-202355767A-3-5-YS).

Data Availability Statement

The original contributions presented in this study are included in the article. Further inquiries can be directed to the corresponding authors.

Conflicts of Interest

Authors Wanxing Sheng, Xiaoyu Yang, Dongli Jia, and Keyan Liu were employed by the company China Electric Power Research Institute. The remaining authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.