An Improved FBPN-Based Detection Network for Vehicles in Aerial Images

Abstract

1. Introduction

2. Related Work

3. Method

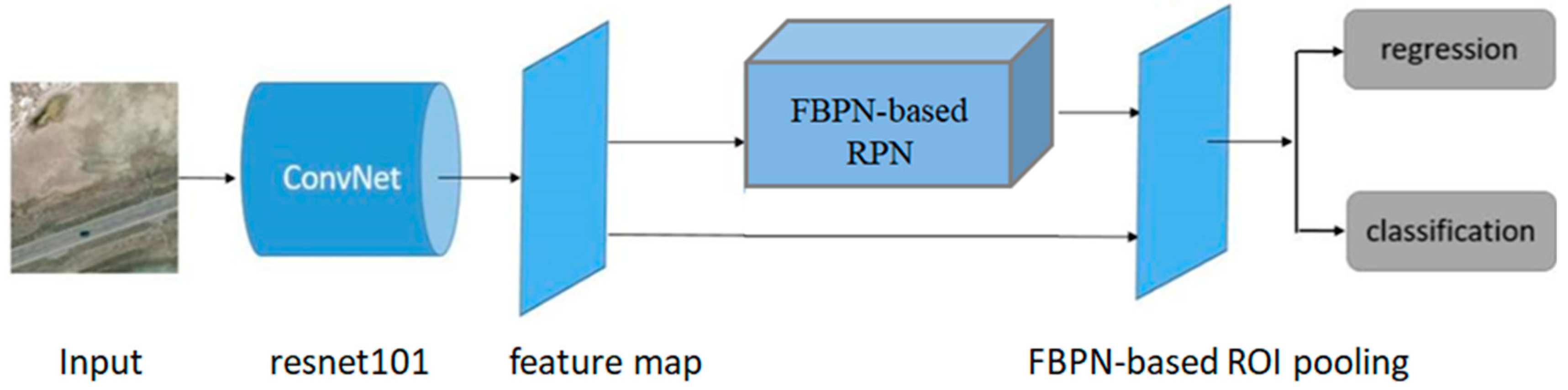

3.1. Introduction of Proposed Framework

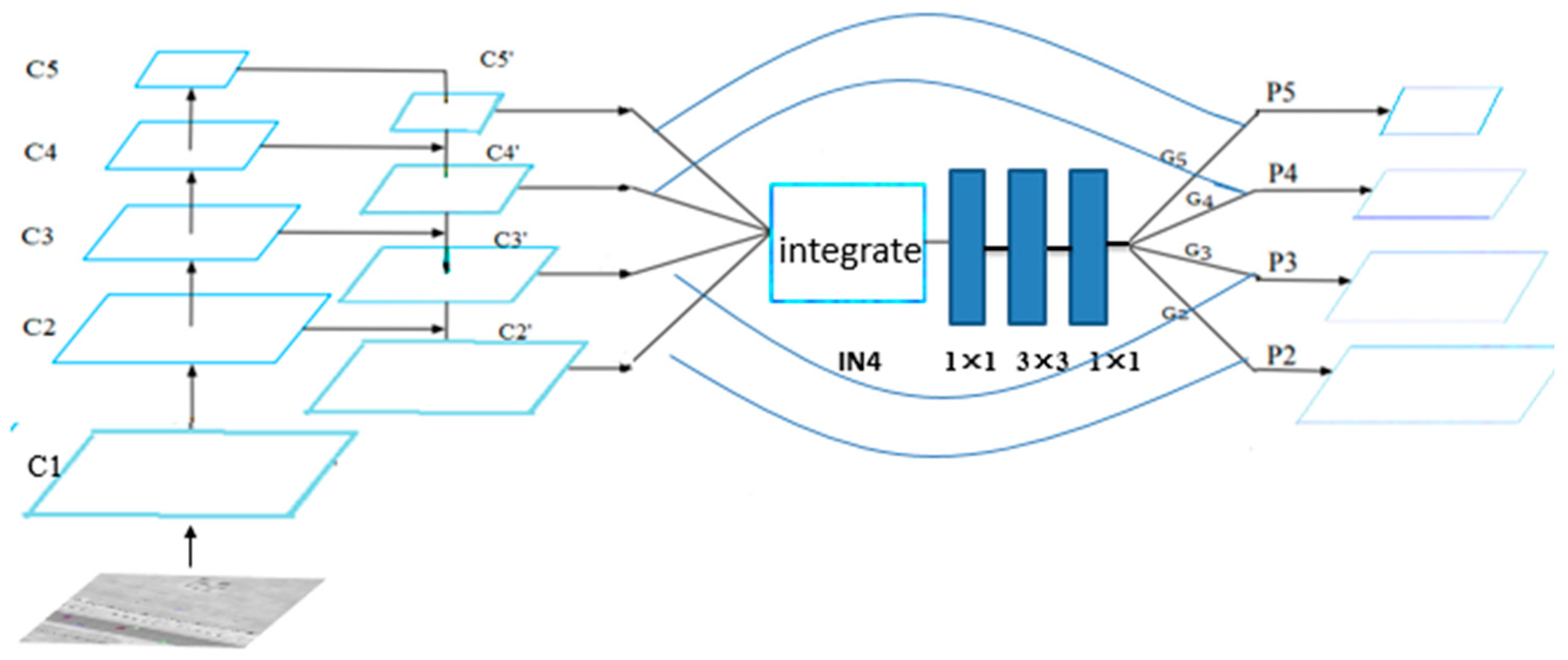

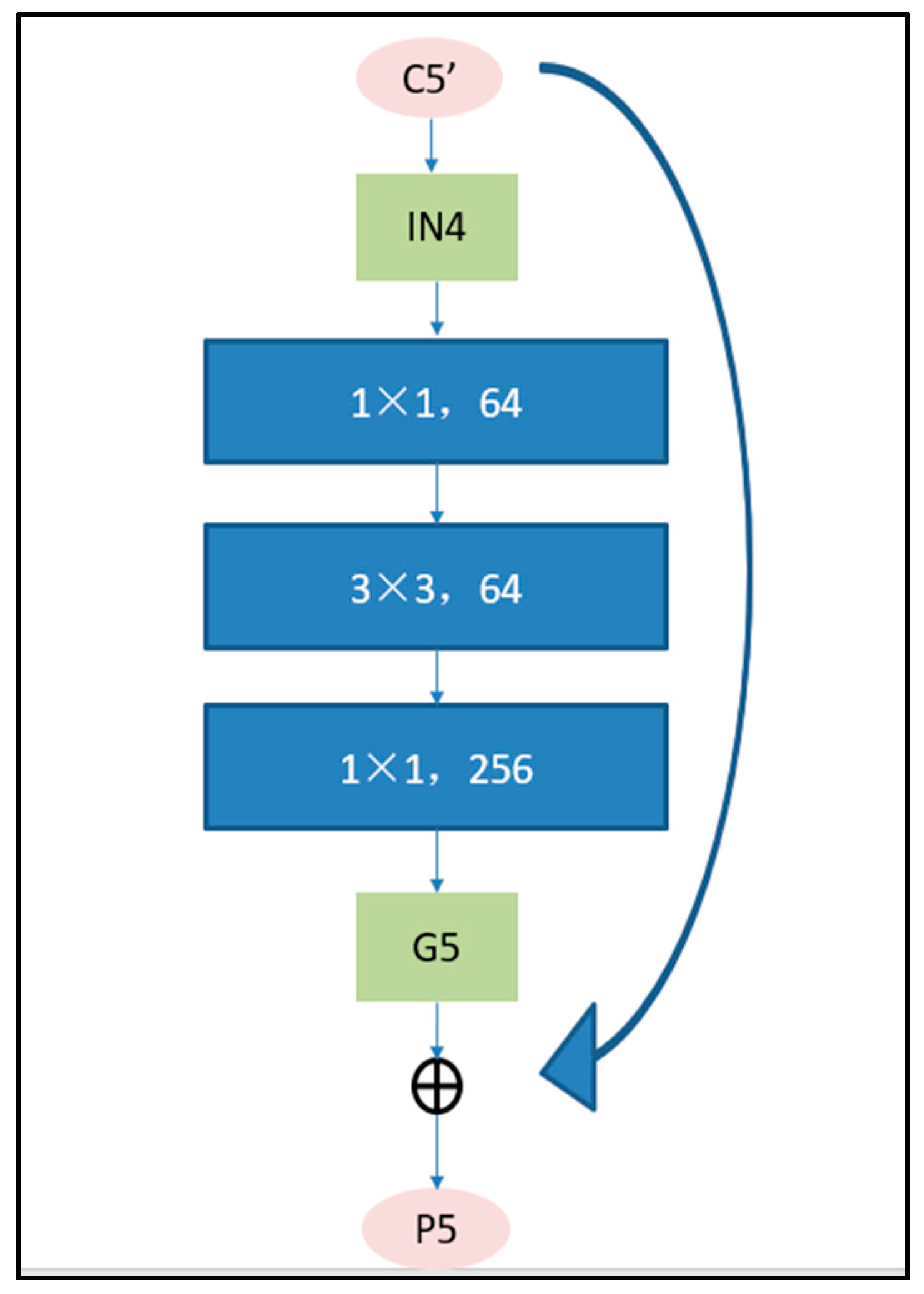

3.2. Introduction of Feature Balanced Pyramid Network (FBPN)

3.3. The Combination of FBPN and Faster Region Convolutional Neural Network (RCNN)

3.3.1. FBPN-Based Region Proposal Network (RPN)

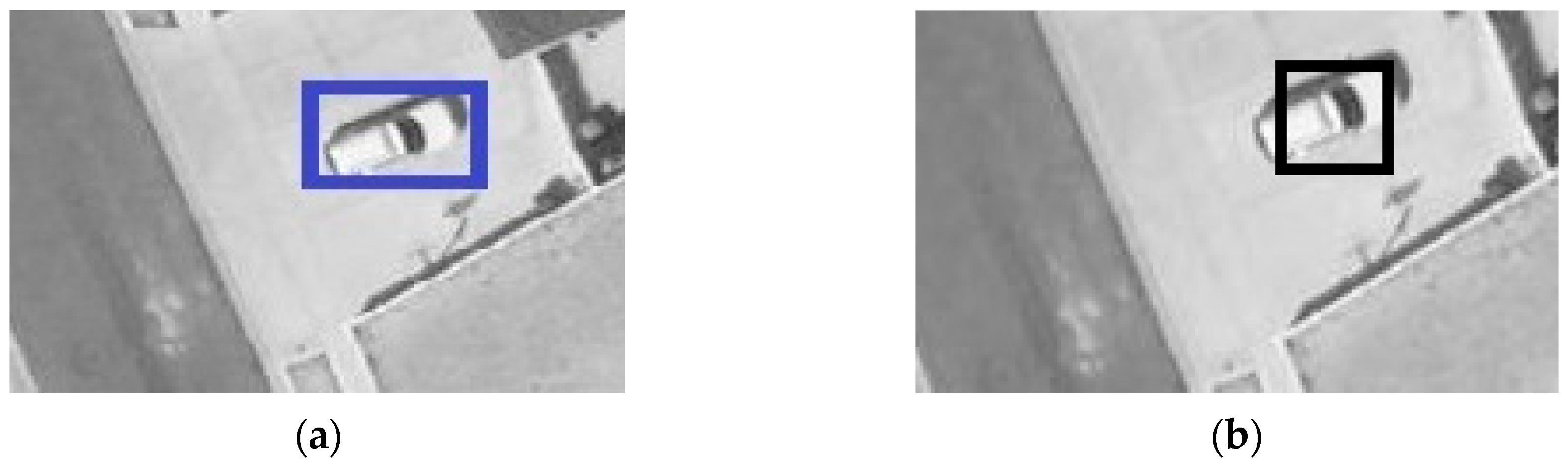

3.3.2. FBPN-Based Region of Interest (RoI) Pooling

3.3.3. Focal Loss Function

4. Experiments and Results

4.1. Dataset

4.1.1. Brief Introduction

4.1.2. VEDAI, UCAS-AOD and DOTA Datasets

4.2. Evaluation Method

4.3. Training Details of Proposed Framework

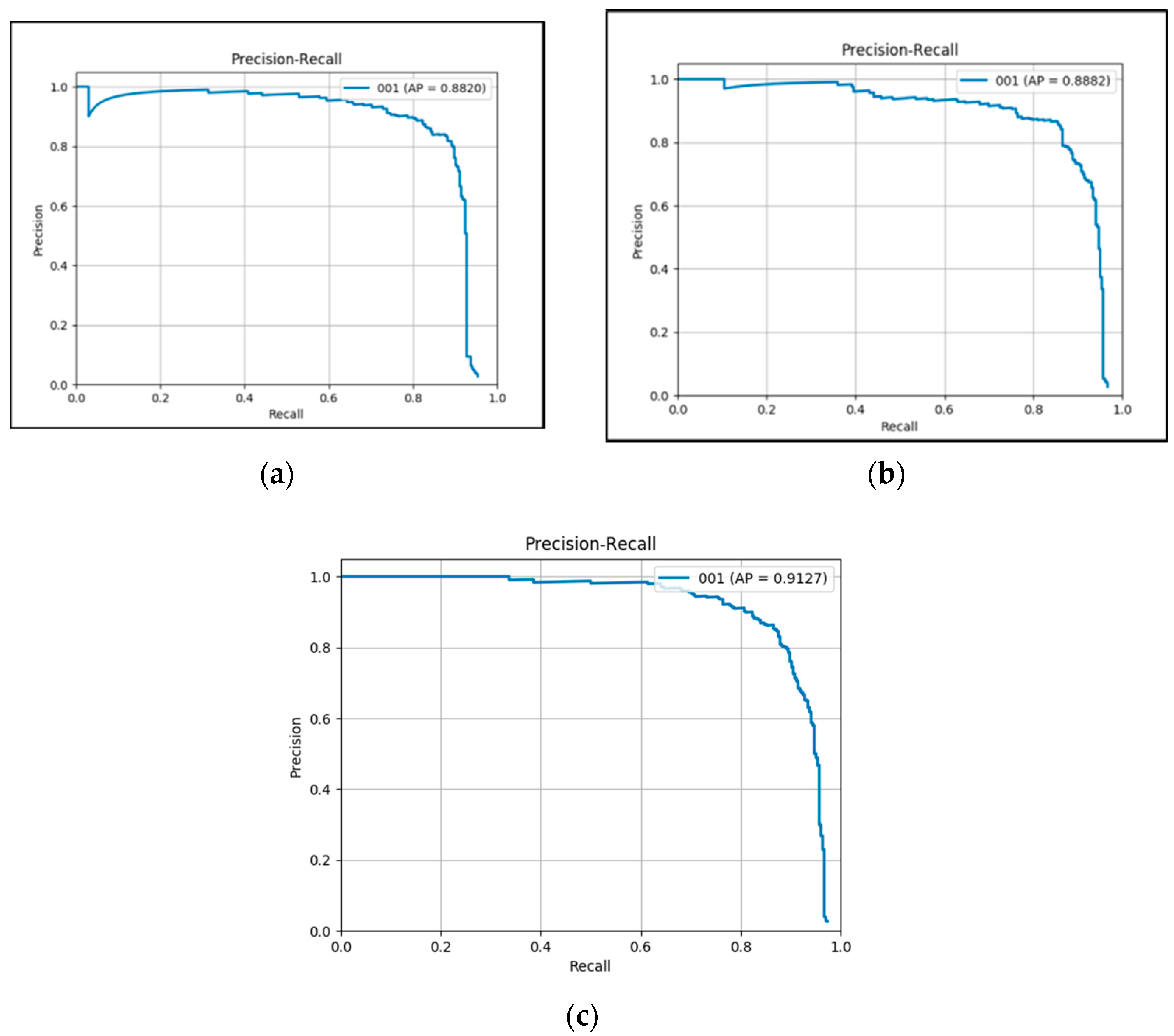

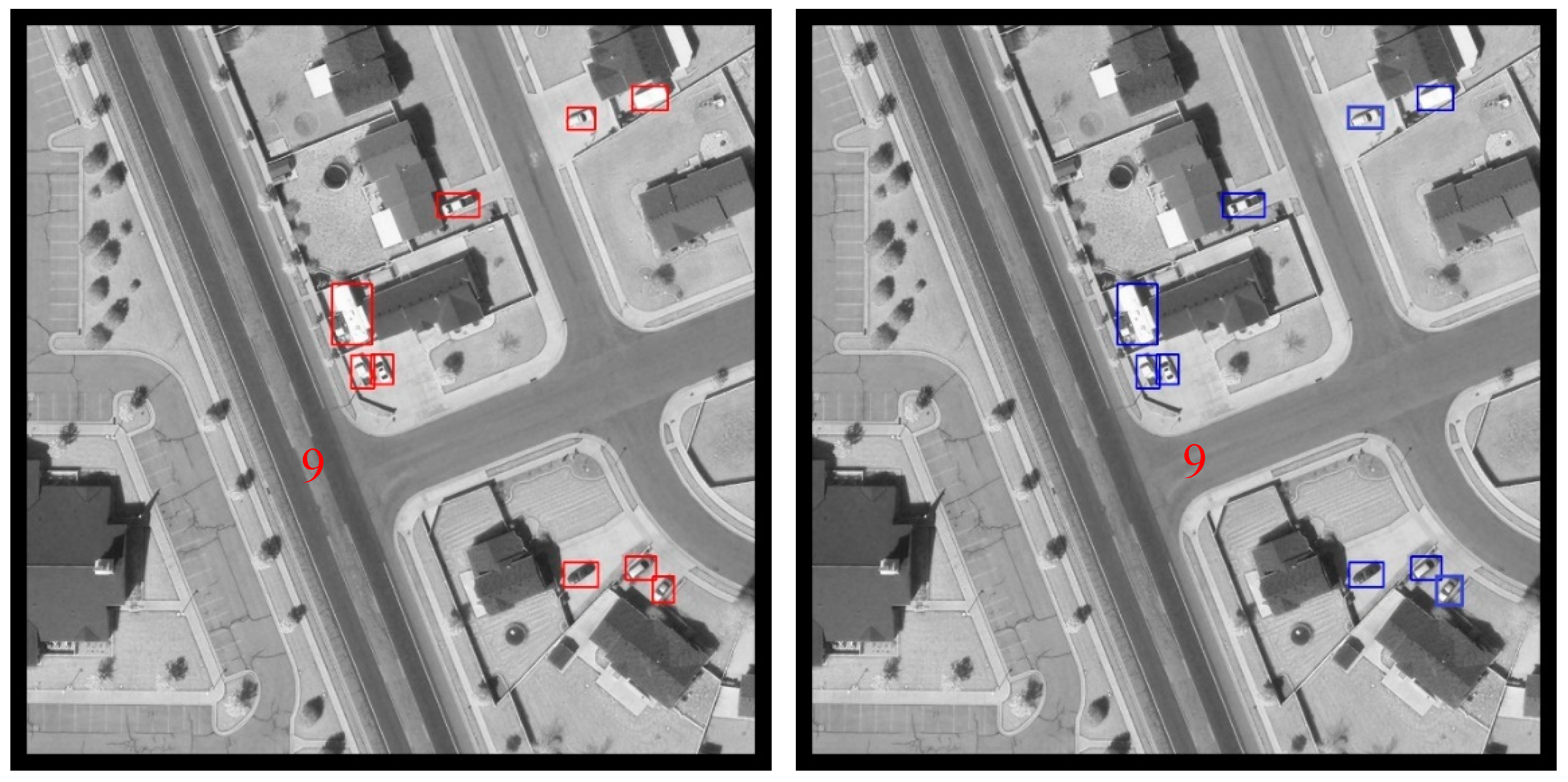

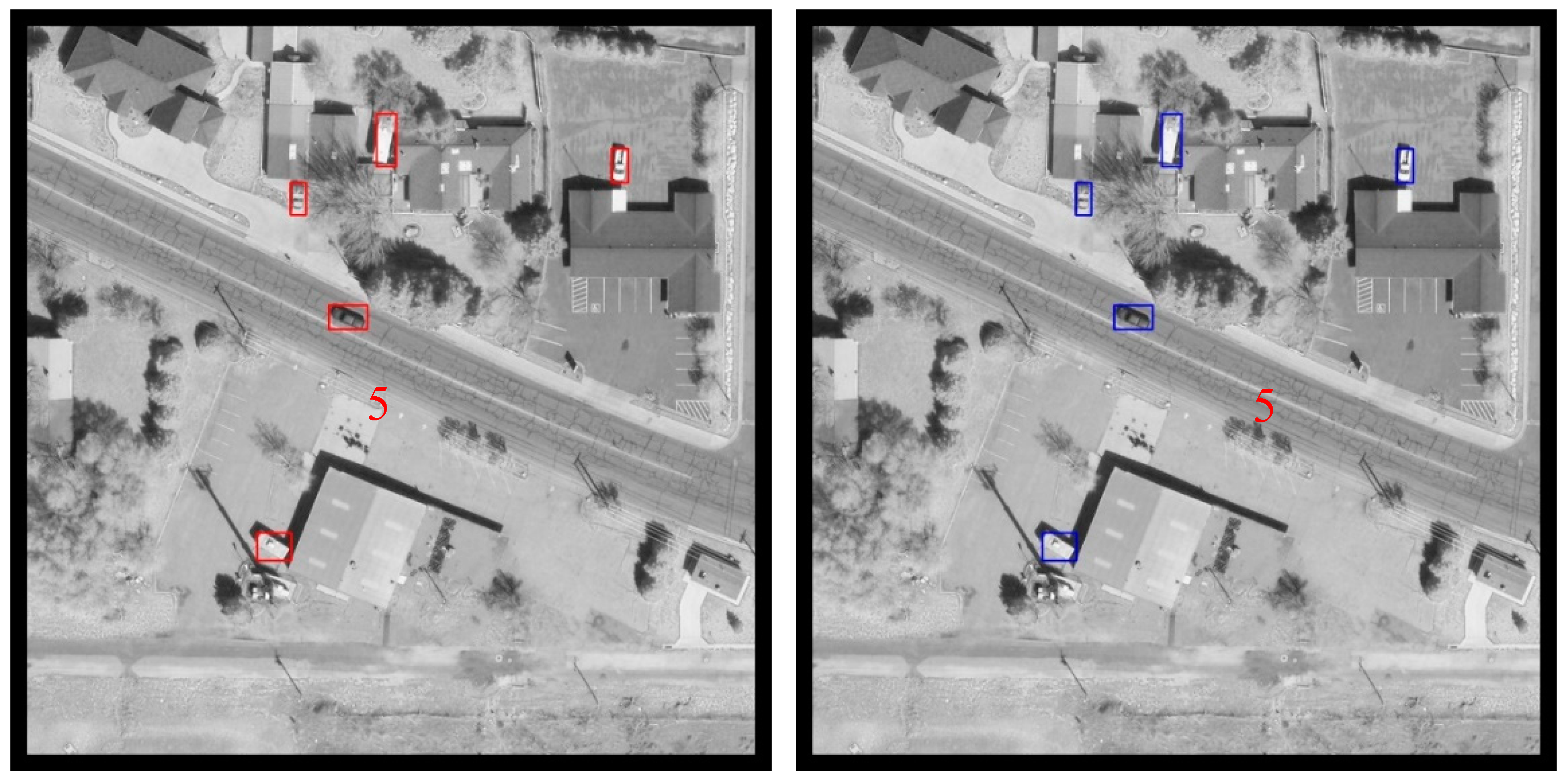

4.4. Results on VEDAI Dataset

| Method | mAP |
|---|---|
| AVDNet 2019 [40] | 51.95 |
| VDN 2017 [41] | 54.6 |
| DPM 2015 [32] | 60.5 |
| R3-Net (R + F) 2019 [42] | 69.0 |
| Faster-RCNN 2017 [32] | 70.9 |
| Improved Faster RCNN 2017 [37] | 74.30 |
| Ju et al. 2019 [25] | 80.16 |
| YOLOv3_Joint-SRVDNet 2020 [43] | 80.4 |
| Faster RER-CNN 2018 [32] | 83.5 |
| YOLOv3_HR [43] | 85.66 |
| DFL 2018 [40] | 90.54 |
| Faster RCNN + Res2Net (resnet101) 2019 [38] | 81.96 |
| Faster RCNN + WaterFall (resnet50) 2019 [39] | 77.36 |
| Framework 1.1 | 88.20 |
| Framework 1.2 | 88.82 |
| Framework 1.3 | 91.27 |

| Framework | | | mAP | F1-Score | Test Time (Per Pic) |
|---|---|---|---|---|---|
| Improved Faster RCNN | 80.7 | 63.1 | 74.3 | 70.8 | 0.048 s |
| Framework 1.1 | 84.5 | 85.6 | 88.20 | 85.9 | 0.049 s |
| Framework 1.2 | 85.7 | 86.7 | 88.82 | 86.5 | 0.049 s |
| Framework 1.3 | 86.5 | 87.5 | 91.27 | 87 | 0.049 s |
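The F1-Score column in the table above can be cross-checked against the two columns whose headers were lost, on the assumption that they hold precision and recall in some order. The sketch below is not the authors' evaluation code; the function name `f1_score` and the `rows` dictionary are illustrative. F1 is the harmonic mean of precision and recall and is symmetric in the two, so the unknown column order does not affect the check.

```python
def f1_score(a: float, b: float) -> float:
    """Harmonic mean of precision and recall (symmetric in its arguments)."""
    return 2 * a * b / (a + b) if (a + b) else 0.0


# (first value, second value, reported F1) copied from the VEDAI table above.
# Assumption: the first two values are precision and recall; which is which
# cannot be recovered from the table header.
rows = {
    "Improved Faster RCNN": (80.7, 63.1, 70.8),
    "Framework 1.1": (84.5, 85.6, 85.9),
    "Framework 1.2": (85.7, 86.7, 86.5),
    "Framework 1.3": (86.5, 87.5, 87.0),
}

for name, (a, b, reported) in rows.items():
    computed = f1_score(a, b)
    # Print the recomputed value next to the reported one for comparison.
    print(f"{name}: computed {computed:.2f}, reported {reported}, "
          f"difference {computed - reported:+.2f}")
```

For the Improved Faster RCNN and Framework 1.3 rows, the harmonic mean reproduces the reported F1-Score to rounding. The Framework 1.1 and 1.2 rows differ by less than one point, which may reflect rounding of the inputs or a different averaging procedure in the original evaluation.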

4.5. Results on UCAS-AOD Dataset

| Method | mAP |
|---|---|
| YOLO v2 2017 [44] | 79.20 |
| SSD 2020 [12] | 81.37 |
| R-DFPN 2018 [45] | 82.50 |
| DRBox 2017 [46] | 85.00 |
| O2-DNet 2016 [47] | 86.72 |
| P-RSDet 2020 [48] | 87.36 |
| RFCN 2016 [49] | 89.30 |
| Deformable R-FCN 2017 [50] | 91.7 |
| S2ARN 2019 [51] | 92.20 |
| FADet 2019 [52] | 92.72 |
| RetinaNet-H 2019 [53] | 93.60 |
| R3Det 2019 [53] | 94.14 |
| A2RMNet 2019 [54] | 94.65 |
| SCRDet++ 2020 [55] | 94.97 |
| ICN 2018 [33] | 95.67 |
| UCAS + NWPU + VS-GANs 2019 [56] | 96.12 |
| Framework 1.2 | 96.18 |

| Framework | | | mAP | F1-Score |
|---|---|---|---|---|
| Framework 1.2 | 93.3 | 92.5 | 96.18 | 92.89 |

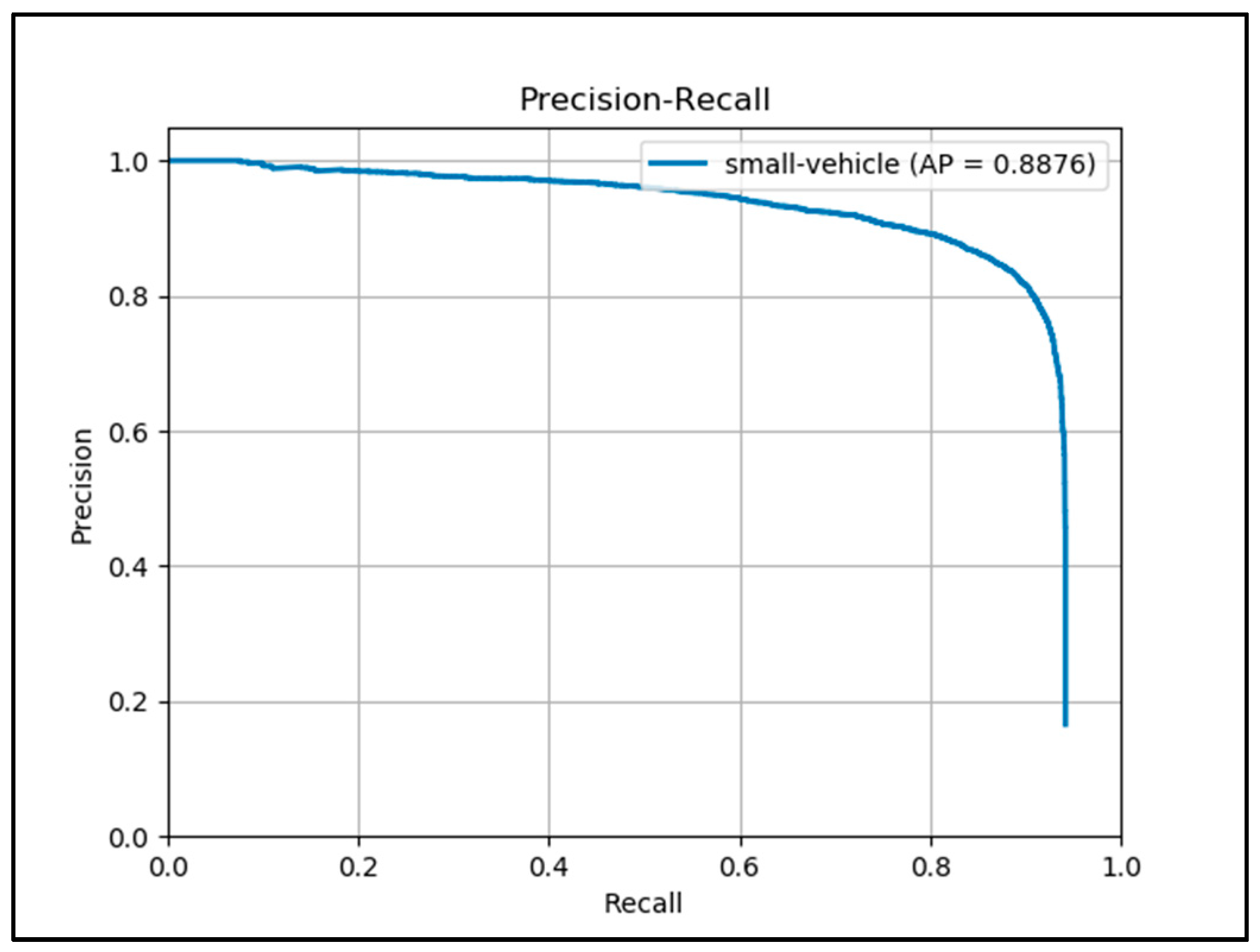

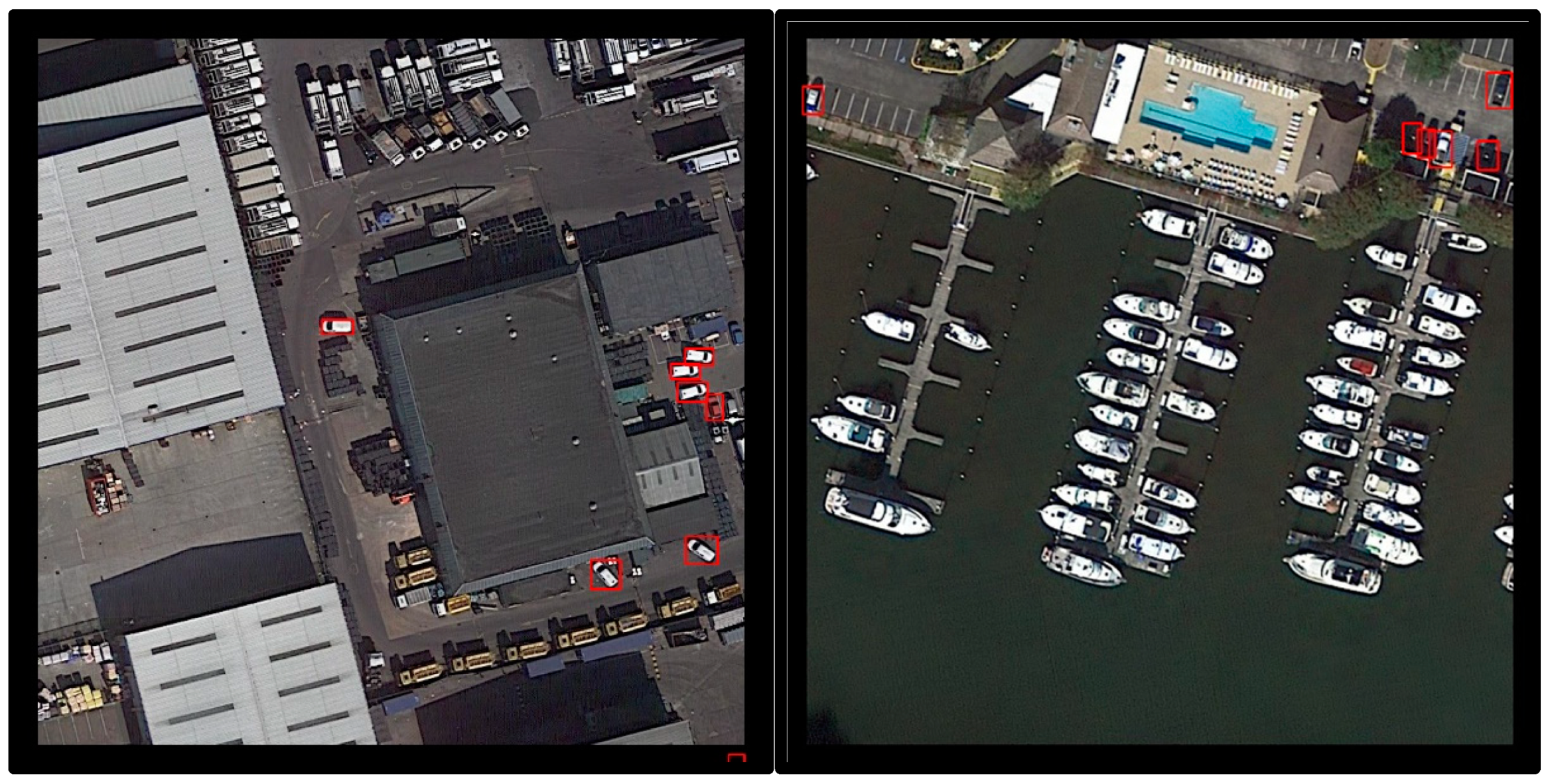

4.6. Results on DOTA Dataset

| Method | mAP (Small Vehicle) |
|---|---|
| DFL 2018 [40] | 45.56 |
| Yang et al. 2018 [57] | 61.16 |
| Ding et al. 2018 [21] | 70.15 |
| Faster RCNN Adapted 2018 [58] | 74.9 |
| DYOLO Module B 2018 [58] | 76.0 |
| SSD Adapted 2018 [58] | 76.3 |
| DFRCNN 2018 [59] | 76.5 |
| * SSSDet 2019 [26] | 77.22 |
| LR-CNN 2020 [29] | 77.86 |
| DSSD 2017 [60] | 79.0 |
| DYOLO Module A 2018 [58] | 79.2 |
| * AVDNet 2019 [40] | 79.65 |
| RefineDet 2018 [58] | 80.0 |
| * YOLO v3 2019 [26] | 88.31 |
| Ju et al. 2019 [25] | 88.63 |
| Framework 1.2 | 88.76 |

| Framework | | | mAP | F1-Score |
|---|---|---|---|---|
| Framework 1.2 | 84.5 | 87.3 | 88.76 | 85.9 |

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

1. Sakla, W.; Konjevod, G.; Mundhenk, T.N. Deep multi-modal vehicle detection in aerial ISR imagery. In Proceedings of the 2017 IEEE Winter Conference on Applications of Computer Vision (WACV), Santa Rosa, CA, USA, 24–31 March 2017.
2. Koga, Y.; Miyazaki, H.; Shibasaki, R. A CNN-based method of vehicle detection from aerial images using hard example mining. Remote Sens. 2018, 10, 124.
3. He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778.
4. Zhao, W.L.; Ngo, C.W. Flip-invariant SIFT for copy and object detection. IEEE Trans. Image Process. 2013, 22, 980–991.
5. Gan, G.; Cheng, J. Pedestrian detection based on HOG-LBP feature. In Proceedings of the Seventh International Conference on Computational Intelligence & Security, Sanya, China, 3–4 December 2011.
6. Ali, A.; Olaleye, O.G.; Bayoumi, M. Fast region-based DPM object detection for autonomous vehicles. In Proceedings of the IEEE International Midwest Symposium on Circuits & Systems, Abu Dhabi, UAE, 16–19 October 2016.
7. Girshick, R.; Donahue, J.; Darrell, T.; Malik, J. Rich feature hierarchies for accurate object detection and semantic segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Columbus, OH, USA, 24–27 June 2014; pp. 580–587.
8. Girshick, R. Fast R-CNN. Comput. Sci. 2015, 1440–1448.
9. Ren, S.; He, K.; Girshick, R.; Sun, J. Faster R-CNN: Towards real-time object detection with region proposal networks. In Proceedings of the International Conference on Neural Information Processing Systems, Montreal, QC, Canada, 7–12 December 2015; pp. 91–99.
10. Tang, T.; Zhou, S.; Deng, Z.; Zou, H.; Lei, L. Vehicle detection in aerial images based on region convolutional neural networks and hard negative example mining. Sensors 2017, 17, 336.
11. Redmon, J.; Divvala, S.; Girshick, R.; Farhadi, A. You only look once: Unified, real-time object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 26 June–1 July 2016; pp. 779–788.
12. Liu, W.; Anguelov, D.; Erhan, D.; Szegedy, C.; Reed, S.; Fu, C.; Berg, A. SSD: Single shot multibox detector. In Proceedings of the European Conference on Computer Vision, Amsterdam, The Netherlands, 8–16 October 2016; p. 2.
13. Bell, S.; Zitnick, C.; Bala, K.; Girshick, R. Inside-outside net: Detecting objects in context with skip pooling and recurrent neural networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 26 June–1 July 2016.
14. Calleja, J.D.L.; Tecuapetla, L.; Medina, M.A.; Everardo, B.; Argelia, B.; Urbina, N. LBP and machine learning for diabetic retinopathy detection intelligent data engineering and automated learning–IDEAL 2014. In Proceedings of the IEEE International Conference on Robotics and Automation, Shanghai, China, 9–13 May 2011; pp. 2065–2070.
15. Gleason, J.; Nefian, A.V.; Bouyssounousse, X.; Fong, T.; Bebis, G. Vehicle detection from aerial imagery. In Proceedings of the IEEE International Conference on Robotics and Automation, Shanghai, China, 9–13 May 2011.
16. Razakarivony, S.; Jurie, F. Discriminative auto encoders for small targets detection. In Proceedings of the International Conference on Pattern Recognition, Stockholm Waterfront, Stockholm, Sweden, 24–28 August 2014; pp. 3528–3533.
17. Adelson, E.H.; Anderson, C.H.; Bergen, J.R.; Burt, P.J. Pyramid methods in image processing. RCA Eng. 1984, 29, 33–41.
18. Deng, J.; Dong, W.; Socher, R.; Li, L.J.; Li, K.; Li, F.F. ImageNet: A large-scale hierarchical image database. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Miami, FL, USA, 20–25 June 2009.
19. Razakarivony, S.; Jurie, F. Vehicle detection in aerial imagery: A small target detection benchmark. J. Vis. Commun. Image Represent. 2016, 34, 187–203.
20. Yang, M.Y.; Liao, W.; Li, X.; Rosenhahn, B. Vehicle Detection in Aerial Images. Photogramm. Eng. Remote Sens. 2018, 4, 85.
21. Ding, J.; Xue, N.; Long, Y.; Xia, G.S.; Lu, Q. Learning RoI Transformer for Detecting Oriented Objects in Aerial Images. arXiv 2018, arXiv:1812.00155.
22. Liu, S.; Qi, L.; Qin, H.; Shi, J.; Jia, J. Path aggregation network for instance segmentation. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–22 June 2018.
23. Cheng, G.; Han, J.; Zhou, P.; Xu, D. Learning Rotation-Invariant and Fisher Discriminative Convolutional Neural Networks for Object Detection. IEEE Trans. Image Process. 2018, 28, 265–278.
24. Li, W.; Li, H.; Wu, Q.; Chen, X.; Ngan, K.N. Simultaneously Detecting and Counting Dense Vehicles from Drone Images. IEEE Trans. Ind. Electron. 2019, 12, 9651–9662.
25. Ju, M.; Luo, J.; Zhang, P.; He, M.; Luo, H. A simple and efficient network for small target detection. IEEE Access 2019, 7, 85771–85781.
26. Mandal, M.; Shah, M.; Meena, P.; Vipparthi, S.K. SSSDET: Simple Short and Shallow Network for Resource Efficient Vehicle Detection in Aerial Scenes. In Proceedings of the 2019 IEEE International Conference on Image Processing (ICIP), Taipei, Taiwan, 22–25 September 2019.
27. Feng, R.; Fan, C.; Li, Z.; Chen, X. Mixed road user trajectory extraction from moving aerial videos based on convolution neural network detection. IEEE Access 2020, 8, 43508–43519.
28. Zhou, L.; Min, W.; Lin, D.; Han, Q.; Liu, R. Detecting Motion Blurred Vehicle Logo in IoV Using Filter-DeblurGAN and VL-YOLO. IEEE Trans. Veh. Technol. 2020, 69, 3604–3614.
29. Liao, W.; Chen, X.; Yang, J.; Roth, S.; Rosenhahn, B. LR-CNN: Local-aware Region CNN for Vehicle Detection in Aerial Imagery. arXiv 2020, arXiv:2005.14264.
30. Rabbi, J.; Ray, N.; Schubert, M.; Chowdhury, S.; Chao, D. Small-Object Detection in Remote Sensing Images with End-to-End Edge-Enhanced GAN and Object Detector Network. Remote Sens. 2020, 12, 1432.
31. Lin, T.Y.; Dollar, P.; Girshick, R.; He, K.; Hariharan, B.; Belongie, S. Feature Pyramid Networks for Object Detection. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017.
32. Terrail, J.O.D.; Jurie, F. Faster RER-CNN: Application to the Detection of Vehicles in Aerial Images. arXiv 2018, arXiv:1809.07628.
33. Azimi, S.M.; Vig, E.; Bahmanyar, R.; Körner, M.; Reinartz, P. Towards Multi-class Object Detection in Unconstrained Remote Sensing Imagery. In Proceedings of the 14th Asian Conference on Computer Vision, Perth, Australia, 2–6 December 2018.
34. Liu, K.; Mattyus, G. Fast Multiclass Vehicle Detection on Aerial Images. IEEE Geosci. Remote Sens. Lett. 2015, 12, 1938–1942.
35. Zhu, H.; Chen, X.; Dai, W.; Fu, K.; Ye, Q.; Jiao, J. Orientation robust object detection in aerial images using deep convolutional neural network. In Proceedings of the IEEE International Conference on Image Processing, Quebec City, QC, Canada, 27–30 September 2015.
36. Xia, G.S.; Bai, X.; Ding, J.; Zhu, Z.; Belongie, S.; Luo, J.; Datcu, M.; Pelillo, M.; Zhang, L. DOTA: A Large-scale Dataset for Object Detection in Aerial Images. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–23 June 2018.
37. Gu, Y.; Wang, B.; Xu, B. A FPN-based framework for vehicle detection in Aerial images. In ACM International Conference Proceeding Series; Association for Computing Machinery: Shanghai, China, 2018; pp. 60–64.
38. Gao, S.; Cheng, M.M.; Zhao, K.; Zhang, X.Y.; Yang, M.H.; Torr, P.H. Res2net: A new multi-scale backbone architecture. IEEE Trans. Pattern Anal. Mach. Intell. 2019, 1.
39. Artacho, B.; Savakis, A. Waterfall atrous spatial pooling architecture for efficient semantic segmentation. Sensors 2019, 19, 5361.
40. Mandal, M.; Shah, M.; Meena, P.; Devi, S.; Vipparthi, S.K. AVDNet: A Small-Sized Vehicle Detection Network for Aerial Visual Data. IEEE Geosci. Remote Sens. Lett. 2019, 17, 494–498.
41. Cai, Z.; Vasconcelos, N. Cascade R-CNN: Delving into High Quality Object Detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–23 June 2018.
42. Li, Q.; Mou, L.; Xu, Q.; Zhang, Y.; Zhu, X. R3-Net: A Deep Network for Multioriented Vehicle Detection in Aerial Images and Videos. arXiv 2018, arXiv:1808.05560.
43. Mostofa, M.; Ferdous, S.N.; Riggan, B.S.; Nasrabadi, N.M. Joint-SRVDNet: Joint Super Resolution and Vehicle Detection Network. IEEE Access 2020, 99, 1.
44. Redmon, J.; Farhadi, A. Yolo9000: Better, faster, stronger. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017.
45. Yang, X.; Sun, H.; Fu, K.; Yang, J.; Sun, X.; Yan, M.; Guo, Z. Automatic Ship Detection in Remote Sensing Images from Google Earth of Complex Scenes Based on Multiscale Rotation Dense Feature Pyramid Networks. Remote Sens. 2018, 10, 132.
46. Liu, L.; Pan, Z.; Lei, B. Learning a Rotation Invariant Detector with Rotatable Bounding Box. arXiv 2017, arXiv:1711.09405.
47. Wei, H.; Zhou, L.; Zhang, Y.; Li, H.; Guo, R.; Wang, H. Oriented Objects as pairs of Middle Lines. arXiv 2020, arXiv:1912.10694.
48. Zhou, L.; Wei, H.; Li, H.; Zhao, W.; Zhang, Y. Objects detection for remote sensing images based on polar coordinates. arXiv 2020, arXiv:2001.02988.
49. Dai, J.; Li, Y.; He, K.; Sun, J. R-FCN: Object detection via region-based fully convolutional networks. In Advances in Neural Information Processing Systems; Curran Associates: Barcelona, Spain, 2016; pp. 379–387.
50. Dai, J.; Qi, H.; Xiong, Y.; Li, Y.; Zhang, G.; Hu, H.; Wei, Y. Deformable convolutional networks. In Proceedings of the 2017 IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 764–773.
51. Bao, S.; Zhong, X.; Zhu, R.; Zhang, X.; Li, M. Single Shot Anchor Refinement Network for Oriented Object Detection in Optical Remote Sensing Imagery. IEEE Access 2019, 99, 1.
52. Li, C.; Xu, C.; Cui, Z.; Wang, D.; Yang, J. Feature-attentioned object detection in remote sensing imagery. In Proceedings of the 2019 IEEE International Conference on Image Processing (ICIP), Taipei, Taiwan, 22–25 September 2019.
53. Yang, X.; Liu, Q.; Yan, J.; Li, A.; Zhang, Z.; Yu, G. R3Det: Refined Single-Stage Detector with Feature Refinement for Rotating Object. arXiv 2020, arXiv:1908.05612.
54. Qiu, H.; Li, H.; Wu, Q.; Meng, F.; Ngan, K.N.; Shi, H. A2RMNet: Adaptively aspect ratio multi-scale network for object detection in remote sensing images. Remote Sens. 2019, 11, 1594.
55. Yang, X.; Yan, J.; Yang, X.; Tang, J.; Liao, W.; He, T. SCRDet++: Detecting Small, Cluttered and Rotated Objects via Instance-Level Feature Denoising and Rotation Loss Smoothing. arXiv 2020, arXiv:2004.13316.
56. Zheng, K.; Wei, M.; Sun, G.; Anas, B.; Li, Y. Using vehicle synthesis generative adversarial networks to improve vehicle detection in remote sensing images. ISPRS Int. J. Geo Inf. 2019, 8, 390.
57. Yang, X.; Sun, H.; Sun, X.; Yan, M.; Guo, Z.; Fu, K. Position Detection and Direction Prediction for Arbitrary-Oriented Ships via Multitask Rotation Region Convolutional Neural Network. IEEE Access 2018, 6, 50839–50849.
58. Acatay, O. Comprehensive evaluation of deep learning based detection methods for vehicle detection in aerial imagery. In Proceedings of the 2018 15th IEEE International Conference on Advanced Video and Signal Based Surveillance (AVSS), Auckland, New Zealand, 27–30 November 2018; pp. 1–6.
59. Sommer, L.; Schumann, A.; Schuchert, T.; Beyerer, J. Multi feature deconvolutional faster r-cnn for precise vehicle detection in aerial imagery. In Proceedings of the 2018 IEEE Winter Conference on Applications of Computer Vision (WACV), Lake Tahoe, NV, USA, 12–15 March 2018.
60. Fu, C.Y.; Liu, W.; Ranga, A.; Tyagi, A.; Berg, A.C. DSSD: Deconvolutional Single Shot Detector. arXiv 2017, arXiv:1701.06659.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Wang, B.; Gu, Y. An Improved FBPN-Based Detection Network for Vehicles in Aerial Images. Sensors 2020, 20, 4709. https://doi.org/10.3390/s20174709
