Abstract

This study examines the factors driving perceived Study Efficiency and Exam Readiness associated with ChatGPT use among STEM students in higher education. Although prior research on generative artificial intelligence (GenAI) has largely focused on adoption and attitudes using descriptive or linear statistical approaches, limited empirical work has explored how students’ interactions with such tools relate to learning-related outcomes. To address this gap, this study applies an interpretable machine learning (ML) framework to identify key predictors of learning gains from ChatGPT use. Data were obtained from a large-scale global survey of STEM students (n = 10,525) across 109 countries and territories, capturing usage patterns, perceived capabilities, satisfaction, and academic outcomes. Two eXtreme Gradient Boosting (XGBoost)-based ML classification models were developed to predict Study Efficiency and Exam Readiness, and SHapley Additive exPlanations (SHAP) were used to interpret feature-level contributions. The models achieved strong predictive performance for the high-gain class, with an accuracy of 0.93 (F1 = 0.96) for Study Efficiency and 0.86 (F1 = 0.92) for Exam Readiness. Results indicate that motivation, personalized learning support, improved access to knowledge, facilitation of study activities, and exam-focused study assistance are key predictors of learning gains. These findings offer empirical and practical insights for educators and policymakers seeking to design effective and pedagogically sound AI-assisted learning environments in STEM education.

1. Introduction

The rapid advancement of generative artificial intelligence (GenAI) has led to the widespread adoption of tools such as ChatGPT across multiple domains, including higher education. Since its public release, ChatGPT has attracted significant attention from educators and students for its ability to generate human-like responses, summarize complex information, assist with problem-solving, provide on-demand academic support, facilitate self-directed learning, generate practice questions, and assist with academic writing [1,2,3]. In STEM disciplines, where students often face high cognitive demands, complex problem structures, and intensive assessments, GenAI tools have the potential to substantially reshape learning processes and outcomes [4,5].

While these affordances suggest that ChatGPT may positively influence students’ perceived study efficiency and preparedness for assessments, its integration into higher education also raises important challenges. Risks include overreliance on AI-generated content, potential hindrance of critical thinking and deep conceptual understanding, academic integrity concerns such as plagiarism and cheating, occasional inaccuracies in AI outputs, and privacy issues [6,7,8]. Together, these factors indicate that ChatGPT’s impact on learning is complex and context-dependent, with the potential to both support and impede student outcomes depending on how it is used.

Despite the rapid proliferation of ChatGPT, empirical research examining students’ learning-related outcomes remains limited, particularly at a global scale [9]. Most existing studies focus on adoption intentions, usage patterns, or general attitudes toward ChatGPT, often relying on region-specific or institution-specific data [10,11]. Findings regarding the influence of sociodemographic characteristics, such as gender, age, or academic level, are mixed and inconclusive [12], suggesting that perceived learning benefits may be shaped more by how students engage with ChatGPT to support specific study processes rather than by who the students are. Additionally, few studies explicitly examine disciplinary variations, particularly in STEM, where AI-assisted coding, quantitative problem-solving, and exam preparation may play a distinct role in shaping both perceived and actual learning outcomes [5]. Furthermore, most prior analyses rely on conventional statistical methods, which may be limited in capturing complex, nonlinear relationships and interactive effects inherent in students’ use of AI tools [13,14].

In sum, global, STEM-specific empirical evidence is still lacking on how students’ engagement with ChatGPT relates to learning-related outcomes such as perceived Study Efficiency and Exam Readiness, particularly evidence derived from analytical approaches capable of capturing complex and nonlinear relationships among usage patterns, perceptions, and motivational factors.

To address this gap and to improve understanding of how GenAI tools can support learning and assessment preparedness in higher education, the present study investigates the factors driving perceived Study Efficiency and Exam Readiness from ChatGPT use among STEM students using an interpretable machine learning (ML) approach. Drawing on data from a global survey of STEM students across 109 countries and territories, this study examines self-reported academic outcomes related to ChatGPT use, encompassing perceived usage and experience, perceived capabilities, and satisfaction and attitudes. Two independent eXtreme Gradient Boosting (XGBoost) ML models are developed, one predicting perceived Study Efficiency and the other predicting Exam Readiness, using an identical set of explanatory variables. To enhance transparency and interpretability, SHapley Additive exPlanations (SHAP), an explainable artificial intelligence (XAI) technique, is applied to identify and explain the most influential predictors for each learning outcome. This design allows for a direct comparison of shared and outcome-specific drivers, providing insights into the mechanisms through which ChatGPT supports different dimensions of learning.

By focusing on STEM students and emphasizing explainable ML results, this study makes two primary contributions. Theoretically, it advances understanding of how GenAI tools support learning processes by linking specific perceptions and behaviors to learning-related outcomes. Methodologically, it demonstrates the utility of interpretable ML techniques for uncovering complex, nonlinear relationships in high-dimensional survey data. Collectively, the findings provide actionable insights for educators, policymakers, and AI developers seeking to optimize AI-assisted learning environments in higher education, with an emphasis on responsible and goal-aligned use of ChatGPT.

2. Literature Review

Empirical research on GenAI in higher education has expanded rapidly, with a growing body of studies examining how students perceive, adopt, and use tools such as ChatGPT [1,2,3,4]. Much of this literature has focused on understanding students’ awareness, frequency of use, and general attitudes toward ChatGPT, often framing adoption through established models such as the Technology Acceptance Model (TAM), Unified Theory of Acceptance and Use of Technology (UTAUT), or expectancy–value theory [15,16,17]. These studies consistently report that perceived usefulness, ease of use, and performance expectancy are central determinants of students’ willingness to engage with ChatGPT. However, this strand of research primarily addresses intentions and adoption, rather than how ChatGPT usage translates into concrete learning-related outcomes such as study efficiency or exam readiness.

A parallel line of research has examined students’ experiences and perceptions of ChatGPT across different educational contexts and regions. Institutional or national surveys have documented generally positive student views regarding ChatGPT’s ability to support academic writing, clarify complex concepts, save time, and enhance productivity [18,19,20]. For example, Bosch et al. [18], in a large survey of South African university students, found that learners frequently used AI tools for idea generation, summarization, language support, and referencing. Similarly, Chan and Hu [20] reported that Hong Kong students demonstrated moderate to high levels of understanding and comfort with GenAI tools such as ChatGPT, while simultaneously expressing concerns about its impact on the value of university education. Although these studies provide valuable descriptive insights, they often remain limited to specific national or institutional settings and focus more on usage patterns and attitudes than on learning-related outcomes. Beyond immediate academic support, researchers have emphasized that intentional integration of GenAI within higher education curricula may enhance graduate employability by developing AI-supported problem-solving, communication, and decision-making skills aligned with evolving labor market demands [21].

Empirical studies have further documented how students’ use of GenAI tools intersects with academic integrity and learning challenges. For instance, Kovari [22] reported that some students employed ChatGPT-generated content for essay drafting and referencing without clear awareness of ethical guidelines. Other studies indicate that students are uncertain whether AI-assisted work might constitute plagiarism or violate academic policies [23], while excessive reliance on ChatGPT for problem-solving or writing tasks can reduce opportunities to engage in critical thinking and achieve deep conceptual understanding [24]. Additional concerns include data privacy, transparency, and the alignment of ChatGPT use with human values and long-term career development [25,26]. Institutional responses to these challenges appear uneven; fewer than half of the leading universities had publicly available policies addressing GenAI and ChatGPT use [27]. Together, these findings suggest that while ChatGPT offers educational affordances, its impact on learning depends on contextual factors, ethical guidance, and students’ active engagement.

Several studies have explored how individual differences shape students’ perceptions and use of GenAI tools. Factors such as gender, age, academic level, discipline, and prior experience with digital technologies have been examined, though results remain mixed [18,23,28,29]. Although some research highlights disciplinary differences, with STEM students showing higher familiarity and confidence than their peers in healthcare, arts, or education, other studies report minimal or no differences across fields [15,20]. These inconsistencies highlight the importance of focusing on how specific interactions with ChatGPT support learning processes rather than assuming uniform effects across student populations.

More recently, scholars have begun to examine the relationship between students’ perceptions of ChatGPT and broader educational outcomes, including motivation, engagement, and learning approaches. Learning theory suggests that positive self-perceptions and supportive environments are associated with deeper learning, whereas dissatisfaction or low self-efficacy may lead to surface learning strategies focused on memorization rather than understanding [30,31]. Yet, empirical studies directly linking ChatGPT usage to learning-related outcomes such as study efficiency, exam readiness, or academic performance remain scarce. Moreover, existing analyses often rely on traditional statistical methods that assume linear relationships and limited interactions among variables, potentially overlooking the complex, nonlinear patterns inherent in students’ AI-assisted learning experiences [13,14].

Taken together, the literature can be synthesized into three interrelated insights that directly motivate the present study. First, prior work establishes that students generally perceive ChatGPT as useful and engaging, but evidence connecting its use to concrete learning gains is limited. Second, disciplinary and contextual differences underscore the need to focus on STEM fields, where problem-solving, coding, and assessment preparation may interact uniquely with AI tools. Third, methodological limitations, including the use of descriptive statistics and linear models, constrain the ability to capture complex, nonlinear, and interactive predictors of perceived study efficiency and exam readiness.

Collectively, these gaps highlight the need for a study that foregrounds learning-related outcomes, focuses on STEM students, and adopts analytical methods capable of capturing complex, nonlinear interactions among predictors. To address these needs, the present study applies an interpretable ML framework using XGBoost and SHAP to identify the factors most strongly associated with perceived gains in Study Efficiency and Exam Readiness. By moving beyond descriptive accounts to predictive and explanatory analysis, this study provides a methodologically robust, outcome-focused understanding of how ChatGPT supports learning processes in higher education.

3. Methodology

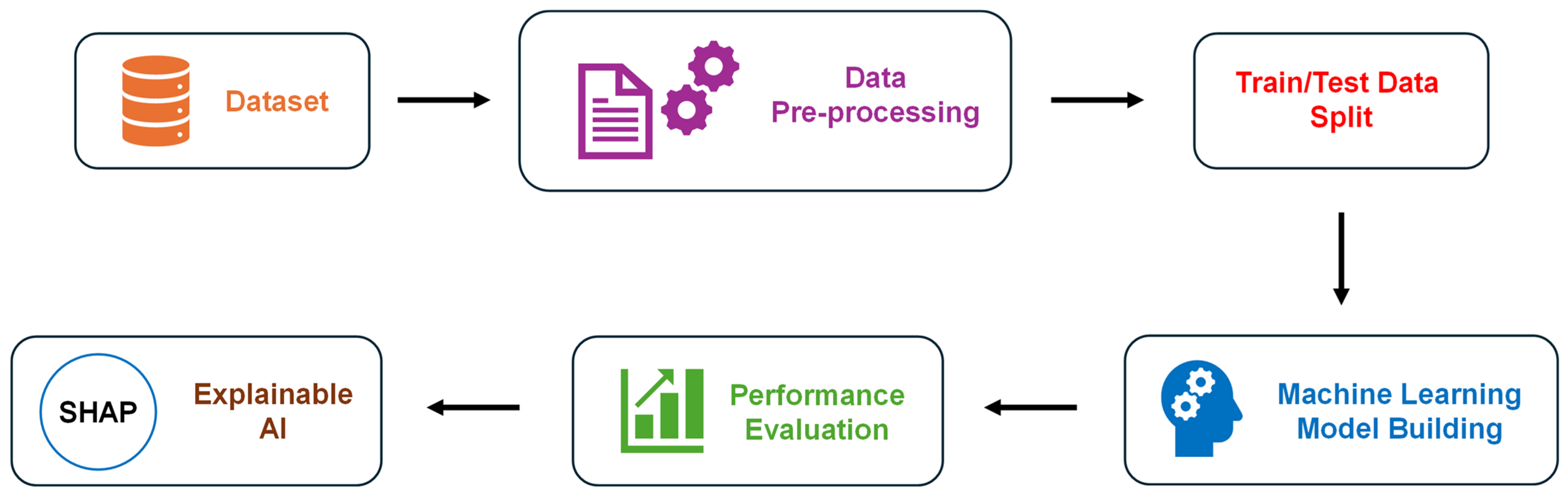

This study adopts an ML-based analytical framework to examine the factors influencing perceived Study Efficiency and Exam Readiness from ChatGPT use among STEM students. The framework integrates survey-based self-reported measures with an ML-based predictive model and post hoc interpretability analysis using XAI techniques such as SHAP. Figure 1 presents an overview of the analytical pipeline, from data preprocessing to model interpretation. Each step of this pipeline is described in detail in the following subsections.

Figure 1.

Overview of the machine learning methodology used to predict Study Efficiency and Exam Readiness from ChatGPT use among STEM students.

3.1. Dataset

This study uses data from a Global ChatGPT Student Survey designed to examine students’ perceptions, usage patterns, and attitudes toward ChatGPT across higher education contexts in 109 different countries and territories [32]. The survey includes detailed information on sociodemographic characteristics, learning environments, ChatGPT usage behaviors, perceived capabilities, ethical concerns, emotional responses, and study-related outcomes. Each survey item is identified by a unique question code (Q-code), consisting of the main question number and, where applicable, a letter suffix (e.g., Q26e, Q18a). These codes are used throughout the analysis for clarity.

For the purposes of this analysis, the sample was restricted to STEM students. This restriction was applied to reduce disciplinary heterogeneity and to focus on fields where computational tools, problem-solving, and exam-based assessment are particularly salient. Only respondents who indicated that they had used ChatGPT were retained for the analysis, as the study outcomes explicitly refer to perceived impacts of ChatGPT use. After applying these filters, the final analytical sample consisted of n = 10,524 responses from STEM students belonging to 109 different countries and territories.

Two learning-related outcomes were examined independently:

- Study Efficiency (Q26e): the extent to which ChatGPT improves the respondent’s study efficiency.

- Exam Readiness (Q27a): the extent to which ChatGPT improves the respondent’s readiness for exams.

Both outcome variables were measured using five-point Likert scales, ranging from Strongly disagree (1) to Strongly agree (5). Details of the survey questions corresponding to the outcome variables are provided in Appendix A, Table A1.

Input variables that were used to develop the two independent prediction models for the two learning-related outcomes were selected from the survey responses based on prior literature on educational technology adoption, self-regulated learning, and AI-assisted learning. A total of 36 variables were included and grouped into the following four conceptual blocks:

- ChatGPT usage and experience: Frequency of overall use and task-specific applications such as academic writing, summarizing, study assistance, coding, and other relevant tasks.

- Perceived capabilities of ChatGPT: Functional abilities including efficiency, reliability, information summarization, and facilitation of traditional, online, or blended learning.

- Satisfaction and attitudes: Perceived usefulness, ease of interaction with ChatGPT compared to professors or peers, and satisfaction with information quality and assistance provided.

- Academic outcomes: Perceived improvements in knowledge acquisition, study motivation, engagement in class discussions and timely assignment completion.

Table 1 summarizes the number of features included in each conceptual block, along with representative survey Q-codes. All 36 input variables were measured using five-point Likert scales, with specific anchors varying by question type. Details of the survey questions corresponding to the input variables are provided in Appendix A, Table A2.

Table 1.

Conceptual grouping of input features along with representative Q-codes for each block.

3.2. Data Pre-Processing

Prior to model training, the survey data was carefully preprocessed to ensure consistency and suitability for ML analysis. The two learning-related outcome variables, Study Efficiency (Q26e) and Exam Readiness (Q27a), were binarized to enable supervised classification. Responses indicating Strongly Disagree or Disagree (values 1–2) were coded as Class 0 (low or no perceived learning gains), whereas responses ranging from Neutral to Strongly Agree (values 3–5) were coded as Class 1 (higher perceived learning gains). This binarization established a clear distinction between students reporting limited versus meaningful benefits from ChatGPT use and aligns with the study’s objective of identifying factors driving Study Efficiency and Exam Readiness. While binarization simplifies the modeling task and enhances the interpretability of ML models, it inherently involves some loss of ordinal information. This trade-off was considered acceptable given the study’s objective of identifying predictors associated with higher perceived learning gains, rather than modeling fine-grained variation across the full Likert scale. Class 1 was treated as the positive class throughout the modeling and analysis phase. All 36 predictor variables used as model inputs were retained in their original numeric form, corresponding to the 1–5 Likert scale, ensuring that the relative ordering of responses was preserved.
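The binarization rule described above can be sketched in a few lines. This is a minimal illustration, not the authors' code: the function name `binarize_likert` and the sample responses are hypothetical.

```python
def binarize_likert(response: int) -> int:
    """Map a 1-5 Likert response to the binary classes described in the text."""
    if response not in (1, 2, 3, 4, 5):
        raise ValueError(f"expected a Likert value in 1..5, got {response!r}")
    # 1-2 (Strongly disagree, Disagree) -> Class 0 (low or no perceived gains)
    # 3-5 (Neutral to Strongly agree)   -> Class 1 (higher perceived gains)
    return 0 if response <= 2 else 1


# Hypothetical responses, for illustration only
responses = [1, 2, 3, 4, 5]
labels = [binarize_likert(r) for r in responses]
print(labels)
```

Note that, under this rule, Neutral responses (value 3) fall into the positive class, which is one reason the resulting classes are imbalanced.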

Rows with missing values in either of the outcome variables were removed to guarantee that all observations included complete target information. The final datasets included 6302 responses for Study Efficiency (Q26e) and 6308 responses for Exam Readiness (Q27a). For the remaining predictor variables, missing values were imputed using the median of the respective columns, a simple method that is robust to outliers and preserves the central tendency of each variable. After imputation, all features were verified to remain within the expected 1–5 range.
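The two cleaning steps (dropping rows with a missing target, then median-imputing predictors) might look roughly as follows. This is a sketch under stated assumptions: the helper `prepare_rows`, the dictionary-based row format, and the toy values are all hypothetical, and the text does not say whether medians were computed before or after the train/test split.

```python
from statistics import median


def prepare_rows(rows, target, features):
    """Drop rows with a missing target, then median-impute missing predictors."""
    # Keep only rows with an observed outcome (copy so the input is not mutated)
    kept = [dict(r) for r in rows if r.get(target) is not None]
    for name in features:
        observed = [r[name] for r in kept if r.get(name) is not None]
        fill = median(observed)  # raises StatisticsError if a column is entirely missing
        for r in kept:
            if r.get(name) is None:
                r[name] = fill
    return kept


# Hypothetical toy data; None marks a missing answer
rows = [
    {"Q26e": 4, "Q18a": 5, "Q26f": None},
    {"Q26e": None, "Q18a": 3, "Q26f": 2},  # dropped: outcome missing
    {"Q26e": 2, "Q18a": None, "Q26f": 4},
    {"Q26e": 5, "Q18a": 1, "Q26f": 5},
    {"Q26e": 3, "Q18a": 4, "Q26f": 3},
]
clean = prepare_rows(rows, target="Q26e", features=["Q18a", "Q26f"])

# Range check corresponding to the 1-5 verification mentioned in the text
assert all(1 <= r[f] <= 5 for r in clean for f in ("Q18a", "Q26f"))
```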

3.3. Machine Learning Model Building

Following data preprocessing, predictive ML models were developed using an Extreme Gradient Boosting (XGBoost) classifier, a tree-based ensemble algorithm that iteratively combines multiple weak learners to optimize predictive performance [33]. XGBoost was specifically selected because Tree SHAP is natively integrated, allowing seamless computation of SHAP values for interpretable feature importance analysis [34]. The dataset was randomly split into a training set (80%) and a test set (20%) to evaluate out-of-sample performance. Within the training set, class imbalance in the binarized outcome variables was observed, with the high-gain class (Class 1) represented by 4483 responses compared to 558 responses for the low-gain class (Class 0) in Study Efficiency (Q26e), and 4212 responses compared to 834 responses in Exam Readiness (Q27a), indicating a substantial imbalance between outcome classes. This class imbalance was addressed using the Synthetic Minority Over-sampling Technique (SMOTE), which generates synthetic examples for the minority class to improve model learning and reduce bias toward the majority class. SMOTE was specifically selected due to its ability to generate synthetic but plausible minority class examples while preserving feature relationships within the training data [35].
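To illustrate the core idea behind SMOTE, the sketch below creates synthetic minority rows by interpolating between a minority row and one of its nearest minority neighbours. It is a simplified, standard-library approximation for exposition only, not the implementation used in the study; the function name `smote_like_oversample`, the parameter `k`, and the toy data are assumptions.

```python
import random


def smote_like_oversample(minority, n_new, k=2, seed=0):
    """Create n_new synthetic rows by interpolating between minority-class neighbours."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base_idx = rng.randrange(len(minority))
        base = minority[base_idx]
        # Rank the other minority rows by squared Euclidean distance to the base row
        others = [row for i, row in enumerate(minority) if i != base_idx]
        others.sort(key=lambda row: sum((a - b) ** 2 for a, b in zip(base, row)))
        neighbour = rng.choice(others[:k])
        gap = rng.random()  # interpolation weight in [0, 1)
        # The new point lies on the segment between base and neighbour
        synthetic.append([a + gap * (b - a) for a, b in zip(base, neighbour)])
    return synthetic


# Hypothetical minority-class rows (three Likert-scale features each)
minority_rows = [[1, 2, 1], [2, 2, 1], [1, 3, 2], [2, 1, 1]]
majority_count = 10  # hypothetical size of the majority class
new_rows = smote_like_oversample(minority_rows, n_new=majority_count - len(minority_rows))
print(len(minority_rows) + len(new_rows))
```

Because each synthetic value is a convex combination of two observed values, it stays within the observed range but is generally not an integer Likert score. Oversampling is applied to the training set only, as in the text, so that the test set contains real responses exclusively.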

Separate XGBoost models were trained for the two outcomes, Study Efficiency and Exam Readiness, using the same set of 36 input features, enabling the identification of both shared and outcome-specific predictive patterns. Hyperparameters, including learning rate and number of estimators, were optimized using a grid search with five-fold cross-validation on the training set to balance model complexity and generalization. This approach allowed the models to capture nonlinear relationships and interactions among the 36 input features while minimizing overfitting. The trained models were then evaluated on the held-out test set to provide unbiased estimates of predictive performance. All modeling and analyses were conducted in Python (version 3.12) using relevant scientific libraries.
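The tuning procedure, a grid search scored by five-fold cross-validation, can be outlined as below. This is only a scaffold: the grid values are hypothetical (the text names the hyperparameters but not their candidate values), and `toy_score` is a stand-in for fitting an XGBoost model on the training folds and scoring it on the validation fold.

```python
from itertools import product


def k_fold_indices(n, k=5):
    """Yield (train_idx, val_idx) pairs for k contiguous folds over range(n)."""
    fold_sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val = list(range(start, start + size))
        train = [i for i in range(n) if i < start or i >= start + size]
        yield train, val
        start += size


def grid_search(param_grid, n_samples, score_fn, k=5):
    """Return the parameter combination with the best mean cross-validated score."""
    best_params, best_score = None, float("-inf")
    names = list(param_grid)
    for values in product(*(param_grid[name] for name in names)):
        params = dict(zip(names, values))
        scores = [score_fn(params, tr, va) for tr, va in k_fold_indices(n_samples, k)]
        mean_score = sum(scores) / len(scores)
        if mean_score > best_score:
            best_params, best_score = params, mean_score
    return best_params, best_score


def toy_score(params, train_idx, val_idx):
    # Placeholder scorer (assumption): a real scorer would train on train_idx
    # and evaluate on val_idx; this one simply peaks at (0.1, 200).
    return -abs(params["learning_rate"] - 0.1) - abs(params["n_estimators"] - 200) / 1000


grid = {"learning_rate": [0.01, 0.1, 0.3], "n_estimators": [100, 200, 400]}  # hypothetical values
best_params, best_score = grid_search(grid, n_samples=50, score_fn=toy_score)
print(best_params)
```

In practice the rows would be shuffled (and usually stratified by class) before being split into folds; contiguous folds are used here only to keep the sketch short.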

3.4. Model Performance Evaluation

Let TP, TN, FP, and FN denote the numbers of true positives, true negatives, false positives, and false negatives, respectively. The performance of the XGBoost classification models was evaluated separately for the two learning-related outcomes, Study Efficiency and Exam Readiness, using standard classification metrics as listed below.

Accuracy: Measures the proportion of correctly classified instances, Accuracy = (TP + TN) / (TP + TN + FP + FN).

Precision: Quantifies the proportion of predicted positive cases that are truly positive, Precision = TP / (TP + FP).

Recall: Measures the proportion of actual positive cases that are correctly identified, Recall = TP / (TP + FN).

F1-score: Represents the harmonic mean of precision and recall, F1 = 2 × Precision × Recall / (Precision + Recall).
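As a concrete illustration of these four metrics, the sketch below computes them from confusion-matrix counts using their standard definitions. The counts are hypothetical and are not taken from the study.

```python
def classification_metrics(tp, tn, fp, fn):
    """Compute accuracy, precision, recall, and F1 for the positive class."""
    accuracy = (tp + tn) / (tp + tn + fp + fn)
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)  # harmonic mean
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Hypothetical counts for a 100-row test set
metrics = classification_metrics(tp=90, tn=5, fp=3, fn=2)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

With a dominant positive class, accuracy and positive-class F1 can both be high even when few negatives are recognized, which is why macro-averaged metrics are reported alongside them.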

3.5. Model Interpretability with Explainable AI

To investigate the factors driving perceived Study Efficiency and Exam Readiness, this study employed SHapley Additive exPlanations (SHAP), an XAI method that provides consistent and interpretable explanations of ML model outputs [36]. While tree-based models such as XGBoost include built-in feature importance measures, these can be sensitive to the choice of importance type and may produce inconsistent estimates [37]. SHAP overcomes this limitation by quantifying the contribution of each feature to individual predictions in a manner grounded in game theory, analyzing the effect of including or excluding each feature on the model’s output [37,38,39]. It is important to note that the SHAP-based explanations describe associative relationships between predictor variables and predicted outcomes and should not be interpreted as evidence of causality.
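The game-theoretic idea behind SHAP can be made concrete with a brute-force Shapley computation on a toy model. The sketch below averages each feature's marginal contribution over every ordering in which features can be added. It is illustrative only: the value function and feature names are hypothetical, and Tree SHAP reaches the same quantities for tree ensembles far more efficiently than this exhaustive enumeration.

```python
from itertools import permutations


def shapley_values(value_fn, features):
    """Exact Shapley values: average marginal contribution over all feature orderings."""
    totals = {f: 0.0 for f in features}
    n_orders = 0
    for order in permutations(features):
        coalition = frozenset()
        previous = value_fn(coalition)
        for f in order:
            coalition = coalition | {f}
            current = value_fn(coalition)
            totals[f] += current - previous  # marginal effect of adding f
            previous = current
        n_orders += 1
    return {f: total / n_orders for f, total in totals.items()}


def toy_model_output(coalition):
    # Hypothetical model: two main effects plus an interaction; feat_c is irrelevant
    out = 0.0
    if "feat_a" in coalition:
        out += 0.2
    if "feat_b" in coalition:
        out += 0.1
    if "feat_a" in coalition and "feat_b" in coalition:
        out += 0.1
    return out


phi = shapley_values(toy_model_output, ["feat_a", "feat_b", "feat_c"])
print(phi)
```

The interaction term is shared equally between the two interacting features, the irrelevant feature receives zero, and the values sum to the difference between the full model output and the empty-coalition baseline.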

For each XGBoost model, SHAP values were calculated for all 36 predictor variables to enable global interpretability. Global feature importance was assessed using the mean absolute SHAP value, highlighting the variables that had the largest overall impact on predictions for Study Efficiency and Exam Readiness. To explore functional relationships and nonlinear effects, SHAP dependence plots were generated, illustrating how changes in predictor values, such as task-specific ChatGPT usage or perceived capabilities, influenced model predictions. All SHAP analyses were conducted using the Python (version 3.12) SHAP library.
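Global importance as described above, the mean absolute SHAP value per feature, reduces to a simple column-wise aggregation. The sketch below assumes a small matrix of SHAP values (rows are respondents, columns are features); the numbers and feature names are hypothetical.

```python
def mean_abs_shap(shap_rows, feature_names):
    """Rank features by the mean absolute SHAP value across all rows."""
    n = len(shap_rows)
    importance = {
        name: sum(abs(row[j]) for row in shap_rows) / n
        for j, name in enumerate(feature_names)
    }
    # Largest mean |SHAP| first, matching the ordering of a SHAP summary plot
    return sorted(importance.items(), key=lambda item: item[1], reverse=True)


# Hypothetical SHAP values: 3 respondents x 3 features
shap_rows = [
    [0.4, -0.1, 0.0],
    [-0.2, 0.3, 0.1],
    [0.6, -0.2, -0.1],
]
ranking = mean_abs_shap(shap_rows, ["feat_a", "feat_b", "feat_c"])
for name, score in ranking:
    print(f"{name}: {score:.3f}")
```

Taking absolute values before averaging prevents positive and negative contributions from cancelling, so the ranking reflects magnitude of influence rather than direction.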

4. Results

This section presents the predictive performance of the XGBoost models and identifies key factors driving perceived Study Efficiency (Q26e) and Exam Readiness (Q27a) among STEM students.

4.1. ML Model Performance

Two separate XGBoost-based ML classification models were trained to predict perceived Study Efficiency (Q26e) and Exam Readiness (Q27a) among STEM students. As the objective of this study was to identify factors associated with positive learning gains, model evaluation focused primarily on performance for the high-gain class (Class 1). For Study Efficiency, the model achieved an overall accuracy of 0.93. The high-efficiency class was predicted with high precision (0.95) and very high recall (0.97), yielding an F1-score of 0.96. This indicates that the model was highly effective at identifying students who reported improvements in Study Efficiency when using ChatGPT. The macro-averaged metrics were lower (precision = 0.83, recall = 0.76, and F1-score = 0.80), indicating that performance on the minority low-gain class was weaker than on the high-gain class. For Exam Readiness, the model achieved an overall accuracy of 0.86. Predictions for the high-readiness class showed strong performance, with a precision of 0.90, a recall of 0.94, and an F1-score of 0.92. The macro-averaged metrics were: precision = 0.76, recall = 0.71, and F1-score = 0.73, again reflecting weaker performance on the low-gain class. These strong predictive results for the high-gain class should, however, be interpreted with caution, considering outcome binarization and potential conceptual proximity between certain predictors and outcome variables.
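The reported figures can be cross-checked with simple arithmetic. The sketch below recomputes the high-gain F1-scores from the reported precision and recall, and uses the two-class macro-average identity, macro = (class 0 + class 1) / 2, to back out the approximate low-gain recall. Because the inputs are rounded to two decimals, the implied values are approximations, not results reported by the study; the function names are hypothetical.

```python
def f1_from(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


def implied_other_class(macro_value, known_class_value):
    # Two-class macro average: macro = (class_0 + class_1) / 2
    return 2 * macro_value - known_class_value


# Values as reported in the text for the high-gain class and the macro average
reported = {
    "Study Efficiency": {"precision": 0.95, "recall": 0.97, "macro_recall": 0.76},
    "Exam Readiness": {"precision": 0.90, "recall": 0.94, "macro_recall": 0.71},
}
for outcome, m in reported.items():
    f1 = f1_from(m["precision"], m["recall"])
    low_gain_recall = implied_other_class(m["macro_recall"], m["recall"])
    print(outcome, round(f1, 2), round(low_gain_recall, 2))
```

Under these assumptions, the recomputed F1-scores agree with the reported 0.96 and 0.92, while the implied low-gain recall is roughly half or slightly above for both outcomes, which is consistent with the caution advised above.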

4.2. ML Model Interpretability Using SHAP

To understand the drivers of perceived learning gains, SHAP values were computed for the XGBoost classification models for both learning outcomes: Study Efficiency and Exam Readiness. SHAP values quantify the contribution of each feature to the predicted probability of Class 1 (higher perceived learning gains) on the model output scale [36]. This approach provides interpretable insights into the factors that most strongly influence each learning outcome. To facilitate interpretation, SHAP summary plots were generated for both learning outcomes and are discussed in detail below.

4.2.1. Factors Driving Study Efficiency

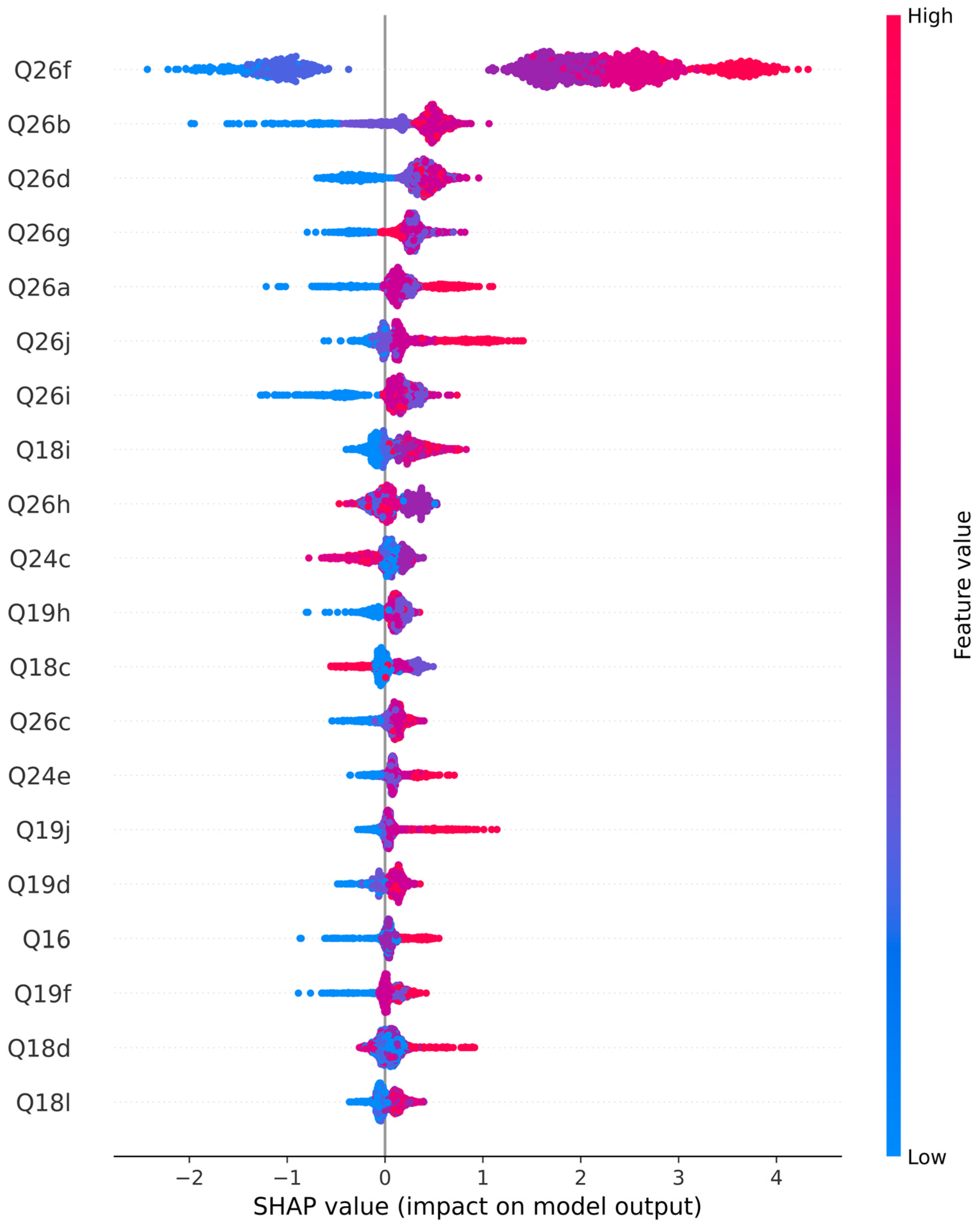

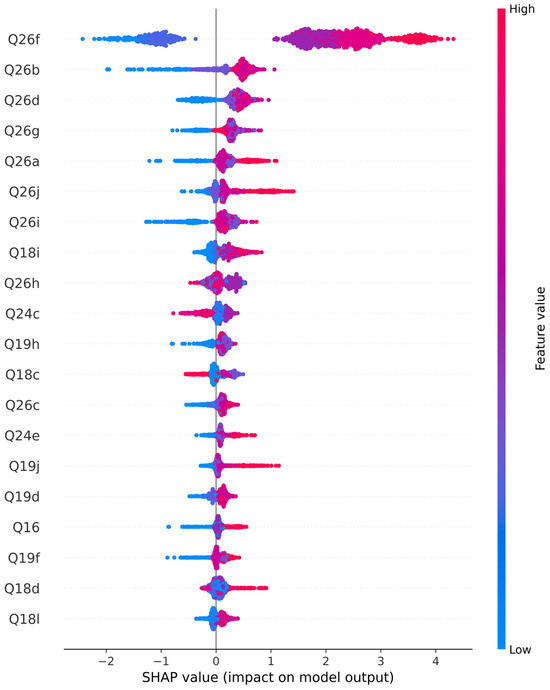

Figure 2 presents the SHAP summary plot for the XGBoost model predicting Study Efficiency.

Figure 2.

SHAP summary plot illustrating feature contributions to Study Efficiency.

On the y-axis of the SHAP plot shown in Figure 2, features influencing perceived Study Efficiency gains are listed in descending order of importance. Features at the top have the strongest influence, while those near the bottom have very little impact. The five most influential features are Q26f, Q26b, Q26d, Q26g, and Q26a, whereas the least influential features include Q18d and Q18l. Table 2 lists the Q-codes of the top five features along with their corresponding survey questions.

Table 2.

Top Five Features Identified by SHAP as Key Drivers of Study Efficiency.

The x-axis of the SHAP summary plot represents the SHAP value of each feature for each individual response, that is, its impact on the model output, while the color of each point encodes the feature value, from blue (low) to red (high) [37]. A feature whose points shift from blue to red when moving from left to right along the x-axis therefore has a positive association with predicted Study Efficiency, because higher feature values push the prediction toward Class 1. This pattern can be observed for all of the top five features. It indicates that students who gave higher ratings on the Likert scale (4 or 5 out of 5) for the survey questions listed in Table 2 were predicted to have higher Study Efficiency gains.

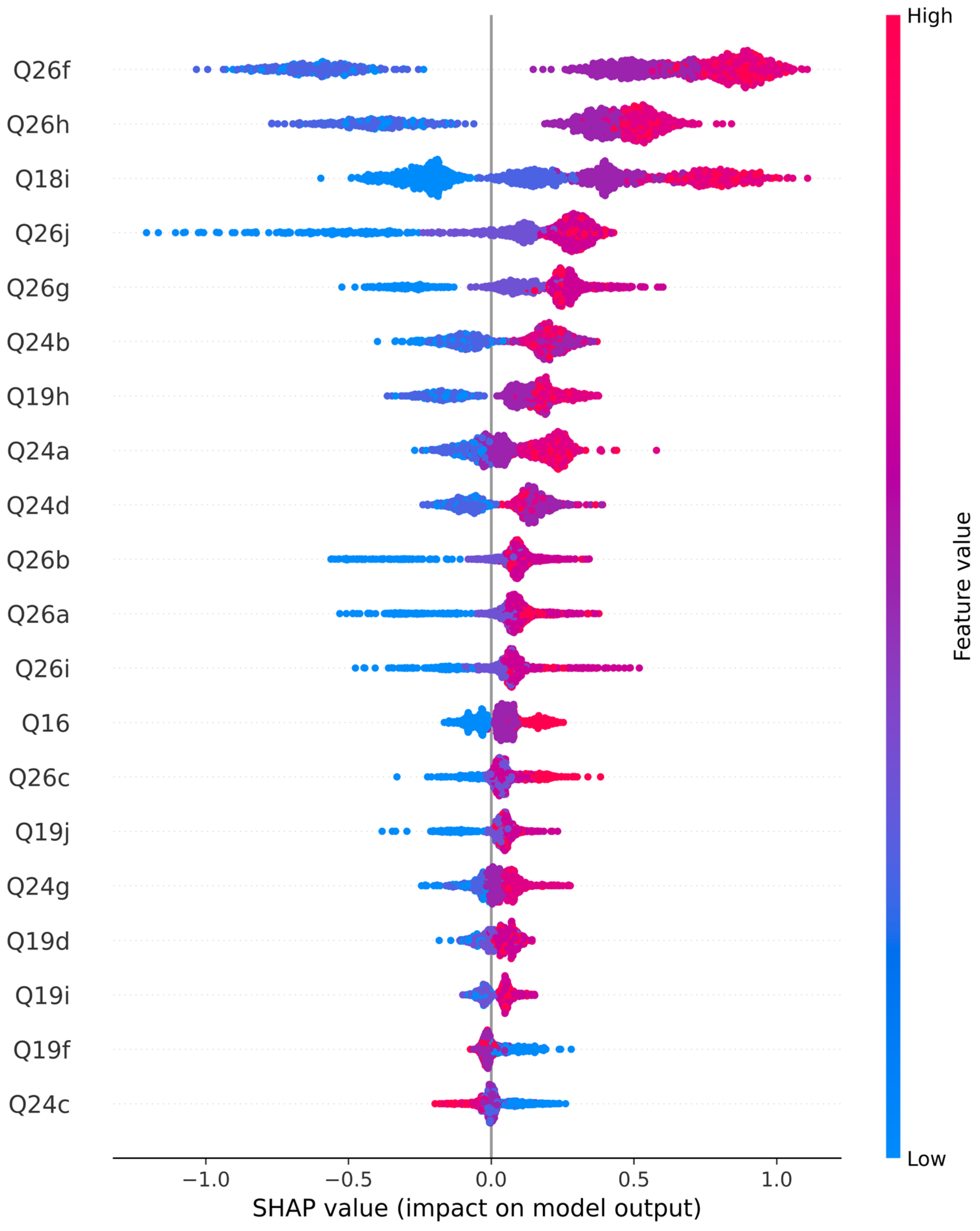

4.2.2. Factors Driving Exam Readiness

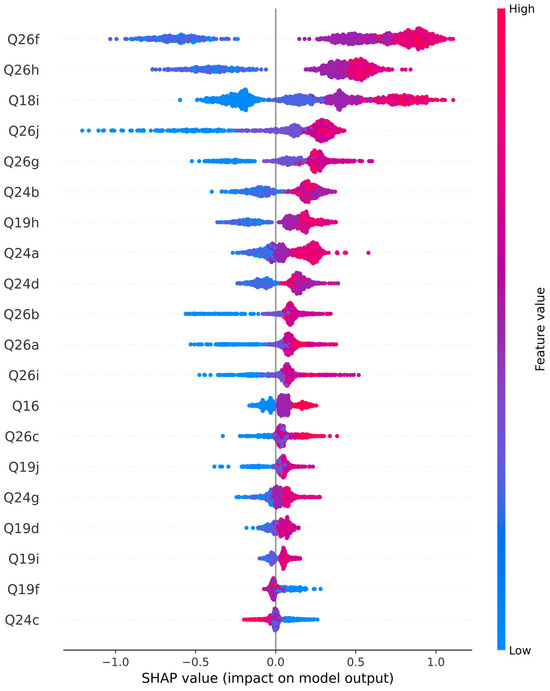

Figure 3 presents the SHAP summary plot for the XGBoost model predicting Exam Readiness. On the y-axis of the SHAP summary plot, features influencing perceived Exam Readiness are listed in descending order of importance. In other words, features at the top of the axis have the strongest influence on Exam Readiness, while features near the bottom have very little impact. The five most influential features are Q26f, Q26h, Q18i, Q26j, and Q26g, whereas the least influential features include Q19f and Q24c. Table 3 lists the Q-codes of these top five features driving Exam Readiness, along with their corresponding survey questions.

Figure 3.

SHAP summary plot illustrating feature contributions to Exam Readiness.

Table 3.

Top Five Features Identified by SHAP as Key Drivers of Exam Readiness.

The x-axis of the SHAP summary plot represents the SHAP value of each feature for each individual response, that is, its impact on the model output, while the color of each point encodes the feature value, from blue (low) to red (high) [37]. A feature whose points shift from blue to red when moving from left to right along the x-axis therefore has a positive association with predicted Exam Readiness, because higher feature values push the prediction toward Class 1. This pattern can be observed for all of the top five features. It indicates that students who gave higher ratings on the Likert scale (4 or 5 out of 5) for these survey items were predicted to exhibit higher Exam Readiness.

5. Discussion

This study investigated the factors driving perceived Study Efficiency and Exam Readiness associated with ChatGPT use among STEM students, employing explainable ML techniques. It attempts to move beyond prediction towards interpretation. While the XGBoost-based ML models demonstrated strong predictive performance for the positive outcome class, the primary contribution of this work lies in identifying why students perceive learning gains and which aspects of ChatGPT use matter the most.

The SHAP-based interpretability analysis revealed that perceived gains in motivation, personalized learning, access to knowledge, conceptual understanding and quality of assignment with ChatGPT usage consistently contributed positively across the learning outcomes: Study Efficiency and Exam Readiness. These findings suggest that students perceive ChatGPT’s educational value as extending beyond information provision to supporting learning processes that are associated with effective studying and exam preparation. This aligns with emerging literature that characterizes generative AI tools as learning companions rather than mere content generators [40,41].

However, it is important to note that the present results reflect associations between students’ perceptions of AI-supported learning processes and their reported learning-related outcomes, rather than demonstrating that ChatGPT directly improves study efficiency or exam readiness.

5.1. Interpretation of Study Efficiency Drivers

Study Efficiency gains were most strongly influenced by students’ perceived improvements resulting from ChatGPT use, specifically: increased motivation to study as supported by ChatGPT (Q26f), enhancement of general knowledge through ChatGPT interactions (Q26b), the ability to receive personalized guidance and explanations provided by ChatGPT (Q26d), support in completing study tasks efficiently with ChatGPT assistance (Q26g), and improved access to relevant sources of knowledge via ChatGPT (Q26a). These factors collectively align with core dimensions of self-regulated learning theory, in which learners actively manage their motivation, cognitive resources, and learning strategies during goal-directed academic tasks [42].

Motivation emerged as the strongest contributor, consistent with prior research showing that motivational support is a key determinant of study efficiency in technology-enhanced learning environments [43]. ChatGPT may enhance motivation by reducing frustration, offering immediate feedback, and enabling students to progress at their own pace. These features have been shown to sustain engagement in digital learning contexts [40]. In addition, perceived improvements in general knowledge through ChatGPT interactions reflect its role in providing rapid explanations and contextual information, which can support more efficient comprehension of study materials. Similarly, the importance of personalized education aligns with literature on adaptive learning systems, which suggests that tailoring explanations to individual learner needs can reduce cognitive load and improve learning efficiency [2,3,44]. ChatGPT’s ability to rephrase explanations, adjust difficulty, and respond to follow-up questions may explain its strong association with perceived efficiency gains [40].

Moreover, ChatGPT’s ability to support study task completion highlights its practical utility in helping students organize tasks, identify requirements, and overcome learning barriers, thereby streamlining study processes [1,40]. Finally, improved access to sources of knowledge through ChatGPT use underscores its function as an accessible entry point to gain information and retrieve relevant content from the internet within the ChatGPT interface, enabling students to locate and understand learning resources more efficiently.

5.2. Interpretation of Exam Readiness Drivers

Exam Readiness gains were influenced by a distinct but overlapping set of factors related to ChatGPT use, including increased motivation to study supported by ChatGPT (Q26f), enhanced engagement in classroom discussions facilitated by ChatGPT (Q26h), study assistance through ChatGPT such as practicing for exams (Q18i), improvements in the quality of assignments with ChatGPT support (Q26j), and overall facilitation of completing studies using ChatGPT (Q26g). Unlike study efficiency, exam readiness reflects students’ perceived depth of understanding and preparedness for assessments, rather than gains related to speed or convenience.

The prominence of study assistance, such as exam practice and enhancing the quality of assignments, and engagement-related factors is consistent with assessment-focused learning theories, which emphasize that exam readiness depends on integrating, practicing, and applying knowledge rather than surface-level familiarity [45]. Prior studies have shown that AI-based tutoring systems can support exam preparation by offering guided practice, step-by-step explanations, and alternative problem-solving approaches [40]. ChatGPT’s conversational nature may further facilitate this process by allowing iterative clarification and repeated practice, which are particularly relevant for exam-oriented learning.

In addition, motivation and engagement, including enhanced participation in classroom discussions supported by ChatGPT use, reflect important psychological components of exam readiness. These findings echo prior research indicating that confidence, sustained engagement, and effective preparation strategies are critical contributors to successful exam performance [46]. Additionally, ChatGPT’s facilitation of completing study tasks and coursework more efficiently may reduce cognitive and organizational barriers, thereby allowing students to focus more effectively on exam preparation and increasing their overall readiness.

5.3. Shared and Outcome-Specific Mechanisms

A key insight from this study is the distinction between shared drivers and outcome-specific mechanisms underlying perceived Study Efficiency and Exam Readiness gains. Motivation (Q26f) and the facilitation of study activities (Q26g) emerged as influential across both outcomes, highlighting their foundational role in AI-supported learning. In contrast, access to information and personalization were more strongly associated with Study Efficiency, whereas exam-focused study assistance, engagement in class discussions, and assignment quality were more salient for Exam Readiness.

This differentiation supports the view that GenAI tools, such as ChatGPT, influence learning through multiple pathways depending on the educational objective [4]. While study efficiency-oriented outcomes benefit from improved access to learning resources, exam readiness-oriented outcomes require deeper cognitive engagement and affective support. These findings underscore the importance of aligning ChatGPT use with specific learning goals rather than assuming uniform educational benefits.

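At the same time, the model outputs should be interpreted in context. Three design choices may contribute to the observed performance: outcome binarization, class imbalance handling via SMOTE, and conceptual overlap between predictors and outcomes. For example, the Study Efficiency outcome (Q26e) comes from the same Q26 item block as several of its top-ranked predictors, so part of the predictive signal may reflect shared response tendencies rather than distinct mechanisms.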
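One way to put accuracy and F1 values in context under class imbalance is to compare them with a trivial baseline that always predicts the high-gain class. The sketch below is illustrative only: the function name `majority_baseline_metrics` and the class shares passed to it are hypothetical, and no class distribution from the study is assumed.

```python
def majority_baseline_metrics(high_share: float) -> dict:
    """Accuracy and high-gain-class F1 for a classifier that always predicts 'high gain'.

    high_share is the fraction of respondents in the high-gain class.
    It is a hypothetical input here, not a figure taken from the study.
    """
    if not 0.0 < high_share <= 1.0:
        raise ValueError("high_share must be in (0, 1]")
    precision = high_share  # every prediction is 'high'; a share high_share of them is correct
    recall = 1.0            # no true 'high' respondent is missed
    f1 = 2 * precision * recall / (precision + recall)
    return {"accuracy": high_share, "f1_high": f1}


# Hypothetical class shares, chosen only to show how the baseline moves.
for share in (0.6, 0.8, 0.9):
    m = majority_baseline_metrics(share)
    print(f"high share={share:.2f}  accuracy={m['accuracy']:.2f}  F1(high)={m['f1_high']:.3f}")
```

The more skewed the binarized outcome, the higher this baseline becomes, so a model's scores are most informative when read against the baseline for the same class distribution and alongside minority-class metrics.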

5.4. Interpretation Across Conceptual Feature Blocks

Beyond individual features, interpreting the SHAP results through the conceptual feature groupings introduced in Table 1 provides higher-level insight into the mechanisms through which ChatGPT supports learning.

For both Study Efficiency and Exam Readiness, the most influential predictors predominantly originated from the Academic Outcomes block, particularly items related to motivation, knowledge improvement, personalization, and facilitation of studies (Q26a–Q26g, excluding the outcome item Q26e, which was not used as a predictor). This indicates that students’ perceived learning gains are primarily associated with how ChatGPT is perceived to support academic processes, rather than with usage frequency or general attitudes alone. Features from the Usage & Experience block played a secondary but meaningful role, especially for Exam Readiness, where study assistance in the form of exam practice (Q18i) emerged as an important contributor. This suggests that practical engagement with ChatGPT for learning tasks enhances perceived preparedness for assessments when aligned with academic goals.

In contrast, features from the Perceived Capabilities and Satisfaction & Attitudes blocks showed comparatively lower influence in the SHAP rankings. While these dimensions may shape students’ overall perceptions of ChatGPT, they appear to function more as enabling or contextual factors rather than direct drivers of efficiency or readiness gains. Overall, this block-level interpretation reinforces the notion that learning-centered engagement, rather than mere exposure or positive attitudes toward AI, is the primary pathway through which students perceive ChatGPT to support their academic outcomes.
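A block-level reading of this kind can be sketched by averaging absolute SHAP values per feature and summing them within each conceptual block. The example below is a minimal illustration, not the study's procedure: the prefix-to-block mapping in `BLOCK_BY_PREFIX` is an assumption inferred from the Q-codes (the actual assignment is defined in Table 1), the helper names are hypothetical, and the SHAP values are placeholders rather than results.

```python
from collections import defaultdict

# Assumed mapping from Q-code prefix to conceptual block (see Table 1 for the actual one).
BLOCK_BY_PREFIX = {
    "Q15": "Usage & Experience",
    "Q16": "Usage & Experience",
    "Q18": "Usage & Experience",
    "Q19": "Perceived Capabilities",
    "Q24": "Satisfaction & Attitudes",
    "Q26": "Academic Outcomes",
}


def block_of(feature: str) -> str:
    """Return the conceptual block for a Q-code such as 'Q26f'."""
    return BLOCK_BY_PREFIX[feature[:3]]


def block_importance(shap_rows, feature_names):
    """Sum the mean absolute SHAP value of each feature within its block.

    shap_rows: one list of SHAP values per respondent, aligned with feature_names.
    """
    n = len(shap_rows)
    totals = defaultdict(float)
    for j, name in enumerate(feature_names):
        mean_abs = sum(abs(row[j]) for row in shap_rows) / n
        totals[block_of(name)] += mean_abs
    return dict(totals)


# Placeholder SHAP values for three features and two respondents (not study results).
features = ["Q26f", "Q18i", "Q19d"]
rows = [[0.4, -0.2, 0.1], [-0.6, 0.2, -0.1]]
for block, value in sorted(block_importance(rows, features).items(), key=lambda kv: -kv[1]):
    print(f"{block}: {value:.2f}")
```

Because the blocks contain different numbers of items, a summed score favors larger blocks; dividing by block size gives a per-item average, and reporting both is a reasonable design choice.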

5.5. Implications for STEM Education and AI-Supported Learning

The findings have important implications for students, educators, and AI developers. For students, the results suggest that ChatGPT is most effective when used as a supportive tool for motivation, clarification, and planning, rather than as a shortcut for completing tasks. For educators, understanding the specific dimensions through which ChatGPT is perceived to contribute to learning can inform the development of evidence-based guidelines for responsible AI use in STEM education.

From a system design perspective, the results suggest that AI tools should prioritize features that support personalization, conceptual scaffolding, and learner confidence, rather than focusing solely on content generation. This aligns with calls in the literature to design educational AI systems that support and enhance, rather than replace, human learning processes [4].

It is also important to note that students’ perceptions of ChatGPT use may vary across cultural and contextual settings. Differences in STEM educational practices, familiarity with digital technologies, language proficiency, and access to AI tools could influence how students experience and evaluate ChatGPT’s impact on study efficiency and exam readiness. The global survey used in this study, which includes students from 109 countries and territories and diverse institutional contexts, allows the analysis to capture varied experiences and identify patterns that are generally consistent across educational settings.

5.6. Limitations and Directions for Future Research

Despite its contributions, this study has several limitations. First, the learning-related outcomes are based on students’ self-reported perceptions of study efficiency and exam readiness. While such measures provide valuable insight into learners’ experiences with ChatGPT, they may not correspond to objectively measured academic performance or actual learning gains. The improvements identified in this analysis should therefore be interpreted as subjective evaluations of perceived learning support rather than definitive indicators of enhanced academic achievement. Second, binarizing the five-point Likert-scale outcome variables into two classes discards ordinal information, because distinctions between intermediate response categories (e.g., Neutral, Agree, and Strongly Agree) were not explicitly modeled. While this transformation enabled a clear classification framework aligned with the study’s objective of identifying perceived learning gains, it limits the ability to capture variations in the intensity of these outcomes. Third, the sample was limited to STEM students, which may reduce the generalizability of the findings to other disciplines with different learning practices or patterns of AI use. Finally, the proposed methodology does not support causal inference, nor does it allow examination of how students’ engagement with ChatGPT evolves and affects learning over time.
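The loss of ordinal information from binarization can be illustrated with a short sketch. The cut-point is left as a parameter because the threshold used in the study is not restated in this section; the function name `binarize_likert` and the example responses are hypothetical.

```python
from collections import Counter


def binarize_likert(responses, threshold):
    """Map 1-5 Likert responses to 1 (high gain) if >= threshold, else 0.

    threshold is an assumed cut-point, not the one used in the study.
    """
    for r in responses:
        if r not in (1, 2, 3, 4, 5):
            raise ValueError(f"not a five-point Likert response: {r!r}")
    return [1 if r >= threshold else 0 for r in responses]


# Made-up responses, used only to show how the class balance depends on the cut-point.
responses = [1, 2, 3, 3, 4, 4, 4, 5, 5, 5]
for threshold in (3, 4):
    labels = binarize_likert(responses, threshold)
    print(f"threshold={threshold}: class counts={dict(Counter(labels))}")
```

Whatever cut-point is chosen, all responses on the same side of it receive the same label, so the model cannot distinguish, for example, Agree from Strongly Agree. The choice of cut-point also changes the class balance that any resampling step then has to correct.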

Future research could explore ordinal or regression-based modeling approaches to better capture variations in the intensity of perceived learning outcomes. Longitudinal designs may also be considered to assess how sustained ChatGPT use influences learning trajectories over time. Incorporating objective performance measures, such as exam scores or assignment grades, would further strengthen the evidence base. Additionally, qualitative analyses could complement SHAP-based explanations by capturing students’ lived experiences with AI-assisted learning.

6. Conclusions

This study leveraged explainable ML with XGBoost and SHAP to investigate factors associated with STEM students’ perceived gains in study efficiency and exam readiness when using ChatGPT. By combining predictive modeling with interpretable feature importance analyses, the study identified both shared and outcome-specific mechanisms underlying these perceived benefits. Motivation, facilitation of study activities, personalized learning, and access to knowledge emerged as the most influential drivers, indicating that ChatGPT’s educational value primarily arises from learning-centered engagement rather than usage frequency or general positive attitudes.

Theoretically, this work contributes by clarifying how AI-supported learning is perceived to influence different dimensions of academic engagement. Feature-level SHAP analyses revealed which aspects of ChatGPT use students perceive as most supportive, while block-level interpretation contextualized these perceptions within broader constructs such as academic outcomes, perceived capabilities, and usage patterns. This demonstrates that generative AI tools may support learning through multiple, complementary pathways, depending on the learning outcome considered.

Practically, the findings suggest that ChatGPT is most beneficial when used as an active learning tool that fosters motivation, provides personalized guidance, and facilitates access to relevant knowledge. Educators and instructional designers can use these insights to develop guidelines and interventions that enhance AI-assisted learning while mitigating risks of overreliance. System designers may optimize AI features to support conceptual understanding, time management, and study planning, particularly for exam readiness.

Overall, this study demonstrates the value of combining explainable AI methods with survey-based research to interpret educational technology impacts. By highlighting the factors that students perceive as supporting their learning and exam preparation, the work provides actionable insights for students, educators, and AI developers, reinforcing that strategic, learner-centered engagement with ChatGPT can enhance perceived study efficiency and exam readiness.

Funding

This research received no external funding.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

The dataset used in this study can be obtained from https://data.mendeley.com/datasets/ymg9nsn6kn/2 (accessed on 12 December 2025).

Conflicts of Interest

The author declares no conflicts of interest.

Appendix A

Table A1 provides the detailed list of the two learning-related outcome variables along with their corresponding survey questions and Likert scale ranges.

Table A1. Learning-related outcome variables.

| Q-Code | Survey Question | Likert Scale Range |
|---|---|---|
| Q26e | ChatGPT can increase my study efficiency | Strongly disagree (1)–Strongly agree (5) |
| Q27a | ChatGPT can improve my readiness for exams | Strongly disagree (1)–Strongly agree (5) |

Table A2 lists all 36 input variables used as predictors, along with their corresponding survey questions and Likert scale ranges.

Table A2. List of input features used.

| Q-Code | Survey Question | Likert Scale Range |
|---|---|---|
| Q15 | To what extent do you use ChatGPT in general? | Rarely (1)–Extensively (5) |
| Q16 | What is your experience with ChatGPT? | Very bad (1)–Very good (5) |
| Q18 | How often do you use ChatGPT for the following tasks? | |
| Q18a | Academic writing (writing assignments, research papers…) | Never (1)–Always (5) |
| Q18b | Professional writing (writing e-mails) | Never (1)–Always (5) |
| Q18c | Creative writing (generating stories, poems…) | Never (1)–Always (5) |
| Q18d | Proofreading (receiving feedback on writing) | Never (1)–Always (5) |
| Q18e | Brainstorming (generating new ideas) | Never (1)–Always (5) |
| Q18g | Summarizing (generating concise summaries of lengthy texts) | Never (1)–Always (5) |
| Q18h | Calculating help (solving mathematical problems) | Never (1)–Always (5) |
| Q18i | Study assistance (practicing for exams) | Never (1)–Always (5) |
| Q18j | Personal assistance (seeking advice on various personal topics) | Never (1)–Always (5) |
| Q18k | Research assistance (finding information for research papers) | Never (1)–Always (5) |
| Q18l | Coding assistance (getting assistance with programming) | Never (1)–Always (5) |
| Q19 | How much do you agree with the following statements related to the capabilities of ChatGPT? | |
| Q19d | Provide information efficiently. | Strongly disagree (1)–Strongly agree (5) |
| Q19e | Provide reliable information. | Strongly disagree (1)–Strongly agree (5) |
| Q19f | Summarize extensive information. | Strongly disagree (1)–Strongly agree (5) |
| Q19g | Simplify complex information. | Strongly disagree (1)–Strongly agree (5) |
| Q19h | Facilitate traditional learning (in a classroom). | Strongly disagree (1)–Strongly agree (5) |
| Q19i | Facilitate online learning (using digital technologies). | Strongly disagree (1)–Strongly agree (5) |
| Q19j | Facilitate blended (hybrid) learning (a mix of traditional and online learning). | Strongly disagree (1)–Strongly agree (5) |
| Q24 | How much do you agree with the following statements related to your satisfaction with ChatGPT? | Strongly disagree (1)–Strongly agree (5) |
| Q24a | I find ChatGPT more useful than Google or other web search engines. | Strongly disagree (1)–Strongly agree (5) |
| Q24b | It is easier for me to interact with ChatGPT than with my professors. | Strongly disagree (1)–Strongly agree (5) |
| Q24c | It is easier for me to interact with ChatGPT than with my colleagues. | Strongly disagree (1)–Strongly agree (5) |
| Q24d | The information I get from ChatGPT is clearer than the one provided by my professors. | Strongly disagree (1)–Strongly agree (5) |
| Q24e | I am satisfied with the level of assistance provided by ChatGPT. | Strongly disagree (1)–Strongly agree (5) |
| Q24f | I am satisfied with the quality of information provided by ChatGPT. | Strongly disagree (1)–Strongly agree (5) |
| Q24g | I am satisfied with the accuracy of the information provided by ChatGPT. | Strongly disagree (1)–Strongly agree (5) |
| Q26 | How much do you agree with the following statements related to learning and academic enhancement addressed with ChatGPT? | Strongly disagree (1)–Strongly agree (5) |
| Q26a | Enhance my access to the sources of knowledge. | Strongly disagree (1)–Strongly agree (5) |
| Q26b | Improve my general knowledge. | Strongly disagree (1)–Strongly agree (5) |
| Q26c | Improve my specific knowledge. | Strongly disagree (1)–Strongly agree (5) |
| Q26d | Provide me with personalized education. | Strongly disagree (1)–Strongly agree (5) |
| Q26f | Increase my motivation to study. | Strongly disagree (1)–Strongly agree (5) |
| Q26g | Facilitate completing my studies. | Strongly disagree (1)–Strongly agree (5) |
| Q26h | Improve my engagement in class discussions. | Strongly disagree (1)–Strongly agree (5) |
| Q26i | Enhance my ability to meet assignment deadlines. | Strongly disagree (1)–Strongly agree (5) |
| Q26j | Improve the quality of my assignments. | Strongly disagree (1)–Strongly agree (5) |

References

1. Qian, Y. Pedagogical Applications of Generative AI in Higher Education: A Systematic Review of the Field. Tech. Trends 2025, 69, 1105–1120.
2. Naznin, K.; Al Mahmud, A.; Nguyen, M.T.; Chua, C. ChatGPT Integration in Higher Education for Personalized Learning, Academic Writing, and Coding Tasks: A Systematic Review. Computers 2025, 14, 53.
3. Deep, P.D.; Chen, Y. The Role of AI in Academic Writing: Impacts on Writing Skills, Critical Thinking, and Integrity in Higher Education. Societies 2025, 15, 247.
4. Kumar, V. Using ChatGPT for Assessment Development in Design and Manufacturing Engineering Education: Opportunities and Challenges. Int. J. Mech. Eng. Educ. 2025.
5. Sánchez-Rodriguez, E.; Merino-Soto, C.; Chen, M.H.; Zavala, G.; Chans, G.M. AI-Supported Learning: Integrating ChatGPT to Enhance Cognitive Skills in STEM Education. In Proceedings of the 2025 IEEE Global Engineering Education Conference (EDUCON), London, UK; IEEE: Piscataway, NJ, USA, 2025; pp. 1–5.
6. Cotton, D.R.; Cotton, P.A.; Shipway, J.R. Chatting and Cheating: Ensuring Academic Integrity in the Era of ChatGPT. Innov. Educ. Teach. Int. 2024, 61, 228–239.
7. Suriano, R.; Plebe, A.; Acciai, A.; Fabio, R.A. Student Interaction with ChatGPT Can Promote Complex Critical Thinking Skills. Learn. Instruc. 2025, 95, 102011.
8. Wu, X.; Duan, R.; Ni, J. Unveiling Security, Privacy, and Ethical Concerns of ChatGPT. J. Inf. Intell. 2024, 2, 102–115.
9. Albadarin, Y.; Saqr, M.; Pope, N.; Tukiainen, M. A Systematic Literature Review of Empirical Research on ChatGPT in Education. Discov. Educ. 2024, 3, 60.
10. Li, L.; Ma, Z.; Fan, L.; Lee, S.; Yu, H.; Hemphill, L. ChatGPT in Education: A Discourse Analysis of Worries and Concerns on Social Media. Educ. Inf. Technol. 2024, 29, 10729–10762.
11. Farhi, F.; Jeljeli, R.; Aburezeq, I.; Dweikat, F.F.; Al-Shami, S.A.; Slamene, R. Analyzing Students’ Views, Concerns, and Perceived Ethics about ChatGPT Usage. Comput. Educ. Artif. Intell. 2023, 5, 100180.
12. Adžić, S.; Savić Tot, T.; Vuković, V.; Radanov, P.; Avakumović, J. Understanding Student Attitudes toward GenAI Tools: A Comparative Study of Serbia and Austria. Int. J. Cogn. Res. Sci. Eng. Educ. 2024, 12, 583–611.
13. Bzdok, D.; Altman, N.; Krzywinski, M. Statistics versus Machine Learning. Nat. Methods 2018, 15, 233–234.
14. Kumar, V.; Butler, R. Opioid Overdose Death Prediction Using Machine Learning and Risk Factor Analysis Using SHAP Values for U.S. Counties. Int. J. Ment. Health Addict. 2025.
15. Almassaad, A.; Alajlan, H.; Alebaikan, R. Student Perceptions of Generative Artificial Intelligence: Investigating Utilization, Benefits, and Challenges in Higher Education. Systems 2024, 12, 385.
16. Strzelecki, A. Students’ Acceptance of ChatGPT in Higher Education: An Extended Unified Theory of Acceptance and Use of Technology. Innov. High. Educ. 2024, 49, 223–245.
17. Chan, C.K.Y.; Zhou, W. An Expectancy Value Theory (EVT) Based Instrument for Measuring Student Perceptions of Generative AI. Smart Learn. Environ. 2023, 10, 64.
18. Bosch, T.; Jordaan, M.; Mwaura, J.; Nkoala, S.; Schoon, A.; Smit, A.; Uzuegbunam, C.E.; Mare, A. South African University Students’ Use of AI-Powered Tools for Engaged Learning. SSRN 2023.
19. Karkoulian, S.; Sayegh, N.; Sayegh, N. ChatGPT Unveiled: Understanding Perceptions of Academic Integrity in Higher Education-A Qualitative Approach. J. Acad. Ethics 2025, 23, 1171–1188.
20. Chan, C.K.Y.; Hu, W. Students’ Voices on Generative AI: Perceptions, Benefits, and Challenges in Higher Education. Int. J. Educ. Technol. High. Educ. 2023, 20, 43.
21. Butler, R.; Kumar, V.; Rowel, R. Enhancing Student Employability through AI/ML Infused STEM Education: Interdisciplinary Case Studies from an Urban HBCU. Am. J. STEM Educ. 2026, 19, 1–22.
22. Kovari, A. Ethical Use of ChatGPT in Education—Best Practices to Combat AI-Induced Plagiarism. Front. Educ. 2025, 9, 1465703.
23. Abdaljaleel, M.; Barakat, M.; Alsanafi, M.; Salim, N.A.; Abazid, H.; Malaeb, D.; Mohammed, A.H.; Hassan, B.A.R.; Wayyes, A.M.; Farhan, S.S.; et al. A Multinational Study on the Factors Influencing University Students’ Attitudes and Usage of ChatGPT. Sci. Rep. 2024, 14, 1983.
24. Cong-Lem, N.; Soyoof, A.; Tsering, D. A Systematic Review of the Limitations and Associated Opportunities of ChatGPT. Int. J. Hum. Comput. Interact. 2025, 41, 3851–3866.
25. Mienye, I.D.; Swart, T.G. ChatGPT in Education: A Review of Ethical Challenges and Approaches to Enhancing Transparency and Privacy. Procedia Comput. Sci. 2025, 254, 181–190.
26. Radanliev, P. AI Ethics: Integrating Transparency, Fairness, and Privacy in AI Development. Appl. Artif. Intell. 2025, 39, 2463722.
27. Moorhouse, B.L.; Yeo, M.A.; Wan, Y. Generative AI Tools and Assessment: Guidelines of the World’s Top-Ranking Universities. Comput. Educ. Open 2023, 5, 100151.
28. Arthur, F.; Salifu, I.; Abam Nortey, S. Predictors of Higher Education Students’ Behavioural Intention and Usage of ChatGPT: The Moderating Roles of Age, Gender and Experience. Interact. Learn. Environ. 2025, 33, 993–1019.
29. Kacperski, C.; Ulloa, R.; Bonnay, D.; Kulshrestha, J.; Selb, P.; Spitz, A. Characteristics of ChatGPT Users from Germany: Implications for the Digital Divide from Web Tracking Data. PLoS ONE 2025, 20, e0309047.
30. Parra-Díaz, J.A.; Muñoz-Vidal, F.A.; Alves, R.F.; Rodriguez-Garcia, N.M. Learning Approaches of First-Year University Students: A Mixed-Method Study in Chile. Int. J. Learn. Teach. Educ. Res. 2024, 23, 10.
31. Kumar, V. Distance/Online Engineering Education During and After COVID-19: Graduate Teaching Assistant’s Perspective. In Proceedings of the ASME 2021 International Mechanical Engineering Congress and Exposition, Volume 9: Engineering Education, Virtual, Online, 1–5 November 2021; ASME: New York, NY, USA, 2021; Paper V009T09A017.
32. Ravšelj, D.; Keržič, D.; Tomaževič, N.; Umek, L.; Brezovar, N.; Iahad, N.A.; Abdulla, A.A.; Akopyan, A.; Segura, M.W.A.; AlHumaid, J.; et al. Higher Education Students’ Perceptions of ChatGPT: A Global Study of Early Reactions. PLoS ONE 2025, 20, e0315011.
33. Chen, T.; Guestrin, C. XGBoost: A Scalable Tree Boosting System. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, San Francisco, CA, USA, August 2016; Association for Computing Machinery: New York, NY, USA, 2016; pp. 785–794.
34. Lundberg, S.M.; Erion, G.G.; Lee, S.I. Consistent Individualized Feature Attribution for Tree Ensembles. arXiv 2018, arXiv:1802.03888.
35. Chawla, N.V.; Bowyer, K.W.; Hall, L.O.; Kegelmeyer, W.P. SMOTE: Synthetic Minority Over-Sampling Technique. J. Artif. Intell. Res. 2002, 16, 321–357.
36. Lundberg, S.M.; Lee, S.I. A Unified Approach to Interpreting Model Predictions. In Advances in Neural Information Processing Systems; Curran Associates, Inc.: Red Hook, NY, USA, 2017; pp. 4765–4774.
37. SHAP Documentation. Available online: https://shap.readthedocs.io/en/latest/index.html (accessed on 12 December 2025).
38. Kumar, V.; Sznajder, K.K.; Kumara, S. Machine Learning Based Suicide Prediction and Development of Suicide Vulnerability Index for U.S. Counties. npj Ment. Health Res. 2022, 1, 3.
39. Musolf, A.M.; Holzinger, E.R.; Malley, J.D.; Bailey-Wilson, J.E. What Makes a Good Prediction? Feature Importance and Beginning to Open the Black Box of Machine Learning in Genetics. Hum. Genet. 2022, 141, 1515–1528.
40. Kasneci, E.; Sessler, K.; Küchemann, S.; Bannert, M.; Dementieva, D.; Fischer, F.; Gasser, U.; Groh, G.; Günnemann, S.; Hüllermeier, E.; et al. ChatGPT for Good? On Opportunities and Challenges of Large Language Models for Education. Learn. Individ. Differ. 2023, 103, 102274.
41. Dwivedi, Y.K.; Kshetri, N.; Hughes, L.; Slade, E.L.; Jeyaraj, A.; Kar, A.K.; Baabdullah, A.M.; Koohang, A.; Raghavan, V.; Ahuja, M.; et al. “So What If ChatGPT Wrote It?” Multidisciplinary Perspectives on Opportunities, Challenges and Implications of Generative Conversational AI for Research, Practice and Policy. Int. J. Inf. Manag. 2023, 71, 102642.
42. Zimmerman, B.J. Becoming a Self-Regulated Learner: An Overview. Theory Pract. 2002, 41, 64–70.
43. Broadbent, J.; Poon, W.L. Self-Regulated Learning Strategies and Academic Achievement in Online Higher Education Learning Environments: A Systematic Review. Internet High. Educ. 2015, 27, 1–13.
44. Sweller, J.; Ayres, P.; Kalyuga, S. Cognitive Load Theory; Springer: New York, NY, USA, 2011.
45. Rhodes, A.E.; Rozell, T.G. Cognitive Flexibility and Undergraduate Physiology Students: Increasing Advanced Knowledge Acquisition within an Ill-Structured Domain. Adv. Physiol. Educ. 2017, 41, 385–392.
46. Mokoagow, E.; Lantu, M.; Siregar, I.K.; Putri, S.R. Enhancing Exam Readiness: Addressing Concentration, Self-Confidence, and Emotional Preparedness in Student Learning. Super. Educ. J. 2024, 2, 8–15.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.