Validation of a Newly Developed Assessment Tool for Point-of-Care Ultrasound of the Thorax in Healthy Volunteers (VALPOCUS)

Abstract

1. Introduction

2. Methods

2.1. Study Design

2.1.1. Delphi Process and Creation of the Assessment Tool

2.1.2. POCUS Examination and Initial Grading

2.1.3. Examination Setup and Equipment

2.1.4. Statistical Analysis

2.1.5. Reliability Testing

2.1.6. Validation Process

3. Results

3.1. Tool Development and Delphi Process

3.2. Examination Results

3.3. Reliability

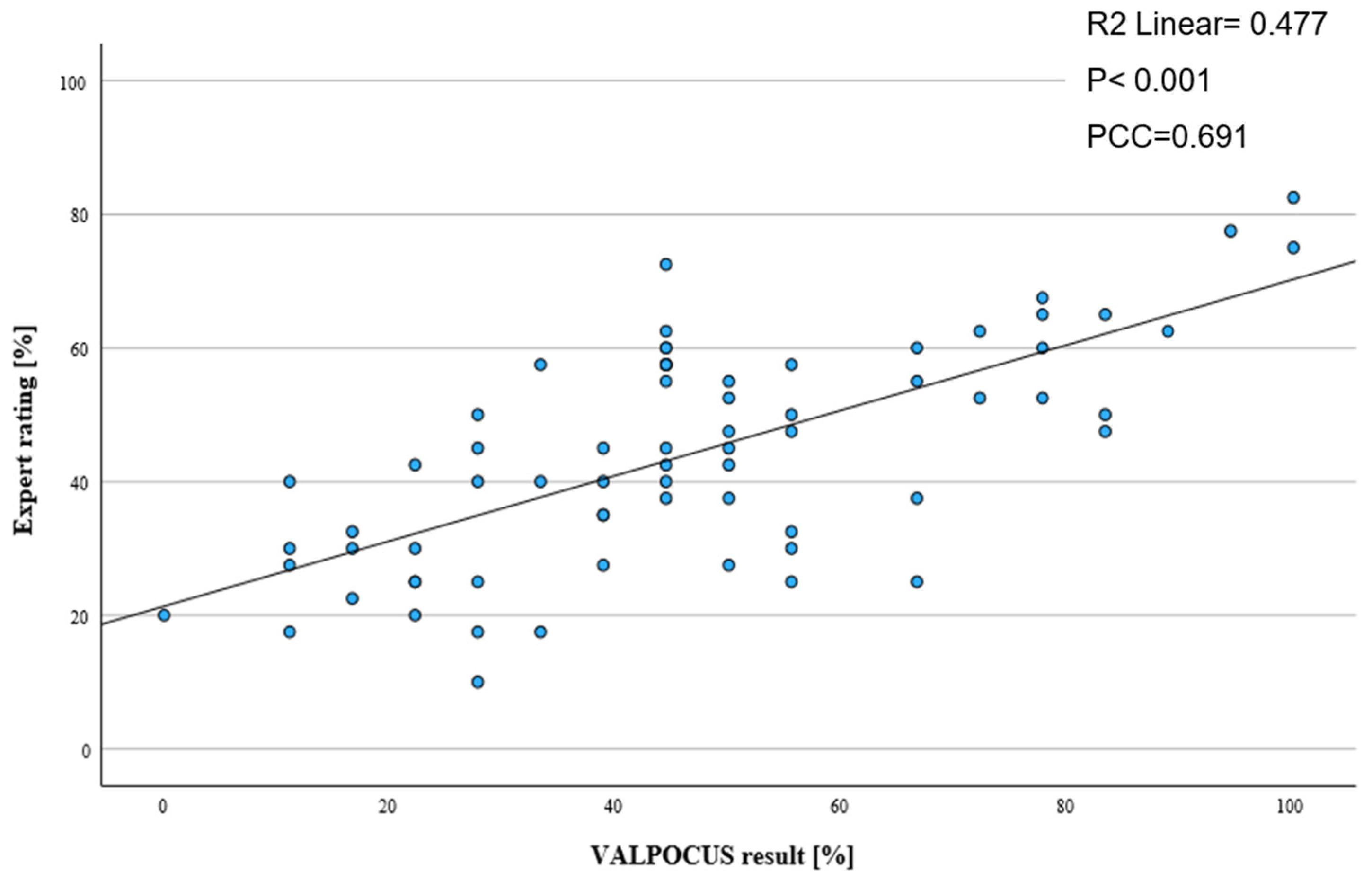

3.4. Validity

4. Discussion

Limitations

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Responses | Round 1 15/84 | Round 2 15/84 | Round 3 10/15 | Round 4 10/10 |
|---|---|---|---|---|
| V | |||
| 14/15 | 10/10 | ||
| 14/15 | 10/10 | ||
| 14/15 | 10/10 | ||
| 10/15 | 4/10 | No:6/10 | |
| 15/15 | |||
| V | |||
| V | |||
| V | |||
| 15/15 | |||
| 15/15 | |||
| 15/15 | |||
| 12/15 | 8/10 | 10/10 | |
| 10/15 | 9/10 | 10/10 | |
| 13/15 | 10/10 | ||
| 13/15 | 9/10 | 10/10 | |
| 15/15 |

Assessment Tool

| No. | Item | Points |
|---|---|---|
| 1 | Correct patient position | |
| 2 | Correct ultrasound mode | |
| 3 | Suitable ultrasound probe | |
| 4 | Suitable positioning of the ultrasound probe | |
| 5 | Identification of organs and tissue | |
| 6 | Identification of artefacts | |
| 7 | Identification of wrong images | |
| 8 | Centering of the target organ/tissue | |
| 9 | Observer/Assessor deems the ultrasound images adequate for evaluation | |
| 10 | Performer optimizes the ultrasound image | |
| 11 | Depth is adjusted | |
| 12 | Gain is adjusted | |
| | Total (1 point per item) | ____/12 |
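The scoring rule in the table above (one point per fulfilled item, maximum 12) can be sketched in a few lines of Python. This is only an illustration: the item wording is copied from the table, while the names `ITEMS` and `total_score` and the use of booleans for "fulfilled / not fulfilled" are assumptions, not part of the published tool.

```python
# Item wording taken from the assessment tool table; everything else is hypothetical.
ITEMS = [
    "Correct patient position",
    "Correct ultrasound mode",
    "Suitable ultrasound probe",
    "Suitable positioning of the ultrasound probe",
    "Identification of organs and tissue",
    "Identification of artefacts",
    "Identification of wrong images",
    "Centering of the target organ/tissue",
    "Observer/Assessor deems the ultrasound images adequate for evaluation",
    "Performer optimizes the ultrasound image",
    "Depth is adjusted",
    "Gain is adjusted",
]


def total_score(ratings):
    """Return the number of fulfilled items (1 point each, maximum len(ITEMS))."""
    if len(ratings) != len(ITEMS):
        raise ValueError(f"expected {len(ITEMS)} ratings, got {len(ratings)}")
    return sum(1 for fulfilled in ratings if fulfilled)


# Hypothetical examination: every item fulfilled except depth and gain adjustment.
example = [True] * 10 + [False, False]
print(f"{total_score(example)}/{len(ITEMS)}")
```

Keeping the items in a single list makes the maximum score follow from the tool itself rather than from a hard-coded 12.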

| Item | Cronbach’s Alpha | Corrected Item-Scale Correlation |
|---|---|---|
| Total | 0.838 | |
| 1 | 0.840 | 0.0 |
| 2 | 0.841 | 0.021 |
| 3 | 0.840 | 0.0 |
| 4 | 0.838 | 0.318 |
| 5 | 0.814 | 0.534 |
| 6 | 0.820 | 0.481 |
| 7 | 0.813 | 0.544 |
| 8 | 0.805 | 0.603 |
| 9 | 0.815 | 0.538 |
| 10 | 0.839 | 0.320 |
| 11 | 0.826 | 0.428 |
| 12 | 0.823 | 0.402 |
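For readers who want to see how statistics of this kind are obtained, the following Python sketch computes Cronbach's alpha and the corrected item-scale correlation from a matrix of item scores. It is not the authors' analysis: the data are invented, the function names are hypothetical, and two points are assumptions. First, the per-item alpha values in the table are read here as "alpha if the item is deleted". Second, an item with no variance (for example, one fulfilled in every examination) is given a correlation of 0.0, which is one possible explanation for the 0.0 entries above.

```python
from math import sqrt
from statistics import mean, variance


def cronbach_alpha(rows):
    """Cronbach's alpha; rows is a list of examinations, each a list of item scores."""
    k = len(rows[0])
    item_vars = [variance([r[j] for r in rows]) for j in range(k)]
    total_var = variance([sum(r) for r in rows])
    if k < 2 or total_var == 0:
        return float("nan")  # undefined without spread in the total score
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


def pearson(x, y):
    """Pearson correlation; returns 0.0 if either variable is constant (assumed convention)."""
    mx, my = mean(x), mean(y)
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return sxy / sqrt(sxx * syy)


def corrected_item_total(rows, j):
    """Correlation of item j with the sum of all other items."""
    item = [r[j] for r in rows]
    rest = [sum(r) - r[j] for r in rows]
    return pearson(item, rest)


def alpha_if_deleted(rows, j):
    """Cronbach's alpha of the scale after removing item j."""
    return cronbach_alpha([r[:j] + r[j + 1:] for r in rows])


# Invented 0/1 scores: 6 examinations x 4 items (item 0 is always fulfilled).
scores = [
    [1, 1, 1, 1],
    [1, 1, 0, 1],
    [1, 0, 0, 1],
    [1, 1, 1, 0],
    [1, 0, 0, 0],
    [1, 1, 1, 1],
]
print("alpha:", round(cronbach_alpha(scores), 3))
for j in range(len(scores[0])):
    print(j + 1, round(alpha_if_deleted(scores, j), 3),
          round(corrected_item_total(scores, j), 3))
```

An item that every participant fulfils contributes no variance, so removing it leaves alpha unchanged or slightly higher. That pattern matches items 1 to 3 in the table, whose alpha values sit at or just above the total of 0.838.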


© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Hoffmann, P.; Hüppe, T.; Poncelet, N.; Weise, J.J.; Berwanger, U.; Conrad, D. Validation of a Newly Developed Assessment Tool for Point-of-Care Ultrasound of the Thorax in Healthy Volunteers (VALPOCUS). Tomography 2025, 11, 97. https://doi.org/10.3390/tomography11090097
