1. Introduction

Diabetic macular edema (DME) is one of the leading causes of vision impairment among working-age individuals with diabetes, affecting approximately 6–7% of the diabetic population worldwide [1,2]. Intravitreal injection of anti-vascular endothelial growth factor (anti-VEGF) agents has become the first-line therapy for DME due to its proven ability to reduce macular swelling and improve visual acuity. However, treatment response varies markedly among patients: while some experience substantial anatomical and functional recovery, others show suboptimal or even refractory responses, leading to repeated injections, increased costs, and reduced compliance [3]. Predicting individual therapeutic efficacy before initiating therapy, especially for eyes in which anti-VEGF treatment is likely to fail, allows a timely switch to more effective alternative therapies and ultimately secures a better visual prognosis for patients [4]. Pretreatment prediction of individual outcomes is therefore crucial for guiding personalized management and optimizing healthcare resources.

Among known prognostic factors, the presence of an epiretinal membrane (ERM) has been recognized as a potential determinant of reduced anti-VEGF responsiveness, as it can exert tangential traction on the macula, distort macular architecture, increase retinal thickness, and impede drug diffusion [5,6,7,8]. Kakihara et al. found that among DME patients undergoing anti-VEGF therapy, those with ERM received significantly more anti-VEGF injections during the follow-up period than those without ERM [5]. These authors therefore concluded that ERM is a marker of diabetic retinopathy (DR) severity and an independent risk factor for increased demand for anti-VEGF therapy. An ERM indicates a more severe condition and a poorer response to anti-VEGF treatment [4,9,10]. For these patients, aggressive surgical intervention (vitrectomy combined with ERM peeling) is considered a key treatment option [6]. Multiple clinical studies have shown that DME patients with ERM often exhibit limited improvements in both best-corrected visual acuity and central macular thickness (CMT) after anti-VEGF therapy [11,12]. Mechanical traction problems at the vitreoretinal interface (such as vitreomacular traction and ERM) are key reasons for the poor response to anti-VEGF therapy in some DME patients, highlighting the importance of identifying these structural abnormalities before treatment to develop personalized treatment plans [12]. Conversely, ERM peeling, particularly when guided by intraoperative optical coherence tomography (iOCT) or performed with fovea-sparing techniques, has been demonstrated to enhance retinal anatomical restoration and visual recovery in diabetic patients [13,14]. These findings underscore the clinical importance of ERM as a key prognostic biomarker worthy of inclusion in efficacy prediction frameworks.

Notably, efforts to predict anti-VEGF outcomes based on ERM-related parameters predated the application of artificial intelligence (AI). Earlier approaches employed optical coherence tomography (OCT) for the qualitative grading or quantitative measurement of ERM, combined with clinical variables such as age, diabetes duration, baseline visual acuity, and CMT, to construct statistical prediction models [11,12]. The health of the microstructures (inner retinal layer and photoreceptors) revealed by OCT determines the potential for visual recovery, which helps guide clinical decisions and optimize surgical timing. The retinal microstructures observed through OCT, particularly the condition of the inner retinal layer and the integrity of the ellipsoid zone (EZ), have significant predictive value for postoperative visual acuity [15]. However, manual feature-engineered methods suffer from inherent subjectivity and limited dimensionality, making it difficult to capture fine-grained microstructural abnormalities, such as intraretinal cysts (IRCs) and subretinal fluid (SRF), and their complex spatial interactions, which restricts predictive performance.

OCT, as the gold standard for DME assessment, provides high-resolution visualization of retinal microstructures, including ERM and associated pathological changes [16,17,18]. Traditional prediction methods, including regression tree models, have limited ability to capture the complex microstructural interactions in OCT and often rely on crude features such as baseline best-corrected visual acuity (BCVA) or CMT, resulting in poor predictive performance [3,19,20]. These findings collectively emphasize the need for a computational framework capable of modeling subtle OCT structural changes, spatial lesion patterns, and multimodal interactions to better predict treatment outcomes for DME with ERM. With the rapid evolution of deep learning, AI-based OCT analysis has achieved substantial advances in disease classification, segmentation, and prognosis prediction [21,22]. Convolutional neural networks (CNNs) excel at local feature extraction but have limited receptive fields, whereas vision transformers (ViTs) leverage self-attention mechanisms to capture global dependencies at the expense of quadratic computational cost [23]. To balance accuracy and efficiency, new architectures such as the Receptance Weighted Key Value (RWKV) model have emerged, integrating the parallel learning capacity of transformers with the inference efficiency of recurrent neural networks (RNNs), achieving linear computational complexity O(N) [24,25,26,27]. Its visual adaptation, Vision-RWKV (VRWKV), has demonstrated superior performance in global context modeling and efficiency in medical imaging tasks [28].

In clinical practice, the comprehensive assessment of DME not only relies on OCT, but also combines it with fundus photography and other examinations to improve the classification accuracy of ophthalmic diseases [29,30]. He X proposed a multimodal retinal image classification method based on a modality-specific attention network [29]. This technology has significant application potential for assisting in the diagnosis of various retinal diseases, such as DR and age-related macular degeneration (AMD). Yoo TK also explored the combination of OCT and fundus images, suggesting that a combined approach can improve the accuracy of deep learning-based diagnosis of AMD [30]. Zuo Q proposed a multi-resolution visual Mamba network that captures different levels of retinal features using a single modality based on OCT [31]. While capturing global information, it may overlook local details and complex spatial relationships. Beyond OCT, comprehensive evaluation of DME in clinical practice often incorporates ultra-widefield (UWF) imaging, which provides valuable information on peripheral retinal lesions, ischemic areas, prior laser scars, and other features that are typically invisible in OCT’s limited field of view [28,32]. Theoretically, integrating OCT and UWF imaging enables a multimodal representation of both central and peripheral retinal pathology, offering a more complete understanding of disease severity and potential treatment response. However, most existing predictive models have relied on a single imaging modality, and multimodal AI frameworks combining OCT and UWF data remain largely unexplored.

Building upon these observations, the present study proposes a novel VRWKV-based architecture that integrates ERM spatial features for simultaneous anti-VEGF response prediction and OCT biomarker segmentation. The model incorporates a Causal Attention Learning (CAL) module to identify causally relevant regions, a curriculum learning strategy to emulate the progressive reasoning process of clinicians, and a global completion (GC) loss function to enhance small-lesion continuity (termed DME-RWKV). Furthermore, a multimodal OCT + UWF input pathway is constructed to simulate a comprehensive clinical assessment, aiming to improve prediction accuracy and interpretability, and ultimately supporting more individualized DME treatment planning.

2. Materials and Methods

2.1. Study Population

This retrospective study included 402 eyes from 371 patients diagnosed with DME at the Ninth People’s Hospital between October 2023 and February 2025. All patients were divided into a responsive group and a non-responsive group based on changes in CMT on OCT before and after treatment. The patient group responding to anti-VEGF treatment was defined as those whose CMT decreased by at least 10% after treatment. The responsive group consisted of 147 patients, and the non-responsive group consisted of 224 patients.
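The grouping rule above can be written as a small helper. This is only an illustrative sketch: the function names and the micrometre units are assumptions, and the 10% threshold is the one stated in the text.

```python
def cmt_reduction(baseline_um: float, followup_um: float) -> float:
    """Relative CMT decrease from baseline (positive means the macula got thinner)."""
    if baseline_um <= 0:
        raise ValueError("baseline CMT must be positive")
    return (baseline_um - followup_um) / baseline_um


def is_responder(baseline_um: float, followup_um: float, threshold: float = 0.10) -> bool:
    """True when CMT decreased by at least `threshold` (10% in this study)."""
    return cmt_reduction(baseline_um, followup_um) >= threshold
```

For example, an eye whose CMT falls from 400 to 350 would be labeled responsive under this rule, whereas a fall from 400 to 380 would not.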

The inclusion criteria were as follows: patients with DME who received anti-VEGF therapy and had follow-up examinations both before treatment and at three months after injection. The exclusion criteria included a history of intraocular surgery or ocular trauma, high myopia (spherical equivalent ≤ −6.0 D), hyperopia (spherical equivalent ≥ +2.0 D), other macular diseases, retinal vein occlusion, cataract, glaucoma, or uveitis, uncontrolled hypertension, and poor-quality OCT images.

Demographic and pretreatment clinical data included patient age, gender, intraocular pressure (IOP), and CMT. ERM was annotated by two ophthalmologists based on OCT images and verified by a senior retinal specialist.

2.2. OCT and UWF Image Collection

All eyes in this study were imaged using the spectral-domain OCT (Spectralis OCT, Heidelberg Engineering, Heidelberg, Germany) at baseline and 3 months post-injection, and the scans were tracked. Scans with a signal strength index greater than 25 and without residual motion artifacts were retained for further analysis. For each patient, a single 9 mm horizontal scan passing through the fovea was selected from each follow-up visit. Optomap (Daytona, Optos, Dunfermline, UK) UWF images were captured with the macula centered.

2.3. DME-RWKV Network

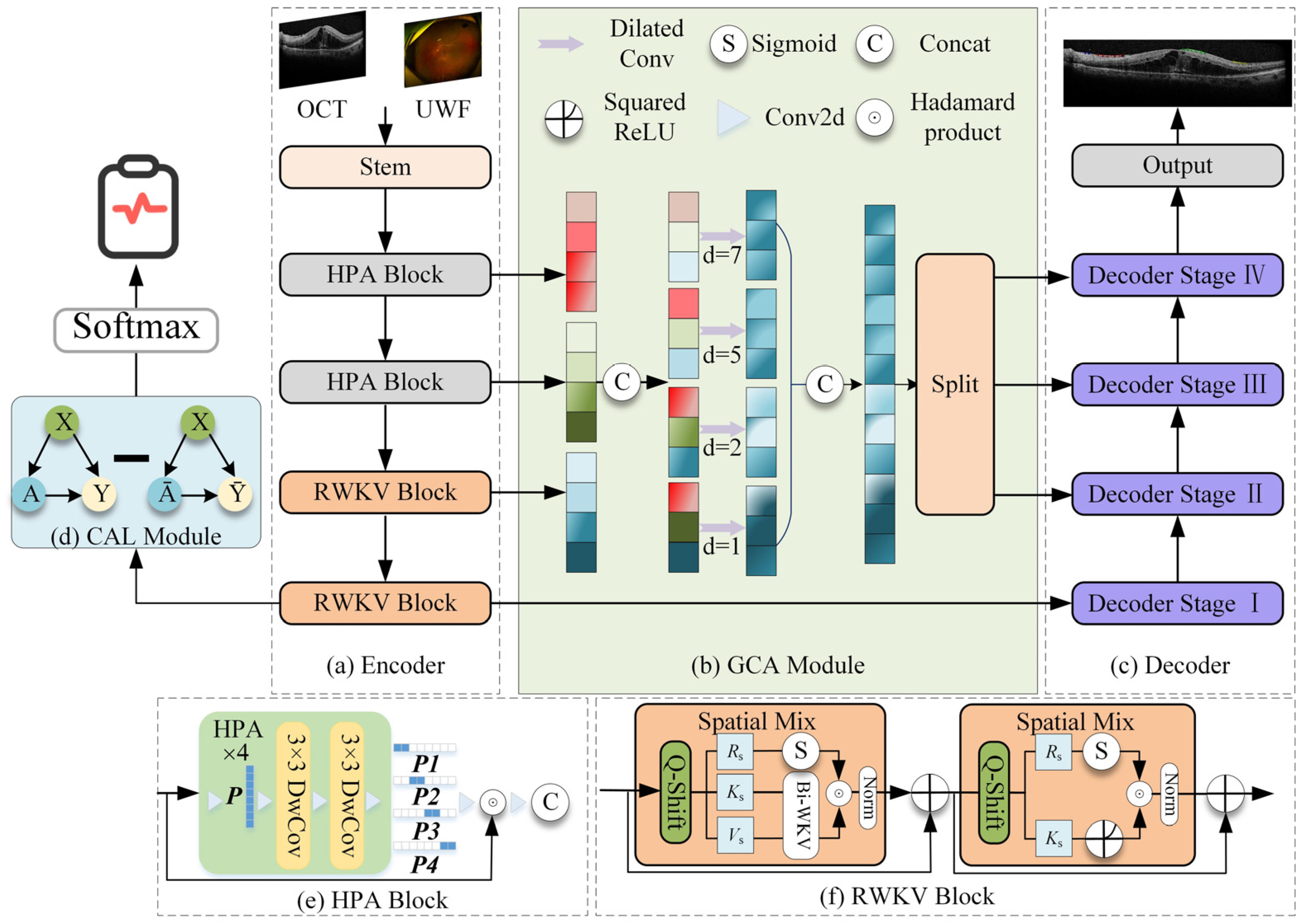

The proposed model adopts a classic encoder–GCA fusion module–decoder architecture, integrating RWKV-based efficient modeling modules and specifically designed attention mechanisms at each stage, aiming to efficiently process high-resolution OCT images while enhancing global understanding and local detail extraction capabilities (Figure 1).

2.3.1. Encoder

The encoder employs a hybrid architecture designed to balance computational efficiency with feature extraction power. The first two layers consist of the Hadamard Product Attention (HPA) module. Based on a lightweight Hadamard convolution structure, this module replaces traditional heavy convolution or attention mechanisms to effectively aggregate local features. By utilizing rapid Hadamard transforms, the HPA module significantly reduces the network’s parameter count and computational burden, making it highly suitable for processing high-resolution OCT images.

Following this local feature aggregation, the HPA module works seamlessly with the subsequent VRWKV encoding blocks. Built upon the VRWKV architecture, these blocks utilize global receptive modules with linear complexity (e.g., Bi-WKV, SQ-Shift) to capture long-range dependencies and broad contextual information. This hybrid design allows the encoder to incorporate powerful global information while ensuring efficient feature extraction, ultimately forming a structure that balances precision with speed.

2.3.2. GCA Fusion Module

The Global Context Attention (GCA) module is designed to integrate information from different encoder layers, balancing global structure and local anomaly information. It includes two mechanisms: global attention map generation and multi-scale fusion. Global attention map generation is used to generate soft attention maps based on the encoded features, guiding the subsequent decoder to focus on prognosis-related areas (such as IRC, SRF, and ERM). In addition, the GCA module performs multi-scale fusion by combining shallow high-resolution features with deeper semantic information, enhancing the model’s ability to recognize small lesions.

2.3.3. Decoder

The decoder is structured into four distinct stages, symmetrically corresponding to the encoder’s hierarchical levels. This four-stage design is adopted to progressively recover spatial resolution from deep semantic features while mitigating the loss of fine details. In each stage, the decoder combines upsampled high-level semantics with corresponding low-level details from the encoder via skip connections. This stepwise reconstruction strategy allows the model to effectively bridge the semantic gap between encoder and decoder features, ensuring precise boundary delineation for small lesions.

The decoder produces two primary outputs: a region-of-interest (ROI) segmentation map, which automatically delineates key structures in OCT images (e.g., ERM, IRC, and SRF), and a therapeutic efficacy prediction vector, which integrates attention-weighted information to predict treatment response. During training, the loss function includes segmentation loss (such as Dice/BCE) and therapeutic prediction loss, incorporating a GC loss to further improve sensitivity to small lesions.
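As a rough illustration of how the training objective described above might be assembled, the sketch below combines a Dice + BCE segmentation term, a cross-entropy prediction term, and a GC term as a weighted sum. The weights `w_seg`, `w_cls`, and `w_gc` and all function names are hypothetical; the text does not state how the terms are weighted.

```python
import math


def dice_loss(pred, target, eps=1e-6):
    """Soft Dice loss on flat lists of probabilities and binary labels."""
    inter = sum(p * t for p, t in zip(pred, target))
    denom = sum(pred) + sum(target)
    return 1.0 - (2.0 * inter + eps) / (denom + eps)


def bce_loss(pred, target, eps=1e-7):
    """Mean binary cross-entropy, with probabilities clamped for stability."""
    total = 0.0
    for p, t in zip(pred, target):
        p = min(max(p, eps), 1.0 - eps)
        total -= t * math.log(p) + (1 - t) * math.log(1.0 - p)
    return total / len(pred)


def cross_entropy(probs, label):
    """Cross-entropy of one softmax output against its class index."""
    return -math.log(max(probs[label], 1e-7))


def total_loss(seg_pred, seg_target, cls_probs, cls_label, gc_term,
               w_seg=1.0, w_cls=1.0, w_gc=1.0):
    """Weighted sum of the three terms; the weights are assumed, not from the paper."""
    seg = dice_loss(seg_pred, seg_target) + bce_loss(seg_pred, seg_target)
    cls = cross_entropy(cls_probs, cls_label)
    return w_seg * seg + w_cls * cls + w_gc * gc_term
```

Here `gc_term` stands for a precomputed GC loss value, so the sketch stays independent of how that term is defined.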

2.4. GCA Feature Fusion Module

The GCA feature fusion module is designed to efficiently and effectively integrate multi-scale features from different stages of the encoder. Traditional segmentation networks often use a “bridge” or bottleneck layer that simply concatenates or sums features of different resolutions, which tends to underutilize contextual information and increases unnecessary computational overhead. The proposed GCA module addresses these limitations by explicitly modeling long-range dependencies and selectively enhancing key informative features while suppressing redundant or noisy responses.

The GCA module is structured in three stages:

- (1) Feature alignment: Lightweight upsampling or downsampling operations align multi-resolution features spatially, ensuring consistent feature map sizes during fusion.

- (2) Global context modeling: By combining global average pooling and learnable attention weights, semantic representations are aggregated, enabling the network to retain important local details while capturing global scene information.

- (3) Global and local fusion: The globally enhanced features are fused with high-resolution local features via element-wise multiplication (Hadamard fusion) and residual addition, preserving fine-grained structural details while obtaining rich contextual information.
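The three stages listed above can be sketched in a few lines of plain Python on feature maps stored as nested lists `[C][H][W]`. This is a simplified illustration rather than the module itself: nearest-neighbour upsampling, a per-channel sigmoid gate with scalar `weights` and `biases`, and the function names are all assumptions made for the example.

```python
import math


def upsample_nearest(fmap, factor):
    """Stage 1: align a low-resolution map [C][H][W] to a higher resolution."""
    out = []
    for channel in fmap:
        rows = []
        for row in channel:
            wide = [v for v in row for _ in range(factor)]
            rows.extend([list(wide) for _ in range(factor)])
        out.append(rows)
    return out


def channel_gates(fmap, weights, biases):
    """Stage 2: global average pooling followed by a learnable sigmoid gate."""
    gates = []
    for channel, w, b in zip(fmap, weights, biases):
        n = sum(len(row) for row in channel)
        mean = sum(sum(row) for row in channel) / n
        gates.append(1.0 / (1.0 + math.exp(-(w * mean + b))))
    return gates


def gca_fuse(shallow, deep, weights, biases, factor):
    """Stage 3: Hadamard fusion of gated global context with local detail, plus residual."""
    aligned = upsample_nearest(deep, factor)
    gates = channel_gates(aligned, weights, biases)
    fused = []
    for s_ch, d_ch, g in zip(shallow, aligned, gates):
        fused.append([[s * (g * d) + s for s, d in zip(s_row, d_row)]
                      for s_row, d_row in zip(s_ch, d_ch)])
    return fused
```

The residual term `+ s` keeps the high-resolution detail intact even when a gate is close to zero, which is the property the third stage relies on.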

Replacing traditional bridge stages with the GCA module offers two major advantages. The first is enhanced cross-scale interaction: multi-level features are adaptively weighted based on their global relevance, rather than being equally merged. The second is improved computational efficiency: redundant feature channels are suppressed, thereby reducing parameters and computational costs (FLOPs) without compromising prediction performance.

In the DME-RWKV network, the GCA module serves as a critical intermediate processing stage, providing balanced feature representation between the encoder and decoder. This design is particularly important in retinal OCT image analysis, where both fine pathological structures and broader retinal contextual information need to be considered.

2.5. CAL Module

We propose the first use of a CAL module before the prediction head in the RWKV-based encoder. The module consists of one linear layer, one ReLU layer, and another linear layer, and it optimizes the quality of the attention map via causal inference, thereby guiding the network to focus on features directly related to the factual structure. Specifically, given input features $X$ and a causal attention map $A$, the intervention operation $do(A = \bar{A})$ generates a causal attention feature map $\bar{F}$, where the $do(\cdot)$ operation uniformly applies randomly generated weight maps $\bar{A}$ of the same size as the attention maps. The network’s output prediction $Y_{\mathrm{effect}}$ is represented as the difference between the predicted values based on the results, $Y(A = A, X = X)$, and the predictions based on the non-result attention maps, $Y(do(A = \bar{A}), X = X)$. The formula is as follows:

$$Y_{\mathrm{effect}} = \mathbb{E}_{\bar{A} \sim \gamma}\left[\, Y(A = A, X = X) - Y(do(A = \bar{A}), X = X) \,\right]$$

where $\gamma$ is the distribution of causal attention, and $\mathcal{C}$ represents the prediction head that produces $Y$. The final prediction $\hat{y}$ is the output after passing $Y_{\mathrm{effect}}$ through a Softmax layer.
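The causal effect described above, a factual prediction minus the average prediction under randomly generated attention, can be illustrated with a toy example. The linear `head`, the uniform random attention, the sample count, and all names are assumptions for illustration; the module in the text uses a linear, ReLU, linear stack.

```python
import math
import random


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def head(features, attention, weights):
    """Toy linear prediction head applied to attention-weighted features."""
    weighted = [f * a for f, a in zip(features, attention)]
    return [sum(w * x for w, x in zip(row, weighted)) for row in weights]


def causal_effect(features, attention, weights, n_samples=8, rng=None):
    """Factual prediction minus the mean prediction under random attention maps."""
    rng = rng or random.Random(0)
    factual = head(features, attention, weights)
    mean_cf = [0.0] * len(factual)
    for _ in range(n_samples):
        # Intervention: replace the learned attention with uniform random weights.
        random_att = [rng.random() for _ in attention]
        cf = head(features, random_att, weights)
        mean_cf = [m + c / n_samples for m, c in zip(mean_cf, cf)]
    return [f - c for f, c in zip(factual, mean_cf)]
```

Passing the result through `softmax` gives class probabilities. An attention map that adds nothing beyond random weighting yields an effect near zero, which is the signal this kind of objective uses to push attention toward informative regions.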

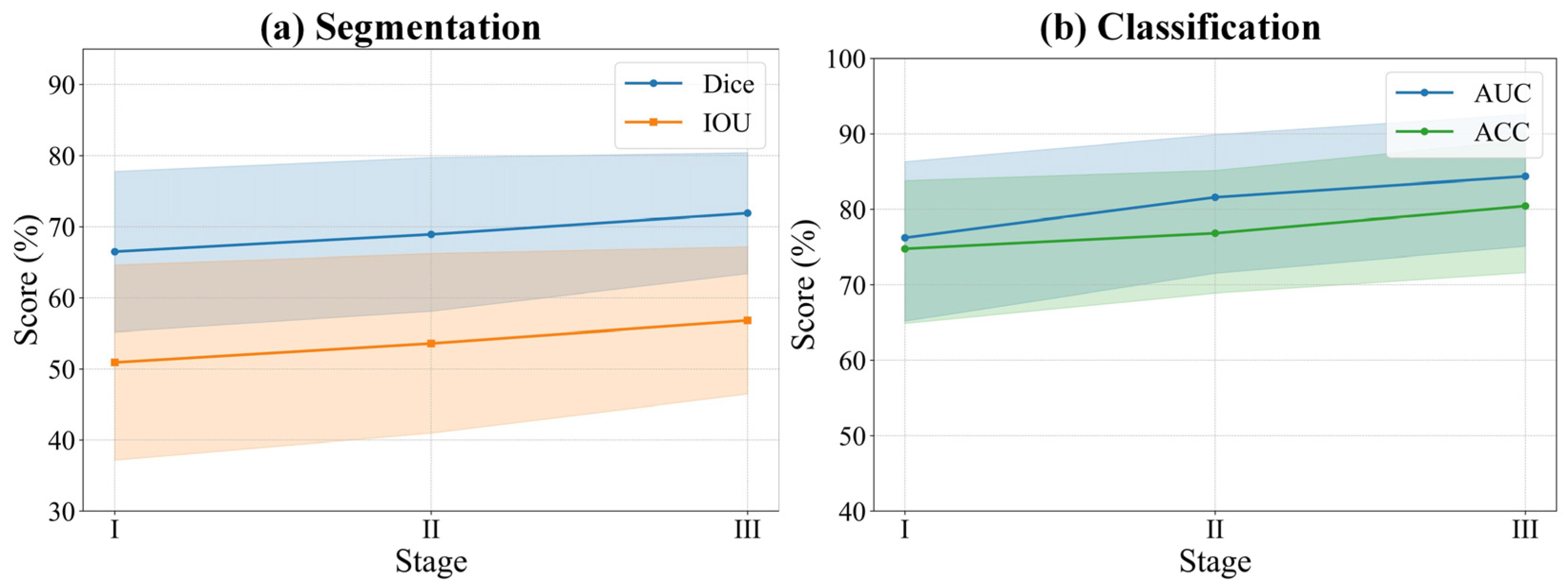

2.6. Curriculum Learning

For curriculum learning, we grade the difficulty of predicting DME therapeutic efficacy from UWF and OCT images according to factors such as signal intensity, area size, and image quality within the ROI. The training curriculum for the DME-RWKV network is organized into three levels of difficulty: easy, medium, and hard. This approach allows the network to first learn features associated with clear and easily predictable treatment responses, followed by progressively harder cases, thus enhancing the overall training process (Figure 2).

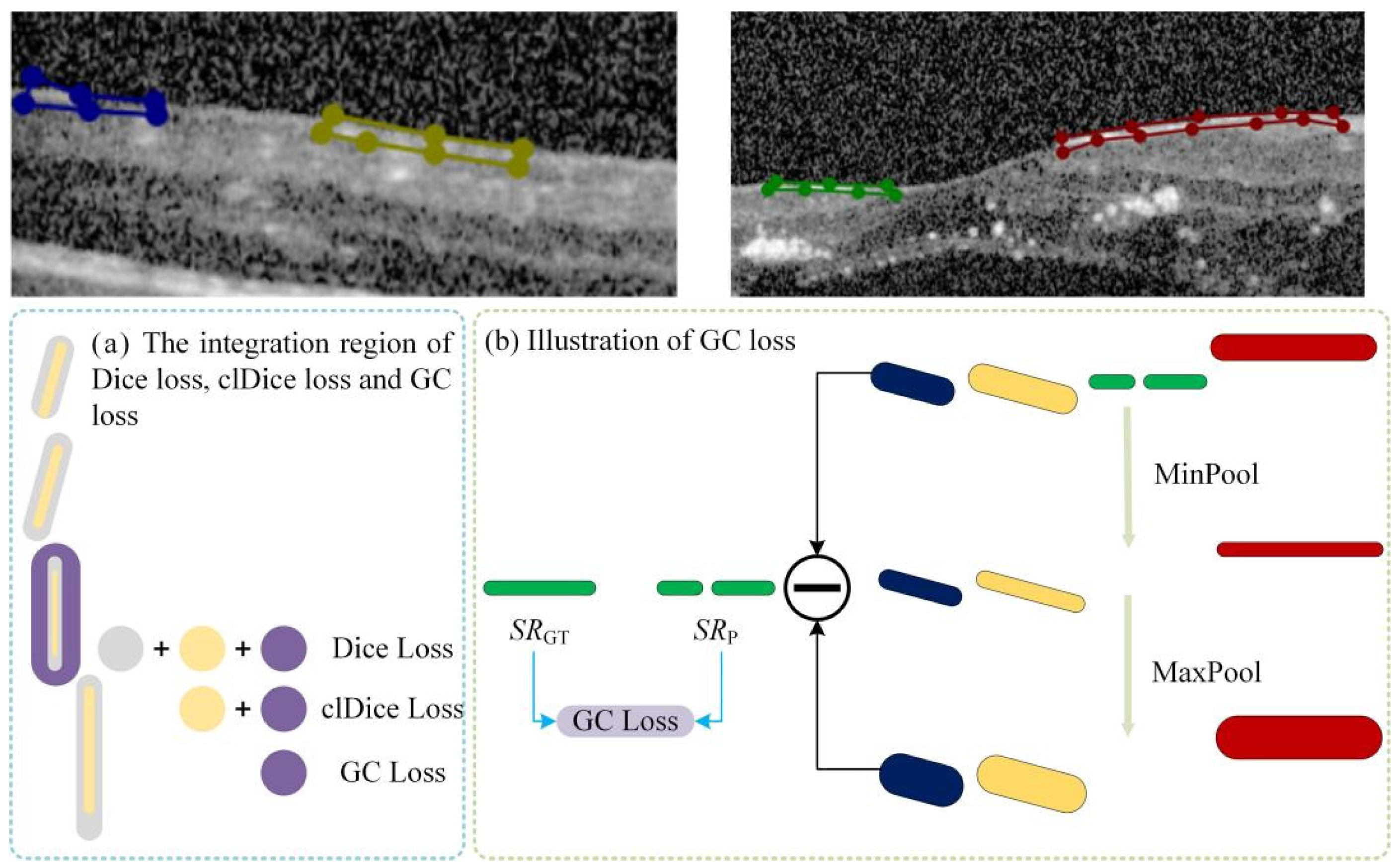
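A minimal sketch of such a three-level curriculum is given below. The difficulty score (an unweighted mean of signal, area, and quality, each assumed to be normalized to [0, 1]), the equal-sized tertile split, and the cumulative staging are all illustrative assumptions; the text does not specify how the three factors are combined or how the levels are scheduled.

```python
def difficulty_score(sample):
    """Hypothetical score: stronger signal, larger ROI and better quality mean easier."""
    return 1.0 - (sample["signal"] + sample["area"] + sample["quality"]) / 3.0


def split_curriculum(samples):
    """Rank samples by difficulty and cut them into easy / medium / hard thirds."""
    ranked = sorted(samples, key=difficulty_score)
    n = len(ranked)
    cut1, cut2 = n // 3, 2 * n // 3
    return {"easy": ranked[:cut1], "medium": ranked[cut1:cut2], "hard": ranked[cut2:]}


def curriculum_stages(levels):
    """Yield cumulative training pools: easy, then easy+medium, then all samples."""
    pool = []
    for name in ("easy", "medium", "hard"):
        pool = pool + levels[name]
        yield name, list(pool)
```

Each stage keeps the earlier, easier samples in the pool, so the network is not trained on hard cases alone once they are introduced.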

2.7. GC Loss

We observed that certain lesion areas in OCT images are very small or elongated, presenting significant challenges for accurate segmentation. Traditional methods like Dice Loss focus primarily on pixel-wise overlap; consequently, for these fine structures, even a few incorrect pixels can cause topological “breaks” or fragmentation. To address this, we propose a GC loss function designed to maintain the topological structural integrity of lesion regions.

The specific calculation workflow of the GC loss function is illustrated in Figure 3. The process consists of three key steps. First (Step 1 in Figure 3), a connected-component analysis is applied to both the predicted map ($M_p$) and the ground truth ($M_{GT}$) to isolate specific lesion regions based on size and connectivity, ensuring the loss function focuses on relevant micro-structures. Second (Step 2 in Figure 3), the method extracts the skeletal structures, termed Slim Regions (SRs), from these masked areas. The core mechanism of this extraction is conceptually illustrated in Figure 4. It utilizes a morphological opening-like operation involving MinPool and MaxPool layers to capture the central topological line. The mathematical formulation is as follows:

where $M$ represents the lesion mask, and $s$ is the kernel size of the pooling operations. In this study, $s$ is set to 5 to efficiently extract the central line representing the lesion’s basic topology. Finally (Step 3 in Figure 3), the GC loss is computed by minimizing the difference between the extracted skeletons of the prediction ($SR_p$) and the ground truth ($SR_{GT}$), thereby enforcing structural continuity in the final segmentation.
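One possible reading of the Slim Region extraction is sketched below, under clearly stated assumptions: the opening is taken as MaxPool applied to MinPool (stride 1, windows clipped at the image border), the slim region is taken as the part of the mask that the opening removes, and the loss is the mean absolute difference between the two slim-region maps. None of these choices is confirmed by the text, and the connected-component filtering of Step 1 is omitted.

```python
def _pool(mask, s, reducer):
    """Stride-1 pooling over an s x s window, clipped at the image border."""
    h, w = len(mask), len(mask[0])
    r = s // 2
    out = []
    for i in range(h):
        row = []
        for j in range(w):
            window = [mask[y][x]
                      for y in range(max(0, i - r), min(h, i + r + 1))
                      for x in range(max(0, j - r), min(w, j + r + 1))]
            row.append(reducer(window))
        out.append(row)
    return out


def min_pool(mask, s):
    return _pool(mask, s, min)


def max_pool(mask, s):
    return _pool(mask, s, max)


def slim_region(mask, s=5):
    """Assumed definition: mask minus its opening, i.e. structures thinner than s."""
    opened = max_pool(min_pool(mask, s), s)
    return [[max(0, m - o) for m, o in zip(m_row, o_row)]
            for m_row, o_row in zip(mask, opened)]


def gc_loss(pred, gt, s=5):
    """Mean absolute difference between predicted and ground-truth slim regions."""
    sr_p, sr_gt = slim_region(pred, s), slim_region(gt, s)
    n = len(pred) * len(pred[0])
    return sum(abs(p - g) for p_row, g_row in zip(sr_p, sr_gt)
               for p, g in zip(p_row, g_row)) / n
```

With this definition a compact blob contributes nothing to the loss, while a single missing pixel in a one-pixel-wide lesion is penalized directly, which matches the stated goal of discouraging breaks in thin structures.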

4. Discussion

This study is centered around the critical role of vitreomacular interface abnormalities, particularly ERM, in predicting anti-VEGF efficacy in DME. We propose a novel VRWKV architecture (DME-RWKV) that integrates ERM spatial information. The model not only jointly predicts anti-VEGF efficacy and performs OCT biomarker segmentation, but also incorporates a CAL module to accurately locate key regions. Combined with a curriculum learning strategy and GC loss, it significantly optimizes the detection of small lesions. Additionally, we developed an OCT + UWF multimodal input channel to simulate clinical decision-making processes, further enhancing prediction accuracy and interpretability, thereby providing stronger support for personalized treatment. Experimental results demonstrate that this model outperforms existing SOTA methods in both diagnostic and segmentation tasks, validating its effectiveness and clinical application potential in AI modeling guided by core clinical issues.

This study proposes a novel modeling strategy that integrates clinical priors with deep learning. In particular, we incorporated 50 quantitative imaging indicators (1 manual OCT measurement and 49 automatically extracted from UWF), integrated with end-to-end feature learning. This approach not only preserves the learning capacity of deep learning models but also explicitly integrates clinical knowledge, thus enhancing both prediction accuracy and interpretability, with a specific focus on modeling ERM-related spatial information. The framework possesses extensibility in that future incorporation of new critical imaging features can be accomplished directly without altering the underlying architecture. This allows the AI model to dynamically assimilate emerging domain knowledge and maintain consistency with clinical rationale, thereby mitigating the “black-box” nature of the model and reinforcing the central role of clinicians in the model development cycle. Experimental results demonstrate that this method outperforms existing SOTA models in both diagnostic and segmentation tasks, validating the potential of clinical-problem-driven AI modeling.

The proposed DME-RWKV model utilizes dual-channel input (OCT and UWF) and incorporates a CAL module to explicitly model the spatial features of ERM. Coupled with a curriculum learning strategy, this design strengthens the model’s recognition capability for the vitreoretinal interface. This architecture not only mimics the comprehensive assessment process used by clinicians for judging treatment efficacy, considering both local (macular microstructure) and global (retinal vascular pathology) features, but also addresses a critical limitation in previous studies. Prior research was often restricted to single-modality inputs, mostly focused on qualitative descriptions or simple statistical analyses, and lacked systematic modeling of the condition’s spatial distribution and pathological characteristics [2,5,12,32]. Our research is thus able to better delineate the potential impact of ERM on macular morphology and treatment response, yielding prediction results that are more consistent with clinical pathological rationale, and highlighting the significant value of fusing multimodal data with structured prior knowledge.

The curriculum learning strategy in this study not only simulates the stepwise diagnostic reasoning process of clinicians during training but also reflects clinical integrated decision-making logic at the multimodal data fusion level. Specifically, training samples are first categorized into three difficulty levels (easy, medium, and hard) based on the signal strength, area size, and image quality of the ROI. This approach introduces more complex cases gradually, allowing the model to first learn typical features that are easy to recognize and then progressively master more complex lesion patterns, thus enhancing convergence stability and generalization capability. Moreover, the model integrates OCT and UWF information at the input layer to simulate the comprehensive evaluation process of clinicians, who consider both local macular structures and global retinal lesions when making decisions. This integration helps to improve prediction accuracy and interpretability. The “learning process + multimodal input” dual simulation not only strengthens the model’s robustness but also provides new insights into the transferability of AI in complex clinical scenarios.

DME is a characteristic pathological manifestation that affects the macula in diabetic retinopathy and is a major cause of vision impairment in diabetic patients. The changes in other parts of the retina in diabetic retinopathy are also significant for clinical diagnosis and treatment guidance. The GCA feature fusion module significantly enhances cross-scale information interaction. Unlike traditional encoder–decoder architectures that simply concatenate or sum features from different resolutions, the GCA module models long-range dependencies and introduces a global semantic weighting mechanism. This effectively improves the discriminability of features when they are fused, allowing the network to maintain high-resolution local structures while capturing rich contextual information. This is particularly important for OCT images, as lesions can manifest as large retinal layer changes or be present only in small, localized regions.

In clinical interpretation of OCT images, clinicians do not focus on every region of the image equally but rely on pathological cues to progressively narrow their attention to critical structures. This “causal chain” reasoning is central to maintaining diagnostic consistency. To align with this process, we designed a CAL module, which introduces causal constraints during feature extraction, aligning attention distribution with clinical diagnostic reasoning and reducing the model’s response to irrelevant background noise. This mechanism improves the model’s sensitivity to key structures, particularly in cases where lesion boundaries are vague or lesion signals are weak, thus enhancing prediction stability and reliability. The quality of the attention maps optimized by CAL significantly improves, increasing the accuracy of identifying key features associated with DME by 10%. This method is not only applicable to DME but can also extend to other ophthalmic diseases, broadening its potential application in the field of ophthalmology.

In OCT images, small and elongated lesions (such as focal edema or exudative bands) significantly affect the treatment prognosis. These lesions are closely monitored by clinicians, as their location, number, and activity directly influence immediate treatment outcomes. Traditional segmentation methods (e.g., Dice Loss) often fail to maintain structural continuity when processing such lesions. The introduction of the GC loss function effectively addresses this challenge by improving segmentation continuity and structural integrity. The accuracy of segmenting small lesions is closely related to patient prognosis and can provide crucial data for early intervention, guiding treatment adjustments.

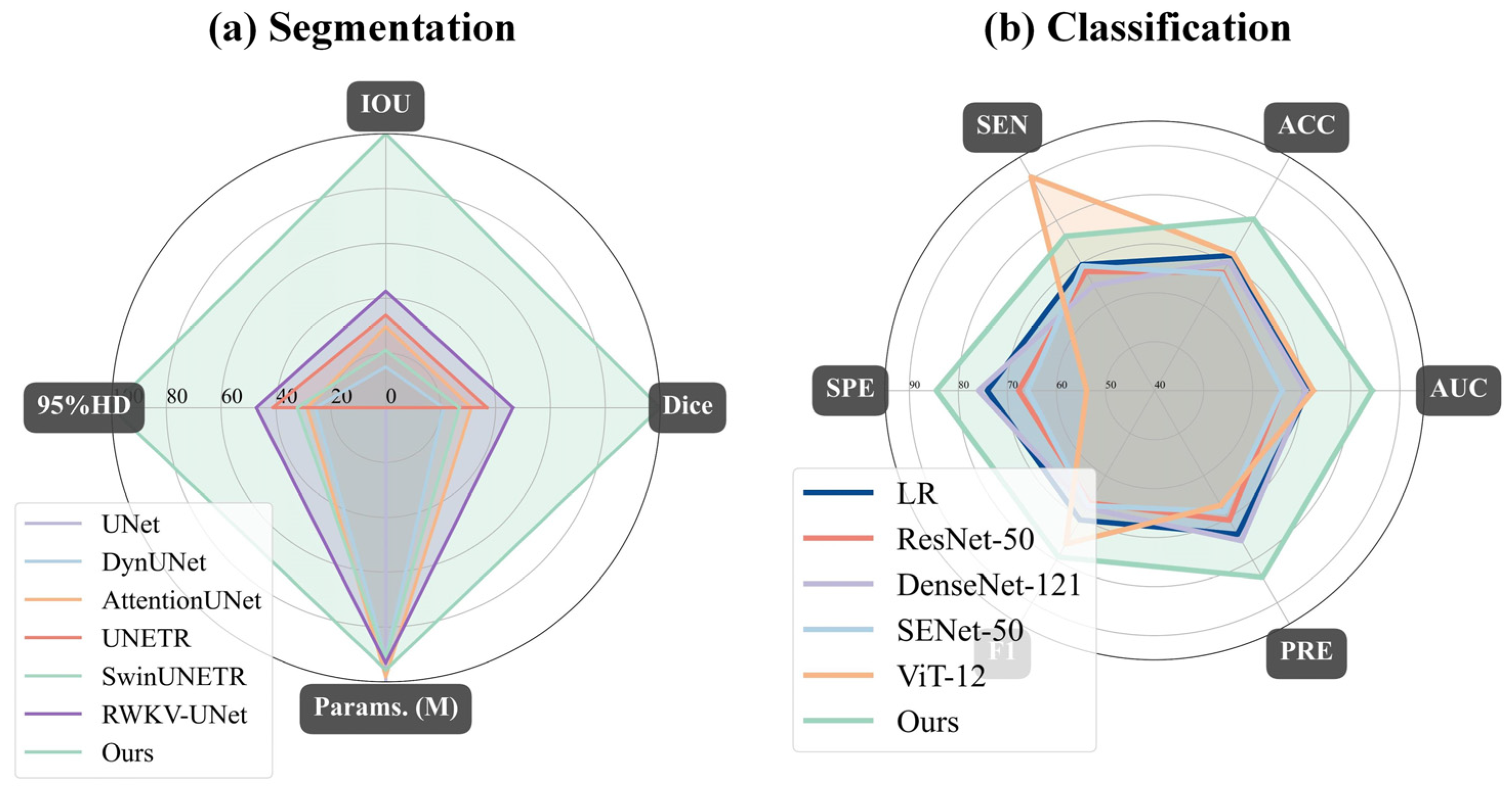

Compared to existing SOTA methods, DME-RWKV achieves significant performance advantages in both diagnostic and segmentation tasks, particularly in small lesion detection and cross-scale feature fusion. As summarized in Table 10, this superior performance can be attributed to three key qualitative advantages of our framework over existing methods: (1) Efficiency–accuracy balance: unlike CNNs, which lack global context, and Transformers, which are computationally heavy, our RWKV-based encoder captures long-range dependencies with linear complexity, enabling efficient processing of high-resolution OCT images. (2) Structural integrity for micro-lesions: the introduction of the GC loss function explicitly addresses the topological breakage often seen in CNN-based segmentation of small lesions (e.g., IRC, SRF), ensuring continuous and precise boundaries. (3) Clinical interpretability: by integrating the CAL module and a multimodal (OCT + UWF) curriculum learning strategy, the model mimics clinical reasoning. This not only improves robustness against noise but also provides causally relevant attention maps, making the “black-box” decision process transparent to clinicians. These combined advantages suggest that our comprehensive framework better meets the dual clinical demands for precision and stability, holding strong potential for broader application and value.

Despite the encouraging results, this study has several limitations that need to be addressed. First, the retrospective study design and the fact that all data were derived from a single tertiary hospital may introduce selection bias and limit the generalizability of our model. The patient demographics, treatment protocols, and imaging equipment characteristics at our center may not fully represent the broader and more diverse populations and clinical settings encountered in real-world practice. Therefore, the performance of DME-RWKV needs external validation at multiple geographically dispersed centers to confirm its robustness and general applicability. A larger-scale prospective observational trial using standardized imaging protocols is necessary to confirm and extend these findings. Second, the sample exhibited a class imbalance, with more patients in the non-responsive group (224) than in the responsive group (147). Although this distribution reflects real-world clinical outcomes, it may introduce bias. Future studies should aim to reduce intergroup differences to ensure the rigor of the research. Finally, although the model incorporates interpretability modules (such as CAL), its ultimate clinical utility and impact on actual treatment decisions still require prospective evaluation within clinical workflows. Therefore, future work should focus on conducting multi-center external validation and prospective trials to assess the model’s efficacy in improving patient outcomes and optimizing clinical decision-making in DME management.

5. Conclusions

In conclusion, this study presents a pioneering multi-task analysis framework for OCT images and makes three distinct contributions to the field of ophthalmic AI. First, in terms of architectural innovation, we propose the DME-RWKV, which effectively balances global context modeling with computational efficiency. Unlike traditional CNNs restricted by local receptive fields or Transformers burdened by quadratic complexity, our introduction of the linear-complexity RWKV mechanism allows for efficient processing of high-resolution retinal images. Second, regarding methodological advancement, we explicitly integrate clinical reasoning into deep learning. By designing the CAL module and a curriculum learning strategy, the model mimics the “stepwise” and “evidence-based” diagnostic process of clinicians, significantly enhancing the interpretability and robustness of the “black-box” decision process. Third, addressing the specific challenge of micro-lesion detection, we introduce a novel GC loss. This contribution overcomes the limitations of pixel-wise loss functions (e.g., Dice Loss) by enforcing topological structural integrity, thereby resolving the fragmentation issue in segmenting small lesions like IRC and SRF.

Collectively, these contributions establish a robust system that does not rely solely on overall image patterns but integrates anatomical priors and multimodal data (OCT and UWF) to explicitly characterize vitreomacular interface abnormalities. Future work could further achieve “joint operations” by fusing multimodal imaging (e.g., OCT angiography, fundus photography), functional assessments, and clinical variables to develop a model adaptable across different disease types, which would enhance the developed AI system’s overall understanding and generalization ability for complex ocular conditions.