Abstract

Despite the pervasive role of digital platforms in contemporary higher education, existing measurement tools fail to capture students’ psychological dependence on online approval within academic contexts, focusing instead on technical competencies or clinical addiction symptoms. This study developed and psychometrically validated the Digital Validation Seeking Scale (DVSS), a multidimensional instrument measuring university students’ reliance on digital feedback for academic and identity confirmation. Two independent samples of Egyptian undergraduate students were recruited from six universities: an exploratory sample of 511 students and a confirmatory sample of 740 students. The DVSS underwent content validation by eleven experts, exploratory factor analysis (EFA) using Principal Axis Factoring with Promax rotation, and confirmatory factor analysis (CFA) comparing competing structural models. Results revealed a four-factor structure comprising Academic Self-Quantification (ASQ), Feedback Hyper-vigilance (FHV), Social Comparison (SC), and Performative Studiousness (PS), with the first-order four-factor model demonstrating the best fit indices. The final 19-item scale exhibited good to excellent internal consistency, with Cronbach’s alpha coefficients ranging from 0.807 to 0.882 across the four subscales and reaching 0.938 for the total score, together with strong test–retest reliability for the total score (r = 0.830). The DVSS provides researchers and practitioners with a theoretically grounded, psychometrically sound instrument for identifying maladaptive digital validation patterns before they compromise academic engagement or psychological well-being, enabling targeted interventions within hybrid educational environments.

1. Introduction

In contemporary higher education, students increasingly turn to digital platforms not merely for information access but to obtain external confirmation of their competence, identity, and belonging. Digital validation seeking can be defined as a recurrent, intentional pattern of using digital platforms and tools to obtain social, academic, or identity-related confirmation from others or from systems, such that external digital feedback becomes a key regulator of students’ self-evaluation and academic decisions (X. Zhang et al., 2025). This phenomenon manifests through multiple channels: students seek identity validation by posting personal narratives in online forums to confirm self-suspected conditions (X. Zhang et al., 2025); pursue academic competence validation by consulting AI tools and learning analytics to reassure themselves about understanding (Cabero-Almenara et al., 2022; Muñoz-Repiso et al., 2020; Pumptow & Brahm, 2020); engage in social comparison (SC) through monitoring peers’ digital achievements (Chen et al., 2025; Neagu & Vieriu, 2025); and seek health-related normality validation during crises (Htay et al., 2022; Neagu & Vieriu, 2025).

Despite the proliferation of social media measurement tools, existing scales inadequately capture digital validation seeking within university contexts. Current instruments predominantly focus on problematic use and addiction symptoms (Boer et al., 2021; Eijnden et al., 2016; Fung, 2019; Sadeghi et al., 2025), which emphasize clinical impairment rather than the everyday micro-processes of seeking likes, comments, and peer approval that characterize student digital behavior. Adjacent constructs such as digital media self-efficacy (Pumptow & Brahm, 2020), digital competence (Tzafilkou et al., 2022), and digital life balance (Lima-Costa et al., 2024; Malas et al., 2025) measure skills, confidence, or overall online-offline equilibrium but fail to probe students’ emotional dependence on peer approval and validation metrics. Furthermore, scales targeting social media consciousness (Choukas-Bradley et al., 2020), fatigue (S. Zhang et al., 2021), and anxiety (Alkis et al., 2017) were designed for different populations or contexts, missing university-specific validation dynamics around academic identity, performance, and institutional belonging that define contemporary student experience.

University students, particularly those aged 18–24, represent the primary stakeholders affected by digital validation loops, with significant implications for both academic and psychological outcomes (Atlam et al., 2021; Elsayed, 2025; Wang et al., 2021). Research demonstrates that digital engagement patterns influence academic performance through complex pathways: while some studies report positive associations between social media use and grade point average (GPA) (Elsayed, 2025; Shahzad et al., 2024), excessive reliance on digital validation can undermine achievement when it substitutes meaningful human support (Crawford et al., 2024). The psychological toll is equally concerning, as students experience social network exhaustion from constant comparison (Pang & Zhang, 2024), academic burnout from cognitive overload (Wang et al., 2021), and diminished well-being when digital interactions replace authentic connections (Crawford et al., 2024; Neagu & Vieriu, 2025). Female students and those from lower socioeconomic backgrounds appear particularly vulnerable to digital stressors (Wang et al., 2021), highlighting the need for targeted measurement tools that capture these nuanced experiences.

The contemporary academic environment exists as a hybrid assemblage where physical classrooms intersect with learning management systems, social media platforms, and messaging applications, creating entangled spaces where validation seeking fundamentally shapes learning outcomes (Hashim et al., 2021; Lamb et al., 2021; Tondeur et al., 2024). Within these integrated environments, social media used for collaborative learning enhances peer interaction, knowledge sharing, and academic performance when appropriately channeled (Al-Rahmi et al., 2022; Ansari & Khan, 2020). Digital communication tools that foster connectedness, immediacy, and teacher presence transform validation needs into productive engagement through prompt feedback and visible participation (El-Sayad et al., 2021; Hehir et al., 2021; Raes, 2021). However, unbounded digital exposure can redirect validation seeking toward status comparison and non-academic social activities, potentially undermining professionalism and well-being (Guckian et al., 2021). Hybrid courses deliberately balancing technology, pedagogy, and social interaction demonstrate superior academic performance and satisfaction (Abdigapbarova et al., 2025; Mahender & Sharma, 2025), suggesting that how hybrid spaces channel validation drives—toward collaboration or fragmented attention—critically determines educational outcomes.

While digital validation seeking is nearly universal among students, it becomes maladaptive when characterized by compulsive pursuit of online approval as a central regulator of self-worth (Nesi & Prinstein, 2018; X. Zhang et al., 2025). Research identifies critical warning markers: effortful digital status seeking prospectively predicts substance use and sexual risk behaviors (Nesi & Prinstein, 2018), while approval-seeking schemas underpin behavioral addictions and negative self-image (Ang et al., 2025; Vieira et al., 2023). Among university students, psychological distress and online academic difficulties co-occur, negatively affecting course satisfaction and performance (Curelaru & Curelaru, 2024), yet students struggle to discern trustworthy digital mental health resources despite high need (Montagni et al., 2020). The urgency of early measurement is underscored by evidence that unmanaged digital threats can escalate to burnout and mental health distress (Sun et al., 2022), and that digital interventions can effectively support well-being when appropriately targeted (Ferrari et al., 2022; Lattie et al., 2019). Measuring validation-seeking patterns before they compromise academic engagement or clinical thresholds is therefore essential for institutional prevention efforts.

The virtual feedback loop operates through two complementary theoretical mechanisms: social comparison theory and self-determination theory (SDT). From a social comparison perspective, algorithmically curated digital metrics provide continuous standards against which students gauge their relative standing, with comparison orientation mediating how feedback impacts state self-esteem—amplifying both benefits of positive feedback and harms of negative feedback (Chen, 2025; García-Arch et al., 2025). Through an SDT lens, quantified feedback dynamically regulates basic psychological needs: likes and comments function as competence-relevant information, digital interactions signal relatedness satisfaction or thwarting, and platform affordances either support autonomous engagement or promote controlled, contingent behavior driven by external validation (Gagné et al., 2022; Ryan et al., 2018, 2021; Urhahne & Wijnia, 2023; Q. Zhang & Shakibaei, 2025). This recursive cycle—where digital action elicits feedback that shapes self-evaluation and subsequent behavior—creates a self-perpetuating loop wherein comparison processes and need regulation mutually reinforce students’ dependence on external digital validation.

Current educational measurement tools overwhelmingly assess technical and functional digital competencies—device proficiency, information search, content creation, safety, and ethics—derived from frameworks like DigComp and DigCompEdu (Cabero-Almenara et al., 2020; Fan & Wang, 2022; Nguyen & Habók, 2023; Tzafilkou et al., 2022), yet fail to capture the psychological construct of digital validation seeking within academic contexts. Systematic reviews consistently identify critical gaps: limited availability of validated, context-sensitive instruments (Suri et al., 2025), absence of authentic performance assessment (Suri et al., 2025), and neglect of socio-emotional dimensions such as dependence on online approval and feedback-seeking motives in educational platforms (Nguyen & Habók, 2023; Suri et al., 2025). Recent role-specific scales for teachers, students, and parents continue to operationalize what learners can do rather than why and how they seek validation online (Jia et al., 2025; Pelaez-Sanchez et al., 2024; Soriano-Alcantara et al., 2024; Viberg et al., 2024). This study addresses this void by developing the first psychometrically validated, education-specific instrument measuring digital validation seeking in higher education.

Given the proliferation of digital platforms in higher education and the absence of validated instruments measuring students’ psychological dependence on online approval within academic contexts, this study aimed to develop and psychometrically validate the Digital Validation Seeking Scale (DVSS) for university students. Specifically, the research sought to generate a theoretically grounded item pool capturing multiple dimensions of digital validation seeking in educational settings; establish content validity through expert review procedures; determine the underlying factor structure through EFA with an independent sample; confirm the optimal factorial model using CFA; assess internal consistency reliability using multiple coefficients; evaluate temporal stability through test–retest procedures; and examine convergent and discriminant validity through interfactor correlations and composite reliability (CR) indices. By providing a psychometrically sound measurement tool specifically designed for higher education contexts, this study addresses critical gaps identified in systematic reviews and enables future research examining relationships between digital validation seeking and academic outcomes, psychological well-being, and educational intervention effectiveness.

2. Materials and Methods

2.1. Participants and Sampling

The present study adopted a cross-sectional design utilizing two independent samples of Egyptian undergraduate students to conduct exploratory and confirmatory factor analyses sequentially. The exploratory sample comprised 511 students, whereas the confirmatory sample consisted of 740 students, drawn from six geographically distributed Egyptian universities: Al-Azhar University, Zagazig University, Kafr El Sheikh University, Beni Suef University, Damanhour University, and Tanta University. The complete demographic characteristics of both samples are presented in Table 1. Both samples demonstrated relatively balanced gender distributions, with males constituting approximately 53.0% and 52.6% of the exploratory and confirmatory samples, respectively.

Table 1.

Demographic Characteristics of Exploratory and Confirmatory Samples.

Representation across all four academic years was proportionally equitable, with year-level distributions ranging from 23.3% to 27.4% in the exploratory sample and from 24.5% to 25.4% in the confirmatory sample. Urban residence was marginally more prevalent than rural residence across both samples. Participants in the exploratory sample ranged in age from 18 to 24 years (M = 21.18, SD = 2.03), while those in the confirmatory sample ranged from 18 to 23 years (M = 20.47, SD = 1.70). To formally verify age equivalence, an independent-samples t-test was conducted, confirming no statistically meaningful difference between the two groups and thereby supporting sample comparability.

To confirm broader demographic equivalence, chi-square homogeneity tests revealed no statistically significant differences in gender (χ² = 0.021, df = 1, p = 0.885), academic year (χ² = 0.412, df = 3, p = 0.938), or residence (χ² = 1.124, df = 1, p = 0.289), collectively indicating that the two samples were demographically homogeneous and unlikely to introduce differential sampling bias into the factor analytic procedures. With respect to digital platform usage, participants reported engagement across multiple social media platforms, including Facebook, TikTok, Twitter, Snapchat, and Instagram, with Facebook emerging as the most frequently reported platform within the confirmatory sample.

2.2. Procedure

Data collection occurred between 10 and 25 November 2025, using Google Forms to distribute the survey instruments electronically. This online administration method ensured broad accessibility across the geographically dispersed university locations and facilitated efficient data collection during the academic semester. For test–retest reliability assessment, a subsample of 171 students completed the scale on two occasions separated by a 15-day interval, a duration sufficient to minimize memory effects while remaining brief enough to ensure stability of the construct being measured.

2.3. Instrument Development

The Digital Validation Seeking Scale (DVSS) was developed to measure the extent to which university students seek external confirmation and approval through digital platforms in academic contexts. The initial item pool consisted of 24 statements, consistent with recommendations that item pools should contain approximately two to three times the intended final scale length to allow for attrition during validation (DeVellis, 2016; Boateng et al., 2018). These items were designed to capture various dimensions of digital validation seeking behaviors, including dependence on social media feedback for academic self-evaluation, compulsive monitoring of engagement metrics, social comparison with peers’ digital achievements, and performative presentation of study behaviors.

All items were rated on a five-point Likert scale ranging from 1 (strongly disagree) to 5 (strongly agree), a format widely recommended for attitudinal measurement in educational and psychological research due to its balance between response sensitivity and cognitive manageability (Likert, 1932; Revilla et al., 2014; Wakita et al., 2012), with higher scores indicating greater levels of digital validation seeking, following standard unidirectional scoring conventions for behavioral tendency scales (DeVellis, 2016).

2.4. Content Validity

The initial 24-item version of the DVSS underwent rigorous expert review to establish content validity. Eleven experts specializing in educational psychology, mental health, and educational technology were recruited, consistent with recommendations that content validity panels comprise between five and fifteen subject matter experts to ensure stable CVI estimates (Lynn, 1986; Polit & Beck, 2006). These experts evaluated each item for appropriateness of response alternatives, clarity of wording, and alignment with the scoring key. Expert ratings were quantified to calculate the Content Validity Index (CVI), which reached 0.803, exceeding the conventional threshold of 0.80 for acceptable content validity recommended for multi-item scales (Polit et al., 2007; Lynn, 1986). Based on expert feedback and quantitative analysis of item-level agreement, four items were eliminated following established item-level CVI (I-CVI) criteria whereby items rated appropriate by fewer than 78% of experts are recommended for removal (Polit et al., 2007; Almanasreh et al., 2019), resulting in a refined 20-item instrument that proceeded to psychometric evaluation through EFA.
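The item-level retention rule described above can be made concrete with a short sketch. It assumes the I-CVI is simply the proportion of experts who rate an item as appropriate, and the function names are illustrative rather than taken from the study. Under that assumption, an 11-member panel needs at least 9 endorsements for an item to reach 0.78.

```python
def i_cvi(endorsements: int, n_experts: int) -> float:
    """Item-level CVI: share of experts rating the item as appropriate."""
    return endorsements / n_experts


def min_endorsements(n_experts: int, threshold: float = 0.78) -> int:
    """Smallest number of endorsing experts whose I-CVI meets the threshold."""
    for k in range(n_experts + 1):
        if i_cvi(k, n_experts) >= threshold:
            return k
    raise ValueError("threshold cannot be reached")


# Panel size taken from the text (eleven experts); 0.78 is the cited cut-off.
needed = min_endorsements(11)
print(needed, round(i_cvi(needed, 11), 3), round(i_cvi(needed - 1, 11), 3))
```

With eleven raters, eight endorsements give an I-CVI of about 0.727, which falls below the cut-off, so an item can lose at most two experts and still be retained.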

2.5. Data Analysis

All statistical analyses were conducted using SPSS version 27 for EFA and reliability assessment, and AMOS version 26 for CFA and structural equation modeling. The EFA employed Principal Axis Factoring as the extraction method, chosen following formal assessment of distributional assumptions using the Kolmogorov–Smirnov and Shapiro–Wilk goodness-of-fit tests (Razali & Wah, 2011), which indicated statistically significant departures from normality for all 20 items. Principal Axis Factoring is recommended over Maximum Likelihood extraction under such conditions due to its robustness when multivariate normality cannot be confirmed (Fabrigar et al., 1999; Floyd & Widaman, 1995), with Promax rotation to allow for correlated factors. Parallel analysis was conducted to determine the optimal number of factors to retain by comparing eigenvalues from the actual data with those from random data. Model fit in CFA was evaluated using multiple indices including the chi-square statistic, Comparative Fit Index (CFI), Root Mean Square Error of Approximation (RMSEA), and standardized residuals. Reliability was assessed using Cronbach’s alpha, McDonald’s omega, and Guttman’s lambda-2. Internal consistency was examined through inter-factor and item-total correlations, and temporal stability was evaluated through test–retest correlations using Pearson’s r.
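The parallel analysis step mentioned above can be sketched as follows. This is a minimal illustration rather than the procedure run in SPSS: it assumes eigenvalues of the full correlation matrix and a 95th-percentile threshold from normally distributed random data, whereas the study may have used a reduced (common-factor) correlation matrix. The function name, simulation count, and demonstration data are all hypothetical.

```python
import numpy as np


def parallel_analysis(data, n_sims=200, percentile=95, seed=0):
    """Compare observed eigenvalues with those from random data of equal shape."""
    rng = np.random.default_rng(seed)
    n, p = data.shape
    # Eigenvalues of the observed correlation matrix, largest first.
    observed = np.sort(np.linalg.eigvalsh(np.corrcoef(data, rowvar=False)))[::-1]
    simulated = np.empty((n_sims, p))
    for i in range(n_sims):
        noise = rng.standard_normal((n, p))
        simulated[i] = np.sort(
            np.linalg.eigvalsh(np.corrcoef(noise, rowvar=False))
        )[::-1]
    threshold = np.percentile(simulated, percentile, axis=0)
    # Retain leading factors until an observed eigenvalue fails to exceed
    # its random-data counterpart.
    exceeds = observed > threshold
    n_retain = p if exceeds.all() else int(np.argmin(exceeds))
    return observed, threshold, n_retain


# Hypothetical demonstration data: six items driven by one common factor.
demo_rng = np.random.default_rng(42)
factor = demo_rng.standard_normal((300, 1))
demo = 0.7 * factor + np.sqrt(1 - 0.49) * demo_rng.standard_normal((300, 6))
observed, threshold, n_retain = parallel_analysis(demo)
print(n_retain)
```

Stopping at the first eigenvalue that fails the comparison mirrors the retention logic described in the text, where extraction ceases once an observed eigenvalue falls below the random-data value.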

3. Results

Before conducting factor analysis, preliminary analyses were performed to ensure the data met necessary assumptions and that the sample was adequate for the planned analyses. The descriptive statistics revealed mean scores ranging from 2.29 to 3.10, with standard deviations ranging from 1.26 to 1.41, indicating reasonable variability across items and the absence of ceiling or floor effects. To formally assess the normality of the data distribution, Kolmogorov–Smirnov and Shapiro–Wilk goodness-of-fit tests were conducted for all 20 items using SPSS version 27. Results indicated statistically significant deviations from normality across all items (K-S statistics ranging from 0.154 to 0.218, p < 0.001; S-W statistics ranging from 0.847 to 0.893, p < 0.001), as presented in Table 2. These findings are consistent with the observed skewness values ranging from −0.023 to 0.693 and kurtosis values ranging from −1.240 to −0.646, and collectively confirm that strict normality assumptions were not met. Accordingly, Principal Axis Factoring was selected as the extraction method for EFA due to its robustness under non-normal distributions (Fabrigar et al., 1999; Floyd & Widaman, 1995), and the use of Promax oblique rotation was retained as planned.

Table 2.

Descriptive Statistics for DVSS Items in Exploratory Sample.

The Kaiser-Meyer-Olkin (KMO) measure of sampling adequacy yielded a value of 0.946, substantially exceeding the recommended minimum of 0.60 and indicating that the correlation patterns among items were appropriate for factor analysis. Bartlett’s Test of Sphericity was highly significant (χ² = 5856.148, df = 190, p < 0.001), confirming that correlations among items were sufficiently large to warrant factor extraction. These indices collectively demonstrated that the data were suitable for EFA.
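For readers who want to see where the Bartlett degrees of freedom come from, the sketch below uses the standard textbook form of the test, with statistic −(n − 1 − (2p + 5)/6)·ln|R| and df = p(p − 1)/2. It is an illustration under that assumption, not the SPSS routine, and the function name is hypothetical. With p = 20 items, the formula gives the 190 degrees of freedom reported above.

```python
import numpy as np


def bartlett_sphericity(corr: np.ndarray, n: int):
    """Bartlett's test of sphericity from a p x p correlation matrix and sample size n."""
    p = corr.shape[0]
    # slogdet is numerically safer than log(det(...)) for near-singular matrices.
    _, logdet = np.linalg.slogdet(corr)
    statistic = -(n - 1 - (2 * p + 5) / 6) * logdet
    df = p * (p - 1) // 2
    return statistic, df


# An identity correlation matrix (no shared variance) yields a statistic of zero;
# 20 items and n = 511 match the exploratory sample described in the text.
stat, df = bartlett_sphericity(np.eye(20), n=511)
print(abs(stat), df)
```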

To determine the optimal number of factors to extract, both traditional eigenvalue criteria and parallel analysis were employed. The initial extraction using Principal Axis Factoring revealed that the first factor accounted for 47.45% of the total variance with an eigenvalue of 9.490, substantially larger than subsequent factors. Table 3 presents the total variance explained by each potential factor in both the initial extraction and after applying extraction criteria.

Table 3.

Total Variance Explained in EFA.

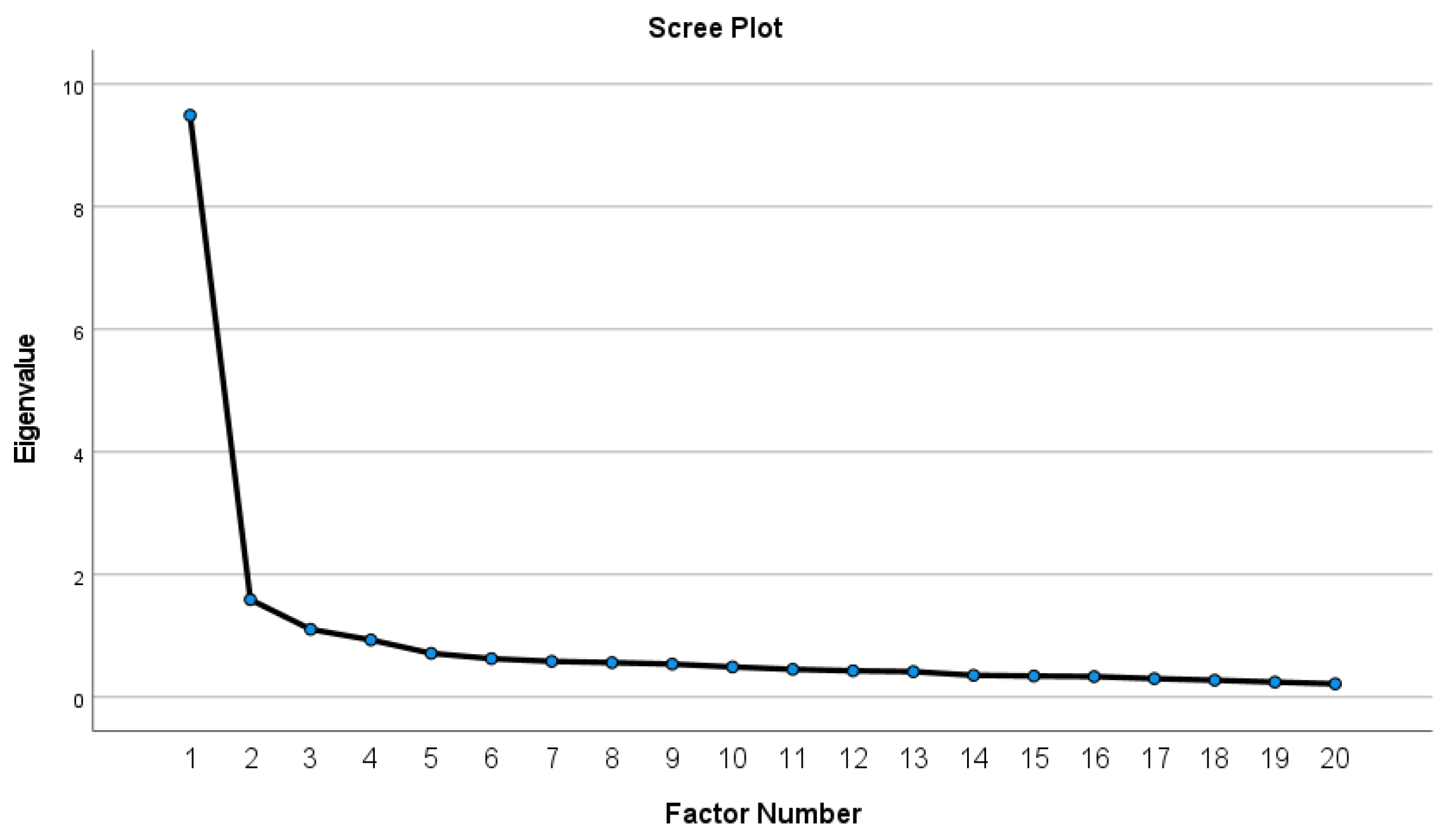

The scree plot (Figure 1) showed a clear elbow after the first factor, with subsequent factors showing much smaller eigenvalues. However, three additional factors demonstrated eigenvalues slightly above 1.0.

Figure 1.

Scree Plot for EFA.

To adjudicate between unidimensional and multidimensional solutions, parallel analysis was conducted comparing observed eigenvalues against those generated from random data with equivalent dimensions. Table 4 presents the comparison between real data eigenvalues and simulated data eigenvalues.

Table 4.

Parallel Analysis: Comparison of Real and Simulated Eigenvalues.

The parallel analysis results indicated that four factors should be retained, as the first four observed eigenvalues (10.140, 1.084, 0.595, and 0.411) exceeded their corresponding random data eigenvalues (0.455, 0.319, 0.266, and 0.219, respectively). Factor 5’s observed eigenvalue (0.111) fell below the random threshold (0.189), clearly indicating that extraction should cease after four factors. Based on these convergent indicators, a four-factor solution was pursued for further analysis. The four-factor solution using Principal Axis Factoring with Promax rotation converged in six iterations and extracted 57.29% of the total variance. Table 5 presents the pattern matrix showing item loadings on each of the four extracted factors.

Table 5.

Pattern Matrix for Four-Factor Solution with Promax Rotation.

The pattern matrix revealed a theoretically interpretable structure with items loading cleanly on their respective factors. Factor 1, labeled Feedback Hyper-vigilance (FHV), comprised five items (6, 7, 8, 9, 10) with pattern coefficients ranging from 0.664 to 0.901, reflecting compulsive monitoring of digital responses and anxiety about delayed feedback. Factor 2, labeled Performative Studiousness (PS), included five items (16, 17, 18, 19, 20) with loadings between 0.611 and 0.793, capturing staged presentations of academic effort for digital audiences. Factor 3, labeled Social Comparison (SC), consisted of five items (11, 12, 13, 14, 15) with coefficients from 0.571 to 0.854, measuring competitive tracking of peers’ digital achievements. Factor 4, labeled Academic Self-Quantification (ASQ), contained four items (1, 2, 3, 4) with loadings ranging from 0.506 to 0.809, representing reliance on engagement metrics to evaluate academic competence. One item (Item 5) failed to load substantially on any factor and showed the highest uniqueness value (0.581), suggesting weak communality with the underlying factor structure.

Communalities for the four-factor solution ranged from 0.354 to 0.723, indicating that the extracted factors accounted for adequate proportions of item variance. The factor correlation matrix (Table 6) revealed moderate to strong positive intercorrelations among all four factors, supporting the use of oblique rotation and suggesting that these dimensions represent related facets of an overarching digital validation seeking construct.

Table 6.

Factor Correlation Matrix for Four-Factor Solution.

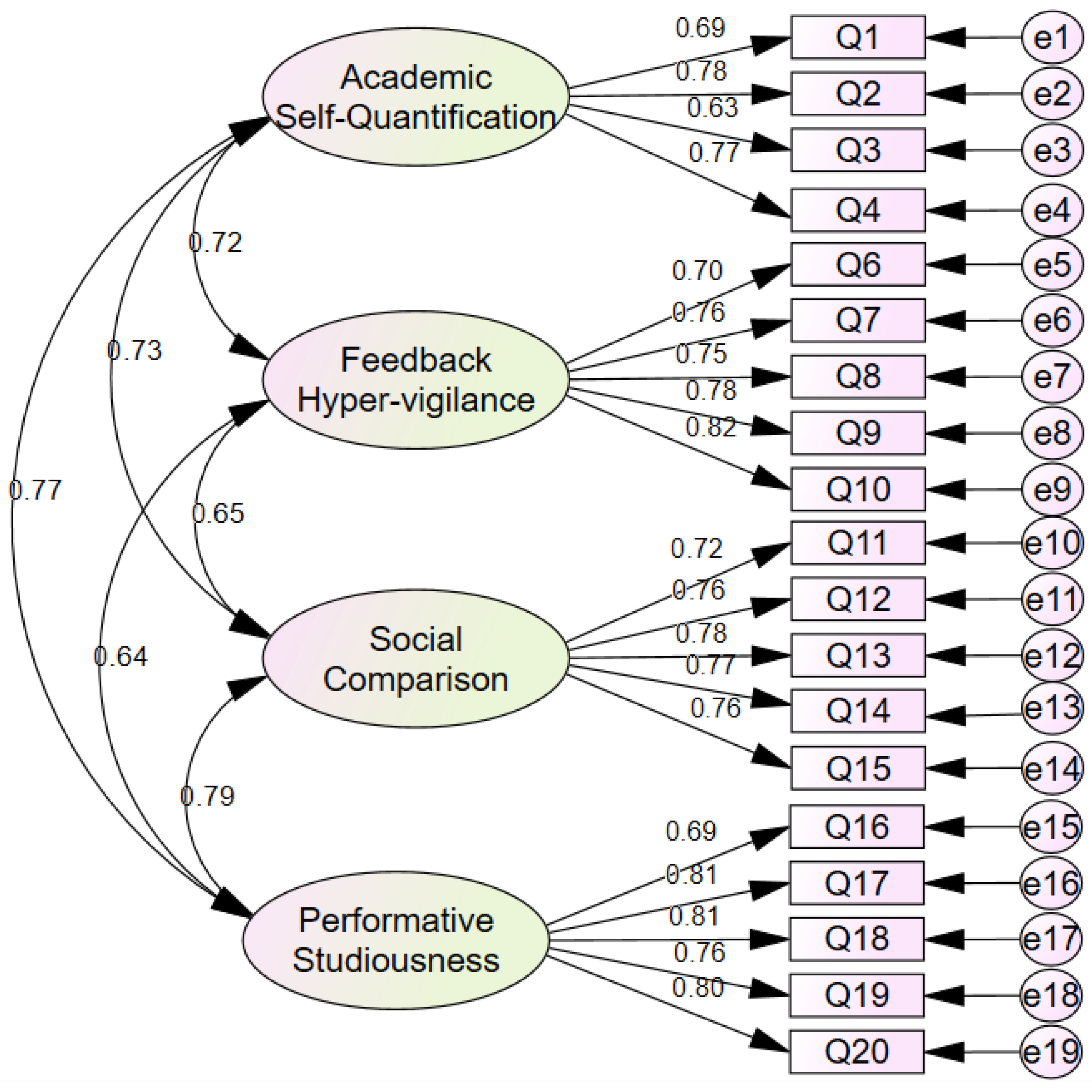

These substantial interfactor correlations (ranging from 0.620 to 0.744) provided empirical justification for testing higher-order factor models in subsequent confirmatory analyses. Three competing structural models were evaluated using the confirmatory sample to determine the optimal representation of the DVSS factor structure: a unidimensional model wherein all 20 items loaded on a single general factor, a first-order multidimensional model with four correlated factors, and a second-order model with four first-order factors loading on a single higher-order Digital Validation Seeking factor. Figure 2 presents the path diagram for the first-order four-factor model, which demonstrated the best fit.

Figure 2.

First-Order Four-Factor Model with Standardized Parameter Estimates.

Table 7 presents the comparative fit indices for these three models, facilitating direct evaluation of their relative adequacy.

Table 7.

Fit Indices for Competing CFA Models.

The unidimensional model demonstrated poor fit across all indices, with an elevated RMSEA of 0.110 (well above the acceptable threshold of 0.08), inadequate CFI of 0.812 (below the recommended 0.90 minimum), and an excessively large chi-square to degrees of freedom ratio of 9.963. These results decisively rejected the hypothesis that digital validation seeking could be adequately represented as a single undifferentiated construct. In contrast, the first-order four-factor model exhibited excellent fit to the data. The RMSEA of 0.046 fell well within the acceptable range, indicating close approximate fit. The CFI of 0.970 and NFI of 0.952 substantially exceeded conventional benchmarks, demonstrating that the model accounted for the vast majority of observed covariances. The normed chi-square ratio of 2.594 was well within acceptable limits, and both GFI (0.949) and AGFI (0.934) indicated strong absolute and parsimony-adjusted fit. The low RMR value of 0.053 further confirmed excellent model fit.
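The reported RMSEA values can be cross-checked against the normed chi-square ratios with a short calculation. The sketch assumes the common point-estimate formula RMSEA = sqrt(max(χ²/df − 1, 0) / (N − 1)) and N = 740 (the confirmatory sample). Some software uses N rather than N − 1 in the denominator, which does not change the result at three decimals here. The function name is illustrative.

```python
import math


def rmsea_from_normed_chi2(normed_chi2: float, n: int) -> float:
    """RMSEA point estimate from the chi-square/df ratio and sample size."""
    return math.sqrt(max(normed_chi2 - 1.0, 0.0) / (n - 1))


# Ratios and sample size as reported in the text.
unidimensional = rmsea_from_normed_chi2(9.963, 740)
four_factor = rmsea_from_normed_chi2(2.594, 740)
print(round(unidimensional, 3), round(four_factor, 3))
```

Under these assumptions the two ratios reproduce the reported RMSEA values of 0.110 and 0.046.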

The second-order model, which imposed an additional hierarchical structure with the four first-order factors loading on a higher-order Digital Validation Seeking factor, also demonstrated good fit with an RMSEA of 0.048, CFI of 0.968, and NFI of 0.950. However, comparison of the two models showed that the less constrained first-order structure, which has two fewer degrees of freedom, achieved slightly better fit, as evidenced by a marginally higher CFI (0.970 vs. 0.968) and lower RMSEA (0.046 vs. 0.048). The chi-square difference between the nested models (Δχ² = 18.005, Δdf = 2) exceeds the 0.001 critical value of 13.82 for two degrees of freedom, indicating that the second-order constraints produced a statistically significant, though practically small, loss of fit. The more constrained second-order model also did not offer a meaningful improvement in interpretability or theoretical coherence.
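A nested-model comparison of this kind is conventionally evaluated by referring the chi-square difference to a chi-square distribution with Δdf degrees of freedom. The sketch below assumes that approach and uses the closed-form upper-tail probability exp(−x/2), which holds only for two degrees of freedom; other values of Δdf would need a statistics library. The function name is illustrative.

```python
import math


def chi2_upper_tail_df2(x: float) -> float:
    """Upper-tail probability of a chi-square variate with exactly 2 df."""
    if x < 0:
        raise ValueError("chi-square values are non-negative")
    return math.exp(-x / 2.0)


# Difference statistic as reported in the text (delta df = 2).
p_value = chi2_upper_tail_df2(18.005)
print(f"{p_value:.6f}")
```

For the reported difference this gives a p-value of roughly 0.0001. With a sample of 740, even small differences in fit can reach significance, so the result is best read alongside the descriptive fit indices.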

Because it showed the better fit indices and supports subscale-level score interpretation, the first-order four-factor model was retained as the optimal representation of the DVSS structure for the purposes of scale validation. It is acknowledged, however, that when the DVSS total construct is employed alongside other constructs within larger structural equation models, the second-order representation may offer practical advantages by consolidating the four dimensions into a single higher-order factor, thereby reducing the number of structural paths and simplifying model estimation (Hair et al., 2019; Marsh et al., 2020). Researchers are therefore encouraged to select the representation most appropriate to their specific research objectives.

Reliability analyses were conducted separately for each of the four dimensions and for the total scale using the confirmatory sample. Table 8 presents the comprehensive reliability coefficients for each subscale and the overall instrument.

Table 8.

Reliability Coefficients for DVSS Dimensions and Total Scale.

All four subscales demonstrated good internal consistency, with Cronbach’s alpha coefficients ranging from 0.807 to 0.882, exceeding the conventional threshold of 0.70 for acceptable reliability. McDonald’s omega coefficients, which do not assume tau-equivalence, showed nearly identical values ranging from 0.810 to 0.883, confirming the stability of reliability estimates across different computational approaches. Guttman’s lambda-2 coefficients, which provide a lower bound to reliability that is at least as large as alpha, were virtually identical to the alpha and omega values, further corroborating the precision of these estimates. The total scale demonstrated excellent reliability, with all three coefficients reaching 0.938 or 0.939, indicating that the final 19-item instrument (the 20 analyzed items minus Item 5) produces highly consistent measurements of digital validation seeking.
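As a reminder of what the alpha coefficients summarize, the sketch below computes Cronbach’s alpha as k/(k − 1) · (1 − Σ item variances / variance of the total score). The response matrix is invented purely for illustration and is not study data; the function name is likewise hypothetical.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents, each a list of item scores."""
    k = len(rows[0])
    # Sample variance of each item across respondents.
    item_vars = [variance([r[j] for r in rows]) for j in range(k)]
    # Sample variance of the summed scale score.
    total_var = variance([sum(r) for r in rows])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


# Hypothetical five-point Likert responses from five respondents on three items.
demo_rows = [[4, 5, 4], [2, 2, 3], [3, 3, 3], [5, 4, 5], [1, 2, 1]]
demo_alpha = cronbach_alpha(demo_rows)
print(round(demo_alpha, 3))
```

Alpha rises when items covary strongly relative to their individual variances, which is why perfectly parallel items yield a value of 1.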

For the first-order four-factor model, composite reliability (CR) and average variance extracted (AVE) were calculated to assess convergent validity. ASQ achieved a CR of 0.811 and an AVE of 0.519, while the FHV, SC, and PS dimensions showed CR values of 0.874, 0.871, and 0.883, respectively, with AVE values of 0.582, 0.574, and 0.602. Convergent validity was therefore established, as all CR values exceeded 0.70 and all AVE values exceeded the 0.50 benchmark, meaning that each factor accounted for more than half of the variance in its items. To assess the discriminant validity and internal structure of the scale, correlations among the four subscales and with the total score were examined. Table 9 displays these intercorrelations for the confirmatory sample.

Table 9.

Intercorrelations Among DVSS Subscales and Total Score.

All subscale intercorrelations were positive, statistically significant at the 0.01 level, and ranged from 0.570 to 0.697, indicating that the four dimensions were moderately to strongly related yet sufficiently distinct to warrant separate measurement. These correlations support the conceptualization of digital validation seeking as a multifaceted construct with interrelated but distinguishable behavioral manifestations. Each subscale demonstrated very strong correlations with the total score (r = 0.820 to 0.870), confirming that all four dimensions contributed substantially to the overarching construct while retaining their unique variance.

Test–retest reliability was assessed using a subsample of 171 students who completed the DVSS on two occasions separated by a 15-day interval. Pearson correlation coefficients between Time 1 and Time 2 scores were calculated for each subscale and the total score. ASQ demonstrated a correlation of 0.546 (p < 0.01), FHV showed r = 0.691 (p < 0.01), SC yielded r = 0.782 (p < 0.01), and PS achieved r = 0.791 (p < 0.01). The total scale test–retest correlation was 0.830 (p < 0.01), indicating strong temporal stability. These coefficients confirm that the DVSS produces consistent measurements across time, with the total score demonstrating particularly robust stability. The somewhat lower correlation for ASQ, while still acceptable, suggests that this dimension may be more situationally variable or sensitive to recent academic experiences than the other three dimensions.

4. Discussion

The present study successfully developed and validated the DVSS, a 19-item instrument comprising four distinct yet interrelated dimensions: ASQ, FHV, SC, and PS (see Appendix A). The first-order four-factor model demonstrated superior fit compared to unidimensional and second-order alternatives, suggesting that digital validation seeking manifests through multiple behavioral pathways rather than a single undifferentiated tendency. The strong interfactor correlations (0.570–0.697) indicate these dimensions represent related facets of an overarching construct, consistent with theoretical frameworks positing that students engage validation-seeking behaviors across multiple digital contexts. Excellent internal consistency (α = 0.807–0.882) and robust test–retest stability (r = 0.830 for total scale) confirm the DVSS produces reliable measurements, while content validity procedures and convergent validity indices establish its construct appropriateness for capturing university students’ reliance on digital platforms for academic and identity confirmation.

It is important to acknowledge that a second-order formative conceptualization of digital validation seeking was considered as a structural alternative. In a formative model, the four dimensions would be treated as causally antecedent components that collectively define the higher-order construct, rather than as reflective manifestations of it (Diamantopoulos & Winklhofer, 2001; MacKenzie et al., 2005). While each dimension captures a qualitatively distinct behavioral domain, three considerations favored the reflective specification. First, the substantial intercorrelations among dimensions (r = 0.570–0.697) are more consistent with reflective than formative logic, which typically assumes component independence (Jarvis et al., 2003). Second, items within each subscale were constructed as interchangeable indicators of a common underlying tendency. Third, AVE values exceeding 0.50 and CR values exceeding 0.70 support the reflective interpretation (Fornell & Larcker, 1981).
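The AVE and CR thresholds cited above follow the standard Fornell and Larcker (1981) formulas, which can be sketched from standardized factor loadings as shown below. This is a minimal illustration: the loadings are hypothetical values chosen for the example, not the estimates obtained for any DVSS subscale, and the CR formula assumes uncorrelated measurement errors.

```python
def average_variance_extracted(loadings):
    """AVE: mean of the squared standardized loadings of one factor."""
    return sum(l ** 2 for l in loadings) / len(loadings)


def composite_reliability(loadings):
    """CR = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances).

    Each error variance is taken as 1 - loading^2, which assumes standardized
    loadings and uncorrelated measurement errors.
    """
    squared_sum = sum(loadings) ** 2
    error_variance = sum(1 - l ** 2 for l in loadings)
    return squared_sum / (squared_sum + error_variance)


# Hypothetical standardized loadings for a four-item subscale (not study data)
hypothetical_loadings = [0.72, 0.78, 0.81, 0.69]

ave = average_variance_extracted(hypothetical_loadings)
cr = composite_reliability(hypothetical_loadings)
print(f"AVE = {ave:.3f}, CR = {cr:.3f}")
print("meets AVE > 0.50 and CR > 0.70:", ave > 0.50 and cr > 0.70)
```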

Nonetheless, the choice between first-order and second-order representations carries practical implications beyond model fit. When the DVSS is incorporated into broader structural models alongside other constructs, the second-order specification reduces model complexity by replacing four separate first-order relationships with a single path from the higher-order Digital Validation Seeking factor, which may improve parsimony and estimation stability in such contexts (Hair et al., 2019; Marsh et al., 2020). The good fit of the second-order model (RMSEA = 0.048, CFI = 0.968) indicates that this representation remains psychometrically viable, and it is recommended for future research employing the DVSS within multi-construct structural frameworks.

These findings extend existing research by addressing critical measurement gaps identified in systematic reviews regarding socio-emotional dimensions of digital behavior in educational contexts. While previous instruments focused on technical competencies and problematic use symptoms, the DVSS captures the everyday micro-processes through which students seek peer approval and external confirmation that characterize contemporary academic life. The moderate-to-strong correlations among DVSS dimensions parallel X. Zhang et al.’s (2025) conceptualization of digital validation seeking as manifesting through identity, competence, and social channels. Furthermore, the scale’s capacity to differentiate between compulsive feedback monitoring, performative presentation, comparative evaluation, and metric-based self-assessment addresses Nguyen and Habók’s (2023) call for instruments measuring why and how students seek validation online rather than merely assessing what they can do technologically, positioning the DVSS as a theoretically grounded, context-sensitive tool.

The DVSS offers significant practical utility for higher education institutions seeking to identify students at risk for maladaptive digital engagement before patterns escalate to clinical impairment. Early identification of elevated validation-seeking behaviors enables targeted prevention programs addressing the psychological mechanisms underlying digital dependence, particularly among vulnerable populations including female students and those from lower socioeconomic backgrounds who experience heightened digital stressors. Educators can use DVSS profiles to design hybrid learning environments that deliberately channel validation drives toward collaborative learning and productive feedback rather than status comparison and fragmented attention. Platform architecture may differentially influence DVSS dimensions, with social media tools amplifying FHV and SC, while structured platforms like Blackboard may primarily engage ASQ. The scale’s multidimensional structure allows institutions to tailor interventions to specific behavioral patterns: students high in FHV may benefit from anxiety management strategies, while those scoring elevated on PS may require authentic engagement opportunities that reduce surface-level digital performance pressures and promote genuine academic involvement.

Several limitations warrant consideration when interpreting these findings. The cross-sectional design precludes causal inferences about relationships between digital validation seeking and academic or psychological outcomes. The psychometric properties of the DVSS were established exclusively within an Egyptian university context. Although the sample was geographically diverse, the findings may not generalize to cultural contexts where digital platform usage and validation-seeking behaviors differ substantially, and cross-cultural measurement invariance has not yet been empirically tested, so construct validity cannot be assumed beyond this setting. Self-report methodology introduces potential social desirability bias, particularly for items addressing compulsive or performative behaviors. Additionally, the removal of one item (Q5) during factor analysis highlights the importance of iterative refinement in scale development. Finally, although demographic homogeneity between samples was confirmed across gender, academic year, and residence, variables such as digital platform engagement intensity and socioeconomic status were not measured or formally balanced and remain a potential source of bias.

Future research should employ longitudinal designs tracking digital validation seeking across academic terms to examine temporal patterns, developmental changes, and prospective associations with academic performance, psychological well-being, and clinical outcomes such as burnout and mental health distress. Cross-cultural validation using multigroup CFA across diverse geographical and educational contexts represents a critical priority before broader application of the DVSS can be recommended. Researchers should investigate concurrent and predictive validity by examining correlations with established measures of academic engagement, SC orientation, SDT constructs, and mental health indicators. Experimental studies manipulating feedback availability or SC information could establish causal mechanisms underlying the virtual feedback loop. Finally, intervention research should evaluate whether DVSS-informed programs effectively reduce maladaptive validation seeking while preserving beneficial aspects of digital engagement for collaborative learning and academic support.

5. Conclusions

The DVSS represents a psychometrically sound, theoretically grounded instrument that fills a critical void in educational measurement by capturing the socio-emotional dimensions of digital behavior within higher education contexts. Its multidimensional structure reflects the complexity of contemporary student experiences navigating hybrid academic environments where digital platforms fundamentally shape learning, identity formation, and self-evaluation processes. By enabling early identification of maladaptive patterns before they compromise academic engagement or escalate to clinical thresholds, the DVSS equips researchers and practitioners with an essential tool for understanding and addressing the psychological implications of digitally mediated validation in university settings. As educational institutions increasingly integrate digital technologies into pedagogical practice, instruments like the DVSS become indispensable for ensuring that technological affordances enhance rather than undermine student well-being, authentic learning, and academic success.

Author Contributions

Conceptualization, M.A.N.-a., M.M.M., M.M.H., H.K.A., B.S.A. and A.R.I.; methodology, M.A.N.-a. and A.R.I.; software, A.R.I.; validation, M.A.N.-a., M.M.M. and M.M.H.; formal analysis, A.R.I.; investigation, M.A.N.-a., H.K.A. and B.S.A.; resources, M.M.H., H.K.A. and B.S.A.; data curation, M.A.N.-a. and A.R.I.; writing—original draft preparation, M.A.N.-a.; writing—review and editing, M.A.N.-a.; visualization, A.R.I.; supervision, M.M.M. and M.M.H.; project administration, M.M.H. All authors have read and agreed to the published version of the manuscript.

Funding

This work was supported by the Deanship of Scientific Research, Vice Presidency for Graduate Studies and Scientific Research, King Faisal University, Saudi Arabia [Grant No. KFU260475].

Institutional Review Board Statement

The study was conducted in accordance with the Declaration of Helsinki and approved by the Research Ethics Committee of the Faculty of Education at Al-Azhar University, Dakahlia, Egypt (protocol code Ref. No. EDU-REC-2025-071, approval date 22 October 2025).

Informed Consent Statement

Informed consent was obtained from all subjects involved in the study.

Data Availability Statement

The datasets generated and analyzed during the current study are available from the corresponding authors upon reasonable request.

Acknowledgments

The researchers would like to thank the Deanship of Scientific Research at King Faisal University, Al-Ahsa 31982, for providing the research fund that supported publication of this work (Research Grant No. KFU260475). The authors thank all participants for their valuable contribution to this study.

Conflicts of Interest

The authors declare no conflicts of interest.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Definition |
|---|---|
| DVSS | Digital Validation Seeking Scale |
| SDT | Self-Determination Theory |
| CVI | Content Validity Index |
| EFA | Exploratory Factor Analysis |
| CFA | Confirmatory Factor Analysis |
| KMO | Kaiser-Meyer-Olkin |
| RMSEA | Root Mean Square Error of Approximation |
| CFI | Comparative Fit Index |
| AGFI | Adjusted Goodness of Fit Index |
| RMR | Root Mean Square Residual |
| CR | Composite Reliability |
| AVE | Average Variance Extracted |
| ASQ | Academic Self-Quantification |
| FHV | Feedback Hyper-vigilance |
| SC | Social Comparison |
| PS | Performative Studiousness |
| GPA | Grade Point Average |

Appendix A. Digital Validation Seeking Scale (DVSS)

Response Instructions: Please read each statement carefully and select the response that best describes your feelings and behaviors. There are no right or wrong answers. Please be as honest as possible.

Likert Scale: 5 = Strongly Agree | 4 = Agree | 3 = Neutral | 2 = Disagree | 1 = Strongly Disagree

Factor 1: Academic Self-Quantification (ASQ)

1. I feel smarter when my academic posts receive high engagement.

2. I consider the number of “likes” as evidence of my academic success.

3. My academic confidence increases when others praise my digital achievements.

4. I feel embarrassed if my study-related updates do not get enough interaction.

Factor 2: Feedback Hyper-vigilance (FHV)

5. I check my phone constantly immediately after posting a study update.

6. I feel anxious if responses to my academic posts are delayed.

7. Waiting for notifications distracts me while I am studying.

8. I find it difficult to focus until I see how people have reacted to my posts.

9. I refresh my profile repeatedly to track the increase in interactions.

Factor 3: Social Comparison (SC)

10. I compare the engagement on my posts with those of my classmates.

11. I feel discouraged if a classmate’s post receives more likes than mine.

12. I monitor my peers’ digital success to evaluate my own academic standing.

13. I strive to exceed the “likes” received by the top students in my class.

14. I am curious to see which of my academic rivals viewed my posts.

Factor 4: Performative Studiousness (PS)

15. I focus more on photographing my books and tools than actually reading them.

16. I spend a lot of time “staging” my study space before I actually start.

17. I am mostly interested in appearing serious about my studies to my followers.

18. I post images that imply hard work even when I am not actually studying.

19. I choose my study locations based on how “Instagrammable” they are.

Scoring (item numbers refer to the 19 items as numbered in this appendix):

- Academic Self-Quantification (ASQ): Sum of items 1, 2, 3, 4 (Range: 4–20)

- Feedback Hyper-vigilance (FHV): Sum of items 5, 6, 7, 8, 9 (Range: 5–25)

- Social Comparison (SC): Sum of items 10, 11, 12, 13, 14 (Range: 5–25)

- Performative Studiousness (PS): Sum of items 15, 16, 17, 18, 19 (Range: 5–25)

- Total DVSS Score: Sum of all 19 items (Range: 19–95)
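The scoring rules can be sketched in code as follows. This is a minimal illustration, assuming the items are numbered 1 to 19 consecutively in the order in which they are listed in this appendix (ASQ first, then FHV, SC, and PS). The names `SUBSCALES` and `score_dvss` are hypothetical and are not part of the published instrument.

```python
# Item-to-subscale mapping, assuming consecutive numbering 1-19 in appendix order
SUBSCALES = {
    "ASQ": range(1, 5),    # items 1-4
    "FHV": range(5, 10),   # items 5-9
    "SC": range(10, 15),   # items 10-14
    "PS": range(15, 20),   # items 15-19
}


def score_dvss(responses):
    """Return subscale sums and the total for one respondent.

    responses: dict mapping item number (1-19) to a Likert rating (1-5).
    """
    if set(responses) != set(range(1, 20)):
        raise ValueError("responses must cover items 1-19 exactly")
    if any(rating not in (1, 2, 3, 4, 5) for rating in responses.values()):
        raise ValueError("each rating must be an integer from 1 to 5")
    scores = {
        name: sum(responses[item] for item in items)
        for name, items in SUBSCALES.items()
    }
    # Total is the sum of the four subscale scores, i.e. of all 19 items
    scores["Total"] = sum(scores.values())
    return scores


# Hypothetical respondent who answers "Neutral" (3) to every item
neutral = {item: 3 for item in range(1, 20)}
print(score_dvss(neutral))
```

Validating the item set before summing avoids silently producing a low score when a response is missing.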

References

- Abdigapbarova, U., Sadirbekova, D., Nishanbayeva, S., & Zhiyenbayeva, N. (2025). The impact of digital hybrid education model on teachers’ engagement and academic performance in the context of Kazakhstan. Scientific Reports, 15(1), 17865.

- Alkis, Y., Kadirhan, Z., & Sat, M. (2017). Development and validation of social anxiety scale for social media users. Computers in Human Behavior, 72, 296–303.

- Almanasreh, E., Moles, R., & Chen, T. F. (2019). Evaluation of methods used for estimating content validity. Research in Social and Administrative Pharmacy, 15(2), 214–221.

- Al-Rahmi, A. M., Shamsuddin, A., Wahab, E., Al-Rahmi, W. M., Alyoussef, I. Y., & Crawford, J. (2022). Social media use in higher education: Building a structural equation model for student satisfaction and performance. Frontiers in Public Health, 10, 1003007.

- Ang, B. H., Gollapalli, S. D., Du, M., & Ng, S. (2025). Unraveling online mental health through the lens of early Maladaptive schemas: AI-Enabled Content Analysis of Online Mental Health communities. Journal of Medical Internet Research, 27, e59524.

- Ansari, J. A. N., & Khan, N. A. (2020). Exploring the role of social media in collaborative learning the new domain of learning. Smart Learning Environments, 7(1), 9.

- Atlam, E., Ewis, A., El-Raouf, M. A., Ghoneim, O., & Gad, I. (2021). A new approach in identifying the psychological impact of COVID-19 on university student’s academic performance. Alexandria Engineering Journal, 61(7), 5223–5233.

- Boateng, G. O., Neilands, T. B., Frongillo, E. A., Melgar-Quiñonez, H. R., & Young, S. L. (2018). Best practices for developing and validating scales for health, social, and behavioral research. Frontiers in Public Health, 6, 149.

- Boer, M., Van Den Eijnden, R. J. J. M., Finkenauer, C., Boniel-Nissim, M., Marino, C., Inchley, J., Cosma, A., Paakkari, L., & Stevens, G. W. J. M. (2021). Cross-national validation of the social media disorder scale: Findings from adolescents from 44 countries. Addiction, 117(3), 784–795.

- Cabero-Almenara, J., Gutiérrez-Castillo, J. J., Guillén-Gámez, F. D., & Gaete-Bravo, A. F. (2022). Digital competence of higher education students as a predictor of academic success. Technology Knowledge and Learning, 28(2), 683–702.

- Cabero-Almenara, J., Gutiérrez-Castillo, J. J., Palacios-Rodríguez, A., & Barroso-Osuna, J. (2020). Development of the Teacher Digital Competence Validation of DIGCOMPEDU Check-In Questionnaire in the university context of Andalusia (Spain). Sustainability, 12(15), 6094.

- Chen, Y. (2025). A comparative study of state self-esteem responses to social media feedback loops in adolescents and adults. Frontiers in Psychology, 16, 1625771.

- Chen, Y., Pan, L., Lu, F., Sun, D., Liao, C., & Na, M. (2025). Psychological detachment in Chinese higher education: A multitheoretical model of academic stress, cultural pressure, and coping resources. Frontiers in Psychology, 16, 1647184.

- Choukas-Bradley, S., Nesi, J., Widman, L., & Galla, B. M. (2020). The appearance-related social media consciousness scale: Development and validation with adolescents. Body Image, 33, 164–174.

- Crawford, J., Allen, K., Pani, B., & Cowling, M. (2024). When artificial intelligence substitutes humans in higher education: The cost of loneliness, student success, and retention. Studies in Higher Education, 49(5), 883–897.

- Curelaru, M., & Curelaru, V. (2024). Psychological distress and online academic difficulties: Development and validation of scale to measure students’ mental health problems in online learning. Behavioral Sciences, 15(1), 26.

- DeVellis, R. F. (2016). Scale development: Theory and applications (4th ed.). SAGE Publications.

- Diamantopoulos, A., & Winklhofer, H. M. (2001). Index construction with formative indicators: An alternative to scale development. Journal of Marketing Research, 38(2), 269–277.

- Eijnden, R. J., Lemmens, J. S., & Valkenburg, P. M. (2016). The social media disorder scale. Computers in Human Behavior, 61, 478–487.

- El-Sayad, G., Saad, N. H. M., & Thurasamy, R. (2021). How higher education students in Egypt perceived online learning engagement and satisfaction during the COVID-19 pandemic. Journal of Computers in Education, 8(4), 527–550.

- Elsayed, H. A. E. (2025). Fear of Missing Out and its impact: Exploring relationships with social media use, psychological well-being, and academic performance among university students. Frontiers in Psychology, 16, 1582572.

- Fabrigar, L. R., Wegener, D. T., MacCallum, R. C., & Strahan, E. J. (1999). Evaluating the use of exploratory factor analysis in psychological research. Psychological Methods, 4(3), 272–299.

- Fan, C., & Wang, J. (2022). Development and validation of a questionnaire to measure digital skills of Chinese undergraduates. Sustainability, 14(6), 3539.

- Ferrari, M., Allan, S., Arnold, C., Eleftheriadis, D., Alvarez-Jimenez, M., Gumley, A., & Gleeson, J. F. (2022). Digital interventions for psychological well-being in university students: Systematic review and meta-analysis. Journal of Medical Internet Research, 24(9), e39686.

- Floyd, F. J., & Widaman, K. F. (1995). Factor analysis in the development and refinement of clinical assessment instruments. Psychological Assessment, 7(3), 286–299.

- Fornell, C., & Larcker, D. F. (1981). Evaluating structural equation models with unobservable variables and measurement error. Journal of Marketing Research, 18(1), 39–50.

- Fung, S. (2019). Cross-cultural validation of the social media disorder scale. Psychology Research and Behavior Management, 12, 683–690.

- Gagné, M., Parker, S. K., Griffin, M. A., Dunlop, P. D., Knight, C., Klonek, F. E., & Parent-Rocheleau, X. (2022). Understanding and shaping the future of work with self-determination theory. Nature Reviews Psychology, 1(7), 378–392.

- García-Arch, J., Sabio-Albert, M., & Fuentemilla, L. (2025). Selective integration of social feedback promotes a stable and positively biased self-concept. Scandinavian Journal of Psychology, 66(5), 683–701.

- Guckian, J., Utukuri, M., Asif, A., Burton, O., Adeyoju, J., Oumeziane, A., Chu, T., & Rees, E. L. (2021). Social media in undergraduate medical education: A systematic review. Medical Education, 55(11), 1227–1241.

- Hair, J. F., Risher, J. J., Sarstedt, M., & Ringle, C. M. (2019). When to use and how to report the results of PLS-SEM. European Business Review, 31(1), 2–24.

- Hashim, M. A. M., Tlemsani, I., & Matthews, R. (2021). Higher education strategy in digital transformation. Education and Information Technologies, 27(3), 3171–3195.

- Hehir, E., Zeller, M., Luckhurst, J., & Chandler, T. (2021). Developing student connectedness under remote learning using digital resources: A systematic review. Education and Information Technologies, 26(5), 6531–6548.

- Htay, M. N. N., Parial, L. L., Tolabing, M. C., Dadaczynski, K., Okan, O., Leung, A. Y. M., & Su, T. T. (2022). Digital health literacy, online information-seeking behaviour, and satisfaction of COVID-19 information among the university students of East and South-East Asia. PLoS ONE, 17(4), e0266276.

- Jarvis, C. B., MacKenzie, S. B., & Podsakoff, P. M. (2003). A critical review of construct indicators and measurement model misspecification in marketing and consumer research. Journal of Consumer Research, 30(2), 199–218.

- Jia, Y., Xu, B., Li, Y., Zhuang, X., Zhang, J., & Zhang, Y. (2025). A novel digital competence assessment tool for parents: Development and validation study. Digital Health, 11, 20552076251353319.

- Lamb, J., Carvalho, L., Gallagher, M., & Knox, J. (2021). The postdigital learning spaces of higher education. Postdigital Science and Education, 4(1), 1–12.

- Lattie, E. G., Adkins, E. C., Winquist, N., Stiles-Shields, C., Wafford, Q. E., & Graham, A. K. (2019). Digital mental health interventions for depression, anxiety, and enhancement of psychological well-being among college students: Systematic review. Journal of Medical Internet Research, 21(7), e12869.

- Likert, R. (1932). A technique for the measurement of attitudes. Archives of Psychology, 22(140), 1–55.

- Lima-Costa, A. R., Tosti, A. E., Bonfá-Araujo, B., & Duradoni, M. (2024). Digital life balance and need for online social feedback: Cross–Cultural Psychometric analysis in Brazil. Human Behavior and Emerging Technologies, 2024(1), 1179740.

- Lynn, M. R. (1986). Determination and quantification of content validity. Nursing Research, 35(6), 382–385.

- MacKenzie, S. B., Podsakoff, P. M., & Jarvis, C. B. (2005). The problem of measurement model misspecification in behavioral and organizational research and some recommended solutions. Journal of Applied Psychology, 90(4), 710–730.

- Mahender, P., & Sharma, M. (2025). Revitalizing learning: Examining how hybrid environments influence student engagement and academic success. Journal of Neonatal Surgery, 14(32S), 4208–4213.

- Malas, O., Khan, M., Zubair, A., Guazzini, A., & Duradoni, M. (2025). Psychometric validation of the digital Life balance scale in Urdu and its relationship with life satisfaction, social media addiction, and internet addiction. Human Behavior and Emerging Technologies, 2025(1), 7873343.

- Marsh, H. W., Guo, J., Dicke, T., Parker, P. D., & Craven, R. G. (2020). Confirmatory Factor Analysis (CFA), Exploratory Structural Equation Modeling (ESEM), and SET-ESEM: Optimal balance between goodness of fit and parsimony. Multivariate Behavioral Research, 55(1), 102–119.

- Montagni, I., Tzourio, C., Cousin, T., Sagara, J. A., Bada-Alonzi, J., & Horgan, A. (2020). Mental health-related digital use by university students: A systematic review. Telemedicine Journal and e-Health, 26(2), 131–146.

- Muñoz-Repiso, A. G., Martín, S. C., & Gómez-Pablos, V. B. (2020). Validation of an indicator Model (INCODIES) for assessing student digital competence in basic education. Journal of New Approaches in Educational Research, 9(1), 110–125.

- Neagu, S. N., & Vieriu, A. M. (2025). Digital and psychological well-being among technical university students: Exploring the impact of digital engagement in higher education. Education Sciences, 15(9), 1192.

- Nesi, J., & Prinstein, M. J. (2018). In search of likes: Longitudinal associations between adolescents’ digital status seeking and health-risk behaviors. Journal of Clinical Child & Adolescent Psychology, 48(5), 740–748.

- Nguyen, L. A. T., & Habók, A. (2023). Tools for assessing teacher digital literacy: A review. Journal of Computers in Education, 11(1), 305–346.

- Pang, H., & Zhang, K. (2024). Determining multidimensional influences of network heterogeneity on university students’ psychological and academic well-being: The mediating role of social network exhaustion. Heliyon, 10(11), e32328.

- Pelaez-Sanchez, I. C., Glasserman-Morales, L. D., & Rocha-Feregrino, G. (2024). Exploring digital competencies in higher education: Design and validation of instruments for the era of Industry 5.0. Frontiers in Education, 9, 1415800.

- Polit, D. F., & Beck, C. T. (2006). The content validity index: Are you sure you know what’s being reported? Research in Nursing & Health, 29(5), 489–497.

- Polit, D. F., Beck, C. T., & Owen, S. V. (2007). Is the CVI an acceptable indicator of content validity? Research in Nursing & Health, 30(4), 459–467.

- Pumptow, M., & Brahm, T. (2020). Students’ digital media self-efficacy and its importance for higher education institutions: Development and validation of a survey instrument. Technology Knowledge and Learning, 26(3), 555–575.

- Raes, A. (2021). Exploring student and teacher experiences in hybrid learning environments: Does presence matter? Postdigital Science and Education, 4(1), 138–159.

- Razali, N. M., & Wah, Y. B. (2011). Power comparisons of Shapiro-wilk, Kolmogorov-Smirnov, Lilliefors and Anderson-darling tests. Journal of Statistical Modeling and Analytics, 2(1), 21–33.

- Revilla, M. A., Saris, W. E., & Krosnick, J. A. (2014). Choosing the number of categories in agree-disagree scales. Sociological Methods & Research, 43(1), 73–97.

- Ryan, R. M., Deci, E. L., Vansteenkiste, M., & Soenens, B. (2021). Building a science of motivated persons: Self-determination theory’s empirical approach to human experience and the regulation of behavior. Motivation Science, 7(2), 97–110.

- Ryan, R. M., Soenens, B., & Vansteenkiste, M. (2018). Reflections on self-determination theory as an organizing framework for personality psychology: Interfaces, integrations, issues, and unfinished business. Journal of Personality, 87(1), 115–145.

- Sadeghi, S., Shalani, B., Firouzabadi, S. M., Babaei, Z., Namazi, S. A., & Pouretemad, H. R. (2025). Psychometric validation of the Farsi Bergen social media addiction scale (BSMAS) among Iranian adults. BMC Public Health, 25(1), 1840.

- Shahzad, M. F., Xu, S., Lim, W. M., Yang, X., & Khan, Q. R. (2024). Artificial intelligence and social media on academic performance and mental well-being: Student perceptions of positive impact in the age of smart learning. Heliyon, 10(8), e29523.

- Soriano-Alcantara, J. M., Guillén-Gámez, F. D., & Ruiz-Palmero, J. (2024). Exploring digital competencies: Validation and reliability of an instrument for the educational community and for all educational stages. Technology Knowledge and Learning, 30(1), 307–326.

- Sun, H., Yuan, C., Qian, Q., He, S., & Luo, Q. (2022). Digital resilience among individuals in school education settings: A concept analysis based on a scoping review. Frontiers in Psychiatry, 13, 858515.

- Suri, N. A., Festiyed, Azhar, M., Yerimadesi, Ahda, Y., & Alberida, H. (2025). Measuring what matters: A systematic review and VOSviewer-based bibliometric approach to digital literacy assessment instruments, competency dimensions and challenges in education. Research in Learning Technology, 33, 1–17.

- Tondeur, J., Howard, S., Carvalho, A. A., Kral, M., Petko, D., Ganesh, L. T., Røkenes, F. M., Starkey, L., Bower, M., Redmond, P., & Andresen, B. B. (2024). The DTALE model: Designing digital and physical spaces for integrated learning environments. Technology Knowledge and Learning, 29(4), 1767–1789.

- Tzafilkou, K., Perifanou, M., & Economides, A. A. (2022). Development and validation of students’ digital competence scale (SDiCoS). International Journal of Educational Technology in Higher Education, 19(1), 30.

- Urhahne, D., & Wijnia, L. (2023). Theories of motivation in education: An integrative framework. Educational Psychology Review, 35(2), 45.

- Viberg, O., Mutimukwe, C., Hrastinski, S., Cerratto-Pargman, T., & Lilliesköld, J. (2024). Exploring teachers’ (future) digital assessment practices in higher education: Instrument and model development. British Journal of Educational Technology, 55(6), 2597–2616.

- Vieira, C., Kuss, D. J., & Griffiths, M. D. (2023). Early maladaptive schemas and behavioural addictions: A systematic literature review. Clinical Psychology Review, 105, 102340.

- Wakita, T., Ueshima, N., & Noguchi, H. (2012). Psychological distance between categories in the Likert scale: Comparing different numbers of options. Educational and Psychological Measurement, 72(4), 533–546.

- Wang, X., Zhang, R., Wang, Z., & Li, T. (2021). How does digital competence preserve university students’ psychological well-being during the pandemic? An investigation from self-determined theory. Frontiers in Psychology, 12, 652594.

- Zhang, Q., & Shakibaei, G. (2025). Using social reinforcement in online language learning to foster motivation through self-determination theory. Scientific Reports, 15(1), 34944.

- Zhang, S., Shen, Y., Xin, T., Sun, H., Wang, Y., Zhang, X., & Ren, S. (2021). The development and validation of a social media fatigue scale: From a cognitive-behavioral-emotional perspective. PLoS ONE, 16(1), e0245464.

- Zhang, X., Oh, Y. J., Zhang, Y., & Zhu, J. (2025). Seeking validation in the digital age: The impact of validation seeking on self-image and internalized stigma among self- vs. clinically diagnosed individuals on r/ADHD. PLoS ONE, 20(10), e0331856.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Published by MDPI on behalf of the University Association of Education and Psychology. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.