Abstract

Objective: The aim of this scoping review was to evaluate whether artificial intelligence (AI) integrated into breast cancer screening workflows could help resolve some remaining diagnostic issues. Methods: PubMed, Web of Science, and Scopus were consulted, and the literature search was updated to 28 May 2024. Articles were selected following the PRISMA method and classified by publication type (meta-analysis, trial, prospective, and retrospective studies); retrospective studies were further classified by participant recruitment (organized screening, spontaneous screening, or a combination of both). Results: Meta-analyses showed that AI reduced radiologists’ reading time of radiological images by 17% to 91% and that AI software improved diagnostic accuracy. A systematic review suggested that AI could reduce false negatives and false positives and detect subtle abnormalities missed by human observers. In organized screening, DR with AI showed a higher recall rate, specificity, and PPV. Data from opportunistic screening indicated that AI could reduce interval cancers, with a corresponding reduction in serious outcomes. Nevertheless, this review suggests that breast density and interval cancers still require further study. Conclusions: AI appears to be a promising health technology with potentially major consequences for healthcare systems. Where screening is opportunistic and involves only one human reader, AI can increase diagnostic performance enough to equal that of double human reading.

1. Introduction

Breast cancer (BC) is a global health problem and one of the principal causes of morbidity and mortality in women. In women, BC is the second leading cause of death, with 2 million new cases in 2020, and 80% of patients with BC are aged over 50 [1].

The risk of developing breast cancer rises from 1.5% at age 40 to 3% at age 50 and more than 4% at age 70 [2]. By 2030, the number of new cases diagnosed worldwide is projected to reach 2.7 million annually, with 0.87 million deaths [3]. The global economic cost of cancers from 2020 to 2050 is estimated at $25.2 trillion in international dollars (at constant 2017 prices), equivalent to an annual tax of 0.55% on global gross domestic product. The five cancers with the highest economic costs are tracheal, bronchus, and lung cancer (15.4%); colon and rectum cancer (10.9%); breast cancer (7.7%); liver cancer (6.5%); and leukemia (6.3%) [4,5].
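As a quick arithmetic check, the percentage shares above can be converted into absolute amounts. The sketch below uses only the $25.2 trillion total and the shares reported in [4,5]; the function name `cost_by_site` is an illustrative choice, not taken from the cited sources.

```python
# Projected global economic cost of cancers, 2020-2050, in trillions of
# international dollars at constant 2017 prices, as reported in [4,5].
TOTAL_COST_TRILLION = 25.2

# Percentage of the total attributed to each cancer site [4,5].
SHARES_PERCENT = {
    "tracheal, bronchus, and lung": 15.4,
    "colon and rectum": 10.9,
    "breast": 7.7,
    "liver": 6.5,
    "leukemia": 6.3,
}


def cost_by_site(total: float, shares: dict[str, float]) -> dict[str, float]:
    """Convert percentage shares of a total cost into absolute amounts."""
    return {site: total * pct / 100 for site, pct in shares.items()}


if __name__ == "__main__":
    for site, cost in cost_by_site(TOTAL_COST_TRILLION, SHARES_PERCENT).items():
        print(f"{site}: ${cost:.2f} trillion")
```

With these inputs, breast cancer corresponds to about $1.94 trillion (7.7% of $25.2 trillion) over the projection period.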

The effectiveness of screening in reducing BC mortality is well established. Screening programs also enable early lesion detection, so that lesions can be managed before they progress. Many countries have made efforts to institutionalize population-based screening programs; however, some countries still lack population-based (PB) screening programs [6,7].

Despite its advantages, current screening mammography is associated with a high rate of false positives and false negatives, so its diagnostic accuracy needs to be improved. Artificial intelligence (AI) is becoming an important component of medical technologies [8,9,10], and a prominent recent field of application is the combination of AI with radiological evaluation in mammographic screening.

Current research aims to evaluate whether AI could help reduce missed cancers and false positives and detect cancers at earlier stages [11].

The aim of this scoping review was to evaluate whether artificial intelligence integrated into breast cancer screening workflows could help resolve some remaining diagnostic issues.

2. Methods

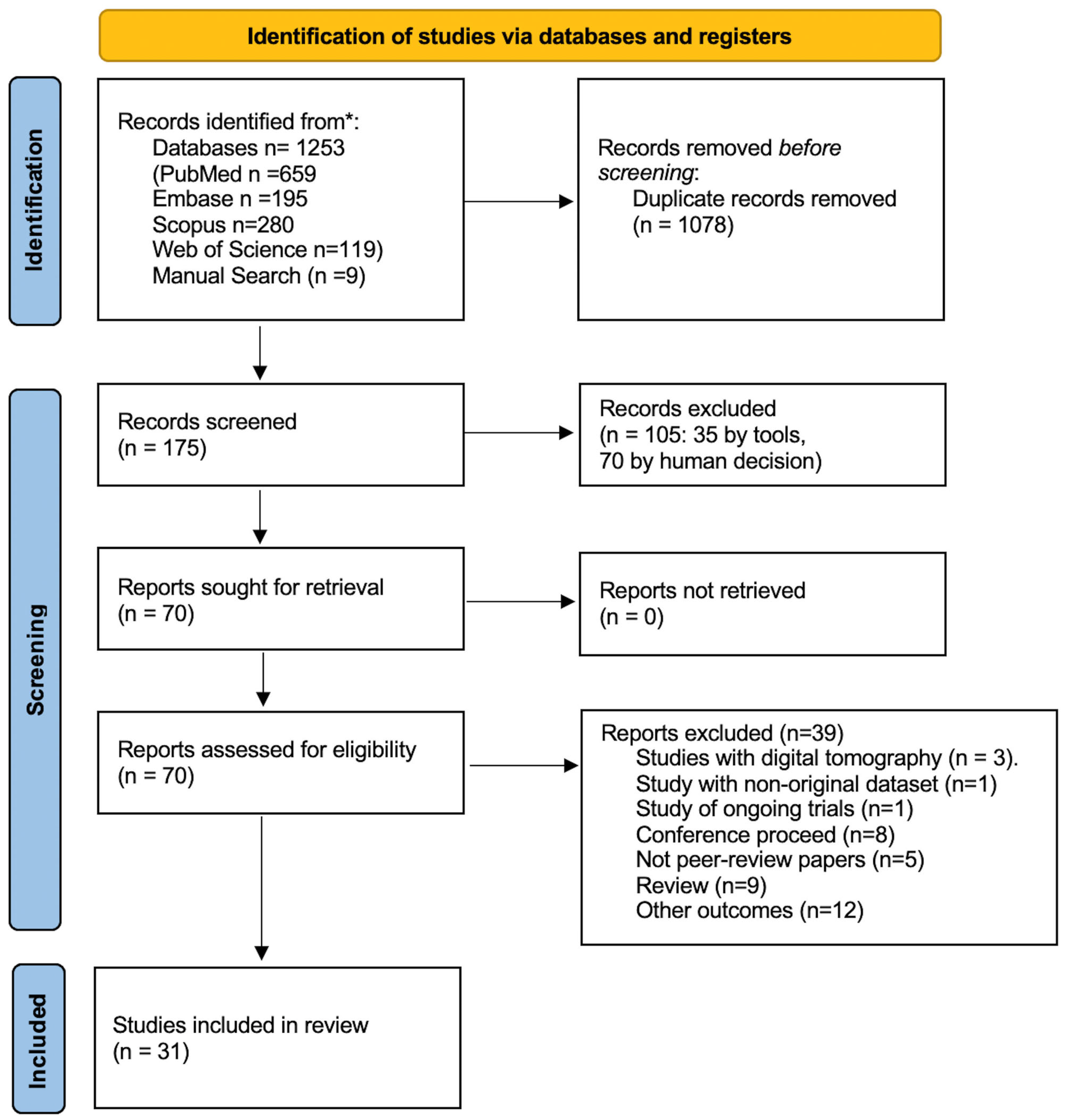

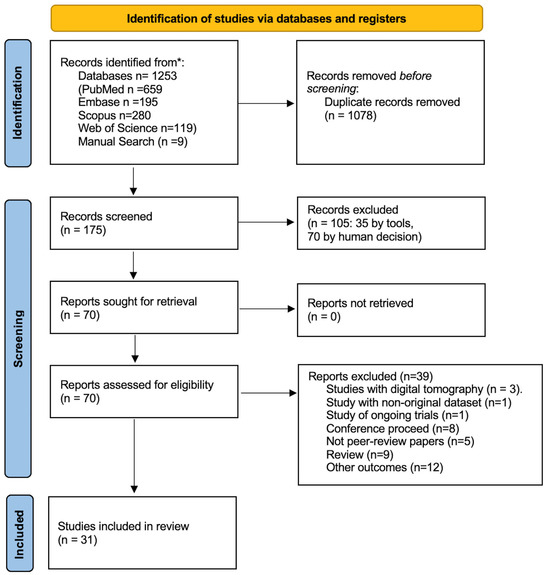

The following databases were consulted: Embase, PubMed, Web of Science, and Scopus. The search keys used for each database are reported in Supplementary Table S1. The literature search was updated to 28 May 2024. Articles published in English in the last 10 years were included. Conference proceedings, preprints, and, more generally, publications not subjected to peer review were excluded. Articles were selected according to the PRISMA method (Figure 1) [12]. The quality of the primary studies was assessed using the following tools: AMSTAR 2 by Shea et al. [13] for meta-analyses and systematic reviews (Supplementary Table S2); the Cochrane Collaboration’s risk-of-bias tool for randomized studies (Supplementary Table S3) [14]; and the Newcastle–Ottawa scale for observational studies (Supplementary Table S4) [15]. Finally, the PRISMA-ScR checklist of Tricco et al. was applied as a final check of this scoping review (Supplementary Table S5) [16].

Figure 1.

PRISMA flowchart.

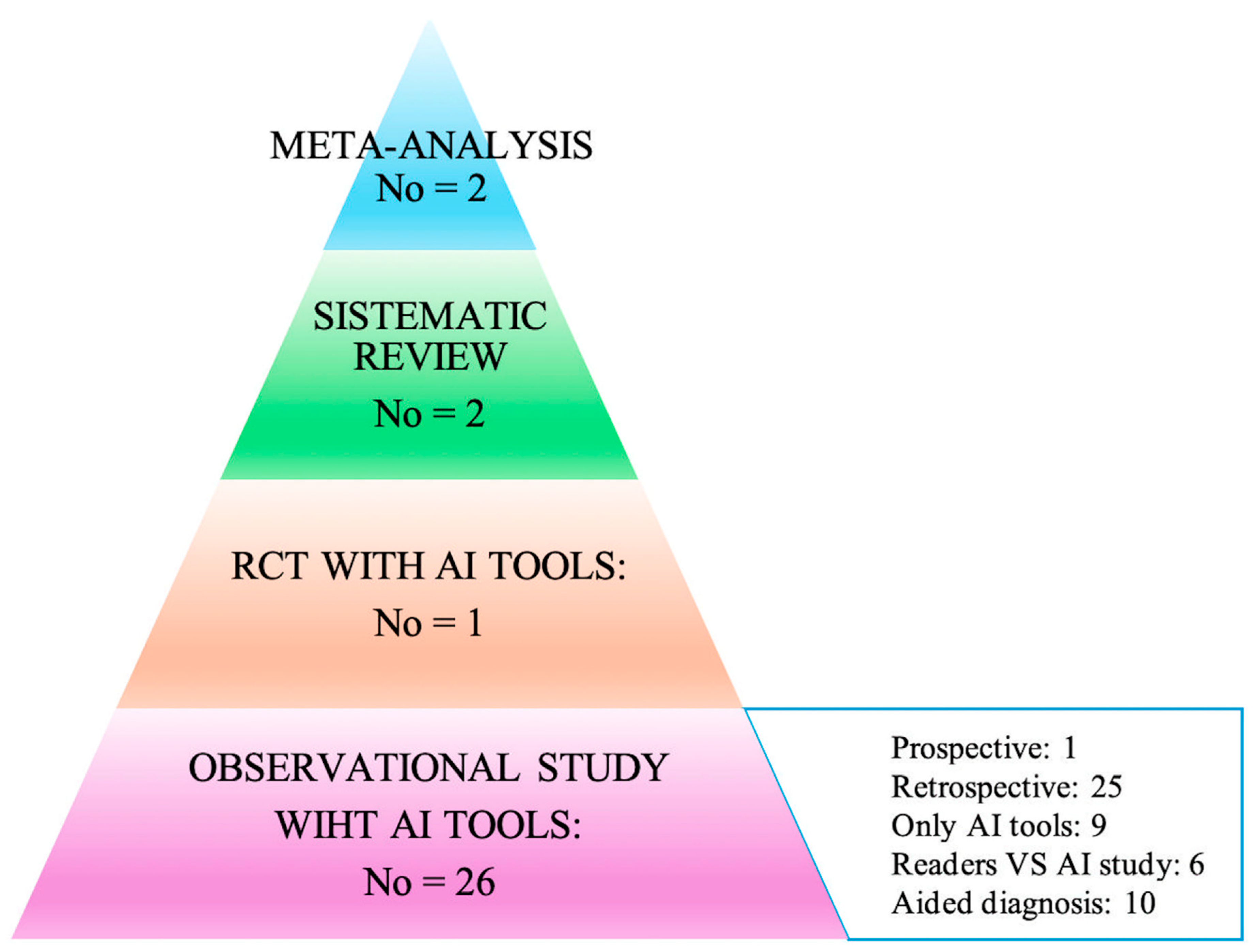

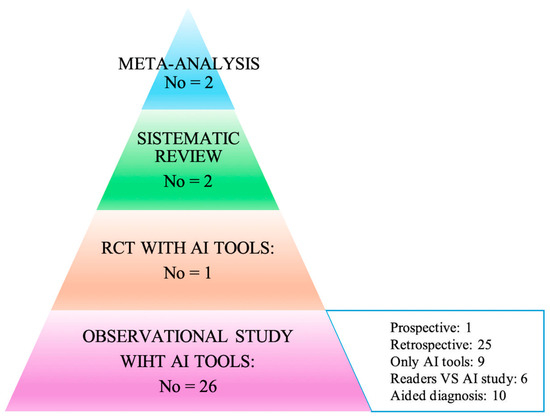

The articles were classified in two ways: first by publication type (meta-analysis, trial, prospective, and retrospective studies), and second by how AI was used in each study. In particular, attention was paid to how the primary data were acquired (study design) and, consequently, to how AI was used to analyze those data to reach a diagnosis: (i) AI only on datasets; (ii) AI toward readers; (iii) AI to support readers. This classification is summarized in the hierarchical evidence pyramid shown in Figure 2 [17,18].

Figure 2.

Hierarchy results of the literature research using the evidence-based pyramid with artificial intelligence (adapted from Bellini et al. and Murad et al.) [17,18].

Retrospective studies were further classified by participant recruitment (organized screening, spontaneous screening, or a combination of both) (Table 1 and Table 2).

Table 1.

Meta-analyses and reviews.

Table 2.

Studies reporting data on AI performance according to patient recruitment.

3. Results

The literature search (Figures 1 and 2) identified two meta-analyses, one systematic review, one trial, and eighteen cohort studies, one prospective and seventeen retrospective, as shown in Figure 2.

3.1. Meta-Analyses

The literature review identified two meta-analyses of interest.

The first, by Hickman et al. [19], conducted on 14 studies, showed that AI software improved diagnostic accuracy. AI reduced radiologists’ reading time of radiological images by 17% to 91%. Furthermore, AI detected between 0% and 7% of the cancers missed by the readers. These results are reported in Table 1.

The second, by Yoon et al. [20], was consistent with the results of the previous meta-analysis and also included four digital breast tomosynthesis (DBT) studies. For both mammography and DBT, AI appeared to perform better than human readers (Table 1).

3.2. Systematic Reviews

Schopf’s review included sixteen studies [21]. Its purpose was to analyze the use of AI alone or in combination with clinical risk tools for breast cancer risk prediction. Although no pooled data were reported, the authors summarized the evidence as follows: a median AUC of 0.72 (0.62–0.90) with AI compared with 0.61 (0.54–0.69) for the combination of AI and clinical risk tools.
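The AUC values quoted throughout this review summarize how well a score separates women who develop cancer from those who do not. As an illustration only, the sketch below computes an empirical AUC as the probability that a randomly chosen case scores higher than a randomly chosen control, with ties counted as one half (equivalent to the normalized Mann–Whitney U statistic). The scores are hypothetical and are not taken from any cited study.

```python
from itertools import product


def empirical_auc(case_scores: list[float], control_scores: list[float]) -> float:
    """Empirical AUC: P(case score > control score), counting ties as 0.5.

    Equivalent to the Mann-Whitney U statistic divided by (n_cases * n_controls).
    """
    if not case_scores or not control_scores:
        raise ValueError("need at least one case and one control")
    wins = 0.0
    for case, control in product(case_scores, control_scores):
        if case > control:
            wins += 1.0
        elif case == control:
            wins += 0.5
    return wins / (len(case_scores) * len(control_scores))


if __name__ == "__main__":
    # Hypothetical risk scores (not from any study in this review).
    cancers = [0.9, 0.8, 0.6, 0.4]
    no_cancers = [0.7, 0.5, 0.3, 0.2, 0.1]
    print(round(empirical_auc(cancers, no_cancers), 3))
```

An AUC of 0.5 corresponds to chance and 1.0 to perfect separation. This pairwise version is quadratic in the number of exams, so large datasets would normally use a rank-based implementation such as scikit-learn’s `roc_auc_score`.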

Díaz’s overview [22], which was not based on a systematic literature search, summarized the state of the art and grouped primary studies by AI application strategy: (i) AI as concurrent decision support; (ii) AI as an independent, standalone second reader in screening; (iii) AI as a triage tool, in which low-risk exams were single-read and high-risk exams were double-read; and (iv) AI as a triage tool, in which low-risk exams were automatically labeled as normal and high-risk exams were double-read.
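Strategies (iii) and (iv) differ only in what happens to exams the AI considers low risk. A minimal sketch of that routing logic follows, together with a count of the human reads each strategy requires. The 0-to-1 risk score, the 0.5 threshold, and the names `Strategy`, `route_exam`, and `radiologist_reads` are hypothetical choices for illustration; they are not taken from [22] or from any commercial product.

```python
from enum import Enum


class Strategy(Enum):
    # (iii) low-risk exams single read, high-risk exams double read
    TRIAGE_SINGLE_READ = "iii"
    # (iv) low-risk exams labeled normal automatically, high-risk double read
    TRIAGE_AUTO_NORMAL = "iv"


def route_exam(ai_score: float, strategy: Strategy, threshold: float = 0.5) -> str:
    """Return the reading pathway for one exam under an AI triage strategy."""
    if ai_score >= threshold:
        return "double read"
    if strategy is Strategy.TRIAGE_SINGLE_READ:
        return "single read"
    return "auto-normal"


def radiologist_reads(scores: list[float], strategy: Strategy,
                      threshold: float = 0.5) -> int:
    """Count the human reads needed for a batch of exams under one strategy."""
    reads_per_path = {"double read": 2, "single read": 1, "auto-normal": 0}
    return sum(reads_per_path[route_exam(s, strategy, threshold)] for s in scores)


if __name__ == "__main__":
    scores = [0.05, 0.10, 0.20, 0.30, 0.40, 0.60, 0.70, 0.95]  # hypothetical
    baseline = 2 * len(scores)  # standard double reading of every exam
    for strategy in Strategy:
        reads = radiologist_reads(scores, strategy)
        print(strategy.name, reads, f"{1 - reads / baseline:.1%} fewer reads")
```

The workload saving depends entirely on where the threshold is set, which is why workload figures must be read alongside cancer detection and recall rates.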

3.3. Primary Studies

Almost all primary studies were retrospective; the only exceptions were one randomized trial [23] and one prospective study [24]. The retrospective studies reported heterogeneous outcomes, which prevented a quantitative synthesis of the overall results. Furthermore, as highlighted in Figure 2, the studies differed mainly in how AI was applied, and four approaches could be distinguished: (i) effectiveness studies in which AI was applied to retrospective data to analyze its diagnostic capacity without a human reader; (ii) effectiveness studies in which the diagnostic capacity of AI was compared with other validated clinical tools for estimating the risk of malignant neoplasm; (iii) comparison studies in which the diagnostic efficacy of AI was compared with that of human readers, such as double reading vs. single reading + AI; and (iv) effectiveness studies comparing different AI software and human reading approaches (Table 2).

The AI programs used included Transpara, MIRAI, LUNIT, ResNet, and others.

Finally, regarding data sources, 14 of the 26 studies used histopathology data from cancer registries.

3.4. RCT and Prospective Studies

In the trial conducted in Sweden by Lang et al. [23], 80,033 women aged between 40 and 80 years were enrolled through organized screening and randomly assigned either to standard double reading or to AI-supported single reading. Cancer detection rates were 6.1 (95% CI 5.4–6.9) per 1000 screened participants in the intervention group, above the lowest acceptable limit for safety, and 5.1 (95% CI 4.4–5.8) per 1000 in the control group, with a ratio of 1.2 (95% CI 1.0–1.5; p = 0.052). Recall rates were 2.2% (95% CI 2.0–2.3) in the intervention group and 2.0% (1.9–2.2) in the control group. The false-positive rate was 1.5% (95% CI 1.4–1.7) in both groups. The PPV of recall was 28.3% (95% CI 25.3–31.5) in the intervention group and 24.8% (21.9–28.0) in the control group. In the intervention group, 184 (75%) of the 244 cancers detected were invasive and 60 (25%) were in situ; in the control group, 165 (81%) of 203 cancers were invasive and 38 (19%) were in situ. The screen-reading workload was reduced by 44.3% using AI.
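Several of the figures above follow directly from the reported counts and rates. The short sketch below recomputes the detection-rate ratio, the invasive proportions, and an approximate PPV of recall (PPV = cancers / recalls = detection rate / recall rate) from the values given in this paragraph. It uses only those published figures and does not attempt to reproduce the trial’s confidence intervals; the function names are illustrative.

```python
def rate_ratio(rate_intervention: float, rate_control: float) -> float:
    """Ratio of two detection rates (e.g. cancers per 1000 screened)."""
    return rate_intervention / rate_control


def proportion(part: int, total: int) -> float:
    """Share of `part` in `total`, as a percentage."""
    return 100 * part / total


def ppv_from_rates(cdr_per_1000: float, recall_rate_percent: float) -> float:
    """PPV of recall (%) = cancers / recalls = detection rate / recall rate."""
    return 100 * (cdr_per_1000 / 1000) / (recall_rate_percent / 100)


if __name__ == "__main__":
    # Published figures from the MASAI trial as summarized above [23].
    print(f"detection-rate ratio: {rate_ratio(6.1, 5.1):.2f}")
    print(f"invasive, AI-supported arm: {proportion(184, 244):.1f}%")
    print(f"invasive, control arm: {proportion(165, 203):.1f}%")
    print(f"approx. PPV, AI-supported arm: {ppv_from_rates(6.1, 2.2):.1f}%")
    print(f"approx. PPV, control arm: {ppv_from_rates(5.1, 2.0):.1f}%")
```

The ratio computed from the rounded rates (about 1.20) agrees with the reported 1.2, and the approximate PPVs (about 27.7% and 25.5%) are close to the reported 28.3% and 24.8%; the residual gaps reflect rounding of the published rates.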

Dembrower’s prospective study involved women participating in organized population-based screening [24]. Each mammogram was read with traditional double reading by two radiologists and with alternative strategies that included AI. Double reading by one radiologist plus AI was non-inferior for cancer detection compared with double reading by two radiologists (261 [0.5%] vs. 250 [0.4%] detected cases; relative proportion 1.04, 95% CI 1.00–1.09). Single reading by AI alone (246 [0.4%] vs. 250 [0.4%]; relative proportion 0.98, 95% CI 0.93–1.04) and triple reading by two radiologists plus AI (269 [0.5%] vs. 250 [0.4%]; relative proportion 1.08, 95% CI 1.04–1.11) were also non-inferior to double reading by two radiologists [24].
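Each relative proportion is the number of cancers detected by a reading strategy divided by the number detected by standard double reading (250). The sketch below recomputes the point estimates from the counts in this paragraph and applies the usual non-inferiority rule for a ratio (lower confidence limit above a prespecified margin). The margin of 0.85 is a hypothetical value chosen for illustration only and is not taken from [24].

```python
# Cancers detected with each strategy in the paired-reader comparison [24].
DOUBLE_READING = 250  # reference strategy: two radiologists
DETECTED = {
    "one radiologist + AI": 261,
    "AI alone": 246,
    "two radiologists + AI": 269,
}
# Lower 95% confidence limits of the relative proportions reported above [24].
LOWER_CI = {
    "one radiologist + AI": 1.00,
    "AI alone": 0.93,
    "two radiologists + AI": 1.04,
}
# HYPOTHETICAL margin for illustration only; it is not taken from [24].
MARGIN = 0.85


def relative_proportion(detected: int, reference: int) -> float:
    """Cancers detected by a strategy relative to the reference strategy."""
    return detected / reference


def is_non_inferior(lower_ci: float, margin: float) -> bool:
    """Non-inferiority of a ratio holds when its lower CI limit exceeds the margin."""
    return lower_ci > margin


if __name__ == "__main__":
    for name, detected in DETECTED.items():
        ratio = relative_proportion(detected, DOUBLE_READING)
        verdict = is_non_inferior(LOWER_CI[name], MARGIN)
        print(f"{name}: ratio {ratio:.2f}, non-inferior at margin {MARGIN}: {verdict}")
```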

1. Retrospective studies from organized screening programs

Ten studies reported data from European countries offering organized screening programs: Hungary [25], UK [25,26], Turkey [27], Norway [28], Denmark [29], Germany [30], Spain [31], Sweden [32], The Netherlands [33], and Switzerland [34]. Of these, three evaluated the effectiveness of AI tools [27,28,33], while the remaining seven evaluated the diagnostic effectiveness of AI compared with the readers [25,26,29,30,31,32,34].

In the studies by Lauritzen [29] and Leibig [30], the sensitivity of AI was lower than that of the readers (69.7% vs. 70.8% and 84.6% vs. 87.2%, respectively). Conversely, in the studies by Romero-Martín [31] and Salim [32], the sensitivity of AI was higher than that of the readers (70.8% vs. 63.3% and 86.7% vs. 85%, respectively).

With regard to specificity, Lauritzen [29], Leibig [30], and Salim [32] found it to be higher for the readers than for AI: 98.6 vs. 98.8, 93.4 vs. 91.3, and 98.5 vs. 92.5, respectively.
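Sensitivity and specificity, compared above between AI and readers, are both computed from the 2×2 table of screening result against cancer status. A minimal sketch with hypothetical counts follows; in the cited studies, cancer status is established from follow-up data such as the histopathology and cancer registry data mentioned earlier. The class name `ConfusionCounts` is an illustrative choice.

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    true_pos: int   # cancers flagged (recalled)
    false_neg: int  # cancers not flagged (missed)
    true_neg: int   # non-cancers not flagged
    false_pos: int  # non-cancers flagged

    def sensitivity(self) -> float:
        """Share of cancers that were flagged."""
        return self.true_pos / (self.true_pos + self.false_neg)

    def specificity(self) -> float:
        """Share of non-cancers that were not flagged."""
        return self.true_neg / (self.true_neg + self.false_pos)

    def ppv(self) -> float:
        """Share of flagged exams that were cancers."""
        return self.true_pos / (self.true_pos + self.false_pos)


if __name__ == "__main__":
    # Hypothetical screening round of 10,000 women (not from any cited study).
    counts = ConfusionCounts(true_pos=56, false_neg=14, true_neg=9731, false_pos=199)
    print(f"sensitivity {counts.sensitivity():.1%}")
    print(f"specificity {counts.specificity():.1%}")
    print(f"PPV {counts.ppv():.1%}")
```

Because specificity is computed over thousands of women without cancer, even a difference of a few tenths of a percentage point corresponds to a noticeable number of additional recalls in a large screening population.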

Notably, none of the studies considered directly compared the AUCs of the AI methods with that of traditional double reading. Hickman’s retrospective study tested three different deep learning (DL) models in two clinical contexts: triage, that is, the identification of suspicious images at the index screen (time 0), and the identification of interval cancers. Compared with double reading, the DL models showed better sensitivity and non-inferior specificity for both triage and interval cancers [26].

Some features of individual studies are also worth highlighting. Sharma’s study compared AI performance across mammography systems from different vendors [25]. Beker’s study (n = 3228) differed from the others in that it compared AI with only three readers. The AUC of AI was 0.82 (95% CI, 0.75–0.89), with a sensitivity of 73.7% and a specificity of 72%. The readers’ AUCs appeared lower than that of AI, but the difference was not statistically significant. All readers had higher sensitivity and lower specificity than AI [34].

Among the studies evaluating AI as a tool, Seker’s study reported an AUC of 89.6 [27]. Larsen’s study instead focused on the threshold for classifying a lesion as suspicious, using a scale from 1 to 5, where 1 corresponded to an image without suspicion of malignancy and 5 to an image suspicious for malignancy. At a threshold of 3, 80% of cancers and 30.7% of interval cancers were identified [28].

Finally, Wanders’ study evaluated a neural network combining AI with a breast density-based diagnostic system for the detection of interval cancers. Combining the two methods improved diagnosis, although the results were sensitive to the threshold values applied [33].

2. Studies from non-organized screening programs or samples extracted from organized screening

All studies that used data from non-organized screening or samples selected by the researchers were classified in this category.

This category included twelve studies: three compared the diagnostic performance of AI with other clinical risk models for breast cancer [35,36,37], and nine used AI for the diagnostic assessment of radiological images [38,39,40,41,42,43,44,45,46].

Arasu et al. [35] compared AI with the Breast Cancer Surveillance Consortium (BCSC) clinical risk model and found that AI predicted cancer risk better than the clinical risk tool.

Similarly, Lehman’s study compared AI with two risk models, the NCI Breast Cancer Risk Assessment Tool (BCRAT) and the Tyrer–Cuzick model, and essentially showed the diagnostic superiority of AI [36].

Yala et al. reported similar results in a larger sample than the previous studies [37].

Arefan’s study compared the AUC obtained from different mammographic projections with that obtained from breast density and found that the mammographic projections performed better [38].

Lang’s study focused on interval cancers and showed that a correctly configured AI program could help identify these tumors and reduce late diagnoses [39].

The studies by Gastounioti and Ha showed that the convolutional neural network (CNN) model provided better diagnostic performance than assessment based on breast density alone [40,41].

Hinton’s study found the deep learning model effective in correctly identifying tumor images but limited in identifying interval cancers [42].

Zhu’s study showed that a deep learning model combining the radiological image with a clinical risk model was more effective for the primary diagnosis of cancer but lost effectiveness in identifying interval cancers [43]. Sasaki’s study showed a higher AUC for the readers than for AI alone (0.816 vs. 0.706; p < 0.001). The readers’ sensitivity and specificity were 89% and 86%, respectively, while AI achieved a sensitivity of 93% and a specificity of 45% at a cutoff of 4, and a sensitivity of 85% and a specificity of 67% at a cutoff of 7 [44].
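The Sasaki results illustrate the usual trade-off: lowering the AI score cutoff raises sensitivity at the cost of specificity. The sketch below shows this with hypothetical scores on an assumed 1-to-10 scale; neither the scale nor the data are taken from [44], and the function name is illustrative.

```python
def sens_spec_at_cutoff(scores: list[int], has_cancer: list[bool],
                        cutoff: int) -> tuple[float, float]:
    """Sensitivity and specificity when scores >= cutoff are called positive."""
    pairs = list(zip(scores, has_cancer))
    tp = sum(1 for s, c in pairs if c and s >= cutoff)
    fn = sum(1 for s, c in pairs if c and s < cutoff)
    tn = sum(1 for s, c in pairs if not c and s < cutoff)
    fp = sum(1 for s, c in pairs if not c and s >= cutoff)
    return tp / (tp + fn), tn / (tn + fp)


if __name__ == "__main__":
    # Hypothetical AI scores (1-10) and cancer status for 14 exams.
    scores = [9, 8, 7, 5, 3, 8, 6, 5, 4, 3, 2, 2, 1, 1]
    cancer = [True] * 5 + [False] * 9
    for cutoff in (4, 7):
        sens, spec = sens_spec_at_cutoff(scores, cancer, cutoff)
        print(f"cutoff {cutoff}: sensitivity {sens:.0%}, specificity {spec:.0%}")
```

Sweeping the cutoff over all possible values traces the ROC curve, whose area is the AUC used to compare AI and readers.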

Dang’s study, involving 314 patients and 12 radiologists, showed that the AUC improved with the help of AI (0.74 vs. 0.77, p = 0.004) [45].

Lee’s study, conducted in South Korea, involved 200 patients, breast radiologists (BSR), and general radiologists (GR). The AUC of AI was 0.915 (0.876–0.954), compared with 0.813 (0.756–0.870) for radiologists experienced in mammography and 0.684 (0.616–0.752) for radiologists without such experience. With AI assistance, sensitivity increased in both groups of radiologists (74.6% vs. 88.6% in BSR, p < 0.001; 52.1% vs. 79.4% in GR, p < 0.001), whereas the change in specificity was not statistically significant (66.6% vs. 66.4% in BSR, p = 0.238; 70.8% in GR, p = 0.689) [46].

3. Screening data from multicenter studies: US, EU, UK, and Sweden

Three multicenter studies used data from both organized and non-organized screening programs.

Shaffer’s study involved patients from organized screening in Sweden and from non-organized programs in the U.S. and found better performance of AI algorithms in Sweden than in the United States (0.93 vs. 0.858) [47].

McKinney’s study involved the UK (organized screening) and the U.S. (non-organized screening). In the UK data, AI improved specificity by 1.2% and sensitivity by 2.7% compared with the first reader. Compared with the second reader, AI was non-inferior in sensitivity and specificity, as well as relative to the consensus judgment [48].

Kim’s study collected data from South Korea (organized screening) and the U.S. (non-organized screening); the pooled data showed better performance of AI than of the readers (AUC 0.95 vs. 0.81). No sensitivity or specificity data were available for this study [49].

4. Discussion

Artificial intelligence appears to be a promising health technology whose consequences could have a major impact on healthcare systems [50]. As reported by Higgins et al., AI may change the way doctors and patients approach disease [51]. The technology appears particularly promising for chronic diseases, whose clinical course requires constant monitoring. AI has been applied in the management of chronic lung disease [52,53], renal failure [54], diabetes [55], and ocular diseases [56]. Oncology is another potential field of application, particularly for image analysis [57,58].

The US Food and Drug Administration (FDA) first approved computer-aided diagnosis (CAD) for mammographic images in 1998.

Deep learning models that integrate already validated clinical risk scores with image analysis appear to be more effective than clinical risk tools based on anamnestic, genetic, and anthropometric data, or than image analysis alone, highlighting the need for a multidisciplinary approach to correct diagnosis. A fundamental point is the number of mammograms performed: a higher number of examinations is associated with higher quality and diagnostic accuracy [59]. This applies both to training AI to search for target images in retrospective data and, above all, to applying AI in routine screening practice.

Large volumes of mammograms are performed mainly within organized population-based screening programs. In most European countries, these programs use double reading, which requires (i) considerable expertise in the diagnostic method; (ii) dedicated training of the specialists involved; and (iii) a robust organizational infrastructure underlying population-based screening. The evidence from the literature therefore suggests that all countries should adopt double reading and a universal model of early cancer detection, which has been shown to be cost-effective [60].
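Double reading can be summarized as two independent reads whose disagreements are resolved, for example, by a third radiologist. The sketch below encodes one such rule under stated assumptions: concordant reads are final and discordant reads go to an arbiter. Real programs differ (some recall on any positive read or hold consensus meetings), and the names `Reader` and `double_read`, as well as the option of an AI second reader, are hypothetical illustrations rather than any program’s actual protocol.

```python
from typing import Callable

# A reader maps an exam identifier to a recall decision (True = recall).
Reader = Callable[[str], bool]


def double_read(exam: str, first: Reader, second: Reader,
                arbiter: Reader) -> tuple[bool, bool]:
    """Return (recall decision, whether arbitration was needed).

    Concordant reads are final; discordant reads are settled by the arbiter.
    """
    first_call, second_call = first(exam), second(exam)
    if first_call == second_call:
        return first_call, False
    return arbiter(exam), True


if __name__ == "__main__":
    # Hypothetical reads for three exams; the second reader could be human or AI.
    reads_1 = {"exam-1": False, "exam-2": True, "exam-3": True}
    reads_2 = {"exam-1": False, "exam-2": False, "exam-3": True}
    reads_arbiter = {"exam-1": False, "exam-2": True, "exam-3": True}
    for exam in reads_1:
        recall, arbitrated = double_read(
            exam,
            lambda e: reads_1[e],
            lambda e: reads_2[e],
            lambda e: reads_arbiter[e],
        )
        print(exam, "recall" if recall else "no recall",
              "(arbitrated)" if arbitrated else "")
```

Replacing the second reader with AI is the kind of substitution evaluated in studies such as [24] and [25]; the arbitration step is where the workload of a third radiologist arises.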

While AI may not increase diagnostic performance in the European context, in the American context, where screening is opportunistic and involves only one human reader, AI can increase diagnostic performance enough to equal that of double human reading. Dembrower’s prospective study [24] and Lang’s trial [23], both conducted in Sweden, indicated that AI would not substantially improve on the diagnostic performance already achieved by double human reading. As reported by van Nijnatten, several trials are ongoing, reflecting growing interest in the use of AI in breast cancer screening [61].

This review indicates that breast density and interval cancers still require further study. Breast density is an independent risk factor moderately associated with cancer risk, and its assessment therefore needs to be integrated with other diagnostic methods such as ultrasound and magnetic resonance imaging. When appropriately configured, AI can be highly effective in identifying breasts at risk, as shown by the studies of Gastounioti and Ha [40,41].

AI still performs poorly on interval cancers, as seen in the retrospective studies by Hinton [42] and Wanders [33].

Moreover, Combi et al. [62] suggest that an AI system should have the following characteristics: interpretability, understandability, usability, and usefulness. Interpretability is the degree to which the user can understand how the system makes decisions; understandability is the degree to which the user can understand the result indicated by the system and the mechanism by which the system produces it; usability concerns the ease of use of the interface; and usefulness concerns the extent to which the system serves its intended purpose.

In daily clinical practice, these characteristics could translate into (i) a system focused on the doctor’s needs in diagnostic decision-making, acting as a natural technological evolution in patient care; and (ii) a system integrated into the patient’s diagnostic and treatment pathway.

Artificial intelligence could prove to be a valuable ally in mammographic screening for breast cancer, identifying tumors with the same accuracy as standard double reading by two radiologists.

Further research should determine whether integrating radiologists’ interpretation with AI can help identify tumors that escape traditional screening because they appear between one examination and the next, and whether the technology is cost-effective.

If future studies confirm the benefit and safety of AI in mammography screening, it could become a support tool to help overcome the current shortage of radiologists, for example by eliminating the need for double reading or for a third radiologist in the case of disagreement. Specialists could then focus on more advanced diagnostics, shortening waiting times for patients.

AI would thus not replace doctors but would help them make faster and better-informed decisions by providing a reliable additional opinion.

5. Conclusions

It should be emphasized, however, that AI systems are often trained on large datasets that may contain biases reflecting historical inequalities or prejudices. Ensuring fairness means recognizing and mitigating bias in data, algorithms, and decision-making processes. AI developers must be mindful of how their systems may unintentionally perpetuate harmful stereotypes or disadvantage certain groups. AI’s ability to collect, analyze, and process vast amounts of personal data raises significant privacy concerns. Some applications and data mining can lead to invasive surveillance practices. The ethical use of AI involves balancing the benefits of data analysis with the protection of individual privacy rights. Clear guidelines are needed to ensure that personal data are used responsibly and with informed consent.

Supplementary Materials

The following supporting information can be downloaded at: https://www.mdpi.com/article/10.3390/healthcare13040378/s1, Table S1. Search strategy; Table S2. Risk of Bias according to AMSTAR 2 scale; Table S3. Risk of Bias for RCT according to Cochrane Collaboration; Table S4. Risk of bias of Cohort Studies according to Newcastle–Ottawa scale; Table S5. Preferred Reporting Items for Systematic reviews and Meta-Analyses extension for Scoping Reviews (PRISMA-ScR) Checklist.

Author Contributions

All authors reviewed the literature and conceived the study. E.A. and P.M.A. analyzed the data. E.A. and P.M.A. supervised the study. E.A., P.M.A., R.P. and M.C. wrote the first draft of the manuscript. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Conflicts of Interest

The authors declare no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

References

- Łukasiewicz, S.; Czeczelewski, M.; Forma, A.; Baj, J.; Sitarz, R.; Stanisławek, A. Breast Cancer-Epidemiology, Risk Factors, Classification, Prognostic Markers, and Current Treatment Strategies—An Updated Review. Cancers 2021, 13, 4287.

- Bite, S. Lifetime Probability Among Females of Dying of Cancer. JNCI J. Natl. Cancer Inst. 2004, 96, 1311–1321.

- Ferlay, J.; Colombet, M.; Soerjomataram, I.; Parkin, D.M.; Piñeros, M.; Znaor, A.; Bray, F. Cancer statistics for the year 2020: An overview. Int. J. Cancer 2021, 149, 33818764.

- Kreier, F. Cancer will cost the world $25 trillion over next 30 years. Nature 2023.

- Chen, S.; Cao, Z.; Prettner, K.; Kuhn, M.; Yang, J.; Jiao, L.; Wang, Z.; Li, W.; Geldsetzer, P.; Bärnighausen, T.; et al. Estimates and Projections of the Global Economic Cost of 29 Cancers in 204 Countries and Territories from 2020 to 2050. JAMA Oncol. 2023, 9, 465–472.

- Altobelli, E.; Rapacchietta, L.; Angeletti, P.M.; Barbante, L.; Profeta, F.V.; Fagnano, R. Breast Cancer Screening Programmes across the WHO European Region: Differences among Countries Based on National Income Level. Int. J. Environ. Res. Public Health 2017, 14, 452.

- Shieh, Y.; Eklund, M.; Sawaya, G.F.; Black, W.C.; Kramer, B.S.; Esserman, L.J. Population-based screening for cancer: Hope and hype. Nat. Rev. Clin. Oncol. 2016, 13, 550–565.

- Bitkina, O.V.; Park, J.; Kim, H.K. Application of artificial intelligence in medical technologies: A systematic review of main trends. Digit. Health 2023, 9, 20552076231189331.

- Pashkov, V.M.; Harkusha, A.O.; Harkusha, Y.O. Artificial intelligence in medical practice: Regulative issues and perspectives. Wiad. Lek. 2020, 73, 2722–2727.

- Amisha, M.P.; Pathania, M.; Rathaur, V.K. Overview of artificial intelligence in medicine. J. Family Med. Prim. Care 2019, 8, 2328–2331.

- Lee, C.I.; Elmore, J.G. Cancer Risk Prediction Paradigm Shift: Using Artificial Intelligence to Improve Performance and Health Equity. J. Natl. Cancer Inst. 2022, 114, 1317–1319.

- Moher, D.; Shamseer, L.; Clarke, M.; Ghersi, D.; Liberati, A.; Petticrew, M.; Shekelle, P.; Stewart, L.A.; PRISMA-P Group. Preferred reporting items for systematic review and meta-analysis protocols (PRISMA-P) 2015 statement. Syst. Rev. 2015, 4, 1.

- Shea, B.J.; Reeves, B.C.; Wells, G.; Thuku, M.; Hamel, C.; Moran, J.; Moher, D.; Tugwell, P.; Welch, V.; Kristjansson, E.; et al. AMSTAR 2: A critical appraisal tool for systematic reviews that include randomised or non-randomised studies of healthcare interventions, or both. BMJ 2017, 358, j4008.

- Higgins, J.P.; Altman, D.G.; Gøtzsche, P.C.; Jüni, P.; Moher, D.; Oxman, A.D.; Savovic, J.; Schulz, K.F.; Weeks, L.; Sterne, J.A.; et al. The Cochrane Collaboration’s tool for assessing risk of bias in randomized trials. BMJ 2011, 343, d5928.

- Stang, A. Critical evaluation of the Newcastle-Ottawa scale for the assessment of the quality of nonrandomized studies in meta-analyses. Eur. J. Epidemiol. 2010, 25, 603–605.

- Tricco, A.C.; Lillie, E.; Zarin, W.; O’Brien, K.K.; Colquhoun, H.; Levac, D.; Moher, D.; Peters, M.D.J.; Horsley, T.; Weeks, L.; et al. PRISMA Extension for Scoping Reviews (PRISMA-ScR): Checklist and Explanation. Ann. Intern. Med. 2018, 169, 467–473.

- Murad, M.H.; Asi, N.; Alsawas, M.; Alahdab, F. New evidence pyramid. Evid. Based Med. 2016, 21, 125–127.

- Bellini, V.; Coccolini, F.; Forfori, F.; Bignami, E. The artificial intelligence evidence-based medicine pyramid. World J. Crit. Care Med. 2023, 12, 89–91.

- Hickman, S.E.; Woitek, R.; Le, E.P.V.; Im, Y.R.; Mouritsen Luxhøj, C.; Aviles-Rivero, A.I.; Baxter, G.C.; MacKay, J.W.; Gilbert, F.J. Machine Learning for Workflow Applications in Screening Mammography: Systematic Review and Meta-Analysis. Radiology 2022, 302, 88–104.

- Yoon, J.H.; Strand, F.; Baltzer, P.A.T.; Conant, E.F.; Gilbert, F.J.; Lehman, C.D.; Morris, E.A.; Mullen, L.A.; Nishikawa, R.M.; Sharma, N.; et al. Standalone AI for Breast Cancer Detection at Screening Digital Mammography and Digital Breast Tomosynthesis: A Systematic Review and Meta-Analysis. Radiology 2023, 307, e222639.

- Schopf, C.M.; Ramwala, O.A.; Lowry, K.P.; Hofvind, S.; Marinovich, M.L.; Houssami, N.; Elmore, J.G.; Dontchos, B.N.; Lee, J.M.; Lee, C.I. Artificial Intelligence-Driven Mammography-Based Future Breast Cancer Risk Prediction: A Systematic Review. J. Am. Coll. Radiol. 2024, 21, 319–328.

- Díaz, O.; Rodríguez-Ruíz, A.; Sechopoulos, I. Artificial Intelligence for breast cancer detection: Technology, challenges, and prospects. Eur. J. Radiol. 2024, 175, 111457.

- Lång, K.; Josefsson, V.; Larsson, A.M.; Larsson, S.; Högberg, C.; Sartor, H.; Hofvind, S.; Andersson, I.; Rosso, A. Artificial intelligence-supported screen reading versus standard double reading in the Mammography Screening with Artificial Intelligence trial (MASAI): A clinical safety analysis of a randomised, controlled, non-inferiority, single-blinded, screening accuracy study. Lancet Oncol. 2023, 24, 936–944.

- Dembrower, K.; Crippa, A.; Colón, E.; Eklund, M.; Strand, F.; ScreenTrustCAD Trial Consortium. Artificial intelligence for breast cancer detection in screening mammography in Sweden: A prospective, population-based, paired-reader, non-inferiority study. Lancet Digit. Health 2023, 5, e703–e711; Erratum in Lancet Digit. Health 2023, 5, e646.

- Sharma, N.; Ng, A.Y.; James, J.J.; Khara, G.; Ambrózay, É.; Austin, C.C.; Forrai, G.; Fox, G.; Glocker, B.; Heindl, A.; et al. Multi-vendor evaluation of artificial intelligence as an independent reader for double reading in breast cancer screening on 275,900 mammograms. BMC Cancer 2023, 23, 460. [Google Scholar] [CrossRef]

- Hickman, S.E.; Payne, N.R.; Black, R.T.; Huang, Y.; Priest, A.N.; Hudson, S.; Kasmai, B.; Juette, A.; Nanaa, M.; Aniq, M.I.; et al. Mammography Breast Cancer Screening Triage Using Deep Learning: A UK Retrospective Study. Radiology 2023, 309, e231173.

- Seker, M.E.; Koyluoglu, Y.O.; Ozaydin, A.N.; Gurdal, S.O.; Ozcinar, B.; Cabioglu, N.; Ozmen, V.; Aribal, E. Diagnostic capabilities of artificial intelligence as an additional reader in a breast cancer screening program. Eur. Radiol. 2024, 34, 6145–6157.

- Larsen, M.; Olstad, C.F.; Lee, C.I.; Hovda, T.; Hoff, S.R.; Martiniussen, M.A.; Mikalsen, K.Ø.; Lund-Hanssen, H.; Solli, H.S.; Silberhorn, M.; et al. Performance of an Artificial Intelligence System for Breast Cancer Detection on Screening Mammograms from Breast Screen Norway. Radiol. Artif. Intell. 2024, 6, e230375.

- Lauritzen, A.D.; Rodríguez-Ruiz, A.; von Euler-Chelpin, M.C.; Lynge, E.; Vejborg, I.; Nielsen, M.; Karssemeijer, N.; Lillholm, M. An Artificial Intelligence-based Mammography Screening Protocol for Breast Cancer: Outcome and Radiologist Workload. Radiology 2022, 304, 41–49.

- Leibig, C.; Brehmer, M.; Bunk, S.; Byng, D.; Pinker, K.; Umutlu, L. Combining the strengths of radiologists and AI for breast cancer screening: A retrospective analysis. Lancet Digit. Health 2022, 4, e507–e519.

- Romero-Martín, S.; Elías-Cabot, E.; Raya-Povedano, J.L.; Broeders, M.; Gennaro, G.; Clauser, P.; Helbich, T.H.; Chevalier, M.; Tan, T.; Mertelmeier, T.; et al. Stand-Alone Use of Artificial Intelligence for Digital Mammography and Digital Breast Tomosynthesis Screening: A Retrospective Evaluation. Radiology 2022, 302, 535–542.

- Salim, M.; Wåhlin, E.; Dembrower, K.; Azavedo, E.; Foukakis, T.; Liu, Y.; Smith, K.; Eklund, M.; Strand, F. External Evaluation of 3 Commercial Artificial Intelligence Algorithms for Independent Assessment of Screening Mammograms. JAMA Oncol. 2020, 6, 1581–1588.

- Wanders, A.J.T.; Mees, W.; Bun, P.A.M.; Janssen, N.; Rodríguez-Ruiz, A.; Dalmış, M.U.; Karssemeijer, N.; van Gils, C.H.; Sechopoulos, I.; Mann, R.M.; et al. Interval Cancer Detection Using a Neural Network and Breast Density in Women with Negative Screening Mammograms. Radiology 2022, 303, 269–275.

- Becker, A.S.; Marcon, M.; Ghafoor, S.; Wurnig, M.C.; Frauenfelder, T.; Boss, A. Deep Learning in Mammography: Diagnostic Accuracy of a Multipurpose Image Analysis Software in the Detection of Breast Cancer. Investig. Radiol. 2017, 52, 434–440.

- Arasu, V.A.; Habel, L.A.; Achacoso, N.S.; Buist, D.S.M.; Cord, J.B.; Esserman, L.J.; Hylton, N.M.; Glymour, M.M.; Kornak, J.; Kushi, L.H.; et al. Comparison of Mammography AI Algorithms with a Clinical Risk Model for 5-year Breast Cancer Risk Prediction: An Observational Study. Radiology 2023, 307, e222733.

- Lehman, C.D.; Mercaldo, S.; Lamb, L.R.; King, T.A.; Ellisen, L.W.; Specht, M.; Tamimi, R.M. Deep Learning vs. Traditional Breast Cancer Risk Models to Support Risk-Based Mammography Screening. J. Natl. Cancer Inst. 2022, 114, 1355–1363.

- Yala, A.; Mikhael, P.G.; Strand, F.; Satuluru, S.; Kim, T.; Banerjee, I.; Gichoya, J.; Trivedi, H.; Lehman, C.D.; Hughes, K.; et al. Multi-Institutional Validation of a Mammography-Based Breast Cancer Risk Model. J. Clin. Oncol. 2022, 40, 1732–1740.

- Arefan, D.; Mohamed, A.A.; Berg, W.A.; Zuley, M.L.; Sumkin, J.H.; Wu, S. Deep learning modeling using normal mammograms for predicting breast cancer risk. Med. Phys. 2020, 47, 110–118.

- Lång, K.; Hofvind, S.; Rodríguez-Ruiz, A.; Andersson, I. Can artificial intelligence reduce the interval cancer rate in mammography screening? Eur. Radiol. 2021, 31, 5940–5947.

- Gastounioti, A.; Eriksson, M.; Cohen, E.A.; Mankowski, W.; Pantalone, L.; Ehsan, S.; McCarthy, A.M.; Kontos, D.; Hall, P.; Conant, E.F. External Validation of a Mammography-Derived AI-Based Risk Model in a U.S. Breast Cancer Screening Cohort of White and Black Women. Cancers 2022, 14, 4803.

- Ha, R.; Chang, P.; Karcich, J.; et al. Convolutional Neural Network Based Breast Cancer Risk Stratification Using a Mammographic Dataset. Acad. Radiol. 2019, 26, 544–549.

- Hinton, B.; Ma, L.; Mahmoudzadeh, A.P.; Malkov, S.; Fan, B.; Greenwood, H.; Joe, B.; Lee, V.; Kerlikowske, K.; Shepherd, J. Deep learning networks find unique mammographic differences in previous negative mammograms between interval and screen-detected cancers: A case-case study. Cancer Imaging 2019, 19, 41.

- Zhu, X.; Wolfgruber, T.K.; Leong, L.; Jensen, M.; Scott, C.; Winham, S.; Sadowski, P.; Vachon, C.; Kerlikowske, K.; Shepherd, J.A. Deep Learning Predicts Interval and Screening-detected Cancer from Screening Mammograms: A Case-Case-Control Study in 6369 Women. Radiology 2021, 301, 550–558.

- Sasaki, M.; Tozaki, M.; Rodríguez-Ruiz, A.; Yotsumoto, D.; Ichiki, Y.; Terawaki, A.; Oosako, S.; Sagara, Y. Artificial intelligence for breast cancer detection in mammography: Experience of use of the ScreenPoint Medical Transpara system in 310 Japanese women. Breast Cancer 2020, 27, 642–651.

- Dang, L.A.; Chazard, E.; Poncelet, E.; Serb, T.; Rusu, A.; Pauwels, X.; Parsy, C.; Poclet, T.; Cauliez, H.; Engelaere, C.; et al. Impact of artificial intelligence in breast cancer screening with mammography. Breast Cancer 2022, 29, 967–977.

- Lee, S.E.; Han, K.; Yoon, J.H.; Youk, J.H.; Kim, E.K. Depiction of breast cancers on digital mammograms by artificial intelligence-based computer-assisted diagnosis according to cancer characteristics. Eur. Radiol. 2022, 32, 7400–7408.

- Schaffter, T.; Buist, D.S.M.; Lee, C.I.; Nikulin, Y.; Ribli, D.; Guan, Y.; Lotter, W.; Jie, Z.; Du, H.; Wang, S.; et al. Evaluation of Combined Artificial Intelligence and Radiologist Assessment to Interpret Screening Mammograms. JAMA Netw. Open 2020, 3, e200265, Erratum in JAMA Netw. Open 2020, 3, e204429.

- McKinney, S.M.; Sieniek, M.; Godbole, V.; Godwin, J.; Antropova, N.; Ashrafian, H.; Back, T.; Chesus, M.; Corrado, G.S.; Darzi, A.; et al. International evaluation of an AI system for breast cancer screening. Nature 2020, 577, 89–94, Erratum in Nature 2020, 586, E19.

- Kim, H.E.; Kim, H.H.; Han, B.K.; Kim, K.H.; Han, K.; Nam, H.; Lee, E.H.; Kim, E.K. Changes in cancer detection and false-positive recall in mammography using artificial intelligence: A retrospective, multireader study. Lancet Digit. Health 2020, 2, e138–e148.

- Amann, J.; Blasimme, A.; Vayena, E.; Frey, D.; Madai, V.I.; Precise4Q consortium. Explainability for artificial intelligence in healthcare: A multidisciplinary perspective. BMC Med. Inform. Decis. Mak. 2020, 20, 310.

- Higgins, D.; Madai, V.I. From bit to bedside: A practical framework for artificial intelligence product development in healthcare. Adv. Intell. Syst. 2020, 2, 2000052.

- Hong, L.; Cheng, X.; Zheng, D. Application of Artificial Intelligence in Emergency Nursing of Patients with Chronic Obstructive Pulmonary Disease. Contrast Media Mol. Imaging 2021, 2021, 6423398.

- Das, N.; Topalovic, M.; Janssens, W. Artificial intelligence in diagnosis of obstructive lung disease: Current status and future potential. Curr. Opin. Pulm. Med. 2018, 24, 117–123.

- Kotanko, P.; Nadkarni, G.N. Advances in Chronic Kidney Disease Lead Editorial Outlining the Future of Artificial Intelligence/Machine Learning in Nephrology. Adv. Kidney Dis. Health 2023, 30, 2–3.

- Khodve, G.B.; Banerjee, S. Artificial Intelligence in Efficient Diabetes Care. Curr. Diabetes Rev. 2023, 19, e050922208561.

- Ji, Y.; Chen, N.; Liu, S.; Yan, Z.; Qian, H.; Zhu, S.; Zhang, J.; Wang, M.; Jiang, Q.; Yang, W. Research Progress of Artificial Intelligence Image Analysis in Systemic Disease-Related Ophthalmopathy. Dis. Markers 2022, 2022, 3406890.

- Shimizu, H.; Nakayama, K.I. Artificial intelligence in oncology. Cancer Sci. 2020, 111, 1452–1460.

- Syed, A.B.; Zoga, A.C. Artificial Intelligence in Radiology: Current Technology and Future Directions. Semin. Musculoskelet. Radiol. 2018, 22, 540–545.

- Taylor, C.R.; Monga, N.; Johnson, C.; Hawley, J.R.; Patel, M. Artificial Intelligence Applications in Breast Imaging: Current Status and Future Directions. Diagnostics 2023, 13, 2041.

- Jayasekera, J.; Mandelblatt, J.S. Systematic Review of the Cost Effectiveness of Breast Cancer Prevention, Screening, and Treatment Interventions. J. Clin. Oncol. 2020, 38, 332–350.

- Van Nijnatten, T.J.A.; Payne, N.R.; Hickman, S.E.; Ashrafian, H.; Gilbert, F.J. Overview of trials on artificial intelligence algorithms in breast cancer screening—A roadmap for international evaluation and implementation. Eur. J. Radiol. 2023, 167, 111087, Erratum in Eur. J. Radiol. 2024, 170, 111202.

- Combi, C.; Amico, B.; Bellazzi, R.; Holzinger, A.; Moore, J.H.; Zitnik, M.; Holmes, J.H. A manifesto on explainability for artificial intelligence in medicine. Artif. Intell. Med. 2022, 133, 102423.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).