1. Introduction

Processing of big data whose domain is irregular and can be represented by a graph has attracted significant research interest [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]. For big data, the possibility of using smaller, localized subsets of the available information is crucial for efficient analysis and processing [11]. In addition, in practical applications where large graphs are used as the signal domain, we are commonly more interested in localized analysis than in global behavior. In order to characterize the vertex-localized behavior of signals and their narrow-band spectral properties, joint vertex–frequency domain analysis is introduced. This analysis is a natural analogue of time–frequency analysis, a well-established area in classical signal processing [12, 13, 14].

In classical signal analysis, the basic short-time Fourier transform approach uses window functions to localize the signal in time, while the projection of such a windowed signal onto the Fourier transform basis functions provides its spectral localization. Time localization, combined with the modulation by the basis functions, produces kernel functions for classical time–frequency analysis. The classical time–frequency analysis approach has been extended to vertex–frequency analysis for signals defined on graphs [15, 16, 17, 18, 19, 20, 21, 22]. This generalization is not straightforward, since a graph is a complex and irregular signal domain. Even a time-shift operation, which is trivial in classical time-domain analysis, cannot be straightforwardly generalized to the graph signal domain. This has resulted in several approaches to defining vertex–frequency kernels. One approach is based on vertex domain windows defined using the graph spectral domain [23]. The vertex domain windows can also be fully defined in the vertex domain, using the vertex neighborhood [19].

The vertex domain approaches are based on local analysis within a vertex neighborhood and can be very efficient in the analysis of large graphs. This paper focuses on the vertex–frequency kernels defined in the spectral domain, with spectral shifts performed as in classical signal analysis, while the vertex shifts are implemented in an indirect way, using the basis functions. This approach produces forms that are very efficient in practice, especially when combined with polynomial approximations of the analysis kernels. The primary goal of this paper is to provide a strong link between time–frequency analysis and vertex–frequency analysis, and to indicate some new possibilities for simple methods in the time–frequency analysis of large-duration signals based on the vertex–frequency forms. Conditions for signal reconstruction, known as the overlap-and-add (OLA) and weighted overlap-and-add (WOLA) conditions, are considered, and the window forms from classical signal analysis are adapted to satisfy these conditions, with appropriate comments on their application to vertex–frequency analysis, where the eigenvalues are used instead of the frequency.

The paper is structured as follows. Basic definitions in graph theory and signals on graphs, including the graph Fourier transform, are reviewed in Section 2. A solid formal relation between the classical signal processing paradigm and graph signal processing is provided in Section 3, where benchmark graphs and signals are introduced. In Section 4, the spectral-domain localized graph Fourier transform is presented, along with a few simple basic implementation forms. The general OLA and WOLA conditions for analysis in the graph spectral domain are introduced, with illustrations on several windows for each of these conditions, including the spectral domain wavelet-like transform. The polynomial approximations of the presented kernels are the topic of Section 5, where the Chebyshev polynomial series, least-squares approximation, and Legendre polynomial approximation are presented. Inversion of the local graph Fourier transform is elaborated on in Section 6, where both of the defined kernel forms are analyzed. The support uncertainty principle in the general form (such that it can be used for graph signals) is presented in Section 7, along with a discussion of the relation between the local graph Fourier transform support and the kernel function width in the spectral domain. The possibility of splitting large signals into smaller parts and simplifying the analysis of such signals is considered in Section 8. The presented theory is illustrated in numerous examples. The manuscript closes with summarized conclusions and the reference list.

2. Basic Graph Definitions

A graph consists of $N$ vertices, $n \in \mathcal{V} = \{1, 2, \dots, N\}$, which are connected with edges. The weights of the edges are denoted by $W_{mn}$ [24, 25, 26]. For the vertices $m$ and $n$ which are not connected, $W_{mn} = 0$ by definition. The weights of the edges are the elements of an $N \times N$ matrix, $\mathbf{W}$. Graphs can be directed or undirected. For undirected graphs it is assumed that the vertices $m$ and $n$ are connected by the same edge weight in both directions, resulting in a symmetric weight matrix, $\mathbf{W} = \mathbf{W}^T$, since $W_{mn} = W_{nm}$ holds. A graph is unweighted if all nonzero elements of its weight matrix, $\mathbf{W}$, are equal to 1. In this case the weight matrix, $\mathbf{W}$, assumes a specific form and the edges are represented by a connectivity or adjacency matrix, $\mathbf{A}$. In addition to the adjacency and weight matrices, $\mathbf{A}$ or $\mathbf{W}$, several other matrices are used in graph theory. All of them can be derived from the adjacency and weight matrices. A matrix that indicates the vertex degree in a graph is called the degree matrix. It is diagonal and is commonly denoted by $\mathbf{D}$. The elements $D_{nn}$ of the degree matrix are obtained as the sum of all weights corresponding to the edges connected to the considered vertex, $n$. The diagonal elements of $\mathbf{D}$ are equal to $D_{nn} = \sum_{m=1}^{N} W_{mn}$. A combination of the weight matrix, $\mathbf{W}$, and the degree matrix, $\mathbf{D}$, produces one of the most commonly used matrices in graph theory, the graph Laplacian. It is defined by

$$\mathbf{L} = \mathbf{D} - \mathbf{W}.$$

In the case of an undirected graph, the symmetric form of the weight matrix results in a symmetric graph Laplacian, $\mathbf{L} = \mathbf{L}^T$.

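The eigendecomposition of the graph matrices (for example, of the graph Laplacian $\mathbf{L}$ or the adjacency matrix $\mathbf{A}$) is used for spectral analysis of graphs and graph signals. The eigendecomposition of a graph Laplacian (or any other matrix) relates its eigenvalues, $\lambda_k$, and the corresponding eigenvectors, $\mathbf{u}_k$, by

$$\mathbf{L}\mathbf{u}_k = \lambda_k \mathbf{u}_k, \quad k = 0, 1, \dots, N-1,$$

where $\lambda_0$, $\lambda_1$, …, $\lambda_{N-1}$ are not necessarily distinct. Since the graph Laplacian is a real-valued symmetric matrix, it is always diagonalizable, that is, the geometric multiplicity equals the algebraic multiplicity for every eigenvalue. The previous $N$ equations can then be written in a compact matrix form (the eigendecomposition relation for diagonalizable matrices) as

$$\mathbf{L}\mathbf{U} = \mathbf{U}\boldsymbol{\Lambda} \quad \text{or} \quad \mathbf{L} = \mathbf{U}\boldsymbol{\Lambda}\mathbf{U}^{-1}.$$

The transformation matrix $\mathbf{U}$ consists of the eigenvectors, $\mathbf{u}_k$, $k = 0, 1, \dots, N-1$, as its columns, while $\boldsymbol{\Lambda}$ is a diagonal matrix whose diagonal elements are the eigenvalues $\lambda_k$, $k = 0, 1, \dots, N-1$.

The same eigendecomposition relation can be used for the adjacency matrix $\mathbf{A}$, $\mathbf{A} = \mathbf{U}\boldsymbol{\Lambda}\mathbf{U}^{-1}$.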

For a real symmetric (and hence diagonalizable) matrix there exists a set of orthonormal eigenvectors. They are used as the transformation basis functions for the definition of the graph Fourier transform (GFT), $X(k)$, of a graph signal, $x(n)$. The graph signal value at a vertex $n$ is denoted by $x(n)$, $n = 1, 2, \dots, N$, while the notation $\mathbf{x}$ is used for the vector of signal values at all vertices. The vector of the GFT of a graph signal $\mathbf{x}$ will be denoted by $\mathbf{X}$, and the elements (components) of the GFT vector by $X(k)$, $k = 0, 1, \dots, N-1$. The elements of a graph signal at a vertex $n$, $x(n)$, can then be written as a linear combination of the eigenvectors

$$x(n) = \sum_{k=0}^{N-1} X(k)\, u_k(n),$$

where the basis function values, $u_k(n)$, are the elements of the $k$-th eigenvector, $\mathbf{u}_k$, at the vertex $n$, $n = 1, 2, \dots, N$. This is the definition of the inverse graph Fourier transform (IGFT).

The matrix form of the IGFT is $\mathbf{x} = \mathbf{U}\mathbf{X}$. For real and symmetric matrices (corresponding to undirected graphs) the transformation matrix $\mathbf{U}$ is orthogonal, $\mathbf{U}^{-1} = \mathbf{U}^T$, that is, $\mathbf{U}^T\mathbf{U} = \mathbf{I}$. Then the graph Fourier transform (GFT) is defined by

$$\mathbf{X} = \mathbf{U}^{-1}\mathbf{x} = \mathbf{U}^T\mathbf{x}$$

or in element-wise form

$$X(k) = \sum_{n=1}^{N} x(n)\, u_k(n). \tag{2}$$

For undirected graphs, both the Laplacian and the adjacency matrix are symmetric, resulting in real-valued eigenvectors and real-valued transformation matrices. However, for directed circular graphs, the eigenvalues (and eigenvectors) of the adjacency matrix are complex-valued. Then, the elements of the inverse transformation matrix $\mathbf{U}^{-1}$ should be used in the GFT definition. When $\mathbf{U}^{-1} = \mathbf{U}^H$ holds (normal matrices), the complex-conjugate basis functions, $u_k^*(n)$, are used in (2).
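The definitions of this section can be checked numerically on a small graph. The following is a minimal sketch, assuming NumPy and a hypothetical four-vertex undirected weighted graph (the weights and the signal values are arbitrary illustrations, not taken from the paper). It forms the degree matrix and the Laplacian, computes the eigendecomposition, and applies the GFT and IGFT in matrix form.

```python
import numpy as np

# Hypothetical 4-vertex undirected graph; weights chosen only for illustration.
W = np.array([[0.0, 1.0, 0.5, 0.0],
              [1.0, 0.0, 1.0, 0.0],
              [0.5, 1.0, 0.0, 2.0],
              [0.0, 0.0, 2.0, 0.0]])

D = np.diag(W.sum(axis=1))   # degree matrix: sum of edge weights at each vertex
L = D - W                    # graph Laplacian

# For a real symmetric matrix, eigh returns real eigenvalues in ascending
# order and orthonormal eigenvectors as the columns of U.
lam, U = np.linalg.eigh(L)

x = np.array([1.0, -2.0, 0.5, 3.0])  # graph signal (one value per vertex)
X = U.T @ x                          # GFT:  X = U^T x
x_rec = U @ X                        # IGFT: x = U X
```

Because the graph is undirected, `U` is orthogonal, so the transpose plays the role of the inverse; for a directed graph the inverse (or the Hermitian transpose, for normal matrices) would be needed instead.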

3. Classical Signal Processing within the Graph Signal Processing Framework

The graph signal processing will be related to classical time–frequency analysis in two ways: (1) using the directed circular graph and its adjacency matrix, or (2) using the undirected circular graphs and the graph Laplacian. These two relations are discussed next.

Directed circular graph. The signal values,

, in classical signal processing systems, are defined in a well-ordered time domain, defined by the time instants denoted by

. In the DFT-based classical analysis it has also been assumed that the signal is periodic. The domain of such signals is illustrated in

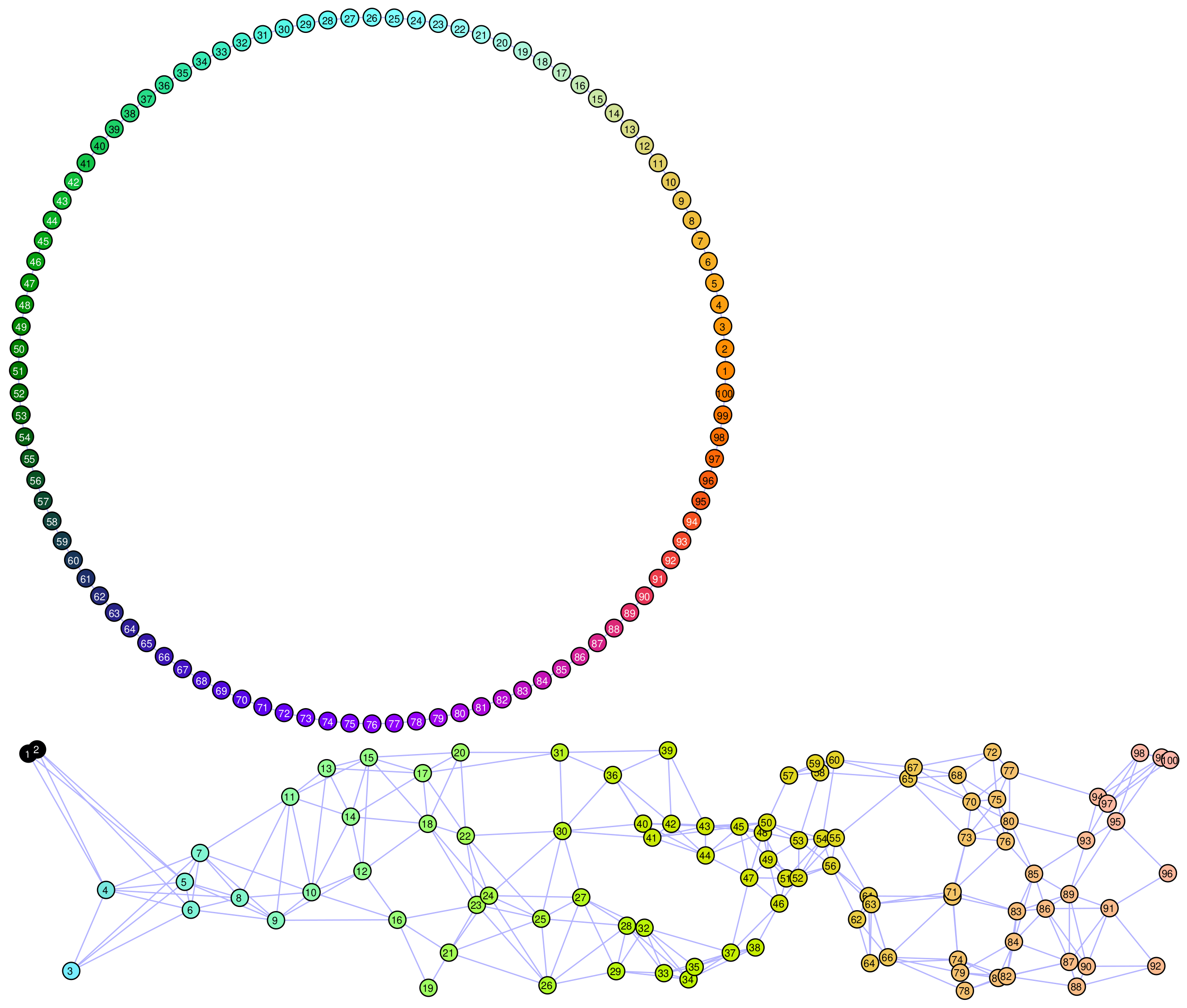

Figure 1 for

.

Consider next a classical form of a discrete-time finite impulse response (FIR) system. The input–output relation for this system is given by

In order to make a connection with graphs and graph notation of the signal domain, notice that this input–output relation of the FIR system can be written in the matrix form as

where

For this system, the time instants are well-ordered and their connectivity matrix is given by the adjacency matrix. The instants (in graph notation vertices) relation is defined by

as shown in

Figure 1 (left), for

. Rows of the adjacency matrix indicate the corresponding vertex connectivity. In the first row, there is value 1 at the position

N. It means that the vertex 1 is related to vertex

N by a directed edge between these two vertices. In the second row there is value 1 at the first position, meaning that the vertex 2 is connected to vertex 1 with an edge, as shown in in

Figure 1 (left).

The elements of the shift matrix relation are , as it has been expected for a simple delay operation. The delay for two instants, , is calculated as , and so on.

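Now, we will perform the eigendecomposition of this adjacency matrix $\mathbf{A}$. According to the eigendecomposition relation

$$\mathbf{A}\mathbf{u}_k = \lambda_k \mathbf{u}_k$$

or in the matrix form

$$\mathbf{A} = \mathbf{U}\boldsymbol{\Lambda}\mathbf{U}^{-1}.$$

Recall that $\mathbf{U}$ is the matrix whose columns are the eigenvectors, $\mathbf{u}_k$, and the eigenvalue diagonal matrix is $\boldsymbol{\Lambda}$. The eigenvalues $\lambda_k$ are on the diagonal of this matrix. The adjacency matrix of the directed circular graph is diagonalizable because all its eigenvalues are distinct. The adjacency matrix of a directed circular graph is a circulant matrix, and it is well known that this kind of matrix is diagonalized by the discrete Fourier transform [27] (as will be shown next). In general, the adjacency matrix of a directed graph may not be diagonalizable, in which case the Jordan form should be used (see [1] and Appendix A in [3]). Graphs of this kind are not considered in the paper. The input–output relation of a classical FIR system (3) can now be written as

$$\mathbf{y} = h_0 \mathbf{U}\boldsymbol{\Lambda}^0\mathbf{U}^{-1}\mathbf{x} + h_1 \mathbf{U}\boldsymbol{\Lambda}^1\mathbf{U}^{-1}\mathbf{x} + \dots + h_{M-1} \mathbf{U}\boldsymbol{\Lambda}^{M-1}\mathbf{U}^{-1}\mathbf{x},$$

where the eigendecomposition property $\mathbf{A}^m = \mathbf{U}\boldsymbol{\Lambda}^m\mathbf{U}^{-1}$ is used.

Now, by left-multiplication by $\mathbf{U}^{-1}$ we can write

$$\mathbf{U}^{-1}\mathbf{y} = \left(h_0 \boldsymbol{\Lambda}^0 + h_1 \boldsymbol{\Lambda}^1 + \dots + h_{M-1} \boldsymbol{\Lambda}^{M-1}\right)\mathbf{U}^{-1}\mathbf{x}$$

or

$$\mathbf{Y} = H(\boldsymbol{\Lambda})\mathbf{X},$$

where $\mathbf{Y} = \mathbf{U}^{-1}\mathbf{y}$ and $\mathbf{X} = \mathbf{U}^{-1}\mathbf{x}$ are the discrete Fourier transforms (DFT) of the output signal, $y(n)$, and the input signal, $x(n)$. The diagonal transfer function is denoted by $H(\boldsymbol{\Lambda})$ and its elements are given by

$$H(\lambda_k) = h_0 + h_1 \lambda_k + \dots + h_{M-1} \lambda_k^{M-1}$$

for $k = 0, 1, \dots, N-1$. Indeed, the presented forms represent the well-known classical DFT-based relations. In order to confirm this conclusion, we will analyze the eigenvalue relation for the presented adjacency matrix, $\mathbf{A}$,

$$\mathbf{A}\mathbf{u} = \lambda\mathbf{u}, \quad \text{that is,} \quad u(n-1) = \lambda u(n).$$

The corresponding characteristic polynomial is given by

$$\det(\mathbf{A} - \lambda\mathbf{I}) = 0, \quad \text{which reduces to} \quad \lambda^N - 1 = 0.$$

Since $1 = e^{-j2\pi k}$, the solutions for the eigenvalues and eigenvectors are

$$\lambda_k = e^{-j2\pi k/N}, \qquad u_k(n) = \frac{1}{\sqrt{N}} e^{j2\pi nk/N},$$

for $k = 0, 1, \dots, N-1$. The eigenvectors are equal to the DFT basis functions, normalized in such a way that their energy is unity.

We can easily arrive at the element-wise form of the DFT using the GFT definition given in (2).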
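The link between the directed circular graph and the DFT can be verified numerically. The sketch below assumes NumPy, zero-based indexing of the instants, and hypothetical FIR coefficients `h` and test signal `x`. It builds the cyclic delay matrix, checks that the unit-energy DFT basis functions are its eigenvectors, and confirms that the FIR output in the spectral domain is the product of a polynomial in the eigenvalues and the input spectrum.

```python
import numpy as np

N = 8
# Cyclic delay (adjacency of the directed circular graph): (A x)[n] = x[n-1].
A = np.roll(np.eye(N), 1, axis=0)

n = np.arange(N)
k = np.arange(N)
U = np.exp(2j * np.pi * np.outer(n, k) / N) / np.sqrt(N)  # columns: DFT basis
lam = np.exp(-2j * np.pi * k / N)                         # eigenvalues of A

x = np.cos(0.9 * n) + 0.3 * n          # arbitrary test signal
X = U.conj().T @ x                     # GFT with complex-conjugate basis

# FIR system as a polynomial in the shift matrix (hypothetical coefficients).
h = [0.5, 0.3, 0.2]
y = sum(h_m * np.linalg.matrix_power(A, m) @ x for m, h_m in enumerate(h))

# Transfer function evaluated at the eigenvalues: H(lam_k) = sum_m h_m lam_k^m
H = sum(h_m * lam**m for m, h_m in enumerate(h))
Y = U.conj().T @ y
```

With this normalization the GFT equals NumPy's `np.fft.fft(x)` divided by `sqrt(N)`, which is one way to see that the classical DFT is a special case of the GFT.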

For implementation issues that will be addressed later, it is crucial to notice that for the calculation of $y(n)$ we need only the signal samples within the neighborhood $M$ of each considered instant, $n$. In the time domain, this neighborhood is defined by the distance between the instants, $|n - m| < M$. The fact that $y(n)$ requires only the signal samples within the $M$-neighborhood of the considered vertex (instant) will hold for general graphs as well. The local, neighborhood-based calculation is of key importance when large graphs are analyzed or when signals representing big data on large graphs are processed.

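Undirected circular graph. When the circular graph is not directed, as shown in Figure 1 (right), we should assume that every instant (vertex), $n$, is connected to both the preceding vertex (instant), $n-1$, and the succeeding vertex, $n+1$. The adjacency or weight matrix for this kind of connection, $\mathbf{A} = \mathbf{W}$, and the corresponding graph Laplacian, defined by $\mathbf{L} = \mathbf{D} - \mathbf{W}$, are given by

$$\mathbf{A} = \begin{bmatrix} 0 & 1 & 0 & \cdots & 0 & 1 \\ 1 & 0 & 1 & \cdots & 0 & 0 \\ 0 & 1 & 0 & \cdots & 0 & 0 \\ \vdots & \vdots & \vdots & \ddots & \vdots & \vdots \\ 1 & 0 & 0 & \cdots & 1 & 0 \end{bmatrix}, \qquad \mathbf{L} = \begin{bmatrix} 2 & -1 & 0 & \cdots & 0 & -1 \\ -1 & 2 & -1 & \cdots & 0 & 0 \\ 0 & -1 & 2 & \cdots & 0 & 0 \\ \vdots & \vdots & \vdots & \ddots & \vdots & \vdots \\ -1 & 0 & 0 & \cdots & -1 & 2 \end{bmatrix},$$

where $\mathbf{D}$ is the diagonal (degree) matrix with elements $D_{nn} = 2$. The eigendecomposition relation written for the graph Laplacian, $\mathbf{L}\mathbf{u}_k = \lambda_k \mathbf{u}_k$, in element-wise form is given by

$$-u_k(n-1) + 2u_k(n) - u_k(n+1) = \lambda_k u_k(n), \tag{4}$$

where $u_k(n)$ are the elements of the vector $\mathbf{u}_k$.

The solution to the second-order difference equation (4) can be obtained in the form

$$u_k(n) = \cos\!\left(\frac{2\pi kn}{N} + \varphi_k\right) \tag{5}$$

with the eigenvalue

$$\lambda_k = 2 - 2\cos\!\left(\frac{2\pi k}{N}\right) = 4\sin^2\!\left(\frac{\pi k}{N}\right).$$

For each of the eigenvalues, we can define two distinct orthogonal eigenvectors in quadrature, for example, using $\varphi_k = 0$ and $\varphi_k = \pi/2$ in (5). These two eigenvectors correspond to the classical sinusoidal basis functions, $\cos(2\pi kn/N)$ and $\sin(2\pi kn/N)$, in the Fourier series analysis of real-valued signals. The exceptions are the eigenvalue $\lambda_0 = 0$ and the last eigenvalue for an even $N$, when there is only one basis function. The sine and cosine functions should be normalized to unit energy in order to represent eigenvectors. Therefore, a definition of the graph Laplacian eigenvalues and eigenvectors for an undirected circular graph (for an even $N$), taking into account all previous properties and sorting the eigenvalues in nondecreasing order, is given by

$$\lambda_k = \begin{cases} 2 - 2\cos\big(\pi(k+1)/N\big), & \text{for odd } k, \\ 2 - 2\cos\big(\pi k/N\big), & \text{for even } k, \end{cases}$$

$$u_k(n) = \begin{cases} 1/\sqrt{N}, & k = 0, \\ \sqrt{2/N}\,\sin\big(\pi(k+1)n/N\big), & \text{for odd } k,\ k < N-1, \\ \sqrt{2/N}\,\cos\big(\pi kn/N\big), & \text{for even } k,\ k > 0, \\ \cos(\pi n)/\sqrt{N}, & k = N-1. \end{cases}$$

The smallest eigenvalue, $\lambda_0 = 0$, corresponds to a constant vector, $u_0(n) = 1/\sqrt{N}$, while the largest eigenvalue, $\lambda_{N-1} = 4$, corresponds to the fastest-varying eigenvector, $u_{N-1}(n) = (-1)^n/\sqrt{N}$.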
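The closed-form spectrum of the undirected circular graph is easy to confirm numerically. The following sketch assumes NumPy, an arbitrary even size `N = 8`, and zero-based vertex indexing. It compares the numerically computed Laplacian eigenvalues with the values 2 - 2cos(2πk/N), and checks that a unit-energy cosine and sine at the same frequency are orthogonal eigenvectors sharing one eigenvalue.

```python
import numpy as np

N = 8
shift = np.roll(np.eye(N), 1, axis=0)        # directed circular adjacency
A = shift + shift.T                          # undirected circular adjacency
L = 2 * np.eye(N) - A                        # every vertex has degree 2

lam_num = np.linalg.eigvalsh(L)              # ascending eigenvalues
lam_formula = np.sort(2 - 2 * np.cos(2 * np.pi * np.arange(N) / N))

# Quadrature pair of eigenvectors for the first harmonic (k = 1).
n = np.arange(N)
k = 1
u_cos = np.sqrt(2 / N) * np.cos(2 * np.pi * k * n / N)
u_sin = np.sqrt(2 / N) * np.sin(2 * np.pi * k * n / N)
lam_k = 2 - 2 * np.cos(2 * np.pi * k / N)
```

Every eigenvalue except the smallest (0) and, for even `N`, the largest (4) appears twice in `lam_num`, which reflects the sine and cosine pair at each frequency.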

Smoothness and local smoothness. Notice that for an undirected circular graph and a small frequency, $\omega_k \ll 1$, of the sinusoidal eigenvector $\mathbf{u}_k$, the relation in (6) can be approximated by

$$\lambda_k = 2 - 2\cos\omega_k = 4\sin^2(\omega_k/2) \approx \omega_k^2.$$

This relation means that the graph Laplacian eigenvalue, $\lambda_k$, corresponding to the eigenvector, $\mathbf{u}_k$, can be related to the classical frequency (squared), $\omega_k^2$, of a sinusoidal basis function in classical Fourier series analysis.

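In general, it is easy to show that the eigenvalue of the graph Laplacian can be used to indicate the speed of change (called the smoothness) of an eigenvector or, more generally, of a graph signal. Namely, if we left-multiply by $\mathbf{u}_k^T$ both sides of the eigenvalue definition relation $\mathbf{L}\mathbf{u}_k = \lambda_k\mathbf{u}_k$, we obtain $\mathbf{u}_k^T\mathbf{L}\mathbf{u}_k = \lambda_k$, since $\mathbf{u}_k^T\mathbf{u}_k = 1$. Now the quadratic form $\mathbf{u}_k^T\mathbf{L}\mathbf{u}_k = \frac{1}{2}\sum_{m=1}^{N}\sum_{n=1}^{N} W_{mn}\big(u_k(n) - u_k(m)\big)^2$ measures the change of neighboring values, $\big(u_k(n) - u_k(m)\big)^2$, weighted by $W_{mn}$. Fast changes of $u_k(n)$ produce large values of $\lambda_k$, while a constant $u_k(n)$ results in $\lambda_k = 0$.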
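As an illustration of the smoothness interpretation, the sketch below (assuming NumPy and a hypothetical four-vertex weighted graph) evaluates the Laplacian quadratic form for each eigenvector and compares it with the corresponding eigenvalue. It also checks that, for a symmetric weight matrix, the quadratic form of an arbitrary signal equals half the weighted sum of squared differences over all vertex pairs.

```python
import numpy as np

# Hypothetical undirected weighted graph (illustrative weights).
W = np.array([[0.0, 1.0, 0.5, 0.0],
              [1.0, 0.0, 1.0, 0.0],
              [0.5, 1.0, 0.0, 2.0],
              [0.0, 0.0, 2.0, 0.0]])
L = np.diag(W.sum(axis=1)) - W
lam, U = np.linalg.eigh(L)

# Quadratic form u_k^T L u_k for every eigenvector (columns of U).
quad_forms = np.array([U[:, k] @ L @ U[:, k] for k in range(len(lam))])

def pairwise_smoothness(W, x):
    """0.5 * sum_{m,n} W[m, n] * (x[n] - x[m])**2 for a symmetric W."""
    diff = x[:, None] - x[None, :]
    return 0.5 * np.sum(W * diff**2)

x = np.array([1.0, -2.0, 0.5, 3.0])   # arbitrary graph signal
q_matrix = x @ L @ x
q_pairs = pairwise_smoothness(W, x)
```

The constant eigenvector gives a quadratic form of zero, and the value grows with the eigenvalue index, matching the role of the eigenvalue as a smoothness index.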

The local smoothness can be defined for a vertex $n$. It will be denoted by $\lambda(n)$. This parameter corresponds to the classical time-varying (instantaneous) frequency, $\omega(t)$, defined at a time instant $t$, and has the form [28]

$$\lambda(n) = \frac{\mathcal{L}_x(n)}{x(n)}.$$

In this relation we used $\mathcal{L}_x(n)$ to denote the $n$-th element of the vector $\mathbf{L}\mathbf{x}$. It has been assumed that $x(n) \neq 0$. If we use $x(n) = \cos(2\pi kn/N + \varphi_k)$ and the graph Laplacian of an undirected circular graph, as in (4), we obtain the value from (8). In general, if the signal $\mathbf{x}$ is equal to an eigenvector $\mathbf{u}_k$ at the vertex $n$ and at its neighboring vertices, then $\lambda(n) = \lambda_k$.
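A short numerical sketch of the local smoothness follows, assuming NumPy, an undirected circular graph with a hypothetical size `N = 16`, and a sinusoid with an arbitrary harmonic index and phase chosen so that no sample is zero. The ratio of the Laplacian-filtered signal to the signal itself is then the same at every vertex and equals 2 - 2cos(2πk/N).

```python
import numpy as np

N, k, phase = 16, 2, 0.3            # hypothetical size, harmonic, and phase
shift = np.roll(np.eye(N), 1, axis=0)
L = 2 * np.eye(N) - shift - shift.T  # Laplacian of the undirected circle

n = np.arange(N)
x = np.cos(2 * np.pi * k * n / N + phase)   # nonzero at every vertex here

Lx = L @ x                 # element n is -x(n-1) + 2x(n) - x(n+1)
local_smoothness = Lx / x  # defined only where x(n) != 0

expected = 2 - 2 * np.cos(2 * np.pi * k / N)
```

For a signal built from different eigenvectors on different vertex subsets, the same ratio would take a different value on each subset, apart from the vertices at the subset boundaries.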

System on a general graph. The relations presented in this section are special cases of the general graph Fourier transform (Section 2) and of systems for graph signals.

The most important difference between the classical systems and the systems for graph signals lies in the fact that the standard shift operator, $y(n) = x(n-1)$, just moves a signal sample from one instant, $n$, to another instant, $n+1$, while the graph shift operator, $\mathbf{A}\mathbf{x}$ or $\mathbf{L}\mathbf{x}$, moves the signal sample to all neighboring vertices. In the case of the graph Laplacian, in addition to the signal being moved to the neighboring vertices (with a change of sign), its sample is kept at the original vertex as well. Notice that the graph shift operator does not satisfy the isometry property, since the energy of the shifted signal is not the same as the energy of the original signal. In analogy with the role of the time shift in standard system theory, a system for graph signals is implemented as a linear combination of a graph signal and its graph-shifted versions,

$$\mathbf{y} = h_0\mathbf{L}^0\mathbf{x} + h_1\mathbf{L}^1\mathbf{x} + \dots + h_{M-1}\mathbf{L}^{M-1}\mathbf{x} = \sum_{m=0}^{M-1} h_m \mathbf{L}^m\mathbf{x}, \tag{10}$$

where, by definition, $\mathbf{L}^0 = \mathbf{I}$, while $h_0$, $h_1$, …, $h_{M-1}$ are the system coefficients. The spectral form of this relation is given by

$$\mathbf{Y} = \left(h_0\boldsymbol{\Lambda}^0 + h_1\boldsymbol{\Lambda}^1 + \dots + h_{M-1}\boldsymbol{\Lambda}^{M-1}\right)\mathbf{X} = H(\boldsymbol{\Lambda})\mathbf{X}, \tag{11}$$

where $H(\boldsymbol{\Lambda})$ is a diagonal matrix representing the transfer function of the system for a graph signal. Notice that if the transfer function can be written in the form of a polynomial, as in (11), then the system can be implemented using the graph-shifted forms of the signal, $\mathbf{L}\mathbf{x}$, $\mathbf{L}^2\mathbf{x}$, up to $\mathbf{L}^{M-1}\mathbf{x}$, as in (10), which require only the $(M-1)$-neighborhoods of each signal sample to obtain the system output, independently of the size of the considered graph.
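The equivalence between the vertex-domain and spectral-domain forms of a polynomial system can be sketched as follows. The example assumes NumPy, an undirected circular graph of a hypothetical size, and arbitrary coefficients `h`; the function name `graph_filter` is introduced here only for illustration. The output is accumulated by repeated multiplications by the Laplacian, so no eigendecomposition is needed for the filtering itself.

```python
import numpy as np

def graph_filter(L, x, h):
    """Return sum_m h[m] * L^m x using repeated graph shifts (no GFT)."""
    y = np.zeros(len(x))
    shifted = np.asarray(x, dtype=float)   # L^0 x
    for h_m in h:
        y += h_m * shifted
        shifted = L @ shifted              # next graph-shifted version
    return y

N = 10
shift = np.roll(np.eye(N), 1, axis=0)
L = 2 * np.eye(N) - shift - shift.T        # undirected circular graph
h = np.array([0.5, -0.2, 0.1])             # hypothetical system coefficients

x = np.sin(0.7 * np.arange(N))
y_vertex = graph_filter(L, x, h)

# Spectral form: Y = H(Lambda) X with H(lam) = sum_m h_m lam^m.
lam, U = np.linalg.eigh(L)
H = sum(h_m * lam**m for m, h_m in enumerate(h))
y_spectral = U @ (H * (U.T @ x))

# Locality: a unit pulse at vertex 5 spreads at most len(h) - 1 = 2 hops.
delta = np.zeros(N)
delta[5] = 1.0
y_delta = graph_filter(L, delta, h)
```

For a large sparse graph, the dense matrix `L` would normally be replaced by a sparse matrix so that each shift costs time proportional to the number of edges; that choice is outside this sketch.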

Graph signal filtering: graph convolution. Three approaches to filtering of a graph signal using a system whose transfer function is $H(\boldsymbol{\Lambda})$, with the elements $H(\lambda_k)$, $k = 0, 1, \dots, N-1$, on the diagonal, will be presented next.

- (i) The simplest approach is based on the direct employment of the GFT. It is performed by:

  - (a) Calculating the GFT of the input signal, $\mathbf{X} = \mathbf{U}^{-1}\mathbf{x}$,

  - (b) Finding the output signal GFT by multiplying $\mathbf{X}$ by $H(\boldsymbol{\Lambda})$, $\mathbf{Y} = H(\boldsymbol{\Lambda})\mathbf{X}$,

  - (c) Calculating the output (filtered) signal as the inverse GFT of $\mathbf{Y}$, $\mathbf{y} = \mathbf{U}\mathbf{Y}$.

The result of this operation,

$$\mathbf{y} = \mathbf{U} H(\boldsymbol{\Lambda}) \mathbf{U}^{-1}\mathbf{x},$$

is called a convolution of signals on a graph [25, 29].

However, this procedure could be computationally unacceptable for very large graphs.

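- (ii) A possibility to avoid the full-size transformation matrices for large graphs is to approximate the filter transfer function, $H(\lambda)$, at the positions of the eigenvalues, $\lambda = \lambda_k$, $k = 0, 1, \dots, N-1$, by a polynomial, $h_0 + h_1\lambda + \dots + h_{M-1}\lambda^{M-1}$, that is

$$h_0 + h_1\lambda_k + \dots + h_{M-1}\lambda_k^{M-1} = H(\lambda_k), \quad k = 0, 1, \dots, N-1.$$

Then the system of $N$ equations

$$\mathbf{V}_{\lambda}\mathbf{h} = \operatorname{diag}\{H(\boldsymbol{\Lambda})\}$$

is solved, in the least-squares sense, for the $M$ unknown parameters of the system, $\mathbf{h} = [h_0, h_1, \dots, h_{M-1}]^T$, with a given $M$, where $\operatorname{diag}\{H(\boldsymbol{\Lambda})\}$ is the column vector of diagonal elements of $H(\boldsymbol{\Lambda})$. The elements of the matrix $\mathbf{V}_{\lambda}$ are $\lambda_k^m$, $m = 0, 1, \dots, M-1$, $k = 0, 1, \dots, N-1$ (Vandermonde matrix).

This system can be solved efficiently for a relatively small $M$. Then, the implementation of the graph filter is performed in the vertex domain using the coefficients $h_m$ obtained in this way in (10) and the $M$-neighborhood of every considered vertex. Notice that the relation between the IGFT of $H(\boldsymbol{\Lambda})$ and the system coefficients $h_m$ is direct in the classical DFT case only, while it is more complex in the general graph case [25].

For large $M$, the solution to the system of equations in (12) for the unknown parameters $h_m$ can be numerically unstable due to the large values of the powers $\lambda_k^{M-1}$.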

- (iii) Another approach that allows us to avoid the direct GFT calculation in the implementation of graph filters is to approximate the given transfer function, $H(\lambda)$, by a polynomial, $P(\lambda)$, using the continuous variable $\lambda$.

This approximation does not guarantee that the transfer function and its polynomial approximation will be close at the discrete set of points $\lambda = \lambda_k$, $k = 0, 1, \dots, N-1$. The maximal absolute deviation of the polynomial approximation can be kept as small as possible using the so-called min–max polynomials. After the polynomial approximation is obtained, the output of the graph system is calculated using (10), that is

$$\mathbf{y} = P(\mathbf{L})\mathbf{x} = \sum_{m=0}^{M-1} h_m\mathbf{L}^m\mathbf{x}.$$

This approach will be presented in Section 5.
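The first two filtering approaches above can be compared in a few lines. The sketch below assumes NumPy, a hypothetical random undirected graph, a hypothetical smooth low-pass transfer function, and a polynomial with `M = 6` coefficients; none of these choices come from the paper. The coefficients are fitted at the eigenvalue positions by least squares with a Vandermonde matrix, and the filter is then applied in the vertex domain and compared with direct GFT-based filtering.

```python
import numpy as np

rng = np.random.default_rng(0)
N = 30
# Hypothetical random undirected unweighted graph (edge probability 0.2).
upper = np.triu((rng.random((N, N)) < 0.2).astype(float), k=1)
W = upper + upper.T
L = np.diag(W.sum(axis=1)) - W
lam, U = np.linalg.eigh(L)
lam_max = lam[-1]

H_target = np.exp(-2.0 * lam / lam_max)    # hypothetical low-pass response

# Approach (ii): least-squares fit of h at the eigenvalues.
M = 6
V = np.vander(lam, M, increasing=True)     # V[k, m] = lam_k ** m
h, *_ = np.linalg.lstsq(V, H_target, rcond=None)

x = rng.standard_normal(N)

# Approach (i): direct GFT-based filtering.
y_gft = U @ (H_target * (U.T @ x))

# Vertex-domain implementation with the fitted coefficients.
y_poly = np.zeros(N)
shifted = x.copy()
for h_m in h:
    y_poly += h_m * shifted
    shifted = L @ shifted

rel_error = np.linalg.norm(y_poly - y_gft) / np.linalg.norm(x)
```

The eigendecomposition is used here only to fit and to validate the coefficients. Approach (iii) avoids it entirely by fitting the polynomial over the continuous interval of eigenvalues, which requires only an estimate of the largest eigenvalue.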

Case study examples. In the next example we shall introduce two graphs and signals on these graphs, which will be used as benchmark models for the analysis that follows.

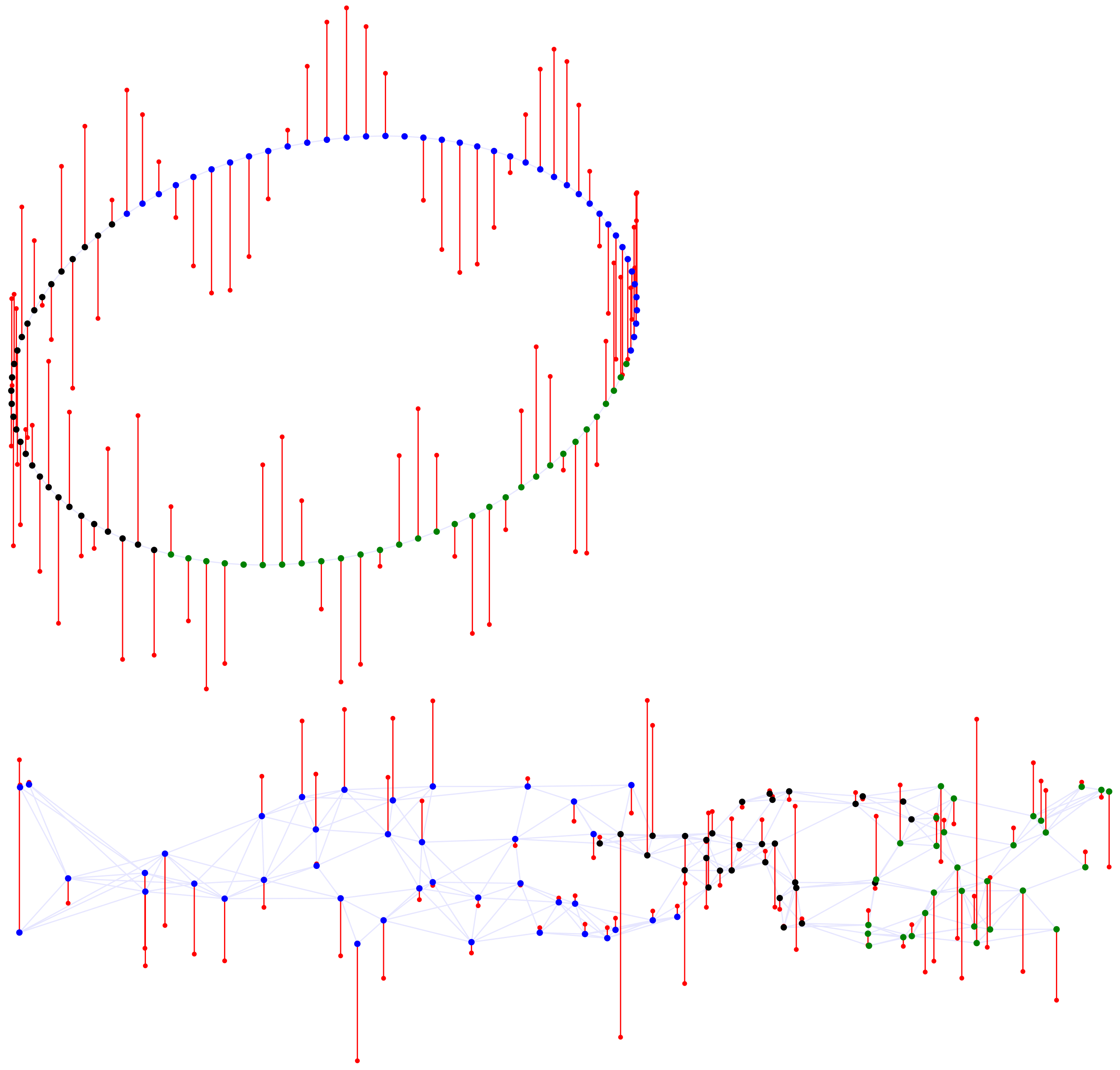

Example 1. Two graphs are shown in Figure 2. A circular undirected unweighted graph represents the domain for classical signal analysis, with each of vertices (instants) being connected to the predecessor and successor vertices (top panel). A general form of a graph, with the same number of vertices, is shown in Figure 2 (bottom). These two graphs will be further used to demonstrate classical and graph signal processing principles and relations. A signal on the circular graph is shown in Figure 3 (top). We have formed this synthetic signal using parts of three graph Laplacian eigenvectors (corresponding to three harmonics in classical analysis). For the vertices in the subset , the eigenvector (harmonic) with the spectral index was used. For the subset , the eigenvector , with , is used to define the signal. The eigenvector with spectral index was used to define the signal on the remaining set of vertices, .

A signal on the general graph is shown in Figure 3 (bottom). It is also composed of parts of three Laplacian eigenvectors. For the vertices in , the eigenvector with spectral index has been used. For the subset of vertices , containing the vertex indices ranging from to , the eigenvector with was used to define the signal. Within the subset, , the spectral index was . Supports of these three components are designated by different vertex colors.

The local smoothness index, $\lambda(n)$, which corresponds to the speed of change of the corresponding signal components, is shown in Figure 4 for the presented graph signals. In classical signal analysis, the local smoothness is related to the instantaneous frequency, $\omega(n)$, of each signal component as $\lambda(n) \approx \omega^2(n)$ for small frequencies.

Other graph shift operators. Finally, notice that in relation (10) we used the graph Laplacian, $\mathbf{L}$, as the shift operator. In addition to the adjacency matrix, $\mathbf{A}$, as another common choice for the shift operation, the normalized version of the adjacency matrix ($\mathbf{A}/\lambda_{\max}$), the normalized graph Laplacian ($\mathbf{D}^{-1/2}\mathbf{L}\mathbf{D}^{-1/2}$), or the random walk (also called diffusion) matrix ($\mathbf{D}^{-1}\mathbf{W}$) may be used as graph shift operators, producing the corresponding spectral forms of the systems for graph signals [30].

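Remark 1. The normalized graph Laplacian, $\mathbf{L}_N = \mathbf{D}^{-1/2}\mathbf{L}\mathbf{D}^{-1/2}$, is used as a shift operator in the first-order system to define the convolution operation and the convolution layer in graph convolutional neural networks (GCNN). Its form is

$$\mathbf{y} = h_0\mathbf{L}_N^0\mathbf{x} + h_1\mathbf{L}_N^1\mathbf{x}. \tag{14}$$

Using this relation, the input, $\mathbf{x}_c^{(l)}$, and the output, $\mathbf{y}_c^{(l)}$, of the $c$-th channel of the $l$-th convolution layer in the GCNN are implemented as

$$\mathbf{y}_c^{(l)} = w_{0c}^{(l)}\mathbf{L}_N^0\mathbf{x}_c^{(l)} + w_{1c}^{(l)}\mathbf{L}_N^1\mathbf{x}_c^{(l)},$$

where the weight $w_{0c}^{(l)}$, in the $c$-th channel of the $l$-th convolution layer, corresponds to the weight $h_0$ in (14) and $w_{1c}^{(l)}$ corresponds to $h_1$ in (14).

4. Spectral Domain Localized Graph Fourier Transform (LGFT)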

The classical short-time Fourier transform (STFT) admits time–frequency localization of the analyzed signal using the Fourier transform of the windowed and shifted versions of the signal. This principle can also be applied in graph signal processing [29, 31]. However, since it requires sophisticated definitions of the vertex shift operation on the signals, the spectral domain localization is more commonly used in vertex–frequency analysis. Although the spectral domain approach is possible and well-defined in classical analysis, it has rarely been used for time–frequency analysis of signals. The time–frequency localization of a signal in the spectral domain is obtained using a spectral domain localization window, which is shifted in frequency, while the time shift is achieved by the modulation of the windowed Fourier transform of the signal.

We shall use the spectral approach to perform vertex–frequency localization. The graph Fourier transform localized in the spectral domain (LGFT) is defined as the inverse graph Fourier transform of the graph Fourier transform, $X(p)$, multiplied by a spectral domain window, $H(p-k)$. The spectral domain window is nonzero at and around the spectral index $k$. Therefore, the element-wise LGFT is calculated using

$$S(m,k) = \sum_{p=0}^{N-1} X(p)\, H(p-k)\, u_p(m). \tag{16}$$

The shift is here performed in the well-ordered spectral domain, along the spectral index $k$, instead of the more complex signal shift in the vertex domain. As will be shown, this form of the vertex–frequency analysis also allows vertex-localized implementations, even without calculating the graph Fourier transform of the signal, which is of crucial importance in the case of very large graphs.

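Remark 2. The counterpart of (16) in classical time–frequency analysis is the well-known short-time Fourier transform (STFT) [12]

$$S(n,k) = \frac{1}{\sqrt{N}}\sum_{p=0}^{N-1} X(p)\, H(p-k)\, e^{j2\pi np/N},$$

where $H(k)$ is a frequency domain localization window.

The LGFT defined in the spectral domain by (16) can be realized by using bandpass transfer functions, denoted by $H_k(\lambda_p) = H(p-k)$. Then the LGFT definition is given by

$$S(m,k) = \sum_{p=0}^{N-1} X(p)\, H_k(\lambda_p)\, u_p(m). \tag{17}$$

The transfer function in (17), $H_k(\lambda_p)$, is centered (shifted) at a spectral index, $k$, by definition. The vertex–frequency domain kernel, $\mathcal{H}_{m,k}(n)$, of the form

$$\mathcal{H}_{m,k}(n) = \sum_{p=0}^{N-1} H_k(\lambda_p)\, u_p(m)\, u_p(n)$$

is obtained from

$$S(m,k) = \sum_{p=0}^{N-1}\sum_{n=1}^{N} x(n)\, u_p(n)\, H_k(\lambda_p)\, u_p(m) = \sum_{n=1}^{N} x(n)\, \mathcal{H}_{m,k}(n).$$

Remark 3. In classical time–frequency analysis the elements of the inverse DFT matrix are equal to $u_p(n) = e^{j2\pi np/N}/\sqrt{N}$ and $H_k(\lambda_p) = H(p-k)$ are the bandpass transfer functions, with the kernel

$$\mathcal{H}_{m,k}(n) = \frac{1}{N}\sum_{p=0}^{N-1} H(p-k)\, e^{j2\pi (m-n)p/N}.$$

The STFT is then defined as

$$S(m,k) = \sum_{n=1}^{N} x(n)\, \mathcal{H}_{m,k}(n).$$

The matrix form of the vertex–frequency spectrum (17) is

$$\mathbf{s}_k = \mathbf{U} H_k(\boldsymbol{\Lambda})\mathbf{X} = \mathbf{U} H_k(\boldsymbol{\Lambda})\mathbf{U}^T\mathbf{x}$$

or using vector/matrix notation

$$\mathbf{s}_k = H_k(\mathbf{L})\mathbf{x},$$

where the column vector whose elements are $S(m,k)$, $m = 1, 2, \dots, N$, is denoted by $\mathbf{s}_k$.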
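The spectral-domain LGFT can be sketched directly from its definition: multiply the GFT of the signal by each band's transfer function and transform back. The example below assumes NumPy, a hypothetical four-vertex graph, and hypothetical Gaussian-shaped bands that are normalized so that they sum to one at every eigenvalue; the function name `lgft` and the band shapes are illustrative choices, not the paper's.

```python
import numpy as np

def lgft(x, U, H):
    """LGFT with band responses H[k, p] = H_k(lam_p); returns S[m, k]."""
    X = U.T @ x                  # GFT of the signal
    return U @ (H * X).T         # column k is U @ (H_k(lam) * X)

W = np.array([[0.0, 1.0, 0.5, 0.0],
              [1.0, 0.0, 1.0, 0.0],
              [0.5, 1.0, 0.0, 2.0],
              [0.0, 0.0, 2.0, 0.0]])
L = np.diag(W.sum(axis=1)) - W
lam, U = np.linalg.eigh(L)

# Hypothetical bands: K Gaussian bumps along the eigenvalue axis,
# normalized so that the responses sum to one at each eigenvalue.
K = 3
centers = np.linspace(lam[0], lam[-1], K)
sigma = (lam[-1] - lam[0]) / K
H = np.exp(-(lam[None, :] - centers[:, None])**2 / (2 * sigma**2))
H /= H.sum(axis=0, keepdims=True)

x = np.array([1.0, -2.0, 0.5, 3.0])
S = lgft(x, U, H)                 # shape (N, K): vertex index m, band index k

# Equivalent kernel (matrix) form for one band: s_k = U diag(H_k) U^T x.
s_1 = U @ np.diag(H[1]) @ U.T @ x
```

Because the bands sum to one at every eigenvalue, summing `S` over the band index returns the original signal.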

4.1. Binomial Decomposition

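Consider the simplest decomposition, in which the total spectral domain of a graph signal is divided into two bands. These two bands, indexed by $k = 0$ and $k = 1$, cover the low-pass part and the high-pass part of the spectral content of the signal, respectively. First we will use linear functions of the eigenvalue, $\lambda_p$, to achieve these properties

$$H_0(\lambda_p) = 1 - \frac{\lambda_p}{\lambda_{\max}}, \qquad H_1(\lambda_p) = \frac{\lambda_p}{\lambda_{\max}}. \tag{23}$$

Using the relation between (10) and (11) we can conclude that the vertex-domain implementation of this kind of LGFT analysis is very simple

$$\mathbf{s}_0 = \left(\mathbf{I} - \frac{1}{\lambda_{\max}}\mathbf{L}\right)\mathbf{x}, \qquad \mathbf{s}_1 = \frac{1}{\lambda_{\max}}\mathbf{L}\mathbf{x},$$

and for each vertex, $m$, the calculation of $S(m,0)$ and $S(m,1)$ requires only the combination of the signal at this vertex and its neighboring vertices, to calculate the elements of $\mathbf{L}\mathbf{x}$.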

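Remark 4. The classical time–frequency analysis counterpart of (23) is obtained using the eigenvalue-to-frequency relation for the circular undirected graph, $\lambda = 4\sin^2(\omega/2)$ with $\lambda_{\max} = 4$, to produce low-pass and high-pass type transfer functions

$$H_0(\omega) = 1 - \sin^2(\omega/2) = \cos^2(\omega/2), \qquad H_1(\omega) = \sin^2(\omega/2),$$

as shown in Figure 5 (top). These spectral transfer functions are dual to the classical Hann (raised cosine) window forms, used for signal localization in the time domain.

To improve the spectral resolution and to divide the spectral range into more than two bands, we can use the same transfer function forms by applying them to the low-pass part of the signal and dividing the spectral content of this part of the signal into its low-pass part and its high-pass part. In classical signal processing, two common approaches are applied:

- (a) The high-pass part is kept unchanged, while the low-pass part is split. This approach corresponds to the wavelet transform or the frequency-varying classical analysis.

- (b) The high-pass part is also split into its low-pass and high-pass parts to keep the frequency resolution constant for all frequency bands.

Next, we consider these two approaches for the division of frequency bands.

- (a) In a two-scale wavelet-like analysis we keep the high-pass part, $H_1(\lambda) = \lambda/\lambda_{\max}$, while the low-pass part, $H_0(\lambda) = 1 - \lambda/\lambda_{\max}$, is split into its low-pass part, $H_0(\lambda)H_0(\lambda)$, and its high-pass part, $H_0(\lambda)H_1(\lambda)$, using the same transfer functions, as

$$H_0(\lambda)H_0(\lambda) = \left(1 - \frac{\lambda}{\lambda_{\max}}\right)^2, \qquad H_0(\lambda)H_1(\lambda) = \left(1 - \frac{\lambda}{\lambda_{\max}}\right)\frac{\lambda}{\lambda_{\max}}.$$

For the third scale step we would keep $H_1(\lambda)$ and the high-pass part of the scale two step, $H_0(\lambda)H_1(\lambda)$, and then split the low-pass part in scale two, $H_0^2(\lambda)$, into its low-pass part, $H_0^3(\lambda)$, and its high-pass part, $H_0^2(\lambda)H_1(\lambda)$, using

$$H_0^3(\lambda) = \left(1 - \frac{\lambda}{\lambda_{\max}}\right)^3, \qquad H_0^2(\lambda)H_1(\lambda) = \left(1 - \frac{\lambda}{\lambda_{\max}}\right)^2\frac{\lambda}{\lambda_{\max}}.$$

This process could be continued until the desired scale (frequency resolution) is reached.

- (b) For uniform frequency bands, both the low-pass and the high-pass bands are split in the same way, to obtain

$$H_0(\lambda) = \left(1 - \frac{\lambda}{\lambda_{\max}}\right)^2, \qquad H_1(\lambda) = 2\left(1 - \frac{\lambda}{\lambda_{\max}}\right)\frac{\lambda}{\lambda_{\max}}, \qquad H_2(\lambda) = \left(\frac{\lambda}{\lambda_{\max}}\right)^2. \tag{24}$$

Notice that this kind of spectral band division produces the same result twice: once when the original low-pass part is multiplied by the high-pass function, and again when the original high-pass part is multiplied by the low-pass function. This is the reason why the constant value of 2 appears in the new middle pass-band, $H_1(\lambda) = 2(1 - \lambda/\lambda_{\max})\lambda/\lambda_{\max}$.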

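The bands in relation (24) can be obtained as the terms of the binomial expression $\big((\mathbf{I} - \mathbf{L}/\lambda_{\max}) + \mathbf{L}/\lambda_{\max}\big)^2\mathbf{x}$. If we continue to the next level, by multiplying all the elements in (24) by the low-pass part, $(1 - \lambda/\lambda_{\max})$, and then by the high-pass part, $\lambda/\lambda_{\max}$, after grouping the same terms, we would obtain the signal bands of the same form as the terms of the binomial $\big((\mathbf{I} - \mathbf{L}/\lambda_{\max}) + \mathbf{L}/\lambda_{\max}\big)^3\mathbf{x}$. We can conclude that the division can be performed into $K+1$ bands corresponding to the terms of a binomial form of order $K$,

$$\big((\mathbf{I} - \mathbf{L}/\lambda_{\max}) + \mathbf{L}/\lambda_{\max}\big)^K\mathbf{x} = \mathbf{x}. \tag{25}$$

The transfer function of the $k$-th, $k = 0, 1, \dots, K$, term has the vertex domain form

$$H_k(\mathbf{L}) = \binom{K}{k}\left(\mathbf{I} - \frac{1}{\lambda_{\max}}\mathbf{L}\right)^{K-k}\left(\frac{1}{\lambda_{\max}}\mathbf{L}\right)^{k}.$$

Of course, the sum of all parts of the signal, filtered by $H_k(\mathbf{L})$, produces the reconstruction relation, $\sum_{k=0}^{K} H_k(\mathbf{L})\mathbf{x} = \mathbf{x}$, which is obvious from the identity in (25), that is, from $\sum_{k=0}^{K} H_k(\mathbf{L}) = \mathbf{I}$.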
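The binomial decomposition can be sketched in the vertex domain using only multiplications by the Laplacian. The example below assumes NumPy, a hypothetical four-vertex graph, an order `K = 4` (giving `K + 1` bands in the indexing used in this sketch), and the largest eigenvalue as the normalizing constant. The function name `binomial_lgft` is illustrative. Each band is the signal passed `k` times through the high-pass factor and `K - k` times through the low-pass factor, scaled by the binomial coefficient.

```python
import numpy as np
from math import comb

def binomial_lgft(L, x, K, lam_max):
    """Bands s_k = C(K, k) (I - L/lam_max)^(K-k) (L/lam_max)^k x, k = 0..K."""
    N = len(x)
    low = np.eye(N) - L / lam_max     # low-pass factor
    high = L / lam_max                # high-pass factor
    S = np.empty((N, K + 1))
    for k in range(K + 1):
        s = np.asarray(x, dtype=float)
        for _ in range(k):
            s = high @ s
        for _ in range(K - k):
            s = low @ s
        S[:, k] = comb(K, k) * s
    return S

W = np.array([[0.0, 1.0, 0.5, 0.0],
              [1.0, 0.0, 1.0, 0.0],
              [0.5, 1.0, 0.0, 2.0],
              [0.0, 0.0, 2.0, 0.0]])
L = np.diag(W.sum(axis=1)) - W
lam, U = np.linalg.eigh(L)
lam_max = lam[-1]

K = 4
x = np.array([1.0, -2.0, 0.5, 3.0])
S = binomial_lgft(L, x, K, lam_max)

# Spectral-domain reference, used only to validate the vertex-domain result.
X = U.T @ x
t = lam / lam_max
S_ref = np.column_stack(
    [U @ (comb(K, k) * (1 - t)**(K - k) * t**k * X) for k in range(K + 1)]
)
```

In practice an upper bound on the largest eigenvalue can replace `lam_max`, so that the decomposition itself never needs the eigendecomposition.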

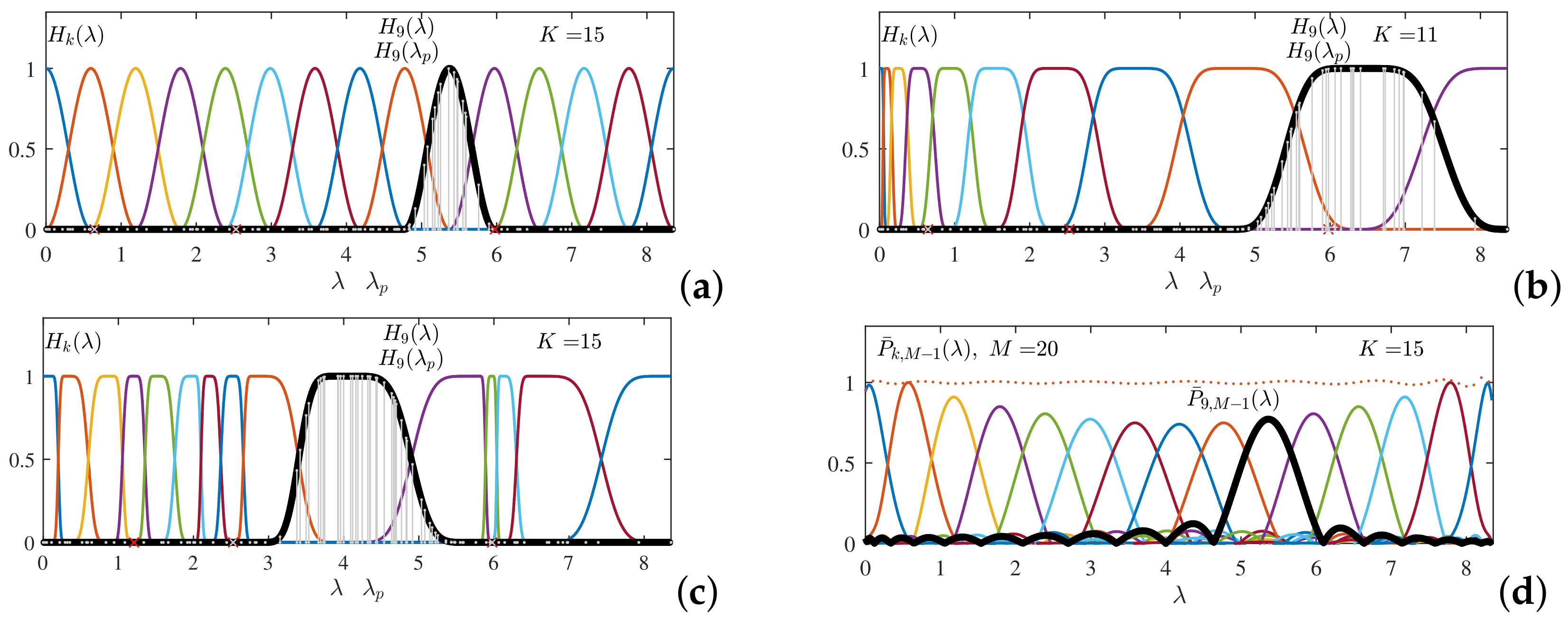

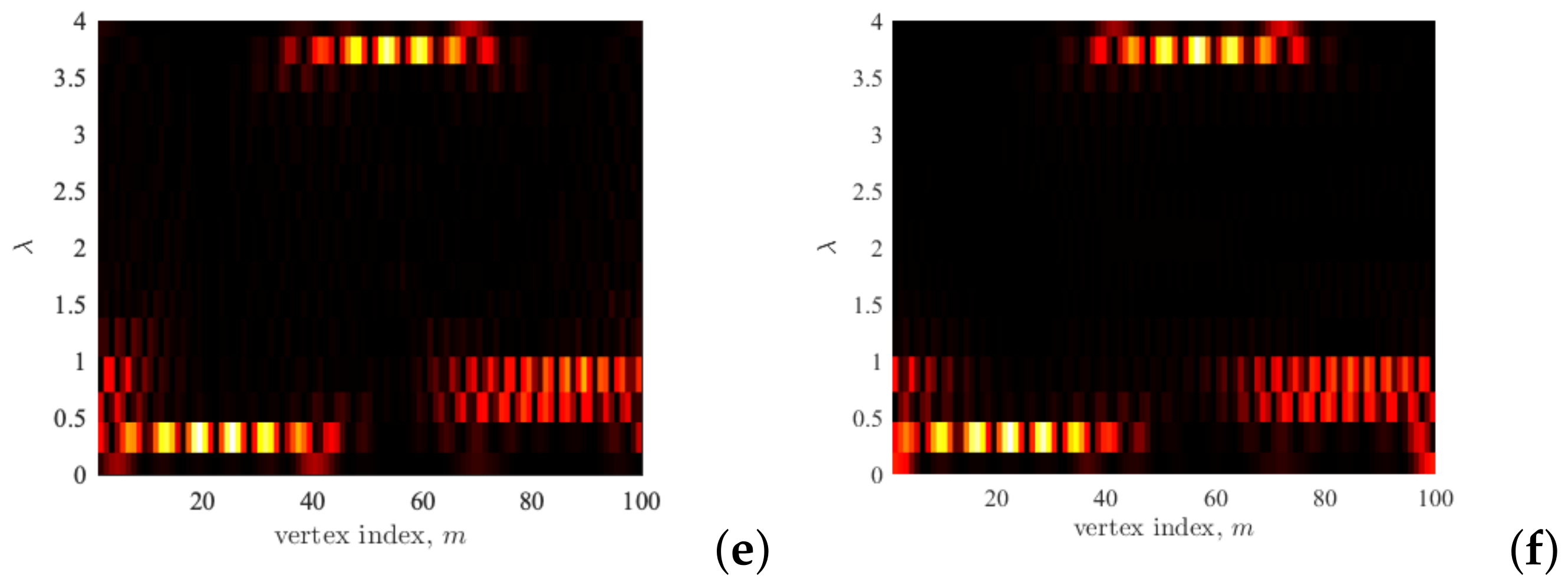

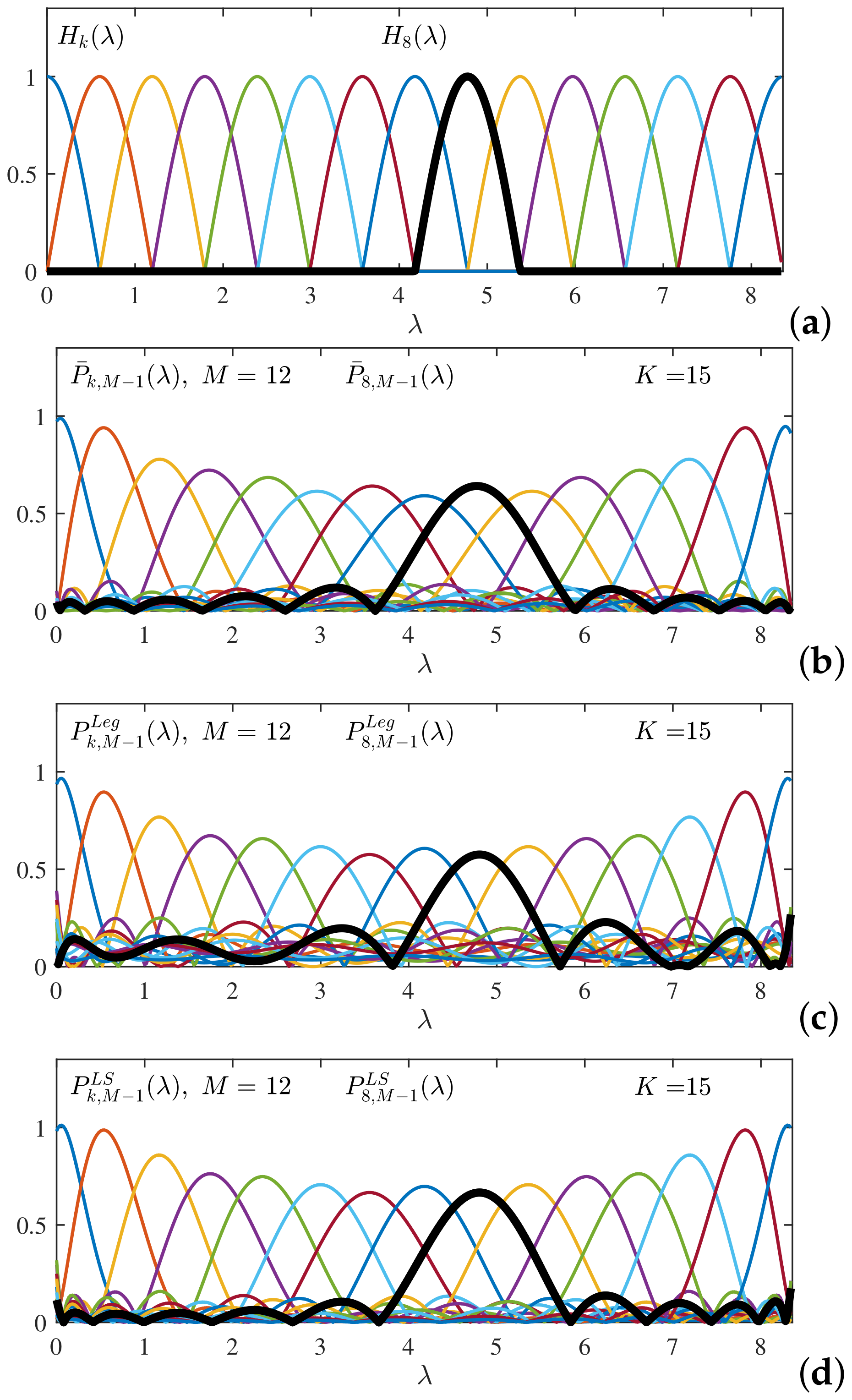

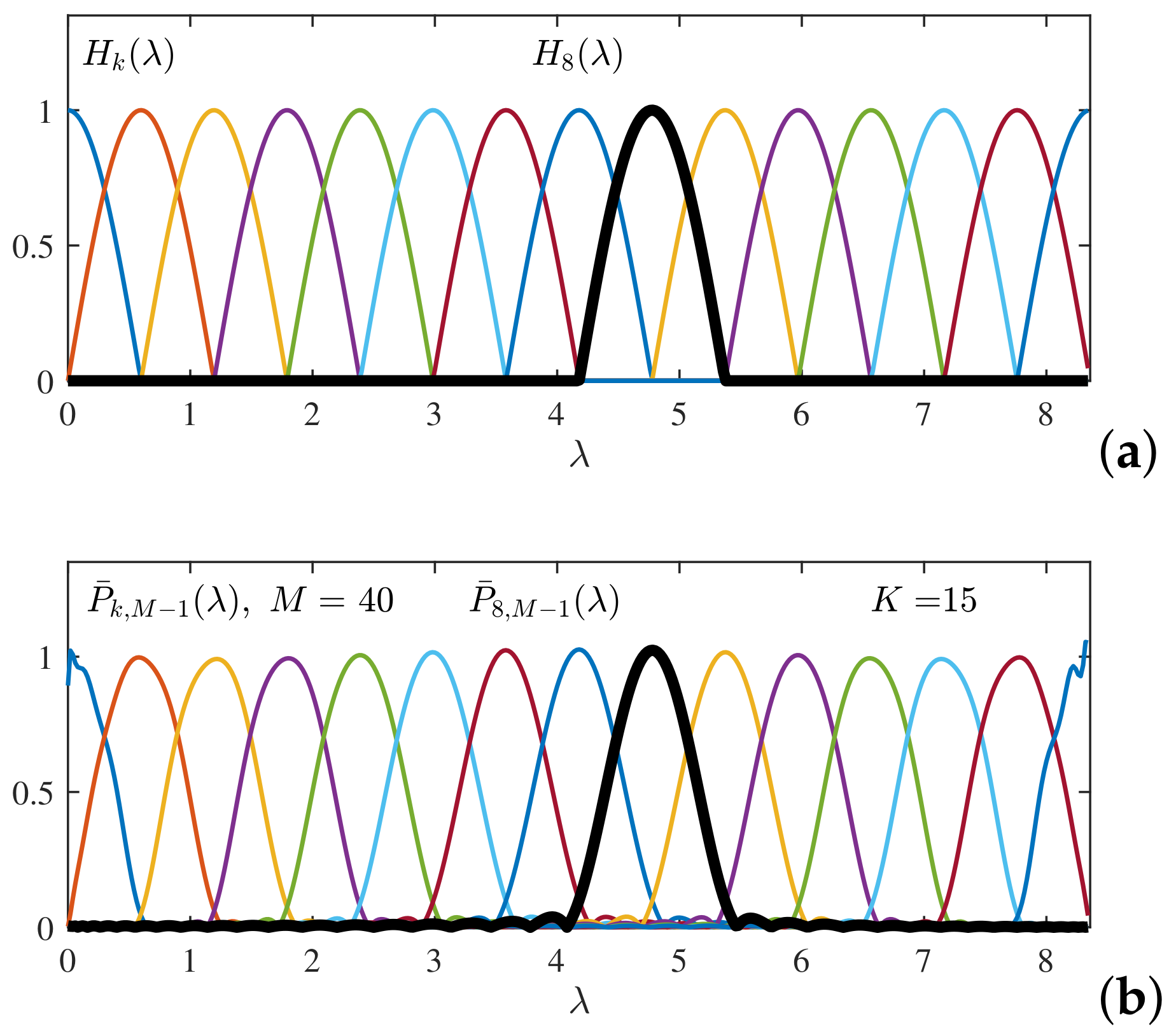

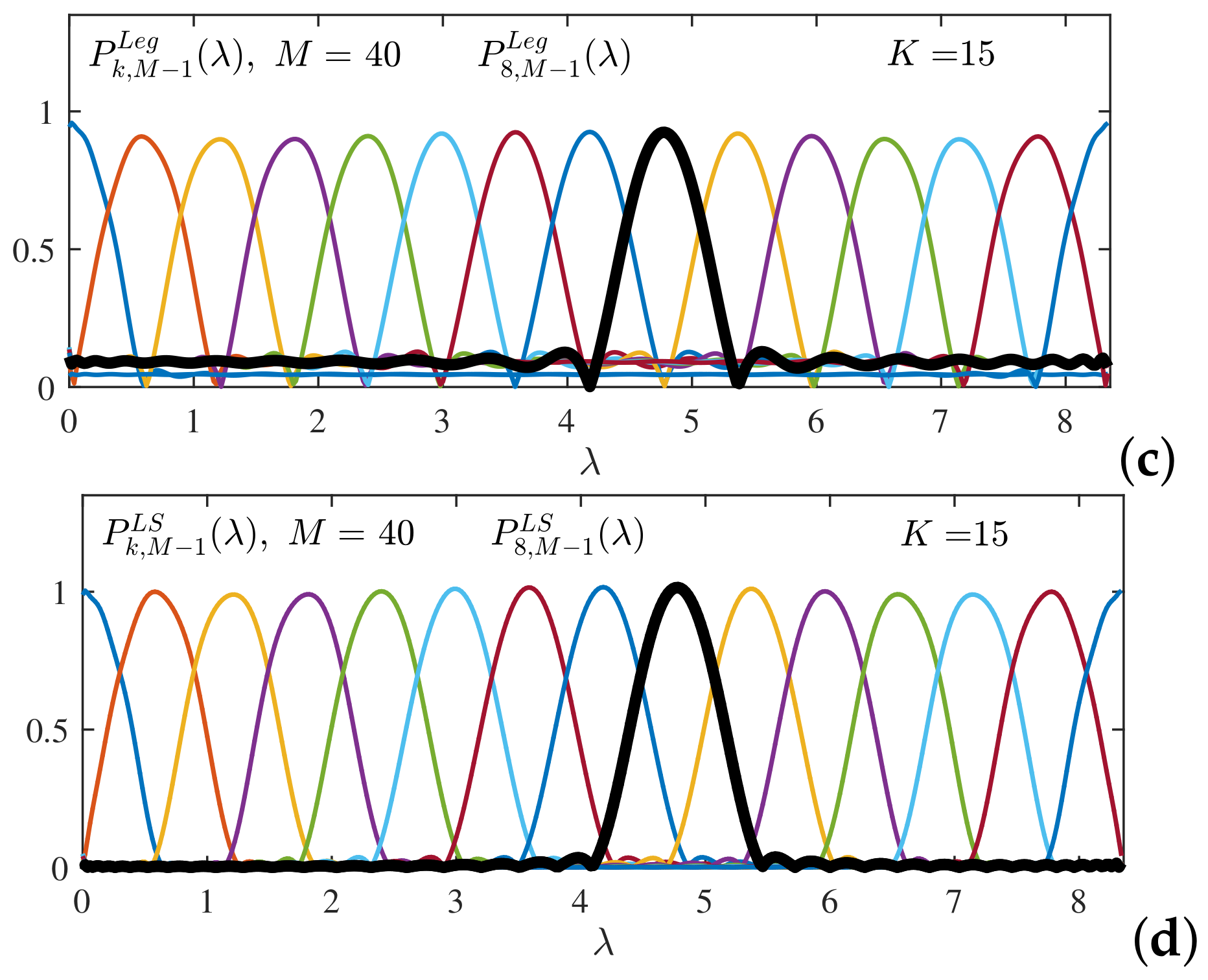

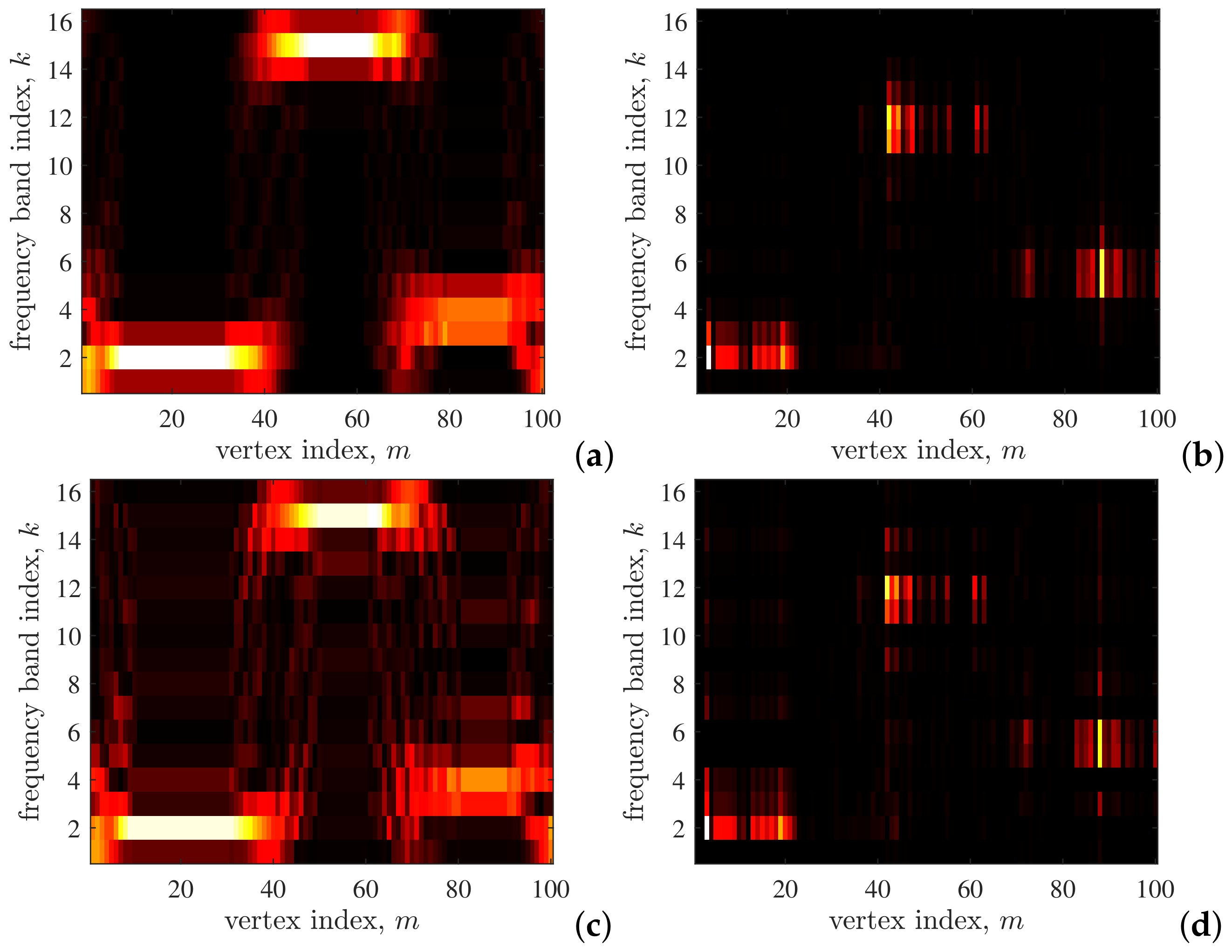

Example 2. The spectral domain transfer functions, $H_k(\lambda_p)$, which correspond to classical time–frequency processing and the binomial form terms, are shown in Figure 5 for three values of $K$. The last two panels (the third and the fourth panel) show the case with the third of these values. In the third panel, the amplitude of every transfer function is normalized. In the fourth panel, all transfer functions for this case are shown without the amplitude normalization.

Example 3. For a general graph, the spectral domain transfer functions, $H_k(\lambda_p)$, that can be obtained as the terms of the binomial form are shown in Figure 6 for three values of $K$. The last two panels (the third and the fourth panel) again show the case with the third of these values. In the third panel, the amplitude of every transfer function is normalized. In the fourth panel, all transfer functions for this case are shown without the amplitude normalization.

Example 4. The vertex-domain implementation is based on the multiplication of the signal, $\mathbf{x}$, by the graph Laplacian, $\mathbf{L}\mathbf{x}$. For each vertex $n$ this operation is localized to its one-neighborhood. After the signal $\mathbf{L}\mathbf{x}$ is calculated, the new signal is easily obtained as the graph Laplacian multiplication with the calculated signal, $\mathbf{L}(\mathbf{L}\mathbf{x})$, that is, $\mathbf{L}^2\mathbf{x}$. This procedure is continued up to any order, $\mathbf{L}^m\mathbf{x} = \mathbf{L}(\mathbf{L}^{m-1}\mathbf{x})$.

In classical time–frequency analysis the multiplication by the graph Laplacian of an undirected circular graph, with $\lambda_{\max} = 4$, is equivalent to the convolution of the signal with the impulse response of the finite impulse response filter

$$h(n) = -\delta(n+1) + 2\delta(n) - \delta(n-1)$$

that corresponds to the transfer function $H(\omega) = 2 - 2\cos\omega = 4\sin^2(\omega/2)$. It means that the high-pass and the low-pass parts of the signal are obtained as (the element-wise form of the Laplacian operator applied to the signal is given by (4))

$$s_1(n) = \frac{1}{4}\, x(n) * \big(-\delta(n+1) + 2\delta(n) - \delta(n-1)\big) = \frac{1}{4}\big(-x(n+1) + 2x(n) - x(n-1)\big)$$

and

$$s_0(n) = \frac{1}{4}\, x(n) * \big(\delta(n+1) + 2\delta(n) + \delta(n-1)\big) = \frac{1}{4}\big(x(n+1) + 2x(n) + x(n-1)\big),$$

where $*$ denotes the convolution operation. These convolutions can be repeated to produce a wavelet-like band distribution or a uniform distribution of frequency bands. If no downsampling is used, then a redundant representation of the signal is obtained, with each of these components containing the same number of samples as the original signal. However, it is possible to form a nonredundant version of this representation. Using downsampling with a factor of 2, the values

$$s_0(2n), \qquad s_1(2n)$$

are kept. The signal samples at the even-indexed instants, $2n$, are easily obtained as

$$x(2n) = s_0(2n) + s_1(2n),$$

while for the samples at the odd-indexed instants, $2n+1$, we have

$$x(2n+1) = 2\big(s_0(2n) - s_1(2n)\big) - x(2n-1).$$

Using an initial condition for one odd-indexed sample, we can reconstruct all odd-indexed samples. This reconstruction can be noise sensitive for large $N$, due to the repeated recursions in the last relation.
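The circular-graph case of this example can be sketched numerically. The code below assumes NumPy, a periodic signal of a hypothetical even length, a largest eigenvalue of 4, and that one odd-indexed sample (here the last one) is known as the initial condition. It forms the low-pass and high-pass parts with three-tap circular convolutions, keeps only the even-indexed values of both parts, and reconstructs the signal with the recursion that follows from those two convolutions.

```python
import numpy as np

N = 16
rng = np.random.default_rng(1)
x = rng.standard_normal(N)       # periodic test signal (arbitrary values)

x_prev = np.roll(x, 1)           # x(n-1), circular
x_next = np.roll(x, -1)          # x(n+1), circular

s1 = (-x_prev + 2 * x - x_next) / 4   # high-pass part: (L x) / 4
s0 = (x_prev + 2 * x + x_next) / 4    # low-pass part:  x - s1

# Nonredundant representation: keep the even-indexed samples only.
s0_even, s1_even = s0[::2], s1[::2]

x_rec = np.empty(N)
x_rec[::2] = s0_even + s1_even        # even samples: s0 + s1 = x

# Odd samples: s0(2n) - s1(2n) = (x(2n-1) + x(2n+1)) / 2, solved recursively.
x_rec[N - 1] = x[N - 1]               # assumed known initial condition
for i in range(N // 2 - 1):
    # For i = 0 the index 2*i - 1 = -1 wraps to the last sample (periodicity).
    x_rec[2 * i + 1] = 2 * (s0_even[i] - s1_even[i]) - x_rec[2 * i - 1]

# Check of the Laplacian form of the high-pass part.
shift = np.roll(np.eye(N), 1, axis=0)
L = 2 * np.eye(N) - shift - shift.T
```

Each step of the recursion reuses the previously reconstructed odd sample, so any error in the kept values propagates along the chain, which is the noise sensitivity mentioned in the text.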

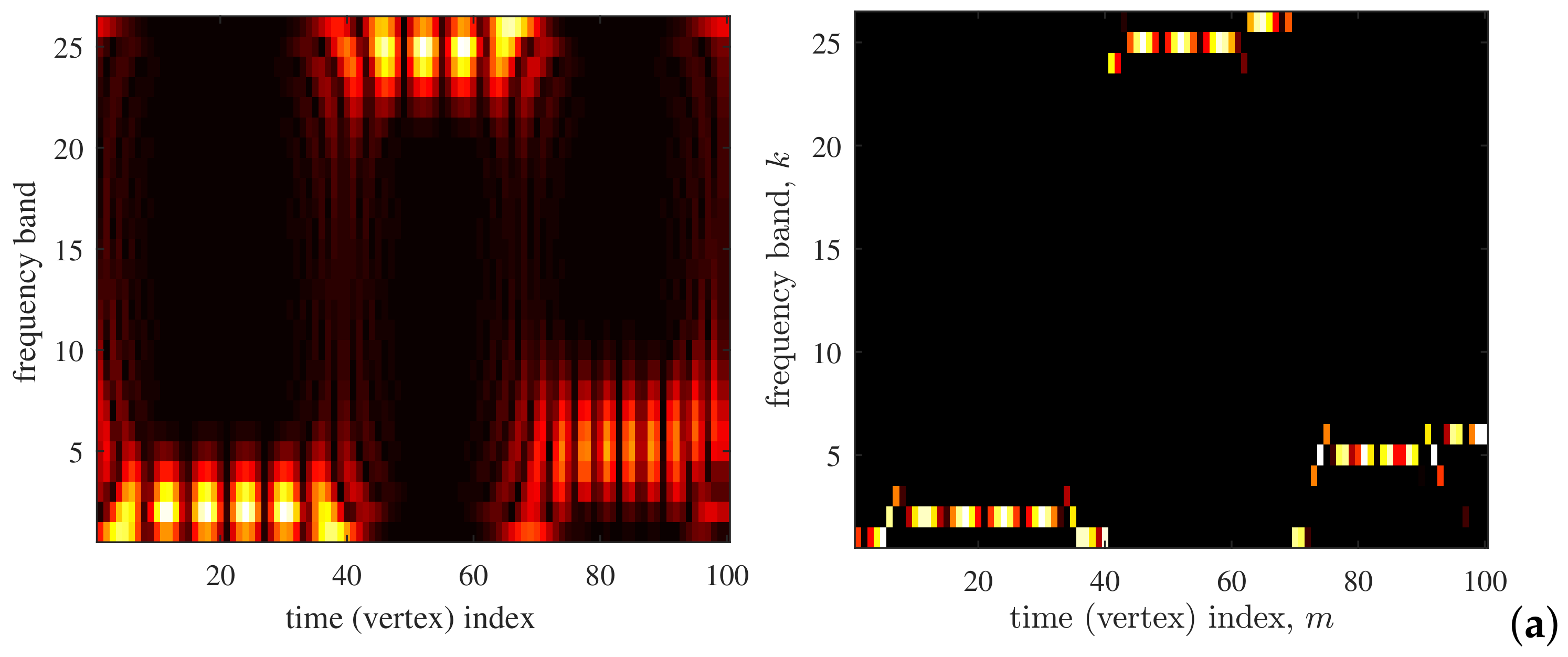

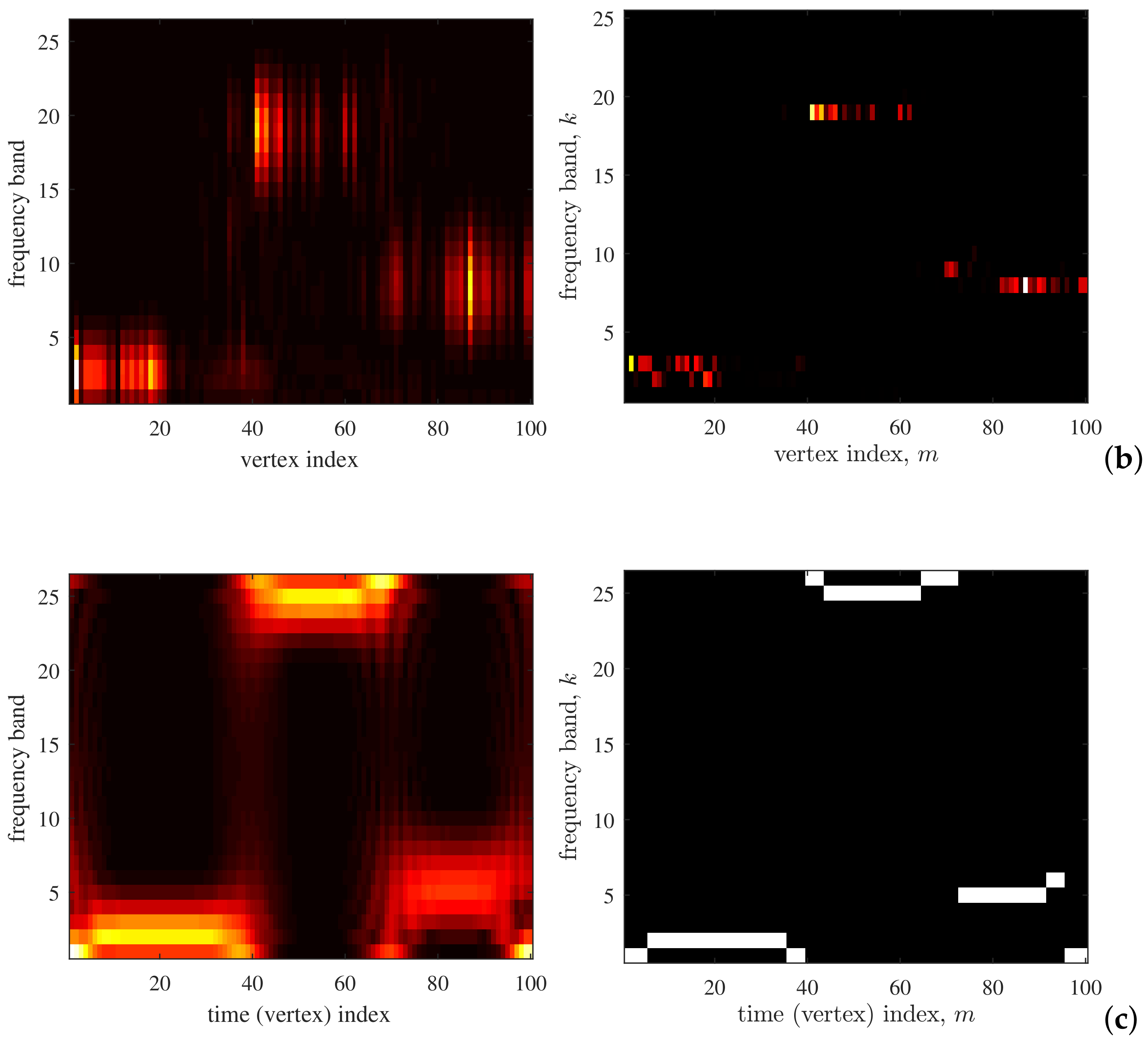

Example 5. Time–frequency and vertex–frequency analysis based on the binomial decomposition of the signals from Example 1 is performed in this example. The corresponding transfer functions for the time–frequency analysis (circular undirected graph, Figure 2 (top)) and vertex–frequency analysis (general graph, Figure 2 (bottom)) are shown in Figure 5 and Figure 6, respectively. The time–frequency representation of the three-harmonic signal from Figure 3 (top) is shown in Figure 7a, (left panel). Its reassigned version to the position of the maximum distribution value is given in Figure 7a (right panel). The same analysis for the general graph signal from Figure 3 (bottom) is shown in Figure 7b. Finally, in order to present the common complex-valued harmonic form, the signal is composed by adding two corresponding sine and cosine components (as in (7)) and forming the complex-valued components , within , , within , and , within . Time–frequency representation of this signal is given in Figure 7c. Selectivity of the transfer functions can be improved using higher order polynomials, instead of the linear functions in (

23). Assuming that the high-pass part should satisfy

and

, and that its derivative is zero at the initial interval point, (

), and the ending interval point (

), as well as that

, we can use the following polynomial forms

The vertex-domain implementation is performed according to

The same analysis can now be repeated as for (23). These polynomial forms will be revisited later in this paper.

4.2. Hann (Raised Cosine) Window Decomposition

We have presented the simplest decomposition into the low-pass and high-pass parts of a signal. However, the LGFT of the form (17) can be calculated using any other set of bandpass functions,

,

, as

The spline or raised cosine (Hann window) functions are commonly used as bandpass functions. To further illustrate the concepts, we will next consider transfer functions in the general form of shifted raised cosine functions. They are given by

where the spectral bands for

are defined with

and

,

. If spectral bands were uniform within

, the corresponding intervals are based on

with

and

. Here, only

is used to define the initial transfer function,

, while the interval

in (

28) is used for the last transfer function,

. The transfer functions with

uniform bands and

are shown in Figure 8 (top).

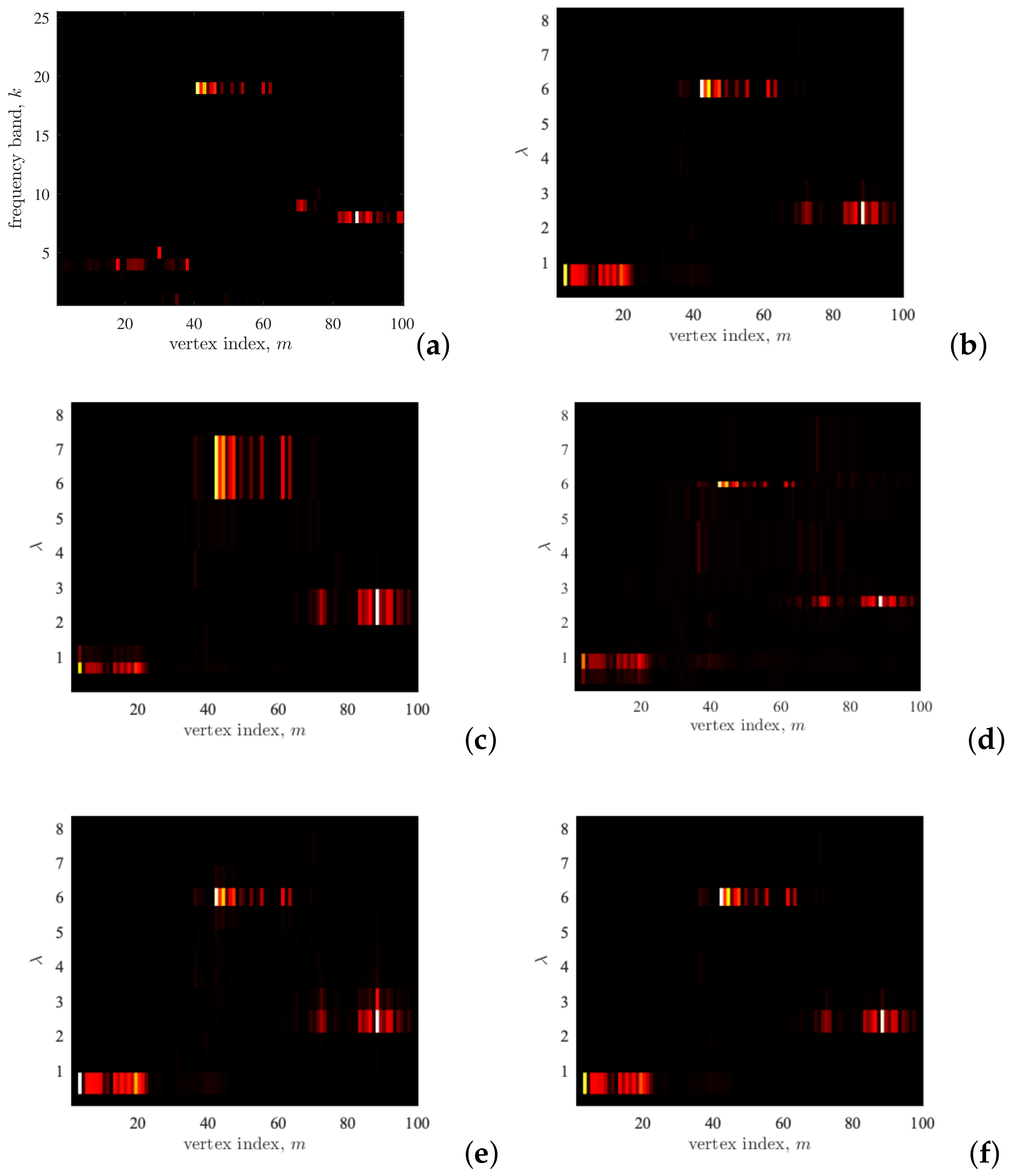

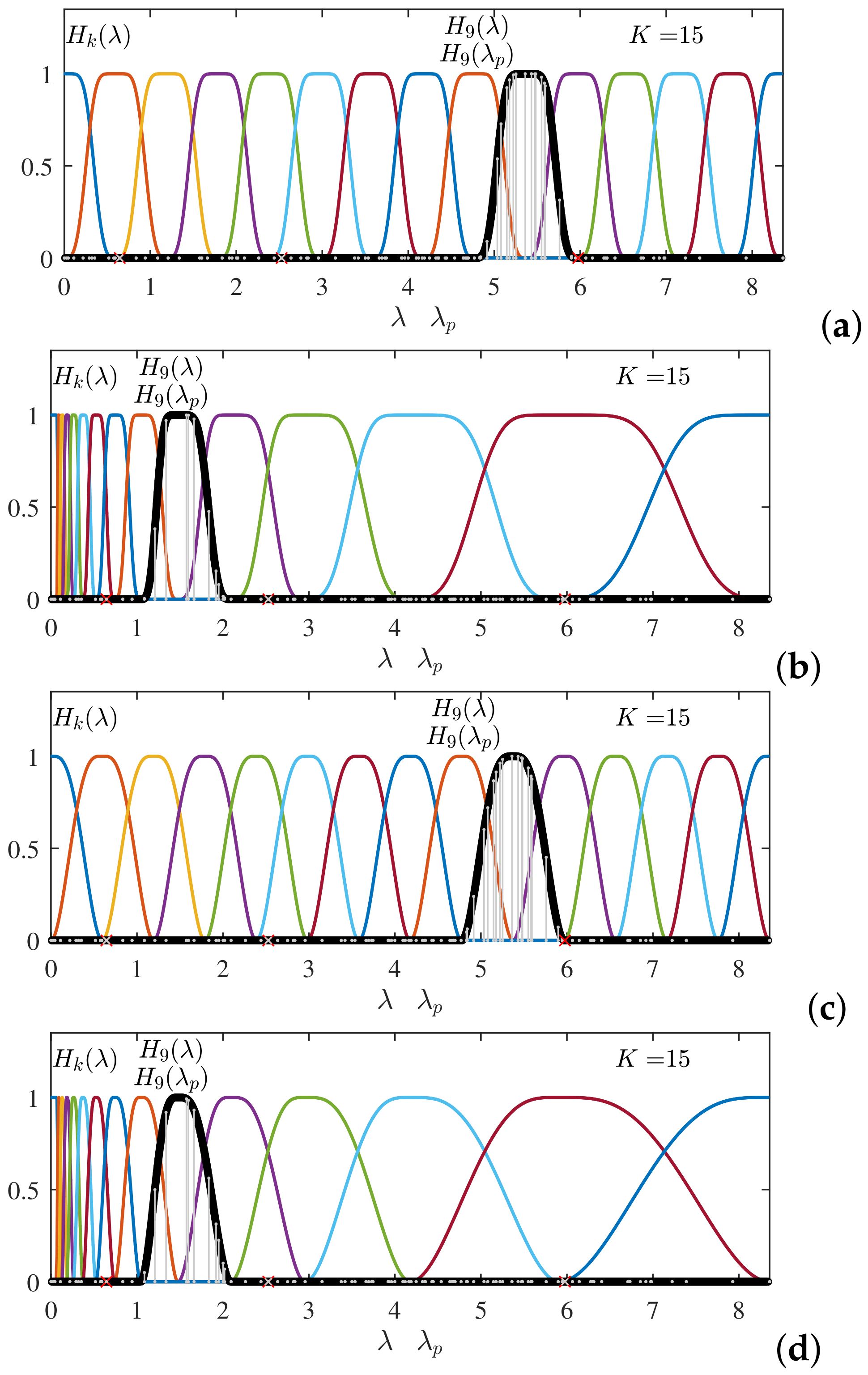

Example 6. The transfer functions (28) in the eigenvalue (smoothness index) spectral domain, , , , and the frequency domain for the classical analysis (graph analysis on circular undirected unweighted graphs) are shown in Figure 8 (top) and (bottom), respectively, for , and . Notice that the relation between these two domains is nonlinear through .

Example 7. The transfer functions for various widths of the Hann window are shown in Figure 9. The most common case with uniform division of the spectral domain, as defined by (29), is given in Figure 9a. Two forms of the spectral dependent widths are shown in Figure 9b,c. While the widths defined by the constants in (29) increase as in the wavelet transform case, the widths of the transfer functions in Figure 9c are kept narrow around the spectral indices of signal components, in order to achieve finer spectral resolution in these regions (signal adaptive approach). Finally, Figure 9d shows polynomial approximations of the transfer functions from Figure 9a, which will be discussed later.

Example 8. The transfer functions with various widths of the Hann window from Figure 9 are used for time–frequency representation of the signal on the circular graph from Example 1. The results are shown in Figure 10.

Example 9. In this example, the same transfer functions from Figure 5 are used for vertex–frequency representation of the signal on the general graph from Example 1. The results are shown in Figure 11.

4.3. General Window Form Decomposition—OLA condition

The spectral transfer functions in the form of the raised cosine transfer function (28) are characterized by

We may use any common window for the decomposition which satisfies this relation. Next, we will list some of these windows:

A combination of the raised cosine windows. After one set of the raised cosine windows is defined, we may use another set with different constants

,

,

and overlap it with the existing set. If the window values are divided by

then the resulting window satisfies (30). In this way, we can increase the number of different

overlapping windows.

Hamming window can be used in the same way as in (28). The only difference is that the Hamming windows sum up to

in the overlapping interval, meaning that the result should be divided by this constant.

Bartlett (triangular) window with the same constants

,

,

as in (28) satisfies the condition (30), along with combinations with different sets of

,

,

to increase overlapping.

Tukey window has a flat part in the middle and the cosine form in the transition interval. It can also be used with appropriately defined , , to take into account the flat (constant) window range.
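The OLA property of the listed windows can be verified on a dense spectral grid. The sketch below is illustrative only: it assumes uniformly spaced band centers on [0, λ_max] with half-overlapping supports, which may differ in detail from the constants used in (28) and (29). The function `band_windows` and its parameters are hypothetical names.

```python
import numpy as np

def band_windows(lam, lam_max, P, shape):
    """P half-overlapping spectral windows with uniform centers (assumed layout)."""
    centers = np.linspace(0.0, lam_max, P)
    delta = lam_max / (P - 1)
    d = (lam[None, :] - centers[:, None]) / delta  # normalized offset from center
    inside = np.abs(d) <= 1.0
    if shape == "hann":
        w = 0.5 * (1.0 + np.cos(np.pi * d))
    elif shape == "bartlett":
        w = 1.0 - np.abs(d)
    elif shape == "hamming":
        w = 0.54 + 0.46 * np.cos(np.pi * d)
    else:
        raise ValueError(shape)
    return np.where(inside, w, 0.0)

lam_max, P = 4.0, 5
# Grid chosen so that it never falls exactly on a band center or band edge.
lam = (np.arange(1000) + 0.5) * lam_max / 1000

ola = {s: band_windows(lam, lam_max, P, s).sum(axis=0)
       for s in ("hann", "bartlett", "hamming")}
for shape, total in ola.items():
    print(shape, total.min(), total.max())
```

Under these assumptions the Hann and Bartlett sums are constant and equal to one, while the Hamming sum is a different constant, so the Hamming bands need a single rescaling to satisfy the same relation.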

4.4. Frame Decomposition—WOLA Condition

For the signal reconstruction, using the kernel orthogonality and the frames concept, the windows should satisfy the condition

The graph signal reconstruction can be performed based on (30) and (31), as discussed in more detail in Section 6.

Several windows that satisfy the condition in (31) will be presented next:

Sine window is obtained as the square root of the raised cosine window in (28). Obviously, this window will satisfy (31). Its form is

A window that satisfies (31) can be formed from any window in the previous section, by taking its square root.
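The square-root construction can be illustrated with a short numerical check. This is a sketch under an assumed band layout (uniform centers, half-overlapping supports); the variable names are hypothetical and the exact constants of (28) may differ.

```python
import numpy as np

lam_max, P = 4.0, 5
centers = np.linspace(0.0, lam_max, P)
delta = lam_max / (P - 1)
lam = (np.arange(1000) + 0.5) * lam_max / 1000  # grid avoiding band edges

d = (lam[None, :] - centers[:, None]) / delta
inside = np.abs(d) <= 1.0
hann = np.where(inside, 0.5 * (1.0 + np.cos(np.pi * d)), 0.0)
bartlett = np.where(inside, 1.0 - np.abs(d), 0.0)

# Square roots of OLA windows: the squares (not the windows) now sum to one.
sine = np.sqrt(hann)
root_bartlett = np.sqrt(bartlett)

# The square root of the raised cosine is a half-period cosine (sine window).
sine_closed = np.where(inside, np.cos(0.5 * np.pi * d), 0.0)

print(np.allclose((sine ** 2).sum(axis=0), 1.0))
print(np.allclose((root_bartlett ** 2).sum(axis=0), 1.0))
print(np.allclose(sine, sine_closed))
```

The plain sum of the square-root windows is no longer constant, which is why they are paired with the squared (WOLA) condition rather than with the OLA condition.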

Example 10. For the case of the Hann window and the triangular (Bartlett) window, their corresponding square root forms, that will produce , are shown in Figure 12, for a uniform splitting of the spectral domain and a signal-dependent (wavelet-like) form. Notice that the square root of the Hann window is the sine window form. It is obvious that the windows are not differentiable at the ending interval points, meaning that their transforms will be very spread (slow-converging).

The windows defined as square roots of the presented windows (which originally satisfy the OLA condition) do not satisfy the first derivative continuity property at the ending interval points. For example, the raised cosine window satisfies that property, but its square root (sine) window loses this desirable property (Figure 12).

To restore this property, we may either define new windows or just use the same windows, such as the raised cosine window, and change the argument so that the window derivative is continuous at the ending points. This technique is used to define the following window form.

Meyer’s window form modifies the square root of the raised cosine window (sine window) by adding the function

in the argument, x, which will make the first derivative continuous at the ending points. In this case, the window functions become [32]

with

,

, while the initial and the last intervals are defined as in (29). In order to overcome the non-differentiability of the sine and cosine functions at the interval-end points, the previous argument from (28), of the form

is mapped as

for

with

, producing Meyer’s wavelet-like transfer functions.

If we now check the derivative of a transfer function, , at the ending interval points, we will find that it is zero-valued. This was the reason for introducing the nonlinear (polynomial) argument form instead of x or , having in mind the relation between the arguments x and .
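The effect of the argument mapping on the edge derivative can be illustrated numerically. The sketch below assumes the auxiliary polynomial commonly used in Meyer wavelet constructions, v(x) = x⁴(35 − 84x + 70x² − 20x³); the source does not show the polynomial in this extract, so this choice and the names `v`, `rise_meyer`, and `fall_meyer` are assumptions.

```python
import numpy as np

def v(x):
    """Assumed Meyer auxiliary polynomial with v(0)=0, v(1)=1, v(x)+v(1-x)=1."""
    x = np.asarray(x, dtype=float)
    return x**4 * (35.0 - 84.0 * x + 70.0 * x**2 - 20.0 * x**3)

x = np.linspace(0.0, 1.0, 1001)       # normalized position inside a transition
rise_sine = np.sin(0.5 * np.pi * x)   # sine window edge with a linear argument
rise_meyer = np.sin(0.5 * np.pi * v(x))   # same edge with the mapped argument
fall_meyer = np.cos(0.5 * np.pi * v(x))   # overlapping falling edge

# One-sided finite-difference slopes at the start of the transition.
h = x[1] - x[0]
slope_sine_at_0 = (rise_sine[1] - rise_sine[0]) / h
slope_meyer_at_0 = (rise_meyer[1] - rise_meyer[0]) / h

print(slope_sine_at_0, slope_meyer_at_0)
print(np.allclose(rise_meyer**2 + fall_meyer**2, 1.0))
```

With the linear argument the slope at the edge stays close to π/2, whereas the mapped argument flattens it to nearly zero, while the squared overlapping edges still sum to one.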

Example 11. The transfer functions from the previous example, for the case of the Hann window and the triangular (Bartlett) window of forms that will produce , whose argument is modified in order to achieve differentiability at the ending points, are shown in Figure 13. Due to differentiability, these transfer functions have a faster convergence than the forms in the previous example, and are appropriate for vertex–frequency and time–frequency analysis. The results of this analysis would be similar to those presented in Figure 10 and Figure 11. The difference also exists in the reconstruction procedure.

Polynomial windows are obtained if the function

is applied to the triangular window. Their form is

The simplest polynomial for that would satisfy the conditions

,

,

is

with

,

, that is

In general, the conditions:

are satisfied by

for

, if

and

,

,

. With

we obtain

These transfer functions are the extension of the linear forms presented in (23) and could be very convenient for the vertex (time) implementation. The polynomial of the third order in

will require only the 3-hop neighborhood in the vertex (time) domain implementation.
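The locality claim can be checked on a circular graph. The following sketch assumes a cubic high-pass profile 3t² − 2t³ in t = λ/λ_max, which has zero value and zero slope at t = 0 and unit value and zero slope at t = 1; whether this coincides with the polynomial used in the paper is an assumption, and all names are hypothetical.

```python
import numpy as np

N = 16
idx = np.arange(N)
A = np.zeros((N, N))
A[idx, (idx + 1) % N] = 1.0
A[idx, (idx - 1) % N] = 1.0
L = np.diag(A.sum(axis=1)) - A          # circular graph Laplacian
lam, U = np.linalg.eigh(L)
lam_max = lam.max()

def h1(t):
    """Assumed cubic high-pass profile on t = lambda / lam_max in [0, 1]."""
    return 3.0 * t**2 - 2.0 * t**3

# Vertex-domain operator: a third-order polynomial in the Laplacian.
Ln = L / lam_max
H1_vertex = 3.0 * Ln @ Ln - 2.0 * Ln @ Ln @ Ln

# Spectral-domain operator built from the eigendecomposition.
H1_spectral = U @ np.diag(h1(lam / lam_max)) @ U.T

# Response to a unit pulse at vertex 8 and its distance from that vertex.
pulse = np.zeros(N)
pulse[8] = 1.0
response = H1_vertex @ pulse
support = np.flatnonzero(np.abs(response) > 1e-12)
hops = np.minimum((support - 8) % N, (8 - support) % N)

print(np.allclose(H1_vertex, H1_spectral), hops.max())
```

The vertex-domain form needs no eigendecomposition, and the pulse response never reaches beyond three hops, which is what makes such polynomial forms attractive for large graphs.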

Spectral graph wavelet transform. In the same way as the LGFT can be defined as a projection of a graph signal onto the corresponding kernel functions, the spectral graph wavelet transform can be calculated as the projections of the signal onto the wavelet transform kernels. The basic form of the wavelet transfer function in the spectral domain is denoted by

. Then, the other transfer functions of the wavelet transform are obtained as the scaled versions of the basic function

using the scales

,

. The scaled transform functions are

[21,22,33,34,35,36].

The father wavelet is a low-pass scale function denoted by

. It is a low-pass function, in the same way as in the LGFT was the function

. The set of scales for the calculation of the wavelet transform is

. The scaled transform functions obtained in these scales are

and

. Next, the spectral wavelet transform is calculated as a projection of the signal onto the bandpass (and scaled) wavelet kernel,

, in the same way as the kernel

was used in the LGFT in (18). It means that the wavelet transform elements are

with the wavelet coefficients given by

The Meyer approach to the transfer functions is defined in (33) with the argument

. The same form can be applied to the wavelet transform using

and the intervals of the support for this function given by:

- –

for

(sine function in (

33)).

- –

(sine functions in (

33)),

.

- –

(cosine functions in (

33)),

.

- –

where the scales are defined by .

- –

The interval for the low-pass function, , is (cosine function within and the value as ).

Notice that the wavelet transform is just a special case of the varying transfer function, when the narrow transfer functions are used for low spectral indices and wide transfer functions are used for high spectral indices, as shown in Figure 13b or Figure 10b,d.

In the implementations, we can use the vertex domain localized polynomial approximations of the spectral wavelet functions in the same way as described in Section 5.

Optimization of the vertex–frequency representations. As in classical time–frequency analysis, various measures can be used to compare and optimize joint vertex–frequency representations. An overview of these measures may be found in [37]. Here, we shall suggest the one-norm (in the vector norm sense), introduced to the time–frequency optimization problems in [37], in the form

where

is the Frobenius norm of matrix

, used for the energy normalization. The normalization factor can be omitted if

is a tight frame. Here we will just underline that the functions,

, are referred to as

a frame. In the case of a graph signal,

, the set of functions

, is a frame if [22]

holds, with

a and b being positive constants. These constants determine the stability of reconstructing the signal from the values

A frame is called Parseval’s tight frame if

. The LGFT, as given in (17), represents Parseval’s tight frame when

Notice that Parseval’s theorem is used for the LGFT,

, as it is the GFT of the spectral windowed signal,

. With this fact in mind we obtain

The LGFT defined by (17) is a tight frame if the condition in (31) or (49) holds. This is the condition that is used to define transfer functions shown in Figure 5b,c.
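The tight-frame property can be checked by comparing the energy of a graph signal with the total energy of its LGFT. The sketch below is illustrative: it uses a small random weighted graph, sine-type bands with uniform centers (an assumed layout), and computes the LGFT for each band as the inverse GFT of the spectrally windowed signal. All names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)
N, P = 12, 4

# Small hypothetical weighted undirected graph.
W = np.triu(rng.random((N, N)), k=1)
W = W + W.T
L = np.diag(W.sum(axis=1)) - W
lam, U = np.linalg.eigh(L)            # eigenvalues and GFT basis

# Sine-type bands whose squares sum to one over the spectrum (assumed layout).
centers = np.linspace(0.0, lam.max(), P)
delta = lam.max() / (P - 1)
d = (lam[None, :] - centers[:, None]) / delta
H = np.where(np.abs(d) <= 1.0, np.cos(0.5 * np.pi * np.clip(d, -1.0, 1.0)), 0.0)

x = rng.standard_normal(N)            # graph signal
X = U.T @ x                           # its GFT

# LGFT: row k holds the inverse GFT of the band-k windowed spectrum.
S = (H * X[None, :]) @ U.T

energy_signal = np.sum(x**2)
energy_lgft = np.sum(S**2)

# Reconstruction: window each band again in the spectral domain and sum.
x_rec = U @ ((S @ U) * H).sum(axis=0)

print(np.isclose(energy_signal, energy_lgft), np.allclose(x_rec, x))
```

Replacing the sine-type bands with raised cosine bands would break the energy equality, since their squares no longer sum to one.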

7. Support Uncertainty Principle in the LGFT

In the classical time–frequency analysis, the window function is used to localize the signal in the joint time–frequency domain. As is well known, the uncertainty principle prevents an ideal simultaneous localization in the time and the frequency domains.

The uncertainty principle is defined in various forms. For a survey see [39,40,41,42]. Concentration measures, reviewed in [37], are closely related to all forms of the uncertainty principle. The product of the effective signal widths in the time and the frequency domain is the basis for the common uncertainty principle form used in the time–frequency signal analysis [13,43]. In quantum mechanics, this form is also known as the Robertson–Schrödinger inequality. In general signal theory, the most commonly used form is the support uncertainty principle, closely related to the sparsity support measure [37,42]. In the classical signal analysis, the support uncertainty principle relates the discrete signal

, and its DFT,

, as follows

In other words, the product of the number of nonzero signal values, , and the number of its nonzero DFT coefficients, , is greater than or equal to the total number of signal samples N. This form of the uncertainty principle will be generalized to the LGFT. The LGFT form shall produce the classical support uncertainty principle as a special case.
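A periodic pulse train is a standard signal for which the classical support bound is met with equality. The short sketch below counts the nonzero samples of such a signal and of its DFT; the tolerance `tol` used to decide which coefficients count as nonzero is an implementation assumption.

```python
import numpy as np

N, step = 16, 4
x = np.zeros(N)
x[::step] = 1.0                      # pulse train with period 4
X = np.fft.fft(x)

tol = 1e-9                           # numerical threshold for "nonzero"
support_time = int(np.count_nonzero(np.abs(x) > tol))
support_freq = int(np.count_nonzero(np.abs(X) > tol))

# A single pulse is the other extreme: one sample in time, N in frequency.
pulse = np.zeros(N)
pulse[0] = 1.0
pulse_freq = int(np.count_nonzero(np.abs(np.fft.fft(pulse)) > tol))

print(support_time, support_freq, support_time * support_freq >= N)
```

Both signals attain the bound, at opposite ends of the trade-off between time and frequency support.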

The support uncertainty principle in the LGFT can be derived in the same way as in the case of the graph Fourier transform. A simple derivation procedure, as in [44,45], will be followed. If a function

is formed, then its sum over indices

n and

k,

is equal to the energy of the LGFT,

, for the given frequency band indexed by

p. Assume next that the number of nonzero values of

, for a given

p, is equal to

, while the number of nonzero values of the spectral localized signal

is

. Using the Schwarz inequality

we obtain

since

, and

while the summation in

is performed over nonzero values of

, meaning that

.

Therefore, the support uncertainty principle assumes the following form

The first inequality is written based on the general property that the arithmetic mean of positive numbers is greater than or equal to their geometric mean.

For the standard DFT, with

and

, when

, we easily obtain the classical uncertainty principle (52).

Having in mind that

is band-limited to

nonzero samples, then

meaning that the support of the LGFT satisfies

The smallest possible number of nonzero samples in the LGFT is defined by

where

.

For example, if the classical Fourier analysis is considered then the maximal absolute eigenvector value is

and

If we select just one spectral frequency by a bandpass filter, with , then the duration of the must be N. If half of the spectral band is selected by the bandpass function, , then Finally, if all spectral components are used, then a delta pulse is possible in the time domain, that is, for , we can have