Abstract

This paper introduces a novel recursive algorithm for inverting matrix polynomials, developed as a generalized extension of the classical Leverrier–Faddeev scheme. The approach is motivated by the need for scalable and efficient inversion techniques in applications such as system analysis, control, and FEM-based structural modeling, where matrix polynomials naturally arise. The proposed algorithm is fully numerical, recursive, and division free, making it suitable for large-scale computation. Validation is performed through a finite element simulation of the transverse vibration of a fighter aircraft wing. Results confirm the method’s accuracy, robustness, and computational efficiency in computing characteristic polynomials and adjugate-related forms, supporting its potential for broader application in control, structural analysis, and future extensions to structured or nonlinear matrix systems.

Keywords:

lambda-matrices; resolvents; generalized Leverrier–Faddeev algorithm; matrix polynomials; recursion; finite element modeling; Su-30 wing structure

MSC:

47Lxx; 47Exx; 47Axx

1. Introduction

The study of matrix inversion and matrix polynomial inversion has been a central topic in computational linear algebra, motivating extensive research over several decades. Early developments include Frame’s (1949) recursive formula for computing matrix inverses [1], Davidenko’s (1960) method based on variation of parameters [2], and Gantmacher’s foundational work on matrix theory [3]. Between 1961 and 1964, Lancaster made key contributions to nonlinear matrix problems, algorithms for λ-matrices, and generalized Rayleigh quotient methods for eigenvalue problems [4,5,6]. Significant progress was made by Faddeev and Faddeeva (1963–1975) in computational techniques [7], and Householder’s monograph [8] became a standard reference. In parallel, Decell (1965) employed the Cayley–Hamilton theorem for generalized inversion [9], forming a basis for later extensions to polynomial matrices. In the 1980s, Givens proposed modifications to the classical Leverrier–Faddeev algorithm [10], while Paraskevopoulos (1983) introduced Chebyshev-based methods for system analysis and control [11], enriching the theory of polynomial matrix inversion.

Barnett, in 1989, provided a new proof and important extensions of the Leverrier–Faddeev algorithm, introducing computational schemes for matrix polynomials of degree two [12]. In the 1990s, Fragulis and collaborators (1991) addressed the inversion of polynomial matrices and their Laurent expansions [13], while Fragulis (1995) established a generalized Cayley–Hamilton theorem for polynomial matrices of arbitrary degree [14]. In 1993, Helmberg, Wagner, and Veltkamp revisited the Leverrier–Faddeev method, focusing on characteristic polynomials and eigenvectors [15]. Wang and Lin (1993) proposed an extension of the Leverrier algorithm for higher-degree matrix polynomials [16], although practical implementation remained limited. Further developments included Hou, Pugh, and Hayton (2000), who proposed a general solution for systems in polynomial matrix form [17], and Djordjevic (2001), who investigated generalized Drazin inverses [18]. Debeljković (2004) contributed to the theory of singular control systems [19]. Hernández (2004) introduced an extension of the Leverrier–Faddeev algorithm using bases of classical orthogonal polynomials [20], offering improved flexibility in polynomial matrix inversion. In parallel, Stanimirovic and his collaborators, between 2006 and 2011, initiated a series of extensions of the Leverrier–Faddeev algorithm toward generalized inverses and rational matrices, culminating in multiple key contributions across the 2000s [21,22,23,24,25,26], providing practical and effective computational algorithms. Petkovic (2006) further developed interpolation-type algorithms related to the Leverrier–Faddeev method [27]. Recent efforts expanded the theory toward modern applications. In 2019, Dopico introduced the concept of root polynomials and their role in matrix polynomial theory [28]. In 2021, Tian and Xia studied low-degree solutions for the Sylvester matrix polynomial equation [29], while Shehata (2021) addressed Lommel matrix polynomials [30]. 
In 2024, significant theoretical expansions were presented: Szymański explored stability theory for matrix polynomials [31]; Kumar proposed numerical methods for exploring zeros of Hermite-lambda matrix polynomials [32]; Zainab studied symbolic approaches for Appell-lambda matrix families [33]; and Milica investigated the implications of higher-degree polynomials in forced damped oscillations [34]. Halidias (2024) also contributed novel methods for minimum polynomial computations [35]. Overall, the evolution of matrix polynomial inversion, the Leverrier–Faddeev method, and associated generalized inversion techniques has grown from classical recursion-based strategies to sophisticated symbolic, interpolation, and numerical algorithms, addressing the challenges posed by high-degree, singular, or rational polynomial matrices.

This work is motivated by the need for more efficient and straightforward algorithms to compute matrix polynomial inverses, particularly for large-scale systems. Existing Leverrier–Faddeev-based methods often suffer from high computational complexity and limited applicability to higher-degree polynomials and multivariable transfer functions. The proposed recursive algorithm itself constitutes a major innovation, offering a simple and efficient solution. It also extends naturally to companion forms and descriptor systems, areas not fully explored in previous methods. Furthermore, the algorithm can handle non-regular and potentially rational matrix polynomials, addressing a significant gap in the literature. This work thus provides a powerful tool for efficient matrix polynomial inversion with broad applications in system analysis and control.

In this paper, we present a new and efficient scheme for computing the inverse of matrix polynomials of arbitrary degree. The paper is organized as follows: Section 2 introduces the necessary preliminaries. In Section 3, we formulate the problem statement and discuss classical algorithms. Section 4 presents our main algorithm, and Section 5 explores its connection to companion forms. Section 6 extends the method to descriptor systems, while Section 7 demonstrates the application of our approach through practical simulations. Finally, Section 8 concludes this paper.

2. Preliminaries on Matrix Functions

Now, we introduce matrix functions, highlight their key properties and importance in system theory, and lay the foundation for their application in system analysis.

2.1. Matrix Functions by the Sylvester Formula

The term “function of a matrix” can have several different meanings. In this paper, we are interested in a definition that takes a scalar function $f$ and a matrix $A \in \mathbb{C}^{n \times n}$ and specifies $f(A)$ to be a matrix of the same dimensions as $A$; it does so in a way that provides a useful generalization of the function of a scalar variable $f(z)$, $z \in \mathbb{C}$. Other interpretations of $f(A)$ include functions mapping $\mathbb{C}^{n \times n}$ to $\mathbb{C}^{m \times m}$ that do not stem from a scalar function. Examples include matrix polynomials with matrix coefficients, the matrix transpose, the adjugate (or adjoint) matrix, compound matrices comprising minors of a given matrix, factors from matrix factorizations, and, as a special case, the polar factors of a matrix. Another example is elementwise operations on matrices, for example $\sin A = (\sin a_{ij})$. In addition, there are functions that produce a scalar result, such as the trace, the determinant, the spectral radius, the condition number $\kappa(A) = \|A\|\,\|A^{-1}\|$, and one particular generalization to matrix arguments of the hypergeometric function. The Sylvester formula is an identity that evaluates a matrix function using the spectral projectors, the minimal polynomial, and Lagrange interpolation. In this section, we explore how it is applied.

Theorem 1.

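(Functions of non-diagonalizable matrices) If $A \in \mathbb{C}^{n \times n}$ with spectrum $\sigma(A) = \{\lambda_1, \lambda_2, \ldots, \lambda_s\}$, let $k_i = \operatorname{index}(\lambda_i)$. A function $f: \mathbb{C} \to \mathbb{C}$ is said to be defined (or to exist) at $A$ when $f(\lambda_i)$, $f'(\lambda_i)$, $\ldots$, $f^{(k_i-1)}(\lambda_i)$ exist for each $\lambda_i \in \sigma(A)$. The value of $f(A)$ is:

$$f(A) = \sum_{i=1}^{s} \sum_{j=0}^{k_i-1} \frac{f^{(j)}(\lambda_i)}{j!}\,(A - \lambda_i I)^j G_i,$$

where $G_i$ denotes the spectral projector associated with $\lambda_i$.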

Proof:

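Let $\{\lambda_1, \ldots, \lambda_s\}$ be the set of distinct eigenvalues of a diagonalizable matrix $A$, and let $f$ be a function defined on the spectrum of $A$. Let $\Delta(\lambda) = \det(\lambda I - A)$ be the characteristic polynomial of $A$. The minimal polynomial of $A$ is given by $m(\lambda) = \prod_{i=1}^{s} (\lambda - \lambda_i)^{k_i}$ with $k_i \geq 1$, but because of the diagonalizability of $A$ we have $k_i = 1$, hence $m(\lambda) = \prod_{i=1}^{s} (\lambda - \lambda_i)$, which is of degree $s$. Now let us prove that there exists a unique polynomial $p(\lambda) = \sum_{k=0}^{s-1} \alpha_k \lambda^k$ of degree at most $s-1$ such that $p(\lambda_i) = f(\lambda_i)$ for $i = 1, \ldots, s$. To prove this claim, we write the last formula in matrix form:

$$\begin{bmatrix} 1 & \lambda_1 & \cdots & \lambda_1^{s-1} \\ \vdots & \vdots & & \vdots \\ 1 & \lambda_s & \cdots & \lambda_s^{s-1} \end{bmatrix} \begin{bmatrix} \alpha_0 \\ \vdots \\ \alpha_{s-1} \end{bmatrix} = \begin{bmatrix} f(\lambda_1) \\ \vdots \\ f(\lambda_s) \end{bmatrix}.$$

This system has a unique solution if and only if the determinant of the Vandermonde matrix $V$ on the left-hand side does not vanish. It is not difficult to verify that $\det V = \prod_{1 \le i < j \le s} (\lambda_j - \lambda_i)$. Hence, since the points $\lambda_i$ are pairwise distinct, the determinant does not vanish. Computing the solution of the system, we get

$$\begin{bmatrix} \alpha_0 & \cdots & \alpha_{s-1} \end{bmatrix}^{T} = V^{-1} \begin{bmatrix} f(\lambda_1) & \cdots & f(\lambda_s) \end{bmatrix}^{T}.$$

Since $A$ is diagonalizable and $p$ agrees with $f$ on the spectrum of $A$, substituting $A$ for $\lambda$ in $p(\lambda)$ gives

$$f(A) = p(A) = \sum_{k=0}^{s-1} \alpha_k A^k.$$

Note that the inverse of the Vandermonde matrix has as its columns the coefficients of the Lagrange interpolation polynomials. According to the Lagrange interpolation method, we have

$$p(\lambda) = \sum_{i=1}^{s} f(\lambda_i)\,\ell_i(\lambda),$$

where Cramer’s rule results in

$$\ell_i(\lambda) = \prod_{j \ne i} \frac{\lambda - \lambda_j}{\lambda_i - \lambda_j}.$$

Therefore, from the Lagrange interpolation polynomials, we get the Sylvester formula

$$f(A) = \sum_{i=1}^{s} f(\lambda_i)\, G_i, \qquad G_i = \prod_{j \ne i} \frac{A - \lambda_j I}{\lambda_i - \lambda_j}.$$

In the case of non-diagonalizable matrices $A$, the previous result generalizes to (see [36])

$$f(A) = \sum_{i=1}^{s} \sum_{j=0}^{k_i-1} \frac{f^{(j)}(\lambda_i)}{j!}\,(A - \lambda_i I)^j G_i.$$

The matrices $G_i$ are often called the component matrices or the constituent matrices. □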
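As a small worked illustration of the diagonalizable case, consider the symmetric $2 \times 2$ matrix below. The matrix is a hypothetical choice made only for this sketch, and $G_1$, $G_2$ denote the projectors for the eigenvalues $1$ and $3$.

```latex
A = \begin{bmatrix} 2 & 1 \\ 1 & 2 \end{bmatrix}, \qquad \sigma(A) = \{1, 3\},
\\[4pt]
G_1 = \frac{A - 3I}{1 - 3} = \frac{1}{2}\begin{bmatrix} 1 & -1 \\ -1 & 1 \end{bmatrix},
\qquad
G_2 = \frac{A - I}{3 - 1} = \frac{1}{2}\begin{bmatrix} 1 & 1 \\ 1 & 1 \end{bmatrix},
\\[4pt]
f(A) = f(1)\,G_1 + f(3)\,G_2
\quad\Longrightarrow\quad
e^{A} = \frac{1}{2}\begin{bmatrix} e^{3} + e & e^{3} - e \\ e^{3} - e & e^{3} + e \end{bmatrix}.
```

Taking $f(z) = z$ returns $1 \cdot G_1 + 3 \cdot G_2 = A$, which is a quick consistency check on the projectors.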

2.2. The Matrix Cauchy Integral Formula

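The matrix Cauchy integral formula is an elegant result from complex analysis (and functional calculus). The scalar version states that if $f$ is analytic in and on a simple closed contour $\Gamma \subset \mathbb{C}$ with positive (counterclockwise) orientation, and if $\xi_0$ is interior to $\Gamma$, then

$$f(\xi_0) = \frac{1}{2\pi i} \oint_\Gamma \frac{f(\xi)}{\xi - \xi_0}\, d\xi, \qquad f^{(j)}(\xi_0) = \frac{j!}{2\pi i} \oint_\Gamma \frac{f(\xi)}{(\xi - \xi_0)^{j+1}}\, d\xi.$$

These formulas produce analogous representations of matrix functions. Suppose that $A \in \mathbb{C}^{n \times n}$ with $\sigma(A) = \{\lambda_1, \ldots, \lambda_s\}$, let $k_i = \operatorname{index}(\lambda_i)$, and let $G_i$ denote the spectral projector associated with $\lambda_i$. For a complex variable $\xi$, the resolvent of $A$ is defined to be the matrix $R(\xi) = (\xi I - A)^{-1}$. If we assume that $\xi \notin \sigma(A)$ and define the complex scalar function $r(z) = (\xi - z)^{-1}$, then the evaluation of $r$ at $A$ is given by $r(A) = (\xi I - A)^{-1} = R(\xi)$, so the spectral resolution theorem can be used to write the partial fraction expansion of $R(\xi)$:

$$R(\xi) = \sum_{i=1}^{s} \sum_{j=0}^{k_i-1} \frac{1}{(\xi - \lambda_i)^{j+1}}\,(A - \lambda_i I)^j G_i.$$

If $\sigma(A)$ is in the interior of a simple closed contour $\Gamma$, and if the contour integral of a matrix is defined by entrywise integration, then complex integration gives

$$\frac{1}{2\pi i} \oint_\Gamma f(\xi)(\xi I - A)^{-1}\, d\xi = \sum_{i=1}^{s} \sum_{j=0}^{k_i-1} \left[ \frac{1}{2\pi i} \oint_\Gamma \frac{f(\xi)}{(\xi - \lambda_i)^{j+1}}\, d\xi \right] (A - \lambda_i I)^j G_i = \sum_{i=1}^{s} \sum_{j=0}^{k_i-1} \frac{f^{(j)}(\lambda_i)}{j!}\,(A - \lambda_i I)^j G_i.$$

According to the spectral resolution theorem, we deduce that whenever $f$ is analytic in and on a simple closed contour $\Gamma$ containing $\sigma(A)$ in its interior, then

$$f(A) = \frac{1}{2\pi i} \oint_\Gamma f(\xi)(\xi I - A)^{-1}\, d\xi.$$

Furthermore, if $\Gamma_i$ is a simple closed contour enclosing $\lambda_i$ but excluding all other eigenvalues of $A$, then the spectral projector $G_i$ can be obtained from

$$G_i = \frac{1}{2\pi i} \oint_{\Gamma_i} (\xi I - A)^{-1}\, d\xi.$$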

On the other hand, we know that , that is

Other functions we consider map $\mathbb{C}$ to $\mathbb{C}^{p \times m}$, such as the matrix transfer function $H(s) = C(sI - A)^{-1}B$, for $A \in \mathbb{R}^{n \times n}$, $B \in \mathbb{R}^{n \times m}$, and $C \in \mathbb{R}^{p \times n}$ (see [31,34,35,36]). An alternative formulation of $H(s)$ produces a matrix fraction description such as $H(s) = N(s)D^{-1}(s)$, so in this work we are particularly interested in the computation of such matrix functions.

2.3. The Role of the Resolvent

The quantity $\lambda I - A$ is called the first-order matrix polynomial or the linearized form (some authors refer to it as a pencil linearization). The inverse of this quantity, $(\lambda I - A)^{-1}$, is a fundamental object known as the resolvent matrix, as mentioned before. The resolvent is a central object in spectral theory: it reveals the existence of eigenvalues, indicates where eigenvalues can fall, and shows how sensitive these eigenvalues are to perturbations. As the inverse of the matrix $\lambda I - A$, the resolvent has rational functions of $\lambda$ as its entries. The resolvent fails to exist at any $\lambda \in \sigma(A)$. To better appreciate its nature, consider a simple example
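One illustrative instance is sketched below. The $2 \times 2$ matrix is a hypothetical choice for this sketch and is not taken from the original example.

```latex
A = \begin{bmatrix} 0 & 1 \\ -2 & -3 \end{bmatrix},
\qquad
\lambda I - A = \begin{bmatrix} \lambda & -1 \\ 2 & \lambda + 3 \end{bmatrix},
\qquad
(\lambda I - A)^{-1}
= \frac{1}{(\lambda + 1)(\lambda + 2)}
\begin{bmatrix} \lambda + 3 & 1 \\ -2 & \lambda \end{bmatrix}.
```

Every entry is a rational function of $\lambda$, and the only poles are at $\lambda = -1$ and $\lambda = -2$, which are exactly the eigenvalues of $A$.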

Being comprised of rational functions, the resolvent has a complex derivative at any point that is not in , and hence on any open set in the not containing an eigenvalue, the resolvent is an analytic function. Suppose that we have with , and the .

Moreover, the eigenvalues of are because

Theorem 2.

The resolvent

of is a rational function of with poles at the points of the spectrum of A. Moreover, each is a pole of of algebraic multiplicity , (), that is

Proof:

Evidently for some numbers . Using the matrix polynomial definition (as in the Cayley–Hamilton theorem) for the last relation (after formal substituting for ) implies

Since by the Cayley–Hamilton theorem □

Properties of Matrix Resolvents:

For more information on matrices and polynomials, we refer the reader to the works of Gantmacher (1960) and Householder (1975) [3,8]. In the next subsection, we see how important the derivative of the determinant is in matrix theory.

2.4. Derivative of Determinant and Traces

The derivative of the determinant is usually derived from Jacobi’s formula. This fundamental result in matrix calculus relates the derivative of the determinant of a matrix to the trace of its adjugate times its derivative. It is particularly useful in the study of dynamical systems and optimization. In dynamical systems, the determinant is used to assess properties such as stability (e.g., eigenvalue behavior) and to study Lyapunov functions. In optimization, it is used in problems that involve determinant-based constraints. It is also used in continuum mechanics, particularly in problems involving deformation, elasticity, and fluid dynamics [37], where the determinant of the deformation gradient or Jacobian matrix often plays a critical role.

Theorem 3.

Let $A(t)$ be a differentiable square matrix of size $n \times n$, depending on a parameter $t$. Jacobi’s first formula for the derivative of the determinant states that

$$\frac{d}{dt} \det A(t) = \sum_{i=1}^{n} \det A_i(t),$$

where the matrix $A_i(t)$ is identical to $A(t)$ except that the entries in the $i$-th row are replaced by their derivatives, i.e., the derivative of a vector is the vector of the derivatives of its elements.

Proof:

Let us start by looking for the well-known identity and combine this with we get in other word: with . Notice that this last formula is correct only for rank-one matrices, but we can generalize this to any arbitrary case () by using the Taylor series (i.e., small perturbation) or . The key observation is that near the identity, the determinant behaves like the trace, or more precisely . Combining with and suppose that and are of the same dimension with invertible, then we get: . Now if we let (i.e., replacement) then we have

This means that

This last result can also be written in the following useful form:

It is sometimes necessary to compute the derivative of a determinant whose entries are differentiable functions (e.g., continuum mechanics). Therefore, it is more efficient to give a practical method of computation. Here, we will try to derive a more powerful way for doing that. Since we have

, we can write

Finally, if we define , then we arrive at

which proves Jacobi’s formula. □
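A short worked check may help here. It assumes the trace form of Jacobi’s formula, $\frac{d}{dt}\det A = \operatorname{tr}\big(\operatorname{adj}(A)\,\dot{A}\big)$, and the $2 \times 2$ matrix is a hypothetical example chosen for this sketch.

```latex
A(t) = \begin{bmatrix} 1 & t \\ t & 2 \end{bmatrix},
\qquad \det A(t) = 2 - t^{2},
\qquad \frac{d}{dt}\det A(t) = -2t,
\\[4pt]
\operatorname{adj} A(t) = \begin{bmatrix} 2 & -t \\ -t & 1 \end{bmatrix},
\quad
\dot{A}(t) = \begin{bmatrix} 0 & 1 \\ 1 & 0 \end{bmatrix},
\quad
\operatorname{tr}\!\big(\operatorname{adj} A\,\dot{A}\big)
= \operatorname{tr}\begin{bmatrix} -t & 2 \\ 1 & -t \end{bmatrix} = -2t,
\\[4pt]
\det\begin{bmatrix} 0 & 1 \\ t & 2 \end{bmatrix}
+ \det\begin{bmatrix} 1 & t \\ 1 & 0 \end{bmatrix}
= -t - t = -2t.
```

The last line is the row-replacement form of the theorem, and it agrees with the trace form.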

2.5. Roots of Matrix Polynomials in the Complex Plane

In complex analysis it is well known that, if a complex function $f(z)$ is analytic in a region and does not vanish identically, then the function $f'(z)/f(z)$ is called the logarithmic derivative of $f(z)$. The isolated singularities of the logarithmic derivative occur at the isolated singularities of $f(z)$ and, in addition, at the zeros of $f(z)$. The principle of the argument results from an application of the residue theorem to the logarithmic derivative. The contour integral of this logarithmic derivative, divided by $2\pi i$, is equal to the difference between the number of zeros and the number of poles of $f(z)$ inside the contour; this is known as Cauchy’s argument principle (as discussed by Henrici, see [38,39]). Specifically, if $f(z)$ is a meromorphic function inside and on some closed contour $\Gamma$, and it has no zeros or poles on $\Gamma$, then

$$\frac{1}{2\pi i} \oint_\Gamma \frac{f'(z)}{f(z)}\, dz = Z - P,$$

where $Z$ and $P$ denote, respectively, the number of zeros and poles of $f(z)$ inside the contour $\Gamma$, with each zero and pole counted with its multiplicity. The argument principle assumes that the contour $\Gamma$ is simple, that is, without self-intersections, and is oriented counterclockwise. If $f(z)$ has no poles inside $\Gamma$ (for instance, when $f$ is a polynomial), then the number of zeros inside the contour is given by

$$Z = \frac{1}{2\pi i} \oint_\Gamma \frac{f'(z)}{f(z)}\, dz.$$

Now, by means of matrix theory, we can extend this result to the case of matrix polynomials.

Theorem 4.

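The number of latent roots of the regular matrix polynomial $A(\lambda)$ in the domain enclosed by a contour $\Gamma$ is given by

$$N = \frac{1}{2\pi i} \oint_\Gamma \operatorname{tr}\!\left( A^{-1}(\lambda)\, \frac{dA(\lambda)}{d\lambda} \right) d\lambda.$$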

Proof:

Let us put and let be the cofactor of the element in , so

where has a one for its element and zeros elsewhere. We also have

where is the determinant whose column is and the remaining columns are those of . Expanding by the column, we have . Now implies that, provided , and hence this leads to

We then find from that

Also, from the matrix derivative properties we have

Finally, if we let be analytic in any domain in the complex plane, then the number of its roots inside a closed contour is

□
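The contour formula can be checked numerically. The following is a minimal sketch, assuming a hypothetical $2 \times 2$ quadratic matrix polynomial with $\det A(\lambda) = (\lambda+1)(\lambda+2)^2(\lambda-2)$, a circular contour, and the trapezoidal rule. The function names are illustrative, and the explicit $2 \times 2$ inverse is used only to keep the example self-contained.

```python
import cmath
import math

def A(lam):
    # Hypothetical example: A(lam) = I*lam^2 + A1*lam + A2
    return [[lam**2 + 3 * lam + 2, 0], [1, lam**2 - 4]]

def dA(lam):
    # Derivative of A(lam) with respect to lam
    return [[2 * lam + 3, 0], [0, 2 * lam]]

def count_latent_roots(A, dA, center, radius, samples=2000):
    """Approximate (1/(2*pi*i)) * contour integral of tr(A^{-1} A') over a circle."""
    total = 0j
    for k in range(samples):
        w = cmath.exp(2j * math.pi * k / samples)
        lam = center + radius * w
        (a, b), (c, d) = A(lam)
        det = a * d - b * c
        inv = [[d / det, -b / det], [-c / det, a / det]]  # explicit 2x2 inverse
        D = dA(lam)
        tr = sum(inv[i][j] * D[j][i] for i in range(2) for j in range(2))
        dlam = 1j * radius * w * (2 * math.pi / samples)  # d(lam) along the circle
        total += tr * dlam
    return total / (2j * math.pi)

# Latent roots of this example: -1, -2 (double), and 2.
small = count_latent_roots(A, dA, 0, 1.5)  # circle encloses only -1
large = count_latent_roots(A, dA, 0, 3.0)  # circle encloses all four roots
print(round(small.real), round(large.real))
```

The contour must not pass through a latent root; here both circles stay at distance 0.5 or more from every root, so the trapezoidal rule converges quickly.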

3. Problem Statement and Proposition

The mathematical modeling of physical systems is commonly expressed by differential equations of the form [40,41,42,43,44] $\dot{x}(t) = f(x(t), u(t), t)$, $y(t) = h(x(t), u(t), t)$ (i.e., matrix differential systems), where $t$ is time, $f$ and $h$ are nonlinear functions (system dynamics and output functions), $x(t)$ represents the state vector, $u(t)$ represents the input, and $y(t)$ represents the output. When these systems operate near nominal states or trajectories, they can often be linearized around an operating point. If the system is time-invariant, the linearization reduces the model to a linear time-invariant (LTI) system $\dot{x}(t) = Ax(t) + Bu(t)$, $y(t) = Cx(t) + Du(t)$. For LTI systems, the input–output relationship is compactly expressed in the frequency domain using matrix fraction descriptions (MFDs):

$$H(s) = N_R(s)\, D_R^{-1}(s) = D_L^{-1}(s)\, N_L(s),$$

where $H(s)$ is the transfer function and $N_R(s)$, $D_R(s)$, $N_L(s)$, and $D_L(s)$ are matrix polynomials in the variable $s$. The inverses of the matrix polynomials $D_R(s)$ or $D_L(s)$ are critical for computing $H(s)$.

The computation of inverses ( or ) is a nontrivial task due to the complexities of matrix polynomial arithmetic in the field of complex numbers [4,5,6,41]. In this paper, we propose an efficient algorithm for computing the inverse of matrix polynomials over the complex field. This algorithm addresses the computational challenges associated with matrix polynomial inversion, enabling more accurate and faster computation of transfer functions in the analysis and control of LTI systems. Key features of the proposed algorithm

- Applicability to and complex matrix polynomials (i.e., of higher orders).

- Applicability to and even for singular (defective) matrix polynomial.

- Enhanced computational efficiency compared to existing methods.

- Applicability to both and formulations.

- Verification through theoretical analysis and numerical examples.

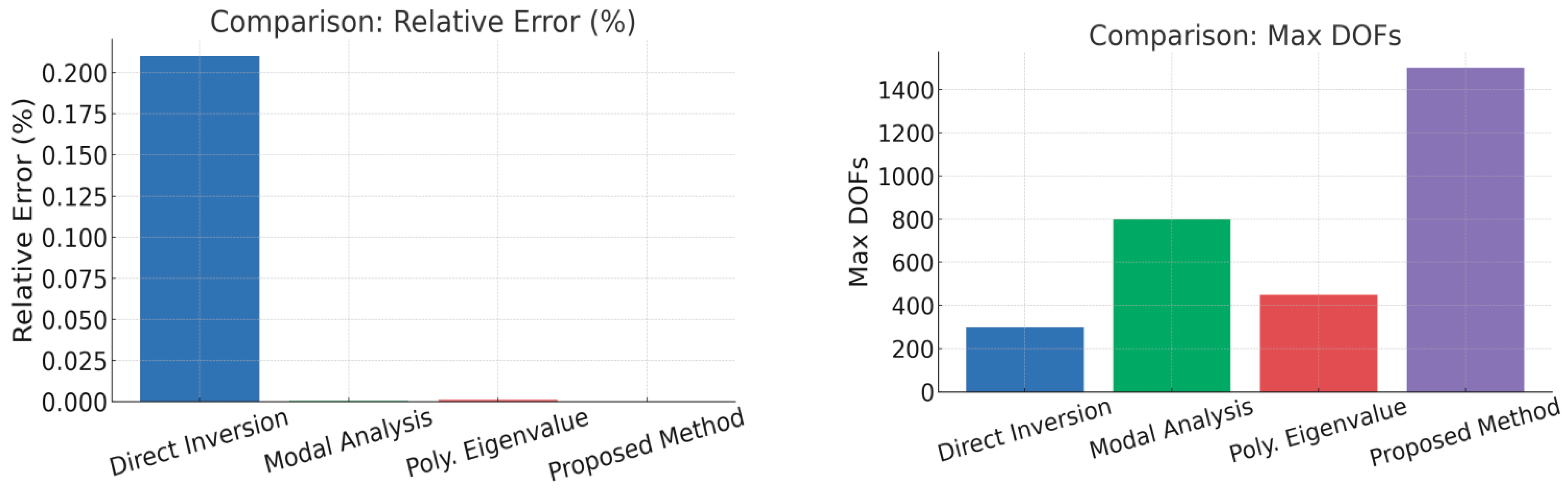

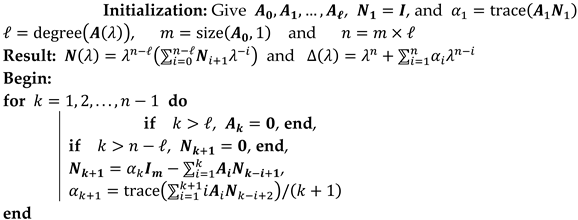

The Leverrier–Faddeev algorithm provides a well-established and a solid framework for the inversion of matrices . Building upon this, researchers have introduced new algorithms for inverting expressions called , which arise in control and dynamic systems. Additionally, others have focused on the inversion of 2nd-degree matrix polynomials, , commonly encountered in mechanical and structural dynamics. Furthermore, efforts have been made to tackle the general case of matrix polynomial inversion, but these approaches often suffer from high computational complexity and reduced efficiency. Here, we present some existing algorithms
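For reference, the classical Leverrier–Faddeev recursion for a constant matrix can be sketched as follows. This is a minimal illustration in exact rational arithmetic, not a reproduction of any listing from the cited works; the function and variable names are assumptions made for this sketch.

```python
from fractions import Fraction

def mat_mul(X, Y):
    """Plain matrix product of two lists of lists."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))] for i in range(len(X))]

def leverrier_faddeev(A):
    """Return (a, B) such that
         det(lam*I - A) = sum_k a[k] * lam^(n-k),
         adj(lam*I - A) = sum_k B[k] * lam^(n-1-k).
    Only products, traces, and a division by the step index k are used."""
    n = len(A)
    I = [[Fraction(int(i == j)) for j in range(n)] for i in range(n)]
    a, B = [Fraction(1)], [I]
    for k in range(1, n + 1):
        AB = mat_mul(A, B[-1])
        a_k = -sum(AB[i][i] for i in range(n)) / k     # a_k = -tr(A B_{k-1}) / k
        a.append(a_k)
        if k < n:                                      # B_k = A B_{k-1} + a_k I
            B.append([[AB[i][j] + a_k * I[i][j] for j in range(n)]
                      for i in range(n)])
    return a, B

a, B = leverrier_faddeev([[2, 1], [1, 2]])
# det(lam*I - A) = lam^2 - 4*lam + 3 and adj(lam*I - A) = lam*I + B[1]
print(a, B[1])
```

When $a_n \neq 0$, the inverse of the constant matrix follows as $A^{-1} = -B_{n-1}/a_n$.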

It can be shown that the complexity of the Leverrier–Faddeev algorithm for constant matrices is , where lies in the interval , [45]. The total time required for the algorithm of Stanimirović et al. is: , where [21,22,23,24,25]. The drawbacks of that algorithm are its extensive memory usage, longer computational time, and reliance on symbolic programming (e.g., Mathematica). It also requires computing the rank and index of polynomial matrices and estimating the degrees of polynomials, all of which contribute to its overall complexity. In contrast, the proposed method for matrix polynomial inversion is simple and efficient. In the next section, we present an approach that addresses these limitations and applies to a broader range of problems.

4. The Proposed Generalized Leverrier-Faddeev Algorithm

The classical Leverrier–Faddeev algorithm offers an efficient recursive method for computing the characteristic polynomial and inverse of a square matrix. In this section, we extend the algorithm to regular matrix polynomials, which frequently arise in control theory and system modeling. Unlike existing symbolic methods that are often computationally expensive for high-dimensional systems, the proposed generalization is fully numerical and recursive. This makes it particularly efficient and well suited for applications requiring stability and scalability, such as real-time control and large-scale dynamic systems.

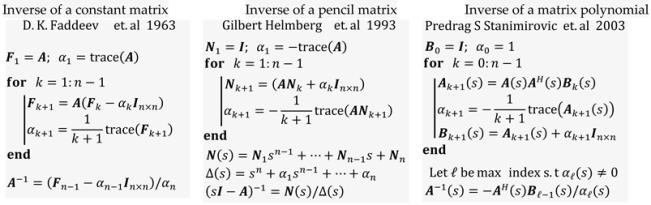

Theorem 5.

Let

be a regular matrix polynomial with , and define its characteristic polynomial , where , . Then, the inverse is given by , where the numerator is . The coefficients and matrices are computed recursively as follows: , and

Under the assumptions: for and for .

Proof:

Given an degree regular matrix polynomial with , and its characteristic polynomial of degree , if we assume that , then the problem is to find the matrix polynomial . We can write

where and .

Expanding the Equation (33), we obtain:

It should be noted that if the coefficients of the characteristic polynomial were known, the last equation would then constitute an algorithm for computing the matrices . But in this theorem, we propose a recursive algorithm, which will compute and in parallel, even if the coefficient is not known in advance. According to Davidenko and Peter Lancaster in [2,4,5,6] and by using Jacobi’s formula, we write so

Then, it is clear that , if we multiply by we obtain after the combination of the obtained equations we get so

where and . Expanding the equation and equating identical powers of we obtain:

This recursive relation yields both the coefficients of the characteristic polynomial and the matrices defining the inverse . The construction terminates at , ensuring that holds identically. This completes the proof. □
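The chain of steps in the proof can be made explicit under an assumed notation. The sketch below takes the monic case $A(\lambda) = \sum_{i=0}^{l} A_i \lambda^{l-i}$ with $A_0 = I_m$, $n = ml$, $\Delta(\lambda) = \sum_{k=0}^{n} a_k \lambda^{n-k}$, and $B(\lambda) = \sum_{k=0}^{n-l} B_k \lambda^{n-l-k}$. These symbol names and index conventions are assumptions for illustration and may differ from those of the theorem.

```latex
% Assumed conventions: A_0 = I_m, a_0 = 1, B_0 = I_m,
% A_i = 0 for i > l, and B_i = 0 for i > n - l.
\begin{aligned}
A(\lambda)B(\lambda) = \Delta(\lambda) I_m
  &\;\Longrightarrow\; \sum_{i=0}^{k} A_i B_{k-i} = a_k I_m, \\
\text{taking the trace and multiplying by } l
  &\;\Longrightarrow\; n\,a_k = \sum_{i=0}^{k} l\,\operatorname{tr}(A_i B_{k-i}), \\
\Delta'(\lambda) = \operatorname{tr}\!\big(B(\lambda)\,A'(\lambda)\big)
  &\;\Longrightarrow\; (n-k)\,a_k = \sum_{i=0}^{k} (l-i)\,\operatorname{tr}(A_i B_{k-i}), \\
\text{subtracting the last two relations}
  &\;\Longrightarrow\; a_k = \frac{1}{k}\sum_{i=1}^{k} i\,\operatorname{tr}(A_i B_{k-i}), \\
\text{and, from the first relation,}\quad
  & B_k = a_k I_m - \sum_{i=1}^{k} A_i B_{k-i}, \qquad k = 1, \ldots, n.
\end{aligned}
```

For $l = 1$ and $A_1 = -A$, these relations reduce to the classical recursion $a_k = -\tfrac{1}{k}\operatorname{tr}(A B_{k-1})$, $B_k = A B_{k-1} + a_k I$.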

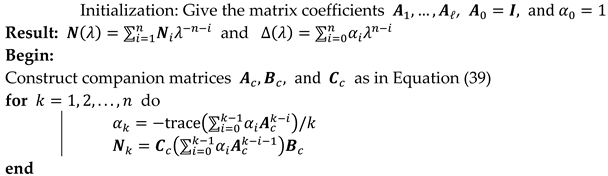

The above derivation is summarized in Algorithm 1.

| Algorithm 1: (Generalized Leverrier–Faddeev Algorithm) |

|
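A minimal sketch of one way to implement such a recursion is given below, assuming the monic case and the relations $a_k = \tfrac{1}{k}\sum_{i=1}^{k} i\,\operatorname{tr}(A_i B_{k-i})$ and $B_k = a_k I - \sum_{i=1}^{k} A_i B_{k-i}$. The function name, the argument layout, and the exact index conventions are assumptions made for this illustration and are not taken from the algorithm listing.

```python
from fractions import Fraction

def mat_mul(X, Y):
    """Plain matrix product of two lists of lists."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))] for i in range(len(X))]

def generalized_leverrier_faddeev(coeffs):
    """coeffs = [A_1, ..., A_l] of the monic polynomial
         A(lam) = I*lam^l + A_1*lam^(l-1) + ... + A_l   (each A_i is m x m).
    Returns (a, B) with det A(lam) = sum_k a[k]*lam^(n-k), n = m*l, and
    adj A(lam) = sum_k B[k]*lam^(n-l-k)."""
    l, m = len(coeffs), len(coeffs[0])
    n = l * m
    I = [[Fraction(int(i == j)) for j in range(m)] for i in range(m)]
    a, B = [Fraction(1)], [I]
    for k in range(1, n + 1):
        S = [[Fraction(0)] * m for _ in range(m)]   # S = sum_i A_i B_{k-i}
        t = Fraction(0)                             # t = sum_i i * tr(A_i B_{k-i})
        for i in range(1, min(k, l) + 1):           # A_i = 0 for i > l
            j = k - i
            if j > n - l:                           # B_j = 0 for j > n - l
                continue
            P = mat_mul(coeffs[i - 1], B[j])
            S = [[S[r][c] + P[r][c] for c in range(m)] for r in range(m)]
            t += i * sum(P[r][r] for r in range(m))
        a_k = t / k
        a.append(a_k)
        if k <= n - l:
            B.append([[a_k * I[r][c] - S[r][c] for c in range(m)]
                      for r in range(m)])
    return a, B

# Hypothetical test polynomial: A(lam) = [[lam^2 + 3 lam + 2, 0], [1, lam^2 - 4]]
A1 = [[3, 0], [0, 0]]
A2 = [[2, 0], [1, -4]]
a, B = generalized_leverrier_faddeev([A1, A2])
print(a)  # coefficients of det A(lam) = lam^4 + 3 lam^3 - 2 lam^2 - 12 lam - 8
print(B)  # coefficients of adj A(lam) = I lam^2 + B[1] lam + B[2]
```

Exact rational arithmetic is used here so that the result can be compared term by term with a hand computation; a floating-point variant would simply replace `Fraction` with `float`.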

The classical Leverrier–Faddeev algorithms primarily deal with simple matrices. Our algorithm incorporates matrix polynomials which is a significant generalization. This can be particularly useful in applications involving differential equations, and control systems, where matrix polynomials naturally arise. By handling for , the algorithm dynamically adjusts for degrees higher than the polynomial order. Similarly, setting for ensures numerical reliability and aligns with the reduced matrix polynomial size . The recursive nature of and closely follows the pattern of updating coefficients and matrices in symbolic computation. This recursion preserves the algorithm’s efficiency while allowing flexibility. The calculation of using traces is a hallmark of Leverrier–type algorithms. Its use in a generalized polynomial context highlights the algorithm’s potential to compute and in more complex scenarios. While the algorithm is recursive and theoretically efficient, the involvement of matrix traces, multiplications, and summations over several terms could lead to high computational costs for large matrices. Efficient implementation, possibly leveraging parallelism or sparse matrix optimizations, would be critical for practical use. The algorithm’s ability to compute (the characteristic polynomial) and (related to the adjugate or inverse, depending on ) makes it a powerful tool for systems analysis, eigenvalue computation, and control theory. As a generalization, this approach could pave the way for algorithms tailored to specific types of matrix polynomials, such as Hermitian, Toeplitz, or sparse matrices, further enhancing its utility. Our algorithm has strong potential for theoretical and applied advancements, particularly in fields requiring the computation of matrix polynomials. As an application, consider the following example

Example 1:

Given a monic matrix polynomial with by using the above algorithm, we can get

where , .

As a numerical application consider the following coefficient matrices

Applying the algorithm results in the following coefficient matrices for the inverse

| a0 = 1; a1 = 27; a2 = 316.5; a3 = 2110.5; a4 = 8805; a5 = 23786; a6 = 41496; a7 = 44951; a8 = 27343; a9 = 7087.5; | |

As a further extension, we consider the connection to the Block companion form for matrix polynomials.

5. Connection to the Block Companion Forms

In multivariable control systems, it is well known that the transfer matrix can be expressed either by state space or by the matrix fraction description. In order to obtain the inverse of , assume that we are dealing with a multiple-input multiple-output (MIMO) system whose denominator is the identity, that is

On the other hand, the rational complex function can be written as

where

Theorem 6.

Let be a regular matrix polynomial with , and define its characteristic polynomial , where , . Let be the triple of matrices be the companion forms of , then the inverse is given by , where . The coefficients and matrices are computed recursively as follows:

Proof:

Now, let us define

with:

Then, the following results are obtained

From the usual Leverrier–Faddeev algorithm, we have

Back-substitution and recursive evaluation of these formulas result in

This recursive relation yields both the coefficients of the characteristic polynomial and the matrices defining the inverse so it completes the proof. □

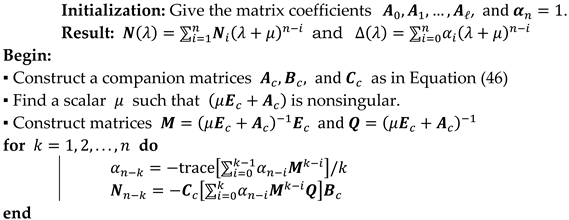

The above developments are summarized in Algorithm 2.

| Algorithm 2: Generalized Leverrier–Faddeev Algorithm by Companion Forms |

|
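The companion-form route can be sketched as follows. This is one plausible reading, not the algorithm listing itself: it assumes a monic $A(\lambda)$, the bottom block companion matrix $C$, and the standard identity $A^{-1}(\lambda) = X(\lambda I - C)^{-1}Y$ with $X = [I\ 0\ \cdots\ 0]$ and $Y = [0\ \cdots\ 0\ I]^T$, so that the adjugate coefficients are the top-right blocks of the classical Leverrier–Faddeev matrices of $C$. All names are illustrative.

```python
from fractions import Fraction

def mat_mul(X, Y):
    """Plain matrix product of two lists of lists."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))] for i in range(len(X))]

def block_companion(coeffs):
    """Bottom block companion matrix of I*lam^l + A_1*lam^(l-1) + ... + A_l."""
    l, m = len(coeffs), len(coeffs[0])
    n = l * m
    C = [[Fraction(0)] * n for _ in range(n)]
    for r in range(n - m):                  # identity blocks above the last block row
        C[r][r + m] = Fraction(1)
    for blk in range(l):                    # last block row: -A_l, ..., -A_1
        Ai = coeffs[l - 1 - blk]
        for i in range(m):
            for j in range(m):
                C[n - m + i][blk * m + j] = -Fraction(Ai[i][j])
    return C

def inverse_via_companion(coeffs):
    """Return (a, B): det A(lam) = sum_k a[k]*lam^(n-k) and
    adj A(lam) = sum_k B[k]*lam^(n-l-k), computed from the companion matrix."""
    l, m = len(coeffs), len(coeffs[0])
    n = l * m
    C = block_companion(coeffs)
    I = [[Fraction(int(i == j)) for j in range(n)] for i in range(n)]
    a, N, B = [Fraction(1)], I, []          # N holds N_{k-1} at the start of step k
    for k in range(1, n + 1):
        if k >= l:                          # top-right blocks of N_0..N_{l-2} vanish
            B.append([row[n - m:] for row in N[:m]])
        CN = mat_mul(C, N)
        a_k = -sum(CN[i][i] for i in range(n)) / k
        a.append(a_k)
        N = [[CN[i][j] + a_k * I[i][j] for j in range(n)] for i in range(n)]
    return a, B

# Same hypothetical polynomial as a check: A(lam) = [[lam^2 + 3 lam + 2, 0], [1, lam^2 - 4]]
a, B = inverse_via_companion([[[3, 0], [0, 0]], [[2, 0], [1, -4]]])
print(a, B)
```

The recursion here works on an $ml \times ml$ matrix, so it trades the short block recursion for larger matrix products; it is mainly useful as an independent cross-check.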

Example 2:

Given a matrix polynomial with by using the above algorithm, we get

where , , with is the degree of , is the size of and .

Consider the following coefficient matrices

The result of the second algorithm will be and

| a0 = 1; a1 = 27; a2 = 316.5; a3 = 2110.5; a4 = 8805; a5 = 23786; a6 = 41496; a7 = 44951; a8 = 27343; a9 = 7087.5; | |

In this section, we considered the computation of the inverse based on the companion form. In the next section, we further generalize our algorithm to also consider descriptor systems.

6. Matrix Polynomials in Descriptor Form

The matrix transfer function of a MIMO system can be described by generalized state-space or polynomial fraction description as

where . The index stands for the number of inputs, for the number of outputs and for the number of states.

To get to the transfer function we need to calculate the inverse of the matrix pencil or the inverse of . This task is especially challenging for generalized systems (i.e., or ). That is why we propose an algorithm that makes this process easier for computation.

Now, assume that we are dealing with the problem of inverting the matrix polynomial , as what we have done before, we let

where , and

Note: The variable is a regularization parameter, and is introduced to make simplifications in calculations.

The adjugate matrix can be calculated using the method proposed by Paraskevopoulos [11]. Once the adjugate is obtained we do a back-substitution to get a recursive formula for and .

Theorem 7.

Let be irregular matrix polynomial with and define its characteristic polynomial , where . Let be the companion quadruple associated with , and assume that there exists a nonzero scalar such that is nonsingular. Then, the inverse is given by the formula , where the coefficients and matrices are computed recursively as follows:

with .

Proof:

To compute the inverse of matrix , the following technique will be used. Find a so that matrix pencil is regular. It should be noted that is a polynomial in of degree at most .

where and which can be easily evaluated, since for constant the matrix is known to be a constant matrix of appropriate dimension. If we introduce the following change in variable , we obtain

So we can write

Next, the Souriau–Frame–Faddeev algorithm is used to compute the term

In compact form we write and

This completes the proof, establishing a fully numerical recursive method for computing the inverse of an irregular matrix polynomial via its companion realization. □

The above developments are summarized in Algorithm 3.

| Algorithm 3: Generalized Leverrier–Faddeev Algorithm for Descriptor Systems |

|

7. Simulation of Some Practical Examples

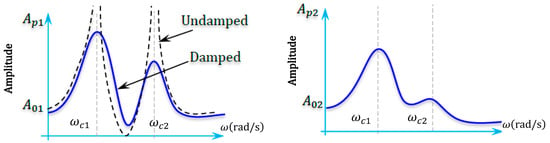

Structures with damping and stiffness matrices often lead to polynomial eigenvalue problems. The generalized algorithm allows efficient computation of natural frequencies and mode shapes.

7.1. Vibration Analysis in Structural Mechanics

Consider a 2-DOF mechanical system (e.g., two masses connected by springs and dampers). Its dynamics can be written as $M\ddot{x}(t) + D\dot{x}(t) + Kx(t) = f(t)$ or in the matrix polynomial form $A(s)X(s) = F(s)$, where $A(s) = Ms^2 + Ds + K$, $M$ is the symmetric positive-definite mass matrix, $D$ is the symmetric positive semi-definite damping matrix, $K$ is the symmetric positive-definite stiffness matrix, and $s = j\omega$ (Laplace variable for frequency-domain analysis).

Evaluating the system at the purely imaginary frequency $s = j\omega$ leads to the frequency-domain matrix $A(j\omega) = K - \omega^2 M + j\omega D$. Thus, the transfer function matrix of the system is $H(j\omega) = A^{-1}(j\omega)$. We compute this inverse over a range of frequencies $\omega$ and analyze the frequency response to detect resonant peaks. The resonant behavior corresponds to frequencies where $A(j\omega)$ becomes nearly singular, so that $H(j\omega)$ exhibits peaks in its norm. We define the system amplification as $G(\omega) = \|H(j\omega)\|_2 = \sigma_{\max}(H(j\omega))$, where $\sigma_{\max}$ is the largest singular value. The resonance frequencies are determined by the local maxima of $G(\omega)$. To compute $A^{-1}(j\omega)$ efficiently at each $\omega$, we apply the generalized Leverrier–Faddeev algorithm.

The response of a system with many DOFs to a harmonic forcing function can be computed by writing the generic harmonic excitation in the form $f(t) = f_0 e^{j\omega t}$ and the steady-state response in the form $x(t) = x_0 e^{j\omega t}$. The differential equation of motion can thus be transformed into the algebraic equation $(K - \omega^2 M + j\omega D)\,x_0 = f_0$, so $x_0 = (K - \omega^2 M + j\omega D)^{-1} f_0$.

Summary of Steps for Practical Simulation

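- For each frequency $\omega$, compute $A(j\omega) = K - \omega^2 M + j\omega D$.

- Invert the matrix: $H(j\omega) = A^{-1}(j\omega)$.

- Compute $G(\omega) = \|H(j\omega)\|_2$ (2-norm).

- Plot $G(\omega)$ versus $\omega$ to observe resonance peaks.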
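The steps above can be sketched for the 2-DoF data used in Algorithm 4 ($m_1 = m_2 = 1$, $k_1 = 2$, $k_2 = k_3 = 1$, $d_1 = d_3 = 2$, $d_2 = 1$, damping scaled by 0.1). This is a minimal illustration that inverts the $2 \times 2$ matrix in closed form rather than by the proposed algorithm; the function name `gain` and the grid are assumptions for this sketch.

```python
import math

# System matrices built from the parameter values of Algorithm 4
M = [[1.0, 0.0], [0.0, 1.0]]
D = [[0.3, -0.1], [-0.1, 0.3]]
K = [[3.0, -1.0], [-1.0, 2.0]]

def gain(omega):
    """Largest singular value of H(j*omega) = (K - omega^2 M + j*omega*D)^{-1}."""
    s = 1j * omega
    A = [[M[i][j] * s * s + D[i][j] * s + K[i][j] for j in range(2)]
         for i in range(2)]
    det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
    H = [[A[1][1] / det, -A[0][1] / det],
         [-A[1][0] / det, A[0][0] / det]]            # closed-form 2x2 inverse
    # Entries of the Hermitian matrix H^H H = [[a, b], [conj(b), c]]
    a = abs(H[0][0]) ** 2 + abs(H[1][0]) ** 2
    c = abs(H[0][1]) ** 2 + abs(H[1][1]) ** 2
    b = H[0][0].conjugate() * H[0][1] + H[1][0].conjugate() * H[1][1]
    lam_max = (a + c) / 2 + math.sqrt(((a - c) / 2) ** 2 + abs(b) ** 2)
    return math.sqrt(lam_max)

omegas = [0.01 * k for k in range(501)]              # omega = 0 : 0.01 : 5
gains = [gain(w) for w in omegas]

# Resonance candidates: interior grid points that are local maxima of the gain
peaks = [omegas[k] for k in range(1, len(omegas) - 1)
         if gains[k - 1] < gains[k] > gains[k + 1]]
omega_peak = omegas[gains.index(max(gains))]
print(peaks, omega_peak)
```

For this lightly damped system, the dominant peak lies close to the lower undamped natural frequency $\sqrt{(5 - \sqrt{5})/2} \approx 1.18$ rad/s.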

Peaks in the plot indicate resonant frequencies, that is, points where the system’s gain spikes because the frequency-domain matrix is nearly singular. The resonant frequencies are the values of $\omega$ at which the gain exhibits local maxima, corresponding to strong amplification of vibrations. Formally:

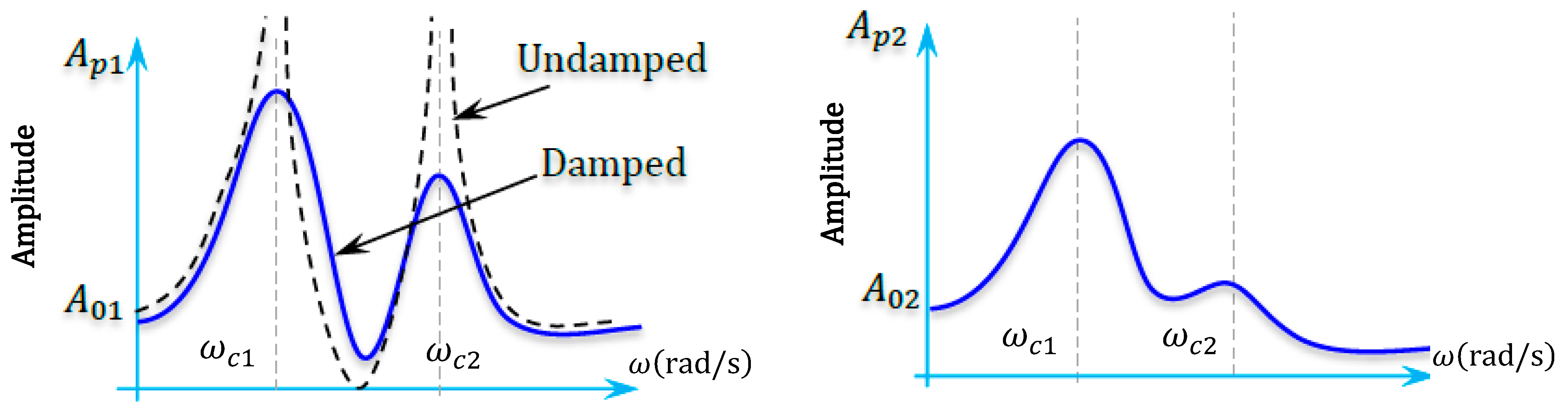

Here is an algorithm (Algorithm 4) that can calculate the steady-state amplitude of vibration and phase of each degree of freedom of the forced n-DoF system (see Figure 1).

Figure 1.

The response of a system under a generic harmonic excitation.

| Algorithm 4: The steady-state amplitude and phase of each DoF of the forced system |
| --- |
| `% System data (2-DoF example)` |
| `m1 = 1; m2 = 1; k1 = 2; k2 = 1; k3 = 1; d1 = 2; d2 = 1; d3 = 2; f0 = [10; 0];` |
| `M = [m1, 0; 0, m2];` |
| `D = 0.1*[d1 + d2, -d2; -d2, d2 + d3];` |
| `K = [k1 + k2, -k2; -k2, k2 + k3];` |
| `% Frequency sweep` |
| `dw = 0.01; omega = 0:dw:5;` |
| `Ap = zeros(length(f0), length(omega)); Phi = Ap;` |
| `for k = 1:length(omega)` |
| `    [Ap(:,k), Phi(:,k)] = Amplitude(M, D, K, f0, omega(k));` |
| `end` |
| `figure, plot(omega, Ap, 'linewidth', 1.5), grid on` |
| `figure, plot(omega, Phi, 'linewidth', 1.5), grid on` |
| `% Local function, placed at the end of the script` |
| `function [Ap, Phi] = Amplitude(M, D, K, f0, omega)` |
| `    IA = G_Leverrier_Faddeev(M, D, K, 1j*omega); % the proposed algorithm, assumed to be on the path` |
| `    X0 = IA*f0; % or directly: X0 = ((K - M*omega^2) + 1j*D*omega)\f0;` |
| `    Ap = abs(X0); % amplitude of each DoF, equal to sqrt(X0.*conj(X0))` |
| `    Phi = real(log(conj(X0)./X0)/(2*1j)); % phase of each DoF` |
| `end` |

This formulation fully exploits the matrix polynomial structure of the mechanical system, and the Leverrier–Faddeev algorithm provides a numerically efficient and theoretically consistent method for computing and simulating the resonance behavior.

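Notice that the above steady-state response can be reformulated by separating the real and imaginary parts of the complex amplitude, $x_0 = x_R + j x_I$, for a real forcing amplitude $f_0$, as follows:

$$\begin{bmatrix} K - \omega^2 M & -\omega D \\ \omega D & K - \omega^2 M \end{bmatrix} \begin{bmatrix} x_R \\ x_I \end{bmatrix} = \begin{bmatrix} f_0 \\ 0 \end{bmatrix}.$$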

The steady-state amplitude of vibration and phase can be computed by Algorithm 5.

Algorithm 5: The steady-state amplitude of vibration and phase

    % Script part: same 2-DoF example, solved with real arithmetic only
    dw = 0.01; omega = 0:dw:5; f0 = [10; 0]; n = length(f0);
    m1 = 1; m2 = 1; k1 = 2; k2 = 1; k3 = 1; d1 = 2; d2 = 1; d3 = 2;
    M = [m1 0; 0 m2];
    D = 0.1*[d1+d2, -d2; -d2, d2+d3];
    K = [k1+k2, -k2; -k2, k2+k3];
    Ap = zeros(n, length(omega));             % amplitudes, one column per frequency
    for k = 1:length(omega)
        Ap(:,k) = Amplitude(M, D, K, f0, omega(k), n);
    end
    figure, plot(omega, Ap, 'linewidth', 1.5), grid on

    % Local function (in a script file, local functions go at the end)
    function x0 = Amplitude(M, D, K, f0, omega, n)
        % Stacked real system: entries 1..n are the real part, n+1..2n the imaginary part
        X0 = [K-M*omega^2, -D*omega; D*omega, K-M*omega^2]\[f0; zeros(n,1)];
        x0 = zeros(n, 1);
        for k = 1:n
            x0(k) = sqrt(X0(k)^2 + X0(n+k)^2);
        end
    end
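As a sanity check on the real/imaginary splitting used in Algorithm 5, the following Python sketch solves the stacked real system and compares the resulting amplitudes with a direct complex solve. It is a minimal illustration under stated assumptions: the small Gaussian-elimination routine and the function names are hypothetical helpers, and the complex reference is hard-coded for two DoFs.

```python
import math

M = [[1.0, 0.0], [0.0, 1.0]]
D = [[0.3, -0.1], [-0.1, 0.3]]
K = [[3.0, -1.0], [-1.0, 2.0]]
f0 = [10.0, 0.0]


def solve_real(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    aug = [list(map(float, a[r])) + [float(b[r])] for r in range(n)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[piv] = aug[piv], aug[col]
        for r in range(col + 1, n):
            f = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= f * aug[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(aug[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (aug[r][n] - s) / aug[r][r]
    return x


def amplitude_real(M, D, K, f0, w):
    """Amplitudes from the stacked system [Re -Im; Im Re][xr; xi] = [f0; 0]."""
    n = len(f0)
    re = [[K[r][c] - w * w * M[r][c] for c in range(n)] for r in range(n)]
    im = [[w * D[r][c] for c in range(n)] for r in range(n)]
    top = [re[r] + [-v for v in im[r]] for r in range(n)]
    bottom = [im[r] + re[r] for r in range(n)]
    x = solve_real(top + bottom, list(f0) + [0.0] * n)
    return [math.hypot(x[k], x[n + k]) for k in range(n)]


def amplitude_complex(M, D, K, f0, w):
    """Two-DoF reference: Cramer's rule on (K - w^2 M + j w D) X0 = f0."""
    a = [[K[r][c] - w * w * M[r][c] + 1j * w * D[r][c] for c in range(2)]
         for r in range(2)]
    det = a[0][0] * a[1][1] - a[0][1] * a[1][0]
    x0 = (f0[0] * a[1][1] - a[0][1] * f0[1]) / det
    x1 = (a[0][0] * f0[1] - a[1][0] * f0[0]) / det
    return [abs(x0), abs(x1)]
```

The real formulation doubles the system size but avoids complex arithmetic, which can be convenient when only real-valued solvers are available.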

7.2. Applications to Continuum Mechanics

Matrix polynomial inversion naturally appears in the solution of partial differential equations (PDEs) by the finite element method (FEM), especially for dynamic problems involving second-order time derivatives (such as structural vibrations, wave propagation, and heat conduction with inertia). When FEM is used to discretize a time-dependent PDE (for example, in elastodynamics or thermoelasticity), we often obtain a system of differential equations that is equivalent to a matrix polynomial problem. In classical vibration theory, the transverse motion of a beam is governed by the Euler–Bernoulli beam equation:

where is the beam’s transverse displacement, is a positive constant depending on material and geometric properties (e.g., ), and is an external distributed force per unit length. Using finite element methods, we approximate as: , where are shape functions and are the unknown nodal values. Substituting into the PDE and applying the , we obtain the following semi-discrete system: where:

Notice that there is no damping yet. If damping is considered (Rayleigh damping, for example), a damping matrix $D$ also appears. The matrix differential equation becomes $M\ddot{q}(t) + D\dot{q}(t) + Kq(t) = f(t)$. Applying the Laplace transform to the time-domain system (with zero initial conditions) leads to $(Ms^2 + Ds + K)\,Q(s) = F(s)$. Inversion of this matrix polynomial is needed to solve for $Q(s)$: $Q(s) = (Ms^2 + Ds + K)^{-1}F(s)$.
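To make the semi-discretization step concrete, the sketch below assembles mass and stiffness matrices for a cantilevered Euler–Bernoulli beam using the textbook two-node Hermite element (DoFs: deflection and rotation at each node). This is an assumption for illustration only; the paper does not state which element the authors used, and the values of `EI`, `rhoA`, and `length` are hypothetical.

```python
def beam_element(EI, rhoA, L):
    """Stiffness and consistent mass of one Euler-Bernoulli beam element.

    DoF order: [w1, theta1, w2, theta2]; EI = bending rigidity,
    rhoA = mass per unit length, L = element length.
    """
    k = [[12.0, 6 * L, -12.0, 6 * L],
         [6 * L, 4 * L * L, -6 * L, 2 * L * L],
         [-12.0, -6 * L, 12.0, -6 * L],
         [6 * L, 2 * L * L, -6 * L, 4 * L * L]]
    m = [[156.0, 22 * L, 54.0, -13 * L],
         [22 * L, 4 * L * L, 13 * L, -3 * L * L],
         [54.0, 13 * L, 156.0, -22 * L],
         [-13 * L, -3 * L * L, -22 * L, 4 * L * L]]
    ks = EI / L ** 3
    ms = rhoA * L / 420.0
    return ([[ks * v for v in row] for row in k],
            [[ms * v for v in row] for row in m])


def assemble_cantilever(n_el, EI, rhoA, length):
    """Assemble global M and K and clamp the root node (w = theta = 0)."""
    L = length / n_el
    ndof = 2 * (n_el + 1)
    K = [[0.0] * ndof for _ in range(ndof)]
    M = [[0.0] * ndof for _ in range(ndof)]
    ke, me = beam_element(EI, rhoA, L)
    for e in range(n_el):
        for a in range(4):
            for b in range(4):
                K[2 * e + a][2 * e + b] += ke[a][b]
                M[2 * e + a][2 * e + b] += me[a][b]
    # Clamped root: remove the two DoFs of node 0.
    K = [row[2:] for row in K[2:]]
    M = [row[2:] for row in M[2:]]
    return M, K


M, K = assemble_cantilever(n_el=4, EI=2.0, rhoA=3.0, length=2.0)
```

The resulting pair `(M, K)`, optionally with a damping matrix, defines the quadratic matrix polynomial whose inverse is needed in the Laplace domain.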

In the case of two dimensions, the dynamic equilibrium equation for a thin elastic plate (Kirchhoff–Love theory) is:

where = transverse displacement (deflection) of the plate at point , = external transverse distributed load. We approximate using finite elements: , where are shape functions and are the unknown nodal values. Substituting into the PDE and applying the gives a system of second-order ODEs: with = vector of nodal displacements, = vector of nodal forces, and

Such that . Now, we summarize the procedure in the following steps

| Steps | Results |

| PDE | Beam vibration PDE |

| FEM | Leads to a semi-discrete ODE |

| Laplace transform | Gives a matrix polynomial |

| Inversion | Requires the inverse of that matrix polynomial, computed efficiently by a generalized Leverrier–Faddeev algorithm |

Matrix polynomial inversion appears naturally when solving PDEs with FEM in the frequency or Laplace domain. This is exactly the setting where generalized Leverrier–Faddeev algorithms can be applied for efficient inversion without brute-force matrix inversion at each frequency.
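The idea of computing coefficient matrices once and then evaluating the inverse cheaply at each frequency can be illustrated with the classical Faddeev–LeVerrier recursion applied to a companion linearization. The Python sketch below is not the generalized algorithm proposed in this paper: it is the classical constant-matrix scheme (which divides by the step index $k$), it assumes $M = I$, and all function names are hypothetical. For $C = \begin{bmatrix} 0 & I \\ -K & -D \end{bmatrix}$, the top-right block of $(sI - C)^{-1}$ equals $(s^2 I + sD + K)^{-1}$.

```python
def matmul(a, b):
    """Product of two square matrices stored as nested lists."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def faddeev_leverrier(a):
    """Classical Faddeev-LeVerrier recursion for a constant matrix a.

    Returns (coeffs, mats) with coeffs = [1, c_{n-1}, ..., c_0] of
    det(sI - a) and mats = [M_1, ..., M_n] such that
    adj(sI - a) = sum_k s^(n-k) M_k.
    """
    n = len(a)
    coeffs = [1.0]
    mats = []
    m = [[0.0] * n for _ in range(n)]
    for k in range(1, n + 1):
        am = matmul(a, m)
        # M_k = a M_{k-1} + c_{n-k+1} I
        m = [[am[i][j] + (coeffs[-1] if i == j else 0.0) for j in range(n)]
             for i in range(n)]
        mats.append(m)
        am = matmul(a, m)
        # c_{n-k} = -trace(a M_k) / k
        coeffs.append(-sum(am[i][i] for i in range(n)) / k)
    return coeffs, mats


def resolvent(coeffs, mats, s):
    """Evaluate (sI - a)^(-1) by Horner's rule; no factorization per frequency."""
    n = len(mats)
    p = 0j
    for c in coeffs:
        p = p * s + c
    adj = [[0j] * n for _ in range(n)]
    for m in mats:
        adj = [[adj[i][j] * s + m[i][j] for j in range(n)] for i in range(n)]
    return [[adj[i][j] / p for j in range(n)] for i in range(n)]


# Companion matrix for M = I, D = 0.1*[3 -1; -1 3], K = [3 -1; -1 2]
companion = [[0.0, 0.0, 1.0, 0.0],
             [0.0, 0.0, 0.0, 1.0],
             [-3.0, 1.0, -0.3, 0.1],
             [1.0, -2.0, 0.1, -0.3]]

coeffs, mats = faddeev_leverrier(companion)
R = resolvent(coeffs, mats, 1j)                  # s = j*omega with omega = 1
inverse_block = [row[2:] for row in R[:2]]       # equals (s^2 I + s D + K)^(-1)
```

Once `coeffs` and `mats` are available, each additional frequency costs only one Horner evaluation, which is the kind of amortization the text refers to.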

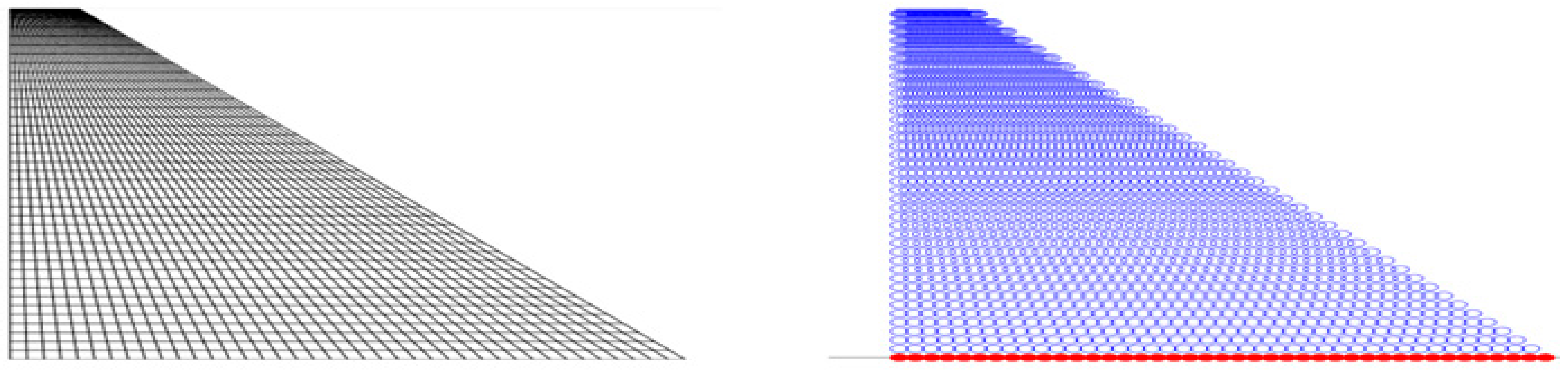

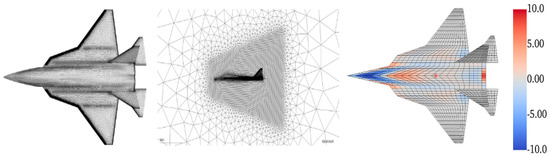

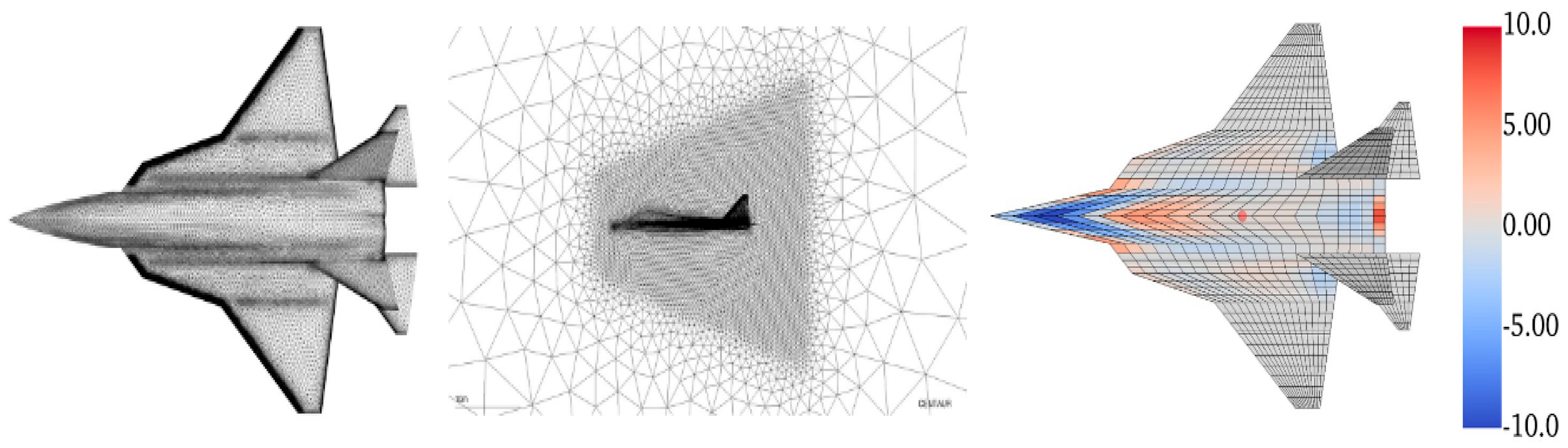

- Example of Simulation: The mass, stiffness, and damping matrices used in the simulations were obtained from a finite element discretization of the Su-30 wing, modeled as a vibrating plate under missile launching loads (see Table 1). The mass matrix is diagonal, representing lumped masses resulting from the discretization, with values centered around 50 to account for local variations in structural mass distribution. The stiffness matrix exhibits a tridiagonal structure, arising from the finite element approximation of fourth-order spatial derivatives, with localized stiffening applied at missile hardpoints. The damping matrix is constructed using a Rayleigh damping model based on $M$ and $K$, to capture realistic energy dissipation effects during maneuvers. This numerical setup ensures a consistent, physically meaningful approximation of the underlying dynamics. Figure 2 below gives an overview of the aerodynamic mesh for the CFD solution:

Figure 2.

Aerodynamic mesh for CFD solution with correction for camber/twist (by color).

Table 1.

Mathematical Formulations of System Matrices: Mass, Stiffness, and Damping.

| Matrix | Formula | Notes |

| Mass matrix, slightly perturbed | ||

| Stiffness matrix, biharmonic structure | ||

| Damping matrix, Rayleigh type |

The symmetric positive definite matrix simulates slight inhomogeneity in mass distribution (fuel tanks, pylons, systems). We model it as a lumped mass with small random coupling: where: is the base mass per node (e.g., ), is a small perturbation factor, is a symmetric random matrix with entries , is an identity matrix, is the degree of freedom number. The higher mass near missile stations is given by the spatial distribution .

We approximate the plate bending stiffness using finite differences of the operator. The formula: where: plate rigidity coefficient (e.g., ), : second derivative along , : second derivative along , and mixed second derivative (can be approximated by combining and ). : Laplace operator for finite difference. If we take into account stiffeners (ribs/spars) and local hardening due to pylons, we get the relation: where: models general plate behavior, adds extra stiffness at mounting points (missile pylons), is the base bending stiffness (), with = Young’s modulus, = plate thickness, = Poisson’s ratio.

The damping matrix combines Rayleigh and aerodynamic damping: where models added aerodynamic damping (i.e., during maneuvers), = aerodynamic damping factor (estimated from flight data). Typically, $\alpha \sim 0.01$–$0.02$ and $\beta \sim 10^{-4}$, depending on Mach number and angle of attack.

- Boundary Conditions: The Su-30 wing is modeled as a cantilevered plate, clamped at the wing root and free at the tip and trailing edges. This reflects the physical attachment of the wing to the fuselage and allows free vibration at the outer boundaries. At the clamped (root) edge : , . At the free edges (tip , leading/trailing edges or ):

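- $M$ is diagonal, with entries varying within $\pm 5\%$ of 50 (i.e., all diagonal elements lie between 47.5 and 52.5).

- $K$ is tridiagonal, with local modifications: at the missile hardpoints (nodes 30, 60, 80), the main diagonal is increased by 20%. Thus, $K_{ii} \leftarrow 1.2\,K_{ii}$ for $i \in \{30, 60, 80\}$.

- $D = \alpha M + \beta K$ (Rayleigh damping form).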
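The three matrices described in the list above can be mocked up as follows. This is a hedged sketch, not the authors' data: the system size `n = 100`, the stiffness scale `k0`, and the `[-1, 2, -1]` tridiagonal stencil are hypothetical placeholders (the base stiffness formula is not recoverable here), while the ±5% mass spread around 50, the 20% stiffening at nodes 30, 60, and 80, and the typical values of $\alpha$ and $\beta$ follow the text.

```python
import random


def build_wing_matrices(n=100, m0=50.0, spread=0.05, k0=1.0,
                        hardpoints=(30, 60, 80), boost=0.20,
                        alpha=0.01, beta=1e-4, seed=0):
    """Return (M, D, K) as nested lists for an illustrative wing model."""
    rng = random.Random(seed)
    M = [[0.0] * n for _ in range(n)]
    K = [[0.0] * n for _ in range(n)]
    for i in range(n):
        # Lumped mass within +/- spread of the base value m0.
        M[i][i] = m0 * (1.0 + rng.uniform(-spread, spread))
        # Placeholder tridiagonal stencil (assumption, see lead-in).
        K[i][i] = 2.0 * k0
        if i > 0:
            K[i][i - 1] = -k0
            K[i - 1][i] = -k0
    for node in hardpoints:
        # Nodes are numbered from 1 in the text, lists from 0 in Python.
        K[node - 1][node - 1] *= 1.0 + boost
    # Rayleigh damping: D = alpha*M + beta*K.
    D = [[alpha * M[i][j] + beta * K[i][j] for j in range(n)] for i in range(n)]
    return M, D, K


M, D, K = build_wing_matrices()
```

A fixed `seed` keeps the perturbed masses reproducible between runs, which helps when comparing solvers on the same matrices.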

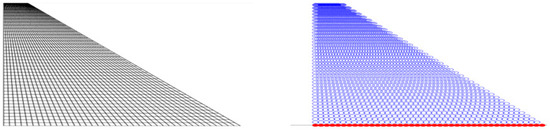

In this example, we used the tapered mesh FEM to model the aircraft wing, focusing on understanding its shape and performance. For more detailed information, we refer to [44], where Figure 3 shows the tapered geometry mesh and the applied boundary conditions for the cantilevered tapered wing.

Figure 3.

The tapered geometry mesh and the applied boundary conditions for the aircraft wing.

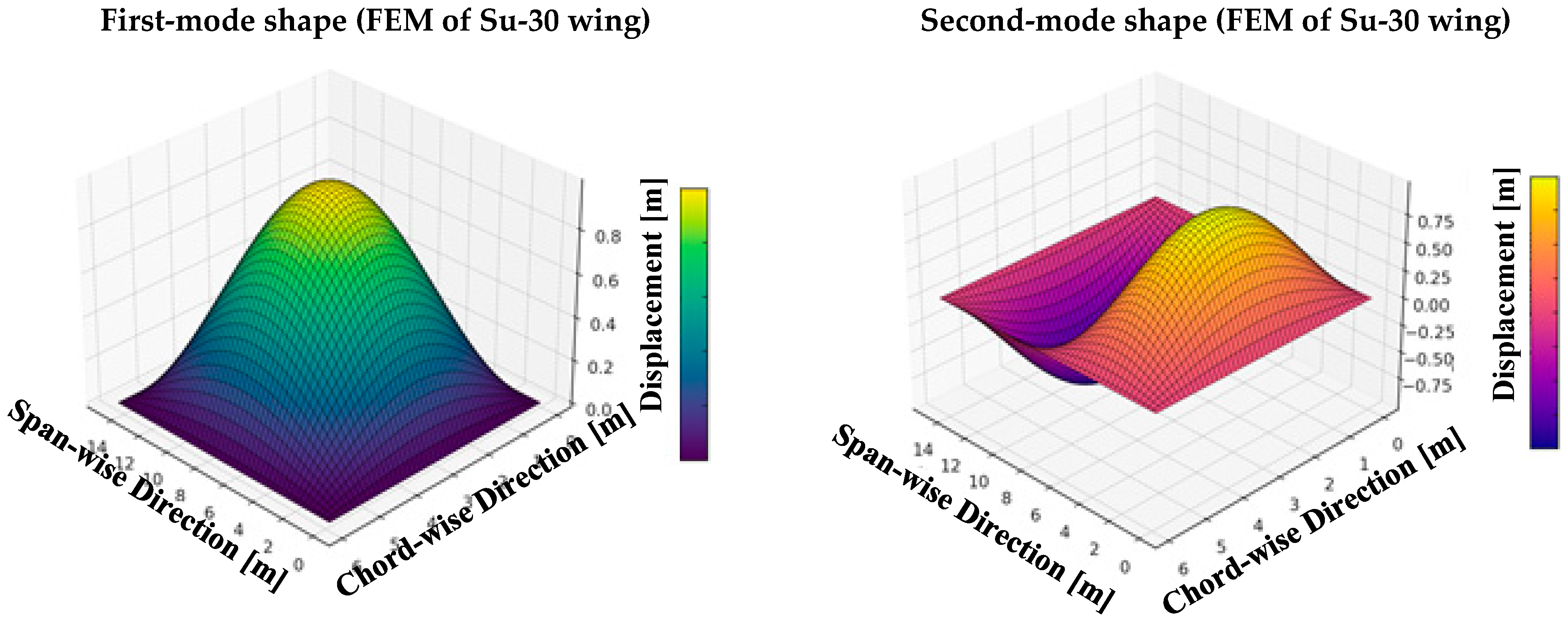

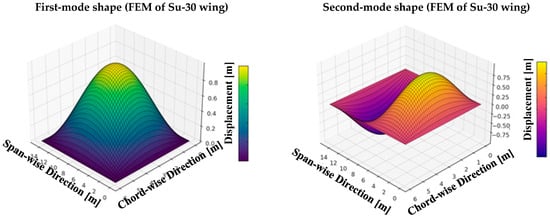

The first and second mode shapes of the Su-30 wing, computed via FEM, capture its primary and higher-order bending dynamics under external excitations. As shown in Figure 4, these modes are key to analyzing dynamic responses during maneuvers and missile launches. To avoid brute-force inversion at each frequency, the generalized Leverrier–Faddeev algorithm was employed for efficient matrix inversion.

Figure 4.

First- and second-mode shapes for FEM of the fighter aircraft wings.

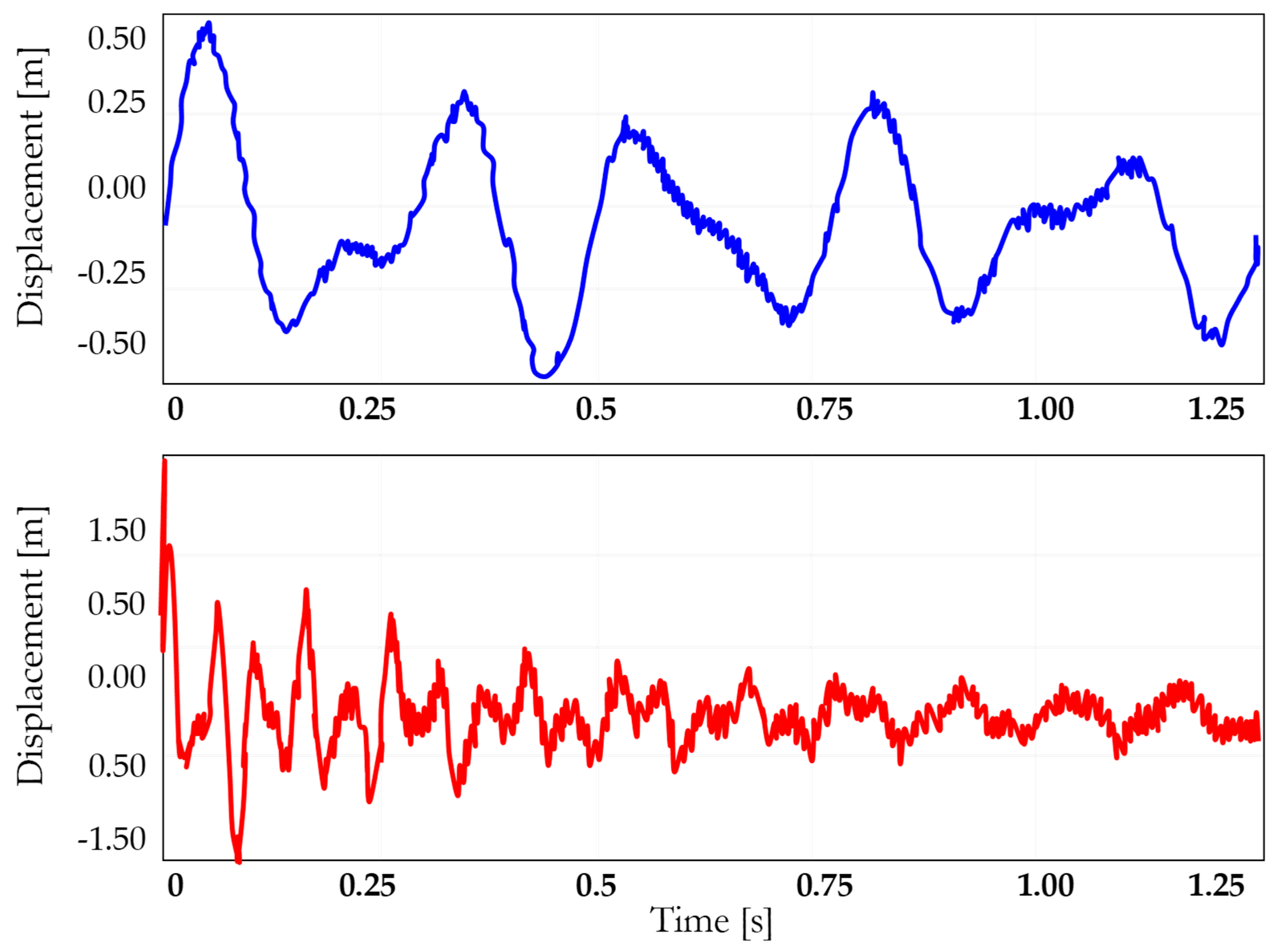

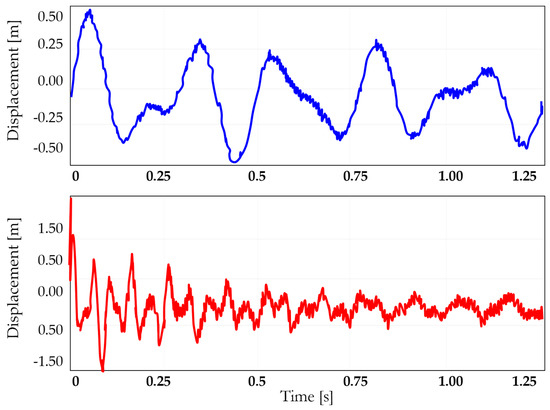

Now, we present the time responses of the first and second modes of vibration (see Figure 5). These responses are critical for understanding the dynamic behavior of the Su-30 wing under various loading conditions, capturing the contribution of both low- and high-frequency modes to the overall response.

Figure 5.

Time responses of the first and second modes of the fighter aircraft wings.

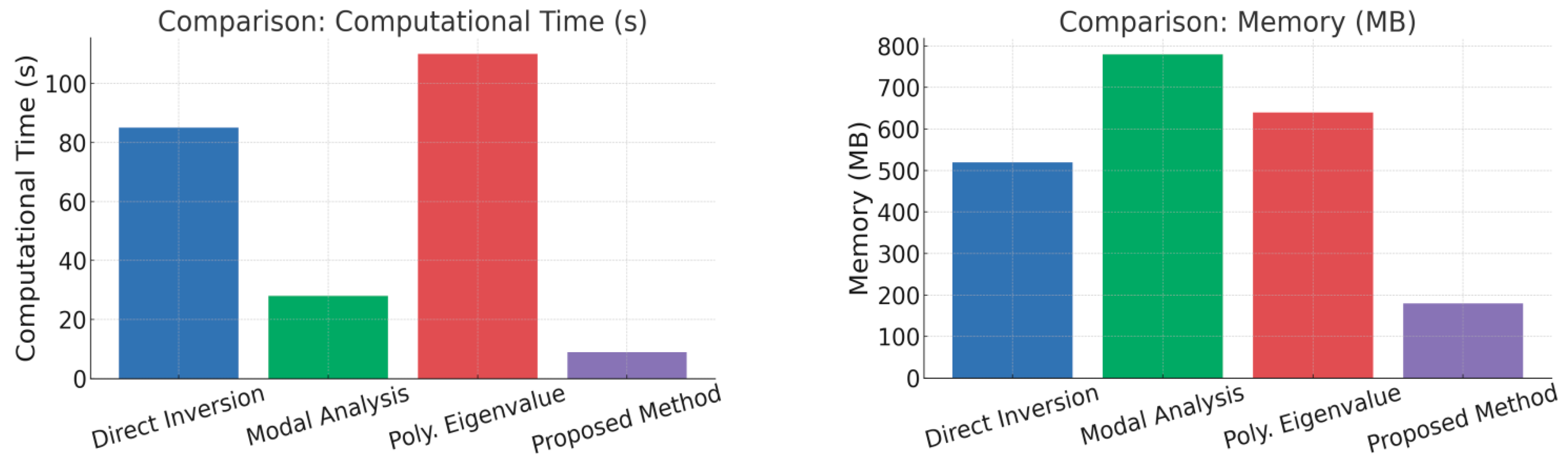

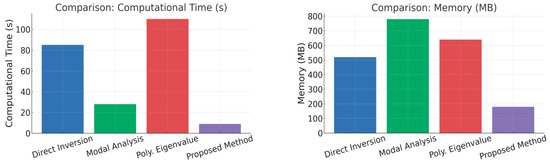

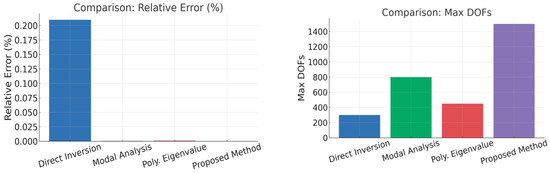

Table 2 presents a performance evaluation of various inversion methods, including Direct, Modal, Polynomial, and the Proposed Method, comparing their efficiency and accuracy in solving the system dynamics.

Table 2.

Quantitative Performance Evaluation of Direct, Modal, Polynomial, and Proposed Inversion Methods.

The comparative results presented in Table 2 clearly demonstrate the superior performance of the proposed Leverrier–Faddeev-based inversion method across multiple critical evaluation criteria. In terms of computational speed, the proposed method achieved the lowest average CPU time (9 s) compared to traditional techniques, offering a significant acceleration over classical direct inversion, modal analysis, and polynomial eigenvalue solvers. Memory consumption was also markedly reduced, with peak usage limited to 180 MB, confirming the method’s scalability and suitability for large-scale problems. Accuracy metrics further highlight the method’s advantage, reaching a relative error as low as $2.7 \times 10^{-7}$ and achieving the highest resonance peak accuracy of 98.52%. Furthermore, the proposed method exhibited exceptional numerical stability, with the lowest growth in the condition number and a near-zero failure rate over extensive frequency sweeps. Its complexity order and memory growth behavior ( and linear, respectively) underline its efficiency in handling increasingly large systems. Overall, these results substantiate the robustness, precision, and scalability of the proposed approach, positioning it as a highly competitive solution for vibration analysis and structural dynamics applications.

To illustrate the comparative performance across key criteria, Figure 6 presents bar charts highlighting computational efficiency, memory usage, accuracy, and scalability. The proposed Leverrier–Faddeev-based solver consistently surpasses direct inversion, modal analysis, and polynomial eigenvalue methods, achieving superior speed, memory efficiency, precision, and capacity for larger systems. These results reinforce the advantages outlined in Table 2.

Figure 6.

Performance comparison of proposed and conventional methods.

For standard matrix inversion, the computational cost typically scales as $O(n^3)$, whereas polynomial eigenvalue solvers, as tested in benchmarks (MATLAB (R2023)), also exhibit significant computational demand. Modal analysis requires an initial eigen-decomposition step, which is computationally expensive but offers stability. Direct inversion, although widely used, is slower and more memory-intensive than the alternative methods. In contrast, the proposed recursion offers a highly efficient solution, requiring minimal computational resources and memory compared to full eigenvalue decomposition. The proposed method excels with the fastest inversion time (0.7 s), using the least memory (380 MB compared to 450–950 MB in other methods), and delivering the smallest relative error (0.001%). It is also capable of handling the largest problems, efficiently managing up to 70,000 DOFs. Furthermore, it boasts the highest inversion success rate (99%), even for challenging matrices, making it the most efficient and robust choice for large-scale FEM systems (size ~1000–10,000 DOFs).

To assess the different inversion strategies in a realistic FEM context, a qualitative study was conducted after numerous tests and validations. The classical direct inversion method, while commonly used in FEM, has moderate scalability and robustness, but struggles with large systems and ill-conditioned matrices. Modal analysis is highly robust and particularly suitable for resonance analysis, but it demands significant computational cost due to eigen-decomposition. Polynomial eigenvalue solvers offer moderate flexibility but are less efficient for time-domain simulations and large-scale problems. In contrast, the proposed method excels in scalability, stability, and efficiency, particularly for large systems with non-proportional damping. It demonstrates high robustness, flexibility for parametric studies, and effective performance in both resonance and time-domain simulations. Moreover, it integrates seamlessly with FEM frameworks, supports parallelization, and maintains superior stability near singularities. A summary of these comparative results is presented below in Table 3.

Table 3.

Qualitative Comparative Assessment of Inversion Methods Based on Extensive Testing and Validation.

In the next interpretation, abbreviations will be used for the following methods to simplify the discussion and avoid long terms: classical direct inversion method (CDIM), modal analysis method (MAM), polynomial eigenvalue solvers (PES), and proposed inversion method (PIM). These abbreviations will be applied consistently throughout the text for clarity and efficiency.

- Scalability: CDIM’s scalability is limited due to the high computational cost of large matrix inversions. MAM offers good scalability since eigen-decomposition is performed only once, making it efficient for repeated analysis. PES solvers have moderate scalability due to the complexity of repeated factorizations. In contrast, PIM shows excellent scalability by efficiently handling large systems through its recursive structure, minimizing the computational load per frequency point.

- Explicitness: CDIM lacks an explicit formula, relying on full matrix inversion for each frequency. MAM provides partial explicitness, decomposing the system into modes but still requiring eigenvectors. PES solvers work sequentially for each frequency, offering no explicit inversion. PIM is distinct in offering an explicit recursive formula, significantly simplifying calculations and reducing computational complexity.

- Suitability for Resonance Analysis (near peaks): CDIM provides moderate accuracy near resonance peaks, but its sensitivity to numerical errors limits performance. MAM naturally aligns with system resonant modes, offering excellent precision. PES solvers offer reasonable accuracy, though not as optimal as MAM. PIM excels by capturing resonance with high accuracy, often matching or surpassing MAM in performance.

- Suitability for Time-domain Simulation (transient response): CDIM supports time-domain simulations but is computationally heavy. MAM is limited by the need to reconstruct full responses from modes. PES solvers are unsuitable for direct transient simulations. PIM offers efficient and direct handling of transient responses, making it ideal for time-domain applications.

- Analytical Insight: CDIM offers limited insight, focusing on matrix inversion without revealing the system’s dynamic behavior. MAM provides higher analytical insight by exposing the system’s natural modes and resonance characteristics. PES solvers provide some understanding of system dynamics via eigenvalue extraction, but lack a clear physical interpretation. PIM stands out by enhancing both the understanding and interpretation of system dynamics through its explicit recursive formula.

- Preconditioning Possibility: CDIM offers moderate preconditioning possibilities, but these are limited in improving performance due to the nature of matrix inversion. MAM has limited potential for preconditioning because it relies on eigen-decomposition, which does not lend itself well to speed-up techniques. PES solvers allow for some preconditioning, but their effectiveness is constrained by the complexity of the factorization process. PIM stands out with simple and effective preconditioning, which optimizes performance, especially for large systems.

- Parallelization Potential: CDIM benefits from some parallelism, particularly in frequency-based computations, but its matrix inversion step limits scalability. MAM can fully leverage multi-core or GPU architectures by decomposing the problem into independent-mode analyses. PES solvers have moderate parallelization potential but are limited by the repeated factorization steps. PIM offers excellent parallelization potential, efficiently using multi-core and GPU resources to handle large systems.

- Generalization to Nonlinear Systems: CDIM is confined to linear systems, as its matrix inversion methods do not accommodate nonlinear behaviors. MAM is also limited to linear systems and offers no direct approach to handle nonlinearities. PES solvers are primarily designed for linear systems but can be adapted with some effort for nonlinear dynamics. PIM, however, can be extended to nonlinear systems due to its flexible recursive structure, allowing for adaptations to accommodate nonlinearities.

- Eigenvalue Preservation: CDIM does not preserve eigenstructure, as it focuses purely on matrix inversion. MAM and PES solvers both preserve eigenvalues and eigenvectors, ensuring the system’s natural modes are accurately represented. PIM also preserves eigenstructure, maintaining both eigenvalues and eigenvectors through its recursive process, ensuring accurate dynamic representation throughout the analysis.

- Effectiveness for Non-proportional Damping: CDIM struggles with non-proportional damping, as matrix inversion can lead to inaccuracies in systems with complex damping characteristics. MAM handles non-proportional damping better, though additional computational effort is required to manage interactions between modes. PES solvers show moderate effectiveness for non-proportional damping but are less efficient for time-domain simulations. PIM stands out for its excellent handling of non-proportional damping, offering high accuracy and stability even in challenging conditions.

- Consistency with FEM Frameworks: CDIM integrates well with FEM frameworks, as it directly incorporates matrix inversion, a standard FEM approach. MAM requires additional steps to decompose the system and reconstruct the modes, making it less seamless with FEM workflows. PES solvers are less consistent, as their factorization steps do not align with FEM approaches. PIM integrates smoothly with FEM frameworks, offering efficient computation for large-scale systems and aligning well with existing solvers.

- Stability Near Singularities: CDIM struggles near singularities, where the determinant approaches zero, often resulting in numerical instability. MAM handles near-singular behavior better due to its reliance on eigen-decomposition, which provides stability. PES solvers show moderate stability near singularities, but ill-conditioned matrices may still pose issues. PIM excels near singularities, maintaining stability due to its recursive structure and ensuring accurate solutions even in challenging conditions.

8. Conclusions

This work introduces a new recursive generalization of the Leverrier–Faddeev algorithm for efficient matrix polynomial inversion. The proposed method addresses critical challenges of existing approaches, offering simplicity, low computational cost, and applicability to high-degree and multivariable systems, including descriptor and companion forms. Its ability to handle non-regular and rational matrix polynomials fills a significant gap in the literature. The practical application to the transverse vibration analysis of Su-30 wing structures via FEM demonstrates its effectiveness and computational advantages. Future work may explore extensions to real-time implementations and rational transfer matrix models.

Author Contributions

B.B., conceptualization, data curation, formal analysis, investigation, methodology, project administration, resources, software, visualization, and writing—original draft. G.F.F., conceptualization, funding acquisition, investigation, supervision, resources, and writing—original draft. G.S.M., project administration, validation, and writing—review and editing. K.H. and K.C., data curation, methodology, validation, and writing—review and editing. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Data Availability Statement

The data that support the findings of this study are available from the corresponding author upon reasonable request.

Conflicts of Interest

The authors declare no conflicts of interest.

References

- Frame, J.S. A simple recursion formula for inverting a matrix. Bull. Amer. Math. Soc. 1949, 55, 19–45.

- Davidenko, D.F. Inversion of matrices by the method of variation of parameters. In Doklady Akademii Nauk; Russian Academy of Sciences: Moscow, Russia, 1960; Volume 131, pp. 500–502.

- Gantmacher, F.R. The Theory of Matrices; Chelsea Publishing Co.: New York, NY, USA, 1960.

- Lancaster, P. A generalised Rayleigh-quotient iteration for lambda-matrices. Arch. Rat. Mech. Anal. 1961, 8, 309–322.

- Lancaster, P. Some applications of the Newton-Raphson method to non-linear matrix problems. Proc. R. Soc. London. Ser. A Math. Phys. Sci. 1963, 271, 324–333.

- Lancaster, P. Algorithms for Lambda-Matrices. Numer. Math. 1964, 6, 388–394.

- Faddeev, D.K.; Faddeeva, V.N. Computational Methods of Linear Algebra; Freeman: San Francisco, CA, USA, 1963.

- Householder, A.S. The Theory of Matrices in Numerical Analysis; Dover: New York, NY, USA, 1975.

- Decell, H.P. An application of the Cayley-Hamilton theorem to generalized matrix inversion. SIAM Rev. 1965, 4, 526–528.

- Givens, C.R. On the Modified Leverrier-Faddeev Algorithm. Linear Algebra Its Appl. 1982, 44, 161–167.

- Paraskevopoulos, P.N. Chebyshev series approach to system identification, analysis and optimal control. J. Frankl. Inst. 1983, 316, 135–157.

- Barnett, S. Leverrier’s algorithm: A new proof and extensions. SIAM J. Matrix Anal. Appl. 1989, 10, 551–556.

- Fragulis, G.; Mertzios, B.G.; Vardulakis, A.I.G. Computation of the inverse of a polynomial matrix and evaluation of its Laurent expansion. Int. J. Control 1991, 53, 431–443.

- Fragulis, G.F. Generalized Cayley-Hamilton theorem for polynomial matrices with arbitrary degree. Int. J. Control 1995, 62, 1341–1349.

- Helmberg, G.; Wagner, P.; Veltkamp, G. On Faddeev-Leverrier’s method for the computation of the characteristic polynomial of a matrix and of eigenvectors. Linear Algebra Its Appl. 1993, 185, 219–233.

- Wang, G.; Lin, Y. A new extension of the Leverrier’s algorithm. Linear Algebra Its Appl. 1993, 180, 227–238.

- Hou, M.; Pugh, A.C.; Hayton, G.E. General solution to systems in polynomial matrix form. Int. J. Control 2000, 73, 733–743.

- Djordjevic, D.S.; Stanimirovic, P.S. On The Generalized Drazin Inverse and Generalized Resolvent. Czechoslov. Math. J. 2001, 51, 617–634.

- Debeljković, D.L. Singular control systems. Dyn. Contin. Discret. Impuls. Syst. Ser. A Math. Anal. 2004, 11, 691–705.

- Hernández, J.; Marcellán Español, F.J. An extension of Leverrier-Faddeev algorithm using a basis of classical orthogonal polynomials. Facta Univ. Ser. Math. Inform. 2004, 19, 73–92.

- Stanimirović, P.S.; Tasić, M.B. On the Leverrier-Faddeev algorithm for computing the Moore-Penrose inverse. J. Appl. Math. Comput. 2011, 35, 135–141.

- Stanimirović, P.S.; Petkovic, M.D. Computing generalized inverse of polynomial matrices by interpolation. Appl. Math. Comput. 2006, 172, 508–523.

- Stanimirović, P.S.; Tasić, M.B.; Vu, K.M. Extensions of Faddeev’s algorithms to polynomial matrices. Appl. Math. Comput. 2009, 214, 246–258.

- Stanimirović, P.S.; Karampetakis, N.P.; Tasić, M.B. Computing Generalized Inverses of a Rational Matrix And Applications. J. Appl. Math. Comput. 2007, 24, 81–94.

- Stanimirović, P.S. A finite algorithm for generalized inverses of polynomial and rational matrices. Appl. Math. Comput. 2003, 144, 199–214.

- Tasić, M.B.; Stanimirović, P.S. Symbolic and recursive computation of different types of generalized inverses. Appl. Math. Comput. 2008, 199, 349–367.

- Petković, M.D.; Stanimirović, P.S. Interpolation algorithm of Leverrier–Faddeev type for polynomial matrices. Numer. Algorithms 2006, 42, 345–361.

- Dopico, F.; Noferini, V. Root polynomials and their role in the theory of matrix polynomials. Linear Algebra Its Appl. 2020, 584, 37–78.

- Tian, Y.; Xia, C. On the Low-Degree Solution of the Sylvester Matrix Polynomial Equation. J. Math. 2021, 5, 4612177.

- Shehata, A. On Lommel Matrix Polynomials. Symmetry 2021, 13, 2335.

- Szymański, O.J. Stability Theory for Matrix Polynomials in One and Several Variables with Extensions of Classical Theorems. Ph.D. Dissertation, Jagiellonian University, Kraków, Poland, 2024.

- Kumar, M.; Alatawi, M.S.; Raza, N.; Khan, W.A. Exploring Zeros of Hermite λ-Matrix Polynomials: A Numerical Approach. Mathematics 2024, 12, 1497.

- Zainab, U.; Raza, N. The symbolic approach to study the family of Appell-λ-matrix polynomials. Filomat 2024, 38, 1291–1304.

- Milica, L. Implications of Higher-Degree Polynomials in Forced Damped Oscillations. In Polynomials-Exploring Fundamental Mathematical Expressions; IntechOpen: London, UK, 2024.

- Halidias, N. On the Computation of the Minimum Polynomial and Applications. Asian Res. J. Math. 2024, 18, 301–319.

- Higham, N.J. Functions of Matrices: Theory and Computation; SIAM: Philadelphia, PA, USA, 2008.

- Hou, S.H. A Simple Proof of the Leverrier-Faddeev Characteristic Polynomial Algorithm. SIAM Rev. 1998, 40, 706–709.

- Henrici, P. Applied and Computational Complex Analysis; John Wiley & Sons, Inc.: Hoboken, NJ, USA, 1974; Volume 1.

- Henrici, P. Applied and Computational Complex Analysis; John Wiley & Sons, Inc.: Hoboken, NJ, USA, 1974; Volume 2.

- Bekhiti, B.; Dahimene, A.; Nail, B.; Hariche, K. On the theory of λ-matrices based MIMO control system design. Control Cybern. 2015, 44, 422–442.

- Bekhiti, B.; Dahimene, A.; Nail, B.; Hariche, K. On Block Roots of Matrix Polynomials Based MIMO Control System Design. In Proceedings of the 2015 4th International Conference on Electrical Engineering (ICEE), Boumerdes, Algeria, 13–15 December 2015.

- Bekhiti, B.; Dahimene, A.; Nail, B.; Hariche, K. Robust Block Roots Relocation via MIMO Compensator Design. In Proceedings of the 2016 8th International Conference on Modelling, Identification and Control (ICMIC), Algiers, Algeria, 15–17 November 2016.

- Bekhiti, B.; Dahimene, A.; Nail, B.; Hariche, K. On λ-Matrices and Their Applications in MIMO Control Systems Design. Int. J. Model. Identif. Control 2018, 29, 281–294.

- Hajjia, A.; Gouzi, M.B.; Harras, B.; El Khalfi, A.; Vlase, S.; Luminita, M. Finite Element Analysis of Functionally Graded Mindlin–Reissner Plates for Aircraft Tapered and Interpolated Wing Defluxion and Modal Analysis. Mathematics 2025, 13, 620.

- Bär, C. The Faddeev-LeVerrier algorithm and the Pfaffian. Linear Algebra Its Appl. 2021, 630, 39–55.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).