To Exit or Not to Exit: Cost-Effective Early-Exit Architecture Based on Markov Decision Process

Abstract

1. Introduction

1.1. Preliminaries on Early-Exit Architecture

1.2. Our Contributions

- We model the inference procedure of a typical early-exit model as an MDP in which a sequential early-exit decision is made at each exit point. This formulation allows us to design an early-exit criterion that dynamically decides whether or not to exit at each exit point, so as to systematically achieve a goal specified as a cumulative reward in the MDP.

- We propose a cost-effective early-exit architecture that can be applied to any type of early-exit model. Using reinforcement learning, the cost-effective early-exit algorithm in this architecture enables the early-exit model to balance the trade-off between accuracy and cost regardless of the environment. Furthermore, it makes early-exit decisions adaptively for each input sample, taking the characteristics of that sample into account.

- Through experiments with a synthetic early-exit model, we verify that our proposed architecture handles the trade-off according to the relative importance of computational cost compared with accuracy. Furthermore, experiments on real datasets demonstrate that it does so effectively in various practical environments, whereas the state-of-the-art baselines cannot.
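The MDP view of early exiting described in the first contribution can be illustrated with a minimal Python sketch. It assumes a state made of the exit-point index and the confidence of that exit's classifier, a fixed penalty for every stage that is computed, and a reward of 1 for a correct prediction at the exit where the episode ends. These modeling choices and the names `run_episode` and `Policy` are hypothetical illustrations, not the paper's exact definitions.

```python
from typing import Callable, Sequence, Tuple

EXIT, CONTINUE = 1, 0  # the two actions available at every exit point

# Hypothetical policy signature: (exit-point index, confidence at that exit) -> action.
Policy = Callable[[int, float], int]


def run_episode(confidences: Sequence[float], correct: Sequence[bool],
                penalty: float, policy: Policy) -> Tuple[int, float]:
    """Treat one input sample as an episode of an early-exit MDP.

    Assumed rewards (illustrative only): every executed stage costs `penalty`,
    and exiting at point k adds 1 if the prediction at exit k is correct.
    Returns (index of the exit taken, cumulative reward).
    """
    if not confidences:
        raise ValueError("at least one exit point is required")
    last = len(confidences) - 1
    reward = 0.0
    for k, conf in enumerate(confidences):
        reward -= penalty  # cost of computing stage k
        # The final exit point (the full model) always terminates the episode.
        if k == last or policy(k, conf) == EXIT:
            return k, reward + (1.0 if correct[k] else 0.0)
    raise AssertionError("unreachable: the last exit always terminates")


if __name__ == "__main__":
    # A fixed confidence threshold is one possible policy; an RL agent would learn its own.
    threshold_policy = lambda k, conf: EXIT if conf >= 0.6 else CONTINUE
    print(run_episode([0.4, 0.7, 0.95], [False, True, True], 0.1, threshold_policy))
```

Averaging this per-sample reward over many inputs gives an expected accuracy minus an expected cost, so a larger penalty pushes a reward-maximizing policy toward earlier exits.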

1.3. Paper Structure

2. MDP Formulation for Cost-Effective Early Exiting

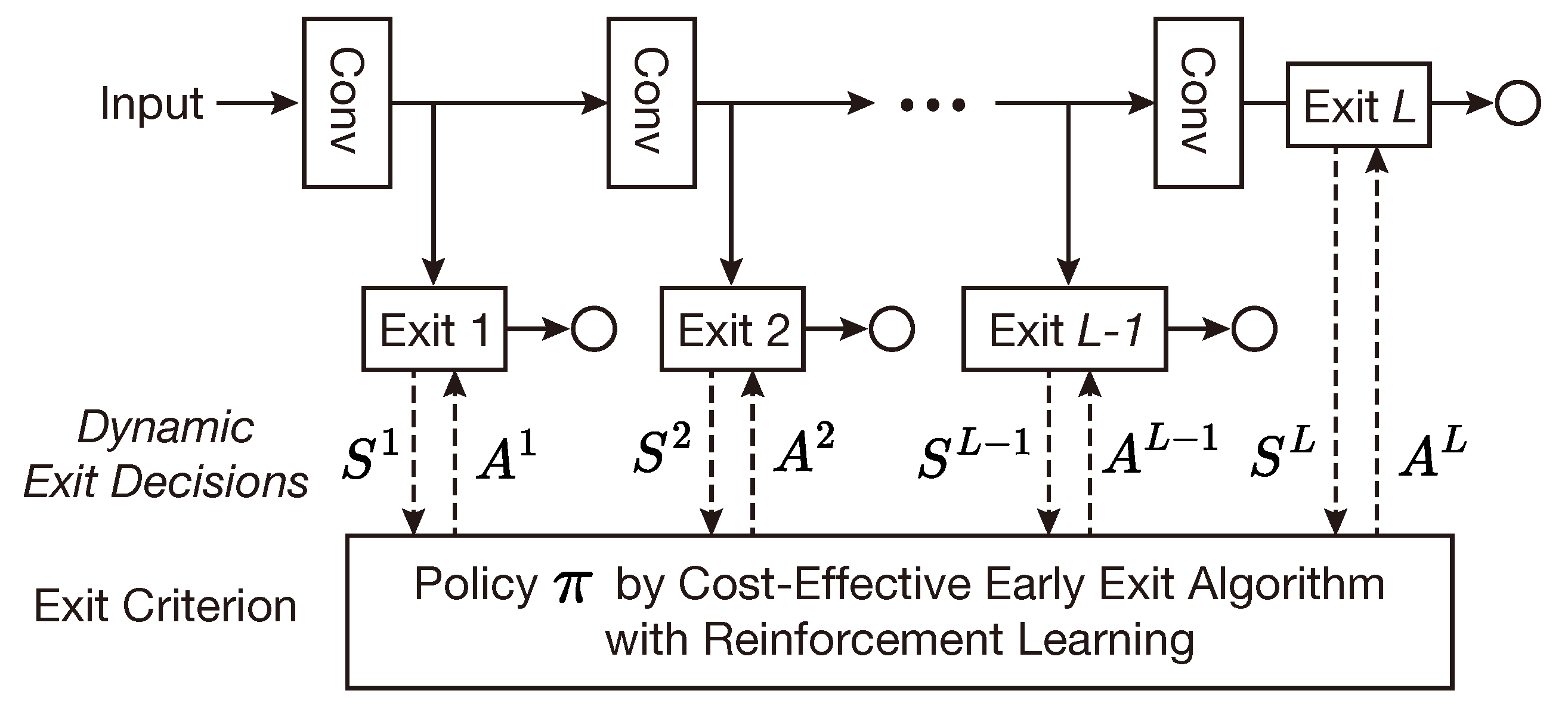

2.1. Deep Learning Model with Early-Exit Architecture

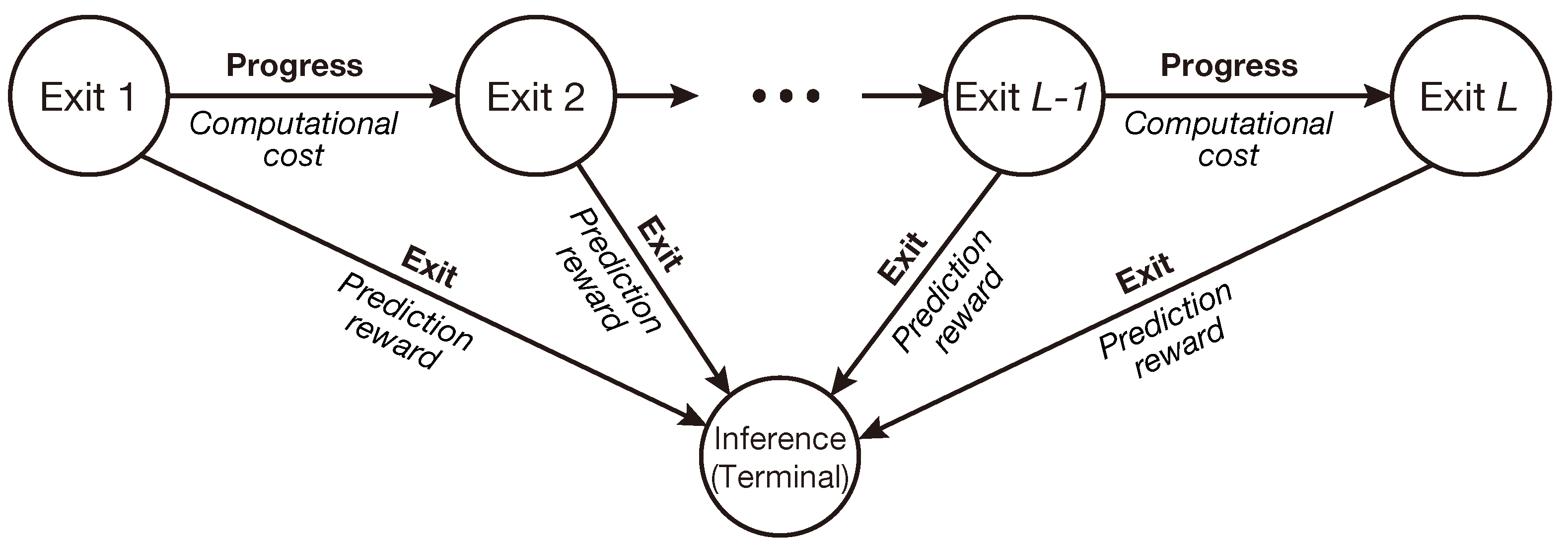

2.2. Design of Early-Exit Criterion Based on MDP

2.3. MDP-Based Cost-Effective Early-Exit Problem

3. Cost-Effective Early Exit with Reinforcement Learning

3.1. Cost-Effective Early-Exit Architecture with Deep Reinforcement Learning

3.2. Description of Cost-Effective Early-Exit Algorithm

Algorithm 1: Cost-Effective Early-Exit Algorithm
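Section 3 trains the early-exit decisions with deep reinforcement learning. As a much smaller illustration of the same idea, the sketch below swaps in plain tabular Q-learning (not deep Q-learning) over a hypothetical discretized state (exit-point index, confidence bin). The rewards are assumptions chosen for illustration: exiting pays 1 for a correct prediction at that exit, and continuing costs a fixed penalty for the next stage. The names `to_state`, `greedy`, and `train_q_table` are hypothetical.

```python
import random
from collections import defaultdict
from typing import DefaultDict, List, Sequence, Tuple

EXIT, CONTINUE = 1, 0
State = Tuple[int, int]  # hypothetical state: (exit-point index, confidence bin)
Sample = Tuple[Sequence[float], Sequence[bool]]  # per-exit confidences, per-exit correctness
QTable = DefaultDict[Tuple[State, int], float]


def to_state(k: int, confidence: float, bins: int = 10) -> State:
    """Discretize the confidence at exit point k into one of `bins` bins."""
    return k, min(int(confidence * bins), bins - 1)


def greedy(q: QTable, s: State) -> int:
    """Greedy action in state s (ties go to EXIT)."""
    return EXIT if q[(s, EXIT)] >= q[(s, CONTINUE)] else CONTINUE


def train_q_table(samples: List[Sample], penalty: float, episodes: int = 5000,
                  alpha: float = 0.1, epsilon: float = 0.1, seed: int = 0) -> QTable:
    """Undiscounted tabular Q-learning of exit/continue decisions.

    Assumed rewards: exiting at k pays 1 if exit k is correct, else 0;
    continuing pays -penalty for computing the next stage. The final exit
    point always terminates the episode, so no decision is learned there.
    """
    rng = random.Random(seed)
    q: QTable = defaultdict(float)
    for _ in range(episodes):
        confidences, correct = rng.choice(samples)
        last = len(confidences) - 1
        k = 0
        while k < last:
            s = to_state(k, confidences[k])
            # Epsilon-greedy exploration between the two actions.
            a = rng.choice((EXIT, CONTINUE)) if rng.random() < epsilon else greedy(q, s)
            if a == EXIT:
                target = 1.0 if correct[k] else 0.0
            elif k + 1 == last:
                target = -penalty + (1.0 if correct[last] else 0.0)
            else:
                s_next = to_state(k + 1, confidences[k + 1])
                target = -penalty + max(q[(s_next, EXIT)], q[(s_next, CONTINUE)])
            q[(s, a)] += alpha * (target - q[(s, a)])  # Q-learning update
            if a == EXIT:
                break
            k += 1
    return q


if __name__ == "__main__":
    samples = [([0.95, 0.99], [True, True]), ([0.2, 0.9], [False, True])]
    q = train_q_table(samples, penalty=0.1)
    print(greedy(q, to_state(0, 0.95)), greedy(q, to_state(0, 0.2)))
```

On the two toy samples, the learned policy should exit at the first exit point for the confident, correct sample and continue for the unconfident, incorrect one. A deep RL variant would replace the table with a network over richer input features, which is what lets the decisions adapt to individual samples.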

4. Experimental Results

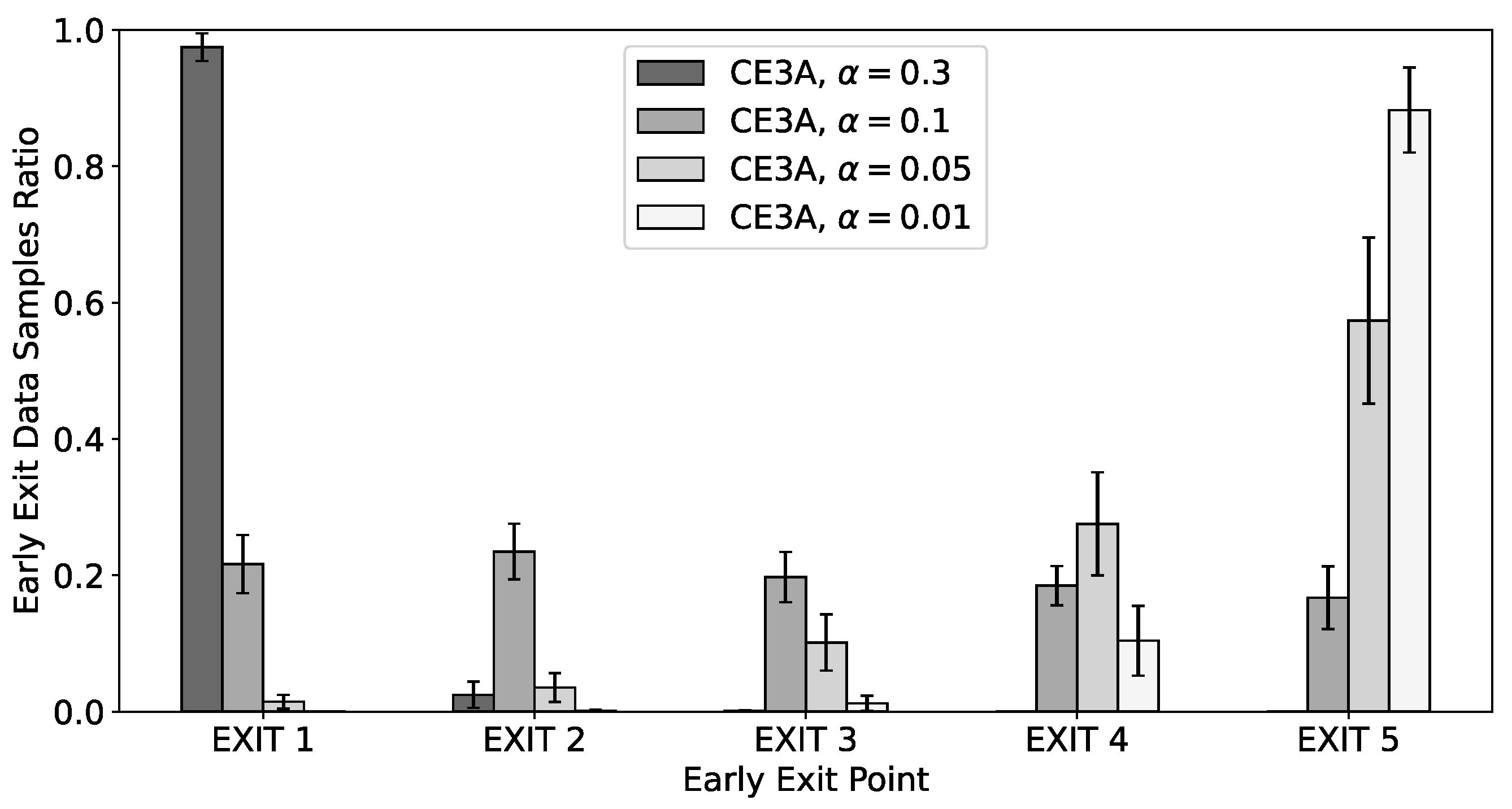

4.1. Toy Example Environment

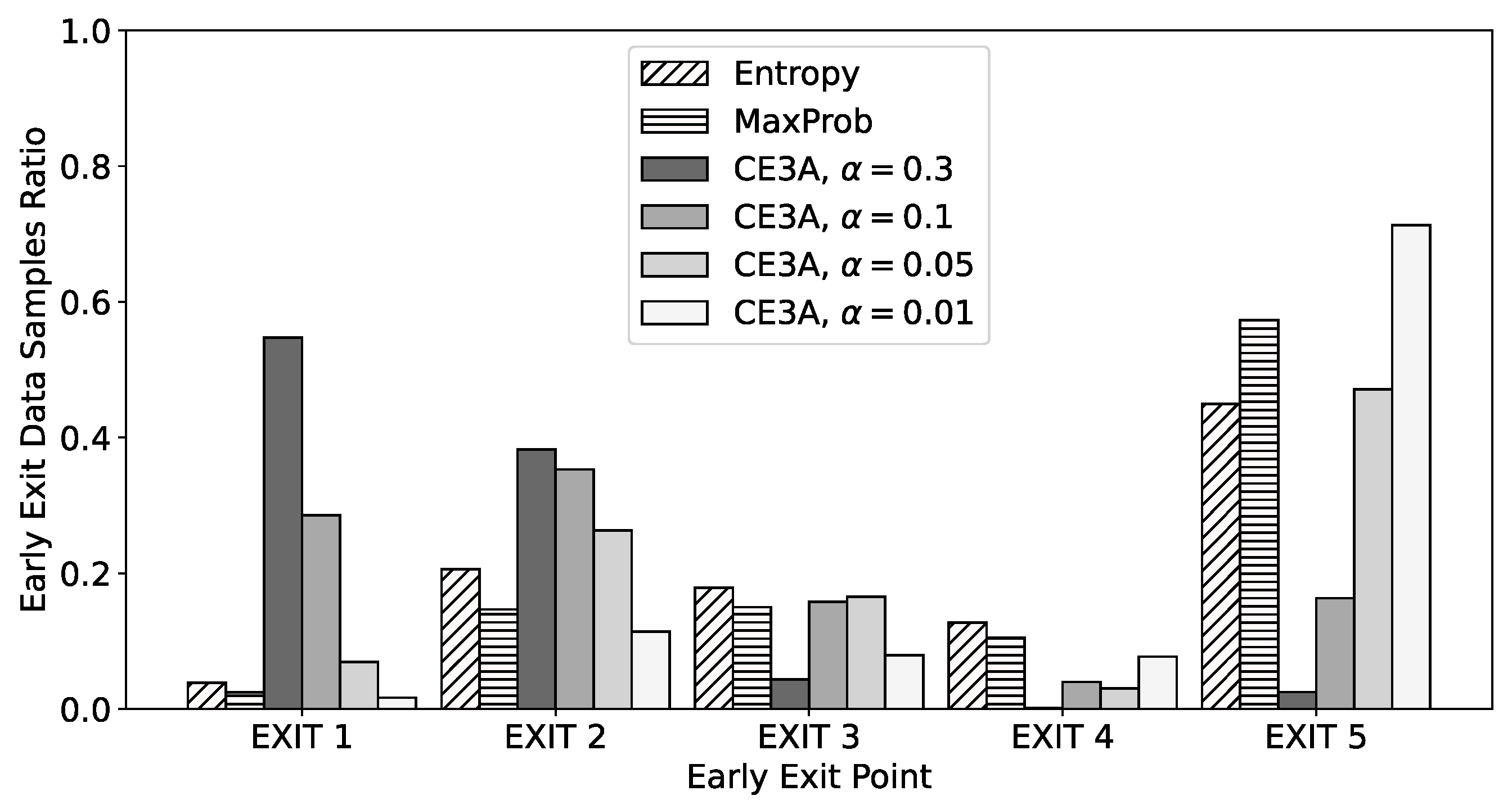

4.2. Real Dataset Environment with CIFAR-10

4.3. Real Dataset Environment with CIFAR-100

5. Conclusions and Future Work

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. Pouyanfar, S.; Sadiq, S.; Yan, Y.; Tian, H.; Tao, Y.; Reyes, M.P.; Shyu, M.L.; Chen, S.C.; Iyengar, S.S. A survey on deep learning: Algorithms, techniques, and applications. ACM Comput. Surv. (CSUR) 2018, 51, 1–36.

2. Santana, L.M.Q.d.; Santos, R.M.; Matos, L.N.; Macedo, H.T. Deep Neural Networks for Acoustic Modeling in the Presence of Noise. IEEE Lat. Am. Trans. 2018, 16, 918–925.

3. Falcini, F.; Lami, G.; Costanza, A.M. Deep Learning in Automotive Software. IEEE Softw. 2017, 34, 56–63.

4. Dai, Y.; Wang, G. A deep inference learning framework for healthcare. Pattern Recognit. Lett. 2020, 139, 17–25.

5. He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778.

6. Kim, Y.D.; Park, E.; Yoo, S.; Choi, T.; Yang, L.; Shin, D. Compression of Deep Convolutional Neural Networks for Fast and Low Power Mobile Applications. In Proceedings of the International Conference on Learning Representations (ICLR), San Juan, Puerto Rico, 2–4 May 2016.

7. Le, K.H.; Le-Minh, K.H.; Thai, H.T. BrainyEdge: An AI-enabled framework for IoT edge computing. ICT Express 2023, 9, 211–221.

8. Chen, J.; Ran, X. Deep learning with edge computing: A review. Proc. IEEE 2019, 107, 1655–1674.

9. Scardapane, S.; Scarpiniti, M.; Baccarelli, E.; Uncini, A. Why should we add early exits to neural networks? Cogn. Comput. 2020, 12, 954–966.

10. Laskaridis, S.; Kouris, A.; Lane, N.D. Adaptive Inference through Early-Exit Networks: Design, Challenges and Directions. In Proceedings of the International Workshop on Embedded and Mobile Deep Learning (EMDL), Virtual, 24 June 2021.

11. Teerapittayanon, S.; McDanel, B.; Kung, H.T. BranchyNet: Fast Inference via Early Exiting from Deep Neural Networks. In Proceedings of the IEEE International Conference on Pattern Recognition (ICPR), Cancun, Mexico, 4–8 December 2016; pp. 2464–2469.

12. Wang, M.; Mo, J.; Lin, J.; Wang, Z.; Du, L. DynExit: A Dynamic Early-Exit Strategy for Deep Residual Networks. In Proceedings of the IEEE International Workshop on Signal Processing Systems (SiPS), Nanjing, China, 20–23 October 2019; pp. 178–183.

13. Liu, W.; Zhou, P.; Zhao, Z.; Wang, Z.; Deng, H.; Ju, Q. FastBERT: A Self-distilling BERT with Adaptive Inference Time. arXiv 2020, arXiv:2004.02178.

14. Xin, J.; Tang, R.; Lee, J.; Yu, Y.; Lin, J. DeeBERT: Dynamic early exiting for accelerating BERT inference. arXiv 2020, arXiv:2004.12993.

15. Bonato, V.; Bouganis, C.S. Class-specific early exit design methodology for convolutional neural networks. Appl. Soft Comput. 2021, 107, 107316.

16. Savchenko, A. Fast inference in convolutional neural networks based on sequential three-way decisions. Inf. Sci. 2021, 560, 370–385.

17. Dong, R.; Mao, Y.; Zhang, J. Resource-Constrained Edge AI with Early Exit Prediction. J. Commun. Inf. Netw. 2022, 7, 122–134.

18. Bajpai, D.J.; Trivedi, V.K.; Yadav, S.L.; Hanawal, M.K. SplitEE: Early Exit in Deep Neural Networks with Split Computing. arXiv 2023, arXiv:2309.09195.

19. Lee, C.; Hong, S.; Hong, S.; Kim, T. Performance analysis of local exit for distributed deep neural networks over cloud and edge computing. ETRI J. 2020, 42, 658–668.

20. Van Otterlo, M.; Wiering, M. Reinforcement Learning and Markov Decision Processes. In Reinforcement Learning: State-of-the-Art; Springer: Berlin/Heidelberg, Germany, 2012; pp. 3–42.

21. Shani, G.; Heckerman, D.; Brafman, R.I.; Boutilier, C. An MDP-based recommender system. J. Mach. Learn. Res. 2005, 6, 1265–1295.

22. Lu, Z.; Yang, Q. Partially observable Markov decision process for recommender systems. arXiv 2016, arXiv:1608.07793.

23. Ferrá, H.L.; Lau, K.; Leckie, C.; Tang, A. Applying Reinforcement Learning to Packet Scheduling in Routers. In Proceedings of the Innovative Applications of Artificial Intelligence Conference (IAAI), Acapulco, Mexico, 12–14 August 2003; pp. 79–84.

24. Wei, Y.; Yu, F.R.; Song, M.; Han, Z. User Scheduling and Resource Allocation in HetNets With Hybrid Energy Supply: An Actor-Critic Reinforcement Learning Approach. IEEE Trans. Wirel. Commun. 2018, 17, 680–692.

25. Goodfellow, I.; Bengio, Y.; Courville, A. Deep Learning; MIT Press: Cambridge, MA, USA, 2016.

26. Simonyan, K.; Zisserman, A. Very Deep Convolutional Networks for Large-Scale Image Recognition. In Proceedings of the International Conference on Learning Representations (ICLR), San Diego, CA, USA, 7–9 May 2015.

27. François-Lavet, V.; Henderson, P.; Islam, R.; Bellemare, M.G.; Pineau, J. An introduction to deep reinforcement learning. Found. Trends Mach. Learn. 2018, 11, 219–354.

28. Achiam, J.; Knight, E.; Abbeel, P. Towards characterizing divergence in deep Q-learning. arXiv 2019, arXiv:1903.08894.

29. Mnih, V.; Kavukcuoglu, K.; Silver, D.; Rusu, A.A.; Veness, J.; Bellemare, M.G.; Graves, A.; Riedmiller, M.; Fidjeland, A.K.; Ostrovski, G.; et al. Human-level control through deep reinforcement learning. Nature 2015, 518, 529–533.

30. Ladosz, P.; Weng, L.; Kim, M.; Oh, H. Exploration in deep reinforcement learning: A survey. Inf. Fusion 2022, 85, 1–22.

31. Wu, T.; Ding, X.; Zhang, H.; Gao, J.; Tang, M.; Du, L.; Qin, B.; Liu, T. DiscrimLoss: A universal loss for hard samples and incorrect samples discrimination. IEEE Trans. Multimed. 2024, 26, 1957–1968.

| Methodology | Penalty | Accuracy | Cost | Cumulative Reward |
|---|---|---|---|---|
| No early exit | 0.3 | 90.1% | 1.200 | −0.299 |
| No early exit | 0.1 | 90.1% | 0.400 | 0.501 |
| No early exit | 0.05 | 90.1% | 0.200 | 0.701 |
| No early exit | 0.01 | 90.1% | 0.040 | 0.861 |
| CE3A | 0.3 | 50.6% | 0.008 | 0.498 |
| CE3A | 0.1 | 77.5% | 0.185 | 0.589 |
| CE3A | 0.05 | 88.9% | 0.168 | 0.721 |
| CE3A | 0.01 | 89.9% | 0.039 | 0.860 |
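Every row of the table above is consistent with cumulative reward = accuracy − cost (for example, 0.901 − 1.200 = −0.299), up to rounding of 0.001. The short check below recomputes the rewards from the accuracy and cost columns; reading the reward this way is an observation from the tabulated numbers, and the helper names `cumulative_reward` and `check_rows` are hypothetical.

```python
from typing import List, Tuple

# (methodology, penalty, accuracy, cost, reported cumulative reward), copied from the table above
Row = Tuple[str, float, float, float, float]
ROWS: List[Row] = [
    ("No early exit", 0.3, 0.901, 1.200, -0.299),
    ("No early exit", 0.1, 0.901, 0.400, 0.501),
    ("No early exit", 0.05, 0.901, 0.200, 0.701),
    ("No early exit", 0.01, 0.901, 0.040, 0.861),
    ("CE3A", 0.3, 0.506, 0.008, 0.498),
    ("CE3A", 0.1, 0.775, 0.185, 0.589),
    ("CE3A", 0.05, 0.889, 0.168, 0.721),
    ("CE3A", 0.01, 0.899, 0.039, 0.860),
]


def cumulative_reward(accuracy: float, cost: float) -> float:
    """Reward implied by the table: expected accuracy minus expected cost."""
    return accuracy - cost


def check_rows(rows: List[Row], tol: float = 0.0015) -> List[Row]:
    """Return rows whose reported reward differs from accuracy - cost by more than tol."""
    return [r for r in rows if abs(cumulative_reward(r[2], r[3]) - r[4]) > tol]


if __name__ == "__main__":
    mismatches = check_rows(ROWS)
    print("all rows consistent" if not mismatches else mismatches)
```

The same identity holds for the rows of the other results tables. In the no-early-exit rows the cost is also exactly four times the penalty, so the cost column scales linearly with the penalty while the accuracy stays fixed.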

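| Work | Methodology | Year |
|---|---|---|
| [11] | Entropy-based threshold | 2016 |
| [12] | Entropy-based threshold | 2019 |
| [13] | Entropy-based threshold | 2020 |
| [14] | Entropy-based threshold | 2020 |
| [15] | Maximum class probability-based threshold | 2021 |
| [16] | Maximum class probability-based threshold | 2021 |
| [17] | Maximum class probability-based threshold | 2022 |
| [18] | Maximum class probability-based threshold | 2023 |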
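Both baseline families in the table above exit as soon as a confidence statistic of an intermediate classifier crosses a fixed threshold. The sketch below shows the two rules applied to softmax outputs; the function names, the threshold values, and the strict versus non-strict comparisons are assumptions, and the individual works differ in their details.

```python
import math
from typing import Callable, List, Sequence

ExitRule = Callable[[Sequence[float], float], bool]


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over one exit's logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def entropy_exit(probs: Sequence[float], threshold: float) -> bool:
    """Entropy-based rule: exit when the predictive entropy (nats) is below the threshold."""
    entropy = -sum(p * math.log(p) for p in probs if p > 0.0)
    return entropy < threshold


def max_prob_exit(probs: Sequence[float], threshold: float) -> bool:
    """Maximum class probability rule: exit when the top-1 probability reaches the threshold."""
    return max(probs) >= threshold


def first_exit(per_exit_probs: Sequence[Sequence[float]], rule: ExitRule,
               threshold: float) -> int:
    """Index of the first exit whose rule fires; the final exit is the fallback."""
    for k, probs in enumerate(per_exit_probs[:-1]):
        if rule(probs, threshold):
            return k
    return len(per_exit_probs) - 1


if __name__ == "__main__":
    exits = [softmax([0.1, 0.2, 0.0]), softmax([4.0, 0.0, 0.0]), softmax([6.0, 0.0, 0.0])]
    print(first_exit(exits, entropy_exit, 0.5), first_exit(exits, max_prob_exit, 0.9))
```

Because the threshold does not depend on the computational penalty, such a rule selects the same exits at every penalty. This is consistent with the results tables reporting a single accuracy per baseline across all penalties, with only the cost and reward changing.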

| Methodology | Penalty | Accuracy | Cost | Cumulative Reward |
|---|---|---|---|---|
| Entropy [11,12,13,14] | 0.3 | 87.5% | 1.059 | −0.184 |
| Entropy [11,12,13,14] | 0.1 | 87.5% | 0.353 | 0.522 |
| Entropy [11,12,13,14] | 0.05 | 87.5% | 0.177 | 0.699 |
| Entropy [11,12,13,14] | 0.01 | 87.5% | 0.035 | 0.840 |
| MaxProb [15,16,17,18] | 0.3 | 87.8% | 1.083 | −0.205 |
| MaxProb [15,16,17,18] | 0.1 | 87.8% | 0.361 | 0.517 |
| MaxProb [15,16,17,18] | 0.05 | 87.8% | 0.180 | 0.698 |
| MaxProb [15,16,17,18] | 0.01 | 87.8% | 0.036 | 0.842 |
| CE3A | 0.3 | 72.2% | 0.243 | 0.479 |
| CE3A | 0.1 | 86.9% | 0.171 | 0.698 |
| CE3A | 0.05 | 88.0% | 0.097 | 0.783 |
| CE3A | 0.01 | 88.4% | 0.024 | 0.860 |

| Methodology | Penalty | Accuracy | Cost | Cumulative Reward |
|---|---|---|---|---|
| Entropy [11,12,13,14] | 0.3 | 67.8% | 0.823 | −0.145 |
| Entropy [11,12,13,14] | 0.1 | 67.8% | 0.274 | 0.404 |
| Entropy [11,12,13,14] | 0.05 | 67.8% | 0.137 | 0.541 |
| Entropy [11,12,13,14] | 0.01 | 67.8% | 0.027 | 0.651 |
| MaxProb [15,16,17,18] | 0.3 | 69.0% | 0.917 | −0.227 |
| MaxProb [15,16,17,18] | 0.1 | 69.0% | 0.306 | 0.384 |
| MaxProb [15,16,17,18] | 0.05 | 69.0% | 0.153 | 0.537 |
| MaxProb [15,16,17,18] | 0.01 | 69.0% | 0.031 | 0.659 |
| CE3A | 0.3 | 48.4% | 0.172 | 0.312 |
| CE3A | 0.1 | 59.8% | 0.144 | 0.454 |
| CE3A | 0.05 | 67.9% | 0.129 | 0.551 |
| CE3A | 0.01 | 69.2% | 0.034 | 0.658 |
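To compare the methods at each penalty, the cumulative rewards in the table above can be tabulated and the best method picked per penalty, together with CE3A's margin over the better of the two threshold baselines. The sketch hard-codes the reward column of that table; the helper names are hypothetical.

```python
from typing import Dict

# Cumulative rewards from the table above, keyed by penalty, then by methodology.
REWARDS: Dict[float, Dict[str, float]] = {
    0.3: {"Entropy": -0.145, "MaxProb": -0.227, "CE3A": 0.312},
    0.1: {"Entropy": 0.404, "MaxProb": 0.384, "CE3A": 0.454},
    0.05: {"Entropy": 0.541, "MaxProb": 0.537, "CE3A": 0.551},
    0.01: {"Entropy": 0.651, "MaxProb": 0.659, "CE3A": 0.658},
}


def best_by_penalty(rewards: Dict[float, Dict[str, float]]) -> Dict[float, str]:
    """Methodology with the highest cumulative reward at each penalty."""
    return {p: max(row, key=row.get) for p, row in rewards.items()}


def margin_over_best_baseline(rewards: Dict[float, Dict[str, float]],
                              method: str = "CE3A") -> Dict[float, float]:
    """Reward of `method` minus the best reward among the other methods, per penalty."""
    margins = {}
    for p, row in rewards.items():
        best_other = max(v for m, v in row.items() if m != method)
        margins[p] = round(row[method] - best_other, 3)
    return margins


if __name__ == "__main__":
    print(best_by_penalty(REWARDS))
    print(margin_over_best_baseline(REWARDS))
```

On these numbers, CE3A attains the highest cumulative reward at penalties 0.3, 0.1, and 0.05, while at penalty 0.01 MaxProb is ahead by 0.001, so the methods are effectively tied when computation is cheap.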

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Kim, K.-S.; Lee, H.-S. To Exit or Not to Exit: Cost-Effective Early-Exit Architecture Based on Markov Decision Process. Mathematics 2024, 12, 2263. https://doi.org/10.3390/math12142263
