Machine Learning Alternatives to Response Surface Models

Abstract

1. Introduction

2. Response Surface Methodology

3. Machine Learning Approaches

3.1. knn

3.2. CART

3.3. Ensemble Methods

3.4. Support Vector Machines

3.5. Neural Networks

3.6. Multidimensional Output Approaches

3.7. Hyperparameters and Their Tuning

3.8. Metrics Used to Compare the Models

- Root Mean Square Error (RMSE);

- Mean Absolute Error (MAE);

- Mean Absolute Percentage Error (MAPE);

- Determination Coefficient ($R^2$);

- Akaike Information Criterion, $\mathrm{AIC} = 2k - 2\ln(L)$, where $k$ is the number of parameters to be estimated in the model and $L$ is the maximized value of the likelihood function of the model;

- The variance explained by the model (EV), that is, the proportion of the variance due to the factors;

- The Nash and Sutcliffe Efficiency (NSE), which is equivalent to the determination coefficient but uses absolute differences rather than squared ones;

- The agreement index $d$, a standardized measure of the degree of model prediction error;

- The average absolute deviations (AAD) from a central point (this metric is defined and used in [8], but the name given by the authors does not seem appropriate to us, as it is not in line with their definition).
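As an illustration of the first five criteria, the following minimal sketch computes them from a vector of observed responses and a vector of predictions. It uses the usual textbook definitions, which may differ in detail from the exact forms used in the article (for example, MAPE is expressed here in percent). The function names and the toy data are hypothetical and serve only as an example.

```python
import math


def rmse(y, y_hat):
    """Root mean square error: square root of the mean squared residual."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(y, y_hat)) / len(y))


def mae(y, y_hat):
    """Mean absolute error: mean of the absolute residuals."""
    return sum(abs(a - b) for a, b in zip(y, y_hat)) / len(y)


def mape(y, y_hat):
    """Mean absolute percentage error, in percent (assumes no observed value is zero)."""
    return 100.0 * sum(abs((a - b) / a) for a, b in zip(y, y_hat)) / len(y)


def r2(y, y_hat):
    """Determination coefficient: 1 - residual sum of squares / total sum of squares."""
    y_bar = sum(y) / len(y)
    ss_res = sum((a - b) ** 2 for a, b in zip(y, y_hat))
    ss_tot = sum((a - y_bar) ** 2 for a in y)
    return 1.0 - ss_res / ss_tot


def aic(k, log_likelihood):
    """Akaike information criterion from k parameters and the maximized log-likelihood ln(L)."""
    return 2 * k - 2 * log_likelihood


# Toy data (hypothetical): only the last prediction is off, by one unit.
y_obs = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.0, 2.0, 3.0, 5.0]

print(rmse(y_obs, y_pred), mae(y_obs, y_pred), mape(y_obs, y_pred), r2(y_obs, y_pred))
```

RMSE and MAE are expressed in the units of the response, whereas MAPE and the determination coefficient are unit-free, which is one reason several criteria are usually reported together.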

4. Comparisons between RSM and ML Approaches

5. Experiments

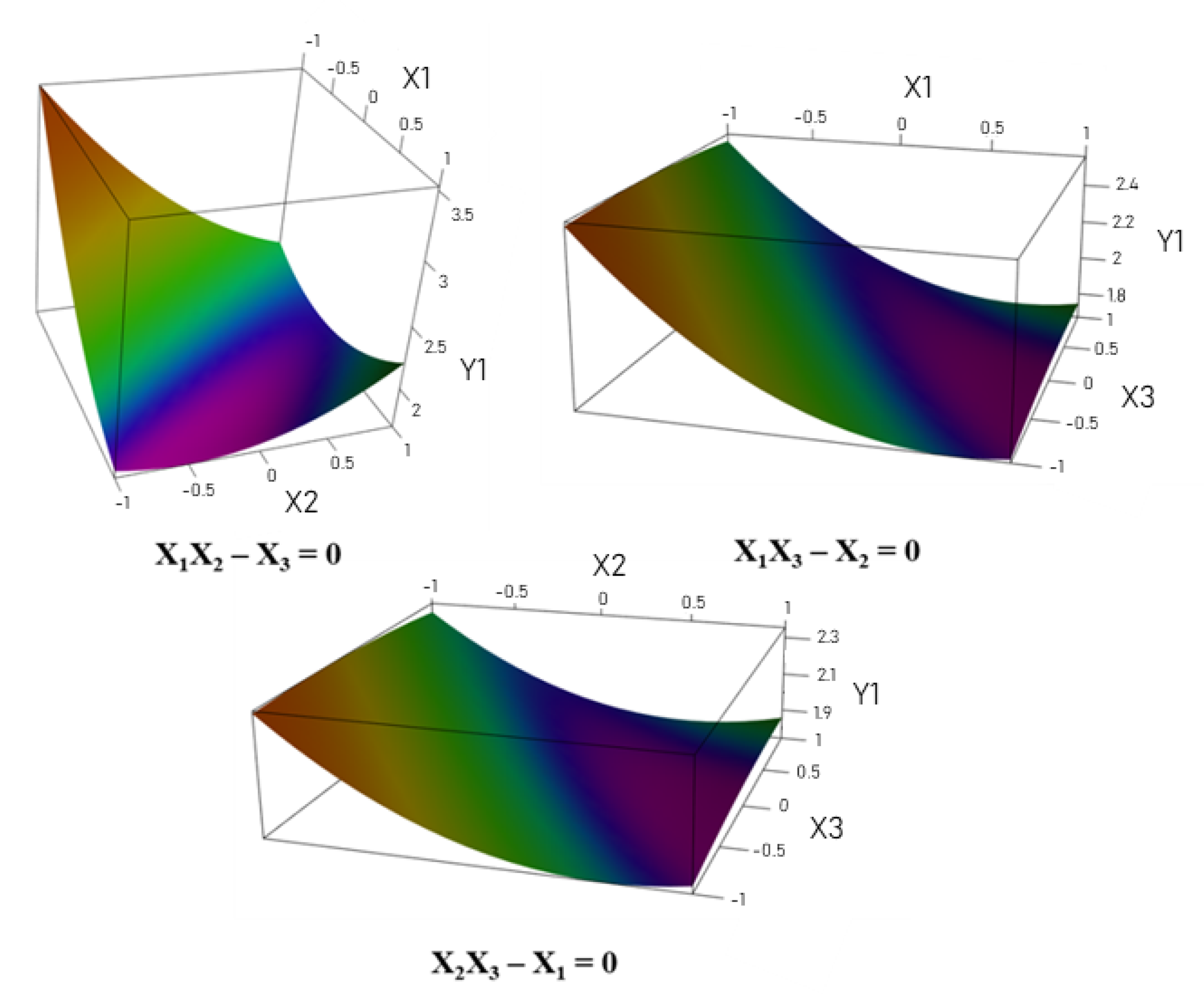

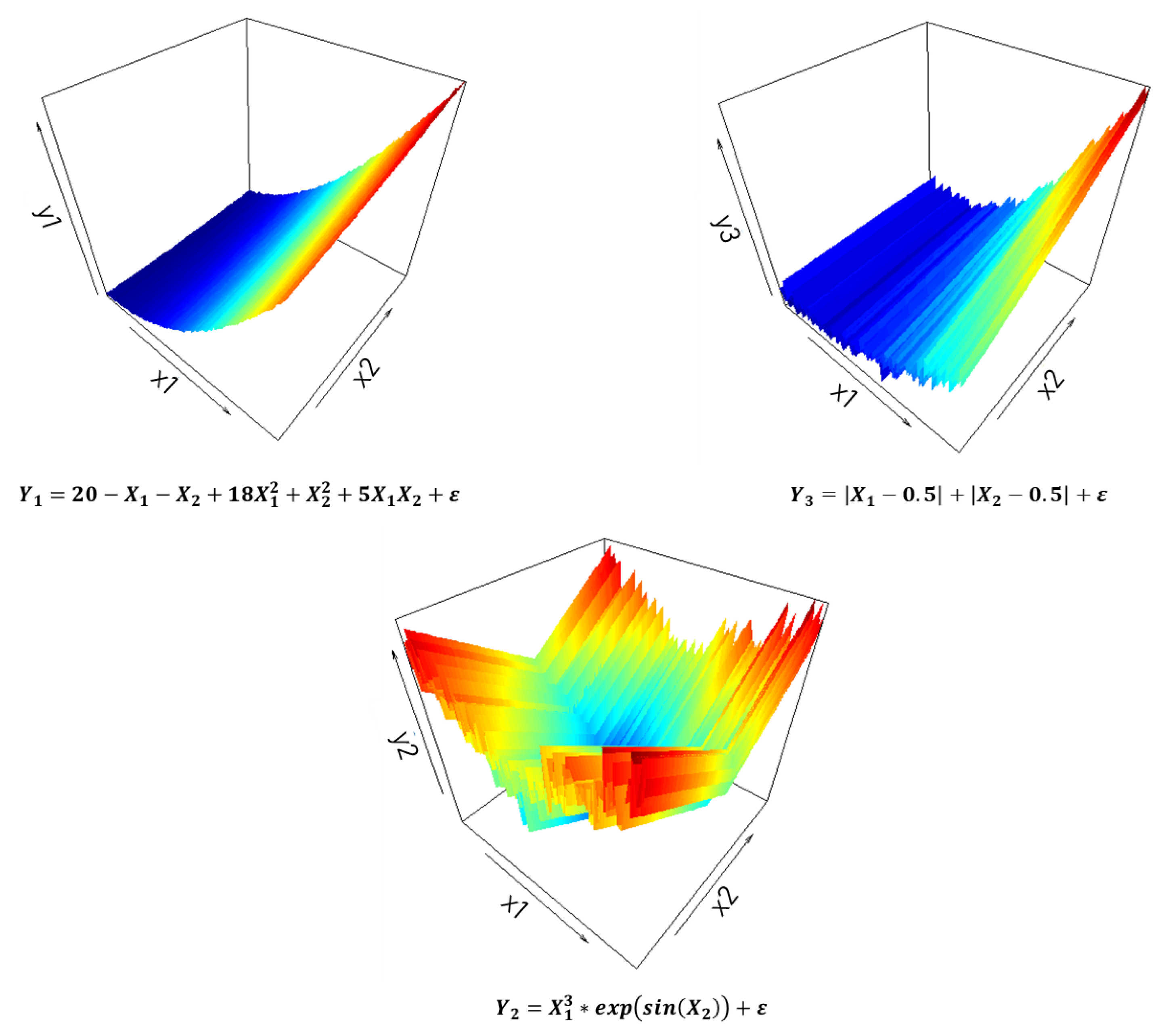

5.1. Simulated Model

5.1.1. Tuning the Models

5.1.2. Results

5.2. Use Case

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A. Supplementary Material

Appendix A.1. Mean and Standard Deviation of Hyperparameter Values

| Hyperparameters | Average Y1 | SD Y1 | Average Y2 | SD Y2 | Average Y3 | SD Y3 |

|---|---|---|---|---|---|---|

| k | 4.06 | 1.268 | 9.34 | 2.21 | 8.4 | 2.304 |

| minsplit | 3 | 2.474 | 1.3 | 1.199 | 1.2 | 0.99 |

| minbucket | 1.8 | 1.852 | 5.4 | 1.641 | 5.6 | 1.37 |

| cp | 0.003 | 0.002 | 0.023 | 0.011 | 0.018 | 0.012 |

| minsize | 5.46 | 3.382 | 5.54 | 3.57 | 5.44 | 3.144 |

| mtry | 3.2 | 0.7 | 2.3 | 0.839 | 2.64 | 1.208 |

| n.trees | 93 | 58.038 | 50 | 0 | 54 | 28.284 |

| interaction.depth | 1.96 | 0.88 | 1.08 | 0.34 | 1.08 | 0.396 |

| shrinkage | 0.1 | 0 | 0.1 | 0 | 0.1 | 0 |

| n.minobsinnode | 10 | 0 | 10 | 0 | 10 | 0 |

| nrounds | 70 | 47.38 | 50 | 0 | 51 | 7.071 |

| max_depth | 1.2 | 0.495 | 1.36 | 1.064 | 1.12 | 0.521 |

| eta | 0.3 | 0 | 0.306 | 0.024 | 0.304 | 0.02 |

| gamma | 0 | 0 | 0 | 0 | 0 | 0 |

| colsample_bytree | 0.712 | 0.1 | 0.684 | 0.1 | 0.664 | 0.094 |

| min_child_weight | 1 | 0 | 1 | 0 | 1 | 0 |

| subsample | 0.743 | 0.178 | 0.828 | 0.187 | 0.833 | 0.197 |

| degree | 1.32 | 0.653 | 1.76 | 0.87 | 1.42 | 0.758 |

| scale | 2.312 | 3.661 | 0.141 | 0.292 | 0.412 | 1.977 |

| C | 1.66 | 1.358 | 1.535 | 1.445 | 0.48 | 0.756 |

| sigma | 0.537 | 0.42 | 0.525 | 0.515 | 0.497 | 0.503 |

| C | 5.08 | 7.359 | 0.35 | 0.196 | 0.46 | 1.1 |

| layer1 | 4.96 | 1.355 | 5.04 | 1.525 | 3.64 | 1.549 |

| layer2 | 5.12 | 1.223 | 4.64 | 1.306 | 3.64 | 1.601 |

| layer3 | 4.92 | 1.523 | 5.04 | 1.355 | 3.72 | 1.715 |

| minsplit | 2.8 | 2.424 | 2.8 | 2.424 | 2.8 | 2.424 |

| minbucket | 2.4 | 2.268 | 2.4 | 2.268 | 2.4 | 2.268 |

| cp | 0.006 | 0.006 | 0.006 | 0.006 | 0.006 | 0.006 |

| layer1 | 4 | 1.512 | 4 | 1.512 | 4 | 1.512 |

| layer2 | 4.04 | 1.484 | 4.04 | 1.484 | 4.04 | 1.484 |

| layer3 | 3.92 | 1.712 | 3.92 | 1.712 | 3.92 | 1.712 |
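To help read such entries, the sketch below shows how a mean and a standard deviation of this kind can arise from the values selected over repeated tuning runs. It is purely illustrative: the number of repetitions (50) and the list of selected values are assumptions, not data from the study. They are chosen so that the result matches the `nrounds` entry for Y3 in the table above (mean 51, SD 7.071), with candidate values taken from the `nrounds` grid (50, 100, ...).

```python
import statistics

# Hypothetical outcome of 50 tuning repetitions (assumed count):
# nrounds = 50 is selected 49 times and nrounds = 100 once.
selected_nrounds = [50] * 49 + [100]

mean_value = statistics.mean(selected_nrounds)
# statistics.stdev is the sample standard deviation (divisor n - 1).
sd_value = statistics.stdev(selected_nrounds)

print(mean_value, round(sd_value, 3))
```

Under these assumptions, an SD of 0 means that the same value was selected in every repetition, and a small non-zero SD next to a mean close to a grid value indicates that another value was selected only occasionally.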

| Hyperparameters | Average Y1 | SD Y1 | Average Y2 | SD Y2 | Average Y3 | SD Y3 |

|---|---|---|---|---|---|---|

| k | 3.08 | 1.047 | 8.28 | 2.241 | 8.52 | 2.297 |

| minsplit | 1.9 | 1.94 | 1.1 | 0.707 | 1.3 | 1.199 |

| minbucket | 1.3 | 1.199 | 5.7 | 1.199 | 5.7 | 1.199 |

| cp | 0.006 | 0.006 | 0.013 | 0.011 | 0.013 | 0.013 |

| minsize | 5.6 | 3.169 | 5.64 | 3.269 | 5.04 | 3.597 |

| mtry | 3.78 | 1.016 | 2.22 | 0.737 | 2.34 | 0.917 |

| n.trees | 113 | 62.93 | 57 | 26.745 | 51 | 7.071 |

| interaction.depth | 1.58 | 0.499 | 1.28 | 0.454 | 1.26 | 0.443 |

| shrinkage | 0.1 | 0 | 0.1 | 0 | 0.1 | 0 |

| n.minobsinnode | 10 | 0 | 10 | 0 | 10 | 0 |

| nrounds | 106 | 73.29 | 68 | 51.27 | 51 | 7.071 |

| max_depth | 2.06 | 1.449 | 1.86 | 1.414 | 1.74 | 1.44 |

| eta | 0.31 | 0.03 | 0.318 | 0.039 | 0.322 | 0.042 |

| gamma | 0 | 0 | 0 | 0 | 0 | 0 |

| colsample_bytree | 0.704 | 0.101 | 0.656 | 0.091 | 0.684 | 0.1 |

| min_child_weight | 1 | 0 | 1 | 0 | 1 | 0 |

| subsample | 0.69 | 0.189 | 0.745 | 0.197 | 0.735 | 0.198 |

| degree | 1.4 | 0.639 | 1.56 | 0.787 | 1.66 | 0.895 |

| scale | 1.824 | 3.359 | 0.139 | 0.293 | 0.252 | 1.421 |

| C | 1.555 | 1.226 | 1.135 | 1.292 | 0.995 | 1.243 |

| sigma | 0.397 | 0.27 | 0.518 | 0.635 | 0.494 | 0.339 |

| C | 6.16 | 6.973 | 0.68 | 2.24 | 0.345 | 0.214 |

| layer1 | 4.96 | 1.228 | 4.64 | 1.588 | 3.68 | 1.684 |

| layer2 | 5.52 | 0.953 | 4.96 | 1.228 | 4.24 | 1.791 |

| layer3 | 5.32 | 1.186 | 4.04 | 1.784 | 4.12 | 1.637 |

| minsplit | 2.7 | 2.393 | 2.7 | 2.393 | 2.7 | 2.393 |

| minbucket | 1.5 | 1.515 | 1.5 | 1.515 | 1.5 | 1.515 |

| cp | 0.009 | 0.008 | 0.009 | 0.008 | 0.009 | 0.008 |

| layer1 | 4.12 | 1.637 | 4.12 | 1.637 | 4.12 | 1.637 |

| layer2 | 3.88 | 1.686 | 3.88 | 1.686 | 3.88 | 1.686 |

| layer3 | 4.28 | 1.512 | 4.28 | 1.512 | 4.28 | 1.512 |

| Hyperparameters | Average Y1 | SD Y1 | Average Y2 | SD Y2 | Average Y3 | SD Y3 |

|---|---|---|---|---|---|---|

| k | 2.88 | 0.849 | 8.3 | 2.485 | 8.54 | 2.786 |

| minsplit | 1.8 | 1.852 | 1.7 | 1.753 | 1.7 | 1.753 |

| minbucket | 1 | 0 | 4.7 | 2.215 | 5.2 | 1.852 |

| cp | 0.005 | 0.005 | 0.012 | 0.012 | 0.006 | 0.009 |

| minsize | 5.58 | 3.308 | 5.24 | 3.384 | 4.48 | 3.209 |

| mtry | 4.08 | 1.007 | 2.62 | 1.159 | 2.34 | 0.848 |

| n.trees | 72 | 55.476 | 51 | 7.071 | 59 | 40.013 |

| interaction.depth | 1 | 0 | 1 | 0 | 1 | 0 |

| shrinkage | 0.1 | 0 | 0.1 | 0 | 0.1 | 0 |

| n.minobsinnode | 10 | 0 | 10 | 0 | 10 | 0 |

| nrounds | 110 | 74.231 | 62 | 38.545 | 59 | 34.538 |

| max_depth | 2.72 | 1.356 | 2.26 | 1.601 | 1.68 | 1.332 |

| eta | 0.324 | 0.043 | 0.32 | 0.04 | 0.32 | 0.04 |

| gamma | 0 | 0 | 0 | 0 | 0 | 0 |

| colsample_bytree | 0.716 | 0.1 | 0.656 | 0.091 | 0.68 | 0.099 |

| min_child_weight | 1 | 0 | 1 | 0 | 1 | 0 |

| subsample | 0.67 | 0.169 | 0.75 | 0.192 | 0.725 | 0.192 |

| degree | 1.32 | 0.621 | 1.58 | 0.758 | 1.26 | 0.6 |

| scale | 2.33 | 3.652 | 0.488 | 1.975 | 0.223 | 1.411 |

| C | 1.415 | 1.173 | 1.675 | 1.581 | 0.675 | 0.892 |

| sigma | 0.308 | 0.222 | 0.323 | 0.219 | 0.695 | 1.371 |

| C | 7 | 9.318 | 0.455 | 0.562 | 0.485 | 0.64 |

| layer1 | 4.76 | 1.333 | 4.08 | 1.614 | 3.68 | 1.731 |

| layer2 | 5.36 | 1.174 | 4.44 | 1.68 | 4.04 | 1.641 |

| layer3 | 5.6 | 0.808 | 3.52 | 1.644 | 4.08 | 1.85 |

| minsplit | 2.1 | 2.092 | 2.1 | 2.092 | 2.1 | 2.092 |

| minbucket | 1 | 0 | 1 | 0 | 1 | 0 |

| cp | 0.01 | 0.01 | 0.01 | 0.01 | 0.01 | 0.01 |

| layer1 | 3.92 | 1.563 | 3.92 | 1.563 | 3.92 | 1.563 |

| layer2 | 4.2 | 1.471 | 4.2 | 1.471 | 4.2 | 1.471 |

| layer3 | 3.88 | 1.48 | 3.88 | 1.48 | 3.88 | 1.48 |

| Hyperparameters | Average Y1 | SD Y1 | Average Y2 | SD Y2 | Average Y3 | SD Y3 |

|---|---|---|---|---|---|---|

| k | 2.74 | 0.853 | 7.16 | 2.691 | 7.84 | 2.985 |

| minsplit | 1.2 | 0.99 | 1.4 | 1.37 | 1.7 | 1.753 |

| minbucket | 1.1 | 0.707 | 4.8 | 2.157 | 4.5 | 2.315 |

| cp | 0.008 | 0.009 | 0.005 | 0.007 | 0.005 | 0.008 |

| minsize | 5.72 | 3.084 | 6.32 | 3.365 | 6.48 | 3.346 |

| mtry | 4.52 | 0.863 | 2.66 | 1.099 | 2.5 | 1.055 |

| n.trees | 107 | 90.356 | 81 | 70.631 | 55 | 29.014 |

| interaction.depth | 1 | 0 | 1 | 0 | 1 | 0 |

| shrinkage | 0.1 | 0 | 0.1 | 0 | 0.1 | 0 |

| n.minobsinnode | 10 | 0 | 10 | 0 | 10 | 0 |

| nrounds | 115 | 76.432 | 62 | 42.33 | 68 | 52.255 |

| max_depth | 2.6 | 1.539 | 2.52 | 1.581 | 2.76 | 1.611 |

| eta | 0.314 | 0.035 | 0.332 | 0.047 | 0.336 | 0.048 |

| gamma | 0 | 0 | 0 | 0 | 0 | 0 |

| colsample_bytree | 0.728 | 0.097 | 0.652 | 0.089 | 0.664 | 0.094 |

| min_child_weight | 1 | 0 | 1 | 0 | 1 | 0 |

| subsample | 0.72 | 0.185 | 0.68 | 0.194 | 0.685 | 0.188 |

| degree | 1.16 | 0.468 | 1.7 | 0.814 | 1.32 | 0.683 |

| scale | 2.492 | 3.814 | 0.872 | 2.726 | 0.257 | 1.42 |

| C | 1.715 | 1.489 | 1.175 | 1.311 | 0.74 | 1.087 |

| sigma | 0.262 | 0.184 | 0.346 | 0.23 | 0.489 | 0.55 |

| C | 3.5 | 3.066 | 1.435 | 3.188 | 1.51 | 3.361 |

| layer1 | 4.52 | 1.446 | 3.92 | 1.614 | 4.04 | 1.784 |

| layer2 | 5.32 | 1.186 | 4.72 | 1.604 | 4.52 | 1.607 |

| layer3 | 5.44 | 0.993 | 3.48 | 1.798 | 3.72 | 1.807 |

| minsplit | 2.3 | 2.215 | 2.3 | 2.215 | 2.3 | 2.215 |

| minbucket | 1.2 | 0.99 | 1.2 | 0.99 | 1.2 | 0.99 |

| cp | 0.007 | 0.007 | 0.007 | 0.007 | 0.007 | 0.007 |

| layer1 | 4.04 | 1.641 | 4.04 | 1.641 | 4.04 | 1.641 |

| layer2 | 4.12 | 1.586 | 4.12 | 1.586 | 4.12 | 1.586 |

| layer3 | 4.64 | 1.367 | 4.64 | 1.367 | 4.64 | 1.367 |

| Hyperparameters | Average Y1 | SD Y1 | Average Y2 | SD Y2 | Average Y3 | SD Y3 | Average Y4 | SD Y4 | Average Y5 | SD Y5 |

|---|---|---|---|---|---|---|---|---|---|---|

| k | ||||||||||

| minsplit | ||||||||||

| minbucket | ||||||||||

| cp | ||||||||||

| minsize | ||||||||||

| mtry | ||||||||||

| n.trees | ||||||||||

| interaction.depth | ||||||||||

| shrinkage | ||||||||||

| n.minobsinnode | ||||||||||

| nrounds | ||||||||||

| max_depth | ||||||||||

| eta | ||||||||||

| gamma | ||||||||||

| colsample_bytree | ||||||||||

| min_child_weight | ||||||||||

| subsample | ||||||||||

| degree | ||||||||||

| scale | ||||||||||

| sigma | ||||||||||

| layer1 | ||||||||||

| layer2 | ||||||||||

| layer3 | ||||||||||

| minsplit | ||||||||||

| minbucket | ||||||||||

| cp.1 | ||||||||||

| layer1 | ||||||||||

| layer2 | ||||||||||

| layer3 |

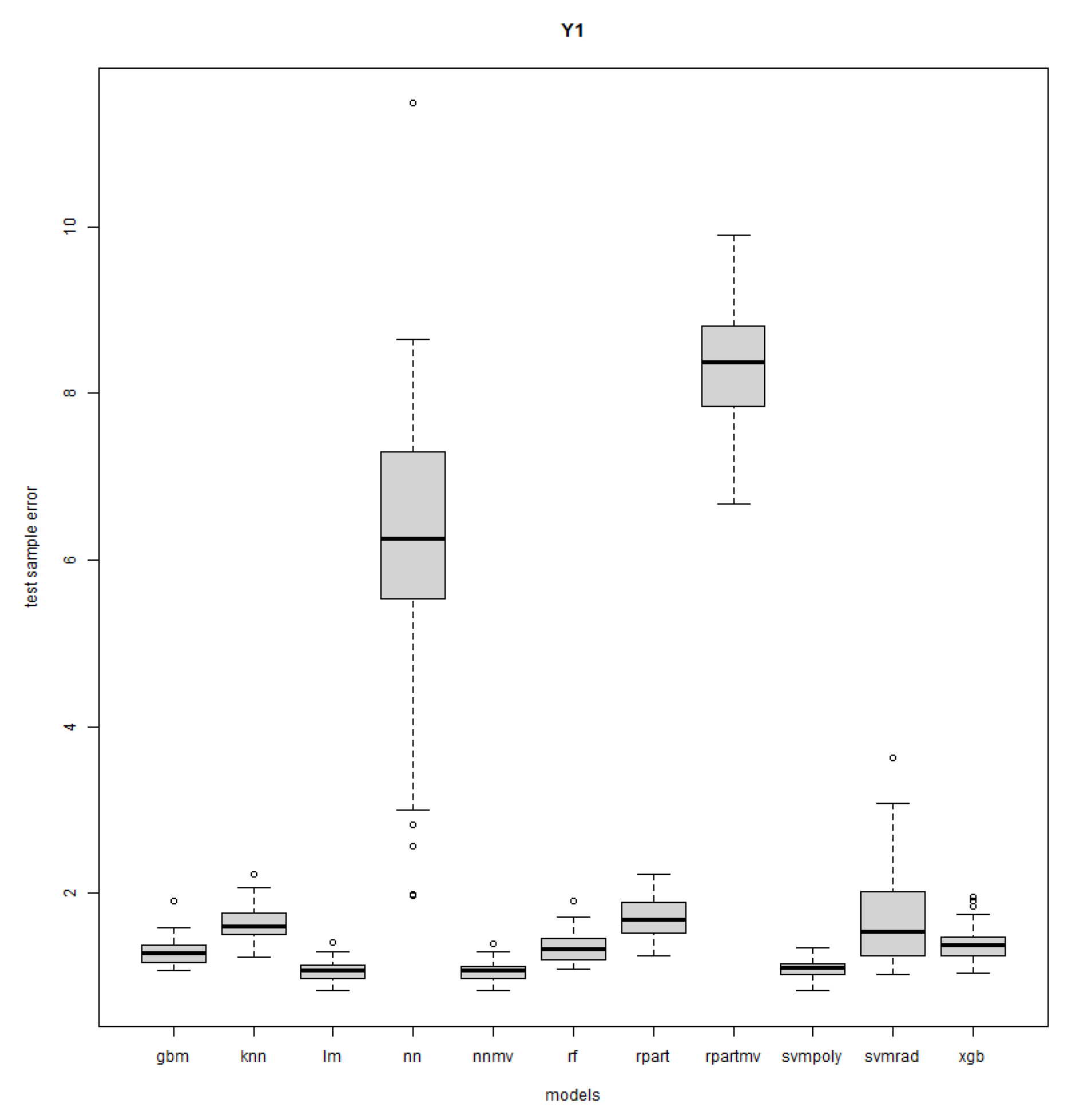

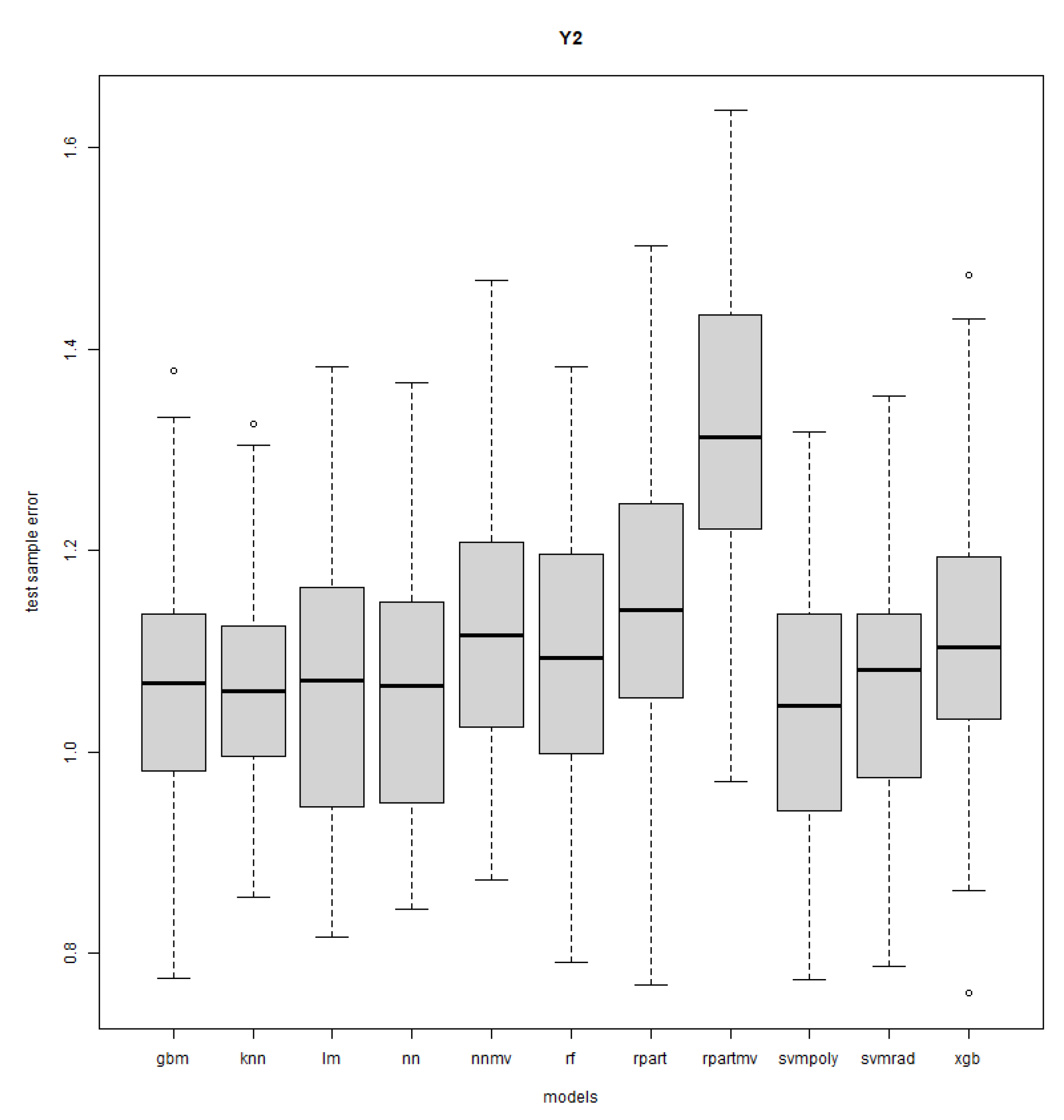

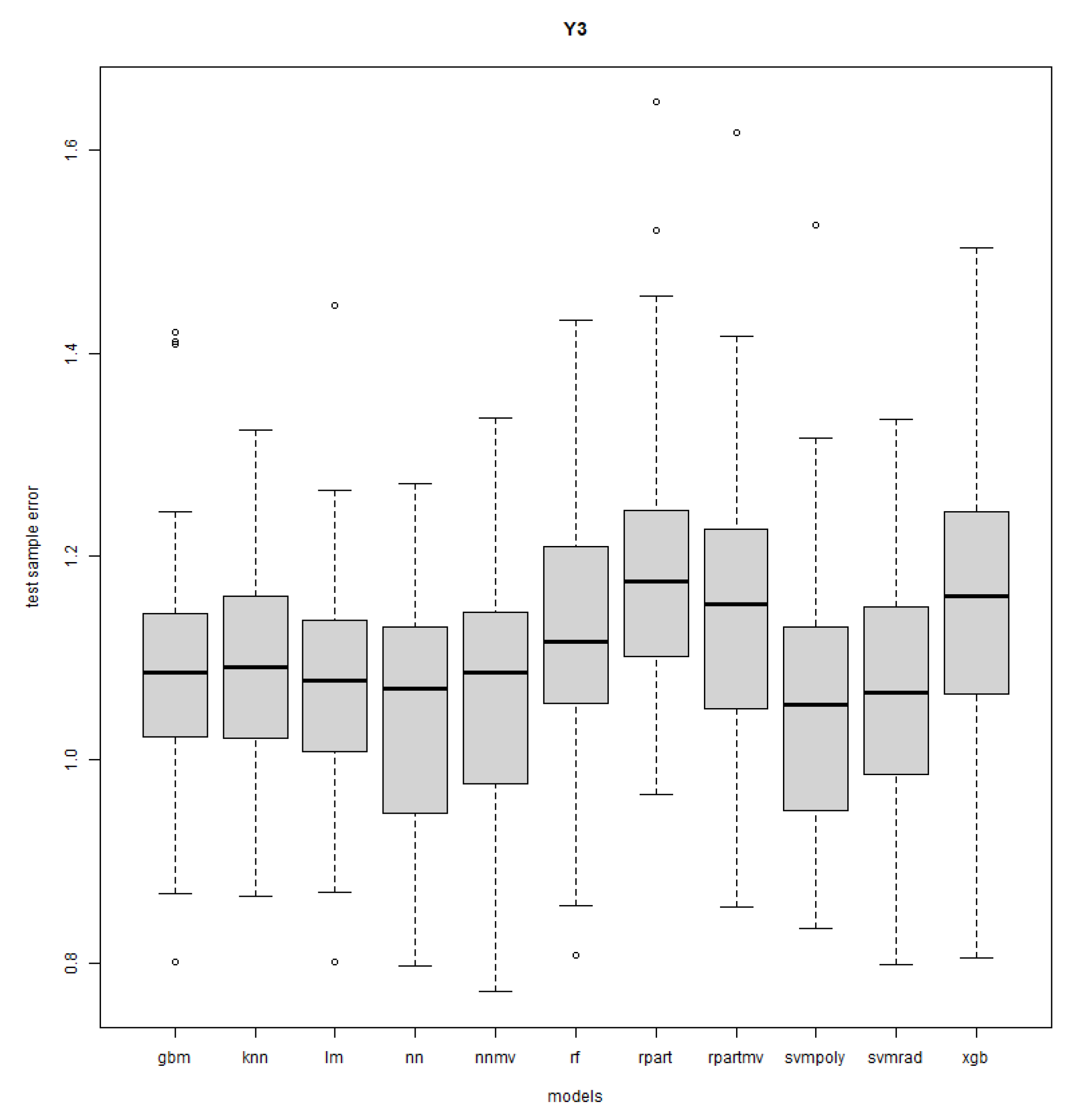

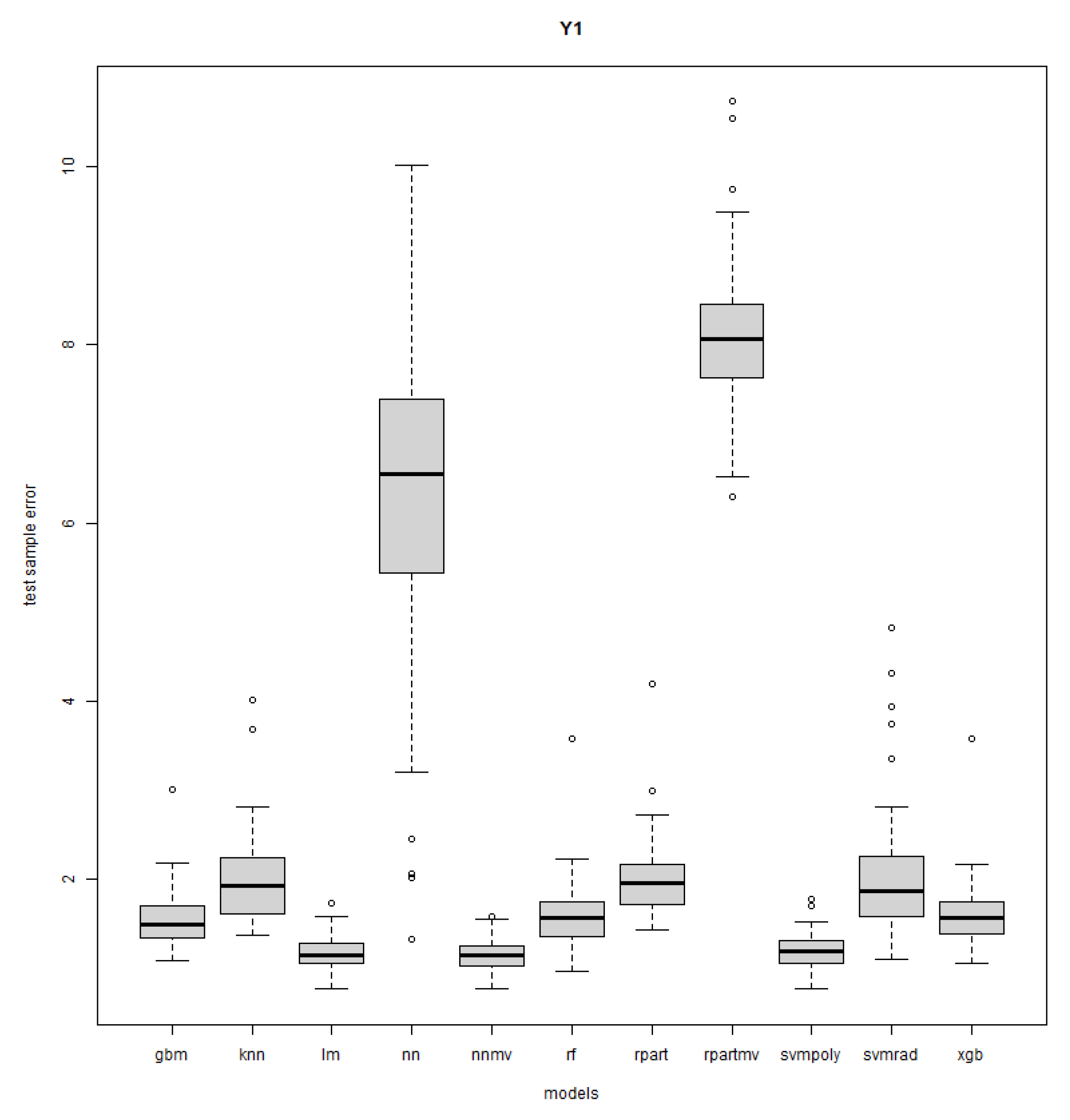

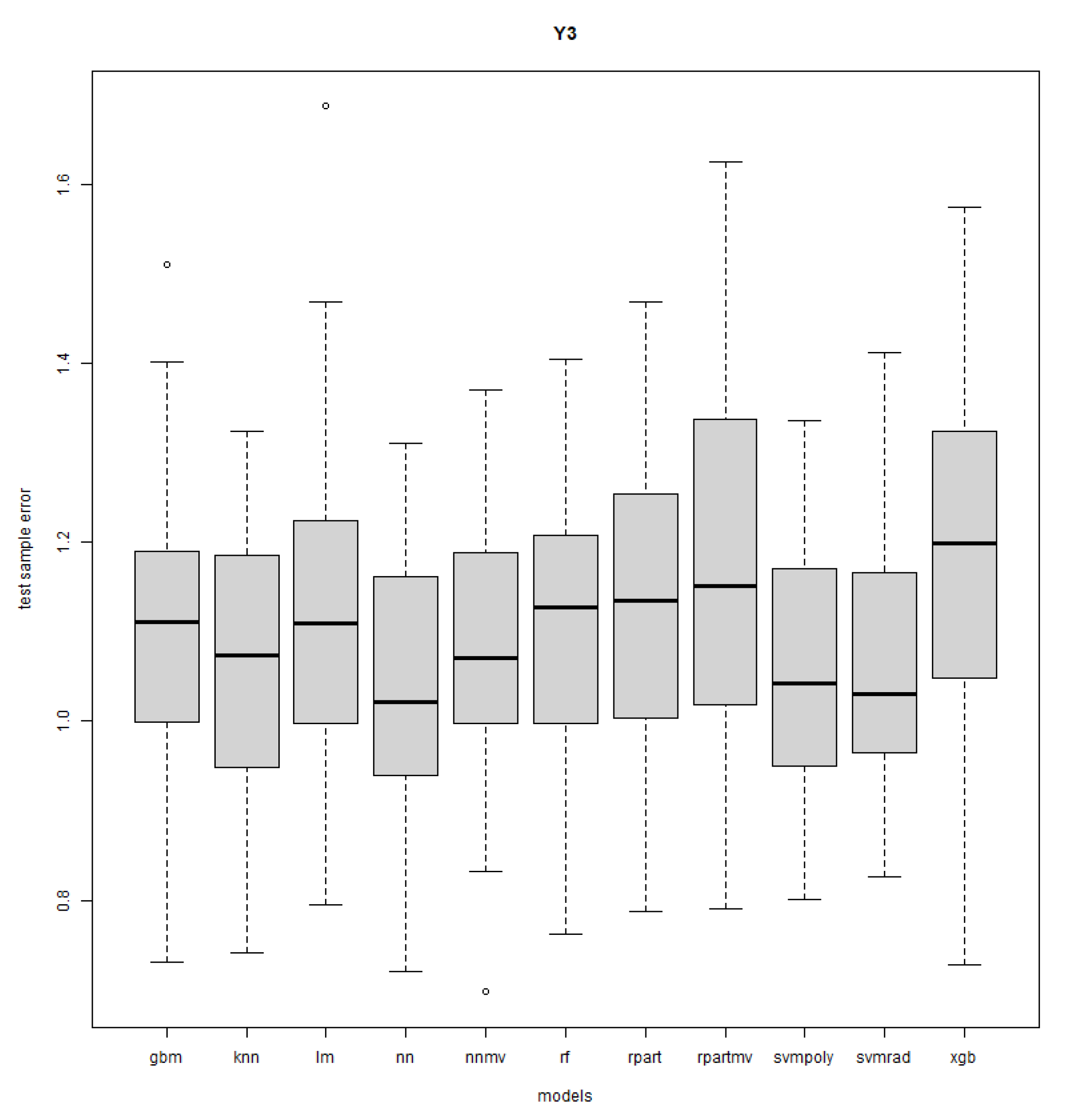

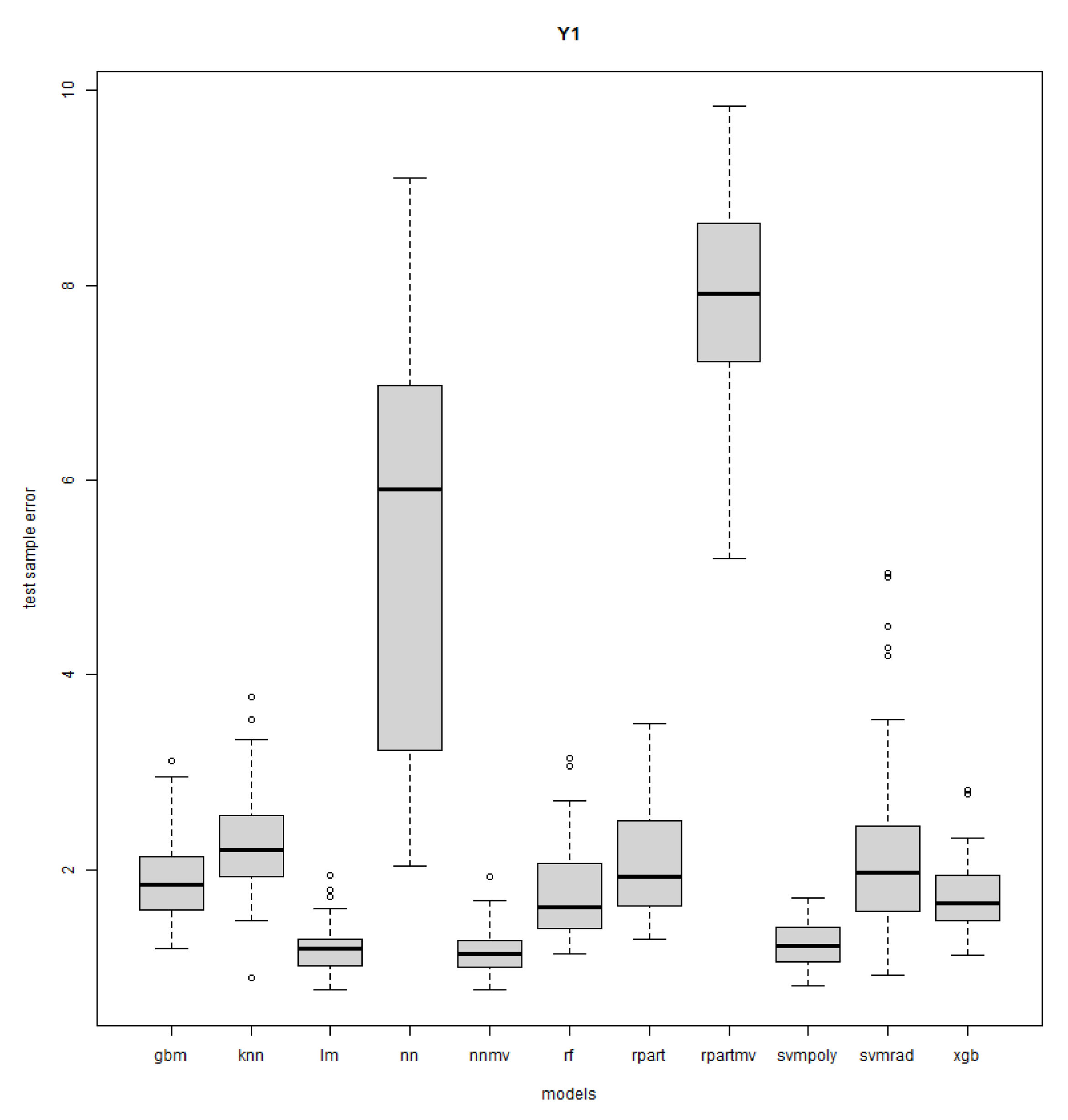

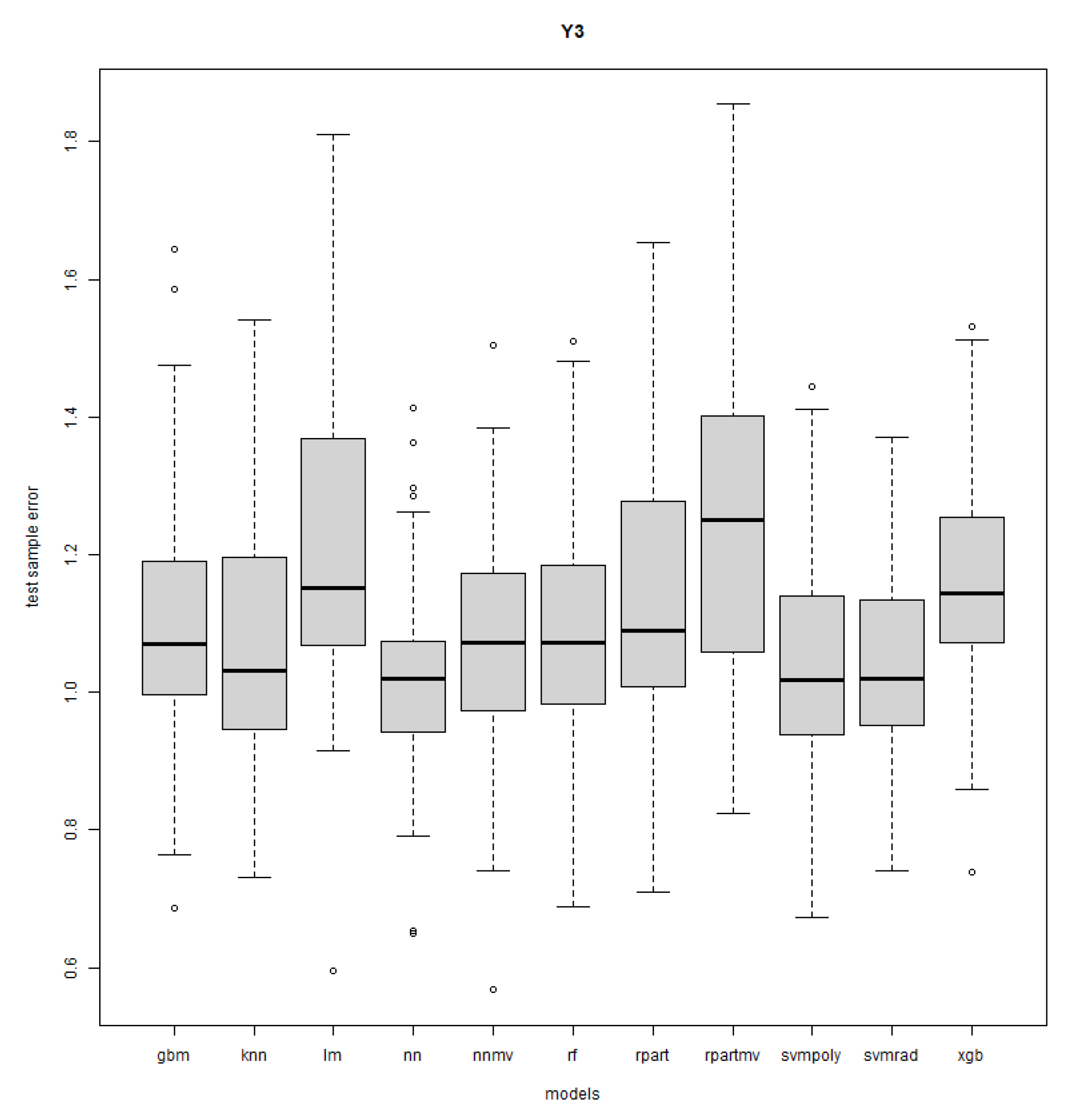

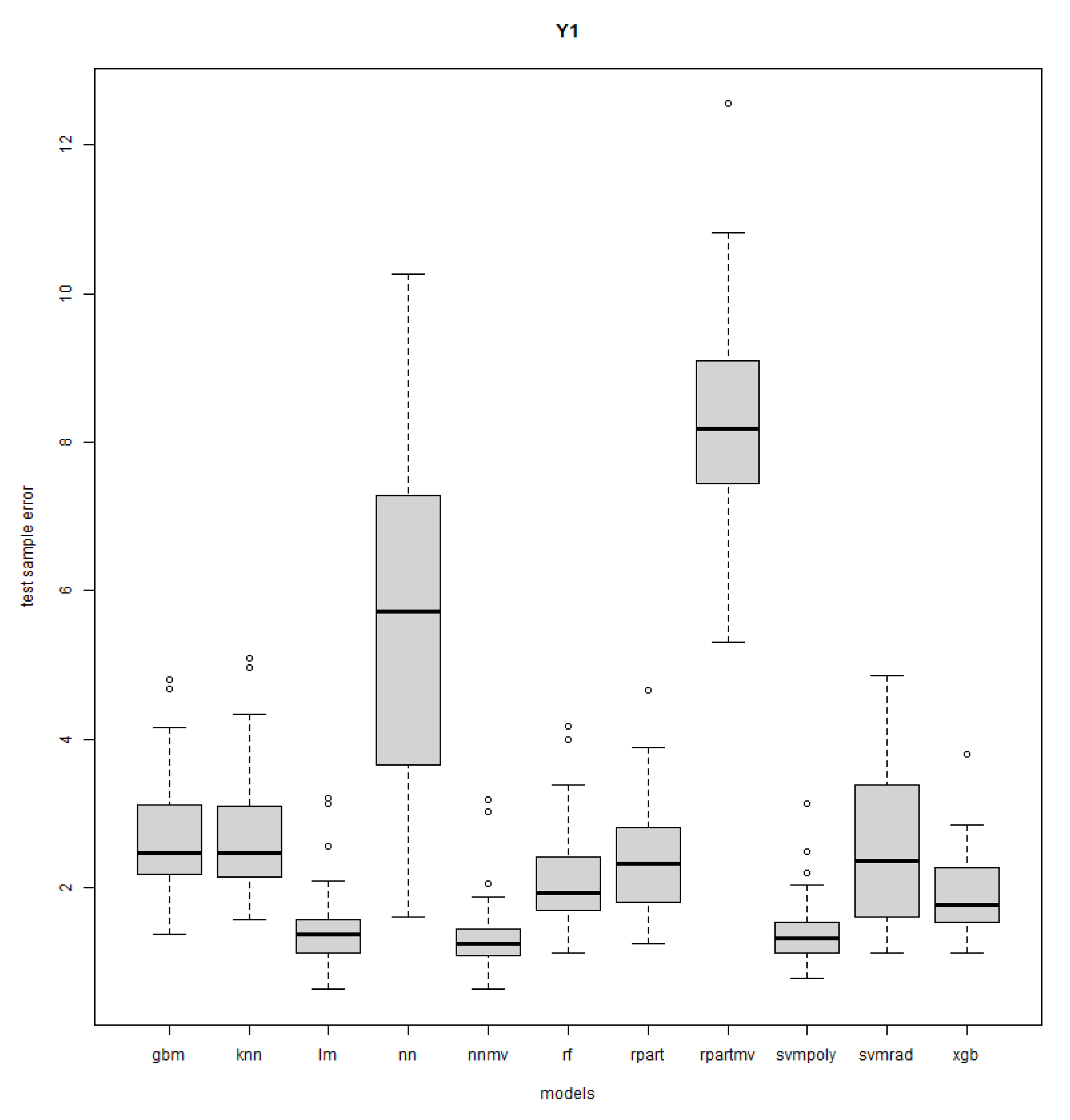

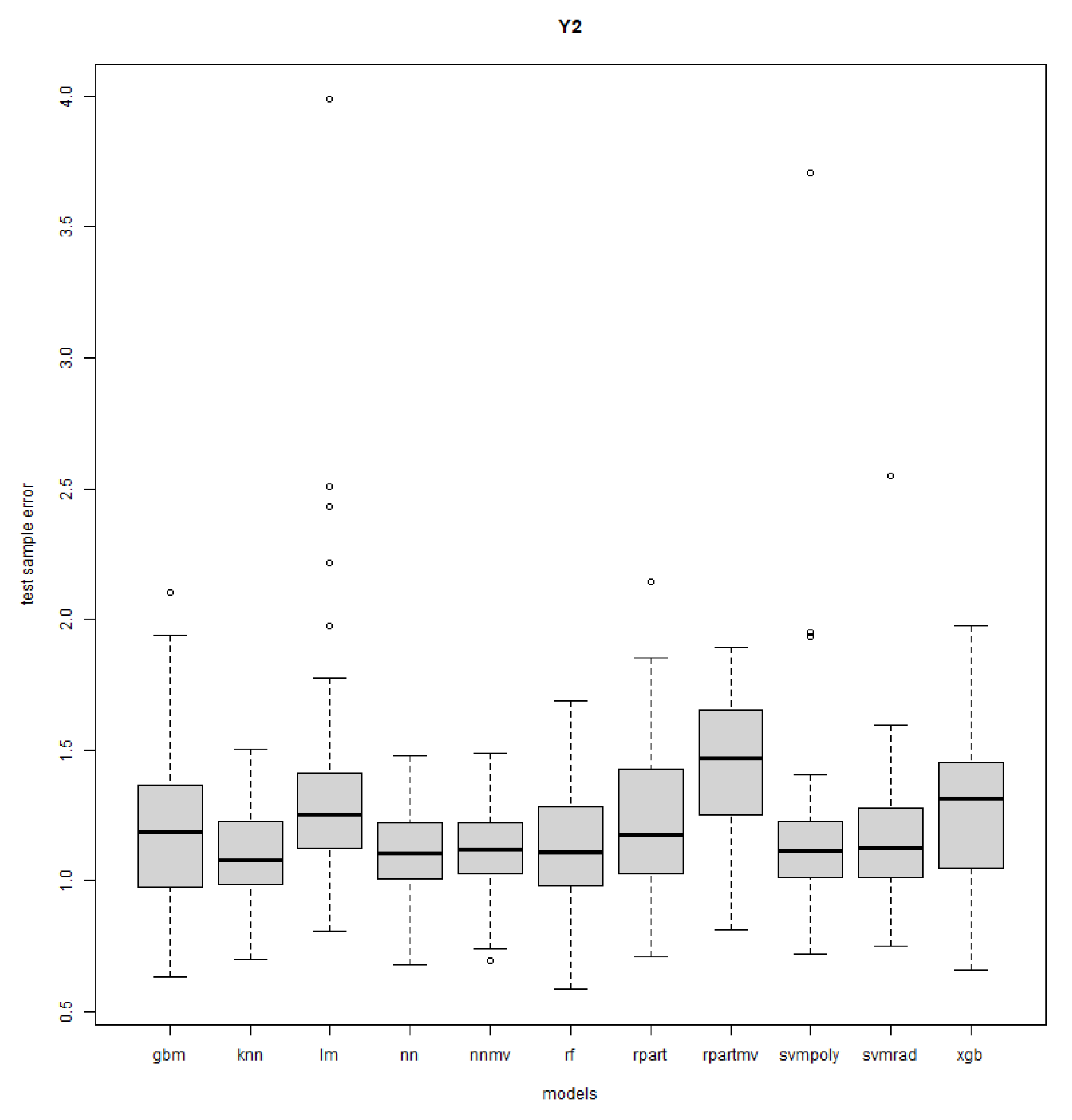

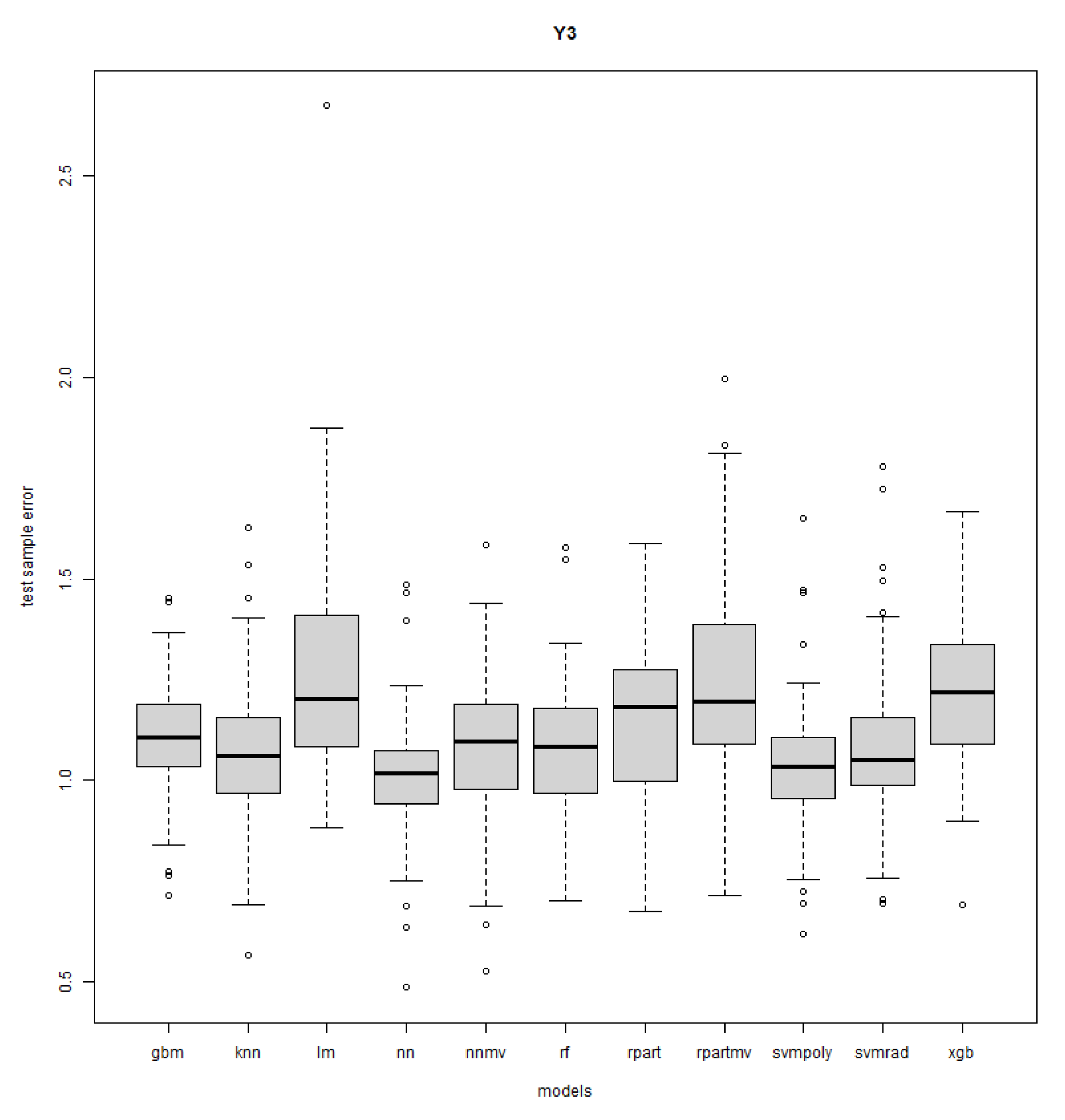

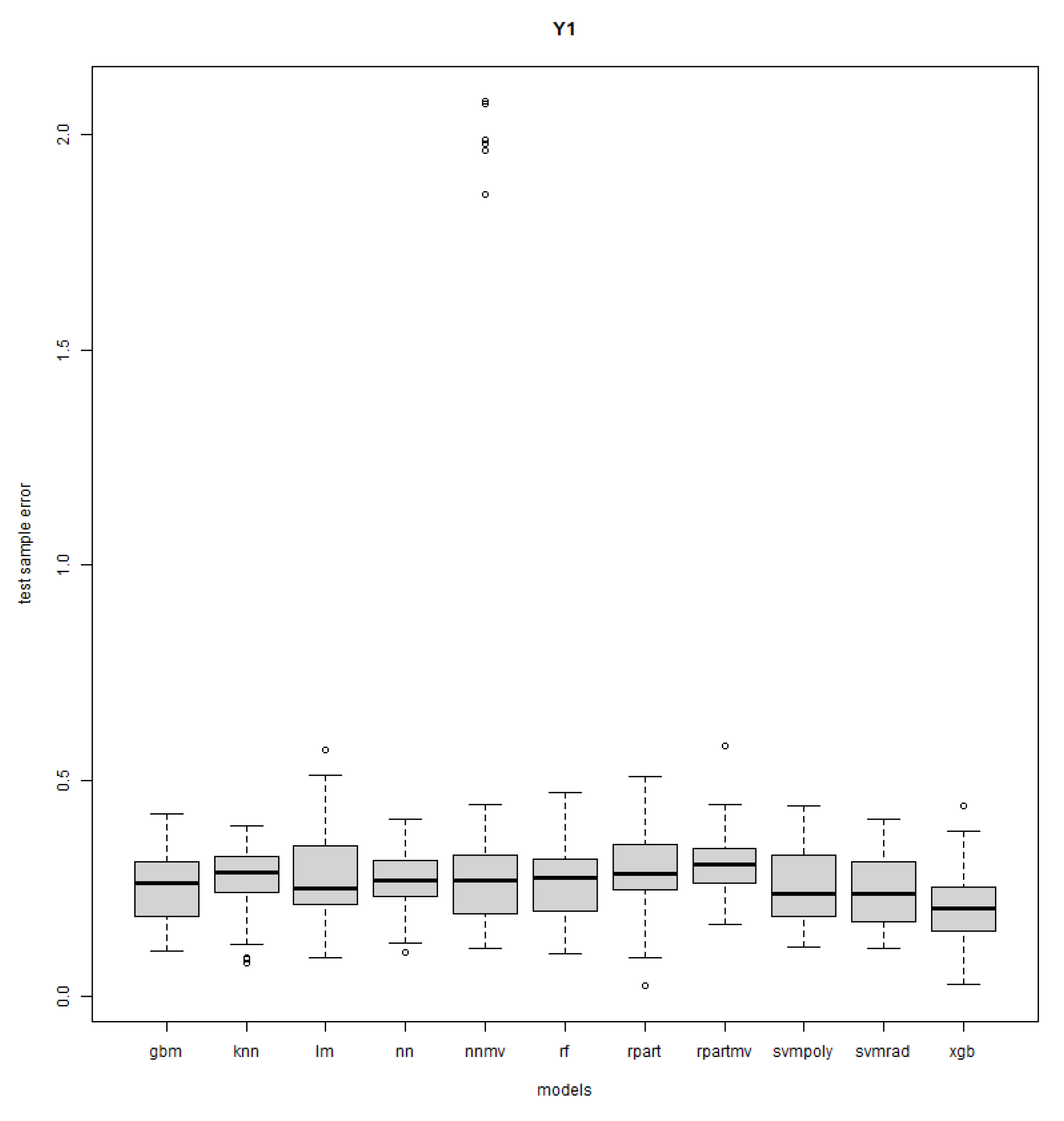

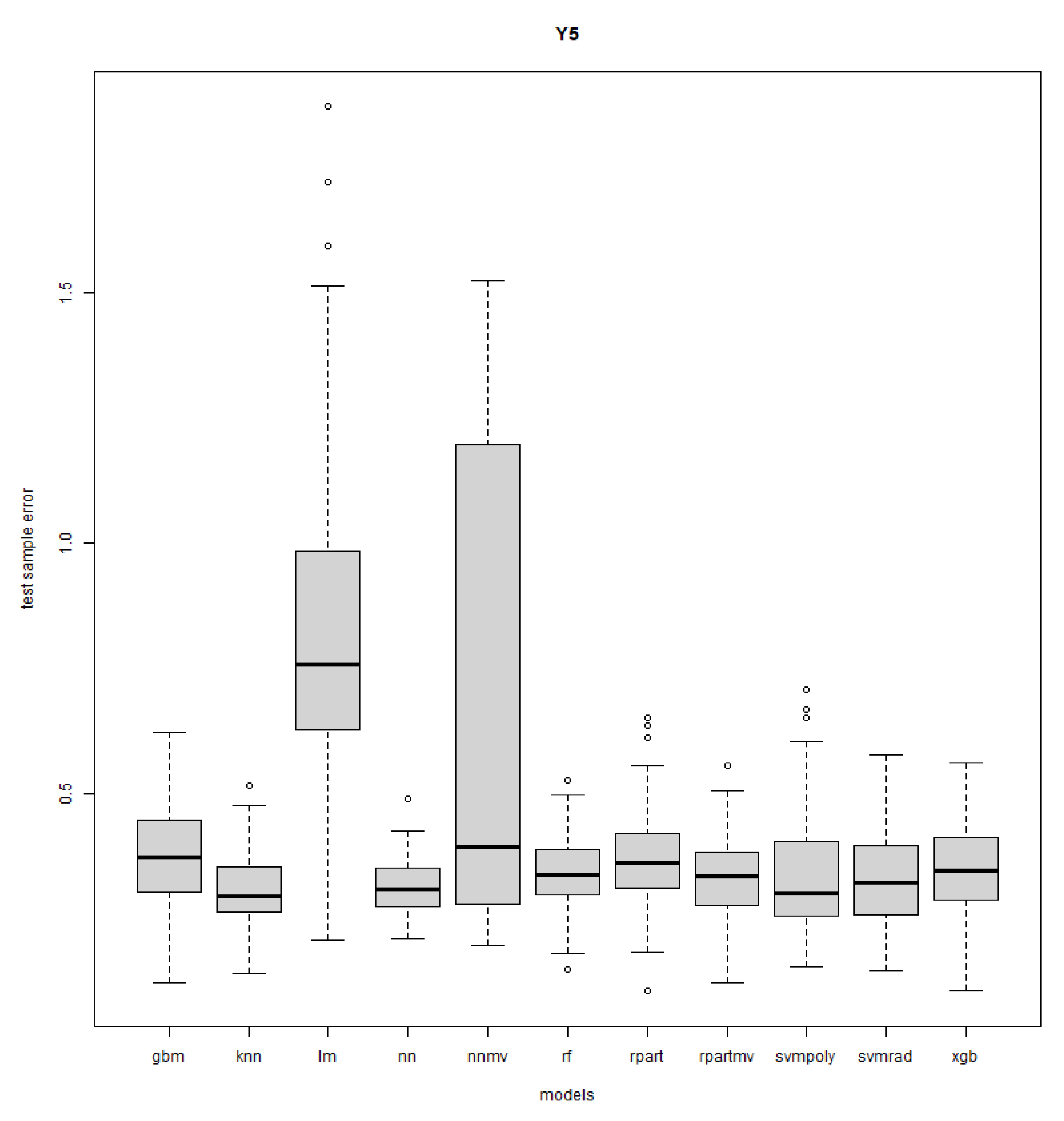

Appendix A.2. Boxplots of Errors for All Approaches and Responses

References

1. Zhang, Y.; Wu, Y. Introducing Machine Learning Models to Response Surface Methodologies. In Response Surface Methodology in Engineering Science; IntechOpen: London, UK, 2021.

2. Paturi, U.M.R.; Reddy, N.S.; Cheruku, S.; Narala, S.K.R.; Cho, K.K.; Reddy, M.M. Estimation of coating thickness in electrostatic spray deposition by machine learning and response surface methodology. Surf. Coat. Technol. 2021, 422, 127559.

3. Lashari, N.; Ganat, T.; Otchere, D.; Kalam, S.; Ali, I. Navigating viscosity of GO-SiO2/HPAM composite using response surface methodology and supervised machine learning models. J. Pet. Sci. Eng. 2021, 205, 108800.

4. Shozib, I.A.; Ahmad, A.; Rahaman, M.A.; Alam, M.; Beheshti, M.; Taufiqurrahman, I. Modelling and optimization of microhardness of electroless Ni-P-TiO2 composite coating based on machine learning approaches and RSM. J. Mater. Res. Technol. 2021, 12, 1010–1025.

5. Keshtegar, B.; Gholampour, A.; Thai, D.K.; Taylan, O.; Trung, N.T. Hybrid regression and machine learning model for predicting ultimate condition of FRP-confined concrete. Compos. Struct. 2021, 262, 113644.

6. Lou, H.; Chung, J.I.; Kiang, Y.H.; Xiao, L.Y.; Hageman, M.J. The application of machine learning algorithms in understanding the effect of core/shell technique on improving powder compactability. Int. J. Pharm. 2019, 555, 368–379.

7. Haque, S.; Khan, S.; Wahid, M.; Dar, S.A.; Soni, N.; Mandal, R.K.; Singh, V.; Tiwari, D.; Lohani, M.; Areeshi, M.Y.; et al. Artificial Intelligence vs. Statistical Modeling and Optimization of Continuous Bead Milling Process for Bacterial Cell Lysis. Front. Microbiol. 2016, 7, 1852.

8. Pilkington, J.L.; Preston, C.; Gomes, R.L. Comparison of response surface methodology (RSM) and artificial neural networks (ANN) towards efficient extraction of artemisinin from Artemisia annua. Ind. Crops Prod. 2014, 58, 15–24.

9. Bourquin, J.; Schmidli, H.; van Hoogevest, P.; Leuenberger, H. Advantages of Artificial Neural Networks (ANNs) as alternative modelling technique for data sets showing non-linear relationships using data from a galenical study on a solid dosage form. Eur. J. Pharm. Sci. 1998, 7, 5–16.

10. Souza Lima, E.; Lima, V.; Almeida, C.; Justi, K. Application of response surface methodology and machine learning combined with data simulation to metal determination of freshwater sediment. Water Air Soil Pollut. 2017, 228, 370.

11. Bi, Q.; Goodman, K.E.; Kaminsky, J.; Lessler, J. What is Machine Learning? A Primer for the Epidemiologist. Am. J. Epidemiol. 2019, 188, 2222–2239.

12. Crisci, C.; Terra, R.; Pacheco, J.; Ghattas, B.; Bidegain, M.; Goyenola, G.; Lagomarsino, J.; Méndez, G.; Mazzeo, N. Multi-model approach to predict phytoplankton biomass and composition dynamics in a eutrophic shallow lake governed by extreme meteorological events. Ecol. Model. 2017, 360, 80–93.

13. Myers, R.H.; Montgomery, D.C.; Anderson-Cook, C.M. Response Surface Methodology: Process and Product in Optimization Using Designed Experiments; John Wiley and Sons: New York, NY, USA, 1995.

14. Sarabia, L.; Ortiz, M. 1.12—Response Surface Methodology. In Comprehensive Chemometrics; Brown, S.D., Tauler, R., Walczak, B., Eds.; Elsevier: Oxford, UK, 2009; pp. 345–390.

15. Manzon, D.; Claeys-Bruno, M.; Declomesnil, S.; Carité, C.; Sergent, M. Quality by Design: Comparison of Design Space construction methods in the case of Design of Experiments. Chemom. Intell. Lab. Syst. 2020, 200, 104002.

16. dos Moreira, C.S.; Lourenço, F.R. Development and optimization of a stability-indicating chromatographic method for verapamil hydrochloride and its impurities in tablets using an analytical quality by design (AQbD) approach. Microchem. J. 2020, 154, 104610.

17. Hastie, T.; Tibshirani, R.; Friedman, J.; Franklin, J. The elements of statistical learning: Data mining, inference, and prediction. Math. Intell. 2004, 27, 83–85.

18. Breiman, L.; Friedman, J.; Stone, C.J.; Olshen, R.A. Classification and Regression Trees; Chapman and Hall/CRC: Boca Raton, FL, USA, 1984.

19. Breiman, L. Random Forests. Mach. Learn. 2001, 45, 5–32.

20. Freund, Y.; Schapire, R.E. A decision-theoretic generalization of on-line learning and an application to boosting. J. Comput. Syst. Sci. 1997, 55, 119–139.

21. Natekin, A.; Knoll, A. Gradient boosting machines, a tutorial. Front. Neurorobot. 2013, 7, 21.

22. Chen, T.; He, T. xgboost: eXtreme Gradient Boosting. 2021. Available online: https://cran.r-project.org/web/packages/xgboost/vignettes/xgboost.pdf (accessed on 27 July 2023).

23. Cristianini, N.; Shawe-Taylor, J. An Introduction to Support Vector Machines and Other Kernel-Based Learning Methods; Cambridge University Press: Cambridge, UK, 2000.

24. Nerini, D.; Durbec, J.; Mante, C.; Garcia, F.; Ghattas, B. Forecasting physicochemical variables by a classification tree method: Application to the Berre Lagoon (South France). Acta Biotheor. 2001, 48, 181–196.

25. Tibshirani, R. Regression shrinkage and selection via the Lasso. J. R. Stat. Soc. Ser. B (Methodol.) 1996, 58, 267–288.

26. Nelder, J.A.; Wedderburn, R.W.M. Generalized linear models. J. R. Stat. Soc. Ser. A (Gen.) 1972, 135, 370.

27. Rumelhart, D.E.; Hinton, G.E.; Williams, R.J. Learning representations by back-propagating errors. Nature 1986, 323, 533–536.

28. Marquardt, D.W.; Snee, R.D. Ridge regression in practice. Am. Stat. 1975, 29, 3–20.

29. Geurts, P.; Ernst, D.; Wehenkel, L. Extremely randomized trees. Mach. Learn. 2006, 63, 3–42.

30. Schaffer, J.; Whitley, D.; Eshelman, L. Combinations of genetic algorithms and neural networks: A survey of the state of the art. In Proceedings of the COGANN-92: International Workshop on Combinations of Genetic Algorithms and Neural Networks, Baltimore, MD, USA, 6 June 1992; pp. 1–37.

31. Jie, J.; Zeng, J.; Han, C. An extended mind evolutionary computation model for optimizations. Appl. Math. Comput. 2007, 185, 1038–1049.

32. Huang, G.B.; Zhu, Q.Y.; Siew, C.K. Extreme learning machine: Theory and applications. Neurocomputing 2006, 70, 489–501.

33. Aljazzar, H.; Leue, S. K*: A heuristic search algorithm for finding the k shortest paths. Artif. Intell. 2011, 175, 2129–2154.

34. RStudio Team. RStudio: Integrated Development Environment for R; RStudio, PBC: Boston, MA, USA, 2020.

35. Kuhn, M. Building predictive models in R using the caret package. J. Stat. Softw. 2008, 28, 1–26.

36. Dai, Y.; Yang, C.; Liu, Y.; Yao, Y. Latent-Enhanced Variational Adversarial Active Learning Assisted Soft Sensor. IEEE Sens. J. 2023, 23, 15762–15772.

37. Zhu, J.; Jia, M.; Zhang, Y.; Deng, H.; Liu, Y. Transductive transfer broad learning for cross-domain information exploration and multigrade soft sensor application. Chemom. Intell. Lab. Syst. 2023, 235, 104778.

38. Jia, M.; Xu, D.; Yang, T.; Liu, Y.; Yao, Y. Graph convolutional network soft sensor for process quality prediction. J. Process Control 2023, 123, 12–25.

39. Liu, K.; Zheng, M.; Liu, Y.; Yang, J.; Yao, Y. Deep Autoencoder Thermography for Defect Detection of Carbon Fiber Composites. IEEE Trans. Ind. Inform. 2023, 19, 6429–6438.

| References | # Factors | # Responses | n | Models | Criteria |
|---|---|---|---|---|---|
| [1] | 63 | 1 | 56,512 | PM (1), PM (2), linear ML models (LASSO, GLM), rf, GBDT, MLP, SVR | EV, MAPE, RMSE |
| [2] | 3 | 1 | 30 | PM (2), BPNN, SVM | MAPE |
| [3] | 5 | 1 | 57 | PM (2), ridge regression, LASSO, SVM, CART, rf, ET, GBR, xgboost | AIC, MAE, RMSE |
| [4] | 5 | 1 | 36 | PM (2), NN, SVM, rf, ET | MSE, MAE |
| [5] | 8 | 2 | 765 | PM (2), SVR, RSM-SVR | RMSE, MAE, NSE, d |
| [6] | 2 (+2) | 2 | 28 | PM (2), BPNN, GA-BPNN, MEA-BPNN, ELM | CV RMSE, RMSE, AIC (for NN) |
| [10] | 3 | 6 | 18 | PM (2), Lazy KStar, rf | RMSE, |
| [8] | 3 | 1 | 20 | PM (2), MLP | AAD |
| [9] | 6 | 2 | 102 | PM (2), MLP | |

| Method | Hyperparameter | Grid | Description |
|---|---|---|---|
| knn | k | {1, …, 10} | number of neighbors |
| CART | minsplit | {1, 5, 10} | minimum number of observations to attempt a split |
| CART | cp | {0.002, 0.005, 0.01, 0.015, 0.02, 0.03} | complexity parameter |
| CART | minbucket | {1, 5, 10} | minimum number of observations in a leaf |
| rf | minsize | {2, 5, 10} | minimum size of a node |
| rf | mtry | {1, …, 5} | number of variables to test for each split |
| gbm | n.trees | {50, 100, 150, 200, 250} | total number of trees to fit |
| gbm | interaction.depth | {1, 2, 3, 4, 5} | maximum depth of trees |
| gbm | shrinkage | 0.1 | learning rate |
| gbm | n.minobsinnode | 10 | minimum number of observations in leaves |
| xgboost | nrounds | {50, 100, 150, 200, 250} | maximum number of boosting iterations |
| xgboost | max_depth | {1, 2, 3, 4, 5} | maximum depth of a tree |
| xgboost | eta | {0.3, 0.4} | controls the learning rate |
| xgboost | gamma | 0 | minimum loss reduction |
| xgboost | colsample_bytree | {0.6, 0.8} | subsample ratio of columns when constructing each tree |
| xgboost | min_child_weight | 1 | minimum sum of instance weight needed in a child |
| xgboost | subsample | {0.5, 0.625, 0.750, 0.875, 1} | subsample ratio of the training instances |
| svmPoly | degree | {0.25, 0.5, 1, 2, 4} | polynomial degree |
| svmPoly | scale | {0.001, 0.01, 0.1, 1, 10} | polynomial scaling factor |
| svmPoly | C | {1, 2, 3} | control parameter |
| svmRadial | sigma | constant value | bandwidth of the kernel function |
| svmRadial | C | {0.25, 0.5, 1, 2, 4, 8, 16, 32} | control parameter |
| NN | layer1, layer2, layer3 | {2, 4, 6, 8, 10} each | number of hidden neurons in each layer |
| CARTmv | minsplit | {1, 5, 10} | minimum number of observations to attempt a split |
| CARTmv | cp | {0.002, 0.005, 0.01, 0.015, 0.02, 0.03} | complexity parameter |
| CARTmv | minbucket | {1, 5, 10} | minimum number of observations in a leaf |
| NNmv | layer1, layer2, layer3 | {2, 4, 6, 8, 10} each | number of hidden neurons in each layer |
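A grid of this kind is searched by evaluating every combination of the listed values. The minimal sketch below enumerates the candidate combinations for the CART grid from the table above, in the spirit of R's `expand.grid`. The helper name `expand_grid` and the dictionary layout are assumptions made for illustration; this is not the code used in the study.

```python
from itertools import product

# CART grid as listed in the table above.
cart_grid = {
    "minsplit": [1, 5, 10],
    "cp": [0.002, 0.005, 0.01, 0.015, 0.02, 0.03],
    "minbucket": [1, 5, 10],
}


def expand_grid(grid):
    """Return one dict per combination of hyperparameter values (Cartesian product)."""
    names = list(grid)
    return [dict(zip(names, values)) for values in product(*grid.values())]


cart_candidates = expand_grid(cart_grid)

# 3 values of minsplit x 6 values of cp x 3 values of minbucket = 54 candidates.
print(len(cart_candidates))
print(cart_candidates[0])
```

The number of candidates grows multiplicatively with each tuned hyperparameter, which is why some entries in the table (for example `shrinkage` and `n.minobsinnode` for gbm) are held at a single value.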

| Method | ||||||||

|---|---|---|---|---|---|---|---|---|

| lm | ||||||||

| knn | ||||||||

| CART | 10 | 11 | ||||||

| rf | ||||||||

| gbm | ||||||||

| xgb | ||||||||

| svmpoly | ||||||||

| svmrad | ||||||||

| NN | 10 | 10 | ||||||

| CARTmv | 11 | 11 | 10 | 11 | ||||

| NNmv |

| Method | ||||||||

|---|---|---|---|---|---|---|---|---|

| lm | ||||||||

| knn | ||||||||

| CART | ||||||||

| rf | ||||||||

| gbm | ||||||||

| xgb | 10 | 11 | ||||||

| svmpoly | ||||||||

| svmrad | ||||||||

| NN | 10 | 10 | ||||||

| CARTmv | 11 | 11 | 10 | 11 | ||||

| NNmv |

| Method | ||||||||

|---|---|---|---|---|---|---|---|---|

| lm | 10 | |||||||

| knn | ||||||||

| CART | ||||||||

| rf | ||||||||

| gbm | ||||||||

| xgb | 10 | |||||||

| svmpoly | ||||||||

| svmrad | ||||||||

| NN | 10 | 10 | ||||||

| CARTmv | 11 | 11 | 11 | 11 | ||||

| NNmv |

| Method | ||||||||

|---|---|---|---|---|---|---|---|---|

| lm | 10 | 11 | ||||||

| knn | ||||||||

| CART | ||||||||

| rf | ||||||||

| gbm | ||||||||

| xgb | ||||||||

| svmpoly | ||||||||

| svmrad | ||||||||

| NN | 10 | 10 | ||||||

| CARTmv | 11 | 11 | 10 | 11 | ||||

| NNmv |

| Method | ||||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| lm | 11 | 11 | 10 | 11 | 11 | |||||||

| knn | ||||||||||||

| CART | 10 | 11 | 10 | |||||||||

| rf | ||||||||||||

| gbm | ||||||||||||

| xgb | ||||||||||||

| svmpoly | 10 | |||||||||||

| svmrad | ||||||||||||

| NN | ||||||||||||

| CARTmv | 10 | |||||||||||

| NNmv | 11 | 10 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Ghattas, B.; Manzon, D. Machine Learning Alternatives to Response Surface Models. Mathematics 2023, 11, 3406. https://doi.org/10.3390/math11153406