1. Introduction

Robotics is increasingly permeating diverse sectors, spanning both civilian and industrial applications, and is becoming integral to everyday life. Service robots are now prevalent in public spaces, interacting with individuals and delivering services. Within robotics, autonomous driving is a particularly dynamic area that continues to attract extensive research attention.

A recurring challenge in real-world autonomy is rule-governed decision making. In many robotic domains, rules range from basic collision avoidance in navigation to complex traffic regulations in driving. While such rules are primarily designed for safety, strict adherence to all rules is not always feasible or even desirable. In autonomous driving, for instance, a safe and efficient maneuver may require temporarily relaxing a rule, such as changing lanes in dense traffic, deciding whether to stop or proceed at a yellow light, or briefly crossing a lane marker to avoid a stalled vehicle. These situations require robots to evaluate trade-offs between partially conflicting objectives and to make nuanced decisions about how much each rule should be respected in the current context. Designing controllers that systematically support such context-dependent flexibility remains a core problem.

Model Predictive Control (MPC) is a powerful paradigm for autonomous control due to its ability to optimize trajectories online under dynamics and constraints [1]. MPC naturally combines an objective function characterizing desired behavior with constraints encoding safety or physical limitations, and has demonstrated strong performance across a wide range of robotic applications, including whole-body control of humanoid robots [2,3,4] and driving-related control tasks. Despite these strengths, designing effective MPC controllers is challenging: expert behavior must be encoded through cost terms, constraint margins, and tuning parameters, and these choices must generalize across diverse scenarios. In driving, for example, expert decisions such as whether to decelerate, follow closely, or change lanes depend on traffic interactions and road context, making manual tuning of MPC parameters highly nontrivial.

Imitation learning (IL) offers an appealing alternative by learning control policies directly from expert demonstrations [5,6]. In autonomous driving, end-to-end IL approaches map observations and high-level commands to control actions and can capture rich nonlinear behaviors [7,8]. However, pure IL does not inherently guarantee compliance with safety-critical rules, and performance can degrade under distribution shift or rare configurations. This motivates hybrid approaches that retain explicit rule representations while leveraging learning for human-like behavior.

In this work, we study control synthesis in the presence of rule specifications, building on our prior MPC–STL framework [9]. We represent rules using Signal Temporal Logic (STL) [10,11], a formal language for specifying temporal properties of real-valued signals. STL has been widely adopted for robotic task specification and control synthesis [12,13,14,15,16]. A key benefit of STL is its robustness degree, which quantifies how strongly a trajectory satisfies a rule, enabling rule margins to be incorporated into optimization-based controllers.

Rather than prescribing rule priorities manually, our earlier work learned robustness slackness from expert demonstrations [9]. Slackness values define rule-wise lower bounds on STL robustness, capturing how strictly experts tend to satisfy each rule in context. In that framework, a Conditional Variational Autoencoder (CVAE) [17] was trained to map contextual features to slackness values, which were then plugged into STL-constrained MPC to enable selective, flexible rule compliance. While effective, the CVAE prior can be limiting when expert behavior is inherently multimodal, as in driving (e.g., both braking and lane changing can be valid responses), and our prior framework did not explicitly represent intermediate sub-goals (e.g., target lanes or waypoints) that organize longer-horizon decisions.

Concurrently, diffusion models have emerged as expressive generative models that can represent complex multimodal distributions. Beyond vision, diffusion-based policies have recently been applied to robot control by modeling distributions over actions or trajectories conditioned on observations [18]. Their ability to capture diverse behaviors makes diffusion a natural candidate for learning human-like decision variables in rule-governed domains.

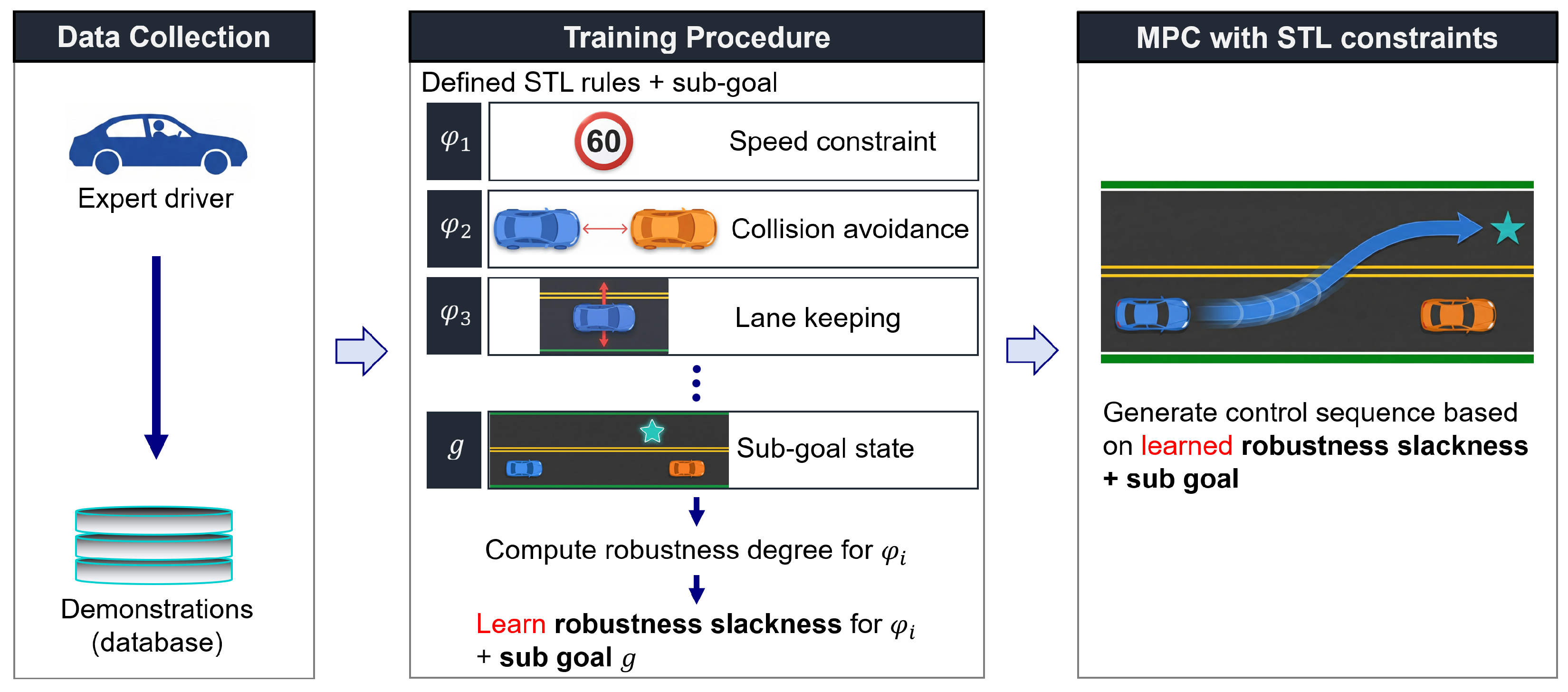

Motivated by these developments, we propose a diffusion-guided MPC–STL framework that extends our previous approach in two key ways. First, we replace the CVAE with a conditional diffusion model to learn a richer distribution over rule-wise robustness slackness conditioned on the current traffic context. Second, we augment the learned outputs with sub-goals that encode intermediate intent in the state/output space (e.g., lane-level targets or waypoints). The diffusion model jointly predicts slackness and sub-goals, and the predicted sub-goals are injected into the MPC objective (terminal and, optionally, stage costs), guiding the optimizer toward human-like intermediate targets while enforcing STL constraints with learned margins.

At run time, given the current feature vector, the conditional diffusion model generates samples of rule-wise slackness and sub-goals. The slackness values define soft margins for STL constraints, and the sub-goals shape the MPC optimization, resulting in a closed-loop controller that combines the expressive, multimodal modeling capacity of diffusion with the interpretability and structure of STL-constrained MPC. More broadly, our framework bridges symbolic task specifications (STL) and continuous control by learning context-dependent “soft” margins and intent signals while preserving constrained receding-horizon optimization. This principle extends beyond driving to robotics domains that must balance formal safety/task constraints with multimodal human preferences.

The main contributions of this paper are as follows:

We formulate an STL-constrained MPC framework in which both rule-wise robustness slackness and intermediate sub-goals are learned from demonstrations as context-dependent decision variables.

We propose a conditional diffusion model that jointly generates robustness slackness and sub-goals, providing improved multimodal coverage compared to VAE-based slackness learning, which yields a more diverse set of feasible MPC–STL plans under the same context.

We demonstrate in highD-based track-driving simulations that diffusion-guided MPC–STL improves task success and induces more realistic lane-changing behavior compared to imitation-learning baselines and MPC–STL variants (CVAE slackness and strict STL enforcement), while remaining computationally tractable for receding-horizon control in our experimental setting.

2. Related Work

Temporal logic specifications in planning and control. A large body of work studies trajectory optimization and MPC under temporal logic, most prominently Linear Temporal Logic (LTL). Early formulations rely on mixed-integer programs to encode finite- or infinite-horizon specifications for continuous systems [19,20,21], and on sampling- or graph/tree-based planners for co-safe LTL variants [22]. Signal Temporal Logic (STL) has subsequently been integrated into MPC to optimize the robustness of satisfaction [23], to address uncertainty via probabilistic predicates [24], and to avoid combinatorial search using successive convexification [25]. Beyond single-agent settings, distributed STL planning has been explored for multi-robot teams, improving scalability while preserving task-level correctness [15]. More recently, learning-augmented formulations that optimize STL robustness in closed loop have shown promise for improving computational efficiency and empirical satisfaction [16].

Learning for MPC and rule flexibility. Coupling learning with MPC has been widely investigated for model identification, residual dynamics, and task-specific performance [26,27,28]. In rule-governed domains (e.g., driving), strict adherence to all rules may be infeasible; related efforts therefore either learn temporal-logic structure from data or plan under partially unsatisfiable specifications. On the learning side, Kong et al. infer reactive parameter STL (rPSTL) formulae directly from labeled trajectories, discovering discriminative temporal-logic properties and exposing causal, spatial, and temporal relations useful for monitoring and design tuning [29]. On the planning side, minimum-violation methods explicitly search for trajectories that relax low-priority requirements when all constraints cannot be met [30]. Our prior work learned robustness slackness (rule-wise lower bounds on STL robustness inferred from demonstrations) and embedded it into MPC–STL via a conditional VAE mapping from context to slackness values [9]. While effective, VAE-based priors can suffer from limited expressiveness in inherently multimodal settings and may underrepresent rare but valid expert behaviors; moreover, that framework did not explicitly capture intermediate sub-goals that structure longer-horizon decisions. In contrast, the present work employs a conditional diffusion model to jointly predict robustness slackness and sub-goals, which are then integrated into the MPC–STL constraints and objective, respectively.

Imitation learning for autonomous driving. Imitation learning (IL) provides an alternative to manual cost design by fitting policies to expert data [6]. In end-to-end driving, Conditional Imitation Learning (CIL) conditions policy outputs on high-level commands, improving intersection handling and intent disambiguation [7]. Although IL methods can capture complex mappings from observations to actions, they provide limited mechanisms for explicit rule enforcement and safety constraint handling under distribution shift, motivating hybrid approaches that retain constraint-based structure.

Diffusion models for control and driving. Diffusion models have recently emerged as expressive generative priors for sequential decision making in robotics. Diffusion Policy learns action distributions conditioned on observations and has demonstrated strong multimodal control from demonstrations [18]. In autonomous driving, diffusion-based planners have been explored for closed-loop planning with explicit guidance mechanisms [31], and diffusion-based frameworks for driving trajectory generation and planning have also been proposed [32]. Recent work also studies more efficient planning-time sampling and optimization with diffusion, e.g., using truncated diffusion within search to improve exploration and performance on closed-loop driving benchmarks [33]. These results suggest that diffusion priors can mitigate the mode-averaging limitations of VAEs and provide richer multimodal representations of expert strategies. In contrast to diffusion planners that directly generate trajectories or actions, our diffusion model serves as a multimodal prior over decision variables (slackness and sub-goals) that parameterize a downstream constrained MPC–STL optimizer.

Recent RL-based decision making and eco-driving. Recent studies have investigated end-to-end reinforcement-learning decision modules for challenging road geometries (e.g., consecutive sharp turns), and multi-objective eco-driving strategies that jointly consider safety and energy objectives for intelligent (hybrid/electric) vehicles [34,35]. These approaches are complementary to our setting: they primarily rely on reward design and policy optimization, whereas our work focuses on rule-governed control with explicit STL robustness constraints embedded in MPC.

Positioning of our contribution. Compared to prior MPC–STL works that either (i) encode logic via MILP or convexified surrogates [23,25], (ii) rely on fixed rule weights or pre-specified priorities [30], or (iii) learn only rule-wise slackness with VAE priors [9], our approach introduces a conditional diffusion model that jointly predicts (a) rule-wise robustness slackness and (b) state-space sub-goals from expert demonstrations and current context. The learned slackness values define soft margins in the STL constraints, and the learned sub-goals are injected into the MPC objective (including the terminal term). This diffusion-guided MPC–STL preserves the constraint-based structure of MPC–STL while capturing multimodal expert preferences and intermediate intent, enabling flexible yet rule-aware trajectories that align more closely with human driving behavior.

4. Problem Formulation

We consider STL-constrained MPC where both the rule-wise margins of satisfaction and the behavioral intent (sub-goal) are learned functions of context. At each time t, given a feature vector extracted from the current state, neighbors, and map cues, we assume access to two learned predictors, which provide (i) a rule-wise robustness slackness vector and (ii) a target sub-goal, respectively. (Their concrete realization is described in Section 5.)

Let the prediction horizon be H, and denote the predicted state and input sequences over the horizon, together with the resulting finite-horizon signal, as in (3).

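STL constraints with learned slackness. For each rule, the learned slackness is a lower bound on the robustness of that rule over the horizon; allowing negative slackness permits bounded robustness violation when strict satisfaction is infeasible. For every rule, we enforce constraint (6), which requires the horizon-wise robustness of the rule to be no smaller than its learned slackness value.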
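To make the role of the learned slackness concrete, the following minimal Python sketch checks the semantics of constraint (6) after the fact, assuming the robustness of every rule at every horizon step is already available as an array. The names `horizon_min_robustness` and `satisfies_slackened_rules` are hypothetical; in the actual MPC–STL these conditions are encoded as solver constraints rather than checked post hoc.

```python
import numpy as np


def horizon_min_robustness(rho: np.ndarray) -> np.ndarray:
    """rho has shape (num_rules, H + 1): robustness of each rule at each horizon step.
    Returns the horizon-wise minimum robustness of each rule."""
    return rho.min(axis=1)


def satisfies_slackened_rules(rho: np.ndarray, slack: np.ndarray) -> np.ndarray:
    """Per-rule check of min_k rho[i, k] >= slack[i].
    slack[i] = 0 recovers strict satisfaction; slack[i] < 0 tolerates a bounded violation."""
    return horizon_min_robustness(rho) >= slack


rho = np.array([[0.8, 0.5, 0.6],    # rule 1: satisfied with margin 0.5
                [0.3, -0.2, 0.1]])  # rule 2: violated by 0.2 at one step
strict = satisfies_slackened_rules(rho, np.array([0.0, 0.0]))    # rule 2 fails
relaxed = satisfies_slackened_rules(rho, np.array([0.0, -0.3]))  # rule 2 now passes
```

The example shows why a slackness of 0 corresponds to strict enforcement, while a learned negative value lets a single rule yield by a bounded amount.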

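Goal-shaped MPC objective. Consider the terminal state reached at the end of the horizon under the candidate control sequence. We define the quadratic objective (7), which combines running costs with a terminal term penalizing the deviation of a linear map of this terminal state from the predicted sub-goal; all weighting matrices are positive (semi)definite. Typical choices for the linear goal map include (i) the identity (full-state goal, with goal dimension equal to the state dimension); (ii) a selection matrix (subset tracking, e.g., position only); and (iii) a user-defined linear map to a task/output space. (If the goal is defined in a nonlinear output space, one may replace the linear map of the state with the nonlinear output function in (7).)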
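The sketch below evaluates one plausible goal-shaped quadratic objective for a given predicted trajectory. The exact form of (7) is not reproduced here, so the cost structure (optional stage tracking of the sub-goal with weight Q, input effort with weight R, terminal goal term with weight P) is an assumption modeled on the description above, and `goal_shaped_cost` is a hypothetical name. It also shows the position-only selection matrix for a state ordered as [x, y, theta, v], which is itself an assumed ordering.

```python
import numpy as np


def goal_shaped_cost(xs, us, g, C, Q, R, P):
    """Assumed objective: sum_k (||C x_k - g||_Q^2 + ||u_k||_R^2) + ||C x_H - g||_P^2.
    xs: (H+1, n) predicted states, us: (H, m_u) inputs,
    g: (m,) sub-goal, C: (m, n) linear goal map."""
    cost = 0.0
    for k in range(len(us)):
        e = C @ xs[k] - g  # stage deviation from the sub-goal (set Q = 0 to drop it)
        cost += e @ Q @ e + us[k] @ R @ us[k]
    e_T = C @ xs[-1] - g   # terminal deviation from the sub-goal
    return float(cost + e_T @ P @ e_T)


# Position-only goal for a state [x, y, theta, v]: C = [I_2  0]
C = np.hstack([np.eye(2), np.zeros((2, 2))])
```

With Q = 0 only the terminal term shapes the goal, matching the "terminal and, optionally, stage costs" option described in the introduction.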

MPC–STL. At each time t, the optimization in (8) searches for a control sequence that (a) steers the terminal state toward the context-dependent sub-goal via the quadratic objective in (7), while (b) satisfying all STL rules with the learned per-rule margins over the prediction horizon, as enforced by (6). In words, the planner balances “go to the intended sub-goal” against “respect each rule with the required slackness,” where both the intent and the required margins are learned functions of the current context (see Section 5).

After solving (8), we apply only the first input of the optimized sequence, observe the resulting state, and repeat the optimization in a receding-horizon manner.
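The receding-horizon loop can be sketched generically as follows. Here `plan` is a hypothetical stand-in for solving (8) (it returns a full input sequence) and `step` is a hypothetical stand-in for the vehicle dynamics; the toy usage drives a 1-D position toward 10 with saturated inputs purely for illustration.

```python
from typing import Callable

import numpy as np


def receding_horizon(x0, steps: int,
                     plan: Callable[[np.ndarray], np.ndarray],
                     step: Callable[[np.ndarray, np.ndarray], np.ndarray]) -> np.ndarray:
    """Re-plan at every step, apply only the first input, and record the visited states."""
    x = np.asarray(x0, dtype=float)
    traj = [x]
    for _ in range(steps):
        u_seq = plan(x)         # stand-in for solving (8): an (H, m_u) input sequence
        x = step(x, u_seq[0])   # apply only the first input and observe the next state
        traj.append(x)
    return np.array(traj)


def toy_plan(x: np.ndarray) -> np.ndarray:
    # Horizon of 5 identical inputs, saturated at +/-1, pointing toward position 10.
    return np.full((5, 1), np.clip(10.0 - x[0], -1.0, 1.0))


def toy_step(x: np.ndarray, u: np.ndarray) -> np.ndarray:
    return x + u  # unit time step, single-integrator toy dynamics


traj = receding_horizon([0.0], 12, toy_plan, toy_step)
```

Discarding all but the first input is what lets the controller react to new observations (e.g., updated neighbor positions) at every step.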

6. Experimental Results

6.1. Implementation Details and Dataset

All methods were implemented in Python (v3.10) with PyTorch (v2.7.1) for learning. The MPC problems were solved using Gurobi [37]. Experiments were conducted on a workstation equipped with an AMD R7-7700 CPU and an RTX 4080 Super GPU. Unless stated otherwise, we use a fixed MPC prediction horizon H and a single diffusion sample (S = 1) per control step.

We use the highD dataset [38] as expert demonstrations. The highD recordings used in this work consist of 60 tracks, which we group into three subsets: highD dataset1 (tracks 1–20), highD dataset2 (tracks 21–40), and highD dataset3 (tracks 41–60). To avoid train/test leakage, we construct the training set by extracting samples only from even-numbered tracks within each subset, and we perform all closed-loop evaluations on the remaining odd-numbered tracks, which were never used to construct training samples. From each subset, we extract 5000 training samples from even-numbered tracks, resulting in 3 × 5000 = 15,000 training pairs in total. Each pair consists of the context feature at time step n and a target vector that contains (i) rule-wise robustness slackness targets computed from the horizon-wise minimum robustness in (9), and (ii) goal-point targets extracted from demonstrations using (10). We uniformly sample training pairs across the even-numbered tracks within each subset to avoid over-representing a small number of long trajectories. We do not use a separate validation split; all hyperparameters are fixed across experiments, and all reported closed-loop metrics are computed only on the held-out odd-numbered test tracks.
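The even/odd split and per-subset sampling can be sketched as below. The per-track frame counts are made up, and reading "uniformly across tracks" as "pick a track uniformly, then a frame uniformly within it" is an assumption; `split_tracks` and `sample_pairs` are hypothetical helpers that only produce (track, frame) indices, not the features or targets themselves.

```python
import random

SUBSETS = {"highD dataset1": (1, 20), "highD dataset2": (21, 40), "highD dataset3": (41, 60)}
SAMPLES_PER_SUBSET = 5000


def split_tracks(first: int, last: int):
    """Even-numbered tracks feed training-sample extraction; odd-numbered tracks are held out."""
    tracks = range(first, last + 1)
    return [t for t in tracks if t % 2 == 0], [t for t in tracks if t % 2 == 1]


def sample_pairs(train_tracks, track_lengths, n, rng):
    """Pick a training track uniformly, then a frame uniformly within that track, so that
    long recordings are not over-represented (an assumed reading of the protocol)."""
    out = []
    for _ in range(n):
        t = rng.choice(train_tracks)
        out.append((t, rng.randrange(track_lengths[t])))
    return out


rng = random.Random(0)
track_lengths = {t: 1000 + 37 * t for t in range(1, 61)}  # hypothetical frame counts
dataset = []
for first, last in SUBSETS.values():
    train, test = split_tracks(first, last)
    dataset += sample_pairs(train, track_lengths, SAMPLES_PER_SUBSET, rng)
print(len(dataset))  # 15000
```

Splitting by track rather than by frame keeps temporally correlated frames of the same recording from appearing on both sides of the split.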

For the diffusion model, we normalize each component of the target vector to a fixed range using min–max statistics computed from the training set, and train the model in the normalized space. At inference time, we denormalize the generated outputs back to the original units before passing the slackness and sub-goal to the MPC–STL solver. We train the diffusion model with a standard number of forward diffusion steps, which yields stable training. Unless stated otherwise, we use a DDIM sampler [36] for diffusion inference with 20 denoising steps, which provides a practical quality–runtime trade-off. For a fair comparison, all imitation-learning baselines are trained on the same dataset with the same split and sample size.
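For readers less familiar with DDIM, the sketch below shows deterministic DDIM sampling (eta = 0) over an evenly strided subset of 20 timesteps, followed by min–max denormalization. Several details are assumptions not stated in the text: T = 1000 training steps, a linear beta schedule, a normalized range of [-1, 1], and the interface `eps_model(z, t, cond)` for the conditional noise predictor (in practice a trained network conditioned on the context feature). All function names are hypothetical.

```python
import numpy as np


def make_alpha_bar(T=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative products of (1 - beta_t) for a linear beta schedule (assumed)."""
    betas = np.linspace(beta_start, beta_end, T)
    return np.cumprod(1.0 - betas)


def ddim_sample(eps_model, cond, dim, alpha_bar, num_steps=20, rng=None):
    """Deterministic DDIM (eta = 0) on an evenly strided subset of training timesteps."""
    rng = np.random.default_rng() if rng is None else rng
    T = len(alpha_bar)
    ts = np.arange(0, T, T // num_steps)[::-1]  # 950, 900, ..., 0 when T = 1000
    z = rng.standard_normal(dim)                # start from pure Gaussian noise
    for i, t in enumerate(ts):
        a_t = alpha_bar[t]
        a_prev = alpha_bar[ts[i + 1]] if i + 1 < len(ts) else 1.0
        eps = eps_model(z, t, cond)                             # predicted noise
        z0 = (z - np.sqrt(1.0 - a_t) * eps) / np.sqrt(a_t)      # predicted clean sample
        z = np.sqrt(a_prev) * z0 + np.sqrt(1.0 - a_prev) * eps  # DDIM update to t_prev
    return z


def denormalize(z, y_min, y_max, lo=-1.0, hi=1.0):
    """Map a sample from the normalized range [lo, hi] back to original units."""
    return y_min + (z - lo) * (y_max - y_min) / (hi - lo)
```

A quick sanity check of the update rule: if the noise predictor is an "oracle" that returns exactly the noise separating the current iterate from a fixed target, every step predicts that target, and the sampler returns it after the final step. The generated vector would then be split into its slackness and sub-goal components and denormalized with the training-set minima and maxima.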

On our workstation (AMD R7-7700 CPU, RTX 4080 Super GPU), we measured the average Gurobi-based MPC solve time per control step and the DDIM sampling time (20 denoising steps) per sample, both averaged over the evaluated scenarios. With the default setting S = 1, the end-to-end wall-clock time per control step is approximately the sum of these two terms (excluding minor overhead).

The proposed pipeline is computationally tractable for receding-horizon evaluation in our experimental setting; however, the current implementation (diffusion sampling + mixed-integer MPC–STL) may not meet strict real-time constraints at high control rates. Accordingly, we use a conservative default setting of S = 1 with DDIM inference (20 denoising steps) in the main experiments. Algorithm 1 also supports multimodal sampling with S > 1: increasing S can improve robustness by enabling selection of the best feasible plan among multiple candidate (slackness, sub-goal) pairs, at a computational cost that scales approximately linearly with S because sampling and MPC–STL solves are repeated per candidate. With our measured timings, the per-step wall-clock time is therefore approximately S times the single-sample time in a non-parallel setting without solver warm-starting. This overhead can be mitigated via fewer DDIM steps, solver warm-starting, parallel candidate evaluation, and faster optimization backends or compiled implementations.
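The best-of-S selection described above can be sketched as follows. The selection rule (lowest objective among feasible plans) and the fallback hook are assumptions about Algorithm 1, not its verbatim content; `sample_pair`, `solve`, and `fallback` are hypothetical callables standing in for the diffusion sampler, the MPC–STL solver, and a conservative backup controller. The loop makes the roughly linear cost in S explicit: each candidate costs one sample and one solve.

```python
from dataclasses import dataclass
from typing import Callable, Optional

import numpy as np


@dataclass
class Plan:
    feasible: bool
    cost: float
    inputs: np.ndarray  # (H, m_u) control sequence


def best_of_s(feature, S: int, sample_pair: Callable, solve: Callable,
              fallback: Callable) -> Plan:
    """Draw S (slackness, sub-goal) candidates, solve MPC-STL for each, and keep the
    lowest-cost feasible plan; fall back to a conservative plan if none is feasible."""
    best: Optional[Plan] = None
    for _ in range(S):
        slack, goal = sample_pair(feature)  # one diffusion sample
        plan = solve(feature, slack, goal)  # one MPC-STL solve per candidate
        if plan.feasible and (best is None or plan.cost < best.cost):
            best = plan
    return best if best is not None else fallback(feature)
```

Because candidates are independent, the loop body is the natural place to parallelize when S > 1.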

We use a kinematic unicycle model and do not explicitly impose near-limit vehicle stability constraints such as tire friction limits, sideslip, or detailed lateral dynamics. This choice matches the scope of highway driving scenarios in highD, where recorded maneuvers are typically smooth and far from handling limits. Extending the MPC layer with stability constraints (e.g., lateral-acceleration bounds and friction-circle/ellipse constraints) and higher-fidelity dynamics (e.g., a dynamic bicycle model with sideslip) is left for future work.

6.2. Baselines

We compare the proposed method against two representative alternatives:

(1) Imitation Learning (IL). We consider imitation-learning baselines that predict a length-H action sequence from the current context feature (and oracle nearby-vehicle futures over the same horizon when used in the evaluation protocol), and execute them in closed loop in a receding-horizon manner by applying only the first action at each step. The resulting ego trajectory is obtained by rolling out the applied actions through the same vehicle dynamics model for reporting and analysis. These baselines do not explicitly enforce STL constraints and do not solve an optimization problem.

(2) MPC–STL with CVAE slackness (MPC–STL (CVAE)) [9]. This baseline follows our previous framework, in which a conditional VAE predicts only the rule-wise robustness slackness from the context feature, and an MPC–STL optimizer generates the control sequence under the predicted margins. In contrast, our proposed method uses a conditional diffusion model that jointly predicts both slackness and sub-goals, injecting the latter into the MPC objective as in (7).

6.3. System Description

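We model vehicle dynamics using a unicycle model with state $\mathbf{x} = [x, y, \theta, v]^\top$ (planar position, heading, and speed) and control input $\mathbf{u} = [\omega, a]^\top$, where $\omega$ is the angular velocity and $a$ is the acceleration:

$$\dot{x} = v\cos\theta, \qquad \dot{y} = v\sin\theta, \qquad \dot{\theta} = \omega, \qquad \dot{v} = a.$$

For optimization, we linearize the dynamics around a reference point and obtain an affine first-order approximation whose system matrices are the Jacobians of the dynamics with respect to the state and input, evaluated at the reference point.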
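As an illustration of the linearization step, the sketch below builds an affine discrete-time model from the unicycle Jacobians. The discretization scheme is not stated in the text; forward Euler with step `dt` is an assumption here, as is the state ordering [x, y, theta, v]. `unicycle_f` and `linearize_euler` are hypothetical names.

```python
import numpy as np


def unicycle_f(x, u):
    """Continuous-time unicycle: x = [px, py, theta, v], u = [omega, a]."""
    _, _, th, v = x
    om, a = u
    return np.array([v * np.cos(th), v * np.sin(th), om, a])


def linearize_euler(x_ref, u_ref, dt):
    """Affine model x_{k+1} ~= A x_k + B u_k + c obtained by forward-Euler discretization
    of the first-order Taylor expansion around (x_ref, u_ref)."""
    th, v = x_ref[2], x_ref[3]
    Jx = np.array([[0.0, 0.0, -v * np.sin(th), np.cos(th)],   # d f / d x
                   [0.0, 0.0,  v * np.cos(th), np.sin(th)],
                   [0.0, 0.0, 0.0, 0.0],
                   [0.0, 0.0, 0.0, 0.0]])
    Ju = np.array([[0.0, 0.0], [0.0, 0.0], [1.0, 0.0], [0.0, 1.0]])  # d f / d u
    A = np.eye(4) + dt * Jx
    B = dt * Ju
    c = dt * (unicycle_f(x_ref, u_ref) - Jx @ x_ref - Ju @ u_ref)
    return A, B, c
```

At the reference point the affine model reproduces the nonlinear Euler step exactly, and the error grows only quadratically with the deviation from it, which is why the linearization is refreshed around the current operating point.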

In all experiments, the sub-goal (goal point) is defined in the planar position space; accordingly, the goal is two-dimensional, and the linear goal map in (7) selects the planar position (x, y) from the state.

6.4. Rule Description

We consider five driving rules encoded as STL formulas. In our coordinate convention, larger y corresponds to the left side of the road, and smaller y corresponds to the right side.

1. Lane keeping (right/lower boundary);
2. Lane keeping (left/upper boundary);
3. Collision avoidance (neighbor vehicle bounding box);
4. Speed limit;
5. Slow down before the preceding vehicle, expressed with respect to the rear boundary of the preceding vehicle along the lane axis.

All rules are evaluated over the MPC horizon using the minimum robustness as in (6).

Figure 3 illustrates the environment and notations used in the rules. In all experiments, the temporal parameters of the rules are held fixed.
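To illustrate how robustness values for rules of this kind are computed on a sampled horizon, the sketch below implements the standard quantitative semantics of "always" and "eventually" over a discrete window [a, b] and of conjunction. Because the exact rule formulas are not reproduced here, the two predicates used (lateral margin above a right boundary, margin below a speed limit) and their numeric values are hypothetical illustrations, not the paper's definitions.

```python
import numpy as np


def rob_always(margins, a, b):
    """Robustness at step 0 of G_[a,b](margin >= 0): worst margin over steps a..b."""
    return float(np.min(np.asarray(margins)[a:b + 1]))


def rob_eventually(margins, a, b):
    """Robustness at step 0 of F_[a,b](margin >= 0): best margin over steps a..b."""
    return float(np.max(np.asarray(margins)[a:b + 1]))


def rob_and(*robs):
    """Conjunction: the minimum of the operands' robustness values."""
    return min(robs)


# Hypothetical predicates and values, for illustration only.
y = np.array([1.0, 0.8, 0.6, 0.9])      # lateral positions over the horizon
v = np.array([30.0, 33.0, 36.0, 34.0])  # speeds over the horizon
Y_RIGHT, V_MAX = 0.5, 35.0
rho_lane_right = rob_always(y - Y_RIGHT, 0, 3)  # about 0.1: satisfied with a small margin
rho_speed = rob_always(V_MAX - v, 0, 3)         # -1.0: limit exceeded by 1.0 at one step
```

A negative value quantifies how badly a rule is violated, which is exactly the quantity that a learned negative slackness bounds in (6).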

6.5. Simulation Results

Figure 4 shows representative closed-loop rollouts on the highD dataset. For each scenario, the conditional diffusion model predicts (i) a sub-goal (goal point) (top-left) and (ii) rule-wise robustness slackness (bottom-left). Given these predictions, the MPC–STL solver computes the optimized control sequence and the resulting trajectory (right). In the slackness plots, entries with negative slackness are highlighted (red boxes), indicating that the learned margins allow controlled (bounded) relaxation of the corresponding rules when necessary.

In Figure 4a, the predicted goal point lies in the left lane, and the slackness relaxes the lane-keeping rule for the upper/left boundary and the slow-down rule relative to the other rules. Accordingly, the MPC executes a left lane change and maintains speed while approaching the preceding vehicle, reflecting the learned intent and context-dependent flexibility. In Figure 4b, the predicted goal point lies in the right lane, and the slackness relaxes the lane-keeping rule for the lower/right boundary, leading to a rightward lane change. Overall, these examples illustrate that the diffusion model captures both intermediate intent (goal points) and rule flexibility (slackness), and that MPC–STL converts them into human-like maneuvers in closed loop.

6.5.1. Comparison with Imitation Learning (IL)

We first compare our diffusion-guided MPC–STL against representative imitation-learning (IL) approaches. To ensure a fair comparison, we provide the same level of future information to all methods: in addition to the current context feature, we supply the future trajectories of nearby vehicles over the horizon (obtained from the held-out dataset). Concretely, each IL baseline takes as input the context feature together with the future states of nearby vehicles (up to six vehicles) over the same horizon, and outputs a predicted length-H action sequence for the ego vehicle. All IL baselines are executed in a receding-horizon manner: at each time step, they predict this sequence and apply only its first action. For reporting and analysis, the executed ego trajectory is obtained by rolling out the applied actions through the same vehicle dynamics model. This oracle protocol removes prediction error as a confounder and focuses the comparison on the decision mechanism (optimization with constraints vs. direct action prediction).

In our method, the same nearby-vehicle future trajectories are used inside the MPC–STL optimization (e.g., to evaluate collision-related STL predicates and constraints), whereas in IL they are used only as additional conditioning inputs, without explicit constraint evaluation or optimization. We emphasize that the goal of this comparison is not to claim IL as the closest architectural match, but to quantify the empirical gap between purely learned action prediction and constraint-aware receding-horizon planning under matched access to oracle neighbor futures.

We consider three IL variants: (i) Diffusion Policy [18], which generates a length-H ego action sequence via an action diffusion model conditioned on the context feature and the nearby-vehicle futures; (ii) a Transformer–VAE, using a Transformer decoder and a conditional VAE; and (iii) LSTM–GMM [39], using an LSTM decoder with a Gaussian mixture output layer. All baselines are trained on the same number of samples (15,000) from the highD dataset and use the same train/test split as our method. For fairness, since our method does not use the ego vehicle’s past trajectory as input, we also remove past-trajectory inputs from all IL baselines.

The evaluation task is long-horizon track driving: the ego vehicle starts near one end of the track and must reach the opposite end. A rollout is counted as a success if the ego vehicle reaches the goal region without leaving the track boundaries and without colliding with any other vehicle; otherwise it is a failure. The primary metric is the success rate over repeated trials.

We evaluate in held-out highD scenarios across three disjoint subsets: highD dataset1 (tracks 1–20), highD dataset2 (tracks 21–40), and highD dataset3 (tracks 41–60). For each subset, we perform closed-loop rollouts on the test tracks that were not used for training sample extraction (i.e., odd-numbered tracks in the corresponding range). For each test scenario, we select one vehicle and designate it as the ego vehicle. Importantly, the ego vehicle does not follow the recorded trajectory; instead, it is controlled by the tested algorithm (our method or an IL baseline). The surrounding vehicles follow the dataset trajectories, and their future trajectories over the horizon are provided to the tested method as described above. We run 200 rollouts per subset for each method under the same evaluation protocol (scenario set and initialization procedure), resulting in 600 rollouts in total across the three subsets.

Table 1 reports the success statistics under the same test environments. Although all IL baselines are provided with oracle future trajectories of nearby vehicles, they can still fail in long-horizon rollouts due to error accumulation in the rolled-out (executed) ego trajectory and the absence of explicit rule enforcement. In contrast, our method achieves a higher success rate, consistent with explicitly solving an STL-constrained receding-horizon optimization problem at every step, which enforces safety rules and goal-reaching while adapting to the context-dependent slackness and sub-goal predicted by the diffusion model.

Figure 5 shows qualitative snapshots from a representative test scenario. The proposed method performs a smooth lane change and reaches the goal region while maintaining feasibility with respect to the MPC–STL constraints. In contrast, the diffusion-policy baseline progresses toward the goal but collides with a nearby vehicle near the terminal region. The Transformer–VAE reaches the goal; however, it exhibits an undesirable behavior of traveling along the dashed lane marking for an extended period. The LSTM–GMM baseline shows a similar tendency to linger on the dashed line and ultimately results in a collision. These snapshots highlight that, even with access to oracle nearby-vehicle futures, purely learned IL rollouts can exhibit long-horizon instability or unsafe maneuvers, whereas the proposed optimization-based controller yields more reliable, rule-aware behavior.

6.5.2. Comparison with MPC–STL with CVAE Slackness

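We further compare the proposed diffusion-guided MPC–STL against two MPC–STL variants to isolate the impact of (i) the generative prior used to infer robustness slackness and (ii) learning sub-goals to shape the MPC objective. Specifically, we consider MPC–STL (CVAE) [9], which infers only rule-wise slackness with a CVAE prior, and Strict MPC–STL, which enforces strict satisfaction of all rules without learned relaxation.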

The proposed method infers both rule-wise slackness and a sub-goal from the conditional diffusion model and uses the sub-goal in the MPC–STL objective (Equation (7)). In contrast, MPC–STL (CVAE) and Strict MPC–STL do not infer sub-goals. To keep the goal term in the MPC objective identical across MPC–STL variants, we provide these two baselines with a simple heuristic sub-goal: a point located at a fixed look-ahead distance ahead of the ego vehicle along the (local) lane direction (aligned with the longitudinal axis in the highD coordinate frame). Thus, all MPC–STL methods share the same objective form, and their objectives differ only in how the sub-goal is obtained (learned by the diffusion model for the proposed method vs. the heuristic look-ahead point for the baselines). This design isolates the effect of learning sub-goals (and of the diffusion prior) from the choice of objective structure itself.
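The heuristic sub-goal given to the baselines is simple enough to state in a few lines. The sketch below is illustrative: `lookahead_subgoal` is a hypothetical name, the look-ahead distance `d` is a free parameter whose value is not given here, and the lane direction is passed explicitly because highD vehicles on the two carriageways travel in opposite longitudinal directions.

```python
import numpy as np


def lookahead_subgoal(pos, lane_dir, d):
    """Point at distance d ahead of the ego position along the (normalized) lane direction."""
    u = np.asarray(lane_dir, dtype=float)
    u = u / np.linalg.norm(u)
    return np.asarray(pos, dtype=float) + d * u
```

Because this point always stays in the current lane, it never encodes a lane-change intent on its own; any lateral motion in the baselines must come from the slackness and the constraints alone.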

We perform controlled closed-loop evaluations under the same evaluation protocol. We evaluate on three disjoint held-out subsets of highD scenarios: highD dataset1 (tracks 1–20), highD dataset2 (tracks 21–40), and highD dataset3 (tracks 41–60). For each subset, we construct 100 test trials, where each trial corresponds to a different initial condition and traffic context (i.e., different starting positions and surrounding vehicle configurations). For each trial and each method, we repeat the rollout 10 times to account for stochasticity when sampling is enabled in the learned generative models (Proposed and MPC–STL (CVAE)). Strict MPC–STL is deterministic, so its repeated rollouts yield identical outcomes; we nevertheless repeat them for consistency in reporting.

We evaluate (i) the success rate, where a rollout is successful if the ego vehicle reaches the goal region without leaving the track boundaries and without colliding with any other vehicle, and (ii) the lane-change rate, defined as the fraction of rollouts in which the ego vehicle performs at least one lane change. This metric matters because lane changes occur with non-negligible frequency in real driving data; the highD dataset reports average lane-change frequencies per vehicle that vary with the subset and filtering [38]. Overly conservative planners that never change lanes may therefore succeed only in limited situations and can fail to make progress in dense traffic.
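A minimal way to compute the lane-change rate from rollouts is sketched below, assuming each rollout is summarized by the lane index occupied at every step. Mapping lateral positions to lane indices through a list of lane-marking positions is an assumption (`lane_index`, `count_lane_changes`, and `lane_change_rate` are hypothetical helpers), and the sketch applies no hysteresis, so a vehicle hovering on a marking would register several changes.

```python
import numpy as np


def lane_index(y, boundaries):
    """Lane index of each lateral position, given increasing lane-marking positions."""
    return np.digitize(np.asarray(y), boundaries)


def count_lane_changes(lane_ids):
    """Number of steps at which the occupied lane index changes."""
    lane_ids = np.asarray(lane_ids)
    return int(np.count_nonzero(lane_ids[1:] != lane_ids[:-1]))


def lane_change_rate(rollouts):
    """Fraction of rollouts containing at least one lane change."""
    return sum(count_lane_changes(r) >= 1 for r in rollouts) / len(rollouts)
```

Note that the metric is binary per rollout, so it measures how often a planner is willing to change lanes at all, not how many lane changes it performs.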

Table 2 summarizes the quantitative comparison across the three subsets. The proposed method achieves the highest success rate and a higher lane-change rate than MPC–STL (CVAE), suggesting that jointly predicting slackness and sub-goals helps the optimizer resolve long-horizon dilemmas more consistently. In contrast, strict MPC–STL yields near-zero lane changes and the lowest success rate in our setting, suggesting that strict satisfaction of all rules can be overly conservative and may prevent progress in dense traffic.

Figure 6 provides a qualitative example illustrating the behavioral difference between the proposed method and MPC–STL (CVAE). In this scenario, MPC–STL (CVAE) remains in the current lane and does not execute a lane change, which limits progress toward the goal. In contrast, the proposed method predicts a sub-goal that encourages a lane change and, together with context-dependent slackness, enables the MPC–STL optimizer to perform a safe lane-change maneuver.

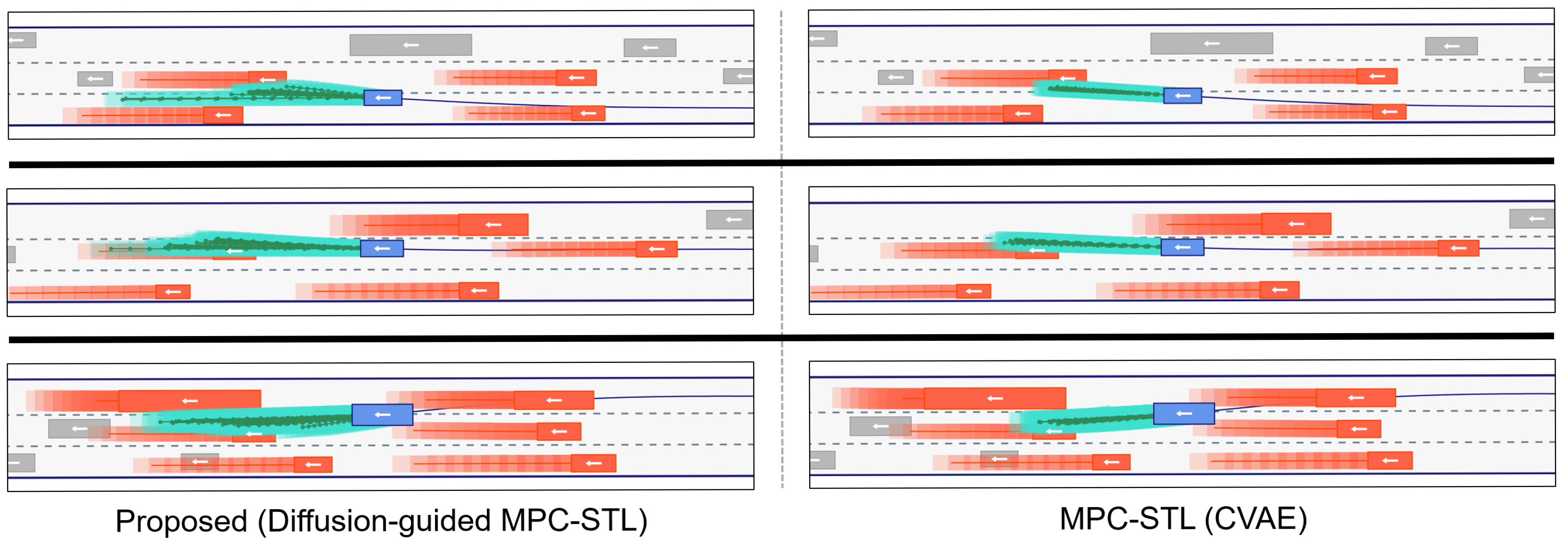

To highlight the practical benefit of using diffusion as a multimodal prior within the constrained MPC–STL loop, we visualize the diversity of MPC solutions induced by multiple samples from the learned generative models. In the proposed method, the conditional diffusion model jointly samples a pair consisting of rule-wise robustness slackness and a sub-goal, and MPC–STL then computes a trajectory for each sampled pair. In contrast, MPC–STL (CVAE) samples only from the CVAE prior (without learned sub-goals) and computes trajectories under the sampled slackness values.

Figure 7 compares the resulting sets of planned trajectories in representative highD scenarios. For this visualization only, we draw multiple samples from each learned prior under the same context to reveal the diversity of candidate plans it induces. The proposed diffusion-guided MPC–STL produces a noticeably more diverse set of feasible plans, capturing multiple plausible strategies (e.g., varying degrees of lateral motion and lane-change timing). By contrast, the CVAE-based prior yields trajectories concentrated around a single mode, resulting in limited behavioral diversity. This qualitative comparison supports our motivation for using diffusion in the MPC–STL setting: better mode coverage at the level of the decision variables (slackness and sub-goal) translates into richer candidate plans, which is especially useful in driving contexts where multiple expert-like responses can be valid.
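The diversity in Figure 7 is assessed qualitatively. One simple way to quantify it, offered here as an assumption rather than a metric used in this study, is the mean pairwise distance between trajectories planned for the same context: a prior that collapses onto one mode gives a small value, while well-separated modes give a larger one. Trajectories are assumed to be equal-length sequences of `(x, y)` positions.

```python
import itertools
import math
from typing import Sequence, Tuple

Point = Tuple[float, ...]


def mean_pairwise_distance(trajs: Sequence[Sequence[Point]]) -> float:
    """Average, over all trajectory pairs, of the mean per-step Euclidean distance."""
    if len(trajs) < 2:
        return 0.0
    pairs = list(itertools.combinations(trajs, 2))
    total = 0.0
    for a, b in pairs:
        if len(a) != len(b):
            raise ValueError("trajectories must share the same horizon")
        total += sum(math.dist(p, q) for p, q in zip(a, b)) / len(a)
    return total / len(pairs)


if __name__ == "__main__":
    keep = [(0.0, 0.0), (10.0, 0.0), (20.0, 0.0)]
    change = [(0.0, 0.0), (10.0, 1.5), (20.0, 3.5)]
    print(mean_pairwise_distance([keep, keep, keep]))  # single mode: 0.0
    print(mean_pairwise_distance([keep, change]))      # two modes: larger spread
```

Endpoint-only distances or a count of distinct target lanes would be coarser alternatives; the per-step average also reflects differences in lane-change timing.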

7. Conclusions

We presented a diffusion-guided MPC–STL framework that learns context-dependent rule flexibility and intermediate intent from expert demonstrations, and synthesizes closed-loop driving behaviors under STL constraints. Unlike prior learning-based MPC–STL approaches that predict only robustness slackness with VAE priors, our method employs a conditional diffusion model to jointly predict (i) a rule-wise robustness slackness vector that sets soft margins for STL satisfaction and (ii) a sub-goal that shapes the MPC objective. This coupling preserves the interpretability and constraint-based structure of STL specifications while enabling multimodal, human-like decision making by sampling decision variables and selecting feasible plans via receding-horizon optimization. More broadly, the proposed recipe—combining symbolic temporal-logic specifications with learned multimodal priors—offers a general pathway toward interpretable, constraint-aware controllers beyond driving.

Experiments on held-out highD scenarios showed that the proposed approach improves task success and induces more realistic lane-changing behavior compared to representative imitation-learning baselines, even when all methods are given oracle future trajectories of surrounding vehicles. Comparisons with MPC–STL (CVAE) and strict MPC–STL further indicated that jointly learning slackness and sub-goals yields more consistent long-horizon progress, whereas strict rule enforcement can lead to overly conservative behavior and reduced success in dense traffic. Qualitative results also suggested that diffusion-based sampling provides a richer set of plausible maneuver candidates than VAE-based slackness priors, translating into diverse feasible plans under the same context.

Several limitations and extensions remain for practical deployment. While the proposed pipeline is computationally tractable for receding-horizon evaluation in our experimental setting, the current implementation (diffusion sampling followed by mixed-integer MPC–STL) may not meet strict real-time constraints at high control rates; runtime can be reduced by using fewer DDIM steps or samples, warm-starting, evaluating candidates in parallel, and adopting faster optimization backends or compiled implementations. The controller also depends on learned decision variables and can be affected by prediction error or distribution shift. Practical safeguards include projecting the predicted decision variables onto a meaningful range, filtering outlier sub-goals, sampling multiple candidates and discarding infeasible ones, and reverting to a conservative fallback policy (e.g., strict MPC–STL or safe braking/lane keeping) when all candidates are infeasible. In addition, our evaluation provides oracle neighbor futures to isolate the decision-making mechanism; a deployable system must integrate a prediction module and handle uncertainty via multi-hypothesis prediction and uncertainty-aware constraint handling (e.g., scenario-based or chance-constrained MPC).
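The safeguards listed above compose into a single candidate-selection step. The sketch below is hypothetical: it assumes each candidate is a (slackness vector, scalar sub-goal) pair, treats sub-goals farther than `outlier_tol` from the sample median as outliers, relies on a caller-supplied `solve` that returns `None` when the MPC–STL problem is infeasible and `(cost, plan)` otherwise, and picks the lowest-cost feasible plan. The lowest-cost rule is one possible choice, not a selection rule stated in the text.

```python
import statistics
from typing import Callable, List, Optional, Sequence, Tuple

Candidate = Tuple[Sequence[float], float]  # (rule-wise slackness, lateral sub-goal), assumed shapes
SolveResult = Optional[Tuple[float, object]]  # (cost, plan), or None if infeasible


def select_plan(
    candidates: Sequence[Candidate],
    solve: Callable[[List[float], float], SolveResult],
    fallback: Callable[[], object],
    slack_max: float,
    outlier_tol: float,
) -> Tuple[object, str]:
    """Return (plan, source), where source is 'learned' or 'fallback'."""
    if not candidates:
        return fallback(), "fallback"
    median_goal = statistics.median([goal for _, goal in candidates])
    feasible = []
    for slack, goal in candidates:
        # Safeguard 1: project each slackness entry onto [0, slack_max].
        projected = [min(slack_max, max(0.0, s)) for s in slack]
        # Safeguard 2: skip sub-goals far from the sample median.
        if abs(goal - median_goal) > outlier_tol:
            continue
        # Safeguard 3: solve each remaining candidate and drop infeasible ones.
        result = solve(projected, goal)
        if result is not None:
            feasible.append(result)
    if not feasible:
        # Safeguard 4: conservative fallback (e.g., strict MPC-STL or lane keeping).
        return fallback(), "fallback"
    _, plan = min(feasible, key=lambda r: r[0])
    return plan, "learned"
```

Keeping `solve` and `fallback` as parameters lets the same selection logic wrap either the full MPC–STL solver or a cheaper surrogate during testing.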

Beyond deployment concerns, our current study is intentionally scoped to structured highway track-driving with a fixed rule set. Extending to complex urban environments likely requires richer and more compositional specifications (e.g., context-conditioned rule-set selection or composition from STL templates) and semantic sub-goals/options (e.g., “yield to pedestrian, then turn”). Moreover, the formulation does not detect “unknown unknowns” where the specification itself becomes invalid (e.g., emergency vehicles or atypical hazards); a promising direction is a hierarchical architecture that augments MPC–STL with anomaly/out-of-distribution (OOD) and intent-reasoning modules to enable mode switching or activation of context-appropriate rule sets.