1. Introduction

With growing global awareness of environmental protection and energy efficiency, saving energy and reducing carbon emissions in transportation has become a key issue. Meanwhile, autonomous driving remains an active topic: vehicle automation levels have been defined [1], and the relative advantages and limitations of LiDAR and vision-based systems are still being debated. Autonomous vehicles rely heavily on sensors to perceive the external environment, and driving characteristics and driver behavior significantly influence fuel consumption and emissions. Traditional driver assistance systems are mainly based on human experience and often lack real-time analysis and alert capabilities, making it difficult to support drivers in making environmentally friendly driving decisions.

Green driving refers to a smoother driving style that reduces rapid acceleration and sudden braking, which helps save fuel and improve driving safety [2]. By integrating deep learning and computer vision, real-time road detection can be achieved. When specific signs such as speed limits or traffic lights are detected, and the vehicle is moving too fast or approaching a yellow light that has not yet turned red, the system can use the deep learning and vision results to gently apply the brakes in advance. This approach supports both comfortable and energy-efficient driving. In our previous research, we developed an integrated OBD-II system and applied two types of neural networks for fuel consumption analysis and prediction, with generated reports offering evaluations and behavioral suggestions [3,4].

Traditional warning systems often use rigid, predefined messages and require internet access to provide additional information. However, connecting a vehicle to the internet introduces potential risks, such as hacking or personal data breaches. An offline assistant system can reduce these risks by eliminating one common entry point for cyberattacks. With the integration of generative AI, the system's role goes beyond that of a simple assistant. For example, linking generative AI with OBD-II allows for fault diagnosis [5,6]. An abnormal throttle opening, a condition that may lead to uncontrolled acceleration but is difficult to notice at an early stage, is one case where generative AI can help monitor the vehicle and issue early warnings. By combining YOLO, generative AI, and OBD-II, more advanced functions can be developed, further enhancing the capabilities of driver-assistance systems.

Numerous studies have applied the YOLO [7,8,9] family of models to vehicle detection tasks [10,11]. Our laboratory has also developed a custom-integrated OBD-II system and used two types of neural networks to analyze and predict fuel consumption [3,4]. Generative AI has demonstrated strong capabilities in text processing and information integration, making it a promising core component for an in-vehicle assistant. Although some recent research has explored combining YOLO with image-based generative AI [12], there is currently no study that fully integrates YOLO, generative AI, and OBD-II into a unified system.

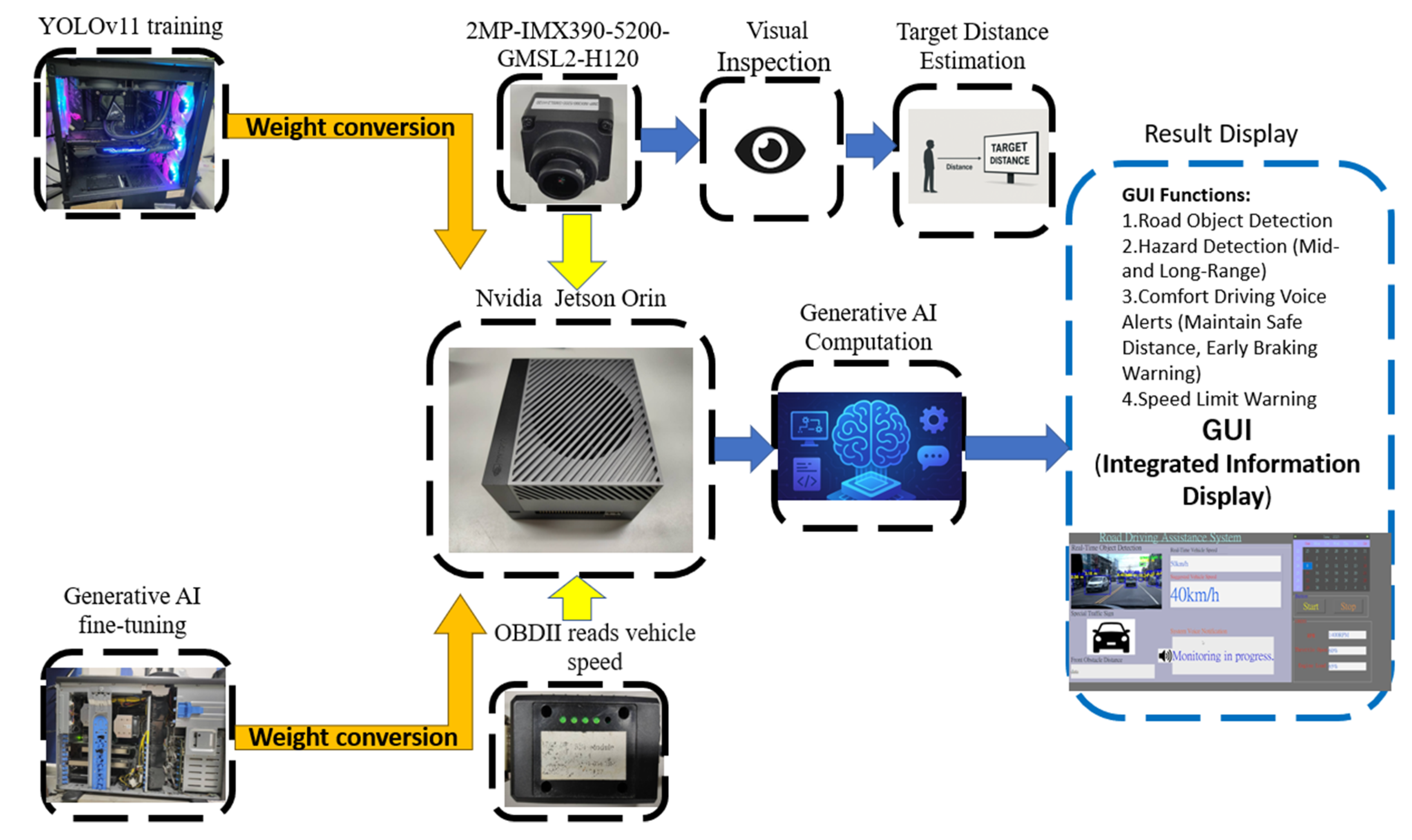

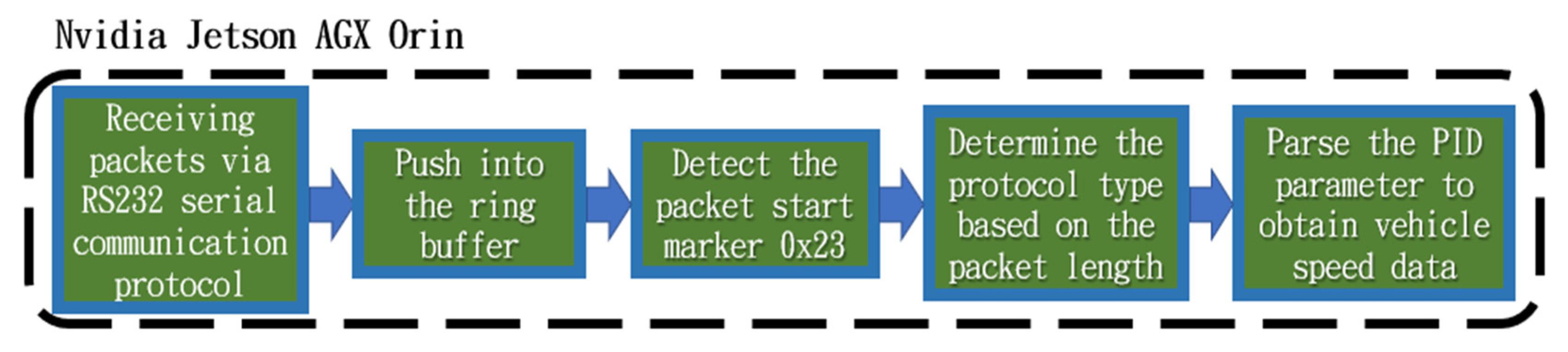

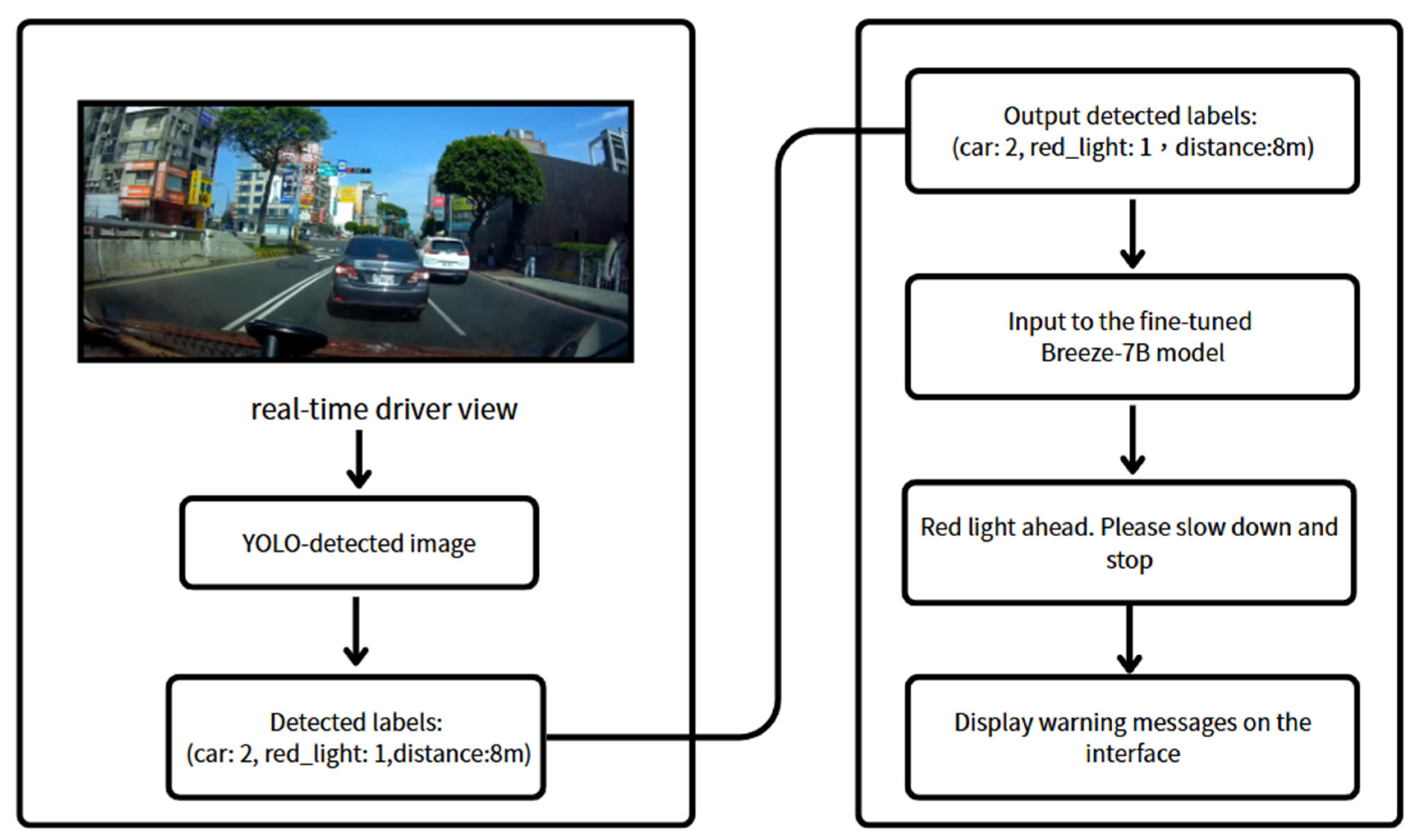

This study integrates an On-Board Diagnostics II (OBD-II) system [13] with real-time road condition recognition to develop an eco-driving assistance system. Using OBD-II, real-time vehicle operation data such as engine RPM, fuel consumption, and speed can be collected. These data are combined with the YOLOv11 deep-learning model, which is used to detect road objects ahead, including pedestrians, traffic lights, vehicles, and speed limit signs, in order to evaluate whether the driver's behavior aligns with energy-saving principles. Furthermore, information from both OBD-II and image recognition is converted into textual input, which is then processed by a fine-tuned generative language model, Breeze:7B [14,15]. This model can generate context-aware suggestions, such as gently applying the brakes in advance or issuing a warning when the vehicle is speeding. The generated content is then delivered via a speech synthesis module, helping reduce driver distraction and enhancing both user experience and system intelligence.
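The conversion of OBD-II readings and detection results into textual input can be illustrated with a minimal sketch. The prompt wording, the `Detection` type, the label names, and the confidence threshold are all hypothetical choices made for illustration; they are not the format used in this study.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Detection:
    label: str         # hypothetical label name, e.g. "red_light" or "speed_limit_50"
    confidence: float  # detector confidence in [0, 1]


def build_prompt(speed_kmh: float, rpm: float, detections: List[Detection],
                 min_confidence: float = 0.5) -> str:
    """Turn one OBD-II reading and one frame's detections into a text prompt."""
    # Drop low-confidence detections so the language model sees less noise.
    kept = [d for d in detections if d.confidence >= min_confidence]
    if kept:
        objects = ", ".join(f"{d.label} ({d.confidence:.2f})" for d in kept)
    else:
        objects = "none"
    return (
        f"Vehicle speed: {speed_kmh:.0f} km/h. "
        f"Engine speed: {rpm:.0f} rpm. "
        f"Detected objects: {objects}."
    )


prompt = build_prompt(
    speed_kmh=62.4,
    rpm=1800,
    detections=[Detection("speed_limit_50", 0.91), Detection("car", 0.30)],
)
print(prompt)
```

A string of this kind would then be passed to the language model, which is responsible for turning the raw facts into a spoken suggestion.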

4. Discussion

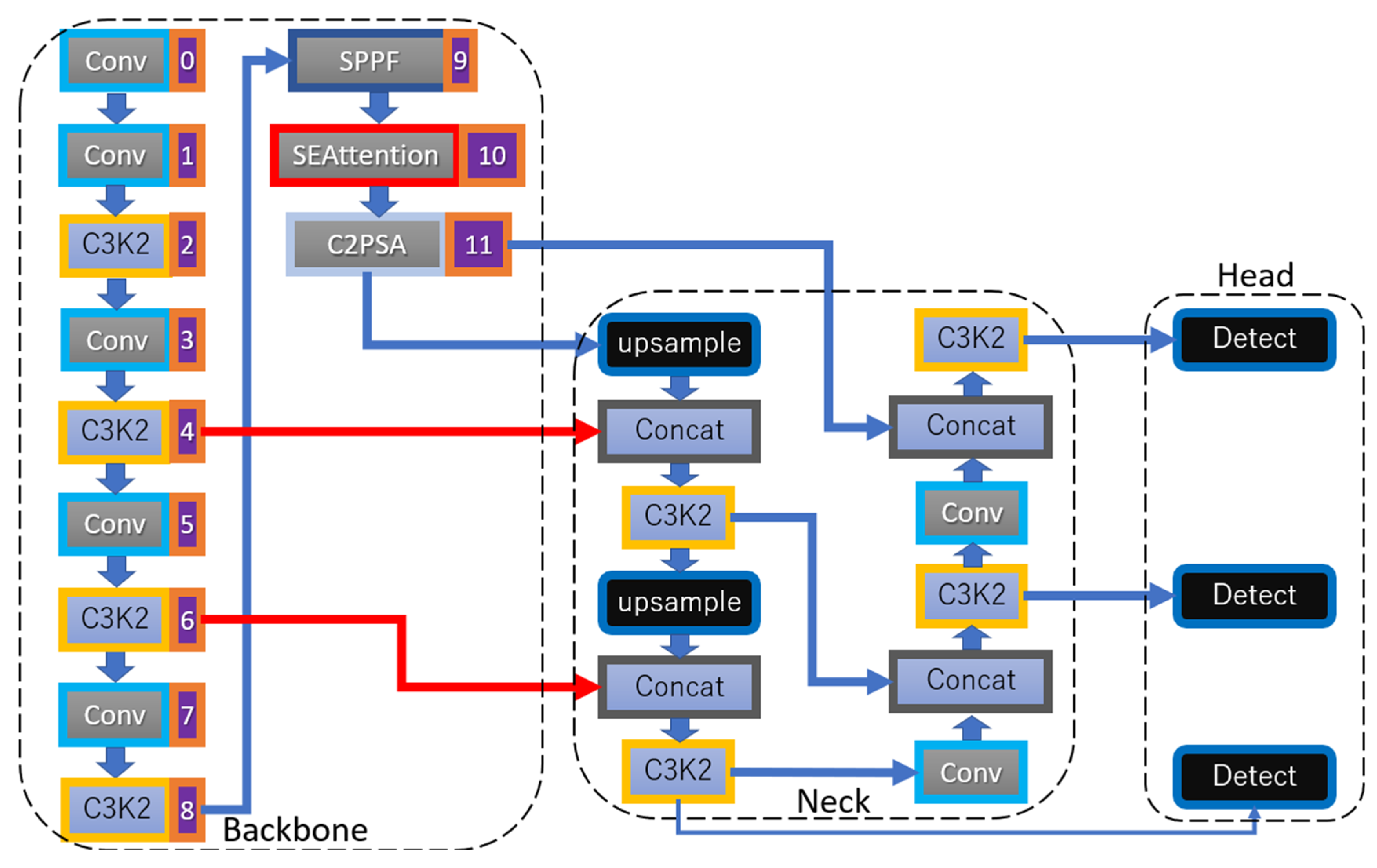

This study integrates three core components: YOLOv11, OBD-II, and a generative AI model. The YOLOv11 architecture was customized to improve the accuracy of road object detection, enabling the system to better interpret environmental information. The OBD-II module captures real-time vehicle speed data, allowing the system to comprehensively gather both external (road) and internal (vehicle) information.

The more complete the incoming data, the more effectively the generative AI model can consolidate and reason over this information to generate responses. In this study, the generative AI serves as the “brains” of the system, offering several advantages over traditional rule-based systems. Not only can it integrate multimodal inputs more intelligently, but it also produces natural and flexible alert messages rather than rigid warnings. Furthermore, it supports offline interaction, enabling real-time question answering without requiring cloud access.

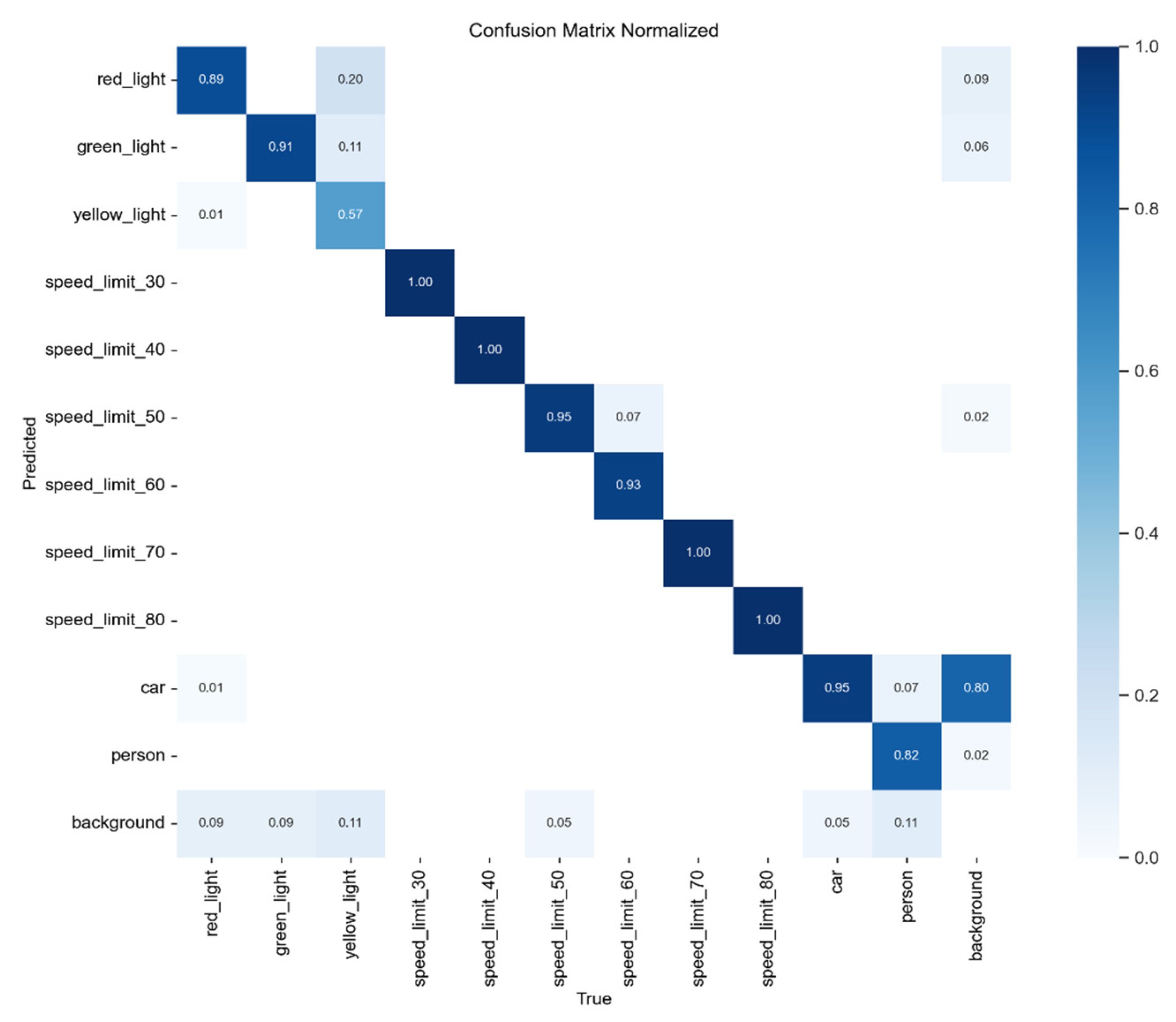

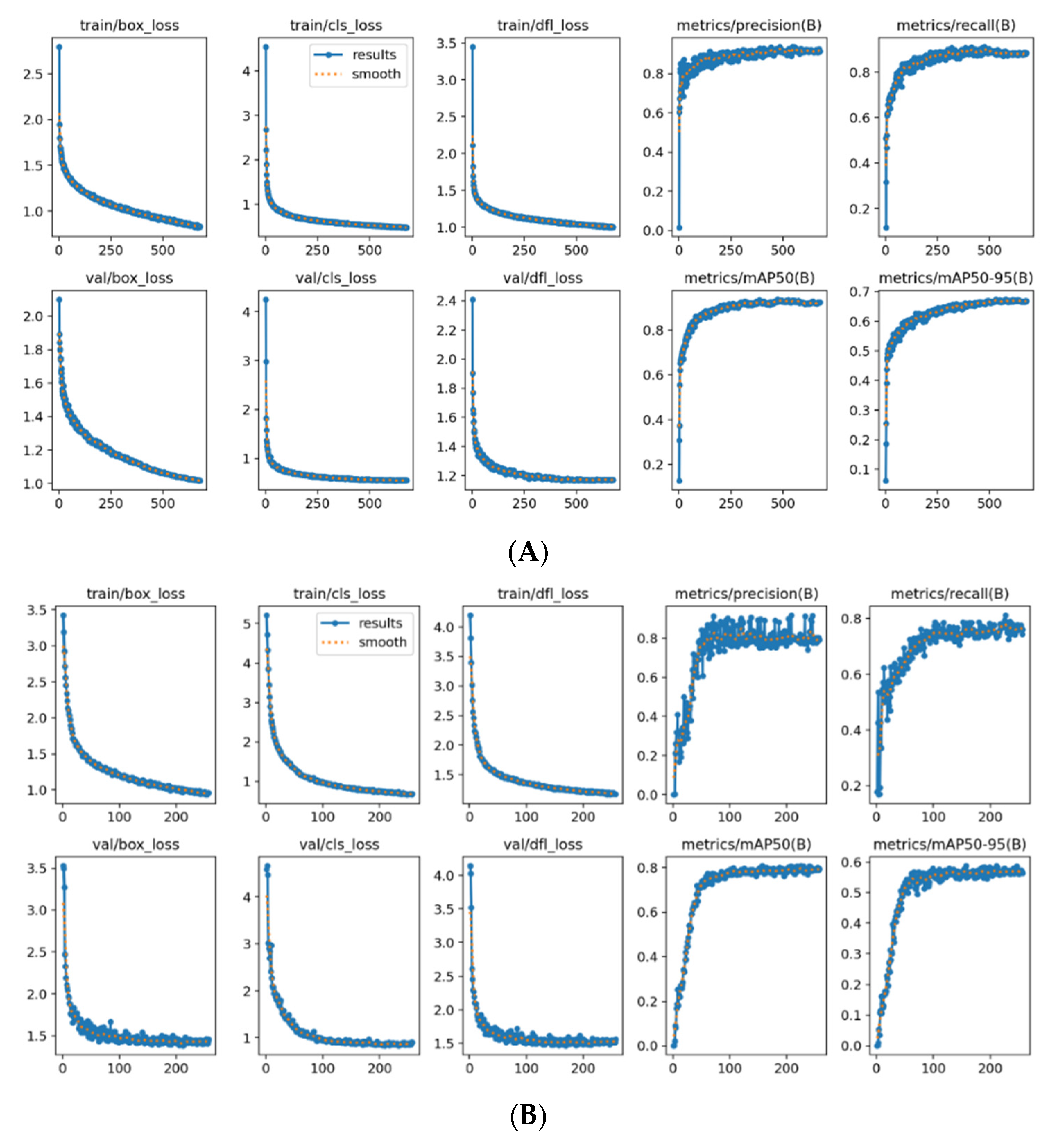

As shown in Figure 15A, the final training results were evaluated using common performance metrics, including Precision, mean Average Precision (mAP), Recall, and F1-score. The figure demonstrates that both the mAP and Precision curves not only consistently increased but also remained stable without significant fluctuations. Specifically, the model achieved a Precision of 0.91 and an mAP50–95 of 0.668. The smooth trend lines indicate that the model converged in a stable manner throughout training.
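For readers less familiar with these metrics, the following sketch shows how Precision, Recall, and F1-score relate to counts of true positives, false positives, and false negatives. The counts in the usage line are made-up illustrative numbers, not values from this study, and mAP (which additionally averages over IoU thresholds and classes) is not covered here.

```python
def precision_recall_f1(tp: int, fp: int, fn: int):
    """Compute Precision, Recall, and F1-score from detection counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)  # harmonic mean
    else:
        f1 = 0.0
    return precision, recall, f1


# Illustrative counts only: 8 correct detections, 2 false alarms, 2 misses.
print(precision_recall_f1(tp=8, fp=2, fn=2))
```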

Compared to the original YOLOv11 model [13], the experimental results show that the addition of SEAttention improves both accuracy and mAP50–95, as well as the overall convergence behavior. As shown in Figure 15B, when trained using the same hyperparameters and dataset, the baseline YOLOv11 exhibited greater oscillation in its training loss curve.

With SEAttention integrated into the YOLOv11 architecture, the model not only achieved higher precision but also demonstrated smoother convergence during training, reducing fluctuations in the learning process. This indicates that the enhanced model is more stable and accurate under the same training conditions.
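The idea behind channel attention of this kind can be sketched as follows, assuming SEAttention follows the standard squeeze-and-excitation design (global average pooling, a two-layer bottleneck, and sigmoid gating). The NumPy implementation, the random weights, and the reduction ratio of 4 are assumptions for illustration; this section does not specify where the module is placed in YOLOv11 or how it is configured.

```python
import numpy as np


def se_attention(x, w1, w2):
    """Reweight the channels of a (C, H, W) feature map, squeeze-and-excitation style.

    w1 has shape (C // r, C) and w2 has shape (C, C // r), where r is the
    reduction ratio of the bottleneck.
    """
    squeezed = x.mean(axis=(1, 2))                # squeeze: global average pool -> (C,)
    hidden = np.maximum(w1 @ squeezed, 0.0)       # bottleneck layer with ReLU
    scale = 1.0 / (1.0 + np.exp(-(w2 @ hidden)))  # sigmoid gate in (0, 1), one per channel
    return x * scale[:, None, None]               # excite: rescale each channel


# Random stand-ins for a feature map and learned weights (assumed values).
rng = np.random.default_rng(0)
channels, reduction = 8, 4
features = rng.standard_normal((channels, 5, 5))
w1 = rng.standard_normal((channels // reduction, channels)) * 0.1
w2 = rng.standard_normal((channels, channels // reduction)) * 0.1
out = se_attention(features, w1, w2)
print(out.shape)
```

Because each channel is multiplied by a learned gate between 0 and 1, the network can suppress less informative channels, which is one plausible reason for the smoother convergence reported above.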

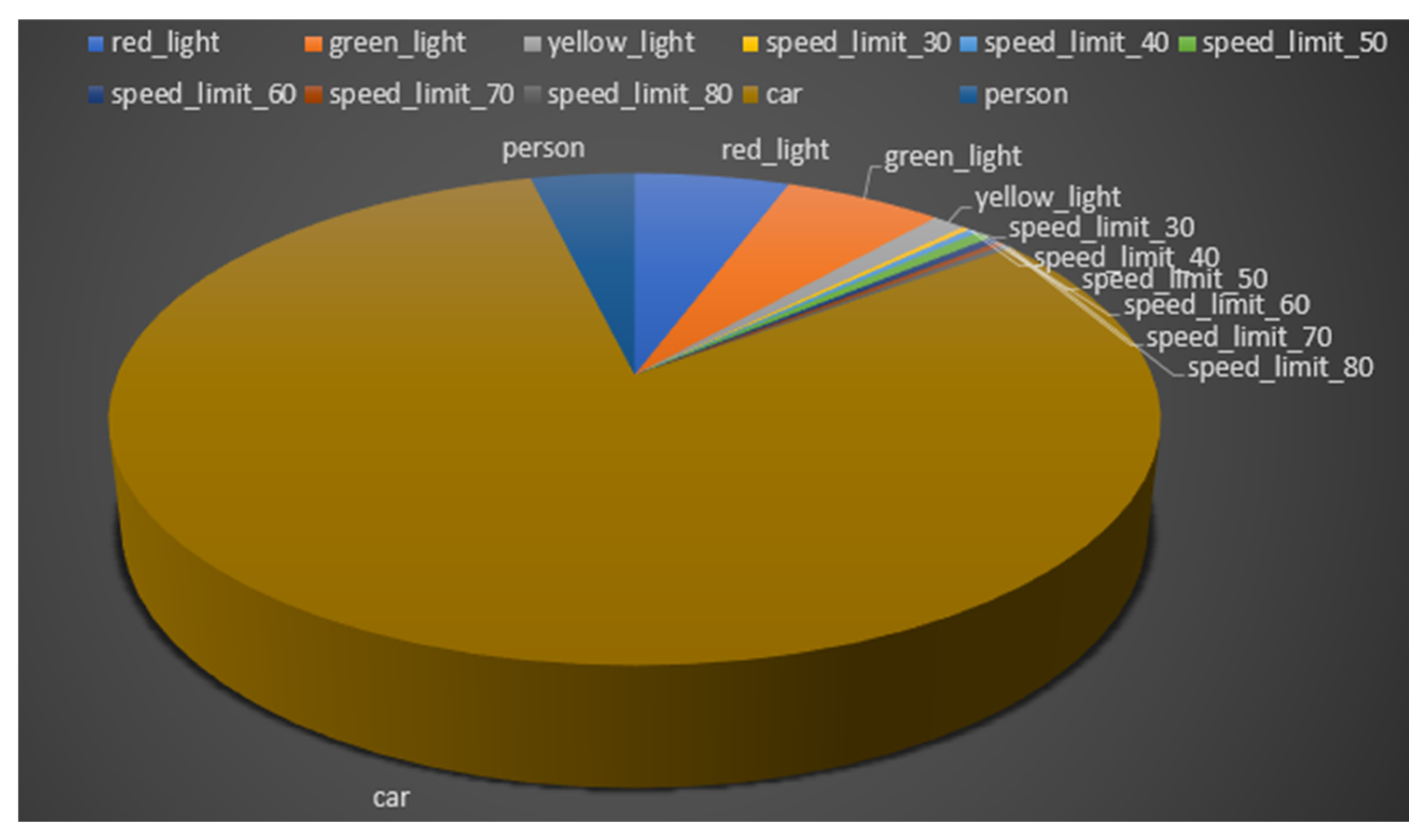

Although the training performance of YOLOv11 was generally satisfactory, misclassification between yellow lights and red lights was occasionally observed. This issue is likely caused by variations in lighting conditions or viewing angles. To address this, the dataset can be augmented with additional images of yellow lights captured under diverse scenarios.

Furthermore, an approximately 80% similarity between the “background” and “car” classes was noted. This can be attributed to incomplete annotations during data labeling: certain vehicles were occluded or too small and thus were not annotated, resulting in visual overlap between these two categories. Improving the labeling strategy is expected to reduce this confusion and enhance classification accuracy. Detailed values are shown in Table 6.

In the case of the generative AI component, there are corresponding limitations. Due to the relatively high computational resource requirements, the model is unable to perform inference on every single frame in real time. Nevertheless, the system is capable of issuing warnings when critical events occur, such as red light detection or the presence of imminent hazards. These alerts provide drivers with sufficient time to respond and thus fulfill the system’s role as a driving assistance mechanism.

In future research, more comprehensive traffic-related elements, such as pedestrian movement and vehicle behavior, can be further integrated into the proposed visual recognition system. Expanding the dataset to include a wider variety of road conditions and scenarios will enhance the system's robustness and generalization. This approach could also be extended to autonomous vehicle (AV) applications, aligning with the principles of eco-driving to support energy-efficient and intelligent decision-making in future unmanned vehicle systems.

Additionally, to improve the reliability of Breeze:7B in critical tasks, the integration of Retrieval-Augmented Generation (RAG) [29] is recommended. RAG can enhance the model's response accuracy by incorporating external knowledge retrieval during inference, thereby reducing the likelihood of factual errors [30] and improving performance in mission-critical environments.

Table 7 presents the comparison between the modified YOLOv11-SE and other models. In the table, results highlighted in red indicate the best performance, while those in blue indicate the worst. It can be observed that, aside from being slightly lower than YOLOv7 in accuracy, YOLOv11-SE does not show inferior performance in any other category compared with the other versions, and it achieves strong results in mAP.

As shown in Table 8, after integrating the SEAttention module, all evaluation metrics exhibit a clear improvement.

In the evaluation results for BERTScore and ROUGE, as shown in Table 9, Breeze7B (fine-tuned) outperforms the non-fine-tuned models. For the ROUGE-2 metric, the non-fine-tuned models, lacking an understanding of YOLO's label structure, tend to produce outputs with broken phrases and disordered word sequences. This leads to ROUGE-2 scores that are nearly zero, as the models fail to generate continuous word pairs corresponding to the reference answers.

In contrast, Breeze7B (fine-tuned) achieves a Precision of 0.3126, Recall of 0.2528, and F1-score of 0.2756, demonstrating a significantly better ability to interpret YOLO output labels and generate coherent Chinese prompt sentences compared with the near-zero scores of the base models.
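To make concrete why disordered word sequences drive ROUGE-2 toward zero, the sketch below computes bigram-overlap Precision, Recall, and F1 from scratch. It is a simplified illustration rather than the evaluation code used in this study: the English example sentences are invented, and whitespace tokenization is an assumption (Chinese text would need a different tokenizer, for example per character).

```python
from collections import Counter


def bigrams(tokens):
    """Count consecutive token pairs."""
    return Counter(zip(tokens, tokens[1:]))


def rouge_2(candidate_tokens, reference_tokens):
    """Return (precision, recall, f1) based on clipped bigram overlap."""
    cand, ref = bigrams(candidate_tokens), bigrams(reference_tokens)
    overlap = sum((cand & ref).values())  # clipped count of shared bigrams
    precision = overlap / sum(cand.values()) if cand else 0.0
    recall = overlap / sum(ref.values()) if ref else 0.0
    f1 = 2 * precision * recall / (precision + recall) if overlap else 0.0
    return precision, recall, f1


reference = "slow down red light ahead".split()
ordered = "red light ahead slow down".split()    # same words, mostly intact phrases
scrambled = "ahead down light slow red".split()  # same words, no intact pair

print(rouge_2(ordered, reference))
print(rouge_2(scrambled, reference))
```

Both candidates contain exactly the reference's words, yet the scrambled one shares no consecutive pair with it, so all three scores are zero.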

All latency values in Table 10 are based on actual measurements. Using Python, we recorded the time before and after the execution of each module with the `time.time()` function and took the difference as the latency of that stage. After collecting 100 latency measurements for each stage, we calculated the average total latency.

Table 10 presents the quantified latency values for each step.
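The measurement procedure described above can be sketched as follows. The `measure_latency` helper and the `dummy_stage` workload are hypothetical stand-ins; in the actual system each stage would be a module such as object detection or text generation.

```python
import time
from statistics import mean


def measure_latency(stage, runs=100):
    """Return the mean wall-clock latency in seconds of calling stage() `runs` times."""
    samples = []
    for _ in range(runs):
        start = time.time()
        stage()
        samples.append(time.time() - start)
    return mean(samples)


def dummy_stage():
    # Stand-in workload; replace with a real pipeline stage.
    sum(range(10_000))


avg = measure_latency(dummy_stage)
print(f"average latency: {avg * 1000:.3f} ms")
```

For very short stages, `time.perf_counter()` is a common alternative to `time.time()` because it is monotonic and has higher resolution.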

As shown in Table 10, the generative AI module also has inherent limitations. Due to its relatively high computational resource requirements, it is not feasible to perform inference for every single frame. However, in this study, the latency introduced by the generative AI does not compromise driving safety. When a red light or hazardous situation is detected, the system bypasses the generative AI and issues a direct alert instead. This approach ensures that the driver has sufficient time to respond, thereby fulfilling the goal of providing effective driver assistance.
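The bypass logic can be illustrated with a minimal sketch. The set of critical labels, the alert wording, and the `generate_advice` callback are hypothetical; the sketch only shows the routing decision, in which critical detections never wait on the language model.

```python
CRITICAL_LABELS = {"red_light", "pedestrian"}  # hypothetical set of critical classes


def route_alert(labels, generate_advice):
    """Return a direct alert for critical detections, otherwise defer to the language model."""
    critical = sorted(CRITICAL_LABELS.intersection(labels))
    if critical:
        # Fast path: fixed message, no generative inference.
        return "Warning: " + ", ".join(critical) + " ahead."
    # Slow path: let the generative model phrase a suggestion.
    return generate_advice(labels)


def slow_model(labels):
    # Stand-in for a call to the fine-tuned language model.
    return "Consider easing off the accelerator."


print(route_alert(["car", "red_light"], slow_model))
print(route_alert(["car"], slow_model))
```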

5. Conclusions

This study implemented an energy-efficient driving assistance system based on generative AI. The system uses the YOLOv11 neural network to recognize common object types encountered on the road. YOLOv11 demonstrated favorable detection speed and accuracy, particularly in rapidly changing and complex driving environments. By integrating real-time vehicle speed data from the OBD-II interface, the system can provide contextual driving analysis. The collected information is then passed to Breeze:7B, a generative language model, which interprets the input and delivers appropriate driving suggestions or warnings.

The experimental site of this study was located in the urban area of Taichung City, where dedicated bicycle lanes have been planned. As a result, non-motorized vehicles (e.g., bicycles) typically do not interact with the main traffic flow, and thus bicycles were not included as one of the detection targets in this study. In future work, we will consider expanding the data collection scope to include scenarios with non-motorized vehicles in order to enhance the model’s generalization capability in more diverse road environments.

Encouraging drivers to brake earlier not only helps reduce fuel consumption but also decreases brake wear; however, it may extend travel time. This study did not incorporate these factors into our system for quantification. Nevertheless, in our previous work, we measured fuel consumption under different driving behaviors. In future research, we plan to integrate those findings with the proposed system to further calculate the fuel savings attributable to its interventions while also assessing its impact on greenhouse gas emissions. This approach will enable us to more comprehensively validate the system’s energy-saving and environmental benefits.

In future application scenarios, the proposed system is particularly well-suited for deployment on fully or semi-autonomous platforms. For example, autonomous taxis, shuttle buses, or other driverless vehicles can directly benefit from the system's ability to process traffic scene data (through YOLO-based object detection and OBD-II information) combined with a fine-tuned language model to generate context-aware driving commands. In such applications, the system can not only control driving behaviors but also function as an interactive assistant, responding to passengers' questions or concerns regarding the driving route.