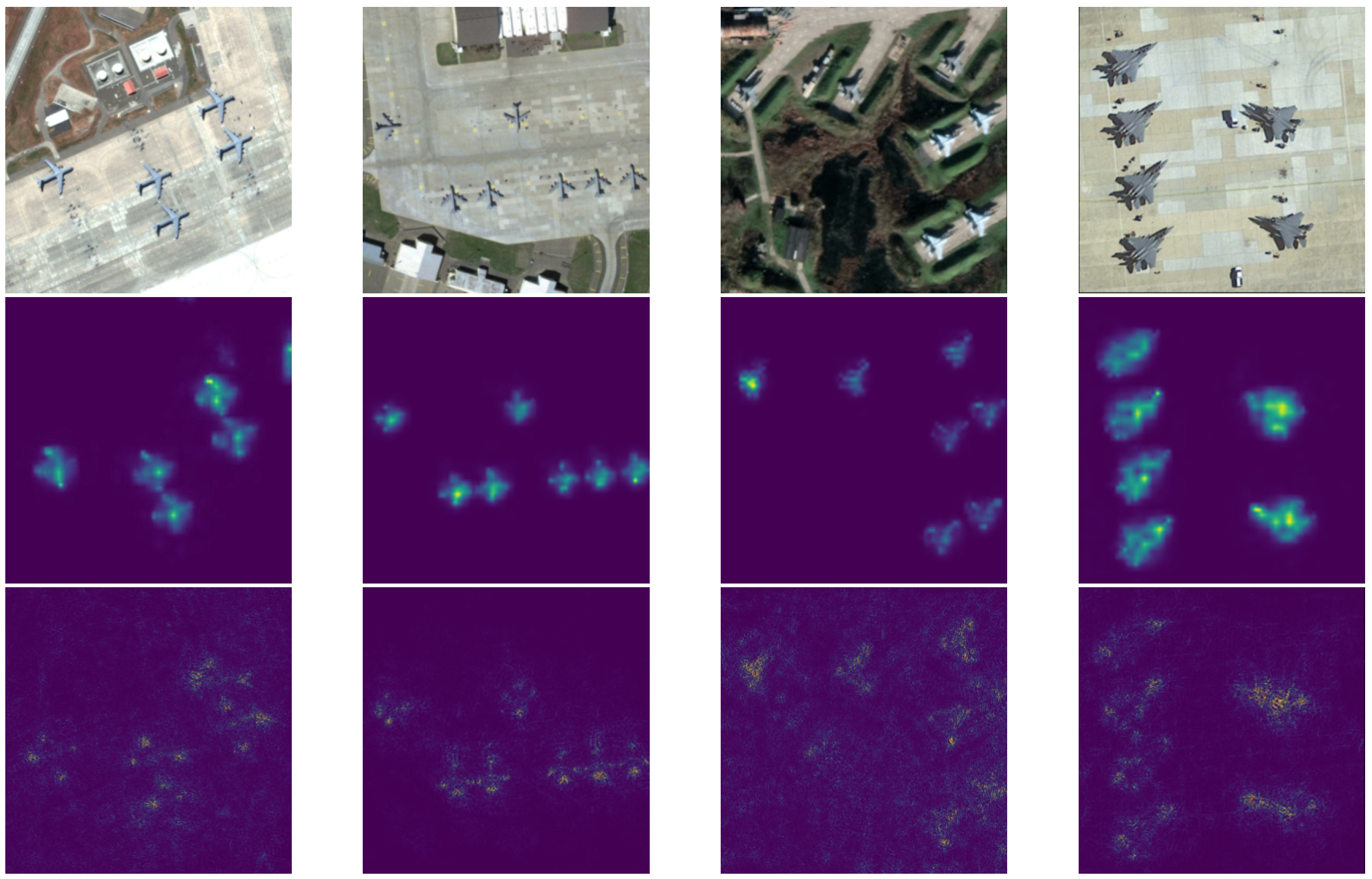

3.1. Overview

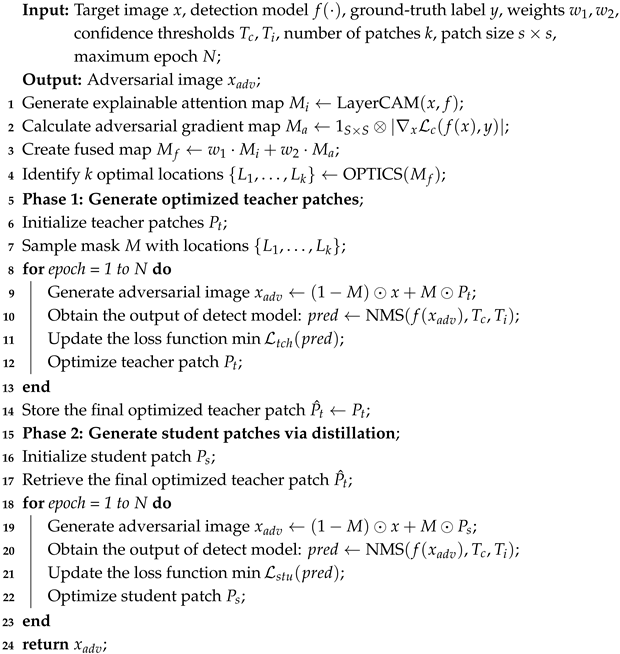

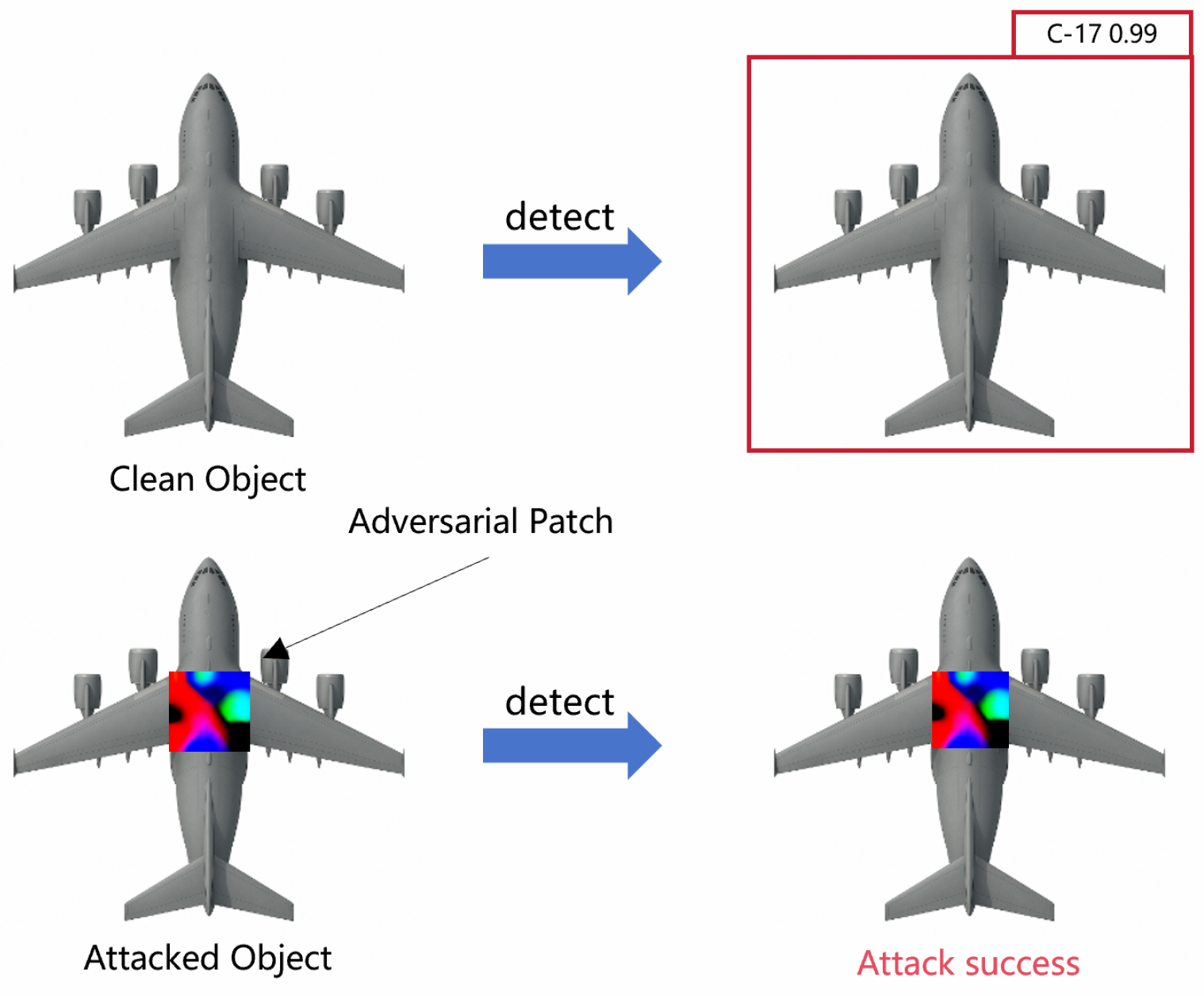

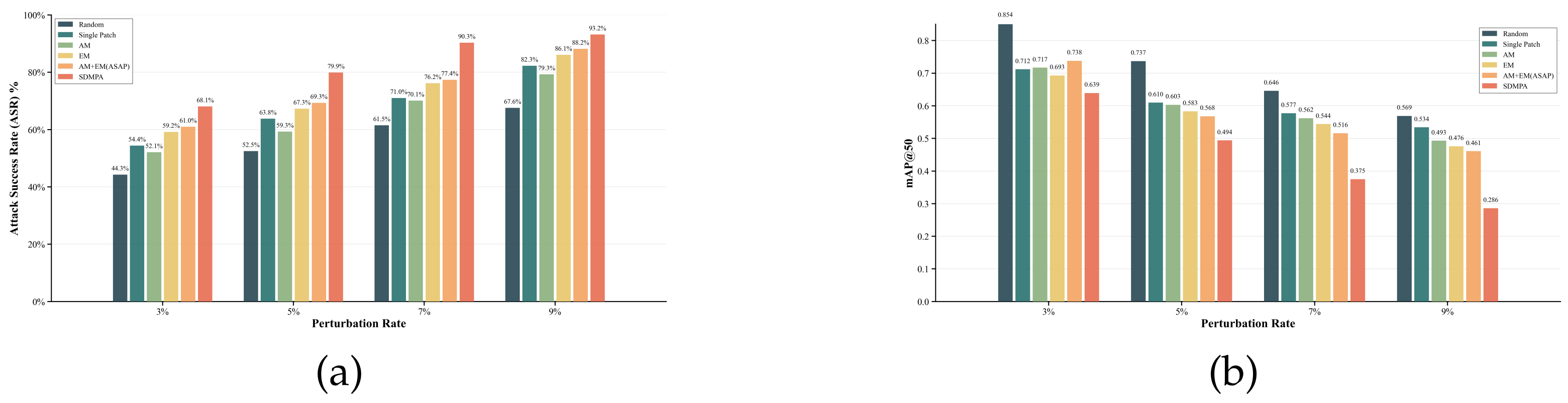

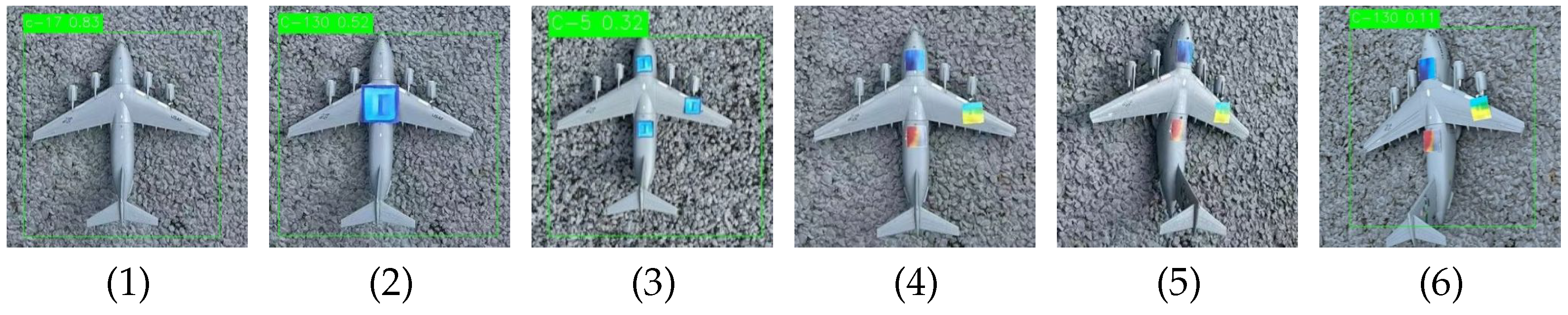

The Spatially Adaptive and Distillation-Enhanced Mini-Patch Attack (SDMPA) framework is designed to address the impracticality of deploying single, large adversarial patches against large-scale physical targets in RSI. As illustrated in Figure 2, our framework overcomes this by strategically using multiple compact patches, tackling the dual challenges of optimal patch placement and adversarial strength retention through two core components.

First, the ASAP module solves the collaborative positioning problem. Rather than selecting vulnerable points individually, ASAP identifies a complementary set of locations that produce a synergistic attack effect. It achieves this by fusing two sources of information: an internal model attention map, which provides explainable guidance on where the model looks, and an adversarial gradient map, which pinpoints adversarial weak spots within those regions. This fusion ensures that patch locations possess both global importance and local attack efficiency.

Second, after ASAP determines the optimal locations, the Distillation-based Mini-Patch Generation (DMPG) module addresses the challenge of retaining attack potency in small patches. It employs a knowledge distillation strategy where a larger, more powerful “teacher” patch is first optimized at each designated location. This teacher’s adversarial knowledge is then transferred to a compact “student” patch via a specialized distillation loss function, guiding it to inherit potent attack characteristics despite its limited spatial budget. This combination of intelligent placement and guided generation allows SDMPA to achieve high attack success rates, making it a highly practical approach for real-world applications.

We provide a detailed description of the optimization procedure of the SDMPA approach in Algorithm 1. Specifically, given initial patch parameters, we first generate the explainable attention map using LayerCAM and compute the adversarial gradient map, then fuse the two maps to identify k optimal patch locations. Next, in Phase 1, we initialize the teacher patches and sample mask M, placing the patches on the clean image x to form the adversarial image. Subsequently, we feed the adversarial image into the target detection model f, extract predictions via NMS, and use them as part of the teacher loss. Then, we perform backpropagation to update the teacher patches. We repeat these steps until the end of the training process. In Phase 2, we use the distillation mechanism to transfer adversarial knowledge from the teacher patches to the student patches: we similarly generate the adversarial image, compute the student loss (including the distillation loss), optimize the student patches, and repeat the iterations to output the final adversarial image.

Algorithm 1: SDMPA.

3.2. Adaptive Sensitivity-Aware Positioning (ASAP) Module

The Adaptive Sensitivity-Aware Positioning (ASAP) module is designed to precisely identify multiple, high-value locations on a target for our novel multi-mini-patch attack strategy. Its core idea is that the optimal attack points should simultaneously possess both semantic importance, reflecting the model’s focus during normal recognition, and adversarial sensitivity, representing its vulnerabilities under attack. To this end, the ASAP module employs a dual-perspective fusion strategy to locate these critical regions.

First, we analyze the model’s focus from a standard perspective by examining its behavior on unperturbed inputs. We use the LayerCAM [36] method to generate the model’s internal attention map. Although this map is rich in semantic information and highlights the key regions the model relies on, it has a low resolution and coarse granularity. Intuitively, perturbing these highly attended areas can most directly impact the model’s decision-making process.

However, as shown by Wu et al. [37], a model’s high-attention regions are not always equivalent to its adversarially vulnerable regions. Our multi-patch approach allows us to explore more complex attack patterns beyond targeting a single high-attention area. Adversarial perturbations can cause significant shifts in the model’s attention, and these shifted feature points represent the model’s sensitive areas from an adversarial perspective.

To capture these vulnerabilities from the adversarial perspective [38], we first generate an adversarial example by iteratively applying a small, imperceptible perturbation to the original sample. The number of iterations is empirically set to 10, with a step size of 0.001.
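As a minimal illustration of such an iterative procedure, the sketch below applies a sign-gradient ascent step, in the style of iterative FGSM, for 10 iterations with a step size of 0.001. The sign-based update, the clipping to [0, 1], and the names `iterative_sign_attack` and `grad_fn` are assumptions made for illustration; only the iteration count and the step size come from the text.

```python
from typing import Callable, List

Vector = List[float]


def sign(v: float) -> float:
    """Return -1.0, 0.0, or 1.0 according to the sign of v."""
    if v > 0:
        return 1.0
    if v < 0:
        return -1.0
    return 0.0


def iterative_sign_attack(
    x: Vector,
    grad_fn: Callable[[Vector], Vector],
    steps: int = 10,
    step_size: float = 0.001,
) -> Vector:
    """Repeatedly move each pixel by step_size along the sign of the loss gradient.

    grad_fn is a hypothetical callback returning d(loss)/d(x) for the current
    image; in practice it would come from backpropagation through the detector.
    """
    x_adv = list(x)
    for _ in range(steps):
        grad = grad_fn(x_adv)
        # Ascend the loss, then clip to the valid pixel range (assumed [0, 1]).
        x_adv = [
            min(1.0, max(0.0, xi + step_size * sign(gi)))
            for xi, gi in zip(x_adv, grad)
        ]
    return x_adv


# Toy loss sum((x - t)^2) with t = [0, 1]; its gradient is 2 * (x - t).
target = [0.0, 1.0]
toy_grad = lambda x: [2.0 * (xi - ti) for xi, ti in zip(x, target)]
x_adv = iterative_sign_attack([0.5, 0.5], toy_grad)
```

With these settings the total change per pixel is bounded by 10 × 0.001 = 0.01, which keeps the perturbation small.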

Next, we obtain the model’s attention map under adversarial conditions, which we define as the Adversarial Attention Map. This map reveals the new, sensitive regions to which the model’s attention shifts when under attack. To obtain a more fine-grained attention map, we generate it by convolving the gradient of the loss function with respect to the input image with an all-one convolution kernel of size S × S, where ⊗ denotes the convolution operation and the kernel’s side length S is equal to the patch’s side length.
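Convolving with an all-one S × S kernel amounts to summing the gradient values inside every S × S window, so each output value scores one candidate patch position. The sketch below illustrates this in plain Python; taking the absolute value of the gradient and using no padding are assumptions, and `box_sum_map` is a hypothetical helper name.

```python
from typing import List

Matrix = List[List[float]]


def box_sum_map(grad: Matrix, s: int) -> Matrix:
    """Sum |gradient| over every s x s window (all-one kernel, no padding)."""
    h, w = len(grad), len(grad[0])
    mag = [[abs(v) for v in row] for row in grad]  # assumed: gradient magnitude
    out = []
    for i in range(h - s + 1):
        row = []
        for j in range(w - s + 1):
            window_sum = sum(
                mag[i + di][j + dj] for di in range(s) for dj in range(s)
            )
            row.append(window_sum)
        out.append(row)
    return out


toy_grad = [
    [1.0, -2.0, 3.0],
    [4.0, 5.0, -6.0],
    [7.0, 8.0, 9.0],
]
sensitivity = box_sum_map(toy_grad, s=2)
```

Each entry of `sensitivity` corresponds to the top-left corner of one patch-sized window, so larger values mark windows where a patch would cover more gradient mass.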

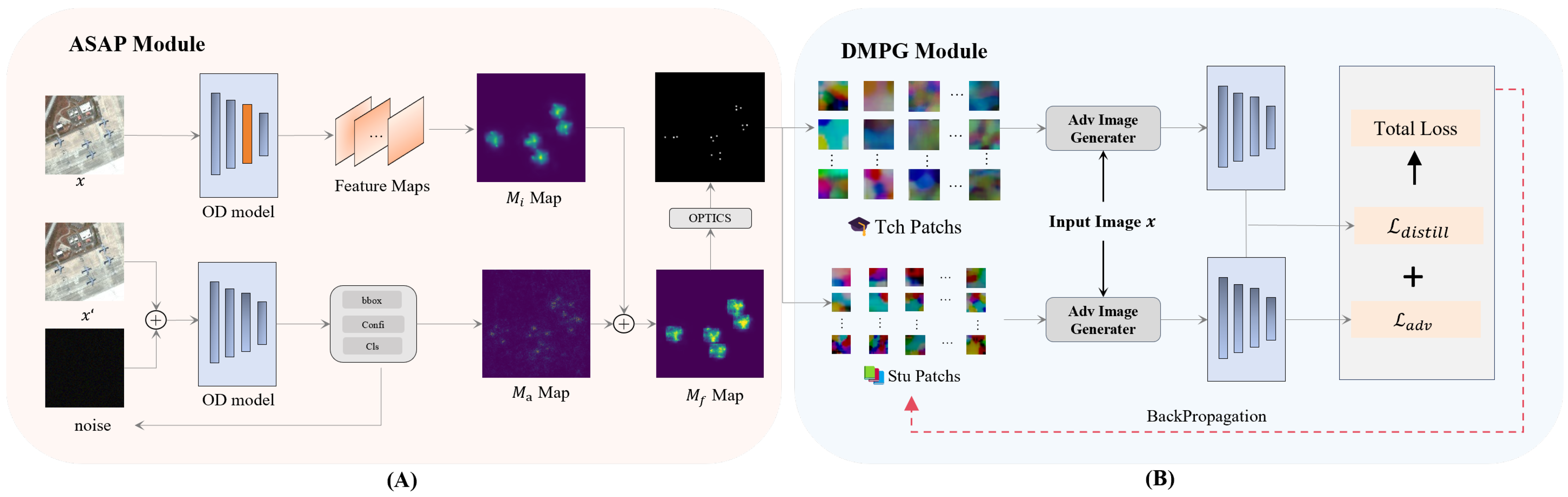

With these steps, we obtain two guidance maps that describe the model’s behavior from complementary perspectives: the internal attention map represents semantic importance, while the Adversarial Attention Map reveals adversarial vulnerability. Both maps are illustrated in Figure 3. To find optimal locations that possess both attributes, we combine them through a weighted fusion.

The two fusion weights are hyperparameters that balance the contribution of the two perspectives. We set them through preliminary experiments to provide an effective balance between the model’s standard attention and its adversarial sensitivities.
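The weighted fusion can be sketched as follows. The min-max normalization of each map, the placeholder weights of 0.5, and the function names are assumptions for illustration rather than the reported settings, and the two maps are assumed to have already been resized to the same resolution.

```python
from typing import List

Matrix = List[List[float]]


def min_max_normalize(m: Matrix) -> Matrix:
    """Rescale a map to [0, 1]; a constant map becomes all zeros."""
    flat = [v for row in m for v in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:
        return [[0.0 for _ in row] for row in m]
    return [[(v - lo) / (hi - lo) for v in row] for row in m]


def fuse_maps(
    attention: Matrix,
    adversarial: Matrix,
    w_att: float = 0.5,  # hypothetical weight, not the paper's value
    w_adv: float = 0.5,  # hypothetical weight, not the paper's value
) -> Matrix:
    """Weighted sum of the normalized attention and adversarial maps."""
    a = min_max_normalize(attention)
    b = min_max_normalize(adversarial)
    return [
        [w_att * x + w_adv * y for x, y in zip(row_a, row_b)]
        for row_a, row_b in zip(a, b)
    ]


fused = fuse_maps(
    [[0.0, 2.0], [4.0, 8.0]],
    [[10.0, 10.0], [20.0, 30.0]],
)
```

Normalizing before fusing keeps one map from dominating purely because of its numeric scale; other normalizations would serve the same purpose.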

Finally, to extract k discrete and spatially distinct attack points from the continuous fused map, we employ the Ordering Points To Identify the Clustering Structure (OPTICS) algorithm. This approach is adept at identifying high-density core regions, which we designate as the final patch locations to maximize synergistic effects. Before applying the algorithm, we convert the map into a weighted point set, where each pixel’s intensity determines its importance. To accommodate targets of varying scales, the algorithm’s key parameter max_eps is dynamically adjusted based on the pixel count within the target area.

Specifically, we first construct weighted coordinate data based on pixel intensity values. The pixel intensities are normalized to the range of 1–5 and used as repetition counts, so that high-intensity pixels are assigned higher importance weights during clustering. Then, the key parameters of the OPTICS algorithm are dynamically adjusted according to the number of valid pixels within the target region: when the number of pixels is fewer than 50, we set max_eps = 30; when the number of pixels is between 50 and 200, we set max_eps = 40; and when the number of pixels exceeds 200, we set max_eps = 50.
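The weighting and parameter selection described above can be sketched as follows. The function names, the linear rescaling to 1–5 with rounding, and the treatment of exactly 50 and 200 pixels as part of the middle range are assumptions; the thresholds and max_eps values are taken from the text.

```python
from typing import List, Tuple

Matrix = List[List[float]]
Point = Tuple[int, int]


def select_max_eps(num_pixels: int) -> int:
    """Choose max_eps from the number of valid pixels in the target region."""
    if num_pixels < 50:
        return 30
    if num_pixels <= 200:  # assumed: boundaries 50 and 200 fall in this range
        return 40
    return 50


def build_weighted_points(fused: Matrix, mask: List[List[bool]]) -> List[Point]:
    """Repeat each valid pixel 1 to 5 times according to its intensity."""
    pixels = [
        (r, c, fused[r][c])
        for r in range(len(fused))
        for c in range(len(fused[0]))
        if mask[r][c]
    ]
    if not pixels:
        return []
    values = [v for _, _, v in pixels]
    lo, hi = min(values), max(values)
    points: List[Point] = []
    for r, c, v in pixels:
        # Linear rescale to an integer repetition count in [1, 5].
        count = 1 if hi == lo else 1 + round(4 * (v - lo) / (hi - lo))
        points.extend([(r, c)] * count)
    return points


fused = [[0.0, 0.5], [1.0, 0.25]]
mask = [[True, True], [True, True]]
points = build_weighted_points(fused, mask)
max_eps = select_max_eps(num_pixels=4)
```

The resulting point list and `max_eps` value could then be passed to an OPTICS implementation such as scikit-learn's `sklearn.cluster.OPTICS`, which exposes `max_eps`, `cluster_method="xi"`, and `xi` parameters; the choice of library is an assumption.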

For cluster identification, we utilize the xi-steep extraction method, a technique designed to automatically detect clusters by analyzing steep upward and downward shifts in the OPTICS reachability plot. Since this process yields cluster assignments rather than explicit centers, we then compute the intensity-weighted centroid $c_i$ for each valid cluster i:

$$c_i = \frac{\sum_{p \in C_i} I(p)\, p}{\sum_{p \in C_i} I(p)}$$

where $C_i$ is the set of pixels assigned to cluster i, p represents the pixel coordinates, and $I(p)$ is its intensity. To select the most potent locations, we score each centroid based on its cluster’s total intensity and spatial compactness. In cases where the number of identified clusters is less than the required k, a supplementary strategy is activated. This strategy selects additional high-activation points from the remaining target area, so that a sufficient number of spatially independent attack locations is always generated.
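The weighted centroid of a cluster is the intensity-weighted mean of its pixel coordinates. A minimal sketch, with `weighted_centroid` as a hypothetical helper name and pixels given as ((row, col), intensity) pairs:

```python
from typing import List, Tuple

WeightedPixel = Tuple[Tuple[int, int], float]


def weighted_centroid(pixels: List[WeightedPixel]) -> Tuple[float, float]:
    """Return the intensity-weighted mean (row, col) of a cluster."""
    total = sum(w for _, w in pixels)
    if total <= 0:
        raise ValueError("cluster has no positive intensity")
    row = sum(p[0] * w for p, w in pixels) / total
    col = sum(p[1] * w for p, w in pixels) / total
    return (row, col)


cluster = [((0, 0), 1.0), ((2, 4), 3.0)]
center = weighted_centroid(cluster)
```

Because the second pixel carries three times the intensity of the first, the centroid lies three quarters of the way toward it.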

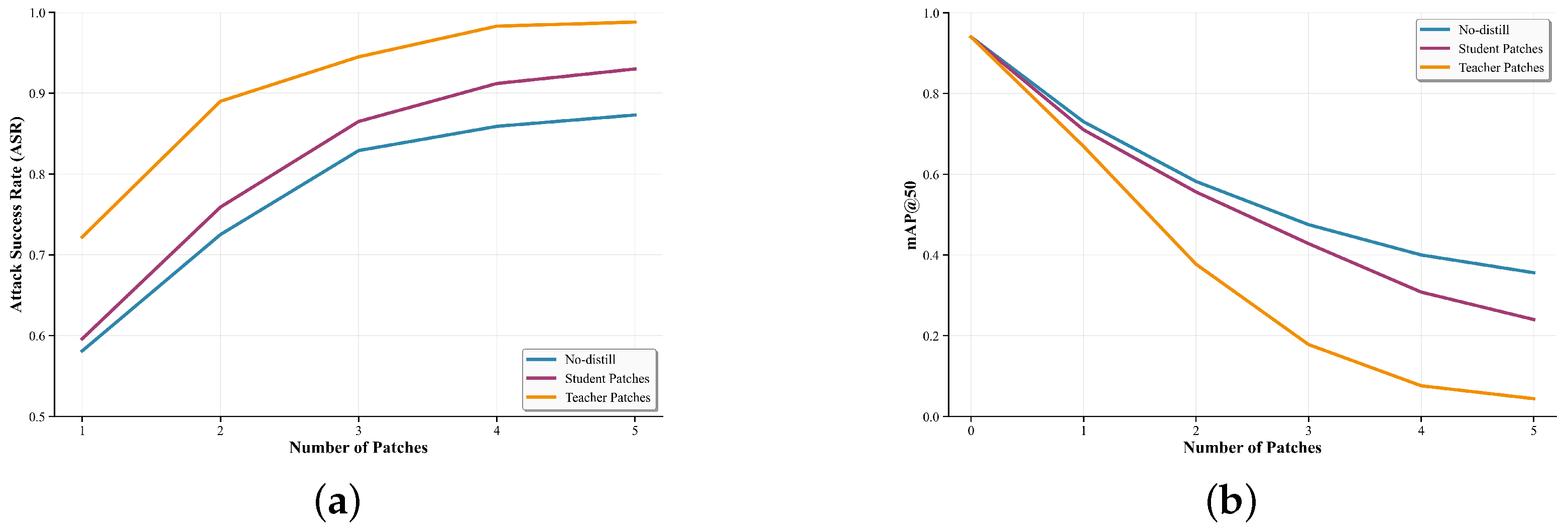

3.3. Distillation-Based Mini Patch Generation (DMPG) Module

Knowledge distillation (KD) is a well-known technique in which a lightweight student model is trained under the guidance of a more complex teacher model. Traditionally used for model compression, KD has also been applied in adversarial learning to enhance the robustness of models against adversarial perturbations. In the context of adversarial patch generation, we extend this concept to guide the optimization of compact patches. Instead of transferring classification predictions, our goal is to give compact patches better training guidance by linking them to strong adversarial patches. This allows the student patch to inherit the ability to perturb the model effectively while maintaining a small size, making it more deployable and harder to detect.

Figure 2 illustrates the core intuition behind the DMPG module: by leveraging a strong teacher patch as guidance, the student patch can obtain better attack information in the feature space, bridging the gap between weak and strong attacks while preserving compactness.

To implement this idea, we first generate larger teacher patches by optimizing the teacher patch training loss. These teacher patches serve as a high-capacity source of adversarial knowledge. We then distill the adversarial knowledge from the teacher patches into smaller student patches through a distillation loss.

3.3.1. Teacher Patch Generation

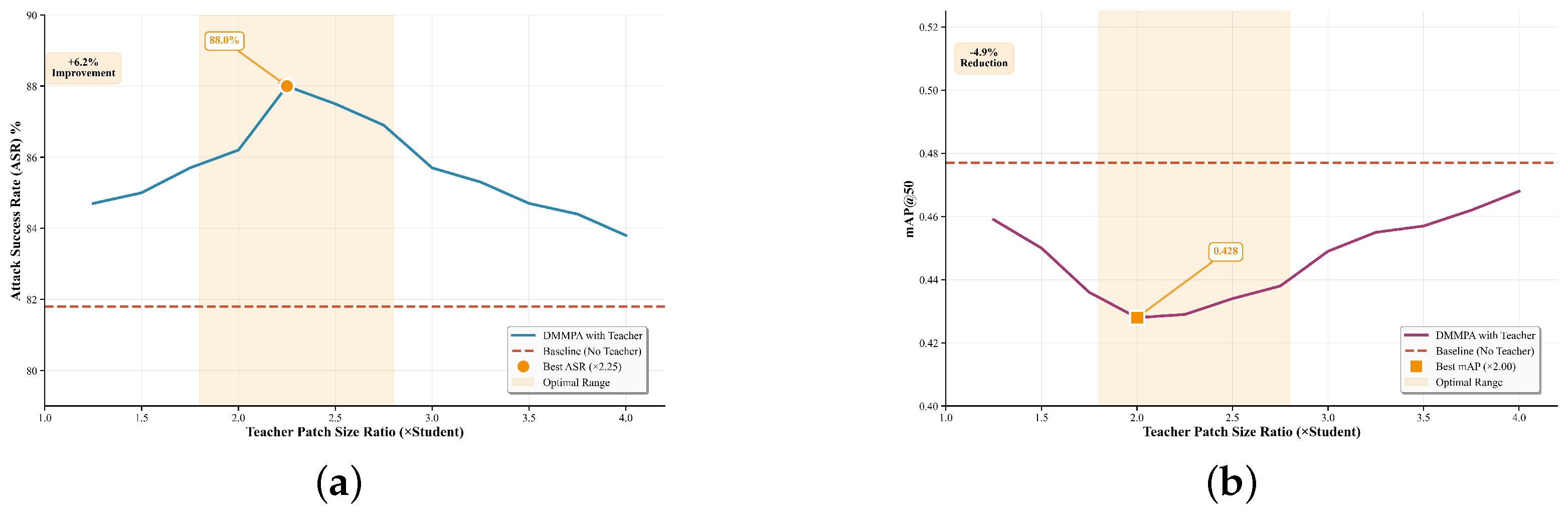

The teacher patches serve as a powerful knowledge source for the distillation process. Unlike the highly compact student patches, the teacher patches are generated with a larger size. A larger patch not only offers greater information capacity but also lies farther from the ground truth in the high-dimensional space.

However, if the teacher patch is significantly larger than the student patch, the guidance effect may be weakened due to the substantial distribution gap between their feature representations in the high-dimensional space. The precise impact of the teacher–student size ratio is analyzed in detail in Section 4.4.3. The placement of these teacher patches follows the same spatial guidance as the student patches. Specifically, for each of the optimal locations identified by the ASAP module, we concurrently optimize a larger teacher patch and a smaller student patch. The optimization of the teacher patch is driven by three loss functions, each targeting a specific aspect of its performance:

Detection Loss: This loss is designed to suppress the objectness confidence of predicted bounding boxes that correspond to ground-truth objects. To isolate relevant predictions from background noise that could hinder the optimization process, we first apply non-maximum suppression (NMS) to the detector’s raw output. Specifically, we configure the NMS with a confidence threshold of 0.001 and an IoU threshold of 0.1. This pre-processing step focuses the loss on suppressing the most salient object predictions, thereby training the patch to diminish the detector’s belief that a target is present.

The loss is computed from the objectness score of each predicted bounding box retained after NMS, indexed by i. Our goal is to directly minimize the detector’s objectness confidence for true objects. Other scoring components (e.g., classification confidence) are not considered in this loss, since reducing objectness alone often suffices for successful suppression.
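The filtering step can be illustrated with a small greedy NMS followed by an objectness-based loss. This is a plain-Python sketch of the arithmetic only: averaging the retained objectness scores is an assumption (the aggregation could equally be a sum or a maximum), the function names are hypothetical, and a real training loop would use a differentiable tensor implementation. Boxes are (x1, y1, x2, y2, objectness).

```python
from typing import List, Tuple

Det = Tuple[float, float, float, float, float]  # x1, y1, x2, y2, objectness


def iou(a, b) -> float:
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def nms(dets: List[Det], conf_thres: float = 0.001, iou_thres: float = 0.1) -> List[Det]:
    """Greedy NMS: keep the highest-scoring box, drop boxes overlapping it."""
    candidates = sorted(
        (d for d in dets if d[4] >= conf_thres), key=lambda d: d[4], reverse=True
    )
    kept: List[Det] = []
    for d in candidates:
        if all(iou(d[:4], k[:4]) <= iou_thres for k in kept):
            kept.append(d)
    return kept


def detection_loss(dets: List[Det]) -> float:
    """Mean objectness of boxes surviving NMS (assumed aggregation)."""
    kept = nms(dets)
    if not kept:
        return 0.0
    return sum(d[4] for d in kept) / len(kept)


dets = [
    (0.0, 0.0, 10.0, 10.0, 0.9),
    (1.0, 1.0, 11.0, 11.0, 0.8),    # overlaps the first box, suppressed
    (50.0, 50.0, 60.0, 60.0, 0.3),
    (0.0, 0.0, 5.0, 5.0, 0.0005),   # below the confidence threshold
]
loss = detection_loss(dets)
```

The low IoU threshold of 0.1 removes nearly all overlapping duplicates, so the loss is driven by one prediction per object.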

Total Variation Loss: This loss promotes spatial smoothness within the patch, reducing pixel-level artifacts that could make the patch detectable by human observers. This smoothness helps keep the perturbation subtle and stealthy.
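A commonly used form of total variation sums, over all pixels, the magnitude of the difference to the neighbor below and the neighbor to the right. The sketch below uses that form on a single-channel patch; whether this exact variant matches the formulation used here is an assumption, and `total_variation` is a hypothetical helper name.

```python
import math
from typing import List

Matrix = List[List[float]]


def total_variation(patch: Matrix) -> float:
    """Sum of local gradient magnitudes over a single-channel patch."""
    h, w = len(patch), len(patch[0])
    total = 0.0
    for i in range(h - 1):
        for j in range(w - 1):
            dv = patch[i + 1][j] - patch[i][j]  # vertical difference
            dh = patch[i][j + 1] - patch[i][j]  # horizontal difference
            total += math.sqrt(dv * dv + dh * dh)
    return total


flat_patch = [[0.5, 0.5], [0.5, 0.5]]
noisy_patch = [[0.0, 3.0], [4.0, 0.0]]
tv_flat = total_variation(flat_patch)
tv_noisy = total_variation(noisy_patch)
```

A uniform patch has zero total variation, while abrupt pixel-to-pixel changes increase it, which is why minimizing this term favors smooth patches.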

Saliency Loss: The saliency loss reduces the perceptibility of the perturbation by discouraging overly bright or saturated colors. By controlling the color differences between the patch and surrounding pixels, this loss helps the patch avoid detection by visual inspection.

The total loss for optimizing the teacher patch is the weighted sum of the three individual losses (detection, total variation, and saliency).
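Written out, a weighted sum of the three terms takes the following form. The symbol names are chosen here for illustration and are assumptions, as the original notation and weight values are not reproduced in this text.

```latex
\mathcal{L}_{\mathrm{teacher}}
  = \lambda_{\mathrm{det}}\,\mathcal{L}_{\mathrm{det}}
  + \lambda_{\mathrm{tv}}\,\mathcal{L}_{\mathrm{tv}}
  + \lambda_{\mathrm{sal}}\,\mathcal{L}_{\mathrm{sal}}
```

Here each $\lambda$ is a non-negative weighting coefficient for the corresponding detection, total variation, or saliency term.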

3.3.2. Student Patch Generation

Once the high-capacity teacher patches are optimized, the primary challenge shifts to generating the compact student patches. To connect the student patches with the teacher patches, we align them through the detection model’s output layer.

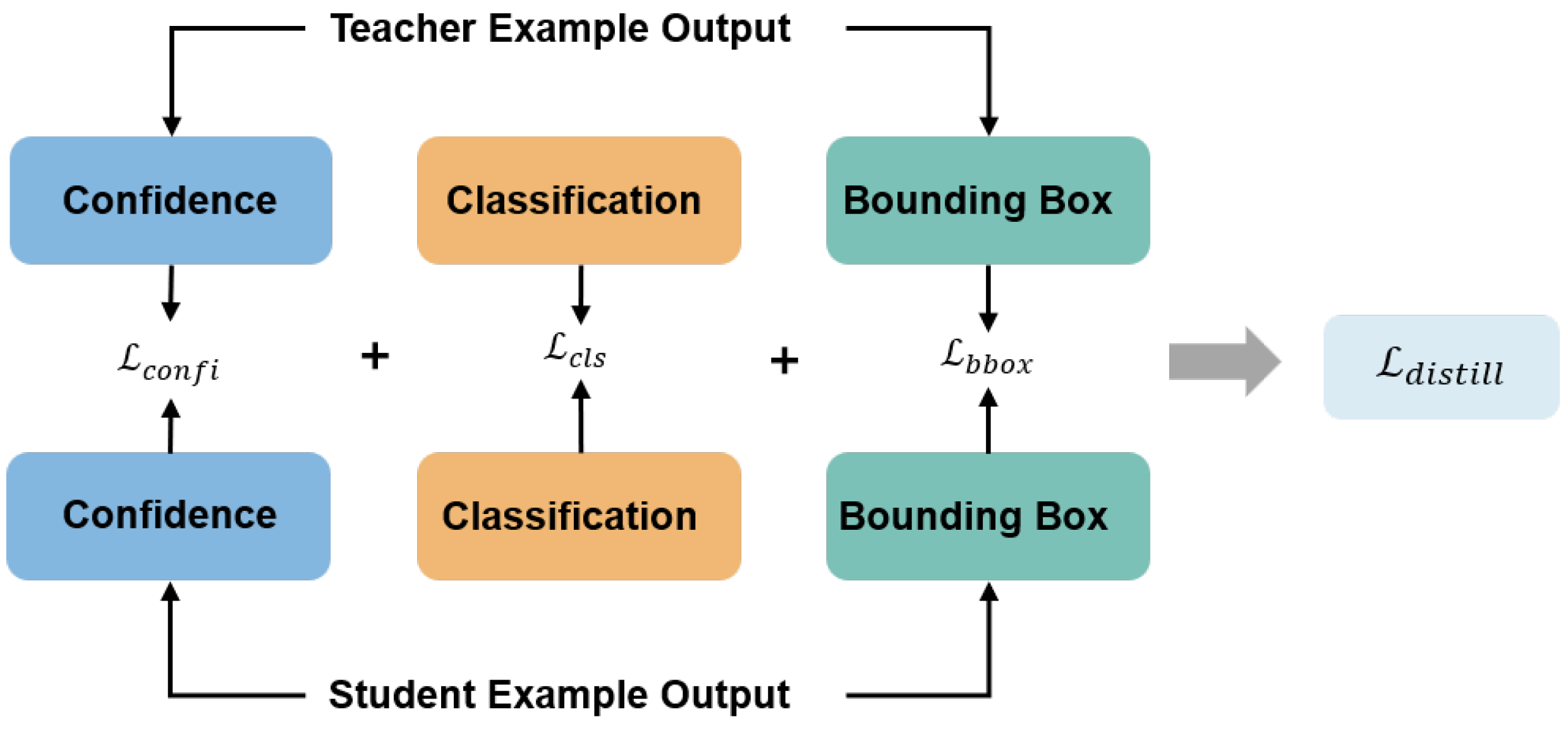

The detection model provides three types of information: confidence scores, classification probabilities, and bounding box predictions. In the standard training phase, each type of information serves a distinct optimization objective. Similarly, during the adversarial distillation phase, these three types of information play complementary roles in guiding the optimization of student patches. The components of the distillation loss are illustrated in Figure 4.

Specifically, the distillation of bounding box predictions helps student patches learn the optimal spatial directions for perturbation, ensuring maximal disruption to object localization. The distillation of classification probabilities informs the student patches which categories are more susceptible to attack, enabling more targeted adversarial effects. Finally, the distillation of confidence scores indicates which targets are easier to suppress, guiding the student patches to focus on the most vulnerable objects. The distillation loss comprises the following three components:

Confidence Alignment Loss: The primary goal of the attack is to suppress object detections. This loss function is designed to efficiently transfer this capability from the teacher to the student.

The loss handles both matched and unmatched detections, where a teacher–student pair is considered matched if the IoU of the two boxes exceeds 0.2.

For matched detection pairs (indexed by k), we minimize the L1 distance between the square roots of the confidence scores. The core objective is to amplify the otherwise faint discrepancies between the teacher and the student in the low-confidence regime. For example, confidences of 0.1 and 0.05 differ by only 0.05, but their square roots differ by roughly 0.09. This “amplification effect” provides a clearer, more potent guidance signal for the student’s optimization.

For unmatched boxes produced by the student patch (indexed by j), where the teacher detects nothing, the teacher provides an implicit “zero-confidence” target. In other words, the teacher’s silence is itself a form of guidance, indicating that these detections should not exist. Therefore, this term is not a simple regularization penalty but a direct distillation loss against the implicit zero target, essentially driving the student’s confidence for these boxes toward zero.
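The two terms of the confidence alignment can be sketched as follows, assuming the IoU-based matching has already been done. Averaging each term over its boxes and adding the two terms with equal weight are assumptions, and `confidence_alignment_loss` is a hypothetical name.

```python
import math
from typing import List, Tuple


def confidence_alignment_loss(
    matched: List[Tuple[float, float]],
    unmatched_student: List[float],
) -> float:
    """Square-root L1 alignment of confidences.

    matched: (teacher_conf, student_conf) for each matched pair k.
    unmatched_student: student confidences for boxes j with no teacher match,
    compared against an implicit teacher confidence of zero.
    """
    matched_term = 0.0
    if matched:
        matched_term = sum(
            abs(math.sqrt(t) - math.sqrt(s)) for t, s in matched
        ) / len(matched)
    unmatched_term = 0.0
    if unmatched_student:
        # |sqrt(s) - sqrt(0)| reduces to sqrt(s).
        unmatched_term = sum(math.sqrt(s) for s in unmatched_student) / len(
            unmatched_student
        )
    return matched_term + unmatched_term


loss = confidence_alignment_loss(matched=[(0.04, 0.16)], unmatched_student=[0.25])
amplified_gap = abs(math.sqrt(0.1) - math.sqrt(0.05))
```

In the low-confidence regime the square root stretches small values apart, so `amplified_gap` is larger than the raw gap of 0.05.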

Classification Alignment Loss: To ensure the student patch can induce the same misclassifications as the teacher, we align their probability distributions. Following standard practice in knowledge distillation, we employ the Kullback–Leibler (KL) divergence with a temperature scaling parameter. The temperature softens the probability distributions, allowing the student to learn from the nuanced class relationships captured by the teacher’s logits.
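Temperature-scaled KL distillation can be sketched for a single matched pair as follows. The direction KL(teacher ‖ student), the multiplication by the squared temperature (a common convention in knowledge distillation), and the default temperature of 2.0 are assumptions, as are the function names.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float) -> List[float]:
    """Numerically stable softmax of logits divided by the temperature."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kl_distillation(
    teacher_logits: List[float],
    student_logits: List[float],
    temperature: float = 2.0,  # hypothetical value
) -> float:
    """KL(teacher || student) on temperature-softened class distributions."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(
        pi * math.log(pi / max(qi, 1e-12)) for pi, qi in zip(p, q) if pi > 0
    )
    return temperature ** 2 * kl


same = kl_distillation([1.0, 2.0, 3.0], [1.0, 2.0, 3.0])
different = kl_distillation([1.0, 2.0, 3.0], [3.0, 2.0, 1.0])
```

A higher temperature flattens both distributions, so the relative ordering of the less likely classes contributes more to the loss.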

Bounding Box Alignment Loss: The purpose of this loss is to broaden the attack paths available to the student. A bounding box predicted after the teacher’s attack represents a successful adversarial state, where the model’s internal features are likely severely corrupted. Thus, the true objective of this loss is to guide the student’s predicted box locations toward the more adversarially potent directions established by the teacher, pushing the student’s predictions toward the most disruptive spatial state so that it learns more efficient attack knowledge. We employ the Generalized Intersection-over-Union (GIoU) loss to achieve this goal.

In these alignment losses, K is the number of matched bounding box pairs between the teacher and student outputs. For the k-th matched pair, the teacher and the student each contribute a confidence score, a vector of classification logits, and a set of bounding box coordinates.
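The GIoU loss over matched pairs can be sketched as follows, using the standard definition GIoU = IoU − (|C| − |A ∪ B|) / |C|, where C is the smallest box enclosing both boxes. Averaging 1 − GIoU over the K pairs is an assumption, the function names are hypothetical, and boxes are assumed to be (x1, y1, x2, y2) with positive area.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # x1, y1, x2, y2


def giou(a: Box, b: Box) -> float:
    """Generalized IoU of two boxes with positive area."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    # Smallest axis-aligned box enclosing both inputs.
    enclose = (max(a[2], b[2]) - min(a[0], b[0])) * (
        max(a[3], b[3]) - min(a[1], b[1])
    )
    return inter / union - (enclose - union) / enclose


def giou_loss(pairs: List[Tuple[Box, Box]]) -> float:
    """Mean of (1 - GIoU) over matched (teacher_box, student_box) pairs."""
    if not pairs:
        return 0.0
    return sum(1.0 - giou(t, s) for t, s in pairs) / len(pairs)


identical = giou_loss([((0.0, 0.0, 2.0, 2.0), (0.0, 0.0, 2.0, 2.0))])
shifted = giou_loss([((0.0, 0.0, 2.0, 2.0), (1.0, 1.0, 3.0, 3.0))])
```

Unlike plain IoU, GIoU still provides a useful signal when the two boxes do not overlap, because the enclosing-box term keeps shrinking as the boxes approach each other.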

The final loss used to train the student patch integrates this distillation guidance with the fundamental attack objectives, with four weighting coefficients balancing the individual terms. By jointly optimizing these components, the student patch is guided to inherit a rich and multi-faceted adversarial capability from its teacher, thereby achieving high attack efficacy despite its compact size.