1. Introduction

Shot scale refers to the perceived distance between the camera and the subject within a frame, typically classified into standardized categories such as extreme long shot, long shot, medium shot, close-up, and extreme close-up. As a fundamental element of cinematic grammar, shot scale shapes narrative rhythm, conveys emotional tone, and establishes spatial relationships [1,2]. For instance, wide framings such as the extreme long shot provide contextual or environmental information, while tighter framings such as the close-up or extreme close-up emphasize facial expressions and character emotion [3,4]. Sequences composed of varying shot scales form structured visual patterns that contribute to genre-specific stylistic conventions.

Recent advances in automatic photo curation have employed techniques based on aesthetic scoring [5,6], object detection [7], and clustering of visual similarity [8]. However, most approaches operate at the level of individual images, often overlooking the structural and rhythmic coherence across photo sequences. As a result, in the absence of sequence-level modeling, curated outputs may lack the rhythm and stylistic flow that characterize cinematic storytelling.

To address these limitations, we present a genre-conditioned sequencing framework that incorporates cinematic shot scale patterns as a proxy for visual rhythm and stylistic identity. Rather than arranging images based on visual similarity or chronological order, our system applies lightweight, genre-informed transformations to generate sequences that reflect stylistic conventions found in real films. Importantly, our goal is not to preserve narrative coherence in the cinematic sense, but to emulate genre-specific structural patterns that contribute to a sense of rhythm and style. This approach is particularly suited for contexts such as automated content creation, memory curation, or interactive authoring, where visual rhythm and aesthetic alignment often take precedence over storyline fidelity. In this study, we constrain our focus to Korean commercial films as stylistic reference sources, both to ensure cultural coherence in the modeled genre conventions and to facilitate controlled evaluation within a well-defined cinematic context rooted in Korean popular cinema.

The effectiveness of our method is evaluated through both objective and perceptual metrics. Specifically, we assess improvements in genre-aligned structural adaptation through rhythm-based embeddings and clustering, and we validate the perceptual impact via a user study measuring rhythm alignment, genre consistency, and subjective preference. These evaluation axes are designed to isolate the stylistic contributions of shot scale-based sequencing while identifying its limitations across user groups.

The proposed system (Figure 1) operates as follows. Users select a sequence of images and specify a target film genre. Each image is normalized through center cropping and then classified into one of six shot scale categories using a lightweight frame-level classifier. The resulting shot scale sequence is embedded via a genre-conditioned encoder and compared against a repository of representative shot sequences derived from real scenes in Korean commercial films, which serve as the cultural and stylistic reference point for this study. The most similar reference sequence is used to guide transformation of the input, resulting in a curated sequence with reordered images and adjusted framing to reflect genre-consistent style.

This work makes the following key contributions. First, we construct a domain-specific dataset of shot-labeled frames and genre-annotated shot sequences, exclusively curated from Korean commercial films, which serve as the primary source of genre-specific visual patterns in this study. Second, we design an efficient, mobile-ready architecture comprising a shot scale classifier and a sequence encoder optimized for on-device execution. Third, we propose a style-aware transformation algorithm that adapts input sequences to match genre exemplars through permutation and framing adjustments. Fourth, we integrate all components into an iOS-based application, enabling genre-aligned photo sequencing with interactive latency on smartphones and supporting perceptual evaluation through user studies.

The remainder of this paper is organized as follows. Section 2 reviews related work in photo curation, cinematic shot theory, and sequence-level visual understanding. Section 3 details our dataset construction and annotation procedures. Section 4 describes the architecture and training of each system component. Section 5 presents the mobile integration and execution pipeline. Section 6 reports experimental results, including classification accuracy, genre-aligned adaptation quality, mobile inference performance, and perceptual evaluations from a user study. Section 7 discusses potential applications, extensibility, and limitations. Finally, Section 8 concludes the paper and outlines directions for future research.

2. Related Work

2.1. Automated Photo Curation and Sequential Structuring

Early approaches to automatic photo curation primarily focused on selecting high-quality images based on the aesthetic properties of individual frames. Some methods ranked images using hand-crafted aesthetic metrics such as composition, contrast, and color harmony [5], while others employed learned models to estimate “interestingness” based on semantic cues such as human presence or lighting conditions [6]. These systems effectively translated subjective visual preferences into frame-level saliency scores.

To promote diversity and reduce redundancy in curated outputs, subsequent research adopted clustering and subset selection methods. Techniques based on submodular optimization [8] and deep neural discriminators [9] were developed to maximize coverage and visual uniqueness within selected image sets. Recent work has also explored metaheuristic slideshow optimization, as in Arbneshi et al. [10]. Sentiment-guided methods have been investigated as well; for example, Bum et al. [11] introduced sub-event segmentation and key photo selection based on visual sentiment features to improve highlight extraction. Commercial implementations such as Adobe Sensei [12] and Apple Photos (Apple Inc., Cupertino, CA, USA; https://support.apple.com/photos, accessed on 24 August 2025) [7] also incorporate object detection, event segmentation, and aesthetic scoring to enhance user experience.

Beyond individual image selection, several works have explored temporal and contextual coherence in photo streams. For example, Wang et al. [13] proposed identifying event-specific highlights, while Sinha et al. [14] clustered photos into semantically coherent events using metadata. Wang et al. [15] further introduced a hierarchical model that learns high-level narrative structure across albums. However, these methods rarely model stylistic progression or visual rhythm across images, and they often default to chronological ordering as a proxy for coherence.

Despite these advances, relatively few systems have addressed how visual structure, particularly shot scale patterns, can shape the pacing and stylistic consistency of photo sequences. In our earlier work [16], we heuristically applied fixed genre-inspired shot templates to photo reordering, but we did not employ learned representations or formal adaptation procedures.

The present study extends our earlier heuristic approach by introducing a learned, genre-conditioned embedding framework that captures structural patterns in shot scale sequences from real films. In contrast to prior methods focused on image-level features or metadata, this work models genre-specific visual rhythm as an organizing cue for photo sequencing.

2.2. Theoretical Background of Shot Scale in Cinematography

Shot scale is a fundamental component of cinematic grammar, encoding spatial context, narrative emphasis, and emotional tone. Classical theorists such as Katz [1], Bordwell and Thompson [2], and Monaco [17] defined categories from extreme long shot to extreme close-up based on perceived camera–subject distance (Figure 2). These framings not only structure spatial relations but also direct viewer attention. Kuhn and Westwell [18] emphasized shot scale’s semiotic role within the standardized visual language of film.

The narrative and communicative functions of shot scale have been widely discussed. Balázs [3] highlighted how close-ups can foreground subtle visual cues, while Doane [4] described them as collapsing spatial boundaries to heighten visual intimacy. Plantinga [19] further identified close-ups as enabling empathy through facial visibility. These accounts support the role of shot scale in structuring viewer attention and stylistic emphasis.

At the sequence level, shot scale distributions contribute to stylistic rhythm and genre. Benini et al. [20] showed that art films maintain consistent shot scale patterns reflecting directorial style. Canini et al. [21] linked genre-specific shot combinations to emotional tone. Cutting [22] analyzed temporal arrangements for narrative clarity, and Rooney and Bálint [23] found that close-up frequency influences theory-of-mind inference. Collectively, these studies position shot scale as both a compositional and a structural device in cinematic storytelling.

These insights inform our approach, which models shot scale sequences as proxies for genre-specific rhythm and stylistic structure.

2.3. Structure and Dynamics of Shot Sequences

Accurate shot boundary detection is essential for structuring shot sequences. Early approaches relied on low-level visual features such as color histograms and edge differences [24,25], while TRECVid [26] established benchmarks for comparative evaluation. Recent deep learning models such as TransNet V2 [27], AutoShot [28], and depthwise-3D architectures with visual attention [29] achieve real-time performance, especially for short-form content. In parallel, comprehensive reviews such as Kar et al. [30] have highlighted persistent challenges, including motion artifacts and illumination variance, and discussed trade-offs between multi-feature accuracy and computational efficiency.

Scene segmentation has also progressed from visual clustering [31] to multimodal fusion techniques that incorporate audio, visual, and textual cues [32], supported by datasets such as MovieNet [33] and MovieCuts [34]. More recent approaches incorporate higher-level semantics beyond low-level similarity. For example, Kovarski et al. [35] emphasized character-focused foreground modeling, Tan et al. [36] leveraged character reappearances for scene boundary detection, and Tseng et al. [37] applied a transformer-based linker model to identify temporally coherent shot clusters.

Beyond boundaries, research has focused on classifying shot-level attributes such as motion, framing, and angle. Petrogianni et al. [38] evaluated temporal models for camera movement classification, and Savardi et al. [39] used convolutional neural networks (CNNs) to infer camera angle and height from single frames. Other frameworks combine field size and motion semantics to model shots as semantically rich units that shape sequence rhythm [40,41,42].

Shot timing and order further encode narrative pacing. Cutting et al. [43] showed that average shot length has decreased over the decades, correlating with faster rhythms. Redfern [44] statistically modeled shot length distributions across genres, while Cinemetrics [45] enabled large-scale rhythm analysis. Zhang and Jhala [41] categorized mid-scale transitions into rhythmic patterns, highlighting how sequence dynamics influence emotional tone.

These insights inform our work, which models shot scale sequences as a rhythm-aware structuring mechanism for genre-conditioned photo curation.

2.4. Shot Scale Classification from Visual Data

Shot scale classification is typically framed as a multi-class image recognition task that takes a single frame, or a representative frame of a shot, as input. Early methods relied on handcrafted features such as histogram of oriented gradients (HOG) descriptors combined with support vector machines [46,47]. With the rise of CNNs, models such as VGG and ResNet were applied to 7-class taxonomies [48], and semantic segmentation was used to enhance object-context understanding [49]. Ensemble models [50] addressed inter-class confusion, especially between closely related categories.

Efficiency and deployability have become key concerns. Lightweight networks such as MobileNet [40] and AlexNet [51] reduce inference cost while maintaining acceptable accuracy. LWSRNet uses shallow layers to capture spatial cues, and VH-Pooling [52] improves location generalization. These models aim for real-time performance on mobile and embedded devices. Recent work has also emphasized educational applications: Bai and Li [53] developed a YOLOv5-based shot scale recognition model tailored for film and television pedagogy, demonstrating high classification accuracy and improved annotation efficiency in classroom settings. Zheng et al. [54] proposed a foreground-aware alternative using integrated masking to enhance subject focus, but at the cost of architectural complexity. In contrast, our system adopts MobileNetV3-Small to balance accuracy and speed while remaining lightweight enough for on-device deployment.

Temporal modeling has been introduced to enhance prediction consistency. Savardi et al. [48] applied temporal smoothing across frames, while SGNet [55] jointly infers shot scale and motion from short video clips. These approaches treat shot scale as a temporal feature embedded in cinematic rhythm. Our system, by contrast, retains a frame-based classification structure without frame-to-frame smoothing and instead models stylistic progression and rhythm through downstream shot scale sequencing.

Recent work increasingly treats shot scale as part of a broader set of cinematographic attributes. Multi-task models jointly learn composition and shot type [56], and datasets such as FullShots [40] combine scale and motion labels. Our framework, however, isolates shot scale as an organizing signal and models sequence-level patterns as genre-aligned visual rhythms, offering a complementary perspective on structured photo curation.

3. Dataset Construction

This study employs two types of learning models, each requiring a dedicated dataset. The first is a single-frame classification model designed to predict the shot scale from a single image. To support this model, we constructed a dataset composed of shot-level representative frames. The second is a sequence embedding model that operates on temporally ordered shot scale sequences with genre labels. For this purpose, we created a separate dataset in which each sequence corresponds to a scene and is encoded as a series of integers representing shot scales.

All data were sourced from 106 Korean feature films selected to ensure diversity across genres. From each film, narratively significant scenes were manually identified and extracted. These scenes were then segmented into individual shots based on visual discontinuities such as camera angle, framing, or spatial transition. The two datasets were constructed in parallel from this shared shot-level segmentation.

The image classification dataset was constructed by extracting a single representative frame from each shot. Specifically, the center-most frame of each shot was selected to minimize intra-shot redundancy while preserving representativeness. This yielded a total of 25,986 unique frames, each corresponding to a distinct shot. All frames were stored with original resolution and color fidelity to retain visual quality.

Each frame was manually labeled with one of six predefined shot scale categories: Extreme Long Shot (XLS), Long Shot (LS), Full Shot (FS), Medium Shot (MS), Close-Up (CU), and Extreme Close-Up (ECU). These were mapped to integer labels from 1 to 6. Annotation was conducted by trained undergraduate and graduate students majoring in film studies, following standardized criteria based on subject scale, composition, and framing. Ambiguous cases were resolved through cross-validation and consensus.

The sequence embedding dataset consists of shot scale sequences extracted at the scene level. Each sequence comprises a temporally ordered list of shot scales encoded as integers from 1 to 6, corresponding to the same class definitions used in frame annotation. In addition, each sequence is tagged with the genre label of the film to which the scene belongs. Scene boundaries were defined according to narrative segmentation principles such as temporal or spatial discontinuity. Sequence lengths vary across scenes but maintain the original shot order.
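As an illustration of the record format described above, the following sketch maps per-shot annotations to the integer codes 1 to 6 while keeping the original shot order. The `SceneRecord` type, its field names, and the example genre string are hypothetical and introduced only for illustration; the abbreviation-to-integer mapping follows the class definitions in the text.

```python
from dataclasses import dataclass
from typing import List

# Class definitions from the text: XLS=1, LS=2, FS=3, MS=4, CU=5, ECU=6.
SHOT_SCALE_TO_INT = {"XLS": 1, "LS": 2, "FS": 3, "MS": 4, "CU": 5, "ECU": 6}


@dataclass
class SceneRecord:  # hypothetical container, not the authors' storage format
    genre: str          # genre label of the film the scene belongs to
    shots: List[int]    # temporally ordered shot scales, each in 1..6


def encode_scene(labels: List[str], genre: str) -> SceneRecord:
    """Convert per-shot abbreviations to integer codes, preserving shot order."""
    return SceneRecord(genre=genre, shots=[SHOT_SCALE_TO_INT[lab] for lab in labels])


record = encode_scene(["XLS", "LS", "MS", "CU", "MS", "ECU"], genre="horror")
print(record.shots)  # [1, 2, 4, 5, 4, 6]
```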

In total, the dataset comprises 25,986 labeled frames and 1117 shot sequences. The shot scale distribution is inherently imbalanced, with MSs and CUs appearing more frequently. The average number of shots per sequence is approximately 23.3. Detailed statistics, including class distributions and genre-wise sequence counts, are presented in Table 1 and Table 2.

4. Methodology

This section details the integrated computational framework that underpins the proposed mobile photo curation system. Unlike prior approaches that typically relied on frame-level classification in isolation, our method consolidates multiple modules into a unified end-to-end pipeline that reorganizes user-selected photo sequences according to genre-specific stylistic patterns. The pipeline proceeds sequentially from low-level shot scale recognition to high-level genre-conditioned sequence adaptation, ensuring that both local framing cues and global rhythmic structures are jointly captured.

The framework consists of four interdependent components: (1) a lightweight single-frame shot scale classifier, (2) a genre-conditioned sequence encoder, (3) a latent-space clustering module, and (4) a similarity-driven sequence adaptation mechanism. Together, these modules form a coherent network that transforms raw image sequences into genre-aligned outputs. The classifier leverages MobileNetV3-Small to balance accuracy and computational efficiency on mobile hardware. The encoder employs a conditional variational autoencoder (cVAE) architecture to embed shot scale sequences in a manner sensitive to genre-specific rhythm—extending prior sequence models by explicitly conditioning on genre labels. The clustering module organizes these embeddings into representative genre-specific medoids, enabling retrieval of concrete reference sequences rather than relying on abstract centroids alone. Finally, the adaptation mechanism applies lightweight transformation operations (permutation and crop-based reframing) to minimize the gap between an input sequence and its nearest genre reference, providing a practical means of aligning structural style under mobile constraints.

By integrating these four components, the system constitutes not merely a collection of models but a novel, unified pipeline for genre-aware sequencing. This integration addresses key limitations of earlier work—namely, the absence of sequence-level modeling, the neglect of genre conditioning, and the lack of deployability on resource-constrained devices. Each module contributes a specific improvement, and their combined design ensures that the final outputs achieve stylistic alignment while remaining computationally lightweight.

Sections 4.1, 4.2, 4.3 and 4.4 describe each module in detail, while their runtime integration and deployment strategies are discussed in Section 5.

4.1. Single-Frame Shot Scale Classifier

We designed a lightweight classification model to predict the shot scale from a single image. The primary design objective was to ensure efficient inference and minimal memory footprint on mobile devices while preserving the structural cues necessary for shot scale recognition. To this end, we selected MobileNetV3-Small as the backbone architecture, which is known for its favorable trade-off between performance and computational cost. In particular, its squeeze-and-excitation (SE) modules and h-swish activation functions enhance the extraction of local contrast and textural cues, which are critical for distinguishing relative subject size and framing. This combination makes MobileNetV3-Small suitable not only for mobile deployment but also for the specific demands of shot scale classification.

The model takes a 224 × 224 RGB image as input, matching the native configuration of MobileNetV3 while also minimizing latency on mobile devices. Each input frame is obtained from the original movie still by cropping the center region, resizing it to the input resolution, and applying per-channel normalization based on ImageNet statistics (mean: [0.485, 0.456, 0.406]; std: [0.229, 0.224, 0.225]). The output is a categorical label corresponding to one of six predefined shot scales, indexed from 1 to 6 in ascending order from XLS to ECU.
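The preprocessing arithmetic can be sketched in plain Python. This is a minimal sketch under one stated assumption: "center region" is taken to mean the largest centered square, which is then resized to 224 × 224. The paper does not specify the crop shape, and the helper names below are hypothetical. The normalization constants are those given in the text.

```python
from typing import Tuple

IMAGENET_MEAN = (0.485, 0.456, 0.406)
IMAGENET_STD = (0.229, 0.224, 0.225)
INPUT_SIZE = 224


def center_square_box(width: int, height: int) -> Tuple[int, int, int, int]:
    """(left, top, right, bottom) of the largest centered square (assumed crop shape)."""
    side = min(width, height)
    left = (width - side) // 2
    top = (height - side) // 2
    return left, top, left + side, top + side


def normalize_pixel(rgb: Tuple[int, int, int]) -> Tuple[float, ...]:
    """Scale 8-bit RGB to [0, 1], then apply per-channel ImageNet normalization."""
    return tuple((c / 255.0 - m) / s for c, m, s in zip(rgb, IMAGENET_MEAN, IMAGENET_STD))


print(center_square_box(1920, 1080))  # (420, 0, 1500, 1080)
```

With Pillow, the box would typically be applied as `img.crop(box).resize((INPUT_SIZE, INPUT_SIZE))` before normalization; a resize-then-center-crop order is an equally plausible reading of the text.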

As shown in Figure 3, the core structure of the classifier follows the standard MobileNetV3-Small configuration, with the final classification head modified to produce six output logits. A softmax activation layer is applied to yield a probability distribution over the six shot scale classes. The model was initialized with ImageNet-pretrained weights and fine-tuned end-to-end on our custom dataset.
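The step from six logits to a shot scale label can be sketched as follows. This is an illustrative, framework-free version, not the deployed code. It shows a numerically stable softmax followed by the 1-based label convention used throughout the paper (index 0 of the head maps to XLS = 1).

```python
import math
from typing import List, Tuple

SHOT_SCALES = ("XLS", "LS", "FS", "MS", "CU", "ECU")  # label 1..6 in this order


def softmax(logits: List[float]) -> List[float]:
    m = max(logits)  # subtract the max logit for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict_label(logits: List[float]) -> Tuple[int, str, float]:
    """Return (1-based label, abbreviation, probability) of the most likely class."""
    probs = softmax(logits)
    idx = max(range(len(probs)), key=probs.__getitem__)
    return idx + 1, SHOT_SCALES[idx], probs[idx]


label, name, p = predict_label([0.1, 0.3, 0.2, 2.5, 1.0, -0.7])
print(label, name)  # 4 MS
```

Because softmax is monotonic, the argmax of the probabilities equals the argmax of the raw logits; the probabilities are useful only when a confidence value is needed.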

Training was performed using the cross-entropy loss function with the Adam optimizer. The initial learning rate was set to 1 × 10⁻⁴, and training proceeded for 50 epochs. To mitigate class imbalance, each mini-batch was constructed to maintain a uniform distribution across classes. Data augmentation was restricted to operations that preserve the subject’s relative scale, such as horizontal flipping and color jittering (e.g., brightness variation). In contrast, geometric transformations such as rotation or scaling were intentionally excluded, as they would distort the subject-to-frame ratio or the subject’s relative location within the frame, which define the shot scale categories, and would thus reduce the semantic validity of the labels.
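One way to build mini-batches with a uniform class distribution is sketched below. Two details are assumptions not stated in the paper: each batch draws the same number of indices per class *with replacement*, and the batch size must be a multiple of six. The function name `balanced_batches` is hypothetical.

```python
import random
from collections import defaultdict
from typing import Dict, Iterator, List, Sequence


def balanced_batches(labels: Sequence[int], batch_size: int, num_batches: int,
                     seed: int = 0) -> Iterator[List[int]]:
    """Yield lists of dataset indices with an equal count from every class."""
    by_class: Dict[int, List[int]] = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    classes = sorted(by_class)
    if batch_size % len(classes) != 0:
        raise ValueError("batch_size must be a multiple of the number of classes")
    per_class = batch_size // len(classes)
    rng = random.Random(seed)
    for _ in range(num_batches):
        batch: List[int] = []
        for c in classes:
            # Sampling with replacement lets rare classes (e.g., ECU) fill their quota.
            batch.extend(rng.choices(by_class[c], k=per_class))
        rng.shuffle(batch)  # avoid batches ordered by class
        yield batch
```

In PyTorch, `torch.utils.data.WeightedRandomSampler` with inverse-frequency weights is a common alternative. It balances classes only in expectation rather than exactly per batch.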

4.2. Genre-Conditioned Shot Sequence Embedding

To embed variable-length shot scale sequences into fixed-dimensional vectors, we implemented a genre-conditioned cVAE. Each input sequence consists of integers ranging from 1 to 6, denoting discrete shot scales. A genre label accompanies every sequence and is treated as a conditioning signal. The model maps the input sequence and its associated genre to a compact latent representation that captures stylistic properties in a genre-sensitive manner. Unlike prior sequence embedding methods based on plain variational autoencoders (VAEs), our design explicitly incorporates genre conditioning at every time step, allowing the latent representation to reflect not only local shot transitions but also higher-level stylistic rhythms specific to cinematic genres.

As illustrated in Figure 4, the proposed encoder architecture consists of four main components. First, the integer-based shot scale sequence is passed through an embedding layer to produce a dense vector representation. Second, the genre label is independently embedded and concatenated to each time step of the sequence input to condition the entire encoding process. Third, the conditioned sequence is processed by a unidirectional long short-term memory (LSTM) encoder, chosen over CNN-based alternatives to better capture the sequential and rhythmic properties of shot scale patterns. Fourth, the final hidden state of the LSTM is concatenated with the genre embedding and passed through two linear layers to estimate the mean and log-variance of the latent distribution. The decoder, which is used only during training, reconstructs the input sequence from a latent sample and the genre embedding.

The model employs the standard reparameterization trick to enable gradient-based optimization through the stochastic latent space. The training loss consists of two terms: a reconstruction loss measured by cross-entropy between the original and reconstructed sequences, and a Kullback–Leibler divergence that regularizes the latent distribution toward a standard normal prior. These two terms are combined using equal weighting.
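Written out, the objective described above takes the standard cVAE form. The notation is introduced here for illustration and assumes a diagonal Gaussian posterior, as implied by the mean and log-variance heads. Here $s = (s_1, \dots, s_T)$ is a shot scale sequence of length $T$, $g$ its genre, $d$ the latent dimension, and equal weighting corresponds to a coefficient of 1 on both terms:

```latex
\begin{aligned}
\mathbf{z} &= \boldsymbol{\mu} + \boldsymbol{\sigma} \odot \boldsymbol{\epsilon},
  \qquad \boldsymbol{\epsilon} \sim \mathcal{N}(\mathbf{0}, \mathbf{I}),
  \qquad \boldsymbol{\sigma} = \exp\!\left(\tfrac{1}{2}\log \boldsymbol{\sigma}^{2}\right) \\
\mathcal{L}(s, g) &= -\sum_{t=1}^{T} \log p_{\theta}(s_t \mid \mathbf{z}, g)
  + D_{\mathrm{KL}}\!\left(q_{\phi}(\mathbf{z} \mid s, g) \,\middle\|\, \mathcal{N}(\mathbf{0}, \mathbf{I})\right) \\
D_{\mathrm{KL}} &= -\frac{1}{2} \sum_{j=1}^{d} \left(1 + \log \sigma_j^{2} - \mu_j^{2} - \sigma_j^{2}\right)
\end{aligned}
```

The first term is the token-level cross-entropy over the $T$ real (non-padded) positions. The closed-form KL term follows from the Gaussian posterior and the standard normal prior.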

To handle sequences of varying lengths, input sequences are padded to a fixed maximum length prior to batching. We use the pad_sequence function from PyTorch (version 1.11.0) to construct mini-batches, and the padding index is excluded from loss computation. The same preprocessing is applied during inference, with the effective sequence length determined dynamically for each input. This formulation ensures that the embedding model is not merely a conventional VAE but a genre-aware sequence encoder, tailored to capture temporal dynamics and stylistic regularities in cinematic shot structures.
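The padding-and-masking logic can be illustrated without a framework. This sketch assumes the padding index is 0, which is free because shot scales occupy 1 to 6. The paper does not state the actual index, and the helper names are hypothetical. It pads a batch to its longest member and averages per-token losses over real positions only.

```python
from typing import List, Sequence, Tuple

PAD = 0  # assumed padding index; not specified in the paper


def pad_batch(seqs: Sequence[Sequence[int]], pad: int = PAD) -> Tuple[List[List[int]], List[int]]:
    """Right-pad every sequence to the batch maximum and return the true lengths."""
    lengths = [len(s) for s in seqs]
    max_len = max(lengths)
    padded = [list(s) + [pad] * (max_len - len(s)) for s in seqs]
    return padded, lengths


def masked_mean(token_losses: List[List[float]], padded: List[List[int]], pad: int = PAD) -> float:
    """Average per-token losses, skipping positions whose target is the padding index."""
    kept = [loss for row_loss, row_tgt in zip(token_losses, padded)
            for loss, tgt in zip(row_loss, row_tgt) if tgt != pad]
    return sum(kept) / len(kept)


padded, lengths = pad_batch([[1, 2, 3], [4, 5]])
print(padded, lengths)  # [[1, 2, 3], [4, 5, 0]] [3, 2]
```

In PyTorch, the same pattern is usually expressed with `torch.nn.utils.rnn.pad_sequence(seqs, batch_first=True, padding_value=...)` and the `ignore_index` argument of `torch.nn.CrossEntropyLoss`.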

4.3. Latent-Space Clustering of Shot Sequences

The latent representations obtained from the cVAE encoder capture genre-sensitive shot sequence structures in a compact vector format. Clustering these representations enables the identification of prototypical shot patterns that recur within each genre. This section describes the procedure used to summarize intra-genre sequence structures and extract representative sequences for downstream reference. In contrast to earlier approaches that often relied on heuristic templates, our method explicitly learns genre-specific distributions and selects representative sequences that reflect real cinematic structures.

We adopted a Gaussian Mixture Model (GMM) as the clustering algorithm. A GMM models each cluster as a multivariate Gaussian distribution and supports soft assignment of data points, which is advantageous in latent spaces where clusters may not be well separated. Importantly, the number of clusters was not predefined but determined via Bayesian Information Criterion (BIC)-based model selection, so that the resulting structure reflects genre-specific variability rather than arbitrary design choices.

Clustering was performed separately for each genre. All sequences belonging to a given genre were encoded into latent vectors using the trained cVAE encoder, and a GMM was fitted to this genre-specific vector set. The number of components was selected by comparing BIC values for K ∈ {2, …, 7}, and a distinct GMM was trained per genre. The clustering relied exclusively on latent vectors, without access to raw shot sequences or genre labels during fitting.
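The BIC comparison over K ∈ {2, …, 7} can be sketched as follows. This sketch assumes full-covariance Gaussians, since the paper does not state the covariance type. BIC is computed as p·ln n − 2·ln L, where p counts the free parameters and ln L is the total log-likelihood of the genre's n latent vectors of dimension d. With scikit-learn, the equivalent value is returned by `GaussianMixture(n_components=k, covariance_type="full").fit(X).bic(X)`. The log-likelihood values in the usage line are made up for illustration.

```python
import math
from typing import Dict, Tuple


def gmm_num_params(k: int, d: int) -> int:
    """Free parameters of a full-covariance GMM: means + covariances + mixing weights."""
    return k * d + k * d * (d + 1) // 2 + (k - 1)


def bic(log_likelihood: float, k: int, d: int, n: int) -> float:
    """Bayesian Information Criterion; lower is better."""
    return gmm_num_params(k, d) * math.log(n) - 2.0 * log_likelihood


def select_k(loglik_by_k: Dict[int, float], d: int, n: int) -> Tuple[int, Dict[int, float]]:
    """Pick the component count with the lowest BIC for one genre."""
    scores = {k: bic(ll, k, d, n) for k, ll in loglik_by_k.items()}
    return min(scores, key=scores.get), scores


# Illustrative (made-up) fitted log-likelihoods for one genre, d = 2, n = 100.
best_k, scores = select_k({2: -300.0, 3: -250.0, 4: -245.0}, d=2, n=100)
print(best_k)  # 3
```

The penalty term grows with K, so a larger mixture is chosen only when its likelihood gain outweighs the added parameters. This is how BIC avoids fixing the cluster count by hand.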

Each cluster center was defined as the mean vector (centroid) produced by the GMM. Since centroids do not correspond to real sequences, we additionally identified a representative sequence for each cluster using a medoid selection approach. The medoid is the real sequence whose latent vector is closest to the cluster centroid in Euclidean space, and serves as a concrete reference for later comparison, retrieval, and adaptation tasks. This medoid-based strategy ensures that subsequent adaptation is guided by authentic shot sequences drawn from actual films, rather than by abstract centroids alone. As a result, the clustering module provides not only a statistical summary of stylistic variation but also a practical mechanism that enables downstream modules to align user inputs with realistic genre exemplars.
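The medoid selection rule described above can be sketched as follows: for each cluster, pick the real sequence whose latent vector is nearest, in Euclidean distance, to the GMM mean. Hard assignments are assumed to come from the fitted GMM (e.g., its most probable component). The function name is hypothetical. Note that this "closest to centroid" definition follows the text and differs from the classical medoid, which minimizes the summed distance to all other cluster members.

```python
import math
from typing import Dict, List, Sequence


def cluster_medoids(latents: Sequence[Sequence[float]],
                    assignments: Sequence[int],
                    centroids: Sequence[Sequence[float]]) -> Dict[int, int]:
    """Map cluster id -> index of the member sequence closest to that cluster's mean."""
    medoids: Dict[int, int] = {}
    for c, mu in enumerate(centroids):
        members: List[int] = [i for i, a in enumerate(assignments) if a == c]
        if members:  # a component may receive no hard assignments
            medoids[c] = min(members, key=lambda i: math.dist(latents[i], mu))
    return medoids


latents = [[0.0, 0.0], [0.2, 0.0], [5.0, 5.0], [4.9, 5.2]]
print(cluster_medoids(latents, [0, 0, 1, 1], [[0.15, 0.0], [5.0, 5.0]]))  # {0: 1, 1: 2}
```

The returned indices point back to concrete film scenes. Later adaptation steps can therefore retrieve the actual shot sequence, rather than a synthetic average.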

4.4. Similarity-Driven Adaptation of Shot Sequences

When adjusting an input shot sequence to better align with a target genre’s style, two strategies are available. One approach is to optimize the sequence toward the cluster centroid, thereby enforcing alignment with the most typical pattern. The other is to match the input to the most similar actual sequence within the target cluster. While the former offers improved regularity and computational efficiency, the latter enables richer variation and facilitates the reuse of metadata from real sequences (e.g., associated audio or context). We adopt the latter strategy and perform sequence adaptation by referencing the closest real sequence (medoid or nearest neighbor) within the target cluster. This design ensures that adaptation is guided by authentic cinematic structures rather than abstract statistical averages, thereby increasing stylistic plausibility.

Similarity is computed in latent space using Euclidean distance between the mean vectors generated by the cVAE encoder. The comparison is made against a fixed set of latent vectors corresponding to stored medoid or candidate sequences. All embeddings are generated under consistent encoding conditions, and the comparison does not consider sequence length or label distribution explicitly.

Two types of transformation operations are permitted for modifying the input sequence. The first is a permutation of shot order, implemented as a restricted partial swap. This operation is motivated by the fact that shot scale rhythm depends not only on the distribution of scales but also on their temporal ordering; allowing local swaps provides a lightweight means of restoring rhythm without destroying coherence. The second operation is a one-step increment of the shot scale label for selected elements. In the context of photo curation, this corresponds to simulating a closer framing by cropping the associated image. This crop-based operation directly addresses framing mismatches and enables alignment with genres that favor tighter shots. Cropping is performed with the detected facial region as the center of focus; when no face is detected, the image is cropped based on center coordinates. This fallback strategy allows continuity of adaptation even in face-absent or poorly exposed frames. To prevent excessive visual degradation, we constrain shot scale increments to a single step and cap the resulting value at the maximum class index of 6. Each operation is treated as atomic, and candidates are generated through combinations of these operations.
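The crop operation can be sketched as a window computation. Several details here are assumptions that the text leaves open. The window keeps a fixed fraction `zoom` of each image dimension, with 0.7 as a hypothetical default. It is centered on the detected face center when one is available and on the image center otherwise, then shifted to stay inside the image. The label increment is capped at class 6, as described.

```python
from typing import Optional, Tuple

MAX_SCALE = 6  # ECU, the maximum class index


def increment_scale(scale: int) -> int:
    """One-step tighter framing, capped at the maximum class index."""
    return min(scale + 1, MAX_SCALE)


def crop_window(width: int, height: int,
                face_center: Optional[Tuple[float, float]],
                zoom: float = 0.7) -> Tuple[int, int, int, int]:
    """(left, top, right, bottom) of a crop centered on the face, else the image center.

    `zoom` is a hypothetical fraction of each dimension kept by the crop.
    """
    cw, ch = round(width * zoom), round(height * zoom)
    cx, cy = face_center if face_center is not None else (width / 2, height / 2)
    # Clamp so the window never extends past the image borders.
    left = min(max(round(cx - cw / 2), 0), width - cw)
    top = min(max(round(cy - ch / 2), 0), height - ch)
    return left, top, left + cw, top + ch


print(crop_window(1000, 800, None, zoom=0.5))  # (250, 200, 750, 600)
```

Clamping matters for faces near the frame edge. Without it, a face-centered window would extend outside the image and would need padding, which would alter the apparent framing.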

Adaptation is performed via an iterative greedy search over the space of valid transformations. At each step, a set of candidate sequences is constructed by applying a single allowed operation (either swap or crop) to the current sequence. All candidates are encoded into latent space, and the one that yields the greatest reduction in Euclidean distance to the target medoid is selected. The process repeats until no further improvement is achieved or a predefined limit on the number of operations is reached. The greedy strategy was chosen deliberately: it provides a tractable optimization procedure that balances local optimality with computational efficiency, making it feasible for real-time use on mobile devices.
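The greedy loop can be sketched with the encoder abstracted as a callable that maps a shot scale sequence to a latent vector. In the paper, this callable is the cVAE mean encoder. Three details are assumptions. The "restricted partial swap" is read as a swap of adjacent shots. Each image may be cropped at most once, reflecting the single-step constraint. `max_ops=5` is a hypothetical limit. All function names are hypothetical.

```python
import math
from typing import Callable, List, Sequence, Tuple

Item = Tuple[int, int]  # (original image index, current shot scale)
Encoder = Callable[[Sequence[int]], Sequence[float]]


def neighbors(items: List[Item], cropped: set, max_scale: int = 6):
    """All sequences reachable by one atomic operation (adjacent swap or one-step crop)."""
    out = []
    for i in range(len(items) - 1):
        if items[i][1] != items[i + 1][1]:  # swapping equal scales changes nothing
            cand = items.copy()
            cand[i], cand[i + 1] = cand[i + 1], cand[i]
            out.append((("swap", i), cand))
    for i, (img, s) in enumerate(items):
        if img not in cropped and s < max_scale:
            cand = items.copy()
            cand[i] = (img, s + 1)
            out.append((("crop", img), cand))
    return out


def greedy_adapt(scales: Sequence[int], target: Sequence[float], encode: Encoder,
                 max_ops: int = 5) -> Tuple[List[Item], list]:
    """Apply the best single operation per step until no latent-distance gain remains."""
    def dist(its: List[Item]) -> float:
        return math.dist(encode([s for _, s in its]), target)

    items: List[Item] = list(enumerate(scales))
    cropped: set = set()
    best, ops = dist(items), []
    for _ in range(max_ops):
        cands = neighbors(items, cropped)
        if not cands:
            break
        d, op, cand = min(((dist(c), op, c) for op, c in cands), key=lambda t: t[0])
        if d >= best:  # no candidate improves on the current sequence
            break
        items, best = cand, d
        ops.append(op)
        if op[0] == "crop":
            cropped.add(op[1])
    return items, ops


# Toy identity "encoder" for demonstration only; the real system uses the cVAE.
items, ops = greedy_adapt([4, 5], target=[5.0, 4.0], encode=lambda s: [float(x) for x in s])
print(items, ops)  # [(1, 5), (0, 4)] [('swap', 0)]
```

Each iteration encodes O(n) candidates, so the cost per step is linear in sequence length. This keeps the search cheap enough for on-device use, at the price of possibly stopping in a local optimum.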

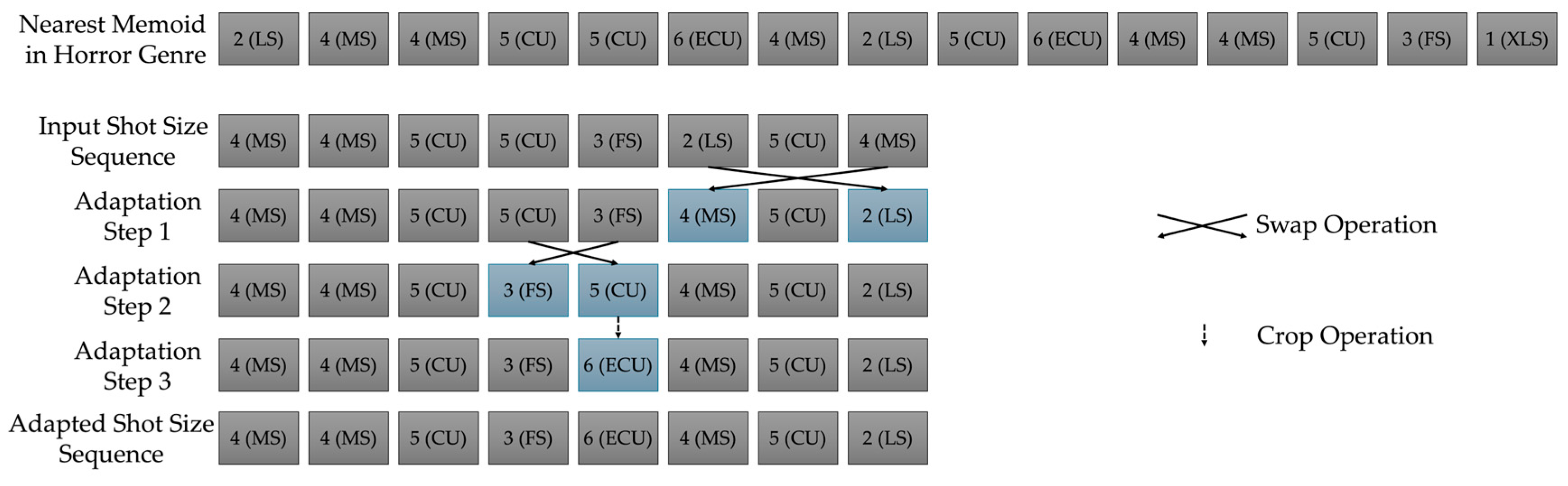

Figure 5 illustrates this adaptation process for a shot sequence in the horror genre.

5. Mobile System Deployment

This section presents the deployment of the proposed system on mobile devices, focusing on the integration and execution of all core components introduced in Section 4. The system is designed to operate fully on-device, supporting shot scale prediction and genre-conditioned sequence adaptation for user-selected photo sequences without reliance on remote servers or cloud infrastructure. The latency of each module is sufficiently low to enable interactive use on mobile hardware.

Section 5.1 and

Section 5.2 describe the platform-specific conversion and optimization of the single-frame classifier and sequence encoder for iOS. These modules are restructured to support efficient input processing and low-latency inference on resource-constrained hardware.

Section 5.3 details the integration of these components into a unified runtime pipeline, illustrating how the full photo curation workflow—spanning shot scale classification, sequence embedding, cluster matching, and sequence transformation—is executed in a mobile application context.

This section outlines the runtime architecture and system integration on mobile devices. Performance evaluation is reported separately in Section 6.

5.1. On-Device Execution of Shot Scale Classifier

The single-frame shot scale classifier, originally trained in PyTorch using the MobileNetV3-Small architecture, was converted into a format compatible with iOS deployment. We selected the CoreML framework (Apple Inc., Cupertino, CA, USA; https://developer.apple.com/machine-learning/core-ml/, accessed on 24 August 2025) as the target inference engine because of its tight integration with Apple’s runtime environment and its native execution efficiency within iOS applications. The conversion pipeline involved exporting the model to ONNX (https://onnx.ai/, accessed on 24 August 2025) via torch.onnx.export() and subsequently converting the ONNX model to CoreML format using CoreMLTools (version 6.2, https://github.com/apple/coremltools, accessed on 24 August 2025).

The input to the model is a 224 × 224 RGB image. For each photo selected from the user’s gallery, the center region is cropped and resized to match the model’s input dimension. Prior to inference, the image is normalized channel-wise using ImageNet statistics (mean: [0.485, 0.456, 0.406]; std: [0.229, 0.224, 0.225]) and converted into an MLMultiArray structure. The model outputs a softmax distribution over six shot scale classes, and the class with the highest probability is selected as the predicted label.
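The preprocessing and decision steps can be sketched without any image library. The sketch assumes that "the center region" means the largest centered square, which is then resized to 224 × 224; the resize itself is omitted. It also assumes that labels are reported as 1 to 6. The softmax is included in case the exported model emits raw logits; it does not change the arg-max.

```python
import math
from typing import List, Sequence, Tuple

IMAGENET_MEAN = (0.485, 0.456, 0.406)
IMAGENET_STD = (0.229, 0.224, 0.225)


def center_square_box(width: int, height: int) -> Tuple[int, int, int, int]:
    """(left, top, right, bottom) of the largest centered square (assumed crop)."""
    side = min(width, height)
    left = (width - side) // 2
    top = (height - side) // 2
    return left, top, left + side, top + side


def normalize_pixel(rgb: Tuple[int, int, int]) -> List[float]:
    """Scale 8-bit RGB to [0, 1], then standardize with ImageNet statistics."""
    return [(v / 255.0 - m) / s for v, m, s in zip(rgb, IMAGENET_MEAN, IMAGENET_STD)]


def predict_label(logits: Sequence[float]) -> Tuple[int, List[float]]:
    """Softmax over the six classes; return the 1-based arg-max label."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]  # subtract max for numerical stability
    total = sum(exps)
    probs = [e / total for e in exps]
    best = max(range(len(probs)), key=probs.__getitem__)
    return best + 1, probs
```

For a 4032 × 3024 landscape photo, `center_square_box` returns `(504, 0, 3528, 3024)`, a 3024-pixel square taken from the middle of the frame.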

To reduce memory usage and improve inference efficiency, we applied float16 quantization during model export. The resulting .mlmodel file was approximately 1.2 MB in size. Within the application, the model is loaded into memory at launch to avoid loading delays during repeated use. All inference was executed on-device using CoreML’s CPU backend. Deployment and testing were conducted on an iPhone 14 Pro (Apple Inc., Cupertino, CA, USA) running iOS 18, with the model loaded into a pre-allocated memory structure to ensure consistency during latency evaluation.

Latency measurements were conducted to characterize on-device performance; details on measurement protocols and evaluation results are provided in Section 6.

5.2. On-Device Execution of Sequence Encoder

To enable genre-conditioned embedding of shot sequences on mobile devices, we extracted the encoder component from the cVAE architecture described in Section 4.2. The decoder was excluded from deployment, as it is not required at inference time. The resulting encoder-only model accepts a shot scale sequence and a genre label as input and outputs the mean vector of the latent distribution. We selected CoreML for its seamless integration with iOS and support for efficient on-device inference.

The input consists of a variable-length sequence of integers ranging from 1 to 6, representing discrete shot scales, along with a separate integer genre label. The sequence is padded to a fixed maximum length, and each token is passed through an embedding layer. The genre label is also embedded and repeated across all time steps to provide conditioning at every position. In the iOS application, both inputs are converted to MLMultiArray format prior to inference.
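Input construction can be sketched as padding plus genre tiling. The genre-to-index mapping, the padding id 0, and the default maximum length of 16 are hypothetical values chosen for illustration, since the text specifies none of them. Padding with 0 is convenient because valid shot scale labels occupy 1 to 6.

```python
from typing import Dict, List, Tuple

# Hypothetical genre-to-index mapping; the paper does not publish one.
GENRE_IDS: Dict[str, int] = {
    "action": 0, "drama": 1, "thriller": 2, "romance": 3, "comedy": 4, "horror": 5,
}
PAD_TOKEN = 0  # assumed padding id; shot scale labels occupy 1..6


def build_encoder_inputs(shots: List[int], genre: str,
                         max_len: int = 16) -> Tuple[List[int], List[int]]:
    """Pad a shot scale sequence to `max_len` and repeat the genre id at
    every time step so that each position receives the conditioning signal."""
    if len(shots) > max_len:
        raise ValueError(f"sequence longer than max_len={max_len}")
    if any(not 1 <= s <= 6 for s in shots):
        raise ValueError("shot scale labels must lie in 1..6")
    tokens = shots + [PAD_TOKEN] * (max_len - len(shots))
    genre_steps = [GENRE_IDS[genre]] * max_len  # conditioning at every position
    return tokens, genre_steps
```

On iOS, both lists would then be copied into `MLMultiArray` buffers of matching shape before inference.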

The encoder produces a 32-dimensional latent vector, which is used to compute similarity to pre-stored cluster representatives within the target genre. Each cluster representative corresponds to the latent vector of a medoid sequence and is stored in the application as a JSON-formatted asset. At runtime, the JSON file is loaded into memory, and Euclidean distances between the encoded input and each medoid vector are computed to identify the closest match.
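The medoid lookup reduces to a nearest-neighbour search over a small table. The sketch below assumes a JSON layout that maps each genre name to a list of medoid vectors; this layout is our assumption, since the asset format is not specified. Toy two-dimensional vectors stand in for the 32-dimensional latents.

```python
import json
import math
from typing import Dict, List, Tuple

Medoids = Dict[str, List[List[float]]]  # assumed layout: genre -> list of medoid vectors


def load_medoids(json_text: str) -> Medoids:
    """Parse the bundled JSON asset into per-genre medoid vectors."""
    return json.loads(json_text)


def nearest_medoid(z: List[float], medoids: Medoids, genre: str) -> Tuple[int, float]:
    """Index and Euclidean distance of the closest medoid within the target genre."""
    dists = [math.dist(z, m) for m in medoids[genre]]
    best = min(range(len(dists)), key=dists.__getitem__)
    return best, dists[best]
```

For example, with horror medoids `[[0, 0], [3, 4]]`, the encoding `[2.5, 4.0]` matches medoid 1 at distance 0.5.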

Inference and distance computation were performed in the same mobile environment (iPhone 14 Pro, iOS 18, CPU backend via CoreML) as described in Section 5.1. The converted model size was approximately 1.3 MB. The entire embedding and comparison process, including input preprocessing and output postprocessing, was designed to run efficiently on-device without external dependencies, ensuring responsiveness suitable for interactive use.

5.3. Integrated Runtime Pipeline

The proposed system integrates all core components into a unified on-device pipeline that executes efficiently upon user selection of a photo sequence. The full processing flow consists of the following steps: (1) normalizing each image for shot scale analysis, (2) classifying its shot scale, (3) constructing a shot scale sequence, (4) encoding the sequence with a genre-conditioned encoder, (5) identifying the most similar representative sequence from the target genre cluster, and (6) adapting the input sequence to align more closely with the reference sequence to produce the final curated output.

Although each module is developed independently, they are executed sequentially within the application as a single inference pipeline. Input images, typically in JPEG format, are center-cropped to a predefined square ratio. The shot scale classifier, implemented via CoreML, is then invoked to generate a discrete label for each frame. These labels are assembled into an integer sequence, which, along with the user-selected genre label, is fed into the encoder. The resulting latent vector is compared against preloaded cluster representatives stored in memory.

The matching stage identifies the closest medoid sequence based on Euclidean distance in the latent space. The input sequence is then adapted using this reference as a target, following the adaptation strategies defined in Section 4.4. The final curated sequence is constructed by reordering the original images and, where applicable, applying face-centered cropping to simulate shot scale increases.
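The crop geometry for a one-step shot scale increase can be sketched as follows. The window is centered on the detected face box when one exists and on the image center otherwise, and it is shifted as needed to stay inside the frame. The retained fraction `keep` is a hypothetical parameter, since the text does not state the crop ratio used per step.

```python
from typing import Optional, Tuple

Box = Tuple[int, int, int, int]  # (left, top, right, bottom) in pixels


def tighter_crop(width: int, height: int, face: Optional[Box],
                 keep: float = 0.7) -> Box:
    """Crop window simulating a closer framing.

    `keep` (fraction of each side retained) is an illustrative assumption.
    """
    if not 0.0 < keep <= 1.0:
        raise ValueError("keep must be in (0, 1]")
    crop_w, crop_h = round(width * keep), round(height * keep)
    if face is not None:
        cx, cy = (face[0] + face[2]) / 2, (face[1] + face[3]) / 2  # face center
    else:  # fallback when no face is detected: image center
        cx, cy = width / 2, height / 2
    # Clamp so the window never extends beyond the image borders.
    left = min(max(round(cx - crop_w / 2), 0), width - crop_w)
    top = min(max(round(cy - crop_h / 2), 0), height - crop_h)
    return left, top, left + crop_w, top + crop_h
```

The clamping step matters when a face sits near an edge. With a face in the top-left corner, the window is pushed to start at `(0, 0)` rather than extending outside the frame.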

To support responsive performance on mobile hardware, the pipeline minimizes redundant computation through component caching and shared in-memory data transfer. The entire process—from user input to result display—is executed on-device without reliance on cloud infrastructure, culminating in a curated output that includes the reordered images. While not implemented in the current system, future versions may incorporate genre-specific media such as background audio or textual overlays.

6. Experiments and Results

We evaluated the proposed system using four complementary sets of metrics. (1) For the single-frame classifier, we measured Top-1 accuracy, precision, recall, and F1-score to assess frame-level shot scale prediction quality. (2) For genre-conditioned adaptation, we quantified improvements using latent-space distance reduction, which captures the extent to which perturbed sequences are restored toward genre-consistent patterns. (3) For system efficiency, we reported inference latency and model size to validate the feasibility of on-device deployment. (4) Finally, to assess perceptual outcomes, a user study measured visual rhythm alignment, genre consistency, and overall preference through Likert-scale ratings. Together, these metrics provide a comprehensive evaluation of classification accuracy, structural adaptation, computational efficiency, and user perception.

6.1. Shot Scale Classification Performance

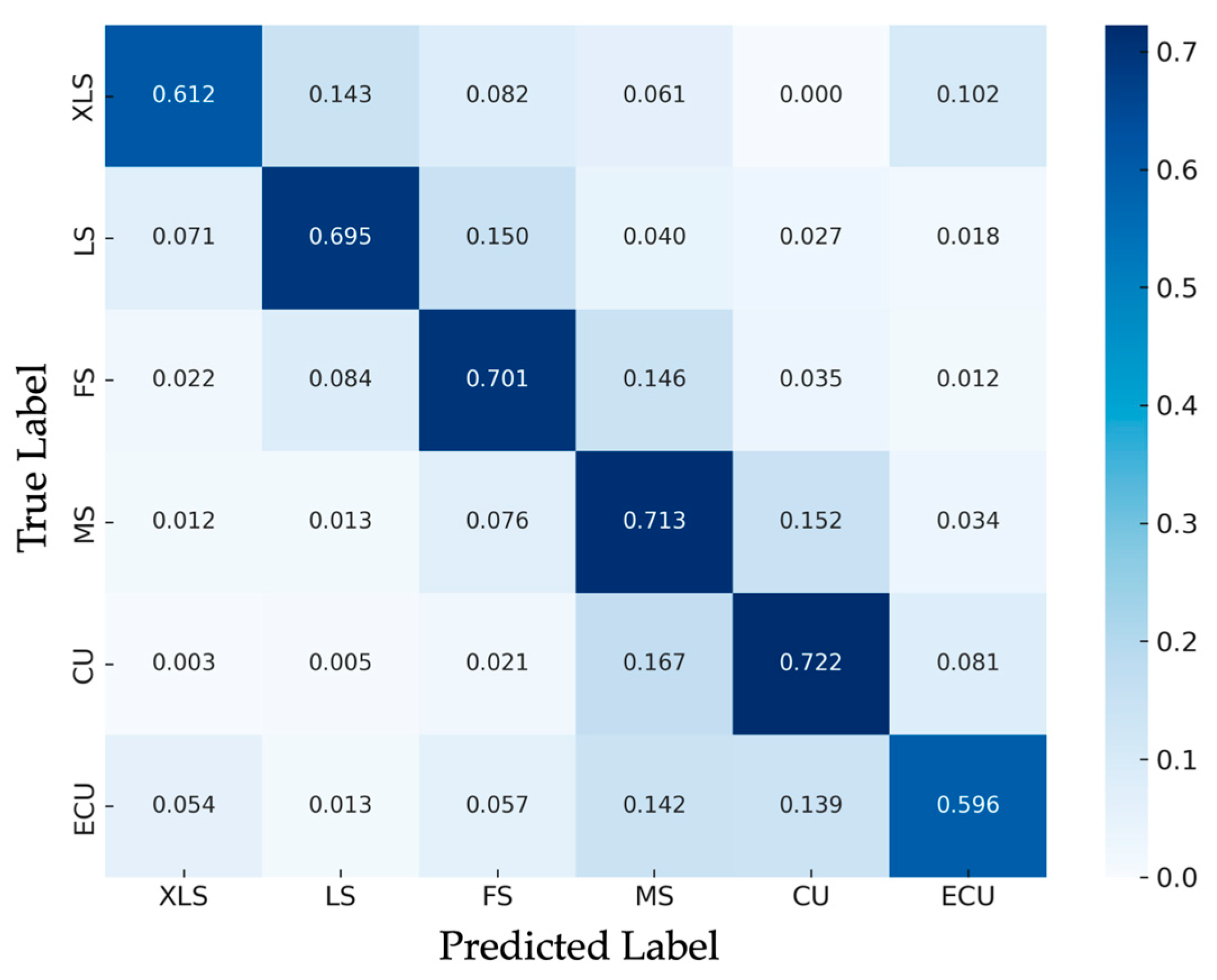

We evaluated the shot scale classifier on a held-out test set comprising 3000 manually labeled frames that were not used during training. The class distribution of this test set was adjusted to reflect that of the training set. Evaluation metrics included Top-1 accuracy, as well as macro-averaged precision, recall, and F1-score. For benchmarking purposes, we also trained a shallow CNN baseline on the same data.

To contextualize the performance of our MobileNetV3-Small classifier, we implemented a baseline CNN composed of five convolutional layers with ReLU activations and batch normalization, followed by two fully connected layers. This architecture was chosen to provide a fair and capacity-matched baseline and deliberately excludes advanced optimization techniques, allowing the effect of network depth to be evaluated in isolation.

The MobileNetV3-Small classifier achieved a Top-1 accuracy of 69.9% and a macro-averaged F1-score of 0.646 on the held-out test set. The corresponding macro precision and recall were 0.631 and 0.673, respectively. Although these scores reflect moderate classification performance, they are adequate for supporting downstream modules such as sequence embedding and adaptation in our mobile pipeline. By comparison, the baseline CNN achieved a Top-1 accuracy of 63.1% and a macro-averaged F1-score of 0.615. MobileNetV3-Small therefore improves not only accuracy but also macro recall (0.673, higher than that of the baseline), suggesting that the gain extends beyond precision to the model’s ability to correctly capture minority and ambiguous shot classes. These results underscore the benefits of a deeper, mobile-optimized backbone, even within lightweight model constraints.

Performance varied substantially across shot scale categories, driven by both class imbalance and inter-class visual ambiguity. Categories with abundant training examples and moderate framing (e.g., MS and CU) exhibited higher classification reliability. In contrast, XLS and ECU recorded lower F1-scores, likely due to their relative rarity and distinct visual challenges—such as minimal subject presence in XLS and overly magnified facial fragments in ECU.

Figure 6 visualizes the normalized confusion matrix across the six shot scale categories. The matrix highlights strong class separability in mid-scale categories and reveals recurring misclassifications between visually adjacent shot types such as MS–CU and FS–LS. In addition, ECU frames were occasionally misclassified as XLS, likely due to perceptual fragmentation or face detection failure caused by excessive magnification. This form of cross-category confusion illustrates the difficulty of resolving edge cases where semantic cues are sparse or structurally distorted.

Table 3 summarizes the class-wise precision, recall, and F1-score. These metrics confirm the overall pattern: mid-scale categories such as Medium Shot and Close-Up benefit from both visual distinguishability and class frequency, while the extremes—XLS and ECU—remain more prone to misclassification.
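For reference, the class-wise and macro-averaged metrics can be computed directly from a confusion matrix whose rows are true classes and whose columns are predicted classes. The sketch assumes the standard definition, in which macro F1 is the unweighted mean of the per-class F1 scores. Under that definition, macro F1 need not equal the harmonic mean of macro precision and recall. For the reported values, that harmonic mean is about 0.651, slightly above the reported macro F1 of 0.646.

```python
from typing import Dict, List


def per_class_metrics(cm: List[List[int]]) -> Dict[str, List[float]]:
    """Per-class precision, recall and F1 (rows = true, columns = predicted)."""
    n = len(cm)
    precision, recall, f1 = [], [], []
    for k in range(n):
        tp = cm[k][k]
        predicted = sum(cm[r][k] for r in range(n))  # column sum
        actual = sum(cm[k])                          # row sum
        p = tp / predicted if predicted else 0.0
        r = tp / actual if actual else 0.0
        precision.append(p)
        recall.append(r)
        f1.append(2 * p * r / (p + r) if p + r else 0.0)
    return {"precision": precision, "recall": recall, "f1": f1}


def macro(values: List[float]) -> float:
    """Unweighted mean across classes (macro averaging)."""
    return sum(values) / len(values)


def row_normalize(cm: List[List[int]]) -> List[List[float]]:
    """Divide each row by its total, so each row sums to 1 (per-class recall view)."""
    return [[c / sum(row) if sum(row) else 0.0 for c in row] for row in cm]
```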

These results suggest that the lightweight MobileNetV3-Small backbone strikes a practical balance between efficiency and accuracy, making it well suited to on-device deployment, an aspect often overlooked in prior frame-level approaches.

6.2. Genre-Conditioned Sequence Adaptation Results

We conducted two experiments to evaluate the effectiveness of genre-conditioned sequence embeddings and the proposed adaptation mechanism. Evaluation was performed on a test set of 29 shot sequences, selected from the same six genres used during training: action, drama, thriller, romance, comedy, and horror. To avoid excessive reduction in training data and ensure balance across genres, we used at most six test sequences per genre, with lower counts for genres with fewer total samples.

The first experiment assessed whether genre-conditioned embeddings produce distinct latent representations that capture genre-specific structural patterns. Each input sequence was embedded six times, once per genre condition, and the resulting vectors were compared to genre-specific cluster centers in latent space. We assigned a predicted genre based on the closest centroid and measured classification accuracy across all test sequences. The overall classification accuracy was 69.0%, with genre-wise results of 83.3% for action, 66.7% for drama, 80.0% for thriller, 50.0% for romance, 60.0% for comedy, and 66.7% for horror.
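The text does not spell out the exact decision rule. One plausible reading of the genre prediction step is sketched below: the sequence is embedded once under each genre condition, and the predicted genre is the one whose own cluster centers lie closest to the embedding produced under that condition. The `encode` hook is a hypothetical stand-in for the cVAE encoder.

```python
import math
from typing import Callable, Dict, List, Sequence

# Hypothetical encoder hook: (shot sequence, genre) -> latent vector.
Encode = Callable[[Sequence[int], str], List[float]]


def predict_genre(seq: Sequence[int], encode: Encode,
                  centers: Dict[str, List[List[float]]]) -> str:
    """Embed `seq` under every genre condition and return the genre whose
    nearest cluster center is closest to the corresponding embedding."""
    if not centers:
        raise ValueError("no cluster centers provided")
    best_genre, best_dist = "", math.inf
    for genre, genre_centers in centers.items():
        z = encode(seq, genre)  # conditioned on this genre
        d = min(math.dist(z, c) for c in genre_centers)
        if d < best_dist:
            best_genre, best_dist = genre, d
    return best_genre


def accuracy(pred: Sequence[str], gold: Sequence[str]) -> float:
    """Fraction of sequences whose predicted genre matches the film-level label."""
    return sum(p == g for p, g in zip(pred, gold)) / len(gold)
```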

Misclassifications often occurred in sequences that featured overlapping stylistic cues—such as emotionally charged scenes in romance films that visually resembled dramas, or suspense-driven thrillers with visual patterns similar to action films. As the genre labels were assigned at the film level rather than the scene level, such stylistic contamination was expected and likely contributed to cross-genre confusion.

The second experiment evaluated the effect of adaptation in restoring genre structure from perturbed inputs. To generate perturbed data, we first applied random shuffling to each test sequence, thereby destroying its original temporal rhythm while keeping the set of shot scales unchanged. In this shuffled state, the latent-space distance to the nearest cluster center showed only a small and inconsistent reduction of about 3.4% on average (M = 0.034, SD = 0.11), and given the limited sample size and high variability, this effect cannot be considered meaningful. In contrast, applying our adaptation procedure reduced the average distance by 22.7% (M = 0.227, SD = 0.08) relative to the shuffled baseline, confirming that the observed improvement is specifically attributable to the adaptation module rather than to embedding or clustering alone. It should be noted that this comparison does not concern accuracy or recall at the frame level, but rather evaluates structural alignment in latent space, reflecting how well degraded sequences can be restored toward genre-consistent patterns.
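The distance-reduction statistic can be reproduced with a short sketch. It assumes that the reduction for each sequence is the fractional drop (d_before − d_after) / d_before, which matches the reporting of M = 0.227 alongside 22.7%. It also assumes that SD is the sample standard deviation across test sequences. Both assumptions are ours, not stated in the text.

```python
import statistics
from typing import List, Sequence, Tuple


def relative_reductions(before: Sequence[float], after: Sequence[float]) -> List[float]:
    """Per-sequence fractional reduction in latent distance to the nearest center."""
    return [(b - a) / b for b, a in zip(before, after)]


def summarize(reductions: Sequence[float]) -> Tuple[float, float]:
    """Mean (M) and sample standard deviation (SD) of the reductions."""
    return statistics.mean(reductions), statistics.stdev(reductions)
```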

This genre-conditioned adaptation strategy represents a novel contribution, as it operationalizes lightweight swap and crop operations to recover stylistic rhythm—an aspect largely neglected in prior frame-level approaches.

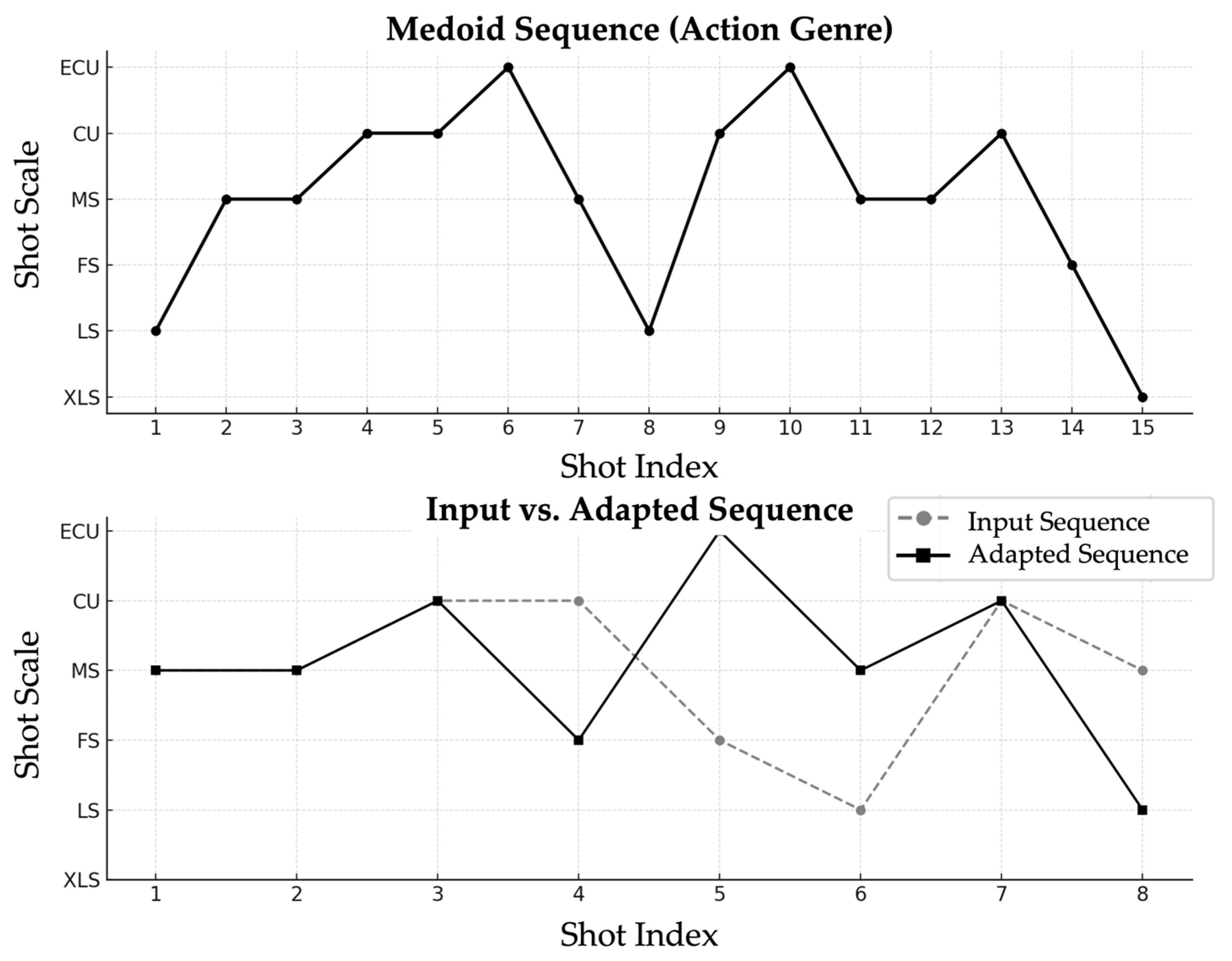

Figure 7 shows how an input sequence is transformed to better match the rhythmic contour of a target genre.

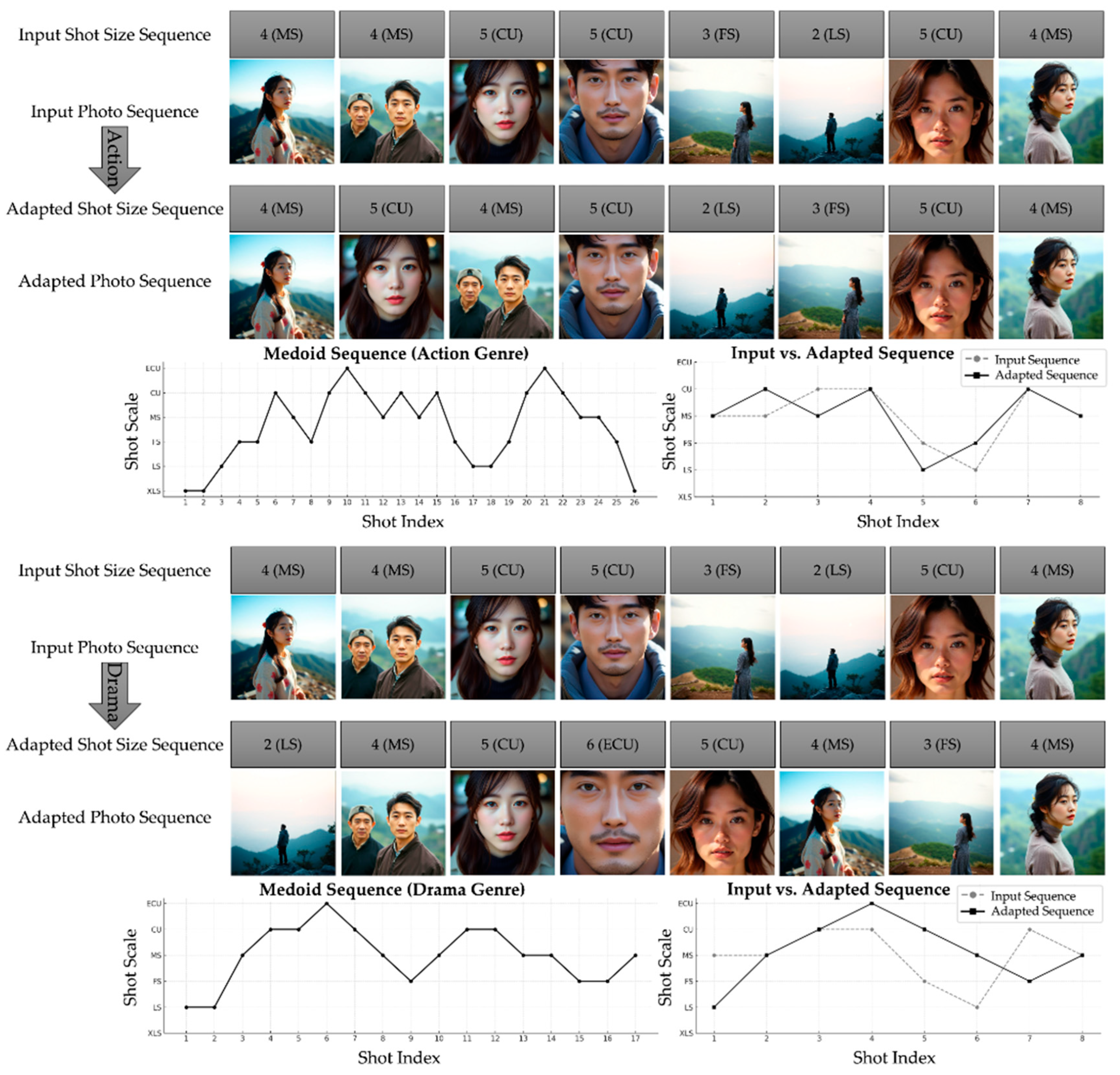

Figure 8 presents side-by-side visual examples of image order and framing before and after adaptation.

Figure 9 further illustrates the generality of this process by adapting the same input sequence to different genre references (action and drama). Together, they demonstrate that the adaptation process not only improves latent-space alignment but also yields output sequences that visually conform more closely to genre expectations.

6.3. On-Device Inference Efficiency

To assess the deployment feasibility of the proposed pipeline, we measured its inference time and storage footprint on an iPhone 14 Pro using CoreML. All evaluations were performed using the CPU backend, for consistency with the deployment configuration described in Section 5. Each metric represents the average of 10 repeated trials under fixed input conditions.

The MobileNetV3-Small classifier processed a single image in 19.8 ms, while the genre-conditioned sequence encoder required 32.7 ms per execution. For a shot sequence of 8 images, the initial classification and encoding steps took approximately 191 ms in total. The most computationally intensive component, however, was the sequence adaptation stage, which applied iterative shot-level transformations (e.g., swap, crop) and repeatedly evaluated their latent embeddings against genre-specific clusters. Under typical settings (8 candidate transformations evaluated at each of at most 7 adaptation steps), this stage involved 56 separate encoder invocations, amounting to 1831 ms on its own.

Overall, the full end-to-end pipeline required approximately 2022 ms to process an 8-shot input sequence, with adaptation accounting for 90.6% of the total latency. Although the approach may therefore not be suitable for real-time execution, a latency of about two seconds remains acceptable for interactive use. As such, it is well suited for on-device photo curation tasks where structural coherence and genre alignment take precedence over minimal processing delay.

The converted model sizes were 1.2 MB for the classifier and 1.3 MB for the encoder, with an additional 380 KB required for storing the serialized cluster representatives (medoid vectors). The total deployment footprint remained under 3 MB (approximately 2.9 MB), indicating low storage cost despite the pipeline’s structural complexity.
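The reported latency and footprint totals can be cross-checked with simple arithmetic. The cost model below is our assumption: the initial stage is one classifier call per image plus a single encoder call, and the adaptation stage costs one encoder call per evaluated candidate. The script also treats 1 MB as 1000 KB.

```python
CLASSIFIER_MS = 19.8      # reported per-image classifier latency
ENCODER_MS = 32.7         # reported per-call encoder latency
N_SHOTS = 8
CANDIDATES_PER_STEP = 8
MAX_STEPS = 7

initial_ms = N_SHOTS * CLASSIFIER_MS + ENCODER_MS   # classify every image, encode once
adapt_calls = CANDIDATES_PER_STEP * MAX_STEPS       # encoder calls during adaptation
adapt_ms = adapt_calls * ENCODER_MS
total_ms = initial_ms + adapt_ms
adapt_share = 100 * adapt_ms / total_ms             # percentage of total latency

# Classifier + encoder + medoid store (380 KB taken as 0.38 MB).
footprint_mb = 1.2 + 1.3 + 0.38

print(f"initial {initial_ms:.1f} ms, adaptation {adapt_ms:.1f} ms ({adapt_calls} calls)")
print(f"total {total_ms:.1f} ms, adaptation share {adapt_share:.1f}%, footprint {footprint_mb:.2f} MB")
```

Running it prints an initial cost of 191.1 ms, an adaptation cost of 1831.2 ms over 56 calls, a total of 2022.3 ms, an adaptation share of 90.6%, and a footprint of 2.88 MB. These values agree with the figures above.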

To further illustrate the generality of the adaptation process, we reused the same perturbed horror input sequence from Section 6.2 and applied the adaptation procedure under different genre conditions. By conditioning on action and drama clusters, the identical input was reshaped into outputs reflecting the rhythmic structures of these genres. This example highlights how a single sequence can be flexibly aligned with multiple stylistic references by reusing the same adaptation pipeline. The ability to achieve such genre-aware adaptation within only two seconds and under a 3 MB footprint underscores the novelty of our framework, bridging cinematic style modeling with practical mobile deployment in ways not previously demonstrated.

6.4. User Evaluation of Genre-Adaptive Curation

6.4.1. Design

To complement the quantitative evaluations described in Sections 6.1, 6.2, and 6.3, we conducted a user study to assess whether the proposed genre-adaptive curation method yields improved perceptual alignment with film genres and higher user preference compared to two baseline approaches. The three compared conditions were as follows:

- (1) The proposed genre-informed transformation method;
- (2) A random-order baseline in which the same images were presented in a randomly permuted sequence;
- (3) A native iOS slideshow generated using Apple Photos, which replays images in their original (chronological) order using generic, non-genre-specific transitions.

Unlike the frame-level experiments in

Section 6.1 and

Section 6.2 that used accuracy, precision, or recall, the user study relied exclusively on perceptual measures—namely rhythm alignment, genre consistency, and overall preference. Descriptive statistics summarizing mean ratings and standard deviations across all conditions are reported in

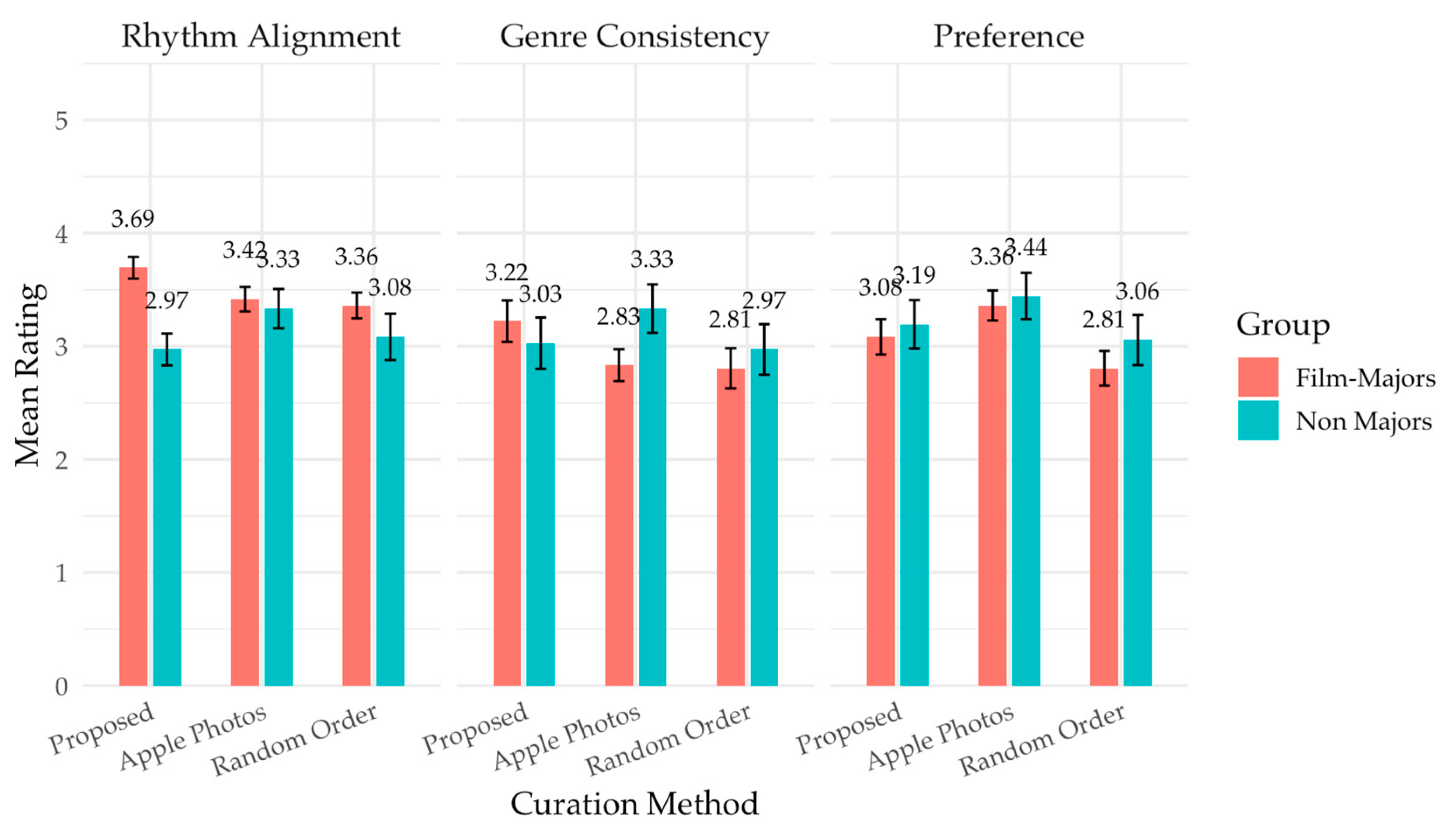

Figure 10.

6.4.2. Participants

A total of 24 undergraduate students participated in the study. Among them, 12 participants were female students majoring in film or media studies, while the remaining 12 were from unrelated disciplines with balanced gender distribution. This division into film/media majors and non-majors was central to the analysis, as group-level contrasts were of primary interest. All participants viewed the stimuli simultaneously in a lecture hall equipped with large-screen projection and stereo audio. Prior to the experiment, they were informed that they would be evaluating visual sequences representing different cinematic genres but were not made aware of the underlying curation methods or hypotheses being tested.

6.4.3. Procedure

Nine stimuli were generated by applying the three sequencing methods—our proposed genre-adaptive method, Apple-style playback with chronological ordering and native transition effects, and random ordering—to image sets representing three distinct genres: horror, drama, and action. The same image sets were used across conditions to isolate the effects of sequencing and framing. Each stimulus was rendered as a 15 s slideshow accompanied by a fixed background music track selected to match the associated genre. All participants viewed the nine sequences in a randomized block structure, with curation method order randomized within each genre.

6.4.4. Measures

Following each sequence, participants were given one minute to complete a short questionnaire on their personal devices. They rated the sequence on three five-point Likert-scale items:

- “This sequence visually resembles the style of [genre]” (genre consistency);
- “The flow and transitions of this sequence reflect the typical visual rhythm of [genre]” (rhythm alignment);
- “I would be willing to use or share this sequence” (preference).

Optional short-form comments were also collected for qualitative insight. To ensure independent judgment, participants were instructed not to discuss their responses and were physically spaced to prevent screen sharing. The total experimental session, including briefing and response collection, was completed within 20 min.

6.4.5. Results

The results revealed distinct patterns between participant groups, particularly in their evaluation of visual rhythm. A significant main effect of participant group was observed for rhythm ratings (F(1, 22) = 11.56, p = 0.003), indicating that film/media majors and non-majors differed in their overall rhythm ratings. Although the main effect of curation method was not significant (F(1.99, 43.74) = 0.65, p = 0.526), a trend-level interaction between group and method approached significance (F(1.99, 43.74) = 2.80, p = 0.072), suggesting that domain expertise may have moderated perceptions of visual rhythm. These contrasts were part of a planned exploratory analysis motivated by our hypothesis that domain knowledge could influence sensitivity to genre structure. Among film/media majors, the genre-adaptive method received the highest rhythm scores (M = 3.69, SD = 0.58), while non-majors gave the highest rhythm scores to the Apple Photos sequence (M = 3.33, SD = 1.04). This pattern suggests that the genre-adaptive method may align better with expert viewers’ sense of rhythm, whereas general users responded more favorably to the Apple Photos sequences (Figure 10).

Genre consistency ratings, in contrast, showed no statistically significant effects of either group or method. Although the genre-adaptive method yielded the highest overall mean (M = 3.12, SD = 0.17), followed closely by Apple Photos (M = 3.08, SD = 0.14), these differences were small and not statistically significant (F(1.86, 41.00) = 0.89, p = 0.412). Furthermore, no interaction was found between group and curation method. These results indicate that the curated image flow, regardless of structural sequencing, was insufficient to shift participants’ global perception of genre style. This limitation suggests that while shot scale sequencing may influence local visual rhythm, it may not constitute a dominant cue for genre identification. For general users in particular, genre recognition likely depends on a broader range of stylistic cues, including color grading, framing composition, or narrative context. Future research should explore how such elements can be integrated into automated curation pipelines to improve genre salience.

In terms of preference, a statistically significant main effect of curation method was observed (F(1.82, 40.13) = 4.31, p = 0.023). Participants rated the Apple Photos sequences highest overall (M = 3.40, SD = 0.13), followed by the genre-adaptive (M = 3.14, SD = 0.13) and random-order sequences (M = 2.93, SD = 0.12). Post hoc comparisons confirmed that Apple Photos was significantly preferred over the random-order baseline (p = 0.0106), while no other contrasts, including genre-adaptive versus random-order, reached significance. This suggests that genre-informed sequencing did not reliably increase user preference relative to a baseline ordering. Optional comments indicated that participants appreciated the Apple-generated sequences for their aesthetic transitions, timing effects, and polished presentation. Although the internal rendering logic of Apple Photos was not analyzed in this study, these findings point to the importance of transitional smoothness and visual continuity in shaping affective responses. Moreover, the elevated preference for Apple sequences may reflect not only aesthetic appeal but also users’ familiarity with the iOS interface, raising the possibility of a familiarity bias or brand-based expectation effect. Future experiments should explicitly control for such confounds.

Overall, the user evaluation highlights both the strengths and limitations of the proposed genre-adaptive method. While it demonstrates potential for enhancing rhythm perception among expert users, its impact on genre recognition and user preference remains limited in the absence of complementary design features. Genre-aware shot sequencing alone may not suffice to convey genre identity or drive user satisfaction. One limitation of this study is the relatively small sample size (n = 24), which constrains the generalizability of the findings. Nevertheless, the results provide an initial proof-of-concept validation. In particular, the study offers preliminary evidence that lightweight, genre-conditioned sequencing may enhance rhythm perception among viewers with film or media training, a dimension often neglected in prior photo curation methods. Future work will extend the evaluation to larger and more diverse participant groups to strengthen statistical power and ensure broader applicability.

7. Discussion

7.1. Application Potential

The proposed genre-based shot sequence curation system provides an alternative to conventional photo sequencing methods that typically rely on chronological order or metadata such as timestamps and geolocation. By reorganizing photo collections based on cinematic shot scale patterns, the system generates outputs that reflect genre-specific visual structure and rhythm.

This functionality can support personalized content creation scenarios—such as vlog editing, social media stories, and digital photo albums—where stylistic consistency across image sequences is desirable. By offering genre-conditioned transformations through an interactive interface, the system allows users to produce coherent visual arrangements without requiring technical editing expertise.

Furthermore, the ability to apply multiple genre styles to the same input enables exploratory and creative use cases. While the current implementation focuses on shot scale and sequence rhythm, future enhancements may integrate additional modalities (e.g., color schemes, soundtracks) to support richer thematic composition and audience-specific alignment.

7.2. Extensibility and Modularity

While the current system focuses on shot scale sequences, its modular design permits future integration of additional shot-level features, such as camera angles, lens effects, or high-level stylistic attributes. These features could be incorporated as auxiliary inputs or used to condition the sequence embedding process, enabling more nuanced curation behavior.

The architecture also allows for future multimodal extensions. For instance, textual metadata (e.g., captions or user-provided tags) and audio tracks could be processed through dedicated encoders and fused with visual features to enhance cross-modal coherence. Such configurations would require additional training data and evaluation protocols tailored to multimodal alignment.

Beyond genre conditioning, the framework may also support user-defined parameters—such as thematic mood, intended audience, or context of capture—as hypothetical conditioning signals. These could modulate latent representations or influence cluster selection during adaptation, opening up possibilities for more personalized and context-aware photo curation in future applications.

Another important direction is to evaluate the model’s applicability beyond Korean cinema. As genre conventions can vary across cultures, future work should examine whether shot scale rhythms learned from Korean films transfer to international contexts or require region-specific adaptation.

7.3. Limitations and Considerations

The current implementation adjusts shot scale through cropping centered on detected face regions. When face detection fails—due to small subject size, occlusion, or low image quality—fallback cropping based on image center coordinates is applied. In such cases, particularly when subjects are not centrally framed, the resulting composition may reduce perceived framing consistency or omit contextual cues, potentially affecting the overall visual appeal of the curated output.

Additionally, user interpretations of genre-specific sequencing patterns can vary considerably depending on cultural background, prior exposure, or media literacy. As observed in the user study (Section 6.4), the proposed genre-adaptive method was more favorably rated by participants with film/media backgrounds, particularly for rhythm alignment. However, this effect was observed at trend-level significance and did not extend consistently across all evaluation criteria. For instance, improvements in rhythm alignment did not translate into higher genre recognition or stronger preference among general users.

Furthermore, the dissociation between genre-consistent structure and subjective preference suggests that user satisfaction may depend on additional aesthetic or interactional factors. Participant comments frequently mentioned the appeal of smooth transitions and familiar pacing in the Apple Photos sequences. These effects—potentially arising from platform-specific animations or rendering styles—were not replicated in our prototype, which may explain the relatively lower preference ratings for the proposed method despite its structural genre alignment. Future research should systematically assess how such surface-level visual refinements interact with structural cues to influence user evaluation.

Finally, while the proposed architecture is lightweight, its extensibility is constrained by mobile hardware limitations. Adding more clusters, integrating conditional variables, or supporting multimodal inputs would increase model complexity and inference latency. These extensions may require further optimization through model compression, quantization, or partial offloading to external processing environments in order to preserve real-time responsiveness on lower-end devices. Another important limitation is the scale of the datasets used for validation. The present evaluation relied on a limited number of genre-labeled sequences, which constrains statistical power and generalizability. Although the findings provide an initial proof of concept, broader validation across larger and more diverse datasets will be essential in future work.

8. Conclusions

This study presented a genre-adaptive photo curation framework centered on shot scale sequencing. We constructed a manually annotated dataset of shot-labeled images and sequences, developed lightweight models comprising a MobileNetV3-based frame-level classifier and a genre-conditioned variational sequence encoder, and implemented a fully integrated iOS application capable of performing on-device inference. Together, these components form a unified pipeline spanning data acquisition, modeling, deployment, and empirical evaluation.

Unlike conventional curation methods that prioritize chronological ordering or visual similarity, our approach reorders user photo sequences based on genre-specific shot scale patterns, thereby reflecting structural features characteristic of cinematic rhythm and style. Quantitative evaluations demonstrated clear improvements: the classifier achieved 69.9% accuracy and a macro-averaged F1-score of 0.646, while the adaptation module reduced latent distance to genre clusters by 22.7% relative to shuffled baselines. These results highlight the effectiveness of combining lightweight classification, genre-conditioned embedding, and similarity-driven adaptation into a single pipeline. To our knowledge, this represents the first framework to operationalize cinematic grammar for mobile-ready photo sequencing, addressing the gap left by prior frame-level or template-based approaches.

To assess perceptual validity, we conducted a user study comparing our method against two baselines. The proposed method elicited higher rhythm alignment scores among film/media majors, suggesting potential for expert-facing applications. However, the absence of consistent improvements in genre recognition and preference across all users highlights the need to combine structural adaptation with additional aesthetic or interactional enhancements.

Future work should explore the integration of fine-grained visual effects—such as transition animations or dynamic pacing—to improve user satisfaction. In parallel, the incorporation of multimodal features (e.g., music, text, or captions) may offer a path toward more expressive curation outcomes. Additionally, refining genre clusters using user feedback or enabling context-aware personalization may further enhance the applicability of the system in memory curation, media archiving, and lightweight content creation. Future work will also extend validation to larger and more diverse datasets to ensure broader generalizability of the findings.