1. Introduction

Feedback is a fundamental component of learning (including game-based learning) in modern educational settings [1,2,3]. Feedback is “conceptualised as information provided by an agent (e.g., teacher, peer, book, parent, self, experience) regarding aspects of one’s performance or understanding” [4] and “intended to modify [their] thinking or behaviour for the purpose of improving learning” [5]. Yet, as Nicol and colleagues have found, national student surveys in the UK and Australia demonstrate that across the sector, students are generally less satisfied with the quality of feedback than any other category related to their student experience [6,7,8]. Whilst researchers such as Hattie and others have argued for increasing and improving the quality of feedback in universities, the results from the research are variable, seldom based on a consistent theory, and can be very task- or assessment-focused, potentially skewing outcomes [3]. Hattie argues that “feedback effects are among the most variable in influence” [9]. Additionally, a clear link between performance and feedback is difficult to define in terms not restricted to given learners or contexts. Improvement is often theorised as the purpose of feedback, supported by qualitative research centred on the learner and on feedback literacy [7,10]. This thread of research addresses learner and teacher behaviours, rather than the design of feedback.

Several observations from the literature have attempted to explain this high level of variability, including practitioners and students failing to fully understand the role of feedback in an educational context, a lack of innovation despite many new feedback mechanisms (e.g., peer feedback, self-feedback, Learning Management System feedback, and AI-based automated feedback) [11], and a disjunction between learning design, assessment, and feedback. This has led to teaching practitioners receiving inconsistent messaging regarding how to implement feedback effectively. Such uncertainty is evidenced by student satisfaction surveys which imply students feel under-served [12], but which arguably ask the wrong questions, perpetuating outdated views on feedback [13].

Our review found a dearth of robust quantitative studies and noted the lack of a solid theory to inform practitioners. Kluger and DeNisi [14] found that studies have often been based upon several erroneous and anachronistic assumptions, arising from the initial premise that all feedback is positive, whilst Nicol and colleagues [6] argue that there is no coherent underpinning theory of feedback that is both theoretically and pragmatically sound.

Our approach is to address the main issues highlighted by these studies by developing a feedback model based upon constructivist theory and a framework of learning that has been validated and used widely: the four-dimensional framework, whose key elements are the learner, pedagogy, representation, and context [15]:

Learner—involves profiling the learner to ensure that the learning intervention satisfies their requirements.

Pedagogy—considers which instructional model(s) could be most effective to satisfy the learning requirements (e.g., constructivist and situative models).

Representation—considers how the learning content should be represented to include fidelity level, interactivity, and accessibility requirements.

Context—considers the environment where learning is taking place and the requirements for resources to support the learning.

Feedback design can be affected by any of these four elements. This paper explores how feedback design can be overlaid on this framework to define a feedback model that is theory-based and can be used by learning practitioners to help them design and evaluate feedback in their own courses, particularly those that include game-based learning. The feedback model discussed in this paper was first published as a book chapter [16] and emerged from a pragmatic randomised controlled trial designed to compare traditional and game-based learning approaches in a medical triage training context. Since the study, we have been iterating the model to provide a systematic approach to the design of automated game-based feedback.

This paper thus revisits this model, with the aim of assessing its efficacy in supporting practitioners. Over the last ten years, we have found that feedback has become increasingly prominent in models of assessment and quality assurance, and in the design of effective, engaging, and reflective teaching and learning (e.g., [17,18]). As such, the feedback model has become more relevant to pedagogical practice in general and to Technology-Enhanced Learning (TEL) in particular, with its increased use of digital feedback. With this paper, we seek to assess the model by applying it retrospectively to contrasting cases, to evaluate whether it is a useful tool for practitioners.

2. Models of Feedback in Education: Literature and Research Overview

As highlighted above, the theoretical/conceptual basis of how we use feedback is a major stumbling block in the literature. In part, this is because how we conceive of learning has changed over time and varies significantly according to context, learner specifics, representation of learning (e.g., interactivity), and the pedagogy used. Learning experiences are becoming less focused on knowledge acquisition alone (e.g., curriculum-based online learning) and more focused on building critical skills. For example, the QAA Descriptors for level 6 qualifications in the UK [19], as well as outlining required systematic and conceptual understanding, also reference the application of knowledge and transferable skills and “learning ability”. This shift has implications for how we design and enact feedback.

One established model for feedback was developed by Rogers [20]. It defines feedback descriptively in “five layers” as:

Evaluative: makes a judgement about a person, evaluating worth or goodness;

Interpretive: interpreting and paraphrasing back to the person;

Supportive: positive affirmation of the person;

Probing: seeking additional information;

Understanding: not just what is said but the deeper meaning.

Evaluative feedback can be broadly equated to the common interpretation of summative feedback. It provides the learner with information on whether they have performed a correct action or calculation, but does not seek to understand or address why this was, or failed to be, the case. As the layers are moved through, they require more detailed understanding of, and interaction with, the learner, and the tailoring of feedback to fit their needs in terms of addressing specific misconceptions, errors in judgment, or misunderstandings.

Another impediment is that in most training and educational settings, we tend to think of feedback in much simpler terms: giving students feedback versus not giving feedback; immediate feedback versus delayed feedback; “good” or “bad” feedback; and formative versus summative. However, while these models and classifications are available, questions about how to provide feedback in contextualised learning environments tend to dominate current literature (e.g., [21,22]). Such focus upon how to implement feedback, rather than understand it from a pedagogical standpoint, can limit innovation. Therefore, we advocate a theory-based approach for the feedback model. Any successful model needs to consider not only the format of feedback (e.g., task level) and the type of feedback given (e.g., leaderboard), but also the pedagogy used, context of learning, and the method of communication.

Research findings are inconsistent regarding the value of feedback in education and performance improvement. An Australian study of 4514 university students involved asking them to rate the level of detail, personalisation, and suitability of feedback [23]. The results showed that different feedback modes may offer certain challenges and benefits for students. For Hattie and Gan [9], the large effect sizes for feedback in school studies reviewed “places feedback amongst the ten influences on achievement” (p. 249). However, Balcazar and colleagues [24] argued that “feedback does not uniformly improve performance” (p. 65). Meanwhile, Latham and Locke [25] point out that few concepts in psychology have been written about more “uncritically and incorrectly” (p. 224).

Furthermore, Kluger and DeNisi [14] argue that many feedback interventions are ineffective and, indeed, may have a negative effect on learning. In their meta-analysis of 604 studies, they showed that a third of studies found interventions were ineffective, or even had negative effects on performance. They also argue that a lack of a feedback intervention theory could be partly responsible for the variability of feedback interventions.

Even the role of the agent awarding the feedback can create negative learning. For example, Kluger and DeNisi [14] often found that feedback interventions were limited by the external agent providing the feedback, or by a misalignment of the task with the feedback, favouring feedback from the task, as in discovery learning, over “feedback from an external agent” ([14], p. 265). This demonstrates an important insight: feedback from an “external agent” (in this context, a teacher) can, in fact, have a negative impact upon performance. In particular, critical or controlling feedback can result in negative transfer of learning, as can being rated comparatively to peers; additionally, non-specific feedback can create confusion [5]. Schmidt et al. [26] found that faculty-tutored groups achieved slightly better performance than peer-tutored groups, but “peer tutoring was equally beneficial in the first year of the course” ([27], p. 329). Computer-mediated feedback can help neutralise the impact of negative feedback; if feedback is anonymised and purely used for scaffolding and not reflected in grades, then this approach may be liberating for some learners [28].

As well as the agent applying the feedback, the timing and frequency of feedback are important. For example, interrupting learning with feedback from an external agent during problem solving can be counterproductive [5]. The broader literature is inconsistent on whether delayed or immediate feedback should be given [29,30].

However, one of the most powerful forms of feedback arises through peer interactions (e.g., [6]). Nicol and colleagues [6] are quick to point to peer review and more active student engagement with feedback for improving performance and advocate the move away from the delivery model of teacher feedback. Feedback needs to empower the learner, motivate them, and allow them to make modifications in their behaviour. Our studies around game-based learning and feedback have been informative here.

Over the last ten years, studies have been undertaken that show the effectiveness of games as learning tools (e.g., Boyle et al. [31]). However, as Boyle et al. [31] noted, there is comparatively little conclusive evidence on best practices, and a general paucity of large, robust trials of game-based learning. Even as this gap is addressed, the question itself of whether games are an effective learning tool in isolation is analogous to that of whether books, lecture series, videos, or other media are effective on their own. They certainly can be, but it is dangerous to attempt to infer generalisable conclusions from single instances.

Hattie describes three forms of feedback in his work [32]: task, process, and self-regulatory feedback. If we consider constructivist learning, particularly experiential or active learning, then these groupings of task, process, and self-regulatory feedback cannot be used. This is because the learning is an experience rather than a defined set of knowledge that is task-centred and has observable outcomes when transferred to practice. To conceptualise and design feedback as a mechanism that helps students apply and transfer learning, a more sophisticated model needs to be developed to ensure that feedback is given at the appropriate time and in the right way to acknowledge the following:

Learner: what the learner is doing and what they already know;

Learning/game: how learning is conceptualised and developed within the game;

Context and the social interactions: how the learner interacts within/outside the game.

Through review (e.g., [31]), best practices have been loosely identified as linking feedback to motivation, considering different approaches, making feedback central to game play, and considering whether feedback is formative and immediate. In many ways, game-based learning feedback overcomes some of the major issues identified in the literature on feedback: it anonymises the feedback agent, it can be programmed to objectively scaffold learning while empowering learners, and it can be varied into multiple types, formats, and modes presented in varied ways that can be personalised to the learner/cohort. Research, therefore, often focuses on how these formats and modes might best be selected, with reviews such as that of Johnson et al. [33] frequently identifying the factors of content, modality, timing, and learner characteristics.

Whilst such frameworks can be useful as a design aid, games are particularly difficult to design to a pattern, given the many different design routes that can be taken. This is often compounded by a dual agenda for a serious game: often they are expected to engage and educate, and overcome barriers faced by other media when attempting to reach a disengaged audience. As such, entertainment design principles, which commonly emphasise engagement, must be offset against educational design principles, which commonly emphasise learning outcomes and learning transfer. Typically, when a game design intervention attempts to provide direct, instructional feedback, the aim is to minimise both its content and its frequency as much as possible to avoid interrupting gameplay [33,34]; moreover, “good” feedback in entertainment games is often viewed as arising naturalistically from gameplay, rather than through disruptive messaging.

The emerging application of flow [35] as a means of formalising and understanding engagement with a game also raises interesting questions regarding the provision of feedback. Kiili et al. [36] extended the flow theory by replacing Csikszentmihalyi’s “action-awareness merging” dimension with the “playability” antecedent. The original dimension states that all flow-inducing activities become spontaneous and automatic, which is not desirable from a learning point of view. Kiili et al. [36] argue that the principles of experiential and constructive learning approaches suggest that learning should be an active and conscious knowledge construction process. Even though reflection during game playing may not always be a conscious action by a player, only “when a player consciously processes his experiences can he make active decisions about his playing strategies and thereby form a constructive hypothesis to test” ([36], p. 372). With these perspectives, it is possible to make a distinction between activities related to learning and game/play control, meaning that controlling a game should be automatic, but the learning of the educational content should be consciously processed and reflected upon [37].

Hence, if the game designer’s goal is to establish and maintain flow, this can be implemented by linking the task difficulty and context against the player’s skill level and prior knowledge. However, it could be argued that establishing and maintaining flow is more a task of balancing the perceived task difficulty against a user’s perceived level of skill, because the outcome depends on this perception rather than absolute performance measures. Hence, it can be suggested that a core role of effective game-based feedback in terms of sustaining engagement is influencing these perceptions.

3. Developing the Feedback Model (4F) as an Extension of the Four-Dimensional Framework (4DF)

In a previous study, we identified the importance of feedback as a central aspect of learning in a training game [

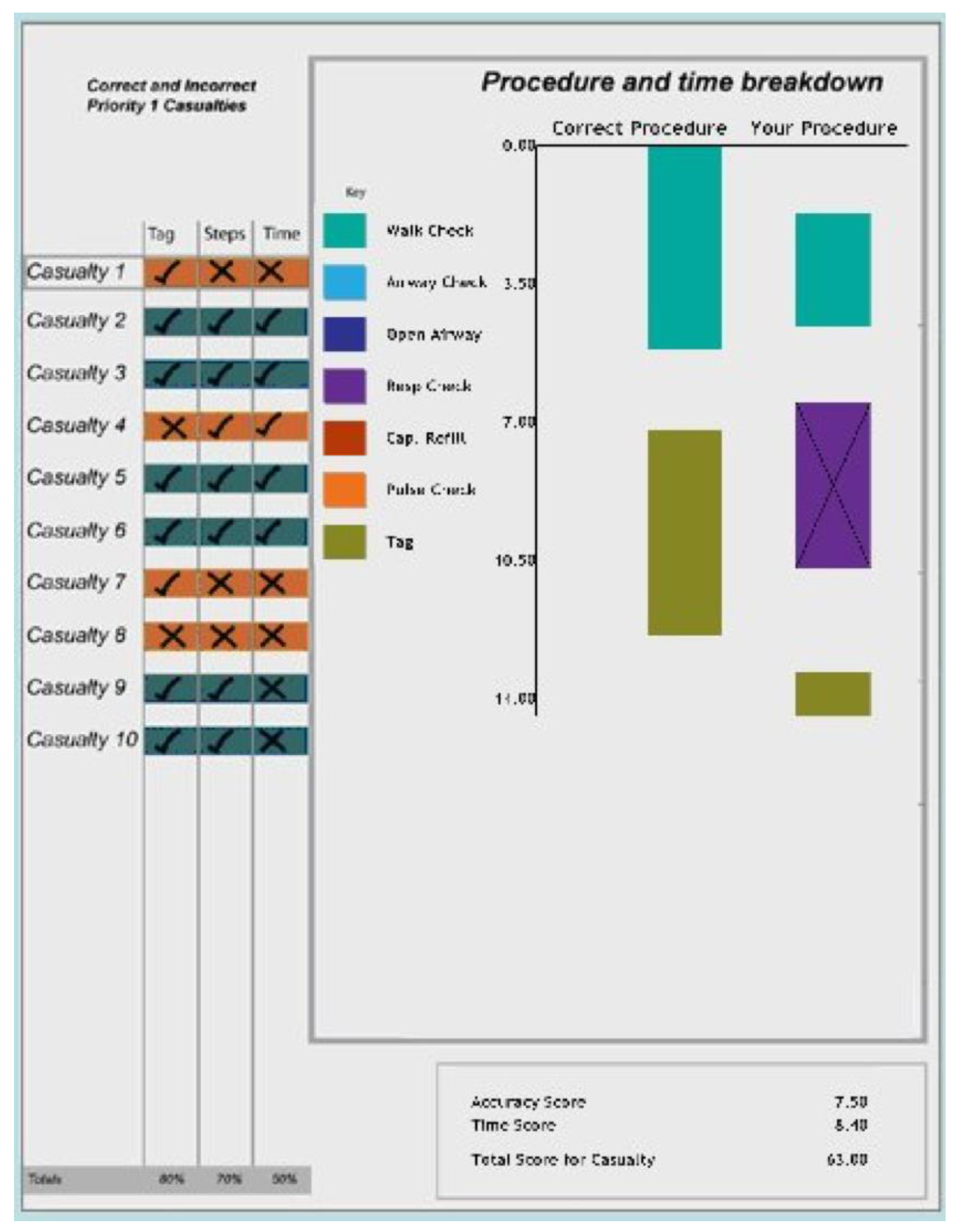

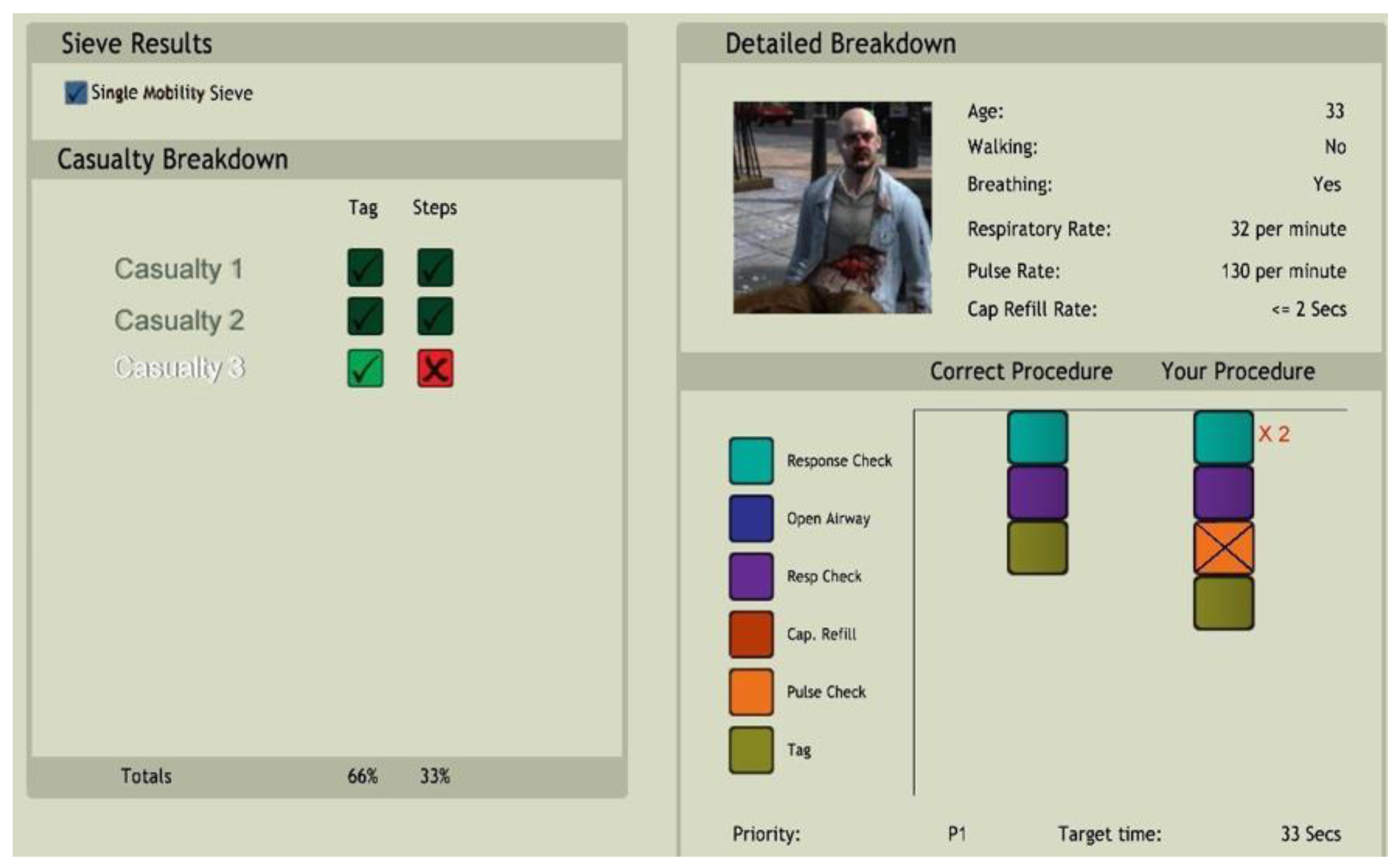

38], focusing on medical triage training. In the study, we compared the game version of a triage sieve-and-sort exercise with a card-sort version of the exercise. The game used 3D modelling of the scene and casualties. The evaluation used actors as casualties. The main feedback we received from participants in the pilot of the experiment was that they wanted more regular feedback. In the pilot version of the triage game, the participant sorted through ten victims before receiving any feedback (

Figure 1). The design was changed to three casualties before feedback was given (

Figure 2). What the researchers found was that feedback was needed by the participants immediately. The sooner it could be provided, the sooner that learning could be reflected upon (meta reflection) and reinforced. Research investigating the relationship of feedback timing to learning and performance reveals inconsistent findings [

5].

Our first research observation related to the representation dimension of the 4DF. The triage game presented feedback differently compared to traditional learning (e.g., it was more embedded and hidden than in traditional learning). The anonymisation of the feedback empowered rather than criticised the learner. In the first version of the pilot study, we included pages of feedback on every move taken, but direct feedback from the participants indicated they only wanted to know the relevant information. This led to our second observation: that feedback had to be presented in a way that participants could clearly understand at a glance. Importantly, the study found that “[p]articipants who used the revised feedback version performed significantly better (χ² = 16.44, p < 0.05) […] Therefore, this analysis posited that … predicted frequency (timing) and form (complexity) of feedback has an impact on learning outcomes” ([16], p. 50).
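To illustrate the kind of comparison that a chi-square statistic summarises, the following is a minimal sketch of a Pearson chi-square computation on a contingency table. The counts are hypothetical placeholders, since the underlying table and degrees of freedom are not reported here, and `chi_square_statistic` is an illustrative helper, not the analysis used in the study.

```python
def chi_square_statistic(table):
    """Pearson chi-square statistic for a contingency table (list of rows)."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    total = sum(row_totals)
    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count under independence of rows and columns.
            expected = row_totals[i] * col_totals[j] / total
            if expected == 0:
                raise ValueError("expected count of zero; table is degenerate")
            statistic += (observed - expected) ** 2 / expected
    return statistic


# HYPOTHETICAL counts, for illustration only:
# rows = feedback version (pilot, revised)
# columns = casualty tagged (incorrectly, correctly)
hypothetical_counts = [
    [30, 10],
    [10, 30],
]

statistic = chi_square_statistic(hypothetical_counts)

# For a 2x2 table (1 degree of freedom), the critical value at the
# 0.05 significance level is approximately 3.841.
CRITICAL_VALUE_DF1_ALPHA_05 = 3.841
significant = statistic > CRITICAL_VALUE_DF1_ALPHA_05
```

A larger statistic indicates a greater departure from what would be expected if the feedback version had no relationship with performance.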

Drawing on these findings, Dunwell et al. [16] developed the feedback model to support effective design and evaluation of feedback (Figure 3).

The feedback model takes two starting points. First, any learning is designed and developed using good pedagogical principles, including a consideration of the learner, the context of learning (including discipline), the way learning is (re)presented (e.g., face-to-face, online), and the learning theory used (e.g., experiential, exploratory). Second, once the learning has been designed and developed, it must be delivered. This involves agents (e.g., teacher, team of teachers, online, or in-game) interacting with the learner either directly or in a mediated way (computer mediation). Once the learning is designed, developed, and delivered, this model can be used to design, develop, or deliver feedback either in situ (within the learning experience, task, or activities) or after the learning (after-action review, debriefing, or reflection session). As with all good learning design, the importance of aligning learning objectives with assignment, assessment, and outcomes is central [39]. In addition, a specific implementation of the feedback model should be evaluated with learners and refined as necessary. Each variable in the feedback model is considered in turn below (see also the summary of main sources in Table 1):

Type of feedback. This variable of the feedback model is concerned with the type of feedback used (e.g., the level of support provided to the learner when errors are made). We have chosen to adopt Rogers’s model for the type of feedback given. As Shute [5] outlines, each has a place in learning; often, directive is more useful at the early stages of learning. In the later stages, facilitative may be more effective. Scaffolded feedback may include models, cues, prompts, hints, partial solutions, and direct instruction [5]. In line with Vygotsky [40], the higher the cognitive understanding of the learner, the less scaffolding is required as they build more detailed cognitive maps. The literature also indicates that the more specific the feedback the better, especially for novice learners. When selecting the type of feedback used, it is worth reflecting on the new approaches to learning design that are used widely by practitioners today. Learning design has become less about knowledge conveying and more about experience design [41]. One example of this tendency is “action mapping”, used widely by training practitioners [42]. The action mapping approach focuses on what people need to do rather than what they need to know. The practice activities are linked to a business performance goal agreed with the client and only the essential information needed to perform these activities is provided.

Content of feedback. This variable of the feedback model is concerned with the content. This should be relevant to achieving the learning objectives. In the pilot version of the feedback for the triage game, not all the content was essential to the learning. In the revised version, the feedback content was simplified so it only included essential information relevant to achieving the learning objectives.

The action mapping approach to instructional design helps ensure that learning content (including the feedback) is essential to perform the practice activities to achieve proficiency.

Format of feedback. This variable of the feedback model is concerned with the format of the feedback and the media used to deliver this (e.g., text, audio, or video). A detailed synthesis of 74 meta-analyses that included some information about feedback [4] demonstrated that the most effective forms of feedback are video, audio, or computer-assisted instructional feedback.

Frequency of feedback. This variable of the feedback model is concerned with timing and frequency. Shute ([5], p. 163) argues that researchers have evaluated immediate feedback and delayed feedback with inconclusive results as to which is better at supporting learning. The four dimensions of learning need to be considered, in particular, the learner preferences and type and context of learning. For example, a novice learner might need more immediate and regular feedback than an expert learner. The age of the learner is also a factor, with school-age students requiring more directive feedback than university students, who may benefit from delayed feedback, providing more time for cognitive independence to be developed. According to Shute [5], delayed feedback has been shown to be as effective as immediate feedback. Interestingly, field studies showed that immediate feedback worked more effectively, while laboratory studies found that delayed feedback produced better results ([26], quoted in Shute [5], pp. 165–166). Shute concludes that both feedback types have positive and negative outcomes depending on other variables (e.g., novice vs. expert). In Dunwell et al. [16], it was the participants who asked for more immediate feedback. As this was a field study, we feel that this is an interesting finding, and it also lends weight to the importance of iterating the feedback approach with participants. The frequency of feedback is also significant. Again, novice learners might require short feedback delivered regularly, while an expert learner might benefit more from fewer feedback interventions but possibly with more details given. This also depends on the type of learning activity or assignment provided. A shorter learning task would require proportionately less feedback than a longer and more complex learning task.

The remaining addition to the 4F model emerged while analysing the use case and is included here so that the model is described in one place:

Delivered by Agent is not a variable of the feedback model, but instead is the person or technology that presents and regulates feedback. Feedback may be delivered by an external agent [14] or directly from the task or educational technology. In higher education, external agents are most often typified as peer vs. faculty (e.g., [26,27]). In some contexts, technology may act as an agent; for example, if medical communication training is live, then feedback may be delivered by role play professionals, but in simulations, a simulated patient often delivers feedback [43]. The task may be a factor in the selection of the agent; for example, peer feedback has been found to be effective in developing teamwork [44]. The needs of the learner must be taken into account; for example, there is evidence that learners at lower levels may respond better to peer feedback, while more advanced learners benefit from faculty feedback [26]. Agents play a role in regulating feedback to match the needs of individual learners. This is an important skill for faculty [45,46]. Similarly, regulating feedback to performance is recommended practice for the design of educational games [47], which is often implemented through the design of levels with increasing degrees of challenge.

Table 1. Summary of key sources.

| 4F Dimension | Literature Sources |
|---|---|
| Type | Rogers [20], Vygotsky [40] |
| Content | Hattie [32], Shute [5] |
| Format | Ryan et al. [23], Lachner et al. [28], Johnson et al. [33], Kiili et al. [36] |
| Frequency | Shute [5], Schroth [29], Butler et al. [30], Johnson et al. [33] |
| Agent | Schmidt et al. [26], Topping [27], Donia et al. [44] |

It is noteworthy that the effectiveness of feedback does not rely solely upon any of these feedback variables or the effectiveness of the agent, but also on the nature of the task and ability of the learner ([5], p. 165). The feedback model (4F) is presented in Figure 3. The idea is that you conduct analysis (user research) to find out about the learner and the context that can inform the pedagogy and suitable representation, and this information feeds into the design of the feedback for each agent. For example, the analysis (user research) finds that the target audience are novices and lack confidence in the skill to be taught [learner characteristics]. This informs the feedback design (using the rubric in the feedback model), with greater consideration given to the timing for feedback (i.e., that feedback is given close to when the new knowledge/skill is introduced).

Figure 3 shows the four feedback variables: type, content, format, and frequency. We also show a link to evaluation, which refers to the iterations often used to strengthen feedback: stakeholder analysis through survey and focus group methods and analyses through data and evaluation research. This was derived through literature review findings (noted above and in [16]), outcomes of our research, and by adopting Rogers’s feedback model. Rogers’s model focuses on categories of feedback (evaluative, interpretive, supportive, probing, and understanding [20]).

We have used the four feedback variables (Figure 3) to create a grid (rubric) which can be used either retrospectively or in advance. A separate team applied the rubric to a use-case as a tool to help them reflect on the effectiveness of the feedback design. The use-case is a module, delivered to undergraduate business students in England, that is built around a business simulation game. In the following section, this case is described and discussed in terms of the feedback issues identified.
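One way to picture the grid is as a small data structure with one row per feedback agent and one column per feedback variable. The following is a minimal sketch under that assumption; the class name `FeedbackRubric`, its methods, and the example agent label are hypothetical and are not part of the published model.

```python
from dataclasses import dataclass, field

# The four feedback variables of the model (columns of the grid).
VARIABLES = ("type", "content", "format", "frequency")


@dataclass
class FeedbackRubric:
    """Hypothetical grid: one row per agent, one column per variable."""

    agents: list
    cells: dict = field(default_factory=dict)

    def record(self, agent, variable, note):
        """Store a design note or observation in one cell of the grid."""
        if agent not in self.agents:
            raise KeyError(f"unknown agent: {agent}")
        if variable not in VARIABLES:
            raise KeyError(f"unknown variable: {variable}")
        self.cells[(agent, variable)] = note

    def gaps(self):
        """List the (agent, variable) cells that have not been considered yet."""
        return [
            (agent, variable)
            for agent in self.agents
            for variable in VARIABLES
            if (agent, variable) not in self.cells
        ]


# Example: designing in advance for novice learners who lack confidence.
rubric = FeedbackRubric(agents=["tutor"])
rubric.record(
    "tutor",
    "frequency",
    "give feedback close to when the new knowledge/skill is introduced",
)
remaining = rubric.gaps()
```

Listing the unfilled cells is one possible way to prompt a design team to consider every variable for every agent, whether the rubric is applied in advance or retrospectively.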

4. Use-Case: Feedback in a Market Simulation Game

This use-case concerns a second-year university module, which used an off-the-shelf market simulation game. The average response to the standard end-of-module survey question “I have received helpful feedback on my work (verbal or written)” was 2.6 (on a 5-point Likert scale where 1 is ‘definitely disagree’ and 5 is ‘definitely agree’, n = 87, 8.8% of the cohort). It is known that students are less satisfied with feedback than other aspects of their experience, and the standard question prompts the students to only consider “verbal or written” feedback, thereby predisposing them to ignore other sources of feedback, particularly the game. However, the module team wished to articulate and reflect on the feedback design and used the feedback model as a framework for reflection within a continuous improvement process.

The module was taught as an experiential learning experience in which business school students were expected to synthesise and apply knowledge learnt in previous modules to challenges in the game. The content comprised a small number of technical lectures, clarifying decisions to be made in the game in terms of prior learning on marketing, operations, finance, etc., and playing the game. The game used was an educational simulation of a mobile phone market. During the module, teams of students (companies) played four rounds of the game with rival teams in each class of about 35 students competing in their own unique simulated market. Teams were assigned randomly and included students who were enrolled in a range of business school programmes, including accounting, information systems, law, and general management.

The first two rounds of the game were played twice, with the first iteration being treated as two practice rounds. Thus, a total of six rounds were played. The game provided summative feedback, in the form of results at the end of each round. Students could also access rich feedback on their performance in the form of typical finance, marketing, and operational data. This feedback is a simplified version of real-world business data, providing a high-fidelity game experience. Success depends on students learning how their business decisions affect the business results. For example, an elementary lesson is that setting the price of a low-tech phone high, compared to fancier phones offered by your competitors, will reduce sales.

Classes were held in alternate weeks to offer face-to-face time with peers and tutors. This provided students with opportunities to gather peer feedback and tutor feedback to help interpret the game results. The simulation was accessed via a web interface which was available during the module, and students were encouraged to interact with the game outside of tutorial time, alone or with other team members.

The feedback model was articulated by identifying each agent that provided feedback to the learners and summarising the feedback the agent provided according to the variables of the model (Table 2). This was an orthogonal dimension to the original proposal for the model. There were three agents involved. The first is the game/simulation which provides feedback on performance in the form of business data. Second, there are the tutors, who provide formative feedback during class time and in office hours. The tutors also provide summative feedback on a piece of reflective writing, which was the main assessment for the module. The third agent is peer interaction, principally with other team members. The module encouraged group discussion of the decisions, with students required to sit at team tables in class, and encouraged to meet and communicate outside class to discuss the data and decisions.

Next, the team reflected on the different variables in the feedback model for this use case:

Type of feedback. The competitive nature of the game meant that groups received comparative evaluation of their performance. For the groups that were not “winning”, tutors observed that this could produce a negative effect. This is where feedback from tutors became an essential part of the learning design. Tutors typically took a Socratic approach, asking groups to verbalise their decision processes, diagnosing their level and regulating the game feedback by pointing learners towards parts of the business data relevant to their next decisions and explaining it at a level they could understand. As a result, tutors’ formative feedback was mainly interpretive and probing. In parallel, students were receiving peer feedback, mostly from members of their team, but also from friends on the course; based upon observations in class, students could both be supportive of each other and evaluative. Behavioural deficiencies were critiqued, such as team members who did not contribute or stick to deadlines. The last type of feedback students received was the summative feedback on their written assignment. This was typical assessment-focused feedback of the type which is often perceived by students as the most important feedback they receive because it is associated with a mark.

Content of feedback. The framework provided an opportunity to evaluate how the feedback provided aligned with the learning objectives and, thus, the learning design of the module. It was found that the feedback types available aligned with the objective of the module well.

Format of feedback. Feedback was provided in a range of written and verbal formats, as summarised in Table 2.

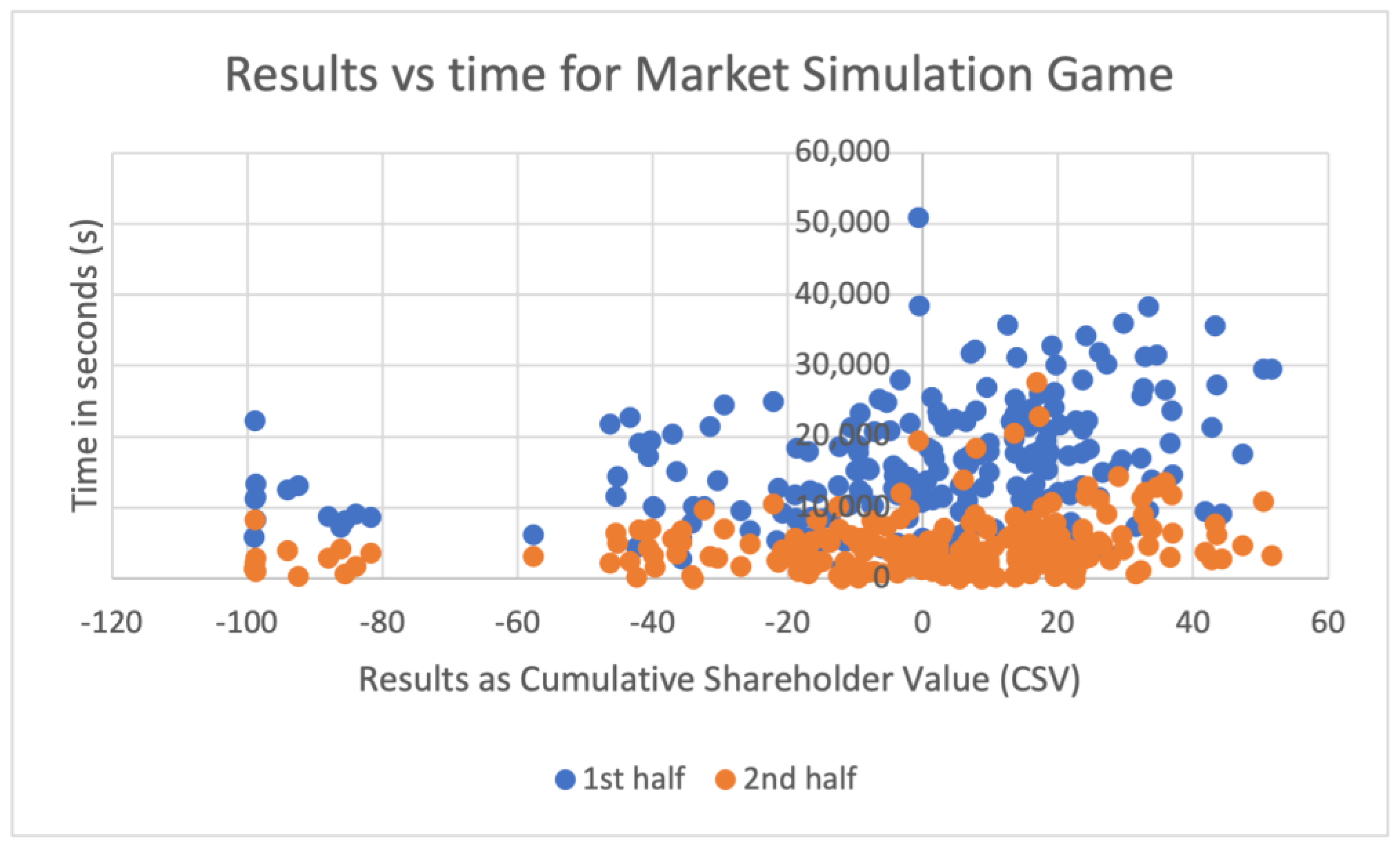

Frequency of feedback. The simulation game system provided access to data on the amount of time students spent logged into the system. This provided a proxy measurement of the time teams spent obtaining feedback from the game, in and out of class. There were n = 986 students enrolled in the cohort, who competed in 30 simulated markets, with between five and seven teams (companies) in each simulated market. Data were analysed on a per-team basis because teams were encouraged to sit together to work through the simulation; hence, logged time for a particular student is not a good measure of their time spent interpreting feedback and making decisions. It was found that students typically spent longer interpreting the data in the first half of the module than in the second half (see Figure 4 and Figure 5). Furthermore, there is a correlation (Pearson’s r = 0.31, p < 0.05) between final round CSV (Cumulative Shareholder Value) result and time logged in the simulation during the first half of the module (i.e., practice rounds 1 and 2, and round 1). No such correlation at the 0.05 level was found for the second half of the module (rounds 2, 3 and 4). This suggests that novice students were empowered by the game format to seek more feedback in the early stages, but could self-regulate the amount of time they spent when they became more expert. Tutor formative feedback was delivered in class for most students and was available bi-weekly. The tutor summative feedback was delivered once at the end of the module. The frequency of peer feedback could not be measured, especially as much of it took place in the students’ own time and through secure messaging services.
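The per-team analysis described above can be illustrated with a minimal sketch. It assumes that login records are available as `(team_id, student_id, minutes)` rows and that each team has a single final-round CSV value; the function names, the row layout, and all numbers below are hypothetical and are not the study data. The sketch sums logged time per team and then computes Pearson's r, together with the t statistic that would be compared against a t distribution with n − 2 degrees of freedom.

```python
from collections import defaultdict
from math import sqrt


def team_totals(log_rows):
    """Sum logged minutes per team from (team_id, student_id, minutes) rows."""
    totals = defaultdict(float)
    for team_id, _student_id, minutes in log_rows:
        totals[team_id] += minutes
    return dict(totals)


def pearson_r(xs, ys):
    """Pearson product-moment correlation of two equal-length samples."""
    n = len(xs)
    if n != len(ys) or n < 3:
        raise ValueError("need two equal-length samples with at least 3 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("a constant sample has no defined correlation")
    return sxy / sqrt(sxx * syy)


def t_statistic(r, n):
    """t statistic for H0: no correlation, with n - 2 degrees of freedom."""
    if abs(r) >= 1.0:
        raise ValueError("t statistic is undefined for a perfect correlation")
    return r * sqrt((n - 2) / (1 - r * r))


# Illustrative, made-up rows: (team_id, student_id, minutes logged in first half).
first_half_log = [
    ("A", "s1", 120.0), ("A", "s2", 60.0),
    ("B", "s3", 90.0),
    ("C", "s4", 200.0), ("C", "s5", 40.0),
    ("D", "s6", 30.0),
]
# Illustrative, made-up final-round CSV per team.
final_csv = {"A": 105.0, "B": 98.0, "C": 120.0, "D": 90.0}

minutes_by_team = team_totals(first_half_log)
teams = sorted(final_csv)
r = pearson_r([minutes_by_team[t] for t in teams], [final_csv[t] for t in teams])
print(f"r = {r:.2f}, t = {t_statistic(r, len(teams)):.2f}, df = {len(teams) - 2}")
```

Aggregating before correlating mirrors the reasoning in the text: because team members shared a screen, an individual student's login time says little about how long that student actually spent with the game feedback.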

Delivered by Agent. Three types of agents delivered feedback: the game, tutors, and peers. Two key points from the above analysis are that tutors had an important role in regulating the game feedback, and that teamwork issues were a common theme in peer feedback.

Analysis of the use-case using the feedback framework has made it apparent that, contrary to indications from the student survey, the feedback provided to students is rich across all variables in the model. Furthermore, the feedback aligns well with the learning objectives. These are strong foundations on which to build. One weakness identified is that the type and amount of tutor feedback needed to scaffold understanding of the business data, together with the observed frequency of seeking feedback from the game at early stages, suggest that the task level was not well balanced against the perceived level of skill of the learners at the beginning of the game. In order to obtain a better balance between the learner’s perceived skills and the task, while attaining the same level of learning, a game which can increase the complexity of the business data round by round could be identified. This would allow the learners to build cognitive independence in early rounds. The competitive nature of the game created comparison between peers, which could have a negative effect on learners and lead to their disengagement. Given that the nature of a competitive marketplace is that most players do lose, many students inevitably received negative evaluative feedback from the game. Negative effects could be mitigated by avoiding use of leaderboards and instead drawing learners’ attention to improvement and good decisions. Lessons could be learnt here from entertainment games with mechanisms for giving small but frequent rewards, such as points, badges, and automated praise for good play. Finally, it can be recommended that the module team develop learners’ feedback literacy, including communicating to the students that the business data is feedback and that their aim is to improve.

5. Discussion and Conclusions

In this section, we reflect on the assessment of the 4F feedback model and articulate its contribution to enabling meaningful and purposeful feedback through interactive multimedia systems such as games. Recommendations are made to aid the design of feedback for activity-centred learning, of the kind explored in the examples in this paper. Future work is outlined.

It became clear, from the assessment of the business game case study, that the framework was helpful in supporting and reflecting upon the design of feedback, but not yet complete. The module team, who carried out the use-case assessment, identified a need to examine the agent, the human or technological actor that provides the feedback. Therefore, the revised feedback model (Section 3) acknowledges the role of the provider of the feedback and areas where the agent can be aligned with the learner needs in designing feedback. This refinement of the model makes a novel contribution to the 4DF model and our understanding of factors to be considered in the design of feedback. Furthermore, the inclusion of an agent dimension in the model is well supported by the literature, and our observations align with the literature findings on faculty agents regulating feedback and the effectiveness of peer agents in delivering feedback on teamwork behaviours.

Table 2 summarises the main assessment outcome of the analysis of the business game use-case. This is presented here as a rubric which could be used for both the design of feedback and the assessment of it. The main aim is to provide insights into current modes of feedback, to present opportunities for discussion, reflection, and iteration of feedback with students and/or teaching practitioners. There are few models and tools available to practitioners to support their selection, use, and evaluation of feedback. The model and rubric together provide a conceptual underpinning and a tool to address this gap. Practitioners might use the rubric to consider the four feedback variables, plus the delivery agent, with respect to feedback design practices, the literature, and their own experience, and should be able to iterate these approaches over time with courses and activities to strengthen student performance outcomes.
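As a rough illustration of how such a rubric might be held in a design tool, the sketch below records one entry per feedback source, with the four variables plus the delivery agent as fields. The class name, field names, and helper function are hypothetical. The entries paraphrase only what is stated in the use-case text and do not reproduce Table 2; the format field is left unset because the specific formats are given only in Table 2.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass(frozen=True)
class FeedbackEntry:
    """One rubric row: the four feedback variables plus the delivery agent."""
    agent: str
    feedback_type: str
    content: str
    frequency: str
    fmt: Optional[str] = None  # None means "not stated in the text; see Table 2"


# Entries paraphrased from the business game use-case description.
RUBRIC = [
    FeedbackEntry("game", "comparative evaluation",
                  "business data on team performance",
                  "available whenever teams logged in"),
    FeedbackEntry("tutor", "formative: interpretive and probing",
                  "business data relevant to the next decisions",
                  "in class, available bi-weekly"),
    FeedbackEntry("tutor", "summative: assessment-focused",
                  "reflective writing assignment",
                  "once, at the end of the module"),
    FeedbackEntry("peer", "supportive and evaluative",
                  "decisions and teamwork behaviours",
                  "not measured"),
]


def unfilled(rubric):
    """Return (agent, field name) pairs that still need a design decision."""
    gaps = []
    for entry in rubric:
        for f in fields(entry):
            if getattr(entry, f.name) is None:
                gaps.append((entry.agent, f.name))
    return gaps


for agent, field_name in unfilled(RUBRIC):
    print(f"{agent}: '{field_name}' still to be specified")
```

Listing unfilled fields is one simple way a practitioner could use the rubric iteratively: each gap marks a feedback variable that has not yet been considered for a given agent.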

The business game use-case deployed more than one feedback type, content, format, and frequency, and three different agents. This variety provides a range of opportunities for improving the design of feedback according to learner needs. For example, should peer feedback become a more explicit process, guiding learners to reflect on their own role as feedback agents? Could the game feedback be designed in such a way that the students can more easily see how to formulate actions to improve their performance? An example of this kind of response can be found in the triage game example. The researchers found that feedback had to be presented in a way that participants could clearly understand at a glance, and redesigning the feedback delivered by the system resulted in significant improvements in their performance.

In the triage training evaluation, the research team found that learners wanted more regular feedback, and the feedback was needed immediately. The importance of regulating the frequency of feedback also emerged in the business game use-case, which found that university students sought more feedback in the early rounds of the game and students who actively engaged with feedback early on ended up performing better.

From this assessment of the 4F feedback model, the evaluation of the triage training game, the use-case, and the literature review, we make the following recommendations for aiding in the design of student-centred and activity-based feedback:

The format of feedback (e.g., dashboards) is critical in supporting learners, but we found variations in how individuals and groups responded to it.

The frequency at which feedback is given is critical. However, the literature and our findings are not in agreement about whether it should be immediate or delayed or both [5]. More research is needed.

Frequent feedback in the early part of the course/module seems to be valuable to scaffold learning. Ideally, it should be given in parallel with the main tasks and activities being learnt.

The content of feedback should be presented in a clear, simple, and usable way for learners to understand, with clear pointers to improvements needed.

The agent that delivers feedback has an important role in regulating feedback, and agent type may sometimes need to be aligned to the content of feedback.

Ideally, feedback should be provided in multiple configurations to meet the needs of individual learners and address different learning outcomes.

As we have found, there are pedagogic and learning gains according to how we give feedback, when we give feedback, and what the feedback is, and these may have a significant impact upon learner efficacy, autonomy, motivation, and performance. More research is needed to understand the shift implied by digital feedback and game-based feedback modes.

The 4F feedback model provides a conceptual underpinning based upon constructivist theory and informed by practice. We recognise that a full validation would be beneficial, but the case study presented here suggests that the model supports practitioner reflection on feedback design. While the examples presented here concern game-based learning systems, the model should be generalisable to broader learning design instantiations, and further validation should examine other delivery modes. Future work will, therefore, seek to develop a protocol and guidelines for deployment of the model, consider the development of feedback design tools and rubrics for practitioners, consider the importance of feedback literacy, and develop more case studies to evaluate which types, timing, and modes of feedback communication are the most effective in different contexts and with different learners.

Author Contributions

Conceptualisation, S.d.F., V.U., K.K., M.N., P.P., P.L., I.D., S.A., S.J. and K.S.; methodology, S.d.F., V.U., K.K., M.N., P.P., P.L., I.D., S.A., S.J. and K.S.; validation, S.d.F., V.U., K.K., M.N., P.P., P.L., I.D., S.A., S.J. and K.S.; formal analysis, S.d.F., V.U., K.K. and M.N.; investigation, P.P., P.L. and V.U.; resources, P.P. and P.L.; data curation, V.U. and K.K.; writing—original draft preparation, S.d.F., V.U., K.K., M.N., P.P., P.L., I.D., S.A., S.J. and K.S.; writing—review and editing, P.P. and P.L. All authors have read and agreed to the published version of the manuscript.

Funding

This research is partly funded by the UK Department of Trade and Industry Technology Program through the Serious Games-Engaging Training Solutions Project (SG-ETS), whose partners included Birmingham, London, and Sheffield Universities, Trusim, and VEGA Group PLC, and by the Strategic Research Council (SRC) (Finland).

Conflicts of Interest

The authors declare no conflict of interest.

References

- Petridis, P.; Traczykowski, L. Introduction on Games, Serious Games, Simulation and Gamification; Edward Elgar Publishing: Cheltenham, UK, 2021; pp. 1–13.

- Ovando, M.N. Constructive feedback: A key to successful teaching and learning. Int. J. Educ. Manag. 1994, 8, 19–22.

- Schneider, M.; Preckel, F. Variables associated with achievement in higher education: A systematic review of meta-analyses. Psychol. Bull. 2017, 143, 565–600.

- Hattie, J.; Timperley, H. The Power of Feedback. Rev. Educ. Res. 2007, 77, 81–112.

- Shute, V.J. Focus on Formative Feedback. Rev. Educ. Res. 2008, 78, 153–189.

- Nicol, D.; Thomson, A.; Breslin, C. Rethinking feedback practices in higher education: A peer review perspective. Assess. Eval. High. Educ. 2014, 39, 102–122.

- Carless, D.; Boud, D. The development of student feedback literacy: Enabling uptake of feedback. Assess. Eval. High. Educ. 2018, 43, 1315–1325.

- Mortara, M.; Catalano, C.; Bellotti, F.; Fiucci, G.; Houry-Panchetti, M.; Petridis, P. Learning cultural heritage by serious games. J. Cult. Herit. 2010, 15, 318–325.

- Hattie, J.; Gan, M. Instruction based on feedback. In Handbook of Research on Learning and Instruction; Routledge: London, UK; New York, NY, USA, 2011; pp. 249–271.

- Molloy, E.; Boud, D.; Henderson, M. Developing a learning-centred framework for feedback literacy. Assess. Eval. High. Educ. 2020, 45, 527–540.

- Lameras, P.; Arnab, S. Power to the Teachers: An Exploratory Review on Artificial Intelligence in Education. Information 2022, 13, 14.

- Williams, J.; Kane, D. Assessment and Feedback: Institutional Experiences of Student Feedback, 1996 to 2007. High. Educ. Q. 2009, 63, 264–286.

- Winstone, N.E.; Ajjawi, R.; Dirkx, K.; Boud, D. Measuring what matters: The positioning of students in feedback processes within national student satisfaction surveys. Stud. High. Educ. 2022, 47, 1524–1536.

- Kluger, A.N.; DeNisi, A. The effects of feedback interventions on performance: A historical review, a meta-analysis, and a preliminary feedback intervention theory. Psychol. Bull. 1996, 119, 254–284.

- de Freitas, S.; Oliver, M. How can exploratory learning with games and simulations within the curriculum be most effectively evaluated? Comput. Educ. 2006, 46, 249–264.

- Dunwell, I.; de Freitas, S.; Jarvis, S. Four-dimensional consideration of feedback in serious games. In Digital Games and Learning; de Freitas, S., Maharg, P., Eds.; Continuum Publishing: London, UK, 2011; pp. 42–62.

- Lameras, P.; Arnab, S.; de Freitas, S.; Petridis, P.; Dunwell, I. Science teachers’ experiences of inquiry-based learning through a serious game: A phenomenographic perspective. Smart Learn. Environ. 2021, 8, 7.

- Yang, K.-H. Learning behavior and achievement analysis of a digital game-based learning approach integrating mastery learning theory and different feedback models. Interact. Learn. Environ. 2017, 25, 235–248.

- QAA—Quality Assurance Agency for Higher Education. UK Quality Code for Higher Education: Part A; QAA: Gloucester, UK, 2014.

- Rogers, C. Client-Centered Therapy: Its Current Practice, Implications and Theory; Constable: London, UK, 1951.

- Boud, D.; Molloy, E. Feedback in Higher and Professional Education: Understanding It and Doing It Well; Routledge: London, UK; New York, NY, USA, 2013.

- Dawson, P.; Henderson, M.; Mahoney, P.; Phillips, M.; Ryan, T.; Boud, D.; Molloy, E. What makes for effective feedback: Staff and student perspectives. Assess. Eval. High. Educ. 2019, 44, 25–36.

- Ryan, T.; Henderson, M.; Phillips, M. Feedback modes matter: Comparing student perceptions of digital and non-digital feedback modes in higher education. Br. J. Educ. Technol. 2019, 50, 1507–1523.

- Balcazar, F.; Hopkins, B.L.; Suarez, Y. A Critical, Objective Review of Performance Feedback. J. Organ. Behav. Manag. 1985, 7, 65–89.

- Latham, G.P.; Locke, E.A. Self-regulation through goal setting. Organ. Behav. Hum. Decis. Process. 1991, 50, 212–247.

- Schmidt, H.; van der Arend, A.; Kokx, I.; Boon, L. Peer versus staff tutoring in problem-based learning. Instr. Sci. 1994, 22, 279–285.

- Topping, K.J. The effectiveness of peer tutoring in further and higher education: A typology and review of the literature. High. Educ. 1996, 32, 321–345.

- Lachner, A.; Burkhart, C.; Nückles, M. Formative computer-based feedback in the university classroom: Specific concept maps scaffold students’ writing. Comput. Hum. Behav. 2017, 72, 459–469.

- Schroth, M.L. The effects of delay of feedback on a delayed concept formation transfer task. Contemp. Educ. Psychol. 1992, 17, 78–82.

- Butler, A.C.; Karpicke, J.D.; Roediger, H.L., III. The effect of type and timing of feedback on learning from multiple-choice tests. J. Exp. Psychol. Appl. 2007, 13, 273–281.

- Boyle, E.A.; Hainey, T.; Connolly, T.M.; Gray, G.; Earp, J.; Ott, M.; Lim, T.; Ninaus, M.; Ribeiro, C.; Pereira, J. An update to the systematic literature review of empirical evidence of the impacts and outcomes of computer games and serious games. Comput. Educ. 2016, 94, 178–192.

- Hattie, J. Know Thy Impact. In On Formative Assessment: Readings from Educational Leadership (EL Essentials); Marge Scherer, A., Ed.; ASCD: Alexandria, VA, USA, 2016; pp. 36–45.

- Johnson, C.I.; Bailey, S.K.T.; Buskirk, W.L.V. Designing Effective Feedback Messages in Serious Games and Simulations: A Research Review. In Instructional Techniques to Facilitate Learning and Motivation of Serious Games; Springer: Cham, Switzerland, 2017.

- Pagulyan, R.; Keeker, K.; Fuller, T.; Wixon, D.; Romero, R. The Human-Computer Interaction Handbook: Fundamentals, Evolving Technologies and Emerging Applications, 2nd ed.; User Centered Design in Games; Sears, A., Jacko, J., Eds.; Lawrence Erlbaum Associates: Mahwah, NJ, USA, 2007; pp. 741–790.

- Csikszentmihalyi, M. Flow: The Psychology of Optimal Experience; HarperCollins: New York, NY, USA, 1990.

- Kiili, K.; Lainema, T.; de Freitas, S.; Arnab, S. Flow framework for analyzing the quality of educational games. Entertain. Comput. 2014, 5, 367–377.

- Arnab, S. Game Science in Hybrid Learning Spaces; Routledge Taylor & Francis Group: New York, NY, USA; Abingdon, UK, 2020.

- Knight, J.; Carly, S.; Tregunna, B.; Jarvis, S.; Smithies, R.; de Freitas, S.; Mackway-Jones, K.; Dunwell, I. Serious gaming technology in major incident triage training: A pragmatic controlled trial. Resusc. J. 2010, 81, 1174–1179.

- Biggs, J. Enhancing teaching through constructive alignment. High. Educ. 1996, 32, 347–364.

- Vygotsky, L.S. Thinking and Speech; Plenum: New York, NY, USA, 1987.

- de Freitas, S. Education in Computer Generated Environments; Routledge: London, UK; New York, NY, USA, 2014.

- Moore, C. Map it: The Hands-on Guide to Strategic Training Design; Montesa Press, 2017.

- Bouter, S.; van Weel-Baumgarten, E.; Bolhuis, S. Construction and validation of the Nijmegen Evaluation of the Simulated Patient (NESP): Assessing simulated patients’ ability to role-play and provide feedback to students. Acad. Med. 2013, 88, 253–259.

- Donia, M.B.L.; O’Neill, T.A.; Brutus, S. The longitudinal effects of peer feedback in the development and transfer of student teamwork skills. Learn. Individ. Differ. 2018, 61, 87–98.

- Brookhart, S.M. Tailoring feedback. Educ. Dig. 2011, 76, 33.

- Wiggins, G. Seven keys to effective feedback. Educ. Leadersh. 2012, 70, 10–16.

- Linehan, C.; Kirman, B.; Lawson, S.; Chan, G. Practical, appropriate, empirically-validated guidelines for designing educational games. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, Vancouver, BC, Canada, 7–12 May 2011; pp. 1979–1988.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).