1. Introduction

With the continuous advancement of industrial technologies, rotating machinery has rapidly evolved toward larger scale, greater complexity, and enhanced intelligence. As a core component supporting the shaft, rolling bearings directly determine the operational reliability and safety of the entire system [1,2]. In practical service conditions, bearings frequently operate under high loads, elevated speeds, and corrosive environments, while also being subjected to the combined effects of severe vibration and shock, which accelerate their degradation [3,4]. Research indicates that approximately 30% of rotating-machinery failures originate from bearing damage [5,6]. Bearing faults not only lead to unplanned downtime and maintenance, thereby reducing production efficiency, but can also precipitate serious safety incidents, resulting in substantial economic and societal losses. Hence, developing scientifically rigorous models for remaining useful life (RUL) prediction of rolling bearings, coupled with real-time monitoring for dynamic condition assessment, holds both significant theoretical value and practical engineering importance [7,8].

Existing approaches to bearing RUL prediction can be broadly categorized into three classes: physics-based methods, statistical modeling techniques, and data-driven strategies [9]. Physics-based models depend on explicit degradation mechanisms, expert rules, and empirical knowledge, which limits their generality and applicability [10,11]. Statistical methods, while easy to implement and interpret, typically capture only the common degradation patterns among like systems, yielding narrow applicability [12]. In contrast, data-driven RUL prediction models have attracted considerable attention in recent years, driven by advances in sensor technology and the proliferation of industrial data. Deep learning, in particular, offers adaptive feature extraction and strong generalization capabilities, making it well-suited for modern machinery characterized by massive data volumes, complex structures, and numerous parameters [13].

Shallow machine learning–based methods include statistical regression [14], support vector machines [15], and neural networks [16]. These methods are straightforward to implement and computationally efficient; however, they rely heavily on manual feature engineering and struggle to capture complex nonlinear degradation trends, limiting their generalization to diverse operating conditions.

To address this, researchers introduced convolutional neural networks (CNNs) to learn spatial representations directly from data. For instance, Sateesh et al. [17] transformed vibration signals into images for CNN-based feature extraction, improving prediction accuracy; however, this approach mainly focuses on spatial textures and lacks direct modeling of temporal dependencies inherent in time series. Zhu et al. [18] proposed a multi-scale CNN to capture both global and local spatial features, yet CNNs inherently struggle to model sequential patterns over time, which constrains their effectiveness for long-term degradation prediction.

To overcome temporal modeling limitations, recurrent neural networks (RNNs) and Long Short-Term Memory (LSTM) networks have been widely used. Wang et al. [19] combined a convolutional autoencoder (CAE) with LSTM to enhance sequential modeling; however, standard unidirectional LSTM can only use past information, lacking awareness of future context, which may reduce accuracy under complex degradation patterns. Chang et al. [20] optimized LSTM hyperparameters to improve performance, but hyperparameter tuning can be computationally intensive and dataset-specific. Dong et al. [21] integrated multi-channel CNN features with bidirectional LSTM (Bi-LSTM) to capture richer temporal context, and Wang et al. [22] employed separable convolutions with residual connections to deepen feature hierarchies. While these improvements strengthen temporal modeling, they increase model complexity and computational cost, which can hinder real-time applications.

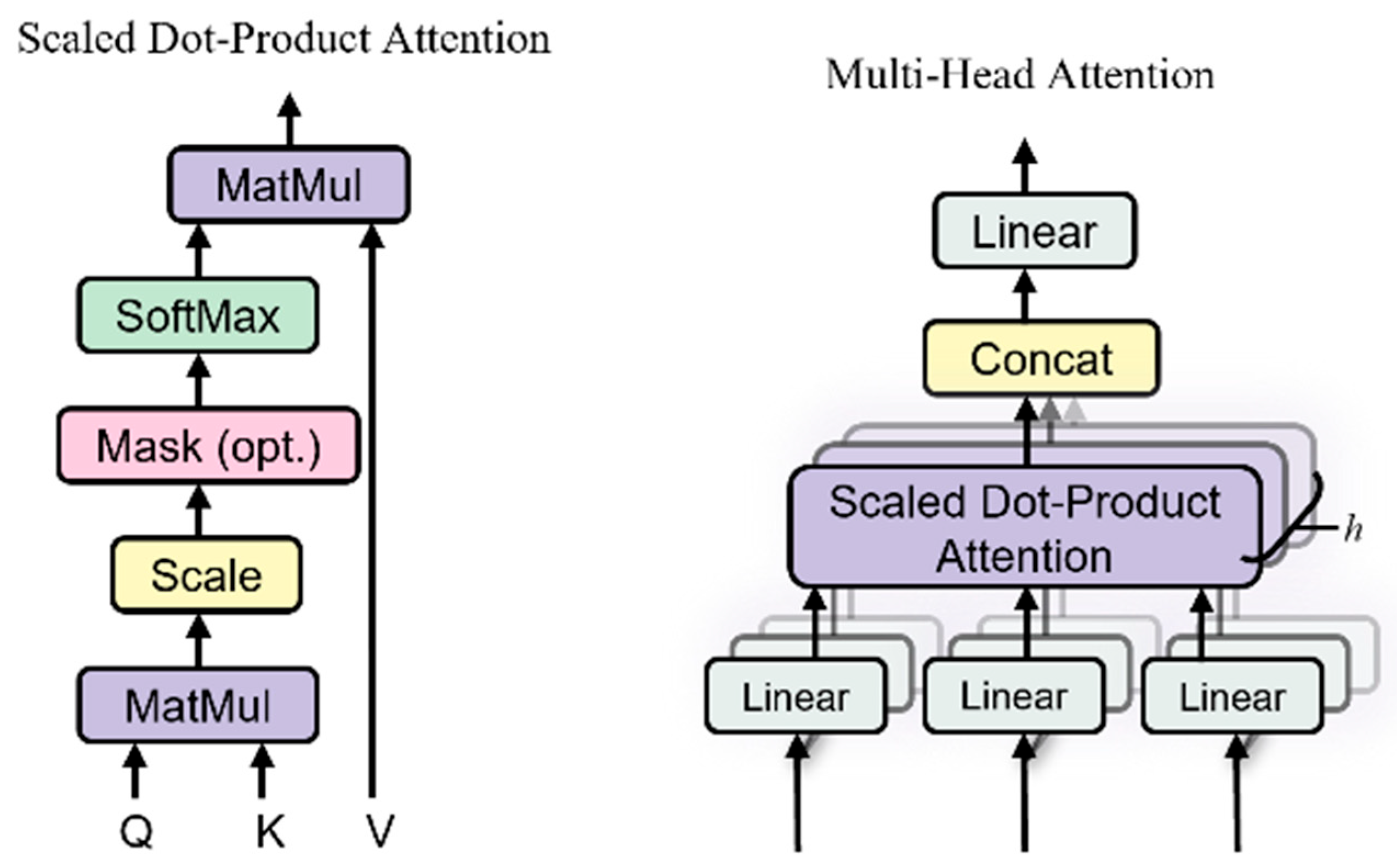

More recently, the Transformer architecture [23] has been adopted for its ability to capture global dependencies and enable parallel computation, leading to faster training. Zhou et al. [24] applied Transformers to model long-range feature correlations, achieving accurate RUL predictions; however, Transformers typically require large training datasets to avoid overfitting and may underperform in extracting fine-grained local degradation details. To address this, Lu et al. [25] combined multi-wavelet convolution with Transformers to balance local and global feature extraction, and Tang et al. [26] designed a parallel structure integrating temporal convolution networks with Transformer modules, further improving stability. However, this design increases model complexity and parameter size, leading to higher computational cost and potential challenges for deployment in real-time industrial applications.

In summary, while these methods each offer advantages, they share concrete limitations: reliance on handcrafted or shallow feature extraction, difficulty balancing local transient features and global degradation trends, limited sensitivity to early fault evolution, and potential challenges in generalizing to noisy or highly variable real-world environments.

In addition to these deep learning architectures, Empirical Mode Decomposition (EMD) and its enhanced variants (e.g., EEMD, CEEMDAN) have been increasingly applied in recent years to extract multi-scale intrinsic mode functions (IMFs) from non-stationary vibration signals for bearing RUL prediction. For instance, Peng et al. [27] employed EMD to derive robust RMS indicators for adaptive failure thresholding; Anil Kumar et al. [28] utilized non-parametric EEMD to extract weak fault features under noisy conditions; and Zhang et al. [29] integrated CEEMDAN with a hybrid CNN–LSTM framework to improve prediction accuracy. While these studies and Ref. [30] demonstrate the value of multi-scale feature extraction and deep learning architectures, there remains limited systematic exploration of how to effectively integrate EMD-derived multi-scale degradation features with hybrid networks that combine Bi-LSTM and Transformer. Such integration could jointly capture local transient behaviors and long-term global dependencies, further enhancing RUL prediction robustness and accuracy.

In this study, standard EMD is chosen over its improved variants mainly because of its computational simplicity and lower parameter sensitivity, which makes it suitable for small-to-medium-scale or laboratory datasets. Although EEMD and CEEMDAN can better mitigate mode mixing, they often introduce additional noise, require complex parameter tuning, and substantially increase computational cost. Because this study uses publicly available laboratory datasets, where signal quality is relatively high and noise is limited, standard EMD is sufficient to extract accurate and interpretable multi-scale degradation features for subsequent prediction, while avoiding unnecessary computational overhead.

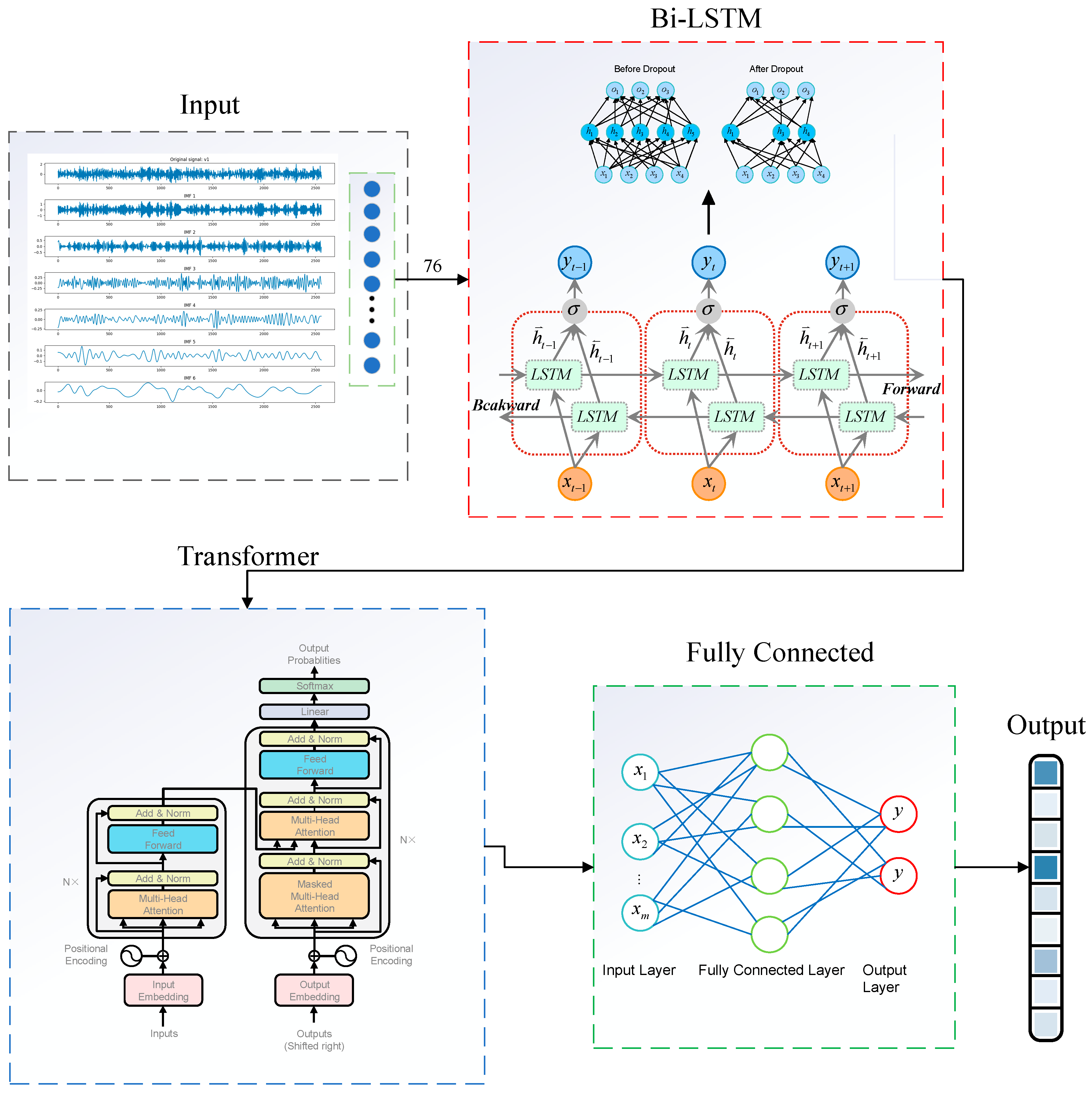

To overcome the challenges of capturing local dynamic features and modeling global dependencies in rolling-bearing RUL prediction, and to address the high dimensionality, noise interference, and feature modeling difficulties inherent in bearing vibration signals, this paper proposes an RUL prediction method based on EMD and a hybrid Transformer–Bi-LSTM architecture. First, EMD adaptively decomposes the non-stationary vibration signal into a series of IMFs. Time-domain statistical indicators (e.g., kurtosis, energy, entropy) are then computed from each IMF to form representative, multi-scale degradation features. To reduce redundancy and enhance feature compactness, an autoencoder is employed for feature dimensionality reduction and reconstruction, extracting key sensitive features for subsequent prediction. The degradation features are first input to a Bi-LSTM network to model local temporal dependencies; the Bi-LSTM outputs are then fed into a Transformer module, which employs multi-head self-attention to capture global sequence relationships and reinforce perception of long-term degradation trends. During training, feature normalization is applied to improve adaptability across operating conditions, mean squared error (MSE) serves as the loss function, and the Adam optimizer updates model parameters. After training, the model is used to predict RUL on test data, and the prediction results are compared with true RUL values to evaluate accuracy and generalization.

The overall framework of this study is illustrated in Figure 1. The main contributions are as follows:

(1) We propose an EMD-based decomposition and statistical-feature construction method that effectively captures multi-scale degradation information from non-stationary signals.

(2) We develop a hybrid modeling architecture combining Bi-LSTM and Transformer networks, which balances local dependency learning with global context modeling to enhance RUL prediction robustness.

(3) We conduct comprehensive experiments on the PHM2012 and XJTU-SY datasets. Results demonstrate that the proposed method outperforms several benchmark models in prediction accuracy, generalization capability, and stability.

4. Application and Analysis

To validate the effectiveness of the proposed model, experiments were conducted using the PHM2012 bearing degradation dataset and the XJTU-SY bearing degradation dataset. The network model was developed in Python 3.8 using the PyTorch 2.4.1 framework with CUDA 11.8 support for GPU acceleration. Model training and testing were performed on a computer equipped with a 12th Gen Intel® Core™ i7-12700H @ 2.30 GHz processor, an NVIDIA GeForce RTX 4050 Laptop GPU, and 16 GB of RAM. All experiments were conducted under the same hardware and software environment to ensure consistency and reliability of the results.

4.1. Description of Experimental Data

In this study, two publicly available bearing degradation datasets, namely PHM2012 and XJTU-SY, were utilized to evaluate the performance of the proposed model. A detailed description of each dataset is provided below.

4.1.1. PHM2012 Degradation Dataset

The PHM2012 [34] rolling bearing accelerated life test dataset was utilized in this study. This dataset was collected using the PRONOSTIA platform developed by the FEMTO-ST Institute in France. As illustrated in Figure 8, the experimental setup consists of an asynchronous motor, a rotating shaft, a speed controller, two pulley systems, and the test bearings. The horizontal and vertical vibration signals of the bearings were continuously monitored by accelerometers mounted on the bearing housing. Signal acquisition was performed every 10 s at a sampling frequency of 25.6 kHz, with each sampling session lasting 0.1 s.

In the PHM2012 dataset, a total of 17 full-lifecycle bearing datasets were collected under three different operating conditions, as summarized in Table 1. Specifically, Operating Condition 1 includes seven datasets, from Bearing 1_1 to Bearing 1_7; Operating Condition 2 also includes seven datasets, from Bearing 2_1 to Bearing 2_7; and Operating Condition 3 comprises three datasets, from Bearing 3_1 to Bearing 3_3. Detailed information regarding the 17 bearing datasets is provided in Table 2.

The PHM2012 dataset contains vibration signals in both horizontal and vertical directions. However, the horizontal signals are more effective in accurately and rapidly reflecting the degradation of the bearings. Therefore, only the horizontal vibration signals are utilized for bearing life prediction in this study.

4.1.2. XJTU-SY Degradation Dataset

The XJTU-SY [35] bearing dataset was used in the experiments, and the test platform is shown in Figure 9. The test bearings were LDK UER204 rolling bearings. The accelerated degradation experiments conducted in this study involved three types of faults: outer race faults, inner race faults, and cage faults. A schematic diagram comparing normal and faulty bearings is presented in Figure 10.

The bearing accelerated degradation tests were performed under three different operating conditions, as summarized in Table 3. For Operating Condition 1, the rotational speed was 2100 r/min with a radial load of 12 kN; for Operating Condition 2, the speed was 2250 r/min with a radial load of 11 kN; and for Operating Condition 3, the speed was 2400 r/min with a radial load of 10 kN.

In each case, five bearings were tested. The sampling frequency was set to 25.6 kHz, the sampling interval was 1 min, and the sampling duration was 1.28 s.
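The acquisition settings quoted for the two datasets determine how many samples each vibration snapshot contains, which in turn fixes the length of the signal passed to EMD. The short sketch below works through that arithmetic using only the figures stated in Sections 4.1.1 and 4.1.2; the helper name `snapshot_stats` is introduced here purely for illustration.

```python
def snapshot_stats(fs_hz, duration_s, interval_s):
    """Return (samples per snapshot, fraction of time actually recorded)."""
    samples = round(fs_hz * duration_s)   # samples captured in one snapshot
    duty_cycle = duration_s / interval_s  # recorded time / elapsed time
    return samples, duty_cycle


# PHM2012: 25.6 kHz, 0.1 s recorded every 10 s
phm_samples, phm_duty = snapshot_stats(25_600, 0.1, 10)

# XJTU-SY: 25.6 kHz, 1.28 s recorded every 1 min
xjtu_samples, xjtu_duty = snapshot_stats(25_600, 1.28, 60)

print(f"PHM2012: {phm_samples} samples per snapshot, duty cycle {phm_duty:.2%}")
print(f"XJTU-SY: {xjtu_samples} samples per snapshot, duty cycle {xjtu_duty:.2%}")
```

Under these settings, a PHM2012 snapshot holds 2560 samples and an XJTU-SY snapshot holds 32,768 samples, so the per-snapshot decomposition cost differs noticeably between the two datasets.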

4.2. Data Preprocessing

In order to enhance the quality of feature extraction and mitigate the adverse effects of outliers present in the collected bearing signals, the Empirical Mode Decomposition technique is introduced. By decomposing the original vibration signals into a set of intrinsic mode functions (IMFs), EMD enables the isolation of meaningful information at various frequency scales, which is crucial for capturing localized anomalies associated with bearing faults.

For illustrative purposes, the EMD results for the Bearing 1_1 signal from the PHM2012 dataset are presented in Figure 11.

After performing EMD decomposition, eleven time-domain statistical features, as previously described, are extracted from each obtained IMF component. The extracted features are then normalized using min–max normalization, as shown in Equation (11).

In the normalization formula, xmax and xmin represent the maximum and minimum values of the signal, respectively, while xi denotes a specific data point. It should be noted that extreme values (outliers) in the signal may strongly affect the values of xmax and xmin, potentially leading to biased normalization results. To address this issue, common approaches include applying smoothing filters, removing obvious outliers prior to normalization, or using the 95th and 5th percentiles instead of the absolute maximum and minimum to achieve robust normalization.
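As a minimal sketch of the normalization step and of the percentile-based alternative mentioned above, the following code assumes the standard min–max form x' = (xi − xmin) / (xmax − xmin), since Equation (11) is not reproduced in this section. The function names, the linear-interpolation percentile, and the clipping of values outside the percentile range to [0, 1] are illustrative assumptions rather than the authors' implementation.

```python
def percentile(values, q):
    """Percentile with linear interpolation between order statistics (q in [0, 100])."""
    ordered = sorted(values)
    pos = (len(ordered) - 1) * q / 100.0
    lower = int(pos)
    upper = min(lower + 1, len(ordered) - 1)
    return ordered[lower] + (ordered[upper] - ordered[lower]) * (pos - lower)


def min_max_normalize(values, robust=False, low_q=5.0, high_q=95.0):
    """Scale values to [0, 1].

    robust=False uses the absolute minimum and maximum.
    robust=True uses the 5th/95th percentiles as bounds and clips
    anything outside them, so a single outlier cannot stretch the scale.
    """
    if robust:
        x_min, x_max = percentile(values, low_q), percentile(values, high_q)
    else:
        x_min, x_max = min(values), max(values)
    if x_max == x_min:  # constant feature: avoid division by zero
        return [0.0 for _ in values]
    scaled = [(v - x_min) / (x_max - x_min) for v in values]
    return [min(1.0, max(0.0, s)) for s in scaled]


feature = [float(v) for v in range(21)]           # 0, 1, ..., 20
plain = min_max_normalize(feature)                 # bounds 0 and 20
robust = min_max_normalize(feature, robust=True)   # bounds 1 and 19
```

With the plain variant, one extreme value sets the scale for the whole series; the robust variant trades a small amount of clipping at both ends for a scale that is stable against such values.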

As an example, the signal of Bearing 1_1 from the PHM2012 dataset is decomposed, and the feature degradation time series of the IMF0, IMF2, and IMF4 components are shown in Figure 12. Because the original vibration signals contain both positive and negative values, statistical features such as the mean and median can take negative values. Negative feature values hinder visualization and are therefore not presented in the figure.

The feature degradation plots, as shown in Figure 12, demonstrate that the extracted time-domain features effectively characterize the degradation behavior of the bearing. The key features exhibit distinct and consistent trends as degradation progresses, indicating their high sensitivity and strong discriminative ability in capturing the evolution of bearing faults. Specifically, the original vibration signals are decomposed by EMD into six components: IMF0, IMF1, IMF2, IMF3, IMF4, and the residual. Each IMF captures characteristic information from a different frequency band: IMF0 and IMF1 correspond to high- and medium–high-frequency components sensitive to early-stage subtle impact features; IMF2 and IMF3 contain medium–low-frequency information reflecting mid-stage degradation and operational stability; and IMF4 together with the residual represent low-frequency trends describing the long-term degradation process. By extracting time-domain statistical features from these IMFs, the model comprehensively represents multi-scale characteristics of the bearing’s degradation, enhancing sensitivity to both local transients and global trends. These results provide an intuitive and robust feature foundation for subsequent network-based RUL prediction.
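To make the per-IMF feature construction concrete, the sketch below computes a few common time-domain indicators for each component and concatenates them into one feature vector per snapshot. The eleven features used in this study are not listed in this section, so the four shown here (energy, RMS, kurtosis, peak-to-peak) are an illustrative subset chosen as an assumption, and the function names are hypothetical. The IMFs themselves are assumed to come from an existing EMD routine.

```python
import math

FEATURE_NAMES = ("energy", "rms", "kurtosis", "peak_to_peak")


def imf_features(imf):
    """Compute a small set of time-domain statistics for one IMF."""
    n = len(imf)
    mean = sum(imf) / n
    centered = [v - mean for v in imf]
    m2 = sum(c ** 2 for c in centered) / n  # second central moment
    m4 = sum(c ** 4 for c in centered) / n  # fourth central moment
    energy = sum(v * v for v in imf)
    return {
        "energy": energy,
        "rms": math.sqrt(energy / n),
        # Pearson (non-excess) kurtosis; 0.0 for a constant signal
        "kurtosis": m4 / (m2 * m2) if m2 > 0 else 0.0,
        "peak_to_peak": max(imf) - min(imf),
    }


def feature_vector(imfs):
    """Concatenate the features of all IMFs of one snapshot in a fixed order."""
    return [imf_features(imf)[name] for imf in imfs for name in FEATURE_NAMES]


# Toy example: two short "IMFs" standing in for real EMD output
toy_imfs = [[1.0, -1.0, 1.0, -1.0], [0.5, 0.0, -0.5, 0.0]]
vector = feature_vector(toy_imfs)
```

Repeating this for every snapshot in a bearing's run-to-failure record yields one degradation time series per feature and per IMF, which is the form plotted in the feature degradation figures.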

4.3. Experimental Analysis

4.3.1. Prediction Experiments on the PHM Dataset

In our experiments, the hyperparameters of the multi-head attention mechanism were set to three layers and four attention heads. When using fewer layers or heads, the model could not sufficiently capture the complex features in the data, leading to noticeably reduced prediction accuracy, although the training time was slightly shorter. Conversely, increasing the number of layers and heads beyond three and four did not further improve the prediction performance; instead, it significantly increased training time and introduced overfitting. Therefore, by balancing model expressiveness, training efficiency, and generalization capability, we finally set the number of attention layers to three and the number of attention heads to four.
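The role of the head count can be illustrated with a small, dependency-free sketch of multi-head scaled dot-product self-attention. It is a deliberate simplification: the learned query, key, value, and output projections of a real Transformer layer are omitted (Q = K = V = the input slice), and the function names are hypothetical. It shows only how the feature dimension is split across four heads, how each head attends over all time steps, and how the head outputs are concatenated.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of scores."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x):
    """Scaled dot-product self-attention with Q = K = V = x (no learned weights)."""
    d = len(x[0])
    out = []
    for query in x:
        scores = [sum(a * b for a, b in zip(query, key)) / math.sqrt(d) for key in x]
        weights = softmax(scores)  # attention over all time steps
        out.append([sum(w * value[j] for w, value in zip(weights, x)) for j in range(d)])
    return out


def multi_head_self_attention(x, num_heads=4):
    """Split the feature dimension into heads, attend per head, then concatenate."""
    d_model = len(x[0])
    if d_model % num_heads != 0:
        raise ValueError("feature dimension must be divisible by num_heads")
    head_dim = d_model // num_heads
    heads = [
        self_attention([row[h * head_dim:(h + 1) * head_dim] for row in x])
        for h in range(num_heads)
    ]
    # Concatenate the per-head outputs back to d_model for every time step
    return [sum((heads[h][t] for h in range(num_heads)), []) for t in range(len(x))]


# Three time steps with an 8-dimensional feature vector each
sequence = [[float(i) for i in range(1, 9)] for _ in range(3)]
attended = multi_head_self_attention(sequence, num_heads=4)
```

Because every head works on a slice of width d_model / num_heads, adding heads without widening the model narrows each head's view, which is one reason more heads do not automatically improve accuracy.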

The hyperparameter settings of the network are listed in Table 4, and the model was trained with these settings. The experiments were conducted using the dataset partitioning detailed in Table 3. The training loss curves are shown in Figure 13. Specifically, for Operating Condition 1, the training loss decreased from 0.12674 to 0.00144; for Operating Condition 2, it decreased from 0.09881 to 0.00127; and for Operating Condition 3, it decreased from 0.2553 to 0.00113. Overall, the final training loss averaged about 0.00128 across the three conditions, indicating that the proposed Transformer–Bi-LSTM model fits the training data closely.

To verify that the model trained on the training dataset can be effectively applied to the testing dataset, we divided the dataset as shown in Table 5. In each of the three experiments, the feature extraction layers of the Transformer–Bi-LSTM model and their parameters were frozen, preserving the learned feature extraction capability, and only the top layers of the model were retrained. This approach aims to validate the transferability and generalization ability of the learned feature representations. After 100 additional training epochs on the testing dataset, the results are shown in Figure 14. The experimental prediction results demonstrate that the proposed Transformer–Bi-LSTM model can accurately capture the degradation trends of bearings. The predicted RUL curves are strongly consistent with the ground truth, supporting the effectiveness and reliability of the proposed approach.

4.3.2. Comparative Experiments

In this section, data from Operating Condition 1 of the PHM2012 dataset are used for prediction, and the prediction accuracy of the proposed model is compared with that of several baseline networks, including RNN, GRU, LSTM, and Bi-LSTM.

To evaluate the prediction performance, the root mean square error (RMSE) and mean absolute error (MAE) between the predicted RUL and the actual RUL are adopted as the evaluation metrics. Lower values of these indicators indicate higher prediction accuracy. The calculation formulas are given in Equations (12) and (13).
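For reference, the two metrics can be sketched as follows. Equations (12) and (13) are not reproduced in this section, so the code assumes the standard definitions: MAE is the mean of the absolute differences between predicted and actual RUL, and RMSE is the square root of the mean squared difference. The sample values are invented solely to exercise the functions.

```python
import math


def mae(y_true, y_pred):
    """Mean absolute error between actual and predicted RUL."""
    if len(y_true) != len(y_pred):
        raise ValueError("inputs must have the same length")
    return sum(abs(p - t) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    """Root mean square error between actual and predicted RUL."""
    if len(y_true) != len(y_pred):
        raise ValueError("inputs must have the same length")
    return math.sqrt(sum((p - t) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


# Illustrative values only, not taken from the experiments
actual = [1.0, 0.5, 0.0]
predicted = [0.9, 0.5, 0.2]
print(f"MAE = {mae(actual, predicted):.4f}, RMSE = {rmse(actual, predicted):.4f}")
```

RMSE squares each error before averaging, so it is never smaller than MAE on the same predictions, and a large gap between the two points to a few large errors rather than a uniform offset.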

To illustrate the advantage of a bidirectional Long Short-Term Memory (Bi-LSTM) architecture over a unidirectional LSTM, we conducted a comparative experiment on Bearing 1_1 of the PHM2012 dataset. In this experiment, the combined time-domain features extracted from all IMF components of Bearing 1_1 were used as input. As shown in Table 6 and Figure 15, the Bi-LSTM achieved lower prediction errors than the unidirectional LSTM. This supports the effectiveness of the bidirectional approach in capturing temporal dependencies within degradation signals, whereas a unidirectional LSTM is less capable of fully modeling complex sequential patterns.

In the experiments, Bearing 1_2 and Bearing 1_4 were used as the training set, while Bearing 1_1, Bearing 1_3, and Bearing 1_5 were used for testing. The prediction results are presented in Table 7 and Figure 16. The experimental results demonstrate that the proposed Transformer–Bi-LSTM model outperforms the other models in terms of both MAE and RMSE across all test sets, achieving an average MAE of 0.0469 and RMSE of 0.0563. These results indicate that the model offers superior accuracy and stability in predicting the remaining useful life (RUL) of rolling bearings. To further illustrate the prediction performance, Figure 17 compares the proposed model with the conventional Bi-LSTM model. As shown in the figure, the Transformer–Bi-LSTM model exhibits more stable and accurate predictions than the traditional Bi-LSTM approach.

4.3.3. Generalization Experiment on the XJTU-SY Dataset

In this study, the experimental data were partitioned based on the operating conditions of the XJTU-SY dataset, as detailed in Table 8. For Condition 1, Bearing 1_2 and Bearing 1_5 were selected as the training set, while Bearing 1_1, Bearing 1_3, and Bearing 1_4 were used as the validation set. Under Condition 2, the training set consisted of Bearing 2_2 and Bearing 2_5, with Bearing 2_1, Bearing 2_3, and Bearing 2_4 forming the validation set. For Condition 3, Bearing 3_4 and Bearing 3_5 were used for training, and Bearing 3_1, Bearing 3_2, and Bearing 3_3 served as the validation set. All experiments were conducted on the same hardware platform, and the hyperparameter settings used are summarized in Table 4. The experimental results are presented in Figure 18. The prediction results indicate that the proposed model also achieves promising performance on the XJTU-SY dataset. The MAE and RMSE values of the experiment are summarized in Table 9. The results show that the model maintains low mean absolute error (MAE) and root mean square error (RMSE) on this dataset. This suggests that the model not only achieves high prediction accuracy on the original dataset but also generalizes well to data from a different source and with different characteristics, capturing the degradation trend of the bearings robustly.

To further evaluate the effectiveness and robustness of the proposed Transformer–Bi-LSTM model on the XJTU-SY dataset, additional comparative experiments were performed under Operating Condition 2. In particular, the prediction performance of the proposed approach was benchmarked against several baseline models, including TCN, GRU, and Bi-LSTM. This comparative analysis aims to demonstrate the advantages of the proposed method in accurately capturing degradation trends and improving RUL prediction accuracy.

Table 10, Figure 19, and Figure 20 report the comparative prediction results of the proposed method against TCN, GRU, and Bi-LSTM under Operating Condition 2 of the XJTU-SY dataset. It can be observed that the proposed method consistently achieves lower MAE and RMSE values across all test bearings, thereby demonstrating enhanced predictive accuracy and robustness.

Specifically, the proposed method attains an MAE of 0.0423 and RMSE of 0.0486 for Bearing 2_1; an MAE of 0.0422 and RMSE of 0.0485 for Bearing 2_3; and an MAE of 0.0484 and RMSE of 0.0623 for Bearing 2_4. In all cases, both metrics are markedly lower than those obtained by the other models.

Overall, the proposed method achieves an average MAE of 0.0443 and an average RMSE of 0.0531 across the test bearings, outperforming the baseline models and confirming its superior capability in accurately modeling degradation patterns and predicting the remaining useful life of rolling bearings.
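The reported averages follow directly from the three per-bearing results quoted for Operating Condition 2. The brief check below recomputes them from the values stated in the text; only the variable names are introduced here.

```python
from statistics import mean

# Per-bearing errors for XJTU-SY Operating Condition 2, as quoted in the text
mae_values = {"Bearing 2_1": 0.0423, "Bearing 2_3": 0.0422, "Bearing 2_4": 0.0484}
rmse_values = {"Bearing 2_1": 0.0486, "Bearing 2_3": 0.0485, "Bearing 2_4": 0.0623}

avg_mae = round(mean(mae_values.values()), 4)
avg_rmse = round(mean(rmse_values.values()), 4)

print(f"average MAE = {avg_mae}, average RMSE = {avg_rmse}")
```

The recomputed values, 0.0443 and 0.0531, match the averages stated above. The largest single contribution to the RMSE average comes from Bearing 2_4.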

5. Conclusions

This paper proposes a remaining useful life prediction method for rolling bearings based on Empirical Mode Decomposition and a Transformer–Bi-LSTM hybrid network.

(1) The method integrates Bi-LSTM and Transformer architectures and feeds the model with features obtained from the vibration signals through EMD-based time-domain statistical feature extraction and min–max normalization.

(2) By applying EMD and extracting time-domain features, the method effectively reduces the dimensionality of complex high-dimensional signals and suppresses noise interference. The Bi-LSTM network captures local temporal patterns, while the Transformer models global dependencies. Their combination significantly improves prediction accuracy and stability.

(3) The proposed model is validated on both the PHM2012 and XJTU-SY bearing datasets. Under variable load conditions, the model achieves an average MAE of 0.0469 and RMSE of 0.0563 on the PHM2012 dataset. On the XJTU-SY dataset, it attains an average MAE of 0.0374 and RMSE of 0.0442, demonstrating excellent accuracy and generalization capability.

(4) In future work, the model will be further validated and evaluated using real-world industrial vibration data to assess its practical applicability and enhance robustness under noisy or variable operating conditions.

6. Discussion

While the proposed method shows promising results on publicly available laboratory datasets, it also exhibits several limitations. First, this study uses standard Empirical Mode Decomposition (EMD) to extract multi-scale features. Although EMD is computationally efficient and suitable for relatively clean signals, it can suffer from mode mixing when processing complex or noisy vibration data, potentially affecting feature stability. Advanced methods, such as EEMD and CEEMDAN, can alleviate mode mixing but require careful parameter tuning and introduce higher computational cost.

Empirical wavelet transform (EWT) has recently been demonstrated to effectively mitigate mode mixing and preserve signal morphology in noisy environments [36]. In preliminary tests on the same vibration signal segments, EMD took approximately 5 s per segment, whereas EWT required about 35 s, roughly seven times longer. Fully adopting EWT-based preprocessing would therefore substantially increase the computational cost of model training and result generation. Given the computational resources and time constraints of this study, EWT was not implemented in the current work and will be explored in future research.

Additionally, detailed analysis reveals that the model tends to show higher prediction errors during the early stages of degradation, when signal changes are subtle, and near rapid degradation phases or sudden failures, where the degradation pattern shifts abruptly. We believe these discrepancies arise because time-domain statistical features may be less sensitive to early weak degradation signals, and the hybrid Transformer–Bi-LSTM architecture may struggle to adapt quickly to sharp transitions.

In future work, we plan to explore and compare improved decomposition techniques, design features or attention mechanisms that better capture early and sudden degradation trends, and validate the model on more complex, real-world datasets to enhance robustness and generalization.