Improving Graph Neural Network Models in Link Prediction Task via A Policy-Based Training Method

Abstract

1. Introduction

- We introduce a negative sample selector, built around a policy network, that reduces the impact of false and easy negative samples in the training set of GNN models for the link prediction task.

- Building on the negative sample selector, we design a policy-based training method (PbTRM) that generates the negative samples through the selector in each sampling period and updates the parameters of the policy network at the end of each sampling period.

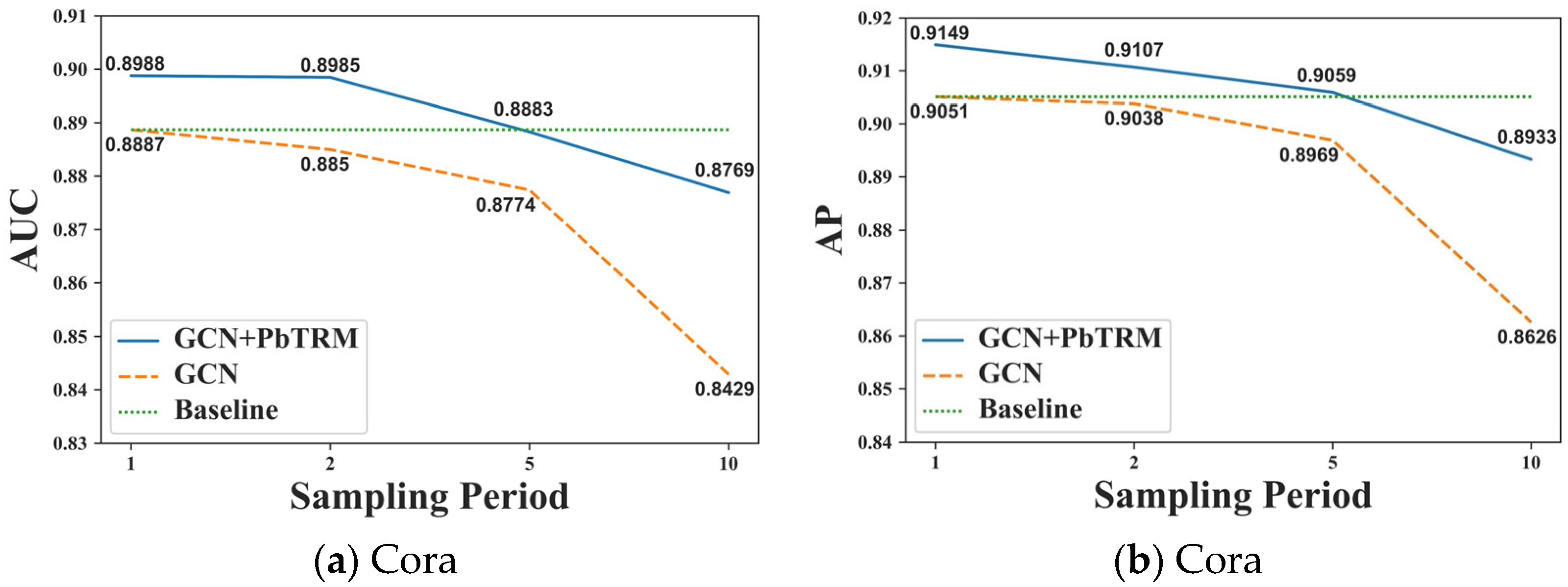

- We demonstrate the effectiveness of the proposed PbTRM in experiments with three GNN models (GCN, GAT, and GraphSAGE) on two datasets (Cora and CiteSeer). We also examine how the length of the sampling period influences PbTRM.
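
The selector described in the contributions above can be illustrated with a small, self-contained sketch. It is not the paper's implementation: the linear scoring function, the hand-made candidate features, the per-candidate keep/drop action, and the names `keep_probability`, `select_negatives`, and `reinforce_update` are all assumptions made for illustration, and the update is a generic REINFORCE-style policy-gradient step rather than the paper's Equation (8).

```python
import math
import random


def keep_probability(weights, features):
    """Probability that the policy keeps a candidate (logistic of a linear score)."""
    score = sum(w * x for w, x in zip(weights, features))
    return 1.0 / (1.0 + math.exp(-score))


def select_negatives(weights, candidates, rng):
    """Sample a keep (1) or drop (0) action for every candidate negative sample."""
    actions = [1 if rng.random() < keep_probability(weights, f) else 0
               for f in candidates]
    kept = [i for i, a in enumerate(actions) if a == 1]
    return kept, actions


def reinforce_update(weights, candidates, actions, reward, lr=0.1):
    """One REINFORCE-style step: w += lr * reward * grad_w log pi(action | features)."""
    new_weights = list(weights)
    for features, action in zip(candidates, actions):
        p = keep_probability(weights, features)
        # For a Bernoulli policy with p = sigmoid(w . x),
        # the gradient of log pi(action) with respect to w is (action - p) * x.
        for j, x in enumerate(features):
            new_weights[j] += lr * reward * (action - p) * x
    return new_weights


rng = random.Random(0)
# Hypothetical feature vectors for three candidate node pairs (assumption).
candidates = [[1.0, 0.2], [1.0, -0.5], [1.0, 0.9]]
weights = [0.0, 0.0]

kept, actions = select_negatives(weights, candidates, rng)
# Illustrative reward only; the paper defines its reward in Equation (4).
reward = 0.02
weights = reinforce_update(weights, candidates, actions, reward)
```

A positive reward raises the probability of the actions that were just taken, and a negative reward lowers it, which is the basic mechanism a policy-gradient selector relies on.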

2. Preliminary

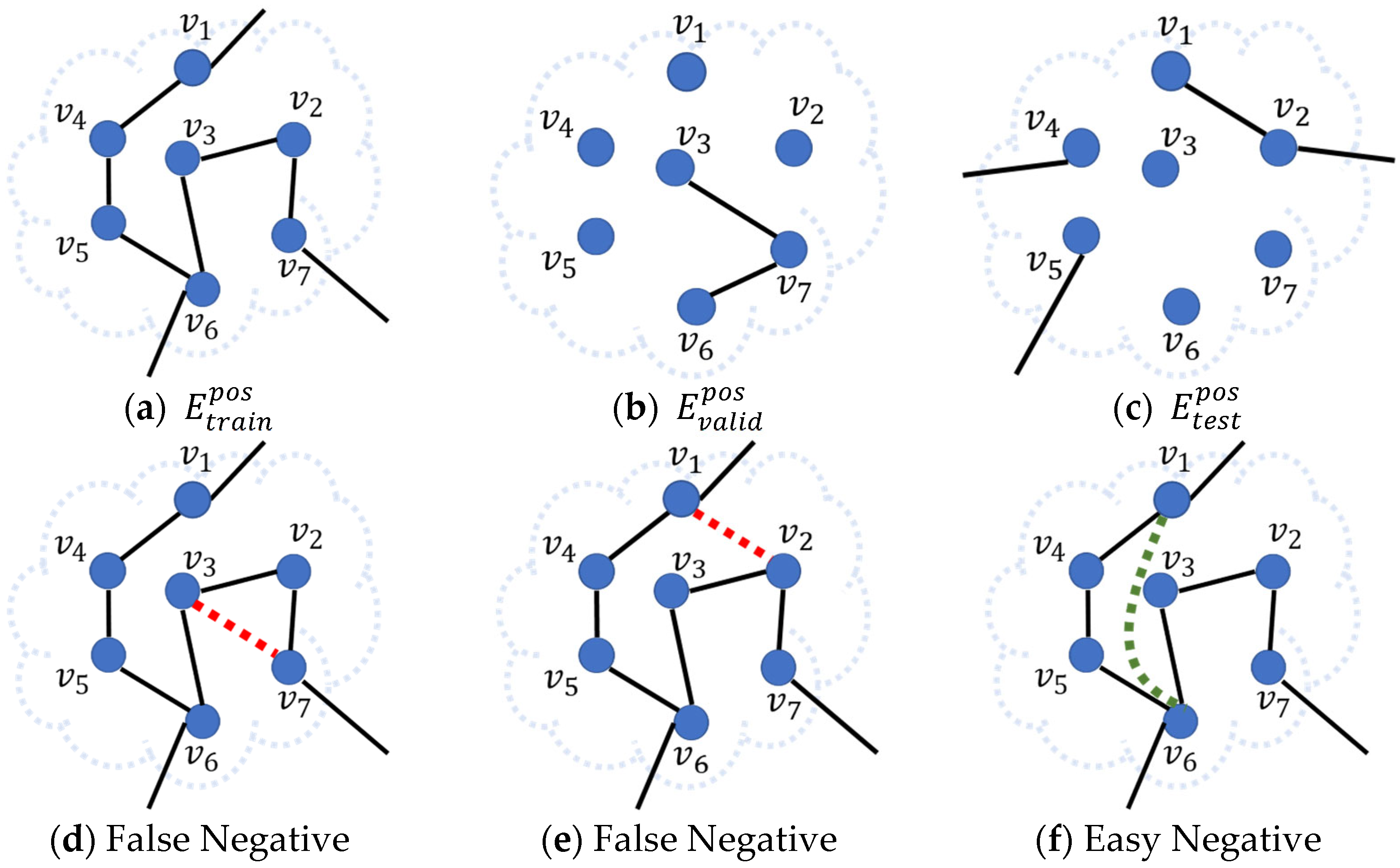

2.1. Problem Definition

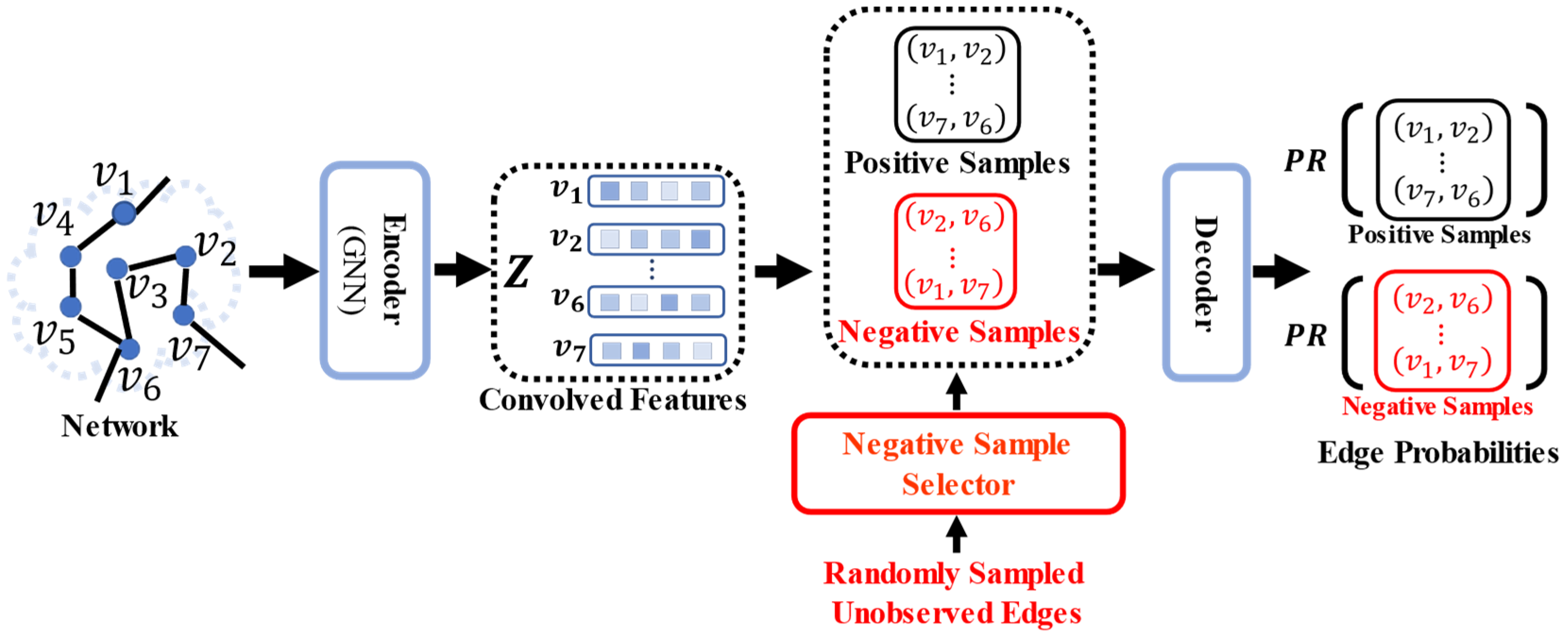

2.2. Graph Neural Network in Link Prediction Task

3. Methodology

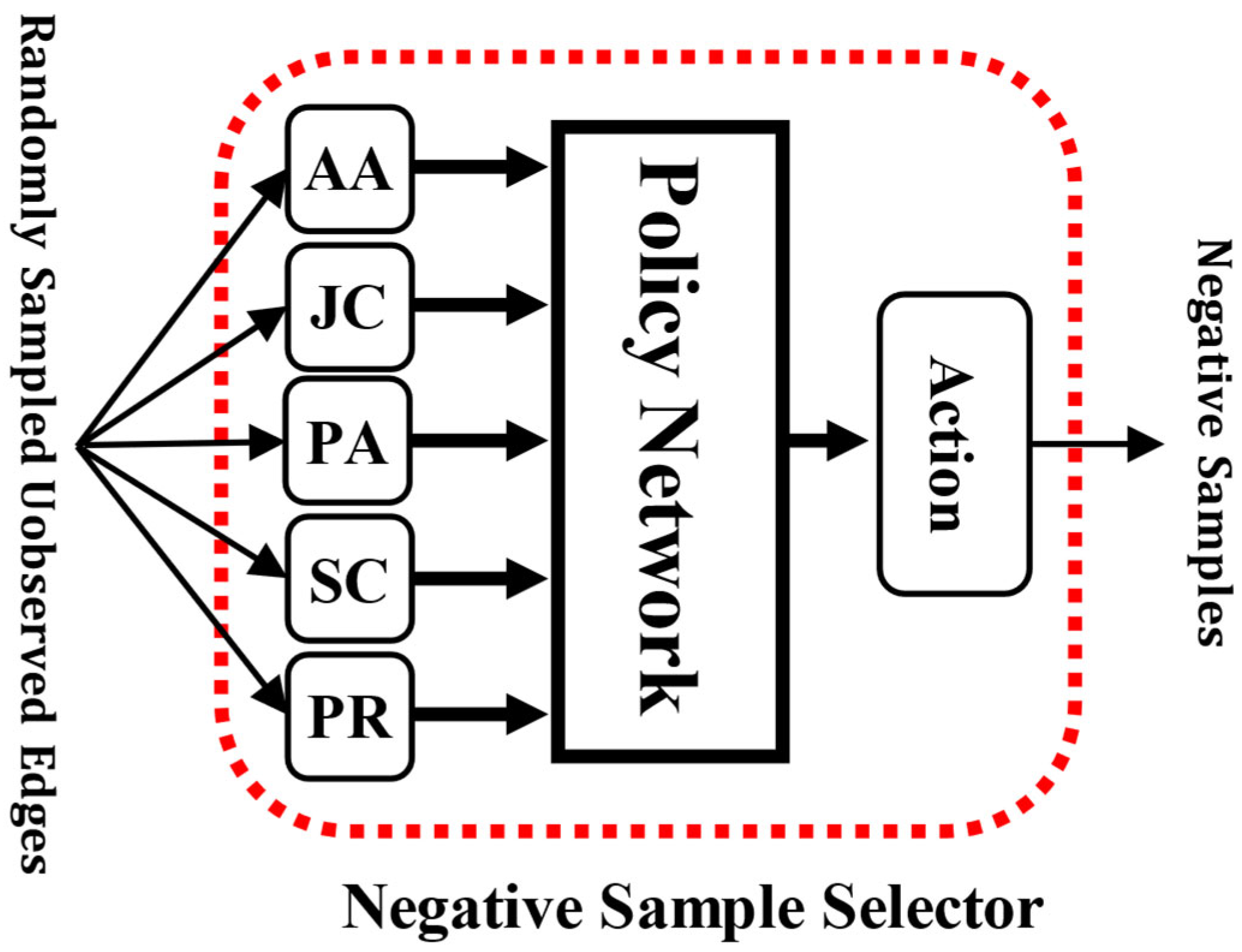

3.1. The Inputs for the Negative Sample Selector

3.2. The Outputs of the Negative Sample Selector

3.3. The Reward for the Policy Network

3.4. The Updating Procedure of the Policy Network

| Algorithm 1: The negative sampling procedure with the negative sample selector |
|---|
| INPUT: Network and number of required negative samples |
| OUTPUT: Negative sample set |

3.5. The Training Procedure of the GNN Model with PbTRM in the Link Prediction Task

| Algorithm 2: The policy-based training method (PbTRM) |
|---|
| INPUT: Network and value of a sampling period |
| OUTPUT: Edge probabilities of edges in |
| 1: for epoch in : |
| 2: if or : # In the first training epoch of a sampling period |
| 3: if epoch = 1: # In the first training epoch of the first sampling period |
| 4: Obtain the negative sample set by randomly sampling from |
| 5: else: |
| 6: Obtain the negative sample set following Algorithm 1 |
| 7: Calculate the edge probabilities of the edges in and |
| 8: Calculate the loss function value for the GNN model |
| 9: Update the parameters of the GNN model according to the loss function value |
| 10: if or : # In the last training epoch of a sampling period |
| 11: Evaluate the prediction performance of the GNN model following Equation (5) |
| 12: if epoch = : # In the last training epoch of the first sampling period |
| 13: # Base value for the first update of the policy network |
| 14: else: |
| 15: |
| 16: Calculate the reward for the policy network following Equation (4) |
| 17: Update the parameters of the policy network following Equation (8) |
| 18: |
| 19: end for |
| 20: Calculate the edge probabilities of edges in |
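
The control flow of Algorithm 2 can be sketched in Python as follows. The extracted listing has lost its loop bounds and period conditions, so this sketch makes explicit assumptions: epochs are 1-indexed, a sampling period spans `period` consecutive epochs, and the first and last epochs of a period are detected with modular arithmetic. `plan_epoch` and its action labels are hypothetical names. The GNN update, the evaluation, the base value, the reward, and the policy update are represented only by labels, because their formulas are not recoverable from the text here.

```python
def plan_epoch(epoch, period):
    """Return the ordered actions for one 1-indexed epoch under the assumed schedule."""
    actions = []
    # Assumption: periods start at epochs 1, period + 1, 2 * period + 1, ...
    first_of_period = (epoch - 1) % period == 0
    # Assumption: periods end at epochs period, 2 * period, ...
    last_of_period = epoch % period == 0

    if first_of_period:
        if epoch == 1:
            # First period: no trained policy yet, so sample at random.
            actions.append("sample_negatives_randomly")
        else:
            # Later periods: use the negative sample selector (Algorithm 1).
            actions.append("sample_negatives_with_selector")

    # Steps 7-9: forward pass, loss, and GNN parameter update in every epoch.
    actions.append("train_gnn")

    if last_of_period:
        actions.append("evaluate_gnn")  # Equation (5)
        if epoch == period:
            actions.append("set_first_base_value")  # step 13, formula elided in the source
        else:
            actions.append("set_later_base_value")  # step 15, formula elided in the source
        actions.append("compute_reward")  # Equation (4)
        actions.append("update_policy")   # Equation (8)
    return actions


if __name__ == "__main__":
    for epoch in range(1, 7):
        print(epoch, plan_epoch(epoch, period=3))
```

Under these assumptions the negative sample set stays fixed within a sampling period, and the policy network is updated once per period using the performance reached at the end of that period.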

4. Experiments

4.1. Datasets

4.2. Implementation Details

4.3. Effectiveness of the PbTRM

4.4. Influence of the Sampling Period

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Dataset | Nodes | Edges | Features | Train/Valid/Test Edges |
|---|---|---|---|---|
| Cora | 2708 | 5429 | 1433 | 70%/10%/20% |
| CiteSeer | 3327 | 4732 | 3703 | 70%/10%/20% |
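
As a rough illustration of the 70%/10%/20% edge split listed in the table, the sketch below partitions an edge list at random. The shuffling, the rounding down of the train and validation sizes, the assignment of the remainder to the test set, and the name `split_edges` are assumptions; the table does not specify how the split was drawn.

```python
import random


def split_edges(edges, train_frac=0.7, valid_frac=0.1, seed=0):
    """Randomly split an edge list into train, validation, and test subsets."""
    edges = list(edges)
    random.Random(seed).shuffle(edges)
    # Assumption: round the train and validation sizes down;
    # whatever remains becomes the test set.
    n_train = int(train_frac * len(edges))
    n_valid = int(valid_frac * len(edges))
    train = edges[:n_train]
    valid = edges[n_train:n_train + n_valid]
    test = edges[n_train + n_valid:]
    return train, valid, test


# Placeholder edges with the same count as Cora in the table (5429).
dummy_edges = [(i, i + 1) for i in range(5429)]
train, valid, test = split_edges(dummy_edges)
print(len(train), len(valid), len(test))
```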

| Cora | | |
|---|---|---|
| Method | AUC | AP |
| GCN | 0.8887 | 0.9051 |
| GCN+PbTRM | 0.8988 | 0.9149 |
| GAT | 0.8527 | 0.8851 |
| GAT+PbTRM | 0.8618 | 0.8906 |
| GraphSAGE | 0.8298 | 0.8221 |
| GraphSAGE+PbTRM | 0.8457 | 0.8538 |

| CiteSeer | | |
|---|---|---|
| Method | AUC | AP |
| GCN | 0.8905 | 0.9065 |
| GCN+PbTRM | 0.9011 | 0.9149 |
| GAT | 0.8295 | 0.8619 |
| GAT+PbTRM | 0.8447 | 0.8699 |
| GraphSAGE | 0.7711 | 0.7717 |
| GraphSAGE+PbTRM | 0.7989 | 0.8030 |
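
To make the size of the improvements easier to read, the following sketch computes the absolute AUC and AP gains of each model when trained with PbTRM. The numbers are copied from the Cora and CiteSeer result tables; the names `results` and `gains` are hypothetical, and rounding to four decimals is a presentation choice.

```python
# (AUC, AP) per method, copied from the Cora and CiteSeer result tables.
results = {
    "Cora": {
        "GCN": (0.8887, 0.9051), "GCN+PbTRM": (0.8988, 0.9149),
        "GAT": (0.8527, 0.8851), "GAT+PbTRM": (0.8618, 0.8906),
        "GraphSAGE": (0.8298, 0.8221), "GraphSAGE+PbTRM": (0.8457, 0.8538),
    },
    "CiteSeer": {
        "GCN": (0.8905, 0.9065), "GCN+PbTRM": (0.9011, 0.9149),
        "GAT": (0.8295, 0.8619), "GAT+PbTRM": (0.8447, 0.8699),
        "GraphSAGE": (0.7711, 0.7717), "GraphSAGE+PbTRM": (0.7989, 0.8030),
    },
}


def gains(results):
    """Absolute (AUC, AP) gain of each base model when trained with PbTRM."""
    out = {}
    for dataset, rows in results.items():
        for method, (auc, ap) in rows.items():
            if method.endswith("+PbTRM"):
                continue
            auc_p, ap_p = rows[method + "+PbTRM"]
            out[(dataset, method)] = (round(auc_p - auc, 4), round(ap_p - ap, 4))
    return out


for (dataset, method), (d_auc, d_ap) in gains(results).items():
    print(f"{dataset:9s} {method:10s} AUC +{d_auc:.4f}  AP +{d_ap:.4f}")
```

Every gain is positive, and on both datasets the largest gains in AUC and AP belong to GraphSAGE.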


© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Shang, Y.; Hao, Z.; Yao, C.; Li, G. Improving Graph Neural Network Models in Link Prediction Task via A Policy-Based Training Method. Appl. Sci. 2023, 13, 297. https://doi.org/10.3390/app13010297
