1. Introduction

Medical co-design, as used here, refers to collaborative design in the medical domain. In such projects, a project sponsor (organizer) brings together clinicians and designers and, when appropriate, engineers, patients, or industry partners to create human-centered responses to clinical needs (e.g., products, services, or information systems), most often through structured workshops (Bate & Robert, 2006; Greenhalgh et al., 2019; Jones, 2013; Slattery et al., 2020). We view these efforts through the lens of collective intelligence, understood as the team’s capacity to integrate the knowledge of different individuals and apply it effectively. Our focus is on small, task-focused clinical teams, which differ from public-health co-design at the community or population level, where the emphasis is on prevention and social determinants (Vargas et al., 2022). Foundational work on co-creation and design provides the conceptual backdrop for this framing (Cross, 2007; Sanders & Stappers, 2008).

Success in such settings rarely depends on a single expert. Instead, it turns on whether the group reliably displays properties typically associated with collective intelligence: timely sharing and reuse of information, complementary role taking, balanced participation, effective pacing, and progressive convergence on decisions of sufficient quality (Jiang et al., 2025a; Riedl et al., 2021/2022; Suran et al., 2021; Woolley et al., 2010). In routine practice, however, these properties are often left to the tacit skills of facilitators and the goodwill of participants. Consequently, co-design sessions may drift, stall, or become dominated by a few voices. Even when a session appears to go “well”, it is difficult to reproduce and evaluate it in a way that supports organizational learning and methodological refinement (Choi et al., 2022; Donetto et al., 2014; Lavallee et al., 2019).

This study examines whether simple, AI-supported process guidance can make it easier to measure and improve teamwork in such sessions. Rather than treating facilitation as an art form that lies outside the scope of measurement, we align session guidance with internal mechanisms known to support collective intelligence in collaborative work, including information flow, role complementarity, pacing and regrouping, and decision convergence (Jiang et al., 2025a, 2025b). The goal is not to automate creativity, but to make the conditions for collective intelligence more visible and adjustable in situ, and to make the effects of facilitation more assessable afterwards. To this end, we use a co-design prototype (v0.3) that structures multi-party sessions into a five-phase procedure, standardizes basic artifacts and prompts, and records lightweight traces of collaboration. Around this prototype, we assembled a field-ready package consisting of instruments for usability and acceptance (System Usability Scale, SUS; Perceived Usefulness, PU; Perceived Ease of Use, PEOU), team-level outcomes (Technical Performance, TP; Perceived Performance, PP; Self-rated Technical Performance, S-TP), and a simple logging framework that captures key steps of collaboration, such as timelines, contributions by role, and artifact evolution.

Two gaps motivate this package. First, much of the co-design literature presents successful cases or conceptual frameworks but offers little operational guidance on how to recognize, in real time, whether conditions relevant to collective intelligence are improving or deteriorating, and how to steer the process accordingly (Brandt et al., 2013; Kleinsmann & Valkenburg, 2008; Sanders & Stappers, 2008, 2014; Steen, 2013). Second, evaluation often focuses on end-of-session artifacts or satisfaction ratings, leaving the process itself under-instrumented. Without process-level signals, organizations cannot determine which facilitation moves were consequential, nor can they reliably transfer learning across settings (Choi et al., 2022; Donetto et al., 2014; Green et al., 2020; Lavallee et al., 2019; Tsianakas et al., 2012; Ward et al., 2018). Our approach addresses both gaps by combining mechanism-informed facilitation with measures that are deployable in realistic environments, thereby yielding interpretable feedback for facilitators and accumulating comparable evidence across projects.

Beyond system design, recent work calls for examining AI as a social actor and characterizing machine behavior at scale, which underscores the need for fieldable measures of human–AI teaming (Rahwan et al., 2019). In parallel, emerging agendas on hybrid human–AI collaboration argue for treating AI as a teammate and structuring interaction patterns accordingly, rather than regarding it solely as a tool (Dellermann et al., 2019; Seeber et al., 2020).

In this paper, we report a field pilot study with 24 participants organized into six four-person teams. All teams followed the same five-phase procedure, using standardized materials supported by a prototype for multi-human and multi-agent collaboration. The study was designed to assess feasibility rather than to establish causal effects: we focused on procedural adherence across teams, successful data capture, and interpretable distributions for usability/acceptance and team outcomes, complemented by process-level visualizations of participation balance, session pacing, and information reuse. Analyses are descriptive (phase-level comparisons and correlations) and are intended to inform practical iteration rather than to adjudicate specific causal models.

This paper provides three practical contributions. First, it introduces a toolkit for medical co-design in real-world settings, including a language-model assistant that guides timing and turn-taking, an automatic log of key steps, and a concise set of transparent measures. Second, it reports feasibility findings from a six-team study, covering usability and acceptance alongside team outcomes, each summarized with 95% bootstrap confidence intervals. Third, it shows that simple process signals—such as more balanced participation and fewer elongated or fragmented timelines—tend to co-occur with higher perceived performance, and it translates these signals into concrete facilitation routines (e.g., targeted prompts, time-boxing).

2. Related Work and Mechanism-Informed Rationale

2.1. Key Concepts and Definitions

Medical co-design in this paper refers to a structured, participatory design approach in healthcare settings, in which clinicians, patients (or their proxies), and designers work together to co-create concepts within clinical, ethical, and organizational constraints (Bate & Robert, 2006; Greenhalgh et al., 2019; Jones, 2013; Slattery et al., 2020). Our focus is not on any specific branded methodology, but on how concrete practices shape interaction among participants.

We define collective intelligence as a team-level capacity to integrate distributed knowledge through coordinated interaction, thereby achieving outcomes that are superior to what individuals could produce alone (Jiang et al., 2025a; Riedl et al., 2021/2022; Suran et al., 2021; Woolley et al., 2010). In this study, collective intelligence is observed through two complementary lenses: expert ratings of team products (TP) and participants’ perceptions of collaboration and contribution (PP, S-TP). We also distinguish orchestration from facilitation. Facilitation typically concerns what teams discuss or decide, whereas orchestration focuses on when and how teams interact (structuring sequences of activities, pacing work through time-boxes, guiding turn-taking via role rotation, and prompting artifact hand-offs) while remaining agnostic about content. The present study uses orchestration only, as it produces observable process traces that are well-suited for analysis.

The AI support in this work is explicitly scoped to process assistance. A language-model-based Design Service Agent (DSA) delivers short natural-language prompts related to timing, turn-taking, role rotation, and hand-offs, and records timestamped actions in a shared workspace (DIMS). Within DIMS, participants may, if they wish, invoke creative assistants for ideation. Such usage is logged, and these assistants are not used to provide medical advice or definitive design recommendations.

Throughout the paper, we use the term “markers” to denote observable process features derived from interaction logs, such as the number of dialogue turns, total and average turn duration, per-role turn counts, and the balance of turn-taking. In Section 5, we relate these markers descriptively to team outcomes.

2.2. Collective Intelligence in Co-Design: Internal Mechanisms

Prior research suggests that group performance depends not only on the abilities of individual members, but also on the quality of their interaction: how information flows, how roles complement one another, how pacing is managed, and how groups converge on shared decisions (Bonabeau, 2009; Jiang et al., 2025b; Malone et al., 2010; Radcliffe et al., 2019; Reia et al., 2019; Suran et al., 2021; Woolley et al., 2010). Early experimental work identified a latent collective intelligence factor that predicts team performance across multiple tasks, shifting the focus from individual traits to emergent, interaction-level properties (Woolley et al., 2010). Subsequent studies formalized multi-level quantification strategies and argued for pairing behavioral outputs with multimodal process signals to characterize collective intelligence during collaboration (Almaatouq et al., 2021; Malone & Woolley, 2020; Mao et al., 2016; Riedl et al., 2021/2022). Reviews have further synthesized mechanisms that shape collective intelligence (such as information-exchange structures, feedback loops, and cognitive diversity), emphasizing that higher collective intelligence reflects the organization of interactions rather than a simple aggregation of abilities (Jiang et al., 2025a; Salminen, 2012; Suran et al., 2021; Zhang & Mei, 2020).

Within co-design, these mechanisms align naturally with health-system design practice. Information sharing is expressed through artifact reuse and cross-referencing; role complementarity appears in rotating facilitation, synthesis, and evaluation functions; pacing is influenced by time-boxing and regrouping; and convergence is achieved through iterative framing and resolution (Chmait et al., 2016; Jiang et al., 2025a, 2025b; Kittur et al., 2009). In clinical and organizational contexts, considering these mechanisms through a human-centered and inclusive lens also foregrounds the importance of equitable participation and adherence to safety constraints.

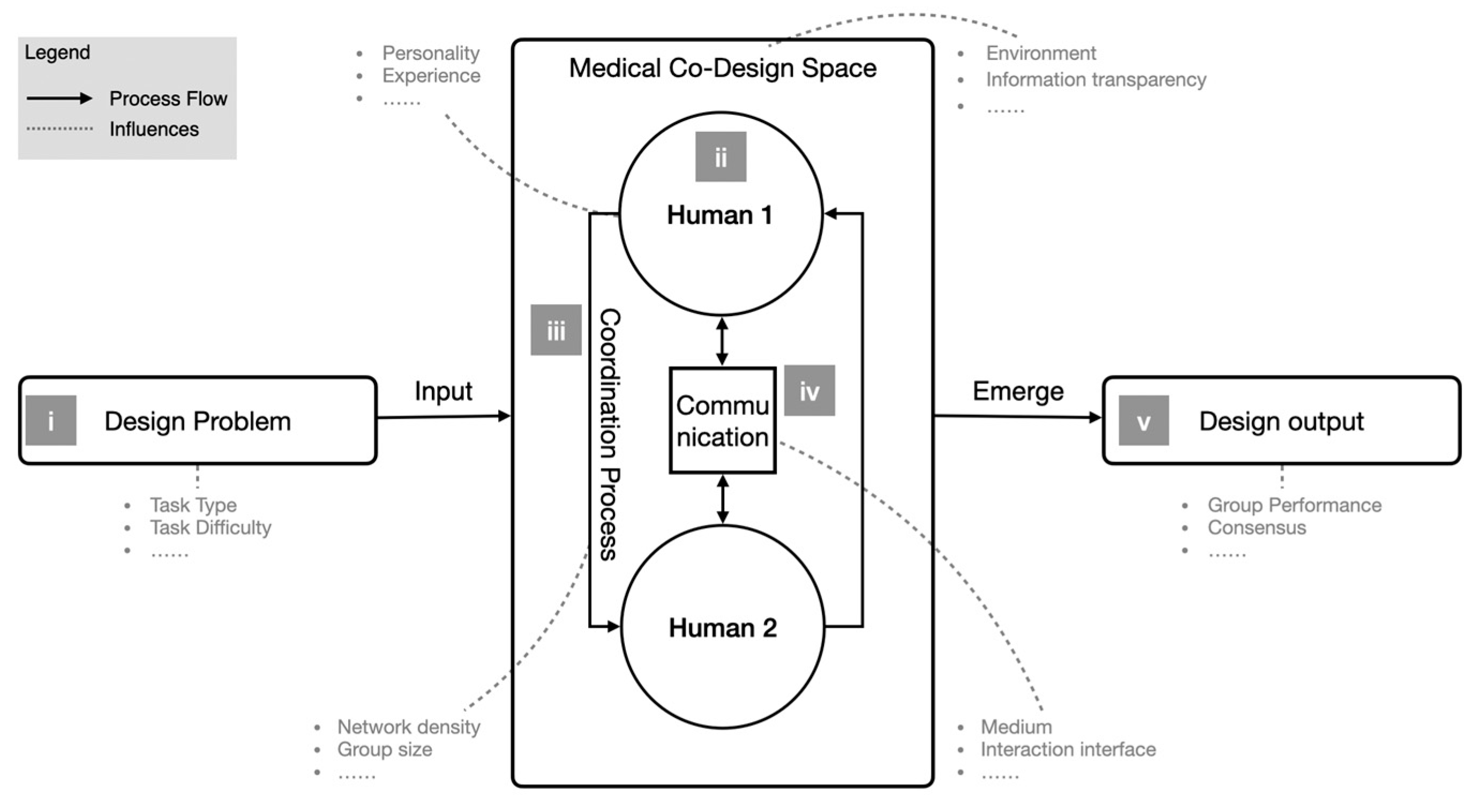

Figure 1 consolidates our earlier schematics (Jiang et al., 2025a, 2025b) and clarifies the interaction flow and factors that shape collective intelligence in medical co-design (Amelkin et al., 2018; Malone & Woolley, 2020; Riedl et al., 2021/2022; Woolley et al., 2010). For clarity, the figure depicts two actors (the primitive unit in our context) and is organized into five elements: (i) the design problem entering the medical co-design space; (ii) individual (human 1/2) and team factors within that space; (iii) coordination processes between partners inside the co-design space; (iv) communication patterns that shape interactions; and (v) emergent phenomena and outputs that reflect collective intelligence at the team level. Solid arrows indicate the main process flow (inputs → co-design space → emergence → outputs), whereas dashed arrows indicate sub-influences. We use this descriptive schematic both to align orchestration with internal mechanisms and to delimit the scope of instrumentation (SUS/PU/PEOU; TP/PP/S-TP; lightweight logs of phase timing, role participation, and artifact linking).

2.3. Facilitation in User-Centered Collaborative Design

User-centered design provides a substantial tradition of facilitation practices and co-design frameworks; however, many accounts place greater emphasis on outcomes or case narratives than on in situ guidance, especially in health system contexts, regarding how to shape the interaction process as it unfolds (Cardoso & Clarkson, 2012; Dong et al., 2004; Goodman-Deane et al., 2010; Moss et al., 2023). Existing reviews often describe governance structures, roles, and artifacts in detail but offer less insight into how facilitators interpret process signals and adjust pacing or information surfacing in real time (Bevan Jones et al., 2020; Greenhalgh et al., 2019; Slattery et al., 2020; Sumner et al., 2021).

In this work, we distinguish facilitation from orchestration. Facilitation typically focuses on what teams discuss or decide (for example, eliciting needs or reframing problems). Orchestration, in contrast, structures when and how teams interact (sequencing activities, enforcing time-boxes and role rotation, and prompting artifact hand-offs) while remaining agnostic about content. Our study concentrates on orchestration because it yields observable process markers and can be executed by a rule-based controller that does not generate domain content. In practice, this approach translates facilitator intent into adjustable prompts, role swaps, and time structures that are aligned with mechanisms relevant to collective intelligence, such as participation balance, dependency-aware pacing, and decision convergence. This stance is consistent with behavioral evidence that trust in automation depends on perceived reliability and clarity of roles, which process-level orchestration can help make explicit (Hancock et al., 2011).

Operationally, we employ a small set of reusable rules. For example, if participation remains skewed over a short dwell period, the system issues a prompt directed at a quieter role; if a dependency has been cleared but no new action begins within that dwell period, the system nudges the next step. The controller evaluates such guards against the live session state and produces short natural-language prompts or phase transitions, while logging all events for post hoc analysis. It operates solely on workspace state (event metadata such as timestamps, roles, phase IDs, action types, counters, and status flags) and emits only brief process prompts; it does not access artifact text or images and does not generate clinical or design content. An architectural summary is provided in Appendix C (Figure A1), where formal symbols (dwell time, cooldown, rule priority) are defined on first use. These rules encode facilitation expertise in an operational form while remaining compatible with routine governance and data-protection constraints (Kephart & Chess, 2003; Widom & Ceri, 1995).
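The guard, dwell, cooldown, and priority logic described above can be illustrated with a minimal sketch. It is not the study's controller: the class names, the 0.6 skew threshold, the timing values, and the prompt wording are all hypothetical, and the state object deliberately carries only metadata (timestamps and counters), mirroring the content-agnostic constraint stated in the text.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class SessionState:
    """Workspace metadata only: no artifact text or images."""
    now: float                                   # session clock in seconds
    turns_by_role: Dict[str, int]                # turn counters per role
    dependency_cleared_at: Optional[float] = None
    last_action_at: float = 0.0


@dataclass
class Rule:
    name: str
    priority: int                                # lower number wins ties
    dwell: float                                 # seconds the guard must hold
    cooldown: float                              # minimum seconds between firings
    guard: Callable[[SessionState], bool]
    prompt: Callable[[SessionState], str]
    held_since: Optional[float] = None
    last_fired: Optional[float] = None


def step(rules: List[Rule], state: SessionState, log: List[dict]) -> Optional[str]:
    """Evaluate all guards against the current state; emit at most one prompt."""
    ready = []
    for rule in rules:
        if rule.guard(state):
            if rule.held_since is None:
                rule.held_since = state.now      # start the dwell clock
            held = state.now - rule.held_since >= rule.dwell
            cooled = (rule.last_fired is None
                      or state.now - rule.last_fired >= rule.cooldown)
            if held and cooled:
                ready.append(rule)
        else:
            rule.held_since = None               # guard broke: reset the dwell clock
    if not ready:
        return None
    rule = min(ready, key=lambda r: r.priority)
    rule.last_fired = state.now
    rule.held_since = None
    log.append({"timestamp": state.now, "action_type": "prompt", "rule": rule.name})
    return rule.prompt(state)


def skewed(state: SessionState, threshold: float = 0.6) -> bool:
    """Hypothetical skew test: one role holds more than `threshold` of all turns."""
    total = sum(state.turns_by_role.values())
    return total > 0 and max(state.turns_by_role.values()) / total > threshold


def quietest(state: SessionState) -> str:
    return min(state.turns_by_role, key=state.turns_by_role.get)


def stalled(state: SessionState) -> bool:
    """A dependency was cleared and no action has been logged since."""
    cleared = state.dependency_cleared_at
    return cleared is not None and state.last_action_at <= cleared


RULES = [
    Rule("nudge_next_step", priority=1, dwell=60, cooldown=120, guard=stalled,
         prompt=lambda s: "The previous step is done; please start the next one."),
    Rule("rebalance", priority=2, dwell=60, cooldown=180, guard=skewed,
         prompt=lambda s: f"Let's hear from the {quietest(s)} next."),
]
```

Calling `step(RULES, state, log)` on every tick or logged event returns either `None` or a single prompt string, and every firing is appended to `log` for post hoc analysis. Keeping the dwell and cooldown clocks on the rule objects makes each rule's timing independently adjustable.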

2.4. Practical UX and Team-Level Measures in Co-Design

This section describes the practical toolkit used in the study, which combines SUS, PU, and PEOU for assessing usability and acceptance with team-level outcomes TP, PP, and S-TP. In parallel, an automatic event log records timestamps, roles, phases, action types, and artifact references (Clarkson, 2022; Clarkson et al., 2013, 2017). The package runs on standard laptops with a shared workspace and does not require any additional system integration.

First, usability and acceptance are measured using the System Usability Scale (SUS) and Technology Acceptance constructs, namely Perceived Usefulness (PU) and Perceived Ease of Use (PEOU), all of which are widely used in HCI. SUS is scored by re-centering odd and even items and multiplying the resulting sum by 2.5 to yield a 0–100 score (Equation (1)) (Bangor et al., 2008; Brooke, 1996).

$$\mathrm{SUS} = 2.5\left[\sum_{i\ \mathrm{odd}} (x_i - 1) + \sum_{i\ \mathrm{even}} (5 - x_i)\right] \tag{1}$$

where $x_i$ is the raw item score and $x_i \in \{1, \ldots, 5\}$ for items $i = 1, \ldots, 10$. We adopt standard Technology Acceptance Model (TAM) definitions for PU and PEOU (Davis, 1989). In our prior deployment, different Likert anchors (0–5 and 0–7) were linearly rescaled to canonical ranges prior to aggregation; the same standardization scripts and data schema are reused here to support reproducibility.
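As a minimal sketch of these two scoring steps, the code below applies the standard SUS convention to ten items rated 1 to 5 and a plain min-max mapping for Likert rescaling. The function names `sus_score` and `rescale` are illustrative and not taken from the study's scripts, and the rescaling form is an assumption, since the exact expression used in the standardization pipeline is not reproduced in this section.

```python
from typing import Sequence


def sus_score(items: Sequence[int]) -> float:
    """Score one SUS questionnaire (ten items, each rated 1-5) on a 0-100 scale."""
    if len(items) != 10:
        raise ValueError("SUS expects exactly ten item responses")
    if any(not 1 <= x <= 5 for x in items):
        raise ValueError("SUS item responses must lie in 1..5")
    total = 0
    for position, x in enumerate(items, start=1):
        # Odd items are positively worded (x - 1); even items are reversed (5 - x).
        total += (x - 1) if position % 2 == 1 else (5 - x)
    return 2.5 * total


def rescale(x: float, src_min: float, src_max: float,
            dst_min: float = 1.0, dst_max: float = 7.0) -> float:
    """Linearly map x from [src_min, src_max] to [dst_min, dst_max] (assumed form)."""
    if src_max <= src_min:
        raise ValueError("source range must have positive width")
    return dst_min + (x - src_min) * (dst_max - dst_min) / (src_max - src_min)
```

For instance, `rescale(2.5, 0, 5)` maps the midpoint of a 0–5 item to the midpoint of a 1–7 scale, so responses collected with different anchors can be averaged on a common range.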

Second, team-level outcomes comprise Technical Performance (TP), Perceived Performance (PP), and Self-rated Technical Performance (S-TP). TP reflects expert ratings of output quality and technical attainment; PP captures participants’ subjective assessment of team collaboration and outcomes; S-TP records each member’s self-rated technical contribution. A unified scale and item structure ensure cross-source comparability, and the same standardization and ID-cleaning pipeline is used to enable replication.

Third, process logging provides observables that are aligned with mechanisms relevant to collective intelligence—participation balance, coordination latency, information reuse, and convergence cues—without interfering with ongoing work. The logs record timestamped actions, actor roles, phases, artifact references, and dependency events.

Table 1 summarizes the instruments and their operationalization, including items and scales, scoring, and standardization procedures. Its two right-most columns show (i) analytical use and (ii) conceptual role in the study (item texts are provided in Appendix A and Appendix B).

Table 2 lists the event-log fields (timestamp, actor_role, phase_id, action_type, artifact_id, and dependency) and shows how each field reads out team behaviors linked to collaboration quality (e.g., pacing, participation balance, convergence trajectory, information sharing/reuse, coordination latency) used in the visual diagnostics in Section 5. The table defines an analysis-ready export schema; platforms may log these fields directly or map them from native traces. The mapping is implementation-specific and does not constrain backend design.

The log fields allow us to observe simple teamwork behaviors: pacing (how quickly a session progresses through steps), participation balance (whether speaking and turns are shared across roles), convergence trajectory (whether work narrows toward a decision over time), information sharing/reuse (how often earlier notes or artifacts are brought back into play), and coordination latency (how long hand-offs between dependent steps take).
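To make the field-to-behavior mapping concrete, the sketch below derives three of these observables from rows that follow the export schema. It is illustrative only: the sample rows are invented, and the operational definitions (pacing as first-to-last event time per phase, reuse as any later reference to an artifact, latency as the delay until an artifact is first listed as a dependency) are assumptions rather than the study's exact definitions.

```python
from collections import defaultdict
from typing import Dict, List

# Hypothetical rows in the export schema; all values are invented for illustration.
EVENTS: List[dict] = [
    {"timestamp": 0, "actor_role": "clinician", "phase_id": "III",
     "action_type": "create", "artifact_id": "a1", "dependency": None},
    {"timestamp": 120, "actor_role": "designer", "phase_id": "III",
     "action_type": "create", "artifact_id": "a2", "dependency": "a1"},
    {"timestamp": 300, "actor_role": "clinician", "phase_id": "III",
     "action_type": "comment", "artifact_id": "a1", "dependency": None},
    {"timestamp": 420, "actor_role": "designer", "phase_id": "IV",
     "action_type": "present", "artifact_id": "a2", "dependency": None},
    {"timestamp": 600, "actor_role": "clinician", "phase_id": "IV",
     "action_type": "comment", "artifact_id": "a2", "dependency": None},
]


def _by_time(events: List[dict]) -> List[dict]:
    return sorted(events, key=lambda e: e["timestamp"])


def phase_durations(events: List[dict]) -> Dict[str, float]:
    """Pacing: seconds between the first and last logged event of each phase."""
    first: Dict[str, float] = {}
    last: Dict[str, float] = {}
    for e in _by_time(events):
        first.setdefault(e["phase_id"], e["timestamp"])
        last[e["phase_id"]] = e["timestamp"]
    return {phase: last[phase] - first[phase] for phase in first}


def reuse_counts(events: List[dict]) -> Dict[str, int]:
    """Information reuse: references to an artifact after its first appearance."""
    seen = set()
    counts: Dict[str, int] = defaultdict(int)
    for e in _by_time(events):
        artifact = e["artifact_id"]
        if artifact is None:
            continue
        if artifact in seen:
            counts[artifact] += 1
        else:
            seen.add(artifact)
    return dict(counts)


def handoff_latencies(events: List[dict]) -> Dict[str, float]:
    """Coordination latency: delay from an artifact's first appearance to the
    first event that names it in the dependency field."""
    created: Dict[str, float] = {}
    latencies: Dict[str, float] = {}
    for e in _by_time(events):
        artifact, dep = e["artifact_id"], e["dependency"]
        if artifact is not None and artifact not in created:
            created[artifact] = e["timestamp"]
        if dep in created and dep not in latencies:
            latencies[dep] = e["timestamp"] - created[dep]
    return latencies
```

Because each function consumes only the six schema fields, the same code would apply whether a platform logs these fields natively or maps them from its own traces.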

These design choices are conservative. We rely on widely used instruments, reuse a previously tested scoring workflow and scripts, and capture only those process-log fields needed to derive the observables reported here (SUS/PU/PEOU distributions, TP/PP/S-TP aggregates, role-specific turn count (RTC) stacks, and associated correlations). This keeps instrumentation low-burden and reusable while maintaining compatibility with routine governance and data-protection practices. The measurement emphasis also aligns with organizational findings that intention and acceptance shape transformation outcomes in AI-enabled workplaces (T.-J. Wu et al., 2025).

2.5. Organizational Behavior Lens for Human-AI Collaboration

Building on our prior CoX framework (Jiang et al., 2025b), which separates external conditions from the interaction mechanisms that shape teamwork, we view small co-design teams as groups whose collective performance improves when interactions are structured. Here, “structured” means that timing and progression follow a few simple rules that keep work moving toward a decision (e.g., clear phase starts and ends, and prompts that help resume stalled discussions).

Based on this framework and our v0.3 DSA/DIMS prototype, the AI acts as a process guide. It opens and closes phases, presents the next step only after the current one is acknowledged as complete, and records each transition and prompt. When a discussion idles for a while (no input detected), it posts a short nudge to summarize or continue. These content-agnostic controls make sessions easier to run consistently, and the resulting event traces (phase changes, prompts, timestamps) enable us to quantify behavior in Section 5.

From an organizational-behavioral angle, such processes align with evidence that employees’ intentions are pivotal in shaping the outcomes of digital-intelligence transformation, reinforcing our choice to pair usability/acceptance (SUS, PU, PEOU) with mechanism-readouts (T.-J. Wu et al., 2025). Clear role boundaries and lightweight nudges are also relevant controls, as recent work shows that human–AI collaboration can influence employees’ cyberloafing via AI-identity processes (Xu et al., 2025).

4. Study Design and Procedure

4.1. Sites, Participants, and Roles

We conducted the study in a real-world applied setting relevant to medical innovation. To preserve anonymity while maintaining authenticity, we describe the site only in generic terms and focus on the procedure and instrumentation. Twenty-four participants were organized into six four-person teams. Teams were formed to reflect the typical multi-disciplinary composition of medical co-design, mixing domain-side stakeholders (e.g., clinical or operational) with design/engineering roles, so that information, perspectives, and artifact work could circulate in a way that resembled routine projects (Malone & Bernstein, 2015; Malone & Woolley, 2020; Riedl et al., 2021/2022; Suran et al., 2021; Woolley et al., 2010). All procedures complied with institutional guidelines. Sessions were non-clinical and of minimal risk; no patient data were involved. No personally identifiable information was collected in the logs; role identifiers were limited to coarse categories, and all exports were anonymized prior to analysis.

4.2. Tasks and Five-Phase Procedure Aligned with the Mechanism-Informed Rationale

All teams followed a five-phase session framework typical of co-design in health settings: (I) Orientation and Briefing, a short all-hands introduction to the study background, session goals, and core functions; (II) Access and Onboarding, where participants logged in with role-based accounts and received task materials; (III) Co-Creation, the main work stage with guided cycles of idea generation, consolidation, and evaluation; (IV) Sharing and Inter-Team Exchange, where outputs were summarized and briefly presented; and (V) Post-Session Survey, where usability/acceptance and team-outcome instruments were completed. Measurement anchors and data flows for each phase are summarized in Table 5.

Before Phase I, all teams received a standardized physical briefing and verbal orientation that stated and confirmed the following agreements: (1) fixed time-boxes per phase with countdown timers; (2) turn-taking rules enforced by the system, including role rotation at predefined checkpoints; (3) single-threaded conversation (no side discussions); (4) artifact hand-offs via DIMS with prompts; and (5) respectful interaction and compliance with the logging protocol. Participants acknowledged these agreements before proceeding. The orchestrator then implemented the five-phase procedure; any deviations were recorded in the log and were addressed by automated prompts rather than human content facilitation.

Creative assistants within DIMS were available throughout as optional tools for ideation; their use was neither required nor restricted to specific phases of the project. Participants were reminded that these tools do not provide clinical advice and that any generated artifacts served only as stimuli for team discussion.

Prompts and templates were identical across teams, and transitions were guided by rules that supported conditions relevant to collective intelligence (balanced participation, dependency-aware pacing, and progressive convergence), while lightweight process events (phase enter/exit, action types, and artifact references) were logged without disrupting work. TP (expert) was administered after the Sharing stage; SUS/PU/PEOU and PP/S-TP were administered in the Post-Session Survey (Section 3).

4.3. Deployment History

A formative classroom walkthrough preceded the field study (shown in Figure 3). Its purpose was to validate the procedural choreography, prompt content, and artifact templates in a formative, non-evaluative setting. No systematic logs were collected; therefore, the walkthrough is excluded from analyses and retained only as design context. As a result of the walkthrough, we tightened time-boxes for Co-Creation, simplified the prompt taxonomy, and added a dependency tag to support latency diagnostics. These adjustments reduced session overhead and improved the signal quality of pacing and dependency latency metrics in the field.

The field study then deployed the full procedure with 24 participants in six four-person teams, each using standardized accounts, identical phases, and templates, along with on-site prompts (shown in Figure 4). This deployment targeted feasibility in realistic conditions, focusing on procedural adherence across teams, data capture as planned for SUS/PU/PEOU and TP/PP/S-TP, and maintaining clean process logs suitable for mechanism-aligned visualization. The field setting ensured that patterns we report (Section 5) reflect the interaction constraints and coordination demands of actual co-design practice rather than a laboratory abstraction.

4.4. Data Preparation and Analysis Plan

The main text presents only the statistics and figures necessary for the study’s objectives. Scripts and file layouts are available on reasonable request (see Data Availability Statement). Instrument responses were inspected for missingness and range errors; respondent IDs were checked against team rosters; and a single, anonymized analysis ID was assigned to each participant. SUS was scored per Brooke’s convention, yielding a 0–100 score using Equation (1). PU and PEOU item scores were linearly rescaled to [1, 7] using Equation (2), then averaged to obtain scale means. TP was computed from expert rubric ratings, averaged after rater-wise z-standardization; PP was rescaled to [1, 7] and aggregated as team means; S-TP retained its native [1, 5] anchors and was aggregated to a team mean (Section 3.2). Process events were exported to CSV, filtered for duplicates, and aligned to phase intervals to support the construction of timelines, role-contribution heatmaps, and information-reuse graphs.
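Two of these preparation steps, rater-wise z-standardization and duplicate filtering, can be sketched as follows. This is an illustration under stated assumptions rather than the study's pipeline: the function names are hypothetical, the population standard deviation is an arbitrary choice, and a duplicate is assumed to be a row whose fields all match an earlier row.

```python
from collections import defaultdict
from statistics import mean, pstdev
from typing import Dict, List, Tuple


def team_means_after_rater_z(ratings: List[Tuple[str, str, float]]) -> Dict[str, float]:
    """ratings holds (rater_id, team_id, score) triples.

    Each score is standardized within its rater, then z-scores are averaged per team.
    """
    by_rater: Dict[str, List[float]] = defaultdict(list)
    for rater, _team, score in ratings:
        by_rater[rater].append(score)
    stats = {r: (mean(v), pstdev(v)) for r, v in by_rater.items()}

    by_team: Dict[str, List[float]] = defaultdict(list)
    for rater, team, score in ratings:
        mu, sd = stats[rater]
        # A rater who gave identical scores carries no ranking information: use 0.
        by_team[team].append(0.0 if sd == 0 else (score - mu) / sd)
    return {team: mean(z) for team, z in by_team.items()}


def drop_duplicate_events(events: List[dict]) -> List[dict]:
    """Keep the first occurrence of each fully identical row, preserving order."""
    seen = set()
    unique = []
    for event in events:
        key = tuple(sorted(event.items()))   # field values must be hashable
        if key not in seen:
            seen.add(key)
            unique.append(event)
    return unique
```

Standardizing within rater removes differences in how leniently individual experts use the rubric before scores are pooled across raters.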

Analyses emphasized description and correlation, consistent with our feasibility aim and the state of the literature on collective intelligence in naturalistic collaboration. We report distributions (mean, standard deviation, median, interquartile range) for SUS, PU, PEOU; summary statistics for TP, PP, and S-TP; and phase-level coverage and durations. Correlations between process observables—dialogue volume, total duration, average turn duration, participation balance, and role-specific turn count—and team-level outcomes (TP, PP, S-TP) were examined using Pearson correlations. Results are interpreted descriptively, given the small-N and non-controlled design. Planned diagnostics, such as artifact-reuse density and decision latency, require richer logs and are reserved for future iterations. Because teams shared an identical procedure but worked independently, the tests are interpreted as exploratory and non-causal; the results inform design directions for the next prototype iteration rather than adjudicating among competing causal models.

Team products were scored during the workshop’s plenary session using a common rubric; raters did not have access to PP/S-TP responses while scoring. No imputation was performed; analyses use available observations, and any missingness or protocol deviations are logged and summarized in the Supplementary Materials. For outcomes on bounded scales, we report means with bootstrap confidence intervals (BCa) at the appropriate analysis level. Inter-rater reliability for TP is quantified as ICC(2,k) with 95% CIs and reported in the Supplement (Section S1.2). Correlations between log-derived markers and outcomes are summarized with Pearson’s r and bootstrap CIs in the main text (Section 5.5), with Spearman’s ρ and bootstrap CIs provided as a robustness check in the Supplement (Section S1.3). All perception scales and log-derived markers are linked to item/field definitions in Section 3.2 and in Appendix A, Appendix B, Appendix C, and Appendix D.
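For readers unfamiliar with bootstrap intervals, the sketch below shows the resampling idea using the simpler percentile method. It is not the BCa procedure reported in this paper, which additionally corrects for bias and skew; the function name, the seed, and the sample values in the usage note are hypothetical.

```python
import random
from statistics import mean
from typing import Callable, Sequence, Tuple


def percentile_bootstrap_ci(data: Sequence[float],
                            stat: Callable[[Sequence[float]], float] = mean,
                            n_resamples: int = 10_000,
                            level: float = 0.95,
                            seed: int = 0) -> Tuple[float, float]:
    """Percentile bootstrap confidence interval for `stat` (not BCa)."""
    if len(data) < 2:
        raise ValueError("need at least two observations to resample")
    rng = random.Random(seed)            # fixed seed keeps the interval reproducible
    n = len(data)
    # Resample with replacement, recompute the statistic, and sort the estimates.
    estimates = sorted(stat(rng.choices(data, k=n)) for _ in range(n_resamples))
    alpha = (1.0 - level) / 2.0
    lower = estimates[int(alpha * n_resamples)]
    upper = estimates[min(n_resamples - 1, int((1.0 - alpha) * n_resamples))]
    return lower, upper
```

A call such as `percentile_bootstrap_ci([60.0, 62.5, 66.0, 77.5, 80.0])` returns a lower and upper bound for the mean of five invented scores. With samples this small, the percentile and BCa intervals can differ noticeably, which is one reason to treat either as orientation rather than as a test.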

5. Results

5.1. Adherence and Completion

We first report procedural adherence, instrument completion, and deliverables before turning to distributions and correlations. All six teams completed the full five-phase procedure within an approximately 90-min session, producing the required deliverables (problem-framing notes, option sets, consolidated clusters, decision artifacts). The 90-min duration reflected site logistics and participant scheduling rather than a theoretical constraint; phase time-boxes were preset to fit this window, and analyses do not depend on the absolute session length.

Instrument completion after cleaning was: SUS/PU/PEOU, n = 5 valid responses (from 7 collected); TP, n = 12 valid expert ratings (from 16; four removed due to duplication/missingness or all-zero strings); and PP/S-TP, n = 12 valid responses.

Process logging covered the entire session for all teams. One team initiated two runs in the system; the first, low-quality run was excluded and the second retained, resulting in six valid team logs for analysis. This met the feasibility targets and enabled the mechanism-aligned analyses reported below.

5.2. Usability and Acceptance

Table 6 summarizes System Usability (SUS, 0–100) and TAM measures (Perceived Usefulness, PU; Perceived Ease of Use, PEOU; both rescaled to 1–7 per Equation (2)). Given the small cleaned participant sample (n = 5), we report mean ± SD together with medians and interquartile ranges (IQR), and we add 95% confidence intervals estimated via bias-corrected and accelerated bootstrap (BCa; B = 10,000) to reflect sampling uncertainty. SUS centers around common “good” thresholds (mean = 69.20, BCa 95% CI = 60.00–80.00; median = 66.00, IQR = 16.00). PEOU is high (mean = 5.63, CI = 4.71–6.43; median = 5.57, IQR = 1.14), whereas PU is moderate-to-high with wider dispersion (mean = 4.89, CI = 3.86–6.20; median = 4.14, IQR = 2.00).

These patterns align with participant comments that standardized prompts reduced coordination overhead, while task-specific value could be surfaced more explicitly for certain workflows (Bangor et al., 2008; Brooke, 1996; Davis, 1989).

Figure 5 shows box plots on the original scales with medians and IQRs annotated.

Reported BCa CIs indicate ranges of plausible population means under conditions of small sample size and potential skew. Given n = 5, intervals are interpreted as an orientation for design decisions rather than as hypothesis tests.

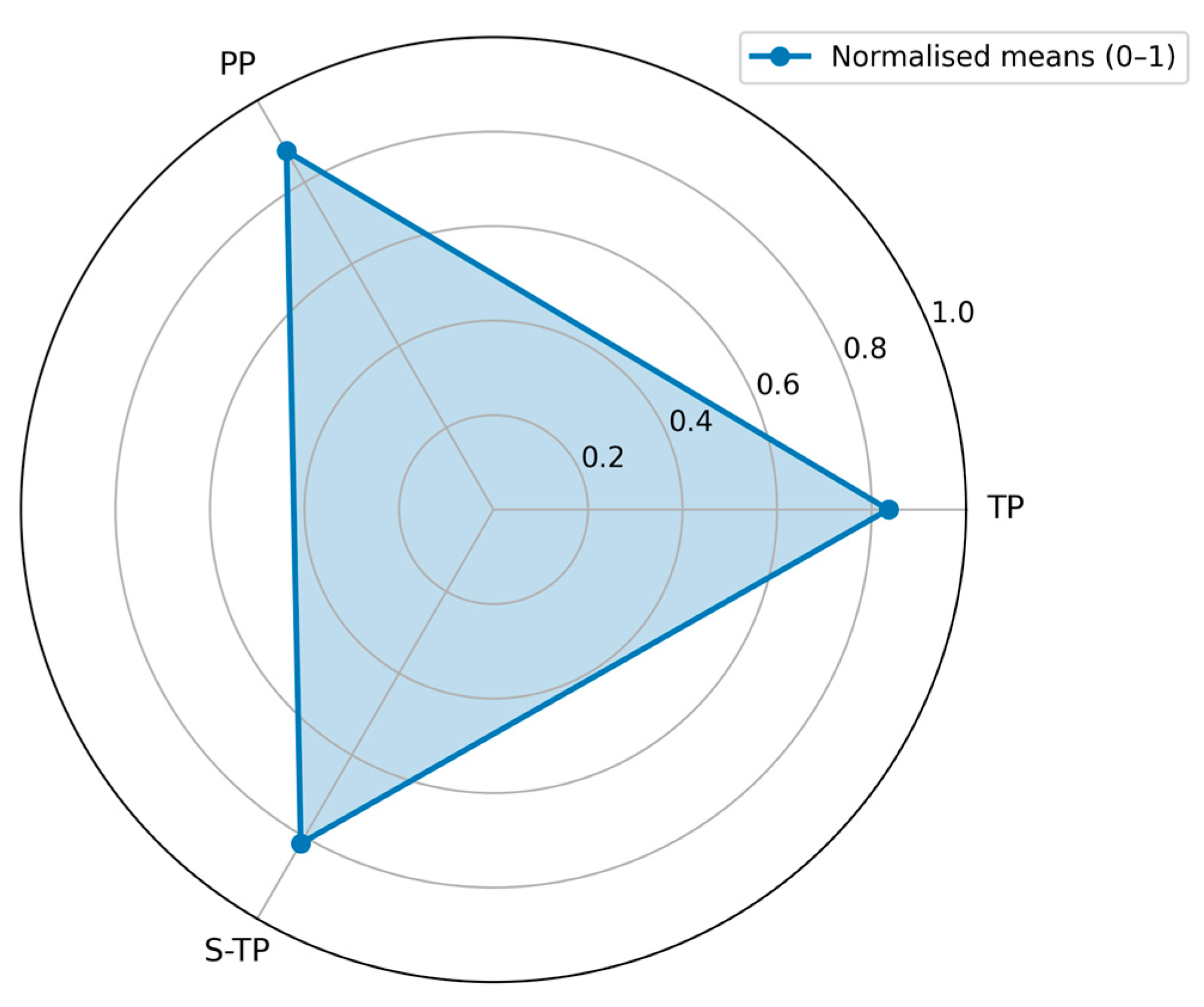

5.3. Team-Level Outcomes

Team outcomes for expert-rated Technical Performance (TP), Perceived Performance (PP), and Self-rated Technical Performance (S-TP) are summarized in Table 7 on their raw scales, with means normalized to 0–1 shown for cross-scale comparability. The ordering remains consistent (PP highest, TP next, S-TP lowest), matching the common pattern that collaborative appraisals tend to exceed individual self-appraisals, with expert judgments in between.

TP averaged 4.18/5 at the team level with a narrow 95% BCa CI of 4.14–4.22, indicating generally high product quality in this sample. PP averaged 6.14/7 (95% BCa CI 5.60–6.67), while S-TP averaged 4.08/5 (95% BCa CI 3.57–4.57). Intervals were estimated via bias-corrected and accelerated bootstrap (B = 10,000) at the team level and interpreted descriptively given the small number of teams.

Figure 6 visualizes the normalized means; PP extends furthest toward the outer rim, followed by TP and then S-TP, mirroring the tabulated ordering.

For completeness, inter-rater reliability for TP is reported in the Supplementary Materials as a two-way random-effects, absolute-agreement ICC(2,k) with BCa 95% confidence intervals; in the main text, we summarize team-level means with BCa CIs due to the small number of teams.
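The ICC(2,k) named above has a standard mean-squares form, sketched below for a complete targets-by-raters matrix. The function name is illustrative, and the sketch assumes no missing cells; it shows the point estimate only and does not reproduce the bootstrap intervals or the handling of removed ratings described in the Supplement.

```python
from typing import Sequence


def icc2k(ratings: Sequence[Sequence[float]]) -> float:
    """ICC(2,k): two-way random effects, absolute agreement, mean of k raters.

    ratings[i][j] is rater j's score for target i (complete matrix assumed).
    """
    n = len(ratings)                       # number of rated targets (e.g., teams)
    k = len(ratings[0]) if n else 0        # number of raters
    if n < 2 or k < 2 or any(len(row) != k for row in ratings):
        raise ValueError("need a complete matrix with at least 2 targets and 2 raters")

    grand = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    col_means = [sum(row[j] for row in ratings) / n for j in range(k)]

    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)                    # between-target mean square
    ms_cols = ss_cols / (k - 1)                    # between-rater mean square
    ms_error = ss_error / ((n - 1) * (k - 1))      # residual mean square

    return (ms_rows - ms_error) / (ms_rows + (ms_cols - ms_error) / n)
```

Because the rater mean square enters the denominator, systematic leniency differences between raters lower this coefficient, which is what distinguishes absolute agreement from consistency.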

5.4. Process-Level Diagnostics (Visualizations)

We summarize participation balance using role-specific turn counts (RTC) aggregated over the session.

Figure 7 shows stacked distributions of RTC by team. Two patterns are salient. First, clinician and designer turns are relatively stable across teams, while several teams exhibit strong dominance by the process-guidance agent (DSA). For example, in Team 4, the guidance agent contributes a disproportionately large share of turns, whereas teams with higher PP/TP scores display more even role distributions. Second, auxiliary agents (e.g., research helper, media generator) intervene only sporadically, suggesting that their current triggers are conservative. These observations align with the correlation analyses (Section 5.5): more balanced participation is associated with higher perceived performance, whereas fragmented or agent-dominated exchanges are associated with lower perceived performance. We do not claim causality; the RTC view is a descriptive diagnostic to help facilitators notice when human roles are being overshadowed and when rebalancing prompts may be useful.
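The RTC diagnostic can be approximated with a few lines of Python. The sketch below computes each role's share of turns, flags sessions where the guidance agent exceeds a share threshold, and reports an evenness index. The team labels, counts, the 0.5 threshold, and the use of normalized Shannon entropy are all assumptions for illustration; the entropy index is not the TTB measure used in the study.

```python
from math import log

# Hypothetical role-specific turn counts (NOT the study data).
turns = {
    "Team A": {"clinician": 30, "designer": 28, "guidance_agent": 32,
               "research_helper": 3, "media_generator": 2},
    "Team B": {"clinician": 25, "designer": 24, "guidance_agent": 80,
               "research_helper": 3, "media_generator": 2},
}

AGENT_ROLE = "guidance_agent"
DOMINANCE_THRESHOLD = 0.5  # assumed cut-off, chosen only for illustration


def role_shares(counts):
    """Fraction of all turns contributed by each role."""
    total = sum(counts.values())
    return {role: c / total for role, c in counts.items()}


def evenness(counts):
    """Normalized Shannon entropy of turn shares: 1.0 means perfectly even."""
    shares = [s for s in role_shares(counts).values() if s > 0]
    entropy = -sum(s * log(s) for s in shares)
    return entropy / log(len(counts))


def agent_dominated(counts):
    """True when the guidance agent's share exceeds the assumed threshold."""
    return role_shares(counts)[AGENT_ROLE] > DOMINANCE_THRESHOLD


for team, counts in turns.items():
    share = role_shares(counts)[AGENT_ROLE]
    print(f"{team}: agent share = {share:.2f}, "
          f"evenness = {evenness(counts):.2f}, "
          f"flag = {agent_dominated(counts)}")
```

A facilitator-facing tool could surface the flag during a session as a cue for a rebalancing prompt, in line with the descriptive use proposed above.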

5.5. Synthesis: What Supported or Hindered Collective Intelligence Emergence in Practice

Correlation analyses (N = 6 teams) relate basic conversational features to team outcomes (Table 8; Figure 8 provides exemplars). We examined NDT (number of dialogue turns), TDD (total dialogue duration), ATD (average turn duration), RTC per role (not tabulated), and TTB (turn-taking balance), against TP, PP, and S-TP using Pearson correlations (r); given the small-N, non-controlled design, results are interpreted descriptively. Patterns are co-occurrences consistent with collective intelligence theory: organized interaction, not volume alone, is accompanied by better perceived outcomes.

Volume vs. quality: NDT showed essentially no relation to TP (r = −0.014); simply “talking more” did not predict expert-judged output quality.

Pacing burden: TDD correlated negatively with PP (see Table 8) and more strongly with S-TP (r = −0.750), suggesting that overlong sessions may undermine perceived effectiveness and individual contribution.

Turn structure: ATD was positively related to PP (r = 0.570), indicating that longer, more substantive turns were associated with better perceived collaboration. TTB also showed a positive relationship with PP (r = 0.377), highlighting the value of balanced participation.

Fragmentation: A strong negative NDT–PP correlation (r = −0.922) indicates that more fragmented turn-taking co-occurred with lower perceived performance.

Together with the visual diagnostics (Figures 5–8), these correlations reinforce three practice-level levers: pursue balanced participation, guard against overlong timelines and fragmented micro-turns, and scaffold more substantive turns. These levers are operational within the instrumented package and map directly to facilitation moves (e.g., targeted prompts, time-boxing), offering a practical pathway to understand and steer collective intelligence in medical co-design.

To make the uncertainty of the exploratory correlations explicit, we computed 95% confidence intervals for each Pearson coefficient using a percentile bootstrap with Fisher-z transformation (B = 10,000; Table 9). With only six teams, intervals are necessarily wide and should be interpreted descriptively. Intervals spanning zero indicate that the data are compatible with both positive and negative relations in this sample, whereas intervals that remain on one side of zero suggest a directionally stable association under resampling.
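One plausible reading of this procedure is sketched below: teams are resampled with replacement, each resample's Pearson r is mapped to Fisher z, percentiles are taken on the z scale, and the endpoints are mapped back to r. The function names, the seed, the handling of degenerate resamples, and the six data points are assumptions for illustration and are not the study's code or data.

```python
import math
import random


def pearson(x, y):
    """Pearson correlation coefficient for two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def bootstrap_r_ci(x, y, n_boot=10_000, alpha=0.05, seed=0):
    """Percentile bootstrap CI for Pearson r, computed on the Fisher-z scale."""
    rng = random.Random(seed)
    n = len(x)
    zs = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]
        xs, ys = [x[i] for i in idx], [y[i] for i in idx]
        # Skip resamples with no variance, where r is undefined (assumed rule).
        if len(set(xs)) < 2 or len(set(ys)) < 2:
            continue
        r = pearson(xs, ys)
        # Clamp so that atanh stays finite when a resample gives |r| = 1.
        r = max(-1 + 1e-12, min(1 - 1e-12, r))
        zs.append(math.atanh(r))
    zs.sort()
    lo_z = zs[int((alpha / 2) * (len(zs) - 1))]
    hi_z = zs[int((1 - alpha / 2) * (len(zs) - 1))]
    return math.tanh(lo_z), math.tanh(hi_z)


# Hypothetical values for six teams (NOT the study data).
ndt = [120, 150, 180, 210, 260, 300]   # number of dialogue turns
pp = [6.8, 6.5, 6.3, 6.0, 5.7, 5.4]    # perceived performance (1-7)

r = pearson(ndt, pp)
lo, hi = bootstrap_r_ci(ndt, pp)
print(f"r = {r:.3f}, 95% CI = [{lo:.3f}, {hi:.3f}]")
```

With six teams, many resamples contain only two or three distinct teams and yield r at or near ±1, which helps explain why reported bounds can sit at the edge of the admissible range.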

Two patterns stand out. First, the fragmentation signal persisted: the relationship between the number of dialogue turns (NDT) and perceived performance (PP) was strongly negative (r = −0.922; 95% CI, −1.000 to −0.729). Second, longer total durations were associated with lower self-rated technical performance (TDD–S-TP: r = −0.750; 95% CI, −0.998 to −0.168). Other estimates, including ATD–PP (r = 0.570; 95% CI, −0.438 to 1.000), TTB–PP (r = 0.377; 95% CI, −0.526 to 0.907), and NDT–TP (r = −0.014; 95% CI, −0.984 to 0.875), had intervals that crossed zero, so we treat them as suggestive tendencies aligned with the practice levers above rather than firm effects.

A rank-based robustness check yielded the same qualitative picture (e.g., ρ = −0.928 for NDT–PP and ρ = −0.771 for TDD–S-TP; see Tables S3 and S4). Taken together, the CI analysis reinforces our practical guidance: avoid fragmented, overlong exchanges while promoting balanced, more substantive turns.
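A rank-based check of this kind can be illustrated with Spearman's ρ, computed as the Pearson correlation of average ranks. The sketch below is a minimal example under stated assumptions: the helper names and the six data points are hypothetical and do not reproduce the values in Tables S3 and S4.

```python
import math


def average_ranks(values):
    """Ranks starting at 1, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for pos in range(i, j + 1):
            ranks[order[pos]] = avg
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation coefficient for two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def spearman(x, y):
    """Spearman's rho: Pearson correlation applied to average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical values for six teams (NOT the study data).
tdd = [42, 55, 48, 70, 63, 90]          # total dialogue duration, minutes
s_tp = [4.6, 4.1, 4.4, 3.9, 4.2, 3.5]   # self-rated technical performance

print(f"rho = {spearman(tdd, s_tp):.3f}")
```

Because ρ depends only on ordering, it is less sensitive than Pearson's r to a single extreme team, which is the property a robustness check of this kind relies on.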