Abstract

Image segmentation has been one of the most active research areas in the last decade. Traditional thresholding techniques are effective for bi-level thresholding because of their resilience, simplicity, accuracy, and low convergence time, but they are not effective in determining optimal thresholds for multi-level image segmentation. Therefore, this paper proposes an efficient version of the search and rescue optimization algorithm (SAR) based on opposition-based learning (OBL) to segment blood-cell images and solve multi-level thresholding problems. SAR is a popular meta-heuristic algorithm (MH) that mimics human exploration behavior during search and rescue operations. The modified SAR, termed mSAR, uses the OBL technique to improve the algorithm's ability to escape local optima and to enhance its search efficiency. A set of experiments is conducted to evaluate the performance of mSAR, apply it to multi-level thresholding for image segmentation, and demonstrate the impact of combining OBL with the original SAR on solution quality and convergence speed. The proposed mSAR is compared with other competing algorithms, including the Lévy flight distribution (LFD), Harris hawks optimization (HHO), sine cosine algorithm (SCA), equilibrium optimizer (EO), gravitational search algorithm (GSA), arithmetic optimization algorithm (AOA), and the original SAR. Furthermore, multi-level thresholding experiments are performed with fuzzy entropy and the Otsu method as two objective functions on a set of benchmark images with different numbers of thresholds, using a set of evaluation metrics, to demonstrate the superiority of mSAR.
Finally, the experimental results indicate that mSAR is highly efficient in terms of segmented-image quality and feature preservation compared with the competing algorithms.

Keywords:

search and rescue optimization algorithm; meta-heuristics; opposition-based learning; multi-level thresholding; fuzzy entropy and Otsu method; image segmentation

MSC:

68Txx; 68Uxx

1. Introduction

Image thresholding is a popular computer-vision operation for processing and analyzing images in fields such as medicine, engineering, agriculture, and manufacturing. It is most commonly used in image segmentation to support accurate feature extraction in biological image processing, pattern recognition, and robotic vision [1]. Thresholding is one of the most important segmentation steps and has proven effective in many applications [2,3]. The main goal of image thresholding is to obtain optimal threshold values from the image histogram, which defines the probability distribution of pixel intensities and is therefore a vital input to segmentation methods [4]. Thresholding techniques fall into two groups: bi-level and multi-level thresholding. Bi-level thresholding uses one threshold value to split an image into two groups, while multi-level thresholding uses two or more threshold values to split an image into several groups [5,6].

Recently, many researchers have demonstrated the capability of MHs to solve diverse complex optimization problems in fields such as biomedicine [7], engineering [8,9], medicine [10,11], communications [12], image segmentation [13], and feature selection [14]. MHs are flexible, derivative-free, and effective at finding near-optimal solutions, and with recent advances in computing they have become a leading approach for complex optimization problems. Owing to these advantages, MHs are widely used to find the optimal thresholds of color and gray-level images. An MH searches a problem landscape with a set of search agents that act as candidate solutions; these are generated repeatedly in an iterative procedure by heuristic operators, and applying the operators in different orders yields different search strategies. MHs are commonly classified into four main groups: swarm-based, natural evolution-based, human-based, and physics-based algorithms [15,16].

Swarm-based algorithms (SA) mimic the behavior of organisms that live in groups and interact with one another to achieve the best collective behavior [17]. For example, particle swarm optimization (PSO) [18] mimics the social behavior of bird flocks and fish schools. Natural evolution-based algorithms imitate biological evolution processes such as recombination, crossover, mutation, and the inheritance of features by offspring [19]. Candidate solutions act as individuals in a population, and a fitness function measures their quality. The genetic algorithm (GA) [20] and differential evolution (DE) [21] are two evolutionary algorithms inspired by biological evolution. The third category, human-based algorithms, mimics human social behavior; examples include the heap-based optimizer (HBO) [22], the election campaign algorithm (ECA) [23], and teaching–learning-based optimization (TLBO) [24]. The fourth category, physics-based algorithms, draws on physical laws to define operators that search for the best solution within the search space; examples include electromagnetism-based algorithms (EMO) [25] and the GSA algorithm [26].

Many studies have examined the efficacy of MHs for image thresholding [27,28]; a few notable state-of-the-art efforts are summarized below. In [29], the moth-swarm algorithm (MSA) was used to determine optimal threshold values with Kapur's method. In [30], ant colony optimization (ACO) was applied to multi-threshold image segmentation (MTH) using a non-local 2D histogram with Kapur's entropy as the objective function. In [31], researchers combined the Otsu and Kapur methods with a modified firefly algorithm. Similarly, three new versions of the manta ray foraging optimization algorithm and the chimp optimization algorithm (ChOA) were proposed to tackle multi-level thresholding image segmentation [32,33,34]. The researchers in [35] applied MTH image segmentation with hyper-heuristics, a novel MH concept in which each iteration selects the best execution sequence of MHs to determine the best thresholds. In [27], the black widow optimization (BWO) algorithm used the Otsu or Kapur technique as the objective function for multi-level thresholding of gray-level images. An efficient krill herd (EKH) algorithm [36] was used to determine the best thresholds at different levels for color images with Kapur's entropy, Tsallis entropy, and the Otsu method. In [37], HHO was hybridized with DE to form HHO-DE: the population is divided into two equal subpopulations assigned to DE and HHO, respectively, and the Otsu and Kapur methods serve as the objective functions.

Beyond these methods, SAR [38] can also be used to determine optimal multi-level thresholds for image segmentation. SAR is a competitive, modern population-based optimization technique that mimics human exploration behavior during search and rescue operations, and in terms of efficiency and simplicity it outperforms several other biologically inspired procedures. Its authors [38] showed that SAR achieves better stability and accuracy than other optimization techniques on standard benchmark functions.

Many MHs in the literature suffer from drawbacks such as limited global search capability, premature convergence, or entrapment in local optima, which motivates researchers to propose hybrid and modified versions. Many researchers use OBL to improve the search efficiency of MHs [39]. Tizhoosh introduced OBL in 2005 [40], and MHs have since used it in various ways to improve exploration. For example, elephant herding optimization (EHO) was improved with dynamic Cauchy mutation (DCM) and OBL to solve multi-level thresholding problems [41]; according to the authors, DCM remedied premature convergence and OBL remedied slow convergence in EHO. That study used the Otsu and Kapur methods as two fitness functions to determine threshold values for image segmentation. In [42], the marine predators algorithm (MPA) was improved with OBL (MPA-OBL) to enhance convergence and search efficiency and was evaluated on the IEEE CEC'2020 benchmark problems; MPA-OBL used Kapur's and Otsu's methods as two fitness functions on diverse benchmark images at different thresholds.

Although some studies have applied OBL-based MHs to image thresholding, such work remains scarce, even though OBL-based MHs are widely applied to other optimization problems. This study therefore extends research in image segmentation by employing the recent SAR; to the best of our knowledge, this is the first application of SAR to image segmentation and to the CEC'2020 benchmark functions. SAR, introduced in July 2020, mimics human exploration behavior during search and rescue operations and outperformed many well-known counterparts on a variety of engineering and mathematical benchmark problems. Despite the efficacy of its search mechanisms, SAR can be improved further for problems such as image thresholding, because it may miss some critical search regions. To address this, we combine SAR with OBL (SAR-OBL, referred to as mSAR in the rest of this paper) to generate solutions in potentially promising regions and explore the search space more thoroughly. More crucially, we enhance its local search capability by using solutions generated from the surrounding promising regions to avoid local optima. As a result, the proposed variant increases population diversity while balancing exploration and exploitation. We evaluate the proposed mSAR on the CEC'2020 benchmark functions before applying it to multi-level image thresholding problems.

The main goal of this paper is to enable researchers to use a novel metaheuristic algorithm with opposition-based learning (OBL) to increase the performance of image segmentation in the medical field. Furthermore, this paper aims to discuss the benefits and downsides of the mSAR algorithm in image segmentation. Finally, the main contributions of this paper can be summarized as follows:

- An improved opposition-based search and rescue optimization algorithm (mSAR) is presented.

- The search efficiency and convergence of mSAR have been improved.

- Fuzzy entropy and the Otsu method are applied as objective functions for multi-level thresholding of blood-cell images.

The paper is structured as follows: Section 2 discusses the SAR algorithm and the OBL search strategy, and Section 2.3 presents the mathematical models of the fuzzy entropy and Otsu methods. Section 3 presents the proposed algorithm. Section 4 evaluates mSAR on the CEC'2020 benchmark functions. Section 5 discusses and analyzes the blood-cell image segmentation experiments. Section 6 concludes the paper.

2. Preliminaries

This section studies the inspiration of the SAR algorithm and its mathematical model and discusses the OBL strategy.

2.1. Search and Rescue Optimization Algorithm

This section discusses the mathematical model of the SAR algorithm. Search and rescue operations are a type of group exploration. A search is a methodical operation that uses available personnel and resources to locate people in distress, and a rescue operation seeks to recover people in danger and move them to a safe location. Humans search in groups, and each group trains to be ready for its operations. Based on their training, searchers can find clues and traces of missing people. The clues found differ in value and in how much information they carry about the missing individuals; for example, some clues indicate the probability that the missing people are present in a given area. Each group member evaluates the clues according to his or her training and shares this information with the other members via communication equipment. The members then continue searching based on this information and on the importance of the clues. Search and rescue operations have two main stages: the social stage and the individual stage. In the social stage, the search is guided by the locations of the clues found and their importance, whereas in the individual stage, the search proceeds regardless of the clues' importance. Clues are of two types: held clues and abandoned clues.

2.1.1. The Clues

In the SAR model, the positions of the abandoned clues are stored in a memory matrix $X$, while the positions of the humans are stored in a position matrix $Y$. Both matrices have size $M \times N$, where $M$ is the number of group members and $N$ is the problem dimension. The clue matrix $Z$, which contains the positions of all discovered clues, is composed of $Y$ and $X$. Most new solutions in the social and individual stages are generated from the clue matrix, which is a primary component of SAR. Equation (1) presents the clue matrix $Z$; the matrices $X$, $Y$, and $Z$ are updated in each stage of the human search.

$$Z = \begin{bmatrix} Y \\ X \end{bmatrix} = \begin{bmatrix} y_{11} & \cdots & y_{1N} \\ \vdots & \ddots & \vdots \\ y_{M1} & \cdots & y_{MN} \\ x_{11} & \cdots & x_{1N} \\ \vdots & \ddots & \vdots \\ x_{M1} & \cdots & x_{MN} \end{bmatrix} \tag{1}$$

where $Y$ and $X$ are the position and memory matrices, respectively; $y_{M1}$ is the position of the $M$th human in the first dimension, and $x_{1N}$ is the position of the first memory in the $N$th dimension. The two stages of the human search are modeled in the following section.

2.1.2. Social and Individual Stages

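As explained above, the search direction is determined with Equation (2):

$$SD_k = Y_k - Z_j, \quad j \neq k \tag{2}$$

where $Z_j$ is the position of the $j$th clue, $Y_k$ is the position of the $k$th human, and $SD_k$ is the search direction of the $k$th human. $j$ is a random integer in $[1, 2M]$. If $j = k$, $SD_k$ would be zero, because the $k$th row of $Z$ is $Y_k$; therefore, $j$ is chosen such that $j \neq k$. The social stage is implemented by Equation (3):

$$y'_{k,i} = \begin{cases} z_{j,i} + r_1\,(y_{k,i} - z_{j,i}), & \text{if } f(Z_j) > f(Y_k) \text{ and } (r_2 < SE \text{ or } i = i_{\mathrm{rand}}) \\ y_{k,i} + r_1\,(y_{k,i} - z_{j,i}), & \text{if } f(Z_j) \le f(Y_k) \text{ and } (r_2 < SE \text{ or } i = i_{\mathrm{rand}}) \\ y_{k,i}, & \text{otherwise} \end{cases} \tag{3}$$

where $y_{k,i}$ and $y'_{k,i}$ are the current and new positions of the $k$th human in the $i$th dimension, $z_{j,i}$ is the position of the $j$th clue in the $i$th dimension, and $f(Z_j)$ and $f(Y_k)$ are the fitness values of $Z_j$ and $Y_k$, respectively. $r_1$ is a random number in $[-1, 1]$, $r_2$ is a uniformly distributed random number in $[0, 1]$, and $SE \in [0, 1]$ is the social-effect parameter. $i_{\mathrm{rand}}$ is a random integer between 1 and $N$ that ensures at least one dimension of $Y'_k$ differs from $Y_k$. Applying Equation (3) to every dimension gives the new position $Y'_k$ of the $k$th human.

The individual stage uses Equation (4) to determine the new position of the $k$th human:

$$Y'_k = Y_k + r_3\,(Z_j - Z_m) \tag{4}$$

where $j$ and $m$ are random integers between 1 and $2M$, chosen such that $j \neq m \neq k$ to prevent movement along other clues, and $r_3$ is a uniformly distributed random number in $[0, 1]$. Equation (5) is used to correct a new position that leaves the search space:

$$y'_{k,i} = \begin{cases} \dfrac{y_{k,i} + y_i^{\max}}{2}, & \text{if } y'_{k,i} > y_i^{\max} \\[4pt] \dfrac{y_{k,i} + y_i^{\min}}{2}, & \text{if } y'_{k,i} < y_i^{\min} \end{cases} \tag{5}$$

where $y_i^{\min}$ and $y_i^{\max}$ are the minimum and maximum threshold values (the lower and upper bounds) of the $i$th dimension, respectively.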
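To make the two stages concrete, the following NumPy sketch implements Equations (1)–(5) for a single human $k$ on a maximization problem. It is a minimal illustration under stated assumptions, not the reference SAR implementation: the helper names (`clue_matrix`, `social_stage`, `individual_stage`, `repair_bounds`) are hypothetical, indices are 0-based, the social-effect parameter `SE` is supplied by the caller, and $r_1$ and $r_3$ are drawn once per move.

```python
import numpy as np


def clue_matrix(Y, X):
    """Eq. (1): stack the human positions Y (M x N) on top of the memory X (M x N)."""
    return np.vstack([Y, X])


def social_stage(Y, X, fit_Z, k, SE, rng):
    """Social stage, Eqs. (2)-(3), for human k on a maximization problem.

    fit_Z[c] is the fitness of row c of Z, so fit_Z[k] equals f(Y[k]).
    """
    M, N = Y.shape
    Z = clue_matrix(Y, X)
    j = rng.choice([c for c in range(2 * M) if c != k])   # clue index with j != k
    sd = Y[k] - Z[j]                                       # search direction, Eq. (2)
    r1 = rng.uniform(-1.0, 1.0)
    mask = rng.random(N) < SE          # dimensions where r2 < SE
    mask[rng.integers(N)] = True       # i_rand: at least one dimension moves
    base = Z[j] if fit_Z[j] > fit_Z[k] else Y[k]
    y_new = Y[k].copy()
    y_new[mask] = base[mask] + r1 * sd[mask]               # Eq. (3)
    return y_new


def individual_stage(Y, X, k, rng):
    """Individual stage, Eq. (4): move along the difference of two other clues."""
    M = Y.shape[0]
    Z = clue_matrix(Y, X)
    j, m = rng.choice([c for c in range(2 * M) if c != k], size=2, replace=False)
    r3 = rng.random()
    return Y[k] + r3 * (Z[j] - Z[m])


def repair_bounds(y_new, y_old, lb, ub):
    """Eq. (5): replace an out-of-range coordinate by the midpoint of its old value and the violated bound."""
    y = y_new.copy()
    over, under = y > ub, y < lb
    y[over] = (y_old[over] + ub[over]) / 2
    y[under] = (y_old[under] + lb[under]) / 2
    return y
```

A caller would evaluate the fitness of every row of $Z$ (so that `fit_Z[k]` equals $f(Y_k)$), generate a trial position with either stage, pass it through `repair_bounds`, and keep it only if it improves the fitness.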

2.1.3. Update Locations and Information

In each iteration, the group members search according to these two stages. After each stage, if the fitness of the new position $Y'_k$ is higher than that of the current position $Y_k$ (for a maximization problem), the current position is stored at a random row of the memory matrix $X$ as an abandoned clue, and the new position replaces it:

$$\begin{cases} X_n = Y_k \\ Y_k = Y'_k \end{cases} \quad \text{if } f(Y'_k) > f(Y_k) \tag{6}$$

where $X_n$ is the position of the $n$th stored clue in the memory matrix and $n$ is a random integer between 1 and $M$.
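The greedy memory update described above can be sketched as follows. This is an illustrative helper (`update_memory` is a hypothetical name) that modifies the arrays in place; it assumes a maximization problem and 0-based indices, so the random memory slot is drawn from $0, \ldots, M-1$.

```python
import numpy as np


def update_memory(Y, X, fit_Y, k, y_new, f_new, rng):
    """Greedy update with memory of the abandoned position (maximization), in place.

    If the trial position improves on Y[k], the old position is stored as an
    abandoned clue in a random memory row n, and Y[k] is replaced.
    Returns True when the update is accepted.
    """
    if f_new > fit_Y[k]:
        n = rng.integers(X.shape[0])   # random memory slot, 0 <= n < M
        X[n] = Y[k]                    # copies the old position into memory
        Y[k] = y_new
        fit_Y[k] = f_new
        return True
    return False
```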

2.1.4. Constraint-Handling Strategies

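Common constraint-handling methods include penalty functions, the $\varepsilon$-constrained method, and stochastic ranking. This study uses the $\varepsilon$-constrained method, one of the most prevalent of these. For a maximization problem, this method considers a solution $Y_a$ better than a solution $Y_b$ if the following requirements are satisfied:

$$Y_a \succ Y_b \iff \begin{cases} f(Y_a) > f(Y_b), & \text{if } \phi(Y_a) \le \varepsilon \text{ and } \phi(Y_b) \le \varepsilon \\ f(Y_a) > f(Y_b), & \text{if } \phi(Y_a) = \phi(Y_b) \\ \phi(Y_a) < \phi(Y_b), & \text{otherwise} \end{cases} \tag{7}$$

where $\phi(\cdot)$ denotes the total constraint violation of a solution. The parameter $\varepsilon$ controls the size of the feasible space and can be calculated by Equation (8).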
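A minimal sketch of the $\varepsilon$-constrained comparison for a maximization problem is shown below. The function name `eps_better` is hypothetical, `phi_a` and `phi_b` stand for the total constraint violations of the two solutions, and the current $\varepsilon$ level is supplied by the caller (for example, from Equation (8)).

```python
def eps_better(f_a, phi_a, f_b, phi_b, eps):
    """Return True if solution a beats solution b under the epsilon-constrained rule (maximization).

    f_* are objective values; phi_* are total constraint violations (0 means feasible).
    """
    if (phi_a <= eps and phi_b <= eps) or phi_a == phi_b:
        return f_a > f_b           # both within the epsilon level, or equally infeasible
    return phi_a < phi_b           # otherwise the smaller violation wins
```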

2.2. Opposition-Based Learning

OBL is a relatively recent mechanism in computational intelligence whose main purpose is to enhance the optimization efficiency of MHs [42]. It was introduced by H. R. Tizhoosh [40]. Many researchers use OBL to improve the quality of the initial population by diversifying its solutions. In general, when the initial solutions of an MH lie near the optimum, convergence is fast; otherwise, convergence is expected to be slow. OBL addresses this by providing additional solutions from the opposite search regions, which may be closer to the global optimum. Formally, the opposite point $\bar{z}$ of a point $z = (z_1, \ldots, z_D)$ in a $D$-dimensional search space is computed component-wise as:

$$\bar{z}_j = lb_j + ub_j - z_j, \quad j = 1, 2, \ldots, D$$

where $ub_j$ and $lb_j$ are the upper and lower bounds of the $j$th component of $z$.
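As a worked example with hypothetical values, take a two-threshold candidate $z = (40, 180)$ in an 8-bit gray-level search space, so that $lb_j = 0$ and $ub_j = 255$ for both components. Applying $\bar{z}_j = lb_j + ub_j - z_j$ component-wise gives:

$$\bar{z} = (0 + 255 - 40,\; 0 + 255 - 180) = (215,\; 75)$$

A candidate near the lower bound is thus mirrored near the upper bound and vice versa, while the midpoint $(lb_j + ub_j)/2 = 127.5$ is its own opposite.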

OBL has been combined with a wide range of MHs. For instance, in [43], HHO used the OBL strategy to enhance its search efficiency. The researchers in [44] presented an OBL-based version of SCA designed to escape local optima during the search. An improved whale optimization algorithm (WOA) was developed in [45] by combining OBL and DE to improve exploration and exploitation through opposite values. In [46], OBL was used to improve the grasshopper optimization algorithm for engineering problems and benchmark functions. In [47], EO was modified with OBL and a set of novel update rules, yielding m-EO. In [48], OBL was incorporated into the memeplexes of the shuffled frog-leaping algorithm (SFLA) before the frogs begin foraging. In [49], OBL was combined with an enhanced scatter search (eSS) for large-scale parameter estimation in kinetic models in the medical field. In this work, OBL is used only during the initialization phase of SAR, to improve the convergence rate and avoid falling into local optima. The resulting mSAR is then used to solve the multi-level thresholding problem for image segmentation, with fuzzy entropy and the Otsu variance as objective functions.

2.3. Image Thresholding Methods

In this subsection, the mathematical models of the two objective functions used for image thresholding, fuzzy entropy [50] and the Otsu variance [51], are explained.

2.3.1. Fuzzy Entropy

In fuzzy entropy [52], let $I$ be an image of size $M \times N$ with gray levels $k \in \{0, 1, \ldots, L-1\}$. Two thresholds, $t_1$ and $t_2$, divide the image into three regions, $E_d$, $E_m$, and $E_b$, which contain the pixels of low, middle, and high gray levels, respectively. The histogram-based probability distribution of the image is usually calculated with Equation (13):

$$p_k = \frac{h(k)}{M \times N}, \quad k = 0, 1, \ldots, L-1 \tag{13}$$

where $k$ is an intensity level in $[0, L-1]$, $h(k)$ is the number of occurrences of level $k$ in the image (its histogram count), and $p_k$ is the probability of gray level $k$. The probability distribution of the three regions $E_d$, $E_m$, and $E_b$ is:

$$\Pi_3 = \{p_d, p_m, p_b\}$$

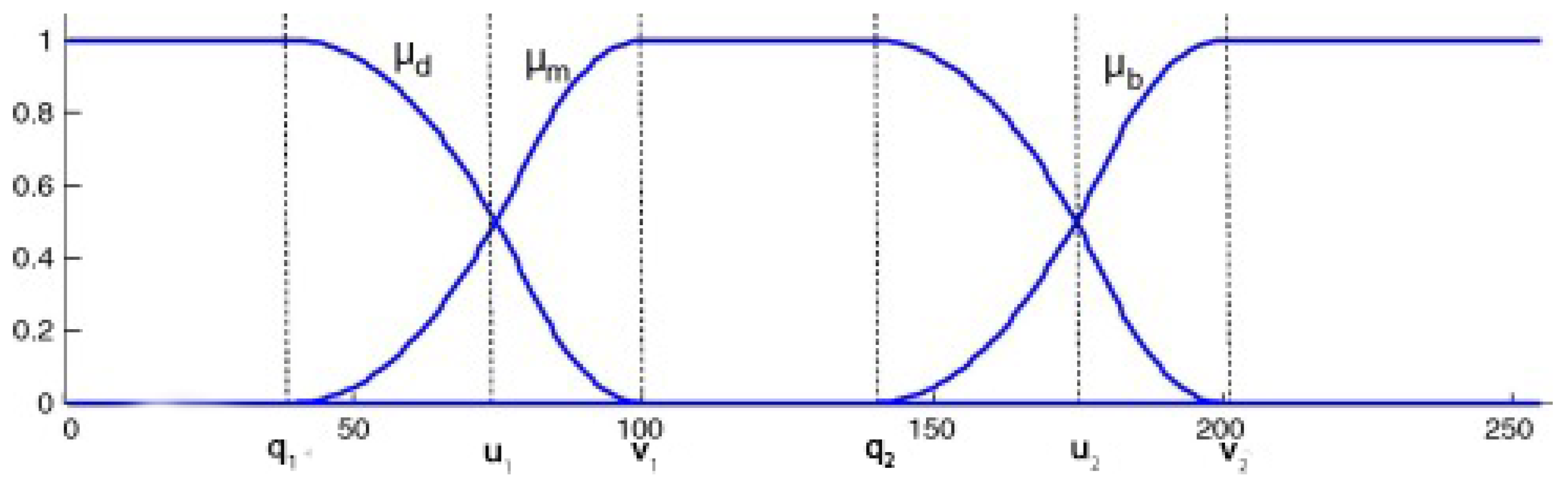

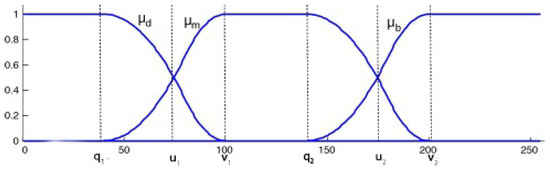

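We choose $\mu_d$, $\mu_m$, and $\mu_b$ as the membership functions of $E_d$, $E_m$, and $E_b$, as shown in Figure 1. These functions depend on six fuzzy parameters, $a_1$, $b_1$, $c_1$, $a_2$, $b_2$, and $c_2$, which in turn determine $t_1$ and $t_2$. Accordingly, the probabilities of the three regions are calculated as follows:

$$p_d = \sum_{k=0}^{L-1} p_k\,\mu_d(k), \qquad p_m = \sum_{k=0}^{L-1} p_k\,\mu_m(k), \qquad p_b = \sum_{k=0}^{L-1} p_k\,\mu_b(k)$$

where $\mu_d(k)$, $\mu_m(k)$, and $\mu_b(k)$ are the conditional probabilities that a pixel of level $k$ is classified into $E_d$, $E_m$, and $E_b$, respectively, and satisfy $\mu_d(k) + \mu_m(k) + \mu_b(k) = 1$.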

Figure 1.

Membership function graph.

The mathematical model of the three membership functions is defined as follows:

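The six parameters must satisfy $0 \le a_1 \le b_1 \le c_1 \le a_2 \le b_2 \le c_2 \le L-1$. The fuzzy entropy of each region and the total fuzzy entropy are:

$$H_d = -\sum_{k=0}^{L-1} \frac{p_k\,\mu_d(k)}{p_d} \ln \frac{p_k\,\mu_d(k)}{p_d}, \quad H_m = -\sum_{k=0}^{L-1} \frac{p_k\,\mu_m(k)}{p_m} \ln \frac{p_k\,\mu_m(k)}{p_m}, \quad H_b = -\sum_{k=0}^{L-1} \frac{p_k\,\mu_b(k)}{p_b} \ln \frac{p_k\,\mu_b(k)}{p_b}$$

$$H(a_1, b_1, c_1, a_2, b_2, c_2) = H_d + H_m + H_b \tag{19}$$

To reach the maximum entropy, the combination of membership-function parameters that maximizes Equation (19) must be found. The most applicable thresholds are then the levels at which adjacent membership functions intersect:

$$\mu_d(t_1) = \mu_m(t_1) = 0.5, \qquad \mu_m(t_2) = \mu_b(t_2) = 0.5$$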
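The fuzzy-entropy objective can be sketched in NumPy as below. The sketch assumes the commonly used Z-shaped (dark), Π-shaped (middle), and S-shaped (bright) membership functions parameterized by $a_1 \le b_1 \le c_1 \le a_2 \le b_2 \le c_2$; the membership functions of Figure 1 may differ in detail. Under this assumption $\mu_d + \mu_m + \mu_b = 1$ at every level. The function names are hypothetical, `gray_probabilities` implements Equation (13) for an integer gray-level image, and the thresholds are located numerically as the first levels at which $\mu_d$ falls to 0.5 and $\mu_b$ reaches 0.5.

```python
import numpy as np


def gray_probabilities(image, L=256):
    """Eq. (13): p_k = h(k) / (M x N) for an integer gray-level image."""
    return np.bincount(image.ravel(), minlength=L) / image.size


def memberships(L, a1, b1, c1, a2, b2, c2):
    """Assumed Z-shaped (dark), Pi-shaped (middle) and S-shaped (bright) memberships."""
    k = np.arange(L, dtype=float)
    mu_d = np.zeros(L)
    mu_d[k <= a1] = 1.0
    s = (k > a1) & (k <= b1)
    mu_d[s] = 1 - (k[s] - a1) ** 2 / ((c1 - a1) * (b1 - a1))
    s = (k > b1) & (k <= c1)
    mu_d[s] = (k[s] - c1) ** 2 / ((c1 - a1) * (c1 - b1))
    mu_b = np.zeros(L)
    s = (k > a2) & (k <= b2)
    mu_b[s] = (k[s] - a2) ** 2 / ((c2 - a2) * (b2 - a2))
    s = (k > b2) & (k <= c2)
    mu_b[s] = 1 - (k[s] - c2) ** 2 / ((c2 - a2) * (c2 - b2))
    mu_b[k > c2] = 1.0
    mu_m = 1.0 - mu_d - mu_b            # middle region takes the remaining membership
    return mu_d, mu_m, mu_b


def fuzzy_entropy(params, prob):
    """Total fuzzy entropy H_d + H_m + H_b (Eq. (19), to be maximized) and thresholds t1, t2."""
    a1, b1, c1, a2, b2, c2 = np.sort(np.asarray(params, dtype=float))
    mus = memberships(prob.size, a1, b1, c1, a2, b2, c2)
    H = 0.0
    for mu in mus:
        w = prob * mu                   # p_k * mu(k)
        p_region = w.sum()              # p_d, p_m or p_b
        if p_region > 0:
            q = w[w > 0] / p_region     # 0 * ln 0 is treated as 0
            H -= np.sum(q * np.log(q))
    t1 = int(np.argmax(mus[0] <= 0.5))  # first level where mu_d(k) <= 0.5
    t2 = int(np.argmax(mus[2] >= 0.5))  # first level where mu_b(k) >= 0.5
    return H, t1, t2
```

An optimizer such as mSAR would search over the six parameters (kept within $[0, L-1]$), maximize `H`, and report `(t1, t2)` as the thresholds.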

2.3.2. Otsu Method

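The Otsu technique [51] is one of the most commonly used segmentation methods; it finds optimal thresholds by maximizing the between-class variance. For each color component of an image, the intensity levels lie in $[0, L-1]$, and the probability distribution of intensity value $i$ is computed as follows:

$$Ph_i = \frac{h_i}{NP}, \qquad \sum_{i=0}^{L-1} Ph_i = 1$$

where $NP$ is the number of pixels in the image, $i$ is an intensity level in $[0, L-1]$, $h_i$ is the number of pixels with gray level $i$ (the histogram), and $Ph_i$ is the probability of intensity level $i$. For bi-level segmentation, the two classes are:

$$C_1 = \frac{Ph_0}{\omega_0(th)}, \ldots, \frac{Ph_{th}}{\omega_0(th)}, \qquad C_2 = \frac{Ph_{th+1}}{\omega_1(th)}, \ldots, \frac{Ph_{L-1}}{\omega_1(th)}$$

where $\omega_0(th)$ and $\omega_1(th)$ are the cumulative probability distributions of $C_1$ and $C_2$, respectively:

$$\omega_0(th) = \sum_{i=0}^{th} Ph_i, \qquad \omega_1(th) = \sum_{i=th+1}^{L-1} Ph_i$$

The mean levels $\mu_0$ and $\mu_1$ of the two classes are computed with Equation (24), and the Otsu between-class variance $\sigma^2$ with Equation (25):

$$\mu_0 = \sum_{i=0}^{th} \frac{i\,Ph_i}{\omega_0(th)}, \qquad \mu_1 = \sum_{i=th+1}^{L-1} \frac{i\,Ph_i}{\omega_1(th)} \tag{24}$$

$$\sigma^2 = \sigma_1 + \sigma_2 \tag{25}$$

Here, $\sigma_1$ and $\sigma_2$ are the variance contributions of $C_1$ and $C_2$:

$$\sigma_1 = \omega_0\,(\mu_0 - \mu_T)^2, \qquad \sigma_2 = \omega_1\,(\mu_1 - \mu_T)^2 \tag{26}$$

where $\mu_T = \omega_0\mu_0 + \omega_1\mu_1$ and $\omega_0 + \omega_1 = 1$. Based on $\sigma_1$ and $\sigma_2$, the objective function is defined by Equation (27), so the optimization problem reduces to finding the intensity level $th$ that maximizes Equation (27):

$$J(th) = \max\left(\sigma^2(th)\right), \quad 0 \le th \le L-1 \tag{27}$$

where $\sigma^2(th)$ is the Otsu variance for a given $th$. Metaheuristics are used to find the intensity level that maximizes the objective function in Equation (27). For multiple thresholds, the objective function becomes:

$$J(TH) = \max\left(\sigma^2(TH)\right), \quad 0 \le th_i \le L-1, \; i = 1, 2, \ldots, k \tag{28}$$

where $TH = [th_1, th_2, \ldots, th_k]$ is a vector containing multiple thresholds, and the variance is calculated by Equation (29):

$$\sigma^2 = \sum_{j=1}^{k+1} \sigma_j = \sum_{j=1}^{k+1} \omega_j\,(\mu_j - \mu_T)^2 \tag{29}$$

where $\mu_j$ is the mean of class $j$, $\omega_j$ is its occurrence probability, and $j$ indexes a specific class.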
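The multi-level Otsu objective of Equations (28)–(29) reduces to a few lines of NumPy. In this sketch (the name `otsu_objective` is hypothetical), the classes follow the convention $[0, th_1], (th_1, th_2], \ldots, (th_k, L-1]$, and real-valued positions produced by an optimizer are truncated to integers, which is an assumption rather than a detail given in the paper.

```python
import numpy as np


def otsu_objective(thresholds, prob):
    """Multi-level Otsu between-class variance, Eqs. (28)-(29), to be maximized.

    Classes: [0, th_1], (th_1, th_2], ..., (th_k, L-1]; thresholds are truncated to int.
    """
    L = prob.size
    levels = np.arange(L)
    mu_T = np.sum(levels * prob)                           # total mean level
    cuts = [0] + [int(t) + 1 for t in sorted(thresholds)] + [L]
    sigma2 = 0.0
    for lo, hi in zip(cuts[:-1], cuts[1:]):
        w = prob[lo:hi].sum()                              # omega_j
        if w > 0:
            mu = np.sum(levels[lo:hi] * prob[lo:hi]) / w   # mu_j
            sigma2 += w * (mu - mu_T) ** 2                 # omega_j (mu_j - mu_T)^2
    return sigma2
```

For a histogram with equal mass at levels 50 and 200, any single threshold in $[50, 199]$ separates the two modes and yields the maximal between-class variance of 5625; an exhaustive search over one threshold returns the first such level, 50. An MH replaces that exhaustive search when several thresholds make the search space too large to enumerate.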

3. The Proposed mSAR

In this section, an improved version of SAR, called mSAR, is explained in detail. SAR is improved with OBL, used as a local search strategy that strengthens convergence by increasing the diversity of the solutions and avoiding the drawbacks of a purely random population. mSAR applies OBL in the initialization phase to improve the search process as follows:

$$\bar{X}_{i,j} = lb_j + ub_j - X_{i,j} \tag{30}$$

where $\bar{X}_i$ is the opposite solution obtained by OBL from $X_i$, and $lb_j$ and $ub_j$ are the lower and upper bounds of the $j$th component of $X$. The phases of the proposed image thresholding model are detailed below.

3.1. Initialization Phase in mSAR

In the first phase, the proposed model reads an image from the benchmark dataset, computes its histogram, and calculates its probability distribution using Equation (13). Next, the algorithm initializes the mSAR parameters: the problem dimension $D$, population size $N$, search-space bounds ($lb$, $ub$), and maximum number of iterations $T$. Like many other optimization algorithms, SAR begins by randomly initializing the first population and storing the results. OBL is then applied to compute the opposite population using Equation (30).
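The OBL-based initialization can be sketched as follows, assuming a maximization objective and the per-agent rule suggested by Algorithm 1, in which an agent is replaced by its opposite point when the opposite scores higher. The name `obl_initialize` and the toy objective used for testing are hypothetical.

```python
import numpy as np


def obl_initialize(objective, N, D, lb, ub, rng):
    """Random initial population refined by opposition-based learning, Eq. (30).

    Maximization is assumed: each agent is swapped for its opposite point
    whenever the opposite point has the higher objective value.
    """
    X = lb + rng.random((N, D)) * (ub - lb)       # uniform random population
    X_opp = lb + ub - X                            # opposite population, Eq. (30)
    f = np.apply_along_axis(objective, 1, X)
    f_opp = np.apply_along_axis(objective, 1, X_opp)
    better = f_opp > f
    X[better] = X_opp[better]
    f[better] = f_opp[better]
    return X, f
```

With `objective` set to a thresholding criterion such as fuzzy entropy or the Otsu variance, `lb = 0`, and `ub = L - 1`, the returned `X` would serve as the starting population for the social and individual stages; by construction, no agent starts worse than its purely random counterpart.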

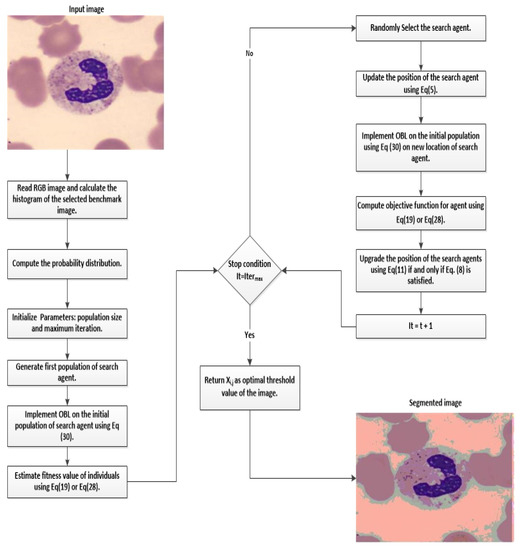

3.2. Optimization Phase

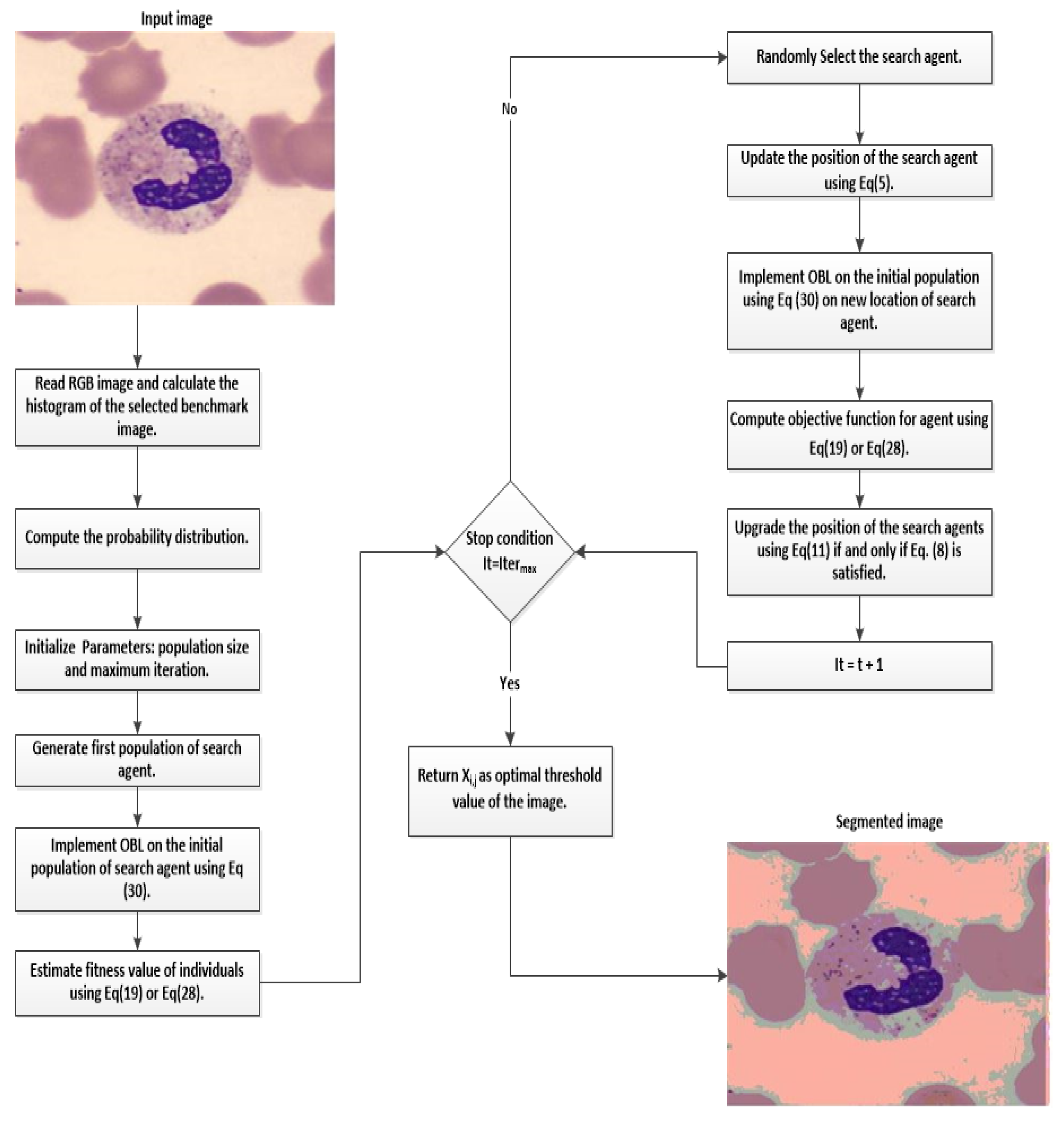

During this phase, the fitness values of the $X$ and $\bar{X}$ populations are evaluated to determine the optimal threshold values. The fitness function is either fuzzy entropy [52] or the Otsu method [51]; the fitness values of $X$ and $\bar{X}$ are compared, and the solution with the higher fitness is kept. The optimization process is split into two stages, the social and individual stages described in the subsection on SAR. After these two stages, each search agent repeatedly updates its position based on the best fitness values of $X$ and $\bar{X}$, using the fuzzy entropy Equation (19) or the Otsu Equation (28), as described in Algorithm 1. The flowchart of the proposed mSAR algorithm is shown in Figure 2.

Figure 2.

The flowchart of mSAR algorithm.

3.3. Final Phase of mSAR

After the optimization phase (fitness updates, memory storage, and position updates using Equation (30)), the proposed algorithm selects the optimal threshold values and uses them to generate the segmented image. The pseudo-code of the proposed algorithm is shown in Algorithm 1.

| Algorithm 1 The proposed mSAR algorithm |

| Input: Set parameter values ($D$, $N$, $lb$, $ub$, $T$); |

| Output: Return the optimal solution with the optimal threshold values onto the image. |

| Generate the first population randomly with dimensions D. |

| Apply OBL to the first population using Equation (30) and save the results in $\bar{X}$. |

| while $t \le T$ do |

| for $i = 1$ to $N$ do |

| Evaluate $X_i$ using fuzzy entropy Equation (19) or Otsu Equation (28) and save the result in $f(X_i)$. |

| Evaluate $\bar{X}_i$ using fuzzy entropy Equation (19) or Otsu Equation (28) and save the result in $f(\bar{X}_i)$. |

| if $f(\bar{X}_i) > f(X_i)$ then |

| Set $X_i = \bar{X}_i$ |

| end if |

| end for |

| Complete the memory storage. |

| if then |

| Update candidate solutions by Equation (9). |

| else if then |

| Update search agents' positions by Equation (11). |

| else if then |

| Update candidate solutions by Equation (30). |

| end if |

| Update the best solution found so far. |

| for each candidate solution do |

| Estimate after upgrade by fuzzy entropy Equation (19) or Otsu Equation (28) and save outcomes in . |

| Estimate after upgrade by fuzzy entropy Equation (19) or Otsu Equation (28) and save outcomes in . |

| if then |

| Set |

| end if |

| end for |

| Execute memory storing and fitness updates. |

| Define the optimal value of search agents’ location. |

| Save the outcomes. |

| end while |

| Return the optimal solution with the optimal threshold values onto the image. |
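The last step of Algorithm 1, applying the optimal thresholds to the image, can be illustrated with the sketch below. It assumes that a pixel equal to a threshold belongs to the lower class, matching $C_1 = [0, th]$ in the Otsu formulation, and, for display, paints each class with its mean intensity; both choices, and the name `segment`, are illustrative assumptions. For the RGB blood-cell images, it would be applied to each channel with that channel's thresholds.

```python
import numpy as np


def segment(image, thresholds):
    """Label pixels by class and paint each class with its (rounded) mean intensity."""
    labels = np.digitize(image, np.sort(np.asarray(thresholds)), right=True)
    painted = np.zeros(image.shape, dtype=float)
    for c in np.unique(labels):
        painted[labels == c] = image[labels == c].mean()
    return labels, np.rint(painted).astype(image.dtype)
```

With `right=True`, `np.digitize` assigns class $c$ to intensities $x$ with $th_c < x \le th_{c+1}$, so the label map has $k + 1$ classes for $k$ thresholds.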

3.4. Computational Complexity of mSAR

This subsection analyzes the computational complexity of the proposed mSAR. First, the initialization process costs $O(N \times D)$, where $N$ and $D$ are the number of search agents and the problem dimension, respectively. During the initialization phase, sorting the search agents takes $O(N \log N)$ time. In the worst case, the social and individual phases cost $O(T \times N \times D)$ over $T$ iterations, and the abandoning-clue and restart processes also cost $O(T \times N \times D)$ in the worst case. mSAR executes its main operations (initialization phase, social phase, abandoning clue, individual phase, restart strategy, and OBL) within $T$ iterations while computing the fitness of each agent, so its complexity is:

$$O(\text{mSAR}) = O(N \times D) + O(N \log N) + O(T \times N \times D)$$

Overall, the total computational complexity of the proposed mSAR can be represented by $O(T \times N \times D)$.

4. Performance Evaluation of mSAR on CEC’20 Test Suite

4.1. Parameter Settings of CEC’2020

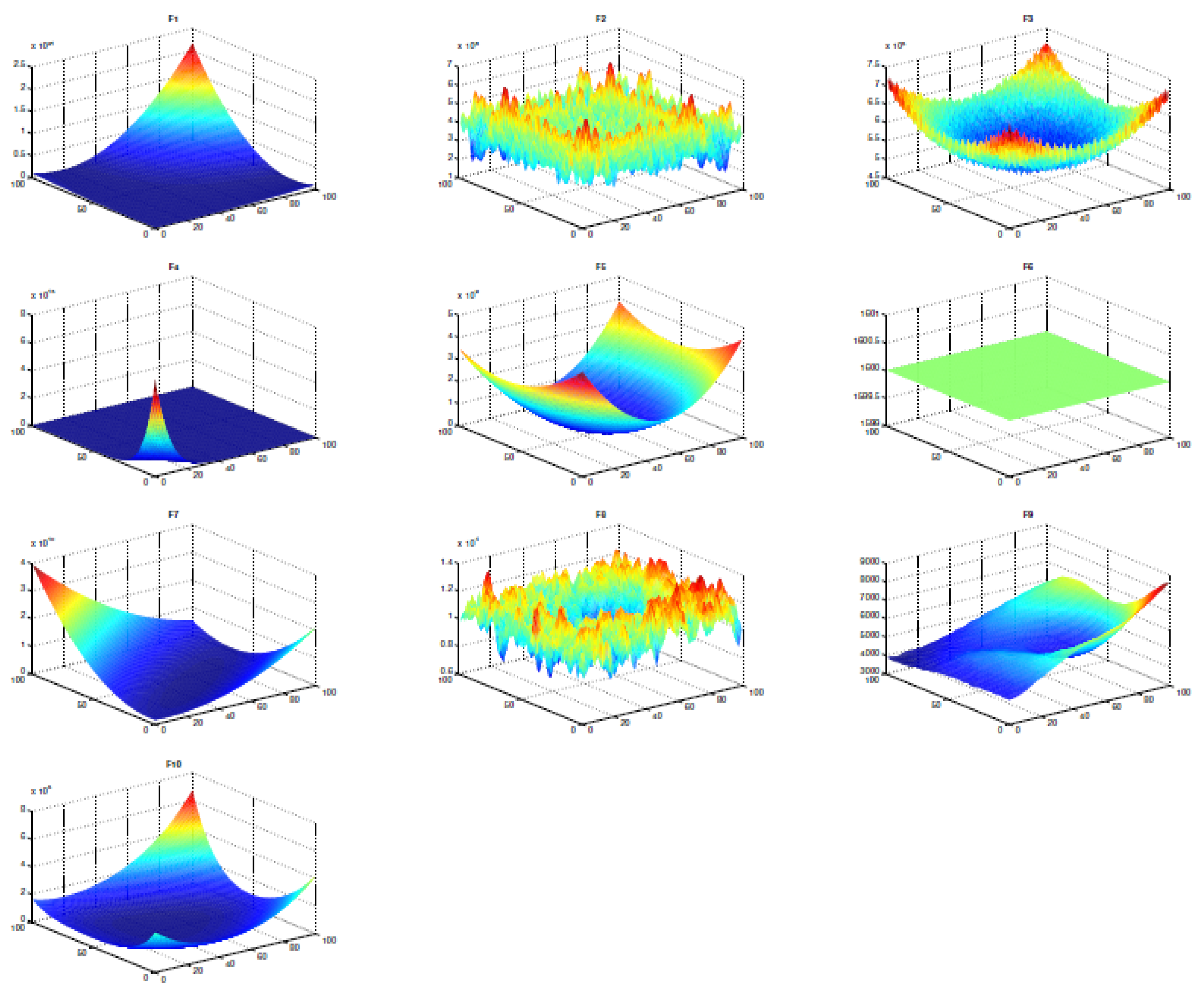

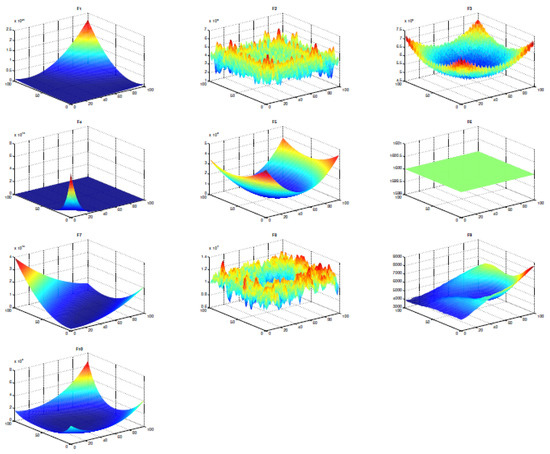

In this subsection, the proposed mSAR algorithm is evaluated on the IEEE Congress on Evolutionary Computation (CEC) 2020 benchmark suite [53]. The suite contains 10 test problems of four types: unimodal, multimodal, hybrid, and composition functions. The mathematical formulations and parameter settings of the CEC’2020 benchmark are given in Table 1, where ’Fi*’ denotes the optimal global value. Figure 3 shows a two-dimensional view of the CEC’2020 functions to illustrate the differences between them and the nature of each problem.

Table 1.

CEC’2020 benchmark functions.

Figure 3.

CEC’2020 benchmark functions in two-dimensional view.

4.2. Statistical Results Analysis

The performance of the proposed mSAR on the CEC’2020 benchmark is assessed with both quantitative and qualitative metrics. The quantitative metrics are the mean and standard deviation (STD) of the best solutions obtained by mSAR and the competing algorithms; the qualitative metrics are the average fitness history, search history, and convergence curve. Using these metrics, mSAR is compared with seven well-known metaheuristic algorithms: LFD, HHO, SCA, EO, GSA, AOA, and the original SAR. Table 2 reports the mean and STD of the best values obtained by each algorithm; because the CEC’2020 problems are minimization problems, lower mean and STD values are better. According to Table 2, mSAR outperforms the competing algorithms on the CEC’2020 benchmark functions.

Table 2.

STD and mean of the optimum value obtained from the proposed mSAR algorithm against other competing algorithms on the CEC’2020 benchmark functions with .

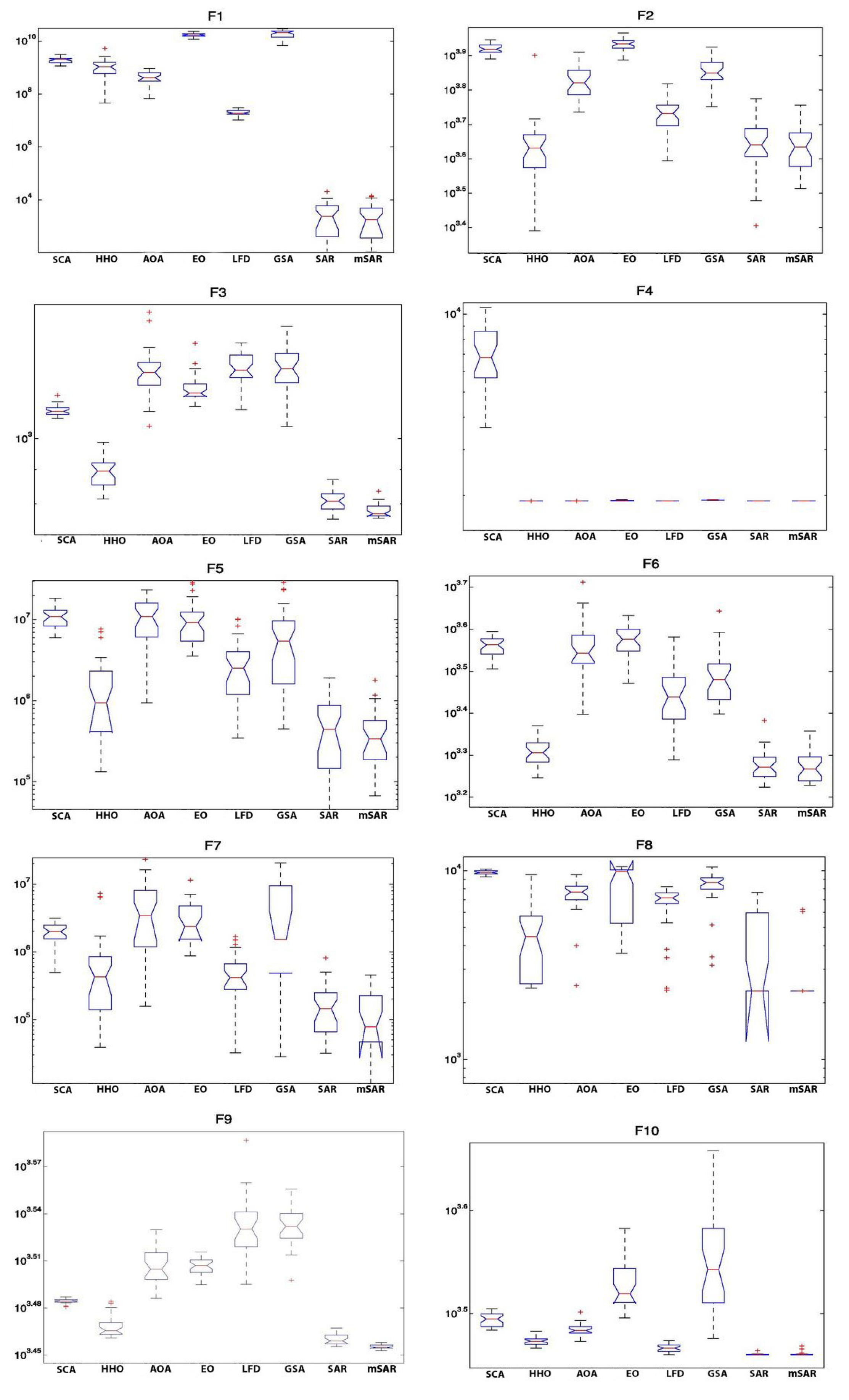

4.3. Boxplot Analysis of mSAR Algorithm

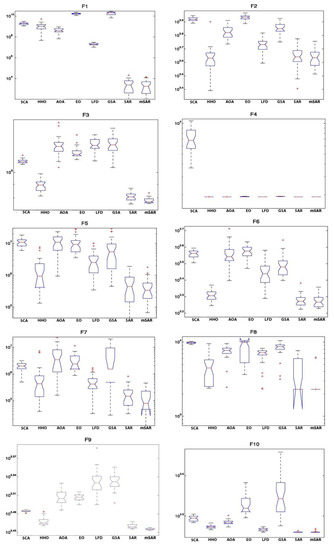

A boxplot summarizes the distribution of the solutions that an optimizer obtains over independent runs and thus indicates their quality and consistency. In each box, the whiskers extend to the minimum and maximum values obtained by the algorithm, the ends of the rectangle mark the lower and upper quartiles, and a narrow box indicates strong agreement among the runs. Figure 4 shows the boxplots of mSAR and the competing algorithms for the ten CEC’2020 functions with D = 20. mSAR outperforms the competing algorithms on most of the benchmark functions, although its performance is limited on two of them.

Figure 4.

Boxplots of the proposed mSAR algorithm and the competing algorithms on the CEC’2020 functions with D = 20.
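The quantities that a single box encodes can be computed directly. The hypothetical helper below returns the min/max whiskers, the quartiles, and the interquartile range for, say, the 30 best-fitness values of one algorithm on one function. The whiskers are placed at the minimum and maximum, as described in the text; plotting libraries such as Matplotlib default to whiskers at 1.5 × IQR instead, so plotted boxes may differ.

```python
import numpy as np


def box_summary(values):
    """Five-number summary behind one box: whiskers at min/max, box edges at Q1/Q3."""
    v = np.asarray(values, dtype=float)
    q1, median, q3 = np.percentile(v, [25, 50, 75])
    return {"min": v.min(), "q1": q1, "median": median,
            "q3": q3, "max": v.max(), "iqr": q3 - q1}
```

A small `iqr` relative to the other algorithms corresponds to the narrow boxes that indicate consistent results across runs.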

4.4. Curves of Convergence of mSAR Algorithm

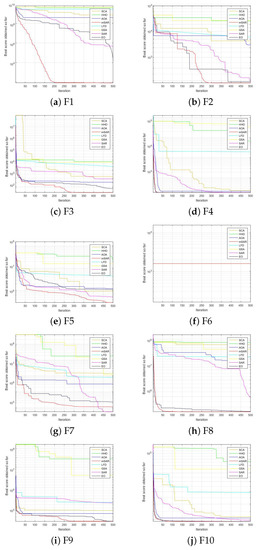

This subsection describes the convergence curves of the proposed mSAR algorithm and the competing algorithms. Figure 5 shows the convergence curves of SCA, HHO, AOA, EO, LFD, GSA, SAR, and mSAR on the CEC’2020 functions. mSAR reached a stable point on most functions and obtained the best solutions among the compared algorithms. It behaved stably and converged quickly on most of the problems on which it was evaluated, which suggests that it may be suitable for problems that require fast computation, such as online optimization problems.

Figure 5.

The convergence curves of the proposed mSAR algorithm with other competitive algorithms over CEC’2020 functions with .

4.5. Qualitative Metrics

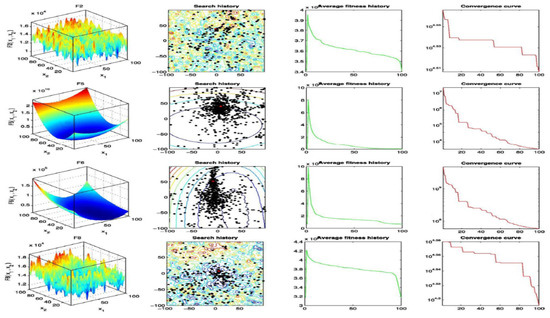

Although the preceding results demonstrate the superior performance of mSAR, further experiments and analyses help draw more definitive conclusions about its performance on real problems. Figure 6 illustrates the qualitative analysis of mSAR. The first column shows the CEC’2020 benchmark functions in two-dimensional space, and the second column shows the search history of the agents. The third column shows the average fitness history over 350 iterations, which reflects the agents' overall behavior and their contribution to locating the optimum. Most average fitness history curves decrease gradually, indicating that the population improves with each iteration. The fourth column shows the optimization history (convergence curve), which decreases steadily, meaning that the solution improves over the iterations until the best solution is found.

Figure 6.

Qualitative metrics on F2, F5, F6, and F8: functions in 2D view, search history, average fitness history, and convergence curve.

5. Application of mSAR: Blood Cells Images Segmentation

This section describes the experimental environment of the proposed mSAR algorithm. The test images used in the experiments are presented first, followed by the experimental setup. Then, the outcomes obtained by mSAR are analyzed in terms of PSNR, SSIM, FSIM, and fitness.

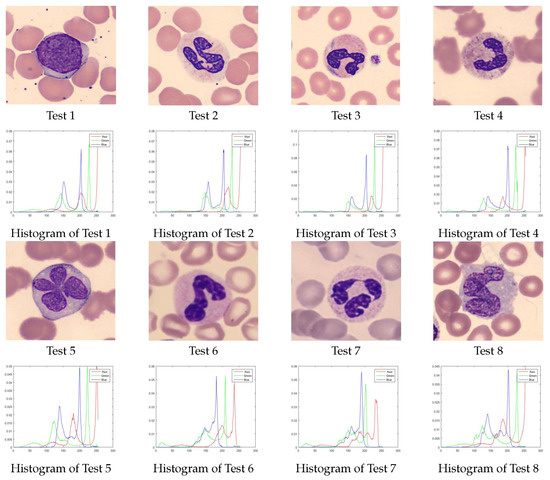

5.1. Benchmark Images

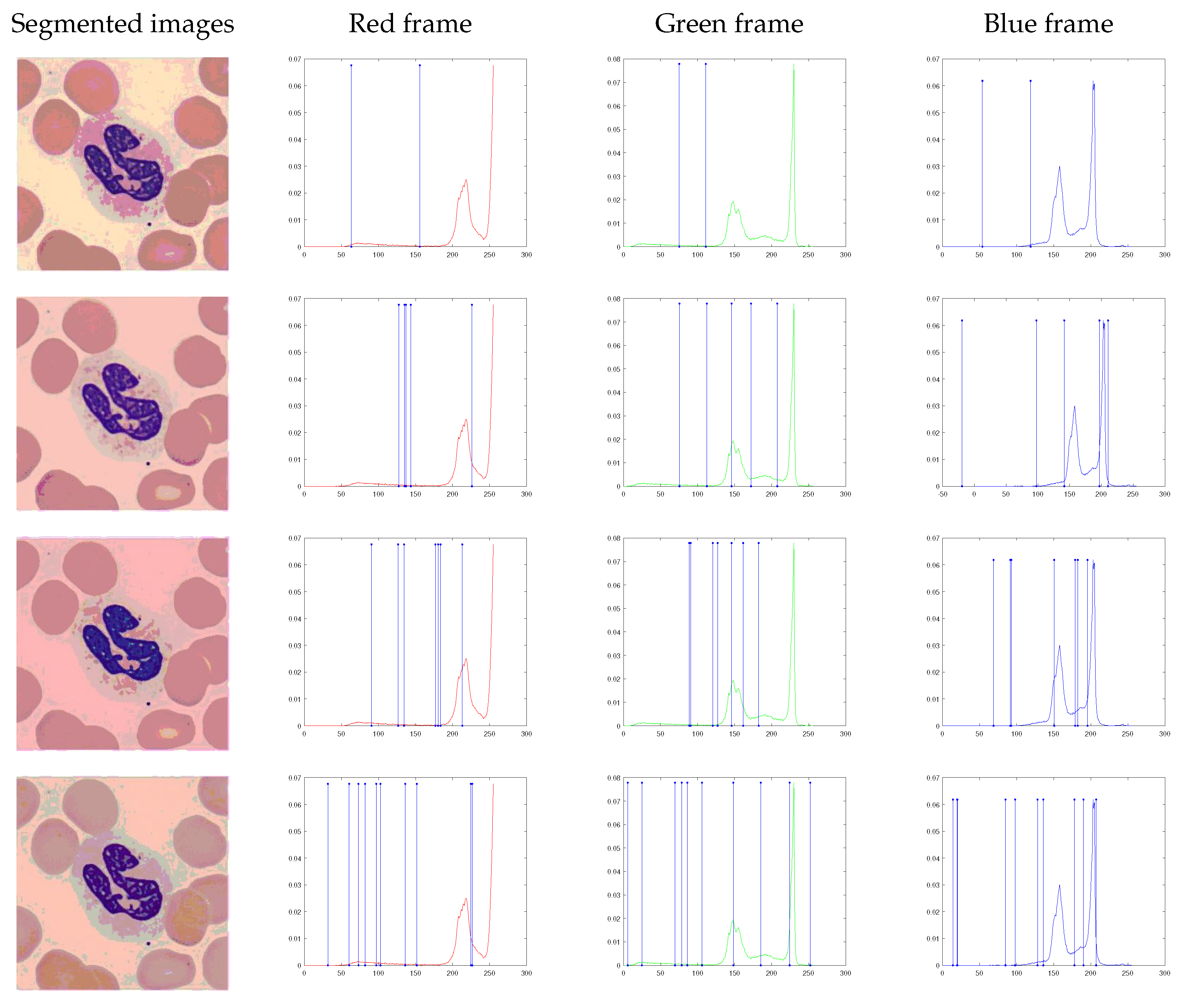

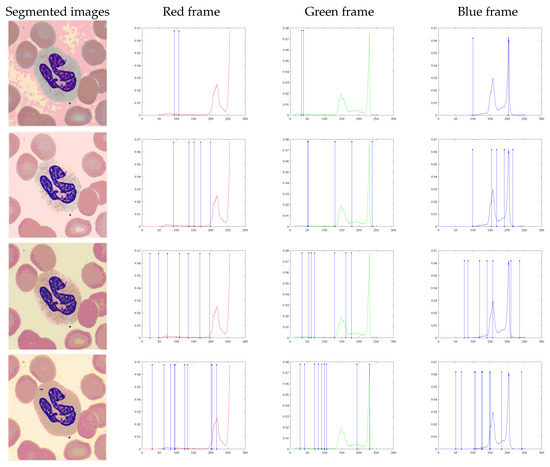

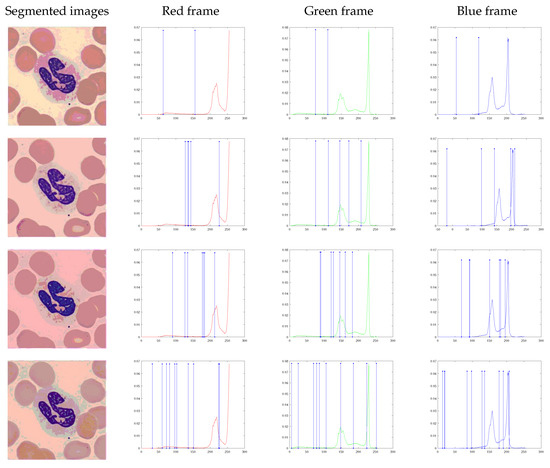

To examine the performance of the proposed mSAR algorithm and determine the best threshold values, eight benchmark images were used. These images were acquired in the core laboratory of the Hospital Clinic of Barcelona during the diagnosis of malaria infection [54]. The images in the dataset are in JPG format (RGB, 2400 × 1800 pixels). As Figure 7 shows, their histograms differ, so the images have different features. They were selected to test the performance of the algorithms and the quality of the segmented images.
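Because thresholds are searched separately for the red, green, and blue channels (as in Tables A1 and A3), each test image in Figure 7 corresponds to one histogram per channel. A minimal NumPy sketch of that computation, assuming 8-bit RGB input; the function name `channel_histograms` is hypothetical:

```python
import numpy as np


def channel_histograms(rgb: np.ndarray) -> np.ndarray:
    """Return a (3, 256) array of pixel counts for the R, G and B channels
    of an 8-bit RGB image of shape (height, width, 3)."""
    if rgb.dtype != np.uint8 or rgb.ndim != 3 or rgb.shape[2] != 3:
        raise ValueError("expected an 8-bit RGB image of shape (H, W, 3)")
    return np.stack(
        [np.bincount(rgb[..., c].ravel(), minlength=256) for c in range(3)]
    )


# Tiny synthetic image; a real test image could be loaded with, e.g., Pillow:
#   rgb = np.asarray(Image.open(path).convert("RGB"))
img = np.zeros((2, 2, 3), dtype=np.uint8)
img[0, 0] = [10, 20, 30]
hist = channel_histograms(img)
print(hist.shape)
```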

Figure 7.

Set of test images and relative histograms.

5.2. Experimental Setup

This subsection compares the proposed mSAR algorithm with seven metaheuristic algorithms: LFD [8], HHO [37], SCA [55], EO [56], GSA [26], AOA [57], and the original SAR [38]. All experiments were implemented in MATLAB 2015 and executed on an Intel Core i5 with 6 GB of RAM running 64-bit Windows 8.1. To ensure a fair comparison, every algorithm was executed for 30 independent runs per test image, with a maximum of 350 iterations and a population size of 50. The parameter settings of each algorithm follow standard criteria and previous literature (default values). Following related literature [58], all experiments were executed with nTh = 2, 5, 7, and 10 thresholds. Table 3 presents the parameter settings of the mSAR algorithm and their values.
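The protocol above can be sketched as a loop over algorithms, images, and threshold counts. It also shows where the "32 experiments" used in the following subsections come from: 8 test images × 4 values of nTh. The callback `optimise` is hypothetical and stands in for any of the compared algorithms; reporting the population standard deviation over the runs is an assumption.

```python
from itertools import product
from statistics import mean, pstdev

ALGORITHMS = ["LFD", "HHO", "SCA", "EO", "GSA", "AOA", "SAR", "mSAR"]
IMAGES = [f"Test {i}" for i in range(1, 9)]   # eight benchmark images
N_THRESHOLDS = [2, 5, 7, 10]                  # nTh values
RUNS, MAX_ITER, POP_SIZE = 30, 350, 50


def run_protocol(optimise):
    """Run every (algorithm, image, nTh) combination RUNS times.

    `optimise(algorithm, image, n_th, seed, max_iter, pop_size)` is a
    hypothetical callback returning the best fitness of one run.
    Returns {(algorithm, image, n_th): (mean, std)} over the runs.
    """
    results = {}
    for alg, img, n_th in product(ALGORITHMS, IMAGES, N_THRESHOLDS):
        fits = [optimise(alg, img, n_th, seed, MAX_ITER, POP_SIZE)
                for seed in range(RUNS)]
        results[(alg, img, n_th)] = (mean(fits), pstdev(fits))
    return results


experiments_per_algorithm = len(IMAGES) * len(N_THRESHOLDS)
print(experiments_per_algorithm)  # 32
```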

Table 3.

The parameter settings of the mSAR algorithm and their values.

5.3. Performance Metrics

Five metrics were used to evaluate the quality of the segmented images and compare the competing algorithms: the Wilcoxon rank-sum test, standard deviation (STD), peak signal-to-noise ratio (PSNR) [59], feature similarity (FSIM) [60], and structural similarity (SSIM) [61]. A lower STD indicates a more stable algorithm [62]. The STD is defined as follows:

$$\mathrm{STD} = \sqrt{\frac{1}{n}\sum_{j=1}^{n}\left(x_j - \mu\right)^2}$$

where $n$ is the total number of pixels in the image, $\mu$ is the mean of the pixels, and $x_j$ is the value of the $j$th pixel in the image.
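A worked check of the STD definition: the square root of the mean squared deviation of the values $x_j$ from their mean $\mu$, normalised by $n$ (the population standard deviation, which NumPy's `np.std` also computes with its default `ddof=0`). When STD is used as a stability measure, the values would be, for example, the best fitness of each independent run; that reading is an assumption.

```python
import math


def std(values):
    """Population standard deviation: sqrt(sum((x_j - mu)^2) / n)."""
    n = len(values)
    mu = sum(values) / n
    return math.sqrt(sum((x - mu) ** 2 for x in values) / n)


print(std([2, 4, 4, 4, 5, 5, 7, 9]))  # 2.0
```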

5.3.1. Peak Signal-To-Noise Ratio (PSNR)

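PSNR [59,60] is used to estimate the quality of the segmented image by measuring the difference between the original image and its segmented version. It is defined as follows:

$$\mathrm{PSNR} = 20\log_{10}\left(\frac{255}{\mathrm{RMSE}}\right), \qquad \mathrm{RMSE} = \sqrt{\frac{1}{M\times N}\sum_{i=1}^{M}\sum_{j=1}^{N}\left(I(i,j) - \tilde{I}(i,j)\right)^2} \tag{33}$$

In Equation (33), $I$ is the original image, $\tilde{I}$ is the segmented image of size $M \times N$, and RMSE is the root-mean-squared error.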
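PSNR, computed as 20·log10(255 / RMSE), can be sketched in NumPy as follows. The sketch assumes 8-bit images (peak value 255); for RGB images it takes the squared error over all channels, which is one common convention, whereas the paper may instead average per-channel values.

```python
import numpy as np


def psnr(original: np.ndarray, segmented: np.ndarray, peak: float = 255.0) -> float:
    """PSNR in dB: 20 * log10(peak / RMSE) for two images of equal shape."""
    if original.shape != segmented.shape:
        raise ValueError("images must have the same shape")
    # Cast to float first so that uint8 subtraction cannot wrap around.
    diff = original.astype(np.float64) - segmented.astype(np.float64)
    rmse = np.sqrt(np.mean(diff ** 2))
    if rmse == 0:
        return float("inf")  # identical images
    return float(20.0 * np.log10(peak / rmse))


a = np.zeros((4, 4), dtype=np.uint8)
print(psnr(a, np.ones((4, 4), dtype=np.uint8)))  # RMSE = 1 -> about 48.13 dB
```

Casting to `float64` before subtracting matters: with `uint8` arrays, `0 - 1` would wrap to 255 and inflate the error.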

5.3.2. Feature Similarity Index (FSIM)

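FSIM [61] is another measure, which assesses the similarity between two images based on their internal (low-level) features. FSIM is computed as follows:

$$\mathrm{FSIM} = \frac{\sum_{X\in\Omega} S_L(X)\,PC_m(X)}{\sum_{X\in\Omega} PC_m(X)} \tag{34}$$

where $\Omega$ is the entire domain of the image, $S_L(X)$ is the local similarity between the original and segmented images at $X$, and $PC_m(X)$ is the larger of the two images' phase congruency values at $X$. The phase congruency $PC$ is described as follows:

$$PC(X) = \frac{E(X)}{\varepsilon + \sum_{n} A_n(X)} \tag{35}$$

where $E(X)$ is the magnitude of the response vector at $X$ on scale $n$, $A_n(X)$ is the local amplitude on scale $n$, and $\varepsilon$ is a small positive value.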
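Computing phase congruency itself requires a multi-scale log-Gabor filter bank, which is beyond a short example. The sketch below covers only the FSIM pooling step and assumes the phase-congruency maps (`pc1`, `pc2`) and gradient-magnitude maps (`g1`, `g2`) of the original and segmented images are already available. Following the original FSIM formulation by Zhang et al., the local similarity S_L is the product of a phase-congruency similarity and a gradient similarity, and PC_m = max(PC_1, PC_2); the constants T1 = 0.85 and T2 = 160 are the values reported there for 8-bit images and are an assumption here, because the paper does not state them.

```python
import numpy as np


def fsim_pool(pc1, pc2, g1, g2, t1=0.85, t2=160.0):
    """Pool precomputed phase-congruency maps (pc1, pc2) and gradient-magnitude
    maps (g1, g2) of two images into a single FSIM score."""
    s_pc = (2 * pc1 * pc2 + t1) / (pc1 ** 2 + pc2 ** 2 + t1)  # PC similarity
    s_g = (2 * g1 * g2 + t2) / (g1 ** 2 + g2 ** 2 + t2)       # gradient similarity
    s_l = s_pc * s_g                                          # local similarity S_L
    pc_m = np.maximum(pc1, pc2)                               # weight PC_m
    return float(np.sum(s_l * pc_m) / np.sum(pc_m))


pc = np.array([[0.2, 0.5], [0.9, 0.1]])
g = np.array([[10.0, 20.0], [30.0, 40.0]])
print(fsim_pool(pc, pc, g, g))        # identical maps give 1.0
print(fsim_pool(pc, 0.5 * pc, g, g))  # weaker phase congruency lowers the score
```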

5.3.3. Structural Similarity Index (SSIM)

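The SSIM value is higher when the original image is better segmented, because the structures of the two images match more closely. SSIM is defined in Equation (36):

$$\mathrm{SSIM}(I,\tilde{I}) = \frac{\left(2\mu_I\mu_{\tilde{I}} + c_1\right)\left(2\sigma_{I\tilde{I}} + c_2\right)}{\left(\mu_I^2 + \mu_{\tilde{I}}^2 + c_1\right)\left(\sigma_I^2 + \sigma_{\tilde{I}}^2 + c_2\right)} \tag{36}$$

where $\mu_{\tilde{I}}$ and $\mu_I$ are the mean values of the segmented image $\tilde{I}$ and the original image $I$, respectively; $\sigma_{\tilde{I}}$ and $\sigma_I$ are their standard deviations; $c_1$ and $c_2$ are two constants; and $\sigma_{I\tilde{I}}$ is the covariance of $I$ and $\tilde{I}$.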
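The SSIM formula (luminance, contrast, and structure terms built from the means, variances, and covariance of the two images) can be evaluated directly over the whole image, as in the sketch below. This is a global, single-window simplification: the standard SSIM of Wang et al. evaluates the formula in local windows and averages the results, so values will differ from library implementations such as scikit-image's `structural_similarity`. The constants c1 = (0.01·255)² and c2 = (0.03·255)² are the usual choices and an assumption here.

```python
import numpy as np


def ssim_global(original, segmented, peak=255.0, k1=0.01, k2=0.03):
    """Single-window SSIM computed over the whole image."""
    x = original.astype(np.float64).ravel()
    y = segmented.astype(np.float64).ravel()
    c1, c2 = (k1 * peak) ** 2, (k2 * peak) ** 2
    mu_x, mu_y = x.mean(), y.mean()
    var_x, var_y = x.var(), y.var()
    cov_xy = np.mean((x - mu_x) * (y - mu_y))
    num = (2 * mu_x * mu_y + c1) * (2 * cov_xy + c2)
    den = (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    return float(num / den)


a = np.arange(16, dtype=np.uint8).reshape(4, 4)
print(ssim_global(a, a))        # identical images give 1.0
print(ssim_global(a, 255 - a))  # inverted structure gives a lower score
```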

5.3.4. Wilcoxon Rank-Sum Test

The Wilcoxon rank-sum test is a non-parametric test that determines whether two independent samples come from the same population distribution [63]; here it is used to compare the results obtained by the algorithms. The test involves two hypotheses: the null hypothesis (no significant difference between the two algorithms) and the alternative hypothesis (a significant difference). Based on the p-value and the h-value, the null hypothesis is accepted when p > 0.05 (h = 0), and the alternative hypothesis is accepted when p < 0.05 (h = 1).
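The p/h decision rule can be sketched in standard-library Python. This is the normal-approximation form of the two-sided rank-sum test (the same form as `scipy.stats.ranksums`); it is not necessarily the implementation the authors used, and routines such as MATLAB's `ranksum` may apply tie corrections or exact small-sample p-values, so results can differ slightly. In this study, `x` and `y` would hold the 30 per-run results of mSAR and of one competitor.

```python
import math


def average_ranks(values):
    """1-based ranks; tied values receive the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def rank_sum_test(x, y, alpha=0.05):
    """Two-sided Wilcoxon rank-sum test using the normal approximation
    (no tie correction). Returns (p, h) with h = 1 when p < alpha."""
    x, y = list(x), list(y)
    n1, n2 = len(x), len(y)
    ranks = average_ranks(x + y)
    rank_sum_x = sum(ranks[:n1])
    expected = n1 * (n1 + n2 + 1) / 2
    sd = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (rank_sum_x - expected) / sd
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided p-value
    return p, int(p < alpha)


print(rank_sum_test(range(1, 11), range(11, 21)))  # clearly separated: h = 1
print(rank_sum_test([1, 2, 3], [1, 2, 3]))         # (1.0, 0)
```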

5.3.5. Friedman Mean Rank Test

The Friedman test is also a non-parametric statistical test, used to detect differences in treatments across three or more matched groups; here it is used to evaluate the performance of the competing algorithms. It is a rank-based alternative to one-way repeated-measures analysis of variance and was introduced by Milton Friedman [64]. The mean rank of each algorithm is computed from Friedman’s statistic [64], and the statistic is compared with a critical value to determine whether the null hypothesis is rejected.
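A standard-library sketch of the Friedman mean ranks and the (uncorrected) Friedman chi-square statistic, χ² = 12N / (k(k + 1)) · Σ R_j² − 3N(k + 1), where N is the number of problems, k the number of algorithms, and R_j the mean rank of algorithm j. The statistic is compared with the chi-square critical value for k − 1 degrees of freedom (about 14.07 for k = 8 algorithms at α = 0.05). The function names and the example scores are illustrative, not from the paper.

```python
def average_ranks(values):
    """1-based ranks; tied values receive the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def friedman(scores, higher_is_better=True):
    """scores[i][j] is the result of algorithm j on problem i.

    Returns (mean rank of each algorithm, Friedman chi-square statistic);
    rank 1 is the best algorithm on a problem.
    """
    n, k = len(scores), len(scores[0])
    totals = [0.0] * k
    for row in scores:
        keyed = [-v for v in row] if higher_is_better else list(row)
        for j, r in enumerate(average_ranks(keyed)):
            totals[j] += r
    mean_ranks = [t / n for t in totals]
    chi2 = 12 * n / (k * (k + 1)) * sum(r * r for r in mean_ranks) - 3 * n * (k + 1)
    return mean_ranks, chi2


# Illustrative scores (3 problems x 3 algorithms), not results from the paper.
ranks, stat = friedman([[3, 2, 1], [3, 2, 1], [3, 2, 1]])
print(ranks, stat)  # [1.0, 2.0, 3.0] 6.0
```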

5.4. Results Analysis of the Proposed Algorithm Based on Fuzzy Entropy

This subsection discusses and analyzes the outcomes of the proposed mSAR algorithm using different numbers of thresholds [nTh = 2, 5, 7, 10]. Here, the fuzzy entropy method is used as the objective function to obtain the optimal thresholds.

Figure A1 in Appendix A shows the segmented images produced by the mSAR algorithm with various numbers of thresholds for all test images used in the experiments. Figure 8 shows the segmented images produced by mSAR with different numbers of thresholds for test image 2, together with a graphical analysis of the thresholds of the segmented images. The optimal threshold values obtained by the mSAR algorithm and the original SAR algorithm using the fuzzy entropy method are listed in Table A1 in Appendix A. The mean and STD of the fitness, PSNR, SSIM, and FSIM outcomes are reported in Table 4, Table 5, Table 6 and Table 7, respectively. A higher mean value indicates a more efficient and accurate algorithm, whereas a lower STD value indicates a more stable algorithm.
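The thresholds in Table A1 are given separately for each color channel. The sketch below shows one way a threshold set turns into a segmented image that can then be compared with the original using PSNR, SSIM, and FSIM. Replacing each pixel by the mean intensity of its class is an assumed mapping chosen for illustration (the paper does not state which mapping it uses), and `apply_thresholds` is a hypothetical name. The example thresholds are the mSAR values for Test 2 with nTh = 2 from Table A1.

```python
import numpy as np


def apply_thresholds(rgb: np.ndarray, thresholds_per_channel) -> np.ndarray:
    """Segment each channel of an 8-bit RGB image with its own thresholds.

    A channel with nTh thresholds is split into nTh + 1 classes; every pixel
    is replaced by the mean intensity of its class in that channel (an
    assumed mapping, chosen for illustration).
    """
    out = np.empty_like(rgb)
    for c, ths in enumerate(thresholds_per_channel):
        channel = rgb[..., c]
        labels = np.digitize(channel, np.sort(np.asarray(ths)))  # class 0..nTh
        seg = np.zeros(channel.shape, dtype=np.float64)
        for k in np.unique(labels):
            mask = labels == k
            seg[mask] = channel[mask].mean()
        out[..., c] = np.round(seg).astype(rgb.dtype)
    return out


# mSAR thresholds for Test 2 with nTh = 2 (Table A1): red, green, blue.
test2_nth2 = [[93, 107], [40, 44], [100, 205]]
img = np.zeros((1, 4, 3), dtype=np.uint8)
img[0, :, 0] = [10, 95, 99, 200]
out = apply_thresholds(img, test2_nth2)
print(out[0, :, 0])  # segmented red channel
```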

Figure 8.

Threshold outcomes of each layer of a color image using the mSAR algorithm with the fuzzy entropy method.

Table 4.

mSAR fuzzy entropy in terms of fitness values over all competing algorithms.

Table 5.

mSAR fuzzy entropy in terms of PSNR values over all competing algorithms.

Table 6.

mSAR fuzzy entropy in terms of SSIM values over all competing algorithms.

Table 7.

mSAR fuzzy entropy in terms of FSIM values over all competing algorithms.

Table 4 displays the mean and STD of the fitness outcomes for the test images using fuzzy entropy as the objective function. According to these outcomes, the proposed mSAR algorithm ranks first, with the highest fitness values in 22 of 32 experiments, followed by the original SAR in second place with six experiments. SCA and EO share third place, each obtaining the best fitness values in four experiments, and HHO ranks fourth with two experiments. GSA is fifth with only one experiment, while the remaining algorithms, LFD and AOA, did not obtain the best fitness value in any experiment. Table 4 also reports the STD values computed over the 30 independent runs for each benchmark image and number of thresholds; a lower STD indicates a more stable algorithm.
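The rankings in this subsection count, for each algorithm, the experiments (out of 32 = 8 images × 4 threshold levels) in which it achieved the best mean value. A sketch of that tally follows, assuming that ties credit every tied algorithm; this assumption would also explain why the counts reported above (22 + 6 + 4 + 4 + 2 + 1 = 39) exceed 32. The function name, data layout, and the algorithm labels "A" and "B" are hypothetical.

```python
def count_wins(results, higher_is_better=True, tol=0.0):
    """Tally best-mean 'wins' per algorithm.

    results maps an experiment id (e.g. (image, nTh)) to {algorithm: mean}.
    Every algorithm within `tol` of the best value is credited, so ties
    count for all tied algorithms.
    """
    wins = {}
    for per_alg in results.values():
        best = max(per_alg.values()) if higher_is_better else min(per_alg.values())
        for alg, value in per_alg.items():
            wins[alg] = wins.get(alg, 0) + (abs(value - best) <= tol)
    return wins


# Illustrative values, not results from the paper.
results = {
    ("Test 1", 2): {"A": 1.0, "B": 0.5},
    ("Test 1", 5): {"A": 0.7, "B": 0.7},  # tie: both algorithms are credited
    ("Test 2", 2): {"A": 0.1, "B": 0.9},
}
print(count_wins(results))
```

For STD rankings, where lower is better, the same function would be called with `higher_is_better=False`.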

The mean and STD of the PSNR outcomes for all test images are illustrated in Table 5. The mSAR algorithm ranks first in terms of mean PSNR with 20 experiments, while SCA is second with six experiments. The original SAR and AOA share third place with four experiments each, followed by HHO in fourth place with three experiments. EO achieved the best PSNR value in only one experiment, while GSA and LFD did not obtain the best PSNR value in any experiment. In terms of STD, the proposed mSAR ranks first, followed by GSA in second place, SCA and HHO in third, LFD in fourth, and the original SAR in fifth. Finally, AOA and EO rank sixth.

Table 6 displays the mean and STD of the SSIM outcomes for all test images; the best outcomes are shown in bold. mSAR shows superior MTH performance, obtaining the best SSIM values in 20 of 32 experiments. The original SAR ranks second with seven experiments, SCA third with five, HHO and EO fourth with three each, and AOA fifth with two. GSA and LFD rank last, without any experiments. In terms of STD, the original SAR is the most stable algorithm because its STD is lower in the majority of experiments. SCA ranks second, followed by HHO and mSAR in third place and AOA in fourth place. LFD is fifth, followed by GSA, and EO comes last as the least stable algorithm.

Table 7 presents the mean and STD values of FSIM obtained by the proposed mSAR and the other competing algorithms; the best results are highlighted in bold. mSAR obtains the highest FSIM values and ranks first with 21 experiments, while SCA ranks second with six experiments, followed by the original SAR in third place with five experiments. HHO ranks fourth with four experiments, followed by EO in fifth place with only two experiments. LFD, GSA, and AOA do not obtain the best FSIM value in any experiment. mSAR is the most stable algorithm because its STD value is lower in most experiments, followed by SAR in second place and SCA in third. GSA and HHO share fourth place, and LFD is fifth. Finally, EO and AOA are the two least stable algorithms owing to their high STD values in most experiments.

Finally, Table A2 in Appendix A reports the Wilcoxon rank-sum test for fitness using the fuzzy entropy method as the objective function. The test between mSAR and each of LFD, HHO, SCA, EO, GSA, AOA, and SAR shows a difference between the proposed mSAR algorithm and the competing algorithms in most cases, indicating that the improvement achieved by mSAR is statistically significant. However, EO, AOA, and SAR show behavior comparable to mSAR in some cases, as indicated by h = 0 or NaN values in the table.

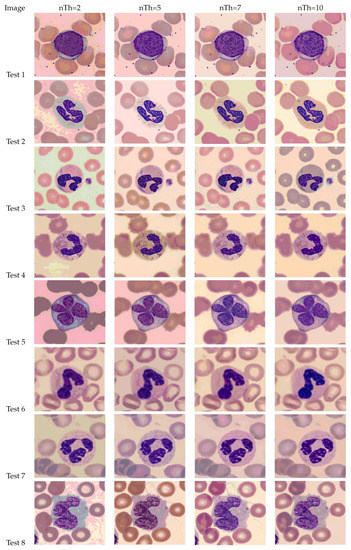

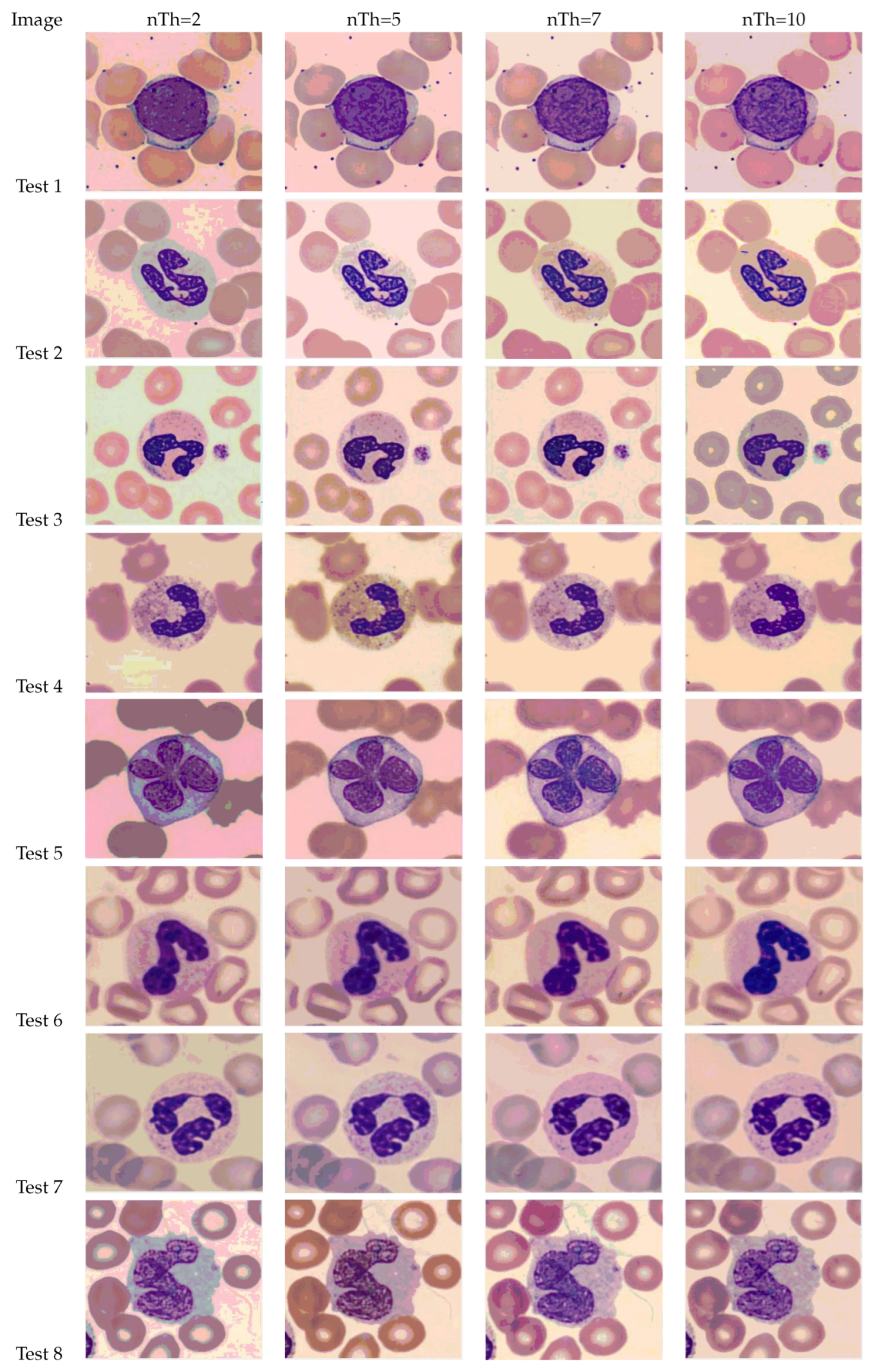

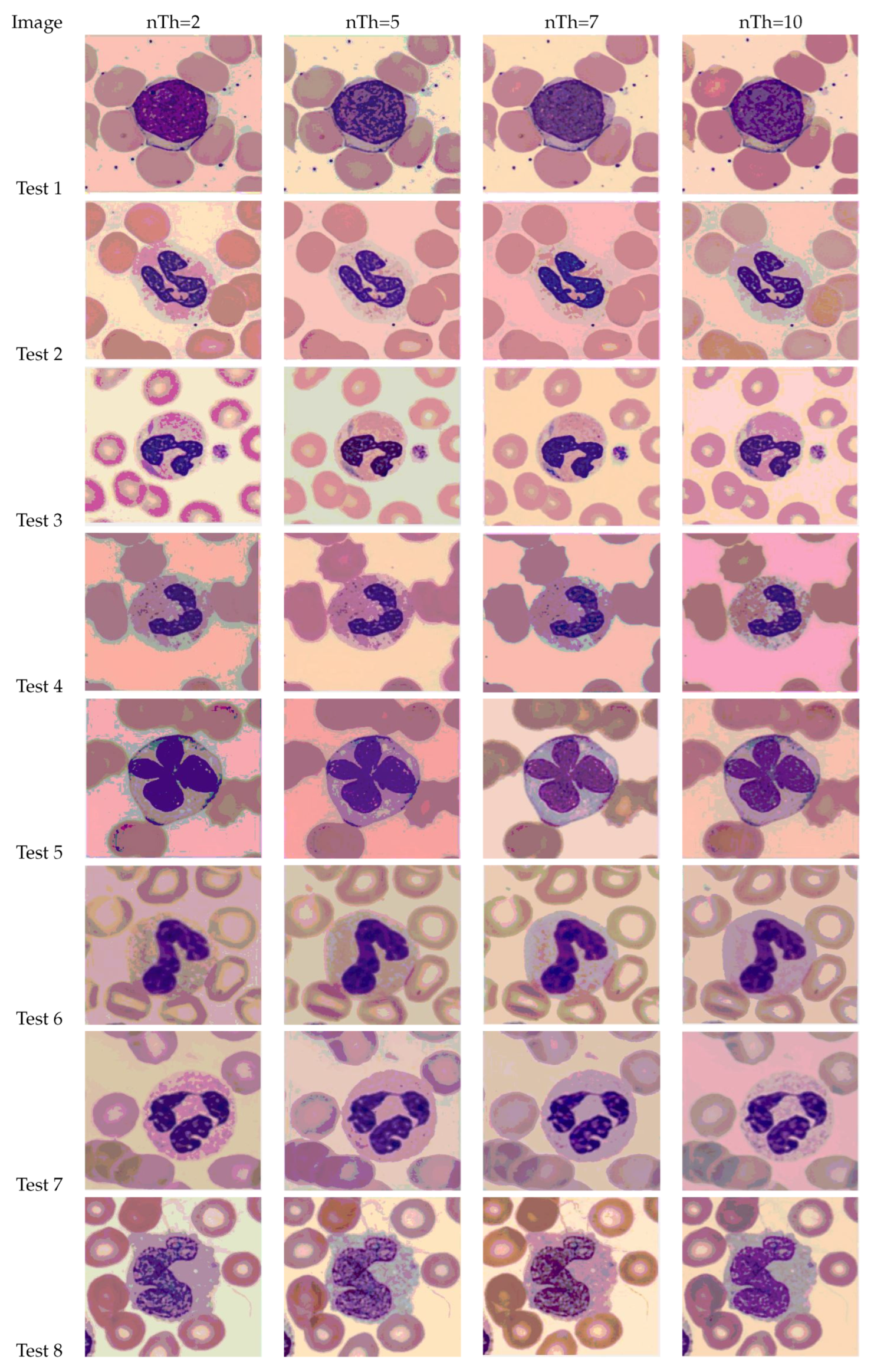

5.5. Results Analysis of the Proposed Algorithm Based on the Otsu Method

This subsection discusses and analyzes the outcomes of the proposed mSAR algorithm using different numbers of thresholds [nTh = 2, 5, 7, 10]. Here, the Otsu method is used as the objective function to obtain the best thresholds. The segmented images produced by the mSAR algorithm and the other competing algorithms with various numbers of thresholds for all test images used in the experiments are shown in Figure A2 in Appendix A. Figure 9 shows the segmented images produced by mSAR with different numbers of thresholds for test image 2, together with a graphical analysis of the thresholds of the segmented images. Table A3 in Appendix A lists the optimal threshold values obtained by the mSAR algorithm and the original SAR algorithm using the Otsu method. Table 8, Table 9, Table 10 and Table 11 report the mean and STD of the fitness, PSNR, SSIM, and FSIM outcomes, respectively.
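The Otsu objective that is maximized for each channel is the between-class variance of the classes defined by the thresholds. The sketch below uses the standard multilevel formulation, the sum over classes of w_k(μ_k − μ_T)², where w_k and μ_k are the probability and mean gray level of class k and μ_T is the overall mean, with class k covering the gray levels in [t_k, t_{k+1}). The paper's own definition, given earlier, may differ in boundary conventions, and `otsu_objective` is a hypothetical name.

```python
import numpy as np


def otsu_objective(hist, thresholds):
    """Between-class variance of a gray-level histogram split by thresholds."""
    p = hist.astype(np.float64) / hist.sum()  # gray-level probabilities
    levels = np.arange(p.size)
    mu_total = np.sum(levels * p)
    edges = [0] + sorted(int(t) for t in thresholds) + [p.size]
    value = 0.0
    for lo, hi in zip(edges[:-1], edges[1:]):
        w = p[lo:hi].sum()                     # class probability w_k
        if w > 0:
            mu = np.sum(levels[lo:hi] * p[lo:hi]) / w  # class mean mu_k
            value += w * (mu - mu_total) ** 2
    return float(value)


# Two equally likely gray levels, 50 and 200 (illustrative histogram).
h = np.zeros(256)
h[50] = h[200] = 1
print(otsu_objective(h, [100]))  # 5625.0: the threshold separates the two modes
print(otsu_objective(h, [250]))  # 0.0: both modes fall in the same class
```

For a single threshold, the best value could be found by trying all 255 candidates, but the number of threshold combinations grows combinatorially with nTh, which is why a metaheuristic is used to search for nTh = 5, 7, and 10.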

Figure 9.

Threshold outcomes after executing mSAR on the Otsu method over the set of test images.

Table 8.

mSAR Otsu in terms of fitness values over all competing algorithms.

Table 9.

mSAR Otsu in terms of PSNR values over all competing algorithms.

Table 10.

mSAR Otsu in terms of SSIM values over all competing algorithms.

Table 11.

mSAR Otsu in terms of FSIM values over all competing algorithms.

Table 8 shows the mean and STD of the fitness outcomes obtained by the mSAR algorithm and the other competing algorithms for all test images. The proposed mSAR algorithm ranks first, with the highest fitness values in 22 of 32 experiments, followed by the original SAR in second place with seven experiments and HHO in third place with four experiments. GSA ranks fourth with three experiments, and SCA fifth with only two experiments. The remaining algorithms, LFD, EO, and AOA, rank last, without any experiments. Table 8 also reports the STD values for each benchmark image and number of thresholds; a higher STD indicates a less stable algorithm. In terms of STD, HHO ranks first, mSAR and SAR share second place, and LFD is third. AOA ranks fourth, GSA fifth, and SCA and EO last.

Table 9 illustrates the mean and STD of the PSNR outcomes for all test images; the best outcomes are highlighted in bold. mSAR shows superior MTH performance, obtaining the best PSNR values in 17 of 32 experiments. The original SAR ranks second with nine experiments, HHO third with seven, and SCA fourth with six. AOA and EO share fifth place with only one experiment each, while GSA and LFD rank last, without any experiments. In terms of STD, LFD ranks first, followed by GSA, the original SAR in third place, and mSAR in fourth. AOA is fifth, SCA and HHO share sixth place, and EO ranks last with the highest STD, making it the least stable algorithm.

Table 10 presents the mean and STD values of SSIM obtained by the proposed mSAR and the other competing algorithms; the best results are shown in bold. The outcomes indicate that mSAR ranks first in terms of SSIM, with the best value in twenty-one of thirty-two experiments. HHO ranks second with eleven experiments, and the original SAR third with four. EO ranks fourth with three experiments, followed by LFD in fifth place with only two. The remaining algorithms, SCA, GSA, and AOA, rank last, without any experiments. The original SAR and mSAR are the most stable algorithms because their STD values are lower in most experiments; GSA ranks second, followed by AOA. SCA and HHO share fourth place, and LFD is fifth. Finally, EO is the least stable algorithm owing to its high STD values in the majority of experiments.

Table 11 displays the mean and STD of the FSIM outcomes for all test images; the best outcomes are highlighted in bold. In terms of FSIM, EO ranks first with fifteen of thirty-two experiments, followed by mSAR in second place with thirteen. HHO ranks third with six experiments, and LFD fourth with five. The original SAR is fifth with four experiments, and SCA sixth with only three. GSA and AOA rank last, without any experiments. In terms of STD, GSA ranks first, followed by the original SAR and mSAR in second place. LFD is third, AOA fourth, and EO fifth, while the remaining algorithms, SCA and HHO, rank last as the least stable.

The outcomes of the Wilcoxon rank-sum test for fitness using the Otsu method as the objective function are shown in Table A4 of Appendix A. The test between mSAR and each of LFD, HHO, SCA, EO, GSA, AOA, and SAR shows a difference between the proposed mSAR algorithm and the other competing algorithms, indicating that the improvement achieved by mSAR is statistically significant. However, EO and SAR show behavior comparable to mSAR, as indicated by h = 0 or NaN values in the table. According to the previous results, mSAR performs better with the fuzzy entropy method than with the Otsu method, especially in . Additionally, the segmented color images obtained with the fuzzy entropy method are of high quality, as shown in Figure 8.

5.6. The Pros and Cons of the mSAR Algorithm

This subsection discusses the benefits and drawbacks of the proposed mSAR algorithm. The main benefits of mSAR are that it is easy to implement and understand. It outperformed the competing algorithms on color image-segmentation problems and produced segmented images of good quality. Furthermore, mSAR converges quickly, finding the threshold values rapidly and with high accuracy, which indicates that it avoids becoming trapped in local optima and successfully balances the exploration and exploitation phases. The main drawback of mSAR is that, although simple, it is computationally expensive. Additionally, its STD values are not always competitive with those of the other algorithms.

6. Conclusions and Future Work

This paper presents an improved version of the SAR algorithm, called mSAR, that uses the OBL technique. OBL improves the search ability of the original SAR, enables it to avoid becoming trapped in local optima, and balances the exploration and exploitation phases. mSAR is used to segment blood-cell images and to solve multi-level thresholding problems for image segmentation. The IEEE CEC’2020 benchmark functions are used to demonstrate the robustness of mSAR, and mSAR is then applied to a real application as a multi-level image segmentation tool to estimate its performance on a set of color blood-cell images. The proposed algorithm uses the fuzzy entropy and Otsu methods as objective functions to determine the optimal thresholds during segmentation. The experimental outcomes demonstrate the superiority of the proposed mSAR algorithm, compared with the other optimization algorithms, both in segmenting the blood-cell images and in solving the CEC’2020 benchmark functions. In future work, mSAR could be used to track the size of blood cells and to tackle other problems such as feature selection, parameter identification, and task scheduling.

Author Contributions

Supervision, E.H.H.; Conceptualization, E.H.H., N.A.S. and R.A.; methodology, E.H.H. and G.M.M.; software, E.H.H. and G.M.M.; validation, I.A.I., N.A.S., R.A. and Y.M.W.; formal analysis, E.H.H. and G.M.M.; investigation, E.H.H. and G.M.M.; resources, I.A.I., N.A.S., R.A. and Y.M.W.; data curation, E.H.H., G.M.M., I.A.I. and Y.M.W.; visualization, E.H.H., G.M.M., I.A.I., N.A.S., R.A. and Y.M.W.; writing—original draft preparation, E.H.H., G.M.M., I.A.I., N.A.S., R.A. and Y.M.W.; writing—review and editing, E.H.H., G.M.M., I.A.I., N.A.S., R.A. and Y.M.W.; funding acquisition, N.A.S. All authors discussed the results and approved the final paper. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded by Princess Nourah bint Abdulrahman University Researchers Supporting Project number (PNURSP2023R323), Princess Nourah bint Abdulrahman University, Riyadh, Saudi Arabia.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

All data generated or analysed during this study are included in this published article [54].

Acknowledgments

The authors express their gratitude to Princess Nourah bint Abdulrahman University Researchers Supporting Project number (PNURSP2023R323), Princess Nourah bint Abdulrahman University, Riyadh, Saudi Arabia.

Conflicts of Interest

The authors declare that there is no conflict of interest. This article does not contain any studies with human participants or animals performed by any authors.

Appendix A. The Results of Optimal Threshold Values and the Wilcoxon Signed-Rank Test

The optimal threshold values and the Wilcoxon signed-rank test results obtained over the set of test images by the mSAR algorithm and the other competitive algorithms using the fuzzy entropy and Otsu methods are as follows:

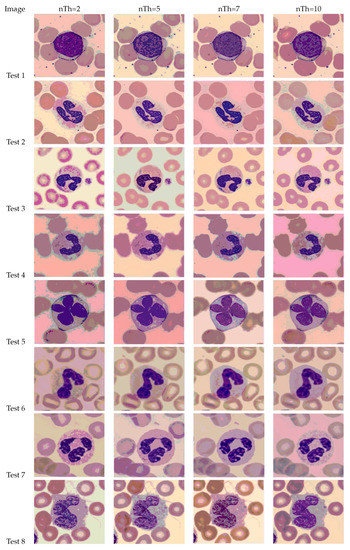

Figure A1.

Outcomes after executing mSAR on Fuzzy Entropy’s method over the set of test images.

Table A1.

The optimal threshold values obtained by the Fuzzy Entropy method.

| Test Image | nTh | mSAR Red | mSAR Green | mSAR Blue | SAR Red | SAR Green | SAR Blue |
|---|---|---|---|---|---|---|---|
| Test 1 | 2 | 68 115 | 99 175 | 70 100 | 70 114 | 98 176 | 73 95 |
| | 5 | 15 43 86 89 137 | 63 69 113 172 198 | 46 84 98 138 150 | 16 39 86 92 143 | 60 66 110 176 200 | 48 81 100 143 149 |
| | 7 | 53 97 113 149 189 196 210 | 76 103 118 134 165 195 213 | 60 107 109 112 139 209 246 | 50 85 120 150 189 200 210 | 70 109 112 130 156 199 230 | 56 110 114 116 125 201 238 |
| | 10 | 23 68 87 160 171 185 189 200 216 218 | 38 47 56 72 96 122 156 219 222 230 | 49 82 100 183 198 203 221 233 235 247 | 68 77 123 134 135 160 177 184 200 246 | 80 89 96 129 160 159 179 190 197 220 | 87 102 103 110 136 151 216 236 246 253 |
| Test 2 | 2 | 93 107 | 40 44 | 100 205 | 92 108 | 41 42 | 99 205 |
| | 5 | 91 136 151 170 198 | 50 51 129 178 239 | 99 154 168 191 215 | 85 130 150 166 202 | 45 63 125 178 237 | 92 140 162 186 211 |
| | 7 | 23 47 74 108 134 168 196 | 34 54 62 70 128 161 177 | 75 85 119 141 157 210 235 | 20 44 68 112 139 155 201 | 32 51 54 64 117 160 169 | 72 78 114 137 164 199 221 |
| | 10 | 29 63 82 94 95 123 132 201 203 216 | 28 41 70 81 91 98 98 105 194 230 | 50 66 104 107 125 127 149 149 183 241 | 30 63 75 98 99 123 142 200 210 220 | 25 34 66 88 91 100 120 132 178 226 | 46 67 104 107 132 146 166 172 185 232 |
| Test 3 | 2 | 18 166 | 77 130 | 100 210 | 20 165 | 76 132 | 102 208 |
| | 5 | 46 55 167 178 191 | 19 42 48 116 169 | 61 69 82 98 200 | 44 56 170 177 195 | 20 38 41 112 172 | 73 79 89 106 209 |
| | 7 | 16 35 74 109 165 178 189 | 83 113 127 160 181 185 190 | 96 130 139 159 215 236 239 | 18 35 76 112 150 177 190 | 82 113 125 156 182 184 192 | 98 136 146 160 211 213 240 |
| | 10 | 21 39 56 56 68 79 86 95 119 201 | 43 60 63 70 72 99 122 125 234 253 | 64 119 149 170 185 186 188 219 221 254 | 20 35 55 57 65 80 84 102 120 211 | 36 56 61 72 76 100 123 134 221 250 | 62 112 140 166 176 177 190 213 226 239 |
| Test 4 | 2 | 23 98 | 69 112 | 35 105 | 23 96 | 70 111 | 31 107 |
| | 5 | 32 36 97 119 181 | 73 163 170 227 243 | 138 174 193 196 206 | 33 36 100 121 175 | 69 162 168 221 244 | 135 180 193 201 209 |
| | 7 | 35 43 81 149 171 217 241 | 40 49 82 125 129 131 178 | 39 53 59 155 159 183 244 | 32 46 78 149 168 214 236 | 35 52 87 123 138 142 160 | 28 46 63 142 155 178 235 |
| | 10 | 17 23 24 27 28 71 93 122 156 201 | 56 107 107 142 163 203 208 217 219 241 | 61 68 93 115 155 188 196 197 223 244 | 14 20 24 27 30 76 92 122 155 216 | 56 103 116 142 166 209 208 218 220 241 | 65 73 93 111 145 188 193 200 223 240 |
| Test 5 | 2 | 149 222 | 130 186 | 44 208 | 149 220 | 133 187 | 46 207 |
| | 5 | 29 33 59 186 241 | 26 26 75 116 186 | 54 128 165 226 241 | 30 36 62 186 234 | 26 36 77 111 166 | 52 122 163 224 239 |
| | 7 | 11 15 63 202 204 232 240 | 36 93 97 98 106 115 160 | 31 31 68 76 89 106 162 | 16 23 63 222 204 221 231 | 38 93 106 111 123 115 169 | 31 38 77 87 90 110 156 |
| | 10 | 48 49 55 55 79 96 103 122 176 232 | 30 63 110 141 148 152 178 208 232 239 | 100 121 147 149 154 161 209 213 224 241 | 30 39 55 63 79 96 109 136 196 243 | 36 66 111 136 152 165 188 208 230 234 | 89 123 147 145 162 169 211 216 232 246 |
| Test 6 | 2 | 156 172 | 76 136 | 42 127 | 156 173 | 75 136 | 42 128 |
| | 5 | 29 48 78 92 180 | 38 73 138 145 221 | 60 78 153 202 236 | 23 38 81 106 176 | 36 71 132 139 214 | 54 74 146 211 232 |
| | 7 | 36 115 128 130 176 203 211 | 37 71 89 151 155 163 189 | 53 85 170 209 225 232 254 | 31 120 136 140 181 201 221 | 39 64 84 146 152 169 191 | 46 76 168 203 219 229 242 |
| | 10 | 16 35 53 61 64 73 75 196 211 226 | 64 95 125 133 145 192 198 215 218 238 | 32 35 53 81 149 170 171 224 228 240 | 13 38 55 69 74 79 89 203 216 236 | 58 88 118 146 150 192 203 219 222 240 | 31 38 55 78 151 168 176 226 239 245 |
| Test 7 | 2 | 123 160 | 92 109 | 108 199 | 122 161 | 92 110 | 107 199 |
| | 5 | 25 96 177 193 230 | 82 97 133 149 220 | 21 82 116 153 209 | 31 102 179 200 234 | 80 103 128 145 218 | 19 78 111 146 203 |
| | 7 | 23 34 34 46 113 142 231 | 68 137 139 146 149 164 250 | 19 28 79 113 118 156 159 | 19 33 35 42 111 138 230 | 66 132 133 144 148 162 243 | 23 35 77 111 121 155 162 |
| | 10 | 61 63 79 110 115 132 150 162 181 189 | 59 83 91 106 109 113 116 163 189 225 | 12 45 51 83 136 177 189 186 217 220 | 57 62 80 111 116 134 152 160 179 192 | 60 84 90 111 116 119 121 152 184 221 | 11 41 48 78 126 163 179 192 213 224 |
| Test 8 | 2 | 89 142 | 53 211 | 86 177 | 88 141 | 51 142 | 87 177 |
| | 5 | 54 142 178 228 245 | 76 108 200 248 251 | 68 86 91 106 132 | 52 138 176 223 239 | 71 99 189 239 248 | 67 83 89 111 128 |
| | 7 | 43 43 64 92 108 141 153 | 42 108 134 172 173 184 245 | 16 29 129 138 143 192 215 | 38 45 66 92 111 141 155 | 42 111 128 172 181 192 234 | 19 36 129 146 155 191 211 |
| | 10 | 14 34 63 106 137 171 187 203 212 241 | 26 43 111 158 173 196 213 223 233 239 | 39 48 66 94 142 158 161 190 206 209 | 11 33 62 102 133 164 181 212 216 237 | 31 43 105 146 177 196 219 223 240 249 | 28 44 66 100 144 162 169 201 211 235 |

Table A2.

Comparison of the p-value and H-value obtained by the Wilcoxon signed-rank test between the pairs mSAR vs LFD, mSAR vs HHO, mSAR vs SCA, mSAR vs EO, mSAR vs GSA, mSAR vs AOA, and mSAR vs SAR for fitness outcomes using the Fuzzy Entropy method.

| Test Image | nTh | LFD P | LFD H | HHO P | HHO H | SCA P | SCA H | EO P | EO H | GSA P | GSA H | AOA P | AOA H | SAR P | SAR H |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Test 1 | 2 | 6.0277E-13 | 1 | 6.1441E-07 | 1 | 1.3234E-06 | 1 | NaN | 0 | 3.5515E-05 | 1 | 1.8168E-04 | 1 | 3.2683E-07 | 1 |
| | 5 | 2.8784E-14 | 1 | 2.9055E-01 | 0 | 1.0896E-10 | 1 | NaN | 0 | 6.6496E-05 | 1 | NaN | 0 | NaN | 0 |
| | 7 | 7.6340E-01 | 0 | 7.7059E-11 | 1 | 1.1971E-01 | 0 | 1.5134E-14 | 1 | 6.3305E-01 | 0 | NaN | 0 | 3.6698E-01 | 0 |
| | 10 | 6.0867E-16 | 1 | 3.4468E-08 | 1 | 1.3333E-16 | 1 | 1.3664E-14 | 1 | 1.5773E-07 | 1 | 2.8047E-10 | 1 | NaN | 0 |
| Test 2 | 2 | 2.8843E-14 | 1 | 3.4468E-01 | 0 | 3.4228E-11 | 1 | NaN | 0 | 8.7150E-10 | 1 | 1.5045E-03 | 1 | NaN | 0 |
| | 5 | 3.7658E-07 | 1 | NaN | 0 | 6.0880E-06 | 1 | 1.3284E-01 | 0 | 8.9271E-13 | 1 | 1.7487E-11 | 1 | 1.6951E-01 | 0 |
| | 7 | 7.1903E-01 | 0 | 1.8322E-06 | 1 | 6.3259E-09 | 1 | 1.6634E-14 | 1 | 2.8648E-01 | 0 | 8.7888E-05 | 1 | 2.1346E-01 | 0 |
| | 10 | 2.8784E-14 | 1 | 9.6534E-08 | 1 | NaN | 0 | 1.4164E-14 | 1 | 6.2125E-15 | 1 | 2.0439E-05 | 1 | 1.5679E-07 | 1 |
| Test 3 | 2 | NaN | 0 | NaN | 0 | 1.9354E-11 | 1 | NaN | 0 | 3.7768E-07 | 1 | 2.2483E-07 | 1 | 1.5843E-04 | 1 |
| | 5 | 2.7230E-01 | 0 | 2.1070E-12 | 1 | 1.9354E-06 | 1 | 1.3808E-01 | 0 | 2.1030E-01 | 0 | 5.5186E-12 | 1 | 1.4070E-08 | 1 |
| | 7 | 8.3963E-15 | 1 | 2.3017E-10 | 1 | 5.3941E-01 | 0 | 1.1664E-14 | 1 | 8.6481E-07 | 1 | 9.8562E-15 | 1 | 3.3336E-01 | 0 |
| | 10 | 3.0718E-13 | 1 | 9.7777E-08 | 1 | 1.4390E-09 | 1 | 1.4315E-14 | 1 | 1.1266E-01 | 0 | 8.3233E-14 | 1 | 3.3336E-01 | 0 |
| Test 4 | 2 | 3.8096E-08 | 1 | 9.3557E-01 | 0 | 5.9317E-01 | 0 | 1.5765E-14 | 1 | 6.2876E-01 | 0 | NaN | 0 | NaN | 0 |
| | 5 | 3.7658E-06 | 1 | 3.8424E-12 | 1 | 1.0573E-06 | 1 | 1.3664E-01 | 0 | 3.9056E-08 | 1 | 4.0878E-07 | 1 | NaN | 0 |
| | 7 | 4.0161E-10 | 1 | 1.7406E-10 | 1 | 1.3620E-01 | 0 | 1.3646E-15 | 1 | 8.1116E-05 | 1 | 3.3498E-03 | 1 | NaN | 0 |
| | 10 | 6.5873E-16 | 1 | 9.4370E-09 | 1 | 1.3333E-16 | 1 | 1.6634E-15 | 1 | 1.8348E-10 | 1 | 1.6465E-15 | 1 | 1.4069E-01 | 0 |
| Test 5 | 2 | NaN | 0 | NaN | 0 | NaN | 0 | NaN | 0 | 2.3713E-06 | 1 | NaN | 0 | NaN | 0 |
| | 5 | 8.8756E-06 | 1 | 2.5657E-10 | 1 | 3.9604E-10 | 1 | NaN | 0 | 2.2425E-10 | 1 | 4.4022E-06 | 1 | 1.9822E-01 | 0 |
| | 7 | 4.5622E-01 | 0 | 1.9040E-10 | 1 | 2.6701E-10 | 1 | 3.1557E-05 | 1 | 6.3412E-01 | 0 | 1.1344E-08 | 1 | 1.8464E-01 | 0 |
| | 10 | 2.8784E-01 | 0 | 3.8776E-01 | 0 | 3.7095E-13 | 1 | 1.3019E-15 | 1 | 8.7232E-12 | 1 | 1.7373E-15 | 1 | 1.8024E-06 | 1 |
| Test 6 | 2 | 2.8603E-01 | 0 | 1.1192E-01 | 0 | 8.8169E-01 | 0 | 2.1092E-14 | 1 | 1.0730E-10 | 1 | 2.3619E-10 | 1 | 2.3411E-01 | 0 |
| | 5 | 2.7077E-09 | 1 | 1.1192E-14 | 1 | 8.8169E-10 | 1 | 2.1092E-01 | 0 | 1.0730E-07 | 1 | 2.4459E-01 | 0 | 3.2202E-01 | 0 |
| | 7 | 6.1778E-15 | 1 | 8.0918E-16 | 1 | 2.1505E-14 | 1 | NaN | 0 | 4.5494E-01 | 0 | 2.3408E-12 | 1 | 2.5595E-01 | 0 |
| | 10 | 1.7293E-16 | 1 | 8.6143E-08 | 1 | 2.7239E-18 | 1 | NaN | 0 | 1.1159E-01 | 0 | 6.4539E-01 | 0 | 2.5546E-01 | 0 |
| Test 7 | 2 | 2.8653E-08 | 1 | 3.9472E-01 | 0 | 2.8673E-01 | 0 | 1.5765E-14 | 1 | 6.2876E-07 | 1 | 1.7835E-05 | 1 | 2.5459E-01 | 0 |
| | 5 | 2.7077E-09 | 1 | 3.8428E-06 | 1 | 1.3512E-06 | 1 | 1.0111E-05 | 1 | 2.3713E-03 | 1 | 9.6307E-03 | 1 | 2.8447E-05 | 1 |
| | 7 | 1.2173E-12 | 1 | 1.5557E-13 | 1 | 4.7489E-15 | 1 | 1.4366E-14 | 1 | 1.1052E-08 | 1 | 9.3744E-08 | 1 | 3.2304E-06 | 1 |
| | 10 | 2.9808E-13 | 1 | 2.0897E-01 | 0 | 1.5107E-15 | 1 | 1.6634E-01 | 0 | 9.0344E-01 | 0 | 2.7644E-12 | 1 | NaN | 0 |
| Test 8 | 2 | 3.9119E-10 | 1 | 3.8428E-08 | 1 | NaN | 0 | NaN | 0 | 5.7833E-03 | 1 | NaN | 0 | NaN | 0 |
| | 5 | 3.9484E-05 | 1 | 3.8428E-05 | 1 | NaN | 0 | 1.4624E-14 | 1 | 8.0258E-06 | 1 | NaN | 0 | NaN | 0 |
| | 7 | 2.6925E-07 | 1 | 1.8575E-01 | 0 | 1.2903E-01 | 0 | NaN | 0 | 6.3841E-01 | 0 | 1.0330E-01 | 0 | 3.3336E-01 | 0 |
| | 10 | 1.7407E-01 | 0 | 1.0298E-13 | 1 | 4.5518E-16 | 1 | 1.4318E-15 | 1 | 1.5987E-15 | 1 | NaN | 0 | NaN | 0 |

Figure A2.

Outcomes after executing mSAR on the Otsu method over the set of test images.

Table A3.

The optimal threshold values obtained by the Otsu method.

| Test Image | nTh | mSAR (Red) | mSAR (Green) | mSAR (Blue) | SAR (Red) | SAR (Green) | SAR (Blue) |
|---|---|---|---|---|---|---|---|
| Test 1 | 2 | 70 167 | 79 194 | 101 215 | 72 170 | 88 192 | 71 170 |
| | 5 | 215 136 153 182 182 | 82 116 137 182 232 | 47 112 128 150 209 | 215 133 150 182 120 | 82 120 141 182 230 | 50 110 131 150 210 |
| | 7 | 71 93 127 174 180 190 212 | 74 85 85 152 180 245 254 | 78 95 117 141 201 217 232 | 73 92 165 174 183 190 212 | 73 85 78 150 180 240 254 | 78 98 110 117 201 217 230 |
| | 10 | 65 77 132 134 135 162 177 184 198 255 | 75 84 97 129 158 159 179 188 197 218 | 87 102 103 107 135 151 231 238 246 250 | 68 77 123 134 135 160 177 184 200 246 | 80 89 96 129 160 159 179 190 197 220 | 87 102 103 110 136 151 216 236 246 253 |
| Test 2 | 2 | 63 155 | 75 115 | 154 165 | 65 145 | 73 118 | 150 162 |
| | 5 | 127 135 136 143 226 | 75 112 145 172 207 | 20 98 141 197 210 | 130 135 142 151 230 | 76 139 148 182 210 | 46 105 160 200 213 |
| | 7 | 90 126 135 176 180 183 213 | 88 90 120 127 145 162 183 | 70 91 120 127 145 161 182 | 92 121 142 180 186 187 218 | 86 94 117 122 147 167 186 | 73 94 127 130 144 167 188 |
| | 10 | 31 60 73 82 96 105 136 151 224 230 | 13 28 71 79 89 108 148 185 224 251 | 19 23 23 85 97 129 135 178 190 207 | 33 63 76 83 98 104 133 151 226 234 | 14 28 73 79 88 108 164 185 228 254 | 23 27 23 87 97 129 137 178 196 210 |
| Test 3 | 2 | 40 174 | 15 106 | 15 123 | 38 170 | 18 109 | 13 128 |
| | 5 | 28 112 117 145 189 | 73 87 94 126 191 | 43 60 79 80 153 | 29 112 117 140 195 | 72 90 95 130 194 | 43 58 96 103 169 |
| | 7 | 23 48 96 133 213 217 217 | 17 82 86 93 143 175 213 | 13 29 48 68 83 113 119 | 24 49 101 145 177 200 216 | 35 37 46 186 188 196 216 | 19 36 56 123 163 192 230 |
| | 10 | 33 44 59 60 65 143 147 192 239 245 | 64 67 68 70 86 142 145 162 180 223 | 60 73 98 112 119 132 155 158 176 239 | 35 60 60 78 102 146 196 200 210 245 | 64 69 70 90 99 104 168 189 200 229 | 63 71 76 98 165 173 190 209 230 243 |
| Test 4 | 2 | 39 176 | 39 142 | 49 155 | 43 177 | 40 144 | 53 156 |
| | 5 | 28 116 120 145 189 | 101 114 121 156 223 | 44 61 77 82 154 | 25 120 149 163 203 | 100 123 136 160 241 | 38 56 68 93 159 |
| | 7 | 62 73 104 117 119 135 198 | 40 49 130 142 145 186 250 | 60 85 88 100 173 179 234 | 63 109 132 140 189 206 233 | 43 53 120 156 190 206 230 | 68 77 89 106 163 176 204 |
| | 10 | 10 16 48 73 78 138 147 150 239 245 | 46 60 67 73 119 176 179 219 169 215 | 58 73 98 112 119 132 155 158 223 258 | 13 19 49 76 79 145 149 180 201 246 | 42 56 86 146 159 169 180 210 233 243 | 60 73 90 101 120 136 163 180 206 239 |
| Test 5 | 2 | 40 109 | 36 86 | 80 148 | 46 110 | 37 84 | 78 148 |
| | 5 | 24 68 73 160 179 | 33 39 159 161 183 | 63 111 128 149 220 | 26 71 83 86 183 | 30 40 160 168 194 | 68 100 130 145 218 |
| | 7 | 29 41 68 89 98 108 143 | 46 58 103 106 169 209 246 | 89 99 100 113 126 240 254 | 32 41 76 85 93 110 144 | 40 55 102 100 163 210 241 | 90 103 110 123 180 235 239 |
| | 10 | 53 66 69 76 87 126 196 210 214 223 | 29 55 63 79 106 173 179 196 199 226 | 93 106 116 117 120 148 153 163 242 242 | 55 66 70 80 91 112 196 215 220 223 | 34 58 63 75 110 173 177 196 200 229 | 92 110 116 120 123 156 163 166 249 250 |
| Test 6 | 2 | 39 176 | 41 142 | 48 155 | 41 179 | 43 156 | 49 159 |
| | 5 | 32 112 120 148 187 | 103 115 123 158 223 | 43 59 79 82 153 | 34 113 123 186 193 | 108 132 142 169 230 | 45 63 82 86 160 |
| | 7 | 83 105 129 143 170 181 238 | 103 115 129 198 203 230 233 | 23 80 110 116 136 190 230 | 86 100 124 140 163 178 235 | 102 113 132 200 206 241 245 | 25 79 112 119 143 201 248 |
| | 10 | 15 66 69 76 87 126 196 210 214 223 | 35 73 83 96 129 148 160 200 241 242 | 15 46 64 69 158 167 189 189 211 232 | 18 62 71 80 91 130 200 214 216 220 | 37 70 79 87 123 152 172 206 230 254 | 18 53 57 79 146 163 182 184 210 229 |
| Test 7 | 2 | 70 94 | 109 126 | 88 140 | 73 96 | 112 132 | 87 143 |
| | 5 | 49 76 165 205 205 | 81 86 128 162 210 | 24 69 133 139 220 | 42 80 168 201 206 | 84 93 134 160 215 | 25 70 130 142 217 |
| | 7 | 65 92 129 138 149 163 216 | 30 86 104 130 131 132 205 | 16 86 104 120 139 145 198 | 62 89 124 135 152 164 210 | 29 84 102 129 138 136 209 | 18 89 100 118 141 149 201 |
| | 10 | 56 58 76 126 135 162 183 186 221 238 | 34 46 76 79 130 149 172 189 236 252 | 54 62 64 64 141 142 158 198 228 253 | 58 60 81 130 137 164 189 189 222 240 | 30 41 71 80 129 151 170 184 232 248 | 55 64 69 88 146 152 162 203 230 249 |
| Test 8 | 2 | 63 203 | 32 131 | 43 149 | 68 205 | 34 130 | 46 142 |
| | 5 | 31 116 121 143 189 | 73 86 93 134 196 | 45 62 81 83 155 | 28 113 136 145 193 | 76 92 99 124 201 | 52 65 95 90 193 |
| | 7 | 83 95 125 143 139 152 215 | 107 120 136 198 204 234 240 | 49 57 83 109 136 198 230 | 81 94 119 148 169 170 229 | 104 116 120 190 208 228 243 | 53 63 89 117 140 212 229 |
| | 10 | 29 68 95 99 117 128 148 176 221 238 | 49 68 104 112 119 129 158 163 236 252 | 38 47 49 56 94 99 112 149 228 253 | 31 65 93 106 120 139 143 172 220 249 | 56 87 100 111 139 142 179 184 210 238 | 32 54 55 68 103 123 130 168 180 241 |
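
As a rough aid for reading Table A3, the Python sketch below evaluates the standard multi-level Otsu criterion for a given set of thresholds on one color channel. This criterion is the between-class variance, sum over classes of w_k (mu_k - mu_T)^2, which the optimizers maximize. The sketch also maps pixel values to class indices. It is a minimal illustration under stated assumptions, not the authors' implementation. The function names are hypothetical. An 8-bit channel histogram is assumed. Classes are taken as C_0 = [0, t_1), C_1 = [t_1, t_2), ..., C_n = [t_n, L), a convention that may differ by one gray level from the one used in the experiments.

```python
import bisect
from typing import List, Sequence, Tuple


def otsu_objective(hist: Sequence[int], thresholds: Sequence[int]) -> float:
    """Multi-level Otsu objective: between-class variance sum_k w_k * (mu_k - mu_T)^2.

    Assumed (hypothetical) class convention: C_0 = [0, t_1), ..., C_n = [t_n, L).
    """
    total = sum(hist)
    if total == 0:
        raise ValueError("histogram is empty")
    probs = [count / total for count in hist]          # normalized histogram p_i
    levels = len(probs)
    mu_total = sum(i * p for i, p in enumerate(probs))  # global mean mu_T
    cuts = [0] + sorted(thresholds) + [levels]
    variance = 0.0
    for lo, hi in zip(cuts[:-1], cuts[1:]):
        weight = sum(probs[lo:hi])                      # class probability w_k
        if weight > 0:                                  # empty classes contribute nothing
            mean = sum(i * probs[i] for i in range(lo, hi)) / weight  # class mean mu_k
            variance += weight * (mean - mu_total) ** 2
    return variance


def exhaustive_two_thresholds(hist: Sequence[int]) -> Tuple[Tuple[int, int], float]:
    """Brute-force the best pair (t1, t2); needs O(L^2) objective evaluations."""
    levels = len(hist)
    best_pair, best_value = (1, 2), -1.0
    for t1 in range(1, levels - 1):
        for t2 in range(t1 + 1, levels):
            value = otsu_objective(hist, (t1, t2))
            if value > best_value:                      # keep the first maximizer
                best_pair, best_value = (t1, t2), value
    return best_pair, best_value


def apply_thresholds(pixels: Sequence[int], thresholds: Sequence[int]) -> List[int]:
    """Map each pixel value to its class index under the same convention."""
    ordered = sorted(thresholds)
    return [bisect.bisect_right(ordered, value) for value in pixels]


if __name__ == "__main__":
    # Toy 10-level histogram with three well-separated modes (illustrative data only).
    toy_hist = [5, 5, 0, 0, 5, 5, 0, 0, 5, 5]
    print(exhaustive_two_thresholds(toy_hist))
    # Thresholds taken from Table A3 (Test 1, nTh = 2, mSAR, Red channel).
    print(apply_thresholds([0, 69, 70, 166, 167, 255], [70, 167]))
```

The exhaustive search is shown only to make the objective concrete. Under this convention, the number of candidate threshold sets is the binomial coefficient C(L - 1, nTh), which becomes intractable for L = 256 and nTh = 5, 7, or 10. This is the setting in which metaheuristics such as mSAR are applied.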

Table A4.

Comparison of the p-values (P) and H-values (H) obtained by the Wilcoxon signed-rank test for the pairs mSAR vs LFD, mSAR vs HHO, mSAR vs SCA, mSAR vs EO, mSAR vs GSA, mSAR vs AOA, and mSAR vs SAR on the fitness values of the Otsu method.

| Test Image | nTh | LFD (P) | LFD (H) | HHO (P) | HHO (H) | SCA (P) | SCA (H) | EO (P) | EO (H) | GSA (P) | GSA (H) | AOA (P) | AOA (H) | SAR (P) | SAR (H) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Test 1 | 2 | 5.4624E-10 | 1 | 5.5908E-01 | 0 | 1.2044E-01 | 0 | NaN | 0 | 3.4391E-03 | 1 | 1.6672E-01 | 0 | 2.9745E-01 | 0 |
| | 5 | 2.6084E-11 | 1 | 2.6439E-01 | 0 | 9.9165E-06 | 1 | 1.5636E-13 | 1 | 6.4391E-01 | 0 | 2.2570E-06 | 1 | 3.0340E-07 | 1 |
| | 7 | 6.9180E-10 | 1 | 7.0120E-06 | 1 | 1.0895E-08 | 1 | 1.5306E-13 | 1 | 6.1301E-02 | 0 | 7.6170E-03 | 1 | 3.3399E-01 | 0 |
| | 10 | 5.5159E-13 | 1 | 3.1364E-01 | 0 | 1.2135E-11 | 1 | 1.5346E-13 | 1 | 1.5273E-05 | 1 | 2.5737E-09 | 1 | NaN | 0 |
| Test 2 | 2 | 2.6137E-11 | 1 | 3.1364E-01 | 0 | 3.1152E-06 | 1 | 1.5300E-01 | 0 | 8.4391E-01 | 0 | 1.3807E-01 | 0 | 1.5125E-01 | 0 |
| | 5 | 3.4126E-01 | 0 | 1.5526E-03 | 1 | 5.5409E-01 | 0 | NaN | 0 | 8.6445E-11 | 1 | 1.6047E-10 | 1 | 1.5427E-01 | 0 |
| | 7 | 6.5159E-06 | 1 | NaN | 0 | 5.7574E-04 | 1 | NaN | 0 | 2.7741E-10 | 1 | 8.0651E-04 | 1 | NaN | 0 |
| | 10 | 2.6084E-11 | 1 | 8.7841E-03 | 1 | 3.0826E-12 | 1 | 1.5346E-13 | 1 | 6.0158E-13 | 1 | 1.8756E-04 | 1 | 1.4270E-01 | 0 |
| Test 3 | 2 | 8.2306E-01 | 0 | 5.4887E-01 | 0 | 1.7615E-06 | 1 | 1.4586E-01 | 0 | 3.6573E-05 | 1 | 2.0632E-05 | 1 | 1.4419E-01 | 0 |
| | 5 | NaN | 0 | 1.9173E-07 | 1 | 1.7615E-01 | 0 | NaN | 0 | 2.0364E-06 | 1 | 5.0641E-10 | 1 | NaN | 0 |
| | 7 | 7.6088E-12 | 1 | 2.0944E-05 | 1 | 4.9093E-01 | 0 | NaN | 0 | 8.3743E-02 | 0 | 9.0446E-13 | 1 | NaN | 0 |
| | 10 | 2.7837E-10 | 1 | 8.8971E-01 | 0 | 1.3097E-04 | 1 | 1.5606E-13 | 1 | 1.0910E-06 | 1 | 7.6379E-12 | 1 | NaN | 0 |
| Test 4 | 2 | 3.4523E-01 | 0 | 8.5131E-01 | 0 | 5.3986E-01 | 0 | 1.4790E-13 | 1 | 6.0885E-01 | 0 | 3.8450E-07 | 1 | 1.5925E-01 | 0 |
| | 5 | 3.4126E-01 | 0 | 3.4964E-05 | 1 | 9.6229E-02 | 0 | 1.8626E-13 | 1 | 3.7820E-06 | 1 | 3.7512E-05 | 1 | 1.6804E-01 | 0 |
| | 7 | 3.6394E-07 | 1 | 1.5838E-05 | 1 | 1.2396E-04 | 1 | 1.5631E-13 | 1 | 7.8548E-03 | 1 | 3.0739E-01 | 0 | 1.8804E-01 | 0 |
| | 10 | 5.9695E-13 | 1 | 8.5872E-04 | 1 | 1.2135E-11 | 1 | 1.5606E-13 | 1 | 1.7767E-08 | 1 | 1.5109E-13 | 1 | 1.2804E-01 | 0 |
| Test 5 | 2 | 7.0424E-01 | 0 | 8.7424E-01 | 0 | 3.6045E-01 | 0 | 1.5300E+00 | 0 | 2.2962E-01 | 0 | 7.0856E-01 | 0 | 1.6475E-01 | 0 |
| | 5 | 8.0431E-01 | 0 | 2.3346E-05 | 1 | 3.6045E-05 | 1 | NaN | 0 | 2.1715E-08 | 1 | 3.7825E-01 | 0 | NaN | 0 |
| | 7 | 4.1343E-07 | 1 | 1.7325E-05 | 1 | 2.4302E-05 | 1 | NaN | 0 | 6.1405E-02 | 0 | 1.0410E-06 | 1 | 1.6804E-01 | 0 |
| | 10 | 2.6084E-11 | 1 | 3.5284E-06 | 1 | 3.3762E-08 | 1 | 1.5316E-13 | 1 | 8.4471E-10 | 1 | 1.5943E-13 | 1 | 1.6404E-01 | 0 |
| Test 6 | 2 | 2.5921E-01 | 0 | 1.0184E-09 | 1 | 8.0245E-05 | 1 | 1.9788E-12 | 1 | 1.0390E-05 | 1 | 2.1674E-08 | 1 | 2.1307E-01 | 0 |
| | 5 | 2.4538E-06 | 1 | 1.0184E-09 | 1 | 8.0245E-05 | 1 | 1.9788E-12 | 1 | 1.0390E-05 | 1 | 2.1674E-08 | 1 | 2.9307E-01 | 0 |
| | 7 | 5.5983E-12 | 1 | 7.3631E-11 | 1 | 1.9572E-09 | 1 | 1.5306E-13 | 1 | 4.4054E-01 | 0 | 2.1882E-11 | 1 | 2.3294E-01 | 0 |
| | 10 | 1.5671E-13 | 1 | 7.8385E-01 | 0 | 2.4791E-13 | 1 | 1.3645E-13 | 1 | 1.0806E-06 | 1 | NaN | 0 | 2.3249E-01 | 0 |
| Test 7 | 2 | NaN | 0 | NaN | 0 | 2.6096E-01 | 0 | NaN | 0 | NaN | 0 | 1.6672E-01 | 0 | NaN | 0 |
| | 5 | 2.4538E-06 | 1 | 3.4967E-01 | 0 | 1.2298E-01 | 0 | 9.4860E-02 | 0 | 2.2962E-01 | 0 | 9.0029E-02 | 0 | 2.4494E-01 | 0 |
| | 7 | 1.1032E-09 | 1 | 1.4156E-08 | 1 | 4.3222E-10 | 1 | 1.5366E-13 | 1 | 1.0702E-06 | 1 | 8.7632E-07 | 1 | NaN | 0 |
| | 10 | 2.7012E-10 | 1 | 1.9015E-05 | 1 | 1.3749E-10 | 1 | 1.5066E-13 | 1 | 8.7484E-11 | 1 | 2.5842E-11 | 1 | NaN | 0 |
| Test 8 | 2 | 3.5450E-01 | 0 | 3.4967E-01 | 0 | 9.4924E-02 | 0 | NaN | 0 | 5.6002E-01 | 0 | 7.7421E-01 | 0 | NaN | 0 |
| | 5 | 3.5781E-01 | 0 | 3.4967E-01 | 0 | 5.7411E-05 | 1 | 1.5346E-13 | 1 | 7.7717E-02 | 0 | 5.6581E-04 | 1 | 4.6219E-05 | 1 |
| | 7 | NaN | 0 | 1.6902E-01 | 0 | 1.1743E-01 | 0 | NaN | 0 | 6.1821E-06 | 1 | NaN | 0 | 3.0340E-06 | 1 |
| | 10 | NaN | 0 | 9.3703E-09 | 1 | 4.1427E-11 | 1 | NaN | 0 | 1.5481E-13 | 1 | 7.6170E-12 | 1 | 3.9900E-01 | 0 |
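
The H columns in Table A4 record the decision of each Wilcoxon signed-rank test. H = 1 means the p-value falls below the significance level, so the difference between mSAR and the competing algorithm is considered significant. The reported pairs are consistent with a level of 0.05 (for example, 3.4391E-03 gives H = 1, while 6.1301E-02 gives H = 0). The Python sketch below illustrates that decision rule. It is not the authors' tooling, and the function names are hypothetical. It assumes a two-sided test, drops zero differences, and uses average ranks for ties. It also uses the normal approximation with tie correction and no continuity correction. In this sketch, a comparison in which every paired value is identical yields an undefined statistic, reported as NaN with H = 0. That is one plausible origin of the NaN entries, although the source does not say so.

```python
import math
from typing import List, Sequence, Tuple


def average_ranks(values: Sequence[float]) -> Tuple[List[float], List[int]]:
    """Rank values in ascending order (1-based); tied values share their average rank.

    Also returns the size of every tie group, needed for the variance correction.
    """
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    tie_sizes: List[int] = []
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared_rank = (start + end) / 2 + 1  # mean of the 1-based ranks start+1 .. end+1
        for k in range(start, end + 1):
            ranks[order[k]] = shared_rank
        tie_sizes.append(end - start + 1)
        start = end + 1
    return ranks, tie_sizes


def wilcoxon_signed_rank(
    x: Sequence[float], y: Sequence[float], alpha: float = 0.05
) -> Tuple[float, int]:
    """Two-sided paired test via the normal approximation; returns (p, H)."""
    if len(x) != len(y):
        raise ValueError("x and y must be paired samples of equal length")
    diffs = [a - b for a, b in zip(x, y) if a != b]  # drop zero differences
    n = len(diffs)
    if n == 0:
        return math.nan, 0  # statistic undefined when every pair is identical
    ranks, ties = average_ranks([abs(d) for d in diffs])
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)  # sum of positive ranks
    mean = n * (n + 1) / 4
    var = n * (n + 1) * (2 * n + 1) / 24 - sum(t ** 3 - t for t in ties) / 48
    z = (w_plus - mean) / math.sqrt(var)
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail probability
    return p, int(p < alpha)


if __name__ == "__main__":
    # Hypothetical per-run fitness values of two algorithms (illustrative data only).
    print(wilcoxon_signed_rank([i + 10 for i in range(20)], list(range(20))))
    print(wilcoxon_signed_rank([1.0, 2.0, 3.0], [1.0, 2.0, 3.0]))
```

For small numbers of runs, an exact null distribution is generally preferred to the normal approximation used here. The sketch keeps the approximation only for brevity.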

References

- Merzban, M.H.; Elbayoumi, M. Efficient solution of Otsu multilevel image thresholding: A comparative study. Expert Syst. Appl. 2019, 116, 299–309.

- Bhandari, A.K.; Singh, V.K.; Kumar, A.; Singh, G.K. Cuckoo search algorithm and wind driven optimization based study of satellite image segmentation for multilevel thresholding using Kapur’s entropy. Expert Syst. Appl. 2014, 41, 3538–3560.

- Sanei, S.H.R.; Fertig III, R.S. Uncorrelated volume element for stochastic modeling of microstructures based on local fiber volume fraction variation. Compos. Sci. Technol. 2015, 117, 191–198.

- Sarkar, S.; Sen, N.; Kundu, A.; Das, S.; Chaudhuri, S.S. A differential evolutionary multilevel segmentation of near infra-red images using Renyi’s entropy. In Proceedings of the International Conference on Frontiers of Intelligent Computing: Theory and Applications (FICTA), Odisha, India, 22–23 December 2013; Springer: Berlin/Heidelberg, Germany, 2013; pp. 699–706.

- Houssein, E.H.; Helmy, B.E.d.; Oliva, D.; Jangir, P.; Premkumar, M.; Elngar, A.A.; Shaban, H. An efficient multi-thresholding based COVID-19 CT images segmentation approach using an improved equilibrium optimizer. Biomed. Signal Process. Control 2022, 73, 103401.

- Abd El Aziz, M.; Ewees, A.A.; Hassanien, A.E. Whale optimization algorithm and moth-flame optimization for multilevel thresholding image segmentation. Expert Syst. Appl. 2017, 83, 242–256.

- Houssein, E.H.; Sayed, A. Dynamic Candidate Solution Boosted Beluga Whale Optimization Algorithm for Biomedical Classification. Mathematics 2023, 11, 707.

- Houssein, E.H.; Saad, M.R.; Hashim, F.A.; Shaban, H.; Hassaballah, M. Lévy flight distribution: A new metaheuristic algorithm for solving engineering optimization problems. Eng. Appl. Artif. Intell. 2020, 94, 103731.

- Houssein, E.H.; Mahdy, M.A.; Eldin, M.G.; Shebl, D.; Mohamed, W.M.; Abdel-Aty, M. Optimizing quantum cloning circuit parameters based on adaptive guided differential evolution algorithm. J. Adv. Res. 2021, 29, 147–157.

- Houssein, E.H.; Emam, M.M.; Ali, A.A.; Suganthan, P.N. Deep and machine learning techniques for medical imaging-based breast cancer: A comprehensive review. Expert Syst. Appl. 2021, 167, 114161.

- Houssein, E.H.; Hosney, M.E.; Oliva, D.; Mohamed, W.M.; Hassaballah, M. A novel hybrid Harris hawks optimization and support vector machines for drug design and discovery. Comput. Chem. Eng. 2020, 133, 106656.

- Houssein, E.H.; Saad, M.R.; Hussain, K.; Zhu, W.; Shaban, H.; Hassaballah, M. Optimal sink node placement in large scale wireless sensor networks based on Harris’ hawk optimization algorithm. IEEE Access 2020, 8, 19381–19397.

- Abualigah, L.; Al-Okbi, N.K.; Elaziz, M.A.; Houssein, E.H. Boosting Marine Predators Algorithm by Salp Swarm Algorithm for Multilevel Thresholding Image Segmentation. Multimed. Tools Appl. 2022, 81, 16707–16742.

- Neggaz, N.; Houssein, E.H.; Hussain, K. An efficient henry gas solubility optimization for feature selection. Expert Syst. Appl. 2020, 152, 113364.

- Hashim, F.A.; Houssein, E.H.; Mabrouk, M.S.; Al-Atabany, W.; Mirjalili, S. Henry gas solubility optimization: A novel physics-based algorithm. Future Gener. Comput. Syst. 2019, 101, 646–667.

- Hashim, F.A.; Hussain, K.; Houssein, E.H.; Mabrouk, M.S.; Al-Atabany, W. Archimedes optimization algorithm: A new metaheuristic algorithm for solving optimization problems. Appl. Intell. 2020, 51, 1531–1551.

- Eberhart, R.C.; Shi, Y. Comparison between genetic algorithms and particle swarm optimization. In Proceedings of the International Conference on Evolutionary Programming, San Diego, CA, USA, 25–27 March 1998; Springer: Berlin/Heidelberg, Germany, 1998; pp. 611–616.