Abstract

A hybrid metaheuristic methodology that combines the Red-billed Blue Magpie Optimization (RBMO) algorithm with the Aquila Optimizer (AO) is introduced in this work as the RBMOAO method. The novel algorithm addresses a critical shortcoming of the standard AO: its exploration-to-exploitation ratio across different optimization stages is inefficient, yielding premature convergence and low diversity within the population. This is achieved by using RBMO’s Group-Based Directional Perturbation (GDP) and its dynamic convergence factor (CF) as part of the methodology. The early stages of the optimization process are characterized by a grouping methodology to maintain population diversity through coordinated exploration across subgroups of varying sizes using GDP. Later iterations are characterized by a CF-guided updating process that increases the resolution of the search for the best areas, thereby improving convergence precision without sacrificing solution quality. Empirical testing of the proposed methodology using the CEC 2015 and CEC 2020 test sets demonstrated RBMOAO’s superior performance compared to other metaheuristics, outperforming other optimizers in 73.33% of CEC 2015 functions and 80% of CEC 2020 functions, with statistical significance in the increased precision and robustness of solutions across all problem types. Additionally, the RBMOAO methodology demonstrated outstanding performance in constrained engineering design problems. In addition to optimization, an RBMOAO-optimized ensemble architecture was implemented to predict cybersecurity intrusion threats, achieving an accuracy of 89.6%. Through the dynamic calibration of the base learner weights via metaheuristic search, the RBMOAO ensemble achieved the top ranking. These results illustrate the wide range of applications of the RBMOAO methodology and provide support for its deployment in the context of high-stakes predictive analytics.

1. Introduction

Nature-inspired optimization algorithms are a fundamental part of computational intelligence, providing engineers with powerful tools to solve problems in all areas of engineering design, machine learning, energy, and scientific modeling [1,2,3]. To date, nature-inspired optimization algorithms have provided an effective way to find the global optimum or near-optimum solution to problems that are difficult to solve due to their high dimensionality, non-linearity, multiple optima, and strict constraints [4,5]. Recent research has introduced new nature-inspired optimization algorithms, such as the Aquila Optimizer (AO) [6], Red-billed Blue Magpie Optimization (RBMO) [7], Mountain Gazelle Optimizer [8], and Dung Beetle Optimizer [9], which can be used to effectively find the global optimum solution to these types of problems [10,11]. Nature-inspired optimization algorithms have been successfully applied to many different engineering problems. They have been utilized to find the minimum weight of structures, given that the safety constraints are satisfied, through structural optimization. They have been used to determine the optimal parameters of fuel cells [12] and the optimal configuration of photovoltaic arrays [13]. They have also been used to optimize ensemble models used in the area of cybersecurity to maximize the accuracy of intrusion detection systems [14]. These applications demonstrate that nature-inspired optimization algorithms are versatile, converting theoretical research into practical engineering solutions. It is worth noting, however, that not all nature-inspired algorithms are equal in their justification of biological analogy. A growing body of research has critiqued metaphor-based optimization, arguing that biological and physical analogies are sometimes employed merely as narrative devices that obscure rather than illuminate the underlying search mechanics. 
While such concerns are valid for algorithms where the metaphor serves no functional purpose, they do not apply to nature-inspired methods whose biological analogs map directly onto well-defined and interpretable search strategies [15,16]. A recent example is the Aquila Optimizer (AO) [6], whose hunting-inspired mechanisms provide a principled basis for designing exploration and exploitation strategies rather than acting as a superficial label, forming the foundation of the hybrid method proposed in this work.

The Aquila Optimizer (AO) combines adaptive search strategies modeled on the hunting patterns of the eagle with Lévy flight to produce a search strategy that is competitive on multimodal benchmarks [6]. The AO uses several different search mechanisms, each designed to operate in either an exploratory or an exploitative manner, so that the search can alternate between the two modes. Despite this flexibility, the AO has two major limitations. The first is that it has no explicit method of coordinating the movement of elite individuals during the search; therefore, many promising regions of the search space that are discovered early are not sufficiently exploited. The second concerns the switch between the exploratory and exploitative modes, which is made statically according to the number of iterations completed. As a result, when the AO is applied to problems with rugged fitness landscapes, the switch often results in premature stagnation [17,18]. Previous attempts to enhance the AO have been largely limited to parameter adaptation or hybridization with a single operator. These modifications improve performance on particular problem classes but do not address the lack of an explicit mechanism for coordinating the search process. More specifically, none of these enhancements includes a biologically motivated mechanism for adjusting the intensity of the search relative to the diversity present in the population at any given time. One way to improve the AO would be to simulate natural foraging behaviors, which adjust the trade-off between exploration and exploitation in response to environmental feedback.

To address these limitations, this research introduces RBMOAO as a hybrid metaheuristic that combines the heterogeneous grouping (GDP) and convergence factor (CF) components of RBMO with the fundamental search operators of the AO. During both the exploration and exploitation stages of the search, the RBMO component creates dynamic subgroups that enable elite agents to share information and improve global convergence. The CF component provides adaptive modulation of the step size during the search process via a non-linear decay function that ensures aggressive exploration at earlier stages of the search and increasingly refined exploitation at later stages. Through the integration of both components into a single hybrid metaheuristic, RBMOAO provides a means of phase-adaptive search behaviors that dynamically adjust the search between exploration and exploitation based upon the current level of convergence. This research has three primary contributions:

- The development of a biologically inspired hybridization framework that addresses the static nature of the AO’s search dynamics through the use of RBMO’s GDP and CF mechanisms;

- The extensive empirical evaluation of RBMOAO against standardized benchmarks and real-world engineering design problems, providing evidence of its superiority in terms of solution quality and robustness;

- The application of RBMOAO’s ensemble learning for high-stakes predictive analytics, demonstrating its applicability outside of the traditional domain of optimization.

The structure of this research continues with Section 2 describing existing research on AO enhancements and metaheuristic approaches. Section 3 provides an overview of the theoretical foundation of the AO and RBMO, then describes the detailed formulation of the approach using the new RBMOAO method. Experimental results are presented in Section 4 for benchmark functions, engineering problems, and cybersecurity prediction. Section 5 provides the conclusion and points to possible avenues for further study.

2. Literature Review

Since its introduction, the AO has attracted significant academic interest, and several recent papers have proposed modifications to improve its convergence speed, solution quality, and stability across a variety of problem types. This section reviews some of the recent works, which can be grouped into two categories:

2.1. Aquila Optimizer-Based Approaches

Fu et al. [19] introduced the Tent-Enhanced Aquila Optimizer (TEAO), which employs a chaotic tent map for population initialization and new update rules to balance exploration and exploitation. The tent map generates a diverse initial population, while new formulas reduce the likelihood of premature convergence. Experimental performance shows improved exploitation and exploration. Cui et al. [20] proposed a Multi-Strategy Boosted Aquila Optimizer (PGAO). The PGAO uses a chaotic map for initialization, pinhole imaging learning to generate opposite search directions, a nonlinear switching factor to balance exploration and exploitation, and a golden sine operator for local refinement. Kan et al. [21] introduced a Multi-Strategy Aquila Optimizer (MSAO) that introduces a random sub-dimension update mechanism, memory and dream-sharing strategies, and adaptive parameters with dynamic opposition-based learning. These hybrid strategies improve exploitation and maintain solution diversity. The proposed MSAO demonstrated faster convergence and higher solution accuracy. Zeng et al. [22] introduced the Spiral Gaussian Mutation Aquila Optimizer (SGAO). The SGAO introduces a nonlinear control factor, spiral search strategy, and dynamic Gaussian mutation to adjust exploration and exploitation adaptively, achieving significant improvements in solution accuracy. Bai et al. [23] introduced the Multi-Strategy Improved Aquila Optimizer (MIAO), which eliminates the control parameters in the flight phase using a phasor operator and introduces a flow-direction operator. These operators enhance exploration and avoid stagnation. Experimental results showed improved solution accuracy. Sharma et al. [24] developed the Improved Aquila Optimizer (IAO). The IAO models additional hunting behaviors, such as low flight with leisurely descent, vertical dives, contour flights, and swooping maneuvers, to improve both exploration and exploitation. 
The proposed algorithm achieved superior solution accuracy compared with the basic AO and other metaheuristics. Wang et al. [25] proposed the Velocity-Aided Adaptive Aquila Optimizer (VAIAO). The VAIAO introduces velocity and acceleration terms into the position update formula, as well as adaptive opposition-based learning. These enhancements improve global exploration and speed up convergence. Abualigah et al. [26] developed the Locality Opposition-Based Learning Aquila Optimizer (LOBLAO), which incorporates locality opposition-based learning and a mutation search strategy to diversify initial solutions. These mechanisms enlarge exploration, maintain diversity, and avoid local optima. The LOBLAO consistently achieved improved results compared to the traditional AO. Yu et al. [27] proposed the Restart Opposition Chaotic Aquila Optimizer (mAO). The mAO integrates a restart strategy, opposition-based learning, and chaotic local search to increase population diversity and escape local optima. The results showed significant improvement over the compared algorithms.

2.2. Hybrid Aquila Optimizer Approaches

Xiao et al. [28] introduced the Improved Hybrid Aquila Optimizer and African Vultures Optimization Algorithm (IHAOAVOA). It combines the AO’s exploration with the exploitation ability of the African Vultures Optimization Algorithm. It uses composite opposition-based learning and fitness-distance balance selection to maintain diversity and avoid local optima. The hybrid algorithm delivered superior accuracy, convergence speed, and robustness compared with the AO and other swarm algorithms. Wang et al. [29] proposed an Improved Hybrid Aquila Optimizer and Harris Hawks Optimization (IHAOHHO). This hybrid retains the AO’s explorative behavior and Harris Hawks Optimization’s exploitative hunting. It introduces representative-based hunting and opposition-based learning to improve search diversity and help escape local optima. IHAOHHO demonstrated superior performance. Zhao et al. [30] developed a Heterogeneous Aquila Optimizer (HAO). The HAO introduces a multiple-updating principle to increase heterogeneity within the population, enhancing intensification and diversification. The proposed HAO demonstrated improved robustness over the AO. Al-Majidi et al. [18] developed the Hybrid Aquila Optimizer–Modified Sine Cosine Algorithm (HSCAO). HSCAO runs the AO and the Modified Sine Cosine Algorithm. The AO offers strong global exploration, while MSCA provides better local exploitation. The hybrid leverages the strengths of both and mitigates their weaknesses. The proposed optimizer demonstrated improved performance.

Hybridization of the AO with other techniques has improved optimization performance. A key shortcoming of the reviewed methods is that they do not include any form of dynamic partitioning of populations into multiple heterogeneous groups of cooperating individuals or dynamic adjustment of the step size based upon continuously updated indicators of convergence. This study proposes a hybrid method, RBMOAO, that combines GDP-based direction perturbations and CFs from RBMO as an additional layer of organization and control, complementing the AO’s existing search operators rather than duplicating them.

3. Methodology

3.1. Aquila Optimizer

The Aquila Optimizer (AO) is a nature-inspired metaheuristic algorithm that models the predatory hunting methods of Aquila birds as they find and catch a target in a natural environment [6]. The AO is a population-based stochastic optimization technique. It starts the search process by initializing a population matrix ($X$) of $N$ candidate solutions over a $D$-dimensional search space [26]. Each candidate solution is initialized using a uniform random sampling method within pre-defined boundary limits, as described by Equation (1).

$$X_{i,j} = rand \times (UB_j - LB_j) + LB_j, \quad i = 1, \ldots, N, \; j = 1, \ldots, D \tag{1}$$

where $UB_j$ and $LB_j$ are the upper and lower boundaries of the j-th dimension and $rand$ ∈ [0, 1] is a random value from a uniform distribution. The optimization procedure switches between exploration and exploitation until a set of convergence criteria is met. The AO has two global search strategies. The first strategy uses a combination of the best current solution ($X_b$) and the population mean ($X_M$) to drive candidate solutions toward the most promising areas, as defined in Equations (2) and (3).

In this equation, $t$ represents the current iteration, and $T$ represents the maximum number of iterations. The factor expressed as ($1 - t/T$) is a linearly decreasing function used to maintain the exploratory ability throughout the early iterations. The second global search strategy utilizes Lévy flight dynamics to improve search diversity. Candidate solutions are updated according to Equation (4).

where $X(t+1)$ represents the candidate solution at iteration $t+1$, $X_b$ represents the best current solution, $X_R$ represents a randomly selected solution from the population, and $(y - x)$ represents a spiral trajectory given by Equation (5).

where θ is the angle of rotation and $r$ is the radius of the circle, which can be computed with Equation (6).

The constants are ω = 0.005 and $r_1$ ∈ [1, 20]. The Lévy distribution is represented by Equations (7) and (8).

where $u$ and $v$ are random variables and $\beta$ = 1.5. During the exploitation phase, the AO uses two different update rules to intensify the search around high-quality regions. The first rule combines boundary-based randomization with directional movement toward the best solution, as defined in Equation (9).

where $\alpha$ and $\delta$ are adaptive control parameters used to govern the intensity of exploitation. The second exploitation strategy uses a quality-dependent factor ($QF$) to modulate solution updates based upon convergence progress, as expressed in Equations (10)–(12).

This formulation allows for dynamic adjustment of the search behavior as the algorithm advances while maintaining an optimal balance between intensifying the search and controlling diversification to avoid premature convergence.
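The Lévy step referenced in Equations (7) and (8) can be illustrated with a minimal Python sketch. It assumes Mantegna's method, with Gaussian draws for the two random variables and a hypothetical scale factor `scale = 0.01`; only β = 1.5 comes from the text above, and the function names are illustrative rather than taken from the AO reference implementation.

```python
import math
import random


def levy_sigma(beta=1.5):
    """Scale of the numerator draw in Mantegna's method for exponent beta."""
    num = math.gamma(1.0 + beta) * math.sin(math.pi * beta / 2.0)
    den = math.gamma((1.0 + beta) / 2.0) * beta * 2.0 ** ((beta - 1.0) / 2.0)
    return (num / den) ** (1.0 / beta)


def levy_step(dim, beta=1.5, scale=0.01, rng=None):
    """Return one heavy-tailed step per dimension.

    `scale` is an assumed constant, not a value stated in the text.
    """
    rng = rng or random.Random()
    sigma = levy_sigma(beta)
    step = []
    for _ in range(dim):
        u = rng.gauss(0.0, sigma)   # numerator draw
        v = rng.gauss(0.0, 1.0)     # denominator draw
        while v == 0.0:             # avoid division by zero in a degenerate draw
            v = rng.gauss(0.0, 1.0)
        step.append(scale * u / abs(v) ** (1.0 / beta))
    return step
```

Most steps produced this way are small, with occasional large jumps, which is the property the second global search strategy relies on to diversify the search.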

3.2. Red-Billed Blue Magpie Optimization

Fu et al. (2024) [7] introduced Red-billed Blue Magpie Optimization (RBMO) as a new evolutionary metaheuristic inspired by the foraging behavior of the red-billed blue magpie [31], a corvid species endemic to East and South East Asia. This bird species has been shown to exhibit complex hunting behaviors, such as the formation of heterogeneous groups while searching for food, coordination of attacks to capture prey, and strategic storage of food for later use. These behaviors are used to both guide how RBMO balances its exploitation–exploration process and preserve high-quality solutions during the optimization process. RBMO starts with initialization of a population of candidate solutions at random within the possible range of values of each decision variable. Each decision variable is generated using a uniform random distribution over all possible values, given the problem constraints as defined in Equation (13).

where $UB$ and $LB$ are the upper- and lower-bound vectors for the search space.
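Both the AO (Equation (1)) and RBMO (Equation (13)) start from uniform random sampling inside the bound vectors. The following Python sketch shows that step under the assumption that bounds are given as per-dimension lists; `init_population` is a hypothetical helper name, and the example sizes mirror the benchmark setup reported later in the paper.

```python
import random


def init_population(n_agents, lower, upper, rng=None):
    """Sample n_agents points uniformly inside the box [lower_j, upper_j]."""
    rng = rng or random.Random()
    if len(lower) != len(upper):
        raise ValueError("lower and upper must have the same length")
    return [
        # rand * (UB_j - LB_j) + LB_j for every dimension j
        [lo + rng.random() * (hi - lo) for lo, hi in zip(lower, upper)]
        for _ in range(n_agents)
    ]


# 30 agents in 30 dimensions with bounds of -100 and 100
population = init_population(30, [-100.0] * 30, [100.0] * 30, random.Random(0))
```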

3.2.1. RBMO Group-Based Directional Perturbation (GDP)

The exploratory phase of RBMO utilizes heterogeneous grouping of red-billed blue magpies when searching for food. At each time step, the candidate solutions are divided into smaller cooperative units (each containing 2–5 solutions) and/or large groups (containing 10 to N solutions). The size of the groups varies depending on the environment being searched for food sources. Candidate solutions in the smaller groupings are updated based on their positions, as given in Equation (14).

The update rule used to explore large clusters is given by Equation (15).

where q ∈ [10, N] is the cluster size. The algorithm probabilistically chooses between these two ways of exploring using a “balance” coefficient (ε, usually set at 0.5), as defined in Equation (16).

The dual mode of exploration for the magpie population enhances the global search capabilities of the population by switching between a process of localized information exchange within a small group of agents and one of broader information dissemination throughout a larger aggregation of agents.
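The group-based exploration described above can be sketched in Python as follows. This is an illustration under stated assumptions, not the authors' implementation: each agent moves by the difference between the mean of a randomly sampled group and a randomly chosen peer, scaled by a uniform random factor. The group-size ranges (2–5 and 10–N) and ε = 0.5 come from the text; the exact form of the update, the mapping of the `r < epsilon` branch to the large cluster (taken from the order shown in Algorithm 1), and the name `gdp_update` are assumptions.

```python
import random


def gdp_update(population, i, epsilon=0.5, rng=None):
    """Propose a new position for agent i from a randomly sized peer group."""
    rng = rng or random.Random()
    n = len(population)
    if n < 2:
        raise ValueError("need at least two agents to form a group")
    if rng.random() < epsilon:
        size = rng.randint(min(10, n), n)   # large cluster: 10..N agents
    else:
        size = rng.randint(2, min(5, n))    # small group: 2..5 agents
    group = rng.sample(population, size)    # group members, without replacement
    peer = rng.choice(population)           # randomly selected reference agent
    scale = rng.random()                    # random learning factor in [0, 1)
    current = population[i]
    return [
        current[j] + (sum(agent[j] for agent in group) / size - peer[j]) * scale
        for j in range(len(current))
    ]
```

Small groups give noisy, local direction estimates, while large clusters pull agents along a direction close to the population mean, which is how the two modes trade localized exchange against broader dissemination.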

3.2.2. RBMO’s CF-Based Convergence

The exploitation phase models how magpies work together to hunt prey: multiple agents converge on identified food sources through a more intense local search than in the exploration phase. The position updates use the current best solution ($X_b$) and a step-size control parameter ($CF$) that decreases with the number of iterations completed so that the population can converge more precisely, as defined in Equation (17).

where $t$ and $T$ denote the current and maximum number of iterations. Equation (18) represents a case in which there are only a few agents exploiting in a small group.

When there are many agents in a large group, exploitation is defined as shown in Equation (19).

where $randn$ is a random variable drawn from the standard normal distribution ($\mathcal{N}(0, 1)$). The controlled randomness introduced by the normal distribution helps prevent the population from stagnating early in the optimization process through premature convergence. The choice between group sizes follows the same rule as in the exploration phase, with a threshold of ε. To maintain high-quality solutions and prevent regression during the stochastic position updates, RBMO uses a greedy selection mechanism inspired by magpies’ food-caching behavior. Each time a new solution is generated, RBMO evaluates its quality with respect to the objective function (f(⋅)) and keeps the better solution, as given in Equation (20).

where $f(X_i^{old})$ and $f(X_i^{new})$ represent the quality of the previous and new solution, respectively. By storing the better solution after each generation, RBMO preserves the quality of the population over the entire optimization process. Together, the mechanisms described above allow RBMO to achieve a good trade-off between exploration and exploitation while preserving solution quality throughout the run. These characteristics contribute to the effectiveness of RBMO on complex, multimodal optimization problems.
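The CF-guided exploitation and the greedy selection rule can be sketched together in Python. The decay law `(1 - t/T) ** (2 * t/T)` is an assumption based on the form commonly given for RBMO [7]; the text above states only that the step-size parameter decreases with the iteration count. The update form, the mapping of the `r < epsilon` branch to the small group (following the order in Algorithm 1), and all function names are likewise illustrative.

```python
import random


def convergence_factor(t, t_max):
    """Assumed nonlinear decay from 1 (t = 0) to 0 (t = t_max)."""
    frac = min(max(t / t_max, 0.0), 1.0)   # clamp to keep the base non-negative
    return (1.0 - frac) ** (2.0 * frac)


def cf_update(population, i, best, t, t_max, epsilon=0.5, rng=None):
    """Propose a position near `best`, with a step that shrinks as t grows."""
    rng = rng or random.Random()
    n = len(population)
    if n < 2:
        raise ValueError("need at least two agents to form a group")
    if rng.random() < epsilon:
        size = rng.randint(2, min(5, n))    # small group
    else:
        size = rng.randint(min(10, n), n)   # large cluster
    group = rng.sample(population, size)
    cf = convergence_factor(t, t_max)
    noise = rng.gauss(0.0, 1.0)             # standard normal perturbation
    current = population[i]
    return [
        best[j] + cf * (sum(a[j] for a in group) / size - current[j]) * noise
        for j in range(len(best))
    ]


def greedy_select(current, candidate, objective):
    """Keep the candidate only if it strictly improves a minimization objective."""
    return candidate if objective(candidate) < objective(current) else current
```

Under this assumed decay the factor equals 0.5 at the halfway point and reaches 0 at the final iteration, so late proposals collapse onto the best solution found so far.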

3.3. Proposed RBMOAO

The AO is chosen for its potential to offer mechanisms complementary to those of RBMO. The AO lacks a method to coordinate among elite solutions and employs a static phase switch (t ≤ 2T/3) that does not adapt to the population’s convergence state. RBMO addresses the coordination issue through the use of its GDP mechanism, which divides the agents into cooperative subgroups (small groups of 2–5 and large groups of 10–N), allowing for a structured sharing of information, and the CF mechanism, which replaces the static phase transition with a continuous decrease in the step size. An alternative hybridization with PSO, GA, or DE could create redundant mechanisms of operation. On the other hand, RBMO offers two fundamentally new mechanisms of operation compared to existing optimization algorithms: dynamic formation of subgroups and adaptive modulation of intensities. Finally, several established works in the literature show that the performance of these optimizers is outstanding.

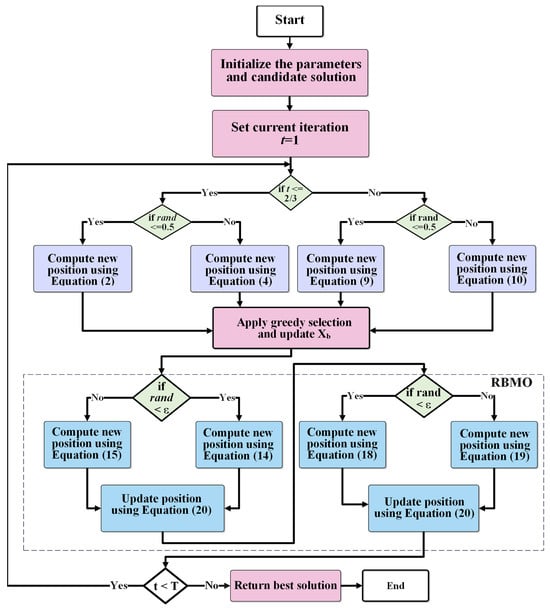

To address the shortcomings of the original AO, specifically its propensity for premature convergence and reduced diversity in later generations, we propose a hybrid metaheuristic called the RBMOAO. The RBMOAO combines the stage-based exploratory–exploitative paradigm inherent in the AO with the group-based intelligence and CF convergence elements from RBMO. The RBMOAO starts by initializing a set of candidate solutions within the search space specified for the optimization problem. Each candidate solution is a potential solution to the optimization problem and is evaluated using the objective function. The best candidate solution found to date is stored as the global elite, referred to as $X_b$. The algorithm operates through T generations and dynamically transitions between exploration and exploitation generations based on the current generation number. In the exploration generation, the RBMOAO utilizes two distinct methodologies. In the first stage, an AO-inspired movement provides wide-ranging moves and then converges toward promising areas using Equations (2)–(10). In the second stage, movement inspired by RBMO is applied to the population, and Group-Based Directional Perturbation (GDP) is employed as expressed in Equations (14) and (15). The GDP methodology generates updates to the agents’ positions based on the mean of a random sample of their peers, scaled by random learning factors. In the exploitation generation, the RBMOAO performs an intensified search around the global elite using a time-decay feedback exploitation scheme ($CF$), as defined in Equation (17). Here, the scheme attracts each agent towards the global elite, which is perturbed by Gaussian noise and scaled by a feedback control parameter according to Equations (18) and (19). This creates a gradual reduction in the distance traveled at each iteration to improve the accuracy of convergence without losing diversity in earlier generations. Following each generation, an agent maintains its current position if its new fitness value is greater (that is, worse under minimization) than its current fitness value. This maintains the highest-quality candidate solutions and greatly increases reliability and resistance to regression in the face of stochastic behavior. The hybridization of these update methods results in an optimizer that retains global search ability throughout its run while concentrating its search on the areas of the landscape that are most promising. The pseudo code of the RBMOAO is given in Algorithm 1. The flow chart of the proposed optimizer is given in Figure 1.

| Algorithm 1: Pseudo Code of the RBMOAO |

| Set AO and RBMO parameters and population size N. |

| Set Maximum iteration number to T |

| Initialize positions Xi (i = 1, 2, …, N) using Equation (1) |

| Evaluate fitness: fi = f(Xi) for all i ∈ {1, …, N} |

| Identify initial best: Xb = arg_min{Xi} fi; fb = min(fi) |

| While (t ≤ T) |

| For i = 1 to N |

| If (t ≤ 2T/3) |

| If (r < 0.5) |

| Compute new position Xnew using Equation (2) |

| Else |

| Compute new position Xnew using Equation (4) |

| End if |

| Else |

| If (r < 0.5) |

| Compute new position Xnew using Equation (9) |

| Else |

| Compute new position Xnew using Equation (10) |

| End if |

| End if |

| If f(Xnew) < f(Xi): #Evaluate fitness of new position |

| Xi = Xnew |

| fi = f(Xnew) |

| Else |

| Xi = Xi |

| End If |

| End For |

| Update Xb and fb |

| For i = 1 to N |

| If (r < ε) |

| Compute new position Xnew with Equation (15) |

| Else |

| Compute new position Xnew with Equation (14) |

| End If |

| If f(Xnew) < f(Xi): #Evaluate fitness of new position |

| Xi = Xnew |

| fi = f(Xnew) |

| Else |

| Xi = Xi |

| End If |

| End For |

| For i = 1 to N |

| If (r < ε) |

| Compute new position Xnew with Equation (18) |

| Else |

| Compute new position Xnew with Equation (19) |

| End if |

| If f(Xnew) < f(Xi): #Evaluate fitness of new position |

| Xi = Xnew |

| fi = f(Xnew) |

| Else |

| Xi = Xi |

| End If |

| End For |

| t = t + 1 |

| End While |

| Return Xb and fb |

Figure 1.

Flowchart of the Proposed RBMOAO.
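The control flow of Algorithm 1 (three sequential update phases per iteration, each followed by greedy selection) can be sketched as a small Python skeleton. The move operators here are deliberately generic placeholders and do not implement the AO, GDP, or CF equations; `run_phases`, `placeholder_move`, and the sphere objective are hypothetical names chosen for illustration. The sketch also updates agents in place within a phase and refreshes the best agent before every phase, both of which are simplifying assumptions.

```python
import random


def sphere(x):
    """Simple minimization objective used only for demonstration."""
    return sum(v * v for v in x)


def best_index(fitness):
    return min(range(len(fitness)), key=fitness.__getitem__)


def run_phases(objective, population, phases, t_max):
    """Run t_max iterations; each phase proposes moves kept only if they improve."""
    population = [list(x) for x in population]
    fitness = [objective(x) for x in population]
    history = []
    for t in range(1, t_max + 1):
        for make_move in phases:
            best = population[best_index(fitness)]   # current global elite
            move = make_move(best, t, t_max)
            for i in range(len(population)):
                candidate = move(population, i)
                value = objective(candidate)
                if value < fitness[i]:               # greedy selection
                    population[i], fitness[i] = candidate, value
        history.append(min(fitness))
    b = best_index(fitness)
    return population[b], fitness[b], history


def placeholder_move(step, rng):
    """Stand-in operator: drift toward the elite plus shrinking Gaussian noise."""
    def make_move(best, t, t_max):
        shrink = step * (1.0 - t / t_max)
        def move(population, i):
            return [x + rng.random() * (b - x) + shrink * rng.gauss(0.0, 1.0)
                    for x, b in zip(population[i], best)]
        return move
    return make_move


rng = random.Random(0)
start = [[rng.uniform(-100.0, 100.0) for _ in range(5)] for _ in range(10)]
phases = [placeholder_move(s, rng) for s in (10.0, 5.0, 1.0)]
best_x, best_f, history = run_phases(sphere, start, phases, 50)
```

Because every phase ends with greedy selection, the recorded best fitness can never increase from one iteration to the next, regardless of which move operators are plugged in.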

Computational Complexity of the RBMOAO

The computational complexity of the RBMOAO algorithm depends on three parameters: the population size (N), the number of solution dimensions (D), and the maximum number of iterations (T). In each iteration, there are three position-updating phases: an AO-inspired phase that uses conditional exploration/exploitation rules to govern agent movements and two RBMO phases that implement group-based movements and convergence factor-driven refinements. In each phase, the algorithm performs vector operations on the positions of all N agents in each of the D dimensions. Thus, the time complexity of each phase is $O(N \times D)$. Following each phase, as shown in Algorithm 1, the algorithm evaluates the fitness of all candidate solutions and uses a greedy selection process to preserve the superior candidates. The fitness evaluation and greedy selection process have a time complexity of $O(N \times C_f)$, where $C_f$ represents the time required to evaluate the objective function once. Therefore, the time complexity of one iteration of the RBMOAO algorithm is $O(N \times (D + C_f))$. Since the RBMOAO algorithm iterates through its phases T times, the asymptotic time complexity is $O(T \times N \times (D + C_f))$. Although the RBMOAO has three position-updating phases, its time complexity remains comparable to that of other population-based metaheuristics because the sequential phases contribute only a multiplicative constant. In return for this additional computation, the three phases improve the solution quality, convergence behavior, and search diversification of the RBMOAO.

4. Experimental Results and Evaluation

In order to prove the validity of the empirical results and to support the reliability of the proposed methodology, this research uses both the 2015 and 2020 Congress on Evolutionary Computation (CEC) benchmark suites to standardize testing of algorithms [32,33]. Each of these suites contains a large variety of different mathematical functions structured to test the ability of optimizers to perform various types of optimizations under controlled conditions. In the 2015 CEC suite, each test function is classified according to a specific type of function. Functions F1–F2 are categorized as unimodal, and they are utilized to determine how accurately an algorithm can converge toward its optimal solution. Functions F3–F5 are defined as multimodal, and they are designed to verify whether an algorithm can find the global optimum by escaping from a local optimum. Functions F6–F8 represent hybrid formulations, and they are utilized to examine the exploratory behavior of an algorithm within a multi-dimensional search space that contains a combination of heterogeneous search spaces. Functions F9–F15 include composite formulations, and they are designed to test the robustness of an algorithm under complex, non-separable conditions that may include weighted rotations and/or biases.

The 2020 CEC suite extends this framework with an analogous classification scheme as follows: Function F16 represents a unimodal function. Functions F17–F19 are defined as multimodal, and they are designed to verify whether an algorithm can identify the global optimum by escaping from a local optimum. Functions F20–F22 are classified as hybrid formulations, and they are utilized to examine the exploratory behavior of an algorithm within a multi-dimensional search space that contains a combination of heterogeneous search spaces. Functions F23–F25 represent advanced composite formulations, and they are designed to test the robustness of an algorithm under complex, non-linear, and potentially deceptive conditions that may include rotations and/or biases. All the metaheuristics used to compare the RBMOAO algorithm used the same parameters for all the experiments in order to make the comparison fair and to allow for a quantitative analysis of the performances of the algorithms across the aforementioned benchmark categories. A total of 5000 iterations was the common stop condition for all the experiments, while 30 independent repetitions were run to obtain statistically reliable results. The solution vector had a dimensionality of 30 for all the experiments, and the population size was always set to 30 individuals for all the algorithms. The lower and upper search boundaries are between −100 and 100. Finally, the RBMOAO algorithm underwent a comparative study against the most recent and widely used metaheuristics in order to analyze its relative performance using the previously mentioned benchmarks. 
These algorithms include the original Aquila Optimizer (AO) [6], Augmented Grey Wolf Optimizer and Cuckoo Search (AGWOCS) [34], the Grey Wolf Optimizer (GWO) [35], Harris Hawks Optimization (HHO) [36], the Opposition-Based Learning Path Finder Algorithm (OBLPFA) [37], the PathFinder Algorithm (PFA) [38], Sand Cat Swarm Optimization (SCSO) [39], and the Sea Lion Optimization Algorithm (SLO) [40]. Table 1 shows the parameters of each algorithm. All experiments were conducted on a workstation equipped with an Intel Core i7 processor and 16 GB of RAM. Python 3.10.12 was used with NumPy 1.24.3. In this section, bold values in the table indicate the best results.
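The experimental protocol described above can be captured in a short Python sketch. The field values (5000 iterations, 30 runs, 30 dimensions, 30 agents, bounds of −100 and 100) come from the text; the class and function names are hypothetical, and the choice of best, mean, and standard deviation as summary statistics is an assumption, since this section does not state which statistics the result tables report.

```python
from dataclasses import dataclass
import statistics


@dataclass(frozen=True)
class BenchmarkSetup:
    max_iterations: int = 5000
    independent_runs: int = 30
    dimension: int = 30
    population_size: int = 30
    lower_bound: float = -100.0
    upper_bound: float = 100.0


def run_trials(run_once, setup):
    """Call run_once(setup, seed) once per independent run and collect results."""
    return [run_once(setup, seed) for seed in range(setup.independent_runs)]


def summarize(final_values):
    """Summary statistics over the final objective values of all runs."""
    return {
        "best": min(final_values),
        "mean": statistics.fmean(final_values),
        "std": statistics.stdev(final_values),
    }
```

Passing the same `BenchmarkSetup` instance and the same seeds to every optimizer is one simple way to keep the comparison conditions identical across algorithms.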

Table 1.

Optimizer hyperparameters.

4.1. CEC 2015 and CEC 2020 Experiment

The overall empirical performance analysis conducted on the CEC 2015 benchmark suite clearly shows that the RBMOAO produces better overall results than other optimized versions of the hybridized algorithms and the original version on the CEC 2015 benchmark suite, as illustrated in Table 2. Analysis of the performance of the hybrid optimizer on unimodal functions (F1–F2), as indicated by how well each algorithm converges towards global minimum values within smooth, convex landscapes, shows that the RBMOAO consistently produces lower objective function values than competing hybridized versions of other algorithms and their original versions, such as AGWOCS, GWO, HHO, and OBLPFA. Therefore, this illustrates the improved exploitation capabilities of the RBMOAO on unimodal function sets. On multimodal function sets (F4–F5), which include multiple local optima to test the exploratory capabilities of an optimizer, the RBMOAO demonstrated greater robustness than the competing optimizers. Since the hybrid optimizer is able to explore the fitness landscape effectively, without premature convergence of the search process, the effectiveness of the hybrid optimizer’s two-phase update mechanism can be attributed to the combination of the AO’s Lévy flight enhanced exploration and RBMO’s heterogeneous grouping strategy to provide sufficient diversity in the search space at any time during the search process. As regards hybrid function sets (F6–F8), which are created using rotated and shifted subfunctions to represent non-separable problems, the hybrid optimizer produced competitive results against the competing algorithms. Although GWO was found to produce a slightly better value than the RBMOAO on function F6, which is consistent with the implications of the No Free Lunch Theorem, i.e., that there does not exist one optimization algorithm that will perform best on all types of problems, the RBMOAO performed statistically significantly better on F7 and F8. 
This pattern supports the hypothesis that the hybridization in the RBMOAO framework provides a good balance between exploration and exploitation, improving search efficiency on partially separable, moderately complex landscapes. On the composition functions (F9–F15), which create highly deceptive landscapes by combining multiple transformed functions with different characteristics, the RBMOAO achieved better performance on F10–F15, and GWO achieved better performance on F9. Despite this marginal difference on F9, the RBMOAO maintains competitive results on most of the composition functions, demonstrating that the solution quality of the hybrid framework does not degrade drastically on complex composite landscapes. In general, these results support the idea that the RBMOAO framework is versatile: it is particularly proficient in both unimodal convergence and multimodal exploration, and it exhibits reasonable performance on many highly complex composite landscapes. The empirical evidence supports the notion that the synergistic integration of the AO and RBMO mechanisms yields a metaheuristic that can address a wide variety of optimization tasks without problem-specific parameter tuning.

Table 2.

Results of optimizers on CEC 2015.

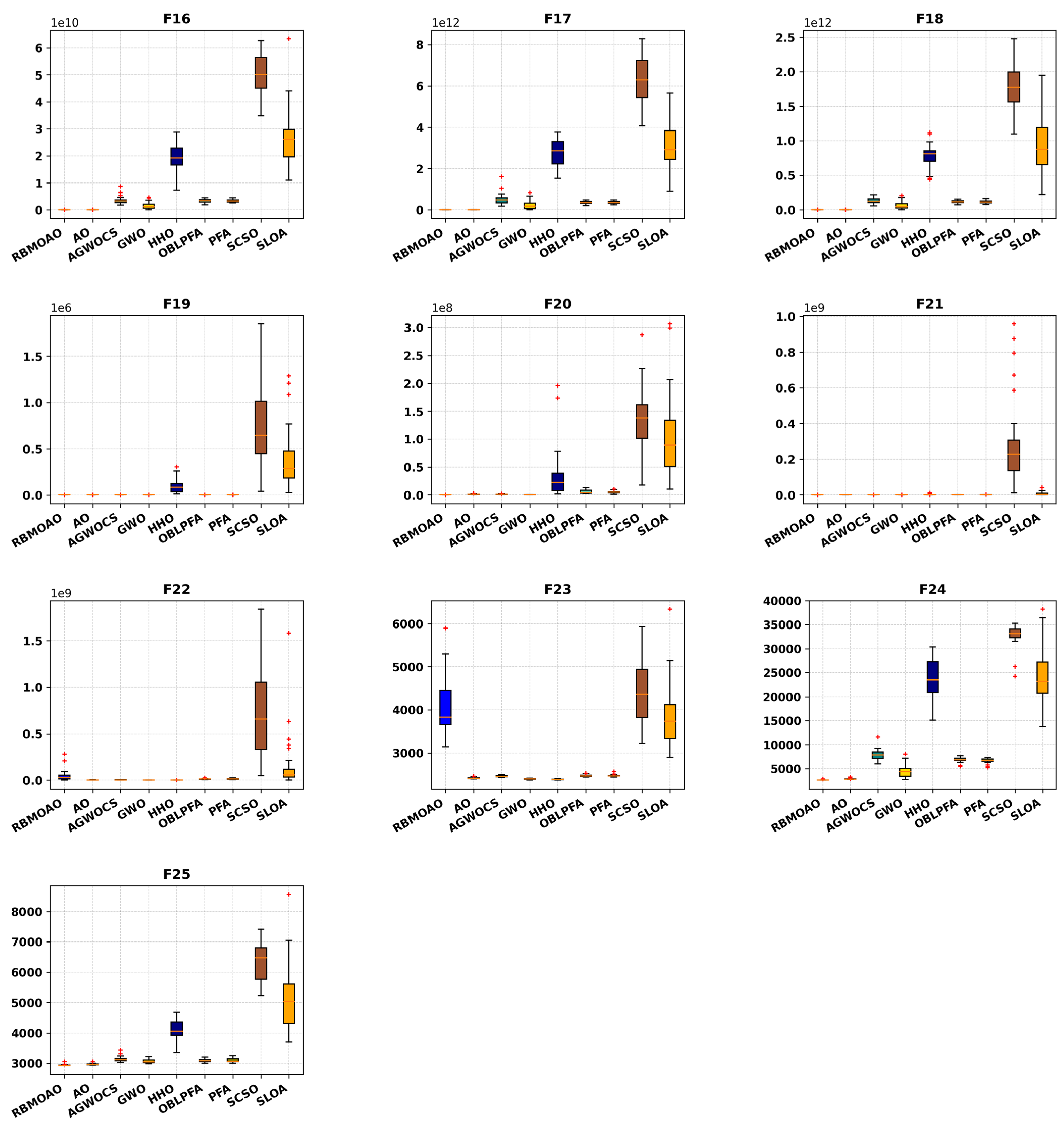

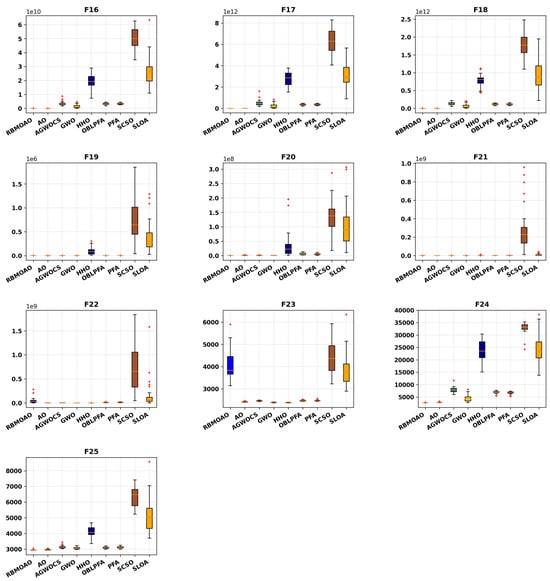

As shown in Table 3, the comparative study on the CEC 2020 benchmark suite shows that the RBMOAO performs well compared to the other well-known metaheuristic optimizers, i.e., AGWOCS, GWO, HHO, OBLPFA, PFA, SCSO, and SLO. Four function categories were used to analyze the optimization capabilities of each metaheuristic. On the unimodal function (F16), which measures exploitation and convergence precision within a unimodal search space, the RBMOAO attained the result closest to the optimal solution and demonstrated higher convergence precision than AGWOCS, OBLPFA, PFA, and SLO. In the basic multimodal category (F17–F19), which features multiple local optima and therefore requires exploratory capability, the RBMOAO was competitive on all three functions. This competitiveness is attributed to the inclusion of RBMO’s heterogeneous grouping strategy. In the hybrid category (F20–F22), rotated and shifted sub-components are embedded into the functions to represent non-separable problem structures. The RBMOAO excelled at finding near-optimal solutions to F20 and F21, while HHO outperformed the RBMOAO on F22 by a small margin. Nevertheless, the RBMOAO maintained acceptable solution quality and avoided catastrophic degradation on moderately complex landscapes, showing its reliability. In the last category (composition functions F23–F25), which combines multiple transformed functions with deceptive interactions to create extremely rugged fitness landscapes, the RBMOAO had the best overall results. Importantly, the RBMOAO outperformed its base component (the standalone AO) on F24 and F25. These results indicate that the hybridization strategy effectively improved both the exploratory breadth and the exploitative depth of the base algorithm. 
Therefore, these empirical studies demonstrate that the RBMOAO performs as a versatile metaheuristic framework with particular strength in unimodal convergence and multimodal navigation while remaining competitive across diverse problem types, without the need to adjust its parameters for each type of problem. Table 2 and Table 3 report the mean values and standard deviations obtained by all compared methods on the two benchmark suites, i.e., CEC 2015 and CEC 2020. On the majority of the test problems of both suites, the RBMOAO achieved superior performance. On the CEC 2015 benchmark suite, the RBMOAO had the best mean objective value on 11 of the 15 test problems (a 73.33% success ratio). On the CEC 2020 benchmark suite, the RBMOAO had the best mean objective value on 8 of the 10 test problems (an 80% success ratio). Overall, the RBMOAO achieved the best mean objective value on 19 of the 25 test functions (76%) across the two benchmark suites, demonstrating strong performance on different categories of problems. These results support the effectiveness of the GDP and CF mechanisms introduced in this work to improve AO capabilities.

Table 3.

Results of optimizers on CEC 2020.

4.2. Statistical Analysis of Results

The statistical significance of the RBMOAO’s performance relative to well-established metaheuristic optimization methods was evaluated through two non-parametric statistical tests: the Friedman test for global ranking and the Wilcoxon signed-rank test for pairwise comparisons. These tests were run at a significance level of α = 0.05 over 30 independent runs on both the CEC 2015 and CEC 2020 benchmark suites, with the data summarized in Table 4. The Friedman test evaluates performance across the set of all benchmarks to provide an overall ranking of the algorithms based on their average ranks. On the CEC 2015 suite, the RBMOAO had the best rank (mean rank of 1.20), followed closely by the AO and GWO (ranked number 2), with SCSO ranked last. On the CEC 2020 suite, the RBMOAO similarly ranked first, and the AO and GWO ranked second and third, respectively. Consistent top-tier ranking across both suites is a good indicator of the RBMOAO’s robustness across diverse problem domains. A subsequent pairwise Wilcoxon signed-rank test was used to evaluate whether the differences in performance between the RBMOAO and the other optimization algorithms were statistically significant. On the CEC 2015 benchmark, the RBMOAO significantly outperformed all of the other optimization algorithms, including the AO, with p-values that provided strong evidence for rejecting the null hypothesis of no difference in performance. 
Interestingly, when the RBMOAO was compared with the AO on the CEC 2020 suite, the Wilcoxon test produced a p-value of 0.1141, which exceeds the 0.05 threshold, indicating that the performance difference between the RBMOAO and the AO on this suite was not statistically significant, although the RBMOAO has a slightly better mean rank. Several factors may contribute to this finding: (i) the CEC 2020 functions have specific landscape characteristics, such as rotation symmetry or biases, that are beneficial to the AO’s search strategies, diminishing the incremental benefits of RBMO integration; (ii) the increased variability of solution quality across independent runs reduces the statistical power to detect small performance differences between the two algorithms; (iii) the hybridization strategy produces greater benefits on the function combinations of the CEC 2015 suite than on the structural variations of the CEC 2020 suite. Nevertheless, the RBMOAO demonstrated statistically significant superiority over the remaining competitors on CEC 2020 (p < 0.05) and achieved the highest overall Friedman ranking, confirming its effectiveness. These statistical analyses indicate that the RBMOAO is an effective and reliable optimization method that consistently achieves top-tier rankings across multiple benchmark suites. The absence of a statistically significant difference between the RBMOAO and the AO on the CEC 2020 suite does not negate the overall superiority of the RBMOAO; rather, it illustrates how the relationship between the design of an optimization method and the specific characteristics of the problems being optimized can vary, which is consistent with the implications of the No Free Lunch Theorem for empirical evaluations of metaheuristics.
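The Friedman ranking described above can be illustrated with a minimal sketch. It assumes that each algorithm is summarized by one mean error per benchmark function (lower is better), that ties share the average rank, and that the chi-square statistic is computed without tie correction. The function names and the toy numbers are hypothetical and are not taken from Table 4.

```python
def rank_row(values):
    """Rank the results on one function (1 = lowest value); ties share the average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        end = pos
        # Extend the block while the next value is tied with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[pos]]:
            end += 1
        avg_rank = (pos + end) / 2 + 1  # average of the 1-based positions in the tie block
        for k in range(pos, end + 1):
            ranks[order[k]] = avg_rank
        pos = end + 1
    return ranks


def friedman_mean_ranks(results):
    """results[f][a] is the mean error of algorithm a on function f."""
    ranked = [rank_row(row) for row in results]
    n_funcs = len(results)
    n_algos = len(results[0])
    return [sum(r[a] for r in ranked) / n_funcs for a in range(n_algos)]


def friedman_statistic(mean_ranks, n_funcs):
    """Friedman chi-square statistic (no tie correction)."""
    k = len(mean_ranks)
    spread = sum(r * r for r in mean_ranks) - k * (k + 1) ** 2 / 4
    return 12 * n_funcs / (k * (k + 1)) * spread


# Hypothetical mean errors of three algorithms on four functions.
toy_results = [
    [1.0, 2.0, 3.0],
    [1.0, 3.0, 2.0],
    [2.0, 1.0, 3.0],
    [1.0, 2.0, 3.0],
]
mean_ranks = friedman_mean_ranks(toy_results)
chi_square = friedman_statistic(mean_ranks, len(toy_results))
print(mean_ranks, chi_square)
```

In this toy example the first algorithm obtains the lowest mean rank, which is the quantity on which the global ordering is based. A pairwise test such as the Wilcoxon signed-rank test would then be applied to the per-function results of two algorithms at a time.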

Table 4.

Non-parametric analysis.

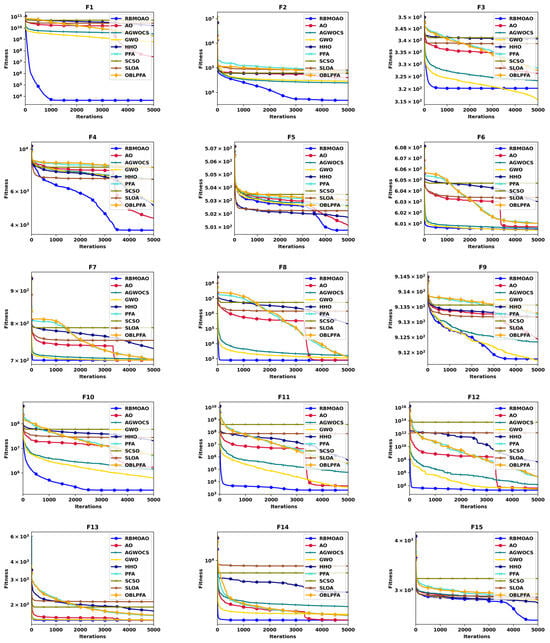

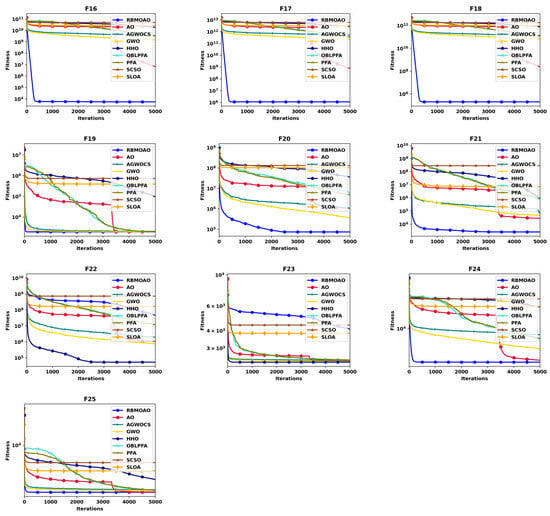

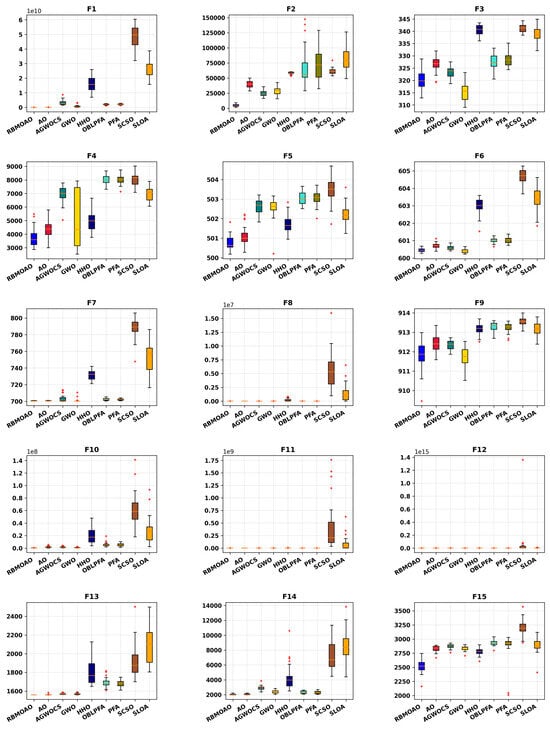

4.3. Convergence Analysis

Figure 2, Figure 3, Figure 4 and Figure 5 present a comparison of the performance of the RBMOAO relative to other existing metaheuristics, using both convergence curves and box plots on the CEC 2015 and CEC 2020 test sets. The convergence curves in Figure 2 and Figure 3 show how the mean fitness value of the best-known solution changes over the iterations. The speed at which a curve drops and the lowest value it reaches are therefore indicators of search efficiency and of the balance between exploration and exploitation within the search space. In addition to this analysis of search progress over time, the box plots in Figure 4 and Figure 5 give a statistical summary of the distribution of solution quality over 30 independent runs of each algorithm. Each box spans the interquartile range (IQR), defined as Q3 − Q1, where Q1 and Q3 are the first and third quartiles. The median is represented by an orange line inside the box. The whiskers extend to the most extreme observations that are not outliers. Isolated red + symbols indicate outliers, i.e., observations that differ substantially from the rest of the observations. The smaller the box, the lower the dispersion of solution quality across runs and, therefore, the more robust the algorithm and the less sensitive it is to initial conditions. Conversely, longer boxes or the presence of many outliers indicate that the algorithm is unstable and may converge prematurely or become stuck in local optima. Together, the convergence curves allow us to analyze the progressive development and asymptotic behavior of the searches, while the box plots allow us to measure the reliability of the final solutions obtained across independent runs. 
The experimental results presented in these figures illustrate the double advantage of the RBMOAO: rapid convergence toward regions where high-quality solutions are located and very low variability between runs, providing clear evidence of its value as a competitive optimization method for problems with multiple optima.
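The box-plot quantities described above can be computed with a short sketch. It assumes the common 1.5 × IQR rule for separating whisker ends from outliers and linear-interpolation quartiles; neither convention is stated in the text, and the function name and sample values are hypothetical.

```python
import statistics


def box_plot_summary(values, whisker_factor=1.5):
    """Summarize final objective values of independent runs as a box plot would."""
    # "inclusive" treats the data as the whole population and interpolates linearly.
    q1, median, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    lower_fence = q1 - whisker_factor * iqr
    upper_fence = q3 + whisker_factor * iqr
    inliers = [v for v in values if lower_fence <= v <= upper_fence]
    outliers = sorted(v for v in values if v < lower_fence or v > upper_fence)
    return {
        "q1": q1,
        "median": median,
        "q3": q3,
        "iqr": iqr,
        "whisker_low": min(inliers),    # most extreme non-outlier below the box
        "whisker_high": max(inliers),   # most extreme non-outlier above the box
        "outliers": outliers,
    }


# Hypothetical final errors from nine runs; one run stagnated far from the rest.
final_errors = [1, 2, 3, 4, 5, 6, 7, 8, 100]
summary = box_plot_summary(final_errors)
print(summary)
```

A small `iqr` together with an empty `outliers` list corresponds to the compact boxes that the text associates with a robust algorithm.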

Figure 2.

Convergence curves of optimizers on CEC 2015.

Figure 3.

Convergence curves of optimizers on CEC 2020.

Figure 4.

Box plots of optimizers on CEC 2015.

Figure 5.

Box plots of optimizers on CEC 2020.

As illustrated in Figure 2, the convergence patterns exhibited by the hybrid RBMOAO on the CEC 2015 benchmark suite demonstrate distinct advantages in terms of both the rate at which convergence occurs and the steadiness of the path that is followed during convergence.

During early iterations, the RBMOAO demonstrates rapid descent into areas of good quality, exhibiting greater rates of convergence than most other competing optimizers, particularly on the unimodal functions (F1–F2), where the efficiency of the exploitation phase is paramount. In contrast to many other comparative optimization methods, whose convergence paths plateau before the end of the optimization process, the RBMOAO continues to exhibit a steadily decreasing path of convergence throughout the entire optimization process, indicating sustained capacity for exploration and thereby minimizing the likelihood of being trapped in local optima.

The RBMOAO is also considerably more effective on the multimodal functions (F3–F5), where rugged landscapes make it difficult for an optimizer to move between local extrema. The RBMOAO achieves better final objective function values than competing optimizers on these functions, demonstrating the effectiveness of its mechanisms for escaping local minima on multimodal landscapes. For the hybrid functions (F7–F8), where the landscape consists of two or more rotated and/or shifted sub-components representing a non-separable problem structure, the RBMOAO’s convergence curve is remarkably smooth and monotonically decreasing, implying effective coordination between its individual search mechanisms. For the composition functions (F10–F15), where there are deceptive interactions between transformed sub-functions, the RBMOAO achieves competitive solution quality while showing little evidence of stagnation, further illustrating its robustness on complex landscapes. This behavior is enabled by the synergy of RBMO’s heterogeneous grouping strategy, which enhances population diversity via dynamic subgroup formation, and its convergence factor (CF)-based exploitation mechanism, which modulates the search intensity as iterations proceed. The grouping strategy supports global exploration by enabling information to be exchanged among sub-populations, while the CF-based update rule ensures a progressive increase in the search intensity in promising regions without inducing premature convergence. 
Figure 3 extends the analysis of Figure 2 to the CEC 2020 benchmark suite and shows similar advantages on F16, F17–F19, F20–F21, and F24–F25. The RBMOAO consistently achieves better final objective function values than peer methods and shows less variability in its convergence trajectory, validating its ability to optimize unimodal, multimodal, and hybrid problems with both accuracy and robustness. Collectively, these empirical results support the notion that the RBMOAO achieves a superior balance between exploration and exploitation, a major determinant of the efficacy of any metaheuristic in complex, multimodal optimization environments.
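As a minimal sketch of how a mean convergence curve of the kind discussed above can be assembled, the following code assumes that each independent run logs one fitness value per iteration and that the curve plots the average of the best-so-far values across runs. The function names and the toy data are hypothetical.

```python
from itertools import accumulate


def best_so_far(fitness_per_iteration):
    """Running minimum: the best fitness found up to each iteration of one run."""
    return list(accumulate(fitness_per_iteration, min))


def mean_convergence_curve(runs):
    """Average the best-so-far curves of several independent runs, per iteration."""
    curves = [best_so_far(run) for run in runs]
    return [sum(values) / len(curves) for values in zip(*curves)]


# Two hypothetical runs of four iterations each (minimization).
toy_runs = [
    [5.0, 3.0, 4.0, 1.0],
    [6.0, 6.0, 2.0, 3.0],
]
curve = mean_convergence_curve(toy_runs)
print(curve)
```

Because each per-run curve is a running minimum, the averaged curve never increases, so a plateau indicates stagnation rather than noise in the logged fitness values.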

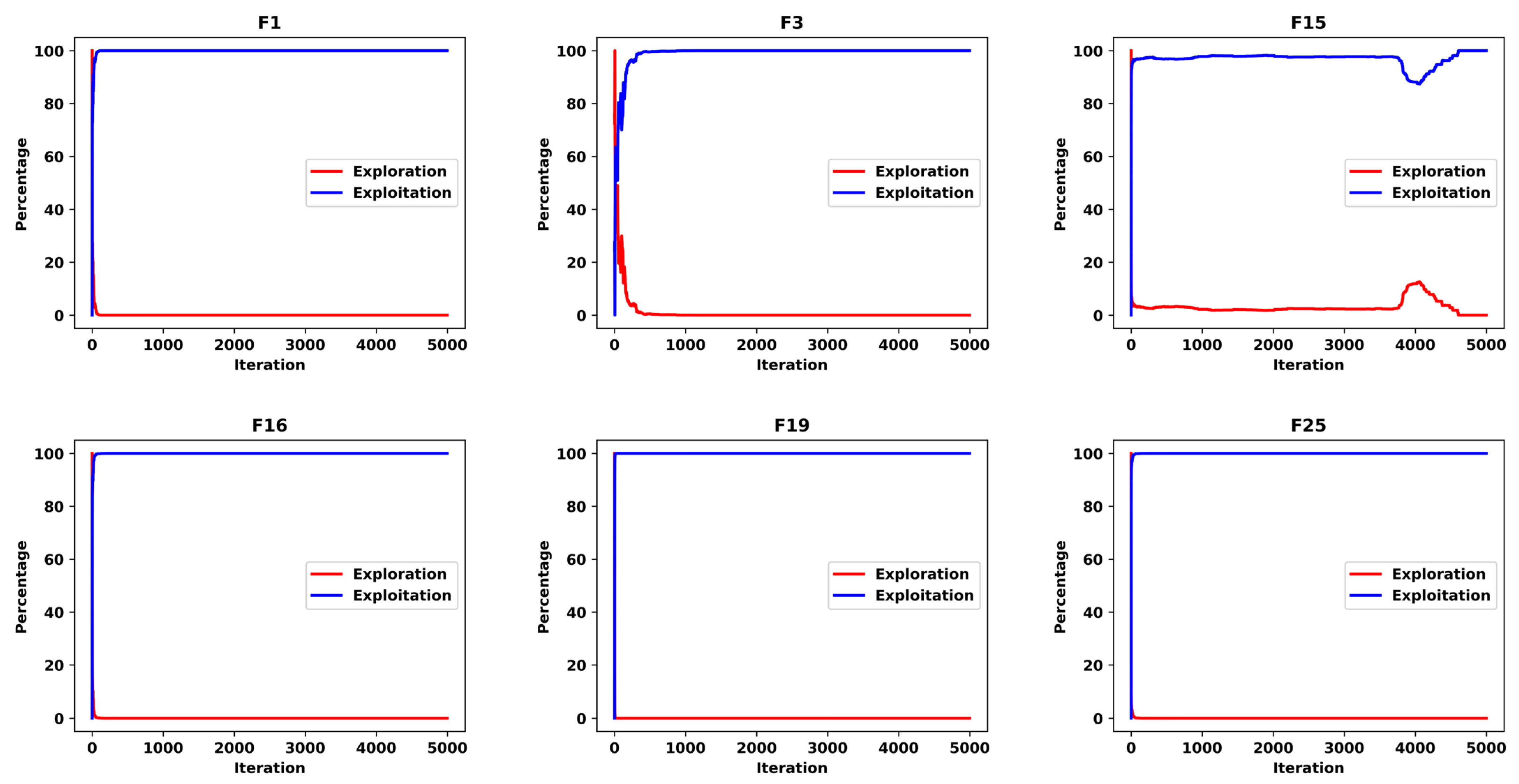

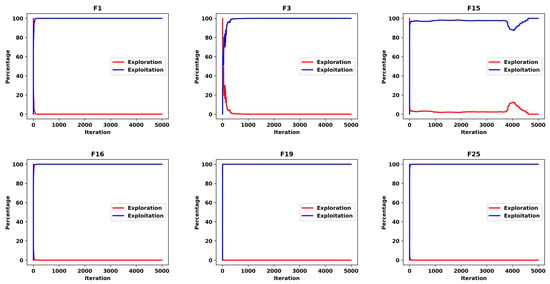

4.4. Exploration and Exploitation Analysis

The balance between the exploration and exploitation phases of a metaheuristic optimization algorithm is evaluated using a population dispersion measure called diversity, which describes the spatial distribution of candidate solutions with respect to the population centroid at iteration t. The diversity is given by Equation (21) [41]:

where the quantities involved are the number of dimensions in the solution, the size of the population, the average position on dimension d at iteration t, and the position of the i-th agent. The proportions of exploration and exploitation are then calculated as expressed in Equations (22) and (23).

where the normalizing term is the largest dispersion observed during the optimization process. A good balance between these two types of search is essential to algorithm performance. Excessive exploitation can lead to premature convergence in a poor area of the search space, while excessive exploration may result in long, dispersed search paths and low precision of the solutions found. Figure 6 shows how the proportions of exploration (red) and exploitation (blue) evolve over time for the RBMOAO. Initially, there is a strong emphasis on exploration of the search space to locate promising areas from a wide range of initial positions. At this stage, the algorithm favors exploration because it uses the heterogeneous grouping mechanism inherited from RBMO, which fosters interaction among different parts of the population. The exploitation share then increases as the algorithm iterates, in line with the increase in the CF, which controls the reduction in step size towards better regions of the search space. This gradual transition shows that the RBMOAO can dynamically adjust its allocation of search effort without undergoing abrupt phase changes that could destabilize convergence.
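To make the dispersion-based measurement concrete, the sketch below assumes one common form of the diversity measure, namely the mean absolute deviation of the agents from the per-dimension mean, averaged over all dimensions, together with the usual percentage definitions $XPL\% = 100 \cdot Div(t)/Div_{max}$ and $XPT\% = 100 \cdot |Div(t) - Div_{max}|/Div_{max}$. The symbols $Div$, $XPL$, and $XPT$, the function names, and the toy data are assumptions made for illustration, and the exact form used in this work may differ.

```python
def diversity(population):
    """Mean absolute deviation from the per-dimension mean, averaged over dimensions."""
    n_agents = len(population)
    n_dims = len(population[0])
    total = 0.0
    for d in range(n_dims):
        mean_d = sum(agent[d] for agent in population) / n_agents
        total += sum(abs(agent[d] - mean_d) for agent in population) / n_agents
    return total / n_dims


def exploration_exploitation(diversity_history):
    """Convert a per-iteration diversity history into (exploration %, exploitation %)."""
    div_max = max(diversity_history)
    if div_max == 0:
        # Degenerate case: the population never spread out at all.
        return [(0.0, 100.0) for _ in diversity_history]
    return [
        (100.0 * div / div_max, 100.0 * abs(div - div_max) / div_max)
        for div in diversity_history
    ]


# Hypothetical two-agent population in two dimensions.
toy_population = [[0.0, 0.0], [2.0, 4.0]]
toy_diversity = diversity(toy_population)

# Hypothetical diversity values recorded at three successive iterations.
shares = exploration_exploitation([4.0, 2.0, 1.0])
print(toy_diversity, shares)
```

Under these definitions the two percentages always sum to 100, so a fast contraction of the population around the best solution is reported as a high exploitation share regardless of which update equations are active.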

Figure 6.

Exploration and exploitation analysis plot.

Furthermore, the shift from exploration dominance to exploitation dominance (about 100%) occurs within the first 100–500 iterations in Figure 6, rather than at iteration 3333 (i.e., 2T/3). This is due to an important difference between the algorithmic phase and the behavior measured through diversity: the condition t ≤ 2T/3 determines which update equations are used (exploration updates), while the diversity metric (Equations (21)–(23)) measures the population dispersion. The hybrid nature of the RBMOAO’s approach (using the best solution as a guide for updates, coordinating across groups, modulating refinement with the CF, and applying strictly greedy selection) results in rapid identification of and convergence toward promising areas of the search space, leading to rapid decreases in diversity even in the formal exploration phase. On complex optimization problems (F1, F3, F16, F19, and F25), rapid cluster formation reflects successful optimization by the RBMOAO, as demonstrated by the better final objective values reported in Table 2 and Table 3. Additionally, F15 (a complex composite problem) displays periods of re-diversification during iterations 3500–4500, illustrating the RBMOAO’s ability to adaptively resume exploration when the problem landscape requires it. Thus, the early dominance of exploitation reflects efficient search dynamics rather than algorithm failure.

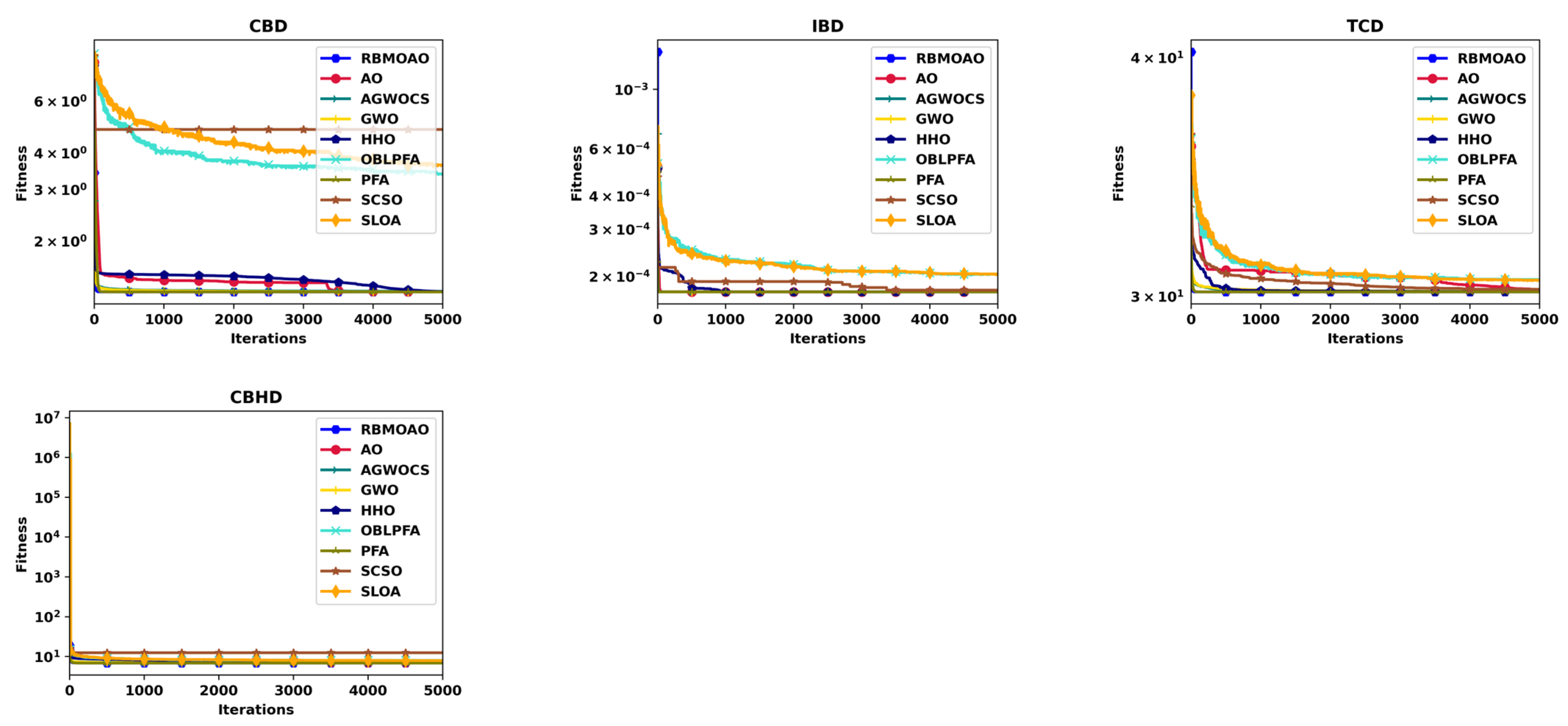

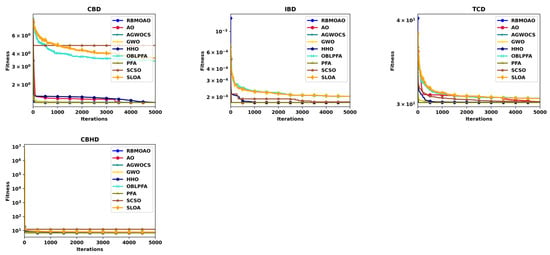

4.5. Application to Engineering Problems

In this section, all the experimental parameters remain the same as in the previous section.

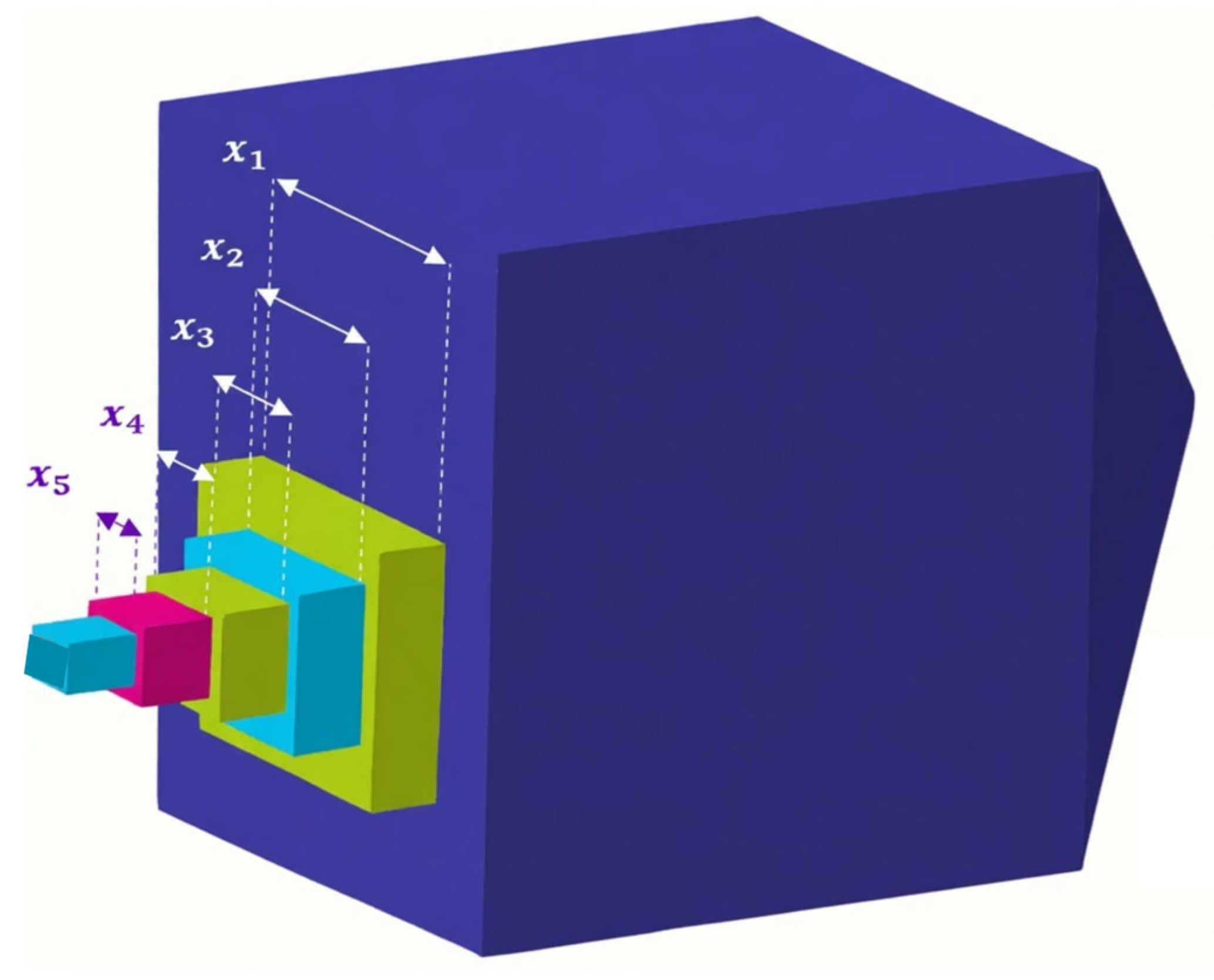

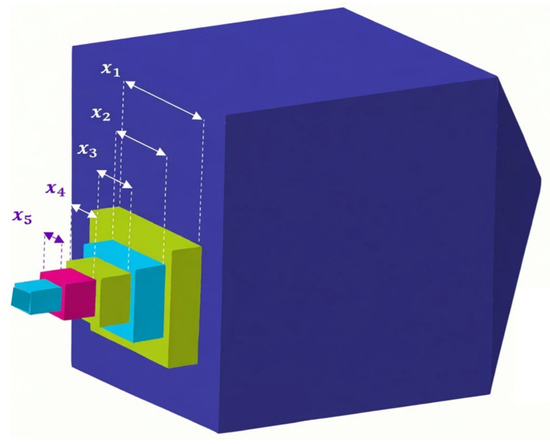

4.5.1. The Cantilever Beam Design (CBD) Problem

The RBMOAO was applied to solve the cantilever beam design (CBD) problem, which is a well-established structural engineering benchmark. The design consists of five sequential beam segments with hollow square cross-sections of equal wall thickness. As shown in Figure 7, the goal of the optimization process is to minimize the total weight of the structure. The decision variables are the heights of the five structural segments, which, due to square symmetry, equal their respective widths [42]. The CBD problem can be represented mathematically as a constrained minimization problem, as described in Equation (24). The objective function in this equation describes the cumulative mass of the cantilever assembly, and the constraints describe the feasibility limits based on the structural integrity requirements.

subject to

Figure 7.

Schematic diagram of the CBD.
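For illustration, the sketch below encodes the form of the CBD problem most commonly reported in the literature: the weight 0.0624(x1 + x2 + x3 + x4 + x5) is minimized subject to the single inequality 61/x1³ + 37/x2³ + 19/x3³ + 7/x4³ + 1/x5³ − 1 ≤ 0. This form is stated as an assumption about the formulation referenced above, and the static quadratic penalty is one illustrative way of handling the constraint, not necessarily the one used in the experiments. The function names and the sample design are hypothetical.

```python
def cbd_weight(x):
    """Objective: total weight of the five-segment cantilever (commonly used form)."""
    return 0.0624 * sum(x)


def cbd_constraint(x):
    """Inequality constraint g(x) <= 0 (commonly used form)."""
    coefficients = (61.0, 37.0, 19.0, 7.0, 1.0)
    return sum(c / xi ** 3 for c, xi in zip(coefficients, x)) - 1.0


def cbd_penalized(x, penalty=1e6):
    """Weight plus a static quadratic penalty for constraint violation (an assumption)."""
    violation = max(0.0, cbd_constraint(x))
    return cbd_weight(x) + penalty * violation ** 2


# A design close to the best values commonly reported for this benchmark.
sample_design = [6.016, 5.309, 4.494, 3.502, 2.153]
print(cbd_weight(sample_design), cbd_constraint(sample_design))

# An infeasible design: very slender segments violate the constraint.
slender_design = [1.0, 1.0, 1.0, 1.0, 1.0]
print(cbd_penalized(slender_design))
```

A metaheuristic such as the RBMOAO would minimize `cbd_penalized` over box-bounded segment heights, so that infeasible candidates are ranked below feasible ones during greedy selection.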

The comparative experimental results presented in Table 5 show the mean and standard deviation over 30 independent runs. The results demonstrate that most of the metaheuristics were able to find the same near-globally optimal structural configuration. The RBMOAO’s mean objective value was slightly better than those obtained by some other algorithms, and the RBMOAO also exhibited very little variability among the solutions it produced, indicating that it consistently produces high-quality solutions. The convergence of the RBMOAO is presented graphically in Figure 8.

Table 5.

Results of the compared algorithms on engineering problems.

Figure 8.

Convergence plot of optimizers on engineering problems.

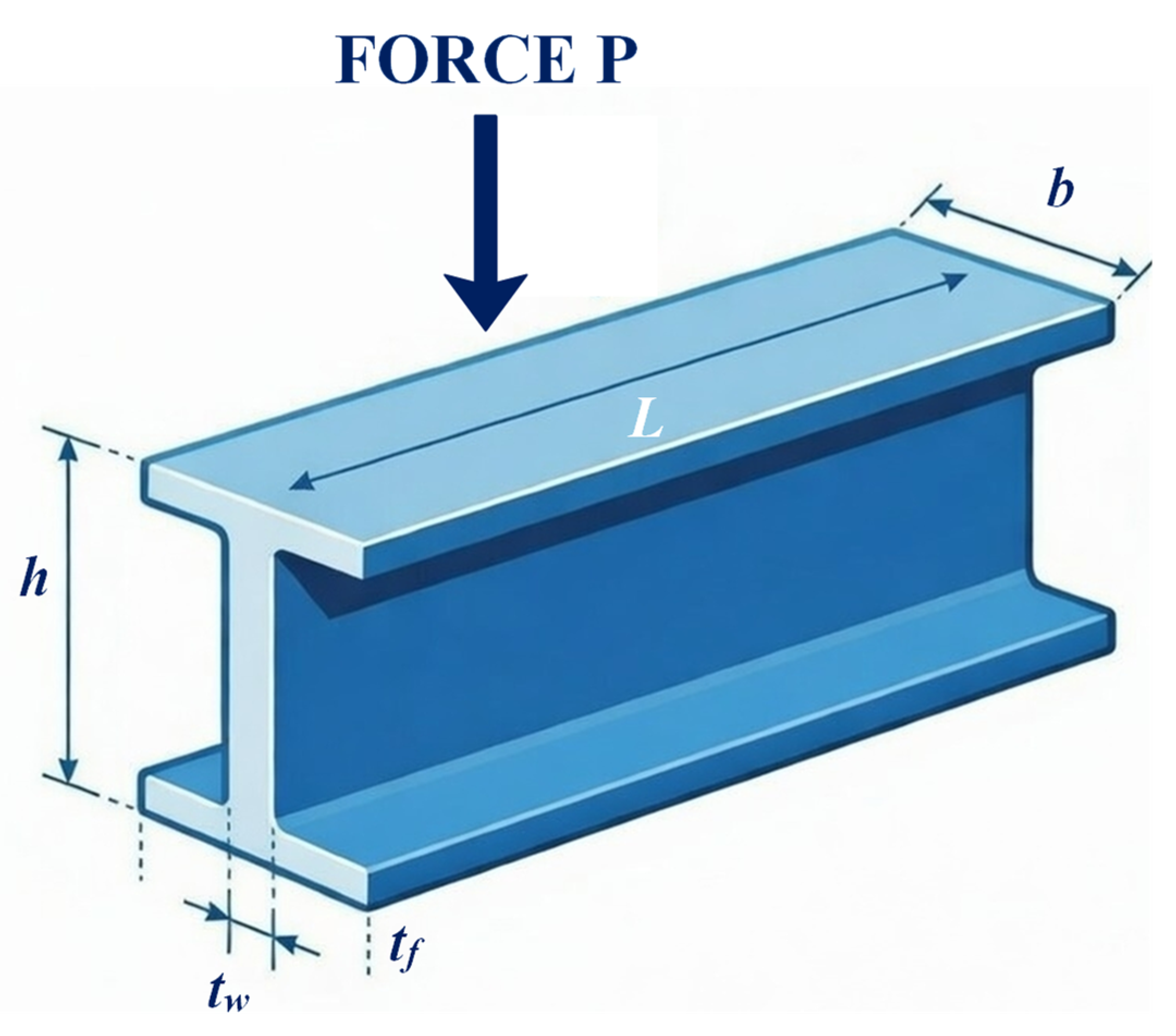

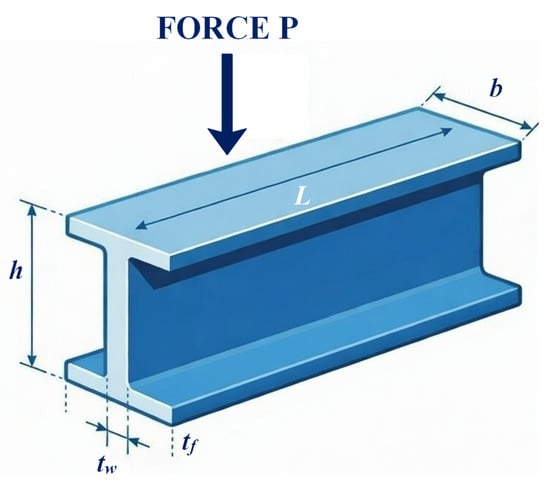

4.5.2. I-Beam Design (IBD) Optimization

In this section, the RBMOAO is applied to the I-beam design (IBD) problem, a structural engineering test case designed to minimize vertical deflection under specified loading conditions. The geometric structure shown in Figure 9 includes four design parameters: flange width, total beam height, web thickness, and flange thickness. The optimization problem, as defined in Equation (25) [42], includes two constraints based on the cross-sectional area and the maximum allowed stress to ensure structural feasibility. Results are presented in Table 5. Most of the investigated algorithms were able to find a good design configuration, and the RBMOAO found competitive solutions similar to those of the other investigated metaheuristics. Furthermore, the RBMOAO found solutions with less variability than many of the other metaheuristics, which is indicative of robustness to stochastic initialization. The convergence trajectory is depicted in Figure 8.

subject to

Figure 9.

Schematic diagram of the IBD problem.

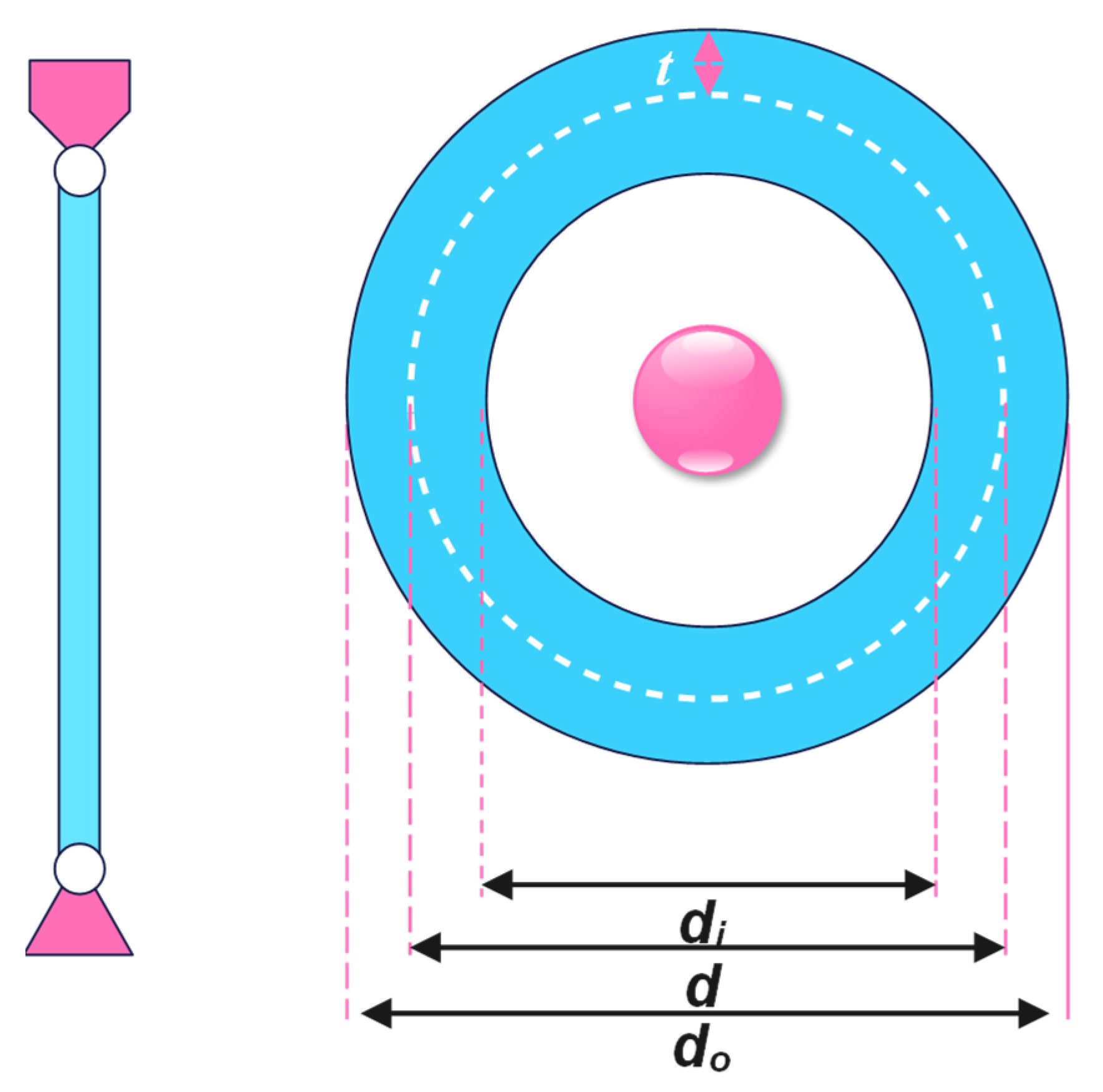

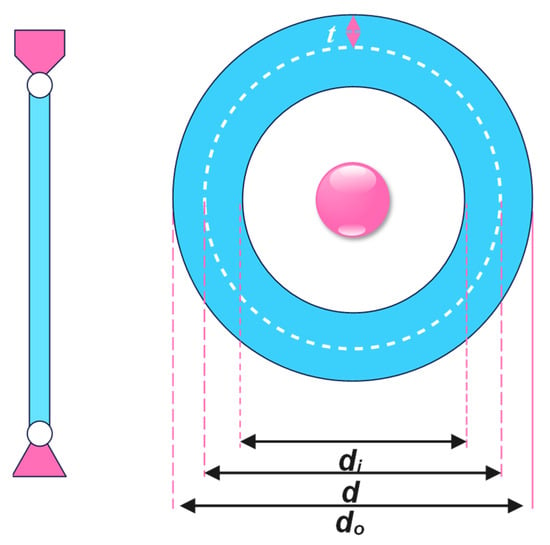

4.5.3. Tubular Column Design (TCD)

The tubular column design (TCD) problem is a constrained engineering optimization problem that aims to minimize the manufacturing cost of a uniform hollow cylindrical column under axial compressive loading. Two continuous decision variables define the design space, namely the mean cross-sectional diameter and the wall thickness of the hollow cylindrical column, as seen in Figure 10. The structural material has a specified yield stress and modulus of elasticity [42]. The optimization problem is formally stated as Equation (26) and includes constraints related to buckling instability, yielding, and geometry to ensure that the structure is safe under operational loads.

subject to

Figure 10.

Schematic diagram of the TCD problem.

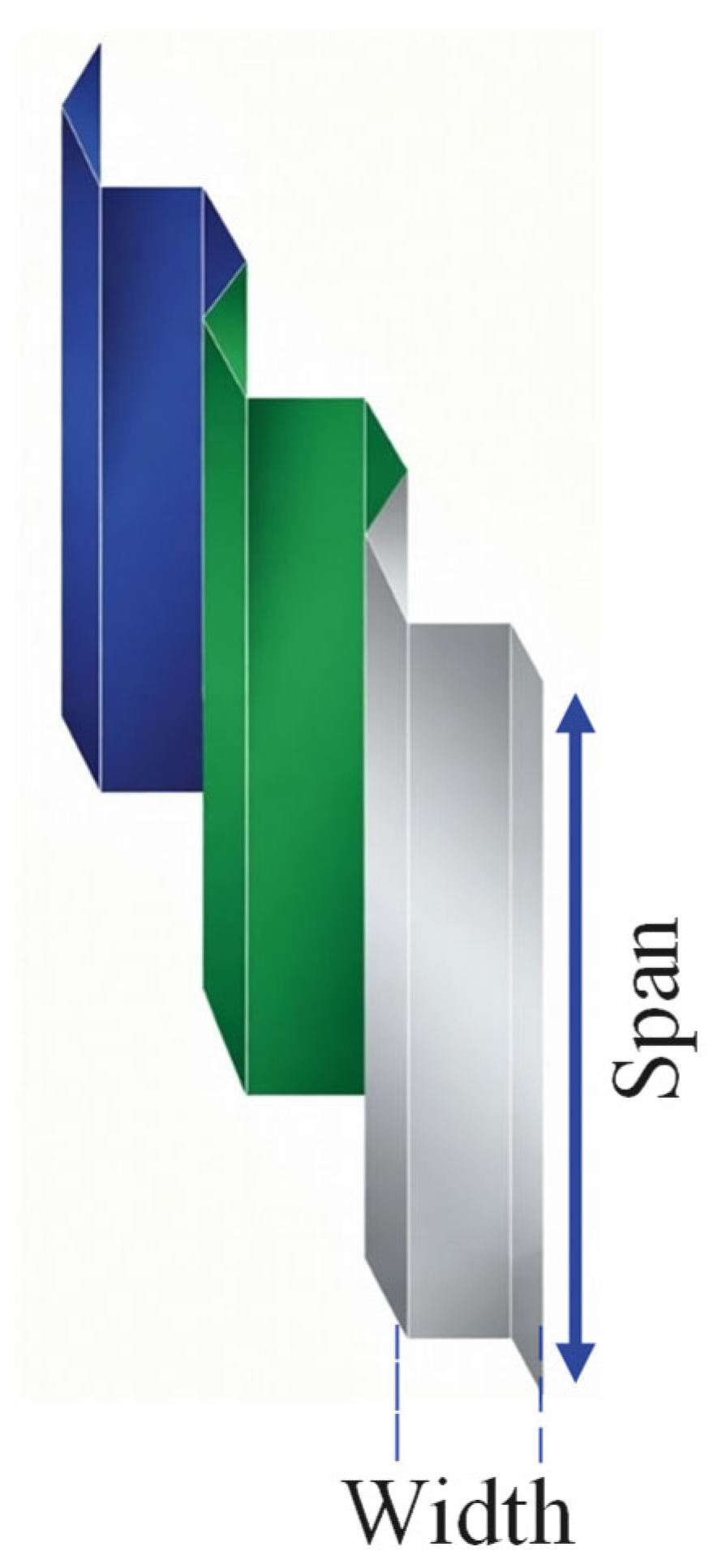

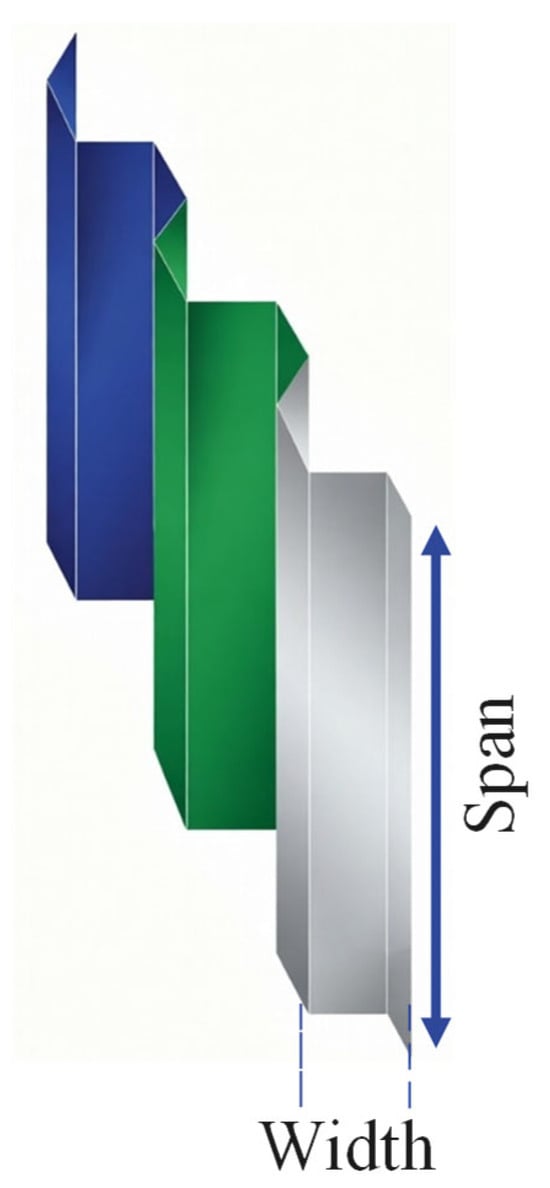

4.5.4. Corrugated Bulkhead Design (CBHD)

The CBHD problem seeks the minimum structural weight of a corrugated bulkhead that satisfies key operational and safety constraints [43]. As shown in Figure 11, the CBHD problem is parameterized using four decision variables: plate width, depth, length, and plate thickness. The mathematical definition of the constrained optimization problem is presented as Equation (27) and includes all relevant constraints to ensure that the structure is able to resist buckling and withstand stresses due to hydrostatic loads.

subject to

Figure 11.

Schematic diagram of the CBHD problem.

The RBMOAO produced competitive results compared to other well-established metaheuristics, as shown in Table 5, and achieved solutions of quality similar to the most efficient algorithms, although the PFA achieved slightly better mean weight values than the RBMOAO. The convergence trajectory of the RBMOAO is shown in Figure 8.

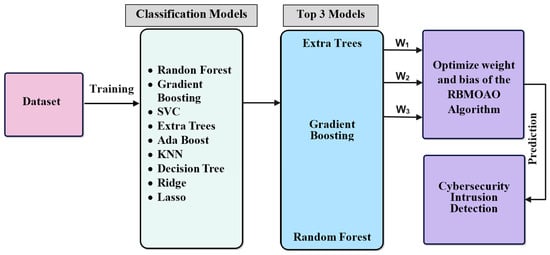

4.6. Application of the RBMOAO Ensemble in Cybersecurity Intrusion Data Prediction

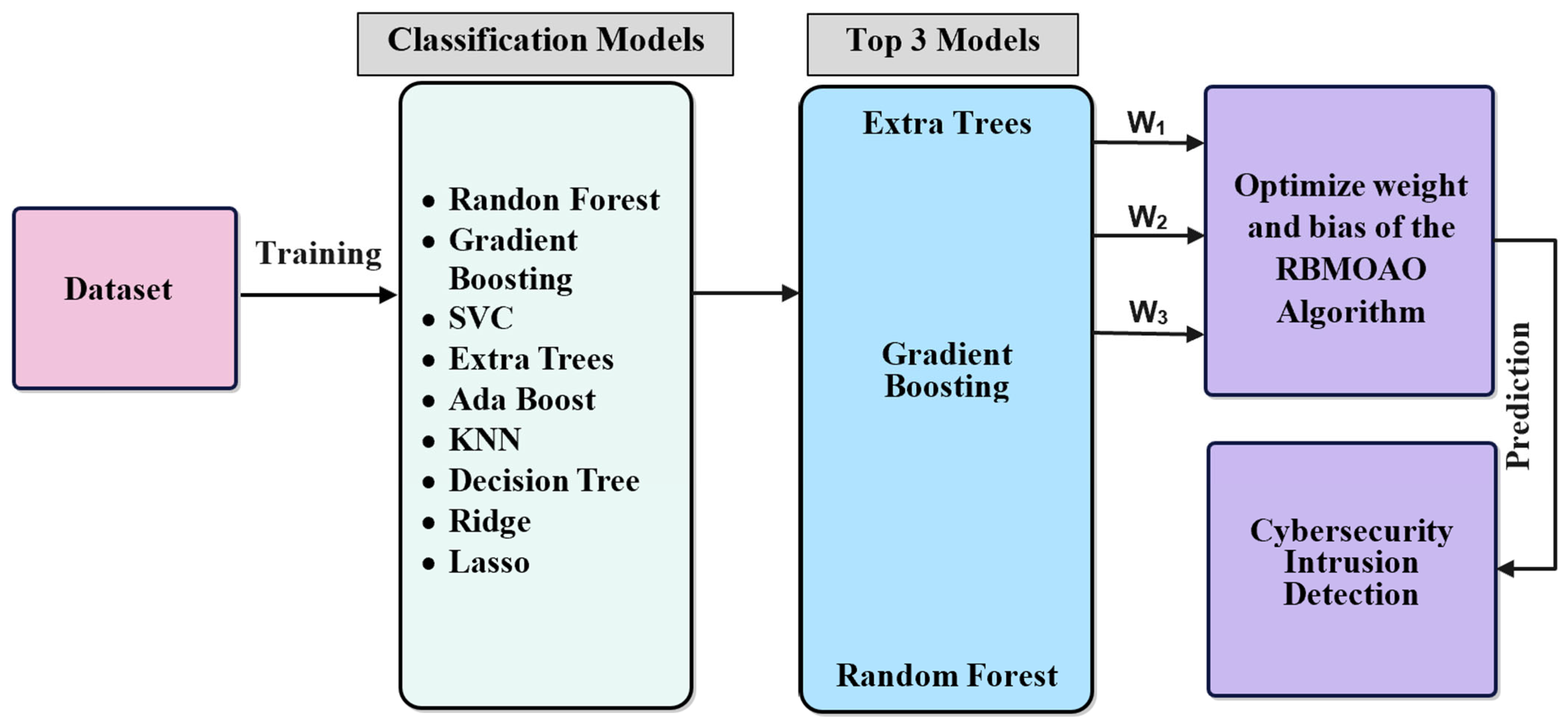

In this section, we describe the RBMOAO ensemble framework, a metaheuristic-optimized ensemble learning framework that can be utilized for effective predictive modeling of cyber threats, as well as intrusion detection in networks [44]. The model is illustrated in Figure 12 and includes four primary processing components: data preprocessing, base learner training and selection, optimization of weights via the RBMOAO, and ensemble inference.

Figure 12.

Proposed RBMOAO ensemble architecture.

4.6.1. Dataset and Preprocessing

For all experiments, we utilized the Cybersecurity Intrusion Detection Dataset, obtained from the public dataset repository provided by Kaggle [45]. The dataset contains 9537 labeled instances of network traffic characterized by eleven distinct features. The binary target variable indicates whether a network connection is “normal” traffic or a malicious intrusion. All numerical features were preprocessed prior to model development using min–max normalization so that all features share a common scale. Categorical features were one-hot encoded. Stratified sampling with a 70:15:15 split into training, validation, and test sets was used to create three representative subsets and to prevent data leakage during model development and evaluation.
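The preprocessing steps above can be sketched in plain Python. The sketch assumes that the split is stratified per class by shuffling indices with a fixed seed and that the min–max statistics are fitted on the training subset only, which is one common way to avoid leakage; the text does not state either detail. All function names and the synthetic data are hypothetical.

```python
import random
from collections import defaultdict


def stratified_split(labels, fractions=(0.70, 0.15, 0.15), seed=0):
    """Return index lists (train, val, test) that preserve class proportions."""
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)
    rng = random.Random(seed)
    train, val, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_train = round(fractions[0] * len(indices))
        n_val = round(fractions[1] * len(indices))
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]  # remainder of this class
    return train, val, test


def fit_min_max(rows):
    """Per-column minimum and maximum, computed on the training rows only."""
    columns = list(zip(*rows))
    return [min(c) for c in columns], [max(c) for c in columns]


def apply_min_max(rows, mins, maxs):
    """Scale each column to [0, 1] on the fitted range; constant columns map to 0."""
    return [
        [(x - lo) / (hi - lo) if hi > lo else 0.0 for x, lo, hi in zip(row, mins, maxs)]
        for row in rows
    ]


# Synthetic data: 100 samples, two numeric features, 60/40 class balance.
labels = [0] * 60 + [1] * 40
features = [[float(i), float(i % 7)] for i in range(100)]

train_idx, val_idx, test_idx = stratified_split(labels)
mins, maxs = fit_min_max([features[i] for i in train_idx])
train_scaled = apply_min_max([features[i] for i in train_idx], mins, maxs)
print(len(train_idx), len(val_idx), len(test_idx))
```

Validation and test rows would be scaled with the same `mins` and `maxs`, so values slightly outside [0, 1] can occur there; this is expected when the statistics come from the training subset alone.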

4.6.2. Base Learner Training and Selection

A pool of nine different classification algorithms was utilized as base models to provide a variety of learning paradigm types. The algorithms used were: Random Forest, Gradient Boosting, Support Vector Classifier (SVC), Extra Trees, AdaBoost, K-Nearest Neighbors (KNN), Decision Tree, Ridge Classifier, and Lasso Classifier. All of these algorithms were trained individually with the default parameters on the training data in order to develop a baseline for comparison purposes. After training, the same models were tested against the validation set using several performance metrics: accuracy, precision, recall, F1 score, ROC-AUC, and log loss. The three best performing base learners from the previous evaluations were chosen for use in the final ensemble model: Extra Trees, Gradient Boosting, and Random Forest.

4.6.3. RBMOAO-Derived Weights

The main innovation of the proposed system is its use of the RBMOAO algorithm to determine the weights assigned to each base learner within the ensemble. Traditional ensemble systems utilize a static weight scheme (such as uniform average or accuracy-proportionate), whereas the RBMOAO ensemble uses dynamic weights determined through metaheuristics to find an optimal solution that can adapt to changes in performance based on the validation results.

The problem of optimizing the weights is defined as follows: Let M = 3 be the number of base learners selected, and let w = [w1, w2, w3]T be the weight vector such that wm ∈ [0, 1] represents the weight assigned to the m-th base learner. The weights are constrained to satisfy the normalization condition in Equation (28):

$$\sum_{m=1}^{M} w_m = 1 \tag{28}$$

Each candidate solution in the RBMOAO population encodes a weight vector w. The fitness function f(w) to be minimized is the log loss score of the ensemble weighted by the candidate solution w. The optimization parameters are set as follows: the population size is 30, and the maximum number of iterations is 500, with weight bounds of [0, 1].
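A minimal sketch of such a fitness function is given below. It assumes that the ensemble combines the base learners' predicted probabilities of the positive class by a weighted average (soft voting), that the normalization condition is enforced by clipping each weight to [0, 1] and dividing by the sum, and that the log loss is evaluated on held-out validation data. These choices, the function names, and the toy probabilities are assumptions made for illustration.

```python
import math


def normalize_weights(weights):
    """Clip weights to [0, 1] and rescale them to sum to one."""
    clipped = [min(1.0, max(0.0, w)) for w in weights]
    total = sum(clipped)
    if total == 0.0:
        # Fall back to uniform weights if the candidate is all zeros.
        return [1.0 / len(weights)] * len(weights)
    return [w / total for w in clipped]


def ensemble_log_loss(weights, probabilities, labels, eps=1e-15):
    """Binary log loss of the weighted-average ensemble.

    probabilities[m][i] is learner m's predicted probability that sample i is positive.
    """
    w = normalize_weights(weights)
    loss = 0.0
    for i, y in enumerate(labels):
        p = sum(w[m] * probabilities[m][i] for m in range(len(w)))
        p = min(1.0 - eps, max(eps, p))  # guard against log(0)
        loss -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return loss / len(labels)


# Toy validation set: two samples, three base learners.
toy_labels = [1, 0]
toy_probabilities = [
    [0.9, 0.1],  # confident and correct
    [0.5, 0.5],  # uninformative
    [0.1, 0.9],  # confident and wrong
]
loss_first_only = ensemble_log_loss([1.0, 0.0, 0.0], toy_probabilities, toy_labels)
loss_mixed = ensemble_log_loss([2.0, 2.0, 0.0], toy_probabilities, toy_labels)
print(loss_first_only, loss_mixed)
```

An optimizer would call `ensemble_log_loss` once per candidate weight vector; because the base learners' probabilities can be computed once and cached, each fitness evaluation is cheap relative to model training.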

The performance of the RBMOAO ensemble was evaluated on the test set using five standard classification metrics, i.e., accuracy, precision, recall, F1 score, and ROC-AUC, and an additional metric (log loss) was used to evaluate the quality of the predictions of the calibrated ensemble model. As illustrated in Table 6, the RBMOAO ensemble demonstrated superior performance relative to the individual base learners across several metrics. Furthermore, the RBMOAO ensemble exhibited significant improvement in precision and F1 scores over the second-ranked method (Random Forest), indicating that the metaheuristic weight optimization successfully identified synergistic weights that accentuated the strengths of each of the base learners while minimizing their respective weaknesses.

Table 6.

Result of models on cybersecurity intrusion prediction.

The adaptive use of the RBMOAO provides multiple advantages over traditional static ensemble methods. First, the population-based search mechanism employed in the RBMOAO allows for the identification of diverse weight combinations that would likely be missed by either gradient-based or heuristic searches. Second, the phase-adaptive search behavior of the RBMOAO enables the initial iterations to thoroughly explore the weight space before subsequent iterations focus on precise exploitation of promising weight combinations. Finally, because the weights adjust to the characteristics of the dataset, no manual adjustment of the ensemble is needed for it to perform well on future intrusion detection datasets, which makes the proposed framework highly adaptable.

5. Conclusions

The major drawback of the basic AO is the lack of a structured way to coordinate elite individuals when they are searching for solutions. The AO cannot dynamically divide high-performance agents into cooperative subgroups to increase the intensity of search in areas of the search space that are likely to produce good solutions. As a result, the AO cannot adjust how it balances the exploration–exploitation trade-off as it moves from one optimization phase to another. Instead, it continues to perform at a similar level of search activity from initialization until it converges, without making adjustments based on which phase it is in. The RBMOAO addresses these limitations by using two mechanisms derived from the foraging behavior of the red-billed blue magpie that can be used in conjunction with the AO to create a hybrid framework. The first mechanism is a heterogeneous grouping strategy used to partition the population into groups of different sizes throughout the entire search process (both the exploration phase and the exploitation phase). In addition, the grouping mechanism allows the elite agents to share information in order to intensify the search in the areas of the search space that have high potential for producing good solutions. The second mechanism is an adaptive convergence factor (CF) that adjusts the size of each step taken by the population members during each iteration of the search process. Consequently, the steps taken during the initial iterations are large enough to allow the population to explore all or most of the search space before narrowing down the focus of the search. During the later iterations, the steps are small enough to enable the search to converge quickly to the optimal solution(s).

Empirical testing showed that the RBMOAO hybrid framework is effective in improving the performance of the AO on the IEEE Congress on Evolutionary Computation (CEC) 2015 and CEC 2020 benchmarks. The results show that the RBMOAO produces higher-quality solutions and is more robust than both the AO and other state-of-the-art evolutionary algorithms on the test functions. The RBMOAO also demonstrated competitive performance on all four constrained structural design problems. Additionally, the RBMOAO was extended into a dynamic ensemble-based framework for prediction of cyber threats. Within this architecture, the RBMOAO is used to continuously update the weights assigned to each of the heterogeneous base learner algorithms such that the overall predictor is dynamically adapted and performs better than conventional regression models and fixed-weight ensembles using the same base learner algorithms. The successful application of the RBMOAO-driven ensemble framework establishes the potential of applying metaheuristically optimized ensembles to high-stakes predictive analytics that require high levels of both accuracy and robustness to changes in the underlying probability distributions.

Future work will focus on extending the capabilities of the RBMOAO in two primary areas: (i) multiobjective optimization through search strategies that are aware of Pareto frontiers and (ii) adaptive constraint-handling methods that dynamically manage the trade-off between exploration of the feasible region and exploitation of the infeasible region. Both extensions aim to further increase the effectiveness of the RBMOAO in solving constrained engineering design problems, which are common in aerospace, civil engineering, and energy system optimization. In addition, in response to the emergence of metaphor-free optimization, we intend to explore how parameterless operators may be integrated into the system. For example, best–worst–random update mechanisms could enhance the group-based perturbations that the RBMOAO applies during the exploration phases of the search. Such mechanisms would remove the need to specify the group sizes (p and q) while maintaining diversity through worst-solution repulsion. Finally, further empirical testing will include a comprehensive comparison of the RBMOAO with state-of-the-art AO variants.
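
To make the parameterless direction more concrete, the sketch below shows one generation of a best–worst–random style update. It is an assumption-laden illustration rather than a proposed RBMOAO component: the update rule is our reading of the general best–worst–random idea and should be checked against the original operator definition, and `bwr_generation`, `sphere`, the greedy selection, and the bound clipping are hypothetical choices.

```python
import random

def sphere(x):
    """Toy objective used only for this illustration."""
    return sum(v * v for v in x)

def bwr_generation(population, fitness, lower, upper, rng):
    """One generation of an assumed best-worst-random update with greedy selection."""
    scores = [fitness(x) for x in population]
    best = population[scores.index(min(scores))]
    worst = population[scores.index(max(scores))]
    next_population = []
    for x, fx in zip(population, scores):
        if rng.random() > 0.5:
            partner = rng.choice(population)  # random member of the population
            factor = rng.choice((1, 2))       # assumed random scaling of the partner
            # Attraction toward the best solution, repulsion from the worst one.
            cand = [
                xi + rng.random() * (b - factor * p) - rng.random() * (w - p)
                for xi, b, w, p in zip(x, best, worst, partner)
            ]
        else:
            # Random re-sampling inside the bounds to preserve diversity.
            cand = [hi - (hi - lo) * rng.random() for lo, hi in zip(lower, upper)]
        cand = [min(max(c, lo), hi) for c, lo, hi in zip(cand, lower, upper)]
        next_population.append(cand if fitness(cand) < fx else x)
    return next_population

if __name__ == "__main__":
    rng = random.Random(0)
    lower, upper = [-5.0] * 3, [5.0] * 3
    pop = [[rng.uniform(-5, 5) for _ in range(3)] for _ in range(12)]
    for _ in range(30):
        pop = bwr_generation(pop, sphere, lower, upper, rng)
    print(min(sphere(x) for x in pop))
```

Note that the update uses only the best, the worst, and a randomly chosen solution, so no group-size parameter appears anywhere in the operator.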

Author Contributions

O.R.A.: Conceptualization, Editing, Methodology, and Original Draft; A.K.F.: Methodology, Formal Analysis, and Original Draft; H.K.: Supervision, Resources, Experiment, and Editing. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Data Availability Statement

The data obtained through the experiments are available upon request from the corresponding author.

Conflicts of Interest

The authors declare no conflicts of interest.

References

- Chen, S.; Yang, G.; Cui, G.; Dong, X. Raindrop optimizer: A novel nature-inspired metaheuristic algorithm for artificial intelligence and engineering optimization. Sci. Rep. 2025, 15, 34211.

- Li, J.-P.; Polovina, N.; Konur, S. A Review of AI-Driven Engineering Modelling and Optimization: Methodologies, Applications and Future Directions. Algorithms 2026, 19, 93.

- Kyriklidis, C.; Koutouvou, A.; Moustakas, K.; Karayannis, V.; Tsanaktsidis, C. Artificial Intelligence and Nature-Inspired Techniques on Optimal Biodiesel Production: A Review—Recent Trends. Energies 2025, 18, 768.

- Balaji, P.; Babu, S.; Chaurasia, M.A.; Thiyagarajan, A.; Akleylek, S.; Cengiz, K. A novel human-inspired solution to high-dimensional optimization problems. PeerJ Comput. Sci. 2025, 11, e3344.

- Cui, E.H.; Zhang, Z.; Chen, C.J.; Wong, W.K. Applications of nature-inspired metaheuristic algorithms for tackling optimization problems across disciplines. Sci. Rep. 2024, 14, 9403.

- Abualigah, L.; Yousri, D.; Abd Elaziz, M.; Ewees, A.A.; Al-qaness, M.A.A.; Gandomi, A.H. Aquila Optimizer: A novel meta-heuristic optimization algorithm. Comput. Ind. Eng. 2021, 157, 107250.

- Fu, S.; Li, K.; Huang, H.; Ma, C.; Fan, Q.; Zhu, Y. Red-billed blue magpie optimizer: A novel metaheuristic algorithm for 2D/3D UAV path planning and engineering design problems. Artif. Intell. Rev. 2024, 57, 134.

- Abdollahzadeh, B.; Gharehchopogh, F.S.; Khodadadi, N.; Mirjalili, S. Mountain Gazelle Optimizer: A new Nature-inspired Metaheuristic Algorithm for Global Optimization Problems. Adv. Eng. Softw. 2022, 174, 103282.

- Xue, J.; Shen, B. Dung beetle optimizer: A new meta-heuristic algorithm for global optimization. J. Supercomput. 2023, 79, 7305–7336.

- Xia, H.; Ke, Y.; Liao, R.; Zhang, H. Fractional order dung beetle optimizer with reduction factor for global optimization and industrial engineering optimization problems. Artif. Intell. Rev. 2025, 58, 308.

- Wang, X.; Li, R.; Luo, X.; Guan, X. Improved Differential Mutation Aquila Optimizer-Based Optimization Dispatching Strategy for Hybrid Energy Ship Power System. IEEE Trans. Transp. Electrif. 2025, 11, 12667–12683.

- Vellaiyan, S.; Kandasamy, M.; Arulprakasajothi, M.; Santhanakrishnan, R.; Srimanickam, B.; Elangovan, K. Optimization of fuel modification parameters for effective and environmentally-friendly energy from plant waste biodiesel. Results Eng. 2024, 22, 102177.

- Yousri, D.; Allam, D.; Eteiba, M.B. Optimal photovoltaic array reconfiguration for alleviating the partial shading influence based on a modified harris hawks optimizer. Energy Convers. Manag. 2020, 206, 112470.

- Markkandeyan, S.; Ananth, A.D.; Rajakumaran, M.; Gokila, R.G.; Venkatesan, R.; Lakshmi, B. Novel hybrid deep learning based cyber security threat detection model with optimization algorithm. Cyber Secur. Appl. 2025, 3, 100075.

- Rao, R.V.; Davim, J.P. Optimization of Different Metal Casting Processes Using Three Simple and Efficient Advanced Algorithms. Metals 2025, 15, 1057.

- Rao, R.V.; Shah, R. BMR and BWR: Two simple metaphor-free optimization algorithms for solving real-life non-convex constrained and unconstrained problems. arXiv 2024.

- Varshney, M.; Kumar, P.; Abualigah, L. Hybridizing remora and aquila optimizer with dynamic oppositional learning for structural engineering design problems. J. Comput. Appl. Math. 2025, 462, 116475.

- Al-Majidi, S.D.; Alturfi, A.M.; Al-Nussairi, M.K.; Hussein, R.A.; Salgotra, R.; Abbod, M.F. A robust automatic generation control system based on hybrid Aquila Optimizer-Sine Cosine Algorithm. Results Eng. 2025, 25, 103951.

- Fu, Y.; Liu, D.; Fu, S.; Chen, J.; He, L. Enhanced Aquila optimizer based on tent chaotic mapping and new rules. Sci. Rep. 2024, 14, 3013.

- Cui, H.; Xiao, Y.; Hussien, A.G.; Guo, Y. Multi-strategy boosted Aquila optimizer for function optimization and engineering design problems. Clust. Comput. 2024, 27, 7147–7198.

- Kan, H.; Xiao, Y.; Gao, Z.; Zhang, X. Improved Multi-Strategy Aquila Optimizer for Engineering Optimization Problems. Biomimetics 2025, 10, 620.

- Zeng, L.; Li, M.; Shi, J.; Wang, S. Spiral Aquila Optimizer Based on Dynamic Gaussian Mutation: Applications in Global Optimization and Engineering. Neural Process. Lett. 2023, 55, 11653–11699.

- Bai, L.; Pei, Z.; Wang, J.; Zhou, Y. Multi-Strategy Improved Aquila Optimizer Algorithm and Its Application in Railway Freight Volume Prediction. Electronics 2025, 14, 1621.

- Sharma, H.; Arora, K.; Mahajan, R.; Ansarullah, S.I.; Amin, F.; AlSalman, H. Improved aquila optimizer for swarm-based solutions to complex engineering problems. Sci. Rep. 2024, 14, 30714.

- Wang, Y.; Zhang, Y.; Yan, Y.; Zhao, J.; Gao, Z. An enhanced aquila optimization algorithm with velocity-aided global search mechanism and adaptive opposition-based learning. Math. Biosci. Eng. 2023, 20, 6422–6467.

- Abualigah, L.; Alomari, S.A.; Almomani, M.H.; Abu Zitar, R.; Migdady, H.; Saleem, K.; Smerat, A.; Snasel, V.; Gandomi, A.H. Enhanced aquila optimizer for global optimization and data clustering. Sci. Rep. 2025, 15, 13079.

- Yu, H.; Jia, H.; Zhou, J.; Hussien, A.G. Enhanced Aquila optimizer algorithm for global optimization and constrained engineering problems. Math. Biosci. Eng. 2022, 19, 14173–14211.

- Xiao, Y.; Guo, Y.; Cui, H.; Wang, Y.; Li, J.; Zhang, Y. IHAOAVOA: An improved hybrid aquila optimizer and African vultures optimization algorithm for global optimization problems. Math. Biosci. Eng. 2022, 19, 10963–11017.

- Wang, S.; Jia, H.; Liu, Q.; Zheng, R. An improved hybrid Aquila Optimizer and Harris Hawks Optimization for global optimization. Math. Biosci. Eng. 2021, 18, 7076–7109.

- Zhao, J.; Gao, Z.-M. The heterogeneous Aquila optimization algorithm. Math. Biosci. Eng. 2022, 19, 5867–5904.

- Lu, B.; Xie, Z.; Wei, J.; Gu, Y.; Yan, Y.; Li, Z.; Pan, S.; Cheong, N.; Chen, Y.; Zhou, R. MRBMO: An Enhanced Red-Billed Blue Magpie Optimization Algorithm for Solving Numerical Optimization Challenges. Symmetry 2025, 17, 1295.

- Liang, J.J.; Qu, B.Y.; Suganthan, P.N.; Chen, Q. Problem Definitions and Evaluation Criteria for the CEC 2015 Competition on Learning-Based Real-Parameter Single Objective Optimization; Computational Intelligence Laboratory, Zhengzhou University: Zhengzhou, China; Nanyang Technological University: Singapore, 2014.

- Yue, C.T.; Price, K.V.; Suganthan, P.N.; Liang, J.J.; Ali, M.Z.; Qu, B.Y.; Awad, N.H.; Biswas, P.P. Problem Definitions and Evaluation Criteria for the CEC 2020 Special Session and Competition on Single Objective Bound Constrained Numerical Optimization; Computational Intelligence Laboratory, Zhengzhou University: Zhengzhou, China; Nanyang Technological University: Singapore, 2019.

- Sharma, S.; Kapoor, R.; Dhiman, S. A Novel Hybrid Metaheuristic Based on Augmented Grey Wolf Optimizer and Cuckoo Search for Global Optimization. In Proceedings of the 2021 2nd International Conference on Secure Cyber Computing and Communications (ICSCCC), Jalandhar, India, 21–23 May 2021; pp. 376–381.

- Mirjalili, S.; Mirjalili, S.M.; Lewis, A. Grey Wolf Optimizer. Adv. Eng. Softw. 2014, 69, 46–61.

- Heidari, A.A.; Mirjalili, S.; Faris, H.; Aljarah, I.; Mafarja, M.; Chen, H. Harris hawks optimization: Algorithm and applications. Future Gener. Comput. Syst. 2019, 97, 849–872.

- Talha, A.; Bouayad, A.; Malki, M.O.C. An improved pathfinder algorithm using opposition-based learning for tasks scheduling in cloud environment. J. Comput. Sci. 2022, 64, 101873.

- Yapici, H.; Cetinkaya, N. A new meta-heuristic optimizer: Pathfinder algorithm. Appl. Soft Comput. 2019, 78, 545–568.

- Seyyedabbasi, A.; Kiani, F. Sand Cat swarm optimization: A nature-inspired algorithm to solve global optimization problems. Eng. Comput. 2022, 39, 2627–2651.

- Masadeh, R.; Mahafzah, B.A.; Sharieh, A. Sea Lion Optimization Algorithm. Int. J. Adv. Comput. Sci. Appl. 2019, 10, 388–395.

- Zhong, R.; Hussien, A.G.; Houssein, E.H.; Yu, J. Enhanced crested ibis algorithm: Performance validation in benchmark functions, engineering problems, and application in brain tumor detection. Expert Syst. Appl. 2025, 289, 128231.

- Ezugwu, A.E.; Agushaka, J.O.; Abualigah, L.; Mirjalili, S.; Gandomi, A.H. Prairie Dog Optimization Algorithm. Neural Comput. Appl. 2022, 34, 20017–20065.

- Gandomi, A.H.; Yang, X.-S.; Alavi, A.H. Cuckoo search algorithm: A metaheuristic approach to solve structural optimization problems. Eng. Comput. 2013, 29, 17–35.

- Cao, Y.; Du, X.; Yu, J.; Zhong, R.; Munetomo, M. Dynamic fitness-distance balance competitive swarm optimizer: Performance investigation, engineering Simulation, and application in superconductor critical temperature prediction. Clust. Comput. 2025, 28, 745.

- Cybersecurity Intrusion Detection Dataset. Available online: https://www.kaggle.com/datasets/dnkumars/cybersecurity-intrusion-detection-dataset (accessed on 11 February 2026).

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.