Abstract

Environmental perception is a cornerstone for autonomous underwater vehicles (AUVs) to achieve robust self-localization and scene understanding, which are pivotal for the intelligent management of marine ranching. However, underwater image degradation and weak-textured scenes significantly hinder reliable self-localization and fine-grained environmental perception. To address the perceptual asymmetry arising from these challenges, this paper proposes a robust visual–inertial simultaneous localization and mapping (SLAM) and biomass assessment scheme for marine ranching. Specifically, we first propose a robust tightly coupled underwater visual–inertial localization scheme, which leverages a multi-sensor fusion strategy to mitigate the effect of image degradation on localization in complex underwater environments. Furthermore, we propose a novel underwater scene perception method, which enables the simultaneous visual reconstruction of aquaculture species and the quantitative mapping of their spatial distribution in marine ranching. Finally, we develop a low-cost, agile, and portable multi-sensor integrated system that consolidates autonomous localization and aquaculture biomass assessment modules, with its performance validated through extensive real-world underwater experiments. The experimental results demonstrate that the proposed methods can effectively overcome the interference of complex underwater environments and provide high-precision perception support for both AUV state estimation and aquaculture asset management.

1. Introduction

Marine ranching, as a sustainable fishery model that integrates modern mariculture with ecological restoration, has become a cornerstone of the global blue economy [1,2,3]. Achieving high-efficiency and intelligent management in these complex underwater environments necessitates advanced perception capabilities for biological monitoring and asset assessment [4,5]. Traditional aquaculture survey methods, primarily relying on manual diving or static monitoring platforms, are increasingly inadequate due to high operational costs, low efficiency, and limited spatial coverage, often failing to provide the real-time, large-scale data required for precision decision-making [6,7]. Therefore, autonomous underwater vehicles (AUVs) equipped with multimodal sensors have emerged as a promising solution for achieving autonomous, large-scale, and fine-grained underwater monitoring [8].

However, achieving reliable self-localization for AUVs in marine ranching remains a significant challenge. Due to the rapid attenuation of electromagnetic waves in conductive seawater, conventional radio-based positioning and Global Navigation Satellite Systems (GNSS) are rendered ineffective for submerged operations [9]. While acoustic-based systems offer long-range stability, their practical application is often constrained by high deployment costs, low update rates, and multipath interference in shallow-water ranching environments [10,11]. In particular, modern high-frequency imaging sonar systems, while capable of providing high-resolution morphology in turbid water, involve substantial hardware expenditures that limit large-scale commercial adoption. Furthermore, these acoustic sensors are highly susceptible to multipath interference in the presence of complex cage structures and seabed reflections, which introduces ghost artifacts and complicates the automated extraction of biological features.

In light of these acoustic limitations, visual sensing has emerged as a higher-resolution and more cost-effective alternative for local trajectory estimation [12]. Recent progress in purely vision-based, learning-driven underwater SLAM has introduced promising architectures to address underwater optical challenges. Deep learning models are increasingly utilized to replace traditional geometric pipelines, such as using self-supervised networks to estimate camera ego-motion and dense depth maps directly from monocular sequences [13]. Alternatively, learning-based feature descriptors have been developed and trained specifically on underwater datasets to maintain robust data association under scattering and low-visibility conditions [14,15]. However, the generalization of these end-to-end SLAM frameworks remains constrained by the scarcity of diverse underwater training datasets and the high computational demands of real-time inference on resource-limited AUVs. Furthermore, visual odometry is susceptible to the unique optical properties of water, where light scattering and absorption attenuate feature distinctiveness and induce significant localization drift [16,17]. This susceptibility is further exacerbated during agile AUV maneuvers, where fast camera motion results in motion blur and data association failures [18,19]. To address these compounded limitations, the integration of inertial measurement units (IMUs) has become essential, leveraging the complementary error characteristics of visual and inertial modalities to maintain trajectory consistency during rapid movements [20,21].

Despite the advantages of multi-sensor fusion, ensuring robust performance under the perceptual asymmetry caused by the persistent interference typical of marine ranching remains a significant challenge. In this context, perceptual asymmetry is formally defined as the breakdown of bidirectional consistency during visual tracking, where the forward feature mapping from one frame to the next is no longer mathematically consistent with its reciprocal backward transformation. Addressing this inherent disruption of sensing equilibrium requires more sophisticated, tightly coupled frameworks capable of specifically mitigating degraded visual inputs [22,23]. Moreover, reliable localization alone provides only the geometric foundation, which cannot satisfy the practical requirements of modern aquaculture management. Most current underwater localization and mapping systems focus primarily on obstacle avoidance or trajectory tracking, but they often lack the detailed information needed for biological assessment [1,10,24,25]. To bridge this gap, our work extends the functionality of conventional SLAM by integrating biological semantic perception with spatial mapping. By explicitly modeling the spatial distribution of aquaculture species within the reconstructed 3D environment, the proposed system enables precise biological counting and density estimation. Integrating these biological characteristics with geometric reconstruction to achieve quantitative mapping of species distribution is a key step toward automated biomass assessment in marine ranching [26].

The practical implementation of AUVs in scientific research is frequently hampered by their substantial physical dimensions, excessive weight, and prohibitive capital costs. These attributes present formidable operational challenges within the spatially constrained and structurally complex environments of marine ranching. Specifically, the bulky profiles of traditional platforms significantly escalate collision risks when navigating between cages, artificial reefs, and dense aquaculture moorings [27,28,29]. Furthermore, the reliance of these large-scale systems on specialized launch-and-recovery equipment markedly restricts their maneuverability in shallow or obstacle-rich settings [11,30,31,32]. Beyond these physical limitations, the simultaneous execution of high-precision localization and deep-learning-based perception algorithms imposes a massive computational burden that typically necessitates power-hungry, high-performance workstations [33,34,35]. Such requirements not only curtail operational endurance but also preclude the system’s ability to achieve full task autonomy without external support. Addressing these bottlenecks requires a highly integrated, energy-efficient architecture capable of orchestrating both robust localization and sophisticated biomass assessment modules on a single, compact embedded platform. Integrating these diverse functionalities into a portable and cost-effective device is essential for enabling real-time, on-site monitoring independent of external computing infrastructure, a transition that holds significant engineering value for the intelligent management of marine ranching [7,36,37].

To address these challenges, this paper proposes a robust visual–inertial SLAM and biomass assessment scheme for AUVs. The main contributions of this work are summarized as follows:

- We propose a robust tightly coupled underwater visual–inertial localization scheme, which leverages a multi-sensor fusion strategy to mitigate the effect of image degradation on localization in complex underwater environments.

- We propose a novel underwater scene perception method, which enables the simultaneous visual reconstruction of aquaculture species and the quantitative mapping of their spatial distribution in marine ranching.

- We develop a low-cost, agile, and portable multi-sensor integrated system that consolidates autonomous localization and aquaculture biomass assessment modules, with its performance validated through extensive real-world underwater experiments.

This article is structured in the following manner. Section 2 provides a brief review of some related works. Section 3 presents the proposed visual–inertial SLAM and biomass assessment method for AUVs in detail. In Section 4, we describe the experimental results obtained from both public datasets and water tank environments to validate the performance of the system. Finally, the conclusions and directions for future work are summarized in Section 5.

2. Related Work

Self-localization remains a fundamental prerequisite for AUVs to execute specialized missions such as ecological restoration, infrastructure inspection, and biomass assessment in marine ranching environments. In the early stages of underwater robotics, navigation relied predominantly on magnetic compasses and dead reckoning; however, these approaches are inherently limited by cumulative drift and the inability to provide absolute global positioning without auxiliary velocity data. Currently, the mainstream underwater navigation paradigm is heavily anchored in acoustic sensors, including sonar, Ultra-Short Baseline (USBL), and Doppler Velocity Log (DVL) [28,37,38]. For instance, sophisticated filtering frameworks have been developed to utilize sonar for mapping complex underwater structures, while pose-graph SLAM has been employed to enhance acoustic localization accuracy [31,39]. Despite their long-range stability, the high capital costs of acoustic transducers and the logistical complexity of deploying transponder networks in shallow-water ranching often render these systems economically infeasible for large-scale civil applications [10,11,40].

With the rapid maturation of underwater communication technologies, Underwater Acoustic Sensor Networks (UASNs) have emerged as a pivotal research area for coordinated localization [41]. Recent studies have explored AUV-aided localization schemes for UASNs that incorporate real-time current field estimation to mitigate environmental disturbances [33,42]. To optimize data transmission, researchers have introduced Software-Defined Networking (SDN) architectures for delay-sensitive spatiotemporal routing in distributed sensor nodes [30]. Nevertheless, the operational efficiency of these networks in aquaculture scenarios is frequently constrained by limited bandwidth and high transmission latency. Furthermore, the passive motion of sensor nodes induced by tides or currents introduces significant positioning noise, which complicates the task of maintaining a stable reference frame for autonomous vehicles. These communication bottlenecks necessitate the development of more self-contained, high-resolution sensing modalities that do not rely on external node infrastructures.

Drawing parallels from terrestrial domains, self-driving vehicles and unmanned aerial vehicles (UAVs) typically leverage high-precision 3D LiDAR for spatial awareness and 6-DOF pose estimation [43]. Recent breakthroughs in LiDAR-only odometry have demonstrated exceptional robustness in complex urban landscapes by matching learning-based descriptions of point cloud segments [34]. To further refine performance, multi-modal fusion strategies that combine sparse LiDAR data with dense camera imagery have been widely adopted [44]. However, the application of LiDAR in the subsea domain faces severe physical constraints, because the rapid absorption and scattering of laser energy in seawater and the substantial physical footprint of high-power scanners make them ill-suited for compact, energy-constrained AUVs. Consequently, optical cameras have gained traction as a lightweight, data-rich alternative, offering a cost-effective pathway to achieve high-resolution perception in complex underwater scenes.

High-precision state estimation is essential for autonomous underwater operations. Thus, achieving reliable localization in complex environments has driven research toward robust feature tracking in low-texture or turbid settings [32]. To this end, Wang et al. [23] investigated the integration of point and line features to improve the stability of visual odometry. However, purely visual approaches are highly susceptible to optical scattering and motion blur. Low-cost IMUs are typically integrated to compensate for these visual failures during agile maneuvers. Qin et al. [45,46] proposed a versatile optimization-based framework that treats various sensors as general factors, enabling seamless pose graph optimization across heterogeneous sensor suites. However, this framework is not directly applicable to underwater applications. Campos et al. [47] developed ORB-SLAM3, which extends the feature-based SLAM paradigm to support multi-map management and diverse sensor configurations. He et al. [48] proposed an online visual SLAM system that enables robust localization in dynamic environments without relying on predefined object labels. Despite these advancements, most existing frameworks focus on geometric mapping and state estimation. A significant gap remains between robust localization and the comprehensive scene understanding required for biological assessment.

While robust localization is fundamental, the concurrent development of dense scene representation remains essential for comprehensive perception. Li et al. [49] proposed an end-to-end 3D reconstruction system which achieves fine scene reconstruction without prior information by utilizing a neural implicit encoding. Wang et al. [50] proposed a CPU-based dense surfel mapping system that utilizes superpixels to achieve memory efficiency and employs a pose-graph-based organization to maintain real-time global consistency across various scales. Yang et al. [51] developed SeaSplat, which integrates a physically grounded image formation model with 3D Gaussian Splatting to enable real-time underwater scene rendering and medium effect removal. In addition, some researchers have explored object recognition to enhance perception capabilities. Geng [52] proposed a causal intervention paradigm to improve recognition robustness under limited samples. Tian et al. [53] introduced YOLOv12, which achieves a superior balance between speed and accuracy through an attention-centric architecture, providing a potential backbone for high-performance perception tasks. However, these developments in scene perception typically remain fragmented and are rarely integrated into a unified SLAM framework for the specific requirements of marine ranching.

Unlike existing studies that rely on high-cost sensors for data acquisition, our work develops a unified architecture that couples visual–inertial SLAM with a biological mapping module. By projecting object detection results into a globally consistent 3D model, this approach enables precise spatial distribution analysis and biomass estimation for intelligent marine ranching.

3. Methods

3.1. Overview

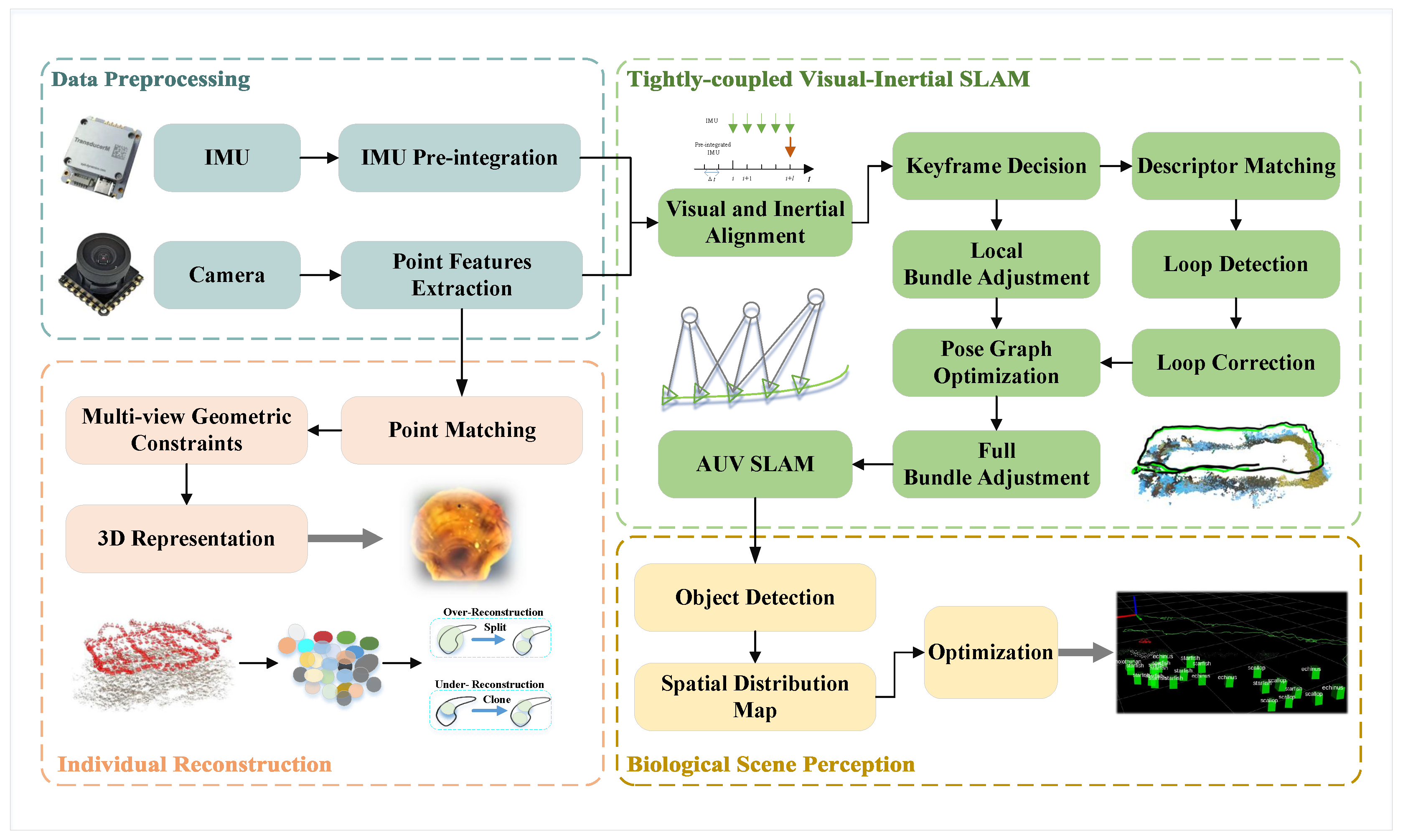

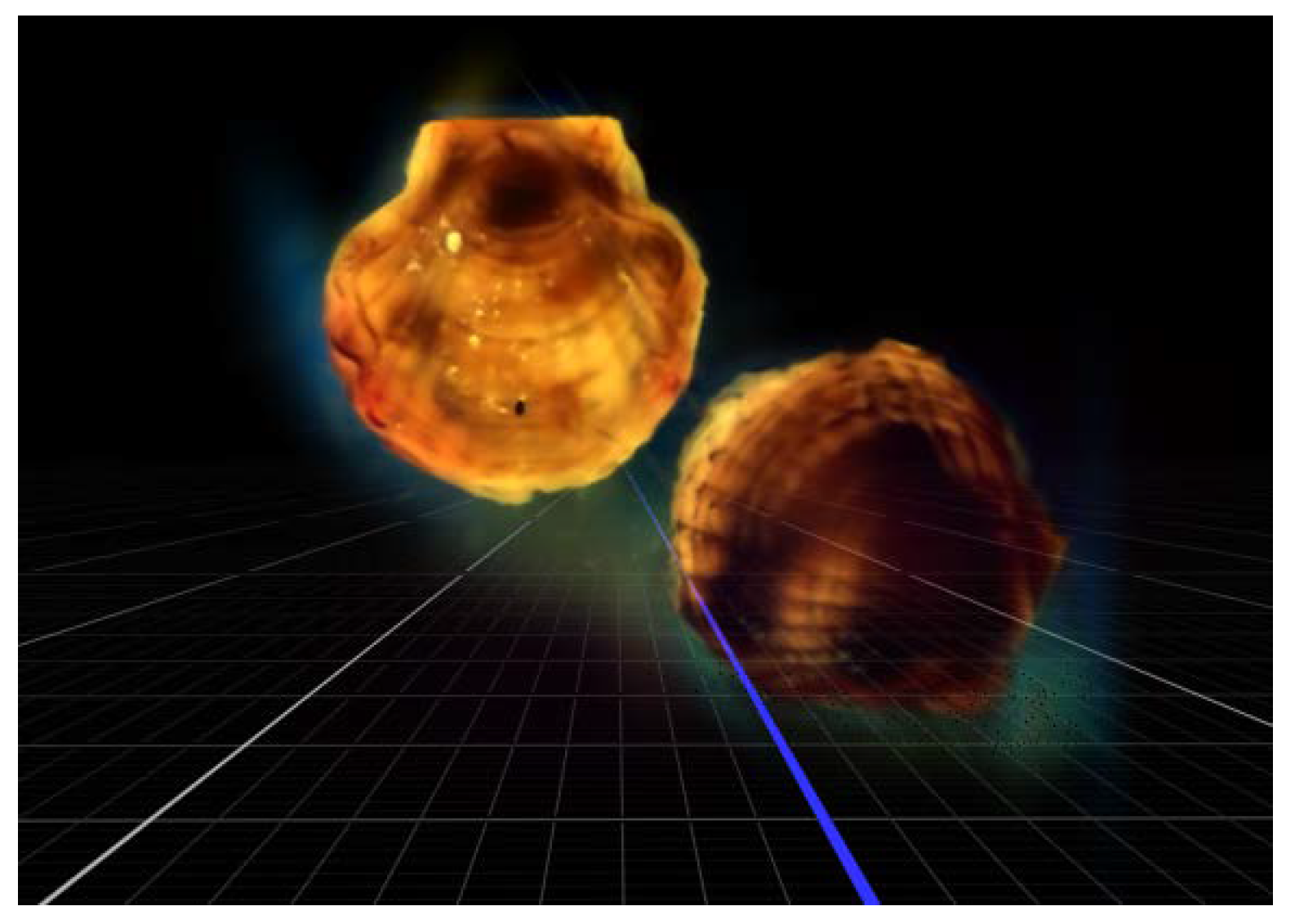

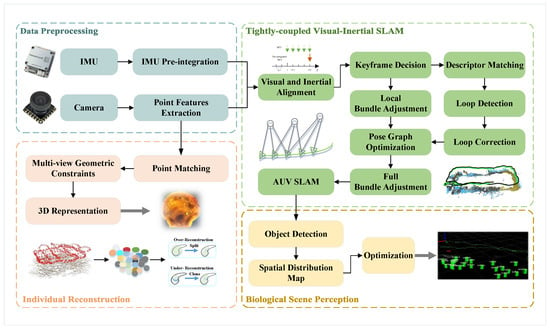

The overall architecture of the proposed visual–inertial SLAM and biomass assessment system is illustrated in Figure 1, comprising a series of concurrent modules designed to ensure spatial consistency within the marine ranching environment. The process initiates with the Data Preprocessing module, where robust visual features are extracted from synchronized frames and IMU measurements are pre-integrated to provide initial motion constraints. Subsequently, the tightly coupled Visual–Inertial SLAM module performs state estimation by minimizing a joint cost function of visual re-projection errors and inertial residuals, effectively mitigating the drift induced by underwater optical degradation. Following the localization stage, the Biological Scene Perception module leverages the estimated AUV trajectory to perform trajectory-based back-projection, which enables the quantitative mapping of species distribution while eliminating the redundant counting of biological targets. Simultaneously, the Individual Reconstruction module utilizes multi-view geometric constraints to generate high-fidelity 3D models of specific specimens, capturing intricate morphological features for biomass assessment.

Figure 1.

The outline of the proposed algorithm.

3.2. Underwater Visual–Inertial SLAM

To achieve robust state estimation and environment representation in visually degraded marine ranching scenarios, we formulate the SLAM task as a nonlinear optimization problem within a sliding window framework. The state vector to be optimized encompasses the kinematic properties of the AUV and the geometry of the observed scene across a window of size $n$. Specifically, the full state vector is defined as:

$$
\mathcal{X} = \left[\mathbf{x}_0, \mathbf{x}_1, \ldots, \mathbf{x}_n, \mathbf{x}_c^b, \lambda_0, \lambda_1, \ldots, \lambda_m\right], \qquad
\mathbf{x}_k = \left[\mathbf{p}_{b_k}^w, \mathbf{v}_{b_k}^w, \mathbf{q}_{b_k}^w, \mathbf{b}_a, \mathbf{b}_g\right], \quad k \in [0, n],
$$

where $m$ denotes the total number of features observed in the sliding window, and the state variable $\mathbf{x}_k$ represents the IMU state at the time of the $k$-th image.

This state consists of the position $\mathbf{p}_{b_k}^w$, velocity $\mathbf{v}_{b_k}^w$, and orientation quaternion $\mathbf{q}_{b_k}^w$ in the world frame, alongside the accelerometer bias $\mathbf{b}_a$ and gyroscope bias $\mathbf{b}_g$. In this formulation, the position and orientation collectively define the pose of the AUV, denoted as $\mathbf{T}_{b_k}^w$, which maps coordinates from the body frame $b_k$ to the world frame $w$.

The extrinsic parameters between the camera frame and the body frame are denoted by $\mathbf{x}_c^b = [\mathbf{p}_c^b, \mathbf{q}_c^b]$, while $\lambda_l$ represents the inverse depth of the $l$-th visual feature, acting as the fundamental geometric element for spatial mapping.

To estimate the optimal state $\mathcal{X}$, we employ a Maximum A Posteriori (MAP) estimation approach, which minimizes the sum of the prior residuals, inertial measurement residuals, and visual reprojection errors. The objective function is formulated as follows:

$$
\min_{\mathcal{X}} \left\{ \left\| \mathbf{r}_p - \mathbf{H}_p \mathcal{X} \right\|^2 + \sum_{k \in \mathcal{B}} \left\| \mathbf{r}_{\mathcal{B}}\!\left(\hat{\mathbf{z}}_{b_{k+1}}^{b_k}, \mathcal{X}\right) \right\|^2 + \sum_{(l,j) \in \mathcal{C}} \rho\!\left( \left\| \mathbf{r}_{\mathcal{C}}\!\left(\hat{\mathbf{z}}_l^{c_j}, \mathcal{X}\right) \right\|^2_{\mathbf{W}_l} \right) \right\},
$$

where $\mathbf{r}_p$ and $\mathbf{H}_p$ encapsulate the prior information derived from marginalization; $\mathcal{B}$ represents the set of IMU pre-integration measurements; and $\mathcal{C}$ denotes the set of visual observations within the window.

The construction of the residual terms is specifically tailored to address the challenges of underwater perception. The inertial residual $\mathbf{r}_{\mathcal{B}}$ is computed using the pre-integration theory, providing a rigid motion constraint that allows the system to maintain trajectory continuity during temporary visual degradation. For the visual term, $\mathbf{r}_{\mathcal{C}}$ represents the reprojection error of the $l$-th feature. In contrast to general-purpose frameworks, we introduce an Environmental Reliability Matrix $\mathbf{W}_l$ to account for the non-uniform light absorption and scattering characteristics of water. This diagonal matrix is derived from local image statistics by quantifying the local texture richness and the temporal stability of each feature track. Specifically, the diagonal elements are computed as a function of the local image contrast, which reflects the signal-to-noise ratio under turbid conditions, and the historical tracking consistency of the feature across the sliding window. This mechanism assigns higher confidence to features with distinct morphological traits and stable motion trajectories, thereby providing a physics-informed weight for the optimization task. Furthermore, the Huber robust loss function $\rho(\cdot)$ is applied to mitigate the impact of outliers caused by moving biological targets, ensuring that the optimization process remains convergent despite the dynamic disturbances.
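The weighting idea can be illustrated with a minimal sketch. The text does not give the exact functional form of the reliability weight, so everything below is an assumption made for illustration: the product form of `reliability_weight`, the saturation constant `contrast_ref`, and the choice to apply the weight as a scalar multiplier on the squared reprojection residual before the Huber loss.

```python
import math


def reliability_weight(contrast, tracked_frames, window_size, contrast_ref=0.2):
    """Hypothetical per-feature reliability in [0, 1].

    contrast       : non-negative local image contrast around the feature
    tracked_frames : number of window frames in which the feature was tracked
    window_size    : number of frames in the sliding window
    contrast_ref   : assumed contrast at which the texture term saturates
    """
    contrast_term = min(contrast / contrast_ref, 1.0)   # texture richness
    track_term = min(tracked_frames / window_size, 1.0)  # tracking consistency
    return contrast_term * track_term


def huber(sq_norm, delta=1.0):
    """Huber loss applied to a squared residual norm."""
    if sq_norm <= delta * delta:
        return sq_norm                                   # quadratic region
    return 2.0 * delta * math.sqrt(sq_norm) - delta * delta  # linear region


def weighted_visual_cost(residual, weight, delta=1.0):
    """Robust cost of one reprojection residual (in pixels) scaled by its weight."""
    sq_norm = weight * sum(r * r for r in residual)
    return huber(sq_norm, delta)
```

With these assumed forms, a low-contrast feature tracked in only half of the window contributes a quarter of the squared error of a fully reliable one, and large residuals from moving animals grow linearly rather than quadratically.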

Finally, the system constructs a spatially consistent map by organizing landmarks according to the optimized pose graph. Following the principles of scalable mapping, each feature is associated with its corresponding keyframe pose, ensuring that the global geometry can be updated efficiently when a loop closure is detected. For each landmark, the system maintains its visibility history and local normal vector to facilitate subsequent biomass assessment. To guarantee real-time performance on the compact embedded platform, a selective marginalization strategy using the Schur complement is implemented, which bounds the computational complexity while preserving the historical geometric information necessary for long-term underwater operations.
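As a small illustration of Schur-complement marginalization, the sketch below eliminates one scalar variable from the normal equations `H x = b`. It is a generic textbook construction, not the selective strategy used in the system: which states are marginalized and in what order is not specified here, and the function name `marginalize` is hypothetical.

```python
def marginalize(H, b, i):
    """Eliminate variable i from H x = b using the Schur complement.

    H is a symmetric positive-definite matrix given as nested lists and b is
    the right-hand side. The returned reduced system has the same solution
    for the remaining variables as the full system.
    """
    pivot = H[i][i]
    keep = [j for j in range(len(b)) if j != i]
    # H_rr - H_ri * H_ii^{-1} * H_ir
    H_new = [[H[j][k] - H[j][i] * H[i][k] / pivot for k in keep] for j in keep]
    # b_r - H_ri * H_ii^{-1} * b_i
    b_new = [b[j] - H[j][i] * b[i] / pivot for j in keep]
    return H_new, b_new
```

Applying the function repeatedly removes a whole block of old states while their information is retained as a prior on the states that remain in the window.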

3.3. Biological Scene Perception and Reconstruction

This section delineates the methodology for transforming sparse geometric cues into actionable biological intelligence through integrated scene perception and individual specimen reconstruction. By leveraging the optimized trajectory and the refined landmarks from the SLAM module, the system facilitates both precise population density analysis via spatial de-duplication and high-fidelity morphological modeling through depth-aware physical compensation. The core of this process lies in the spatial synchronization between 2D biological detections and the global 3D coordinate system, ensuring that each identified specimen is uniquely localized and its intrinsic optical properties are accurately restored.
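The link between a 2D detection and the global 3D frame can be sketched with a pinhole back-projection. This is a minimal example under stated assumptions: an undistorted pinhole camera with intrinsics `fx`, `fy`, `cx`, `cy`, a depth value for the detection center (for instance taken from nearby SLAM landmarks), and a camera-to-world pose given as a rotation matrix `R_wc` and translation `t_wc`. None of these names come from the original system.

```python
def back_project(u, v, depth, fx, fy, cx, cy, R_wc, t_wc):
    """Map a pixel (u, v) with known depth to a 3D point in the world frame.

    R_wc (3x3 nested lists) and t_wc (length 3) describe the camera pose in
    the world frame, so that p_world = R_wc * p_camera + t_wc.
    """
    # Pixel to camera-frame point using the pinhole model.
    p_c = ((u - cx) / fx * depth, (v - cy) / fy * depth, depth)
    # Camera frame to world frame.
    return tuple(
        sum(R_wc[r][c] * p_c[c] for c in range(3)) + t_wc[r] for r in range(3)
    )
```

Detections of the same animal seen from different poses then map to nearby world coordinates, which is what makes spatial de-duplication possible.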

To achieve accurate population density analysis while avoiding redundant counting, we implement a trajectory-based back-projection mechanism. As the AUV traverses the survey grid, the 2D detection results of biological targets are projected into the 3D space. By utilizing the synchronized camera poses, the system resolves spatial overlaps and assigns a unique spatial coordinate to each identified specimen. To maintain global consistency, biological landmarks are organized according to the optimized pose graph. When a loop closure is detected, a map deformation is performed to update each landmark position $\mathbf{p}_l$ to $\mathbf{p}_l'$ as follows:

$$
\mathbf{p}_l' = \sum_{k \in \mathcal{N}_l} w_k \, \mathbf{T}_k' \, \mathbf{T}_k^{-1} \, \mathbf{p}_l,
$$

where $\mathcal{N}_l$ is the set of neighboring keyframes influencing the landmark, $w_k$ is the proximity-based weight, $\mathbf{T}_k'$ is the updated pose, and $\mathbf{T}_k$ denotes the historical pose estimate prior to global optimization.

This relative transformation ensures that biological landmarks are re-aligned with the corrected trajectory, effectively eliminating redundant counts caused by cumulative sensor drift or overlapping views. By propagating the trajectory refinement to the spatial biological distribution, the system maintains a consistent mapping state. Consequently, the population density can be calculated accurately within specific sub-regions of the aquaculture tank, providing a reliable metric for biomass assessment.
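The re-alignment and de-duplication steps can be sketched as follows. The sketch assumes that poses are given as `(R, t)` pairs mapping keyframe coordinates to the world frame, that the proximity weights are normalized to sum to one, and that duplicates are merged greedily within a fixed radius. The normalization, the greedy rule, and the `radius` parameter are illustrative assumptions rather than details taken from the system.

```python
import math


def to_local(R, t, p):
    """Express world point p in the frame of pose (R, t): R^T (p - t)."""
    d = [p[i] - t[i] for i in range(3)]
    return [sum(R[i][r] * d[i] for i in range(3)) for r in range(3)]


def to_world(R, t, p):
    """Map a point from the frame of pose (R, t) to the world frame."""
    return [sum(R[r][c] * p[c] for c in range(3)) + t[r] for r in range(3)]


def deform_landmark(p, old_poses, new_poses, weights):
    """Weighted re-alignment of landmark p after the keyframe poses change."""
    total = sum(weights)
    out = [0.0, 0.0, 0.0]
    for (R_old, t_old), (R_new, t_new), w in zip(old_poses, new_poses, weights):
        # Keep the landmark fixed relative to the keyframe, then re-express it.
        moved = to_world(R_new, t_new, to_local(R_old, t_old, p))
        for a in range(3):
            out[a] += (w / total) * moved[a]
    return out


def count_unique(points, radius):
    """Greedy de-duplication: a point closer than radius to a kept one is a repeat."""
    kept = []
    for p in points:
        if all(math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q))) > radius for q in kept):
            kept.append(p)
    return len(kept)
```

Dividing the resulting count in a sub-region by that sub-region's area gives a density figure of the kind described above.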

Following the establishment of a consistent spatial reference, the system performs fine-grained 3D reconstruction of specific individuals. To mitigate the adverse effects of underwater light scattering and absorption, we incorporate a physically grounded image formation model. The observed radiance $I$ is modeled using the distance $d$ derived from the SLAM landmarks:

$$
I = J e^{-\beta d} + B \left(1 - e^{-\beta d}\right),
$$

where $J$ represents the recovered true radiance of the biological target, $\beta$ is the medium attenuation coefficient, and $B$ denotes the background light. By inverting this model, we recover the inherent color and morphological details of the specimens.
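The inversion can be written out per pixel and per color channel. The sketch below assumes the common single-coefficient form of the model, in which the observed value is `J * exp(-beta * d) + B * (1 - exp(-beta * d))`; the function names and the scalar interface are illustrative.

```python
import math


def degrade(J, d, beta, B):
    """Forward model: observed radiance for true radiance J at distance d."""
    t = math.exp(-beta * d)  # transmission along the line of sight
    return J * t + B * (1.0 - t)


def restore_radiance(I, d, beta, B):
    """Invert the forward model to recover the true radiance from I."""
    t = math.exp(-beta * d)
    return (I - B * (1.0 - t)) / t
```

Because the transmission shrinks exponentially with distance, the division amplifies sensor noise for far-away pixels, so in practice the recovered values are usually clipped to the valid intensity range.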

Building upon these compensated observations, we employ a 3D Gaussian representation to model the geometry of organisms. Each specimen is represented by a set of 3D Gaussians characterized by a spatial covariance matrix $\boldsymbol{\Sigma}$. To ensure that the covariance remains positive semi-definite during optimization, it is decomposed as follows:

$$
\boldsymbol{\Sigma} = \mathbf{R} \mathbf{S} \mathbf{S}^{\top} \mathbf{R}^{\top},
$$

where $\mathbf{R}$ and $\mathbf{S}$ denote the rotation and scaling matrices, respectively.
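A short sketch shows why this factorization keeps the covariance valid: any matrix of the form `M M^T` with `M = R S` is symmetric and positive semi-definite by construction. Parameterizing the rotation by a unit quaternion `(w, x, y, z)` and the scaling by three per-axis values is an assumption borrowed from common 3D Gaussian Splatting practice.

```python
import math


def quat_to_rot(w, x, y, z):
    """Rotation matrix of a quaternion (normalized internally)."""
    n = math.sqrt(w * w + x * x + y * y + z * z)
    w, x, y, z = w / n, x / n, y / n, z / n
    return [
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ]


def gaussian_covariance(quat, scale):
    """Covariance R S S^T R^T built from a quaternion and per-axis scales."""
    R = quat_to_rot(*quat)
    # M = R S, where S is the diagonal scaling matrix.
    M = [[R[r][c] * scale[c] for c in range(3)] for r in range(3)]
    # Sigma = M M^T.
    return [[sum(M[r][k] * M[c][k] for k in range(3)) for c in range(3)] for r in range(3)]
```

The optimizer can therefore update the quaternion and the scales freely without ever producing an invalid covariance.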

The learned parameters, including the spatial distribution, the covariance $\boldsymbol{\Sigma}$, and the opacity $\alpha$, are optimized by minimizing the following loss function:

$$
\mathcal{L} = (1 - \lambda) \left\| \hat{I} - J \right\|_1 + \lambda \, \mathcal{L}_{\mathrm{SSIM}}\!\left(\hat{I}, J\right),
$$

where $\hat{I}$ represents the radiance synthesized through differentiable rendering of the Gaussian primitives, and $J$ denotes the reference radiance recovered from the physical model. The term $\|\cdot\|_1$ defines the $L_1$ norm measuring the pixel-level absolute color difference between the rendered and reference images. The balancing coefficient $\lambda$ reconciles the pixel-level error with the Structural Similarity (SSIM) loss to ensure both intensity accuracy and morphological integrity. According to our sensitivity analysis, the reconstruction performance remains highly stable within the intermediate range of $\lambda \in [0.4, 0.6]$, where the framework effectively captures fine morphological features, such as radial textures of scallops, without significant grayscale distortion. In this study, $\lambda$ is set to 0.5 to provide an equitable weight distribution for multi-species biological modeling.
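The balance between the two terms can be made concrete with a simplified sketch. It assumes images flattened to lists of intensities in [0, 1], takes the SSIM loss to be `1 - SSIM`, and evaluates SSIM once over the whole image instead of over local windows. The last point is a deliberate simplification to keep the example short, and the function names are illustrative.

```python
def _mean(values):
    return sum(values) / len(values)


def _cov(a, b):
    """Population covariance of two equally long sequences."""
    ma, mb = _mean(a), _mean(b)
    return sum((x - ma) * (y - mb) for x, y in zip(a, b)) / len(a)


def global_ssim(a, b, c1=0.01 ** 2, c2=0.03 ** 2):
    """SSIM computed over the whole image (single window, unit dynamic range)."""
    ma, mb = _mean(a), _mean(b)
    num = (2 * ma * mb + c1) * (2 * _cov(a, b) + c2)
    den = (ma * ma + mb * mb + c1) * (_cov(a, a) + _cov(b, b) + c2)
    return num / den


def reconstruction_loss(rendered, reference, lam=0.5):
    """(1 - lam) * mean absolute error + lam * (1 - SSIM)."""
    l1 = _mean([abs(r - t) for r, t in zip(rendered, reference)])
    return (1.0 - lam) * l1 + lam * (1.0 - global_ssim(rendered, reference))
```

With `lam = 0.5`, the intensity error and the structural term contribute equally, which matches the setting reported above.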

Through this optimization, the framework integrates multi-view radiance information into a unified 3D Gaussian representation. The resulting geometric model preserves the spatial structure and surface appearance of the identified specimens as a finalized 3D representation.

4. Experiments and Analysis

4.1. Experimental Setup

To rigorously evaluate the proposed integrated scheme, the experimental validation is divided into two phases: (1) a comparative study on standardized public datasets to benchmark localization precision, and (2) real-world trials in a large-scale indoor experimental tank (23 m × 8 m × 2 m) to verify system integration and biomass mapping capabilities. The proposed algorithmic architecture is implemented using C++11 and Python 3.6 within the Robot Operating System (ROS) Melodic framework, running on Ubuntu 18.04. All onboard computations are executed on the NVIDIA Jetson AGX Orin high-performance embedded platform, ensuring the seamless integration and execution of the SLAM and the perception modules.

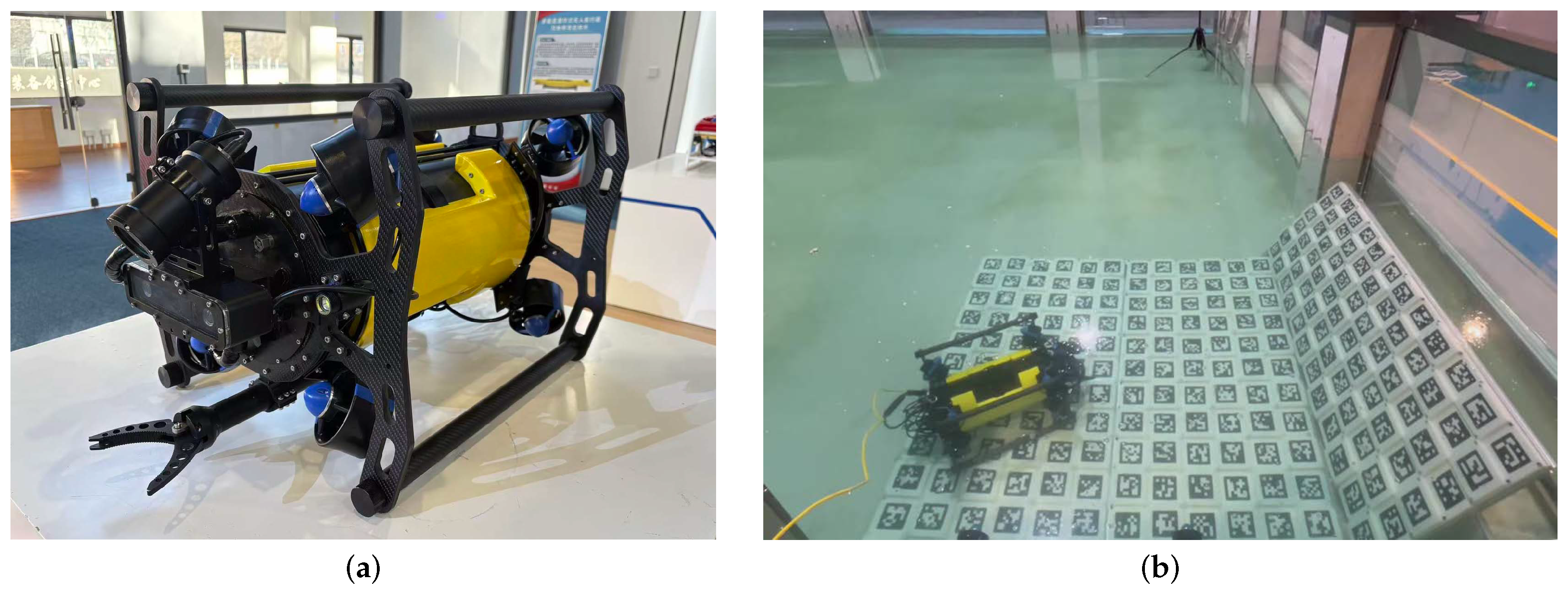

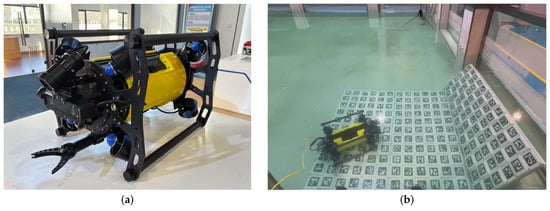

The customized AUV prototype developed for this research features a compact structural design () to facilitate agile maneuvering in complex underwater settings, as shown in Figure 2. The hardware configuration of the testbed is summarized as follows:

Figure 2.

The customized AUV testbed. (a) External view of the prototype. (b) Real-world testing in a water tank.

- Visual–Inertial Module: A forward-looking stereo global shutter camera ( resolution) is utilized to ensure high-fidelity image acquisition and minimize motion blur. This is rigidly coupled with a high-frequency IMU to provide synchronized motion constraints for the tightly coupled estimator.

- Propulsion and Power: The vehicle is powered by a high-capacity lithium-ion battery (25.2 V DC, 26 Ah; Shanghai Nangyuan Electronic Technology Co., Ltd., Shanghai, China). The propulsion system features an eight-thruster configuration, which provides full 6-DOF maneuverability to ensure stable and precise trajectory tracking during autonomous surveys.

- Auxiliary Systems: To maintain robust feature extraction in low-visibility conditions, dual high-intensity LED arrays are integrated into the front housing to provide consistent active illumination.

4.2. Experimental Results on Public Datasets

The localization accuracy of the proposed visual–inertial SLAM module is first validated using the AQUALOC dataset [54]. This dataset is a specialized benchmark for underwater localization, providing synchronized grayscale stereo imagery and IMU data recorded in environments such as harbor seafloors and archaeological sites. These sequences present typical underwater challenges, including suspended particles and low-texture substrates, which test the robustness of the state estimation under degraded visual conditions.

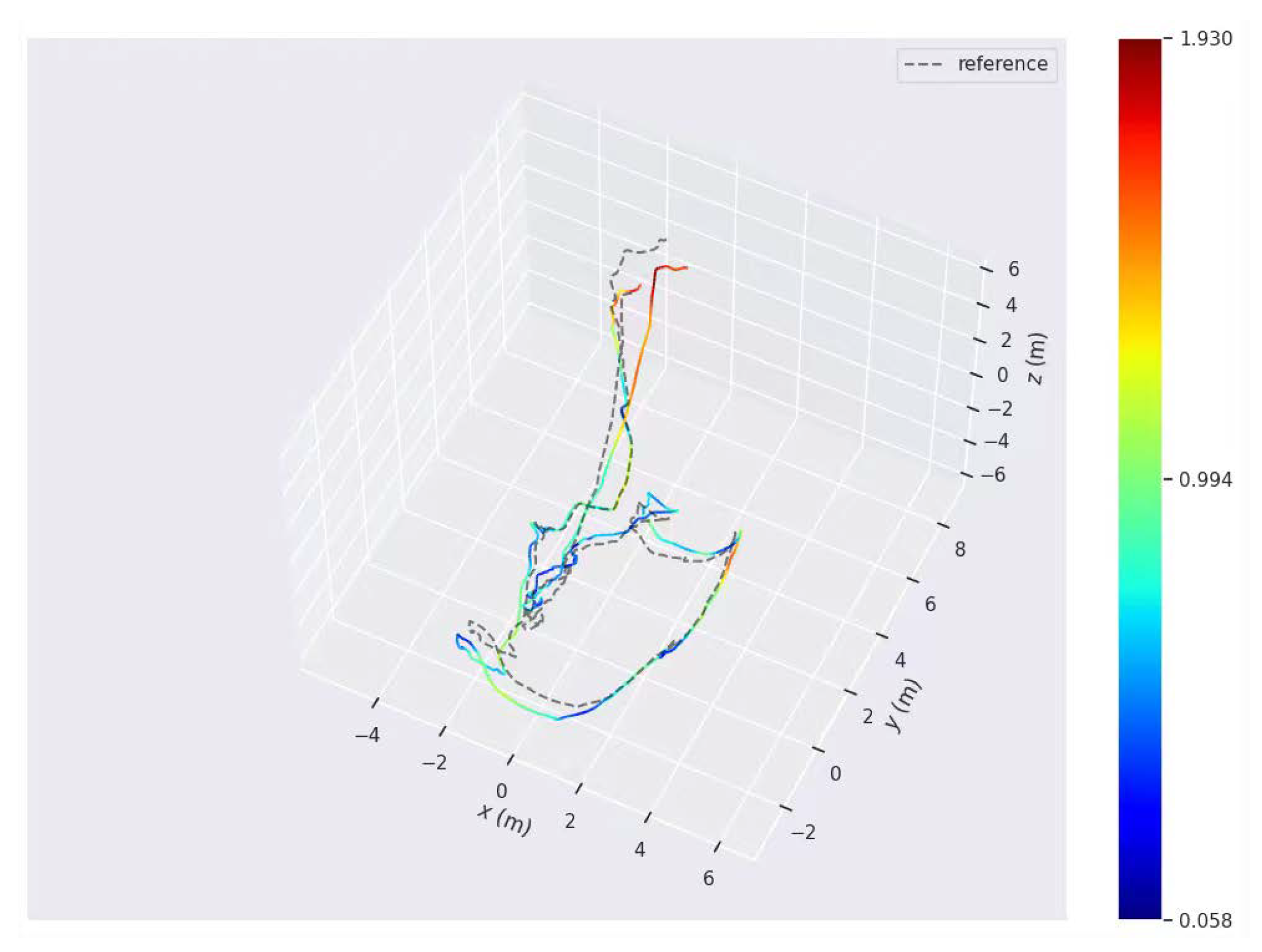

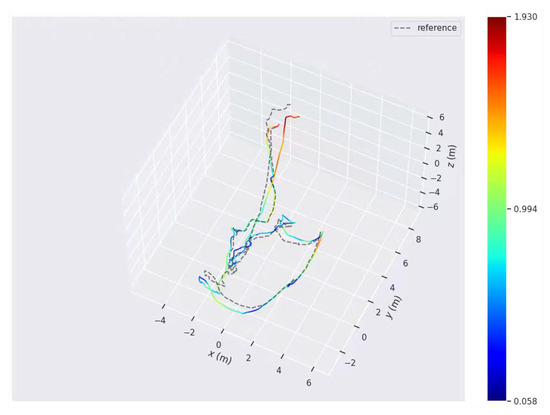

In this phase, the Absolute Trajectory Error (ATE) is employed as the primary quantitative metric to evaluate the global consistency of the estimated paths. The quantitative evaluation results are illustrated in Figure 3. The experimental results demonstrate that our proposed method achieves stable and continuous trajectory estimation throughout the tested sequences. By effectively integrating the IMU pre-integration with visual features, the system successfully suppresses the cumulative drift often encountered in purely visual-based methods, ensuring high localization precision even during complex maneuvering.
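For reference, the ATE is usually summarized as the root mean square of the per-pose translational differences. The sketch below assumes the estimated and ground-truth trajectories are already time-associated and expressed in a common frame; the rigid alignment step that normally precedes this computation is omitted for brevity, and the function name is illustrative.

```python
import math


def ate_rmse(estimated, ground_truth):
    """RMSE of translational errors between two aligned, equally long trajectories.

    Each trajectory is a sequence of (x, y, z) positions.
    """
    if len(estimated) != len(ground_truth) or not estimated:
        raise ValueError("trajectories must be non-empty and of equal length")
    squared = [
        sum((e - g) ** 2 for e, g in zip(p_est, p_gt))
        for p_est, p_gt in zip(estimated, ground_truth)
    ]
    return math.sqrt(sum(squared) / len(squared))
```

Because the errors are squared before averaging, a few large deviations dominate the metric, which makes it sensitive to the drift episodes that degraded underwater imagery tends to cause.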

Figure 3.

Comparison of the estimated trajectories and ground truth for sequences in the AQUALOC dataset, illustrating the ATE performance.

To further verify the generalization capability of the proposed scheme across diverse underwater environments, we conducted additional evaluations on the HAUD (High-Accuracy Underwater Dataset) [55]. This dataset, developed by Shanghai Jiao Tong University, provides a high-precision benchmark featuring synchronized stereo imagery and high-frequency IMU data. Recorded in various indoor pool settings with complex underwater structures, the HAUD sequences offer millimeter-level ground truth captured by an underwater motion capture system, making it an ideal platform to test the stability of our SLAM system in controlled yet challenging scenarios.

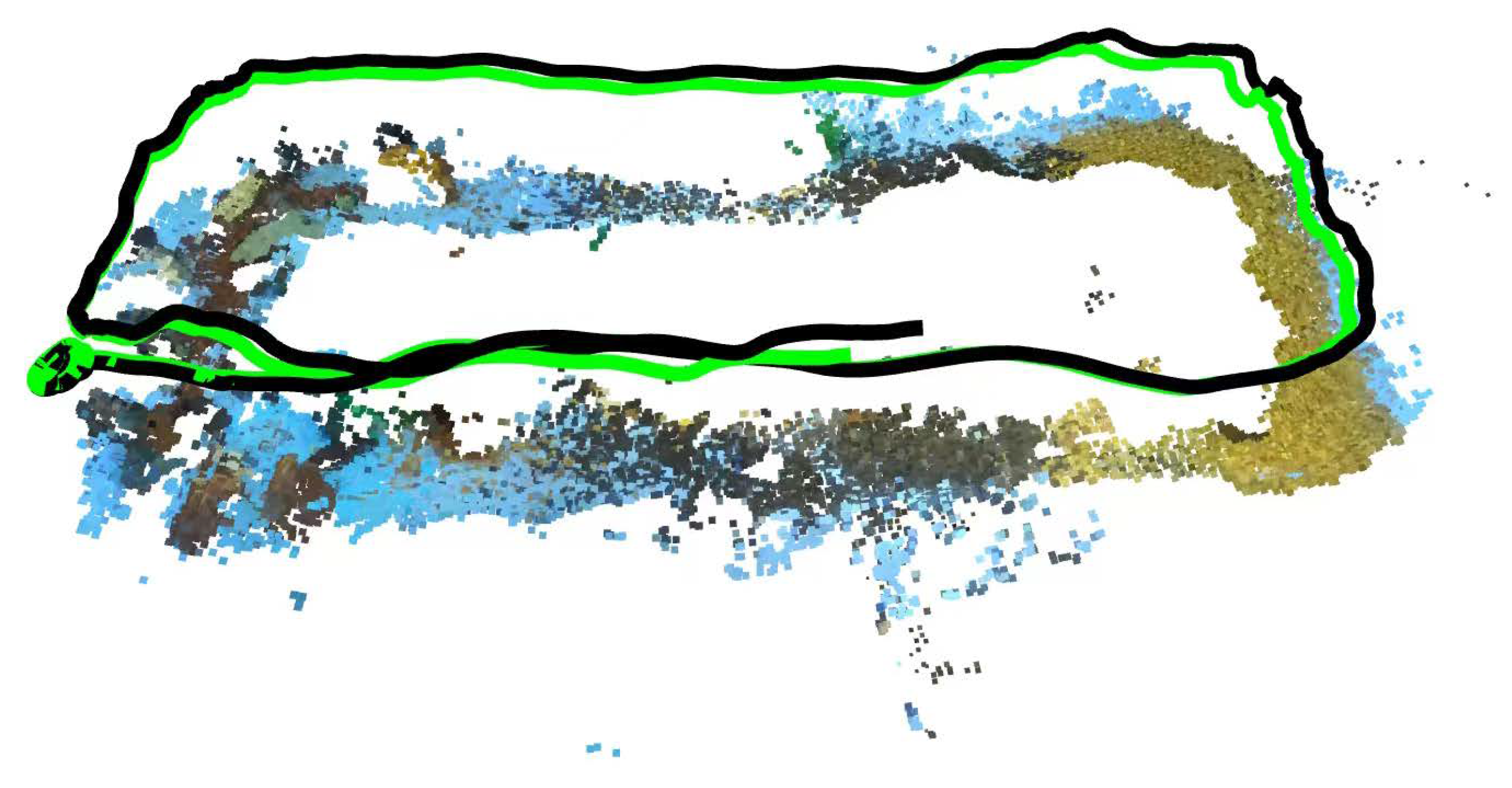

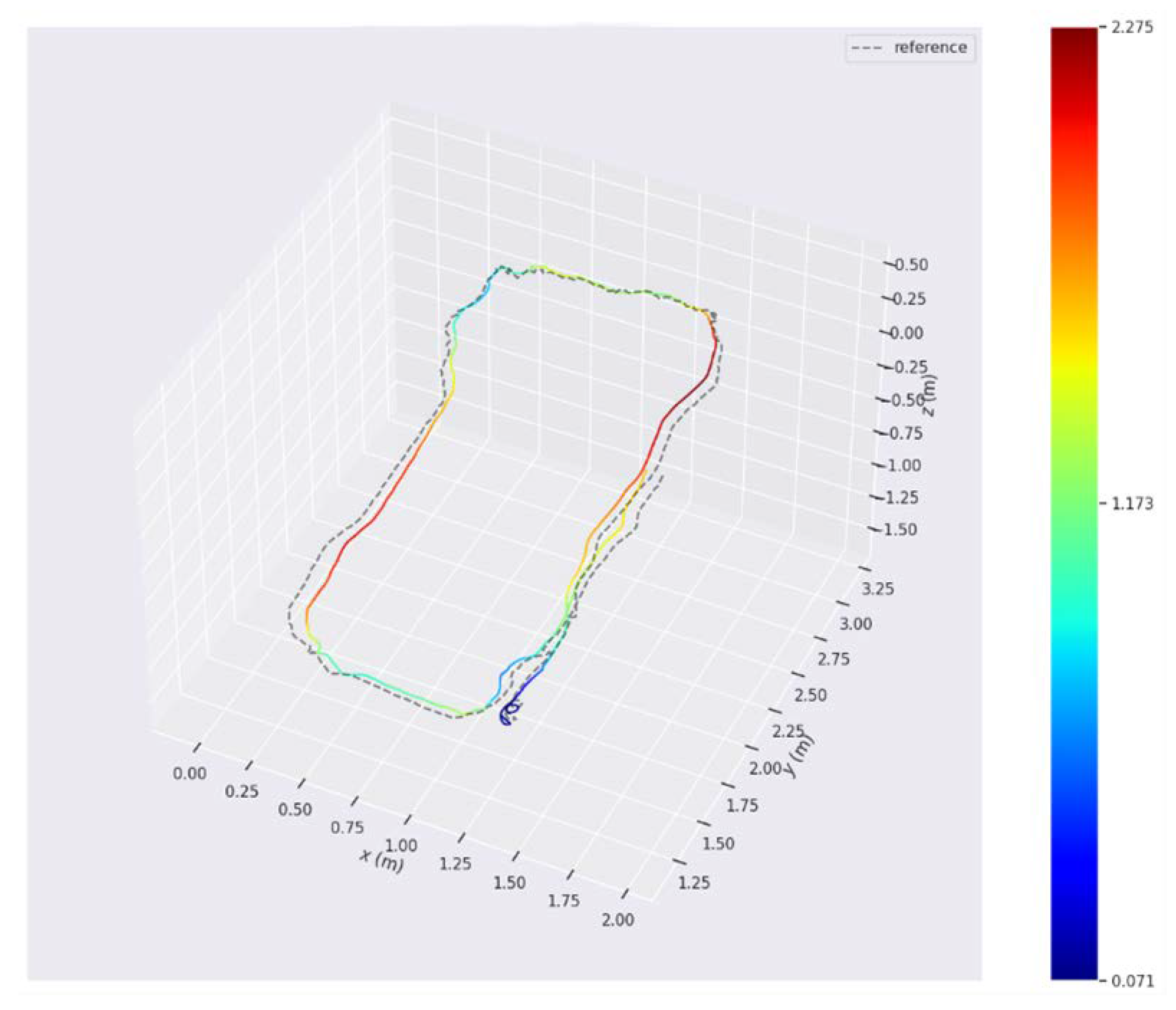

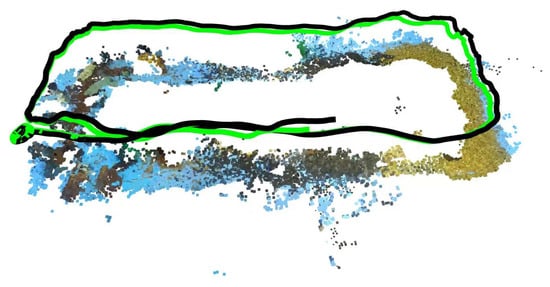

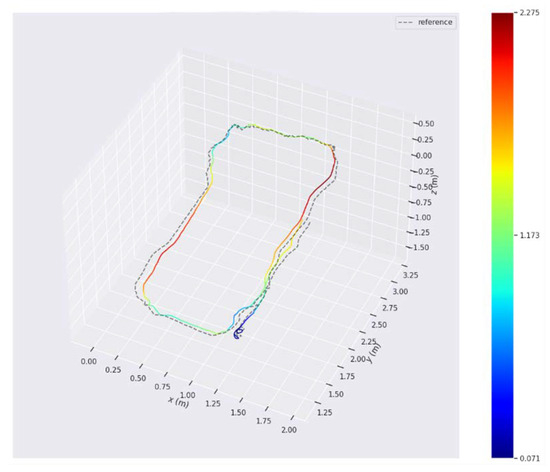

The qualitative results of localization and 3D mapping on the HAUD dataset are illustrated in Figure 4. To provide a detailed quantitative assessment of the localization accuracy, the ATE for these sequences is presented in Figure 5. The experimental visualization demonstrates that our method maintains a smooth and continuous trajectory that closely aligns with the actual movement path. Even in pool environments characterized by repetitive floor textures or sparse visual features, the generated 3D map remains spatially consistent without significant drift or distortion. These qualitative results confirm that the tightly coupled visual–inertial optimization effectively handles the complexities of indoor underwater scenes, providing a robust spatial framework for high-fidelity scene perception and localized biomass estimation.

Figure 4.

Qualitative visualization of the localization and 3D mapping performance on the HAUD dataset. The black curve represents the real-time estimated trajectory, while the green curve indicates the trajectory optimized by loop closure detection. The smooth trajectory and consistent 3D map structure validate the robustness of the tightly coupled visual–inertial optimization under repetitive textures and sparse visual features.

Figure 5.

Comparison of the estimated trajectories and ground truth for sequences in the HAUD dataset, illustrating the ATE performance.

To ensure a rigorous and fair evaluation, the baseline models, including VINS-Mono and ORB-SLAM3, were configured with their original default parameters while sharing identical sensor inputs, such as image resolution and frame rate, with the proposed system. This approach provides a standardized benchmark to assess the intrinsic generalization capability of these general-purpose SLAM frameworks under the specific constraints of underwater biological mapping. The detailed quantitative statistics, including the ATE and Root Mean Square Error (RMSE) for the HAUD dataset, are summarized in Table 1. The numerical results indicate that our method maintains a stable error profile across varying motion patterns. Overall, the consistent performance across these benchmarks confirms the robustness of the proposed spatial framework. The high localization precision ensures accurate 3D spatial registration for biological targets, providing a reliable foundation for high-fidelity scene perception and localized biomass estimation in subsequent aquaculture tasks.

Table 1.

Comparison of localization accuracy.

4.3. Experimental Results in Large-Scale Tank

4.3.1. Experimental Design and System Real-Time Performance

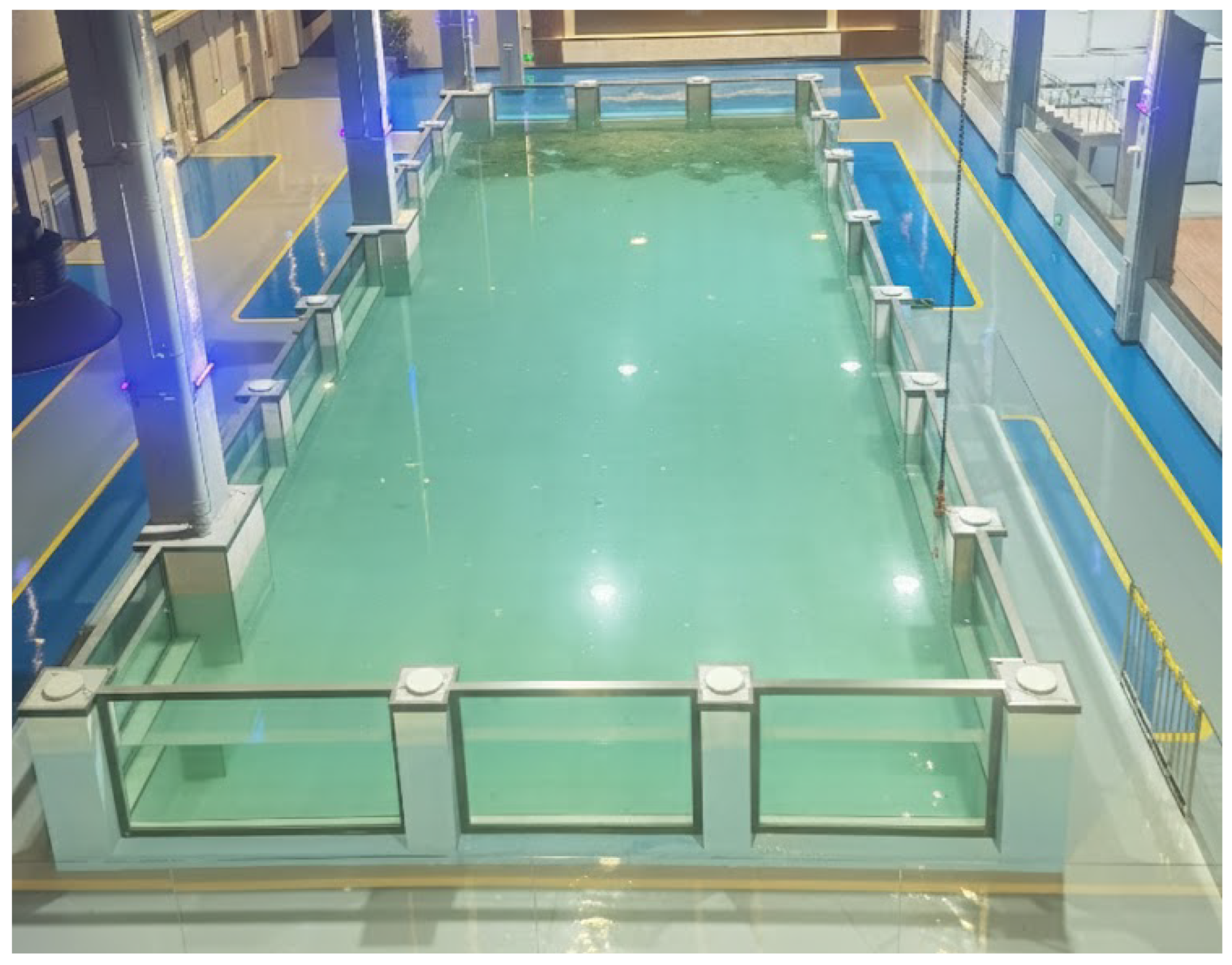

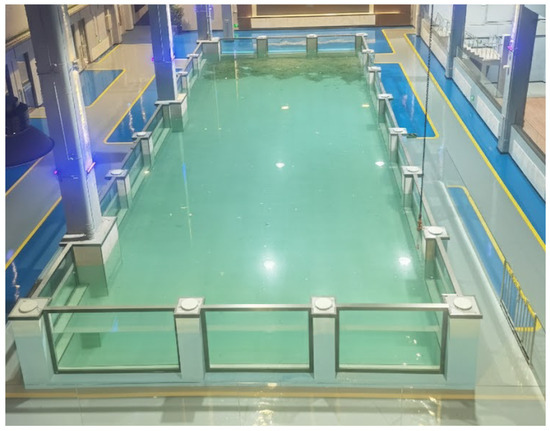

To evaluate the practical effectiveness of the proposed system in a real-world aquaculture-like scenario, field trials are conducted in a large-scale experimental tank, as shown in Figure 6. This experimental environment is specifically designed as a dual-validation platform where the robustness of the visual–inertial SLAM under long-range trajectory maneuvers is assessed concurrently with the accuracy of underwater scene perception and localized biomass estimation. By performing these tasks simultaneously, the trials focus on the spatio-temporal alignment between the state estimation module and the biological perception frontend, ensuring that individual biological detections are accurately mapped into a persistent 3D coordinate system for reliable biomass assessment.

Figure 6.

Overview of the large-scale experimental tank (23 × 8 × 2). The setup simulates a representative aquaculture environment to evaluate the synergistic performance of visual–inertial SLAM and biological biomass estimation modules.

The entire algorithmic pipeline, including the tightly coupled SLAM and the deep-learning-based biological detection module, is deployed on the NVIDIA Jetson AGX Orin platform. During the survey maneuvers, the system maintained a stable processing rate, with the SLAM backend operating at approximately 30 Hz and the perception frontend at 30 FPS. This synchronized execution ensures that each biological detection is precisely timestamped and associated with a spatial coordinate, which is critical for avoiding redundant counting in biomass estimation.
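The timestamp association described above can be sketched as a nearest-neighbour lookup over the pose stream. This is a minimal illustration, not the system's actual implementation: the function name `associate_pose`, the `max_gap` tolerance, and the assumption that pose timestamps are sorted and expressed in seconds are all hypothetical.

```python
from bisect import bisect_left


def associate_pose(pose_stamps, t_det, max_gap=0.02):
    """Return the index of the pose closest in time to a detection.

    pose_stamps: sorted list of pose timestamps in seconds (assumed).
    t_det:       timestamp of the detection frame in seconds.
    max_gap:     hypothetical tolerance; detections with no pose within
                 this gap are rejected (None) instead of being mapped.
    """
    if not pose_stamps:
        return None
    i = bisect_left(pose_stamps, t_det)
    # Only the neighbours on either side of the insertion point can be closest.
    candidates = [j for j in (i - 1, i) if 0 <= j < len(pose_stamps)]
    best = min(candidates, key=lambda j: abs(pose_stamps[j] - t_det))
    return best if abs(pose_stamps[best] - t_det) <= max_gap else None


# Poses at roughly 30 Hz, matching the rate reported in the text.
stamps = [k / 30.0 for k in range(4)]
pose_index = associate_pose(stamps, 0.035)
```

Rejecting detections that fall outside the tolerance, rather than snapping them to a distant pose, keeps a dropped frame from placing a specimen at the wrong location.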

4.3.2. Visual–Inertial SLAM and Perception for Biomass Estimation

The functional coordination between the underwater visual–inertial SLAM framework and the biological perception module is verified by evaluating the system’s capability for localized biomass estimation. In this experiment, typical marine ranching products, including sea cucumbers, sea urchins, and scallops, are deployed as targets. The real-time object detection performance of the proposed system is illustrated in Figure 7. The perception frontend accurately identifies and classifies these targets within the field of view of the camera, validating the ability of the system to distinguish between different species with high confidence under experimental underwater conditions.

Figure 7.

Real-time object detection results of the proposed system. The panels present a sequence of keyframes extracted from the underwater image sequence, arranged in chronological order from left to right and top to bottom. These frames demonstrate the successful identification and classification of sea urchins, sea cucumbers, and scallops. Each panel illustrates the stability of the detection algorithm during the continuous maneuvering of the AUV.

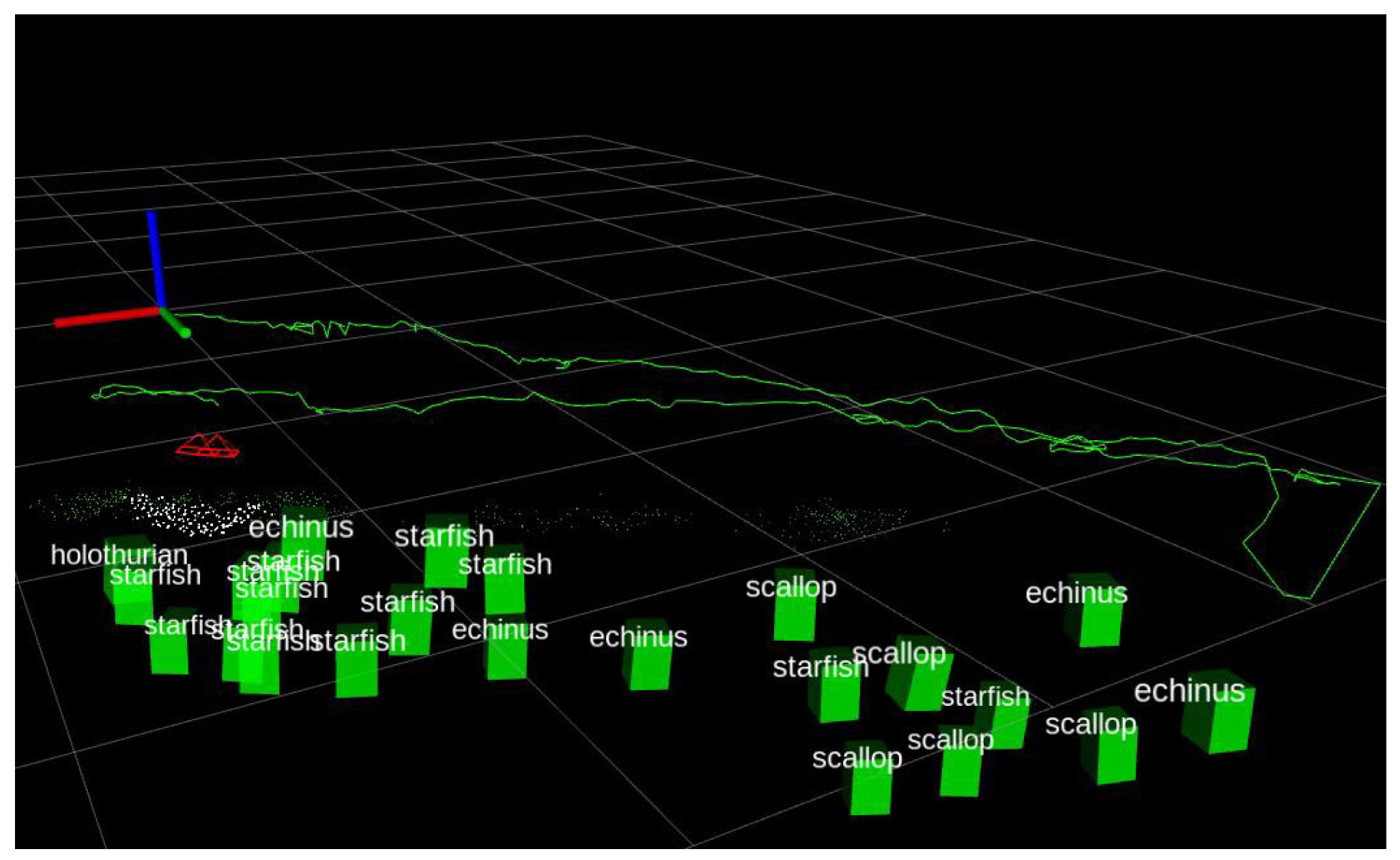

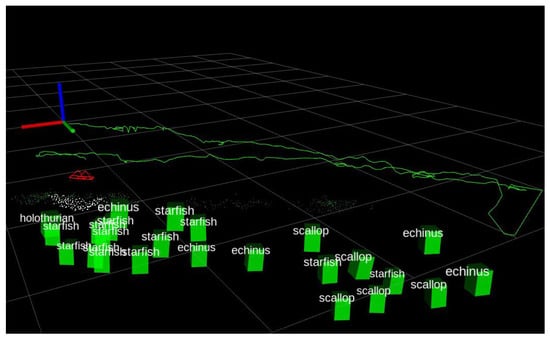

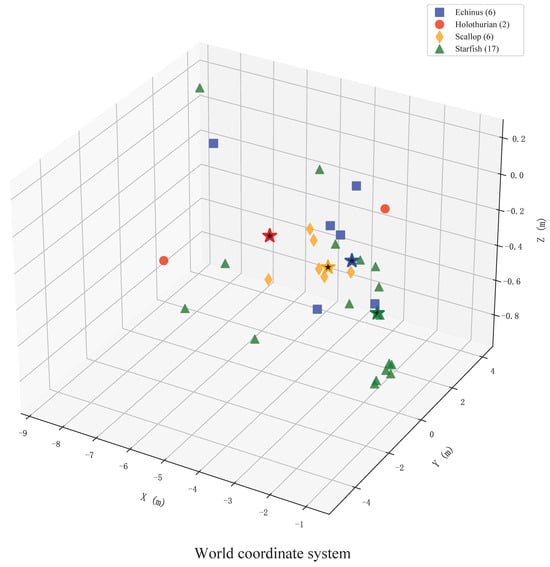

Building upon these detections, Figure 8 presents the global spatial distribution of the marine products overlaid with the estimated trajectory of the AUV. As the AUV traverses the survey grid, the SLAM module provides a continuous and consistent trajectory estimation. This spatial reference allows the 2D detection results to be back-projected into the global 3D coordinate system. The robust handling of occlusions is achieved through the integration of multi-view observations along the trajectory. By associating the recognized targets with the trajectory of the AUV, the system effectively resolves spatial overlaps and assigns a unique spatial coordinate to each identified specimen. This mechanism prevents redundant counting caused by overlapping views or loop closures, thereby ensuring the accuracy of population density analysis within specific sub-regions of the tank.
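The back-projection and de-duplication steps can be illustrated with a short sketch. It assumes a pinhole camera model, a per-detection depth value (the text does not say how depth is obtained), and a simple distance threshold for merging repeated observations. The names `back_project` and `merge_detections`, the intrinsics tuple `K`, and the `radius` parameter are hypothetical and are not taken from the proposed system.

```python
import math


def back_project(u, v, depth, K, R_wc, t_wc):
    """Map a pixel (u, v) with known depth into the world frame.

    K    = (fx, fy, cx, cy): pinhole intrinsics (assumed model).
    R_wc = 3x3 camera-to-world rotation (nested lists), from the SLAM pose.
    t_wc = camera position in the world frame.
    """
    fx, fy, cx, cy = K
    p_cam = ((u - cx) / fx * depth, (v - cy) / fy * depth, depth)
    return tuple(
        sum(R_wc[r][c] * p_cam[c] for c in range(3)) + t_wc[r] for r in range(3)
    )


def merge_detections(points, radius):
    """Greedy de-duplication of (label, xyz) observations.

    An observation joins the first existing target of the same label whose
    centroid lies within `radius`; otherwise it starts a new target.
    """
    targets = []
    for label, p in points:
        for tgt in targets:
            if tgt["label"] == label and math.dist(tgt["centroid"], p) <= radius:
                n = tgt["count"]
                # Running mean keeps the centroid at the average of all views.
                tgt["centroid"] = tuple(
                    (c * n + x) / (n + 1) for c, x in zip(tgt["centroid"], p)
                )
                tgt["count"] = n + 1
                break
        else:
            targets.append({"label": label, "centroid": tuple(p), "count": 1})
    return targets


identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
point_w = back_project(420, 240, 2.0, (500.0, 500.0, 320.0, 240.0), identity, (1.0, 2.0, 0.0))
```

Merging by label and distance means that a specimen seen again after a loop closure lands on its existing target instead of being counted twice, provided the trajectory is consistent to within `radius`.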

Figure 8.

Spatial mapping and coordinate alignment of marine products. The figure demonstrates the back-projection of 2D detections into the 3D global coordinate system, aligned with the estimated trajectory of the AUV. The green curve represents the AUV trajectory, while the red indicators denote the camera orientations. The detected marine products are represented by green 3D bounding boxes.

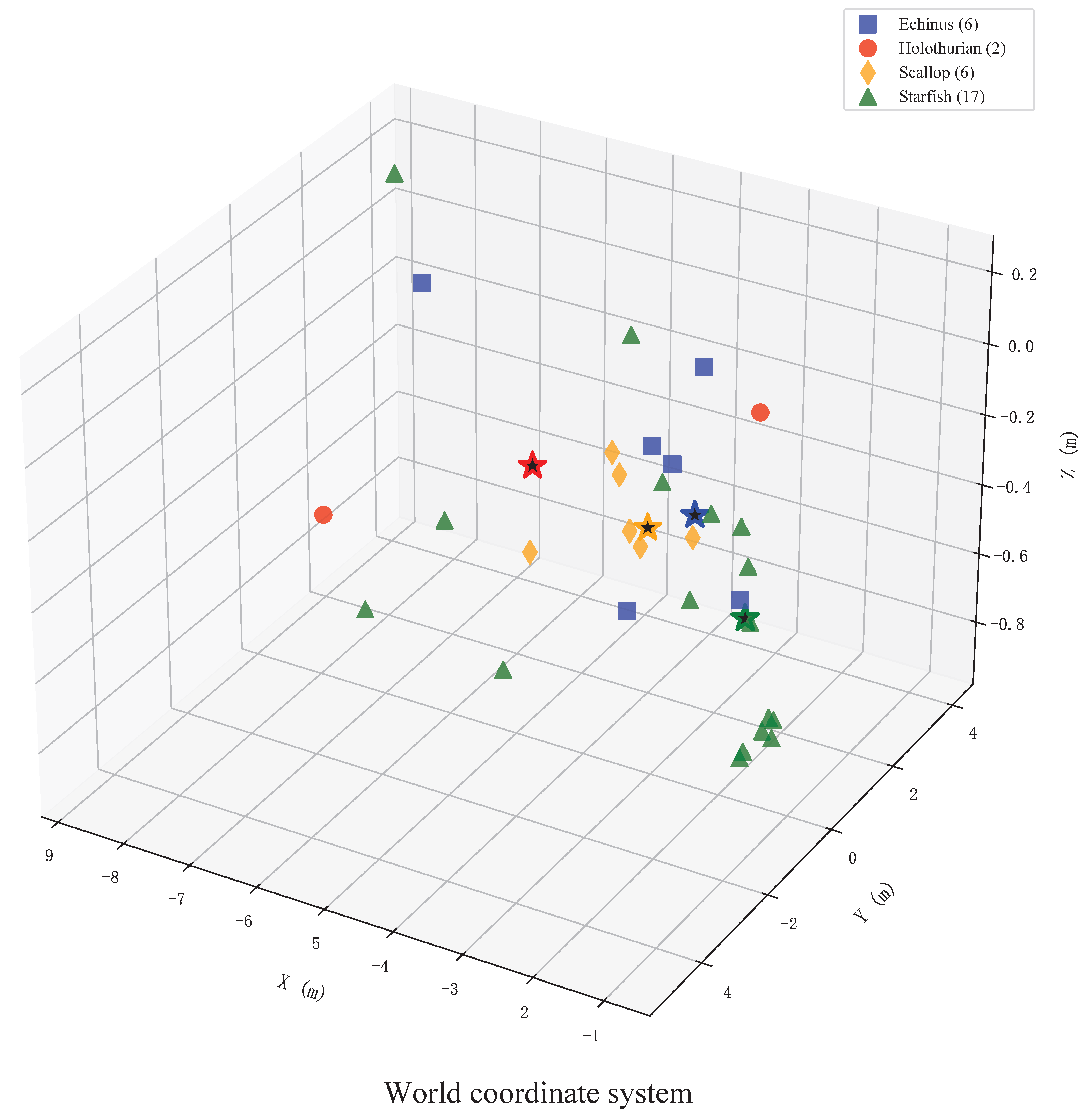

To further visualize and quantify the survey results, Figure 9 illustrates the final spatial distribution and statistical results of the marine products within the experimental tank, where different colors represent distinct categories of marine products. The biological population is quantified through a multi-stage process. Individual organisms identified by the semantic detection module are mapped into the globally consistent 3D coordinate system through spatial back-projection. Simultaneously, a spatiotemporal filtering mechanism tracks these targets across consecutive frames, reducing redundant counts and noise from transient occlusions. Complementing these visual results, Table 2 summarizes the quantitative assessment of the aquaculture species, including the total count, coverage area, and population density for each category.

Unlike traditional manual surveys, which are often compromised by subjective bias and the absence of verifiable records, the proposed system relies on an objective 3D back-projection mechanism to ensure data integrity. While achieving accuracy comparable to manual counting in high-visibility scenarios, the framework offers practical advantages for marine ranching by enabling long-duration operations and eliminating safety risks for divers. By transforming raw visual observations into actionable spatial intelligence, this approach provides a scalable and objective solution for the sustainable management of aquaculture resources.
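The per-category statistics of the kind listed for Table 2 (total count, coverage area, population density) can be derived from the de-duplicated 3D positions along the following lines. The sketch assumes that coverage area means the area of the convex hull of each category's positions projected onto the horizontal plane, and that coordinates are in metres with z vertical. The paper does not define coverage area, so this definition and the helper names are assumptions.

```python
from collections import defaultdict


def convex_hull(pts):
    """Andrew's monotone chain; returns hull vertices in counter-clockwise order."""
    pts = sorted(set(pts))
    if len(pts) <= 2:
        return pts

    def cross(o, a, b):
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]


def polygon_area(poly):
    """Shoelace formula; returns 0.0 for degenerate polygons."""
    pairs = zip(poly, poly[1:] + poly[:1])
    return 0.5 * abs(sum(x1 * y2 - x2 * y1 for (x1, y1), (x2, y2) in pairs))


def summarize(targets):
    """targets: iterable of (label, (x, y, z)) with one entry per specimen."""
    by_label = defaultdict(list)
    for label, (x, y, _z) in targets:
        by_label[label].append((x, y))
    stats = {}
    for label, pts in by_label.items():
        area = polygon_area(convex_hull(pts))
        stats[label] = {
            "count": len(pts),
            "area_m2": area,
            # Density is undefined when the hull is degenerate (fewer than 3 spread points).
            "density_per_m2": len(pts) / area if area > 0 else None,
        }
    return stats
```

A convex hull tends to overestimate the occupied area for clustered or concave distributions; a grid-based occupancy count would be a reasonable alternative under the same inputs.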

Figure 9.

Global spatial distribution and localization results of marine products. This figure illustrates the spatial distribution and statistical results of the specimens within the experimental tank, where different colors represent distinct categories of marine products. The five-pointed stars of corresponding colors denote the spatial centers of maximum density for each category.

Table 2.

Statistical Results of Marine Product Distribution and Localization.

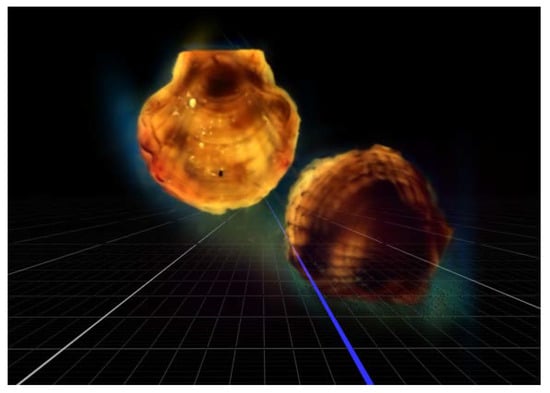

4.3.3. High-Precision 3D Reconstruction

In addition to global population estimation and localization, the capacity of the proposed system for morphological analysis was demonstrated by performing high-precision 3D reconstruction on individual scallop specimens. By leveraging the optimized pose stream provided by the SLAM module in conjunction with high-resolution localized imagery, the system is capable of generating dense point clouds and textured meshes of specific marine products.

The reconstruction results for a selected scallop specimen are presented in Figure 10. The results show that the system captures intricate morphological features, including shell-surface texture and growth-related characteristics. Such high-fidelity 3D modeling facilitates non-contact measurement of biological parameters, such as shell height and volume. This transition from macro-scale mapping of population distribution to micro-scale analysis of individual growth provides a comprehensive decision-support tool for monitoring the health and development cycles of high-value marine products, ultimately enhancing the management efficiency of intelligent marine ranching.
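As an illustration of how such non-contact measurements could be taken from a reconstructed model, the sketch below computes the largest point-to-point extent of a point cloud and the volume enclosed by a triangle mesh. It assumes the mesh is watertight with consistently oriented faces and that the reconstruction is metrically scaled. The largest extent is only a crude size proxy: a true shell height requires anatomical landmarks, which are not modeled here. Both function names are hypothetical.

```python
import math
from itertools import combinations


def max_extent(points):
    """Largest distance between any two points (O(n^2), fine for small clouds)."""
    return max((math.dist(a, b) for a, b in combinations(points, 2)), default=0.0)


def mesh_volume(vertices, faces):
    """Volume of a closed triangle mesh via summed signed tetrahedra.

    Each face (i, j, k) forms a tetrahedron with the origin; its signed
    volume is the scalar triple product a . (b x c) divided by 6.
    """
    total = 0.0
    for i, j, k in faces:
        (ax, ay, az), (bx, by, bz), (cx, cy, cz) = vertices[i], vertices[j], vertices[k]
        total += (
            ax * (by * cz - bz * cy)
            - ay * (bx * cz - bz * cx)
            + az * (bx * cy - by * cx)
        )
    return abs(total) / 6.0


# Sanity check on a unit right tetrahedron with outward-facing triangles.
tet_vertices = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
tet_faces = [(0, 2, 1), (0, 1, 3), (0, 3, 2), (1, 2, 3)]
tet_volume = mesh_volume(tet_vertices, tet_faces)
```

Because the signed contributions cancel outside the surface, the result does not depend on where the mesh sits relative to the origin, as long as the surface is closed.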

Figure 10.

3D reconstruction and morphological analysis of an individual scallop. The panels demonstrate the recovery of detailed surface textures and growth characteristics, enabling non-contact measurement of biological parameters for precise growth assessment.

5. Conclusions

This paper has presented a robust visual–inertial SLAM and biomass assessment scheme designed to address the challenges of image degradation and weak-textured environments in marine ranching. By developing a tightly coupled underwater visual–inertial localization strategy, we have effectively utilized a multi-sensor fusion approach to overcome the limitations of single-modality sensing, ensuring reliable self-localization in complex underwater conditions. In addition, the proposed underwater scene perception method achieves the simultaneous 3D reconstruction of aquaculture species and the quantitative mapping of their spatial distribution, transforming raw visual data into high-precision perception support for asset management. The integration of these modules into a low-cost, agile, and portable multisensor system demonstrates the feasibility of performing autonomous localization and biomass assessment on a single embedded platform. Extensive real-world experiments confirm that the system can maintain high-precision state estimation and provide a comprehensive decision-support tool for the intelligent management of marine ranching.

Despite these contributions, the experimental validation also highlights areas for future improvement. The localization precision of the SLAM module is susceptible to the scarcity of distinctive landmarks and the dynamic disturbances from biological targets, which can lead to spurious artifacts or data association failures. Additionally, the fidelity of individual 3D reconstruction remains constrained by the optical properties of the water, such as turbidity and non-uniform lighting, which can degrade the textures of the shell surface. Future work will focus on the incorporation of image enhancement algorithms to mitigate the impact of water turbidity and the optimization of computational efficiency to support real-time processing of high-density biological targets. These advancements will aim to further refine the automation of underwater biological census, providing a more resilient and scalable solution for on-site monitoring in intelligent marine ranching.

Author Contributions

Conceptualization, methodology, visualization, funding acquisition, writing (original draft preparation), Y.W.; validation, software, data curation, writing (review and editing), Z.L.; resources, writing (review and editing), T.G.; data curation, X.D. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Data Availability Statement

The data presented in this study are available upon request from the corresponding author.

Conflicts of Interest

The authors declare no conflicts of interest.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Definition |
|---|---|
| AUV | Autonomous Underwater Vehicle |
| SLAM | Simultaneous Localization and Mapping |
| IMU | Inertial Measurement Units |
| GNSS | Global Navigation Satellite Systems |
| USBL | Ultra-Short Baseline |
| DVL | Doppler Velocity Log |
| UASNs | Underwater Acoustic Sensor Networks |
| ROS | Robot Operating System |
| ATE | Absolute Trajectory Error |
| RMSE | Root Mean Square Error |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.