1. Introduction

Destructive earthquakes frequently inflict severe damage on transportation lifelines, isolating disaster zones and significantly impeding the delivery of emergency supplies and post-disaster reconstruction. Intense ground motion results in widespread subgrade collapse, pavement cracking, and road burial caused by secondary disasters. Consequently, the disruption of transportation networks critically prolongs the time required for emergency rescue operations [1,2]. In this context, the rapid and accurate acquisition of spatial distribution information regarding road damage is crucial for planning rescue routes and assessing disaster losses. With the rapid advancement of Earth observation technology [3,4,5], high-resolution remote sensing imagery has emerged as a vital data source for disaster emergency response [6,7,8], owing to its extensive coverage and high timeliness [9,10]. While research on post-earthquake building collapse detection is relatively mature [11,12,13], studies focusing on the identification of damage to roads, which are critical linear features, remain comparatively limited. The inherent complexity and unique characteristics of road networks continue to present numerous unresolved challenges in this field.

Deep learning methods have achieved remarkable success in remote sensing interpretation, yet their performance depends critically on large-scale, high-quality annotated data. Unlike routine road detection or pavement crack identification, which are supported by established datasets such as HighRPD [14] and EGY_PDD [15], road damage in seismic scenarios presents unique challenges. In remote sensing imagery, damage features (e.g., fractures and burial) are typically fragmented and limited in spatial extent, resulting in extreme data scarcity and sparsity. Taking the Turkey earthquake as a case study, despite the extensive scope of the disaster, preliminary statistics from the CEMS (Copernicus Emergency Management Service) reveal that the total length of visually identifiable road damage across the epicenter and 20 key surrounding areas is only approximately 30 km [16]. This severe data imbalance renders models highly susceptible to overfitting, making it difficult to extract generalizable features. Although researchers in remote sensing have attempted to employ techniques such as Copy-Paste, GANs, and CoCosNet for data augmentation [17,18], existing synthesis methods often exhibit poor performance in cross-domain adaptation. Synthetic images frequently suffer from inconsistencies in illumination and scene context, along with unnatural boundary artifacts. Furthermore, it remains challenging to accurately simulate complex backgrounds, such as earthquake-induced landslides and occlusion by collapsed structures. Consequently, synthetic data often fails to improve model robustness in real-world disaster scenarios and may instead introduce noise interference.

Beyond data limitations, existing detection paradigms encounter dual challenges regarding timeliness and topological preservation in practical applications. Mainstream methods for road damage detection typically fall into two categories: bi-temporal change detection [19] and prior knowledge-driven detection [20,21]. The former relies heavily on pre-disaster imagery as a baseline. However, acquiring recent, high-quality pre-disaster data is often difficult during emergency responses. Historical basemaps (e.g., Google Earth) frequently suffer from update lags, leading to false positives where engineering modifications are misidentified as seismic damage; furthermore, the registration process for multi-source data is time-consuming. Conversely, prior knowledge-driven methods face significant limitations regarding automation and generalization capabilities across diverse scenes. Moreover, unlike areal features, roads exhibit distinct continuity and topological characteristics. Most existing semantic segmentation models rely on pixel-level classification, often neglecting global topological structures. This tendency results in discontinuous predictions in areas with occlusion or ambiguous features. Morphologically, distinguishing between such “model-induced discontinuities” and “physical damage caused by real disasters” is difficult. Consequently, assessing road passability based solely on model outputs remains challenging, severely undermining the credibility of the identification results.

To address the challenges of sample scarcity, synthetic data distortion, and inadequate topological preservation, this paper proposes a road damage detection framework that integrates generative models with prior information, providing a reliable solution for practical disaster response operations. The main contributions of this study are summarized as follows: (1) A data simulation pipeline utilizing the stable diffusion model with topological constraints was constructed, generating high-fidelity synthetic datasets to effectively mitigate the extreme scarcity of post-earthquake labeled samples. (2) A vector-guided segmentation model, VRD-U2Net, was developed to align visual features with vector priors, ensuring the topological integrity of linear features.

2. Materials and Methods

As illustrated in Figure 1, this study first constructs an automated data simulation pipeline based on the DeepGlobe Road Extraction Challenge dataset [22], using the Stable Diffusion 3 inpainting model [23] (referred to in this paper as the stable diffusion model). By incorporating a manual screening mechanism, this approach effectively fills the gap in post-disaster damaged road datasets. Second, by integrating vector data to provide trajectory priors, the deep learning segmentation model is optimized to enhance its perception of linear topological structures, thereby facilitating more accurate discrimination between algorithmic prediction errors and actual physical damage. Finally, the proposed framework is applied to real-world scenarios from the Turkey earthquake for end-to-end evaluation, verifying its superior performance and application potential in data-scarce and emergency response contexts.

2.1. Diffusion Model-Based Generation of Realistic Road Damage Samples

Given the extreme scarcity and sparse distribution of road damage samples in real seismic scenarios, relying solely on limited real-world data is insufficient for effectively training deep learning models. To address this, this study uses the stable diffusion model to generate high-quality synthetic data. Specifically, this strategy employs the DeepGlobe road dataset as the source domain. By leveraging automated masking and text-guided image inpainting techniques, realistic damage features are embedded while preserving the semantic integrity of the background. Notably, the DeepGlobe dataset contains extensive imagery from developing nations, offering balanced coverage of both urban and rural road scenarios. The richness of these geographic features helps ensure the diversity of the simulated data.

2.1.1. Construction of Damage Region Masks with Topological Constraints

To ensure that generated damage regions adhere to the physical distribution patterns of roads rather than appearing randomly in non-interest areas, we designed a random mask generation algorithm based on road topological constraints. The core logic of this algorithm involves generating damage masks that satisfy physical size limitations while exhibiting natural morphology through geometric constraints and morphological operations. First, precise binary road masks are extracted from the original labels using color threshold segmentation. Subsequently, center points are randomly sampled exclusively from the set of valid road pixels to construct elliptical, geometrically constrained masks. To simulate the irregular morphology of road collapse or debris accumulation caused by earthquakes, the generation of damage regions is formalized as an intersection operation between the elliptical regions and the original road topology. Specifically, the major and minor axes of these ellipses are randomly sampled from the range of 50–300 pixels. Given the 0.5 m resolution of the DeepGlobe dataset, this parameterization corresponds to physical damage scales of approximately 25 m to 150 m, effectively covering varying degrees of disaster severity. Finally, to mimic the natural diffusion of debris edges, a morphological dilation using an elliptical kernel (size = 3–5 pixels) is applied, which helps to integrate the simulation into the surrounding environment and enhances the realism of the synthetic damage. The logical framework of the entire simulation process is illustrated in Figure 2.
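The mask construction described above can be sketched in a few lines of NumPy. This is a minimal illustration rather than the authors' implementation: the function names `dilate`, `damage_mask`, and `axis_length_m` are hypothetical, the sampled axis values are assumed to be full axis lengths (so they are halved to obtain semi-axes), the ellipses are kept axis-aligned, and a disc of radius 2 stands in for the 5-pixel elliptical kernel.

```python
import numpy as np

PIXEL_SIZE_M = 0.5  # DeepGlobe resolution stated in the text


def dilate(mask: np.ndarray, radius: int) -> np.ndarray:
    """Binary dilation with a disc-shaped kernel of the given radius."""
    h, w = mask.shape
    out = mask.copy()
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            if dy * dy + dx * dx > radius * radius:
                continue  # keep the structuring element round
            shifted = np.zeros_like(mask)
            shifted[max(0, dy):min(h, h + dy), max(0, dx):min(w, w + dx)] = \
                mask[max(0, -dy):min(h, h - dy), max(0, -dx):min(w, w - dx)]
            out |= shifted
    return out


def damage_mask(road: np.ndarray, rng: np.random.Generator,
                axis_range=(50, 300), dilate_radius=2) -> np.ndarray:
    """Sample one damage mask constrained to the road network."""
    ys, xs = np.nonzero(road)
    if ys.size == 0:
        raise ValueError("road mask contains no road pixels")
    i = rng.integers(ys.size)            # centre is always a valid road pixel
    cy, cx = ys[i], xs[i]
    # Assumption: sampled values are full axis lengths, so halve them.
    a = rng.integers(axis_range[0], axis_range[1] + 1) / 2.0
    b = rng.integers(axis_range[0], axis_range[1] + 1) / 2.0
    yy, xx = np.mgrid[:road.shape[0], :road.shape[1]]
    ellipse = ((xx - cx) / a) ** 2 + ((yy - cy) / b) ** 2 <= 1.0
    core = ellipse & road                # intersection with the road topology
    return dilate(core, dilate_radius)   # soften the boundary


def axis_length_m(pixels: int) -> float:
    """Convert an axis length in pixels to metres on the ground."""
    return pixels * PIXEL_SIZE_M
```

Because the ellipse is intersected with the road mask before dilation, the resulting region can only exceed the road by the dilation radius, which matches the intent of keeping damage on the road while letting debris edges spread slightly.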

2.1.2. Text-Guided Image Inpainting Based on the Stable Diffusion Model

After acquiring the damage masks, this study employs the pre-trained stable diffusion model as the core engine for image generation. Unlike traditional GAN models that require training from scratch on specific datasets, this approach leverages the rich visual priors learned by the model from large-scale datasets. Inference is performed directly within a compressed low-dimensional latent space, ensuring generation quality while avoiding high computational training costs.

This process is primarily executed during the model’s inference phase. Specifically, the input remote sensing imagery is encoded into latent variables and combined with text prompts and binary damage masks as conditional inputs. Utilizing its internal cross-attention mechanism, the model injects textual semantics—such as “road damage” and “collapsed buildings”—into the denoising network. Through a stepwise reverse diffusion process, the model reconstructs only the areas covered by the masks, utilizing pre-trained prior knowledge to inpaint plausible damage textures while preserving the pixels in non-masked regions.
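The requirement that non-masked pixels are preserved can be made explicit with a final compositing step. The sketch below is an assumption for illustration only: the text does not state whether the pipeline applies such a step after decoding, and the function name `composite` is hypothetical.

```python
import numpy as np


def composite(original: np.ndarray, generated: np.ndarray,
              mask: np.ndarray) -> np.ndarray:
    """Keep original pixels outside the mask and generated pixels inside it.

    original, generated: (H, W, 3) images; mask: (H, W) boolean damage mask.
    """
    return np.where(mask[..., None], generated, original)
```

With this step, any change the generator makes outside the damage mask is discarded, so the background semantics of the source tile are retained exactly.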

A critical advantage of this method is its sensitivity to environmental context. As shown in Figure 2, the model adaptively generates various damage features based on the geographic semantics of the surrounding original imagery. In rural scenarios (Figure 3a), generated damage tends to exhibit characteristics of soil coverage and subgrade failure; conversely, in urban settings (Figure 3b), it predominantly manifests as pavement fragmentation and the accumulation of building debris. This adaptive generation mechanism effectively eliminates the “collage effect” often found in synthetic images, achieving a high degree of visual consistency.

2.1.3. Quality Control and Selection of Synthetic Samples

Despite the remarkable performance of stable diffusion models in image generation, they may still produce physically implausible artifacts or blurriness when processing complex remote sensing scenes [24]. Given that the damage samples generated in this study are synthetic and lack corresponding ground truth, traditional full-reference image quality assessment metrics (e.g., PSNR [25], SSIM [26]) are not entirely suitable for evaluating the realism and plausibility of the generated content. Currently, robust automated metrics capable of quantifying the semantic rationality of disaster scenes remain absent, rendering manual verification an indispensable step to prevent the model from learning incorrect physical features.

To ensure that the constructed dataset effectively enhances the performance of downstream segmentation models and prevents noisy data from interfering with the training process, this study implemented a rigorous human-in-the-loop quality control procedure. We established three qualitative screening criteria: (1) Texture Consistency: The generated damage textures must remain consistent with the resolution and noise levels of the surrounding pavement, free from obvious blurring or pixelated blocking artifacts. (2) Boundary Naturalness: The transition between damaged areas and intact pavement should be smooth and natural, avoiding abrupt edges or sudden changes in illumination. (3) Background Semantic Preservation: The generation process must not exceed the mask region to corrupt non-road backgrounds (such as surrounding vegetation or buildings), thereby ensuring the integrity of contextual semantics.

To ensure rigor, the screening process was executed via a cross-review mechanism involving two experienced remote sensing researchers over a period of 6 days. Through manual visual inspection, failed samples exhibiting geometric distortions, color anomalies, or semantic conflicts were discarded. Ultimately, a high-confidence synthetic damage dataset was constructed, comprising 2345 images with corresponding label sets.

2.2. VRD-U2Net: Vector-Guided Damaged Road Segmentation Network

Traditional segmentation networks often struggle in post-disaster scenarios due to interference from photometric noise generated by debris and rubble in RGB imagery, which compromises feature extraction. Furthermore, repeated downsampling operations frequently lead to the loss of fine edge details in narrow roads. To address these challenges, this study constructs a deep network that integrates frequency-domain filtering with full-resolution calibration, as illustrated in Figure 4. Built upon a nested U-structure, the network comprises six encoder stages and five decoder stages. Leveraging the architectural strengths of U2Net [27], we introduced two critical structural reconstructions to the original framework to enhance robustness in complex seismic backgrounds: (1) In the first four stages of the encoder (Stages 1–4), we replaced specific dilated convolution layers with WTConv [28]. By exploiting the time-frequency decoupling properties of wavelet transforms, WTConv effectively separates low-frequency topological components (macro-scale road trends) from high-frequency textural disturbances (damage details) during the early phases of feature extraction. This design enables the network to perceive road connectivity even under severe occlusion, thereby suppressing the ingestion of background noise at the source. (2) Constrained by the resolution of remote sensing imagery, pixel-level false edges often lead to confusion. Consequently, we embedded scSE (Spatial and Channel Squeeze & Excitation) [29] modules at both ends of the processing chain, specifically prior to downsampling in Stage 1 and following upsampling restoration in Stage 11. Acting as a “feature purifier,” the scSE module utilizes concurrent spatial and channel attention mechanisms to perform a secondary refinement of both shallow raw features and deep reconstructed features. This mechanism effectively suppresses linearly distributed false positives arising from debris while simultaneously sharpening the boundary contours of actual roads.

Building on this foundation, we designed the MSRA (Multi-Scale Residual Attention) module at skip connections to bridge the cross-modal semantic gap, and adopted an improved Dynamic Upsample [30] strategy in the decoder to reconstruct narrow topologies, both of which will be detailed in subsequent sections.

2.2.1. Frequency-Aware Cross-Modal Alignment MSRA Module

An inherent semantic gap exists between the fine-grained textures of RGB imagery and the geometric structures of vector priors. Naive feature concatenation often induces semantic misalignment; for instance, a road depicted as connected in vector data may be physically buried in the corresponding optical imagery. To address this, we designed the Multi-Scale Residual Attention (MSRA) module. This module aims to establish a frequency-aware feature calibration mechanism, ensuring that the model’s attention remains consistently focused on damage features within road regions. As illustrated in Figure 5, the module comprises two parallel branches that decouple input features across two dimensions: spatial scale and channel dependence.

To address the variability in road widths and the challenge of background occlusion, the Spatial Aggregation Branch constructs a cascaded frequency-domain receptive field. Specifically, we parallelize multiple sets of WTConv layers with kernel sizes of 3 × 3, 5 × 5, and 7 × 7. By leveraging wavelet transforms, this design effectively separates high-frequency damage interference from low-frequency road structures within the frequency domain.

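$$A_s = \sigma\left(\mathrm{Conv}_{1\times 1}\left(\mathrm{AvgPool}\left(\sum_{k\in\{3,5,7\}} \mathrm{WTConv}_{k}(X)\right)\right)\right)$$

where $A_s$ denotes the generated spatial attention map for the input feature map $X$. $\mathrm{WTConv}_{k}$ represents the WTConv operation with a kernel size of $k \times k$ (aggregated from $k \in \{3, 5, 7\}$), and $\mathrm{AvgPool}$ denotes the average pooling operation. $\mathrm{Conv}_{1\times 1}$ refers to a $1 \times 1$ convolution for channel reduction, and $\sigma$ is the Sigmoid activation function.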

Complementing this, the Channel Aggregation Branch focuses on semantic screening along the feature dimension. This branch employs global average pooling to capture global context and models the non-linear relationships between channels using two layers of 1 × 1 convolution to compute the channel weights:

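$$A_c = \sigma\left(W_2\,\delta\left(W_1\,\mathrm{GAP}(X)\right)\right)$$

where $A_c$ represents the channel attention weights. $\mathrm{GAP}$ denotes the Global Average Pooling operation used to aggregate global context. $W_1$ and $W_2$ represent the two layers of $1 \times 1$ convolutions utilized to model channel interdependencies, while $\delta$ denotes the ReLU activation function and $\sigma$ the Sigmoid activation function.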

Ultimately, the output feature is generated through residual fusion, a process that preserves original information while injecting multi-dimensional attention gains:

$$Y = X \oplus \left(X \otimes A_s \otimes A_c\right)$$

where $X$ and $Y$ represent the input and output feature maps, respectively. $\otimes$ and $\oplus$ stand for element-wise multiplication and element-wise addition. This formulation ensures that the original features are enhanced by the complementary spatial ($A_s$) and channel ($A_c$) attention cues.
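To make the data flow of the two branches and the residual fusion concrete, the following NumPy sketch mirrors the structure described in this subsection. It is illustrative only and rests on several assumptions: plain mean filters of size 3, 5, and 7 are substituted for WTConv, the three scales are aggregated by summation, a channel-wise mean stands in for pooling and the 1 × 1 reduction, and the two attention maps are combined multiplicatively before the residual addition. All function names and the weight matrices `w1` and `w2` are hypothetical.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def box_filter(x, k):
    """k x k mean filter per channel; a plain stand-in for WTConv."""
    pad = k // 2
    xp = np.pad(x, ((0, 0), (pad, pad), (pad, pad)), mode="edge")
    h, w = x.shape[1:]
    out = np.zeros_like(x)
    for dy in range(k):
        for dx in range(k):
            out += xp[:, dy:dy + h, dx:dx + w]
    return out / (k * k)


def spatial_attention(x, kernels=(3, 5, 7)):
    multi = sum(box_filter(x, k) for k in kernels)  # aggregate the three scales
    return sigmoid(multi.mean(axis=0))              # (H, W), values in (0, 1)


def channel_attention(x, w1, w2):
    gap = x.mean(axis=(1, 2))                       # global average pooling -> (C,)
    hidden = np.maximum(w1 @ gap, 0.0)              # first 1x1 conv + ReLU
    return sigmoid(w2 @ hidden)                     # second 1x1 conv + Sigmoid -> (C,)


def msra(x, w1, w2):
    """x: (C, H, W) float feature map; returns a map of the same shape."""
    a_s = spatial_attention(x)[None, :, :]
    a_c = channel_attention(x, w1, w2)[:, None, None]
    return x + x * a_s * a_c                        # residual fusion
```

Since both attention maps lie in (0, 1), the residual term can at most double a feature's magnitude and can never flip its sign, which is one way to read the claim that the original information is preserved while attention gains are injected.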

2.2.2. Vector Prior-Driven Dynamic Scope Upsampling

The upsampling process in the decoder is inherently an ill-posed mapping from low-resolution features to high-resolution space. Traditional interpolation operators, lacking geometric constraints, often produce discontinuities or jagged edges when restoring narrow road topologies. Although the MSRA module at the encoding stage introduces global semantic alignment, explicit geometric guidance is still required during the pixel reconstruction phase to regulate the positional distribution of sampling points. To this end, we constructed a vector prior-driven dynamic scope mechanism based on Dynamic Upsample.

As illustrated in Figure 6, this structure discards the traditional DySample’s reliance on singular visual features, adopting instead a decoupling strategy that comprises two complementary components: “texture-dominated direction prediction” and “vector-dominated scope modulation.” Specifically, the lower branch continues the independent processing of visual features, utilizing the sensitivity of convolutional layers to local texture gradients to establish the base sampling direction. Conversely, the upper branch introduces “geometric navigation.” By concatenating the vector prior with visual features along the channel dimension to achieve explicit geometric injection, it adaptively generates the dynamic scope factor via linear projection.

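$$S = \mathrm{Linear}\left(\mathrm{Concat}\left(F_{vis}, V_{prior}\right)\right)$$

where $S$ represents the learned dynamic scope factor. $\mathrm{Concat}(F_{vis}, V_{prior})$ represents the concatenation of the decoding visual features $F_{vis}$ and the vector prior $V_{prior}$ along the channel dimension. $\mathrm{Linear}$ refers to the linear projection layer that maps the fused features to the scalar scope coefficient.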

Building on this, we introduced a regulation coefficient, set to 0.25, to impose tight constraints on the offsets. The final sampling offset is defined as:

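$$O = \lambda \cdot S \otimes \mathrm{Conv}_{dir}\left(F_{vis}\right), \quad \lambda = 0.25$$

where $O$ denotes the final sampling offsets. $\mathrm{Conv}_{dir}$ is the convolution layer responsible for predicting the base sampling direction from the visual features $F_{vis}$. The constant 0.25 serves as the regularization coefficient $\lambda$, scaling the dynamic scope $S$ to enforce strict local topological constraints.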

This parameter setting, distinct from the conventional value (0.5), imposes stricter local topological restrictions. The rationale for this deviation lies in the fundamental difference between general semantic segmentation and our specific road extraction task. While the original setting is designed to capture contextual textures of large-scale regional targets, roads in post-earthquake urban environments appear as thin, linear features tightly flanked by interfering factors such as building shadows and collapsed debris. We observed that the original sampling range tends to exceed the narrow road width, inadvertently introducing background noise by sampling these adjacent non-road textures. Therefore, we empirically set the coefficient to 0.25 to restrict the effective receptive field of the offsets. This tighter constraint forces sampling points to remain strictly confined within the valid road neighborhood, thereby minimizing the impact of urban environmental noise while significantly enhancing the sharpness and connectivity of road edges.
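The effect of the tighter coefficient can be illustrated with a small NumPy sketch of the offset computation. It is not the authors' implementation: the function `scoped_offsets` and the weights `w_scope` and `w_dir` are hypothetical, the direction branch is reduced to a single (dx, dy) pair per pixel, and a Sigmoid is assumed to squash the scope factor into (0, 1), following the dynamic-scope design of the original DySample.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def scoped_offsets(f_vis, v_prior, w_scope, w_dir, lam=0.25):
    """Compute per-pixel sampling offsets.

    f_vis:   (C, H, W) decoder visual features
    v_prior: (1, H, W) rasterised road-vector prior
    w_scope: (C + 1,) weights of the scope projection (vector-dominated branch)
    w_dir:   (2, C) weights of the direction branch (texture-dominated branch)
    """
    fused = np.concatenate([f_vis, v_prior], axis=0)         # explicit geometric injection
    scope = sigmoid(np.einsum("c,chw->hw", w_scope, fused))  # assumed Sigmoid, in (0, 1)
    direction = np.einsum("oc,chw->ohw", w_dir, f_vis)       # base (dx, dy) from texture
    return lam * scope[None] * direction                     # offsets bounded by lam * |direction|
```

Under these assumptions, lowering the coefficient from 0.5 to 0.25 halves every offset, so sampling points stay closer to their source location and are less likely to land on shadows or rubble flanking a narrow road.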

2.3. Data Sources for Real-World Verification

On 6 February 2023, southern Turkey experienced two strong earthquakes with magnitudes of Mw 7.8 and Mw 7.5 [31]. These events inflicted severe damage on infrastructure in the epicenter and surrounding areas. This study selects the severely affected Islahiye region as the test area to verify the generalization capability of VRD-U2Net.

2.3.1. Overview of the Test Area

To objectively evaluate the generalization capability of VRD-U2Net in real disaster scenarios, we selected the EMSR648 emergency data published by the CEMS as the testing benchmark [32]. Among the 20 Areas of Interest (AOIs) covered by EMSR648, based on official statistical data, Islahiye (AOI 10) was ultimately identified as the core testing area.

The rationale for selecting this area lies in the fact that, compared to other affected locations, Islahiye is situated in a heavily devastated zone. It exhibits the highest density of road damage samples and the most complex damage morphologies—encompassing both debris burial in urban settings and pavement destruction in rural areas. Consequently, it provides the most challenging testing environment for the model to detect the sparse category of “damage.” This study acquired high-resolution optical satellite imagery collected post-earthquake in this region as input data.

Regarding the construction of Ground Truth labels, we utilized the official vector assessment data provided by Copernicus EMS as a basis. We filtered for road vector features with attributes labeled as “Damaged” and “Destroyed” to generate the test set labels used for quantitative evaluation.

Figure 7 illustrates the geographical location and post-earthquake imagery overview of the Islahiye study area. The red vectors denote the officially confirmed damaged road segments, clearly reflecting the spatial distribution characteristics of seismic damage along the road network.

2.3.2. Vector Correction Strategy

The identification results for road damage typically manifest as fragmented patches. To align with the official emergency visualization standards of CEMS and facilitate quantitative analysis, we implemented a vector correction strategy for the segmentation results, as illustrated in Figure 8. Under this strategy, any road vector that intersects a predicted damage patch is marked as damaged. Because a single intersection is enough to trigger a damage classification, a noise filtering step is applied first to prevent false alarms from insignificant roadside debris: predicted masks undergo a morphological opening operation, and isolated fragments smaller than a predefined area threshold are discarded before the vector intersection check. This ensures that only significant damage features are retained.
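The correction logic can be sketched as three steps: opening, small-fragment removal, and an intersection test. The code below is a minimal raster-based illustration under stated assumptions: road vectors are represented as lists of rasterised (row, column) pixels rather than GIS geometries, the opening uses a 3 × 3 square element, and the default `min_area` of 10 pixels is a placeholder because the threshold used in the study is not given. All function names are hypothetical.

```python
from collections import deque

import numpy as np


def _neighbourhood(mask):
    """Stack the 3x3 neighbourhood of every pixel (False outside the image)."""
    h, w = mask.shape
    p = np.pad(mask, 1, constant_values=False)
    return np.stack([p[dy:dy + h, dx:dx + w] for dy in range(3) for dx in range(3)])


def opening(mask):
    """Morphological opening: erosion followed by dilation."""
    eroded = _neighbourhood(mask).all(axis=0)
    return _neighbourhood(eroded).any(axis=0)


def drop_small(mask, min_area):
    """Remove 4-connected components with fewer than min_area pixels."""
    h, w = mask.shape
    seen = np.zeros((h, w), dtype=bool)
    kept = np.zeros((h, w), dtype=bool)
    for sy, sx in zip(*np.nonzero(mask)):
        if seen[sy, sx]:
            continue
        seen[sy, sx] = True
        queue, comp = deque([(sy, sx)]), [(sy, sx)]
        while queue:
            y, x = queue.popleft()
            for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                if 0 <= ny < h and 0 <= nx < w and mask[ny, nx] and not seen[ny, nx]:
                    seen[ny, nx] = True
                    queue.append((ny, nx))
                    comp.append((ny, nx))
        if len(comp) >= min_area:
            ys, xs = np.array(comp).T
            kept[ys, xs] = True
    return kept


def flag_damaged_roads(pred, roads, min_area=10):
    """Return the names of road vectors that touch a retained damage patch."""
    clean = drop_small(opening(pred), min_area)
    return {name for name, pixels in roads.items()
            if any(clean[y, x] for y, x in pixels)}
```

For example, a 5 × 5 damage patch crossing road "A" survives the filter and flags that road, while a single stray pixel on road "B" is removed by the opening and leaves "B" marked as intact.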

2.4. Experimental Settings and Evaluation Metrics

Regarding the diffusion model inference task for data generation, the process was executed on an NVIDIA RTX 4090 GPU to ensure high-fidelity texture synthesis. To guarantee reproducibility, the stable diffusion model was configured with 100 inference steps and a guidance scale of 3, balancing the generation quality with prompt alignment. A denoising strength of 0.8 was applied to introduce significant structural modifications to the road surface, while an elliptical dilation with a kernel size of 5 was utilized to ensure natural blending at the mask boundaries. The generation was driven by specific prompts, with negative prompts implemented to strictly exclude intact or clean road features.

To balance the distribution of positive and negative samples, the dataset utilized in this study consists of 2345 synthetic damage samples generated via the proposed stable diffusion model. The entire dataset was partitioned into training, validation, and testing sets in a ratio of 7:2:1. Additionally, high-resolution imagery from the 2023 earthquake in the Islahiye region, Turkey, was selected to construct a target test set, aimed at verifying the model’s generalization capabilities in real-world scenarios.

All experiments were conducted on a system equipped with an NVIDIA RTX 4090 GPU (24 GB) and an Intel Xeon Gold 6226R CPU, implemented using PyTorch (version 2.6.0 + cu124). Model training utilized the AdamW optimizer with a weight decay set to 0.0001. The initial learning rate was established at 0.001. The total number of training epochs was set to 300, with a batch size of 16.

To mitigate overfitting, an online data augmentation strategy, SODPresetTrain, was incorporated during the training process. Augmentation operations included horizontal flipping (probability: 0.5), vertical flipping (probability: 0.3), and random rotation (10°). Furthermore, color jittering (brightness, contrast, and saturation factors of 0.2; hue factor of 0.1) and Gaussian blur (probability: 0.1) were applied. Input images underwent standard normalization, with the exception of the fourth channel (Road Line), which retained its original values without normalization. Note that for all baseline models, the input layer was modified to accept a 4-channel tensor (RGB + Vector) to ensure a fair comparison with our proposed method.
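The channel-selective normalization described above can be sketched as follows. The ImageNet mean and standard deviation are an assumption, since the text only states that "standard normalization" was used, and the function name `normalize_rgbv` is hypothetical.

```python
import numpy as np

# Assumed statistics: the commonly used ImageNet RGB mean and standard deviation.
RGB_MEAN = np.array([0.485, 0.456, 0.406])
RGB_STD = np.array([0.229, 0.224, 0.225])


def normalize_rgbv(x):
    """Normalise the RGB channels of a (4, H, W) tensor scaled to [0, 1].

    The fourth channel (rasterised road line) is returned unchanged.
    """
    out = np.asarray(x, dtype=np.float64).copy()
    out[:3] = (out[:3] - RGB_MEAN[:, None, None]) / RGB_STD[:, None, None]
    return out
```

Leaving the fourth channel untouched keeps the road-line prior as a clean binary signal instead of shifting it by image statistics that have no meaning for vector data.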

To comprehensively quantify model performance, Mean Intersection over Union (mIoU), Precision, and Mean F1-score (mF1) were adopted as evaluation metrics.
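For reference, these metrics can be computed from label maps as in the sketch below. The class indices (1 for intact road, 2 for damaged road) and the choice to average over these two classes only are assumptions made for illustration; the function names are hypothetical.

```python
import numpy as np


def class_scores(pred, target, cls):
    """Return (IoU, precision, F1) for one class from integer label maps."""
    p, t = (pred == cls), (target == cls)
    tp = int(np.sum(p & t))
    fp = int(np.sum(p & ~t))
    fn = int(np.sum(~p & t))
    iou = tp / (tp + fp + fn) if tp + fp + fn else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    f1 = 2 * tp / (2 * tp + fp + fn) if tp + fp + fn else 0.0
    return iou, precision, f1


def mean_scores(pred, target, classes=(1, 2)):
    """Return (mIoU, mF1) as unweighted means over the given classes."""
    per_class = [class_scores(pred, target, c) for c in classes]
    miou = sum(s[0] for s in per_class) / len(per_class)
    mf1 = sum(s[2] for s in per_class) / len(per_class)
    return miou, mf1
```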

3. Results

3.1. Performance Comparison of Road Damage Detection Models

3.1.1. Comparison with Baseline Models

We conducted a comparative analysis between VRD-U2Net and several established segmentation networks, including the classic U-Net [33], U-Net++ [34], D-LinkNet [35] (a model specifically optimized for road extraction), SegFormer [36], Swin-UNet [37] (Transformer-based segmentation model), YOLO26-seg [38], and the original U2Net backbone. All models were trained to convergence on the identical dataset. As presented in Table 1, VRD-U2Net achieved significant performance advantages across all metrics. It attained an mIoU of 0.884, an improvement of 7.0 percentage points over the original U2Net (0.814) and 9.3 percentage points over U-Net (0.791). Crucially, addressing the issue of confusion between post-disaster background noise and damaged sections, our model achieved a precision of 0.931, demonstrating strong noise resistance in complex scenarios.

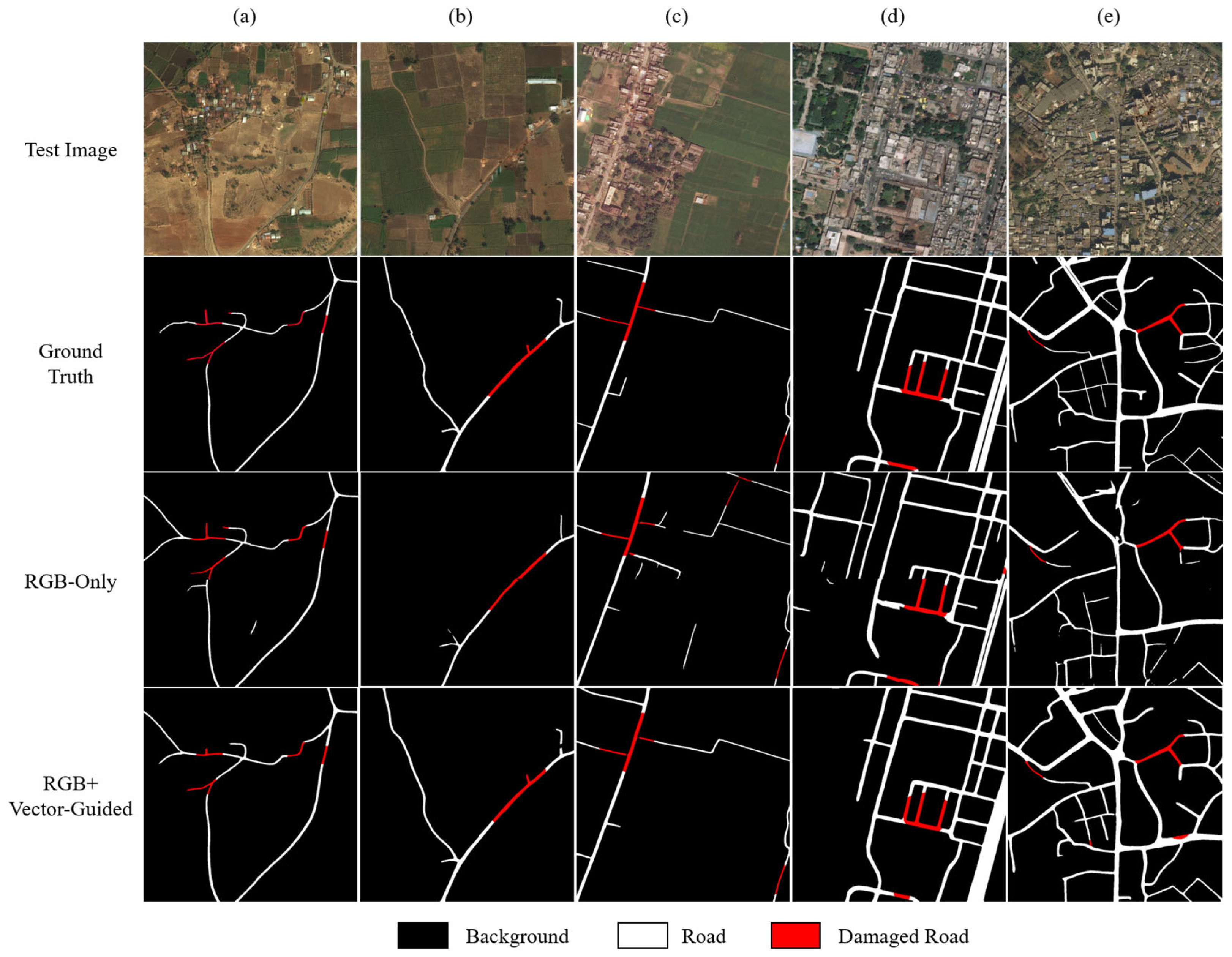

The visualization results in Figure 9 further reveal performance disparities among the different models in rural and urban settings. Benefiting from the introduction of vector guidance, all models maintained the macro-structural integrity of the road network to a certain extent, confirming the effectiveness of the vector-guided strategy. However, regarding damage feature recognition, baseline models exhibited distinct limitations. D-LinkNet suffered from severe fragmented misclassification in both rural and urban roads. While the U-Net series performed acceptably in rural scenes, they almost completely lost the capability to discriminate damaged roads in complex urban environments. Regarding topological preservation, although D-LinkNet possesses an inherent advantage in maintaining network connectivity, it failed to accurately segment road width, resulting in blurred boundaries. Conversely, the U-Net series frequently produced minor disconnections and even ignored vector guidance, misclassifying road or damage features as background. Although the Transformer-based Swin-UNet and SegFormer demonstrated strong performance in damage discrimination, they frequently failed to adhere to the vector guidance information. Consequently, their segmentation of complete road networks often ignored the linear continuity required by the priors. Similarly, the segmentation results of YOLO26-seg often presented fragmented damage patches. A closer inspection reveals that these artifacts were primarily triggered by interference unrelated to road damage, such as building shadows and tree occlusion, indicating a susceptibility to environmental noise. In contrast, VRD-U2Net balances the dual requirements of semantic recognition and topological preservation. In the rural scenes (Rows 1–3), our model precisely detected damaged sections covered by light soil, features that D-LinkNet and U-Net failed to identify.
In complex urban backgrounds, VRD-U2Net not only repaired topological breaks common in traditional models but also eliminated issues of blurred segmentation boundaries and inaccurate semantic expression, generating damage masks with sharp edges and complete structures.

3.1.2. Ablation Studies

To rigorously investigate the contribution of the vector guidance strategy and the specific components within the VRD-U2Net, we designed ablation experiments across two dimensions: an analysis of the effectiveness of vector guidance and an analysis of the performance gains attributed to individual network modules.

(1) Analysis of Vector Guidance Effectiveness

To quantify the core role played by the Vector Prior, we compared two distinct input modalities. Notably, since the MSRA module in the proposed VRD-U2Net is specifically designed for multi-modal feature fusion, directly removing the vector input would compromise the network’s internal architecture, rendering a direct comparison invalid. Therefore, to ensure a fair and direct assessment of the independent contribution of vector data, we utilized the original U2Net as the baseline model. Training and testing were conducted under two settings: (1) RGB-Only: The standard U2Net architecture with 3-channel input; (2) Vector-Guided: The U2Net with a modified input layer to accept 4-channel tensors (RGB + Vector) via simple concatenation.

The experimental results are presented in Table 2, and the visualization results are shown in Figure 10. Upon introducing vector guidance, the model achieved significant improvements across all key metrics. When relying solely on RGB imagery, the Road-IoU was limited to 0.625. This limitation arises because purely visual models are highly susceptible to interference from tree occlusion and shadows, resulting in fragmented road predictions. Conversely, the incorporation of vector priors increased the Road-IoU to 0.830. This demonstrates that vector prior data provides robust topological constraints, acting effectively as a navigational guide that enables the network to maintain prediction continuity even in regions with weak visual features.

Crucially, the IoU for damaged roads increased substantially from 0.582 to 0.798. In the absence of priors, the model struggles to distinguish between “debris on the road” and “building rubble along the roadside.” By constraining the Region of Interest (ROI), vector data effectively filters out background noise from non-road areas. This forces the network to focus on abrupt texture variations within the buffer zone of the road vectors, thereby enabling the precise identification of actual road damage.

- (2) Analysis of VRD-U2Net Module Contributions

To validate the rationality of the VRD-U2Net architecture design, we conducted a series of stepwise cumulative ablation experiments on the standard test set. Using the U2Net baseline (with vector-guided input, matching the second row of Table 2), we sequentially integrated the WTConv, MSRA, Dynamic Upsample, and scSE modules. The variations in metrics for both road classification and damage classification were recorded, as detailed in Table 3, Table 4 and Table 5.

First, introducing WTConv in the encoder stage raised the overall mIoU from 0.814 to 0.839. Notably, for the challenging “Damaged Road” category, the IoU rose from 0.798 to 0.823. This suggests that the frequency-domain feature separation mechanism suppresses background noise in post-disaster imagery and improves the capture of minute damage features. Building on this, the inclusion of the Multi-Scale Residual Attention (MSRA) module further elevated the overall mIoU to 0.861. The IoU for the “Intact Road” category showed the largest improvement at this stage (increasing from 0.855 to 0.878). This indicates that the module establishes semantic correlations between RGB features and vector priors, correcting misidentifications of road damage caused by shadows or occlusions. Subsequently, integrating the Dynamic Upsample (DySample) operator improved the preservation of topological connectivity. The overall mIoU reached 0.879 and the overall Precision rose to 0.924 (0.906 for the “Damaged Road” category). This is consistent with content-aware point-to-point offset sampling reducing the edge blurring associated with traditional interpolation. Finally, the scSE attention mechanism was introduced to refine features across spatial and channel dimensions, resulting in a final performance of mIoU 0.884 and mF1 0.916 for VRD-U2Net. Compared to the baseline model, the complete architecture improved the overall mIoU by 7.0 percentage points (0.814 to 0.884) and the damaged-road IoU by 6.8 percentage points (0.798 to 0.866). These results support the necessity and complementarity of each module in addressing noise interference, semantic gaps, and topological discontinuities.
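The stepwise gains discussed above can be recomputed directly from the tabulated values. The short sketch below copies the overall mIoU (Table 3) and damaged-road IoU (Table 5) for each cumulative stage and reports the increment contributed by each module. It also shows that the 7.0 and 6.8 figures are absolute differences; expressed relative to the baseline mIoU, the overall gain is roughly 8.6%. The helper name `stepwise_gains` is an illustrative assumption.

```python
# (stage, overall mIoU, damaged-road IoU), copied from Tables 3 and 5.
stages = [
    ("U2Net baseline", 0.814, 0.798),
    ("+ WTConv", 0.839, 0.823),
    ("+ MSRA", 0.861, 0.844),
    ("+ Dynamic Upsample", 0.879, 0.862),
    ("+ scSE", 0.884, 0.866),
]


def stepwise_gains(rows):
    """Absolute gain of each stage over the previous one."""
    gains = []
    for (_, prev_miou, prev_dmg), (name, miou, dmg) in zip(rows, rows[1:]):
        gains.append((name, round(miou - prev_miou, 3), round(dmg - prev_dmg, 3)))
    return gains


gains = stepwise_gains(stages)
total_miou = round(stages[-1][1] - stages[0][1], 3)
total_damaged = round(stages[-1][2] - stages[0][2], 3)
relative_miou_pct = round(100 * total_miou / stages[0][1], 1)

for name, d_miou, d_dmg in gains:
    print(f"{name:<20} mIoU {d_miou:+.3f}  damaged IoU {d_dmg:+.3f}")
print(f"total: mIoU {total_miou:+.3f}, damaged IoU {total_damaged:+.3f}")
print(f"relative mIoU gain over baseline: {relative_miou_pct}%")
```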

3.2. Verification of Generalization Capability in Real-World Scenarios

Although the proposed model demonstrates superior performance on standard test sets, real-world disaster scenarios—characterized by environmental variations, sensor artifacts, and unknown noise—pose severe challenges to model robustness. To validate the generalization capability of the VRD-U2Net model and indirectly corroborate the effectiveness of synthetic data in mitigating the scarcity of real samples, we applied the pre-trained model directly to real imagery from the 2023 Turkey earthquake for testing.

3.2.1. Quantitative Results

Based on the vector correction strategy, the pixel-level masks output by the model were mapped back onto the road vector network. The official damage assessment data published by the CEMS served as the ground truth for quantitative evaluation.
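One plausible way to map a pixel-level damage mask back onto road vectors is sketched below: points are sampled along each road segment, and a segment is flagged when a sufficient share of its samples has a damage pixel nearby. This is a simplified illustration only. The buffer radius, the 0.3 threshold, and all function names are hypothetical and are not taken from the paper, which describes the strategy as a mask-vector intersection analysis (Figure 8).

```python
def sample_segment(start, end, step=1.0):
    """Points spaced about `step` pixels apart along a straight segment."""
    (r0, c0), (r1, c1) = start, end
    length = ((r1 - r0) ** 2 + (c1 - c0) ** 2) ** 0.5
    n = max(1, int(round(length / step)))
    return [(r0 + (r1 - r0) * i / n, c0 + (c1 - c0) * i / n) for i in range(n + 1)]


def damaged_fraction(mask, start, end, buffer_px=1):
    """Share of sampled points with a damage pixel inside a square buffer."""
    rows, cols = len(mask), len(mask[0])
    points = sample_segment(start, end)
    hits = 0
    for r, c in points:
        ri, ci = int(round(r)), int(round(c))
        window = (
            mask[rr][cc]
            for rr in range(max(0, ri - buffer_px), min(rows, ri + buffer_px + 1))
            for cc in range(max(0, ci - buffer_px), min(cols, ci + buffer_px + 1))
        )
        hits += any(window)
    return hits / len(points)


def flag_segments(mask, segments, threshold=0.3):
    """Mark a segment as damaged when its damaged fraction reaches the threshold."""
    return {
        name: damaged_fraction(mask, a, b) >= threshold
        for name, (a, b) in segments.items()
    }


# Toy 5 x 6 damage mask (1 = pixel predicted as damaged road).
mask = [
    [0, 0, 0, 0, 0, 0],
    [0, 0, 1, 1, 0, 0],
    [0, 0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0, 0],
]
segments = {"seg_a": ((1, 0), (1, 5)), "seg_b": ((4, 0), (4, 5))}
flags = flag_segments(mask, segments)
print(flags)
```

A production pipeline would more likely use a GIS library for buffering and intersection; the pure-Python version above is only meant to make the logic explicit.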

Table 6 presents the detection performance of VRD-U2Net within the Turkey earthquake test area.

Despite not being fine-tuned on the Turkey dataset, VRD-U2Net achieved an F1 score of 65.3% and a Recall of 72.3%. In contrast, the comparison models generalized poorly, with F1 scores ranging from 37.3% (UNet++) to 55.0% (SegFormer). This shortfall is primarily attributed to the complexity of post-disaster scenes, where roadside vegetation and vehicles accumulated on roads are frequently misclassified as damage by conventional models. These results suggest that the synthetic data training strategy equips the model to identify heterogeneous damage features in real-world contexts.

It is worth noting that the quantitative analysis revealed a counter-intuitive phenomenon: the model’s precision was lower than anticipated. A detailed case-by-case investigation indicated that this discrepancy does not stem entirely from model false positives, but also from the latency and incompleteness inherent in the official baseline data. CEMS annotations rely primarily on manual visual interpretation; during the rapid response phase of large-scale disasters, it is often difficult to capture details across all secondary roads. Consequently, numerous samples identified as “Damaged” by the model but labeled as “No visible damage” in the official records were found to be actual damage missed by human annotators. This implies that the quantitative metrics in Table 6 likely underestimate the model’s true performance, highlighting its potential to assist in identifying omissions in manual assessments.
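The effect of incomplete reference labels on precision can be illustrated with a small calculation. In the sketch below, a number of detections initially counted as false positives are reclassified as true positives after manual review. All counts are hypothetical and chosen only to show the direction of the effect; they are not the counts behind Table 6.

```python
def precision_recall_f1(tp, fp, fn):
    """Standard precision, recall and harmonic-mean F1 from raw counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


def relabel_confirmed(tp, fp, fn, confirmed):
    """Move `confirmed` false positives to true positives after review."""
    if not 0 <= confirmed <= fp:
        raise ValueError("confirmed must lie between 0 and fp")
    return tp + confirmed, fp - confirmed, fn


# Hypothetical segment counts against the official annotations.
tp, fp, fn = 60, 40, 20
before = precision_recall_f1(tp, fp, fn)

# Suppose review confirms 15 of the 40 "false positives" as real damage.
after = precision_recall_f1(*relabel_confirmed(tp, fp, fn, confirmed=15))

print("before: P=%.3f R=%.3f F1=%.3f" % before)
print("after:  P=%.3f R=%.3f F1=%.3f" % after)
```

Recall also changes in this example, because the confirmed segments enlarge the set of true damaged roads.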

Beyond accuracy, computational efficiency is paramount for time-sensitive emergency response. As recorded in the Inference Time column of Table 6, we measured the total processing time required to generate damage assessments for the entire Islahiye test area (covering approximately 25 km²). While the lightweight YOLO26-seg exhibited the fastest inference speed (124 s), its segmentation precision was compromised, leading to missed detections in complex debris areas. Conversely, the Transformer-based models Swin-UNet and SegFormer incurred significant computational overhead (292 s and 265 s, respectively) while delivering only moderate precision. VRD-U2Net struck a favorable balance, completing the regional assessment in 203 s. This performance suggests that our model can provide city-scale damage maps within the golden window of rescue operations, supporting its practical value for emergency applications.
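The speed and accuracy trade-off described above can be made concrete with a short calculation on the Table 6 values. The sketch below converts the total inference time into seconds per square kilometre, assuming the approximate 25 km² area stated in the text, and lists the models that are not dominated in both time and F1 (a simple Pareto check). The function name `pareto_front` is an illustrative assumption.

```python
AREA_KM2 = 25.0  # approximate size of the Islahiye test area stated in the text

# model: (inference time in s, F1-score), copied from Table 6.
results = {
    "UNet++": (215, 0.373),
    "Swin-UNet": (292, 0.471),
    "D-LinkNet": (158, 0.427),
    "SegFormer": (265, 0.550),
    "YOLO26-seg": (124, 0.524),
    "U2Net": (186, 0.529),
    "VRD-U2Net": (203, 0.653),
}

seconds_per_km2 = {name: round(secs / AREA_KM2, 2) for name, (secs, _) in results.items()}


def pareto_front(table):
    """Models for which no other model is at least as fast and as accurate."""
    front = []
    for name, (secs, f1) in table.items():
        dominated = any(
            o_secs <= secs and o_f1 >= f1 and (o_secs < secs or o_f1 > f1)
            for other, (o_secs, o_f1) in table.items()
            if other != name
        )
        if not dominated:
            front.append(name)
    return sorted(front, key=lambda n: table[n][0])


front = pareto_front(results)
print(seconds_per_km2["VRD-U2Net"], front)
```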

3.2.2. Visual Results

To intuitively demonstrate the detection capabilities of VRD-U2Net in complex real-world scenarios and its advantage in supplementing manual annotations, representative regions were selected for visual analysis.

As illustrated in Figure 11a,b, within dense urban areas near the epicenter, VRD-U2Net accurately identified arterial roads buried under collapsed structures. Despite the chaotic texture of the debris and its spectral similarity to the pavement, the model successfully reconnected the fragmented road network by leveraging the topological constraints provided by vector priors. The detection results not only show a high degree of consistency with official high-severity damage annotations but also reveal certain instances of human error and omission in the official records.

Figure 11c,d depict a rural zone with sparse building distribution in the southwest of the test area. Official annotations indicate that the road conditions in this region remain intact (marked in gray), with no damage recorded. Conversely, VRD-U2Net detected distinct damage signals (indicated by red patches) along multiple rural roads within this area. A close-up inspection of the original high-resolution imagery (Zoom-in view) confirms that the areas flagged by the model indeed exhibit spectral anomalies in the pavement caused by subgrade failure, with certain segments even being occluded by collapsed structures.

4. Discussion

While the proposed framework validates the efficacy of integrating synthetic data with vector guidance for road damage identification, three critical uncertainties and limitations warrant further examination. Regarding diffusion-based simulation, although it effectively expands the training manifold, the process remains inherently probabilistic. Despite the implementation of human-in-the-loop quality control to filter logical anomalies, a domain gap persists between synthetic textures and the complex spectral characteristics of real seismic ruins, which may limit the model’s ability to represent fine-grained features in unseen environments. Furthermore, the current prompt design strategy was specifically tailored to the dominant visual features of seismic damage identifiable in satellite imagery—namely, the occlusion of road surfaces by accumulation of foreign objects. We deliberately excluded prompts describing micro-scale deformations, such as fine fissures caused by ground shaking, as these features are typically indistinguishable at the available spatial resolution. While this focused prompt strategy proved effective for detecting severe structural damage, future research involving higher-resolution aerial photography or other disaster typologies should explore a more diverse prompt space to capture scenario-specific features, such as subtle pavement distress, water submersion, or sediment deposition. The simulation strategy relies on the DeepGlobe dataset, which covers diverse urban and rural contexts but does not fully encapsulate the heterogeneity of global geographic landscapes or specific secondary disasters like landslides. This limitation could constrain generalization performance when the model is applied to disaster zones with significantly different vegetation cover or urban morphological patterns. 
Specifically, in regions characterized by dense vegetation cover, the canopy often occludes roads, leading to severe spectral confusion between vegetation and actual road damage. In such scenarios, the current optical-based segmentation may fail, necessitating more precise vegetation exclusion algorithms to aid feature discrimination. Conversely, in highly standardized urban grids typical of developed nations, the complexity arises from multi-level transportation networks and pervasive shadows cast by high-rise buildings. The model, primarily trained on planar road features, may struggle to distinguish between elevated structures and ground-level damage, or misinterpret the uniform concrete textures of rooftops as road surfaces due to the lack of 3D elevation information.

Post-disaster scenarios are characterized by extreme class imbalance, where visual indicators of road damage are spatially sparse compared to the vast background of intact infrastructure. Although the synthetic dataset was constructed to be balanced, real-world inference involves processing highly imbalanced data streams. This discrepancy contributes to the observation that while recall is high, precision remains sensitive to false positives triggered by debris accumulation in non-damaged zones. Consequently, future implementations require more robust hard-negative mining strategies and the design of adaptive threshold adjustment methods specifically for real-world inference tasks.
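The sensitivity of precision to class imbalance follows from a simple relation: for a fixed true-positive rate and false-positive rate, precision falls as the share of damaged samples shrinks. The sketch below evaluates that relation for a balanced set and for increasingly sparse damage. The rates and prevalence values are hypothetical and serve only to illustrate the trend.

```python
def expected_precision(tpr, fpr, prevalence):
    """Precision implied by a detector's TPR and FPR at a given damage prevalence."""
    if not 0.0 <= prevalence <= 1.0:
        raise ValueError("prevalence must lie in [0, 1]")
    true_pos = tpr * prevalence
    false_pos = fpr * (1.0 - prevalence)
    total = true_pos + false_pos
    return true_pos / total if total else 0.0


# Hypothetical detector: 80% of damage found, 5% of intact samples flagged.
TPR, FPR = 0.80, 0.05
prevalences = [0.50, 0.10, 0.02]  # balanced training set vs. sparse real scenes
curve = {p: expected_precision(TPR, FPR, p) for p in prevalences}

for p, value in curve.items():
    print(f"prevalence={p:.2f} -> precision={value:.3f}")
```

Under these assumed rates, the same detector that looks precise on balanced data produces mostly false alarms when damage is rare, which is one motivation for hard-negative mining and threshold adjustment at inference time.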

The reliance on vector data introduces potential risks regarding spatial-temporal alignment and the intrinsic reliability of prior information. The effective operation of MSRA and dynamic upsampling modules depends on accurate registration between vector priors and satellite imagery. However, the timeliness and accuracy of these vector priors are not always guaranteed. In high-magnitude seismic events, in addition to physical ground deformation, inherent lags in map updates or topological inaccuracies in open-source datasets can lead to spatial offsets between pre-disaster vector data and post-disaster imagery. Specifically, in rapidly developing regions, outdated vector maps may fail to reflect the latest infrastructure, resulting in wrong guidance or missed road segments. When significant registration deviations exist, the strong geometric constraints imposed by vector guidance might inadvertently force the model to hallucinate road structures in incorrect locations or suppress valid damage features that have physically shifted, highlighting the critical need for future research into deformable alignment mechanisms.
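To give a sense of how quickly registration error erodes the usefulness of a vector prior, consider an idealized straight road of width w pixels and a vector buffer of the same width shifted laterally by d pixels. The overlap is max(0, w - d) and the union is 2w minus the overlap. The sketch below evaluates the resulting IoU for a few offsets. The geometry and the 10-pixel width are simplifying assumptions for illustration, not measurements from the study.

```python
def buffer_iou(road_width_px, offset_px):
    """IoU between a straight road strip and an equally wide, laterally shifted buffer."""
    if road_width_px <= 0:
        raise ValueError("road width must be positive")
    overlap = max(0.0, road_width_px - abs(offset_px))
    union = 2 * road_width_px - overlap
    return overlap / union


WIDTH = 10  # assumed road width in pixels
offsets = [0, 2, 5, 10]
iou_by_offset = {d: buffer_iou(WIDTH, d) for d in offsets}

for d, value in iou_by_offset.items():
    print(f"offset={d:>2} px -> IoU={value:.3f}")
```

Even an offset of half the road width leaves only one third of the union shared between the prior and the true road, which illustrates why alignment quality matters for vector-guided attention.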

5. Conclusions

This study establishes a viable pathway for automated post-earthquake road damage assessment by bridging the technical gap between generative data augmentation and physical prior-based deep learning. The development of the VRD-U2Net model indicates that integrating frequency-domain filtering with vector-guided attention mechanisms significantly enhances the segmentation accuracy of linear damage features amidst complex rubble backgrounds. Experimental results indicate that the proposed model achieves an mIoU of 0.884 and a precision of 0.931 on the synthetic test set, outperforming the original U2Net baseline by 7.0 percentage points in mIoU. Furthermore, quantitative and qualitative validations across both synthetic datasets and real-world data from the Turkey earthquake demonstrate that the model remains robust, maintaining an F1 score of 65.3% and a recall of 72.3% even in real-world scenarios. It also mitigates the topological discontinuities that conventional methods often leave unresolved. The adopted “synthetic training, real-world inference” paradigm supports the potential of diffusion models in breaking the bottleneck of labeled sample scarcity in post-disaster scenarios.

Future work will focus on addressing the domain shift between synthetic and real textures, improving model resilience to vector-image registration deviations, and expanding the simulation strategy to cover a broader spectrum of disaster typologies.

Author Contributions

Conceptualization, C.Q., W.W. and Z.W.; methodology, C.Q. and Z.W.; validation, C.Q., Z.W. and T.H.; formal analysis, C.Q. and W.W.; investigation, H.T.; resources, K.L.; data curation, C.Q. and J.J.; writing—original draft preparation, C.Q.; writing—review and editing, C.Q. and W.W.; visualization, C.Q.; supervision, L.W.; project administration, W.W. and Z.M.; funding acquisition, W.W. and L.W. All authors have read and agreed to the published version of the manuscript.

Funding

This research was supported by the National Key Research and Development Programs (2023YFE0208000), and the National Natural Science Foundation of China (42371392).

Data Availability Statement

The original contributions presented in this study are included in the article. Further inquiries can be directed to the corresponding author.

Conflicts of Interest

The authors declare no conflicts of interest.

References

- Liu, K.; Zhai, C.; Dong, Y.; Meng, X. Post-Earthquake Functionality Assessment of Urban Road Network Considering Emergency Response. J. Earthq. Eng. 2023, 27, 2406–2431.

- Bai, X.; Dai, Y.; Zhou, Q.; Yang, Z. An MDT-based rapid assessment method for the spatial distribution of trafficable sections of roads hit by earthquake-induced landslides. Front. Earth Sci. 2023, 11, 1287577.

- Han, G.; Zhang, H.; Huang, Y.; Chen, W.; Mao, H.; Zhang, X.; Ma, X.; Li, S.; Zhang, H.; Liu, J. First global XCO2 observations from spaceborne lidar: Methodology and initial result. Remote Sens. Environ. 2025, 330, 114954.

- Li, H.; Liu, B.; Gong, W.; Ma, Y.; Jin, S.; Wang, W.; Fan, R.; Jiang, S. Influence of clouds on planetary boundary layer height: A comparative study and factors analysis. Atmos. Res. 2025, 314, 107784.

- Yang, J.; Gan, R.; Luo, B.; Wang, A.; Shi, S.; Du, L. An improved method for individual tree segmentation in complex urban scenes based on using multispectral LiDAR by deep learning. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2024, 17, 6561–6576.

- Zou, R.; Liu, J.; Pan, H.; Tang, D.; Zhou, R. An improved instance segmentation method for fast assessment of damaged buildings based on post-earthquake UAV images. Sensors 2024, 24, 4371.

- He, X.; Xu, C.; Tang, S.; Huang, Y.; Qi, W.; Xiao, Z. Performance Analysis of an Aerial Remote Sensing Platform Based on Real-Time Satellite Communication and Its Application in Natural Disaster Emergency Response. Remote Sens. 2024, 16, 2866.

- Wiguna, S.; Adriano, B.; Mas, E.; Koshimura, S. Evaluation of deep learning models for building damage mapping in emergency response settings. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2024, 17, 5651–5667.

- Wang, W.; Li, B.; Chen, B. Comprehensive reconstruction of missing aerosol optical depth in MAIAC product: A multi-layer LightGBM approach leveraging multi-scale spatio-temporal information. J. Clean. Prod. 2025, 523, 146384.

- He, J.; Wang, W.; Wang, N. Seamless reconstruction and spatiotemporal analysis of satellite-based XCO2 incorporating temporal characteristics: A case study in China during 2015–2020. Adv. Space Res. 2024, 74, 3804–3825.

- Wu, Z.; Qu, C.; Wang, W.; Miao, Z.; Feng, H. Remote Sensing Extraction of Damaged Buildings in the Shigatse Earthquake, 2025: A Hybrid YOLO-E and SAM2 Approach. Sensors 2025, 25, 4375.

- Chen, F.; Sun, Y.; Wang, L.; Wang, N.; Zhao, H.; Yu, B. HRTBDA: A network for post-disaster building damage assessment based on remote sensing images. Int. J. Digit. Earth 2024, 17, 2418880.

- Takhtkeshha, N.; Mohammadzadeh, A.; Salehi, B. A rapid self-supervised deep-learning-based method for post-earthquake damage detection using UAV data (case study: Sarpol-e Zahab, Iran). Remote Sens. 2022, 15, 123.

- He, J.; Gong, L.; Xu, C.; Wang, P.; Zhang, Y.; Zheng, O.; Su, G.; Yang, Y.; Hu, J.; Sun, Y. HighRPD: A high-altitude drone dataset of road pavement distress. Data Br. 2025, 59, 111377.

- Abdelkader, M.F.; Hedeya, M.A.; Samir, E.; El-Sharkawy, A.A.; Abdel-Kader, R.F.; Moussa, A.; El-Sayed, E. EGY_PDD: A comprehensive multi-sensor benchmark dataset for accurate pavement distress detection and classification. Multimed. Tools Appl. 2025, 84, 38509–38544.

- Copernicus Emergency Management Service. EMSR648: Earthquake in Türkiye and Syria. Available online: https://mapping.emergency.copernicus.eu/activations/EMSR648/ (accessed on 27 December 2025).

- Cannas, E.D.; Baireddy, S.; Bestagini, P.; Tubaro, S.; Delp, E.J. Enhancement strategies for copy-paste generation & localization in RGB satellite imagery. In Proceedings of the International Workshop on Information Forensics and Security (WIFS); IEEE: New York, NY, USA, 2023; pp. 1–6.

- Asmat, B.N.; Bilal, H.S.M.; Uddin, M.I.; Karim, F.K.; Mostafa, S.M. Utilizing Conditional GANs for Synthesis of Equilibrated Hyperspectral Data to Enhance Classification and Mitigate Majority Class Bias. IEEE Access 2025, 13, 49271–49289.

- Chen, L.-A.; Lin, S.-Y. Enhancing road damage identification in satellite images through synthetic data. Int. J. Disaster Risk Reduct. 2025, 116, 105091.

- Zhao, K.; Liu, J.; Wang, Q.; Wu, X.; Tu, J. Road damage detection from post-disaster high-resolution remote sensing images based on TLD framework. IEEE Access 2022, 10, 43552–43561.

- Wang, J.; Qin, Q.; Zhao, J.; Ye, X.; Qin, X.; Yang, X.; Wang, J.; Zheng, X.; Sun, Y. A knowledge-based method for road damage detection using high-resolution remote sensing image. In Proceedings of the IEEE International Geoscience and Remote Sensing Symposium (IGARSS); IEEE: New York, NY, USA, 2015; pp. 3564–3567.

- Demir, I.; Koperski, K.; Lindenbaum, D.; Pang, G.; Huang, J.; Basu, S.; Hughes, F.; Tuia, D.; Raskar, R. DeepGlobe 2018: A challenge to parse the earth through satellite images. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW); IEEE: New York, NY, USA, 2018; pp. 172–181.

- Esser, P.; Kulal, S.; Blattmann, A.; Entezari, R.; Müller, J.; Saini, H.; Levi, Y.; Lorenz, D.; Sauer, A.; Boesel, F. Scaling rectified flow transformers for high-resolution image synthesis. In ICML’24: Proceedings of the 41st International Conference on Machine Learning; JMLR.org: New York, NY, USA, 2024.

- Du, C.; Li, Y.; Qiu, Z.; Xu, C. Stable diffusion is unstable. Adv. Neural Inf. Process. Syst. 2023, 36, 58648–58669.

- Tanchenko, A. Visual-PSNR measure of image quality. J. Vis. Commun. Image Represent. 2014, 25, 874–878.

- Wang, Z.; Bovik, A.C.; Sheikh, H.R.; Simoncelli, E.P. Image quality assessment: From error visibility to structural similarity. IEEE Trans. Image Process. 2004, 13, 600–612.

- Qin, X.; Zhang, Z.; Huang, C.; Dehghan, M.; Zaiane, O.R.; Jagersand, M. U2-Net: Going deeper with nested U-structure for salient object detection. Pattern Recognit. 2020, 106, 107404.

- Finder, S.E.; Amoyal, R.; Treister, E.; Freifeld, O. Wavelet convolutions for large receptive fields. In Proceedings of the Computer Vision – ECCV 2024 – 18th European Conference, Proceedings; Springer Science and Business Media Deutschland GmbH: Berlin/Heidelberg, Germany, 2024; pp. 363–380.

- Roy, A.G.; Navab, N.; Wachinger, C. Concurrent spatial and channel ‘squeeze & excitation’ in fully convolutional networks. In Proceedings of the International Conference on Medical Image Computing and Computer-Assisted Intervention; The Medical Image Computing and Computer Assisted Intervention Society (MICCAI): Rochester, MN, USA, 2018; pp. 421–429.

- Liu, W.; Lu, H.; Fu, H.; Cao, Z. Learning to upsample by learning to sample. In Proceedings of the IEEE/CVF International Conference on Computer Vision; IEEE: New York, NY, USA, 2023; pp. 6027–6037.

- Goldberg, D.E.; Taymaz, T.; Reitman, N.G.; Hatem, A.E.; Yolsal-Çevikbilen, S.; Barnhart, W.D.; Irmak, T.S.; Wald, D.J.; Öcalan, T.; Yeck, W.L. Rapid characterization of the February 2023 Kahramanmaraş, Türkiye, earthquake sequence. Seism. Rec. 2023, 3, 156–167.

- Copernicus Emergency Management Service (© 2023 European Union). [EMSR648] Islahiye (AOI10): Grading Vector. Available online: https://mapping.emergency.copernicus.eu/activations/EMSR648/EMSR648_AOI10_GRA_MONIT02_r1_RTP01_v1.pdf (accessed on 27 September 2025).

- Ronneberger, O.; Fischer, P.; Brox, T. U-net: Convolutional networks for biomedical image segmentation. In Proceedings of the Medical Image Computing and Computer-Assisted Intervention – MICCAI 2015; Springer: Cham, Switzerland, 2015; pp. 234–241.

- Zhou, Z.; Rahman Siddiquee, M.M.; Tajbakhsh, N.; Liang, J. Unet++: A nested u-net architecture for medical image segmentation. In Proceedings of the Deep Learning in Medical Image Analysis and Multimodal Learning for Clinical Decision Support; Springer: Cham, Switzerland, 2018; pp. 3–11.

- Zhou, L.; Zhang, C.; Wu, M. D-LinkNet: LinkNet with pretrained encoder and dilated convolution for high resolution satellite imagery road extraction. In Proceedings of the 2018 IEEE Conference on Computer Vision and Pattern Recognition Workshops, CVPR Workshops 2018, Salt Lake City, UT, USA, 18–22 June 2018; IEEE Computer Society: New York, NY, USA, 2018; pp. 182–186.

- Xie, E.; Wang, W.; Yu, Z.; Anandkumar, A.; Alvarez, J.M.; Luo, P. SegFormer: Simple and Efficient Design for Semantic Segmentation with Transformers. In Proceedings of the 35th Conference on Neural Information Processing Systems (NeurIPS 2021); Neural Information Processing Systems Foundation, Inc. (NeurIPS): San Diego, CA, USA, 2021; pp. 12077–12090.

- Cao, H.; Wang, Y.; Chen, J.; Jiang, D.; Zhang, X.; Tian, Q.; Wang, M. Swin-Unet: Unet-Like Pure Transformer for Medical Image Segmentation. In Proceedings of the Computer Vision – ECCV 2022 Workshops: Tel Aviv, Israel, 23–27 October 2022, Proceedings, Part III; Springer-Verlag: Berlin/Heidelberg, Germany, 2023; pp. 205–218.

- Jocher, G.; Qiu, J. Ultralytics YOLO26, version 26.0.0; Ultralytics: Frederick, MD, USA, 2026.

Figure 1. The framework of the proposed damaged road extraction method.

Figure 2. Road damage simulation strategy based on stable diffusion model.

Figure 3. Visualization of simulated road damage results: (a–c) Examples of rural road damage simulation results. (d,e) Examples of urban road damage simulation results.

Figure 4. Overview of the proposed Vector-Guided Damaged Road Segmentation Network.

Figure 5. Schematic diagram of the Multi-Scale Residual Attention (MSRA) module.

Figure 6. Architecture of the Vector-Prior Driven Dynamic Upsample strategy.

Figure 7. Overview of the Test Area.

Figure 8. Schematic of the vector correction strategy. (a) Spatial overlay of predicted damage masks with road network vectors. (b) Damage verification via mask-vector intersection analysis. (c) Final identification of damaged road segments.

Figure 9. Visualization of segmentation results. (a–c) Examples of rural road segmentation results. (d,e) Examples of urban road segmentation results.

Figure 10. Visual ablation study on the effectiveness of the Vector Guidance module. (a–c) Examples of rural road ablation results. (d,e) Examples of urban road ablation results.

Figure 11. Visual results of the real-world scenarios. (a,b) Examples of urban road results. (c,d) Examples of rural road results.

Table 1. Performance evaluation of the proposed method against baseline models.

| Model | mIoU | Precision | mF1 |
|---|---|---|---|
| UNet | 0.791 | 0.814 | 0.803 |
| UNet++ | 0.803 | 0.823 | 0.813 |
| Swin-UNet | 0.822 | 0.832 | 0.827 |
| D-LinkNet | 0.805 | 0.834 | 0.820 |
| SegFormer | 0.839 | 0.847 | 0.843 |
| YOLO26-seg | 0.816 | 0.829 | 0.823 |
| U2Net | 0.814 | 0.851 | 0.834 |
| Ours | 0.884 | 0.931 | 0.916 |

Table 2. Ablation study on the impact of vector guidance.

| RGB | Vector-Guided | Road-IoU | Damaged-IoU | mIoU | Precision | mF1 |
|---|---|---|---|---|---|---|
| √ | × | 0.625 | 0.582 | 0.729 | 0.642 | 0.686 |
| √ | √ | 0.830 | 0.798 | 0.814 | 0.851 | 0.834 |

Table 3. Ablation study on the overall segmentation performance.

| WTConv | MSRA | Dynamic Upsample | scSE | mIoU | Precision | mF1 |
|---|---|---|---|---|---|---|
| × | × | × | × | 0.814 | 0.851 | 0.834 |
| √ | × | × | × | 0.839 | 0.861 | 0.849 |
| √ | √ | × | × | 0.861 | 0.892 | 0.872 |
| √ | √ | √ | × | 0.879 | 0.924 | 0.909 |
| √ | √ | √ | √ | 0.884 | 0.931 | 0.916 |

Table 4. Ablation study on the segmentation performance for the intact road class.

| WTConv | MSRA | Dynamic Upsample | scSE | IoU | Precision | mF1 |
|---|---|---|---|---|---|---|
| × | × | × | × | 0.830 | 0.868 | 0.850 |
| √ | × | × | × | 0.855 | 0.878 | 0.865 |
| √ | √ | × | × | 0.878 | 0.910 | 0.890 |
| √ | √ | √ | × | 0.896 | 0.942 | 0.927 |
| √ | √ | √ | √ | 0.902 | 0.950 | 0.934 |

Table 5. Ablation study on the segmentation performance for the damaged road class.

| WTConv | MSRA | Dynamic Upsample | scSE | IoU | Precision | mF1 |
|---|---|---|---|---|---|---|
| × | × | × | × | 0.798 | 0.834 | 0.818 |
| √ | × | × | × | 0.823 | 0.844 | 0.833 |
| √ | √ | × | × | 0.844 | 0.874 | 0.854 |
| √ | √ | √ | × | 0.862 | 0.906 | 0.891 |
| √ | √ | √ | √ | 0.866 | 0.912 | 0.898 |

Table 6. Quantitative results of the real-world scenarios.

| Model | Precision | Recall | F1-Score | Inference Time (s) |
|---|---|---|---|---|
| UNet++ | 0.328 | 0.418 | 0.373 | 215 |
| Swin-UNet | 0.423 | 0.518 | 0.471 | 292 |
| D-LinkNet | 0.378 | 0.476 | 0.427 | 158 |
| SegFormer | 0.512 | 0.587 | 0.550 | 265 |
| YOLO26-seg | 0.445 | 0.603 | 0.524 | 124 |
| U2Net | 0.462 | 0.597 | 0.529 | 186 |
| Ours | 0.582 | 0.723 | 0.653 | 203 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.