1. Introduction

Sustainability science has been defined as “an emerging field of research dealing with interactions between natural and social systems, and with how those interactions affect the challenge of sustainability, meeting the needs of the present and future generations while substantially reducing poverty and conserving the planet’s life-supporting systems” (cited from PNAS-homepage in [1]). The move towards a scientific understanding of the interaction between natural and social systems also affects technologies, as Cyber-Physical Systems (CPS) are increasingly socio-technical systems and can have substantial impact on the interaction and the stakeholders involved. Recent initiatives, such as Industry 5.0 [2], have recognized the development needs in that respect, and recent visions direct industrial attention “towards intelligent machines that learn more like animals and humans, that can reason and plan, and whose behavior is driven by intrinsic objectives” [3] (p. 1).

Self-awareness, global perspective and societal consciousness have been recently defined as enablers of sustainability intelligence [4].

Table 1 provides explanations for these three aspects.

These enablers refer to human activities in organizational and societal contexts on the fringe between the global perspective and societal consciousness. Beyne et al. [4] identified several challenges in developing sustainability intelligence when taking into account the three human-centered enablers mentioned above.

A major challenge concerns the relation between natural systems and the nature of human beings. Self-awareness of individual stakeholders does not necessarily imply collective or societal consciousness. This finding impacts organizations and their networks given the socio-technical nature of these systems. Hence, acting on the individual level may need to be differentiated from organizational behavior, e.g., encapsulating it and thus separating it from its interface to its networked actors.

Beyne et al. [4] argue that societal consciousness in particular needs to be further developed towards recognizing and understanding underlying assumptions of activities, and towards coupling transformation processes occurring on the individual and collective level. The latter has been recognized for autonomous actors as collective autonomy [5,6,7]. Such a move implies transparent individual behaviors of autonomous actors and some “fluid ‘negotiated knotworking’ as a new type of expertise in which no single party is the permanent center of power: the center does not hold” concerning autonomy on the collective level, as Engeström puts it in an interview [8] (p. 517).

According to Beyne et al. [4], the key to coupling individual to societal consciousness and thus to sustainability intelligence is the awareness of the context of individual activities. It “simultaneously opens doors to better communicate with each other, to establish a dialogue, to create dynamics and to work better together. And a breakthrough to more complex collaborations in our society is the essential meaning of sustainable development” [4] (p. 79). Bentz et al. [9] recently commented on the aspect of “individual consciousness” [4] (p. 80), asserting that any transformation towards sustainable agency requires not only integrative activities of being and becoming, but also contextual sharing based on meaning-making activities.

Consequently, sustainability intelligence includes both the individual and the collective level. The context of individual acting has been defined by Beyne et al. [4] as self-awareness and societal consciousness. These contexts challenge the management of autonomy in collective settings with respect to sustainability intelligence. In particular, the social awareness of individually autonomous actors in networked settings, as well as the individual compliance of those actors with collective objectives, influences sustainability.

Today’s networked and volatile intertwining of physical and digital components in CPS gives urgency to system developers and stakeholders when handling individual and collective autonomy. Of particular importance are the nature, extent, and normative force of technology and its relation to individual possibilities for design and development in the formation of collective agency, e.g., manipulation through social or technical means [10]. Should individual actors delegate the authority over their autonomy to some decisive agency? In the case of delegation, individual behavior is formed and sustained by collective affiliations rather than by individual autonomous agency. These affiliations can develop a normative claim which could be handled by respective technologies.

On the other hand, individuals bridge the gap between individual and shared autonomy to achieve collective objectives. This leads to challenges in terms of reflection on individualistic variants of autonomy. In case of comprehensive technology support, dedicated aggregation components are required [11]. Sharing autonomy is in both cases an operational concept that requires the following:

- Understanding the ways in which autonomy can be handled in socio-technical systems given the contextual nature of human action with respect to self-awareness and societal consciousness,
- Capturing continuous evolvement of socio-technical systems,
- Sustaining the dynamic relationship between individual autonomy and collective system behavior.

We focus on contextual sharing of autonomy recognizing the role of human agency as mechanism or lever of change that can trigger tipping points of sustainability actions in socio-technical settings. To keep the human in control of contextual transformation processes, the doing dimension of sustainability intelligence captures active stakeholder participation. We suggest stakeholder participation in terms of design and engineering activities for human-centered control.

Stakeholders are considered autonomous actors in increasingly digitalized, cyber-physical settings who are able to generate and execute models representing networked social actors and technological (cyber-physical) components. Such an instantiation of the doing dimension enables building socio-technical intelligence involving both the global perspective and societal consciousness.

Becoming a designer requires cognitive sustainability intelligence driven by societal and socio-emotional values and principles. They become transparent in models that human actors design to represent mutual dependencies in heterogeneous and dynamically changing networks. The key aspect of design is that actors or components are able to share autonomy for a particular purpose, e.g., exchange a certain type of information to achieve privacy as part of socio-technical sustainability intelligence.

In the following, we detail the various concepts of autonomy that constitute socio-technical sustainability intelligence. We capture transformational aspects as well as experiences with existing technology-based autonomous systems. In the subsequent section, we derive the proposed framework for sharing autonomy, which builds the basis for handling socio-technical sustainability intelligence. The framework represents an architecture and mechanism for sharing autonomy. An exemplary use case illustrates the feasibility of the approach and the mechanism based on the generic model. The conclusion summarizes the objectives and achievements and outlines paths for further research.

2. Related Work

This section reviews architectures and implementation schemes related to socio-technical autonomy that originate either in the field of technology (e.g., autonomic computing) or in the social sciences. Furthermore, we discuss their handling in the setup and operation of intelligent systems.

2.1. Shared Autonomy in Self-Adaptive Systems

Autonomic computing has gained interest, not only due to the growing complexity of advanced information systems, but also driven by conceptual developments in recent years. Originally characterized by being distributed, open, and dynamic, systems have increasingly been developed with self-management capabilities. Typically, self-organization, self-configuration, self-adaptation, and other self-capabilities imply a high degree of digital control and computational capacity with minimal human intervention [12]. Aiming to achieve a goal, autonomic systems can configure and reconfigure themselves automatically under changing (and unpredictable) conditions, and have the intelligence to optimize their business logic through monitoring and adjusting components and their environment [12].

Dehraj et al. [13] have reviewed the developments in autonomic computing since its introduction. In the conclusion, they report on a vision for autonomic computing that indicates the need for sharing autonomy, namely the coupling of change in “requirement of autonomous features … with the change in the internal or external environment of the system during run time” [13] (p. 414). When a system has the capability to self-adapt to change, as part of its autonomy, it should also be “capable of handling high-level management task automatically” [13] (p. 414). Sharing of autonomy could then be considered a high-level management task that enables dynamic adjustment of system behavior. It can be a centralized or decentralized component [14]. Hence, sharing autonomy can be implemented either through a central control component or through a distributed architecture. In either case, it has to capture high-level features to meet autonomy (sharing) requirements by adapting system behavior.

In the context of recently implemented concepts, in particular Industry 4.0 [15], diagnosing causes of (complex) events in heterogeneous and open environments, predictive maintenance, and self-adjustment according to the diagnosis have become essential features of autonomic systems [16,17,18]. In the pursuit of better organization of all productive means, not only have the boundaries between the physical and digital worlds shrunk, giving rise to CPS, but also the interaction between people, machines, processes and products has increased to achieve this goal. Consequences are transformation processes towards using digital intelligence cooperatively when customizing everything in detail, reducing material and service costs, digitizing work places and network relations [19,20].

Digital intelligence is used in autonomic systems to create a more holistic ecosystem. It includes all relevant actors involved in manufacturing processes, namely people, things, processes, and data. Recognizing the requirement that these actors should carry out their work in a more autonomous way, they need to be able to make decisions for themselves and to manage themselves in alignment with others in the whole factory. The latter includes autonomous negotiation in order to reach agreements linked to achieving both individual and collective production goals. Given the interoperability and integration requirement to set up such an operation of autonomic systems or a platform supporting self-management [21], several layers of intervention have been considered by Sánchez et al. [19] (see also Figure 1, left side).

From this overarching architecture scheme in Figure 1, it can be concluded that not only connection and communication are essential enablers for coordination, cooperation, and collaboration, but also differentiating layers for handling autonomy. At the bottom, the scheme includes the physical components represented as digital agents [22] that are controlled by business processes on an integration layer. In this architecture, the prominent role of the internet as a substantial carrier of information becomes evident. This layer also covers receiving sensor data and activating actuators, both of which are relevant for adapting systems and are considered on the reflection layer. That decisive layer captures issues affecting components (e.g., through self-adaptation) and their coordination (e.g., interoperability).

Connection and communication are considered essential enablers for coordination, cooperation, and collaboration. When developing an architecture, Sánchez et al. [19] propose a multi-agent system with the physical components represented as digital agents [22]. Multi-agent systems support autonomy, decentralization, and self-management features in a coordinated way. In order to fully adopt multi-agent systems, Sánchez et al. [19] propose the creation of a development cycle for autonomic systems based on knowledge identification and data clearance for the data analytics tasks of the autonomic cycle. These tasks aim at knowledge modeling mainly for description and prediction. They are completed with creating a prototype of the autonomic cycle.

Sánchez et al. [

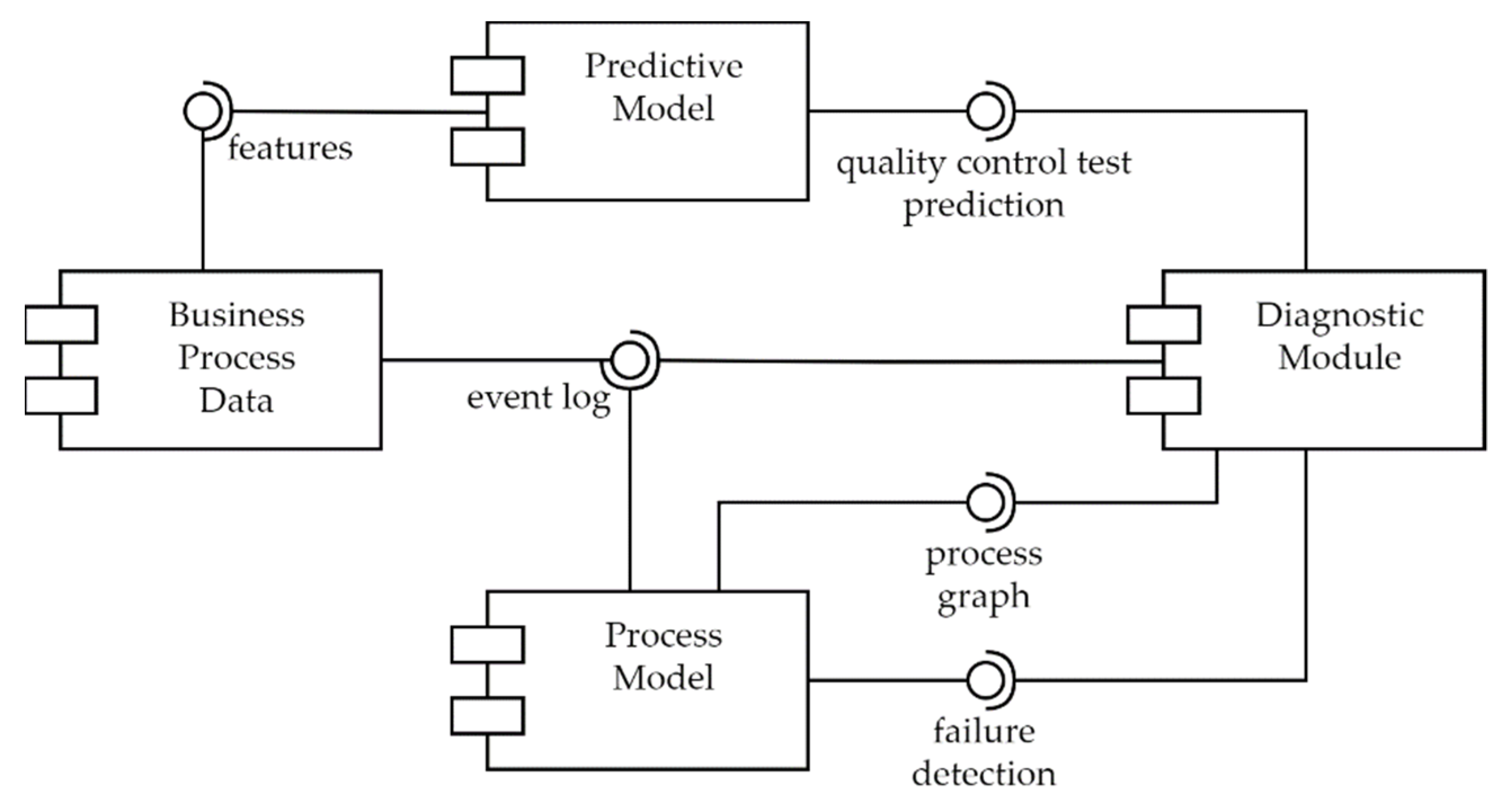

19] developed an autonomic cycle for self-supervision, which they could test in an industrial setting. Their autonomic cycle comprises process models and three data analytics tasks, as shown in the component diagram of the autonomic cycle for the self-supervising capacity of a CPS (see

Figure 2). “The Business Process is the component that is supervised. The Predictive model is the output of Task 2, while the Process model is the output of Task 1. Finally, the diagnostic module characterizes Task 3. The Business process provides the categorical, numeric, and date features required by the Predictive model in order to make the quality control test prediction. Similarly, the event log required by the Process model to detect failures is provided by the Business Process. The diagnostic module uses the event log, the process graph (provided by the Process model), the result from the Predictive model, and the result from the Process model, in order to determine the status of the manufacturing process (decision-making), and invoke the autonomic cycle for self-healing when needed” [

19] (p. 7). From these developments, we can learn that continuous monitoring and prediction as a means of self-adaptation can help increase the autonomy of components or systems.
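The data flow of the quoted self-supervision cycle can be illustrated with a minimal sketch. It is not the implementation of [19]: the two toy models, the feature name `temperature`, the threshold, and the `Diagnosis` type are assumptions made for illustration, and the process graph mentioned in the quote is omitted for brevity. The sketch only shows how a diagnostic step might combine the results of a process model (Task 1) and a predictive model (Task 2) to decide whether self-healing should be invoked (Task 3).

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical stand-ins for the outputs of Task 1 and Task 2; the models
# in [19] are learned from process data and are not reproduced here.
ProcessModel = Callable[[List[str]], bool]            # event log -> failure detected?
PredictiveModel = Callable[[Dict[str, float]], bool]  # features -> quality test passes?


@dataclass
class Diagnosis:
    status: str                 # "ok" or "degraded"
    invoke_self_healing: bool


def diagnose(event_log: List[str],
             features: Dict[str, float],
             process_model: ProcessModel,
             predictive_model: PredictiveModel) -> Diagnosis:
    """Task 3 (sketch): combine both model results into a status decision."""
    failure_detected = process_model(event_log)
    quality_ok = predictive_model(features)
    if failure_detected or not quality_ok:
        return Diagnosis("degraded", invoke_self_healing=True)
    return Diagnosis("ok", invoke_self_healing=False)


# Toy models (assumptions for illustration only).
def toy_process_model(event_log: List[str]) -> bool:
    # Flags a failure when the log skips the expected "inspect" step.
    return "inspect" not in event_log


def toy_predictive_model(features: Dict[str, float]) -> bool:
    # Predicts a passed quality test below a hypothetical temperature limit.
    return features["temperature"] < 80.0


result = diagnose(["start", "assemble", "inspect", "ship"],
                  {"temperature": 72.5},
                  toy_process_model, toy_predictive_model)
print(result)
```

Keeping the models as plain callables mirrors the component view of the cycle: the supervised business process only supplies the event log and the features, while the diagnostic step stays independent of how the models were obtained.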

From the recent work in autonomic computing, it becomes evident that the keys to autonomy are process data and process models, either as “glue” to achieve some business goal with a set of CPS components along a specific flow of information and control, or as behavior representation to encapsulate CPS component behavior. However, as the diversity of business process requirements and the heterogeneity of resources increase, in particular when using cloud services, special attention has to be paid to optimizing the cost management of a business process in the cloud [14]. These findings refer not only to the economic context of sharing autonomy features and activities, but also to the flow of data; thus, the interaction between components has to be considered an essential concern of sharing features or schemes.

Apart from considering stakeholder-specific Quality of Service requirements, the degree of automation of self-adaptation is continuously increasing due to advances in AI, machine learning, and computational technologies [23,24]. When components of autonomous systems are primarily in charge of managing resources on their own, the interaction between them becomes crucial for system operation. It includes sharing of messages on system performance [23]. Hence, these interaction mechanisms referring to a system’s behavior based on its operating components are candidates to influence its structure and dynamics. They can be used for sharing autonomy features, in particular when pictures of reality should be based on large-scale distributed simulations that can be shared across many agents and lay ground for a consensus of the environment and potentially a knowledge beyond that perceivable by a single agent’s sensors [25]. However, the assumed closed feedback loop for self-adaptation in systems engineering, as emphasized in a recent review [24], does not indicate socio-technical interventions in the process of behavior changes so far. In the realm of Industry 5.0, as detailed in the introduction of this paper, such interventions need to be considered an essential part of socio-technical sustainability intelligence.

2.2. Shared Autonomy as Socio-Technical Endeavor

The more CPS propagate to the application domain, the more interfaces with humans, and thus an information systems perspective, move to the center of interest [26]. Typical application domains are driverless cars, smart cities, smart homes, smart home healthcare, and digitalized production. The independence of autonomous system components that can make decisions and perform actions influences human actors as well as artificial ones. “This asks for a better understanding of AS [Autonomous Systems] in a broader context, where the autonomy of technical systems as agents must be analyzed in relation to human agents. In fact, changes in the autonomy of one (human or technology) agent may have consequences for the autonomy of another agent” [26] (pp. 265–266). The authors also suggest long-term considerations of autonomous systems, since sustainable systems of this kind should be able to learn and use that capability for self-adaptation in terms of constant self-improvement.

The criteria for optimization could concern all types of sustainability and impact ecological, economic, social, and environmental concerns [26]. Based on these considerations and a detailed analysis of categories of autonomy, Beck et al. [26] synthesized and integrated different perspectives on autonomy and developed the multi-perspective framework shown in Figure 3. In line with the established and still valid dimensions of socio-technical system design [27], it captures mutually related human, task, and technology autonomy.

With respect to human autonomy, Koeszegi [28] recently investigated the impact of automated decision systems and the set of requirements that need to “be placed on automated decision systems in order to protect individuals and society” [28] (p. 155). Automated decision-making concerns self-determination of humans and thus refers to human autonomy and the way humans and digital agents can share it [29]. Knowledge elicitation and representation required for contextual representations have been addressed in the field of human work, e.g., by Patriarca et al. [30]. Such knowledge can lay the foundation not only for reflection on work processes and the associated work autonomy, but also for active participation in sustained humanization of work through stakeholder involvement [31].

With respect to technical autonomy, Simmler et al. [32] distilled several dimensions from published findings: non-transparency, indetermination, adaptability, and openness. According to their framework, developers need to specify which technical system components feature which dimension. Then, the level of technical autonomy can be determined based on a specific combination of features.
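To make the idea of specifying dimensions per component more tangible, the following sketch records which of the four dimensions each component features and derives a level from the combination. The counting rule in `autonomy_level` is purely an illustrative assumption and not the mapping defined in [32]; the component names are hypothetical as well.

```python
from enum import Enum
from typing import Dict, FrozenSet


class Dimension(Enum):
    NON_TRANSPARENCY = "non-transparency"
    INDETERMINATION = "indetermination"
    ADAPTABILITY = "adaptability"
    OPENNESS = "openness"


def autonomy_level(features: FrozenSet[Dimension]) -> int:
    """Illustrative rule only: the level is the number of dimensions present."""
    return len(features)


# Hypothetical components of a vehicle, each annotated by the developer.
components: Dict[str, FrozenSet[Dimension]] = {
    "route_planner": frozenset({Dimension.ADAPTABILITY, Dimension.INDETERMINATION}),
    "brake_controller": frozenset(),
}

levels = {name: autonomy_level(dims) for name, dims in components.items()}
print(levels)
```

A real assessment would replace the counting rule with the combination logic of the chosen framework; the sketch only fixes the data structure that such a rule would operate on.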

Although frameworks and schemes mainly provide technology-grounded concepts, they are of benefit for developing a socio-technical understanding of autonomy and sharing capabilities. On the one hand, they provide a sufficient level of abstraction to integrate other existing conceptualizations of (domain-specific) technical autonomy, e.g., for autonomous vehicles [33]. On the other hand, they facilitate instantiating relations to other types of autonomy, e.g., task autonomy in case of shifting driving tasks to a CPS component of a car.

From the analyzed studies on autonomy and associated sharing approaches, several items can be concluded for our research:

- Autonomy is a multi-faceted construct that has evolved from technology-driven fields, such as autonomic computing. Based on these developments, sharing autonomy is increasingly digitized and occurs in closed technical feedback loops.
- This development has triggered socio-technical approaches to recognize categories of autonomy related to information systems design. Multi-dimensional frameworks integrate technology autonomy and relate it to task and human autonomy.
- Business process representations can serve as baseline for increasing capabilities of self-adaptation both from a technological/automation and human/organizational perspective. Therefore, sharing autonomy is understood as an activity inherent to the adaptation of CPS and its components.

Consequently, we can bridge the relation gap between technologically closed feedback loops in increasingly automated systems and socio-technical implementations of autonomy in system design by contextualizing business processes and developing component architectures enabling shared autonomy solutions. We will address sharing of autonomy as an active system entity for sustainable transitions to socio-technical system settings with embedded autonomy-sharing capabilities and a corresponding technological functionality. While featuring human and task autonomy, we focus on the doing dimension of sustainability with intelligible process descriptions as (executable) design representations on the individual and collective level.

3. Developing an Architecture and a Mechanism for CPS Sharing Autonomy

After we identified various development inputs on sharing autonomy from adaptable and socio-technical systems, in this section, we first detail the move from the original, technology-driven system understanding of autonomy towards an active, design-driven engineering task. Multiple layers and activity bundles require a flexible CPS architecture which is based on a structured interaction scheme. The presented architecture is the backbone for developing and preserving socio-technical sustainability intelligence, as it represents the CPS structure and behavior and accompanies its evolvement.

3.1. Shift of Focus: Understanding Autonomy as Driver of Transformational Change

In their seminal work on autonomous systems, Watson and Scheidt consider systems that can change their behavior in response to unanticipated events during operation to be “autonomous” [34] (p. 368). The initial drivers of autonomous systems development and technologies are considered transformational in order to gain benefits in both cost and risk reduction. The first generation of autonomous systems research, combining simple sensors and effectors with analog control electronics, created systems that could exhibit a variety of interesting reactive behaviors. The advent of CPS has led to reconsidering autonomy as a socio-technical endeavor [35]. From the analysis provided by [36] (see also Table 2), several levels of information processing can be identified. In so-called adaptive automation, humans should remain in control and be kept responsible for meaningful and well-designed tasks when co-operatively controlling and managing CPS [36].

Recognizing CPS as a driver of transformative change, the authors consider work autonomy and its corresponding management task as key attributes of system design. When relating autonomy to information processing capabilities increasingly enabled by (CPS) technologies, control can be transferred from humans to the CPS. By doing so, human decision-making may be reduced or even eliminated, in particular when work processes are normative and become standardized, depending on the underlying tasks and their complexity. It depends on the design when and how decision-making and adaptation are allocated to humans or technology, and which impact is created on CPS behavior and environment.

When task allocation is focused on technology, fully autonomous systems are the result, as an example taken from [36] reveals. Autonomous vehicles that possess advanced driver-assistance systems (e.g., cruise control, emergency braking) have been around for a while now. Throughout the last decade, driving automation has minimized the role of the driver in driving the car. To evolve driving automation towards full autonomy in which no human intervention is needed, many car manufacturers are currently experimenting with self-driving cars that can sense their environment and navigate without human input. However, it is still unclear how these autonomous cars are able to handle various extreme and unpredictable situations. Hence, despite the readiness of the technology, an important question remains unanswered: who is responsible when a self-driving car has an accident? Up until now, there is still a considerable gap between self-driving technology and regulation on this topic.

Figure 4 shows the required management activities for CPS development from a technological and organizational perspective in light of evolving technologies. According to Waschull et al. [36], two flavors of sharing autonomy need to be distinguished:

- Sharing autonomy in operation: Users can design and adapt CPS in terms of managerial decision making.
- Designing sharing autonomy in development: Developers create the capability through technology. The scope and extent to which information or features become sharable is a design decision that finally needs to be engineered (put to operational practice).

We now build on this differentiation and integration of two roles or stakeholder groups as part of the social sustainability intelligence. Our goal is to provide an architecture and a mechanism for the ways in which CPS components might share autonomy over time. When applied to dynamically evolving settings, such as autonomous driving, control could vary due to heterogeneity of situations or system configuration. A core mechanism is required for system components to interact with each other.

According to Fisher et al. [37], building a baseline for representing socio-technical sustainability intelligence requires addressing the following developmental and operational issues:

- Modeling the behavior and describing the interactions between CPS elements, and thus the input and output to an actor or component in charge of making decisions within the system.
- Checking the sharing of autonomy within the anticipated environment, which represents the actual operation and those sub-systems of the system that are external to the element, in order to implement a specific system property.
- Analyzing the involved CPS elements to check the larger system for operational constraints.
- In case the behavior needs to be adapted, modifying respective model representations.

As McKee et al. [25] noted, CPS not only share collected data, but also require some shared model of “reality” that can be simulated to test intelligent systems or their components. Hence, the subsequently introduced architecture needs to meet the requirements listed above and provide user support, both in terms of structural dimensions and behavior specifications. As shown in the next section, the latter lay the foundation for designing socio-technical sustainability intelligence as executable representations and interactive process experience.

3.2. CPS Architecture for Sharing Autonomy as Part of Socio-Technical Transformations

In this section, we introduce the CPS structure and components that are used to support sharing autonomy in a flexible way. The proposed architecture represents the socio-technical sustainability intelligence in terms of the following characteristic items:

- It is generated at an implementation-independent layer with the intention to transform an existing (cyber-physical) system, featuring a technology-independent perspective on shared autonomy.
- It is designed to enable people to design and adapt a system in terms of components and their behavior, including their mutual interactions when sharing autonomy while achieving a specific goal.
- The specification of components and their behavior is executable by a digital process engine, and thus shared autonomy can be experienced interactively (before the system is finally put into operation).
- The specification as a digital model forms the baseline representing the current state of (shared) autonomy in terms of structure and behavior of a CPS and consequently sustains the development process with respect to federated intelligence.

In the following, we detail both the system structure and operational logic for sharing autonomy. We start with modeling the CPS components and their arrangements for sharing autonomy. We then introduce the gateway component to manage the sharing process and the operation of a CPS with shared autonomy components.

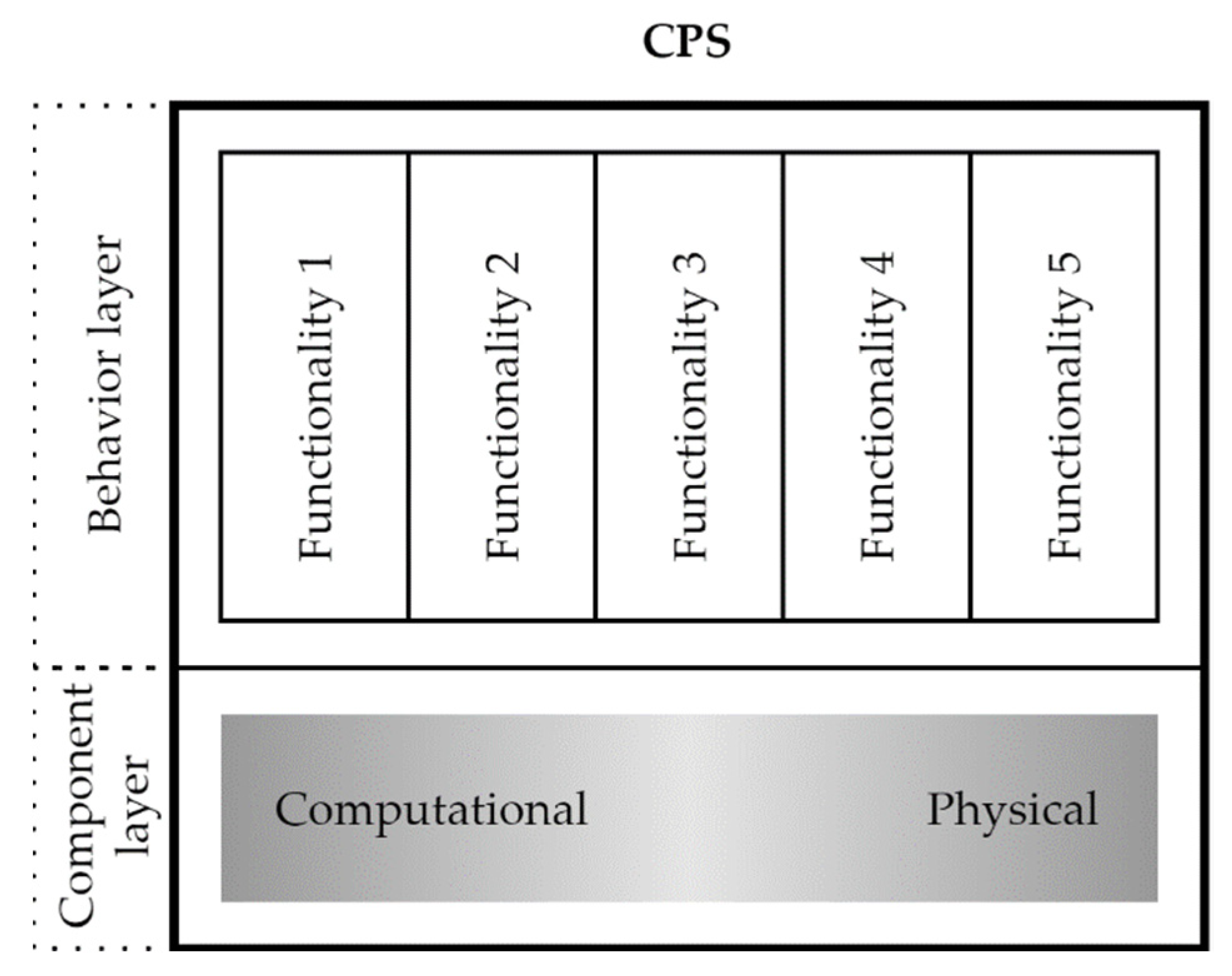

Figure 5 shows a schematic structure of a CPS, which includes a component layer and a behavior layer. “CPS are engineered systems that are built from, and depend upon, the seamless integration of computation and physical components” [38]. Therefore, the component layer consists of a single element that outlines this seamless integration with a gradient. In this scheme, there is no need to further disaggregate the components because for our consideration, the focus is on the behavior of CPS. It is precisely this behavior that is outlined in the behavior layer. The elements in this layer represent the functionalities of the CPS. Depending on the complexity, the number of functionalities of a CPS varies. We have sketched five functionalities as examples in Figure 5. This representation is on an abstract level in which we aim to show the encapsulation of functionalities. These may be basic functionalities to operate the CPS or functionalities to fulfill the mission or purpose of the CPS. In the following, we take a closer look at the behavior layer and these functionalities in terms of their autonomy.

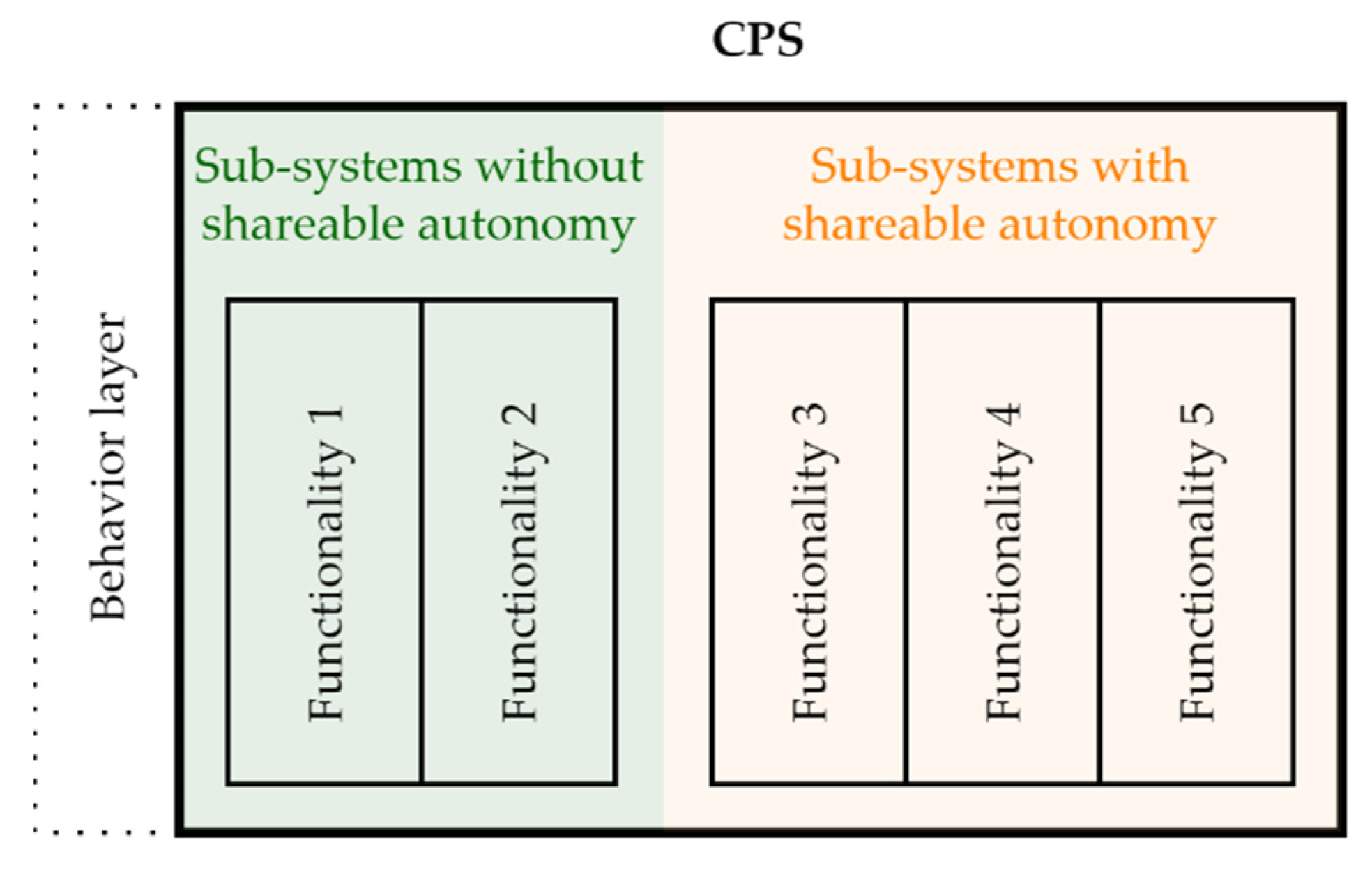

The autonomous behavior of CPS requires that some functionalities cannot share their autonomy, i.e., they must always be able to decide for themselves. We have defined such functionalities as examples and marked them in Figure 6 as “sub-systems without shareable autonomy” and highlighted them in green. The other functionalities can in principle share their autonomy; they are labeled as “sub-systems with shareable autonomy” and highlighted in orange.

Figure 7 illustrates an example of two CPS that present functionalities with shared autonomy. These are labeled as “sub-systems with shared autonomy” and highlighted with a blue background. It can be seen that not all functionalities have to share their autonomy.

Figure 8 outlines that shared autonomy between functionalities can also change dynamically at runtime.
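The distinction between functionalities with and without shareable autonomy, and the possibility of changing shared autonomy at runtime, can be sketched as a small data structure. The class names `SubSystem` and `CPS`, the example functionalities, and the partner name are assumptions for illustration; they are not taken from the figures.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class SubSystem:
    name: str
    shareable: bool                    # False: must always decide for itself
    shared_with: Optional[str] = None  # name of the partner CPS, if any

    def share(self, partner: str) -> None:
        if not self.shareable:
            raise PermissionError(f"{self.name} cannot share its autonomy")
        self.shared_with = partner

    def revoke(self) -> None:
        # Shared autonomy can be withdrawn again at runtime.
        self.shared_with = None


@dataclass
class CPS:
    name: str
    behavior_layer: Dict[str, SubSystem] = field(default_factory=dict)

    def add(self, sub: SubSystem) -> None:
        self.behavior_layer[sub.name] = sub

    def shared(self) -> List[str]:
        """Names of functionalities that currently share their autonomy."""
        return sorted(s.name for s in self.behavior_layer.values() if s.shared_with)


# Hypothetical example: one functionality is locked, one is shareable.
robot = CPS("robot")
robot.add(SubSystem("emergency_stop", shareable=False))
robot.add(SubSystem("navigation", shareable=True))

robot.behavior_layer["navigation"].share("transport_box")
print(robot.shared())   # navigation is shared at this point
robot.behavior_layer["navigation"].revoke()
print(robot.shared())   # nothing is shared any more
```

Encoding `shareable` as a fixed property and `shared_with` as mutable state separates the design decision (what may be shared) from the runtime situation (what is currently shared).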

Figure 5, Figure 6, Figure 7 and Figure 8 outline the schematic structure of autonomous CPS. Moreover, in these figures, we illustrate shared autonomy (or sharing of autonomy), which can change dynamically during runtime, at the behavioral layer. We next present an architecture for this abstract concept that shows the way in which the sharing capabilities work. It is essentially based on the system-of-systems concept and introduces a gateway component that serves as an intermediate control entity. This gateway component has codified the purpose of sharing and is able to negotiate before executing and monitoring a particular CPS.

Figure 9 outlines the concept including the gateway, the two autonomy-sharing CPS, and the required communication or message channels between the CPS and the gateway. The socio-technical context requires that the concept also works with human actors (see Figure 10). For example, they could be involved via a graphical user interface (e.g., via a smartphone app) or via another CPS with the ability to interact (e.g., via buttons).

The gateway handles both the process of sharing autonomy, and the operation of a CPS with components sharing their autonomy:

Registration: Each CPS component needs to be registered to share autonomy with other components in the system. It lays ground for mechanisms to optimize or balance the functional and operational implementation of requirements.

Specification: Sharing autonomy requires explicating the purpose of sharing autonomy. The provision of a rationale for sharing is the baseline for elaborating the ways in which the functionality of the CPS can be improved.

Negotiation: It may be required to handle different options in order to ensure technical operation or improvements of the CPS.

Execution/Monitoring: Running a system requires checking whether the engineering design meets the requirements of the CPS.
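The four gateway operations listed above can be sketched in a few lines of Python. This is only an illustrative sketch under our own assumptions, not the implementation used in the paper: the class names `Component` and `Gateway`, their methods, and the simplistic agreement rule in `negotiate` are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Component:
    """A CPS functionality (hypothetical representation)."""
    name: str
    shareable: bool


@dataclass
class Gateway:
    """Intermediate control entity covering the four operations."""
    registry: dict = field(default_factory=dict)  # name -> Component
    purposes: dict = field(default_factory=dict)  # name -> purpose of sharing
    log: list = field(default_factory=list)       # monitoring records

    def register(self, component: Component) -> None:
        # Registration: only shareable components may enter the pool.
        if not component.shareable:
            raise ValueError(f"{component.name} cannot share autonomy")
        self.registry[component.name] = component

    def specify(self, name: str, purpose: str) -> None:
        # Specification: make the rationale for sharing explicit.
        if name not in self.registry:
            raise KeyError(f"{name} is not registered")
        self.purposes[name] = purpose

    def negotiate(self, requester: str, provider: str) -> bool:
        # Negotiation (assumed rule): both sides are registered and a
        # purpose has been specified for the provider.
        return (requester in self.registry
                and provider in self.registry
                and provider in self.purposes)

    def execute(self, requester: str, provider: str) -> bool:
        # Execution/Monitoring: act only on a successful negotiation
        # and record the outcome for later inspection.
        agreed = self.negotiate(requester, provider)
        self.log.append((requester, provider, agreed))
        return agreed


gateway = Gateway()
robot_sa = Component("robot sensor/actuator control", shareable=True)
box_sa = Component("box sensor/actuator control", shareable=True)
gateway.register(robot_sa)
gateway.register(box_sa)
gateway.specify(box_sa.name, "install sensors in the smart transport box")
shared = gateway.execute(robot_sa.name, box_sa.name)
```

In this sketch, a component without shareable autonomy is rejected at registration, so it can never take part in a later negotiation.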

Socio-technical sustainability intelligence does not only mean the design is understood and controlled by humans, but also that humans may interact with CPS components in the course of task accomplishment involving cyber-physical components.

Figure 10 shows the involvement of humans as actors in the dual role already addressed in the previous section. On the one hand, humans can be involved in the execution of CPS tasks. On the other hand, with respect to the gateway, humans can be involved in any functionality during implementation as a socio-technical component.

4. A Use Case for Building Socio-Technical Sustainability Intelligence

We now want to test the concept using a case study. In our case study, we deal with a CPS, which is a smart logistics system. In [39], we have elaborated the scenario aiming for human intelligibility as required for socio-technical design. In this contribution, the following part of the smart logistics system is considered: A robot has to pack goods into a smart transport box. To achieve this, it first picks up the goods at a checkpoint. At this moment, the robot receives the requirements for the smart transport box from the transferred goods, for example, whether the temperature must be monitored. The robot then assembles the smart transport box according to the requirements. The smart transport box can be equipped by the robot with IoT elements, such as a temperature sensor, a tracking sensor and/or other sensors as well as actuators. In the scenario, the robot performs this operation in a room with smart actors. These are a smart shelf with the smart transport boxes in different sizes, a smart shelf with the different sensors, the checkpoint equipped with sensors for the delivery and the shipment of goods, and a workbench for assembling the transport box. In addition, the room itself is equipped with sensors, for example a room air sensor [39].

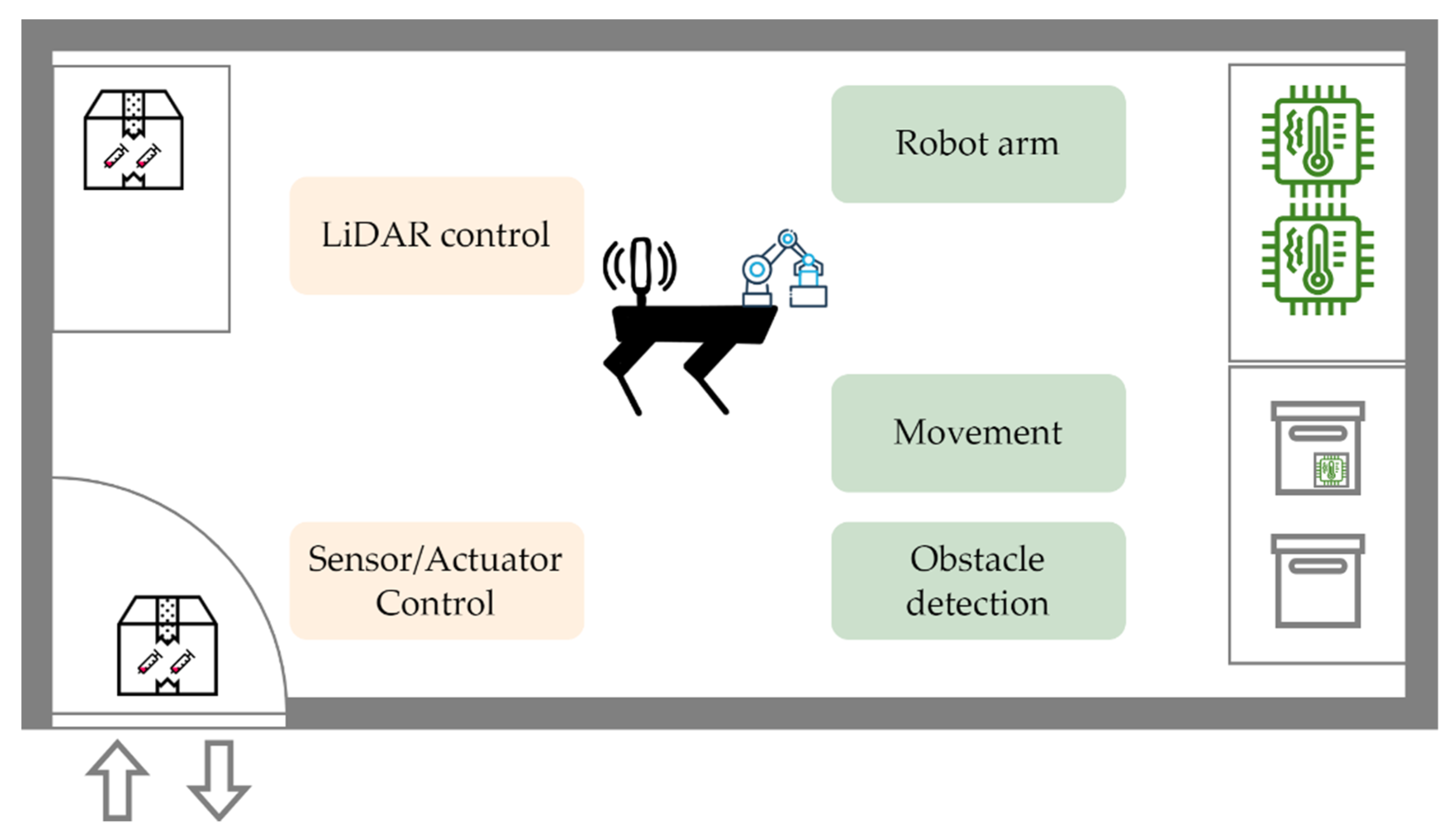

Figure 11 illustrates the scenario focused on the sub-systems of the robot. LiDAR (Light Detection And Ranging) control and sensors/actuators control have shareable autonomy, while the robot arm, movement, and obstacle detection have no shareable autonomy. LiDAR and sensors/actuators are thus basically controlled by the robot; however, they could also be controlled by another subsystem within the smart logistics system.

The sub-systems of the smart transport box are shown in Figure 12. Geospatial location reporting and sensor/actuator control have the capability of shared autonomy, while locking control and location detection do not.

The sensor/actuator control sub-system of the robot and of the smart transport box refers to the function for controlling the sensors and actuators that can be installed in or on the smart transport box. Accordingly, at certain times in our scenario, the robot may have control over these components, and at other times, the smart transport box may have control. A typical sharing event occurs when the robot picks up the sensors to install them in or on the smart transport box.
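To make the distinction between sub-systems with and without shareable autonomy concrete, the following sketch lists the sub-systems named for the robot and the smart transport box and models the handover of control over the installable sensors and actuators. The data structure, the `ControlToken` class, and the assumption that the robot holds control first (during assembly) are ours and serve illustration only.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class SubSystem:
    name: str
    shareable: bool  # True: autonomy can in principle be shared


# Sub-systems as described for the robot and the smart transport box.
ROBOT = [
    SubSystem("LiDAR control", True),
    SubSystem("sensor/actuator control", True),
    SubSystem("robot arm", False),
    SubSystem("movement", False),
    SubSystem("obstacle detection", False),
]
BOX = [
    SubSystem("geospatial location reporting", True),
    SubSystem("sensor/actuator control", True),
    SubSystem("locking control", False),
    SubSystem("location detection", False),
]


def shareable_names(subsystems: List[SubSystem]) -> List[str]:
    """Return the names of all sub-systems that may share autonomy."""
    return [s.name for s in subsystems if s.shareable]


class ControlToken:
    """Records which CPS currently controls the installable sensors/actuators."""

    def __init__(self, holder: str, parties: List[str]):
        if holder not in parties:
            raise ValueError("holder must be one of the sharing parties")
        self.holder = holder
        self.parties = parties

    def hand_over(self, new_holder: str) -> None:
        # Control may only move between parties that take part in sharing.
        if new_holder not in self.parties:
            raise ValueError(f"{new_holder} does not take part in sharing")
        self.holder = new_holder


# Assumption: the robot controls the sensors while assembling the box,
# then hands control over to the smart transport box.
token = ControlToken("robot", ["robot", "smart transport box"])
token.hand_over("smart transport box")
```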

We can now apply our concept to the smart logistics system.

Figure 13 depicts the robot and the smart transport box as well as the sub-systems with the capability to share their autonomy. According to our concept, the gateway takes over the process of sharing autonomy of these sub-systems. The capabilities of the gateway can either be integrated into each sub-system or operated as a separate system.

Registration: both subsystems have to register themselves in order to share their autonomy.

Specification: requirements of both sub-systems must be collected and stored.

Negotiation: the sub-systems have to be able to negotiate their autonomy.

Execution/Monitoring: exception handling with respect to shared autonomy has to be implemented in the sub-systems.

For the implementation of the use case, we use subject-oriented modeling and execution capabilities [40,41] where we consider the smart logistics system as a network of interacting subjects. Subjects are defined as encapsulations of behaviors that describe the generation of value. The broad term behavior can include tasks, machine operations, organizational units, or roles that people hold in organizations that generate value. From an operational perspective, subjects operate in parallel and may exchange messages asynchronously or synchronously. Consequently, value streams can be interpreted as the exchange of messages between subjects.

CPS or sub-systems specified in subject-oriented models operate as autonomous entities with concurrent behavior that represent distributed elements. Each entity (subject) is capable of performing (local) actions that do not require interaction with other subjects. Subjects can also perform communicative actions that involve the transmission of messages to other subjects, i.e., sending and receiving messages. Subjects are specified in two different types of diagrams: subject interaction diagrams (SIDs) and subject behavior diagrams (SBDs).
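The notion of subjects as concurrent entities with local and communicative actions can be illustrated with Python's `asyncio`. The sketch below is not a subject-oriented modeling tool; the `Subject` class, its inbox queue, and the message texts are hypothetical and only mimic asynchronous sending and receiving between two subjects.

```python
import asyncio


class Subject:
    """Encapsulated behavior with an inbox for asynchronous messages."""

    def __init__(self, name: str):
        self.name = name
        self.inbox: asyncio.Queue = asyncio.Queue()

    async def send(self, receiver: "Subject", message: str) -> None:
        # Communicative action: deposit a message in the receiver's inbox.
        await receiver.inbox.put((self.name, message))

    async def receive(self):
        # Communicative action: wait until a message is available.
        return await self.inbox.get()


async def run_demo():
    robot = Subject("robot")
    box = Subject("smart transport box")

    async def robot_behavior():
        await robot.send(box, "request sharing")
        return await robot.receive()

    async def box_behavior():
        sender, message = await box.receive()
        # Local action: decide on the reply without further interaction.
        answer = "accept" if message == "request sharing" else "reject"
        await box.send(robot, answer)

    # Both subjects operate concurrently.
    reply, _ = await asyncio.gather(robot_behavior(), box_behavior())
    return reply


reply = asyncio.run(run_demo())
```

Here the arrows of an SID correspond to the `send` calls, while the body of each behavior coroutine plays the role of an SBD.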

Figure 14 depicts a conceptual SID to illustrate the mapping of CPS components to the model representation. It exemplifies an architecture, as shown in Figure 9, exclusively containing technical role carriers. Human intervention could replace or complement these role carriers in critical situations or when handling complex events, as indicated in Figure 10. The sensor/actuator control sub-systems represent subjects that encapsulate the negotiation capability. We understand the capabilities of the gateway as internal behavior of each sub-system. Although we decided not to model the gateway explicitly as a separate subject, the negotiating functionality could also be considered a self-contained entity and thus be outsourced to a separate component. The arrows represent the exchanged messages and point in only one direction in Figure 14. However, the messages could theoretically be exchanged in the other direction as well, since both sub-systems can initiate the process of shared autonomy.

The SBD of the subject that encapsulates the negotiation is shown in Figure 15. Green boxes are so-called “Receive states” that receive messages from other subjects, yellow boxes represent “Function states” that perform actions, and red boxes stand for “Send states” that send messages to other subjects. When a negotiation request from another actor sharing autonomy is received, all involved actors are identified. In our case, these might be only two sub-systems: the sensor/actuator control sub-system of the robot and of the smart transport box. The next step is to send an autonomy-sharing request to all identified (and registered) actors who are capable of sharing autonomy. After responses are received, conflicts have to be detected. If no conflicts are detected, a respective confirmation is sent to all involved actors and the negotiation is completed. If conflicts are detected, such as one sub-system being unwilling or unable to share autonomy, the subject searches for alternatives and starts over, thus having to identify and contact the actors involved again, and so on. The selected level of abstraction allows humans to continuously design and control the behavior of a CPS. Therefore, the algorithm represented by the SBD is the codification of sustainability intelligence. It is available for dynamic adaptation and execution, since either humans or technology can be assigned to implement the specified activities.
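The negotiation behavior described above can be approximated by a simple loop. The following sketch rests on our own assumptions: each registered actor is represented by a hypothetical callable that answers a sharing request with `True` or `False`, and the search for alternatives is reduced to iterating over a given list of candidate actor sets, which also guarantees termination (the SBD itself does not state a termination rule).

```python
from typing import Callable, Dict, List, Optional

# A responder takes the requester's name and returns its willingness to share.
Responder = Callable[[str], bool]


def negotiate(requester: str,
              alternatives: List[List[str]],
              registry: Dict[str, Responder]) -> Optional[List[str]]:
    """Return the first conflict-free set of actors, or None if none exists."""
    for actors in alternatives:
        # Identify involved actors: only registered ones can be contacted.
        contacted = [a for a in actors if a in registry]
        # Send the autonomy-sharing request and collect the responses.
        responses = {a: registry[a](requester) for a in contacted}
        # Detect conflicts: an actor is unregistered, or unwilling/unable.
        conflict = len(contacted) < len(actors) or not all(responses.values())
        if not conflict:
            return contacted  # confirmation goes to all involved actors
        # Otherwise: search for alternatives and start over.
    return None


# Hypothetical registry: the LiDAR control refuses, the sensor/actuator
# control of the robot agrees to share its autonomy.
registry = {
    "robot LiDAR control": lambda requester: False,
    "robot sensor/actuator control": lambda requester: True,
}
result = negotiate(
    "box sensor/actuator control",
    [["robot LiDAR control"], ["robot sensor/actuator control"]],
    registry,
)
```

Since each responder is just a callable, it could equally be backed by a human decision (e.g., via a user interface) or by a technical component, in line with the allocation of humans or technology mentioned above.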

The same holds for extending the scenario to resource sharing, an essential issue for sustaining systems. Figure 16 depicts such a scenario. For instance, sharing autonomy of a fixed industrial robot arm on a smart shelf requires encapsulating the behavior of the two functions “grasp and place”. The presented behavior modeling approach supports both the encapsulation of functionality as a subject of sharing autonomy and its implementation as a possible entity of socio-technical sustainability intelligence.

Hence, the presented mechanism for handling socio-technical sustainability intelligence is grounded on the following:

identifying relevant functional resources to be shared in principle,

ensuring the operational feasibility of sharing autonomy for all involved actors and components, and

allocating human actors or technology to implement the resulting system architecture.

These steps ensure not only transparent development procedures, but also human control with respect to including machine intelligence, and thus the extent of decision making supported or implemented by artificial intelligence components.

5. Discussion

Given the contextual nature of human action with respect to self-awareness and societal consciousness, handling autonomy in socio-technical systems such as CPS requires some representation in order to reflect on and (re-)design the constituting structure and corresponding behavior of these systems. The latter is required for representing the continuous evolution of socio-technical systems, which is considered an inherent part of socio-technical sustainability intelligence, both on the individual (component or actor) and collective system level.

Sharing of autonomy recognizes the role of human agency as a mechanism leveraging actions sustaining systems in socio-technical settings. Behavior modeling with stakeholder participation keeps humans in control of (continuous) transformation processes, which relates to the doing dimension of sustainability intelligence. In the presented approach, doing has been refined to designing and engineering for human-centered control of the ways in which CPS components can share autonomy to achieve some objective. This know-how is considered the core of socio-technical sustainability intelligence in heterogeneous and continuously evolving environments.

Since effective development support is of paramount importance to handle socio-technical sustainability intelligence, the presented approach considers stakeholders and technological components as autonomous actors of a behavior-centered system representation. Humans are supported in generating and executing these representations when addressing the sharing of autonomy of components and actors. Such an instantiation of the doing dimension enables building socio-technical intelligence and addresses societal consciousness in terms of autonomy and mutual dependencies of system elements.

According to the presented model representations and their characteristics, handling autonomy sharing as part of socio-technical sustainability intelligence requires two aspects. First, modeling the behavior and describing the interactions between system elements is needed. This includes the inputs and outputs for an actor or component responsible for decision making within the system. Second, it must be possible to dynamically adapt the system architecture by sharing autonomy to operate the system in a given environment and achieve a common objective.

Sharing autonomy is linked to adaptation of component or system behavior. Handling socio-technical sustainability intelligence at an implementation-independent layer enables humans to keep control over transforming an existing socio-technical system. The technology-independent perspective on shared autonomy also allows tasks to be allocated either to humans or to technological components in the course of implementation. Moreover, since the model representations of system components and their behavior become executable, shared autonomy can be experienced interactively before being put into operation in actual settings. Since a (digital) model represents the baseline for reflecting on the current state of shared autonomy in terms of system structure and behavior, it sustains the development process with respect to federated intelligence.

For handling the process of sharing autonomy, fundamental operations need to be supported. They start with registering all system components and making them part of a pool of system elements to share autonomy with other components. They also have to capture the specification of behavior when autonomy is shared according to a dedicated purpose. In case of conflicts when operating system components or sub-systems, negotiations may be required together with various options to resolve conflicts, e.g., through executing the model representations. Finally, monitoring enables control throughout operation and timely adaptation of a system.

Such procedures are of significant importance in customized CPS settings. For instance, in smart healthcare systems, care-taking activities could require various CPS components for user-centered assistance, including robots, sensors, and actuators [42]. For some medical homecare users, autonomous robotic assistance may be welcome since they want to keep their individual privacy at home and prefer being supported by robot control systems embedded in their smart home environment. Others may prefer social interaction in the course of medical caretaking at home and opt for interactive robot intervention delivered through remote control by experts [42].

Each of the resulting CPS scenarios requires the service company to configure technologies in collaboration with users according to their specific healthcare needs and preferences. The architecture and mechanism should enable configuring the tracking of a person’s condition, such as blood pressure, sleep patterns, diet, and blood sugar levels, which concerns a variety of functionalities and affects the autonomy of several system components. The mechanism should also be able to configure alerting relevant stakeholders to adverse situations and suggesting behavior modifications to them toward a different outcome, such as reducing blood pressure through a different diet or reducing the dose of pills for the sake of daytime agility. In the long run, sharing autonomy affects everyday convenience, in particular alerting for timely healthcare and medical supply.

Such scenarios (see also Stary [43] for details on healthcare, Stary et al. [44] for smart mobility applications, and Barachini et al. [45] for integrating social behavior into socio-technical sustainability intelligence) require considering autonomy as a multi-faceted construct that has evolved from technology-driven domains such as autonomic computing to trigger socio-technical approaches. Further categories of autonomy can be related to information systems design in terms of task and human autonomy due to the business process model representations used as baseline for increasing capabilities of self-adaptation. Therefore, the gateway to set up and operate design-integrated autonomy-sharing concepts remains of utmost importance for human control and the development of socio-technical sustainability intelligence. The use case revealed that the gateway can be handled as a component to be activated on demand as a separate entity or be embodied in those behavior specifications that represent shareable components. Such a generic approach also allows for dynamic shifts, in case additional system components should become shareable. It helps manage situations with limited resources. For example, a LiDAR carried as a payload might not be available for its purpose of processing the geospatial context. However, another LiDAR, additionally installed on the robot for other purposes, could step in.

6. Conclusions

In this work, we suggested extending scientific research on sustainability beyond its focus on interactions between natural and social systems to socio-technical systems. We were able to exemplify the ways in which those interactions can form the development ground for building socio-technical sustainability intelligence. As crucial components for human-centered development of increasingly digitized societies worldwide, human-centered enablers, such as self-awareness, global perspective, and societal consciousness, can become part of design representations. They form the basis for reflective socio-technical practice in dynamically evolving ecosystems.

Once design representations become part of engineering tools, socio-technical practice includes active stakeholder engagement throughout design and implementation. Sharing autonomy then becomes an inherent feature of sustainable socio-technical system development and operation. The exemplified benefits guide our future research. We still need to conduct empirical research to further evaluate the proposed architecture and mechanism. In addition, future research will include a formal concept analysis (of shared autonomy) and its underlying processes [46]. The multi-faceted nature of the sharing autonomy construct in terms of structure and behavior in socio-technical settings requires a methodologically sound analysis. It has to be sufficiently precise and consistent to operationalize conceptual inputs for further development of socio-technical sustainability intelligence.