PACER: Platform for Android Malware Classification, Performance Evaluation and Threat Reporting

Abstract

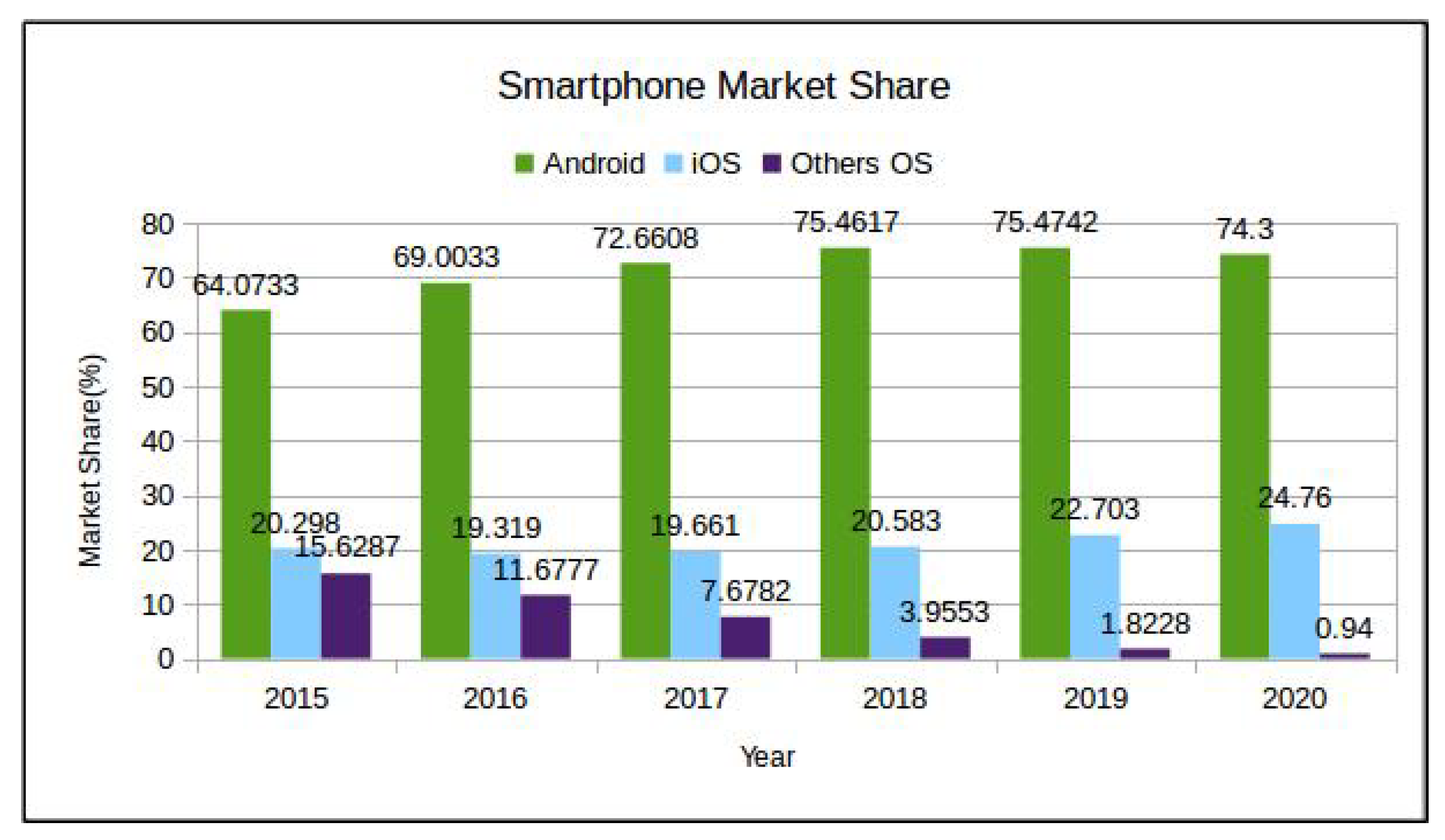

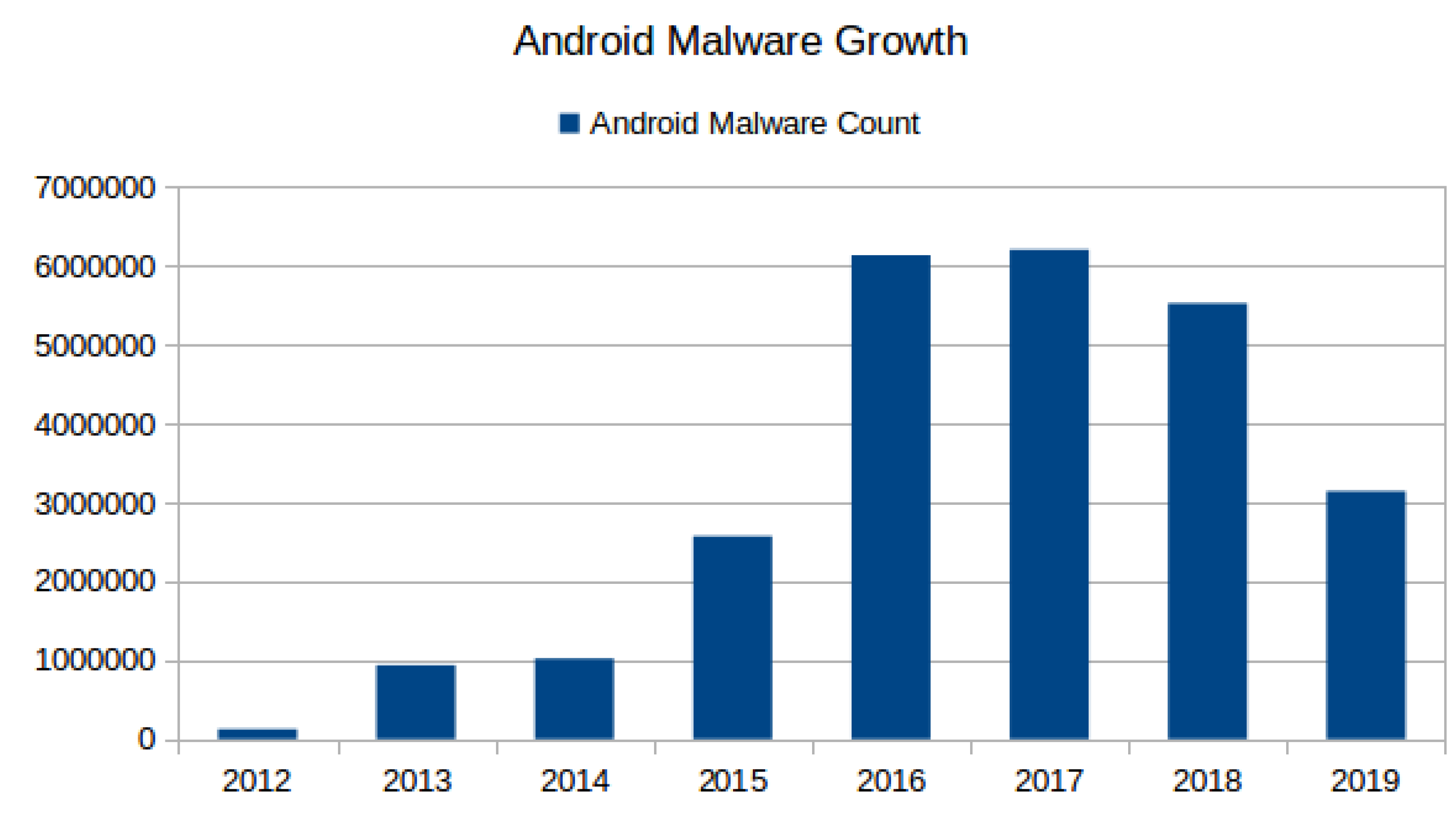

1. Introduction

- Reproducible and transparent research;

- Improved Android malware detection;

- Multi-application integration;

- Triaging and fast incident response;

- Android threat intelligence;

- Simple, descriptive and readable in-device threat report.

2. Background

2.1. Machine-Learning-Based Android Malware Detection

2.1.1. Static Features-Based Detection

2.1.2. Dynamic Features-Based Detection

2.1.3. Threat Intelligence Reporting

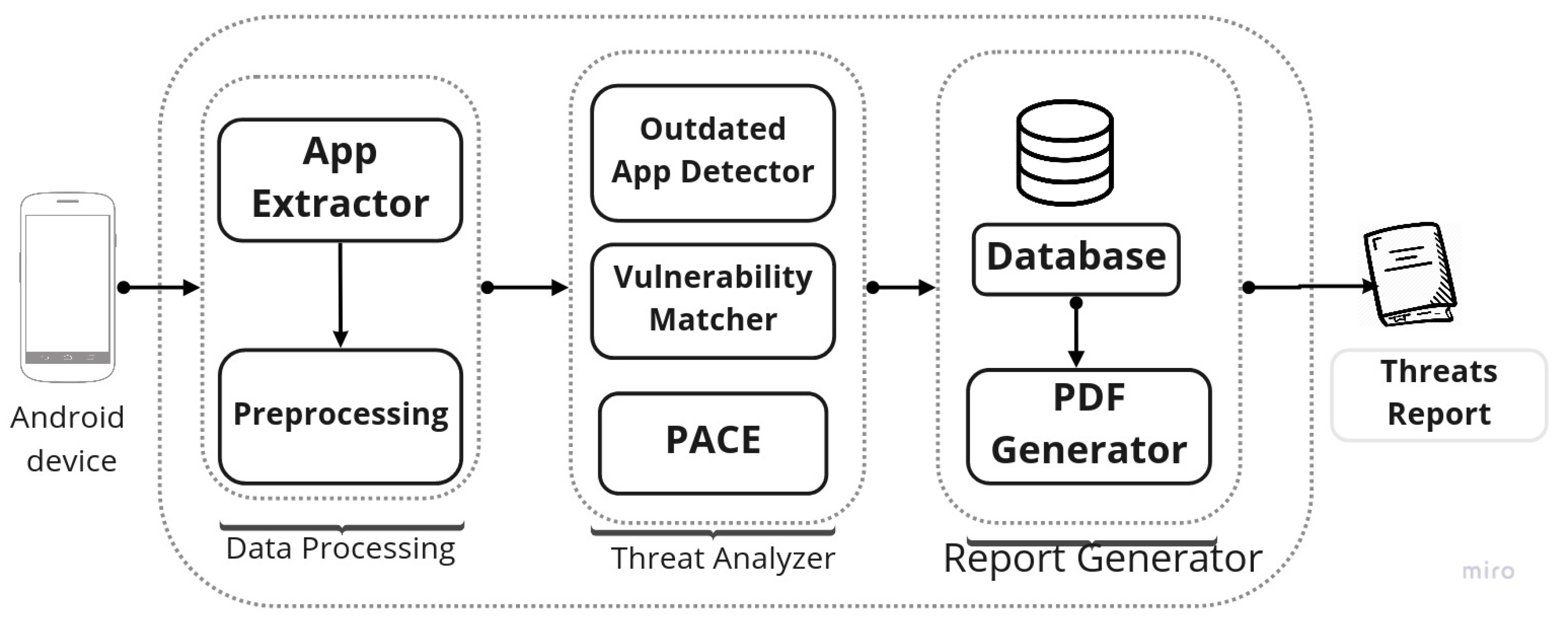

3. PACER: Platform for Android Malware Classification, Performance Evaluation, and Threat Reporting

- There are many quality studies on Android malware detection using both static and dynamic features, but they remain at the level of academic research and are not available to end users or researchers in a usable form. Our earlier work, PACE, is an attempt to fill this gap between academic research and the end user.

- Comparing detection techniques is difficult because the datasets vary and the implementations of earlier works are unavailable. PACE offers a common platform for comparing the results of multiple methods on the same dataset and evaluating their performance.

- Reproducibility and transparency are important concerns in applied research. PACE provides a platform for conducting reproducible and transparent research.

- The implementations and datasets of many earlier studies are either closed source or available only on request. As a research work grows older, reaching its authors or project becomes difficult because the research contact (i.e., website, link, email) becomes obsolete.

- Very often, research works are not available in a usable format with interface options such as REST API, web interface, ADB, etc. PACE makes research available to different kinds of users, from end users to security practitioners.

- With a centralized submission system, PACE can support Android threat intelligence by analyzing submitted samples daily and sharing the resulting intelligence through the dashboard.

- Automatically generating a simple, meaningful, and readable threat intelligence report for the user's device is important for helping naive and non-technical users understand security risks. With the extended work, PACER, we try to address the issue of threat accessibility and understandability for such users by providing a report that combines PACE scanning results with other threat results such as CVE matching, outdated apps, etc.
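As a rough illustration of the common-platform idea in the list above, the following sketch scores several detectors on one shared labeled dataset using accuracy, false positive rate (FPR), and true positive rate (TPR). It is not taken from PACE: the function names, the two detector names, and the toy labels are hypothetical, and labels are assumed to be 1 for malware and 0 for benign.

```python
from typing import Dict, List


def evaluate(y_true: List[int], y_pred: List[int]) -> Dict[str, float]:
    """Return accuracy, FPR and TPR for binary labels (1 = malware, 0 = benign)."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # malware flagged
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # benign passed
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # benign flagged
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # malware missed
    return {
        "accuracy": (tp + tn) / len(pairs) if pairs else 0.0,
        "fpr": fp / (fp + tn) if (fp + tn) else 0.0,
        "tpr": tp / (tp + fn) if (tp + fn) else 0.0,
    }


def compare(y_true: List[int],
            predictions: Dict[str, List[int]]) -> Dict[str, Dict[str, float]]:
    """Score every detector against the same ground truth."""
    return {name: evaluate(y_true, y_pred) for name, y_pred in predictions.items()}


# Toy ground truth and predictions (hypothetical, for illustration only).
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
predictions = {
    "permission_model": [1, 1, 0, 0, 0, 1, 0, 1],
    "api_call_model": [1, 1, 1, 0, 0, 0, 0, 0],
}
results = compare(y_true, predictions)
for name, metrics in results.items():
    print(name, metrics)
```

Because every detector is scored against the same `y_true`, the resulting numbers are directly comparable, which is not the case when each study reports results on its own dataset.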

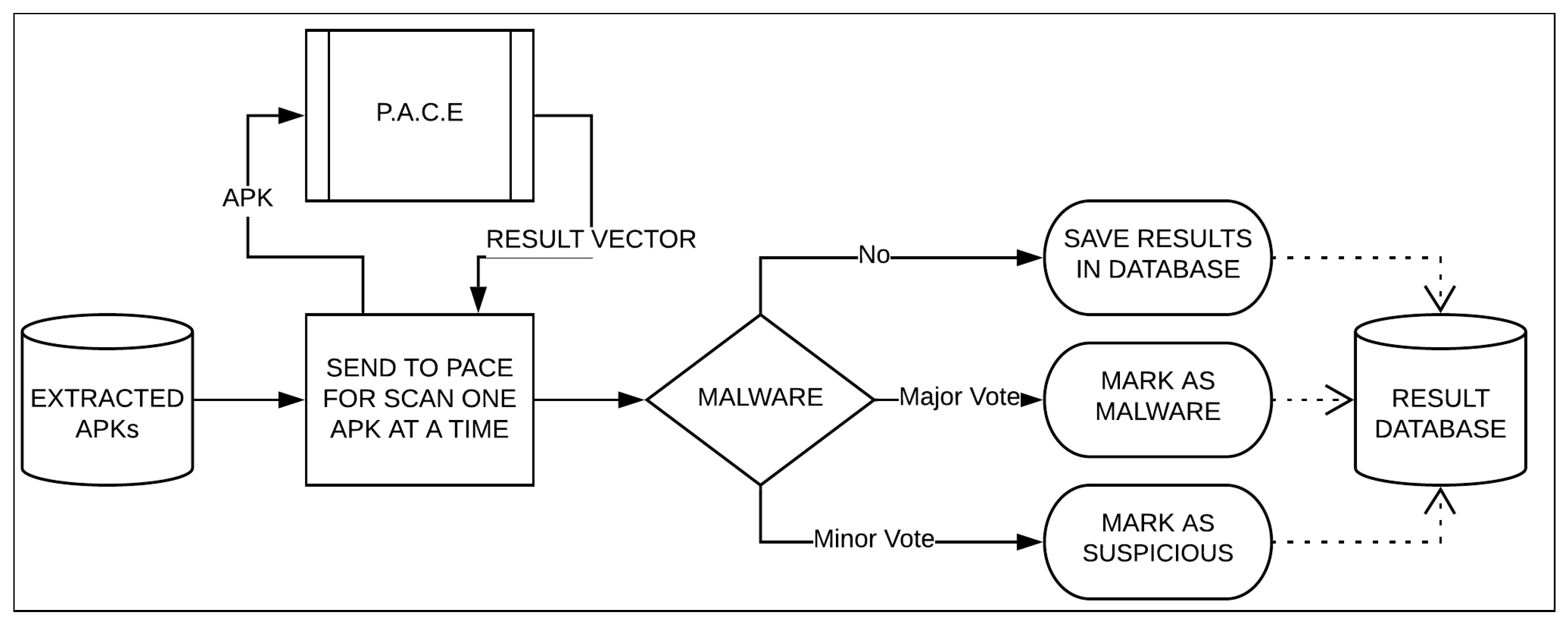

3.1. Data Processing

3.2. Threat Analyzer

3.2.1. Outdated App Detector

3.2.2. Vulnerability Matcher

3.2.3. PACE: Platform for Android Malware Classification and Performance Evaluation

3.3. Report Generator

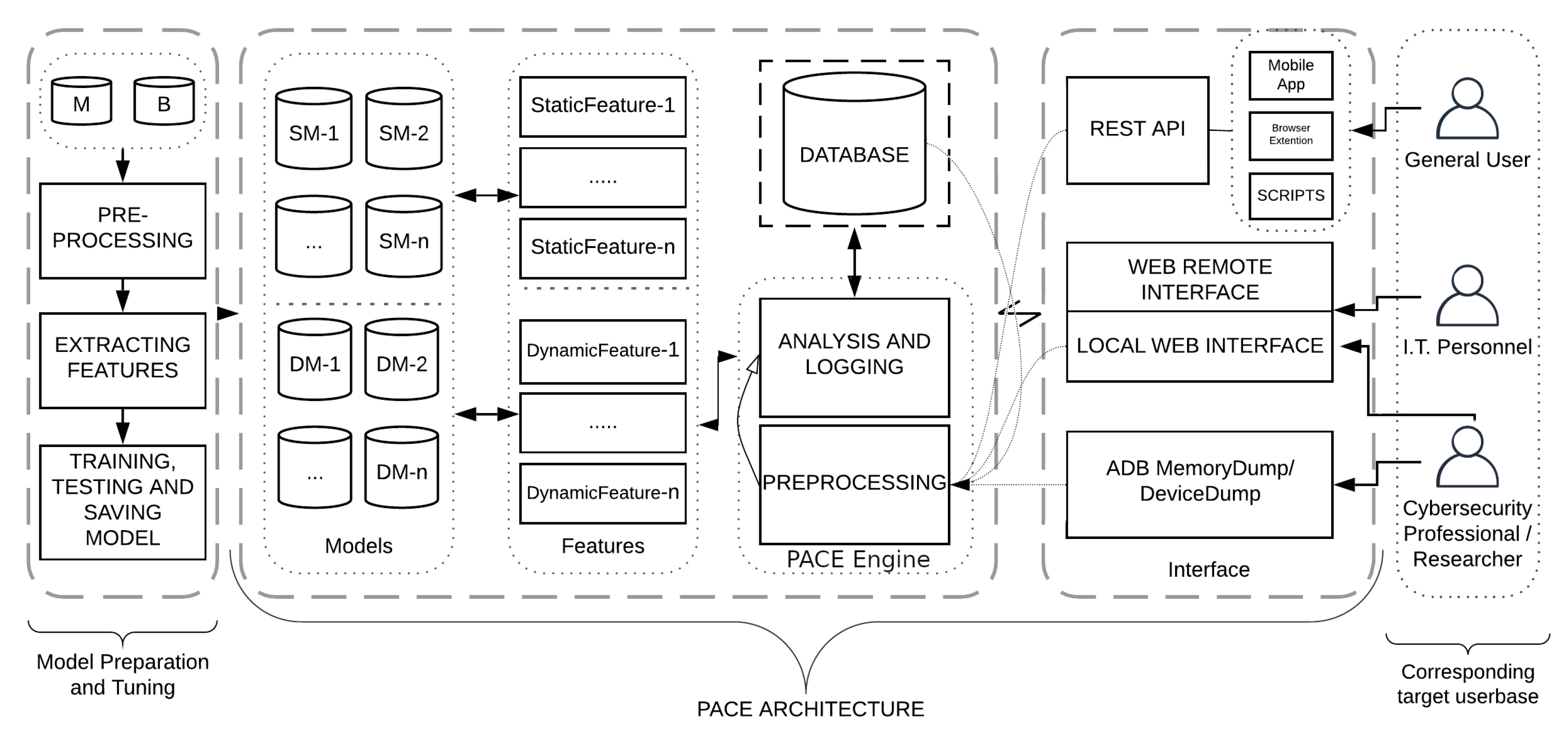

4. PACE: Platform for Android Malware Classification and Performance Evaluation

4.1. Model Building

- File Type Detection: As a first step, it is very important to detect the file type of each sample since further steps are file type-dependent.

- Duplicate Removal: The same sample can be present in the dataset under different file names. To keep only unique samples in the dataset, the file hash is used to filter out duplicates.

- Class Labeling: Since PACE performs binary classification, the dataset should have samples from both classes. Class labels are decided based on the VirusTotal result. We consider a sample benign if VirusTotal reports it as clean; otherwise, the sample is considered malware.

- Sample Reversal: Each sample is reverse engineered as required by the feature type of the model. For example, if the underlying model uses permission-based features for training, the manifest file is extracted from each sample by simply unzipping the .apk file. On the other hand, if the model depends on features extracted from the source code, the sample is reverse engineered using tools such as apktool. Likewise, other methods are applied to extract the respective features.
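The four steps above can be sketched with the Python standard library as follows. This is a minimal illustration under stated assumptions, not the PACE implementation: all function names are hypothetical, an APK is recognized simply as a ZIP archive containing `AndroidManifest.xml`, SHA-256 is assumed as the file hash, and the labeling function takes a hypothetical `positives` count (the number of engines that flagged the sample) instead of calling VirusTotal.

```python
import hashlib
import io
import zipfile
from typing import Dict, Iterable, Tuple


def is_apk(data: bytes) -> bool:
    """File type detection: a ZIP archive that contains AndroidManifest.xml."""
    if not zipfile.is_zipfile(io.BytesIO(data)):
        return False
    with zipfile.ZipFile(io.BytesIO(data)) as archive:
        return "AndroidManifest.xml" in archive.namelist()


def sha256_of(data: bytes) -> str:
    """Hash the file content (assumed hash function: SHA-256)."""
    return hashlib.sha256(data).hexdigest()


def deduplicate(samples: Iterable[Tuple[str, bytes]]) -> Dict[str, Tuple[str, bytes]]:
    """Duplicate removal: keep the first file seen for each content hash."""
    unique: Dict[str, Tuple[str, bytes]] = {}
    for name, data in samples:
        unique.setdefault(sha256_of(data), (name, data))
    return unique


def label(positives: int) -> str:
    """Class labeling: benign only when no engine flags the sample.

    `positives` is a hypothetical input standing in for a VirusTotal result.
    """
    return "benign" if positives == 0 else "malware"


def extract_manifest(data: bytes) -> bytes:
    """Sample reversal for permission features: read the manifest from the archive.

    In a real APK this file is binary-encoded XML and still needs decoding
    before permissions can be read.
    """
    with zipfile.ZipFile(io.BytesIO(data)) as archive:
        return archive.read("AndroidManifest.xml")
```

Hashing the content instead of comparing file names is what lets the same sample be recognized under different names.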

4.2. PACE: Architecture

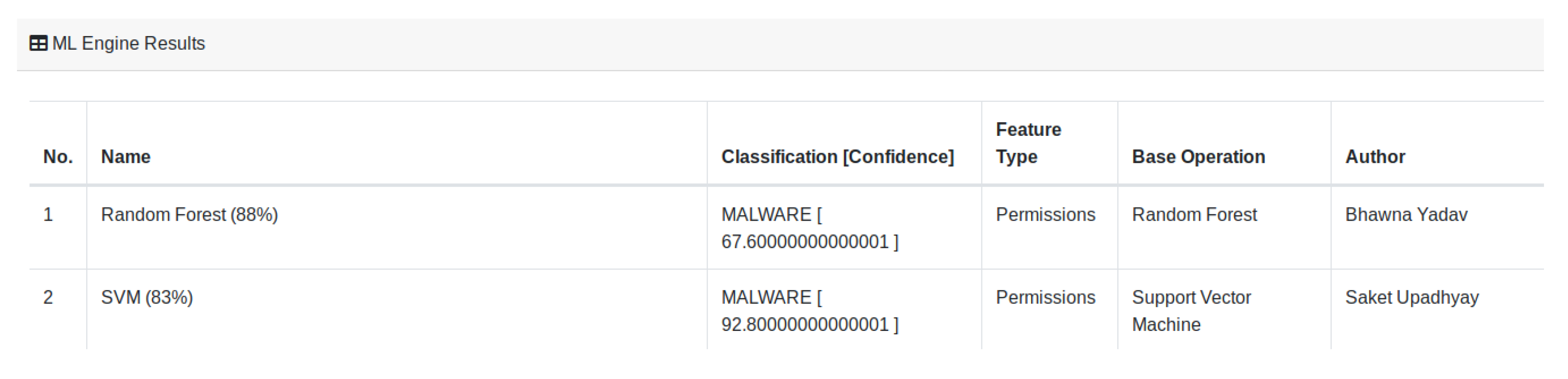

4.2.1. Models

4.2.2. Feature Set

4.2.3. Pre-Processing

4.2.4. Analysis and Logging

4.3. PACE: Interface

4.3.1. REST API

4.3.2. Web Interface

4.3.3. ADB and Memory Dump

4.4. Targeted Users

5. Experimental Setup and Implementation

5.1. Dataset

5.2. Implementation

6. Discussion and Conclusions

- A unique framework to standardize the existing studies for comparative analysis. This reduces programming effort and code duplication while providing a facility to compare, create, verify, and deploy new malware detection techniques.

- Its public availability under the GNU General Public License allows anyone to run, study, share, and modify the techniques. Also, because newly published methods are added regularly, those techniques can be delivered quickly to the end user via the available interfaces (API, App, etc.).

- The proposed work will assist malware analysts in triaging new samples and help them quickly filter out the samples that require manual analysis.

- Because multiple machine-learning-based methods are used, detection is expected to be more accurate, and threat reporting and sharing will be fast: the scanning result, Exif information, permission ranks, and decompiled source code of the submitted sample are all available for download in one place.

Supplementary Materials

Author Contributions

Funding

Conflicts of Interest

References

- Kumar, A.; Kuppusamy, K.; Aghila, G. FAMOUS: Forensic Analysis of MObile devices Using Scoring of application permissions. Future Gener. Comput. Syst. 2018, 83, 158–172.

- Suarez-Tangil, G.; Tapiador, J.E.; Peris-Lopez, P.; Ribagorda, A. Evolution, detection and analysis of malware for smart devices. IEEE Commun. Surv. Tutor. 2013, 16, 961–987.

- Faruki, P.; Ganmoor, V.; Laxmi, V.; Gaur, M.S.; Bharmal, A. AndroSimilar: Robust statistical feature signature for Android malware detection. In Proceedings of the 6th International Conference on Security of Information and Networks, Aksaray, Turkey, 26–28 November 2013; pp. 152–159.

- Qamar, A.; Karim, A.; Chang, V. Mobile malware attacks: Review, taxonomy & future directions. Future Gener. Comput. Syst. 2019, 97, 887–909.

- Gupta, S.; Buriro, A.; Crispo, B. A Risk-Driven Model to Minimize the Effects of Human Factors on Smart Devices. In Proceedings of the International Workshop on Emerging Technologies for Authorization and Authentication, Luxembourg City, Luxembourg, 27 September 2019.

- Tam, K.; Feizollah, A.; Anuar, N.B.; Salleh, R.; Cavallaro, L. The evolution of android malware and android analysis techniques. ACM Comput. Surv. (CSUR) 2017, 49, 76.

- Yan, P.; Yan, Z. A survey on dynamic mobile malware detection. Softw. Qual. J. 2018, 26, 891–919.

- Felt, A.P.; Finifter, M.; Chin, E.; Hanna, S.; Wagner, D. A survey of mobile malware in the wild. In Proceedings of the 1st ACM workshop on Security and privacy in smartphones and mobile devices, Chicago, IL, USA, 17 October 2011; pp. 3–14.

- Qiu, J.; Nepal, S.; Luo, W.; Pan, L.; Tai, Y.; Zhang, J.; Xiang, Y. Data-Driven Android Malware Intelligence: A Survey. In Proceedings of the International Conference on Machine Learning for Cyber Security, Xi’an, China, 19–22 September 2019; pp. 183–202.

- Samra, A.A.A.; Qunoo, H.N.; Al-Rubaie, F.; El-Talli, H. A survey of Static Android Malware Detection Techniques. In Proceedings of the 2019 IEEE 7th Palestinian International Conference on Electrical and Computer Engineering (PICECE), Gaza, Palestine, 26–27 March 2019; pp. 1–6.

- Sahay, S.K.; Sharma, A. A Survey on the Detection of Android Malicious Apps. In Advances in Computer Communication and Computational Sciences; Springer: Berlin/Heidelberg, Germany, 2019; pp. 437–446.

- Doğru, İ.; Kiraz, Ö. Web-based android malicious software detection and classification system. Appl. Sci. 2018, 8, 1622.

- Au, K.W.Y.; Zhou, Y.F.; Huang, Z.; Lie, D. Pscout: Analyzing the android permission specification. In Proceedings of the 2012 ACM conference on Computer and communications security, Raleigh, NC, USA, 16–18 October 2012; pp. 217–228.

- McLaughlin, N.; Martinez del Rincon, J.; Kang, B.; Yerima, S.; Miller, P.; Sezer, S.; Safaei, Y.; Trickel, E.; Zhao, Z.; Doupé, A.; et al. Deep Android Malware Detection. In Proceedings of the Seventh ACM on Conference on Data and Application Security and Privacy, Scottsdale, AZ, USA, 22–24 March 2017; pp. 301–308.

- Kumar, A.; Agarwal, V.; Shandilya, S.K.; Shalaginov, A.; Upadhyay, S.; Yadav, B. PACE: Platform for Android Malware Classification and Performance Evaluation. In Proceedings of the 2019 IEEE International Conference on Big Data (Big Data), Los Angeles, CA, USA, 9–12 December 2019; pp. 4280–4288.

- Zhang, L.; Thing, V.L.; Cheng, Y. A scalable and extensible framework for android malware detection and family attribution. Comput. Secur. 2019, 80, 120–133.

- Kim, H.M.; Song, H.M.; Seo, J.W.; Kim, H.K. Andro-Simnet: Android Malware Family Classification using Social Network Analysis. In Proceedings of the 2018 16th Annual Conference on Privacy, Security and Trust (PST), Belfast, UK, 28–30 August 2018.

- Le Thanh, H. Analysis of malware families on android mobiles: Detection characteristics recognizable by ordinary phone users and how to fix it. J. Inf. Secur. 2013, 4, 213–224.

- Xie, N.; Wang, X.; Wang, W.; Liu, J. Fingerprinting Android malware families. Front. Comput. Sci. 2019, 13, 637–646.

- Massarelli, L.; Aniello, L.; Ciccotelli, C.; Querzoni, L.; Ucci, D.; Baldoni, R. Android malware family classification based on resource consumption over time. In Proceedings of the 2017 12th International Conference on Malicious and Unwanted Software (MALWARE), Fajardo, PR, USA, 11–14 October 2017; pp. 31–38.

- Di Cerbo, F.; Girardello, A.; Michahelles, F.; Voronkova, S. Detection of malicious applications on android os. In Computational Forensics; Springer: Berlin/Heidelberg, Germany, 2010; pp. 138–149.

- Sanz, B.; Santos, I.; Laorden, C.; Ugarte-Pedrero, X.; Bringas, P.G.; Álvarez, G. Puma: Permission usage to detect malware in android. In International Joint Conference CISIS’12-ICEUTE 12-SOCO 12 Special Sessions; Springer: Berlin/Heidelberg, Germany, 2013; pp. 289–298.

- Ghorbanzadeh, M.; Chen, Y.; Ma, Z.; Clancy, T.C.; McGwier, R. A neural network approach to category validation of android applications. In Proceedings of the 2013 International Conference on Computing, Networking and Communications (ICNC), San Diego, CA, USA, 28–31 January 2013; pp. 740–744.

- Yerima, S.Y.; Sezer, S.; McWilliams, G.; Muttik, I. A new android malware detection approach using bayesian classification. In Proceedings of the 2013 IEEE 27th International Conference on Advanced Information Networking and Applications (AINA), Barcelona, Spain, 25–28 March 2013; pp. 121–128.

- Talha, K.A.; Alper, D.I.; Aydin, C. APK Auditor: Permission-based Android malware detection system. Digit. Investig. 2015, 13, 1–14.

- Geneiatakis, D.; Fovino, I.N.; Kounelis, I.; Stirparo, P. A Permission verification approach for android mobile applications. Comput. Secur. 2015, 49, 192–205.

- Milosevic, N.; Dehghantanha, A.; Choo, K.K.R. Machine learning aided Android malware classification. Comput. Electr. Eng. 2017, 61, 266–274.

- Li, J.; Sun, L.; Yan, Q.; Li, Z.; Srisa-an, W.; Ye, H. Significant permission identification for machine-learning-based android malware detection. IEEE Trans. Ind. Inform. 2018, 14, 3216–3225.

- Peiravian, N.; Zhu, X. Machine learning for android malware detection using permission and api calls. In Proceedings of the 2013 IEEE 25th International Conference on Tools with Artificial Intelligence, Herndon, VA, USA, 4–6 November 2013; pp. 300–305.

- Arp, D.; Spreitzenbarth, M.; Hubner, M.; Gascon, H.; Rieck, K. DREBIN: Effective and Explainable Detection of Android Malware in Your Pocket. In Proceedings of the 21st Annual Network and Distributed System Security Symposium (NDSS), San Diego, CA, USA, 23–26 February 2014.

- Sanz, B.; Santos, I.; Laorden, C.; Ugarte-Pedrero, X.; Nieves, J.; Bringas, P.G.; Álvarez Marañón, G. MAMA: Manifest analysis for malware detection in android. Cybern. Syst. 2013, 44, 469–488.

- Wu, W.C.; Hung, S.H. DroidDolphin: A dynamic Android malware detection framework using big data and machine learning. In Proceedings of the 2014 Conference on Research in Adaptive and Convergent Systems, Towson, MD, USA, 5–8 October 2014; pp. 247–252.

- Amos, B.; Turner, H.; White, J. Applying machine learning classifiers to dynamic android malware detection at scale. In Proceedings of the 2013 9th International Wireless Communications and Mobile Computing Conference (IWCMC), Sardinia, Italy, 1–5 July 2013; pp. 1666–1671.

- Burguera, I.; Zurutuza, U.; Nadjm-Tehrani, S. Crowdroid: Behavior-based malware detection system for android. In Proceedings of the 1st ACM workshop on Security and privacy in smartphones and mobile devices, Chicago, IL, USA, 17 October 2011; pp. 15–26.

- Rastogi, V.; Chen, Y.; Enck, W. AppsPlayground: Automatic security analysis of smartphone applications. In Proceedings of the third ACM conference on Data and application security and privacy, San Antonio, TX, USA, 18–20 February 2013; pp. 209–220.

- Alam, M.S.; Vuong, S.T. Random forest classification for detecting android malware. In Proceedings of the 2013 IEEE International Conference on Green Computing and Communications and IEEE Internet of Things and IEEE Cyber, Physical and Social Computing, Beijing, China, 20–23 August 2013; pp. 663–669.

- Dai, S.; Wei, T.; Zou, W. DroidLogger: Reveal suspicious behavior of Android applications via instrumentation. In Proceedings of the 2012 7th International Conference on Computing and Convergence Technology (ICCCT), Seoul, Korea, 3–5 December 2012; pp. 550–555.

- Yuan, Z.; Lu, Y.; Wang, Z.; Xue, Y. Droid-sec: Deep learning in android malware detection. In Proceedings of the ACM SIGCOMM Computer Communication Review, Chicago, IL, USA, 17–22 August 2014; pp. 371–372.

- Yuan, Z.; Lu, Y.; Xue, Y. Droiddetector: Android malware characterization and detection using deep learning. Tsinghua Sci. Technol. 2016, 21, 114–123.

- Shabtai, A.; Tenenboim-Chekina, L.; Mimran, D.; Rokach, L.; Shapira, B.; Elovici, Y. Mobile malware detection through analysis of deviations in application network behavior. Comput. Secur. 2014, 43, 1–18.

- Jang, J.W.; Yun, J.; Mohaisen, A.; Woo, J.; Kim, H.K. Detecting and classifying method based on similarity matching of Android malware behavior with profile. SpringerPlus 2016, 5, 273.

- Chang, W.L.; Sun, H.M.; Wu, W. An Android Behavior-Based Malware Detection Method using Machine Learning. In Proceedings of the 2016 IEEE International Conference on Signal Processing, Communications and Computing (ICSPCC), Hong Kong, China, 5–8 August 2016; pp. 1–4.

- Moran, K.; Linares-Vásquez, M.; Bernal-Cárdenas, C.; Vendome, C.; Poshyvanyk, D. Automatically discovering, reporting and reproducing android application crashes. In Proceedings of the 2016 IEEE International Conference on Software Testing, Verification and Validation (ICST), Chicago, IL, USA, 11–15 April 2016; pp. 33–44.

- Grover, J. Android forensics: Automated data collection and reporting from a mobile device. Digit. Investig. 2013, 10, S12–S20.

- Eder, T.; Rodler, M.; Vymazal, D.; Zeilinger, M. Ananas-a framework for analyzing android applications. In Proceedings of the 2013 International Conference on Availability, Reliability and Security, Regensburg, Germany, 2–6 September 2013; pp. 711–719.

- Moran, K.; Linares-Vásquez, M.; Bernal-Cárdenas, C.; Poshyvanyk, D. Auto-completing bug reports for android applications. In Proceedings of the 2015 10th Joint Meeting on Foundations of Software Engineering, Bergamo, Italy, 30 August–4 September 2015; pp. 673–686.

- Winkler, I.; Gomes, A.T. Advanced Persistent Security: A Cyberwarfare Approach to Implementing Adaptive Enterprise Protection, Detection, and Reaction Strategies; Syngress: Rockland, MA, USA, 2016.

- Mitra, J.; Ranganath, V.P. Ghera: A repository of android app vulnerability benchmarks. In Proceedings of the 13th International Conference on Predictive Models and Data Analytics in Software Engineering, Toronto, ON, Canada, 8 November 2017; pp. 43–52.

- Zhang, M.; Yin, H. AppSealer: Automatic Generation of Vulnerability-Specific Patches for Preventing Component Hijacking Attacks in Android Applications. Available online: http://lilicoding.github.io/SA3Repo/papers/2014_zhang2014appsealer.pdf (accessed on 8 April 2020).

- Allix, K.; Bissyandé, T.F.; Klein, J.; Le Traon, Y. AndroZoo: Collecting Millions of Android Apps for the Research Community. In Proceedings of the 13th International Conference on Mining Software Repositories, Austin, TX, USA, 14–15 May 2016; pp. 468–471.

- Pedregosa, F.; Varoquaux, G.; Gramfort, A.; Michel, V.; Thirion, B.; Grisel, O.; Blondel, M.; Prettenhofer, P.; Weiss, R.; Dubourg, V.; et al. Scikit-learn: Machine Learning in Python. J. Mach. Learn. Res. 2011, 12, 2825–2830.

| Platform | Features | Open-Source | ML-Based | Status |
|---|---|---|---|---|
| VirusTotal (https://www.virustotal.com/) | Scan with Commercial AV | No | No | Active |
| Apkdetect (https://www.apkdetect.com) | Android malware analysis and classification | No | No | Limited Access |
| AndroZoo (https://androzoo.uni.lu/) | Android Dataset and Analysis | No | No | Only Dataset Access |
| Mobile-Sandbox (http://mobilesandbox.org) | Analysis and Forensic Features extraction | Yes (https://github.com/mspreitz/mobile-sandbox) | No | Outdated |
| NVISO ApkScan (https://apkscan.nviso.be/) | Static and Dynamic Scanning | No | No | Going Offline (https://blog.nviso.be/2019/10/01/sunsetting-nviso-apkscan/) |
| JoeSandbox (https://www.joesandbox.com/#android) | Analysis and Detection | No | No | Limited Access |
| MobSF (https://github.com/MobSF/Mobile-Security-Framework-MobSF) | Static and Dynamic Analysis | Yes | No | Active (Local Machine) |
| SandDroid (http://sanddroid.xjtu.edu.cn) | Static and Dynamic Analysis | No | No | Active |
| AMAaaS (https://amaaas.com) | Static and Dynamic Analysis | No | No | Active |
| AVC UnDroid (https://undroid.av-comparatives.org/about.php) | Static Analysis | No | No | Active |
| PACE | Analysis and Detection | Yes | Yes | Active |

| Authors | Features | Accuracy | FPR | TPR | Dataset | Best Model |
|---|---|---|---|---|---|---|
| Di Cerbo et al. [21] | Permission and Metadata | NA | NA | NA | Not Available | Apriori |
| Sanz et al. [22] | Permission | 86.41 | 0.19 | 0.91 | Not Available | Random Forest |
| Sanz et al. [31] | Manifest | 94.83 | 0.05 | 0.94 | Not Available | Random Forest |
| Ghorbanzadeh et al. [23] | Permission | 0.65 | 0.65 | 0.65 | Not Available | Neural Network |
| Yerima et al. [24] | Permission | 92.1 | 0.06 | 0.90 | Available | Bayesian |
| Peiravian et al. [29] | Permission and API | 93.60 | 0.92 | 0.88 | Available | Bagging |
| Daniel et al. [30] | Manifest and Source code | 93.90 | NA | NA | Available | SVM |
| Ajit et al. [1] | Permission | 94.84 | 0.95 | 0.95 | Available | Random Forest |
| Milosevic et al. [27] | Permission | 89 | NA | NA | Available | Ensemble |
| Milosevic et al. [27] | Source Code | 95.1 | NA | NA | Available | Ensemble |
| Jin Li et al. [28] | Permission | 93.62 | NA | NA | Not Available | SVM |

| Authors | Features | Accuracy | FPR | TPR | Best Model |
|---|---|---|---|---|---|
| Brandon Amos et al. [33] | App traces | 94.53 | 14.85 | 97.66 | Random Forest |
| Burguera et al. [34] | User’s trace (Crowdsourcing) | NA | NA | NA | Clustering |
| Vaibhav et al. [35] | Only Dynamic analysis | NA | NA | NA | NA |
| Chieh Wu et al. [32] | Network, File I/O etc. | 86.1 | 0.857 | NA | SVM |
| Alam et al. [36] | Features based on CPU, Memory, Battery etc. | 99 | NA | NA | Random Forest |
| Dai et al. [37] | API and corresponding arguments | NA | NA | NA | NA |
| Zhenlong Yuan et al. [38] | Static and Dynamic | 96.5 | NA | NA | Deep Learning (DBN) |
| DroidDetector [39] | Static and Dynamic | 96.76 | NA | NA | Deep Learning (DBN) |
| Shabtai et al. [40] | Network and Device Activities | NA | NA | NA | Anomaly-Based |
| Jang et al. [41] | Integrated system logs | 98 | NA | NA | Profiling |
| Chang et al. [42] | Network activities, and File R/W | 97 | 3 | 97.1 | Random Forest |

| Algorithms | Pre-Processing | Matching | Space |
|---|---|---|---|
| Naive String-Search | None | None | |
| Knuth–Morris–Pratt | | | |
| Boyer–Moore | | | |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Kumar, A.; Agarwal, V.; Kumar Shandilya, S.; Shalaginov, A.; Upadhyay, S.; Yadav, B. PACER: Platform for Android Malware Classification, Performance Evaluation and Threat Reporting. Future Internet 2020, 12, 66. https://doi.org/10.3390/fi12040066
