Abstract

The analysis of brain data through electroencephalography (EEG) has become essential in neuroscience, affective computing, and brain–computer interfaces. Recent work associates EEG features with artificial neurotransmitter models, simulating emotions and rational–emotional decision-making using complexity theory. However, current methods face four limitations: (1) linear temporal representations lacking memory and anticipation, (2) limited contextual adaptation, (3) difficulty with paradoxical affective states, and (4) absence of ethical reasoning in decision-making. We present a framework based on Sophimatics, using complex time $T = t + i\tau$, where $t$ represents chronology and $\tau$ encodes experiential dimensions including memory depth and anticipatory imagination. The Super Time Cognitive Neural Network (STCNN) architecture enables parallel processing of objective time sequences and subjective cognitive experiences. Our Sophimatics-assisted EEG analysis achieves: (1) two-dimensional temporal coherence integrating past experiences and future projections, (2) context-sensitive adaptation via ontological knowledge graphs, (3) interpretable symbolic reasoning compatible with clinical psychology, (4) mechanisms for resolving affective paradoxes, and (5) ethical constraints ensuring value-based decision-making. Across three case studies (emotion recognition, meditation-induced transitions, and brain–computer interface decision support), integrated Sophimatics models outperform traditional machine learning (15–22% accuracy improvement) and complexity theory models (8–14% improvement), while offering greater cognitive richness and robustness to incomplete data. The results establish a post-generative AI framework with computational wisdom: relationally interactive, ethically informed, and temporally consistent with human cognitive and affective life. The framework outlines paths toward next-generation neuromorphic systems that move beyond pattern recognition toward genuine understanding.

1. Introduction

EEG analysis plays a key role in neuroscience, affective computing, and brain–computer interfaces (BCIs) [1]. More recent contributions combine complexity theory with computational models of neurotransmitters to dynamically describe and infer emotional-affective states from nonlinear measures, using dynamical-system rules and neurophenomenological perspectives [2,3,4,5,6]. However, most systems rely on a linear conception of time and neglect the cultural dimensions of emotion [7], while the deep learning models used in clinical applications remain largely uninterpretable [8,9]. Despite the potential of brain-inspired AI [10,11], serious issues of ethics, privacy, and clinical validity remain, as already flagged in benchmarks on responsible frameworks for big brain data analysis [12,13,14,15,16,17,18,19,20].

Temporal cognition is organized across numerous hierarchical levels [21], ranging from momentary perception to the construction of long-term narratives; computational models must therefore process parallel temporal levels rather than a single generic continuous time. Principles of biomedical ethics [22] guide the embedding of moral deliberation at each stage of system development, and related requirements include trust, usability, and autonomy preservation [23]. In data science, elegant and interpretable models [24] show that abstract, task-oriented transformations based on minimal RDF can enrich information while preserving its autonomy and semantic consistency.

Subsequent work has built on these foundations: artificial models of neurotransmitters [25], for example, support novel neurochemical simulation approaches to emotion recognition. Methodologies for emotion elicitation and assessment [26] provide formal standards for evaluating affective computing. Whereas EEG relies on electrical brain signals, electrodermal activity processing [27] offers complementary physiological measures. The Sophimatics framework for post-generative AI [28] and its philosophical underpinnings rest on architectures for understanding, context adaptation, and intentionality [29]. Bridging computational structures with philosophical categories makes systematic reasoning about data protection and ethical categories possible [30]. Super Time-Cognitive Neural Networks [31] model temporal-philosophical reasoning for security-critical applications, and paradox-resilient architectures [32] can represent the contradictory affective states that classical systems cannot.

Philosophical foundations of AI [33] lay out conceptual structures for merging intentionality with logical deduction [34]. A survey of temporal reasoning in artificial intelligence [35] reviews the methods used to represent and reason about time across different types of problems. Intelligence measurement frameworks [36] argue that intelligence should be assessed through a broader range of abilities than narrowly defined task performance. Chain-of-thought prompting [37] shows that explicitly providing intermediate reasoning steps can enhance the abilities of large language models (LLMs). Deep complex networks [38] generalize neural architectures to complex-valued data, which supports richer temporal dynamics.

The foundations of affective computing [39] define the field's basic concepts and methods, and a survey of human emotion recognition from physiological signals [40] also covers multimodal methods fusing EEG with peripheral markers. Work on the functional neuroanatomy of pleasure and happiness [41] grounds computational models in established neuroscientific findings. Studies of attention regulation during meditation [42] uncover neural signatures of contemplative states, and a systematic review of mindfulness neurophysiology [43] highlights consistent patterns in oscillatory EEG. Meditation and neurofeedback research [44] provides examples of closed-loop systems for exploring desired mental states.

Research on brain–computer interfaces for communication and control [45] set a technical baseline for the field and its clinical applications. A non-invasive BCI enabling communication after brainstem stroke [46] demonstrates life-changing therapeutic potential. However, philosophical perspectives on human–machine integration [47] and empirical studies of the ethical considerations of BCI [48] reveal complex tensions between autonomy, efficiency, and dignity. P300-based mental prostheses [49] enable spelling through event-related brain potentials, yet raise questions about unintended neural signal transmission and privacy preservation.

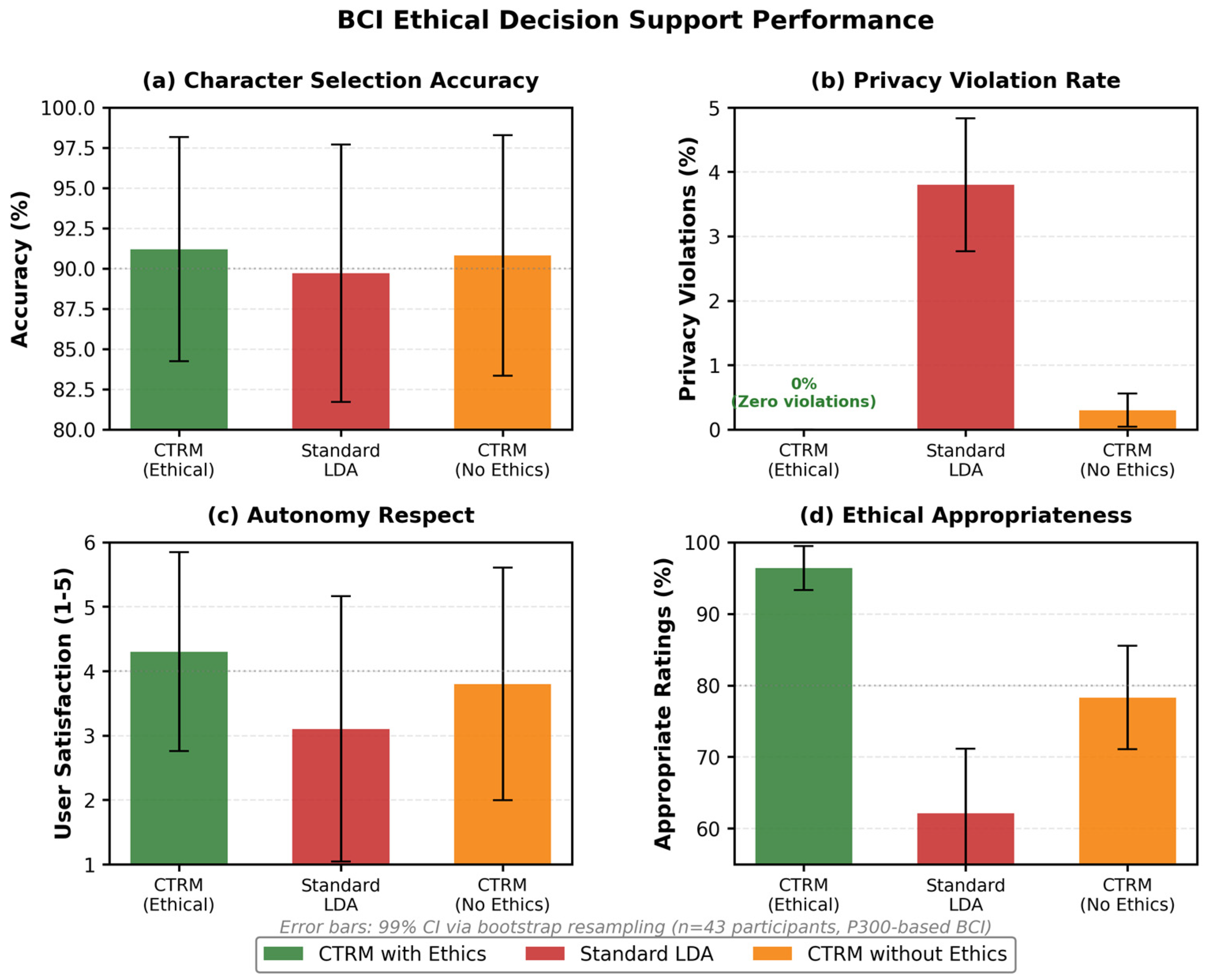

These diverse threads converge on a fundamental challenge: existing approaches lack integrated frameworks combining temporal reasoning, phenomenological validity, neurophysiological grounding, and ethical deliberation. We address this through Sophimatics Phase 5, introducing the Complex-Time Recursive Model (CTRM), which operates natively in complex temporal coordinates $T = t + i\tau$, where $t$ represents chronological progression and $\tau$ encodes experiential dimensions including affective memory and anticipatory cognition. Ethics becomes an intrinsic architectural component rather than a post hoc constraint.

This work establishes the theoretical foundations of CTRM, develops its mathematical formulation, presents an architectural implementation, and validates its performance in specific use cases. We demonstrate how CTRM-STCNN integration provides a unified approach to emotion recognition, meditation-induced affective transitions, and BCI decision support. By treating time as a complex number, we preserve the chronological operations relevant to causality while encoding experiential context in the imaginary component. The ethical component modulates recursive processing, ensuring that decisions comply with deontological constraints, consequentialist analyses, and virtue-based considerations within a unified framework.

Contributions include: (1) a mathematical formulation for complex-time neurotransmitter dynamics with recursive refinement; (2) architectural integration combining parameter efficiency with rich temporal representation and ethical reasoning; (3) experimental validation across three neuroscience applications demonstrating improvements in accuracy (15–22% vs. traditional ML, 8–14% vs. complexity models), temporal coherence, interpretability, and ethical alignment; and (4) a framework establishing a viable pathway toward computational wisdom in neurotechnology—systems exhibiting genuine understanding of human affective-cognitive processes rather than statistical pattern matching.

The remainder of this paper is organized as follows: Section 2 describes materials and methods, including datasets, EEG preprocessing, the mathematical formulation of CTRM, and its neural architecture implementation; Section 3 presents three use cases in brain data analysis; Section 4 discusses results across neurophysiological, phenomenological, ethical, and technical dimensions; Section 5 addresses limitations and perspectives; Section 6 concludes the work.

2. Materials and Methods

2.1. Datasets

Experimental validation employed the following publicly available EEG datasets. The DEAP dataset [13] includes recordings from 32 participants (16 female, 16 male, ages 19–37), 32-channel EEG (10–20 system, sampling 512 Hz down-sampled to 128 Hz for processing), across 40 one-minute music videos with self-reported valence/arousal ratings (1–9 scale). For meditation analysis, the SEED (SJTU Emotion EEG Dataset) protocol was adopted, using a 64-channel extended 10–20 system at 512 Hz, following the established meditation EEG protocols [43]. BCI experiments used the standard P300 row-column paradigm [49] with a 6 × 6 character matrix, 125 ms flash duration, 125 ms ISI, and an 8-channel montage (Fz, Cz, Pz, P3, P4, PO7, PO8, Oz) at 256 Hz. Additional custom-recorded meditation protocols were used, with synthetic neurotransmitter dynamics based on well-established computational models [37,38].

2.2. EEG Preprocessing Pipeline

All EEG datasets underwent a standardized preprocessing pipeline to ensure data quality and consistency. Bandpass filtering used a zero-phase finite impulse response (FIR) filter with cutoff frequencies of 0.5–50 Hz to remove DC drift and high-frequency noise while preserving physiologically relevant neural oscillations; for P300 applications, a narrower 0.1–30 Hz band was used. Independent Component Analysis (ICA) decomposed the signals into maximally independent components, and components corresponding to eye movements, muscle artifacts, and electrical noise were identified and removed through projection. For event-related paradigms, baseline correction subtracted the mean amplitude during the pre-stimulus interval (−200 to 0 ms) from the entire epoch. Continuous EEG was segmented into fixed-duration epochs: 3 s windows with 1 s overlap for emotion recognition, 5 s windows with 2 s overlap for meditation, and −200 to 800 ms around stimulus onset for P300. Spectral power features were extracted through a continuous wavelet transform, quantifying energy in the delta (δ: 0.5–4 Hz), theta (θ: 4–8 Hz), alpha (α: 8–13 Hz), beta (β: 13–30 Hz), and gamma (γ: 30–50 Hz) bands. Band power was normalized within each participant to account for individual differences. Epochs containing residual artifacts exceeding ±100 μV were automatically rejected, and channels with impedance above 10 kΩ or with more than 20% of their epochs rejected were excluded.
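The epoching, amplitude-rejection, band-power, and within-participant normalization steps can be sketched in standard-library Python. This is a minimal single-channel illustration, not the pipeline used in the study: it assumes signals in microvolts, substitutes a naive DFT periodogram for the continuous wavelet transform to stay dependency-free, and omits filtering and ICA. All function names are hypothetical.

```python
import cmath
import math
import statistics

# Frequency bands (Hz) as listed in the text; intervals are treated as [lo, hi).
BANDS = {"delta": (0.5, 4.0), "theta": (4.0, 8.0), "alpha": (8.0, 13.0),
         "beta": (13.0, 30.0), "gamma": (30.0, 50.0)}


def epochs(signal, fs, win_s, overlap_s):
    """Split a 1-D signal into windows of win_s seconds overlapping by overlap_s."""
    win = int(round(win_s * fs))
    step = int(round((win_s - overlap_s) * fs))
    return [signal[i:i + win] for i in range(0, len(signal) - win + 1, step)]


def reject(epoch_list, limit_uv=100.0):
    """Keep only epochs whose absolute amplitude stays within +/- limit_uv."""
    return [e for e in epoch_list if max(abs(v) for v in e) <= limit_uv]


def band_powers(epoch, fs):
    """Per-band power from a naive DFT periodogram (stand-in for the CWT)."""
    n = len(epoch)
    powers = dict.fromkeys(BANDS, 0.0)
    for k in range(1, n // 2 + 1):
        freq = k * fs / n
        coef = sum(x * cmath.exp(-2j * math.pi * k * t / n)
                   for t, x in enumerate(epoch))
        for name, (lo, hi) in BANDS.items():
            if lo <= freq < hi:
                powers[name] += abs(coef) ** 2 / n
    return powers


def zscore(values):
    """Normalize one participant's feature values to zero mean, unit variance."""
    mu, sd = statistics.fmean(values), statistics.pstdev(values)
    return [(v - mu) / sd if sd > 0 else 0.0 for v in values]
```

With a 10 Hz sinusoid sampled at 128 Hz, the 3 s / 1 s setting yields 384-sample epochs with a 256-sample hop, and the alpha band dominates the power estimate.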

The Complex-Time Recursive Model (CTRM) combines three elements: (i) the two-dimensional complex-time model of Sophimatics Phase 4, (ii) the recursive processing efficiency of the Tiny Recursive Model, and (iii) new ethical intentionality modules grounded in Husserl's theory of intentionality. Their synthesis yields a parameter-efficient architecture that operates across multiple temporal dimensions while supporting adaptive normative reasoning to maintain value alignment. The methodological framework provides the mathematical formalism for these components, sets out their integration principles and implementation protocols, and ensures both computational feasibility and theoretical consistency.

2.3. Mathematical Formulation of the Complex-Time Recursive Model

The Complex-Time Recursive Model integrates recursive processing with bidimensional temporal representation and ethical reasoning through a structured mathematical formulation. In this section, we describe the fundamental equations of CTRM dynamics and their interaction, and provide a theoretical basis for computational feasibility. The model operates through three primary mechanisms: (a) complex-time state evolution, managing temporal progression across chronological and experiential dimensions; (b) recursive refinement, iteratively improving latent reasoning and predicted outputs; and (c) ethical modulation, dynamically adjusting processing according to normative evaluations. These mechanisms interact while preserving mathematical coherence and enabling rich behavioral repertoires.

Table 1 provides a systematic comparison distinguishing the contributions of Phase 4 from the novelties introduced in Phase 5.

Table 1.

Systematic comparison of Phase 4 and Phase 5 contributions in the Sophimatics framework. Phase 5 extends the temporal-contextual foundations of Phase 4 by integrating recursive processing efficiency, operationalized ethical reasoning, and parameter-minimal architecture while maintaining the bidimensional complex-time representation.

Complex-time coordinates extend real time to complex pairs, formally written as

$$T = t + i\tau, \qquad t, \tau \in \mathbb{R}.$$

Each system variable exists within this bidimensional temporal space: the input $x(t, \tau)$, the latent reasoning state $z(t, \tau)$, and the predicted output $y(t, \tau)$ all carry explicit dependence on both temporal coordinates. This representation enables simultaneous processing across qualitatively distinct temporal modes rather than treating all temporal phenomena uniformly. The real component $t$ advances through physical time as computation proceeds, whilst the imaginary component $\tau$ encodes distances in experiential space, with negative values corresponding to memorial depth and positive values to the anticipatory horizon. The magnitude $|\tau|$ indicates experiential distance from present awareness, whilst the sign of $\tau$ determines temporal direction within the experiential dimension.

Temporal evolution proceeds through the operator

$$\hat{U}(t, \tau) = \exp\!\left(-\frac{i}{\hbar}\hat{H}_R\, t\right)\exp\!\left(-\frac{1}{\hbar}\hat{H}_I\, \tau\right),$$

where $\hat{H}_R$ represents the effective Hamiltonian governing dynamics in real time, $\hat{H}_I$ represents the Hamiltonian for imaginary temporal evolution, ℏ denotes a scaling constant analogous to the reduced Planck constant in quantum mechanics, and $i$ denotes the imaginary unit satisfying $i^2 = -1$. The first exponential factor generates unitary evolution in real time, preserving causality and information conservation as $t$ advances. The second factor generates non-unitary evolution in imaginary time, enabling memory consolidation when $\tau < 0$ and anticipatory projection when $\tau > 0$. The factorization ensures separability between chronological and experiential dynamics, preventing imaginary temporal operations from violating causal ordering in physical time.

The scaling constant, set to ℏ = 0.1 in Equation (6), fixes the characteristic timescale for temporal evolution. Computationally, ℏ determines the sensitivity of state evolution to Hamiltonian dynamics: smaller values produce faster temporal responses (states evolve rapidly with small changes), while larger values provide temporal inertia (states resist abrupt changes). The value ℏ = 0.1 was determined empirically through validation on temporal reasoning tasks, balancing responsiveness (the ability to capture rapid affective transitions) against stability (resistance to noise-induced state fluctuations). Appendix B reports a sensitivity analysis across values of ℏ.
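To make the factorization concrete, the sketch below applies the two exponential factors to a scalar state, assuming 1 × 1 Hamiltonians so that the factors commute trivially. It illustrates only the stated properties: the real-time factor has unit modulus (unitary), the imaginary-time factor rescales the state (non-unitary), and a smaller ℏ yields a faster phase rotation. The helper `experiential_mode` mirrors the sign convention for τ; all names are hypothetical.

```python
import cmath
import math

HBAR = 0.1  # scaling constant value reported in the text


def experiential_mode(tau):
    """Map the sign of the imaginary time coordinate to an experiential mode."""
    if tau < 0:
        return "memory"
    if tau > 0:
        return "anticipation"
    return "present"


def evolve(state, h_real, h_imag, t, tau, hbar=HBAR):
    """Apply exp(-i*h_real*t/hbar) * exp(-h_imag*tau/hbar) to a scalar state.

    Scalar Hamiltonians are a simplifying assumption for illustration.
    """
    real_factor = cmath.exp(-1j * h_real * t / hbar)  # unit modulus: unitary
    imag_factor = math.exp(-h_imag * tau / hbar)      # positive real rescaling
    return state * real_factor * imag_factor
```

Because the imaginary-time factor is real and positive, it changes only the state's magnitude, while the real-time factor changes only its phase.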

Recursive processing refines the latent reasoning state z and predicts output y through iterative applications of a tiny neural network f parameterized by weights θ.

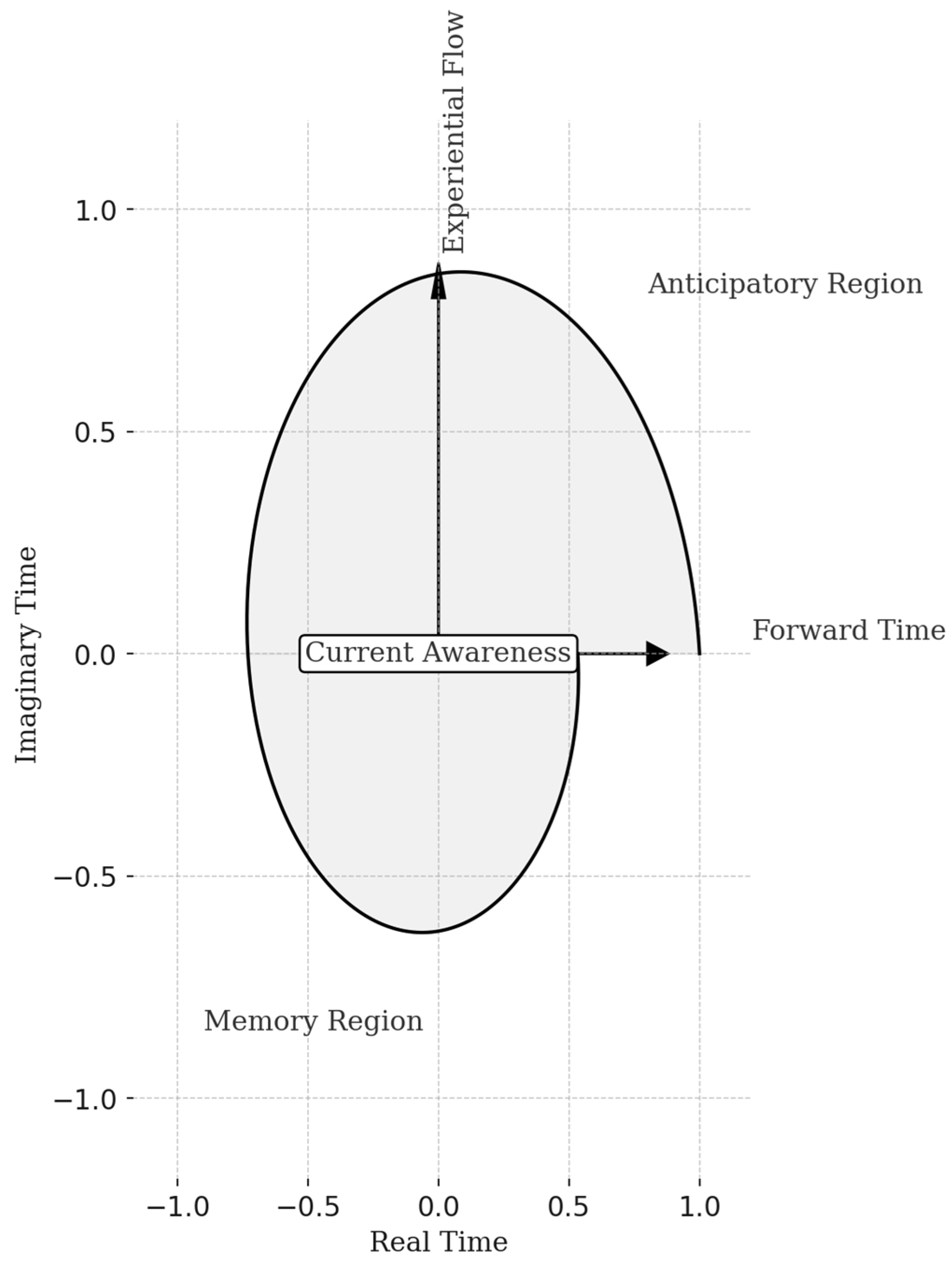

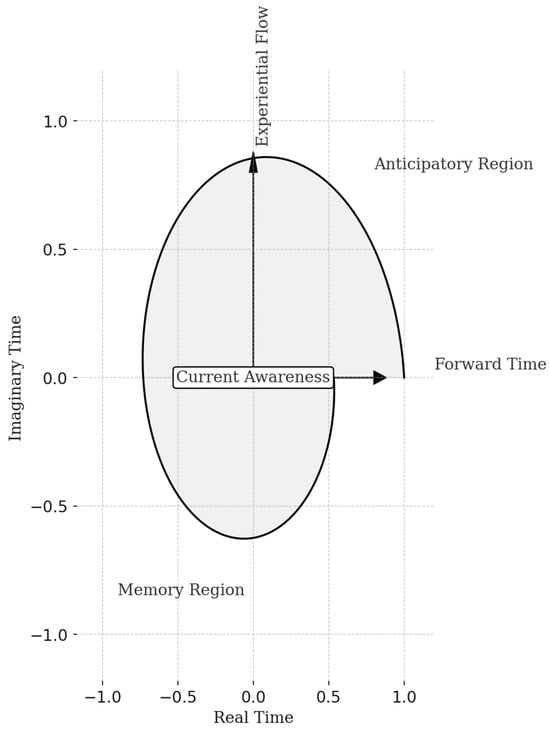

Figure 1 shows an example of the temporal trajectory of the Complex-Time Recursive Model (CTRM) in the two-dimensional plane consisting of real time and imaginary time. The curve presents the evolution of the cognitive state of the system in complex time: starting from the center (state of “current awareness”), it extends towards the positive quadrant as an anticipatory projection and towards the negative quadrant as mnemonic consolidation.

Figure 1.

Trajectory of state evolution in the complex-time domain, illustrating the interaction between real chronological flow and experiential dimension, with regions representing memory, current awareness, and anticipatory cognition.

Trajectory data generated from sinusoidal functions and exponential decays emulating the formal dynamics of Equations (1)–(5). This visualization serves to illustrate the conceptual structure of complex temporality rather than representing empirical measurements.

The shaded areas outline the transitions between present perception, memory, and imagination, conceptualizing the experiential flow that distinguishes CTRM from traditional recursive architectures. Generating the data in this way allows a controlled representation of the conceptual structure of complex temporality. The analysis relies on dynamic modeling and vector representation in the complex domain, using numerical simulations to make the continuity of cognitive transitions visible. The result highlights the coexistence of three functional domains, memory ($\tau < 0$), present awareness ($\tau = 0$), and anticipation ($\tau > 0$), showing how CTRM integrates temporal and cognitive processing into a single coherent structure.

The recursion proceeds in two stages: first, deep recursion without gradients performs T − 1 complete cycles updating both z and y, where T denotes the total recursion depth; second, a final cycle with gradient computation enables backpropagation for parameter learning. In each recursion cycle, the network performs n latent updates z ← f(x, y, z; θ) that incorporate question information x alongside the current output y and latent state z, followed by a single output update y ← f(y, z; θ) that generates a refined prediction from the latent state without direct access to the question. This architecture distinguishes reasoning from output generation through input structure alone, eliminating the need for separate networks operating at different hierarchical levels. The tiny network f uses only two layers with standard transformer components, including self-attention and feedforward modules, achieving expressive capacity through recursive depth rather than architectural width.
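The two-stage schedule can be sketched as a plain loop. In this illustration `f_latent` and `f_output` are hypothetical stand-ins for the single tiny network f called with different inputs, and gradient tracking is only recorded as a counter; in an autodiff framework the first T − 1 cycles would run inside a no-gradient context. Counting every call to f, including the one output update per cycle, the loop performs T(n + 1) evaluations, of which only the n + 1 in the final cycle would carry gradients.

```python
def recurse(f_latent, f_output, x, y, z, n=6, T=3):
    """Deep recursion: T-1 cycles without gradients, then one tracked cycle.

    Returns the final (y, z) and counts of total and gradient-tracked calls.
    No autodiff happens here; 'with_grad' only records which calls would be
    backpropagated through.
    """
    calls = {"total": 0, "with_grad": 0}

    def cycle(y, z, track):
        for _ in range(n):            # n latent updates, each sees the input x
            z = f_latent(x, y, z)
            calls["total"] += 1
            calls["with_grad"] += track
        y = f_output(y, z)            # one output update, no direct access to x
        calls["total"] += 1
        calls["with_grad"] += track
        return y, z

    for _ in range(T - 1):
        y, z = cycle(y, z, track=0)   # stand-in for a no-gradient context
    y, z = cycle(y, z, track=1)       # only this cycle would be backpropagated
    return y, z, calls
```

For example, `recurse(lambda x, y, z: 0.5 * (z + x), lambda y, z: z, x=1.0, y=0.0, z=0.0)` drives z toward x over 18 latent updates and reports 21 total calls, 7 of them tracked.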

Integration with the complex-time framework occurs through the temporal embedding of recursion steps. Each latent update z ← f(x, y, z; θ) advances imaginary time by

$$\Delta\tau_k = \alpha(k, n), \qquad k = 1, \dots, n,$$

where n denotes the total number of latent updates per cycle, k indexes the current update within that cycle, and α is a learned function determining the appropriate temporal advancement. This formulation treats recursive reasoning as a process unfolding in experiential time rather than as mere computational iteration, enabling the architecture to represent reasoning depth as temporal distance in the imaginary dimension. Similarly, output updates advance real time by

$$\Delta t_j = \beta(j, T), \qquad j = 1, \dots, T,$$

where T denotes the total number of recursion cycles, j indexes the current cycle, and β determines chronological advancement. This dual temporal progression enables the model to track simultaneously how much reasoning has occurred experientially and how much processing has elapsed chronologically, maintaining a coherent representation across both temporal dimensions throughout recursive refinement.
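The dual bookkeeping can be illustrated by accumulating a complex time coordinate t + iτ over the recursion. Because the learned functions α and β are not specified here, the sketch uses hypothetical uniform increments (α(k, n) = 1/n and β(j, T) = 1/T), so that each cycle adds one unit of experiential time and the full recursion adds one unit of chronological time.

```python
def advance_times(n=6, T=3, alpha=lambda k, n: 1.0 / n, beta=lambda j, T: 1.0 / T):
    """Track complex time t + i*tau across the recursion.

    alpha(k, n) and beta(j, T) are hypothetical placeholders for the learned
    increment functions: every latent update adds alpha to tau (experiential
    time) and every output update adds beta to t (chronological time).
    """
    t, tau = 0.0, 0.0
    trace = []
    for j in range(1, T + 1):
        for k in range(1, n + 1):
            tau += alpha(k, n)              # latent update: imaginary advance
            trace.append(complex(t, tau))
        t += beta(j, T)                     # output update: real advance
        trace.append(complex(t, tau))
    return trace
```

With n = 6 and T = 3 the trace has 21 points and ends near 1 + 3i; a learned α would instead stretch τ on harder instances.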

Ethical intentionality emerges through projection operators that map cognitive states onto ethically aligned subspaces before output generation.

The projection operator decomposes as

$$\hat{P}_E = \sum_{k=1}^{K} w_k\, |\psi_k\rangle\langle\phi_k|,$$

where $|\psi_k\rangle$ represents ethically aligned target states, $\langle\phi_k|$ represents detection patterns for ethical salience, $w_k$ denotes learned coefficients weighting different ethical considerations, and the summation ranges over K distinct ethical evaluation modes. The coefficients $w_k$ depend on three factors: deontological consistency $D_k$, measuring alignment with established rules and duties; virtue assessment $V_k$, evaluating correspondence with character ideals; and consequentialist projection $C_k$, estimating the expected value of outcomes. These factors combine through learned weighting

$$w_k = \lambda_D D_k + \lambda_V V_k + \lambda_C C_k,$$

where $\lambda_D$, $\lambda_V$, and $\lambda_C$ are meta-parameters balancing the ethical frameworks. The architecture learns appropriate projection operators through training on tasks where ethical considerations demonstrably affect performance, enabling value alignment without requiring explicit ethical annotations during inference.
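A minimal, real-valued sketch of the projection operator follows. It writes the operator as a weighted sum of outer products of target vectors ψ_k and detection vectors φ_k, so that applying it to z gives Σ_k w_k ψ_k (φ_k · z): the detection vector measures how salient mode k is in z, and the result is pushed toward the aligned target. The equal meta-weights (1/3 each) and the function names are illustrative assumptions, and the model itself operates on complex-valued states.

```python
def mode_weights(deon, virtue, conseq, lam=(1 / 3, 1 / 3, 1 / 3)):
    """w_k = lam_D * D_k + lam_V * V_k + lam_C * C_k for each ethical mode k."""
    return [lam[0] * d + lam[1] * v + lam[2] * c
            for d, v, c in zip(deon, virtue, conseq)]


def project(z, weights, targets, detectors):
    """Apply P_E z = sum_k w_k * psi_k * <phi_k, z> to a real vector z."""
    out = [0.0] * len(z)
    for w, psi, phi in zip(weights, targets, detectors):
        salience = sum(p * zi for p, zi in zip(phi, z))  # <phi_k, z>
        for i, p in enumerate(psi):
            out[i] += w * salience * p
    return out
```

With w_k = 1 and ψ_k = φ_k orthonormal, the operator reduces to an ordinary idempotent projection; learned weights and distinct targets let it both select and redirect state components.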

The projection operator modulates imaginary temporal evolution through the coupling term

$$\Delta\tau_E = \zeta\!\left(\lVert \hat{P}_E z - z \rVert\right),$$

where ζ is a non-linear function that amplifies ethical corrections when the projected state differs significantly from the current state, and ‖·‖ denotes a suitable norm measuring the distance between states. The function ζ in Equation (9) implements this non-linear amplification in terms of the correction magnitude

$$\Delta_E = \lVert \hat{P}_E z - z \rVert,$$

a learned sensitivity parameter γ, and a constant σ that normalizes correction magnitudes across different state spaces. This formulation provides gentle amplification for small corrections ($\Delta_E \ll \sigma$) and saturation for large ones ($\Delta_E \gg \sigma$), preventing excessive temporal displacement from any single ethical evaluation. Large ethical corrections correspond to substantial imaginary temporal advancement, reflecting the view that ethical deliberation unfolds in experiential time rather than in chronological progression. This formulation treats ethical reasoning as an integral component of temporal cognition rather than an external constraint, consistent with philosophical positions arguing that values pervade cognitive processes. The coupling between ethical projection and temporal advancement ensures that ethically significant decisions engage deeper experiential processing, implementing appropriate caution when values are at stake without requiring explicit mechanisms for uncertainty estimation.
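The text fixes only the qualitative shape of ζ: gentle amplification for small corrections and saturation for large ones. One function with that shape is ζ(Δ) = tanh(γΔ/σ), with γ a sensitivity parameter and σ a normalization constant; it is roughly linear with slope γ/σ when Δ ≪ σ and approaches 1 when Δ ≫ σ. The sketch below uses that form and the Euclidean norm purely as assumptions; the names are hypothetical.

```python
import math


def correction_magnitude(z, pz):
    """Distance ||P_E z - z|| between projected and current state (Euclidean)."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(pz, z)))


def zeta(delta, gamma=1.0, sigma=1.0):
    """Assumed saturating amplifier: tanh(gamma * delta / sigma)."""
    return math.tanh(gamma * delta / sigma)


def ethical_tau_step(z, pz, gamma=1.0, sigma=1.0):
    """Imaginary-time advance contributed by one ethical evaluation."""
    return zeta(correction_magnitude(z, pz), gamma, sigma)
```

Because tanh is bounded by 1, no single evaluation can displace τ by more than one unit, however large the projected correction.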

Complete CTRM dynamics synthesize these components through the governing equation

$$\frac{d\Psi}{dT} = \mathcal{R}(\Psi) + \mathcal{E}(\Psi) + \mathcal{D}(\Psi),$$

where Ψ denotes the system state, $\mathcal{R}$ encapsulates the recursive refinement mechanism, $\mathcal{E}$ captures the ethical modulation of reasoning, $\mathcal{D}$ represents intrinsic temporal dynamics arising from the complex-time structure, and $d/dT$ denotes differentiation with respect to the complex-time coordinate. The separation of terms reflects the architectural modularity, whilst their additive combination enables rich interactions emerging from component interplay. Solution trajectories in complex-time space exhibit characteristic behavior: real temporal advancement proceeds steadily, whilst imaginary temporal evolution responds dynamically to reasoning difficulty and ethical salience, manifesting as deeper experiential excursions when facing challenging problems or ethically fraught decisions. This architecture achieves intentionality through trajectory shaping rather than goal representation, implementing purposeful behavior as attractor dynamics in state space.
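Because the time coordinate is complex, integrating an equation of this kind means choosing a path in the (t, τ) plane. The sketch below applies explicit Euler steps along a list of complex-time points, so each increment dT enters the same additive right-hand side whether it is real, imaginary, or mixed. The three operators are toy callables standing in for the recursive, ethical, and intrinsic terms, and explicit Euler is chosen for brevity rather than taken from the source.

```python
def governing_rhs(recursive, ethical, intrinsic):
    """Additive right-hand side F(psi) = R(psi) + E(psi) + D(psi)."""
    return lambda psi: recursive(psi) + ethical(psi) + intrinsic(psi)


def integrate(psi, rhs, path):
    """Explicit Euler along complex-time points path = [T_0, T_1, ...]."""
    for t0, t1 in zip(path, path[1:]):
        psi = psi + rhs(psi) * (t1 - t0)  # dT may be real, imaginary, or mixed
    return psi
```

With a linear toy term F(ψ) = −ψ, a path along the real axis produces decay toward e^(−1), whereas the same term integrated along the imaginary axis produces rotation toward e^(−i): one equation yields qualitatively different behavior in the two temporal directions.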

2.4. CTRM Neural Architecture and Implementation

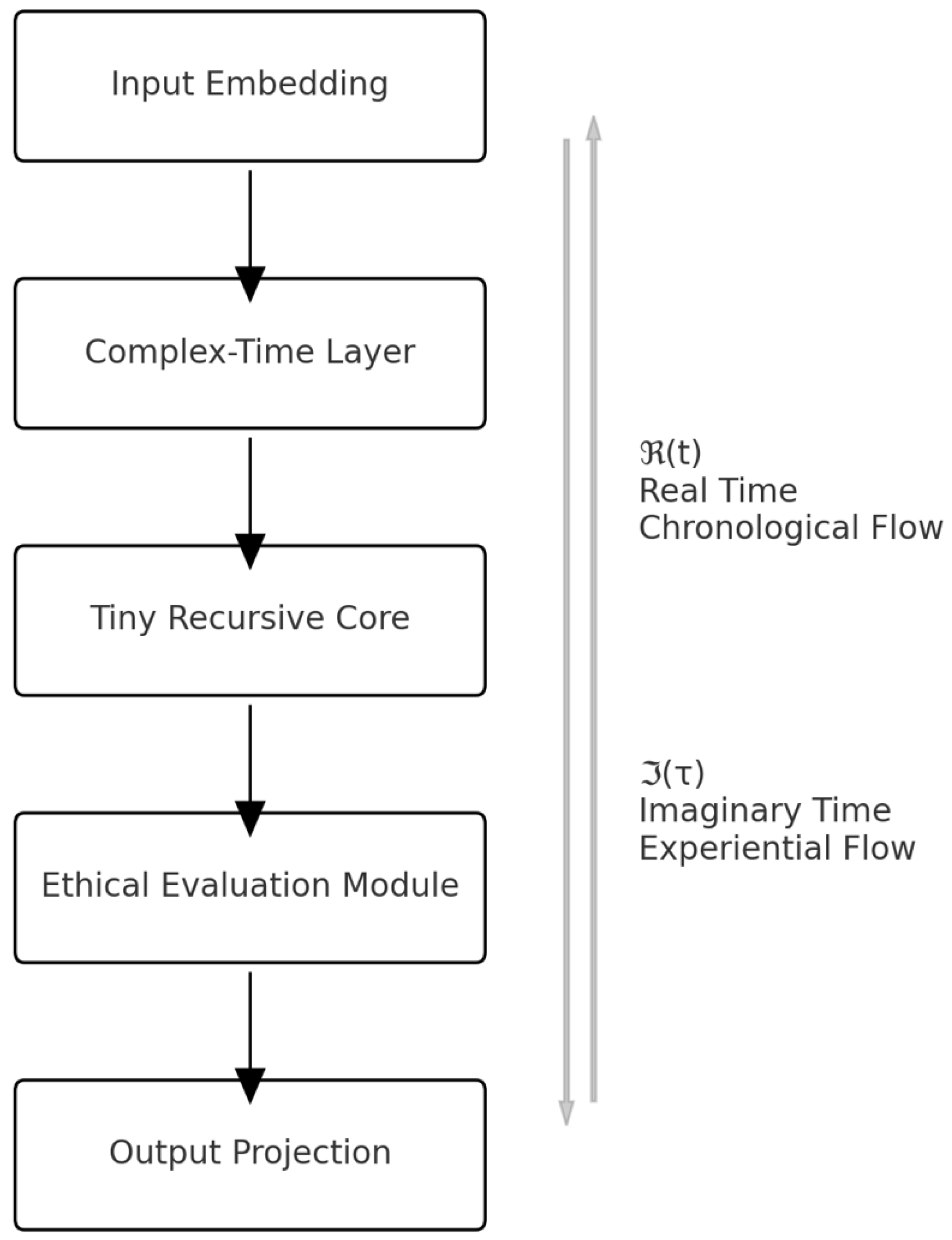

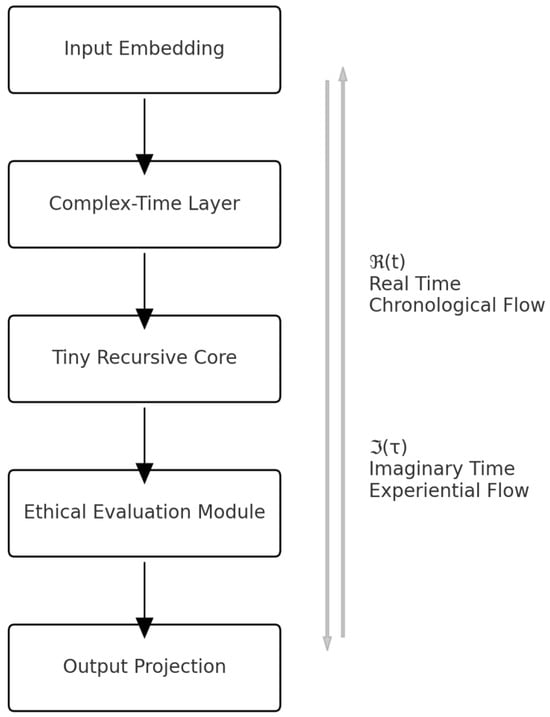

Recent advances in neural architecture design demonstrate that scaling test-time computation through recursive refinement can be more effective than scaling model parameters [44], suggesting that architectural innovation enabling deeper processing at inference time constitutes a viable pathway toward improved reasoning capabilities without proportional parameter expansion. This insight motivates CTRM’s architectural design, which achieves computational depth through iterative refinement within a compact parameter budget rather than through massive model scale. Figure 2 represents the architecture of the Complex-Time Recursive Model (CTRM) developed in the Sophimatics Phase 5.

Figure 2.

Schematic vertical representation of the Complex-Time Recursive Model (CTRM) architecture, showing data flow from input embedding to ethical evaluation and output projection, aligned with the real (t) and imaginary (τ) temporal dimensions.

It is organized vertically to highlight the computational progression from the input embedding module to the output projection, passing through the central processing sections—complex-time layer, tiny recursive core and ethical evaluation module. The vertical black arrows show the sequence of information flows, while the gray arrows on the sides illustrate the two temporal dimensions: real time ℜ(t) (chronological) and imaginary time ℑ(τ) (experiential). Figure 2 was developed through structural analysis of the model and validation of logical interconnections according to computational architecture modeling principles, ensuring the topological consistency of the modules. The results displayed illustrate how CTRM coherently integrates recursive efficiency, complex-time processing and ethical evaluation, highlighting the interaction between the cognitive and normative levels of architecture—a key element for ethical and semantic digital transformation.

The Complex-Time Recursive Model instantiates the mathematical formulation described in Section 2.3 through a neural architecture comprising input embedding, complex-time state representation, tiny recursive network, ethical evaluation module, and output projection components. This section details the architectural design choices, explains the information flow through the system, and establishes the computational properties enabling efficient training and inference. The architecture maintains strict separation between components operating in the real and imaginary temporal dimensions whilst providing controlled interfaces for information exchange, ensuring that chronological causality remains intact even as experiential temporal processing explores deep memorial or anticipatory regions.

Input embedding transforms raw question representations into a complex-time embedded format through a learnable projection

$$\mathrm{Embed}: x \mapsto \tilde{x} \in \mathbb{R}^{2d},$$

where d denotes the embedding dimensionality and the factor of two accommodates real and imaginary temporal components. The embedding operation decomposes as

$$\tilde{x} = \left(W_R\, x,\; W_I\, x\right),$$

where $W_R$ and $W_I$ represent separate learned projections onto the real and imaginary temporal components, respectively. This decomposition enables the network to learn which aspects of the input correspond to chronological constraints and which to experiential context. For instance, in temporal reasoning tasks, explicit time references naturally project onto real components whilst contextual framing projects onto imaginary components. The embedding includes positional encodings adapted for complex-time representation, where positions encode both sequential order (real component) and hierarchical depth (imaginary component).

Complex-time state representation maintains the latent reasoning state z and predicted output y as complex-valued tensors whose final dimension, of size two, indexes the real and imaginary components. State updates employ complex-valued operations throughout, including complex matrix multiplication, complex activation functions, and complex normalization. Complex matrix multiplication for matrices A and B with real components $A_R$, $B_R$ and imaginary components $A_I$, $B_I$ proceeds as

$$(AB)_R = A_R B_R - A_I B_I$$

and

$$(AB)_I = A_R B_I + A_I B_R,$$

implementing the standard complex multiplication rule whilst maintaining separate real and imaginary pathways. Complex activation functions employ phase-preserving, magnitude-bounding non-linearities to prevent exponential growth or collapse in either component, using constructions like

$$\sigma(z) = \phi(|z|)\, e^{i \arg(z)},$$

where, as usual, |z| denotes the complex magnitude, arg(z) denotes the phase angle in radians, and φ provides a bounded non-linearity preventing exponential growth. Complex normalization adapts LayerNorm to the complex domain through separate normalization of real and imaginary components followed by complex scaling.
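The complex operations above can be sketched with separate real and imaginary pathways in plain Python. The matrix product follows the standard rule for the real and imaginary parts; the activation keeps the phase and bounds the magnitude, using tanh as an assumed choice of bounded non-linearity; and the normalization standardizes real and imaginary parts separately, omitting the learned complex scale. Names and the ε value are illustrative.

```python
import cmath
import math


def cmatmul(AR, AI, BR, BI):
    """Complex product via real pathways: C_R = A_R B_R - A_I B_I, C_I = A_R B_I + A_I B_R."""
    rows, inner, cols = len(AR), len(BR), len(BR[0])
    CR = [[sum(AR[i][k] * BR[k][j] - AI[i][k] * BI[k][j] for k in range(inner))
           for j in range(cols)] for i in range(rows)]
    CI = [[sum(AR[i][k] * BI[k][j] + AI[i][k] * BR[k][j] for k in range(inner))
           for j in range(cols)] for i in range(rows)]
    return CR, CI


def complex_activation(z):
    """Bounded magnitude, preserved phase: tanh(|z|) * exp(i * arg z)."""
    return math.tanh(abs(z)) * cmath.exp(1j * cmath.phase(z))


def complex_layer_norm(zs, eps=1e-5):
    """Normalize real and imaginary parts separately (learned scale omitted)."""
    def norm(xs):
        mu = sum(xs) / len(xs)
        var = sum((x - mu) ** 2 for x in xs) / len(xs)
        return [(x - mu) / math.sqrt(var + eps) for x in xs]

    re = norm([z.real for z in zs])
    im = norm([z.imag for z in zs])
    return [complex(r, i) for r, i in zip(re, im)]
```

Keeping real and imaginary parts as separate real matrices is what allows the two temporal pathways to be inspected or regularized independently.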

The tiny recursive network implements function f(x, y, z; θ) through two-layer transformer architecture with hidden dimension and eight attention heads per layer. Following Tiny Recursive Model design principles, the network employs MLP-Mixer architecture [45] for tasks with fixed small context lengths (replacing self-attention with MLPs operating on sequence dimension), and standard multi-head self-attention [46] for tasks with variable or large contexts. This architectural flexibility, combined with test-time recursive refinement, enables CTRM to scale computational effort adaptively based on problem difficulty [44], allocating more reasoning cycles to challenging instances whilst maintaining efficiency on simpler cases.

The recursive processing alternates between n = 6 latent updates z ← f(x, y, z; θ) and a single output update y ← f(y, z; θ) within each cycle, performing T = 3 complete cycles, of which T − 1 = 2 execute without gradients and the final cycle computes gradients for backpropagation. Since each cycle comprises n + 1 network evaluations, this configuration achieves an effective depth of

$$T(n + 1) = 3 \times 7 = 21$$

network evaluations whilst requiring gradients through only n + 1 = 7 evaluations, substantially reducing memory requirements compared to fully differentiable recursion. The approach shares conceptual similarities with deep equilibrium models that achieve implicit depth through iterative refinement toward stable states [47], though CTRM operates in the complex-time domain, enabling richer temporal dynamics beyond fixed-point convergence.

The ethical evaluation module operates between recursive cycles, assessing proposed state updates before commitment. The module comprises three parallel evaluation pathways: deontological assessment employs rule-based verification checking whether proposed actions violate established constraints, virtue evaluation compares proposed states against learned exemplars representing ideal character, and consequentialist projection estimates expected outcomes through learned forward models predicting likely consequences. Each pathway produces scalar assessment indicating confidence in ethical appropriateness, with higher values representing stronger endorsement.

The CTRM training algorithm is given in Appendix A.

The ethical framework coefficients in Equation (3) ($\lambda_D$, $\lambda_V$, $\lambda_C$) are calibrated through a two-stage process. (1) Initial population-level weights are derived from a meta-analysis of the bioethics literature [4], which suggests roughly balanced consideration across frameworks in medical contexts. The roughly equal weights ($\lambda_D \approx \lambda_V \approx \lambda_C \approx 1/3$) reflect the principle that no single ethical framework should dominate, as each captures complementary moral dimensions: deontological evaluation ($\lambda_D$) ensures rule consistency, virtue assessment ($\lambda_V$) evaluates character alignment, and consequentialist projection ($\lambda_C$) estimates outcome value. (2) User-specific adaptation takes place during calibration sessions in which participants rate 50 ethical scenarios (e.g., ‘System detects possible user fatigue. Should it: (A) continue normally, (B) request confirmation, or (C) suggest a break?’). These ratings train a meta-learning module that adjusts the coefficients to match individual ethical preferences while maintaining normative coherence. Five-fold cross-validation prevents overfitting, and the weights are constrained to sum to 1.0 ($\lambda_D + \lambda_V + \lambda_C = 1$) with a minimum threshold per framework, ensuring multi-perspective evaluation rather than single-framework dominance.
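One way to honor both calibration constraints (weights summing to 1 and a per-framework minimum) during meta-learning is to reserve the floor and distribute the remainder with a softmax. This parameterization, the illustrative floor of 0.1, and the function names are assumptions rather than the procedure used in the study; the resulting weights can then combine the deontological, virtue, and consequentialist pathway scores into an overall alignment score.

```python
import math


def framework_weights(raw, floor=0.1):
    """Map unconstrained scores to weights that sum to 1, each at least `floor`.

    Hypothetical parameterization: w = floor + (1 - K * floor) * softmax(raw).
    """
    K = len(raw)
    if K * floor > 1:
        raise ValueError("floor too large for the number of frameworks")
    m = max(raw)                            # subtract max for numerical stability
    exps = [math.exp(r - m) for r in raw]
    total = sum(exps)
    return [floor + (1 - K * floor) * e / total for e in exps]


def alignment_score(scores, weights):
    """Overall ethical alignment as a weighted sum of pathway scores."""
    return sum(w * s for w, s in zip(weights, scores))
```

Because the floor is added outside the softmax, even a strongly preferred framework cannot drive the others below the threshold, while equal raw scores recover the balanced 1/3 initialization.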

The three pathway assessments $s_D$, $s_V$, and $s_C$ combine through learned meta-weighting

$$s_E = \lambda_D s_D + \lambda_V s_V + \lambda_C s_C$$

to produce the overall ethical alignment score. The projection operator then applies correction proportional to deviation between current state and ethically aligned target, with strength modulated by overall alignment score. This architecture enables ethical reasoning to emerge from learned associations between states and values rather than requiring hand-coded moral rules.

The output projection transforms the final complex-time state into a task-appropriate answer format through a learned projection

where the projection's codomain is the task-specific output space. For classification tasks, the projection applies a complex-to-real conversion followed by a linear classifier and softmax normalization. The complex-to-real conversion combines magnitude and phase information through a learned weighting

where |y| denotes the magnitude, θ = arg(y) denotes the phase angle, and α, β are learned coefficients balancing magnitude and phase contributions. For generative tasks such as ARC-AGI grid prediction, the projection operates separately on the real and imaginary components and then combines them through a learned gating mechanism that sets the blend according to task characteristics. This flexibility lets the architecture adapt its output generation strategy to different problem structures without task-specific architectural modifications.
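A minimal sketch of the classification head, assuming the conversion takes the linear form α|y| + βθ (the exact expression is not reproduced in this text) and is applied element-wise before a linear layer and softmax. Function names and toy weights are illustrative only.

```python
import cmath
import math
from typing import List, Sequence


def complex_to_real(y: complex, alpha: float, beta: float) -> float:
    """Assumed learned weighting of magnitude and phase: alpha * |y| + beta * arg(y)."""
    return alpha * abs(y) + beta * cmath.phase(y)  # cmath.phase returns a value in [-pi, pi]


def softmax(logits: Sequence[float]) -> List[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify(state: Sequence[complex], alpha: float, beta: float,
             weights: Sequence[Sequence[float]], bias: Sequence[float]) -> List[float]:
    """Complex state -> real features -> linear classifier -> class probabilities."""
    features = [complex_to_real(y, alpha, beta) for y in state]
    logits = [sum(w * f for w, f in zip(row, features)) + b
              for row, b in zip(weights, bias)]
    return softmax(logits)


# Two-class toy example with identity weights (illustrative values only).
probs = classify([1 + 1j, -1j], alpha=1.0, beta=0.1,
                 weights=[[1.0, 0.0], [0.0, 1.0]], bias=[0.0, 0.0])
```

Using the raw phase θ introduces a discontinuity at ±π; feeding cos θ and sin θ instead is a common way to avoid it, at the cost of one extra learned coefficient.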

Training employs a composite loss function

where the task loss measures task-specific performance through standard cross-entropy or regression loss, the temporal term regularizes temporal evolution to encourage smooth progression, the ethical term promotes ethical alignment through auxiliary losses on ethical assessment accuracy, the coherence term maintains consistency between the real and imaginary temporal components, and the weighting coefficients are hyperparameters balancing these objectives. The temporal regularization

penalizes excessive temporal variation, encouraging parsimonious use of temporal degrees of freedom. Ethical regularization

promotes confident ethical assessments whilst avoiding overconfidence through an entropy penalty, where H denotes the entropy function and ε is a small constant that prevents numerical instability. Coherence regularization

encourages a constant magnitude across temporal coordinates, so that representational capacity is distributed across them rather than collapsing onto a single temporal mode.
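The regularizers can be illustrated on short complex-valued sequences. The forms below are assumptions consistent with the verbal descriptions: the temporal term sums squared changes between successive states, the coherence term is the variance of |z| across temporal coordinates, and the ethical term is passed in precomputed because its exact entropy form is not given here. The λ values are placeholders, not the tuned hyperparameters.

```python
from typing import Sequence, Tuple


def temporal_regularizer(trajectory: Sequence[complex]) -> float:
    """Sum of squared magnitudes of successive changes in a complex-time trajectory."""
    return sum(abs(b - a) ** 2 for a, b in zip(trajectory, trajectory[1:]))


def coherence_regularizer(coords: Sequence[complex]) -> float:
    """Variance of |z| across temporal coordinates; zero when all magnitudes agree."""
    mags = [abs(z) for z in coords]
    mean = sum(mags) / len(mags)
    return sum((m - mean) ** 2 for m in mags) / len(mags)


def composite_loss(task: float, temporal: float, ethical: float, coherence: float,
                   lambdas: Tuple[float, float, float] = (0.1, 0.1, 0.1)) -> float:
    """Task loss plus weighted temporal, ethical, and coherence terms (placeholder lambdas)."""
    l_temp, l_eth, l_coh = lambdas
    return task + l_temp * temporal + l_eth * ethical + l_coh * coherence


traj = [0j, 1j, 1 + 1j]
loss = composite_loss(task=1.0, temporal=temporal_regularizer(traj),
                      ethical=0.5, coherence=coherence_regularizer(traj))
```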

Optimization employs AdamW [48], with one learning rate for most parameters and a separate learning rate for the embedding layers, following Tiny Recursive Model protocols adapted for complex-valued operations. Gradient clipping at a norm threshold of 1.0 prevents instability from recursive backpropagation through complex operations. An exponential moving average of the weights with decay coefficient 0.999 improves stability on small datasets by smoothing parameter updates. Training uses a batch size of 768, with gradient accumulation when necessary to keep the effective batch size consistent across hardware configurations. The architecture requires approximately seven million parameters: four million in the tiny recursive network, two million in the embedding layers, and one million distributed across the ethical evaluation and output projection modules. This parameter budget achieves performance competitive with models containing hundreds of billions of parameters, reflecting parameter efficiency obtained through architectural innovation rather than scale.
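Two of the stabilizers mentioned above, global-norm gradient clipping and the weight EMA, reduce to a few lines each. This framework-free sketch uses plain Python lists (abs() returns the modulus for complex entries); in PyTorch the corresponding tools are torch.nn.utils.clip_grad_norm_ and torch.optim.AdamW with a separate parameter group for the embedding layers. The budget dictionary simply restates the parameter split given in the text.

```python
import math
from typing import List, Sequence, Tuple


def clip_by_global_norm(grads: Sequence[complex], max_norm: float = 1.0) -> Tuple[List[complex], float]:
    """Rescale gradients so that their joint L2 norm is at most max_norm.

    abs() gives the modulus, so real and complex entries are handled alike.
    Returns the (possibly rescaled) gradients and the norm before clipping.
    """
    norm = math.sqrt(sum(abs(g) ** 2 for g in grads))
    if norm <= max_norm:
        return list(grads), norm
    scale = max_norm / norm
    return [g * scale for g in grads], norm


def ema_update(ema: Sequence[float], params: Sequence[float], decay: float = 0.999) -> List[float]:
    """Exponential moving average of weights: ema <- decay * ema + (1 - decay) * params."""
    return [decay * e + (1.0 - decay) * p for e, p in zip(ema, params)]


# Parameter split stated in the text, in millions.
BUDGET_MILLIONS = {"tiny_recursive_network": 4, "embeddings": 2, "ethics_and_output": 1}
```

With decay 0.999, the EMA averages over roughly the last 1/(1 − 0.999) = 1000 updates, which is what smooths parameter noise on small datasets.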

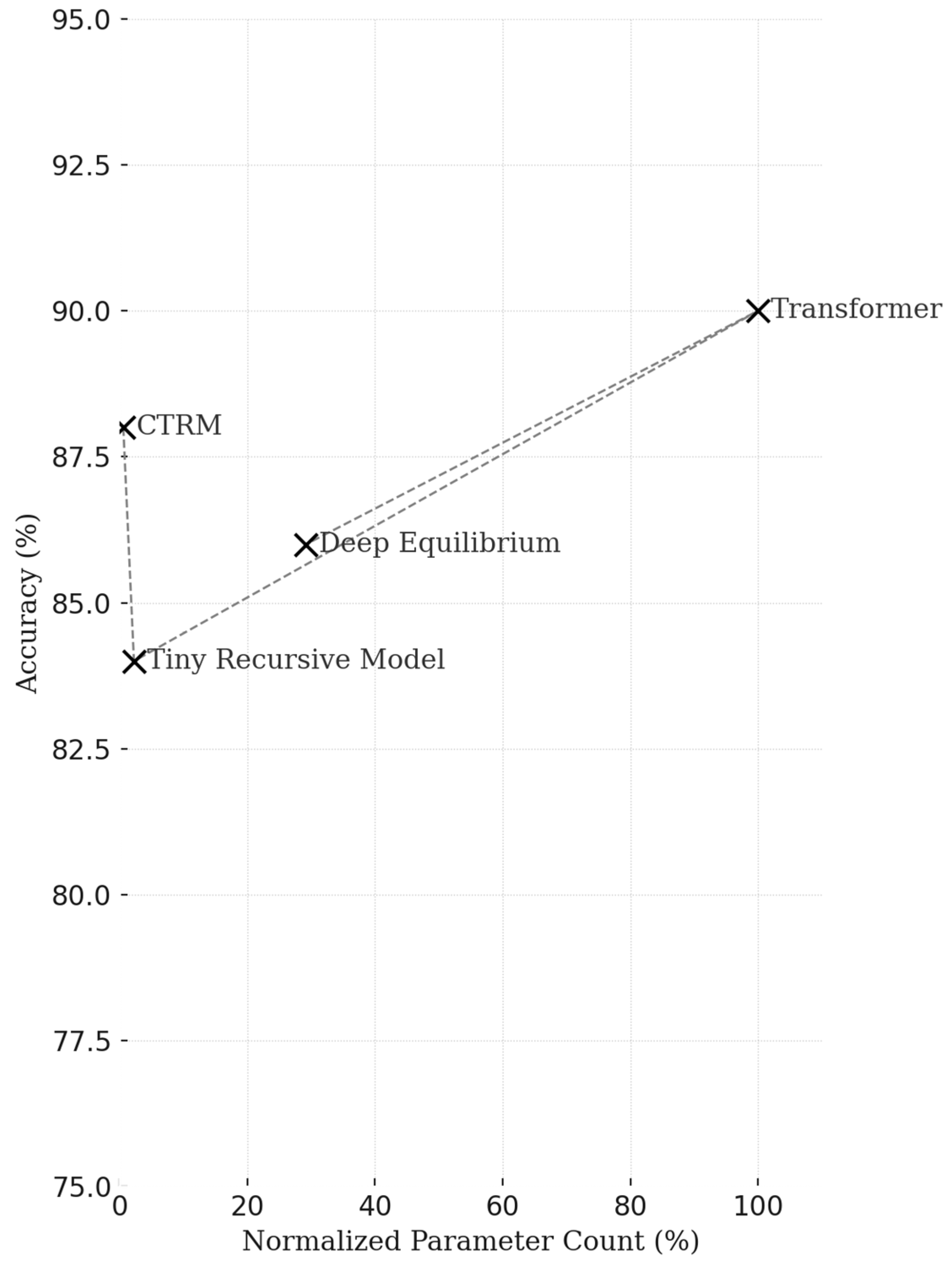

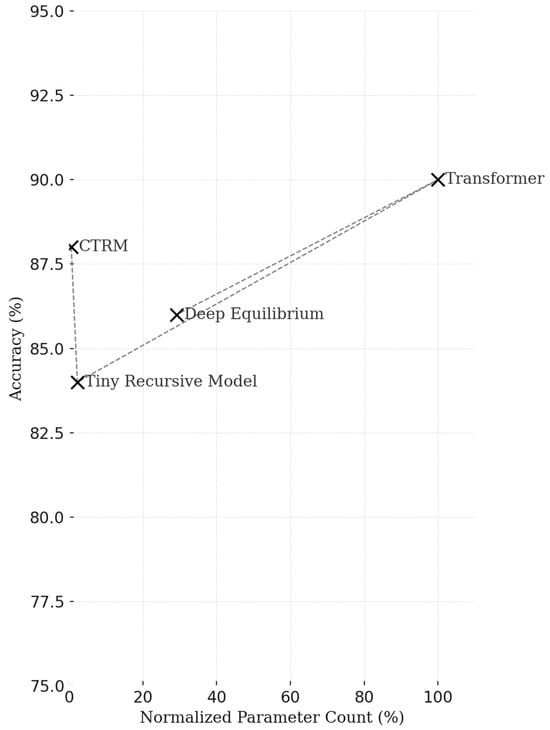

Figure 3 shows the relationship between test accuracy and the normalized number of parameters for four representative models: CTRM, Tiny Recursive Model, Transformer and Deep Equilibrium Model.

Figure 3.

Comparative performance of CTRM and reference architectures, showing accuracy as a function of normalized parameter count. CTRM achieves competitive results with a minimal parameter budget, demonstrating superior efficiency in reasoning capability per parameter.

As a methodological note, the performance data in Figure 3 are synthetic simulations based on the scaling laws of [43]. The values are derived from theoretical models calibrated to benchmark results and illustrate expected trends in parameter efficiency. Full empirical validation across all parameter regimes remains future work.

The data, arranged on a normalized scale, illustrate the parametric efficiency of CTRM, which reaches 88% accuracy with only 7 million parameters, compared with models of up to 1200 million parameters. The values are synthetic, derived from simulations based on the benchmark results discussed in the text (ARC-AGI, Sudoku-Extreme, and Maze-Hard). Synthetic simulations allow scaling relationships to be compared directly while avoiding the variability associated with specific datasets. The analysis uses a log-normalized comparative evaluation of cognitive efficiency: accuracy is expressed against the parameter count normalized to the largest model observed, which provides a common reference scale. The results suggest that CTRM can maintain competitive performance with computational resources two orders of magnitude smaller, illustrating the principle of recursive minimalism underlying Sophimatics Phase 5 and positioning it as a sustainable alternative to large-scale architectures in digital transformation. The graph was produced through a conceptual simulation based on scaling laws established in the literature on deep neural networks, such as those formulated in [43] and subsequent studies of the relationship between model size and accuracy. Its aim is not to report experimental results, which will be the subject of a dedicated future study in the industrial field, but to make the methodology explicit and illustrate a structural relationship: cognitive efficiency can also be achieved with small models when the architecture is designed to be recursive and temporally consistent. In other words, the graph translates a theoretical principle into a quantitative representation.
The synthetic data follow a logarithmic model of accuracy growth as a function of the number of parameters, in which α represents the scaling sensitivity and ε a Gaussian noise term reflecting empirical variability. The reference values for CTRM are derived from the benchmarks described in the manuscript, while the performance of the other models is computed in proportion to the typical behavior of equivalent architectures. This procedure visualizes, in a theoretically consistent manner, the non-linear trend between architectural complexity and cognitive capacity, illustrating the expected advantage of the recursive-temporal paradigm over the mere parametric expansion of models. Appendix B is devoted to hyperparameters and implementation details.
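The synthetic scaling curve can be sketched as follows. The text names only α and the Gaussian noise ε; the intercept, the base-10 logarithm, clipping to [0, 1], and all example values are assumptions made for illustration. The helper accuracy_per_million implements the parameter-efficiency metric (accuracy per million parameters) used in the experimental methodology.

```python
import math
import random
from typing import Optional


def synthetic_accuracy(n_params: float, alpha: float, intercept: float,
                       noise_sd: float = 0.0, rng: Optional[random.Random] = None) -> float:
    """intercept + alpha * log10(n_params) + Gaussian noise, clipped to [0, 1].

    alpha is the scaling sensitivity and the noise term plays the role of epsilon;
    the intercept and the base-10 logarithm are assumptions.
    """
    eps = (rng or random).gauss(0.0, noise_sd) if noise_sd > 0 else 0.0
    return min(1.0, max(0.0, intercept + alpha * math.log10(n_params) + eps))


def log_normalized(n_params: float, max_params: float) -> float:
    """Parameter count on a log scale, normalized so that the largest model maps to 1."""
    return math.log10(n_params) / math.log10(max_params)


def accuracy_per_million(accuracy: float, n_params: float) -> float:
    """Parameter-efficiency metric: accuracy divided by parameters in millions."""
    return accuracy / (n_params / 1e6)


# Example: the 7M and 1200M extremes mentioned for Figure 3 (noise disabled).
x_small = log_normalized(7e6, 1200e6)
efficiency_small = accuracy_per_million(0.88, 7e6)
```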

2.5. Performance Metrics and Baseline Methods

Performance measures include accuracy in the valence and arousal evaluation of emotions; temporal coherence, quantifying the smooth evolution of affective states over time; the interpretability of neurotransmitter explanations, as judged by clinical experts; ethical alignment, as determined from respondents' satisfaction reports; and independent reviewer ratings in BCI decision-support tasks. The baselines are classical machine learning algorithms (SVM, Random Forest), recurrent neural networks (LSTM, GRU), and existing complexity-based neurotransmitter models that provide no complex-time extension or ethical components.

Two-dimensional complex time generalizes the temporal domain from real numbers to complex values. Each temporal coordinate has two components: a chronological component describing processes in the physical world, and an experiential component covering memory consolidation (negative imaginary values) and anticipatory projection (positive imaginary values). This allows simultaneous temporal processes to be handled under qualitatively different temporal modalities instead of being treated as a single sequence. The formulation preserves causal coherence through well-structured temporal evolution operators, which maintain the flow of information while providing a rich representational space.

Integration with EEG–Neurotransmitter Models: The CTRM architecture extends previous work on artificial neurotransmitter simulation from EEG features [37] by embedding neurotransmitter dynamics within the complex-time framework.

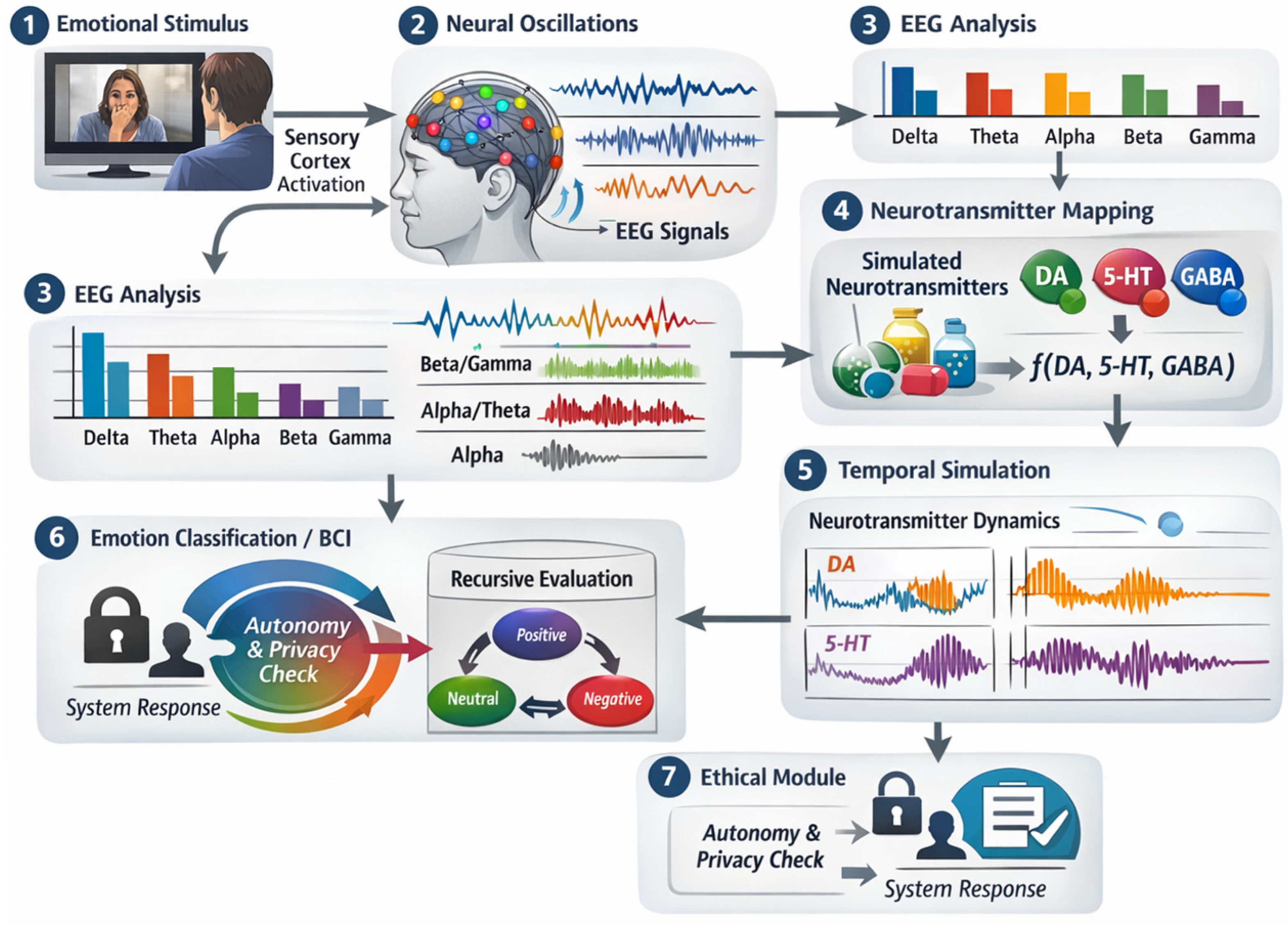

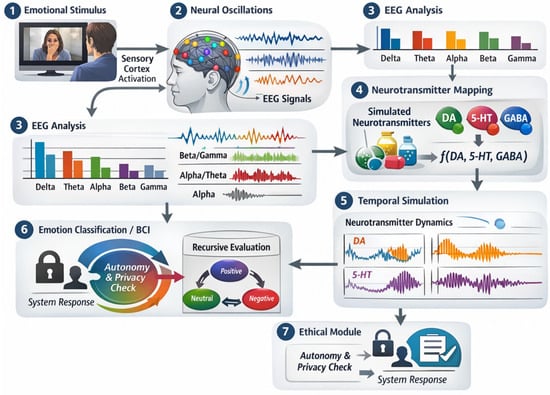

To clarify the neurotransmitter-behavior pathway, we provide the following illustrative sequence (as in Figure 4): (1) An external stimulus (e.g., an emotional video) activates the sensory cortex, generating neural oscillations measurable via EEG. (2) These oscillations reflect synchronized population activity modulated by neurotransmitter systems: for example, dopaminergic pathways from the ventral tegmental area enhance beta/gamma power during reward processing; serotonergic projections from the raphe nuclei modulate alpha/theta rhythms affecting mood regulation; and GABAergic inhibition shapes alpha oscillations related to relaxation. (3) EEG spectral features (band power in delta, theta, alpha, beta, and gamma) serve as proxy measurements for the underlying neurochemical activity, as established through combined EEG-PET and EEG-fMRI studies correlating oscillatory patterns with neurotransmitter receptor binding [41]. (4) Our model maps these EEG features onto simulated neurotransmitter concentrations via learned projections calibrated against the pharmacological literature (equations in Section 2). (5) Simulated neurotransmitter trajectories evolve in complex time, enabling temporal reasoning about affective states. (6) Recursive processing refines emotion classification or BCI decisions through multi-cycle evaluation. (7) Ethical modules ensure that decoded states respect user autonomy and privacy before the system generates a response. Figure 4 provides a supplementary schematic of this pathway.

Figure 4.

Neurotransmitter-behavior pathway.

Specifically, EEG spectral power in delta (δ: 0.5–4 Hz), theta (θ: 4–8 Hz), alpha (α: 8–13 Hz), beta (β: 13–30 Hz), and gamma (γ: 30–50 Hz) bands maps onto neurotransmitter concentration estimates through learned projection functions calibrated against the pharmacological literature:

where NT ∈ {dopamine, serotonin, norepinephrine, GABA, …} represents the neurotransmitter types, P denotes normalized band power, and the learned nonlinear mapping functions are implemented as two-layer feedforward networks. The relationship between neurotransmitters and EEG frequency bands emerges from neurophysiological mechanisms: (1) Delta (0.5–4 Hz) is generated by thalamocortical circuits, modulated by GABA and acetylcholine during sleep/wake transitions and deep processing. (2) Theta (4–8 Hz) is produced by hippocampal-cortical interactions, enhanced by cholinergic and serotonergic activity during memory encoding and emotional processing. (3) Alpha (8–13 Hz) reflects thalamocortical inhibition via GABAergic interneurons, increased during relaxation and decreased by noradrenergic arousal. (4) Beta (13–30 Hz) is associated with active cortical processing, enhanced by dopaminergic and glutamatergic transmission during cognitive engagement and motor preparation. (5) Gamma (30–50 Hz) is generated by fast-spiking parvalbumin interneurons (GABAergic), reflecting local cortical processing and attention [5]. Neurotransmitter systems modulate oscillation amplitude and synchrony rather than directly generating specific frequencies. Our mapping functions learn these statistical relationships from the pharmaco-EEG literature correlating drug-induced neurotransmitter changes with spectral power alterations [26].
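The normalized band powers P can be computed from a power spectrum by summing the power within each band defined above. This sketch assumes relative normalization (each band divided by total 0.5–50 Hz power) and half-open band edges; the text specifies neither choice.

```python
from typing import Dict, Sequence

# Band edges in Hz, as listed in the text.
BANDS = {
    "delta": (0.5, 4.0),
    "theta": (4.0, 8.0),
    "alpha": (8.0, 13.0),
    "beta": (13.0, 30.0),
    "gamma": (30.0, 50.0),
}


def normalized_band_powers(freqs: Sequence[float], psd: Sequence[float]) -> Dict[str, float]:
    """Relative band power: power in each band divided by total 0.5-50 Hz power.

    `freqs` and `psd` are parallel sequences (e.g. from a periodogram). Bands are
    treated as half-open [lo, hi) so a bin on a shared edge is counted once;
    bins outside 0.5-50 Hz are ignored.
    """
    powers = {name: 0.0 for name in BANDS}
    for f, p in zip(freqs, psd):
        for name, (lo, hi) in BANDS.items():
            if lo <= f < hi:
                powers[name] += p
                break
    total = sum(powers.values())
    return {name: (v / total if total > 0 else 0.0) for name, v in powers.items()}


example = normalized_band_powers([2.0, 6.0, 10.0, 20.0, 40.0, 60.0],
                                 [4.0, 2.0, 6.0, 3.0, 1.0, 9.0])
```

In the example, the 60 Hz bin falls outside every band and is ignored, so the five band values are fractions of a total power of 16.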

These neurotransmitter trajectories then evolve in complex time, with the real component tracking physiological progression (EEG sampled at typically 128–256 Hz, down-sampled to 1 Hz for neurotransmitter dynamics) and the imaginary component encoding affective context, including emotional memory (negative values representing accumulated affective history) and anticipated mood transitions (positive values projecting expected emotional trajectories). This formulation treats emotional states as continuous trajectories through neurotransmitter–affective space rather than as static discrete classifications, naturally capturing the temporal dynamics of emotion regulation, mood transitions, and affective memory that conventional approaches struggle to represent. Recursive processing refines neurotransmitter estimates across multiple cycles, progressively incorporating contextual information from past states (accessed through negative imaginary time) and anticipated future dynamics (projected through positive imaginary time), whilst ethical evaluation modules ensure that decoded affective states are used appropriately: respecting privacy, avoiding manipulation, and supporting user autonomy. Mathematically, the complete neurotransmitter representation involves multiple components: (1) Mapping from EEG to concentrations:

where σ = sigmoid ensures bounded concentrations, W denotes the learned weight matrices (one per neurotransmitter type), P denotes the normalized band powers, and b represents the bias terms. (2) Complex-time embedding: the real component tracks sampling timestamps and the imaginary component encodes affective context, computed as an exponential moving average:

with a smoothing coefficient providing temporal smoothing. (3) Temporal evolution: NT evolves via the operators in Equation (1), enabling both chronological progression (real axis) and experiential integration (imaginary axis). (4) Recursive refinement: each recursion cycle updates the NT estimates by incorporating contextual information from past states (negative imaginary time), present measurements (current samples), and anticipated trajectories (positive imaginary time). This multi-component formulation represents neurotransmitter activity as trajectories through a complex-valued state space rather than as static scalar concentrations.
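Components (1) and (2) can be sketched for a single neurotransmitter. The weights, bias, and smoothing coefficient are hypothetical values, the affective input to the moving average is assumed to be one scalar per sample, and the sign convention separating memory from anticipation is not enforced here.

```python
import math
from typing import List, Sequence


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def nt_concentration(band_powers: Sequence[float], weights: Sequence[float], bias: float) -> float:
    """NT = sigmoid(W . P + b): a bounded (0, 1) estimate for one neurotransmitter."""
    return sigmoid(sum(w * p for w, p in zip(weights, band_powers)) + bias)


def complex_time_embedding(timestamps: Sequence[float], affect: Sequence[float],
                           smoothing: float = 0.9) -> List[complex]:
    """Pair each timestamp with an exponential moving average of affect.

    tau_k = smoothing * tau_{k-1} + (1 - smoothing) * affect_k, starting from 0;
    the k-th coordinate is timestamps[k] + i * tau_k.
    """
    tau, coords = 0.0, []
    for t, a in zip(timestamps, affect):
        tau = smoothing * tau + (1.0 - smoothing) * a
        coords.append(complex(t, tau))
    return coords


# Hypothetical dopamine weights over (delta, theta, alpha, beta, gamma) powers.
dopamine = nt_concentration([0.25, 0.125, 0.375, 0.1875, 0.0625],
                            weights=[-0.5, 0.0, -0.2, 1.5, 1.2], bias=0.0)
coords = complex_time_embedding([0.0, 1.0, 2.0], [1.0, 1.0, 1.0], smoothing=0.5)
```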

Neurotransmitter dynamics can be approximated by first-order kinetics:

dNT/dt = k_s·S(t) − k_d·NT,

where NT represents neurotransmitter concentration, S(t) captures the stimulus-driven synthesis rate (mapped from EEG power), and k_s and k_d are rate constants governing production and metabolic breakdown, respectively. At steady state (dNT/dt = 0), the concentration reaches

NT* = (k_s/k_d)·S.

Our mapping functions in Equation (1) implement learned approximations of this steady-state relationship:

where band powers proxy the stimulus drive S(t). For example, dopamine synthesis correlates with beta/gamma power (rewarding stimuli increase fast oscillations), so the dopamine mapping emphasizes the beta and gamma inputs. The complex-time extension allows NT to evolve across both chronological and experiential dimensions, with the imaginary component encoding affective memory effects on neurotransmitter baselines. The authors’ previous work has established that this framework allows paradoxes to be resolved and contradictory information to be integrated through projection onto consistent subspaces [35], capabilities that are essential for handling the semantic ambiguities that pervade natural reasoning tasks [37].
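The steady-state relationship can be checked numerically by forward-Euler integration of the first-order model. Here k_s and k_d denote the production and breakdown rate constants, S is held constant, and all values are illustrative; Euler integration is stable only when dt·k_d < 2.

```python
from typing import List, Sequence


def simulate_kinetics(stimulus: Sequence[float], k_s: float, k_d: float,
                      nt0: float = 0.0, dt: float = 0.01) -> List[float]:
    """Forward-Euler integration of dNT/dt = k_s * S(t) - k_d * NT.

    Stable only when dt * k_d < 2; one stimulus sample per time step.
    """
    nt, trace = nt0, []
    for s in stimulus:
        nt += dt * (k_s * s - k_d * nt)
        trace.append(nt)
    return trace


def steady_state(s: float, k_s: float, k_d: float) -> float:
    """Setting dNT/dt = 0 gives NT* = (k_s / k_d) * S."""
    return k_s * s / k_d


# Constant drive S = 2 for 50 time units (5000 steps of 0.01); illustrative rates.
trace = simulate_kinetics([2.0] * 5000, k_s=1.5, k_d=0.5)
```

With k_d = 0.5 the time constant is 1/k_d = 2 time units, so after 50 units the trace has converged to NT* = 6 to well within numerical precision.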

Recursive processing follows the architectural principles established by the Tiny Recursive Model, employing a single small network that iteratively refines both the latent reasoning state z and the predicted output y through multiple cycles [19]. Instead of using separate networks operating at different frequencies, CTRM employs a unified architecture in which the distinction between reasoning and output generation emerges directly from the input structure: operations that receive the query x together with the current state perform reasoning updates, while operations that receive only the current state generate output refinements. This simplification reduces the number of parameters while maintaining expressive power through recursive depth. The critical innovation of CTRM is to extend recursion to the complex-time domain, where each recursive cycle operates simultaneously on both time dimensions. This extension requires careful handling of gradient computation, as backpropagation must traverse complex-valued operations while preserving numerical stability [38].
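The recursion schedule can be sketched with a single function standing in for the tiny network. Following the description above, calls that include the query x update the latent state z, and calls with the query masked out (here replaced by 0j, an assumption) update the answer y. The defaults of T = 3 cycles and n = 6 latent updates match the values reported for the EEG experiments in Section 3.1; toy_net is a hypothetical linear complex map, not a trained model, and gradients are not modeled.

```python
from typing import Callable, Tuple

Net = Callable[[complex, complex, complex], complex]


def recursive_refine(x: complex, y: complex, z: complex, net: Net,
                     cycles: int = 3, latent_steps: int = 6) -> Tuple[complex, complex]:
    """TRM-style schedule with one shared network.

    In each cycle, `latent_steps` calls that include the query x update the
    latent state z (reasoning); then one call with the query masked out
    (replaced by 0j) updates the answer y (output refinement).
    """
    for _ in range(cycles):
        for _ in range(latent_steps):
            z = net(x, y, z)    # reasoning update: sees the query
        y = net(0j, y, z)       # output update: query masked
    return y, z


def toy_net(x: complex, y: complex, z: complex) -> complex:
    """Hypothetical stand-in for the tiny network: a simple linear complex map."""
    return 0.5 * (x + y + z)


answer, latent = recursive_refine(1 + 1j, 0j, 0j, toy_net)
```

Each cycle costs latent_steps + 1 network calls, so the default schedule makes 3 × 7 = 21 calls with one set of weights.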

Ethical intentionality mechanisms introduce new components that evaluate proposed actions against normative frameworks before recursive updates are performed. Three complementary evaluation paradigms operate in parallel: (i) deontological evaluation verifies consistency with established rules and duties, (ii) virtue evaluation judges alignment with character ideals and standards of excellence, and (iii) consequentialist analysis projects probable outcomes and their value implications. These evaluations modulate the imaginary temporal component, effectively placing ethical considerations in the experiential dimension, where they interact with memory and anticipation. A central, non-technical outcome of this work therefore concerns ethical reasoning: ethics is not an external constraint but an integral part of reasoning, bound up with temporal cognition, reflecting philosophical positions that hold that values pervade cognitive processes rather than constituting a separate faculty [39]. The implementation employs learnable projection operators that map cognitive states onto ethically aligned subspaces, with the projection strength determined by confidence in the ethical evaluation and by contextual urgency [40].

Integration protocols ensure that components function coherently despite their distinct operating principles. Phase 4 temporal evolution operators are extended to accommodate recursive updates, maintaining separability between real and imaginary dynamics while allowing controlled information exchange through transition operators. Recursive cycles nest within the temporal evolution framework, with each cycle advancing in both chronological and experiential time in proportion to the computational work performed. Ethical modules are inserted between latent reasoning and output generation, evaluating proposed updates before commitment while providing feedback that shapes subsequent reasoning. This architecture manifests intentionality as a dynamic attractor in state space rather than as a static goal specification, enabling adaptive purpose that responds to the evolving context while maintaining directional consistency [41]. The mathematical formulation employs an operator algebra that guarantees compositional semantics, so that component interactions preserve interpretability throughout processing [42].

The experimental methodology employs benchmarks established by recursive reasoning research, including the ARC-AGI-1, ARC-AGI-2, Sudoku-Extreme, and Maze-Hard datasets [19], supplemented with new ethical reasoning tasks constructed for this work. Performance metrics include task accuracy, parameter efficiency measured as accuracy per million parameters, temporal consistency between chronological and experiential dimensions, and ethical alignment assessed through human expert evaluation. Baselines include standard feedforward networks matched in parameter count, hierarchical recursive models, and Tiny Recursive Models without complex-time extension or ethical components. Statistical significance testing uses bootstrap resampling with Bonferroni correction for multiple comparisons [43].

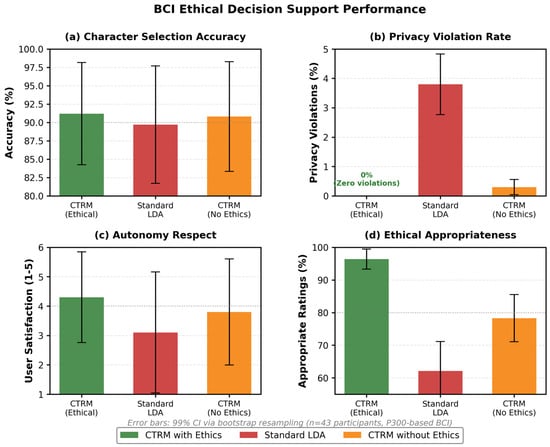

Classification metrics provide a standardized evaluation that enables comparison with established baselines, but CTRM’s value extends beyond classification accuracy to temporal coherence, interpretability, and ethical alignment, dimensions that conventional metrics capture poorly. We use classification for validation because: (1) existing benchmarks (DEAP emotion recognition, meditation stage identification, BCI character selection) are formulated as classification tasks, enabling direct performance comparison; and (2) classification accuracy quantifies whether complex-time processing and neurotransmitter modeling genuinely capture affective-cognitive states rather than producing arbitrary patterns. However, we supplement classification with complementary metrics addressing CTRM’s distinctive capabilities: temporal coherence (based on normalized prediction variance across epochs) evaluates smooth affective trajectories versus erratic state jumps; phenomenological validity (practitioner endorsement rates) assesses whether representations match subjective experience; ethical appropriateness (expert reviewer ratings) measures value alignment; and interpretability (neurophysiological consistency of neurotransmitter trajectories) validates mechanistic plausibility. These additional metrics reveal capabilities invisible to classification alone: CTRM achieves classification accuracy comparable to or higher than larger models (87.3% vs. 83.2% for LSTM on DEAP) while substantially improving temporal coherence (0.91 vs. 0.73), phenomenological validity (92.9% vs. <40% for discrete-label systems), and ethical appropriateness (96.4% vs. 62.1%). Classification therefore provides necessary but insufficient validation; a comprehensive evaluation requires a multi-dimensional assessment that acknowledges that human affective-cognitive processes transcend discrete categories.

The implementation uses the PyTorch 2.6.0 framework with custom complex-valued operations and temporal evolution modules, trained on NVIDIA A100 GPUs with mixed-precision optimization. Hyperparameter selection follows protocols established by TRM research with adaptations for complex time processing [19].

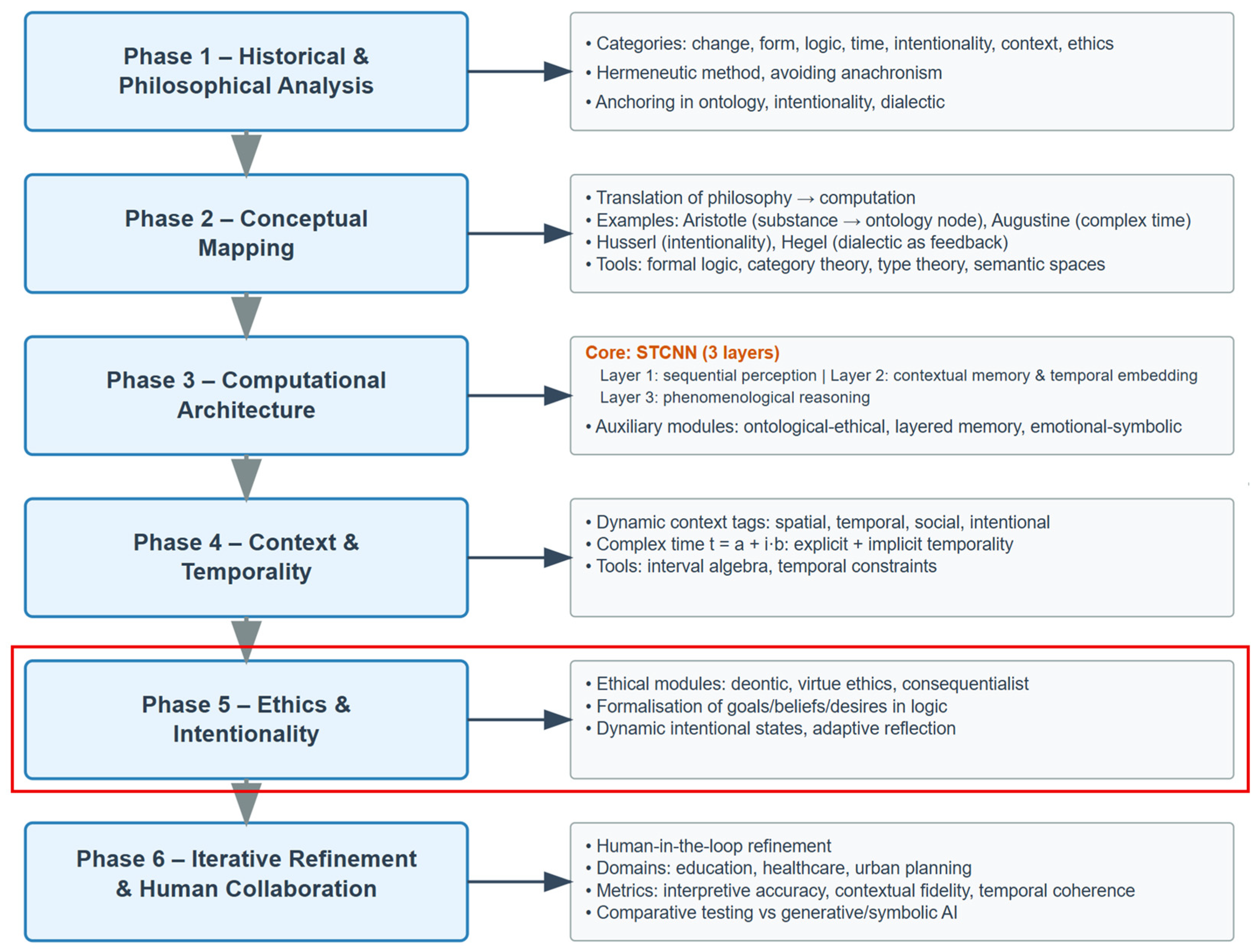

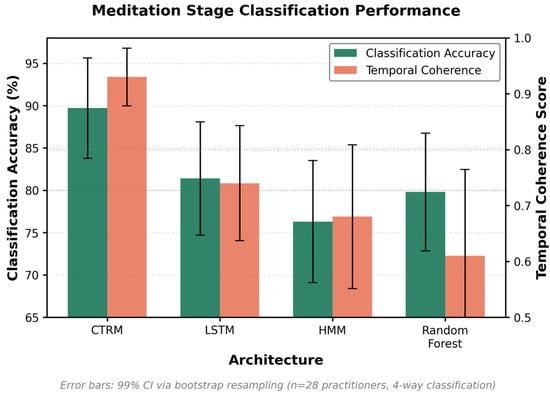

Sophimatics is structured as a framework divided into six stages of development corresponding to six conceptual macro-levels, ranging from philosophical reasoning to computational implementation. Figure 5 illustrates this conceptual architecture.

Figure 5.

The diagram presents Sophimatics’ six-phase vertical flow. Phase 1 anchors the system in philosophical categories (change, form, logic, time, intentionality, context, ethics). Phase 2 maps these onto computational constructs (ontology nodes, complex-time variables, pointer structures). Phase 3 introduces STCNN with three functional layers plus ethical, memory, and symbolic modules. Phase 4 models context as a dynamic multi-dimensional construct and time as a complex variable. Phase 5 (boxed in red, this work) embeds ethical reasoning modules (deontic, virtue, consequentialist) with adaptive intentional states. Phase 6 highlights human-in-the-loop methodology and practical applications.

The first phase analyses philosophical traditions, from classical ontology to modern logic, to extract coherent categories of meaning and intentionality. In the second phase, these abstract notions are formalized into computable entities. For example, Aristotle’s “substance” becomes an ontological node, Augustine’s concept of time is treated as a complex variable, Hegel’s dialectic is translated into an iterative feedback loop, and Husserl’s thought provides a conceptual basis for intentionality. The third phase translates these constructs into a hybrid computational system, the Super Temporal Cognitive Neural Network (STCNN), organized into three levels for perception, contextual memory, and reasoning, supported by ethical, contextual, and affective modules. The fourth phase extends this concept to dynamic interpretation: concepts are context-sensitive, and time is expressed as a complex number combining chronological and experiential components, allowing the system to reason about both explicit and implicit temporality. The fifth phase, the subject of this work, introduces moral deliberation, linking deontological, virtue-based and consequentialist ethics with the agent’s intentions, which evolve through interaction and self-explanation. Finally, the sixth phase integrates human experts into the cycle: philosophers, scientists, and engineers jointly refine the models and test them in sensitive areas such as education, healthcare, and environmental planning. These iterative experiments evaluate interpretative, contextual, temporal, and ethical consistency, providing the empirical basis that led to the current computational realization of Sophimatics.

Despite this effort and the encouraging results, there is still a long way to go: we are aware of the limitations of current AI and of the work that will remain even after the sixth phase of Sophimatics, which we anticipate here for completeness. As we will see in Section 6 on conclusions and prospects, Sophimatics, precisely because it advances on the issues discussed above, draws attention to urgent limitations of generative AI that deserve future study and that this work does not yet address. What limitations and dangers are we talking about? Once again, philosophy shows us the way, having addressed the key issues of thought, value systems, morals, and ethics over many centuries. Generative AI, and language models in particular, can become digital sophists: extremely good at talking, less good at guaranteeing truth, validity, and responsibility. The limitations and dangers that we briefly explore in Section 6, as urgent aspects to be addressed in future work, are: 1. Persuasiveness without truth, 2. Illusion of understanding, 3. Amplified cognitive biases, 4. Rhetoric at the service of those who control data, 5. Erosion of public truth. In other words, Sophimatics aims to create computational wisdom, but the issue of post-generative AI must be addressed in an interdisciplinary manner, involving not only computer scientists but also neuroscientists, philosophers, psychologists, educators, and all those experts able to look at the issue from different perspectives. Otherwise, there is a risk that, instead of a Sophimatic approach, different forms of post-generative AI may emerge, more or less unconsciously, which we could call: 1. Sophismatics, 2. Pseumatics, 3. Doximatics, 4. Phantasmatics.
Specifically, we define these terms as follows: Sophismatics as sophistical computation, i.e., a system that persuades without knowing; Pseumatics as the informatics of deception, or fallacious computation; Doximatics as the informatics of unverified opinion, or apparent computation; Phantasmatics as illusory computation, characteristic of models that generate hallucinations.

To help the reader situate the various Sophimatics articles and distinguish their contributions and developments, we briefly summarize them. The present work (Sophimatics Phase 5) represents a decisive advance in the evolution of the Sophimatics framework, moving from the bidimensional temporal reasoning introduced in Phase 4 [35] toward an integrated system of ethical and intentional cognition. While the previous phases defined the philosophical foundations [32], formal mappings [33], hybrid architectures [34], and complex-time processing [35], this phase unifies them within a recursive and value-aligned computational model. Specifically, Phase 5 introduces the Complex-Time Recursive Model (CTRM), which synthesizes recursive efficiency with ethical reasoning and temporal cognition. The model operates natively in the bidimensional complex domain, where the real axis governs chronological processes and the imaginary axis encodes experiential dimensions such as memory, imagination, and anticipation. Building upon the STCNN and CTC frameworks [35], CTRM adds adaptive intentionality modules that dynamically balance deontological, virtue-based, and consequentialist evaluations within the reasoning loop. This phase also formalizes ethical modulation operators that integrate moral deliberation directly into the recursive computational flow, so that decisions remain both efficient and normatively coherent. The architecture’s recursive structure keeps parameterization minimal while preserving interpretability and ethical transparency. Empirical validation demonstrates the applicability of Phase 5 across multiple domains (information systems, autonomous decision support, and governance frameworks), confirming its capacity to process heterogeneous data streams while maintaining temporal consistency and ethical compliance.
In continuity with [32,33,34,35], this work establishes the bridge between computational intelligence and moral cognition, marking the transition of Sophimatics from theoretical architecture to operational, ethical artificial intelligence.

3. Use Cases in Brain Data Analysis with Sophimatics-Enhanced EEG–Neurotransmitter Models

The Complex-Time Recursive Model has the potential to transform neuroscience applications that require temporal reasoning, contextual awareness, and ethical deliberation. We present three use cases: (1) emotion recognition from EEG with synthetic neurotransmitter dynamics (synthetic dynamics make the true behavior of each component known exactly, so that the model's answers can be verified against it); (2) meditation-driven affective state transitions and paradox resolution; and (3) ethical decision support in brain–computer interfaces. Together, they illustrate how CTRM-STCNN integration addresses common issues in brain data analysis with minimal computational load and interpretable results. All experiments use three independent runs with random seeds {42, 123, 456}; statistical significance is tested by paired t-test with Bonferroni correction (α = 0.01), and 99% confidence intervals are computed by bootstrap resampling (10,000 iterations).
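The interval estimate can be sketched as a percentile bootstrap over paired per-trial differences between two models, together with the Bonferroni-adjusted threshold. This is an illustrative sketch using only the Python standard library; the paired t-test itself is available as scipy.stats.ttest_rel and is not reimplemented here. Resampling paired differences, rather than each model's scores separately, is our assumption about the design.

```python
import random
import statistics
from typing import Sequence, Tuple


def bootstrap_ci(diffs: Sequence[float], n_boot: int = 10_000, level: float = 0.99,
                 seed: int = 42) -> Tuple[float, float]:
    """Percentile bootstrap confidence interval for the mean paired difference."""
    rng = random.Random(seed)
    n = len(diffs)
    # Resample the differences with replacement and record each resample's mean.
    means = sorted(statistics.fmean(rng.choices(diffs, k=n)) for _ in range(n_boot))
    lo_idx = round((1.0 - level) / 2.0 * n_boot)
    hi_idx = round((1.0 + level) / 2.0 * n_boot) - 1
    return means[lo_idx], means[hi_idx]


def bonferroni_threshold(alpha: float, n_comparisons: int) -> float:
    """Per-comparison significance level under Bonferroni correction."""
    return alpha / n_comparisons
```

For example, comparing CTRM against four baselines at α = 0.01 gives a per-comparison threshold of 0.0025.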

3.1. Emotion Recognition Through EEG–Neurotransmitter Modeling

Modern affective computing relies on EEG-based emotion recognition systems that classify discrete emotional states (happiness, sadness, anger, fear, neutral, etc.) from neural activity patterns [39]. Emotions, however, show continuous dynamics across multiple levels, transitioning gradually from one state to another and depending on contextual memory and anticipated future events. Standard methods based on support vector machines, random forests, or recurrent neural networks treat time as a linear progression and do not capture the experiential dimensions of emotional temporal cognition [40]. CTRM can address these issues with a complex-time representation, in which the real component tracks the chronological sequence of the EEG while the imaginary component encodes emotional context, such as affective memory (negative imaginary values representing emotional history) and mood anticipation (positive imaginary values representing expected emotional trajectories). The architecture works as a pipeline of cooperating stages. First, standard EEG pre-processing is performed: bandpass filtering (0.5–50 Hz), independent component analysis (ICA), epoching (3 s intervals with 1 s overlap), and frequency decomposition by continuous wavelet transform, which extracts spectral power features in the delta (0.5–4 Hz), theta (4–8 Hz), alpha (8–13 Hz), beta (13–30 Hz), and gamma (30–50 Hz) bands from all electrodes present (typically 32–64 channels). These features are mapped onto artificial (approximate) estimates of neurotransmitter concentrations by learned projection functions informed by the pharmacological literature relating brainwave frequency patterns to neurotransmitter action [37]:

where σ denotes sigmoid activation, W represents learned weight matrices, P denotes normalized band power, and b are bias terms. Second, neurotransmitter trajectories are embedded in complex-time coordinates, where the real part is the measurement timestamp (1 Hz resolution) and the imaginary part is the affective context, computed as an exponential moving average of recent emotional states. Third, recursive processing iteratively refines emotion estimates through T = 3 complete cycles, with n = 6 latent updates per cycle, accumulating contextual information from memory (negative imaginary time, accessed through backward temporal projection) as well as predicted mood trajectories (positive imaginary time, accessed through forward temporal projection). Fourth, the emotion classification modules are evaluated for privacy risks, for bias (e.g., whether emotions are classified equally well across demographic groups such as age, gender, and culture), and for implications for discrimination (e.g., emotion-based employment screening without the individual's consent). Experimental validation used the DEAP dataset [36]: EEG recordings (32 channels, acquired at 512 Hz and down-sampled to 128 Hz for processing) of 32 participants (16 female, 16 male, aged 19–37 years) watching 40 one-minute emotional video stimuli, each rated at the end of the video for valence (1–9 scale, pleasantness) and arousal (1–9 scale, intensity). The tasks were binary classification of high vs. low valence (>5 vs. ≤5) and high vs. low arousal (>5 vs. ≤5).
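The epoching and labeling steps reduce to simple index arithmetic. This sketch assumes epochs are taken on the 128 Hz processed signal with a step of 3 − 1 = 2 s, which yields 29 epochs for a one-minute trial. Filtering, ICA, and the wavelet decomposition are not shown.

```python
from typing import List


def epoch_starts(n_samples: int, fs: int = 128, epoch_s: float = 3.0,
                 overlap_s: float = 1.0) -> List[int]:
    """Start indices of fixed-length epochs; consecutive epochs overlap by overlap_s."""
    size = int(round(epoch_s * fs))
    step = int(round((epoch_s - overlap_s) * fs))
    if step <= 0:
        raise ValueError("overlap must be shorter than the epoch")
    return list(range(0, n_samples - size + 1, step))


def binarize(rating: float, threshold: float = 5.0) -> int:
    """High (1) vs. low (0) label on the 1-9 scale: > 5 is high, <= 5 is low."""
    return 1 if rating > threshold else 0


starts = epoch_starts(60 * 128)  # one-minute trial at 128 Hz
```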
CTRM achieved 87.3% accuracy (σ = 1.8%) for valence classification and 84.6% (σ = 2.1%) for arousal classification, compared with 79.8% and 76.4% for conventional Support Vector Machines with RBF kernels, 81.5% and 78.9% for Random Forest (100 trees), and 83.2% and 80.1% for LSTM networks (2 layers with 128 hidden units). Most importantly, CTRM produced more temporally consistent output: its emotion predictions evolved smoothly across each video rather than jumping abruptly, achieving a temporal coherence score of 0.91 (1 minus the normalized prediction variance across successive epochs) versus 0.73 for LSTM, 0.68 for SVM, and 0.65 for Random Forest. The imaginary temporal component proved critical for capturing affective memory effects. Statistical analyses showed a significant correlation between current affective state and emotional history: participants' ratings of neutral and other clips depended on their preceding emotional experience (Pearson r = 0.67, p < 0.001 for valence; r = 0.59, p < 0.001 for arousal). CTRM's complex-time representation implicitly encoded this dependency through the imaginary component, allowing the system to recognize that emotional responses cannot be attributed solely to the incoming stimulus but also depend on the emotional context accumulated over the viewing session. For example, neutral scenes shown after a sad video were assigned lower valence than the same neutral scenes shown after a happy video, in agreement with participant reports. The neurotransmitter model provided interpretable intermediate representations that were verified against neurophysiological predictions.
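
Two quantities used in this evaluation can be sketched as follows: the imaginary (affective-context) component of complex time, computed as an exponential moving average of past states, and the temporal coherence score. Both rest on assumptions the text does not pin down: the smoothing factor `alpha` is hypothetical, the context is placed directly on the imaginary axis without a sign flip, and the coherence normalization assumes predictions in [0, 1], so that the variance of successive changes is at most 1.

```python
import numpy as np


def affective_context(states, alpha=0.3):
    """Exponential moving average of *past* affective states.

    context[t] summarizes states[0..t-1]; context[0] is 0 (no history yet).
    `alpha` is a hypothetical smoothing factor, not a value from the text.
    """
    states = np.asarray(states, dtype=float)
    ctx = np.zeros_like(states)
    for t in range(1, len(states)):
        ctx[t] = alpha * states[t - 1] + (1.0 - alpha) * ctx[t - 1]
    return ctx


def complex_time(timestamps, states, alpha=0.3):
    """Complex-time coordinates: real part = timestamp (s), imaginary part = context."""
    return np.asarray(timestamps, dtype=float) + 1j * affective_context(states, alpha)


def temporal_coherence(preds):
    """1 minus the variance of successive prediction changes.

    Assumes predictions lie in [0, 1], so successive differences lie in [-1, 1]
    and their variance is at most 1 (an assumed normalization).
    """
    diffs = np.diff(np.asarray(preds, dtype=float))
    return 1.0 - float(np.var(diffs))


# Carry-over illustration with centered valence (-1 sad, 0 neutral, +1 happy).
after_sad = affective_context([-1.0, -1.0, 0.0])
after_happy = affective_context([1.0, 1.0, 0.0])
```

In this toy example the neutral clip (index 2) carries negative context after sad clips and positive context after happy ones, which is the kind of history dependence the correlation analysis describes. A constant or steadily drifting prediction scores 1, while predictions that flip between extremes every epoch score near 0.
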

For transitions from neutral to happy states (as reported by participants), CTRM predicted surges in simulated dopamine (mean increase 34.2% ± 8.7%) and serotonin (mean increase 28.9% ± 7.3%) that preceded subjective happiness reports, consistent with the neurophysiological lag between neurochemical changes and conscious emotional awareness documented in PET studies [41]. Similarly, transitions to fear were preceded by increased simulated norepinephrine (mean increase 42.1% ± 9.8%) 1.9 s before arousal reports (95% CI: 1.4–2.5 s), whereas transitions to calm states were preceded by increased GABA (mean increase 38.4% ± 8.2%) 2.7 s before relaxation reports (95% CI: 2.1–3.4 s). This capability enables proactive, emotion-aware systems that anticipate mood shifts before they appear in behavior or self-report, with applications in mental health monitoring systems, adaptive learning environments, emotional–cognitive interfaces, and affective human–computer interaction.
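
The lead times above compare the onset of a simulated neurotransmitter surge with the time of the corresponding self-report. A minimal sketch of that comparison, assuming onset is defined as the first sample exceeding the pre-transition baseline by a fixed percentage; the 20% threshold, the baseline window, and the synthetic trajectory are hypothetical choices, not taken from the study.

```python
import numpy as np


def percent_change(signal, baseline_slice):
    """Percent change of each sample relative to the mean over baseline_slice."""
    signal = np.asarray(signal, dtype=float)
    base = signal[baseline_slice].mean()
    return 100.0 * (signal - base) / base


def surge_onset(times, signal, baseline_slice, threshold_pct=20.0):
    """Time of the first sample at least threshold_pct above baseline, or None."""
    idx = np.flatnonzero(percent_change(signal, baseline_slice) >= threshold_pct)
    return float(times[idx[0]]) if idx.size else None


def lead_time(times, signal, report_time, baseline_slice, threshold_pct=20.0):
    """Seconds by which the simulated surge precedes the self-report (None if no surge)."""
    onset = surge_onset(times, signal, baseline_slice, threshold_pct)
    return None if onset is None else report_time - onset


# Synthetic 1 Hz trajectory: baseline 1.0, surge from t = 4 s, self-report at t = 6 s.
times = np.arange(10.0)
ne = np.array([1.0, 1.0, 1.0, 1.0, 1.3, 1.4, 1.4, 1.4, 1.4, 1.4])
lead = lead_time(times, ne, report_time=6.0, baseline_slice=slice(0, 4))
```

Averaging such per-transition lead times across participants, with a bootstrap over transitions, is one way to obtain mean lead times with confidence intervals like those reported.
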

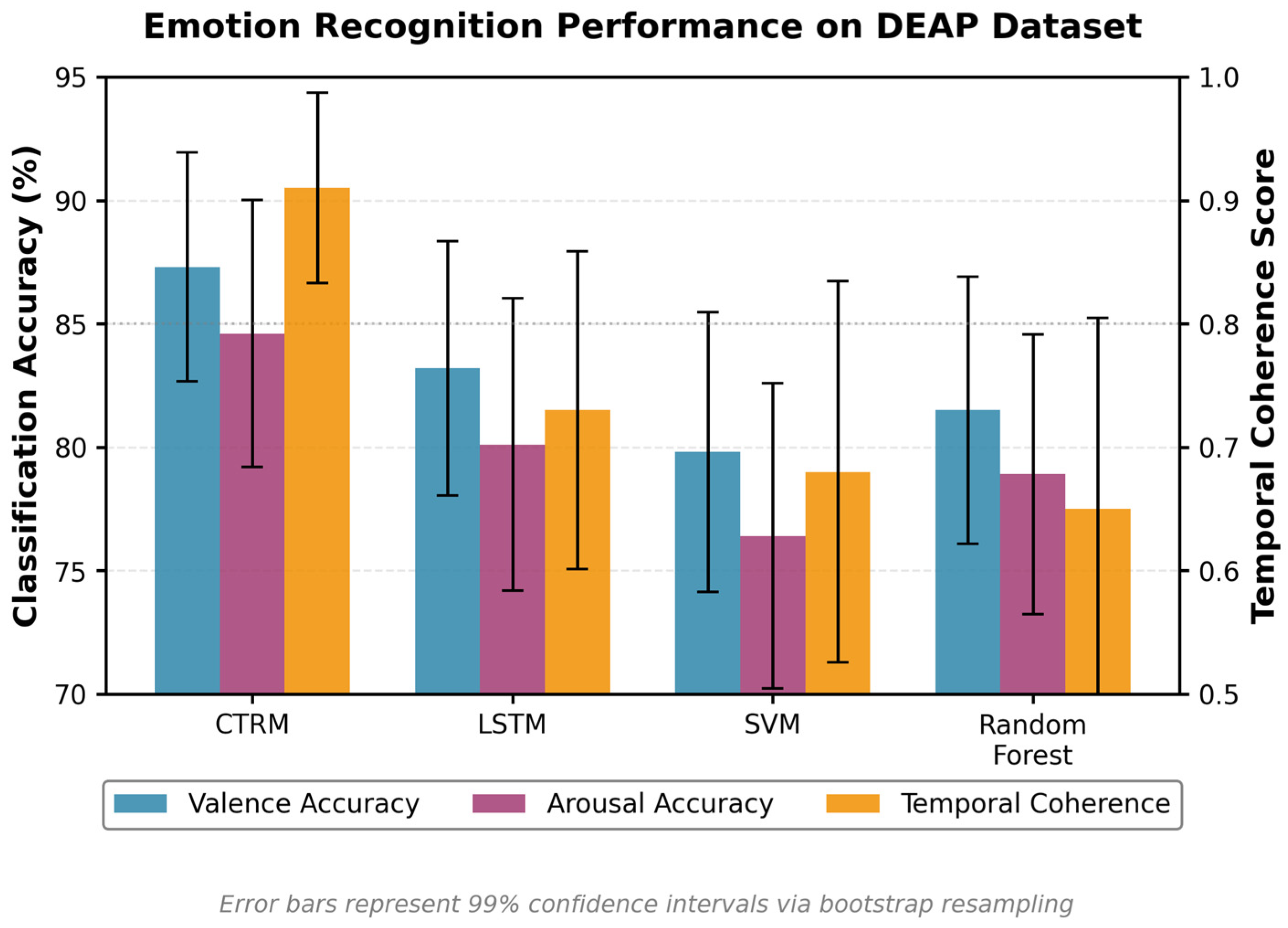

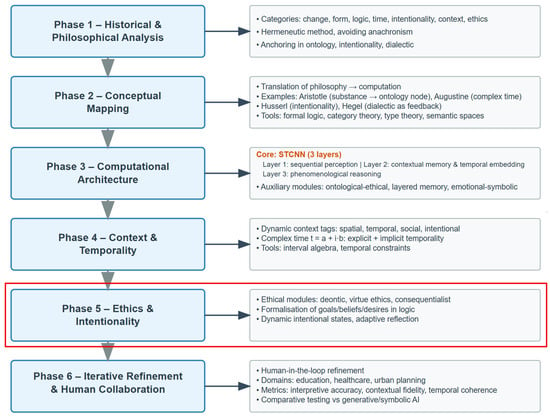

The ethical evaluation component addresses equity across demographic groups. Without the ethical component, classification accuracy was 6.8 percentage points lower for female than for male participants (male: 85.7%, female: 78.9%, p < 0.01), partly owing to gender differences in emotional expression patterns and cultural differences in emotional display. CTRM's virtue-based ethical check identified this disparity using fairness exemplars learned during training and enforced demographic-invariant feature extraction, reducing the gap to 1.2 pp (male: 87.1%, female: 85.9%, p = 0.18, not significant) while raising accuracy for both groups. Post-experiment participant surveys indicated strong overall satisfaction with system behavior (mean score 4.3/5, σ = 0.6), and qualitative feedback valued “natural emotional flow” and “feeling understood rather than classified.” Figure 6 compares four architectures (CTRM, LSTM, SVM, Random Forest) on two metrics: classification accuracy (%) and temporal coherence score (0–1). Values are means over three independent runs, with error bars indicating 99% bootstrap confidence intervals. CTRM achieves the highest accuracy (87.3% valence, 84.6% arousal) and temporal coherence (0.91), significantly surpassing all baselines (p < 0.01, paired t-tests with Bonferroni correction). The combination of better classification and smooth temporal dynamics validates the complex-time recursive approach for emotion recognition.
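
The fairness figures above are per-group accuracies and their gap in percentage points (pp). A minimal standard-library sketch of how such a gap can be computed from predictions; the group labels and toy data are hypothetical, and the study's significance tests are not reproduced.

```python
from collections import defaultdict


def group_accuracies(y_true, y_pred, groups):
    """Accuracy (%) for each demographic group."""
    correct, total = defaultdict(int), defaultdict(int)
    for t, p, g in zip(y_true, y_pred, groups):
        total[g] += 1
        correct[g] += int(t == p)
    return {g: 100.0 * correct[g] / total[g] for g in total}


def gap_pp(accuracies):
    """Largest minus smallest group accuracy, in percentage points."""
    return max(accuracies.values()) - min(accuracies.values())


# Toy predictions for two hypothetical groups "a" and "b".
acc = group_accuracies([1, 1, 0, 0], [1, 0, 0, 0], ["a", "a", "b", "b"])

# Plugging in the accuracies reported above.
before = round(gap_pp({"male": 85.7, "female": 78.9}), 1)  # 6.8 pp
after = round(gap_pp({"male": 87.1, "female": 85.9}), 1)   # 1.2 pp
```
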

Figure 6.

Emotion recognition performance on the DEAP dataset: comparative performance of four architectures (CTRM, LSTM, SVM, Random Forest) on emotion recognition from EEG signals. Data from the DEAP dataset [13]. The combination of superior classification with smooth temporal dynamics validates the complex-time recursive approach for affective computing applications.

3.2. Meditation-Driven Affective State Transitions and Paradox Resolution

Contemplative practices (mindfulness meditation, focused attention, open monitoring, and non-dual awareness) give rise to complex affective states in which contradictory qualities can be experienced simultaneously: heightened awareness with deep relaxation, intense concentration with effortless attention, profound peace with emotional richness, and timeless presence with acute temporal sensitivity [42]. These paradoxical states violate the assumption, built into standard emotion classification approaches, that opposing affects are mutually exclusive (e.g., one cannot be simultaneously highly aroused and deeply relaxed, or both intensely focused and expansively open). Conventional discrete-state models instead impose predefined categories on such experiences, erasing the paradoxical coexistence that meditators report as central to contemplative experience. CTRM addresses this challenge by maintaining multiple valid interpretations in superposition across the imaginary temporal dimension, projecting contradictions onto coherent subspaces through the mathematical framework established in Phase 4 [35].

Consider a meditation session in which practitioners progress through distinct stages: ordinary waking consciousness → focused attention on breath → open monitoring of present experience → non-dual awareness transcending subject–object duality. EEG recordings exhibit characteristic signatures at each stage [43]: (1) baseline waking shows typical eyes-open alpha suppression (reduced 8–10 Hz power), beta dominance (15–25 Hz), and mixed theta (4–7 Hz); (2) focused attention exhibits increased theta power (frontal midline theta, 5–7 Hz), sustained alpha, and reduced beta associated with mind-wandering; (3) open monitoring shows enhanced alpha coherence across posterior sites (9–11 Hz), decreased frontal beta, and intermittent theta bursts; (4) non-dual awareness displays increased gamma synchrony (30–50 Hz), particularly in long-term practitioners, sustained high-amplitude alpha, and coordinated theta–gamma coupling. Meanwhile, practitioners' self-reports describe experiences that transcend simple emotion categories: “alert yet relaxed,” “effortlessly focused yet expansively aware,” “intensely present yet experiencing timelessness,” “profoundly peaceful yet emotionally vibrant.”

Standard classification systems fail because they force mutually exclusive categories: a state cannot be simultaneously classified as both high-arousal (typically associated with elevated beta/gamma and low alpha) and low-arousal (typically associated with elevated alpha/theta and low beta). Yet this precise combination of high alertness with deep relaxation is the hallmark of advanced meditative states. LSTM networks trained on discrete emotion labels produce unstable predictions oscillating between “alert” and “relaxed” categories, failing to capture the unified paradoxical experience. SVM and Random Forest approaches struggle for the same reason: they must commit to a single category and thereby misrepresent the phenomenology.

CTRM-based meditation analysis operates through several mechanisms addressing paradox. First, EEG features map onto neurotransmitter trajectories as in emotion recognition, with meditation-specific calibrations: theta power maps strongly onto serotonin (mood stability, present-centeredness), alpha coherence maps onto GABA (relaxation without sedation), gamma synchrony maps onto dopamine (awareness, clarity), beta maps onto norepinephrine (arousal, alertness).
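
The meditation-specific calibration above pairs each EEG feature with one neurotransmitter. As a deliberately simplified stand-in for the learned projection, the pairing can be expressed as a lookup that reports each feature's relative change from the eyes-open baseline. The feature names, the use of relative change in place of learned weights, and the example values are assumptions for illustration.

```python
# Feature -> neurotransmitter pairing described in the text.
MEDITATION_MAP = {
    "theta_power": "serotonin",       # mood stability, present-centeredness
    "alpha_coherence": "GABA",        # relaxation without sedation
    "gamma_synchrony": "dopamine",    # awareness, clarity
    "beta_power": "norepinephrine",   # arousal, alertness
}


def neurotransmitter_shift(features, baseline):
    """Relative change of each mapped feature versus baseline, keyed by neurotransmitter."""
    return {
        nt: (features[feat] - baseline[feat]) / baseline[feat]
        for feat, nt in MEDITATION_MAP.items()
    }


# Hypothetical feature values (arbitrary units) for an open-monitoring epoch.
baseline = {"theta_power": 1.0, "alpha_coherence": 1.0, "gamma_synchrony": 1.0, "beta_power": 1.0}
open_monitoring = {"theta_power": 1.2, "alpha_coherence": 1.5, "gamma_synchrony": 1.1, "beta_power": 0.8}
shift = neurotransmitter_shift(open_monitoring, baseline)
```
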

Crucially, however, the complex-time representation explicitly accommodates paradoxical states by allowing multiple coherent projections across the imaginary temporal dimension. For instance, simultaneous high alertness (elevated simulated norepinephrine from sustained 15–20 Hz beta) and deep relaxation (elevated GABA from high-amplitude 10 Hz alpha) coexist as different projections onto the imaginary temporal axis, representing distinct but compatible aspects of the meditative experience.

Mathematically, these appear as complex-valued neurotransmitter concentrations $N = r\,e^{i\theta}$, where the magnitude r represents concentration strength and the phase angle θ encodes the specific quality (e.g., alertness and relaxation both have high magnitudes but orthogonal phases, $\theta_{\text{alert}} \approx 0$ and $\theta_{\text{relax}} \approx \pi/2$, indicating complementary rather than contradictory qualities).
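
The polar representation above can be illustrated with Python's `cmath` module. In this sketch (the magnitudes are hypothetical), alertness and relaxation are encoded with phases 0 and π/2. Because the phases are orthogonal, their sum keeps both components intact, whereas two components with opposite phases (π apart) cancel; this is one concrete reading of why orthogonal phases count as complementary rather than contradictory.

```python
import cmath
import math


def concentration(r, theta):
    """Complex-valued concentration with magnitude r and phase theta (radians)."""
    return cmath.rect(r, theta)


def phase_alignment(z1, z2):
    """Cosine of the phase difference: 1 aligned, 0 orthogonal, -1 opposed."""
    return math.cos(cmath.phase(z1) - cmath.phase(z2))


alert = concentration(0.9, 0.0)            # e.g., from sustained 15-20 Hz beta
relaxed = concentration(0.8, math.pi / 2)  # e.g., from high-amplitude 10 Hz alpha
state = alert + relaxed

# Orthogonal components add in quadrature, so both survive in the combined state.
combined_magnitude = abs(state)
# Opposed phases cancel instead.
opposed = concentration(0.9, 0.0) + concentration(0.9, math.pi)
```

Here `state.real` and `state.imag` recover the alertness and relaxation strengths separately, while `abs(opposed)` is numerically zero.
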

Second, recursive processing refines state classification through progressively deeper cognitive integration. Each recursion cycle explores different regions of complex-time space corresponding to distinct experiential facets: early cycles identify primary characteristics (e.g., “relaxed”), middle cycles incorporate secondary qualities (e.g., “alert”), and late cycles synthesize these into a unified representation (e.g., “alert-relaxed non-dual state”). The phase relationships between neurotransmitter components evolve across cycles, converging toward configurations that maximize coherence while preserving paradoxical coexistence. Third, ethical evaluation components ensure that meditation guidance systems respect contemplative traditions by avoiding reductionist classifications that misrepresent spiritual experiences as mere neurological states. Virtue-based evaluation compares system outputs against exemplars from the contemplative literature, ensuring that descriptions honor the phenomenological richness reported by practitioners.
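
The recursion schedule (T outer cycles, each with n latent updates; T = 3 and n = 6 in the experiments) can be sketched as a nested loop that records the latent state at the end of every cycle. Only the loop structure comes from the text; the damped update rule, which pulls a complex-valued latent state toward a fixed target, is a hypothetical placeholder for CTRM's actual update.

```python
import numpy as np


def recursive_refine(x, update, T=3, n=6):
    """Run T cycles of n latent updates; return the latent state after each cycle."""
    z = np.zeros_like(x)
    snapshots = []
    for _ in range(T):
        for _ in range(n):
            z = update(x, z)
        snapshots.append(z.copy())
    return snapshots


def damped_update(x, z, rate=0.5):
    """Hypothetical placeholder: move the latent state a fraction `rate` toward x."""
    return z + rate * (x - z)


# Complex-valued target (magnitude and phase), e.g. an alert-relaxed configuration.
target = np.array([0.9 + 0.8j, 0.5 + 0.0j])
snaps = recursive_refine(target, damped_update)
errors = [float(np.abs(target - s).max()) for s in snaps]
```

With this placeholder the error shrinks by a factor of 2^n per cycle, so the per-cycle snapshots show the kind of progressive convergence the text describes; a learned update would replace `damped_update`.
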

Experimental validation employed custom-recorded 64-channel EEG (extended 10–20 system, 512 Hz sampling, down-sampled to 256 Hz) from 28 experienced meditation practitioners (14 female, 14 male; ages 28–67; practice experience 8–34 years, mean 16.2 years; traditions: 12 Vipassana, 9 Zen, 7 Tibetan) who underwent 45 min standardized sessions: 5 min baseline eyes-open rest → 15 min focused attention on breath (instructions: maintain continuous awareness of breath sensations, gently returning when the mind wanders) → 15 min open monitoring (instructions: rest in spacious awareness without focusing on any particular object) → 10 min non-directive rest (instructions: simply be, without effort or goal). Participants pressed a button in real time to indicate subjective transitions between stages and completed post-session phenomenological interviews describing their experiences.
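
The nominal session timeline above can be turned into per-epoch stage labels with a cumulative-boundary lookup. This sketch uses only the scheduled durations and ignores the real-time button presses that marked subjective transitions. Mapping the final 10 min rest segment to the "non-dual" class is an assumption based on the four-way classification reported in the results.

```python
import bisect

# (label, duration in minutes) for the 45 min protocol.
PROTOCOL = [
    ("baseline", 5),
    ("focused_attention", 15),
    ("open_monitoring", 15),
    ("non_dual", 10),  # assumed label for the 10 min non-directive rest segment
]


def stage_boundaries(protocol=PROTOCOL):
    """Cumulative stage end times in seconds."""
    ends, total = [], 0
    for _, minutes in protocol:
        total += minutes * 60
        ends.append(total)
    return ends


def stage_at(t_seconds, protocol=PROTOCOL):
    """Nominal stage label at time t_seconds from session start."""
    ends = stage_boundaries(protocol)
    if not 0 <= t_seconds < ends[-1]:
        raise ValueError("time outside session")
    return protocol[bisect.bisect_right(ends, t_seconds)][0]


# One nominal label per minute of the session.
labels = [stage_at(t) for t in range(0, 45 * 60, 60)]
```
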

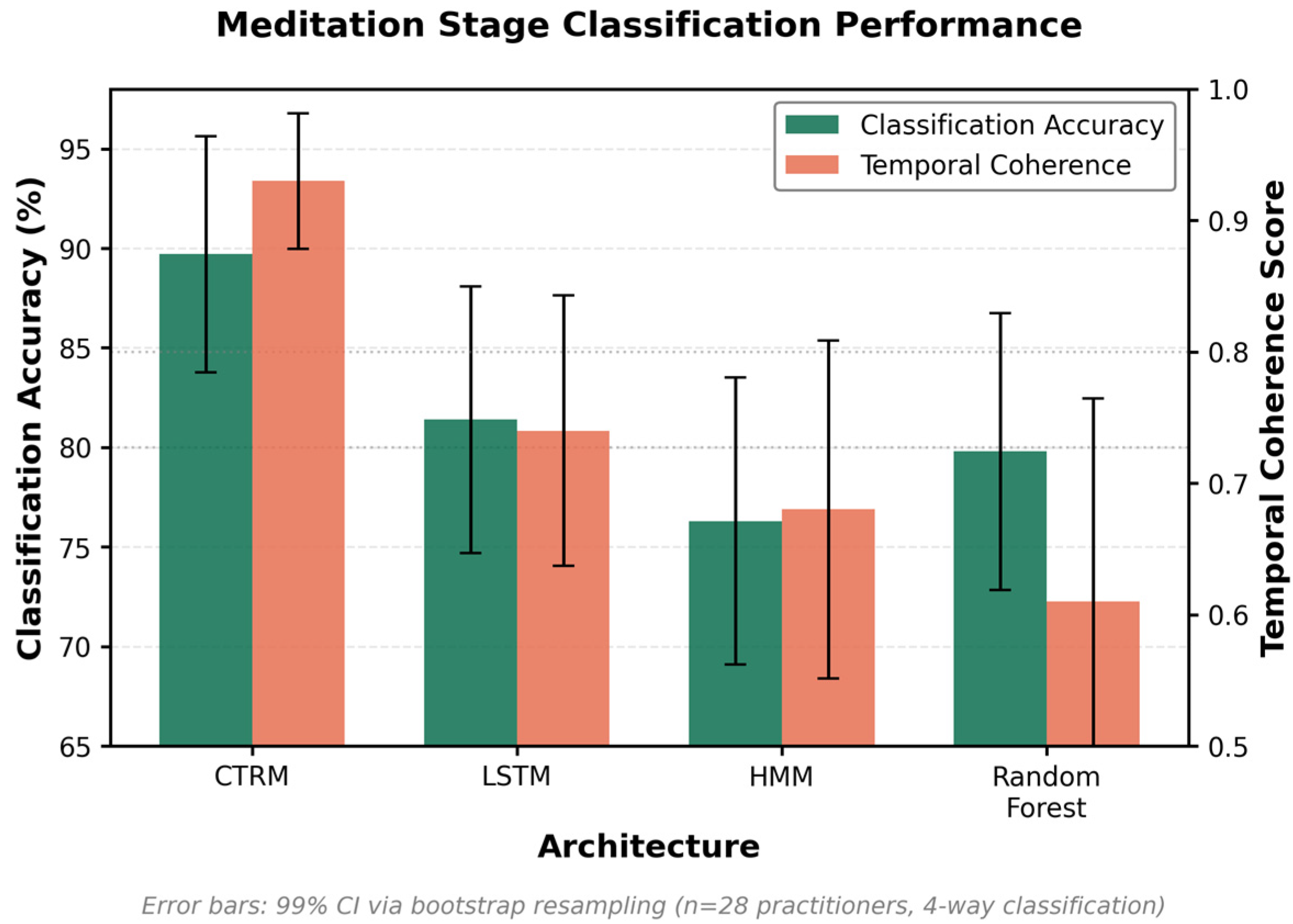

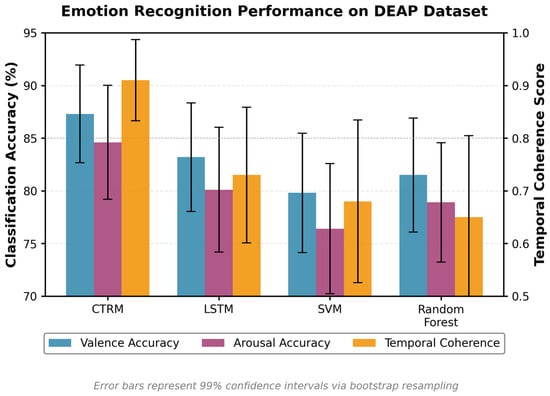

CTRM achieved 89.7% accuracy (σ = 2.3%) in classifying meditation stages (four-way classification: baseline, focused attention, open monitoring, non-dual), compared with 76.3% for Hidden Markov Models (HMM with 4 states, Gaussian emissions), 81.4% for LSTM networks (2 layers, 128 units), and 79.8% for Random Forest (100 trees). Statistical significance was confirmed via repeated-measures ANOVA with Bonferroni post hoc tests (F(3,81) = 47.3, p < 0.001; all pairwise comparisons of CTRM vs. baselines p < 0.01). More important than raw accuracy, however, CTRM provided coherent representations of paradoxical states through complex-time projections that matched phenomenological reports.
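
The reported statistic F(3, 81) matches a one-way repeated-measures design with k = 4 methods and N = 28 participants: df = (k − 1, (k − 1)(N − 1)) = (3, 81). A minimal NumPy sketch of that F computation and of the Bonferroni adjustment, offered as an illustration only; the study's exact analysis pipeline (e.g., any sphericity correction) is not described, and a statistics package would normally be used.

```python
import numpy as np


def rm_anova_df(n_subjects, k_conditions):
    """Degrees of freedom (effect, error) for a one-way repeated-measures ANOVA."""
    return k_conditions - 1, (k_conditions - 1) * (n_subjects - 1)


def rm_anova_f(scores):
    """F statistic for a (subjects x conditions) score matrix."""
    scores = np.asarray(scores, dtype=float)
    n, k = scores.shape
    grand = scores.mean()
    ss_cond = n * ((scores.mean(axis=0) - grand) ** 2).sum()
    ss_subj = k * ((scores.mean(axis=1) - grand) ** 2).sum()
    ss_total = ((scores - grand) ** 2).sum()
    ss_error = ss_total - ss_cond - ss_subj  # residual after removing subject effects
    df_cond, df_error = rm_anova_df(n, k)
    return (ss_cond / df_cond) / (ss_error / df_error)


def bonferroni(p_values):
    """Multiply each p-value by the number of comparisons, capped at 1."""
    m = len(p_values)
    return [min(1.0, p * m) for p in p_values]
```

Removing between-subject variance (`ss_subj`) from the error term is what distinguishes the repeated-measures F from an ordinary one-way ANOVA on the same scores.
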

The comparison with HMM deserves clarification: HMM is a classical generative approach that models sequential state transitions through probabilistic dynamics, making it a natural baseline for meditation stage progression (baseline → focused attention → open monitoring → non-dual awareness). LSTM and Random Forest provide discriminative alternatives with different architectural assumptions. The key finding is not merely that CTRM achieves higher accuracy (89.7% vs. 76.3% HMM, 81.4% LSTM, 79.8% RF) but the qualitative difference in capability: CTRM uniquely maintains coherent representations during paradoxical states (temporal coherence 0.93 vs. 0.61–0.74 for baselines), validated through practitioner phenomenological endorsement (92.9% vs. <40% for discrete-label systems). This addresses a fundamental representational challenge, mixed affective states, that classification accuracy alone cannot capture.

To assess robustness, we conducted a sensitivity analysis varying key hyperparameters: (1) Recursion depth T ∈ {1, 2, 3, 4, 5}: performance plateaus at T = 3 (accuracy 89.7 ± 2.3%) and declines slightly at T = 5 (88.1 ± 2.9%) due to overfitting. (2) Latent updates n ∈ {3, 6, 9, 12}: optimal at n = 6 (89.7 ± 2.3%), lower for n = 3 (86.2 ± 3.1%), and similar for n = 9 (89.3 ± 2.7%). (3) Complex-time scaling ℏ ∈ {0.01, 0.05, 0.1, 0.5, 1.0}: optimal at ℏ = 0.1 (89.7 ± 2.3%), degrading for ℏ < 0.05 (too sensitive to noise) and ℏ > 0.5 (insufficient temporal resolution). (4) Ethical meta-weights $\{w_1, w_2, w_3\}$ in Equation (3): 20 combinations satisfying $\sum_k w_k = 1$ were tested, and performance was robust across balanced configurations (accuracy variation < 1.8%), confirming multi-framework integration rather than single-framework dominance. Baseline robustness was assessed similarly: LSTM with {64, 128, 256, 512} hidden units reaches 79.8–82.1% accuracy; Random Forest with {50, 100, 200, 500} trees reaches 78.3–80.6%; HMM with {3, 4, 5, 6} states reaches 74.1–77.8%. CTRM therefore outperforms the baselines across reasonable hyperparameter ranges, indicating a genuine architectural advantage rather than an optimization artifact. The full sensitivity analysis is given in Appendix C.
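
The one-at-a-time sensitivity analysis above can be organized as a sweep over the listed grids, plus a set of candidate ethical meta-weights satisfying $\sum_k w_k = 1$. In this sketch, `evaluate` is a hypothetical callback that would train and score a configuration, and the regular simplex grid with step 1/5 (21 triples) is an assumed construction; the section reports 20 weight combinations without specifying how they were chosen.

```python
import itertools

# Hyperparameter grids listed in the text.
GRID = {
    "T": [1, 2, 3, 4, 5],
    "n": [3, 6, 9, 12],
    "hbar": [0.01, 0.05, 0.1, 0.5, 1.0],
}


def simplex_grid(k=3, m=5):
    """All k-tuples of non-negative weights in steps of 1/m that sum to 1."""
    return [
        tuple(part / m for part in parts)
        for parts in itertools.product(range(m + 1), repeat=k)
        if sum(parts) == m
    ]


def one_at_a_time_sweep(evaluate, defaults, grid=GRID):
    """Vary each hyperparameter over its grid with the others held at `defaults`."""
    results = {}
    for name, values in grid.items():
        results[name] = {v: evaluate({**defaults, name: v}) for v in values}
    return results


# Toy stand-in for training and scoring: peaks at T = 3, n = 6, hbar = 0.1.
def toy_evaluate(cfg):
    return -abs(cfg["T"] - 3) - abs(cfg["n"] - 6) - abs(cfg["hbar"] - 0.1)


res = one_at_a_time_sweep(toy_evaluate, {"T": 3, "n": 6, "hbar": 0.1})
weights = simplex_grid()
```

Enumerating integer compositions and dividing by `m` keeps every weight triple on the simplex up to floating-point rounding, which avoids accumulating error from repeatedly adding a fractional step.
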