Abstract

In the presence of multitasking, a worker has to handle interruptions from waiting jobs and routine jobs concurrently while processing a primary job. Over the past decade, various studies in this direction have sought to determine how jobs should be scheduled to reduce the effect of multitasking. In this paper, two late-job problems in line with the classical late-job problems are tackled. In contrast to the classical setting, in which all jobs must be completed by the worker, we introduce outsourcing: some jobs are outsourced, so the worker processes only the on-time jobs and handles the interruptions from the waiting jobs. Each outsourced job is assigned to a single freelancer, which ensures that all jobs are completed on time. The overhead is the total charge paid to the freelancers, i.e., the total outsourcing cost. If all jobs have the same service charge, the problem is called the total number of outsourced jobs (TNOJ) problem, in line with the classical total number of late jobs (TNLJ) problem. If the service charges differ, the problem is called the total weighted number of outsourced jobs (TWNOJ) problem, in line with the classical total weighted number of late jobs (TWNLJ) problem. For general settings, it is proved that the TNOJ problem is NP-hard and the TWNOJ problem is strongly NP-hard. If the interruption of a waiting job is proportional to its remaining processing time, both the TNOJ problem and the TWNOJ problem can be solved in pseudo-polynomial time, with running times polynomial in n and P, where n is the number of jobs and P denotes the sum of their processing times.

1. Introduction

Integrating human factors into scheduling problems has been studied for many decades [1]. Two notable factors are the learning effect [2,3,4] and the aging (i.e., deterioration) effect [5]. In the last decade, multitasking has attracted scheduling theorists to incorporate this human factor into scheduling problems. Multitasking refers to a human behavior in which a person handles multiple tasks within a period of time [6,7]. While some studies [8,9] have found that multitasking can help a worker improve the skill of handling multiple tasks, its actual benefit has yet to be established. Multitasking is normally not recommended [6,7], as it can cause learning problems [10], attention deficit trait (ADT) [11] and stress [12,13], and it can also decrease a person's productivity [14,15,16,17]. Moreover, mental response delay is inevitable in task switching [18], and humans have difficulty switching between functional brain networks [19].

In reality, however, multitasking is necessary in some jobs, such as air traffic control (ATC) and flight control [20]. An air traffic controller needs to schedule the take-offs and landings of multiple flights. A pilot needs to control a flight as well as communicate with the air traffic controllers (sometimes together with pilots of other flights) during take-off and landing. On a weekend evening, the kitchen team of a restaurant has to serve orders concurrently and at the same time handle interruptions from guests' special requests, such as reheating a soup or redoing a steak. During a semester, a professor needs to concurrently conduct multiple research projects, supervise multiple research students, prepare teaching materials and respond to student enquiries in weekly consultations.

This led Hall, Leung and Li [21,22] to incorporate an interruption function and a switching cost into scheduling models to capture multitasking behavior, thereby delineating the effect of multitasking from the perspective of operations scheduling.

1.1. Interruption-Based Multitasking Scheduling Models

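Take a set of jobs $\mathcal{J} = \{J_1, J_2, \dots, J_n\}$, where job $J_i$ has processing time $p_i$ and due date $d_i$. Let $S = (S_1, S_2, \dots, S_n)$ be a schedule, i.e., a sequence of the jobs. With multitasking, the owners of the jobs $S_{k+1}, \dots, S_n$ could interrupt the worker while he is processing $S_k$. The worker will have to switch to each interrupting job and handle part of it. The amount of a job to be processed in its interruption is defined by the interruption function $f_i(\cdot)$, for $i = 1, \dots, n$. The amount of time needed to switch from $J_i$ to $J_j$ is defined by the switching cost function $s(J_i, J_j)$.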

1.1.1. Model

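Using the terminology in [21,22], $S_k$ is called the primary job and $S_{k+1}, \dots, S_n$ are called the waiting jobs when $S_k$ is being processed. Let $p^{k}_{S_j}$ be the remaining processing time of $S_j$ after $S_1, \dots, S_k$ have been completed. We can get that

$p^{k}_{S_j} = p^{k-1}_{S_j} - f_{S_j}\bigl(p^{k-1}_{S_j}\bigr)$ for $j = k+1, \dots, n$, and $p^{0}_{S_j} = p_{S_j}$ for $j = 1, \dots, n$. Thus, the completion time $C_{S_k}$ of $S_k$ equals $C_{S_{k-1}}$, plus the interruption times $f_{S_j}\bigl(p^{k-1}_{S_j}\bigr)$ of the waiting jobs $S_j$, $j = k+1, \dots, n$, together with the switching times they incur, plus the remaining processing time $p^{k-1}_{S_k}$ of the primary job,

if $k < n$, and $C_{S_n} = C_{S_{n-1}} + p^{n-1}_{S_n}$ if $k = n$. Here, $C_{S_0} = 0$.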
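To make the recursion concrete, the following minimal Python sketch simulates the interruption-based model for a fixed job sequence. It assumes no switching cost and a single interruption function `f` shared by all jobs; both are simplifications of ours, and the function name `completion_times` is hypothetical.

```python
from typing import Callable, Sequence


def completion_times(p: Sequence[float], f: Callable[[float], float]) -> list[float]:
    """Simulate the interruption-based model for jobs processed in the given order.

    While the k-th job is the primary job, every waiting job j > k interrupts the
    worker once and has f(remaining_j) of its work done. Switching cost is ignored.
    """
    remaining = [float(x) for x in p]   # remaining processing time of each job
    clock = 0.0
    completions = []
    for k in range(len(remaining)):
        # Interruptions use the waiting jobs' remaining times before this step.
        for j in range(k + 1, len(remaining)):
            amount = f(remaining[j])
            clock += amount
            remaining[j] -= amount
        clock += remaining[k]           # finish what is left of the primary job
        remaining[k] = 0.0
        completions.append(clock)
    return completions


# Interruption proportional to the remaining time, with ratio 1/2.
print(completion_times([4, 2, 6], lambda r: r / 2))   # [8.0, 10.5, 12.0]
```

Without switching cost and idle time, the last completion time equals the total processing time (here 12), while the earlier jobs are delayed by the work done on the waiting jobs.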

1.1.2. Objectives

With this problem setting and a constant switching cost, Hall, Leung and Li [21,22] developed polynomial-time algorithms for the total weighted completion time (TWCT) problem and the maximum lateness (ML) problem. They further showed that the total number of late jobs (TNLJ) problem (resp. the total weighted number of late jobs (TWNLJ) problem) is NP-hard (resp. strongly NP-hard), and developed a polynomial-time (resp. pseudo-polynomial-time) algorithm for the case in which the interruption function is proportional to the remaining processing time. Empirical analyses of the effect of multitasking on operations scheduling were then conducted.

1.1.3. Real Case Study I: Research Projects’ Supervision

Consider a college professor who supervises a number of research students, including master's and doctoral students. Suppose that the professor is going to retire in five years, i.e., 60 months (resp. is not going to recruit new students in the coming five years), and that the four current students are expected to graduate in one, two, three and five years. One major problem is that the students are weak in English and are unable to write papers in English. So, as their supervisor, the professor needs to complete conference and journal papers for the students to fulfill their graduation criteria.

The professor thus needs to estimate the processing times for conducting the remaining projects and writing the papers, i.e., . Next, the professor needs to schedule the meetings and the time spent on each meeting, which is clearly the interruption time. (Even more challenging, the meeting times are constrained by the students' availability.) Measured in months, the students' due dates (i.e., graduation dates) are 12, 24, 36 and 60, respectively. With all of this information, the supervisor can schedule the work to maximize the total number of on-time graduations.

For students who are concerned about the quality of their thesis (resp. dissertation), the above setting is not applicable, as they are willing to postpone their graduation. However, some students, such as some EMBA students, pay for their theses and certifications, and they are much more concerned about their graduation dates than about what they have contributed (resp. done). Our setting reflects this reality and explains why a supervisor has to multitask.

1.1.4. Real Case Study II: Startup Intelligent Service Firm

An intelligent service startup firm needs to deliver its intelligent service by a due date requested by its investors; if the startup cannot meet this commitment, it might not obtain further funding. Usually, the system supporting the intelligent service consists of multiple sub-systems. Without a time limit, the startup could develop all sub-systems in-house. With a due date and a limited workforce, however, the startup has to outsource the development of some sub-systems to freelance programmers. If the development costs of the sub-systems differ, this outsourcing problem is essentially our TWNOJ problem.

1.2. Subsequent Works Along Interruption-Based Models

Many related works have been conducted in the last decade. For clarity, the settings of the multitasking scheduling problems are summarized in Table 1. Under specific settings summarized in Table 1, Sum and Ho [23] statistically analyzed the effect of multitasking on the expected performance of a worker. As anticipated, the performance (e.g., TWCT) degrades drastically when the number of jobs is large. If the switching cost is symmetric (i.e., switching from one job to another costs the same as switching back) rather than constant, Sum and Ho [24] showed that the polynomial-time algorithms developed by Hall, Leung and Li [22] for the TWCT and ML problems can equally be applied. Sum and Ho [24] further introduced two slightly different late-job problems in which the owners of the late jobs are not allowed to interrupt the worker, so that the worker can finish more jobs on time.

Table 1.

Settings for the multitasking scheduling problems.

Multitasking scheduling problems with asymmetric switching costs were investigated in [25], where it was shown that the makespan problem is already NP-hard but that some special cases are polynomial-time solvable. Under further conditions, including a switching cost determined by two constants and a job-dependent factor, the makespan problem, the total completion time problem and the common due date assignment problem are polynomial-time solvable. By formulating a due date assignment problem as a linear assignment problem, Liu et al. [26] showed that the problem is polynomial-time solvable, and a special case admits a dedicated time bound. Various multitasking scheduling problems with position-dependent processing rates were studied in [27,28,29]. For a particular form of the interruption function, the makespan problem, the total completion time problem, the maximum lateness problem and the common due date assignment problem are polynomial-time solvable.

1.3. Other Multitasking Scheduling Models

Hall, Leung and Li [30] further introduced two other multitasking scheduling models. The first one is based on the idea of alternate period processing. Two time spans are assigned as the odd-period working time and the even-period working time, respectively. The jobs assigned to the odd periods must be different from the jobs assigned to the even periods, and the worker is required to switch to the other job after working on a job for a period of time. Algorithms were developed for the related scheduling problems. The other model deals with the problem in which the worker has to handle routine job interruptions while engaging in a primary job.

Subsequently, multitasking scheduling problems with progress control and lag-behind penalty [31], machine-dependent slack due-window assignment [32], multi-agent settings [33,34] and multi-agent multi-machine settings [35] were introduced. Liu, Chen & Tian [36] investigated multitasking scheduling problems in which job switching costs are incurred and the jobs are to be completed by two agents. Wu et al. [37] investigated multitasking scheduling problems in which two agents share a single machine. Yang et al. [38] investigated a two-agent setting with due date assignment. Fu, Hua & Zhao [39] investigated the setting of parallel machines with shared processing, i.e., each machine needs to share its processing time between multitasking jobs and routine jobs.

1.4. Interruption-Based Late-Job Problems Without Outsourcing

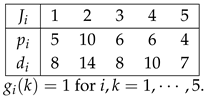

In the Hall–Leung–Li late-job problem setting [22], the in-house worker has to complete all the jobs and so he needs to handle the interruptions from the owners of the waiting jobs. Suppose we have five jobs to be processed. Their processing times and due dates are given below.

As all waiting jobs can interrupt the primary job, only one job (either or ) can be on-time and four other jobs are late.

For , the completion time of the on-time job is . For , the completion time of the on-time job is that .

1.5. Interruption-Based Late-Job Problems with Outsourcing (Our Problems)

If no late penalty is incurred, it is acceptable to schedule the jobs as in either of the two schedules above. In reality, however, a late penalty could be incurred and it could be unaffordable, so the in-house worker cannot be left to finish all the jobs. Some of them have to be outsourced. If each outsourced job is assigned to a single freelance worker, the in-house worker can finish more jobs on time, and all jobs will be completed on time. For the above example, one possible schedule is to partition the jobs into two sets.

The jobs in are assigned to the in-house worker. has to be processed before . The jobs in are outsourced to three freelance workers. The overhead is simply the service charges for the freelance workers.

1.5.1. Objectives (Total Outsourcing Costs)

Here, we consider two objectives resembling the classical total number of late jobs and total weighted number of late jobs. With outsourcing, all jobs must be on time; in other words, no job is late. Therefore, instead of a late-job indicator, we use a binary indicator that equals one if a job is outsourced and zero otherwise.

First, suppose the service charges of all jobs are equal. The objective to be minimized is the total number of outsourced jobs (TNOJ), i.e., the sum of the outsourcing indicators over all jobs. It is in line with the classical TNLJ problem. We denote this problem as , where the shorthand ‘’ stands for multitasking, ‘’ stands for outsourcing and

Second, each job has its own service charge for . The objective to be minimized is the total weighted number of outsourced jobs (TWNOJ), i.e., the sum of the service charges of the outsourced jobs. It is in line with the classical TWNLJ problem. We denote this problem as , where is the service charge to be paid to the freelance worker.

As suggested by a reviewer, either objective can simply be called the total outsourcing cost. The objective of our problems is to find schedules that can minimize their respective outsourcing costs.

1.5.2. Assumptions

Scheduling has been recommended as a method for optimizing the efficiency of a worker (resp. a team) in completing jobs in the presence of multitasking [20,40,41]; without proper scheduling, the effect of multitasking could be severe [6,42,43]. For both the TNOJ and TWNOJ problems, it is assumed that the information about all n jobs is available at time 0; the jobs do not arrive dynamically. Thus, we make the following assumptions: (i) the jobs' processing times, (ii) their due dates, (iii) their outsourcing costs and (iv) their interruption models are available at time 0; (v) the actual processing time of each job is identical to the value available at time 0; and (vi) there is only one working team processing the on-time jobs.

For problems in which the jobs arrive dynamically, dynamic scheduling algorithms as presented in [44,45] or AI-based scheduling algorithms [46,47] could be applied. If the actual processing times differ from those available at time 0, the sensitivity analysis advocated in [48,49] is needed to analyze the robustness of the proposed scheduling algorithms under uncertainty.

1.6. Contributions and Organization of the Paper

In this paper, four major contributions are presented. Section 2 shows that (i) the TNOJ problem is NP-hard and (ii) the TWNOJ problem is strongly NP-hard. For the special case in which the interruption of a waiting job is proportional to its remaining processing time, Section 3 shows that (iii) the TNOJ problem and (iv) the TWNOJ problem can each be solved in pseudo-polynomial time. Section 4 concludes the paper.

2. Complexity Analysis

To analyze the complexities of the TNOJ and TWNOJ problems, we need the following lemma regarding the due dates of the jobs in an optimal schedule for the in-house worker.

Lemma 1.

For the late-job problems, there exists an optimal schedule in which a set of on-time jobs is assigned to the in-house worker and the remaining jobs are outsourced. Moreover, the in-house jobs are scheduled in earliest due date (EDD) order with no inserted idle time.

Proof.

As is a nondecreasing function of for all i, there exists an optimal schedule with no inserted idle time. Let be an optimal schedule with m on-time jobs.

If , we can construct a new schedule by interchanging and , i.e.,

Since and , is on-time. On the other hand, the total amount of interruptions from is the same. . So, is on-time. is also an optimal schedule. By repeatedly applying pairwise interchange, a schedule consisting of all on-time jobs sequenced in EDD order can be obtained. The proof is completed. □
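Lemma 1 implies a simple exhaustive check for tiny instances: enumerate candidate in-house sets, sequence each one in EDD order only, and simulate the interruptions. The Python sketch below does this under our own simplifying assumptions (no switching cost, one interruption function `f` for all jobs, and outsourced jobs no longer interrupting); the name `min_outsourced` is hypothetical, and the search is exponential, so it only serves to cross-check small examples.

```python
from itertools import combinations
from typing import Callable, Sequence


def min_outsourced(p: Sequence[float], d: Sequence[float],
                   f: Callable[[float], float]) -> tuple[int, tuple[int, ...]]:
    """Exhaustive TNOJ search for tiny instances (no switching cost).

    Returns (number of outsourced jobs, in-house job indices in EDD order).
    Following Lemma 1, each candidate in-house set is tested only in EDD order,
    and outsourced jobs no longer interrupt the in-house worker.
    """
    n = len(p)
    edd = sorted(range(n), key=lambda i: d[i])
    for size in range(n, -1, -1):               # largest in-house sets first
        for subset in combinations(edd, size):   # combinations keep EDD order
            remaining = {i: float(p[i]) for i in subset}
            clock, feasible = 0.0, True
            for k, i in enumerate(subset):
                for j in subset[k + 1:]:         # waiting in-house jobs interrupt once
                    amount = f(remaining[j])
                    clock += amount
                    remaining[j] -= amount
                clock += remaining[i]
                if clock > d[i]:                 # job i would be late
                    feasible = False
                    break
            if feasible:
                return n - size, subset
    return n, ()                                 # not reached: the empty set is feasible
```

For example, `min_outsourced([4, 2, 6], [7, 11, 12], lambda r: r / 2)` returns `(1, (0, 1))`: keeping all three jobs makes the first one finish at time 8, after its due date 7.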

Without loss of generality, it is assumed that the processing time of each job is smaller than its due date. Each job has a service charge that is paid if it is outsourced. If all service charges are equal, the problem is the TNOJ problem; if they are not all equal, the problem is the TWNOJ problem.

2.1. Total Number of Outsourced Jobs (TNOJ)

If there is no multitasking and the late jobs are outsourced, the problem is essentially the classical TNLJ problem, which can be solved by the Moore algorithm in O(n log n) time. In the presence of multitasking, however, the TNOJ problem is NP-hard.
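For reference, the classical algorithm mentioned here (usually called the Moore-Hodgson algorithm) can be sketched in Python as follows. It ignores multitasking entirely; the function name `moore_hodgson` and the `(p, d)` input format are our own choices.

```python
import heapq


def moore_hodgson(jobs: list[tuple[int, int]]) -> list[int]:
    """Classical Moore-Hodgson algorithm (no multitasking).

    jobs: (processing_time, due_date) pairs. Returns the indices of a maximum
    set of on-time jobs in EDD order; all other jobs are late (here: outsourced).
    """
    order = sorted(range(len(jobs)), key=lambda i: jobs[i][1])   # EDD order
    heap: list[tuple[int, int]] = []   # max-heap on processing time via (-p, index)
    total = 0                          # completion time of the current on-time set
    for i in order:
        p, d = jobs[i]
        heapq.heappush(heap, (-p, i))
        total += p
        if total > d:
            neg_p, _ = heapq.heappop(heap)   # drop the longest job kept so far
            total += neg_p
    return sorted((i for _, i in heap), key=lambda i: jobs[i][1])


print(moore_hodgson([(2, 3), (3, 4), (1, 5), (4, 6)]))   # [0, 2]
```

The jobs removed from the heap are the ones that would be outsourced; in the example, jobs 1 and 3 are outsourced.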

Theorem 1.

The problem is NP-hard.

Proof.

Without loss of generality, we assume that there is no switching cost. We prove the theorem by reduction from the Equal Cardinality Partition problem, which is known to be NP-hard [50]: given a set of 2q elements and a positive integer size for each element, does there exist a subset of exactly q elements whose total size equals the total size of the remaining q elements? The key to completing this proof is the definition of the interruption function.

Given an arbitrary instance of Equal Cardinality Partition, we construct an instance of the TNOJ problem with 3q jobs. We call the first 2q jobs the element jobs and the last q jobs the unit-time jobs. Furthermore, we set that and N is a positive number such that for all i. The processing times are defined as follows:

Their due dates are defined as follows:

For the interruption, only the element jobs are allowed to interrupt but not the unit-time jobs, i.e.,

The threshold y for the TNOJ is q, i.e., at most q jobs may be outsourced.

Clearly, the above construction can be done in polynomial time. We will show that a solution to the constructed instance exists if and only if a solution to the given instance of the Equal Cardinality Partition problem exists.

- (⇒) Suppose there exists such that and . We can construct a schedule simply as follows:where .

As only the element jobs will interrupt all the unit-time jobs, the completion time of is , which is equal to , and the completion time of is , which is equal to ; all jobs in are on-time and .

- (⇐) Let be an optimal schedule. Hence, . By Lemma 1, the set of unit-time jobs in must be scheduled before the set of element jobs. Moreover, must have exactly q unit-time jobs and q element jobs on-time. As , to process an additional element job on-time will lead to discarding half of the unit-time jobs. Therefore, must have exactly q unit-time jobs and followed by q element jobs.

As the completion time of the last unit-time job must be earlier than , we have , or . On the other hand, the completion of the last element job must be earlier than . So, we get that , or . Therefore, we can get that . □

2.2. Total Weighted Number of Outsourced Jobs (TWNOJ)

It is known that the classical TWNLJ problem is NP-hard [51]. In the presence of multitasking and outsourcing, the TWNOJ problem is strongly NP-hard.
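For contrast, the classical TWNLJ problem without multitasking admits a well-known pseudo-polynomial dynamic program (due to Lawler and Moore) over the completion time of the last on-time job in EDD order. The Python sketch below is our own illustration of that classical DP, not part of the reduction; integer processing times are assumed and `min_weighted_late` is a hypothetical name.

```python
def min_weighted_late(jobs: list[tuple[int, int, int]]) -> int:
    """Classical 1||sum w_j U_j (no multitasking) by an O(nP) dynamic program.

    jobs: (processing_time, due_date, weight) with integer processing times.
    Returns the minimum total weight of late (here: outsourced) jobs.
    """
    jobs = sorted(jobs, key=lambda job: job[1])        # on-time jobs in EDD order
    total_p = sum(p for p, _, _ in jobs)
    NEG = float("-inf")
    # best[c] = max on-time weight when the on-time jobs finish exactly at time c
    best = [NEG] * (total_p + 1)
    best[0] = 0
    for p, d, w in jobs:
        # Iterate c downwards so that each job is used at most once.
        for c in range(min(d, total_p), p - 1, -1):
            if best[c - p] + w > best[c]:
                best[c] = best[c - p] + w
    return sum(w for _, _, w in jobs) - int(max(best))
```

For example, `min_weighted_late([(2, 3, 1), (3, 4, 5), (1, 5, 1), (4, 6, 2)])` returns 3: keeping the second and third jobs on time (weight 6) is optimal. The table is indexed by total processing time, which is why the running time depends on P and not only on n.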

Theorem 2.

The problem is strongly NP-hard.

Proof.

We prove the theorem by reduction from the Exact Cover by 3-Sets (X3C) problem, which is known to be strongly NP-hard [50]. An X3C instance contains a finite set $X$ with $|X| = 3q$, where q is a positive integer, and a collection $\mathcal{C}$ of 3-element subsets of $X$. The X3C problem asks whether there exists a subcollection $\mathcal{C}' \subseteq \mathcal{C}$ such that every element of $X$ appears in exactly one member of $\mathcal{C}'$. In other words, the members of $\mathcal{C}'$ are mutually disjoint and their union equals $X$.

Let and . Given an arbitrary instance of X3C, the corresponding instance of the problem can be constructed. First, we define a set of jobs.

For the sake of presentation, we call the jobs , , ⋯, the element jobs, referring to the elements in X, and the jobs , , ⋯, the member jobs, referring to the member sets in .

For each job , its processing time, due date and weight are defined as follows:

In (5)–(7),

For the element jobs, there is no interruption, i.e., for . For the member jobs, their interruptions are defined as follows:

for . The threshold of TWNOJ y is defined as

where .

- (⇒) Let be a subset of such that and for . It is clear that we can construct the schedule as follows:All the jobs are on-time and .

- (⇐) Let be a feasible schedule and by Lemma 1, consists of a set of element jobs scheduled in EDD order and followed by a set of member jobs. All the jobs in are on-time and . Furthermore, must have exactly element jobs and q member jobs. Here, we use the notation to differentiate it from the schedule above.

As the processing time of a member job is larger than , to process an additional element job on-time will lead to discarding all element jobs. In the end, the TWNOJ value will be larger than the threshold. In the reverse case, discarding any number of element jobs again cannot reduce the value of TWNOJ. Hence, must have exactly element jobs and q member jobs. For the sake of presentation,

We further denote that , to differentiate it from . Its property will be investigated shortly.

As the completion time of the last job is , it is clear that all q member jobs are on-time. For the element jobs, we have that

Now, we are going to figure out under what condition the inequality (11) holds. Let be the number of times appeared in . Clearly,

As for , we can get that

Moreover, the TWNOJ must be smaller than the threshold. We get that

or equivalently

It has been shown in (Online Appendix pp. 5–6, [22]) that is the unique solution for (12)–(14). In other words, the number of times the numbers appeared in are all one. and . The X3C problem is solved. The proof is completed. □

3. Special Case:

Theorems 1 and 2 have shown that job scheduling in the presence of multitasking is, in general, NP-hard. For the special case in which the interruption of a waiting job is proportional to its remaining processing time and there is no switching cost, Hall et al. [22] showed that the TNLJ problem is polynomial-time solvable if all the jobs are completed by a single agent.

For the problems investigated in this section, the amount of interruption of a waiting job (say ) is proportional to its remaining processing time . Moreover, it is assumed that there is no switching cost and that the agent can outsource jobs. Let . for , , where and .
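Under this proportional-interruption assumption, the remaining processing times and the completion times have a closed form. The derivation below is a sketch in notation of our own choosing, not necessarily the paper's: $\alpha \in (0,1)$ is the interruption ratio, $[k]$ is the k-th in-house job in EDD order, $m$ is the number of in-house jobs and $t = \sum_{j=1}^{m} p_{[j]}$. Only in-house jobs interrupt, since the owners of outsourced jobs no longer do so.

```latex
\begin{aligned}
p^{k}_{[j]} &= p^{k-1}_{[j]} - \alpha\, p^{k-1}_{[j]} = (1-\alpha)^{k}\, p_{[j]},
  && j > k,\\
C_{[k]} &= \sum_{l=1}^{k} p_{[l]} + \bigl(1-(1-\alpha)^{k}\bigr)\sum_{j=k+1}^{m} p_{[j]}
  = t - (1-\alpha)^{k} \sum_{j=k+1}^{m} p_{[j]}, && k = 1,\dots,m.
\end{aligned}
```

In particular, $C_{[m]} = t$: the last in-house job finishes exactly when all in-house work is done, which is why a single integer $t$ can stand in for the unknown completion time of the last on-time job.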

3.1. Total Number of Outsourced Jobs

Before describing our algorithm for solving the TNOJ problem, let us review the TNLJ problem posed by Hall, Leung and Li [22], in which the in-house worker has to process all the jobs.

3.1.1. Hall–Leung–Li TNLJ Algorithm

Let be a set of jobs with their due dates in EDD order, i.e., . Let be the set of on-time jobs after some jobs have been checked. The set of on-time jobs is set to be the empty set, i.e., . We further let be the set of on-time jobs after the jobs have been checked.

To determine whether has to be appended to , we check, assuming it is included, whether its completion time satisfies . If is included, the set of on-time jobs is and there will be on-time jobs. Thus, the completion time is given by

Let be the total processing times. We can equivalently get that

If , and otherwise , where . Then, we set and repeat the steps.

Eventually, an optimal schedule can be obtained. Suppose the schedule has m on-time jobs. , where is the set of on-time jobs and are late-jobs. This optimal schedule can be obtained by the above Moore-like algorithm in -time and the optimal schedule has the following property.

Property 1.

If , the completion times of the on-time jobs are given by

for . If , we can further have that the completion time of the job is given by

Property 1 gives a necessary condition for an optimal schedule of n jobs. It is the key idea behind the algorithms proposed shortly.

3.1.2. Idea Behind the Algorithm

For the algorithm presented in Section 3.1.1, all jobs have to be processed in-house and cannot be outsourced. Thus, the completion time of the last job is P. In our problem setting, the total number of outsourced jobs and the completion time of the last job in are unknown until the optimal schedule has been found. The only known property is stated in the following.

Property 2.

If is an optimal schedule, there exists an integer t () such that the following conditions hold. For ,

if is the completion time of the last on-time job.

It should be noted that Property 2 holds for all feasible schedules. The major problem is what the value of t should be.
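For a given t, the conditions of Property 2 can be checked directly. The Python sketch below assumes proportional interruption with a ratio `alpha` (our own name, with 0 < alpha < 1) and no switching cost, so the k-th in-house job completes at t - (1 - alpha)^k (t - q_k), where q_k is the total processing time of the first k in-house jobs. The function name `on_time_given_t` is hypothetical, and exact fractions avoid rounding at the due-date boundaries.

```python
from fractions import Fraction


def on_time_given_t(jobs: list[tuple[int, int]], alpha: Fraction, t: int) -> bool:
    """Check whether an in-house job set, given in EDD order as (p, d) pairs,
    is entirely on time when its processing times sum to t.

    Assumes proportional interruption with ratio alpha and no switching cost,
    so the k-th job completes at t - (1 - alpha)**k * (t - q_k).
    """
    if sum(p for p, _ in jobs) != t:
        return False                 # t must equal the in-house total processing time
    keep = 1 - alpha                 # share of a waiting job left after one interruption
    prefix = 0
    for k, (p, d) in enumerate(jobs, start=1):
        prefix += p
        if t - keep**k * (t - prefix) > d:
            return False
    return True


print(on_time_given_t([(4, 8), (2, 11), (6, 12)], Fraction(1, 2), 12))   # True
```

Here the three jobs complete at 8, 10.5 and 12, so each meets its due date; the same set is rejected for any other value of t.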

Problem 1.

What should the value of t be?

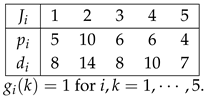

Since the value of t for the optimal schedule is unknown, the key idea is to enumerate all possible values of t (for ) for which the completion time of the last on-time job in the schedule and the optimal set of jobs satisfy conditions (17) and (18). For each t, the Moore-like algorithm presented in [21,22] for the late-job problem is applied to schedule the jobs. The pseudo-code is listed as Algorithm 1 below. The function FindSchedule is yet another Moore-like algorithm, which finds the set of on-time jobs.

Algorithm 1: Total Number of Outsourced Jobs (TNOJ)

We repeat the function FindSchedule() for . For each t, we obtain the number of on-time jobs. We then search over all t for the one that gives the largest number of on-time jobs while t equals the completion time of the last on-time job, i.e., the total processing time of the on-time jobs.

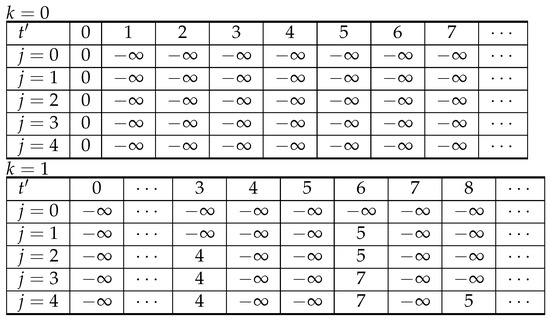

3.1.3. Function FindSchedule(t,D,)

The design of the function FindSchedule follows the Hall–Leung–Li algorithm for solving their TNLJ problem (Algorithm U, [22]). Instead of using P to calculate the completion time of a job, we use t. Hence, the optimality property of Algorithm U also holds for the function FindSchedule(t,D,).

Lemma 2.

Given an integer t and a set of jobs , the function FindSchedule is able to find the optimal number of on-time jobs in -time.

Proof.

The proof is inspired by the proof of the Moore algorithm in the book by Pinedo [51]. Let be the set of on-time jobs after the for-loop in the function FindSchedule has been executed k times. Moreover, let be the number of on-time jobs, i.e., . Given an integer t, for , the following conditions hold.

- (A) The number of jobs in must be maximized.

- (B) The completion time of the last job in , denoted as , must be the smallest amongst all other schedules consisting of on-time jobs.

- (C) The total processing time of is smaller than or equal to t, i.e., .

Condition (B) is equivalent to the condition that is the smallest.

It is clear that Conditions (A)–(C) hold for . We hypothesize that Conditions (A)–(C) hold for . That is to say, is maximized and

is the smallest. Moreover .

For , we can get that

Two possible cases will happen: (I) and ; (II) either one of them is violated.

- Case (I): As , must be maximized. Condition (A) holds for . By the hypothesis that , is the smallest. Hence, and it must be the smallest. The completion time of the last job is , which is the smallest for . Conditions (B) and (C) hold for .

- Case (II): As , . It is the largest number of on-time jobs. Condition (A) holds for . The total processing time is now given bywhere . By the hypothesis that and it is the smallest for , as . Condition (C) holds for . Moreover, must be the smallest for and hence,and is the smallest. Condition (B) holds for .

By the principle of mathematical induction, Conditions (A)–(C) hold for . For a given t, FindSchedule is able to find the maximum number of on-time jobs. The proof is completed. □

As the function FindSchedule is applied for all possible , there must exist an integer t in which the number of on-time jobs is maximum and . As running time complexity of the function FindSchedule is , the running time of Algorithm 1 is . So, we can state the following theorem without proof.

Theorem 3.

The problem can be solved by Algorithm 1 in -time.

3.1.4. Bounds on t ( and )

As a matter of fact, the values of t can be restricted to , . The maximum (resp. minimum) value t in Algorithm 1 can be smaller (resp. larger) than P (resp. 1). In such cases, the computational complexity of Algorithm 1 is given by instead of .

To obtain the value , we can apply the Moore algorithm [52] for scheduling n jobs. Let m be the number of on-time jobs obtained by the Moore algorithm [52]. The value can be defined as the sum of the m largest processing times.

To obtain the value , we can apply Algorithm U [22]. Let be the number of on-time jobs obtained. The value can be defined as the total processing time of the on-time jobs obtained.

The following theorem states that the maximum number of on-time jobs in the presence of multitasking must be smaller than or equal to m.

Theorem 4.

Suppose there is a list of n jobs and the number of on-time jobs obtained by the Moore algorithm is m, where . If for , the maximum number of on-time jobs obtained by Algorithm 1 must not be larger than m.

Proof.

The proof is by contradiction. Consider that for and there exists a schedule which consists of on-time jobs. The sum of the on-time jobs' processing times is . We can then get for

- that

For the first equality, we can equivalently get for (19) that

for . As , we can definitely get that and hence the schedule must be a feasible schedule in the absence of multitasking. The number of on-time jobs in the absence of multitasking would then be larger than m, which contradicts the optimality of the m on-time jobs obtained by the Moore algorithm for the TNLJ problem in the absence of multitasking. Therefore, the number of on-time jobs obtained by Algorithm 1 must not be larger than m. □

The following theorem states that the minimum number of on-time jobs in the presence of multitasking must be larger than or equal to the number of on-time jobs obtained by Algorithm U [22].

Theorem 5.

Suppose there is a list of n jobs and the number of on-time jobs obtained by the Algorithm U (Algorithm U, [22]) is , where . If for , the minimum number of on-time jobs obtained by Algorithm 1 must not be smaller than .

Proof.

Let be the number of on-time jobs. For , we can have that

If we set , it is clear that for and for . Therefore, the number of on-time jobs obtained by Algorithm 1 must not be smaller than the number obtained by Algorithm U. □

In this regard, we do not need to apply the function FindSchedule starting from . Instead, we start from and then and so on until . Thus, Algorithm 1 can solve the TNOJ problem in -time.

3.2. Total Weighted Number of Outsourced Jobs

By using the same idea as in Algorithm 1, a pseudo-polynomial-time algorithm can be developed for solving the TWNOJ problem. Here, we state an important property of the optimal schedule for the TWNOJ problem.

Property 3.

If is an optimal schedule and is the minimum total weighted number of outsourced jobs, there exists an integer such that the following conditions hold. For ,

if t is the completion time of the last on-time job and

3.2.1. Idea Behind the Algorithm

By Property 3, Algorithm WU1 in [22] can be applied to the TWNOJ problem, given that the completion time of the last scheduled job is t. Hence, by repeating Algorithm WU1 for each candidate t and comparing the resulting objective values, an optimal schedule can be found.
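The idea of fixing t and solving a late-job problem for each candidate can be illustrated with a small dynamic program. The Python sketch below is our own construction, not Algorithm WU1 or Algorithm TWNOJ: it assumes proportional interruption with ratio `alpha`, no switching cost and integer processing times, and for each t it tracks states (number of in-house jobs, their total processing time) over the jobs in EDD order. It takes roughly O(n^2 P^2) steps and is not claimed to match the complexity of the paper's algorithm; `min_outsourcing_cost` is a hypothetical name.

```python
from fractions import Fraction


def min_outsourcing_cost(jobs: list[tuple[int, int, int]], alpha: Fraction) -> int:
    """Minimum total outsourcing cost for proportional interruption (sketch).

    jobs: (p, d, w) triples with integer processing time p, due date d and
    service charge w >= 0. alpha: assumed interruption ratio, 0 < alpha < 1.
    No switching cost. With every w = 1 the result is the minimum number of
    outsourced jobs.
    """
    jobs = sorted(jobs, key=lambda job: job[1])      # in-house jobs in EDD order (Lemma 1)
    keep = 1 - alpha                                  # share left after one interruption
    total_p = sum(p for p, _, _ in jobs)
    total_w = sum(w for _, _, w in jobs)
    best_kept = 0                                     # the empty in-house set is feasible
    for t in range(1, total_p + 1):                   # guess the in-house total time t
        # table[(k, q)] = max charge kept by k in-house jobs whose times sum to q
        table = {(0, 0): 0}
        for p, d, w in jobs:
            for (k, q), value in list(table.items()):  # snapshot: each job used once
                q2 = q + p
                if q2 > t:
                    continue
                # Completion time of this job as the (k + 1)-th in-house job.
                if t - keep ** (k + 1) * (t - q2) <= d:
                    key = (k + 1, q2)
                    if key not in table or table[key] < value + w:
                        table[key] = value + w
        # Only sets whose processing times sum exactly to t are consistent with t.
        for (_, q), value in table.items():
            if q == t:
                best_kept = max(best_kept, value)
    return total_w - best_kept
```

With the jobs `[(4, 7, 5), (2, 11, 3), (6, 12, 4)]` and `alpha = Fraction(1, 2)`, the best in-house set is the first and third jobs (t = 10), so only the second job is outsourced, at cost 3.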

3.2.2. Algorithm TWNOJ

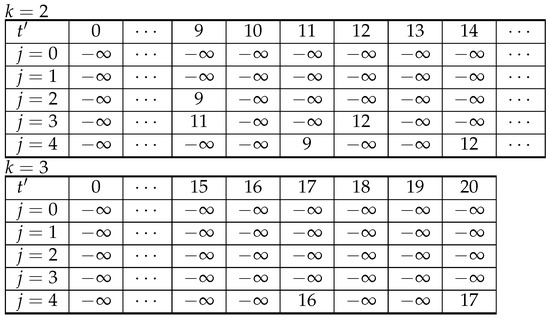

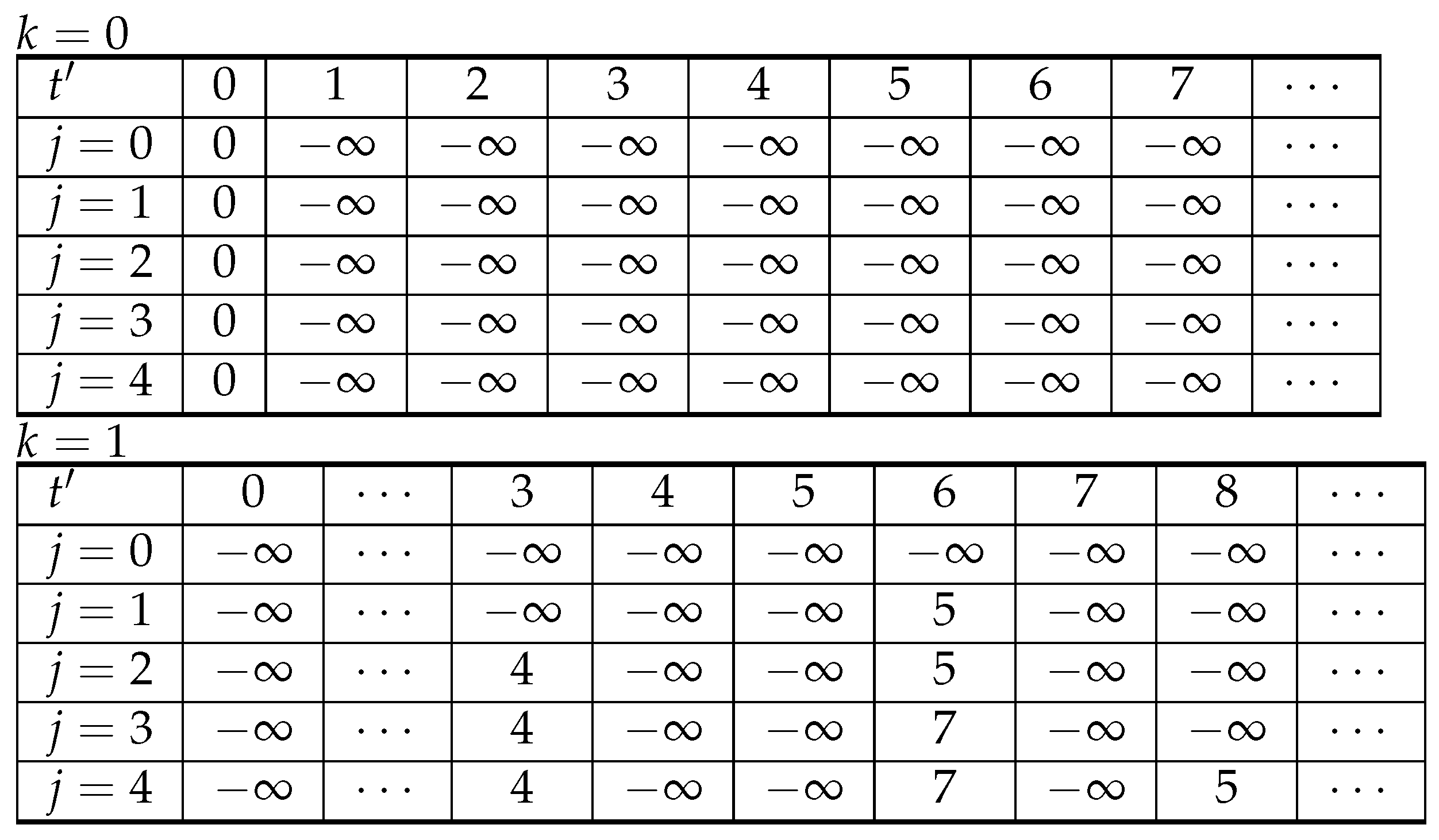

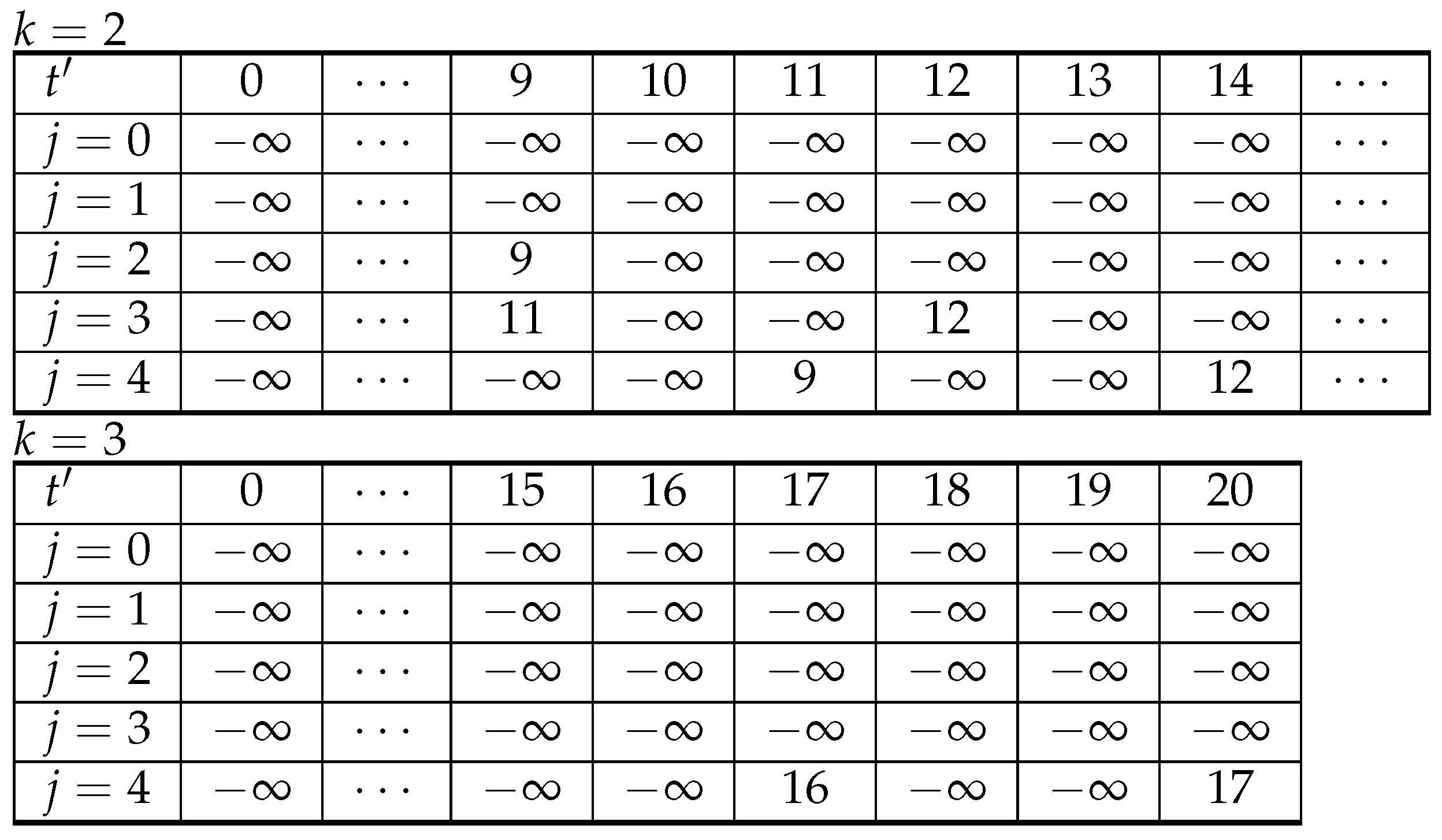

We introduce the optimal value function as , where and . To evaluate the value for , tables of size are needed for each . The boundary conditions are defined in a similar way as in Algorithm WU1. For and ,

The recurrence equation for and is defined as follows:

if and . Otherwise,

where

The optimal solution can be found in for all , and , the maximum value, i.e.,

3.2.3. Illustrative Example

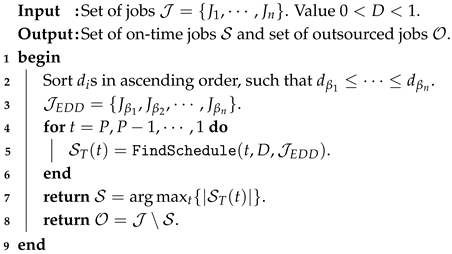

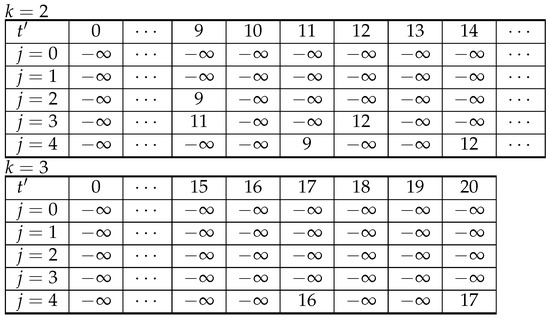

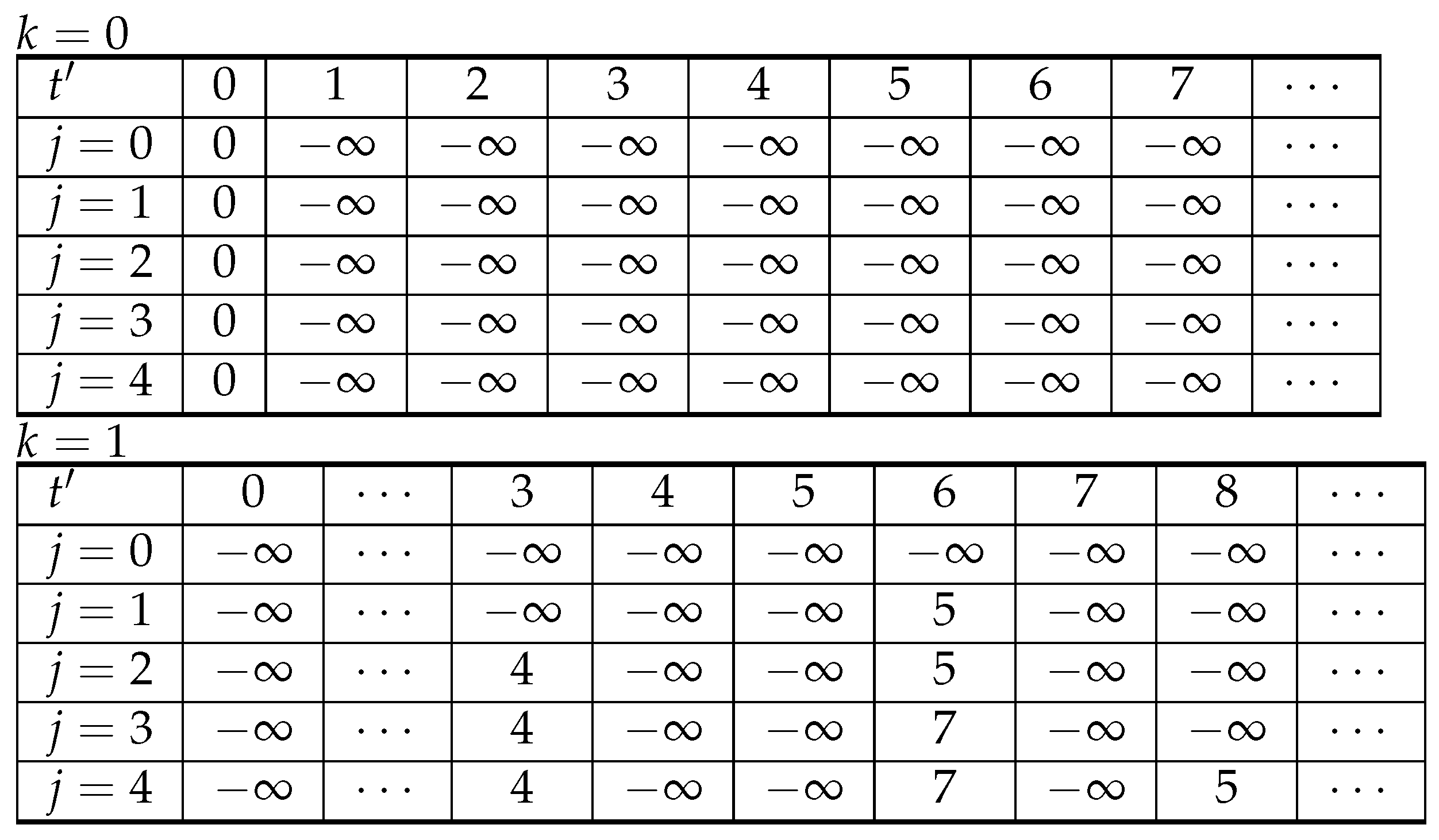

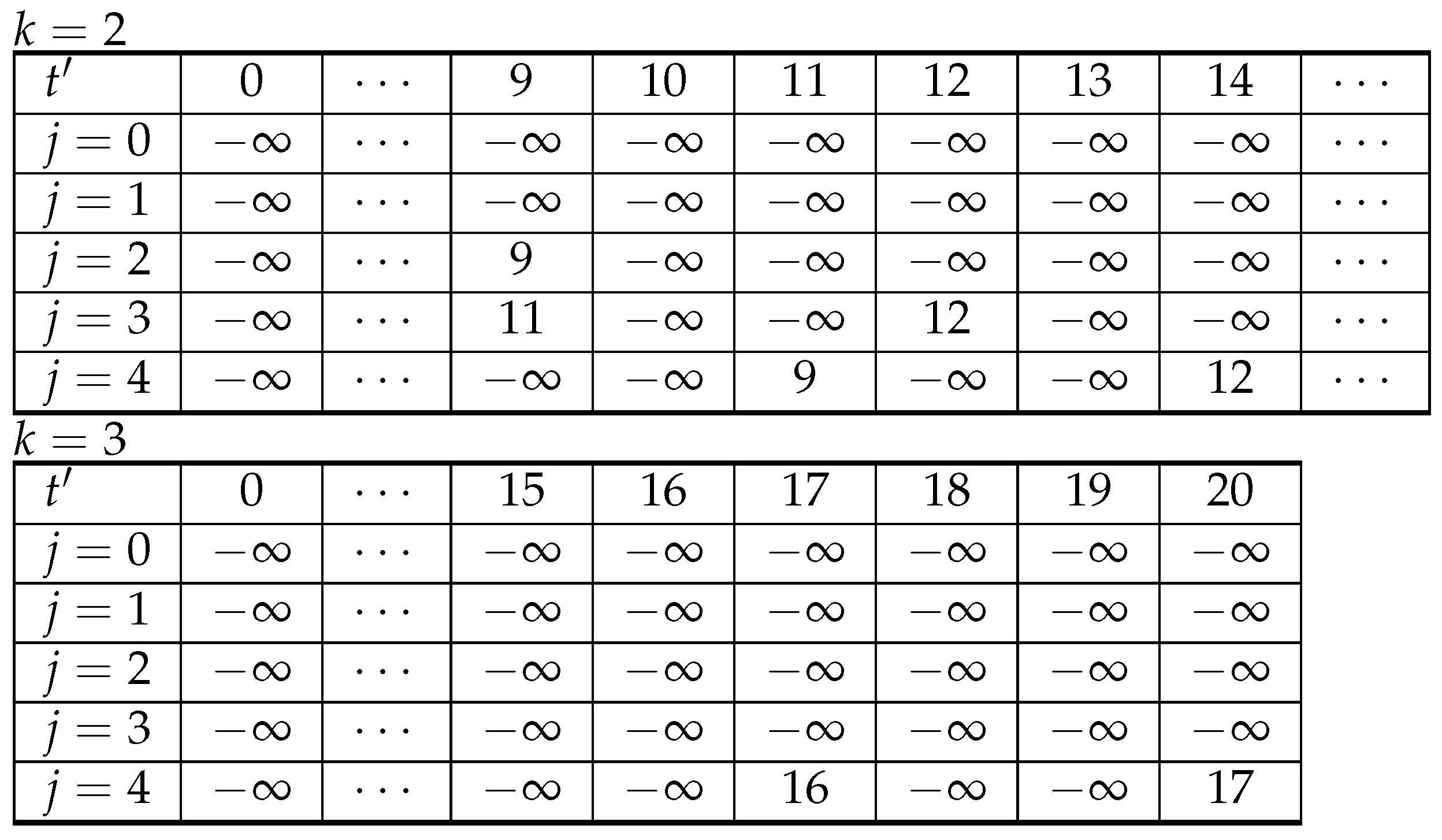

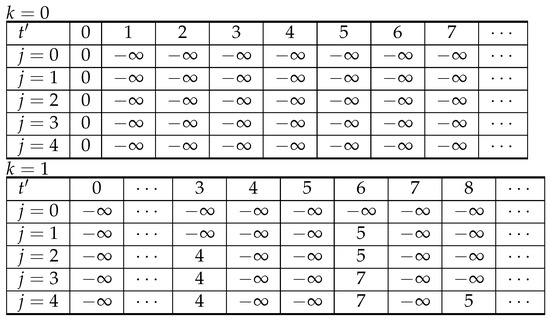

Figure 1 shows the results for the problem with the specifications listed in Table 2. Note that there are more than 20 sets of tables. Here, only the tables for are depicted, as they contain the information about the optimal schedule. The optimal schedule is . Job is outsourced, and the outsourcing charge is 4. The maximum weighted number of on-time jobs is 17.

Table 2. Illustrative example.

In comparison, under the TWNLJ setting of [22], the optimal schedule obtained by the Hall–Leung–Li Algorithm WU1 is and is the late job. The maximum weighted number of on-time jobs is 16, i.e.,

Figure 1. Algorithm 2 is applied to solve the problem specified in Table 2. Only the tables for and are shown here; the table for is omitted, as all are . After the algorithm has terminated, we search those for the minimum ; the corresponding schedule is an optimal schedule that minimizes the total weighted number of outsourced jobs (equivalently, the total outsourcing cost) .

Algorithm 2: FindSchedule (Moore-Like Algorithm)
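The body of Algorithm 2 is not reproduced in this text. As a point of reference for the phrase "Moore-like", the sketch below implements the classical Moore–Hodgson algorithm [52] for the interruption-free problem of minimizing the number of late jobs. It scans the jobs in EDD order and, whenever the current job would finish late, drops the longest job scheduled so far. It is not the authors' FindSchedule, which must additionally account for interruptions from waiting in-house jobs. The function name `moore_hodgson` is illustrative.

```python
import heapq

def moore_hodgson(jobs):
    """Classical Moore-Hodgson algorithm for minimising the number of late
    jobs on one machine with no interruptions. jobs: list of (p, d).
    Returns (on_time, late) as lists of job indices."""
    order = sorted(range(len(jobs)), key=lambda j: jobs[j][1])  # EDD order
    heap = []  # scheduled jobs; max-heap on p via negated keys
    t = 0      # completion time of the current on-time sequence
    late = []
    for j in order:
        p, d = jobs[j]
        heapq.heappush(heap, (-p, j))
        t += p
        if t > d:
            # Job j would be late: remove the longest job scheduled so far.
            neg_p, k = heapq.heappop(heap)
            t += neg_p  # neg_p is negative, so this subtracts its processing time
            late.append(k)
    on_time = sorted((j for _, j in heap), key=lambda j: jobs[j][1])
    return on_time, late

print(moore_hodgson([(4, 5), (3, 6), (2, 7), (5, 8)]))  # ([1, 2], [0, 3])
```

Removing the longest scheduled job keeps the on-time sequence as short as possible, which is why a single EDD pass suffices in the classical case. In the outsourcing interpretation, the jobs in `late` would be the ones handed to freelancers.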

3.2.4. Optimality

Since Algorithm TWNOJ simply applies Algorithm WU1 in [22] repeatedly for , the optimality of Algorithm WU1 carries over to Algorithm TWNOJ, and the reader is referred to Lemma 3 in [22] for the optimality proof. The running time of Algorithm TWNOJ is , as stated in the following theorem.

Theorem 6.

The problem can be solved by Algorithm TWNOJ in -time.

Proof.

By Lemma 4 and Algorithm WU2 in [22], the problem with a fixed t can be solved in -time. Repeating this for , the complexity of Algorithm TWNOJ is . □

4. Conclusions

In Ref. [22], Hall, Leung and Li introduced two late-job problems in the presence of multitasking. In their settings, the in-house worker has to process all the jobs, i.e., no job is outsourced. The worker therefore has to handle interruptions from the owners of the late jobs, which reduces the worker’s productivity. In this paper, we have introduced two parallel problems in which some jobs may be outsourced to freelance workers, with each freelancer working on only one outsourced job. As a result, the owners of the outsourced jobs no longer interrupt the in-house worker, and all in-house and outsourced jobs are completed on time. These parallel problems are called multitasking scheduling problems with outsourcing. A real case regarding project supervision is presented in Section 1.1.3.

4.1. Our Accomplishments

In reference to the classical late-job problems [51,53], two objectives are investigated. We assume that (i) the jobs’ processing times , (ii) their due dates , (iii) their outsourcing costs and (iv) their interruption models are available at time ; (v) the actual processing time of a job is identical to the information available at time , i.e., for ; and (vi) there is only one working team processing the on-time jobs. For the first objective, the service charges of all jobs are assumed to be the same, so the problem is to find a schedule that minimizes the total number of outsourced jobs (TNOJ). It is denoted by , where if the job is outsourced and otherwise. For the second objective, each job has its own service charge, say for , so the problem is to find a schedule that minimizes the total weighted number of outsourced jobs (TWNOJ). This problem is denoted by . We have proved that the TNOJ problem is NP-hard and the TWNOJ problem is strongly NP-hard. For the special case in which , where is the remaining processing time, an -time algorithm has been developed for the TNOJ problem and an -time algorithm for the TWNOJ problem.

4.2. Future Works

If the actual processing time of a job differs from the information available at time , a sensitivity analysis [48,49] of the algorithms proposed in this paper would be a valuable future endeavour. Note that a sensitivity analysis with respect to the interruption proportionality factor D has already been presented in [23]. If new jobs arrive while the scheduled jobs are being processed, dynamic scheduling algorithms [44,45] or AI-based scheduling algorithms [46,47] might help to schedule the not-yet-completed jobs more effectively.

Finally, note that the two objectives investigated in this paper, TNOJ and TWNOJ, can both be interpreted as total outsourcing costs. For other objectives defined in classical scheduling, such as the total weighted completion time, the maximum (weighted) lateness and common due date assignment [3,4,51,53], their physical meanings in scheduling problems with human factors are yet to be identified.

Author Contributions

Conceptualization, J.S. and K.I.J.H.; methodology, J.S. and K.I.J.H.; software, J.S.; validation, J.S. and K.I.J.H. All authors have read and agreed to the published version of the manuscript.

Funding

The work presented in this paper is supported in part by research grants from the National Science and Technology Council (NSTC) of Taiwan, numbered 110-2221-E-005-053, 111-2221-E-005-084, 112-2221-E-005-076 and 113-2221-E-005-072.

Data Availability Statement

No data is available.

Conflicts of Interest

The authors declare no conflicts of interest.

References

- Lodree, E.J.; Geiger, C.D.; Jiang, X. Taxonomy for integrating scheduling theory and human factors: Review and research opportunities. Int. J. Ind. Ergon. 2009, 39, 39–51.

- Ho, K.I.; Leung, J.Y.; Wei, W. Complexity of scheduling tasks with time-dependent execution times. Inf. Process. Lett. 1993, 48, 315–320.

- Biskup, D. Single-machine scheduling with learning considerations. Eur. J. Oper. Res. 1999, 115, 173–178.

- Biskup, D. A state-of-the-art review on scheduling with learning effects. Eur. J. Oper. Res. 2008, 188, 315–329.

- Zhao, C.l.; Tang, H.Y. Single machine scheduling with general job-dependent aging effect and maintenance activities to minimize makespan. Appl. Math. Model. 2010, 34, 837–841.

- Rosen, C. The myth of multitasking. New Atlantis 2008, 20, 105–110.

- Baum, N. The Myth of Multitasking. Healthc. Adm. Leadersh. Manag. J. 2024, 2, 127.

- Lee, F.J.; Taatgen, N.A. Multitasking as skill acquisition. In Proceedings of the Annual Meeting of the Cognitive Science Society, Boston, MA, USA, 1 July–3 August 2003; Routledge: Oxfordshire, UK, 2002; Volume 24, Available online: https://escholarship.org/uc/item/02b1h7dg (accessed on 20 December 2025).

- Taatgen, N.A.; Lee, F.J. Production compilation: A simple mechanism to model complex skill acquisition. Hum. Factors 2003, 45, 61–76.

- Foerde, K.; Knowlton, B.J.; Poldrack, R.A. Modulation of competing memory systems by distraction. Proc. Natl. Acad. Sci. USA 2006, 103, 11778–11783.

- Hallowell, E.M. Overloaded circuits. Harv. Bus. Rev. 2005, 83, 55–62.

- Woolston, C. Multitasking and Stress. Available online: https://simhcottumwa.org/multitasking-and-stress/ (accessed on 14 January 2026).

- Becker, L.; Kaltenegger, H.C.; Nowak, D.; Weigl, M.; Rohleder, N. Physiological stress in response to multitasking and work interruptions: Study protocol. PLoS ONE 2022, 17, e0263785.

- Aral, S.; Brynjolfsson, E.; Van Alstyne, M. Information, technology and information worker productivity: Task level evidence. Inf. Syst. Res. 2007, 23, 849–867.

- Coviello, D.; Ichino, A.; Persico, N. Don’t Spread Yourself Too Thin: The Impact of Task Juggling on Workers’ Speed of Job Completion; Technical Report; National Bureau of Economic Research: Cambridge, MA, USA, 2010.

- Aral, S.; Brynjolfsson, E.; Van Alstyne, M. Information, technology, and information worker productivity. Inf. Syst. Res. 2012, 23, 849–867.

- Coviello, D.; Ichino, A.; Persico, N. Time allocation and task juggling. Am. Econ. Rev. 2014, 104, 609–623.

- Rubinstein, J.S.; Meyer, D.E.; Evans, J.E. Executive control of cognitive processes in task switching. J. Exp. Psychol. Hum. Percept. Perform. 2001, 27, 763.

- Clapp, W.C.; Rubens, M.T.; Sabharwal, J.; Gazzaley, A. Deficit in switching between functional brain networks underlies the impact of multitasking on working memory in older adults. Proc. Natl. Acad. Sci. USA 2011, 108, 7212–7217.

- Loukopoulos, L.D.; Dismukes, R.K.; Barshi, I. The Multitasking Myth: Handling Complexity in Real-World Operations; Routledge: Oxfordshire, UK, 2016.

- Hall, N.G.; Leung, J.Y.T.; Li, C.L. Scheduling with Multitasking. 2013; unpublished manuscript.

- Hall, N.G.; Leung, J.Y.T.; Li, C.L. The effects of multitasking on operations scheduling. Prod. Oper. Manag. 2015, 24, 1248–1265.

- Sum, J.; Ho, K. Analysis on the effect of multitasking. In Proceedings of the 2015 IEEE International Conference on Systems, Man, and Cybernetics, Kowloon Tong, Hong Kong, 9–12 October 2015; IEEE: New York, NY, USA, 2015; pp. 204–209.

- Sum, J.; Ho, K.I.J. Operations scheduling in the presence of multitasking and symmetric switching cost. In Proceedings of the International Conference on Management Science and Decision Making, Taipei, Taiwan, 30 May 2015; Available online: https://john.digi-pack.io/papers/2015-ICMSDM-Operations-Scheduling.pdf (accessed on 24 December 2025).

- Ho, K.I.J.; Sum, J. Scheduling jobs with multitasking and asymmetric switching costs. In Proceedings of the 2017 IEEE International Conference on Systems, Man, and Cybernetics (SMC), Banff, AB, Canada, 5–8 October 2017; IEEE: New York, NY, USA, 2017; pp. 2927–2932.

- Liu, M.; Wang, S.; Zheng, F.; Chu, C. Algorithms for the joint multitasking scheduling and common due date assignment problem. Int. J. Prod. Res. 2017, 55, 6052–6066.

- Zhu, Z.; Zheng, F.; Chu, C. Multitasking scheduling problems with a rate-modifying activity. Int. J. Prod. Res. 2017, 55, 296–312.

- Zhu, Z.; Li, J.; Chu, C. Multitasking scheduling problems with deterioration effect. Math. Probl. Eng. 2017, 2017, 4750791.

- Zhu, Z.; Liu, M.; Chu, C.; Li, J. Multitasking scheduling with multiple rate-modifying activities. Int. Trans. Oper. Res. 2019, 26, 1956–1976.

- Hall, N.G.; Leung, J.Y.T.; Li, C.L. Multitasking via alternate and shared processing: Algorithms and complexity. Discret. Appl. Math. 2016, 208, 41–58.

- Li, C.L.; Zhong, W. Task scheduling with progress control. IISE Trans. 2018, 50, 54–61.

- Ji, M.; Zhang, W.; Liao, L.; Cheng, T.; Tan, Y. Multitasking parallel-machine scheduling with machine-dependent slack due-window assignment. Int. J. Prod. Res. 2019, 57, 1667–1684.

- Xiong, X.; Zhou, P.; Yin, Y.; Cheng, T.; Li, D. An exact branch-and-price algorithm for multitasking scheduling on unrelated parallel machines. Nav. Res. Logist. 2019, 66, 502–516.

- Wang, D.; Yu, Y.; Yin, Y.; Cheng, T.C.E. Multi-agent scheduling problems under multitasking. Int. J. Prod. Res. 2021, 59, 3633–3663.

- Xin, X.; Zhou, S.; Gao, J. An exact approach for multitasking scheduling with two competitive agents on identical parallel machines. Appl. Sci. 2025, 15, 12111.

- Li, S.S.; Chen, R.X.; Tian, J. Multitasking scheduling problems with two competitive agents. Eng. Optim. 2020, 52, 1940–1956.

- Wu, C.C.; Azzouz, A.; Chen, J.Y.; Xu, J.; Shen, W.L.; Lu, L.; Ben Said, L.; Lin, W.C. A two-agent one-machine multitasking scheduling problem solving by exact and metaheuristics. Complex Intell. Syst. 2022, 8, 199–212.

- Yang, Y.; Yin, G.; Wang, C.; Yin, Y. Due date assignment and two-agent scheduling under multitasking environment. J. Comb. Optim. 2022, 44, 2207–2223.

- Fu, B.; Huo, Y.; Zhao, H. Multitasking scheduling with shared processing. Nav. Res. Logist. 2024, 71, 595–608.

- Itoh, H. Job design, delegation and cooperation: A principal-agent analysis. Eur. Econ. Rev. 1994, 38, 691–700.

- Corts, K.S. Teams versus individual accountability: Solving multitask problems through job design. RAND J. Econ. 2007, 38, 467–479.

- Gillie, T.; Broadbent, D. What makes interruptions disruptive? A study of length, similarity, and complexity. Psychol. Res. 1989, 50, 243–250.

- Monsell, S. Task switching. Trends Cogn. Sci. 2003, 7, 134–140.

- Ouelhadj, D.; Petrovic, S. A survey of dynamic scheduling in manufacturing systems. J. Sched. 2009, 12, 417–431.

- Vanhoucke, M. Project Management with Dynamic Scheduling: Baseline Scheduling, Risk Analysis and Project Control, 2nd ed.; Springer: Berlin/Heidelberg, Germany, 2012.

- Ngwu, C.; Liu, Y.; Wu, R. Reinforcement learning in dynamic job shop scheduling: A comprehensive review of AI-driven approaches in modern manufacturing. J. Intell. Manuf. 2025, 1–16.

- Sanjalawe, Y.; Al-E’mari, S.; Fraihat, S.; Makhadmeh, S. AI-Driven job scheduling in cloud computing: A comprehensive review. Artif. Intell. Rev. 2025, 58, 197.

- Penz, B.; Rapine, C.; Trystram, D. Sensitivity analysis of scheduling algorithms. Eur. J. Oper. Res. 2001, 134, 606–615.

- Hall, N.G.; Posner, M.E. Sensitivity analysis for scheduling problems. J. Sched. 2004, 7, 49–83.

- Garey, M.R.; Johnson, D.S. Computers and Intractability: A Guide to the Theory of NP-Completeness; WH Freeman and Company: New York, NY, USA, 1979.

- Pinedo, M. Scheduling: Theory, Algorithms, and Systems, 1st ed.; Prentice-Hall: Saddle River, NJ, USA, 1992.

- Moore, J.M. An n job, one machine sequencing algorithm for minimizing the number of late jobs. Manag. Sci. 1968, 15, 102–109.

- Pinedo, M. Scheduling: Theory, Algorithms, and Systems, 3rd ed.; Springer: Berlin/Heidelberg, Germany, 2015.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.