Variance Preserving Spectral Subsampling

Abstract

1. Introduction

1.1. Background and Motivation

1.2. Prior Application Examples

1.3. Limitations and Scope of Applicability

2. Materials and Methods

2.1. Notation and Criteria

- Run-to-Run Variance: For a given channel, the variation observed across multiple child spectra should be consistent with that expected from independent experimental replicates. This criterion aligns with key factor (2) from Flynn et al. [13].

- Channel-to-Channel Variance: Within a single child spectrum, variation between adjacent channels (“fuzziness”) should reflect the statistical behavior expected from a truly Poisson-distributed signal.

- Losslessness: The synthetic children should collectively partition the parent spectrum so that the total number of counts in each channel is preserved. All parent counts must be allocated exactly once, with no duplication or omission. Because reusing parent counts violates this requirement and can introduce undesired dependence among children, methods that sample with replacement are unsuitable for strictly lossless applications. We acknowledge that in some contexts, such as generating large sets of synthetic replicates where strict unbiasedness is not essential, the losslessness requirement may be reasonably relaxed. However, this is not appropriate for our use case.
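Two of the criteria above lend themselves to a direct numerical check. The sketch below is illustrative only: the function names `is_lossless` and `run_to_run_dispersion` are hypothetical, and the dispersion check assumes that genuine replicates follow Poisson counting statistics, so that the per-channel variance across children should be close to the per-channel mean. The channel-to-channel criterion is not covered here.

```python
import numpy as np

def is_lossless(parent, children):
    """Return True if the children partition the parent spectrum exactly.

    parent:   1-D array of counts per channel.
    children: 2-D array, one row per child spectrum.
    """
    parent = np.asarray(parent)
    children = np.asarray(children)
    # Every parent count must be allocated exactly once: per-channel sums
    # over the children must reproduce the parent, with no negative counts.
    return bool(np.all(children >= 0)
                and np.array_equal(children.sum(axis=0), parent))

def run_to_run_dispersion(children):
    """Per-channel variance-to-mean ratio across children.

    Assumption: for Poisson-like replicates this ratio is close to 1.
    Channels whose mean is zero are reported as NaN.
    """
    children = np.asarray(children, dtype=float)
    mean = children.mean(axis=0)
    var = children.var(axis=0, ddof=1)  # sample variance across children
    return np.divide(var, mean, out=np.full_like(mean, np.nan), where=mean > 0)

parent = [4, 0, 7]
children = [[1, 0, 3], [3, 0, 4]]
print(is_lossless(parent, children))
print(run_to_run_dispersion(children))
```

A method that samples with replacement would generally fail `is_lossless`, because the per-channel sums of its children need not match the parent.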

2.2. Method 1A: Poisson Sampling

2.3. Method 1B: Variance-Corrected Poisson Sampling

2.4. Method 2: Binomial Sampling

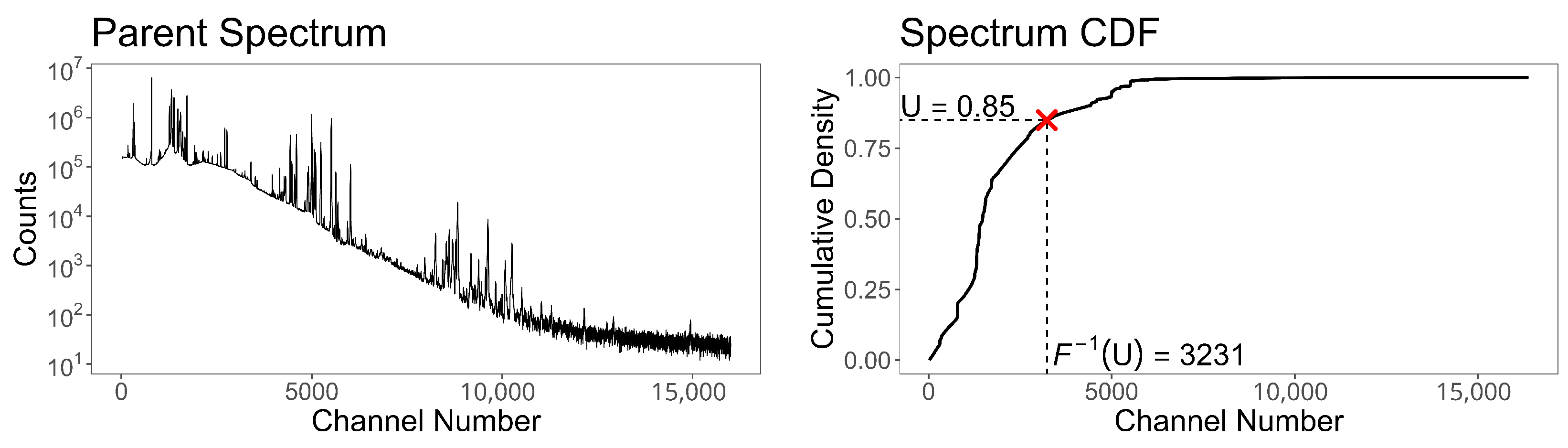
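A minimal sketch of binomial subsampling, under the assumption that each count in a parent channel is assigned to the child independently with a fixed probability and that unassigned counts stay in a remainder spectrum. The function name `binomial_split`, the `fraction` parameter, and the returned remainder are illustrative choices, not taken from the paper.

```python
import numpy as np

def binomial_split(parent, fraction, rng=None):
    """Split a parent spectrum into a child and a remainder, channel by channel.

    Assumption: child[k] ~ Binomial(parent[k], fraction), independently per channel.
    """
    rng = np.random.default_rng(rng)
    parent = np.asarray(parent, dtype=np.int64)
    # Each of the parent[k] counts is kept with probability `fraction`.
    child = rng.binomial(parent, fraction)
    # Counts not taken by the child are retained, so nothing is lost or reused.
    remainder = parent - child
    return child, remainder

parent = np.array([120, 0, 45, 300, 7])
child, remainder = binomial_split(parent, fraction=0.25, rng=1)
print(child, remainder)
```

Because `child + remainder` reproduces the parent in every channel, repeating the split on the remainder allocates each parent count exactly once, which is the property the losslessness criterion asks for.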

2.5. Method 3A: Inverse Transform Sampling

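- (i) Sample n independent random variables $U_1, \dots, U_n \sim \mathrm{Uniform}(0, 1)$.

- (ii) For each $U_i$, find the channel k such that $F(k-1) < U_i \le F(k)$, where $F$ is the empirical CDF of the parent spectrum, and increment channel k in the child spectrum.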
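The two steps above can be sketched as follows. This is a minimal illustration rather than the authors' implementation: it assumes the random variables are uniform on (0, 1], that the CDF is the normalized cumulative sum of the parent counts, and that the channel lookup uses the convention F(k-1) < u ≤ F(k). The function name `inverse_transform_child` is hypothetical.

```python
import numpy as np

def inverse_transform_child(parent, n, rng=None):
    """Draw one child spectrum of n counts by inverse transform sampling."""
    rng = np.random.default_rng(rng)
    parent = np.asarray(parent, dtype=float)
    total = parent.sum()
    if total <= 0:
        raise ValueError("parent spectrum has no counts")
    # Empirical CDF of the parent spectrum; the last entry equals 1.
    cdf = np.cumsum(parent) / total
    # Step (i): n independent uniforms, shifted from [0, 1) to (0, 1].
    u = 1.0 - rng.random(n)
    # Step (ii): first channel k with cdf[k] >= u, i.e. cdf[k-1] < u <= cdf[k].
    k = np.searchsorted(cdf, u, side="left")
    # Incrementing channel k once per draw is a histogram of the indices.
    return np.bincount(k, minlength=parent.size)

child = inverse_transform_child([0, 5, 0, 10, 0], n=100, rng=0)
print(child, child.sum())
```

Because the CDF is never updated, a channel can receive more counts than the parent holds in it, so this variant samples with replacement.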

2.6. Method 3B: Inverse Transform Sampling with Partial Replacement

- (i) Sample n independent random variables $U_1, \dots, U_n \sim \mathrm{Uniform}(0, 1)$.

- (ii) For each $U_i$, find the channel k such that $F(k-1) < U_i \le F(k)$, where $F$ is the empirical CDF of the parent spectrum, then

  - (a) increment channel k in the child spectrum;

  - (b) decrement channel k in the parent spectrum, i.e., reduce its count by one.

- (iii) After completing all n allocations, recompute the empirical probabilities and CDF from the updated parent spectrum.
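A hedged sketch of the procedure above, repeated for several children. The function name `partial_replacement_children` and the parameter `m` (number of children) are hypothetical. One detail is an assumption of this sketch and not stated in the steps: because the CDF stays fixed while a child is drawn, a channel can be selected more often than the parent has counts in it, so the decremented parent is floored at zero before the CDF is recomputed.

```python
import numpy as np

def partial_replacement_children(parent, n, m, rng=None):
    """Draw m children of n counts each, updating the parent between children."""
    rng = np.random.default_rng(rng)
    working = np.asarray(parent, dtype=np.int64).copy()
    children = []
    for _ in range(m):
        total = working.sum()
        if total <= 0:
            raise ValueError("parent spectrum is exhausted")
        # Step (iii) of the previous pass: CDF from the current parent.
        cdf = np.cumsum(working) / total
        # Step (i): uniforms on (0, 1].
        u = 1.0 - rng.random(n)
        # Step (ii): channel k with cdf[k-1] < u <= cdf[k].
        k = np.searchsorted(cdf, u, side="left")
        # Step (ii)(a): increment the child once per draw.
        child = np.bincount(k, minlength=working.size)
        # Step (ii)(b): decrement the parent. Assumption: floor at zero so the
        # recomputed probabilities stay non-negative.
        working = np.maximum(working - child, 0)
        children.append(child)
    return np.array(children), working

children, leftover = partial_replacement_children([0, 50, 0, 100, 0], n=20, m=3, rng=0)
print(children)
print(leftover)
```

Within a single child the draws are made with replacement, and only the next child sees the depleted parent, which is what distinguishes this variant from sampling fully without replacement.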

2.7. Other Methods

3. Results and Discussion

3.1. Run-to-Run Variance

3.2. Channel-to-Channel Variance

3.3. Losslessness

3.4. Summary of Evaluation

4. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| LOD | Limit of Detection |
| RIID | Radiation Isotope Identification Devices |
| BAT | Burst Alert Telescope |
| DPH | Detector Plane Histogram |
| XRF | X-ray Fluorescence |
| AIP | Algorithm Improvement Project |
| SNM | Special Nuclear Material |
| GADRAS | Gamma Detector Response and Analysis Software |
| MLP | Multi-Layer Perceptron |
| CNN | Convolutional Neural Network |
| LSTM | Long Short-Term Memory |
| RASE | Replicative Assessment of Spectroscopic Equipment |
| FRAM | Fixed-Energy Response-function Analysis with Multiple efficiencies |
| CDF | Cumulative Distribution Function |
| PMF | Probability Mass Function |
| MLE | Maximum Likelihood Estimation |

Appendix A

Appendix A.1. Variance Derivation for Method 1B

Appendix A.2. Marginal Distribution Under Binomial Subsampling

References

1. NASA/GSFC HEASARC. Batsurvey—Perform BAT Survey Imaging Analysis. HEASoft. 2008. Available online: https://heasarc.gsfc.nasa.gov/docs/software/lheasoft/help/batsurvey.html (accessed on 21 December 2025).

2. XRD & XRF Raw Data. Available online: https://doi.org/10.17632/nkpmdtdkfw.1 (accessed on 21 December 2025).

3. Figueroa-Rosales, E.X.; Martínez-Juárez, J.; García-Díaz, E.; Hernández-Cruz, D.; Sabinas-Hernández, S.A.; Robles-Águila, M.J. Photoluminescent properties of hydroxyapatite and hydroxyapatite/multi-walled carbon nanotube composites. Crystals 2021, 11, 832.

4. Enghauser, M. Algorithm Improvement Program Nuclide Identification Algorithm Scoring Criteria and Scoring Application; Technical Report; Sandia National Laboratories (SNL-NM): Albuquerque, NM, USA, 2016.

5. Mitchell, D.J.; Harding, L.; Thoreson, G.G.; Horne, S.M. GADRAS Detector Response Function; Technical Report; Sandia National Laboratories (SNL-NM): Albuquerque, NM, USA, 2014.

6. Fournier, S.D.; Enghauser, M.; Leonard, E.J.; Thoreson, G.G. GADRAS Batch Inject Tool User Guide; Technical Report; Sandia National Laboratories (SNL-NM): Albuquerque, NM, USA, 2020.

7. Lalor, P.; Adams, H.; Hagen, A. Sim-to-real supervised domain adaptation for radioisotope identification. Nucl. Instrum. Methods Phys. Res. Sect. A Accel. Spectrometers Detect. Assoc. Equip. 2026, 1083, 171159.

8. PyRIID v.2.0.0. Available online: https://doi.org/10.11578/dc.20221017.2 (accessed on 21 December 2025).

9. Kwon, J.; Kim, J.; Kim, H.; Kim, S.; Jang, S.; Lee, J.; Kim, Y.s. Development of gamma-spectrum data generation method by Monte Carlo simulation. J. Korean Phys. Soc. 2023, 82, 658–670.

10. Agostinelli, S.; Allison, J.; Amako, K.; Apostolakis, J.; Araujo, H.; Arce, P.; Asai, M.; Axen, D.; Banerjee, S.; Barrand, G.; et al. Geant4—A simulation toolkit. Nucl. Instrum. Methods Phys. Res. Sect. A Accel. Spectrometers Detect. Assoc. Equip. 2003, 506, 250–303.

11. Chavez, J.R.; Czyz, S.A.; Sangiorgio, S.; Brodsky, J.P.; Kosinovsky, G.A. Replicative Assessment of Spectroscopic Equipment; Lawrence Livermore National Laboratory (LLNL): Livermore, CA, USA, 2020.

12. Arlt, R.; Baird, K.; Blackadar, J.; Blessenger, C.; Blumenthal, D.; Chiaro, P.; Frame, K.; Mark, E.; Mayorov, M.; Milovidov, M.; et al. Semi-empirical approach for performance evaluation of radionuclide identifiers. In Proceedings of the 2009 IEEE Nuclear Science Symposium Conference Record (NSS/MIC), Orlando, FL, USA, 24 October–1 November 2009; pp. 990–994.

13. Flynn, A.; Boardman, D.; Reinhard, M.I. The validation of synthetic spectra used in the performance evaluation of radionuclide identifiers. Appl. Radiat. Isot. 2013, 77, 145–152.

14. Vo, D.T.; Sampson, T.E. FRAM, Version 6.1 User Manual; Technical Report; Los Alamos National Laboratory (LANL): Los Alamos, NM, USA, 2020.

15. Burr, T.; Hamada, M.S.; Graves, T.L.; Myers, S. Augmenting real data with synthetic data: An application in assessing radio-isotope identification algorithms. Qual. Reliab. Eng. Int. 2009, 25, 899–911.

16. Burr, T.; Hamada, M. Radio-isotope identification algorithms for NaI γ spectra. Algorithms 2009, 2, 339–360.

17. Raikov, D. On the decomposition of Gauss and Poisson laws. Izv. Math. 1938, 2, 91–124.

18. Steutel, F.W.; van Harn, K. Discrete analogues of self-decomposability and stability. Ann. Probab. 1979, 7, 893–899.

19. Weiss, C.H. Thinning operations for modeling time series of counts—A survey. Adv. Stat. Anal. 2008, 92, 319–341.

20. Bossew, P. A very long-term HPGe-background gamma spectrum. Appl. Radiat. Isot. 2005, 62, 635–644.

21. Wang, Y.; Liu, Y.; Wu, B.; Meng, X.; Wang, J.; Cheng, J. Reconstruction of indoor gamma-ray background spectrum for HPGe detectors. Radiat. Meas. 2024, 174, 107139.

22. Panaretos, V.M.; Zemel, Y. Statistical aspects of Wasserstein distances. Annu. Rev. Stat. Its Appl. 2019, 6, 405–431.

| | | | |
|---|---|---|---|
| Genuine | | | |
| Poisson (naïve) | | | |
| Poisson (variance-corrected) | | | |
| Binomial (no replacement) | | | |
| Inverse transform (with replacement) | | | |
| Inverse transform (partial replacement) | | | |

| Method | | | | Result |
|---|---|---|---|---|
| Poisson (naïve) | 0.6398 | 0.2863 | 0.2863 | Pass |
| Poisson (variance-corrected) | 0.0179 ** | 0.0000 ** | 0.0000 ** | Fail |
| Binomial (no replacement) | 0.2863 | 0.5982 | 0.4787 | Pass |
| Inverse transform (with replacement) | 0.6133 | 0.2701 | 0.6170 | Pass |
| Inverse transform (partial replacement) | 0.0000 ** | 0.0000 ** | 0.0000 ** | Fail |


© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Hansen, H.J.; Burr, T.L.; Croft, S.; Kirkpatrick, J.; Mercer, D.J.; Sagadevan, A.A.; Stockman, T.J., III; Stark, E.N. Variance Preserving Spectral Subsampling. Algorithms 2026, 19, 25. https://doi.org/10.3390/a19010025
