ARTNet for Micro-Expression Recognition

Abstract

1. Introduction

- We present a novel approach to processing amplified micro-expression features that enhances the distinction between the onset frame and the apex frame of a micro-expression, addressing the limited range of micro-expression movements.

- We introduce a self-attention mechanism that prioritizes local facial areas, such as the eye and lip regions, over global areas. Because micro-expression recognition relies heavily on local facial muscle movements, the model captures essential features by applying local attention to specific facial regions while disregarding irrelevant areas.

- In experiments on three datasets (CASME II, SAMM, and SMIC), the proposed method achieves the highest UF1 on SAMM and SMIC among the compared approaches, demonstrating its effectiveness for micro-expression recognition.
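The first two ideas in the list above can be illustrated with a minimal sketch, under stated assumptions. `magnify` uses plain linear amplification of the onset-to-apex difference, and `block_self_attention` restricts attention to non-overlapping blocks of tokens with no learned projections. The function names and the `alpha` and `block_size` parameters are hypothetical and are not taken from ARTNet's AA or TBA modules.

```python
import math


def magnify(onset, apex, alpha):
    """Linear magnification (an assumption, not the paper's AA module).

    alpha = 1 returns the apex features unchanged; alpha > 1 pushes them
    further away from the onset features along the same direction.
    """
    return [o + alpha * (a - o) for o, a in zip(onset, apex)]


def _softmax(scores):
    # Subtract the maximum for numerical stability.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def _dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def block_self_attention(tokens, block_size):
    """Scaled dot-product self-attention limited to non-overlapping blocks.

    Queries, keys, and values are the tokens themselves (identity
    projections), so each token only attends to tokens in its own block.
    """
    if len(tokens) % block_size != 0:
        raise ValueError("token count must be divisible by block_size")
    dim = len(tokens[0])
    scale = 1.0 / math.sqrt(dim)
    out = []
    for start in range(0, len(tokens), block_size):
        block = tokens[start:start + block_size]
        for query in block:
            weights = _softmax([_dot(query, key) * scale for key in block])
            out.append([
                sum(w * value[d] for w, value in zip(weights, block))
                for d in range(dim)
            ])
    return out


# Hypothetical toy features: four 2-D tokens, attended in blocks of two.
onset = [0.0, 1.0]
apex = [0.1, 1.0]
amplified = magnify(onset, apex, alpha=3.0)
attended = block_self_attention(
    [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0], [0.5, -1.0]], block_size=2
)
```

Because attention is computed per block, changing a token in one block leaves the outputs of every other block untouched, which is the sense in which such attention is local.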

2. Related Work

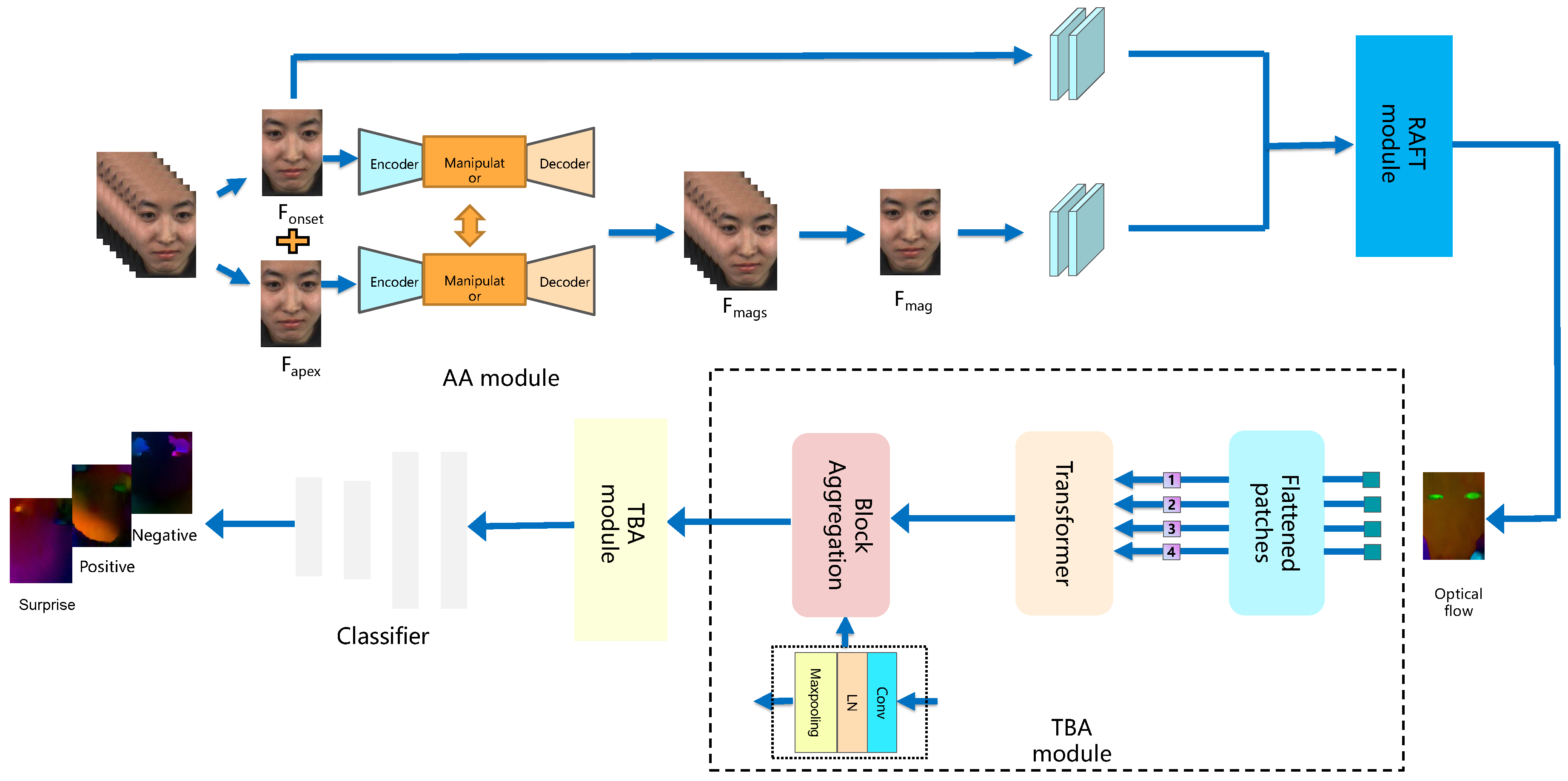

3. ARTNet

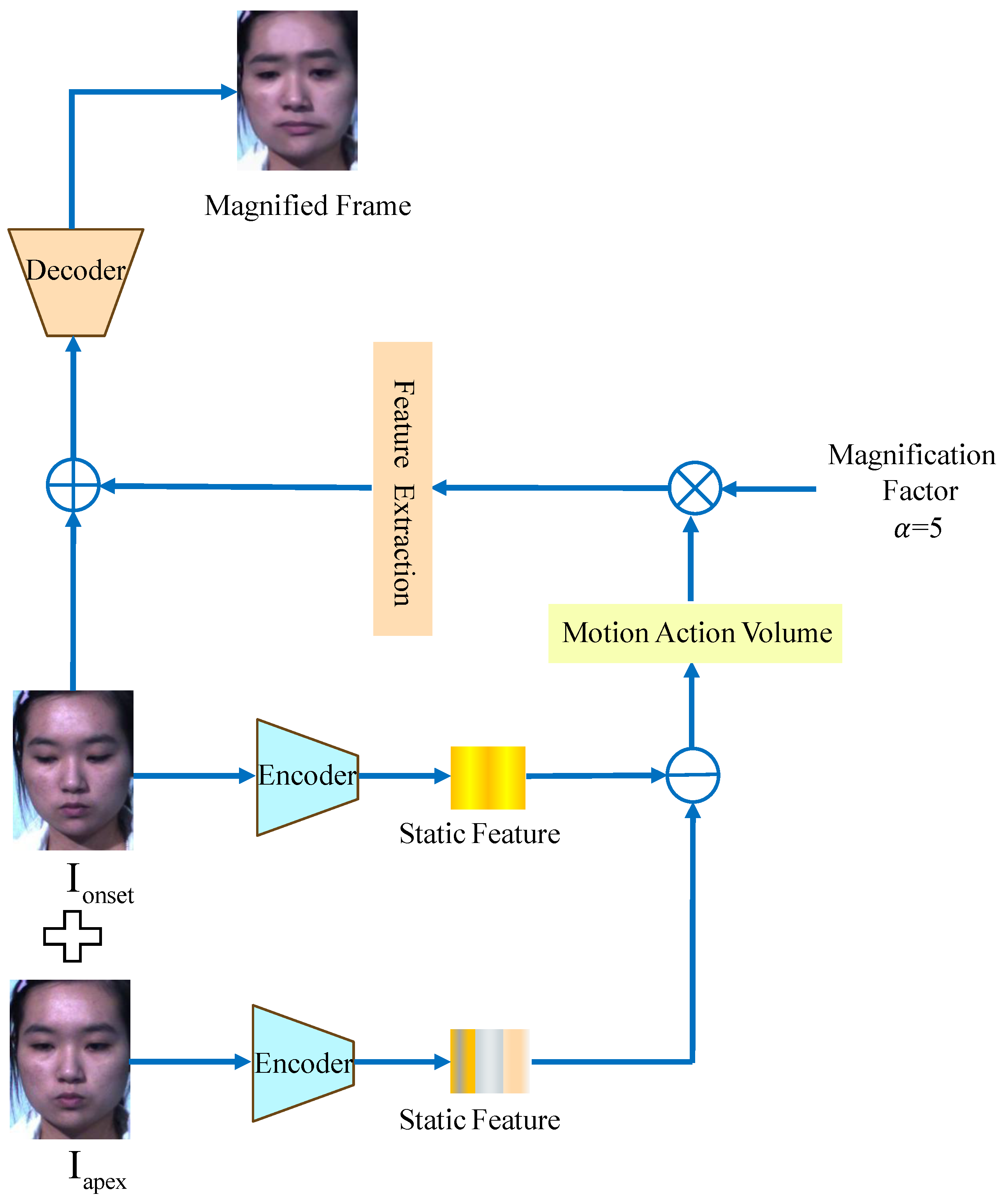

3.1. AA Module

3.2. RAFT Module

3.3. TBA Module

3.3.1. Transformer Layer

3.3.2. Block Aggregation

3.4. Loss Function

4. Experiments

4.1. Datasets

4.2. Evaluation Metrics

4.3. Implementation Details

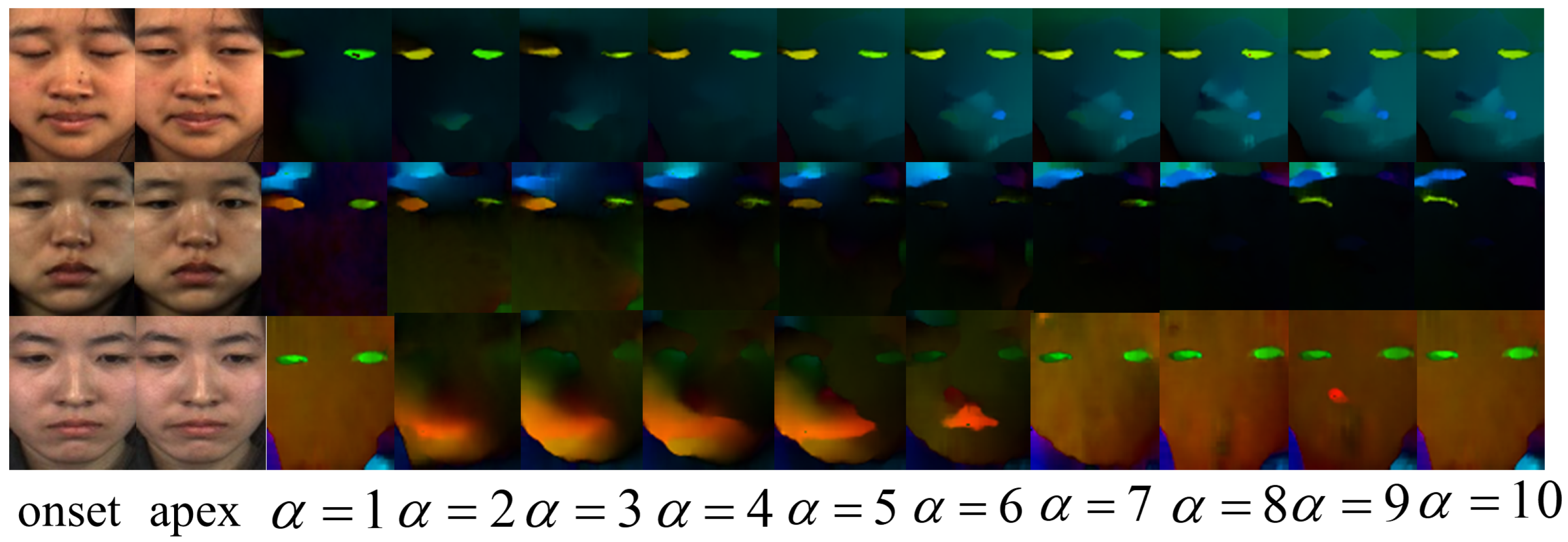

4.4. Magnification Setting

4.5. Comparison with the State of the Art

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Li, J.; Dong, Z.; Lu, S.; Wang, S.-J.; Yan, W.-J.; Ma, Y.; Liu, Y.; Huang, C.; Fu, X. CAS(ME)³: A third generation facial spontaneous micro-expression database with depth information and high ecological validity. IEEE Trans. Pattern Anal. Mach. Intell. 2022, 45, 2782–2800.

- Ekman, P.; Friesen, W.V. Nonverbal leakage and clues to deception. Psychiatry 1969, 32, 88–106.

- Ben, X.; Ren, Y.; Zhang, J.; Wang, S.-J.; Kpalma, K.; Meng, W.; Liu, Y.-J. Video-based facial micro-expression analysis: A survey of datasets, features and algorithms. IEEE Trans. Pattern Anal. Mach. Intell. 2021, 44, 5826–5846.

- Yan, W.-J.; Wu, Q.; Liang, J.; Chen, Y.-H.; Fu, X. How fast are the leaked facial expressions: The duration of micro-expressions. J. Nonverbal Behav. 2013, 37, 217–230.

- Li, Y.; Huang, X.; Zhao, G. Joint local and global information learning with single apex frame detection for micro-expression recognition. IEEE Trans. Image Process. 2020, 30, 249–263.

- Wu, H.-Y.; Rubinstein, M.; Shih, E.; Guttag, J.; Durand, F.; Freeman, W. Eulerian video magnification for revealing subtle changes in the world. ACM Trans. Graph. (TOG) 2012, 31, 1–8.

- Ben, X.; Jia, X.; Yan, R.; Zhang, X.; Meng, W. Learning effective binary descriptors for micro-expression recognition transferred by macro-information. Pattern Recognit. Lett. 2018, 107, 50–58.

- Wang, Y.; See, J.; Phan, R.C.-W.; Oh, Y.-H. LBP with six intersection points: Reducing redundant information in LBP-TOP for micro-expression recognition. In Computer Vision—ACCV 2014: 12th Asian Conference on Computer Vision, Singapore, Singapore, November 1–5, 2014, Revised Selected Papers, Part I 12; Springer: Berlin/Heidelberg, Germany, 2015; pp. 525–537.

- Wei, C.; Xie, L.; Ren, X.; Xia, Y.; Su, C.; Liu, J.; Tian, Q.; Yuille, A.L. Iterative reorganization with weak spatial constraints: Solving arbitrary jigsaw puzzles for unsupervised representation learning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 15–20 June 2019; pp. 1910–1919.

- Zhao, G.; Pietikainen, M. Dynamic texture recognition using local binary patterns with an application to facial expressions. IEEE Trans. Pattern Anal. Mach. Intell. 2007, 29, 915–928.

- Liong, S.-T.; See, J.; Wong, K.; Phan, R.C.-W. Less is more: Micro-expression recognition from video using apex frame. Signal Process. Image Commun. 2018, 62, 82–92.

- Fleet, D.; Weiss, Y. Optical flow estimation. In Handbook of Mathematical Models in Computer Vision; Springer: Berlin/Heidelberg, Germany, 2006; pp. 237–257.

- Liu, Y.; Du, H.; Zheng, L.; Gedeon, T. A neural micro-expression recognizer. In Proceedings of the 2019 14th IEEE International Conference on Automatic Face & Gesture Recognition (FG 2019), Lille, France, 14–18 May 2019; pp. 1–4.

- Khor, H.-Q.; See, J.; Liong, S.-T.; Phan, R.C.W.; Lin, W. Dual-stream shallow networks for facial micro-expression recognition. In Proceedings of the 2019 IEEE International Conference on Image Processing (ICIP), Taipei, Taiwan, 22–25 September 2019; pp. 36–40.

- Hochreiter, S.; Schmidhuber, J. Long short-term memory. Neural Comput. 1997, 9, 1735–1780.

- Van Quang, N.; Chun, J.; Tokuyama, T. CapsuleNet for micro-expression recognition. In Proceedings of the 2019 14th IEEE International Conference on Automatic Face & Gesture Recognition (FG 2019), Lille, France, 14–18 May 2019; pp. 1–7.

- Wang, S.-J.; Chen, H.-L.; Yan, W.-J.; Chen, Y.-H.; Fu, X. Face recognition and micro-expression recognition based on discriminant tensor subspace analysis plus extreme learning machine. Neural Process. Lett. 2014, 39, 25–43.

- Wang, S.-J.; Yan, W.-J.; Li, X.; Zhao, G.; Zhou, C.-G.; Fu, X.; Yang, M.; Tao, J. Micro-expression recognition using color spaces. IEEE Trans. Image Process. 2015, 24, 6034–6047.

- Xu, F.; Zhang, J.; Wang, J.Z. Microexpression identification and categorization using a facial dynamics map. IEEE Trans. Affect. Comput. 2017, 8, 254–267.

- Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; et al. An image is worth 16×16 words: Transformers for image recognition at scale. arXiv 2020, arXiv:2010.11929.

- Bertasius, G.; Wang, H.; Torresani, L. Is space-time attention all you need for video understanding? ICML 2021, 2, 4.

- Dong, X.; Long, C.; Xu, W.; Xiao, C. Dual graph convolutional networks with transformer and curriculum learning for image captioning. In Proceedings of the 29th ACM International Conference on Multimedia, Chengdu, China, 20–24 October 2021; pp. 2615–2624.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. Adv. Neural Inf. Process. Syst. 2017, 30, 1–11.

- Wadhwa, N.; Rubinstein, M.; Durand, F.; Freeman, W.T. Phase-based video motion processing. ACM Trans. Graph. (TOG) 2013, 32, 1–10.

- Oh, T.-H.; Jaroensri, R.; Kim, C.; Elgharib, M.; Durand, F.; Freeman, W.T.; Matusik, W. Learning-based video motion magnification. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018; pp. 633–648.

- Patel, D.; Zhao, G.; Pietikäinen, M. Spatiotemporal integration of optical flow vectors for micro-expression detection. In Advanced Concepts for Intelligent Vision Systems: 16th International Conference, ACIVS 2015, Catania, Italy, October 26–29, 2015. Proceedings 16; Springer: Berlin/Heidelberg, Germany, 2015; pp. 369–380.

- Wang, S.-J.; Wu, S.; Qian, X.; Li, J.; Fu, X. A main directional maximal difference analysis for spotting facial movements from long-term videos. Neurocomputing 2021, 230, 382–389.

- Teed, Z.; Deng, J. RAFT: Recurrent all-pairs field transforms for optical flow. In Computer Vision–ECCV 2020: 16th European Conference, Glasgow, UK, August 23–28, 2020, Proceedings, Part II 16; Springer: Berlin/Heidelberg, Germany, 2020; pp. 402–419.

- Yan, W.-J.; Li, X.; Wang, S.-J.; Zhao, G.; Liu, Y.-J.; Chen, Y.-H.; Fu, X. CASME II: An improved spontaneous micro-expression database and the baseline evaluation. PLoS ONE 2014, 9, e86041.

- Li, X.; Pfister, T.; Huang, X.; Zhao, G.; Pietikäinen, M. A spontaneous micro-expression database: Inducement; collection; baseline. In Proceedings of the 2013 10th IEEE International Conference and Workshops on Automatic Face and Gesture Recognition (FG), Shanghai, China, 22–26 April 2013; pp. 1–6.

- Davison, A.K.; Lansley, C.; Costen, N.; Tan, K.; Yap, M.H. SAMM: A spontaneous micro-facial movement dataset. IEEE Trans. Affect. Comput. 2018, 9, 116–129.

- Jose, E.; Greeshma, M.; Haridas, M.T.P.; Supriya, M. Face recognition based surveillance system using FaceNet and MTCNN on Jetson TX2. In Proceedings of the 2019 5th International Conference on Advanced Computing & Communication Systems (ICACCS), Coimbatore, India, 15–16 March 2019; pp. 608–613.

- Zhou, L.; Mao, Q.; Xue, L. Dual-inception network for cross-database micro-expression recognition. In Proceedings of the 2019 14th IEEE International Conference on Automatic Face & Gesture Recognition (FG 2019), Lille, France, 14–18 May 2019; pp. 1–5.

- Liong, S.-T.; Gan, Y.S.; See, J.; Khor, H.-Q.; Huang, Y.-C. Shallow triple stream three-dimensional CNN (STSTNet) for micro-expression recognition. In Proceedings of the 2019 14th IEEE International Conference on Automatic Face & Gesture Recognition (FG 2019), Lille, France, 14–18 May 2019; pp. 1–5.

- Zhou, L.; Mao, Q.; Huang, X.; Zhang, F.; Zhang, Z. Feature refinement: An expression-specific feature learning and fusion method for micro-expression recognition. Pattern Recognit. 2022, 122, 108275.

- Wang, Z.; Yang, M.; Jiao, Q.; Xu, L.; Han, B.; Li, Y.; Tan, X. Two-level spatio-temporal feature fused two-stream network for micro-expression recognition. Sensors 2024, 24, 1574.

- Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv 2014, arXiv:1409.1556.

- Sengupta, A.; Ye, Y.; Wang, R.; Liu, C.; Roy, K. Going deeper in spiking neural networks: VGG and residual architectures. Front. Neurosci. 2019, 13, 95.

- Zhang, L.; Hong, X.; Arandjelović, O.; Zhao, G. Short and long range relation based spatio-temporal transformer for micro-expression recognition. IEEE Trans. Affect. Comput. 2022, 13, 1973–1985.

| CASME II | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 |
|---|---|---|---|---|---|---|---|---|
| UF1 | 0.7398 | 0.7545 | 0.8380 | 0.7697 | 0.7693 | 0.7716 | 0.6915 | 0.7816 |
| UAR | 0.6858 | 0.7178 | 0.7983 | 0.7222 | 0.7160 | 0.7337 | 0.6594 | 0.7370 |

| SMIC | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 |
|---|---|---|---|---|---|---|---|---|
| UF1 | 0.7281 | 0.6479 | 0.6961 | 0.6944 | 0.7217 | 0.7300 | 0.7720 | 0.7137 |
| UAR | 0.7660 | 0.6470 | 0.6886 | 0.6932 | 0.7172 | 0.7304 | 0.7692 | 0.7105 |

| SAMM | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 |
|---|---|---|---|---|---|---|---|---|
| UF1 | 0.6214 | 0.7425 | 0.6534 | 0.6623 | 0.7257 | 0.6997 | 0.5506 | 0.7181 |
| UAR | 0.5591 | 0.6690 | 0.5942 | 0.5983 | 0.6684 | 0.6480 | 0.5154 | 0.6474 |

| Method | CASME II UF1 | CASME II UAR | SAMM UF1 | SAMM UAR | SMIC UF1 | SMIC UAR |
|---|---|---|---|---|---|---|
| LBP-TOP [8] | 0.7026 | 0.7429 | 0.3954 | 0.4102 | 0.2000 | 0.5280 |
| CapsuleNet [16] | 0.7068 | 0.7018 | 0.6209 | 0.5989 | 0.5820 | 0.5877 |
| Bi-WOOF [11] | 0.7805 | 0.8026 | 0.5211 | 0.5139 | 0.5727 | 0.5829 |
| GoogLeNet [37] | 0.5989 | 0.6414 | 0.5124 | 0.5992 | 0.5123 | 0.5511 |
| VGG16 [38] | 0.8166 | 0.8202 | 0.4870 | 0.4793 | 0.5800 | 0.5964 |
| Dual-Inception [33] | 0.8621 | 0.8560 | 0.5868 | 0.5663 | 0.6645 | 0.6726 |
| STSTNet [34] | 0.8382 | 0.8686 | 0.6588 | 0.6810 | 0.6801 | 0.7013 |
| FeatRef [35] | 0.8915 | 0.8873 | 0.7372 | 0.7155 | 0.7011 | 0.7083 |
| SLSTT-LSTM [39] | 0.9010 | 0.8850 | 0.7150 | 0.6430 | 0.7400 | 0.7200 |
| TFT [36] | 0.9070 | 0.9090 | 0.7090 | 0.6560 | 0.7410 | 0.7180 |
| ARTNet | 0.8380 | 0.7983 | 0.7425 | 0.6690 | 0.7720 | 0.7692 |
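The UF1 and UAR columns in the tables above are assumed here to follow the usual definitions of unweighted F1-score and unweighted average recall: per-class F1 and per-class recall, each averaged with equal weight per class regardless of class size. The sketch below shows that computation; the function name and the toy labels are illustrative and do not come from the experiments.

```python
def uf1_and_uar(y_true, y_pred):
    """Return (UF1, UAR) averaged over the classes present in y_true."""
    classes = sorted(set(y_true))
    f1_scores, recalls = [], []
    for c in classes:
        pairs = list(zip(y_true, y_pred))
        tp = sum(1 for t, p in pairs if t == c and p == c)
        fp = sum(1 for t, p in pairs if t != c and p == c)
        fn = sum(1 for t, p in pairs if t == c and p != c)
        # Per-class F1 written in terms of counts: 2TP / (2TP + FP + FN).
        denom = 2 * tp + fp + fn
        f1_scores.append(2 * tp / denom if denom else 0.0)
        # tp + fn > 0 because c was taken from y_true.
        recalls.append(tp / (tp + fn))
    n = len(classes)
    return sum(f1_scores) / n, sum(recalls) / n


# Toy three-class example with imbalanced classes (hypothetical labels).
y_true = [0, 0, 0, 0, 1, 1, 2]
y_pred = [0, 0, 0, 1, 1, 1, 2]
uf1, uar = uf1_and_uar(y_true, y_pred)
```

Averaging per class rather than per sample keeps a large class from dominating the score, which matters when class sizes are imbalanced.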


© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Wan, C.; Zhang, W.; Chen, Y.; Song, L.; Cheng, P. ARTNet for Micro-Expression Recognition. Sensors 2026, 26, 247. https://doi.org/10.3390/s26010247
