FAIR-Net: A Fuzzy Autoencoder and Interpretable Rule-Based Network for Ancient Chinese Character Recognition

Abstract

Highlights

- Developed FAIR-Net, a hybrid model combining deep autoencoder-based feature extraction with an interpretable fuzzy rule-based classifier for ancient Chinese character recognition.

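- Achieved the highest accuracy among the compared models on modern handwritten data (97.91% on ICDAR2013 CompetitionDB) and competitive accuracy on a 9233-class ancient character dataset (83.25%, versus 85.74% for SwinT-v2-small), with a computing time about 5.5× shorter than SwinT-v2-small (4 h 24 m versus 24 h 24 m).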

- Demonstrates that integrating interpretable fuzzy rules with deep representations can significantly enhance both performance and interpretability in large-scale character recognition tasks.

- Provides a practical and explainable solution for processing degraded and stylistically diverse ancient scripts, enabling future applications in digital humanities and cultural heritage preservation.

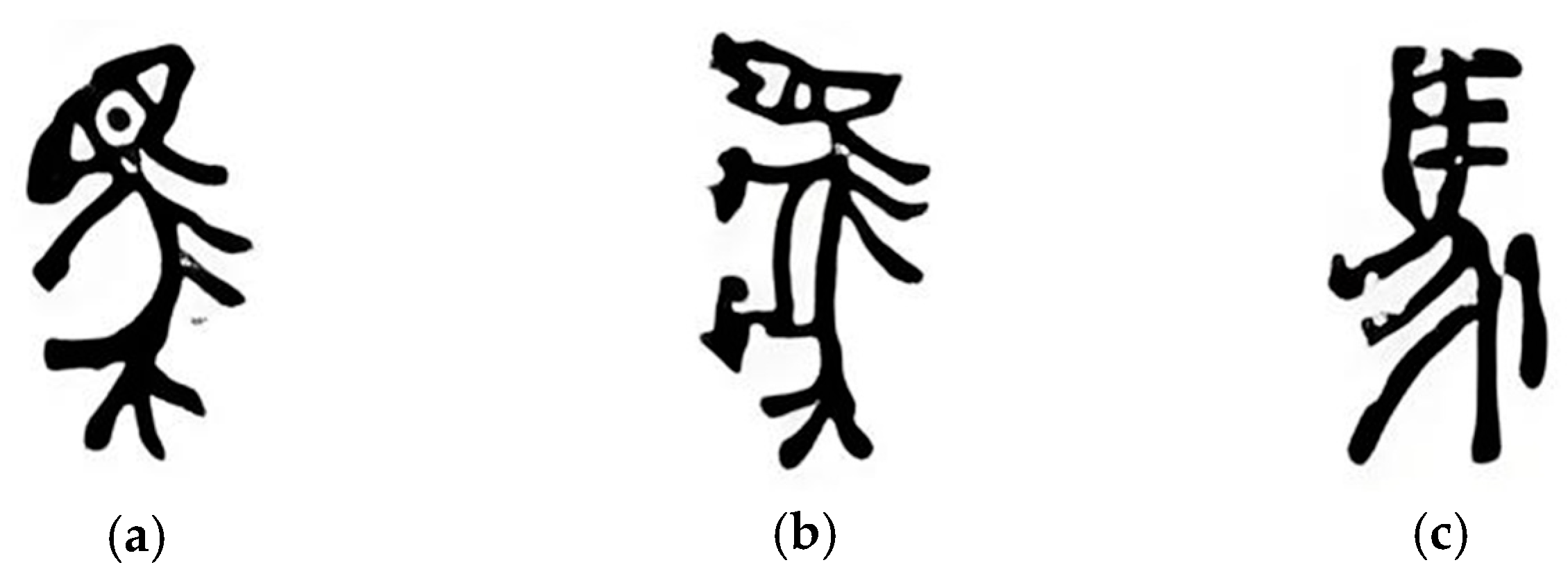

1. Introduction

- (a) We propose FAIR-Net, a novel hybrid framework that combines deep autoencoding with fuzzy rule-based reasoning for robust, interpretable recognition of ancient characters.

- (b) We design a fully explainable fuzzy neural network, where each hidden node corresponds to a meaningful fuzzy rule validated through semantic traceability.

- (c) We conduct extensive experiments on three public datasets, demonstrating FAIR-Net’s superior performance in both accuracy and efficiency, supported by statistical significance (p < 0.01) and large effect sizes (Cohen’s d > 0.8).

- (d) We provide visual and quantitative insights into the model’s generalization ability, showing resilience to degraded inputs and consistent rule activation behavior.

2. Dimensionality Reduction and Feature Extraction via Autoencoder

Algorithm 1. Autoencoder-Based Dimensionality Reduction and Feature Extraction

Input: Preprocessed dataset 𝒟 = {x_i}, x_i ∈ ℝ^{64×64}

Output: Encoder function f_enc(·) mapping x → z ∈ ℝ^d, where d ∈ {10, 20, 30, 40}

1. Initialize the autoencoder AE:
   - Encoder: ConvLayer × 3 → FC(4096 → 512) → FC(512 → d)
   - Decoder: FC(d → 512) → FC(512 → 4096) → DeconvLayer × 3
   - Activation: ReLU (hidden), Sigmoid (output)
   - Loss function: ℒ = MSE(x, AE(x))
   - Optimizer: Adam (learning rate η = 1 × 10⁻³)
2. For epoch = 1 to 50:
   1. For each mini-batch ℬ ⊂ 𝒟 with |ℬ| = 128:
      - Z ← f_enc(ℬ)
      - X̂ ← f_dec(Z)
      - Compute the loss: ℒ ← MSE(ℬ, X̂)
      - Backpropagate ∇ℒ and update the AE parameters
   2. If the validation loss has not improved for 5 epochs, break.
3. Save the trained encoder f_enc(·).
4. For each x ∈ 𝒟:
   - z ← f_enc(x) (latent vector of dimension d)
   - Store z for fuzzy classification
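The epoch loop and early-stopping rule of Algorithm 1 (at most 50 epochs, halting once the validation loss has failed to improve for 5 consecutive epochs) can be sketched independently of any deep learning framework. The helper below is a minimal illustration and not the authors' code: `train_one_epoch` and `validation_loss` are hypothetical callbacks standing in for the mini-batch updates and the validation pass.

```python
from typing import Callable, Tuple


def train_with_early_stopping(
    train_one_epoch: Callable[[], None],
    validation_loss: Callable[[], float],
    max_epochs: int = 50,
    patience: int = 5,
) -> Tuple[int, float]:
    """Train for up to max_epochs; stop after `patience` epochs without improvement.

    Returns the number of epochs actually run and the best validation loss seen.
    """
    best_loss = float("inf")
    epochs_without_improvement = 0
    epochs_run = 0
    for epoch in range(1, max_epochs + 1):
        train_one_epoch()          # hypothetical: one pass over all mini-batches
        epochs_run = epoch
        loss = validation_loss()   # hypothetical: reconstruction MSE on held-out data
        if loss < best_loss:
            best_loss = loss
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                break
    return epochs_run, best_loss
```

In practice the encoder weights from the best epoch would also be checkpointed, so that the saved f_enc(·) corresponds to the lowest validation loss rather than the last epoch run.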

3. Architecture and Learning Mechanism of the Fuzzy Neural Network in FAIR-Net

3.1. Fuzzy Rule-Based Network Structure with FCM Clustering

- Input layer: Receives the d-dimensional latent feature vectors (d ∈ {10, 20, 30, 40}) from the autoencoder.

- Hidden layer: Composed of fuzzy rule nodes generated via FCM clustering on the encoded feature space.

- Output layer: Performs linear aggregation followed by a Softmax transformation to produce probabilistic predictions over character classes.
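The three-layer structure above can be illustrated with a short forward pass. This is a sketch under stated assumptions: it uses the standard FCM membership formula with fuzzification coefficient m, and the names `fcm_memberships`, `predict_proba`, `weights`, and `bias` are illustrative rather than taken from the paper.

```python
import math
from typing import List, Sequence


def fcm_memberships(
    z: Sequence[float], centers: Sequence[Sequence[float]], m: float = 2.0
) -> List[float]:
    """Standard FCM membership of z in each cluster; the values sum to 1."""
    dists = [math.dist(z, v) for v in centers]
    # If z coincides with one or more centers, share full membership among them.
    zeros = [d == 0.0 for d in dists]
    if any(zeros):
        hits = sum(zeros)
        return [1.0 / hits if is_zero else 0.0 for is_zero in zeros]
    exponent = 2.0 / (m - 1.0)
    return [1.0 / sum((d_i / d_j) ** exponent for d_j in dists) for d_i in dists]


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict_proba(
    z: Sequence[float],
    centers: Sequence[Sequence[float]],
    weights: Sequence[Sequence[float]],  # weights[i][k]: rule i -> class k
    bias: Sequence[float],
    m: float = 2.0,
) -> List[float]:
    """Hidden layer = fuzzy rule activations; output = linear aggregation + softmax."""
    mu = fcm_memberships(z, centers, m)
    logits = [
        bias[k] + sum(mu[i] * weights[i][k] for i in range(len(mu)))
        for k in range(len(bias))
    ]
    return softmax(logits)
```

Because each activation is a membership degree in a cluster of the latent space, the weight `weights[i][k]` can be read as how strongly rule i votes for class k.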

3.2. Newton’s Method-Based IRLS for Output Layer Parameter Estimations

4. Design Framework of the Proposed Fuzzy Neural Recognition System

- Preprocessing: All character images are converted to grayscale, resized to 64 × 64, and normalized to [0, 1]. Standard data augmentation strategies (random rotation, translation, Gaussian noise) are optionally applied to improve robustness.

- Partitioning: The training set is used for autoencoder training, hyperparameter tuning, and fuzzy classifier learning, while the testing set is reserved exclusively for evaluating generalization performance.

- Encoder: Three convolutional layers followed by two fully connected layers map the input image x ∈ ℝ^{64×64} to a latent vector z ∈ ℝ^d, where d ∈ {10, 20, 30, 40}.

- Decoder: A mirrored structure reconstructs the input image from the latent representation, ensuring that the latent features preserve semantic structure.

- Training Objective: The autoencoder is optimized using the Mean Squared Error (MSE) loss with the Adam optimizer. Early stopping is employed to prevent overfitting.

- The hidden layer is constructed using Fuzzy C-Means (FCM) clustering applied to the latent feature vectors (z) obtained from the autoencoder.

- Each cluster center v_i defines the premise of a fuzzy rule, while the corresponding fuzzy membership function μ_i(z), computed directly from the FCM procedure, serves as the activation of the i-th hidden node.

- These membership activations quantify the degree to which an input vector belongs to each fuzzy prototype.

- Consequently, the fuzzy rule base provides an interpretable representation, where each rule corresponds to a localized region of the latent feature space.

- A Cross-Entropy (CE) loss is used to train a multi-class softmax classifier over the fuzzy rule activations.

- To prevent overfitting and mitigate issues from feature collinearity, L2-norm regularization is added.

- This step ensures that the final decision layer maintains a balance between model expressiveness and numerical stability.
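A Newton-type (IRLS) update for the L2-regularized softmax output layer can be sketched as follows. This is an illustrative reconstruction and not the authors' implementation: the summed cross-entropy objective, the omission of a bias term, and the names `fit_softmax_newton`, `phi`, and `l2` are assumptions. One known property motivates the regularizer: the softmax parameterization is shift-invariant, so the unregularized Hessian is singular, and the L2 term makes it positive definite and therefore safe to invert.

```python
import numpy as np


def softmax(logits: np.ndarray) -> np.ndarray:
    """Row-wise, numerically stable softmax."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    exps = np.exp(shifted)
    return exps / exps.sum(axis=1, keepdims=True)


def fit_softmax_newton(phi, labels, n_classes, l2=1e-2, n_iter=20):
    """Minimize sum-CE + (l2/2)*||W||^2 with full Newton steps.

    phi:    (n, r) matrix of fuzzy rule activations
    labels: (n,) integer class labels
    Returns W of shape (r, n_classes).
    """
    n, r = phi.shape
    targets = np.eye(n_classes)[labels]              # one-hot, (n, K)
    W = np.zeros((r, n_classes))
    for _ in range(n_iter):
        P = softmax(phi @ W)                         # (n, K)
        grad = phi.T @ (P - targets) + l2 * W        # (r, K)
        # Hessian in class-major block form: block (k, j) is
        # phi^T diag(p_k * (delta_kj - p_j)) phi, plus l2*I on the diagonal.
        H = np.zeros((r * n_classes, r * n_classes))
        for k in range(n_classes):
            for j in range(n_classes):
                w = P[:, k] * (float(k == j) - P[:, j])
                block = phi.T @ (phi * w[:, None])
                if k == j:
                    block = block + l2 * np.eye(r)
                H[k * r:(k + 1) * r, j * r:(j + 1) * r] = block
        step = np.linalg.solve(H, grad.T.reshape(-1))  # class-major flattening
        W = W - step.reshape(n_classes, r).T
    return W
```

The dense Hessian has (r·K)² entries, so this direct form is only practical for small illustrations; with thousands of classes a block-diagonal or per-class approximation would be needed, and the source does not say which variant was used.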

5. Experiments and Analysis

5.1. Dataset Description and Experimental Setup

- CASIA-HWDB1.0: Contains 3740 common Chinese characters based on GB2312-80 level-1 standard, written by 420 different individuals.

- CASIA-HWDB1.1: Covers 3755 character classes written by 300 new individuals.

- ICDAR2013 CompetitionDB: A test set of 3755 characters contributed by another 60 writers, officially used in the ICDAR 2013 offline Chinese handwriting recognition competition.

- Ancient Chinese Character Dataset: A large-scale, self-constructed dataset covering 9233 distinct classes of ancient Chinese scripts (oracle bone inscriptions, bronze inscriptions, large/small seal scripts, bamboo and silk manuscripts, coin inscriptions, and stone carvings). The dataset contains 979,907 images after enhancement (originally 673,639), sourced from scanned archaeological rubbings and photographs.

5.2. Experimental Results and Comparative Evaluation (CompetitionDB)

5.3. Ancient Chinese Character Dataset Evaluation

5.4. Ablation Study

- FCM-LSE: A basic model using Fuzzy C-Means clustering with Least Squares Estimation as the output layer, without any robust loss or regularization.

- FCM-IRLS-CE: A stronger variant incorporating Iteratively Reweighted Least Squares (IRLS) and the Cross Entropy (CE) loss, improving robustness to noisy labels and outliers.

- FCM-IRLS-CE-L2 (Proposed): The full version of our classifier, adding L2 regularization to further stabilize learning and prevent overfitting.
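For contrast with the IRLS variants, the FCM-LSE baseline can be pictured as a closed-form least-squares fit of the output weights. The sketch below assumes one-hot targets and no bias term, neither of which the text specifies; `fit_output_layer_lse` and `predict` are illustrative names.

```python
import numpy as np


def fit_output_layer_lse(activations, labels, n_classes):
    """Least-squares output weights mapping rule activations to one-hot targets."""
    targets = np.eye(n_classes)[labels]                      # (n, K)
    weights, *_ = np.linalg.lstsq(activations, targets, rcond=None)
    return weights                                           # (r, K)


def predict(activations, weights):
    """Assign each sample to the class with the largest linear output."""
    return np.argmax(activations @ weights, axis=1)
```

Unlike the cross-entropy objective, the squared-error fit treats class scores as regression targets rather than probabilities, which is one plausible reason for the accuracy gap reported between FCM-LSE and the IRLS-based variants.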

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Liu, C.L.; Yin, F.; Wang, D.H.; Wang, Q.F. CASIA Online and Offline Chinese Handwriting Databases. In Proceedings of the ICDAR, Beijing, China, 18–21 September 2011; pp. 37–41.
2. Kim, D.; Im, H.; Lee, S. Adaptive Autoencoder-Based Intrusion Detection System with Single Threshold for CAN Networks. Sensors 2025, 25, 4174.
3. Huang, Y.; Fu, X.; Li, L.; Zha, Z.-J. Learning Degradation-Invariant Representation for Robust Real-World Person Re-identification. Int. J. Comput. Vis. 2022, 130, 2770–2796.
4. Sturgeon, D. Large-Scale Optical Character Recognition of Pre-Modern Chinese Texts. Int. J. Buddh. Thought Cult. 2018, 28, 11–44.
5. Wang, H.; Pan, C.; Guo, X.; Ji, C.; Deng, K. From Object Detection to Text Detection and Recognition: A Brief Evolution History of Optical Character Recognition. WIREs Comput. Stat. 2021, 13, e1547.
6. Kim, E.-H.; Wang, Z.; Zong, H.; Jiang, Z.; Fu, Z.; Pedrycz, W. Design of Tobacco Leaves Classifier Through Fuzzy Clustering-Based Neural Networks with Multiple Histogram Analyses of Images. IEEE Trans. Ind. Inform. 2024, 20, 4698–4709.
7. Dalal, N.; Triggs, B. Histograms of Oriented Gradients for Human Detection. In Proceedings of the CVPR, San Diego, CA, USA, 20–25 June 2005; Volume 1, pp. 886–893.
8. Cireşan, D.; Meier, U.; Schmidhuber, J. Multi-column Deep Neural Networks for Image Classification. In Proceedings of the CVPR, Providence, RI, USA, 16–21 June 2012; pp. 3642–3649.
9. Rudin, C. Stop Explaining Black Box Machine Learning Models for High Stakes Decisions and Use Interpretable Models Instead. Nat. Mach. Intell. 2019, 1, 206–215.
10. Wang, Z.; Kim, E.-H.; Oh, S.-K.; Pedrycz, W.; Fu, Z.; Yoon, J.H. Reinforced Fuzzy-Rule-Based Neural Networks Realized Through Streamlined Feature Selection Strategy and Fuzzy Clustering with Distance Variation. IEEE Trans. Fuzzy Syst. 2024, 32, 5674–5686.
11. Yousef, M.; Hussain, K.F.; Mohammed, U.S. Accurate, Data-Efficient, Unconstrained Text Recognition with Convolutional Neural Networks. Pattern Recognit. 2020, 108, 107482.
12. Hinton, G.E.; Salakhutdinov, R.R. Reducing the Dimensionality of Data with Neural Networks. Science 2006, 313, 504–507.
13. Zhu, Y.; Duan, H.; Wang, Z.; Kim, E.-H.; Fu, Z.; Pedrycz, W. Robust Classification via Interval Type-2 Fuzzy C-Means and Gradient Boosting. IEEE Trans. Fuzzy Syst. 2025, 33, 3103–3117.
14. He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778.
15. Liu, Z.; Lin, Y.; Cao, Y.; Hu, H.; Wei, Y.; Zhang, Z.; Lin, S.; Guo, B. Swin Transformer: Hierarchical Vision Transformer Using Shifted Windows. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 10–17 October 2021; pp. 10012–10022.
16. Wang, Z.; Oh, S.-K.; Pedrycz, W.; Kim, E.-H.; Fu, Z. Design of Stabilized Fuzzy Relation-Based Neural Networks Driven to Ensemble Neurons/Layers and Multi-Optimization. Neurocomputing 2022, 486, 27–46.
17. Zheng, Y.; Chen, Y.; Wang, X.; Qi, D.; Yan, Y. Ancient Chinese Character Recognition with Improved Swin-Transformer and Flexible Data Enhancement Strategies. Sensors 2024, 24, 2182.
18. Vincent, P.; Larochelle, H.; Bengio, Y. Extracting Robust Features with Denoising Autoencoders. In Proceedings of the 25th International Conference on Machine Learning, Helsinki, Finland, 5–9 July 2008; pp. 1096–1103.
19. You, Y.; Kim, E.-H.; Huang, H.; Pedrycz, W. Data Transformation-driven Fuzzy Clustering Neural Network with Layer-Wise and End-to-End Training. IEEE Trans. Fuzzy Syst. 2025, 1–16.
20. Wang, Z.; Oh, S.-K.; Fu, Z.; Pedrycz, W.; Roh, S.-B.; Yoon, J.H. Self-Organizing Hybrid Fuzzy Polynomial Neural Network Classifier Driven Through Dynamically Adaptive Structure and Compound Regularization Technique. IEEE Trans. Fuzzy Syst. 2024, 32, 5385–5399.
21. Wang, Z.; Oh, S.-K.; Wang, Z.; Fu, Z.; Pedrycz, W.; Yoon, J.H. Design of Progressive Fuzzy Polynomial Neural Networks through Gated Recurrent Unit Structure and Correlation/Probabilistic Selection Strategies. Fuzzy Sets Syst. 2023, 470, 108656.
22. Arante, H.R.C.; Sybingco, E.; Roque, M.A.; Ambata, L.; Chua, A.; Gutierrez, A.N. Development of a Secured IoT-Based Flood Monitoring and Forecasting System Using Genetic-Algorithm-Based Neuro-Fuzzy Network. Sensors 2025, 25, 3885.
23. Yang, C.; Wang, Z.; Oh, S.-K.; Pedrycz, W.; Yang, B. Ensemble Fuzzy Radial Basis Function Neural Networks Architecture Driven with the Aid of Multi-Optimization through Clustering Techniques and Polynomial-Based Learning. Fuzzy Sets Syst. 2022, 438, 62–83.
24. Cao, Z.; Lu, J.; Cui, S.; Zhang, C. Zero-Shot Handwritten Chinese Character Recognition with Hierarchical Decomposition Embedding. Pattern Recognit. 2020, 107, 107488.
25. Hadjahmadi, A.H.; Homayounpour, M.M. Robust Feature Extraction and Uncertainty Estimation Based on Attractor Dynamics in Cyclic Deep Denoising Autoencoders. Neural Comput. Appl. 2019, 31, 7989–8002.
26. Roh, S.-B.; Oh, S.-K.; Pedrycz, W.; Wang, Z.; Fu, Z.; Seo, K. Design of Iterative Fuzzy Radial Basis Function Neural Networks Based on Iterative Weighted Fuzzy C-Means Clustering and Weighted LSE Estimation. IEEE Trans. Fuzzy Syst. 2022, 30, 4273–4285.
27. Shen, L.; Chen, B.; Wei, J.; Xu, H.; Tang, S.-K.; Mirri, S. The Challenges of Recognizing Offline Handwritten Chinese: A Technical Review. Appl. Sci. 2023, 13, 3500.
28. Zhong, Z.; Jin, L.; Xie, Z. High Performance Offline Handwritten Chinese Character Recognition Using GoogLeNet and Directional Feature Maps. In Proceedings of the 2015 13th International Conference on Document Analysis and Recognition (ICDAR), Tunis, Tunisia, 23–26 August 2015; pp. 846–850.
29. Zhong, Y.; Daud, K.M.; Nor, A.N.B.M.; Ikuesan, R.A.; Moorthy, K. Offline Handwritten Chinese Character Using Convolutional Neural Network: State-of-the-Art Methods. J. Adv. Comput. Intell. Intell. Inform. 2023, 27, 567–575.

| Dataset | Sample Size | Image Dimension | Number of Classes | Number of Writers | Usage |
|---|---|---|---|---|---|
| CASIA-HWDB1.0 | 1,680,258 | 64 × 64 (raw) | 3740 | 420 | Training/Validation |
| CASIA-HWDB1.1 | 1,172,907 | 64 × 64 (raw) | 3755 | 300 | Training/Validation |
| ICDAR2013 CompetitionDB | 224,000 | 64 × 64 (raw) | 3755 | 60 | Testing |
| Ancient Chinese Character Dataset | 979,907 (enhanced; originally 673,639) | 256 × 256 (raw) | 9233 | N/A | Training/Testing |

| Model | Input Size | Mask Size | Normalization | Convolution Layers | Fully Connected Layers |
|---|---|---|---|---|---|
| AlexNet | 108 × 108 | 114 × 114 | [0, 1] float | 5 | 2 |
| GoogLeNet | 112 × 112 | 120 × 120 | [0, 1] float | 22 | 1 |
| CNN-Fujitsu | 96 × 96 | 120 × 120 | [0, 1] float | 3 | 2 |
| ART-CNN | 96 × 96 | 120 × 120 | [0, 1] float | 7 | 2 |
| R-CNN Voting | 96 × 96 | 120 × 120 | [0, 1] float | 7 | 2 |
| ResNet101 | 224 × 224/256 × 256 | – | [0, 1] float | 101 | 1 |
| SwinT-v2-small | 256 × 256 | 7 | [0, 1] float | – | 2 |
| FAIR-Net (Proposed) | Latent vector (10–40) | – | – | – | – |

| Parameter | Range/Values |
|---|---|
| Number of Clusters | {2, 3, 4, 5} |
| Fuzzification Coefficient (m) | {1.1, 1.2, 2.0} |
| Burn-in Iterations | {50, 100, 150} |
| Thinning Interval | {25, 50, 75} |
| L2 Regularization Coefficient | {0.0001, 0.001, 0.01, 0.05} |
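The search space in the table above can be enumerated programmatically. The sketch below assumes an exhaustive grid search, which the source does not confirm, and the dictionary keys are illustrative names rather than identifiers from the paper.

```python
from itertools import product
from typing import Dict, Iterator, List

# Values copied from the hyperparameter table; key names are hypothetical.
SEARCH_SPACE: Dict[str, List[float]] = {
    "n_clusters": [2, 3, 4, 5],
    "fuzzifier_m": [1.1, 1.2, 2.0],
    "burn_in_iterations": [50, 100, 150],
    "thinning_interval": [25, 50, 75],
    "l2_coefficient": [0.0001, 0.001, 0.01, 0.05],
}


def iter_configurations(space: Dict[str, List[float]]) -> Iterator[Dict[str, float]]:
    """Yield every combination of hyperparameter values as a dictionary."""
    names = list(space)
    for values in product(*(space[name] for name in names)):
        yield dict(zip(names, values))


configurations = list(iter_configurations(SEARCH_SPACE))
```

A full grid over these ranges contains 4 × 3 × 3 × 3 × 4 = 432 configurations per latent dimension d.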

| Number of Features | Number of Clusters | Training Accuracy (%) | Testing Accuracy (%) |
|---|---|---|---|
| 10 | 2 | 98.30 ± 0.41 | 96.68 ± 1.24 |
| 10 | 3 | 98.61 ± 0.38 | 97.91 ± 1.03 |
| 10 | 4 | 98.44 ± 0.55 | 96.38 ± 2.07 |
| 10 | 5 | 98.72 ± 0.49 | 97.03 ± 1.89 |
| 20 | 2 | 98.33 ± 0.37 | 96.59 ± 1.66 |
| 20 | 3 | 98.69 ± 0.46 | 97.86 ± 1.27 |
| 20 | 4 | 98.50 ± 0.52 | 96.21 ± 2.38 |
| 20 | 5 | 98.78 ± 0.43 | 97.01 ± 1.94 |
| 30 | 2 | 98.28 ± 0.60 | 96.50 ± 2.13 |
| 30 | 3 | 98.66 ± 0.42 | 96.89 ± 1.42 |
| 30 | 4 | 98.47 ± 0.58 | 96.42 ± 2.26 |
| 30 | 5 | 98.28 ± 0.48 | 97.79 ± 1.12 |
| 40 | 2 | 98.35 ± 0.39 | 96.62 ± 1.78 |
| 40 | 3 | 98.67 ± 0.36 | 97.83 ± 1.01 |
| 40 | 4 | 98.48 ± 0.57 | 96.47 ± 2.06 |
| 40 | 5 | 98.79 ± 0.41 | 97.12 ± 1.95 |
| Avg. | | 98.52 | 96.96 |

| Algorithms | Testing Accuracy (%) | Computing Time |
|---|---|---|
| AlexNet [27] | 95.49 ± 1.22 | 43 s |
| GoogLeNet [28] | 96.26 ± 1.18 | 2 m 24 s |
| CNN-Fujitsu [28] | 94.77 ± 1.65 | 36 m 15 s |
| ART-CNN [29] | 95.04 ± 1.47 | 1 m 16 s |
| R-CNN Voting [19] | 95.55 ± 1.30 | 4 m 53 s |
| FAIR-Net (Proposed) | 97.91 ± 1.03 | 25 s |

| Algorithms | Training Accuracy (%) | Testing Accuracy (%) | Computing Time |
|---|---|---|---|
| ResNet101 [14] | 85.42 ± 0.57 | 83.09 ± 1.64 | 28 h 36 m |
| SwinT-v2-small [15] | 87.16 ± 0.49 | 85.74 ± 2.52 | 24 h 24 m |
| FAIR-Net (Proposed) | 84.03 ± 0.46 | 83.25 ± 1.48 | 4 h 24 m |
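The speed-up factor quoted in the Highlights can be checked directly from the computing times in the table above. The snippet is a small arithmetic check; `to_minutes` is a helper introduced here for illustration.

```python
import re


def to_minutes(duration: str) -> int:
    """Convert a string such as '24 h 24 m' into a number of minutes."""
    hours = re.search(r"(\d+)\s*h", duration)
    minutes = re.search(r"(\d+)\s*m", duration)
    return 60 * int(hours.group(1) if hours else 0) + int(
        minutes.group(1) if minutes else 0
    )


# Computing times copied from the ancient-character results table.
times = {
    "ResNet101": "28 h 36 m",
    "SwinT-v2-small": "24 h 24 m",
    "FAIR-Net": "4 h 24 m",
}

fair_net_minutes = to_minutes(times["FAIR-Net"])  # 264 minutes
speedups = {
    name: to_minutes(value) / fair_net_minutes
    for name, value in times.items()
    if name != "FAIR-Net"
}
```

This gives 1464 / 264 ≈ 5.5 for SwinT-v2-small and 1716 / 264 = 6.5 for ResNet101, consistent with the 5.5× figure stated for SwinT-v2-small.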

| Data | Work | 10 Features | 20 Features | 30 Features | 40 Features |
|---|---|---|---|---|---|
| ICDAR2013 CompetitionDB | FCM-LSE | 94.82 ± 1.94 | 94.51 ± 2.12 | 94.75 ± 1.87 | 94.43 ± 2.05 |
| ICDAR2013 CompetitionDB | FCM-IRLS-CE | 97.52 ± 1.20 | 97.41 ± 1.36 | 97.33 ± 1.18 | 97.46 ± 1.15 |
| ICDAR2013 CompetitionDB | FCM-IRLS-CE-L2 (Proposed) | 97.91 ± 1.03 | 97.86 ± 1.27 | 97.79 ± 1.12 | 97.83 ± 1.01 |
| Ancient Chinese Character Dataset | FCM-LSE | 81.47 ± 1.82 | 81.96 ± 1.77 | 81.88 ± 1.90 | 81.41 ± 1.98 |
| Ancient Chinese Character Dataset | FCM-IRLS-CE | 82.87 ± 1.55 | 82.96 ± 1.60 | 82.83 ± 1.62 | 82.90 ± 1.58 |
| Ancient Chinese Character Dataset | FCM-IRLS-CE-L2 (Proposed) | 82.98 ± 1.50 | 83.25 ± 1.48 | 82.94 ± 1.45 | 83.02 ± 1.53 |

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Citation

Ge, Y.; Zhang, Y.; Roh, S.-B. FAIR-Net: A Fuzzy Autoencoder and Interpretable Rule-Based Network for Ancient Chinese Character Recognition. Sensors 2025, 25, 5928. https://doi.org/10.3390/s25185928